When healthcare companies start exploring AI agents, the conversation almost always begins in the wrong place. "We need to hire ML engineers." "Should we fine-tune a model?" "What GPU infrastructure do we need?" These questions reveal a fundamental misunderstanding of what building a healthcare agent actually involves in 2026.

The truth is simpler and more accessible than most people expect: building a healthcare AI agent is an orchestration problem, not a machine learning problem. You do not need ML engineers, GPU clusters, or custom model training. You need LLM APIs, FHIR connectors, a workflow orchestrator, healthcare domain knowledge, and solid software engineering. If you have a team that has built healthcare applications before, you already have 80% of what you need.

We have built agents across 20+ healthcare applications at this point, and the pattern is remarkably consistent. Here is exactly how it works.

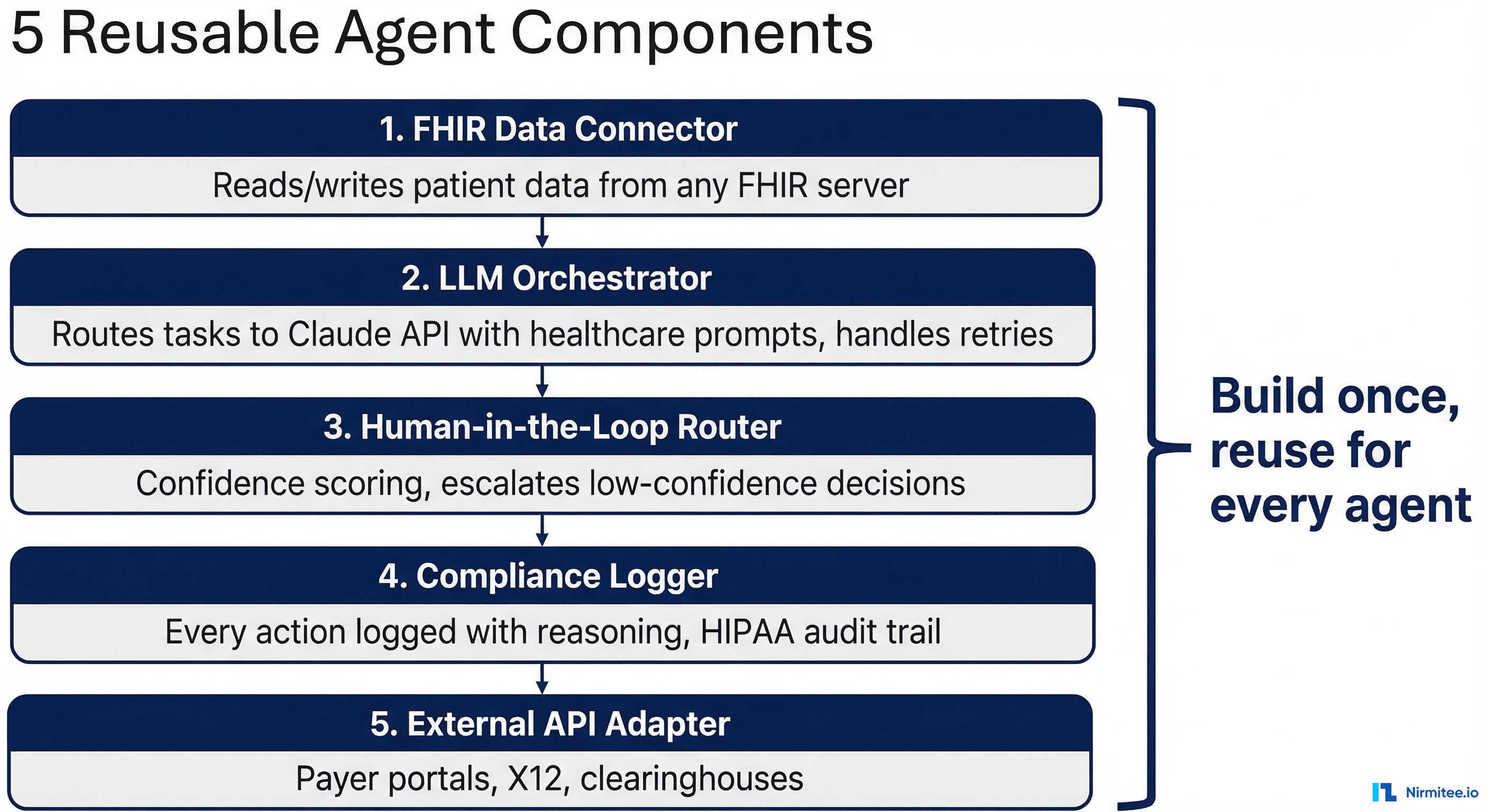

The 5 Reusable Agent Components

Every healthcare agent we have built — whether it handles prior authorization, clinical document processing, eligibility verification, or claims management — is composed of the same five components. The domain logic changes, but the architecture does not.

Component 1: FHIR Data Connector

This is the agent's interface to clinical and administrative data. It reads and writes FHIR R4 resources — Patient, Encounter, Claim, Coverage, DocumentReference, and dozens of others. It handles authentication (SMART on FHIR), pagination, search parameters, and the FHIR-specific quirks that anyone who has worked with healthcare data knows well.

The key design decision here is abstraction. The agent does not think in terms of FHIR resources. The connector translates FHIR into a simplified domain model that the LLM can reason about. Instead of handing the LLM a raw Bundle with nested references, the connector resolves references, flattens the relevant data, and presents it as a clean context object: "Patient John Smith, DOB 1985-03-15, Coverage: Blue Cross PPO, Member ID: BCX-12345678, Primary Care: Dr. Sarah Chen."

This abstraction layer is where your healthcare domain knowledge matters most. You need to know which FHIR fields are relevant for each workflow, how to handle missing data gracefully, and how to resolve the common data quality issues that plague real-world FHIR implementations.
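To make the flattening step concrete, here is a minimal sketch that turns a FHIR R4 Patient and Coverage into the kind of context object described above. The function names (`first`, `patient_display_name`, `build_context`) are hypothetical, and reading the member ID from `Coverage.subscriberId` is an assumption; many real implementations carry it in `Coverage.identifier` instead.

```python
from typing import Any, Optional


def first(items: Optional[list], default: Any = None) -> Any:
    """Return the first element of a possibly missing or empty list."""
    return items[0] if items else default


def patient_display_name(patient: dict) -> str:
    """Build 'Given Family' from the first HumanName entry, if any."""
    name = first(patient.get("name"), {})
    given = " ".join(name.get("given", []))
    family = name.get("family", "")
    return f"{given} {family}".strip() or "Unknown"


def build_context(patient: dict, coverage: Optional[dict]) -> dict:
    """Flatten a Patient and an optional Coverage into a simple context dict."""
    coverage = coverage or {}
    payor = first(coverage.get("payor"), {})
    return {
        "patient_name": patient_display_name(patient),
        "date_of_birth": patient.get("birthDate", "unknown"),
        "payer": payor.get("display", "unknown"),
        # Assumption: the member ID lives in Coverage.subscriberId.
        "member_id": coverage.get("subscriberId", "unknown"),
    }


# Illustrative resources, trimmed to the fields this sketch reads.
patient = {
    "resourceType": "Patient",
    "name": [{"given": ["John"], "family": "Smith"}],
    "birthDate": "1985-03-15",
}
coverage = {
    "resourceType": "Coverage",
    "subscriberId": "BCX-12345678",
    "payor": [{"display": "Blue Cross PPO"}],
}
context = build_context(patient, coverage)
print(context)
```

Every lookup has a default, so a Patient with no name or a missing Coverage produces "Unknown" or "unknown" values instead of an exception. That is one simple way to handle missing data gracefully; a production connector would likely also record which fields were absent.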

Component 2: LLM Orchestrator

This is the reasoning engine. It takes structured context from the FHIR connector, applies workflow-specific prompt templates, calls an LLM API (Claude, GPT-4, or equivalent), and parses the response into structured actions.

The orchestrator is not a single prompt. It is a multi-step workflow where each step has its own prompt template optimized for that specific task. A prior authorization agent might have separate steps for: (1) determine if auth is required based on payer rules, (2) extract relevant clinical data to support medical necessity, (3) map to appropriate CPT/ICD codes, (4) generate the authorization request, (5) review the response and determine next action.

Each step is a focused prompt with clear instructions, relevant context, and a structured output format. The orchestrator manages the flow between steps, passes context forward, and handles branching logic (if auth is not required, skip to notification; if additional clinical data is needed, route to data collection).

This is not prompt engineering in the "write a clever prompt" sense. It is workflow engineering that happens to use LLM calls as processing steps. The prompts themselves are straightforward — the complexity is in the orchestration logic.
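A rough sketch of that orchestration logic, with the LLM call stubbed out so it runs offline. The `Step` class, `run_workflow`, the template wording, and `fake_llm` are all hypothetical names chosen for illustration; a real orchestrator would call an actual LLM API and validate the returned JSON far more carefully.

```python
import json
from dataclasses import dataclass
from typing import Callable, Optional

# Hypothetical signature: takes a rendered prompt, returns the model's raw text.
LLMCall = Callable[[str], str]


@dataclass
class Step:
    name: str
    template: str  # prompt template with {placeholders}
    next_step: Callable[[dict], Optional[str]]  # branching on the parsed output


def run_workflow(steps: dict, start: str, context: dict, call_llm: LLMCall) -> list:
    """Run steps until one returns no successor; return (step name, output) pairs."""
    trace = []
    current: Optional[str] = start
    while current is not None:
        step = steps[current]
        prompt = step.template.format(**context)
        output = json.loads(call_llm(prompt))  # each step must reply with JSON
        context = {**context, step.name: output}  # pass results forward
        trace.append((step.name, output))
        current = step.next_step(output)
    return trace


steps = {
    "auth_check": Step(
        name="auth_check",
        template="Payer: {payer}. Procedure: {procedure}. Is prior auth required? Reply as JSON.",
        next_step=lambda out: "generate_request" if out["required"] else "notify",
    ),
    "generate_request": Step(
        name="generate_request",
        template="Draft an authorization request for {procedure}. Reply as JSON.",
        next_step=lambda out: None,
    ),
    "notify": Step(
        name="notify",
        template="Write a short no-auth-needed notice for {procedure}. Reply as JSON.",
        next_step=lambda out: None,
    ),
}


def fake_llm(prompt: str) -> str:
    """Stand-in for a real LLM API call so the sketch runs offline."""
    if "Is prior auth required" in prompt:
        return json.dumps({"required": False})
    return json.dumps({"text": "stub"})


trace = run_workflow(
    steps, "auth_check", {"payer": "Blue Cross PPO", "procedure": "MRI"}, fake_llm
)
print([name for name, _ in trace])
```

The branching lives in plain Python (`next_step`), not in the prompts, which is the point of the section above: each prompt stays narrow, and the flow between prompts is ordinary, testable code.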

Component 3: Human-in-the-Loop Router

Every agent decision gets a confidence score. The router examines that score and the decision context, then determines the path: auto-approve, flag for quick review, or escalate for full human review.

The thresholds are configurable per workflow and per customer. A large hospital system with high transaction volume might set aggressive auto-approval thresholds (auto-approve above 93%) to maximize throughput. A small practice that is new to automation might want conservative thresholds (auto-approve above 98%) while they build trust in the system.
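As a sketch, per-customer thresholds can be a small immutable config object plus one routing function. The 93% and 98% auto-approve values come from the examples above; the quick-review cutoffs (0.80 and 0.90) and all names here are assumptions for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Thresholds:
    auto_approve: float
    quick_review: float

    def __post_init__(self) -> None:
        # Reject configurations where the bands overlap or leave [0, 1].
        if not 0.0 <= self.quick_review <= self.auto_approve <= 1.0:
            raise ValueError("expected 0 <= quick_review <= auto_approve <= 1")


def route(confidence: float, thresholds: Thresholds) -> str:
    """Map a confidence score to one of three review paths."""
    if confidence > thresholds.auto_approve:
        return "auto_approve"
    if confidence > thresholds.quick_review:
        return "quick_review"
    return "full_review"


# Hypothetical per-customer configurations.
AGGRESSIVE = Thresholds(auto_approve=0.93, quick_review=0.80)
CONSERVATIVE = Thresholds(auto_approve=0.98, quick_review=0.90)

print(route(0.95, AGGRESSIVE))
print(route(0.95, CONSERVATIVE))
```

The same 0.95 decision is auto-approved for the high-volume customer and sent to quick review for the cautious one, without any change to the agent itself.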

The router also handles the feedback loop. When a human overrides an agent decision, the router captures the override with context: what the agent decided, what the human decided instead, and why. This data feeds back into the orchestrator's prompt templates as examples, improving future accuracy.
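One simple way to picture that feedback loop: store each override as a record, then render the most recent ones for a workflow as examples to include in the prompt. The `Override` fields mirror the three items listed above; the names, the example data, and the rendering format are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Override:
    workflow: str
    agent_decision: str
    human_decision: str
    reason: str


def render_examples(overrides: list, workflow: str, limit: int = 3) -> str:
    """Format the most recent overrides for one workflow as prompt examples."""
    relevant = [o for o in overrides if o.workflow == workflow][-limit:]
    lines = [
        f"- Agent said: {o.agent_decision}. "
        f"Reviewer corrected to: {o.human_decision}. Reason: {o.reason}"
        for o in relevant
    ]
    return "\n".join(lines)


# Illustrative override records, not real data.
overrides = [
    Override("prior_auth", "auto_approve", "escalate", "missing imaging report"),
    Override("eligibility", "active", "inactive", "coverage terminated last month"),
]
examples = render_examples(overrides, "prior_auth")
print(examples)
```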

Implementation-wise, this is a rules engine with a queue management system. It is the least technically novel component, but arguably the most important for production deployment. Without it, you either have a fully autonomous agent (too risky for healthcare) or a fully manual process with AI suggestions (too slow to deliver value).

Component 4: Compliance Logger

Every action the agent takes — every FHIR resource read, every LLM call, every decision, every escalation — is logged with full context. This is not application logging. It is a structured compliance audit trail designed to answer the questions that HIPAA auditors, clinical compliance teams, and legal departments will ask.

Each log entry includes: timestamp, agent ID, workflow step, data accessed (which FHIR resources, which fields), reasoning (the LLM's chain of thought), decision made, confidence score, whether human review was required, and the outcome. The log is append-only and tamper-evident.
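"Append-only and tamper-evident" can be implemented in several ways. One common technique, sketched here as an assumption and not a description of any specific product, is a hash chain: each entry stores the SHA-256 hash of the previous entry, so altering any earlier record breaks every link after it. The `AuditLog` class and its reduced field set are hypothetical.

```python
import hashlib
import json
from datetime import datetime, timezone


class AuditLog:
    """Append-only log where each entry's hash covers the previous entry's hash."""

    GENESIS = "0" * 64

    def __init__(self) -> None:
        self._entries = []

    @staticmethod
    def _digest(body: dict) -> str:
        # Canonical JSON so the same body always hashes to the same value.
        encoded = json.dumps(body, sort_keys=True, separators=(",", ":")).encode("utf-8")
        return hashlib.sha256(encoded).hexdigest()

    def append(self, agent_id: str, step: str, decision: str, confidence: float) -> dict:
        body = {
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "agent_id": agent_id,
            "step": step,
            "decision": decision,
            "confidence": confidence,
            "prev_hash": self._entries[-1]["hash"] if self._entries else self.GENESIS,
        }
        entry = {**body, "hash": self._digest(body)}
        self._entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute every hash and check each link to the previous entry."""
        prev = self.GENESIS
        for entry in self._entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if entry["prev_hash"] != prev or self._digest(body) != entry["hash"]:
                return False
            prev = entry["hash"]
        return True


log = AuditLog()
log.append("prior-auth-agent", "auth_check", "auth_required", 0.97)
log.append("prior-auth-agent", "auth_generation", "request_drafted", 0.91)
print(log.verify())  # chain is intact

log._entries[0]["decision"] = "tampered"  # simulate an after-the-fact edit
print(log.verify())  # verification now fails
```

A hash chain only makes tampering detectable. Preventing it still depends on where the log is stored and who can write to it, which is outside this sketch.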

The compliance logger also enforces minimum necessary access. Before the FHIR connector retrieves data, the logger checks the workflow's data access policy: does this workflow need the patient's full medical history, or just their coverage information? This prevents the agent from accessing data it does not need for the current task — a HIPAA requirement that is easier to enforce programmatically than with human staff.
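A minimal sketch of that policy check, assuming the policy is a simple allow-list of FHIR resource types per workflow. The workflow names, the resource lists, and the `guarded_read` wrapper are illustrative assumptions; a real policy would likely go down to field level.

```python
from typing import Callable

# Hypothetical allow-lists: which resource types each workflow may read.
ACCESS_POLICY = {
    "eligibility_verification": frozenset({"Patient", "Coverage"}),
    "prior_authorization": frozenset(
        {"Patient", "Coverage", "Encounter", "Condition", "Procedure"}
    ),
}


class AccessDenied(Exception):
    """Raised when a workflow requests data outside its policy."""


def check_access(workflow: str, resource_type: str) -> None:
    # Unknown workflows get an empty allow-list, so they are denied by default.
    allowed = ACCESS_POLICY.get(workflow, frozenset())
    if resource_type not in allowed:
        raise AccessDenied(f"{workflow} may not read {resource_type}")


def guarded_read(
    workflow: str,
    resource_type: str,
    resource_id: str,
    fetch: Callable[[str, str], dict],
) -> dict:
    """Check the policy first, then delegate to the real FHIR fetch function."""
    check_access(workflow, resource_type)
    return fetch(resource_type, resource_id)


def fake_fetch(resource_type: str, resource_id: str) -> dict:
    """Stand-in for the FHIR connector so the sketch runs offline."""
    return {"resourceType": resource_type, "id": resource_id}


print(guarded_read("eligibility_verification", "Coverage", "cov-1", fake_fetch))
try:
    guarded_read("eligibility_verification", "Observation", "obs-1", fake_fetch)
except AccessDenied as exc:
    print(f"blocked: {exc}")
```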

Component 5: External API Adapter

Healthcare agents rarely operate in isolation. They need to communicate with payer systems (X12 transactions, payer-specific APIs), pharmacy networks (NCPDP SCRIPT), labs (HL7 ORU messages), clearinghouses, and other external services.

The adapter layer normalizes these integrations behind a consistent interface. The agent does not need to know whether it is talking to an X12 endpoint, a REST API, or an HL7 MLLP connection. It expresses its intent ("check eligibility for this patient with this payer") and the adapter handles the protocol-specific implementation.

This is the component that benefits most from reuse. Once you have built an adapter for a specific payer's eligibility API, every future agent that needs eligibility data for that payer uses the same adapter. Over time, you build a library of adapters that dramatically accelerates new agent development.
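The adapter idea can be sketched as one small interface with protocol-specific implementations behind it. Everything here is hypothetical: the `EligibilityAdapter` protocol, the two stub adapters, the payer IDs, and the registry. The stubs return canned results where a real adapter would make an HTTP request or exchange X12 270/271 messages.

```python
from dataclasses import dataclass
from typing import Protocol


@dataclass
class EligibilityResult:
    active: bool
    plan_name: str


class EligibilityAdapter(Protocol):
    def check_eligibility(self, member_id: str) -> EligibilityResult: ...


class RestPayerAdapter:
    """Stub for a payer that exposes a REST API."""

    def check_eligibility(self, member_id: str) -> EligibilityResult:
        # A real adapter would make an authenticated HTTP request here.
        return EligibilityResult(active=True, plan_name="stub REST plan")


class X12PayerAdapter:
    """Stub for a payer reached through X12 transactions."""

    def check_eligibility(self, member_id: str) -> EligibilityResult:
        # A real adapter would build a 270 request and parse the 271 response.
        return EligibilityResult(active=True, plan_name="stub X12 plan")


# Registry: the agent names a payer, never a protocol.
ADAPTERS = {
    "payer-a": RestPayerAdapter(),
    "payer-b": X12PayerAdapter(),
}


def check_eligibility(payer_id: str, member_id: str) -> EligibilityResult:
    adapter = ADAPTERS.get(payer_id)
    if adapter is None:
        raise KeyError(f"no eligibility adapter registered for {payer_id}")
    return adapter.check_eligibility(member_id)


print(check_eligibility("payer-a", "BCX-12345678"))
print(check_eligibility("payer-b", "BCX-12345678"))
```

Adding a payer means registering one more adapter; the agent code that calls `check_eligibility` does not change.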

The Orchestration Pattern in Practice

Here is what the orchestration pattern looks like in pseudocode for a prior authorization agent:

    async def process_prior_auth(encounter_id):
        # Step 1: Gather context via FHIR
        patient = fhir.get_patient(encounter_id)
        coverage = fhir.get_coverage(patient.id)
        procedures = fhir.get_planned_procedures(encounter_id)

        # Step 2: Check if auth is required
        auth_check = await llm.evaluate(
            template="auth_requirement_check",
            context={"patient": patient, "coverage": coverage,
                     "procedures": procedures},
        )
        compliance.log("auth_check", auth_check)

        if not auth_check.required:
            return notify_no_auth_needed(encounter_id)

        # Step 3: Gather clinical evidence
        clinical_data = fhir.get_clinical_evidence(
            patient.id,
            auth_check.required_evidence_types
        )

        # Step 4: Generate auth request
        auth_request = await llm.evaluate(
            template="generate_auth_request",
            context={"patient": patient, "coverage": coverage,
                     "procedures": procedures,
                     "clinical_evidence": clinical_data},
        )
        compliance.log("auth_generation", auth_request)

        # Step 5: Confidence routing
        if auth_request.confidence > threshold.auto_approve:
            result = await payer_api.submit_auth(auth_request)
            compliance.log("auto_submitted", result)
        elif auth_request.confidence > threshold.quick_review:
            await review_queue.add(auth_request, priority="normal")
        else:
            await review_queue.add(auth_request, priority="high",
                                   flag="low_confidence")

Notice what is not in this code: there is no model training, no tensor operations, no GPU management, no custom neural network architecture. Every step is an API call: to FHIR, to the LLM, to the payer, to the review queue. The intelligence comes from the orchestration and the prompt templates, not from custom ML models.

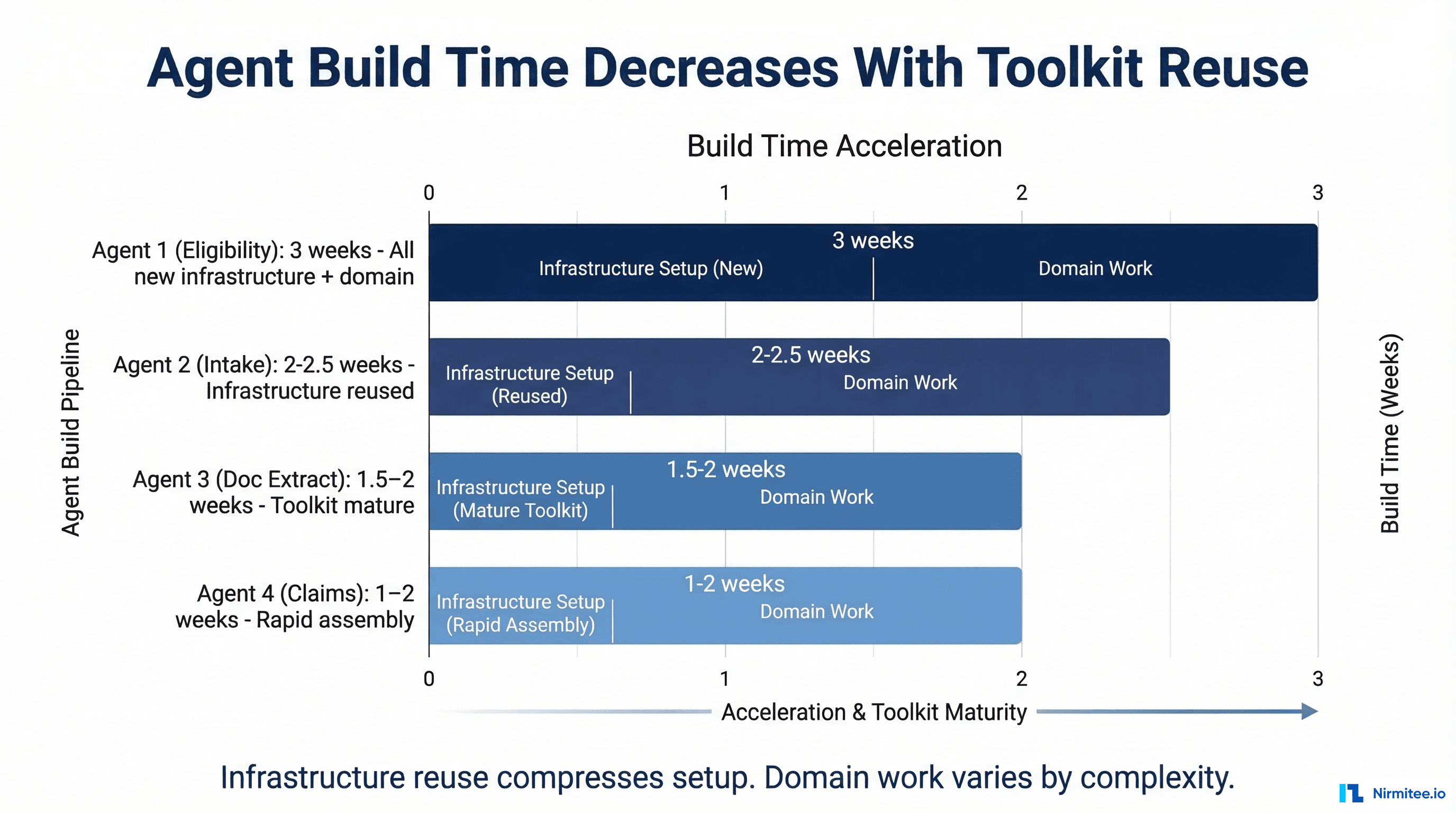

Why Build Time Accelerates

This is the part that surprises teams the most. The first agent takes the longest because you are building the components. Every subsequent agent reuses them.

Agent 1: Prior Authorization (3 weeks). You build all five components from scratch: the FHIR connector, the LLM orchestrator, the HITL router, the compliance logger, and the payer API adapter. The domain logic (understanding prior auth rules, clinical evidence requirements, and payer-specific formats) takes about 40% of the time. The remaining 60% is the reusable infrastructure.

Agent 2: Eligibility Verification (2 weeks). You reuse the FHIR connector, orchestrator, HITL router, and compliance logger. You add a new payer API adapter for X12 270/271 eligibility transactions (or reuse the existing one if the payer is the same). Most of the work is in the domain-specific prompt templates and the edge case handling. Total build time drops by 30-40% compared with the first agent.

Agent 3: Clinical Document Classification (1.5 weeks). Same infrastructure, new domain. The document classification prompts are different from auth and eligibility, but the orchestration pattern is identical. The FHIR connector already knows how to read DocumentReference resources. Build time drops further.

Agent 4 and beyond: 1-2 weeks each. By this point, your team has internalized the pattern. New agents are primarily prompt engineering and domain logic, with minimal infrastructure work. You are building a library of workflow-specific components that plug into the same architecture.
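The arithmetic behind that curve is easy to check. This short calculation uses the build times quoted above for the first three agents. The from-scratch comparison assumes every agent would otherwise take the same three weeks as the first, which is an assumption for illustration, not a measured figure.

```python
# Build times in weeks, as quoted above for the first three agents.
build_weeks = {
    "prior_authorization": 3.0,
    "eligibility_verification": 2.0,
    "document_classification": 1.5,
}

baseline = build_weeks["prior_authorization"]
savings = {
    name: (baseline - weeks) / baseline for name, weeks in build_weeks.items()
}
for name, fraction in savings.items():
    print(f"{name}: {build_weeks[name]} weeks, {fraction:.0%} below the first agent")

total_with_reuse = sum(build_weeks.values())
# Assumption: without shared components, each agent costs as much as the first.
total_from_scratch = baseline * len(build_weeks)
print(f"with reuse: {total_with_reuse} weeks, from scratch: {total_from_scratch} weeks")
```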

This acceleration curve is the strongest argument for building agents in-house rather than buying point solutions. Each vendor-purchased agent is an isolated system with its own integration requirements. Each in-house agent adds to your shared infrastructure and reduces the cost of the next one.

Your Healthcare Dev Team Can Build This

The skills required to build healthcare agents overlap significantly with the skills of an experienced healthcare development team:

FHIR expertise. If your team has built FHIR integrations, they already understand the data model, the API patterns, and the real-world quirks. This is arguably the hardest part to hire for — and you already have it.

Workflow engineering. Healthcare developers who have built claims processing, scheduling systems, or clinical workflows understand the domain logic that agents need. They know the edge cases, the payer-specific rules, and the compliance requirements.

API integration experience. Building agents requires calling LLM APIs, which is fundamentally the same skill as calling any other API. If your team can integrate with a payer API, they can integrate with Claude or GPT-4.

Prompt engineering. This is the genuinely new skill, but it is learnable in weeks, not months. Good prompt engineering for healthcare agents is mostly about clearly expressing domain knowledge in structured text. It is closer to writing a detailed specification than to writing code.

What your team probably does not need:

- ML engineers. You are not training models. You are calling APIs. If you later want to fine-tune models for specific tasks, that is an optimization, not a prerequisite.

- GPU infrastructure. LLM inference happens in the cloud via API calls. Your infrastructure requirements are the same as any other API-heavy application: web servers, databases, message queues.

- Data scientists. You do not need statistical modeling or feature engineering. The LLM handles the reasoning. You need domain experts who can evaluate whether the reasoning is correct.

- PhD researchers. Agent building is engineering, not research. The research was done by Anthropic, OpenAI, and others. You are applying their work to your domain.

The one role worth adding is a prompt engineer with healthcare domain knowledge — someone who can translate clinical and operational workflows into structured prompts. In practice, this is often an existing team member who develops the skill, not a new hire.

Common Objections and Why They Are Wrong

"LLMs hallucinate. We cannot use them in healthcare." LLMs hallucinate when given open-ended tasks with insufficient context. Agents use LLMs for narrow, well-defined tasks with rich structured context. The agent is not asking the LLM to generate medical knowledge — it is asking the LLM to match a patient's data against specific payer rules, with those rules provided in the prompt. The hallucination rate in structured, context-rich tasks is orders of magnitude lower than in open-ended generation. And the confidence scoring and HITL routing catch the remaining errors.

"We tried a chatbot and it did not work." Chatbots and agents are fundamentally different architectures. A chatbot is a single LLM call with user input. An agent is a multi-step workflow with structured data, defined actions, and quality controls. The failure of a chatbot says nothing about the viability of an agent, the same way the failure of a spreadsheet to manage a hospital does not indict database software.

"The LLM vendors will build healthcare-specific agents and we will just buy them." LLM vendors do not have your domain knowledge, your customer relationships, or your data. They will build generic healthcare tools that handle the easy cases. The value — and the competitive advantage — is in handling your specific workflows with your specific payer relationships and clinical contexts. That requires domain knowledge that is built over years, not purchased off the shelf.

"Our compliance team will never approve an AI making decisions." Your compliance team will approve a system that has better audit trails, more consistent rule application, and more granular access controls than your current manual processes. Frame it as an improvement to compliance, not a risk to it. The HITL routing and compliance logging components are specifically designed to exceed the audit requirements that human-only processes struggle to meet.

The Bottom Line

Building healthcare AI agents is a solvable engineering problem. The architecture is a set of well-defined components — FHIR connector, LLM orchestrator, HITL router, compliance logger, and external API adapter — that you build once and reuse across every agent.

You do not need to hire ML engineers, build GPU infrastructure, or train custom models. You need your existing healthcare development team, a structured approach to prompt engineering, and the domain knowledge you have already spent years accumulating.

The first agent takes three weeks. The fourth takes one to two. By the time you have built a handful, you have an agent development capability that is genuinely difficult for competitors to replicate. The reason is not that the technology is proprietary; it is that the domain knowledge embedded in your orchestration layer, prompt templates, and adapter library represents years of healthcare expertise translated into executable workflows.

Start with the workflow that causes the most operational pain. Build the five components. Deploy with conservative HITL thresholds. Let the confidence data guide your automation rates upward. And when your board asks about your AI strategy, show them the architecture — not a research roadmap, but a production system built by your existing team.
