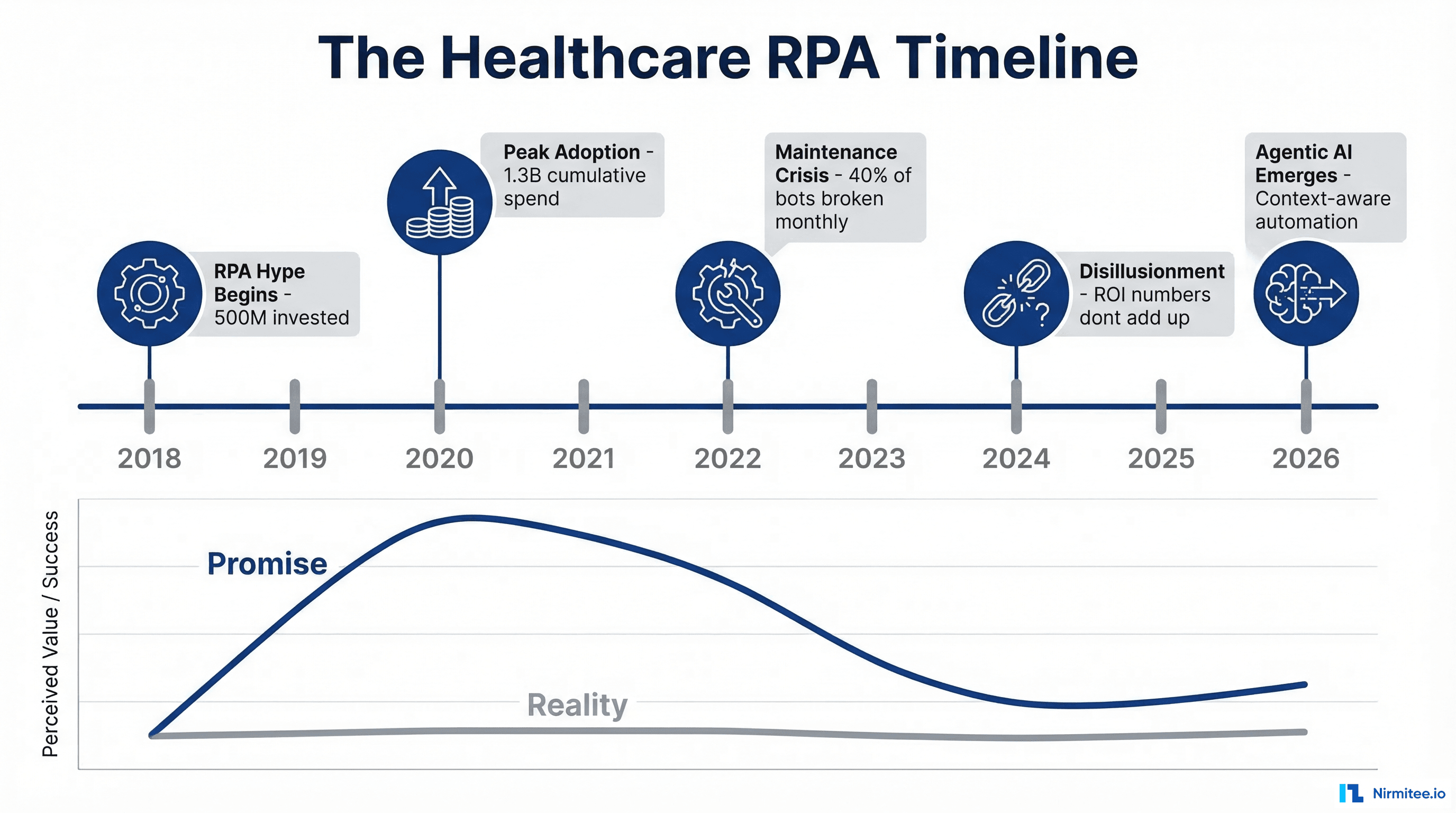

Healthcare organizations spent over 1.3 billion dollars on RPA between 2018 and 2023. The promise was irresistible: automate repetitive tasks, reduce errors, cut costs. The reality was different. Industry analysts report that 30-50% of healthcare RPA implementations are abandoned within 18 months, and the ones that survive require constant maintenance that erodes their ROI.

Now agentic AI is making the same promises. But this time, the technology is fundamentally different — and understanding exactly why RPA failed is the key to getting agentic AI right.

Why Healthcare Killed RPA

RPA was built for a world of stable interfaces, predictable inputs, and unchanging rules. Healthcare is none of these things.

Problem 1: Brittle Screen Scraping

Most healthcare RPA bots work by recording mouse clicks and keystrokes on payer portals, EHR screens, and web applications. When a vendor updates its UI (which happens constantly), the bot breaks. A button moves 20 pixels to the left, and your entire eligibility verification automation goes down.

Healthcare IT teams reported spending 40-60% of their RPA budget on maintenance — fixing bots that broke because a portal changed its layout, added a CAPTCHA, or modified its login flow.

Problem 2: No Context Awareness

RPA bots follow scripts. They do not understand what they are doing. If a patient's name is "Robert Smith" in the EHR but "Bob Smith" on the insurance card, the bot cannot make the connection. A human would instantly recognize this as the same person. An RPA bot flags it as a mismatch and stops.

Healthcare data is inherently messy. Names are misspelled, dates are formatted differently across systems, insurance IDs have variable formats, and addresses change. Every one of these variations is an edge case that requires a new rule in the RPA script.
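To make the rule explosion concrete, here is a minimal Python sketch of the rule-based approach: a date normalizer that only understands the formats someone listed in advance. The format list is hypothetical. Any format not on the list fails, so every new variation means writing another rule.

```python
from datetime import date, datetime
from typing import Optional

# Hypothetical rule set: each entry is one date format someone had to anticipate.
KNOWN_DATE_FORMATS = ["%Y-%m-%d", "%m/%d/%Y", "%Y%m%d"]


def normalize_date(raw: str) -> Optional[date]:
    """Return a date if `raw` matches a known format, else None (the bot halts)."""
    for fmt in KNOWN_DATE_FORMATS:
        try:
            return datetime.strptime(raw.strip(), fmt).date()
        except ValueError:
            continue  # this rule did not match; try the next one
    return None  # no rule matched: an edge case that needs a new rule


print(normalize_date("1984-03-07"))  # 1984-03-07
print(normalize_date("03/07/1984"))  # 1984-03-07
print(normalize_date("7 Mar 1984"))  # None: nobody wrote a rule for this format
```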

Problem 3: Exception Handling Is the Job

In most industries, roughly 80% of transactions follow the happy path and 20% are exceptions. In healthcare, the balance tilts heavily toward exceptions. Payer-specific rules, state regulations, plan variations, clinical exceptions, and patient-specific circumstances mean that the "standard" path might apply to only 40-50% of cases.

RPA bots handle the easy 40-50% and route everything else to humans. But the easy cases were not where the bottleneck was — they were already fast. The value was in automating the complex cases, which RPA cannot do.
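The bottleneck argument can be checked with simple arithmetic. The Python sketch below computes what share of total staff time disappears when only the simple cases are automated. The inputs in the usage line are hypothetical placeholders, not measured data; substitute your own case mix and handling times.

```python
def share_of_time_saved(
    simple_share: float, simple_minutes: float, complex_minutes: float
) -> float:
    """Fraction of total handling time removed by automating only simple cases.

    simple_share: fraction of cases on the standard path (e.g. 0.45)
    simple_minutes / complex_minutes: average handling time per case type
    """
    complex_share = 1.0 - simple_share
    total = simple_share * simple_minutes + complex_share * complex_minutes
    return (simple_share * simple_minutes) / total


# Hypothetical inputs: 45% simple cases at 2 min each, 55% complex at 15 min each.
saved = share_of_time_saved(0.45, 2.0, 15.0)
print(f"{saved:.1%} of staff time saved")  # 9.8% of staff time saved
```

Under these assumed numbers, automating nearly half the cases removes less than a tenth of the work. That is the pattern the paragraph describes.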

Problem 4: Compliance Theater

RPA bots do what they are told. They do not understand the HIPAA minimum necessary standard, they cannot log their reasoning (because they have none), and they access every field on the screen whether they need it or not. Audit trails for RPA bots show the actions taken but not why, which turns compliance reviews into an exercise in reconstruction rather than verification.
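By contrast, an agent can approximate minimum necessary access by reading only the fields a task needs and recording which ones it read. The minimal Python sketch below assumes a hypothetical per-task allowlist. The task and field names are illustrative and do not come from any standard.

```python
from typing import Any

# Hypothetical allowlist: the fields each task is permitted to read.
TASK_FIELDS: dict[str, frozenset[str]] = {
    "eligibility_check": frozenset(
        {"member_id", "first_name", "last_name", "birth_date", "payer_id"}
    ),
}


def minimum_necessary(
    task: str, record: dict[str, Any]
) -> tuple[dict[str, Any], list[str]]:
    """Return only the allowed fields for `task`, plus the field names read (for the audit log)."""
    allowed = TASK_FIELDS[task]  # unknown task raises KeyError: deny by default
    view = {k: v for k, v in record.items() if k in allowed}
    return view, sorted(view)


patient = {
    "member_id": "ABC123", "first_name": "Robert", "last_name": "Smith",
    "birth_date": "1984-03-07", "payer_id": "P01",
    "clinical_notes": "(not needed for eligibility)", "ssn": "xxx-xx-1234",
}
view, accessed = minimum_necessary("eligibility_check", patient)
print(accessed)  # ['birth_date', 'first_name', 'last_name', 'member_id', 'payer_id']
```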

What Makes Agentic AI Fundamentally Different

Agentic AI is not RPA with an LLM attached. The architecture is entirely different.

API-First, Not Screen-First

Agentic AI uses APIs, not screen scraping. The agent talks to payers through structured interfaces (for example, X12 270 eligibility inquiries and 271 responses), reads FHIR resources, and writes structured data back to the EHR. When a payer updates its portal UI, the agent does not notice, because it never looks at the UI.
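The Python sketch below shows what reading structured data instead of a screen looks like. It builds a FHIR R4 search for a patient's `Coverage` resources and pulls a few fields out of the returned searchset Bundle. It is a minimal example under stated assumptions: the base URL, patient ID, and sample response are hypothetical, and the HTTP call, authentication, paging, and the X12 side are all omitted.

```python
import json
from urllib.parse import urlencode

FHIR_BASE = "https://fhir.example.org/r4"  # hypothetical FHIR server


def coverage_search_url(patient_id: str) -> str:
    """FHIR R4 search URL for a patient's active Coverage resources."""
    query = urlencode({"patient": patient_id, "status": "active"})
    return f"{FHIR_BASE}/Coverage?{query}"


def summarize_coverage(bundle: dict) -> list[dict]:
    """Extract payer, subscriber ID, and coverage period from a searchset Bundle."""
    summaries = []
    for entry in bundle.get("entry", []):
        res = entry.get("resource", {})
        if res.get("resourceType") != "Coverage":
            continue  # skip OperationOutcome or other included resources
        summaries.append({
            "payer": [p.get("display", p.get("reference")) for p in res.get("payor", [])],
            "subscriber_id": res.get("subscriberId"),
            "period": res.get("period", {}),
        })
    return summaries


# Hypothetical response body, trimmed to the fields used above.
sample = json.loads("""{
  "resourceType": "Bundle", "type": "searchset",
  "entry": [{"resource": {
    "resourceType": "Coverage", "status": "active", "subscriberId": "ABC123",
    "payor": [{"reference": "Organization/payer-1", "display": "Example Health Plan"}],
    "period": {"start": "2024-01-01"}
  }}]
}""")
print(coverage_search_url("123"))
print(summarize_coverage(sample))
```

A portal redesign cannot break this code, because it depends only on the resource structure defined by the FHIR specification.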

When APIs are not available (some legacy payers still require portal access), the agent uses the LLM to understand the page semantically, not by memorizing button positions. If a button moves, the agent reads the page and finds it by understanding what the button does, not where it was.

Reasoning, Not Rules

An LLM-powered agent reasons about its task. When it encounters "Bob Smith" vs "Robert Smith," it considers context: same date of birth, same address, same insurance ID, so this is the same person. It does not need a rule for every name variation. It reasons from context, the way a human would, but faster and more consistently.

This is not artificial general intelligence. It is pattern matching with context awareness, applied to well-defined healthcare workflows. The agent knows what it is trying to accomplish, understands the data it is working with, and can reason about edge cases within its domain.
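In a real agent, this judgment comes from the LLM. The underlying idea, weighing several identifiers together instead of demanding an exact name match, can still be shown with a deterministic Python stand-in. This is a swapped-in technique, not how an LLM reasons internally. The nickname table, field names, and weights are hypothetical and far smaller than anything production-grade.

```python
# Hypothetical nickname table; a real one would be much larger.
NICKNAMES = {"bob": "robert", "rob": "robert", "bill": "william", "liz": "elizabeth"}

# Hypothetical weights: strong identifiers count for more than names.
WEIGHTS = {"birth_date": 0.35, "member_id": 0.35, "address": 0.15, "name": 0.15}


def canonical_first(name: str) -> str:
    """Lower-case first name, mapped through the nickname table."""
    first = name.split()[0].lower()
    return NICKNAMES.get(first, first)


def same_person_score(a: dict, b: dict) -> float:
    """Weighted agreement across identifiers, in [0, 1]."""
    checks = {
        "birth_date": a["birth_date"] == b["birth_date"],
        "member_id": a["member_id"].upper() == b["member_id"].upper(),
        "address": a["address"].lower() == b["address"].lower(),
        "name": canonical_first(a["name"]) == canonical_first(b["name"])
        and a["name"].split()[-1].lower() == b["name"].split()[-1].lower(),
    }
    return sum(WEIGHTS[k] for k, ok in checks.items() if ok)


ehr = {"name": "Robert Smith", "birth_date": "1984-03-07", "member_id": "ABC123", "address": "12 Elm St"}
card = {"name": "Bob Smith", "birth_date": "1984-03-07", "member_id": "abc123", "address": "12 elm st"}
print(ehr["name"] == card["name"])             # False: the exact-match rule fails
print(round(same_person_score(ehr, card), 2))  # 1.0: every identifier agrees
```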

Graceful Degradation, Not Binary Failure

When an RPA bot encounters something it does not recognize, it crashes or produces wrong results silently. An agentic AI system assigns a confidence score to every decision:

- High confidence (above 95%) — Proceed autonomously, log the decision

- Medium confidence (80-95%) — Proceed but flag for quick human review

- Low confidence (below 80%) — Route to human with full context and suggested action
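The three-tier policy above maps directly to code. The minimal Python sketch below uses the thresholds from the list. The `Route` names are hypothetical. A score of exactly 95% is treated as medium, because the list reserves autonomous action for scores "above 95%".

```python
from enum import Enum


class Route(Enum):
    AUTONOMOUS = "proceed and log"
    FLAG_FOR_REVIEW = "proceed, flag for quick human review"
    ESCALATE = "route to human with context and suggested action"


def route_decision(confidence: float) -> Route:
    """Map a confidence score in [0, 1] to its handling tier."""
    if not 0.0 <= confidence <= 1.0:
        raise ValueError(f"confidence must be in [0, 1], got {confidence}")
    if confidence > 0.95:
        return Route.AUTONOMOUS
    if confidence >= 0.80:
        return Route.FLAG_FOR_REVIEW
    return Route.ESCALATE


for c in (0.99, 0.95, 0.85, 0.62):
    print(c, route_decision(c).name)
```

Keeping the thresholds in a single function makes them easy to tune per workflow and easy for an auditor to read.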

This means the agent handles what it can and escalates what it cannot, instead of silently producing bad results, provided its confidence scores are well calibrated. Over time, as human overrides are captured, that calibration improves.
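Captured overrides are what make calibration measurable. The minimal Python sketch below assumes each logged decision records the agent's confidence and whether a human later overrode it. The record shape and bucket edges are hypothetical. For a well-calibrated agent, the human acceptance rate in each bucket should roughly match the confidence that bucket claims.

```python
from collections import defaultdict


def acceptance_by_bucket(decisions, edges=(0.80, 0.95)):
    """Group (confidence, overridden) pairs by tier; return the human acceptance rate per tier."""
    counts = defaultdict(lambda: [0, 0])  # bucket -> [accepted, total]
    for confidence, overridden in decisions:
        if confidence > edges[1]:
            bucket = "high"
        elif confidence >= edges[0]:
            bucket = "medium"
        else:
            bucket = "low"
        counts[bucket][1] += 1
        if not overridden:
            counts[bucket][0] += 1
    return {b: accepted / total for b, (accepted, total) in counts.items()}


# Hypothetical log: (agent confidence, was the decision overridden by a human?)
log = [(0.98, False), (0.97, False), (0.99, True), (0.96, False),
       (0.90, False), (0.85, True), (0.70, True), (0.60, False)]
print(acceptance_by_bucket(log))  # {'high': 0.75, 'medium': 0.5, 'low': 0.5}
```

In this made-up log, the "high" bucket is accepted only 75% of the time, far below the 95%+ it claims. A gap like that would signal that the scores or thresholds need recalibrating.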

Self-Documenting Compliance

Every agent decision includes a reasoning chain: what data was accessed, why, what decision was made, and at what confidence level. This is not an afterthought logging system — it is built into the agent architecture. HIPAA auditors can see exactly what happened and why, for every single transaction.

Compare this to RPA audit trails that show "Bot clicked button at coordinates (435, 287)" — technically accurate but meaningless for compliance purposes.
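The minimal Python sketch below shows what such a decision record might contain. It follows the elements named above: the data accessed, why it was accessed, the decision, the confidence, and the reasoning. The field names and the JSON-lines format are assumptions, not a HIPAA-mandated schema.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone


@dataclass(frozen=True)
class AuditRecord:
    transaction_id: str
    task: str
    fields_accessed: list[str]  # what data was read
    purpose: str                # why it was needed
    decision: str               # what the agent decided
    confidence: float           # how sure it was
    reasoning: str              # short explanation of the decision
    timestamp: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )

    def to_json_line(self) -> str:
        """Serialize as one JSON line for an append-only audit log."""
        return json.dumps(asdict(self), sort_keys=True)


record = AuditRecord(
    transaction_id="txn-0001",
    task="eligibility_check",
    fields_accessed=["member_id", "birth_date", "last_name"],
    purpose="Verify active coverage before scheduled visit",
    decision="coverage_active",
    confidence=0.97,
    reasoning="271 response shows active plan; member ID and DOB match EHR",
)
print(record.to_json_line())
```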

The Migration Path: From RPA to Agents

If you have existing RPA implementations, you do not need to rip them out all at once. The migration path is pragmatic:

Phase 1: Identify your highest-maintenance bots. These are the ones your team spends the most time fixing. They break because the underlying workflows are complex and variable — exactly where agents excel.

Phase 2: Run agents in parallel. Deploy the agent alongside the existing RPA bot for the same workflow and compare results. If the agent handles the edge cases the bot cannot, you will have the data to prove it.
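A parallel run is only useful if the comparison is systematic. The minimal Python sketch below assumes that for each case you have the RPA outcome (`None` when the bot stopped), the agent outcome (`None` when it escalated), and a human-verified result. All names here are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class ShadowCase:
    case_id: str
    rpa_result: Optional[str]    # None = bot stopped or routed to a human
    agent_result: Optional[str]  # None = agent escalated
    verified: str                # ground truth from human review


def compare(cases: list[ShadowCase]) -> dict[str, float]:
    """Accuracy of each system, plus the share of cases only the agent resolved."""
    if not cases:
        raise ValueError("no cases to compare")
    n = len(cases)
    # A None result never equals `verified`, so stops and escalations count as not correct.
    rpa_correct = sum(c.rpa_result == c.verified for c in cases)
    agent_correct = sum(c.agent_result == c.verified for c in cases)
    agent_only = sum(c.rpa_result is None and c.agent_result == c.verified for c in cases)
    return {
        "rpa_accuracy": rpa_correct / n,
        "agent_accuracy": agent_correct / n,
        "agent_only_share": agent_only / n,
    }


cases = [
    ShadowCase("1", "eligible", "eligible", "eligible"),
    ShadowCase("2", None, "eligible", "eligible"),
    ShadowCase("3", None, "not_eligible", "not_eligible"),
    ShadowCase("4", "eligible", "not_eligible", "eligible"),
]
print(compare(cases))
```

Tracking `agent_only_share` separately shows whether the agent is actually absorbing the exceptions the bot hands off, not just matching it on easy cases. Case 4 is a reminder that the agent can also be wrong where the bot was right.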

Phase 3: Retire bots progressively. Once the agent demonstrates consistent accuracy (typically 95%+ within the first month), retire the RPA bot. Redirect the maintenance budget to agent development.
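"Consistent accuracy" is easier to act on once it is written down as a rule. The minimal Python sketch below implements a retirement gate over weekly accuracy figures from the parallel run. The 95% threshold comes from the paragraph above. The four-week window is a hypothetical choice.

```python
def ready_to_retire(
    weekly_accuracy: list[float], threshold: float = 0.95, weeks: int = 4
) -> bool:
    """True if the agent met `threshold` in each of the most recent `weeks` weeks."""
    if len(weekly_accuracy) < weeks:
        return False  # not enough history yet
    return all(acc >= threshold for acc in weekly_accuracy[-weeks:])


print(ready_to_retire([0.91, 0.96, 0.97, 0.95, 0.98]))  # True: last four weeks all >= 95%
print(ready_to_retire([0.97, 0.98, 0.93, 0.99]))        # False: one week dipped
```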

Phase 4: Expand. Each retired bot frees up maintenance resources that fund the next agent. The compounding effect means your third and fourth agent deployments are faster and cheaper than the first.

Why This Time Is Different

Healthy skepticism is warranted. Every automation wave has made big promises. Here is what is structurally different about agentic AI:

- LLMs are commodity infrastructure. You are not training models; you are calling APIs. Claude, GPT-4, and their successors are available on demand, and the cost per token has been falling steadily.

- FHIR standardization. Healthcare data interoperability is finally real. FHIR R4 adoption has crossed the tipping point, giving agents standardized data to work with instead of screen scraping custom UIs.

- The orchestration pattern is proven. The agent architecture — LLM for reasoning, APIs for action, confidence scoring for safety, audit logging for compliance — is a software engineering problem, not a research problem.

- Healthcare domain knowledge is the bottleneck, not the AI. The organizations that succeed will be the ones that combine LLM capability with deep understanding of payer workflows, clinical data structures, and compliance requirements. This favors domain experts over AI companies.
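The orchestration pattern in the list above (LLM for reasoning, APIs for action, confidence scoring for safety, audit logging for compliance) can be sketched in a few dozen lines of Python. In this minimal sketch, `reason` stands in for an LLM call and `act` for a payer or EHR API client. Both are hypothetical stubs, as are the record fields. For brevity, the three confidence tiers are collapsed into act-or-escalate. What matters is the shape: reasoning proposes an action with a confidence, the confidence decides whether to act, and every step is logged.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Proposal:
    action: str
    confidence: float
    rationale: str


def run_task(
    task: dict,
    reason: Callable[[dict], Proposal],  # stand-in for an LLM call
    act: Callable[[str, dict], str],     # stand-in for a payer/EHR API client
    audit_log: list[dict],
    autonomous_threshold: float = 0.95,
) -> str:
    """Reason, then act or escalate based on confidence, logging either way."""
    proposal = reason(task)
    entry = {"task": task["id"], "action": proposal.action,
             "confidence": proposal.confidence, "rationale": proposal.rationale}
    if proposal.confidence > autonomous_threshold:
        entry["outcome"] = act(proposal.action, task)
    else:
        entry["outcome"] = "escalated_to_human"
    audit_log.append(entry)  # logged whether the agent acted or escalated
    return entry["outcome"]


def fake_reason(task: dict) -> Proposal:
    # Hypothetical stub: "confident" only when the task data is complete.
    conf = 0.97 if task["complete"] else 0.60
    return Proposal("submit_eligibility_inquiry", conf, "demo rationale")


def fake_act(action: str, task: dict) -> str:
    # Hypothetical stub for an API call that always succeeds.
    return f"{action}:ok"


log: list[dict] = []
print(run_task({"id": "a", "complete": True}, fake_reason, fake_act, log))   # submit_eligibility_inquiry:ok
print(run_task({"id": "b", "complete": False}, fake_reason, fake_act, log))  # escalated_to_human
```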

The Bottom Line

RPA failed healthcare because it was a rigid tool applied to a dynamic environment. It memorized steps instead of understanding goals. It followed rules instead of reasoning about context. And it broke whenever the world changed — which in healthcare is constantly.

Agentic AI solves these specific failure modes. Not because it is smarter in some abstract sense, but because the architecture is designed for exactly the challenges that killed RPA: variable inputs, changing interfaces, complex exceptions, and strict compliance requirements.

The question is not whether agentic AI will replace RPA in healthcare; that shift is already under way. The question is whether you will be among the early movers who capture the efficiency gains, or the late adopters who pay premium prices for catch-up implementations.

From prior auth to clinical documentation, our Agentic AI for Healthcare practice builds agents that automate real healthcare workflows. We also offer specialized Healthcare AI Solutions services. Talk to our team to get started.