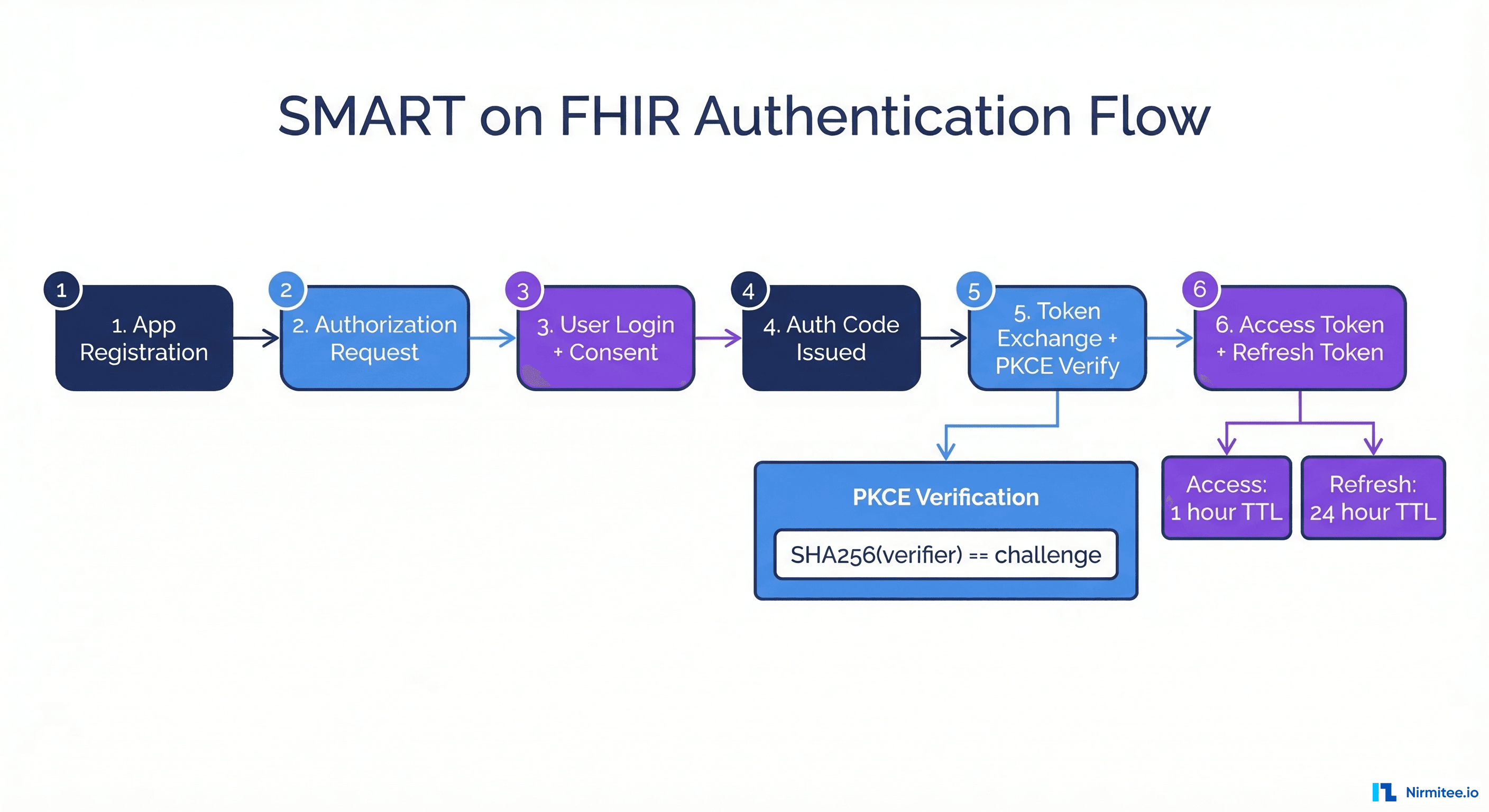

SMART on FHIR is how third-party apps access EHR data securely. It's OAuth 2.0 with healthcare-specific extensions — scopes tied to FHIR resources, launch context for patient/encounter selection, and PKCE for public clients. The spec is clear. The implementation is where things break.

We built a complete SMART on FHIR authorization server as part of our FHIR R4 platform — standalone mode, no external IdP dependency. We ran it through Inferno's SMART Standalone Patient App test suite and got 47 out of 51 tests passing (the 4 failures are TLS-related, expected in HTTP dev mode).

Along the way, we hit six specific failure modes that the spec doesn't warn you about. Each one cost us hours of debugging. Here's what broke, why it broke, and the exact code patterns that fixed it.

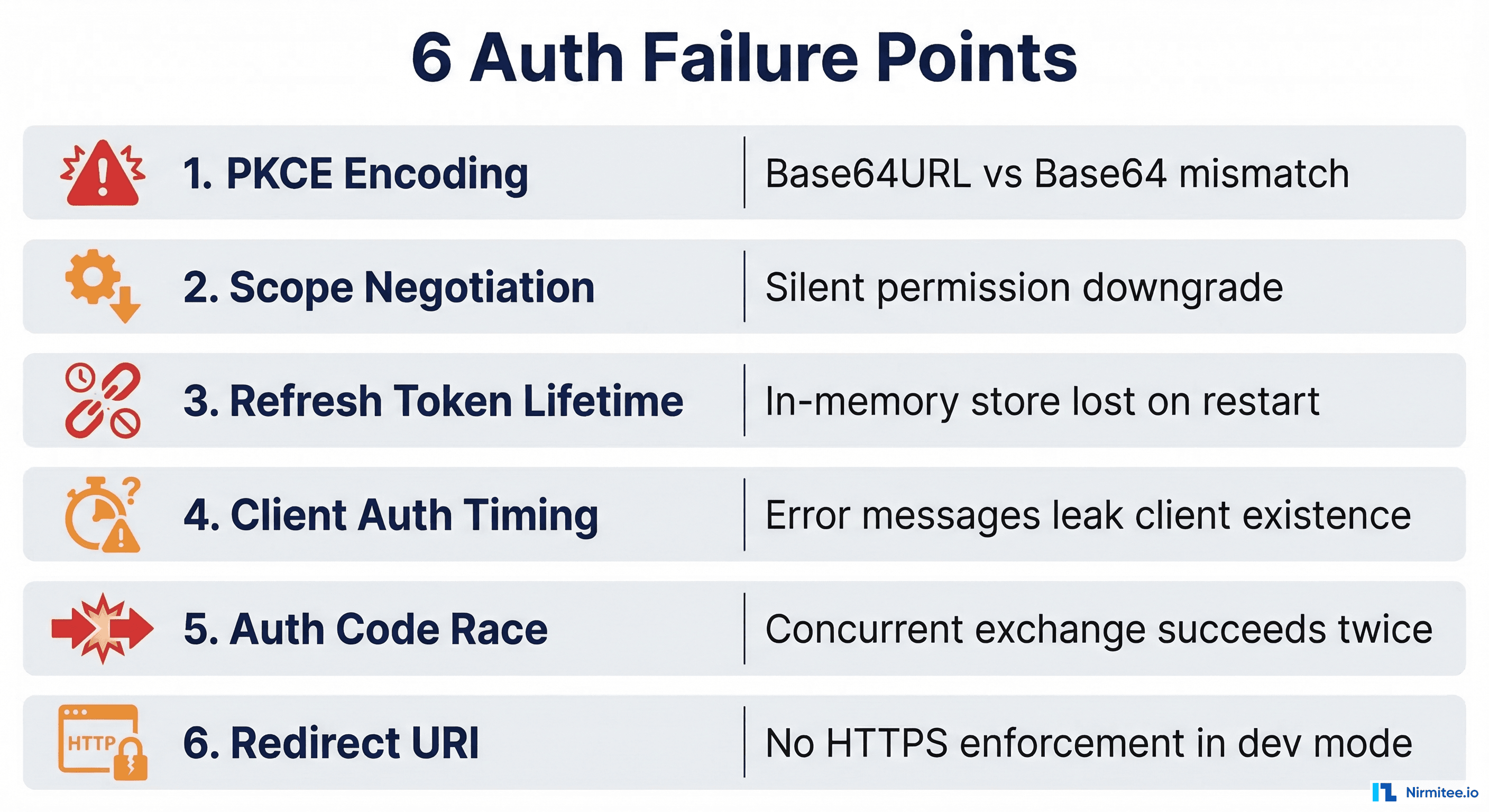

The 6 Failure Points at a Glance

Before we dive into each one, here's what we're dealing with. These aren't theoretical vulnerabilities from a security audit. They're bugs we shipped, caught in testing, and fixed.

PKCE Encoding — Base64URL vs Base64 Will Ruin Your Week

PKCE (Proof Key for Code Exchange) is mandatory for public clients in SMART on FHIR. The flow: client generates a random verifier, hashes it with SHA256, Base64URL-encodes the hash as the challenge, sends the challenge during authorization, then proves possession by sending the verifier during token exchange.

Simple enough. Except Base64URL encoding is not Base64 encoding.

Standard Base64 uses +, /, and = padding. Base64URL uses - and _ in place of + and /, and PKCE requires it without padding. If your server uses one variant and your client uses the other, PKCE verification fails with no useful diagnostic. No error message tells you it's an encoding mismatch; you just get "invalid_grant" and spend three hours staring at hashes.
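To make the mismatch concrete, here is a minimal client-side sketch of the S256 flow. The function names (newVerifier, challengeFor, paddedChallenge) are illustrative, not from our codebase; the verifier and challenge used in main are the sample values from RFC 7636 Appendix B.

```go
package main

import (
	"crypto/rand"
	"crypto/sha256"
	"encoding/base64"
	"fmt"
)

// newVerifier returns 32 random bytes encoded as 43 URL-safe characters.
func newVerifier() (string, error) {
	buf := make([]byte, 32)
	if _, err := rand.Read(buf); err != nil {
		return "", err
	}
	return base64.RawURLEncoding.EncodeToString(buf), nil
}

// challengeFor derives the S256 code_challenge: unpadded Base64URL of SHA-256(verifier).
func challengeFor(verifier string) string {
	sum := sha256.Sum256([]byte(verifier))
	return base64.RawURLEncoding.EncodeToString(sum[:])
}

// paddedChallenge is the wrong variant: same hash, URL-safe alphabet, but "=" padded.
func paddedChallenge(verifier string) string {
	sum := sha256.Sum256([]byte(verifier))
	return base64.URLEncoding.EncodeToString(sum[:])
}

func main() {
	v := "dBjftJeZ4CVP-mB92K27uhbUJU1p1r_wW1gFWFOEjXk" // RFC 7636 Appendix B verifier
	fmt.Println(challengeFor(v))    // E9Melhoa2OwvFrEMTJguCHaoeK1t8URWbuGJSstw-cM
	fmt.Println(paddedChallenge(v)) // same string with a trailing "="
}
```

A SHA-256 digest is 32 bytes, so the padded form always differs from the correct one by exactly one trailing = character. That single character is enough to fail the comparison.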

The Fix

    func verifyPKCE(verifier, challenge string) bool {
        hash := sha256.Sum256([]byte(verifier))
        // RawURLEncoding: no padding, URL-safe alphabet
        computed := base64.RawURLEncoding.EncodeToString(hash[:])
        return subtle.ConstantTimeCompare([]byte(computed), []byte(challenge)) == 1
    }

Three things matter here:

- base64.RawURLEncoding: not StdEncoding, not URLEncoding (which adds padding). The "Raw" prefix means no = padding.

- subtle.ConstantTimeCompare: timing-safe comparison prevents attackers from inferring the challenge byte-by-byte.

- Verifier length validation: RFC 7636 requires 43-128 characters. We validate this before hashing, not after.
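The length check is not shown above, so here is one way it could look. This is a sketch: validVerifier is a hypothetical helper name, and the character set is the unreserved set that RFC 7636 section 4.1 allows for code_verifier.

```go
package main

import "fmt"

// validVerifier reports whether v is 43 to 128 characters long and uses only
// A-Z, a-z, 0-9, "-", ".", "_" and "~" (RFC 7636 section 4.1).
func validVerifier(v string) bool {
	if len(v) < 43 || len(v) > 128 {
		return false
	}
	for i := 0; i < len(v); i++ {
		c := v[i]
		switch {
		case c >= 'A' && c <= 'Z', c >= 'a' && c <= 'z', c >= '0' && c <= '9':
			// letters and digits are allowed
		case c == '-', c == '.', c == '_', c == '~':
			// the four allowed punctuation characters
		default:
			return false
		}
	}
	return true
}

func main() {
	fmt.Println(validVerifier("dBjftJeZ4CVP-mB92K27uhbUJU1p1r_wW1gFWFOEjXk")) // true
	fmt.Println(validVerifier("too-short"))                                   // false
}
```

Running this before the hash means a malformed verifier is rejected with a specific reason instead of surfacing later as a generic hash mismatch.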

The Inferno test suite caught this immediately. Our first implementation used base64.URLEncoding (with padding). Every PKCE verification failed. The fix was changing one identifier, URLEncoding to RawURLEncoding, but finding it took hours because the error surface is "token exchange failed" with no indication that encoding is the problem.

What to Watch For

If you're using a library for PKCE, check which Base64 variant it uses. Python's base64.urlsafe_b64encode adds padding, so you need to .rstrip(b'='). Node's Buffer.toString('base64url') does the right thing but only exists from Node 15.7 onward (backported to 14.18). Java's Base64.getUrlEncoder().withoutPadding() is correct but Base64.getUrlEncoder() alone is not.

Scope Negotiation — Your Server Is Silently Granting Less Than You Think

SMART scopes follow the pattern context/resource.operation. A client might request patient/Patient.read patient/Observation.read launch/patient offline_access openid fhirUser. The server is supposed to negotiate — grant the intersection of what was requested and what the client is registered for.
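As an illustration of that pattern, here is a minimal parser sketch. It assumes the v1-style scope syntax used in this post (.read, .write, .*) and a hand-written list of non-resource scopes; parseScope and looksLikeSMARTScope are hypothetical names, not the validator from our server.

```go
package main

import (
	"fmt"
	"regexp"
)

// resourceScope matches context/resource.operation, e.g. patient/Observation.read.
var resourceScope = regexp.MustCompile(`^(patient|user|system)/(\*|[A-Z][A-Za-z]*)\.(read|write|\*)$`)

// plainScopes are scopes without the context/resource.operation shape (assumed list).
var plainScopes = map[string]bool{
	"openid": true, "fhirUser": true, "profile": true,
	"launch": true, "launch/patient": true, "launch/encounter": true,
	"offline_access": true, "online_access": true,
}

// parseScope splits a resource scope into its three parts.
func parseScope(s string) (context, resource, operation string, ok bool) {
	m := resourceScope.FindStringSubmatch(s)
	if m == nil {
		return "", "", "", false
	}
	return m[1], m[2], m[3], true
}

// looksLikeSMARTScope accepts either a plain scope or a resource scope.
func looksLikeSMARTScope(s string) bool {
	return plainScopes[s] || resourceScope.MatchString(s)
}

func main() {
	ctx, res, op, ok := parseScope("patient/Observation.read")
	fmt.Println(ctx, res, op, ok)                            // patient Observation read true
	fmt.Println(looksLikeSMARTScope("patient/Patient.delete")) // false
}
```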

The problem: most implementations do this silently. The client asks for 6 scopes, gets back 3, and doesn't notice until a specific API call fails 20 minutes into the session.

How Silent Downgrade Happens

    func negotiateScopes(requested, allowed string) (string, error) {
        requestedScopes := strings.Fields(requested)
        allowedSet := toSet(strings.Fields(allowed))
        var negotiated []string
        for _, s := range requestedScopes {
            if !isValidSMARTScope(s) {
                return "", fmt.Errorf("invalid scope: %s", s)
            }
            if allowedSet[s] {
                negotiated = append(negotiated, s)
            }
            // Scope not in allowed set? Silently dropped. No log. No warning.
        }
        if len(negotiated) == 0 {
            return "", fmt.Errorf("no requested scopes are allowed")
        }
        return strings.Join(negotiated, " "), nil
    }

RFC 6749 requires the server to return the granted scopes in the token response whenever they differ from what was requested. We do. But most client libraries don't check whether the granted scopes match the requested scopes. They assume they got what they asked for.
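On the client side, the check is a few lines. The sketch below assumes a standard OAuth token response with a space-separated scope field; missingScopes and the tokenResponse struct are illustrative names.

```go
package main

import (
	"encoding/json"
	"fmt"
	"strings"
)

// tokenResponse holds the fields of the token response this check needs.
type tokenResponse struct {
	AccessToken  string `json:"access_token"`
	Scope        string `json:"scope"`
	RefreshToken string `json:"refresh_token"`
}

// missingScopes returns every requested scope absent from the granted set.
func missingScopes(requested string, body []byte) ([]string, error) {
	var tr tokenResponse
	if err := json.Unmarshal(body, &tr); err != nil {
		return nil, fmt.Errorf("decode token response: %w", err)
	}
	granted := make(map[string]bool)
	for _, s := range strings.Fields(tr.Scope) {
		granted[s] = true
	}
	var missing []string
	for _, s := range strings.Fields(requested) {
		if !granted[s] {
			missing = append(missing, s)
		}
	}
	return missing, nil
}

func main() {
	body := []byte(`{"access_token":"abc","scope":"patient/Patient.read openid"}`)
	missing, err := missingScopes("patient/Patient.read openid offline_access", body)
	fmt.Println(missing, err) // [offline_access] <nil>
}
```

Failing fast here, right after the token exchange, turns a crash 20 minutes into the session into an error at login.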

The Dangerous Edge Cases

- offline_access silently dropped: Client expects refresh tokens, doesn't get one, session expires after 1 hour with no recovery path.

- openid silently dropped: Client expects an id_token, gets null, crashes on JWT parse.

- Wildcard scope mismatch: Client requests patient/*.read, registered for patient/Patient.read patient/Observation.read. Does the wildcard match the individual resources? Our implementation says no. Wildcards must be explicitly registered.

Our fix: log every scope that was requested but not granted, and include the granted scopes prominently in the token response. We also added a scope_warning field (non-standard) in dev mode that lists what was dropped.
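A sketch of what that logging version of negotiation might look like follows. It is not our production function: negotiate is a hypothetical name, scope validation is left out for brevity, and the log format is an assumption.

```go
package main

import (
	"fmt"
	"log"
	"strings"
)

// negotiate returns the granted scopes and, separately, every requested
// scope that was not granted, and logs the decision when anything is dropped.
func negotiate(requested, allowed string) (granted, dropped []string) {
	allowedSet := make(map[string]bool)
	for _, s := range strings.Fields(allowed) {
		allowedSet[s] = true
	}
	for _, s := range strings.Fields(requested) {
		if allowedSet[s] {
			granted = append(granted, s)
		} else {
			dropped = append(dropped, s)
		}
	}
	if len(dropped) > 0 {
		log.Printf("scope negotiation: granted=%q dropped=%q",
			strings.Join(granted, " "), strings.Join(dropped, " "))
	}
	return granted, dropped
}

func main() {
	granted, dropped := negotiate(
		"patient/*.read offline_access",
		"patient/Patient.read patient/Observation.read offline_access",
	)
	fmt.Println(granted) // [offline_access]
	fmt.Println(dropped) // [patient/*.read]
}
```

Because matching is exact, the wildcard request in main is dropped even though the client is registered for individual resources, which is the behavior described in the edge cases above. The dropped slice is what a dev-mode scope_warning field would carry.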

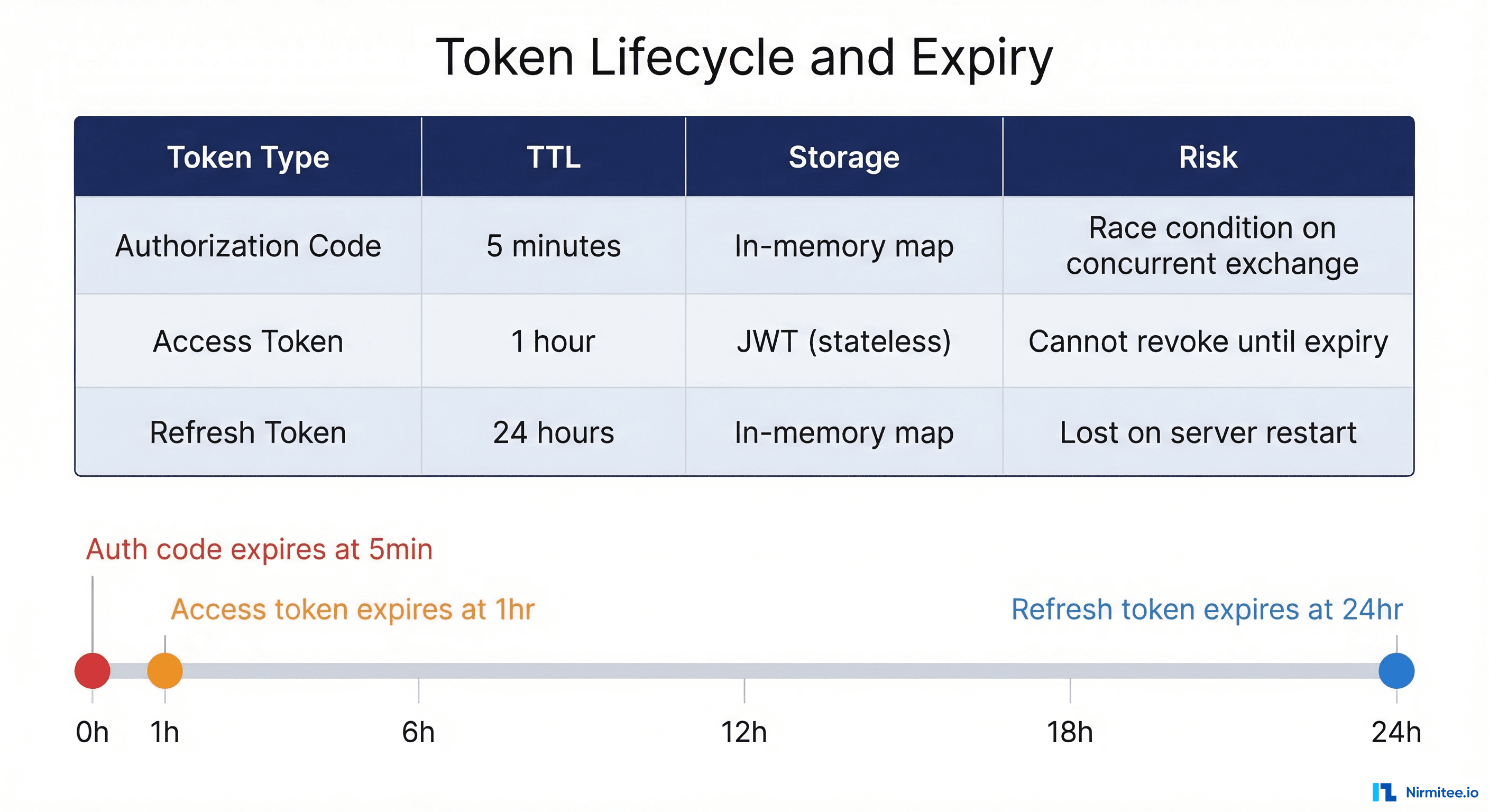

Refresh Token Lifetime — Your Tokens Won't Survive a Deploy

Refresh tokens are the most misunderstood part of SMART on FHIR. The spec says you can issue them when offline_access scope is granted. It doesn't say where to store them, how long they should live, or what happens when your server restarts.

Our initial implementation stored everything in memory. This works for development. For production, it's a disaster:

- Deploy = all sessions killed. Every active refresh token disappears. Every client needs to re-authenticate. If you deploy twice a day, your users re-login twice a day.

- Cleanup races with token exchange. The background cleanup runs every 5 minutes with a write lock. If a client tries to refresh a token at the exact moment cleanup is holding the lock, the request blocks.

- Token expiry vs. cleanup timing. A token expires at T=300s. Cleanup runs at T=300.001s at the earliest. Between those moments, the expired token is still in the map and, unless the exchange path checks expiry itself, technically exchangeable.
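The third problem goes away if expiry is checked at consume time instead of being left to the sweeper. The sketch below is an assumed in-memory design, not our original store: the clock is injected so the behavior can be demonstrated without sleeping, and all names are illustrative.

```go
package main

import (
	"fmt"
	"sync"
	"time"
)

type entry struct {
	patientID string
	expiresAt time.Time
}

// store is a single-use, in-memory store with an injectable clock.
type store struct {
	mu      sync.Mutex
	entries map[string]entry
	now     func() time.Time
}

func (s *store) put(key, patientID string, ttl time.Duration) {
	s.mu.Lock()
	defer s.mu.Unlock()
	s.entries[key] = entry{patientID: patientID, expiresAt: s.now().Add(ttl)}
}

// consume deletes the entry and rejects it if it has already expired,
// so correctness does not depend on when background cleanup last ran.
func (s *store) consume(key string) (string, bool) {
	s.mu.Lock()
	defer s.mu.Unlock()
	e, ok := s.entries[key]
	if !ok {
		return "", false
	}
	delete(s.entries, key)
	if !s.now().Before(e.expiresAt) {
		return "", false
	}
	return e.patientID, true
}

// demo consumes a fresh entry, replays it, then consumes an entry 1ms past expiry.
func demo() (freshOK, replayOK, expiredOK bool) {
	clock := time.Unix(1000, 0)
	s := &store{entries: map[string]entry{}, now: func() time.Time { return clock }}
	s.put("fresh", "patient-1", 300*time.Second)
	s.put("stale", "patient-2", 300*time.Second)
	_, freshOK = s.consume("fresh")
	_, replayOK = s.consume("fresh")
	clock = clock.Add(300*time.Second + time.Millisecond)
	_, expiredOK = s.consume("stale")
	return freshOK, replayOK, expiredOK
}

func main() {
	fmt.Println(demo()) // true false false
}
```

This does nothing for the first problem. A restart still wipes the map, which is why the store had to move out of process memory.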

The Production Fix

We built a PostgreSQL-backed store that uses atomic DELETE ... RETURNING for one-time-use guarantees:

    -- Atomic consume: reads and deletes in one operation
    DELETE FROM smart_launch_contexts
    WHERE code = $1 AND expires_at > NOW()
    RETURNING patient_id, encounter_id, scopes, client_id;

No race conditions. Survives restarts. Cleanup of expired rows runs as a scheduled job on the database side (PostgreSQL has no built-in scheduler, so this needs an extension such as pg_cron or an external cron job), not in application-level goroutines.

Client Auth Timing — Your Error Messages Are Leaking Information

Token exchange requires client authentication. Confidential clients send a client_id and client_secret. Public clients send only client_id with a PKCE verifier.

The security-critical detail: your error responses must not reveal whether a client_id exists.

The Information Leak

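An attacker can enumerate valid client IDs by observing whether they get "unknown client" or "invalid client_secret". This is a textbook enumeration oracle, and a difference in response time between the two paths leaks the same information through a timing side-channel. We shipped it in our first version.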

The Fix

Use the same error message for all authentication failures. When a client doesn't exist, still perform a dummy timing-safe comparison to equalize response time. The subtle.ConstantTimeCompare function protects the comparison itself, but the surrounding lookup logic must also be equalized.

    // Same error message regardless of failure mode
    client, exists := s.clients[req.ClientID]
    if !exists {
        // Equalize timing: still compare the supplied secret against a placeholder
        timingSafeEqual(req.ClientSecret, "placeholder-secret")
        return &OAuthError{Code: "invalid_client", Description: "authentication failed"}
    }
    if !client.IsPublic && !timingSafeEqual(req.ClientSecret, client.ClientSecret) {
        return &OAuthError{Code: "invalid_client", Description: "authentication failed"}
    }

HTTP Basic vs. Form-Encoded

Another gotcha: the spec allows client credentials via HTTP Basic auth or form-encoded body. Our implementation tries Basic first, then falls back to form. This means an attacker can detect which method was used by the server's response time. For maximum security, both paths should take identical time.
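One way to keep the two paths close to identical is to funnel both into the same comparison. The sketch below is an assumption about how that could be structured, not our handler: the registry, the helper names, and the placeholder secret are hypothetical, and both secrets are hashed with SHA-256 first so the constant-time comparison always runs over 32 bytes.

```go
package main

import (
	"crypto/sha256"
	"crypto/subtle"
	"errors"
	"fmt"
	"net/http"
	"net/url"
	"strings"
)

// errAuthFailed is the single error returned for every failure mode.
var errAuthFailed = errors.New("invalid_client: authentication failed")

// registered is a hypothetical confidential-client registry: client_id -> secret.
var registered = map[string]string{"app-1": "s3cret"}

// credentials reads the client credentials from HTTP Basic if present,
// otherwise from the form-encoded body.
func credentials(r *http.Request) (id, secret string) {
	if id, secret, ok := r.BasicAuth(); ok {
		return id, secret
	}
	return r.PostFormValue("client_id"), r.PostFormValue("client_secret")
}

// authenticate does the same hashing and comparison work whether or not
// the client exists, and returns one error for every failure.
func authenticate(r *http.Request) error {
	id, secret := credentials(r)
	want, known := registered[id]
	if !known {
		want = "placeholder-secret-for-unknown-clients"
	}
	got := sha256.Sum256([]byte(secret))
	exp := sha256.Sum256([]byte(want))
	match := subtle.ConstantTimeCompare(got[:], exp[:]) == 1
	if !known || !match {
		return errAuthFailed
	}
	return nil
}

// basicRequest and formRequest build sample token requests for the demo.
func basicRequest(id, secret string) *http.Request {
	r, _ := http.NewRequest(http.MethodPost, "/token", nil)
	r.SetBasicAuth(id, secret)
	return r
}

func formRequest(id, secret string) *http.Request {
	body := url.Values{"client_id": {id}, "client_secret": {secret}}.Encode()
	r, _ := http.NewRequest(http.MethodPost, "/token", strings.NewReader(body))
	r.Header.Set("Content-Type", "application/x-www-form-urlencoded")
	return r
}

func main() {
	fmt.Println(authenticate(basicRequest("app-1", "s3cret"))) // <nil>
	fmt.Println(authenticate(formRequest("nope", "s3cret")))   // invalid_client: authentication failed
	fmt.Println(authenticate(formRequest("app-1", "wrong")))   // invalid_client: authentication failed
}
```

This narrows the timing gap but does not close it. Parsing a form body still costs more than decoding a header, so treat the result as a reduction in signal, not a proof of constant time.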

Authorization Code Race — One Code, Two Tokens

Authorization codes are single-use. After exchange for tokens, the code is deleted. Simple — until two requests arrive at the same millisecond.

The delete-before-validate pattern is actually correct for security — it prevents replay. But it creates a dead code path: the expiry check after deletion can never trigger because expired codes are either cleaned up by the background job or deleted by the exchange function.

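The real risk is a gap between lookup and delete: if the code is read under one lock acquisition and deleted under another, two concurrent requests can both read it before either deletes it, and both succeed. Our implementation does the lookup and the delete under a single write lock, which prevents this. That guarantee comes from mutual exclusion, not from lock fairness, and it only holds inside one process.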
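The single-use property is easy to check with a small concurrency test. The following sketch is an illustrative in-memory store (codeStore and raceExchange are hypothetical names) that does lookup and delete in one critical section, then fires many concurrent exchanges for the same code.

```go
package main

import (
	"fmt"
	"sync"
	"sync/atomic"
)

// codeStore maps authorization codes to client IDs.
type codeStore struct {
	mu    sync.Mutex
	codes map[string]string
}

// consume looks up and deletes the code inside one critical section,
// so at most one caller can ever observe it.
func (s *codeStore) consume(code string) (string, bool) {
	s.mu.Lock()
	defer s.mu.Unlock()
	clientID, ok := s.codes[code]
	if ok {
		delete(s.codes, code)
	}
	return clientID, ok
}

// raceExchange runs n concurrent exchanges of one code and counts the winners.
func raceExchange(n int) int32 {
	s := &codeStore{codes: map[string]string{"abc123": "app-1"}}
	var wins int32
	var wg sync.WaitGroup
	for i := 0; i < n; i++ {
		wg.Add(1)
		go func() {
			defer wg.Done()
			if _, ok := s.consume("abc123"); ok {
				atomic.AddInt32(&wins, 1)
			}
		}()
	}
	wg.Wait()
	return wins
}

func main() {
	fmt.Println(raceExchange(100)) // 1
}
```

Splitting consume into a separate get and delete, each taking the lock on its own, is the change that would let the count exceed one.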

The PostgreSQL Solution

    -- Only one connection can DELETE the same row
    -- Second concurrent request gets 0 rows affected
    DELETE FROM authorization_codes WHERE code = $1 RETURNING *;

Atomic. No application-level locking. No race conditions. The database handles serialization.

Redirect URI Validation — Exact Match Is Both Too Strict and Too Loose

Redirect URI validation uses exact string matching against registered URIs. No wildcards, no patterns, no URL parsing. This is simultaneously too strict and too loose.

Too strict:

- http://localhost:3000/callback is registered. Client sends http://localhost:3000/callback?state=abc. Rejected.

- localhost vs 127.0.0.1. Same destination, different strings. Rejected.

- URL-encoded paths: %2F vs /. Many servers route both to the same path, but string comparison fails.

Too loose:

- No HTTPS enforcement in standalone mode.

- No host validation. Any URI can be registered.

- Localhost accepts any port number.

The Pragmatic Approach

We kept exact string matching because it's what the spec recommends (RFC 6749 Section 3.1.2.3) and what Inferno tests expect. But we added validation at registration time: production mode requires HTTPS (except localhost), custom URL schemes must follow a reverse-DNS pattern, and obviously dangerous URIs (data:, javascript:) are rejected.

The key insight: redirect URI security happens at registration time, not at authorization time.
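Here is a sketch of how those registration-time rules and the authorization-time exact match could fit together. The rule set is a simplified reading of the paragraph above, and validateRedirectURI and redirectAllowed are hypothetical names. In particular, "contains a dot" is only a rough stand-in for a reverse-DNS check.

```go
package main

import (
	"errors"
	"fmt"
	"net/url"
	"strings"
)

// validateRedirectURI applies registration-time policy to a candidate URI.
func validateRedirectURI(raw string, production bool) error {
	u, err := url.Parse(raw)
	if err != nil {
		return fmt.Errorf("unparseable redirect URI: %w", err)
	}
	if u.Fragment != "" {
		return errors.New("redirect URI must not contain a fragment")
	}
	switch u.Scheme {
	case "":
		return errors.New("redirect URI must be absolute")
	case "data", "javascript":
		return errors.New("dangerous scheme rejected")
	case "http", "https":
		host := u.Hostname()
		if host == "" {
			return errors.New("redirect URI must include a host")
		}
		local := host == "localhost" || host == "127.0.0.1"
		if u.Scheme == "http" && production && !local {
			return errors.New("production requires HTTPS except on localhost")
		}
		return nil
	default:
		// Custom scheme for native apps, e.g. com.example.app:/callback.
		if !strings.Contains(u.Scheme, ".") {
			return errors.New("custom scheme must follow a reverse-DNS pattern")
		}
		return nil
	}
}

// redirectAllowed is the authorization-time check: exact string match only.
func redirectAllowed(registered []string, got string) bool {
	for _, r := range registered {
		if r == got {
			return true
		}
	}
	return false
}

func main() {
	fmt.Println(validateRedirectURI("http://app.example.com/cb", true))      // production requires HTTPS except on localhost
	fmt.Println(validateRedirectURI("http://localhost:3000/callback", true)) // <nil>
	fmt.Println(redirectAllowed(
		[]string{"http://localhost:3000/callback"},
		"http://localhost:3000/callback?state=abc",
	)) // false
}
```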

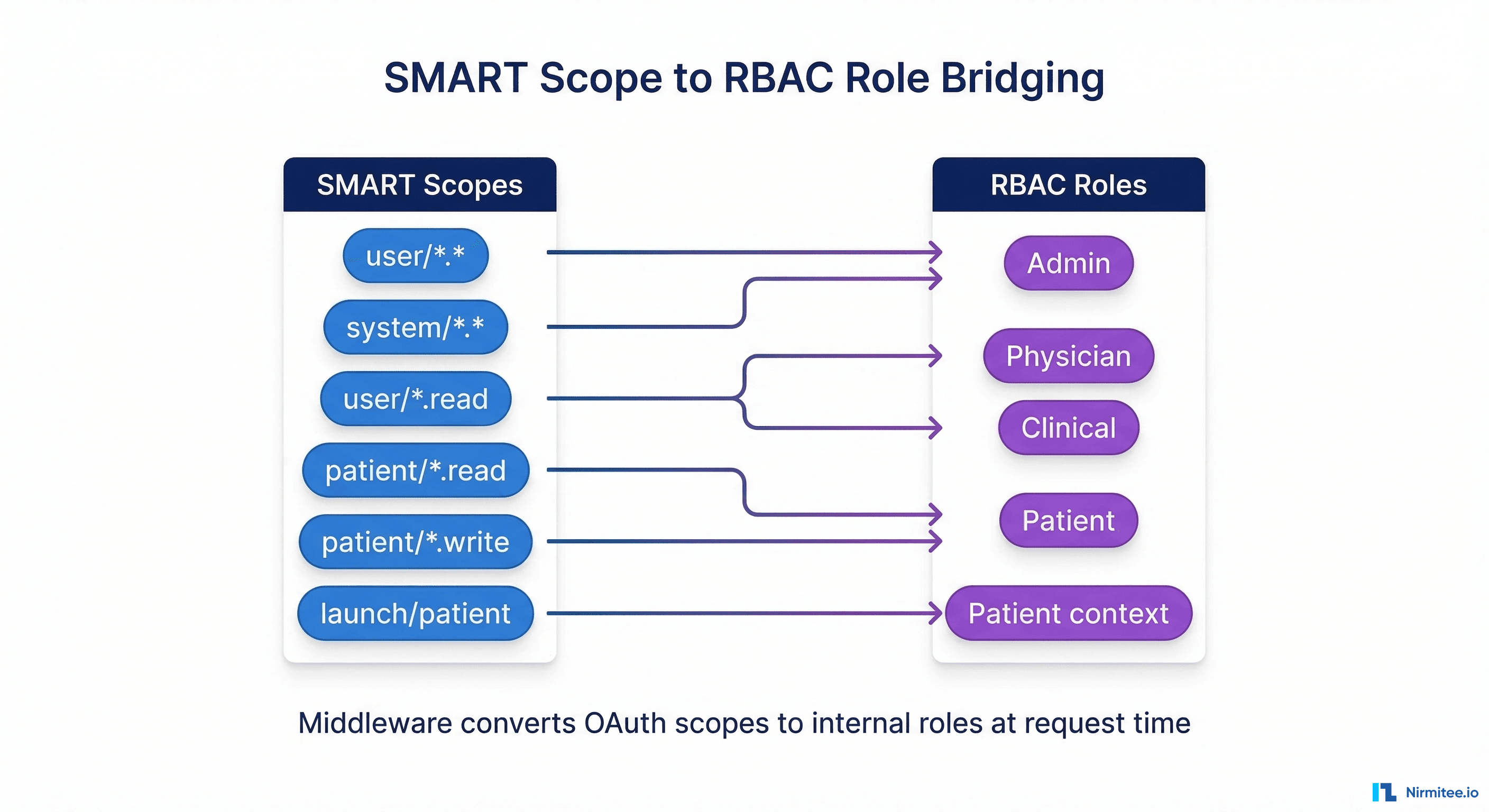

Bonus — Scope-to-RBAC Bridging

SMART on FHIR gives you OAuth scopes. Your application probably uses role-based access control. Bridging between them is where auth gets creative.

Our middleware converts SMART scopes to internal roles at request time: user/*.* or system/*.* maps to admin, user/* scopes map to physician + clinical roles, and patient/* scopes map to the patient role. This means a token with patient scopes gets row-level security filtering to the patient's own records.

The gotcha: scope-to-role mapping is a policy decision, not a technical one. The spec doesn't tell you how to do this. Document your mapping explicitly and make it configurable.
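A configurable mapping can be as simple as an ordered rule table. The sketch below encodes the mapping described above as data. The rule type, the first-match-wins ordering, and the function name are assumptions for illustration, not our middleware.

```go
package main

import (
	"fmt"
	"sort"
	"strings"
)

// rule maps a scope, matched exactly or by prefix, to internal roles.
type rule struct {
	exact  string
	prefix string
	roles  []string
}

// defaultRules mirrors the mapping in the text; order matters because the
// first matching rule wins for each scope.
var defaultRules = []rule{
	{exact: "user/*.*", roles: []string{"admin"}},
	{exact: "system/*.*", roles: []string{"admin"}},
	{prefix: "user/", roles: []string{"physician", "clinical"}},
	{prefix: "patient/", roles: []string{"patient"}},
}

// rolesForScopes returns the sorted, de-duplicated roles implied by a scope string.
func rolesForScopes(scopes string, rules []rule) []string {
	seen := make(map[string]bool)
	for _, s := range strings.Fields(scopes) {
		for _, r := range rules {
			if s == r.exact || (r.prefix != "" && strings.HasPrefix(s, r.prefix)) {
				for _, role := range r.roles {
					seen[role] = true
				}
				break
			}
		}
	}
	roles := make([]string, 0, len(seen))
	for role := range seen {
		roles = append(roles, role)
	}
	sort.Strings(roles)
	return roles
}

func main() {
	fmt.Println(rolesForScopes("patient/Observation.read launch/patient", defaultRules)) // [patient]
	fmt.Println(rolesForScopes("user/Patient.read", defaultRules))                       // [clinical physician]
	fmt.Println(rolesForScopes("user/*.*", defaultRules))                                // [admin]
}
```

Keeping the table as data means the policy can be loaded from configuration and reviewed without reading middleware code.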

The Inferno Scorecard

- 47/51 tests passing

- 4 failures: all TLS-related (expected in HTTP development mode)

- PKCE with S256 challenge method: passing

- Token refresh with offline_access: passing

- Patient context isolation: passing

- OpenID Connect discovery: passing

- JWKS key rotation: passing

What We'd Do Differently

- Start with PostgreSQL-backed token storage from day one. The in-memory store saved us zero time and cost us a week of debugging race conditions.

- Run Inferno tests from the first commit. Every assumption we made about the spec was slightly wrong. Inferno catches them on day one.

- Log every scope negotiation decision. Silent scope downgrade is the hardest auth bug to diagnose.

- Use a single error message for all auth failures. You know this intellectually but don't do it until a pen test calls you on it.

- Test with both confidential and public clients simultaneously. The PKCE and client authentication code paths interact in ways you won't anticipate.

SMART on FHIR auth is solvable. But the spec gives you just enough rope to build something that works in your test environment and fails in every other one. Test against Inferno early, fix the six issues above, and save yourself the debugging time we spent.

