The healthcare AI industry has a dirty secret: most of what gets built never reaches the patients it was designed to help. Despite more than $50 billion in venture funding flowing into healthcare AI since 2020, the overwhelming majority of these projects stall in pilot, fail in production, or quietly get shelved after burning through budgets that were supposed to transform care delivery.

This is not a think piece filled with vague warnings. This is a technical post-mortem — backed by published research, real failure case studies, and auditable data — that explains precisely why healthcare AI projects fail, what goes wrong at the technical level, and how the next generation of agentic AI systems must be architected differently to avoid repeating the same catastrophic mistakes.

If you are a CTO evaluating an AI vendor, a clinical informaticist deploying a prediction model, or a health system executive approving a seven-figure AI investment, this analysis will save you from becoming the next cautionary tale.

The Failure Rate Data: What the Numbers Actually Say

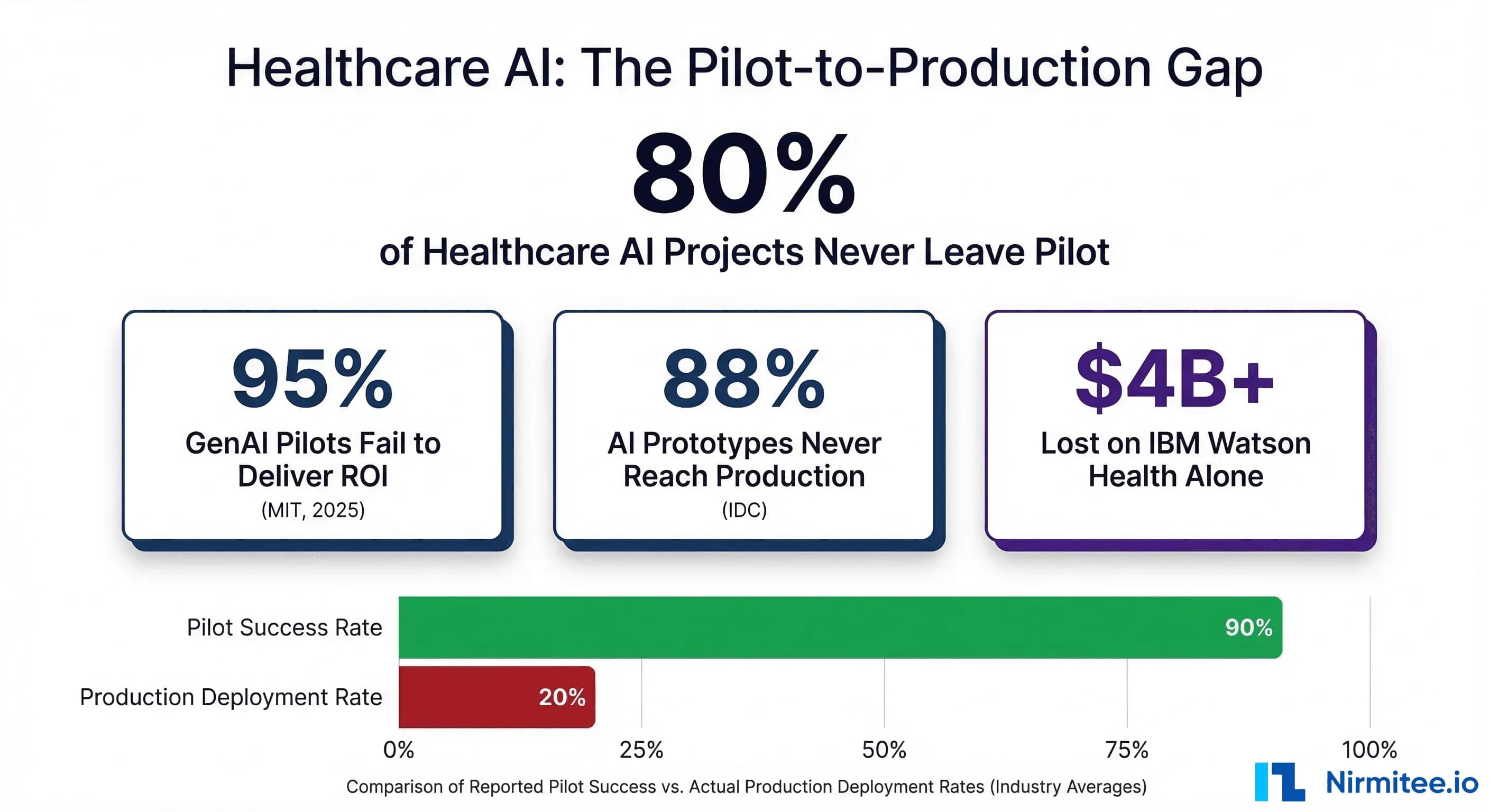

The headline statistic — 80% of healthcare AI projects never scale beyond pilot — comes from multiple converging data sources. But the real picture is even worse than that number suggests.

MIT NANDA Initiative: 95% Fail to Deliver ROI

In 2025, MIT's NANDA initiative published "The GenAI Divide: State of AI in Business 2025," based on 150 executive interviews, a survey of 350 employees, and analysis of 300 public AI deployments. The finding that made headlines: only 5% of generative AI pilot programs achieved rapid revenue acceleration. The other 95% stalled, delivering little to no measurable impact on profit and loss statements within six months of deployment.

Critics correctly noted that the six-month ROI window is narrow. But for healthcare systems operating on thin margins — the median US hospital operating margin was just 1.5% in 2024 — a project that cannot demonstrate value within six months is a project that gets defunded. The MIT finding, regardless of methodological debates, reflects how healthcare CFOs actually make investment decisions.

IDC: 88% of AI Prototypes Never Reach Production

IDC's 2025 global survey found that for every 33 AI pilots a company launches, only 4 ever reach production. That is an 88% failure rate at the prototype-to-production transition — the exact point where healthcare AI projects consistently die. The model works in the lab. It works on retrospective data. It passes internal validation. Then it encounters real clinical workflows, real EHR data quality issues, real regulatory requirements, and real clinician skepticism — and it fails.

Gartner: 85% Fail Due to Data Quality

Gartner's research estimates that 85% of AI models fail due to poor data quality. In healthcare, this is compounded by the fragmentation of clinical data across dozens of systems — EHRs, lab information systems, pharmacy systems, radiology PACS, claims databases — each with different schemas, coding standards, and completeness rates. A model trained on clean, curated research data encounters production data that is missing fields, uses free-text instead of structured codes, and contains systematic biases baked in by decades of inequitable care delivery.

The Model Drift Problem

Even models that successfully deploy face a ticking clock. Research shows that 91% of machine learning models suffer from model drift, with performance degradation beginning within days of deployment as real-world data diverges from training distributions. In healthcare, this drift is accelerated by seasonal disease patterns, changing patient populations, EHR system upgrades that alter data schemas, and evolving clinical protocols. A sepsis model trained on pre-COVID data, for example, performs dramatically worse on post-COVID patient populations where baseline inflammatory markers shifted.

Case Study 1: IBM Watson Health — A $4 Billion Lesson in Hype-Driven Development

IBM Watson Health remains the largest and most instructive failure in healthcare AI history. The numbers alone tell a devastating story: $4 billion invested in acquisitions (Merge Healthcare, Truven Health Analytics, Phytel, Explorys), $62 million burned on a single failed partnership with MD Anderson Cancer Center, and the entire division ultimately sold to Francisco Partners for approximately $1 billion in January 2022 — a 75% loss on investment.

But the real lessons are technical, not financial.

The MD Anderson Catastrophe

In 2013, MD Anderson Cancer Center partnered with IBM to build the "Oncology Expert Advisor" — a system that would use Watson's natural language processing to read patient health records, search cancer research databases, and recommend treatments for leukemia patients. The vision was compelling: an AI that could synthesize the entire corpus of oncology literature and match it against individual patient records to suggest optimal treatment protocols.

Five years and $62 million later, the project was dead. The system was never used on a single actual patient. An internal audit report stated plainly: "The Oncology Expert Advisor is not ready for human investigational or clinical use, and its use in the treatment of patients is prohibited."

What Went Wrong Technically

1. The EHR Data Problem: Watson could not reliably extract structured information from electronic health records. MD Anderson's oncology records contained missing data, ambiguous notations, out-of-chronological-order entries, and free-text descriptions that varied wildly between physicians. Watson's NLP, which performed impressively on curated Jeopardy questions, could not handle the messiness of real clinical documentation.

2. Training on Hypothetical Cases: A 2018 investigation by STAT News and internal IBM documents revealed that Watson for Oncology was primarily trained on a small number of hypothetical cancer cases curated by physicians at Memorial Sloan Kettering — not on real patient outcomes data. The system was essentially encoding the treatment preferences of a handful of MSK oncologists, not learning from actual patient responses to treatment. When deployed at hospitals with different patient populations, formularies, and treatment protocols, the recommendations were frequently inappropriate.

3. The Recommendation Quality Problem: Watson for Oncology generated treatment recommendations that oncologists at multiple partner institutions described as unsafe. In some cases, the system recommended treatments that were contraindicated given the patient's comorbidities. The fundamental issue was that the system conflated literature-based treatment options with patient-specific treatment recommendations — a distinction that experienced oncologists make intuitively but that Watson's architecture could not capture.

4. Integration Failure: Even if the recommendations had been clinically sound, the system could not integrate into existing clinical workflows. Physicians had to manually enter patient data into a separate Watson interface, wait for processing, and then manually transfer recommendations back into their clinical workflow. This added 20-30 minutes per patient — an unacceptable burden in high-volume oncology practices.

By 2018, more than a dozen IBM partners and clients had stopped or scaled back their oncology projects with Watson. The technology that was supposed to democratize expert-level cancer care instead demonstrated that impressive AI demos on curated data have almost no predictive value for real-world clinical performance.

Case Study 2: The Epic Sepsis Model — When Vendor-Reported Accuracy Is a Lie

The Epic Sepsis Model (ESM) is deployed across hundreds of hospitals in the United States, embedded directly into the Epic EHR that dominates the US hospital market. Epic's internal documentation claimed an area under the receiver operating characteristic curve (AUC) of 0.76 to 0.83 — performance that would make the model a useful clinical tool for early sepsis detection.

In 2021, researchers at the University of Michigan Medical School published the first independent external validation of the Epic Sepsis Model in JAMA Internal Medicine. Their findings were devastating.

The Real Numbers

Analyzing more than 27,000 adult patients across 38,500 hospitalizations at Michigan Medicine between December 2018 and October 2019, the researchers found:

- Real-world AUC: 0.63 — substantially below the 0.76-0.83 claimed by Epic, and below the 0.70 threshold generally considered the minimum for clinical utility

- 67% of sepsis patients were missed — the model failed to generate alerts for two-thirds of patients who actually developed sepsis

- Only 7% of true sepsis cases were identified by the model that clinicians had not already identified independently

- 18% of all hospitalized patients triggered false alerts, creating a massive burden of alert fatigue on nursing and physician staff

Why the Performance Collapsed

Distribution Shift: The model was trained and validated on Epic's internal datasets, which may not have been representative of the patient population, clinical protocols, and documentation practices at Michigan Medicine. This is the canonical example of distribution shift — a model that performs well on the data it was trained on but degrades substantially when applied to data from a different clinical environment.

Calibration Failure: The model was poorly calibrated for the actual prevalence of sepsis at Michigan Medicine (7% in the study population). A model calibrated for a different disease prevalence will systematically generate too many or too few alerts, regardless of its discrimination ability.

Proprietary Black Box: Because the Epic Sepsis Model is proprietary, hospitals deploying it could not inspect the model's features, retrain it on local data, or adjust its thresholds for their specific patient populations. They were forced to accept Epic's performance claims at face value — claims that, as the Michigan Medicine study proved, did not hold up under independent scrutiny.

The ESM case study is a warning to every health system deploying vendor-provided AI: if you have not independently validated the model on your own patient population, you do not know whether it works. Vendor-reported performance metrics are marketing materials, not clinical evidence.

Case Study 3: The Optum Algorithm — How Cost Optimization Became Racial Discrimination

In October 2019, a landmark study published in Science by Ziad Obermeyer and colleagues at UC Berkeley exposed systemic racial bias in a commercial risk-prediction algorithm developed by Optum, a subsidiary of UnitedHealth Group. The algorithm was used to manage the health of populations across the United States, affecting the care of approximately 200 million Americans per year.

What the Algorithm Did

The algorithm was designed to identify patients with complex health needs who would benefit from enrollment in care management programs — programs that provide additional resources, closer monitoring, and proactive interventions. Hospitals and health systems used the algorithm's risk scores to decide which patients received these enhanced services.

The Bias Mechanism

The algorithm used healthcare costs as a proxy for healthcare needs. The logic seemed reasonable on its surface: patients who cost more to treat must be sicker and therefore need more care. But this assumption embedded a fundamental societal bias directly into the prediction model.

Due to well-documented disparities in healthcare access, Black patients in the United States systematically spend less on healthcare than white patients with identical conditions. The reasons include lower rates of insurance coverage, reduced access to specialists, implicit bias in referral patterns, historical mistrust of medical institutions, and geographic barriers to care. The Obermeyer study found that Black patients spent approximately $1,800 less per year than white patients with the same number and severity of chronic conditions.

The algorithm interpreted this spending gap as a health gap — in the wrong direction. It concluded that Black patients who spent less on healthcare must be healthier, when in reality they were equally or more sick but receiving less care. The result: at the same algorithm-assigned risk score, Black patients were significantly sicker than white patients. The study found that the algorithm had effectively missed nearly 50,000 chronic conditions in Black patients.

The Scale of Harm

The numbers are staggering. At a commonly used risk threshold for enrollment in care management programs, the percentage of Black patients identified for additional care would increase from 17.7% to 46.5% if the algorithm were corrected — meaning the biased algorithm was denying enhanced care to nearly half of the Black patients who should have received it.

To their credit, Optum worked with Obermeyer's team to develop a corrected algorithm that used direct health outcome predictions instead of cost proxies, achieving an 84% reduction in bias. But the corrected algorithm revealed a deeper problem: there is no shortcut to careful feature selection, and proxy variables that seem neutral can embed the very inequities they claim to address.

The Technical Lesson

The Optum case is not about bad intentions. It is about what happens when engineers optimize a mathematical objective function — minimizing cost prediction error — without rigorously examining whether that objective function aligns with the actual clinical goal — identifying patients who need more care. This misalignment between optimization targets and real-world objectives is endemic in healthcare AI and represents one of the most dangerous failure modes: a model that is technically accurate at its stated task while being deeply harmful in its actual application.

The 7 Technical Root Causes of Healthcare AI Failure

After analyzing dozens of published failures, audits, and post-mortems across the healthcare AI landscape, seven root causes emerge consistently. These are not independent failure modes — they compound each other, creating a failure cascade that is far more difficult to overcome than any single technical challenge.

Root Cause 1: Data Distribution Shift

Every healthcare AI model is trained on historical data that represents a specific patient population, clinical environment, and point in time. The moment that model is deployed in a different setting — or the same setting at a different time — it encounters distribution shift.

The magnitude of this problem is underappreciated. A model trained on data from a tertiary academic medical center in Boston will encounter fundamentally different data at a rural community hospital in Mississippi. Patient demographics differ. Disease prevalence differs. Documentation practices differ. Lab equipment differs (different analyzers produce different reference ranges). Even the same hospital's data shifts over time as protocols change, new physicians arrive, and the patient population evolves.

Research shows that 91% of ML models suffer from model drift, with degradation beginning within days. In healthcare, this is not an abstract statistical problem — it directly translates to missed diagnoses, false alerts, and eroded clinician trust. The Epic Sepsis Model's performance collapse at Michigan Medicine (AUC dropping from 0.76-0.83 to 0.63) is a textbook example of distribution shift between training and deployment environments.

What production systems require: Continuous monitoring of input data distributions, automated drift detection, predetermined performance thresholds that trigger model retraining or fallback to non-AI clinical pathways, and regular recalibration on local data.

Root Cause 2: The Integration Gap

The second root cause is perhaps the most common and least discussed: the AI model works, but it cannot connect to the clinical workflow where decisions are actually made.

Healthcare IT environments are among the most complex integration landscapes in any industry. A typical academic medical center runs 100-200+ software applications. The EHR is the center of clinical workflow, but it is surrounded by lab information systems, pharmacy systems, radiology PACS, nursing documentation platforms, patient portals, billing systems, and dozens of departmental applications. Getting an AI model's output into the right screen, at the right time, in the right format, for the right clinician, requires deep integration work that is often 3-5x more expensive and time-consuming than building the model itself.

FHIR (Fast Healthcare Interoperability Resources) has improved the technical plumbing, but even with modern interoperability standards, the integration challenge remains substantial. FHIR APIs expose data, but they do not solve workflow integration — knowing that a patient's lab value is abnormal is not the same as delivering an actionable alert to the attending physician in a way that fits into their existing decision-making process.

IBM Watson's failure at MD Anderson illustrates this perfectly: even if Watson's recommendations had been clinically sound, the manual data entry and results transfer process made it operationally unusable in a high-volume clinical environment.

Root Cause 3: Alert Fatigue

Clinical decision support systems have been generating alerts for decades. The result is a well-documented crisis: physicians override 90-96% of all clinical alerts. This is not clinician negligence — it is a rational response to a system that generates so many false positives that attending to every alert would make clinical care impossible.

A systematic review and meta-analysis of alert override rates found the overall prevalence of alert override by physicians at 90% (95% CI: 85-95%). Individual studies report even higher rates in specific clinical contexts. Emergency department physicians, who operate under extreme time pressure, override alerts at rates approaching 96%.

When a new AI system is introduced into this environment, it is not entering a blank slate — it is entering a workflow where clinicians have already been conditioned to ignore automated alerts. Unless the AI system demonstrates immediately and consistently higher signal-to-noise ratios than existing CDS tools, it will be ignored with the same reflexive dismissal.

The Epic Sepsis Model demonstrated this dynamic precisely: by generating alerts on 18% of all hospitalized patients while missing 67% of actual sepsis cases, it produced far more noise than signal. Clinicians who encountered the ESM in practice rapidly learned to dismiss its alerts, undermining whatever residual predictive value the model might have had.

What production systems require: Alert thresholds calibrated to maintain false positive rates below 10-15%, tiered alert severity (not every output deserves an interruptive alert), integration with clinical context (do not alert on a lab value that the physician ordered and is already reviewing), and regular measurement of override rates as a key performance indicator.

Root Cause 4: Regulatory Misalignment

The FDA's regulatory framework for AI in healthcare is evolving rapidly but remains a source of significant uncertainty for development teams. Software as a Medical Device (SaMD) classification determines whether an AI tool requires FDA clearance, and the classification boundaries are not always clear.

As of early 2026, the FDA has authorized more than 1,250 AI-enabled medical devices for marketing in the United States. However, the traditional regulatory paradigm — design freeze, validate, submit, approve — is fundamentally mismatched with AI/ML models that are designed to learn and adapt over time. The FDA's Predetermined Change Control Plan (PCCP) framework, finalized in December 2024, attempts to address this by allowing manufacturers to pre-specify the types of changes an AI model might make post-approval. But the PCCP framework is new, untested at scale, and adds significant regulatory overhead to development timelines.

The practical impact is that development teams face a fork in the road early in their projects: build a "locked" model that can navigate existing regulatory pathways but cannot adapt to distribution shift, or build an adaptive model that is more clinically robust but faces a more uncertain and expensive regulatory pathway. Many teams choose a third option: avoid FDA-regulated clinical claims entirely, positioning their tools as "clinical decision support" that does not make autonomous decisions. This regulatory arbitrage constrains the clinical impact of the tool and often pushes the most valuable AI applications — those that could make or inform diagnostic and treatment decisions — into an indefinite regulatory limbo.

Root Cause 5: Clinician Workflow Disruption

AI tools that do not fit into how physicians, nurses, and care coordinators actually work will be abandoned regardless of their technical performance. This is not a technology problem — it is a human factors engineering problem that healthcare AI teams consistently underinvest in.

Research identifies 16 key barriers to AI adoption in healthcare, with workflow disruption, clinician resistance, and increased cognitive load among the most significant. The introduction of AI tools often disrupts established routines and requires adaptation to new processes, which clinicians — already operating under extreme time pressure and cognitive load — perceive as burdensome.

The pattern is predictable: a development team builds a model, demonstrates it to clinical leadership in a conference room, gets enthusiastic buy-in, and then deploys it into a clinical environment where the frontline users (nurses, residents, attending physicians) had no input into the design process. The tool requires extra clicks, displays information in an unfamiliar format, or delivers recommendations at a point in the workflow where the clinician has already made the relevant decision. Within weeks, usage drops to near zero.

What production systems require: Participatory design with frontline clinicians from day one — not just clinical champions, but the nurses and residents who will interact with the tool dozens of times per shift. Workflow time-motion studies before and after deployment. Zero additional clicks for the most common interaction patterns. Information delivery timed to the point of decision, not the point of data availability. And critically, architecture that embeds AI into existing tools rather than requiring clinicians to switch contexts to a separate application.

Root Cause 6: Validation Theater

Validation theater is the practice of demonstrating impressive model performance on carefully curated retrospective datasets while avoiding the rigorous prospective, real-world validation that would reveal the model's actual clinical utility.

The pattern is endemic in healthcare AI publications and vendor marketing. A team trains a model on a large retrospective dataset, achieves a high AUC (often 0.85-0.95) on a held-out test set drawn from the same dataset, publishes the results, and positions the model for clinical deployment. But the held-out test set shares the same data collection protocols, patient population, and time period as the training set — it does not represent an independent test of generalizability.

The Epic Sepsis Model is the canonical example: vendor-reported AUC of 0.76-0.83 collapsed to 0.63 under independent external validation. But Epic is far from alone. A 2019 systematic review of AI studies in healthcare found that only 6% included any form of external validation, and fewer than 1% had been prospectively validated in a clinical setting.

The incentive structure reinforces validation theater. Academic researchers are rewarded for publishing high AUC numbers. Vendors are rewarded for impressive demo performance. Hospital procurement committees evaluate AI tools based on vendor-provided metrics. At no point in this chain does anyone have a strong incentive to conduct the expensive, time-consuming external validation that would reveal whether the model actually works in production.

What production systems require: Mandatory external validation on at least three independent datasets before any clinical deployment. Prospective pilot studies with predetermined success criteria defined before deployment — not after. Public reporting of real-world performance metrics, including false positive rates, override rates, and clinician-assessed utility scores. And an organizational culture that treats a failed external validation as valuable information, not as a threat to a project's survival.

Root Cause 7: Cost Miscalculation

Healthcare organizations systematically underestimate the total cost of AI implementation by factors of 3-5x. Deloitte Healthcare found that 63% of healthcare AI projects exceeded their budgets by 25% or more. Hidden costs — compliance audits, cybersecurity hardening, workforce training, integration maintenance, and ongoing model monitoring — account for 30-50% of total implementation expenses.

The cost breakdown reveals where the miscalculations occur:

| Cost Category | Typical Budget Estimate | Actual Cost | Overrun Factor |

|---|---|---|---|

| Model Development | $50,000-$100,000 | $100,000-$200,000 | 2x |

| EHR Integration | $25,000-$50,000 | $100,000-$200,000 | 4x |

| Regulatory & Compliance | $10,000-$25,000 | $50,000-$100,000 | 4-5x |

| Training & Change Mgmt | $15,000-$25,000 | $50,000-$75,000 | 3x |

| Year 1 Operations | $20,000-$40,000 | $75,000-$125,000 | 3-4x |

The integration line item is where the most dramatic miscalculations occur. Organizations budget for API development but forget about data mapping, error handling, edge case management, performance optimization, security review, and the iterative testing cycles required to handle the diversity of real clinical data. Integration with a single EHR system often costs as much as or more than building the AI model itself.

Annual operational costs typically run 20-30% of the initial implementation investment. For a project with a true all-in cost of $500,000, that means $100,000-$150,000 per year in ongoing expenses — costs that are rarely included in initial ROI projections and that erode the business case for AI adoption over time.

Why Agentic AI Must Be Different

The emerging paradigm of agentic AI in healthcare — autonomous or semi-autonomous systems that can reason, plan, and execute multi-step workflows — inherits all seven of these root causes while introducing new risks specific to autonomous operation. If the lessons from the first generation of healthcare AI are not embedded into the architecture of agentic systems, the failure rates will be even higher.

What Agentic AI Gets Right (In Theory)

Workflow Integration by Design: Unlike prediction models that generate a score and leave the clinician to act on it, agentic AI systems are designed to operate within workflows — scheduling follow-ups, generating referral letters, updating care plans, coordinating between departments. This addresses Root Cause 5 (Workflow Disruption) by making the AI a workflow participant rather than a workflow interruption.

Reduced Alert Fatigue Through Action: Instead of generating another alert for a clinician to evaluate and dismiss, agentic systems can take low-risk actions autonomously (scheduling a follow-up appointment, ordering a routine lab) while escalating high-risk decisions to human review. This addresses Root Cause 3 (Alert Fatigue) by reducing the total volume of decisions requiring human attention.

Continuous Adaptation: Agentic systems that incorporate feedback loops from their actions can adapt to local clinical environments more rapidly than static prediction models. This theoretically addresses Root Cause 1 (Distribution Shift) by enabling real-time learning from production data.

What Agentic AI Gets Wrong (Without Guardrails)

Amplified Harm from Errors: A prediction model that generates a wrong score is annoying; an agentic system that autonomously takes a wrong action — scheduling the wrong test, sending the wrong referral, or worse — is dangerous. The observability and monitoring requirements for agentic healthcare AI are an order of magnitude more demanding than for passive prediction models.

Regulatory Uncertainty Amplified: If the FDA's SaMD framework is already strained by adaptive prediction models, it is entirely unprepared for autonomous agents that make chains of clinical decisions. The regulatory pathway for agentic healthcare AI is, as of March 2026, essentially undefined.

Cost Escalation: Agentic systems require more sophisticated infrastructure — orchestration layers, guardrail systems, audit logging, human-in-the-loop escalation pathways, and continuous monitoring — than simple prediction models. Organizations that underestimate costs for prediction model deployment will be even further off-budget for agentic AI deployments.

The Non-Negotiable Requirement: Agentic healthcare AI systems must be built with HIPAA-compliant architectures, deterministic guardrails that constrain autonomous actions to pre-approved safe boundaries, comprehensive audit trails for every action taken, and human-in-the-loop escalation for any action above a defined risk threshold. The path from here to agentic AI that works is not through less engineering rigor — it is through dramatically more.

The Right Way: A Framework for Healthcare AI That Survives Production

Based on the patterns in these failures and the rare successes in healthcare AI deployment, a framework emerges for projects that survive the pilot-to-production transition.

Phase 1: Problem Selection (Before Writing a Single Line of Code)

- Start with workflow, not with data. Shadow clinicians for 40+ hours before defining the AI use case. Understand the decision being made, the information available at the point of decision, the time constraints, and the consequences of error. If the AI does not fit into a specific, observable workflow moment, it will not be adopted.

- Define the failure mode explicitly. What happens when the AI is wrong? If the consequence of a false positive is a dismissed alert, the AI adds no value. If the consequence of a false negative is a missed diagnosis, the AI must clear a much higher performance bar. Quantify the cost of each error type before building anything.

- Validate the data availability. Not the existence of data — the availability of data in production, in real-time, in the format the model needs, at the quality level the model requires. Many healthcare AI projects fail because the data that exists in the research database does not exist (or is not accessible) in the production clinical environment.

Phase 2: Development (Build for Production, Not for Publication)

- Train on multi-site data from day one. Single-site training virtually guarantees distribution shift at deployment. If multi-site data is not available, use domain adaptation techniques and plan for mandatory local recalibration before deployment.

- Build the integration layer first. The AI model is the easy part. The EHR integration, data pipeline, alert delivery mechanism, and clinician-facing interface are the hard parts. Build and validate these before investing heavily in model optimization.

- Design for graceful degradation. What happens when the model encounters data outside its training distribution? What happens when the EHR API times out? What happens when the model's confidence is below the actionable threshold? Production systems need answers to these questions before deployment, not after.

Phase 3: Validation (Earn Trust With Evidence)

- External validation on 3+ independent datasets from different clinical environments before any deployment decision. If the model cannot maintain clinically useful performance across sites, it is not ready for production.

- Prospective pilot with predetermined success criteria. Define what "success" means quantitatively (sensitivity, specificity, override rate, clinician-assessed utility, time-to-action) before the pilot begins. If the pilot does not meet these criteria, do not deploy — iterate.

- Bias audit across all relevant demographic groups. The Optum case demonstrated that cost-based proxies can embed racial bias. Every healthcare AI model must be audited for differential performance across race, ethnicity, sex, age, insurance status, and socioeconomic indicators before deployment.

Phase 4: Deployment and Monitoring (The Real Work Begins)

- Continuous performance monitoring with automated drift detection. Track model performance metrics (AUC, calibration, sensitivity, specificity) in real-time on production data. Set predetermined thresholds that trigger alerts to the AI operations team and, if violated, automatically fall back to non-AI clinical pathways.

- Clinician feedback loops. Create lightweight mechanisms for clinicians to report when the AI is wrong, unhelpful, or disruptive. This qualitative data is as important as quantitative performance metrics for identifying problems that statistical monitoring misses.

- Quarterly performance reviews with clinical stakeholders. AI model performance should be reviewed with the same rigor as clinical quality metrics — because in healthcare, they are clinical quality metrics.

The 15-Question Checklist: Before You Deploy Healthcare AI

Before any healthcare AI system moves from pilot to production, every question on this list must have a documented answer. If any answer is "we don't know" or "we haven't done that yet," the system is not ready for production deployment.

Technical Validation

- Has the model been externally validated on at least three independent datasets from different clinical environments?

- What is the model's real-world performance (AUC, sensitivity, specificity, PPV, NPV) on each validation dataset, and how does it compare to vendor-reported or publication-reported metrics?

- Has a formal bias audit been conducted across all relevant demographic groups (race, ethnicity, sex, age, insurance status)?

- What is the model's performance on the specific patient population at your institution, validated prospectively?

- Is there a documented plan for model drift monitoring, including specific metrics, thresholds, and automated responses?

Integration and Workflow

- Has the AI been integrated into the production EHR workflow with end-to-end testing, including edge cases, missing data, and system timeouts?

- What is the measured impact on clinician workflow time (time-motion study), and is the net impact neutral or positive?

- What is the measured alert override rate during the pilot period, and is it below 50%?

- Have frontline clinicians (not just clinical champions) provided feedback on usability, and has that feedback been incorporated?

- What happens when the model fails, the data pipeline breaks, or the EHR integration times out? Is there a documented fallback procedure?

Regulatory and Ethical

- Has the FDA SaMD classification been determined, and if applicable, has the appropriate regulatory pathway been completed?

- Is there a comprehensive audit trail for every model prediction, including inputs, outputs, confidence scores, and any actions taken?

- Have patients been informed about the use of AI in their care, consistent with institutional transparency policies?

Financial and Operational

- Has the total cost of ownership (development, integration, deployment, training, Year 1-3 operations, model retraining) been calculated and compared against projected clinical and financial benefits?

- Is there an executive sponsor with budget authority who has committed to multi-year funding, including ongoing operational costs, not just initial development?

These fifteen questions are not bureaucratic hurdles designed to slow down innovation. They are the minimum requirements for safe, effective, and sustainable healthcare AI deployment, derived directly from the failure patterns documented in this analysis. Every failed project in this post-mortem violated multiple items on this list. Every project that succeeds will need to satisfy all of them.

Conclusion: The Path Forward Requires Honesty

The healthcare AI industry's 80% failure rate is not a technology problem. The models work. GPT-4 can pass medical licensing exams. Computer vision models can detect diabetic retinopathy as accurately as ophthalmologists. Sepsis prediction models can achieve AUCs above 0.85 on retrospective data.

The failure rate is an engineering, integration, validation, and organizational problem. It is a problem of healthcare organizations buying AI solutions without the infrastructure to deploy them. Of vendors selling demo performance as production performance. Of development teams that build models without understanding clinical workflows. Of regulatory frameworks that have not caught up with the technology they are meant to govern.

The next generation of healthcare AI — particularly agentic systems that operate autonomously within clinical workflows — will either repeat these failures at larger scale, or it will be built by teams that have internalized the lessons documented here. There is no third option.

The 80% failure rate is not inevitable. But reducing it requires honesty about why projects fail, willingness to invest in the unglamorous engineering work that separates demos from production systems, and the discipline to not deploy until the evidence — real evidence, not vendor marketing — proves the system is ready.

The patients depending on these systems deserve nothing less.

Frequently Asked Questions

Why do most healthcare AI projects fail to move beyond the pilot stage?

Healthcare AI projects fail to scale beyond pilot primarily due to seven compounding technical and organizational factors: data distribution shift between training and production environments, integration gaps with existing EHR workflows, clinician alert fatigue (90-96% override rates), regulatory uncertainty around the FDA SaMD pathway, workflow disruption that does not fit clinical practice, validation theater that masks poor real-world performance, and systematic cost underestimation by 3-5x. These are not independent failures — they cascade. A model that works on curated data encounters messy production data (distribution shift), cannot integrate into the EHR (integration gap), generates too many false alerts (alert fatigue), and erodes clinician trust to the point of abandonment.

What happened with IBM Watson Health and why did it fail in healthcare?

IBM invested approximately $4 billion acquiring healthcare data companies (Merge Healthcare, Truven Health Analytics, Phytel, Explorys) and spent $62 million on a failed partnership with MD Anderson Cancer Center. The Oncology Expert Advisor was never used on a single real patient. Technical failures included: Watson's NLP could not handle messy, ambiguous clinical documentation; the system was trained on hypothetical cancer cases from Memorial Sloan Kettering rather than real patient outcomes; and integration required manual data entry that added 20-30 minutes per patient. IBM sold the entire Watson Health division to Francisco Partners for approximately $1 billion in 2022 — a 75% loss on investment.

How accurate is the Epic Sepsis Model in real-world clinical settings?

A 2021 study published in JAMA Internal Medicine by University of Michigan researchers found that the Epic Sepsis Model achieved a real-world AUC of only 0.63 — substantially below the 0.76-0.83 range claimed by Epic. The model missed 67% of patients who actually developed sepsis while generating false alerts on 18% of all hospitalized patients. Only 7% of true sepsis cases were identified by the model that clinicians had not already identified independently. The performance collapse was attributed to distribution shift, poor calibration, and the proprietary nature of the model that prevented hospitals from retraining it on local data.

How did the Optum algorithm exhibit racial bias in healthcare?

A 2019 study published in Science by Ziad Obermeyer at UC Berkeley found that Optum's risk-prediction algorithm, used to manage healthcare for approximately 200 million Americans, systematically discriminated against Black patients. The algorithm used healthcare costs as a proxy for healthcare needs, but because Black patients spent approximately $1,800 less per year than white patients with identical chronic conditions (due to access barriers, not better health), the algorithm incorrectly classified them as healthier. The study found the algorithm missed nearly 50,000 chronic conditions in Black patients. When corrected to use direct health outcome predictions instead of cost proxies, the percentage of Black patients identified for enhanced care nearly tripled from 17.7% to 46.5%.

What should healthcare organizations do before deploying an AI system into production?

Healthcare organizations should complete a rigorous 15-point checklist before deploying any AI system: externally validate the model on at least three independent datasets, conduct a formal bias audit across demographic groups, validate prospectively on local patient populations, build and test EHR integration including edge cases and failure modes, measure clinician workflow impact with time-motion studies, monitor alert override rates (target below 50%), determine FDA SaMD classification, document comprehensive audit trails, calculate true total cost of ownership (development + integration + 3 years of operations), and secure multi-year executive sponsorship with committed budget. Skipping any of these steps significantly increases the probability of joining the 80% of projects that fail to scale beyond pilot.