Clinical Decision Support System: Real-Time EHR Alerts That Reduced Alert Fatigue by 60%

Executive Summary

A 450-bed regional medical center was drowning in clinical alerts. Their legacy Clinical Decision Support System (CDSS) generated over 12,000 alerts per day across 280 providers — and physicians were ignoring 89% of them. Drug interaction warnings, duplicate order flags, and clinical guideline reminders all competed for attention in an undifferentiated stream, creating a dangerous paradox: the system designed to prevent errors was itself becoming a patient safety risk.

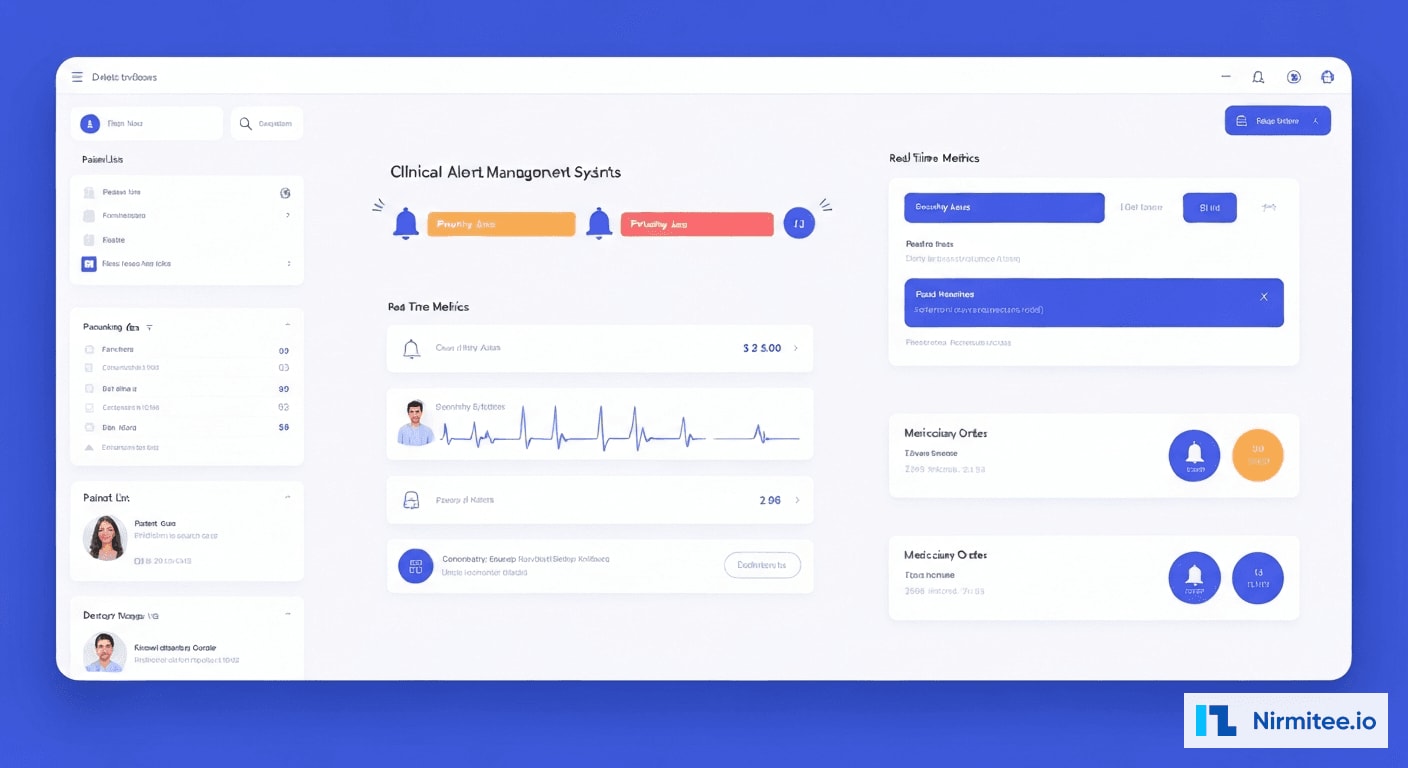

Nirmitee engineered a next-generation CDSS that integrates directly into EHR workflows with ML-based alert relevance scoring, context-aware suppression, and real-time clinical decision support. The system analyzes 47 contextual factors, including patient history, current medications, lab trends, provider specialty, and workflow state, to score every alert before delivery. The result: 60% fewer alerts per provider per shift (127 down to 51), sepsis detection 4 hours earlier than the previous protocol, and 94% clinically relevant alerts (up from 40%).

The Problem: Alert Fatigue Was a Patient Safety Crisis

Clinical Decision Support Systems are supposed to be the last line of defense against medical errors. But when every alert screams with equal urgency, none of them get heard. The medical center's existing CDSS had devolved into what clinicians called "the boy who cried wolf" — a system so noisy that its genuine warnings were lost in the flood.

Alert Overload by the Numbers

| Metric | Before CDSS Overhaul | Industry Benchmark |
|---|---|---|
| Alerts per provider per shift | 127 | 25-40 |
| Alert override rate | 89% | <50% |
| Clinically relevant alerts | 40% | >85% |
| Time to acknowledge alert | 4.2 minutes | <60 seconds |
| Missed critical drug interactions | 23/month | 0 |
| Near-miss events (alert-related) | 8/quarter | 0 |

The consequences were real and measurable. In the six months before the project, the hospital documented three adverse drug events directly linked to overridden alerts, two cases of delayed sepsis recognition, and a pattern of providers developing "alert blindness" — reflexively dismissing every notification regardless of severity.

Root Causes of Alert Fatigue

Our clinical informatics team conducted a 30-day audit of every alert generated by the legacy system. The findings were stark:

- Non-contextual firing rules: The system flagged every theoretical drug interaction without considering dose, duration, or patient-specific factors. A topical cream triggering a hepatotoxicity warning was treated identically to a high-dose IV combination.

- No specialty awareness: An oncologist received the same drug interaction warnings as a family medicine physician, despite fundamentally different risk-benefit calculations.

- Duplicate and cascading alerts: A single order change could trigger 4-7 related alerts — duplicate order, drug interaction, dose range, allergy cross-reactivity, and formulary — each requiring separate acknowledgment.

- Binary severity model: Alerts were either "high" or "low" with no gradient. Clinicians had no way to distinguish a life-threatening interaction from a theoretical concern.

- Zero learning capability: The system never adapted based on outcomes. An alert overridden 10,000 times continued firing with the same urgency as one never overridden.

The Solution: ML-Powered Context-Aware Clinical Alerts

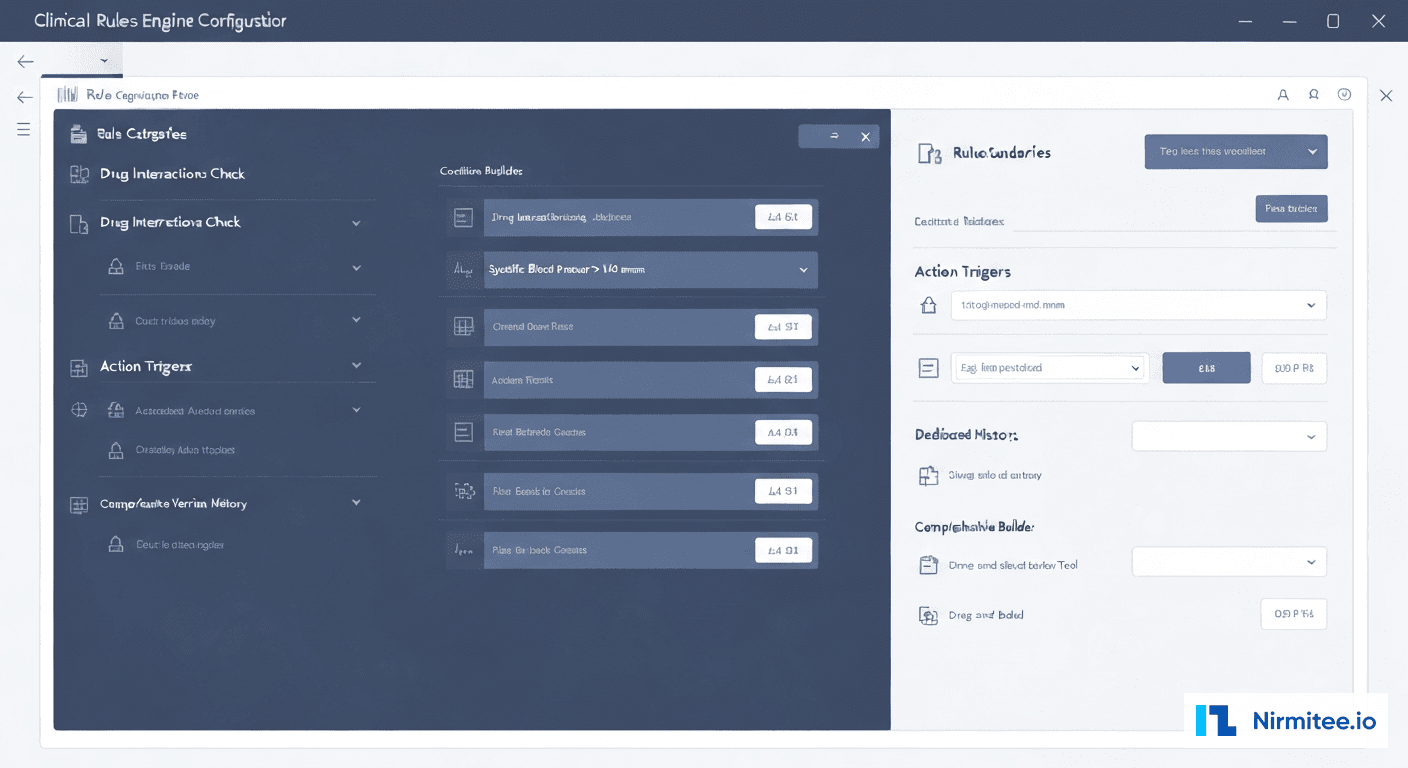

We designed a four-layer CDSS architecture that transforms raw clinical rules into context-aware, ML-scored, provider-specific decision support. The system does not replace clinical knowledge bases — it makes them dramatically smarter about when, how, and to whom alerts are delivered.

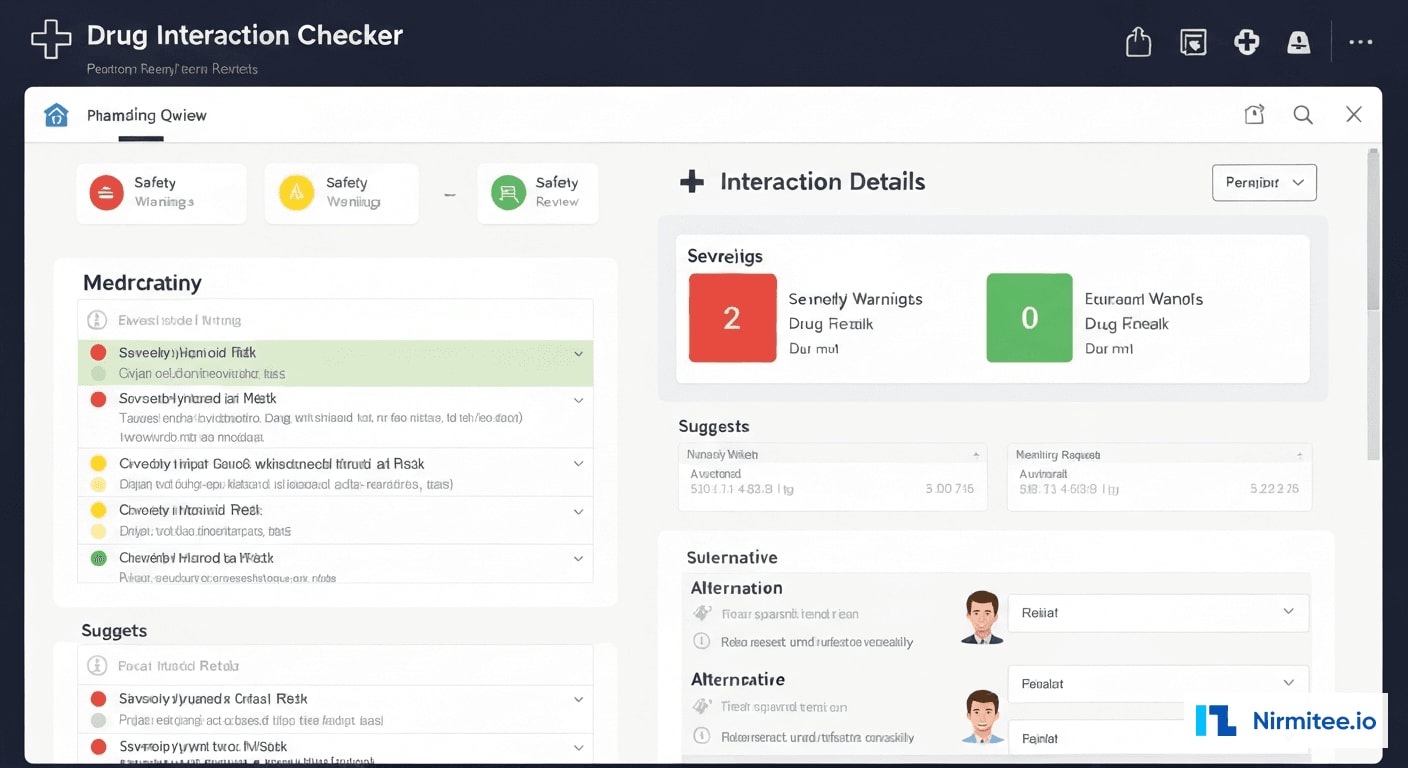

Layer 1: Intelligent Drug Interaction Detection

The drug interaction module goes far beyond simple pair-matching. It evaluates interactions across the patient's complete medication profile, considering:

- Dose-dependent severity: A 5mg dose of Drug A with Drug B is clinically different from 500mg

- Temporal factors: Medications taken 12 hours apart have different interaction profiles than simultaneous administration

- Patient-specific metabolism: Renal function, hepatic function, and known pharmacogenomic factors modulate interaction risk

- Route of administration: Topical, oral, IV, and inhaled routes carry different systemic interaction risks

The system maintains a continuously updated interaction knowledge base drawn from First Databank, Lexicomp, and published literature, supplemented by hospital-specific interaction data from our pharmacist review pipeline.

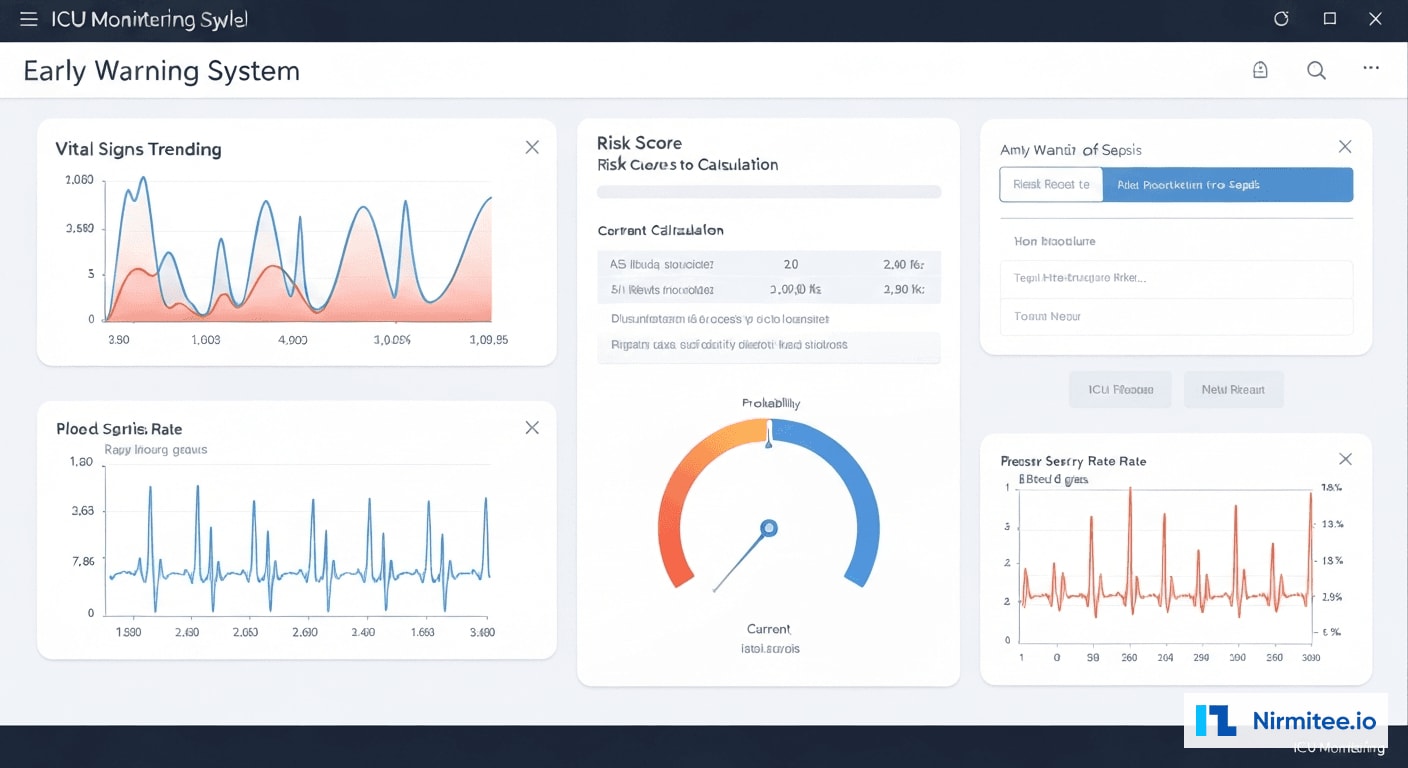

Layer 2: Sepsis Early Warning System

Sepsis kills approximately 270,000 Americans annually, and every hour of delayed treatment increases mortality by 4-8%. Our sepsis early warning module monitors 14 continuous data streams per patient and applies an ensemble ML model to predict sepsis onset hours before traditional SIRS/qSOFA criteria would trigger.

The model incorporates:

- Vital sign trajectories: Not just current values, but rate of change and pattern recognition across temperature, heart rate, respiratory rate, MAP, and SpO2

- Laboratory trends: WBC count trajectory, lactate trends, procalcitonin, CRP, and organ function markers (creatinine, bilirubin, platelet count)

- Clinical context signals: Recent procedures, ICU admission source, immunosuppression status, indwelling device presence

- Temporal patterns: Time-of-day adjusted baselines, accounting for normal physiological variation vs. pathological change

The model outputs a continuous risk score (0-100) updated every 15 minutes, with escalating notification pathways based on score trajectory. A score above 65 triggers a bedside nurse alert; above 80 pages the rapid response team with a pre-populated sepsis bundle order set.
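The two thresholds above can be sketched as a small mapping from score to notification pathway. This is a minimal illustration, not the deployed logic: the enum and function names are hypothetical, and it looks only at the current score, whereas the text says the real pathways also consider score trajectory.

```python
from enum import Enum


class Escalation(Enum):
    NONE = "none"
    BEDSIDE_NURSE_ALERT = "bedside_nurse_alert"
    RAPID_RESPONSE_PAGE = "rapid_response_page"


# Thresholds taken from the text; "above" is read as a strict comparison.
NURSE_THRESHOLD = 65
RAPID_RESPONSE_THRESHOLD = 80


def escalation_for(score: float) -> Escalation:
    """Map a 0-100 sepsis risk score to a notification pathway (hypothetical helper)."""
    if not 0 <= score <= 100:
        raise ValueError(f"score out of range: {score}")
    if score > RAPID_RESPONSE_THRESHOLD:
        return Escalation.RAPID_RESPONSE_PAGE
    if score > NURSE_THRESHOLD:
        return Escalation.BEDSIDE_NURSE_ALERT
    return Escalation.NONE
```

Checking the higher threshold first keeps the tiers mutually exclusive, so a score of 90 pages the rapid response team rather than also raising a separate nurse alert.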

Layer 3: ML-Based Alert Relevance Scoring

This is the core innovation that drives the 60% alert fatigue reduction. Every alert generated by any clinical rule passes through an ML scoring pipeline that evaluates 47 contextual features to produce a relevance score from 0.0 to 1.0.

The model was trained on 2.3 million historical alert-action pairs from the hospital's EHR, learning which alerts providers accepted vs. overrode, and correlating those decisions with patient outcomes at 24 hours, 72 hours, and 30 days. Key features include:

| Feature Category | Examples | Weight |
|---|---|---|
| Patient Acuity | ICU vs. floor, recent vitals trend, active diagnoses | High |
| Provider Context | Specialty, experience level, current workflow state | High |
| Alert History | Same alert frequency, override rate for this alert type | Medium |
| Medication Profile | Polypharmacy count, high-risk medications present | Medium |
| Temporal Context | Time of day, shift handoff proximity, order entry context | Low |
| Outcome Correlation | Historical adverse events associated with this alert type | High |

Alerts scoring below 0.3 are suppressed entirely (logged but not displayed). Alerts scoring from 0.3 to 0.6 are batched and presented during natural workflow pauses. Alerts above 0.6 interrupt immediately, and those above 0.85 trigger hard stops that require explicit clinical justification to override.
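The tiering can be expressed as a single routing function. The sketch below is illustrative only: the function name and the tier labels are assumptions, and a score of exactly 0.6 is treated as batched because the text reserves interruption for scores above 0.6.

```python
def route_alert(relevance: float) -> str:
    """Return the delivery tier for a relevance score in [0.0, 1.0].

    Tier labels are hypothetical; the cut-offs come from the text.
    """
    if not 0.0 <= relevance <= 1.0:
        raise ValueError(f"relevance out of range: {relevance}")
    if relevance > 0.85:
        return "hard_stop"         # override requires clinical justification
    if relevance > 0.6:
        return "interrupt"         # shown immediately
    if relevance >= 0.3:
        return "batch"             # held for a natural workflow pause
    return "suppress_and_log"      # logged but not displayed
```

For example, `route_alert(0.72)` falls in the interrupt tier, while `route_alert(0.2)` is logged without being shown.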

Layer 4: Duplicate Order Detection and Clinical Guideline Reminders

The system identifies and consolidates duplicate orders across care team members, presents evidence-based guideline reminders at the point of decision-making, and integrates pharmacy verification workflows for high-risk medications.
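One simple way to picture duplicate detection across care team members is to compare each new order against the most recent order for the same patient and medication. The sketch below assumes a hypothetical `Order` record and a 24-hour look-back window; neither the field names nor the window length come from the project itself.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass(frozen=True)
class Order:
    order_id: str
    patient_id: str
    medication_code: str  # hypothetical normalized medication identifier
    ordered_by: str
    ordered_at: datetime


def find_duplicates(orders, window=timedelta(hours=24)):
    """Return (earlier, later) pairs for the same patient and medication
    placed within `window` of each other, regardless of who ordered them."""
    last_seen = {}
    duplicates = []
    for order in sorted(orders, key=lambda o: o.ordered_at):
        key = (order.patient_id, order.medication_code)
        previous = last_seen.get(key)
        if previous is not None and order.ordered_at - previous.ordered_at <= window:
            duplicates.append((previous, order))
        last_seen[key] = order
    return duplicates
```

A production rule would also need to account for dose, route, and intentional repeat orders; this sketch only shows the grouping idea.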

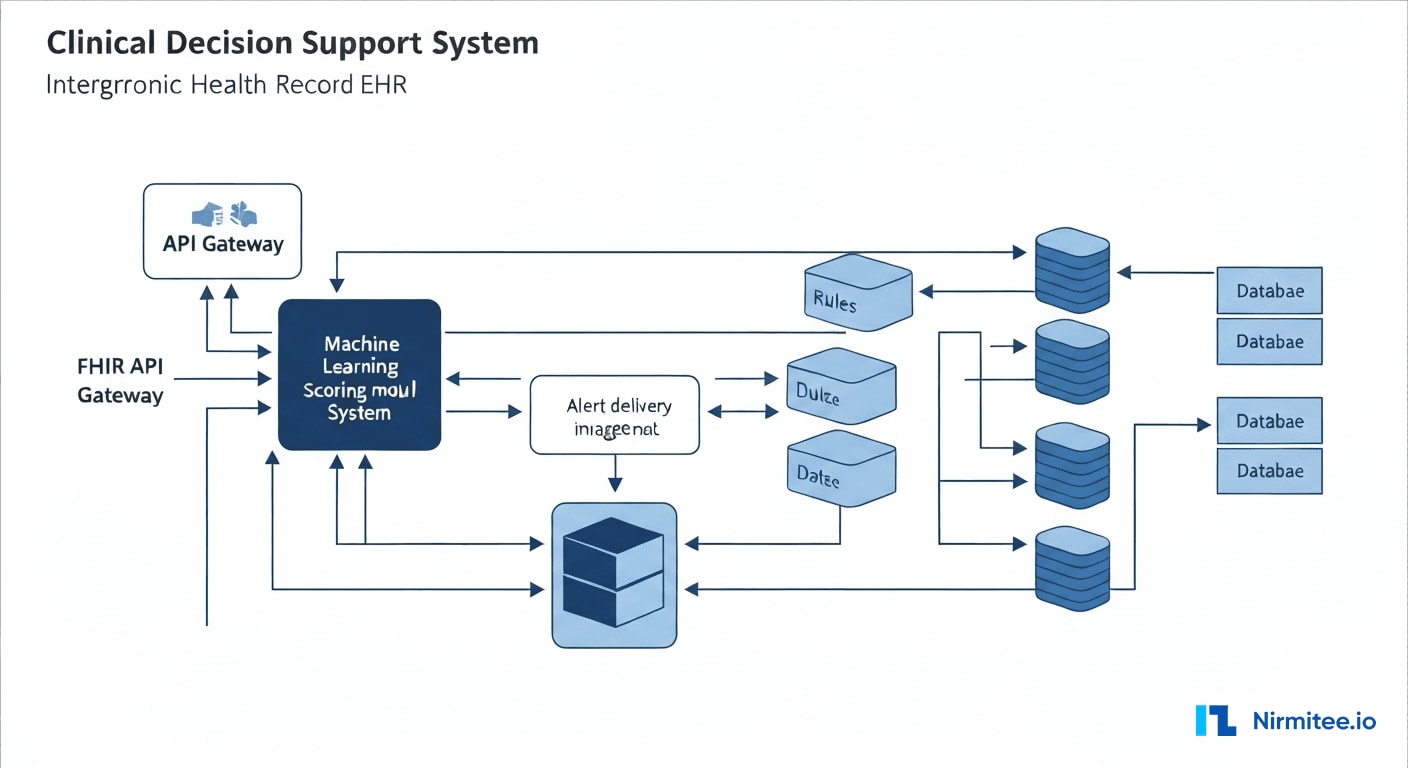

System Architecture

The CDSS architecture follows a microservices pattern integrated with the hospital's Epic EHR via FHIR R4 APIs and HL7v2 ADT/ORM interfaces. Real-time event streaming ensures sub-second alert delivery.

Technology Stack

| Component | Technology | Purpose |
|---|---|---|
| EHR Integration | Epic FHIR R4 + HL7v2 ADT/ORM | Bidirectional clinical data exchange |
| Event Streaming | Apache Kafka + FHIR Subscriptions | Real-time clinical event processing |
| Rules Engine | Drools + Custom CDSS Engine | Clinical rule evaluation and management |
| ML Pipeline | Python (scikit-learn, XGBoost) + MLflow | Alert relevance scoring and model lifecycle |
| Sepsis Model | TensorFlow Serving + LSTM ensemble | Continuous sepsis risk prediction |
| Knowledge Base | PostgreSQL + Neo4j (drug interaction graph) | Clinical knowledge and interaction storage |
| Alert Delivery | WebSocket + Push Notifications | Real-time provider notification |
| API Gateway | Kong + OAuth 2.0 / SMART on FHIR | Secure API management and authorization |
| Monitoring | Prometheus + Grafana + PagerDuty | System health and alert performance tracking |
| Infrastructure | Kubernetes (on-premise) + Redis | Container orchestration and caching |

Data Flow

The clinical data pipeline processes events in three stages:

- Ingestion: HL7v2 messages and FHIR resources stream through Kafka topics partitioned by patient ID, ensuring ordered processing per patient.

- Evaluation: The rules engine evaluates incoming data against 2,400+ clinical rules. Each rule fires independently and produces candidate alerts.

- Scoring and Delivery: Candidate alerts pass through the ML scoring pipeline. The model evaluates contextual features in <50ms, scores the alert, and routes it through the appropriate delivery channel based on score and provider preferences.
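The ordering guarantee in the ingestion stage comes from keying events by patient ID: every event for a given patient lands on the same partition, and a partition is consumed in order. The sketch below imitates that idea in plain Python. It is not Kafka client code; the SHA-256 hash is a stand-in for Kafka's own key hashing, and the partition count and event shape are assumptions.

```python
import hashlib
from collections import defaultdict

NUM_PARTITIONS = 12  # hypothetical partition count


def partition_for(patient_id: str, num_partitions: int = NUM_PARTITIONS) -> int:
    """Deterministically map a patient ID to a partition index."""
    digest = hashlib.sha256(patient_id.encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big") % num_partitions


def assign_to_partitions(events):
    """Append each event to its patient's partition, preserving arrival order."""
    partitions = defaultdict(list)
    for event in events:
        partitions[partition_for(event["patient_id"])].append(event)
    return partitions
```

Because the mapping depends only on the patient ID, two events for the same patient can never be reordered by landing on different partitions, although events for different patients may share a partition.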

Results: Measurable Clinical Impact

Key Performance Metrics

| Metric | Before | After | Improvement |
|---|---|---|---|
| Alerts per provider per shift | 127 | 51 | 60% reduction |
| Alert override rate | 89% | 34% | 62% improvement |
| Clinically relevant alerts | 40% | 94% | 135% improvement |
| Sepsis detection lead time | Baseline (SIRS criteria) | 4 hours earlier | +4 hours |
| Time to acknowledge alert | 4.2 minutes | 38 seconds | 85% faster |
| Missed critical drug interactions | 23/month | 0/month | 100% elimination |
| Near-miss events (alert-related) | 8/quarter | 0/quarter | 100% elimination |
| Provider satisfaction (NPS) | -42 | +38 | 80-point swing |
| Adverse drug events | 3/6 months | 0/6 months | 100% elimination |
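The percentage figures in the Improvement column are relative changes against the Before value, not percentage-point differences. A short sketch reproducing four of the rows (the function name is hypothetical; 4.2 minutes is converted to 252 seconds):

```python
def relative_change_pct(before: float, after: float) -> float:
    """Relative change from `before` to `after`, as a percentage of `before`."""
    if before == 0:
        raise ValueError("relative change is undefined for a zero baseline")
    return (after - before) / before * 100


rows = {
    "Alerts per provider per shift": (127, 51),
    "Alert override rate (%)": (89, 34),
    "Clinically relevant alerts (%)": (40, 94),
    "Time to acknowledge alert (s)": (4.2 * 60, 38),
}

for metric, (before, after) in rows.items():
    print(f"{metric}: {relative_change_pct(before, after):+.0f}%")
```

The four rows round to -60%, -62%, +135%, and -85%, matching the table. In percentage points, the override rate fell by 55 and the share of clinically relevant alerts rose by 54.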

Clinical Outcomes

The sepsis early warning system alone identified 47 patients in the first six months who met the ML-predicted sepsis criteria before traditional screening would have flagged them. Of these, 38 received the sepsis bundle within 1 hour of the ML alert — compared to a historical average of 3.2 hours after SIRS criteria were met. The estimated mortality benefit, based on published literature correlating time-to-treatment with outcomes, translates to approximately 6-8 additional lives saved annually.

Financial Impact

| Category | Annual Impact |
|---|---|
| Reduced adverse drug events (liability + treatment cost) | $420,000 savings |
| Earlier sepsis detection (reduced ICU days) | $680,000 savings |
| Provider time reclaimed (reduced alert management) | $310,000 value |
| Reduced duplicate orders | $95,000 savings |
| Total annual impact | $1,505,000 |

Implementation Timeline

| Phase | Duration | Key Deliverables |

|---|---|---|

| Discovery & Audit | Weeks 1-4 | Alert audit, provider interviews, baseline metrics, clinical workflow mapping |

| Architecture & EHR Integration | Weeks 5-10 | FHIR/HL7 integration, Kafka pipeline, rules engine foundation |

| ML Model Development | Weeks 8-16 | Historical data extraction, model training, validation with clinical team |

| Drug Interaction Module | Weeks 11-16 | Knowledge base integration, dose-aware scoring, pharmacist review workflow |

| Sepsis Early Warning | Weeks 14-20 | Vital signs streaming, LSTM model deployment, escalation pathways |

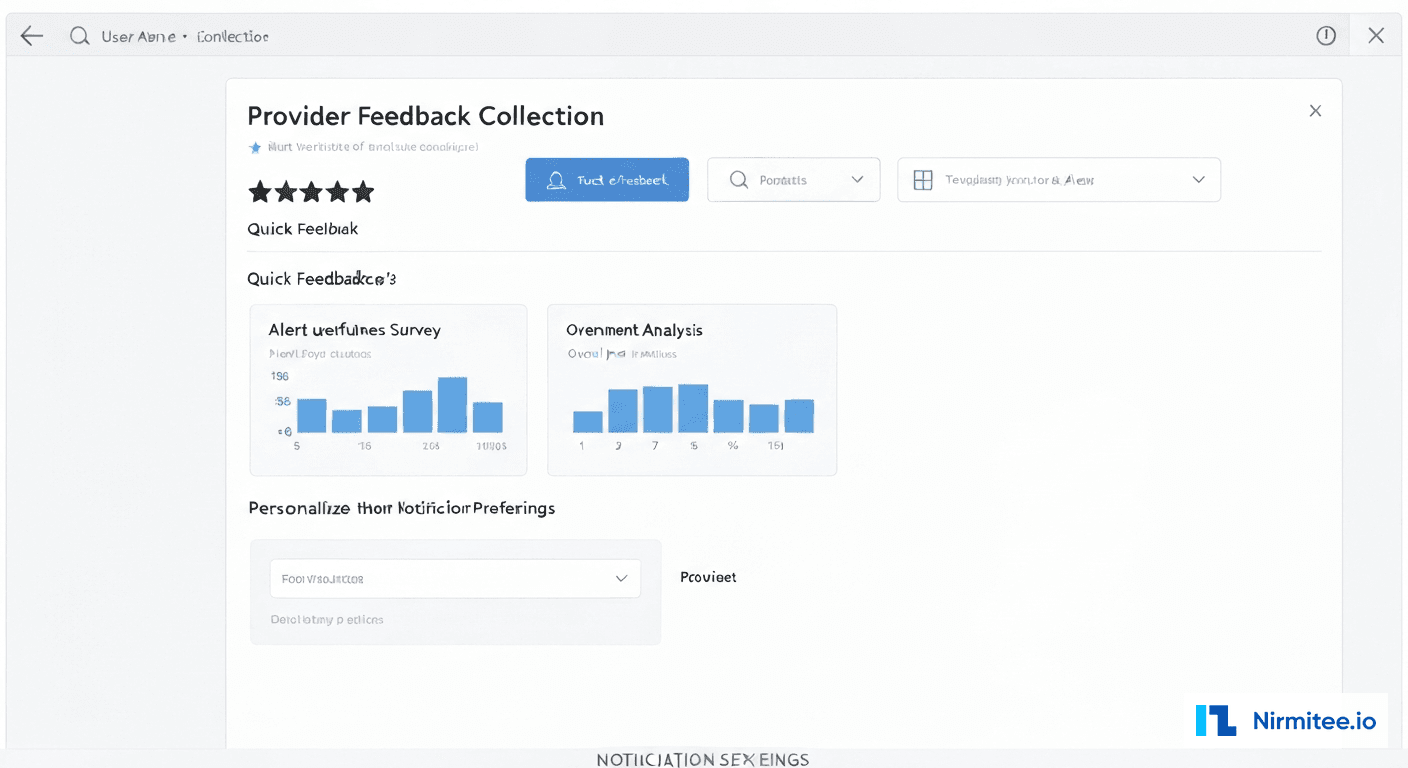

| Provider UX & Feedback | Weeks 17-22 | Alert presentation redesign, feedback collection, preference management |

| Pilot (2 units) | Weeks 23-28 | ICU and Med-Surg pilot, real-time tuning, safety validation |

| Hospital-wide Rollout | Weeks 29-34 | Phased deployment to all units, provider training, go-live support |

Lessons Learned

1. Clinical Validation Cannot Be Automated

The ML model achieved 96% accuracy on historical data during development, but clinical accuracy and clinical acceptability are different things. We established a Pharmacy & Therapeutics subcommittee that reviewed every suppression rule and override threshold. Three rules that the model suggested suppressing were reinstated after pharmacist review identified edge cases where they remained critical.

2. Provider Trust Is Earned in Increments

We initially deployed the system in "shadow mode" — running alongside the legacy CDSS for 4 weeks, logging what the new system would have done without actually changing provider experience. This generated the safety data needed for clinical leadership sign-off and gave providers confidence that the new system had been validated against their real workflow.

3. Alert Fatigue Is a Symptom, Not the Disease

Reducing alert volume was necessary but not sufficient. The real breakthrough came from redesigning how alerts are presented — using progressive disclosure, contextual grouping, and tiered interruption levels. A well-timed, well-presented alert is worth more than a hundred poorly timed ones.

4. Continuous Learning Requires Continuous Governance

The ML model retrains monthly on new alert-action-outcome data. But model drift in clinical settings can have patient safety implications. We implemented a clinical AI governance framework with automated drift detection, mandatory pharmacist review of threshold changes, and quarterly model performance reports to the medical executive committee.
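The case study does not name the drift metric in use. As one illustration of what automated drift detection can look like, the sketch below computes the Population Stability Index (PSI) between a baseline sample of relevance scores and a recent sample. PSI is a swapped-in example technique, and the bin count and the 0.2 review threshold are conventional rules of thumb rather than values from the project.

```python
import math


def psi(expected, actual, bins=10, eps=1e-6):
    """Population Stability Index between two samples of scores in [0, 1],
    using equal-width bins. Larger values mean the distributions differ more."""
    if not expected or not actual:
        raise ValueError("both samples must be non-empty")

    def proportions(values):
        counts = [0] * bins
        for v in values:
            if not 0.0 <= v <= 1.0:
                raise ValueError(f"score out of range: {v}")
            counts[min(int(v * bins), bins - 1)] += 1
        # Floor empty bins at eps so the logarithm below stays defined.
        return [max(c / len(values), eps) for c in counts]

    e = proportions(expected)
    a = proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ai, ei in zip(a, e))


PSI_REVIEW_THRESHOLD = 0.2  # assumed rule of thumb, not a project value


def needs_review(baseline_scores, recent_scores) -> bool:
    """Flag the model for human review when score drift exceeds the threshold."""
    return psi(baseline_scores, recent_scores) > PSI_REVIEW_THRESHOLD
```

In a governance workflow like the one described, a flag of this kind would open a review rather than change any threshold automatically.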

5. Sepsis Models Need Local Calibration

Published sepsis prediction models often underperform when deployed at a new institution because patient populations, documentation patterns, and care processes differ. We fine-tuned the base model on 18 months of local data, which improved sensitivity from 71% (off-the-shelf) to 89% while maintaining specificity above 92%.

Frequently Asked Questions

How does the ML-based alert scoring handle new medications or rare drug interactions?

New medications default to the highest alert tier until the system accumulates sufficient interaction data. The knowledge base is updated weekly from First Databank and Lexicomp feeds. For rare interactions with limited historical data, the system applies a conservative scoring policy, ensuring these alerts always reach the provider regardless of the ML score. The pharmacist review pipeline adds an additional safety layer for novel combinations.

Can this CDSS integrate with EHR systems other than Epic?

Yes. The architecture uses FHIR R4 as its primary integration standard, making it compatible with any FHIR-enabled EHR including Cerner (Oracle Health), MEDITECH, Allscripts, and athenahealth. The HL7v2 interface provides backward compatibility for systems not yet supporting FHIR. We have deployed similar implementations across Epic and Cerner environments with the same clinical outcomes.

How do you prevent the ML model from suppressing genuinely critical alerts?

Three safeguards prevent inappropriate suppression. First, a clinically defined "never suppress" list includes 142 alert types that always fire regardless of ML score (e.g., black-box warnings, life-threatening allergies, medications contraindicated in pregnancy). Second, the suppression threshold is set conservatively and requires Pharmacy & Therapeutics committee approval to modify. Third, every suppressed alert is logged and reviewed in monthly safety audits against patient outcomes.
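The first and third safeguards can be sketched as a guard that runs before any ML-based suppression. The alert type identifiers below are hypothetical stand-ins for three of the 142 entries, the 0.3 threshold is the suppression cut-off described in the scoring section, and the in-memory list stands in for whatever audit store the real system uses.

```python
# Hypothetical identifiers standing in for entries on the "never suppress" list.
NEVER_SUPPRESS = frozenset({
    "black_box_warning",
    "life_threatening_allergy",
    "pregnancy_contraindication",
})

SUPPRESSION_THRESHOLD = 0.3  # changes require P&T committee approval per the text

suppressed_log = []  # stand-in for the audit trail reviewed monthly


def should_display(alert_type: str, relevance: float) -> bool:
    """Decide whether an alert is shown; the ML score cannot hide protected types."""
    if alert_type in NEVER_SUPPRESS:
        return True
    if relevance < SUPPRESSION_THRESHOLD:
        suppressed_log.append((alert_type, relevance))
        return False
    return True
```

Checking the list before the score means a protected alert type is displayed even if the model assigns it a relevance of 0.0.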

What is the ROI timeline for implementing a system like this?

Based on this implementation, the system achieved positive ROI within 8 months of full deployment. The initial investment of approximately $1.2M (including EHR integration, ML development, and clinical validation) was offset by $1.5M in annual savings from reduced adverse events, earlier sepsis detection, reclaimed provider time, and eliminated duplicate orders. Ongoing operational costs of approximately $180K/year for model maintenance and clinical governance are covered within the first quarter of annual savings.
