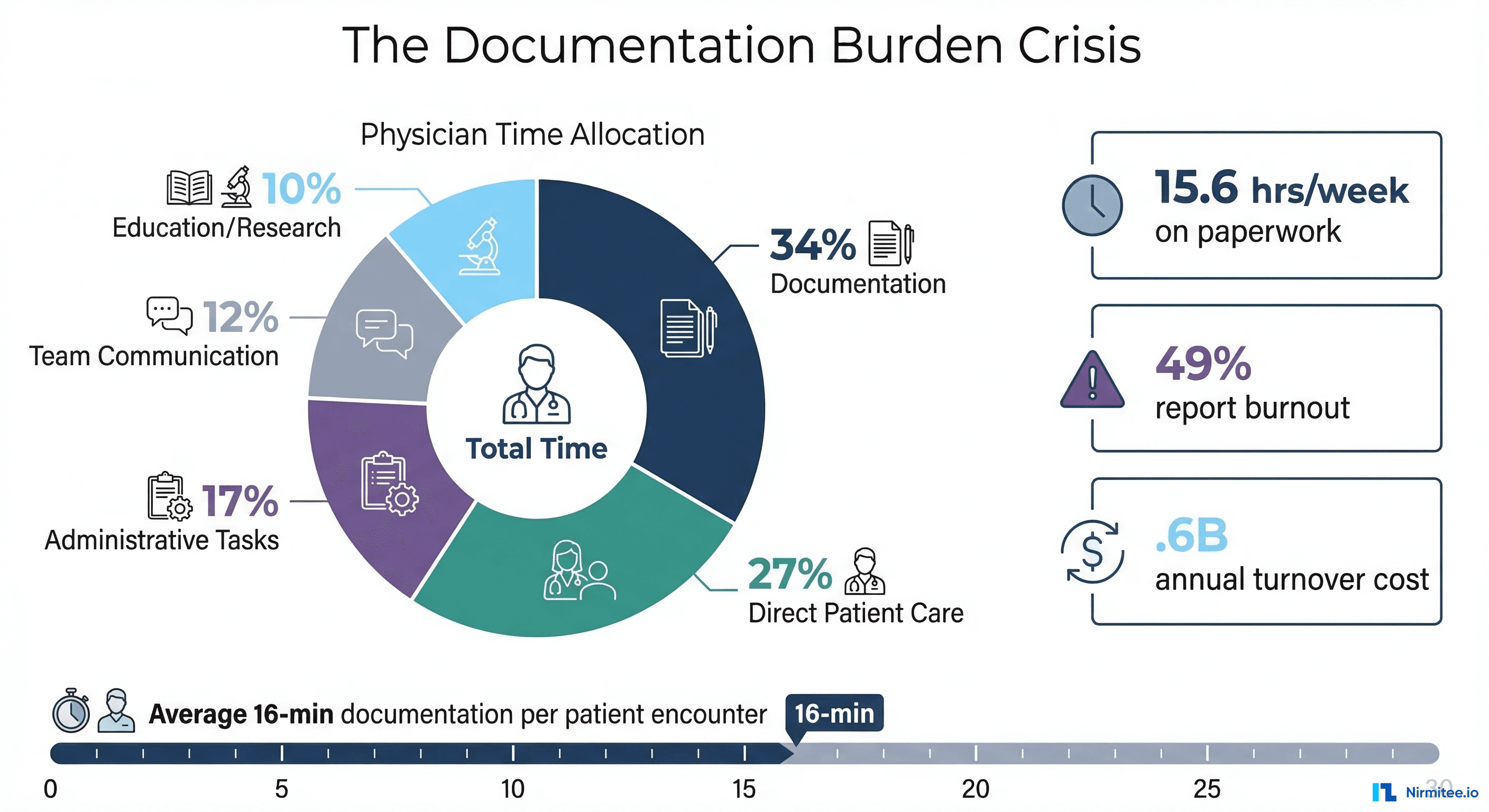

Every physician enters medicine to heal. Yet for most, the reality is starkly different. According to the Medscape 2025 Physician Burnout Report, 49% of physicians report feeling burned out, and the single largest contributor is not patient complexity or long hours — it is documentation.

The average physician spends 15.6 hours per week on paperwork and administrative tasks, with clinical documentation consuming the lion's share. That translates to roughly 16 minutes of documentation for every patient encounter — nearly twice the time spent on direct clinical care. Across a typical 20-patient day, physicians spend over 5 hours writing notes, often continuing well into the evening in what the industry calls "pajama time" — the 1-2 hours nightly spent completing charts at home after their families have gone to bed.

The financial toll is staggering. Physician burnout costs the US healthcare system an estimated $4.6 billion annually in turnover, reduced productivity, and early retirement. For a health system with 50 physicians, that represents roughly $2.3 million per year in burnout-related losses. And the problem is accelerating: a 2024 JAMA Network Open study found that physicians who spend more than 50% of their workday on EHR tasks are 2.8 times more likely to leave clinical practice within two years.

The documentation burden has created a vicious cycle. Health systems lose experienced clinicians to burnout, increasing workload on remaining physicians, accelerating their burnout, and driving further attrition. Administrative demands have become so consuming that medical students and residents are now citing documentation burden as a primary reason for choosing specialties with lower note-writing requirements — or leaving clinical medicine altogether.

This is the crisis that ambient AI scribes are designed to solve — and in 2026, they are no longer experimental. They are becoming standard infrastructure across US health systems.

What Is an Ambient AI Scribe?

An ambient AI scribe is an artificial intelligence system that passively listens to the physician-patient conversation during a clinical encounter, then automatically generates a structured clinical note — typically in SOAP (Subjective, Objective, Assessment, Plan) format — that is ready for physician review and insertion into the electronic health record (EHR).

Unlike traditional medical transcription services or human scribes, ambient AI systems operate without any active input from the physician. There is no dictation, no templates to fill, no checkboxes to click. The physician simply talks to the patient, and the AI handles everything else.

The key distinction from dictation-based documentation tools is the word "ambient." These systems do not require the physician to narrate their thought process aloud or speak in a structured format. They understand the natural flow of a clinical conversation — including interruptions, tangents, patient questions, and physical exam findings mentioned in passing — and extract the medically relevant information.

Consider what this means in practice. A cardiologist conducting a follow-up visit for a patient with atrial fibrillation does not need to pause the conversation to dictate: "Patient reports compliance with apixaban 5mg twice daily, denies bleeding episodes, reports occasional palpitations lasting under 30 seconds." Instead, the physician asks natural questions — "How have you been doing with the blood thinner? Any bleeding or bruising? Still getting those fluttering feelings?" — and the AI extracts the clinically relevant information, maps it to the correct medical terminology, and generates the note.

This is fundamentally different from the weekend AI scribe demo that any developer can wire together with Whisper and GPT-4. Production ambient AI scribes handle speaker diarization (distinguishing physician from patient voice), medical terminology recognition, structured data extraction, and EHR-native note formatting — challenges that take 6-12 months of dedicated engineering to solve at clinical grade.

How Ambient AI Clinical Documentation Agents Work

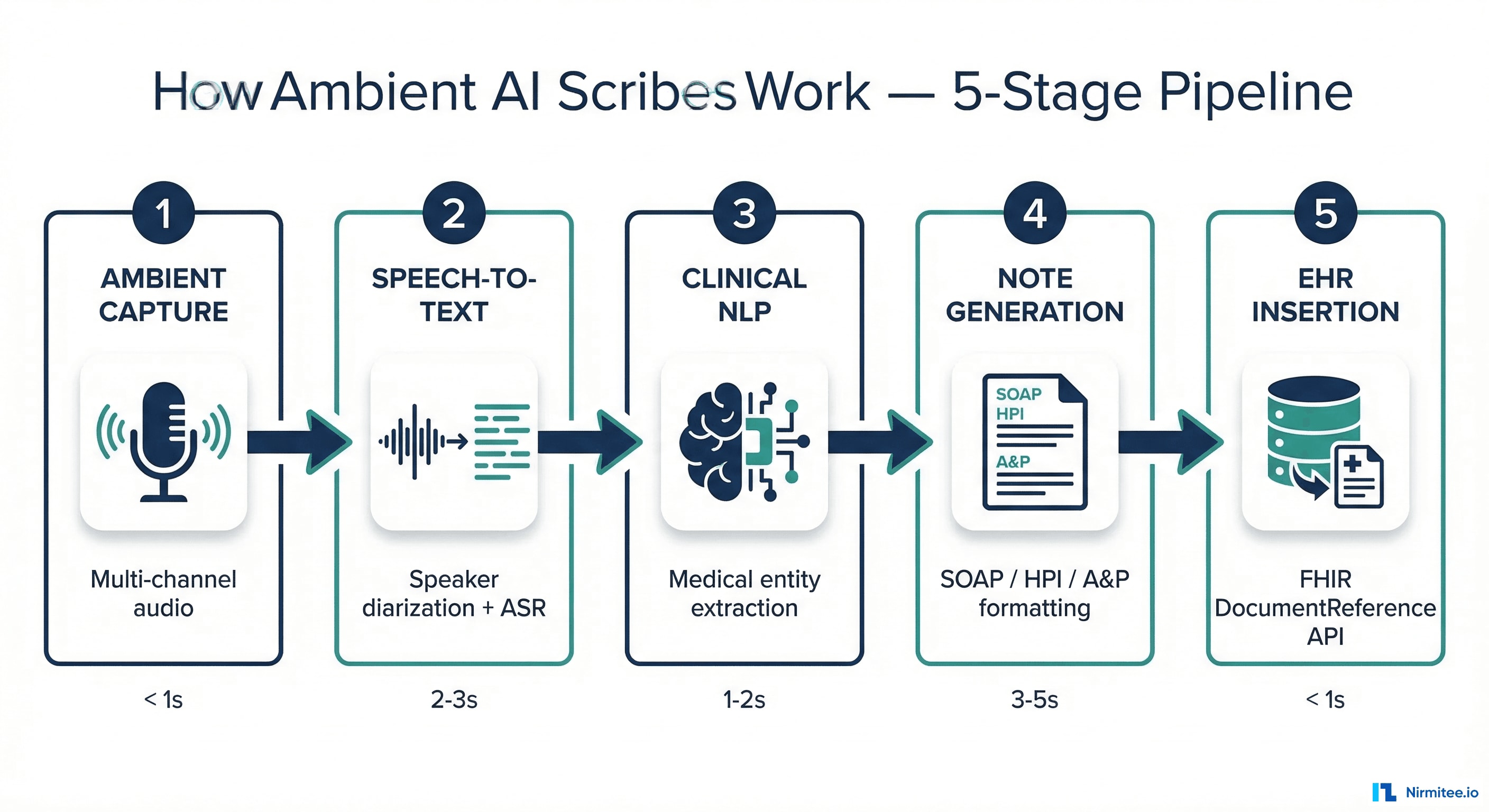

Understanding the technical pipeline behind ambient AI scribes is critical for any CMIO or CTO evaluating these systems. The process involves five distinct stages, each with its own accuracy requirements, latency constraints, and failure modes.

Stage 1: Ambient Audio Capture

The system captures audio from the clinical encounter using one or more microphones — typically a smartphone app, a dedicated device on the exam room desk, or integrated hardware in the clinical workstation. Multi-channel capture with noise cancellation is essential for handling the acoustically challenging environment of a busy clinic: overhead pages, hallway conversations, equipment sounds, and multiple speakers in the room.

Critical technical decisions at this stage include sample rate (16kHz minimum for medical speech recognition), noise suppression algorithms (adaptive beamforming for multi-source environments), and voice activity detection (to avoid processing silence or ambient noise). Enterprise systems like DAX Copilot use dedicated edge processing to handle initial audio preprocessing before streaming to cloud inference, reducing bandwidth requirements and maintaining audio quality.
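To make the voice activity detection step concrete, here is a minimal sketch of an energy-based detector over 16 kHz mono PCM. It is an illustration only: the 30 ms frame length, the RMS threshold of 500, and the function names are assumptions, and production systems typically use trained VAD models rather than a fixed energy threshold.

```python
import math

SAMPLE_RATE_HZ = 16_000   # minimum sample rate cited above for medical ASR
FRAME_MS = 30             # hypothetical frame length
FRAME_SAMPLES = SAMPLE_RATE_HZ * FRAME_MS // 1000


def frame_rms(frame: list[int]) -> float:
    """Root-mean-square amplitude of one frame of 16-bit PCM samples."""
    if not frame:
        return 0.0
    return math.sqrt(sum(s * s for s in frame) / len(frame))


def speech_frames(samples: list[int], threshold: float = 500.0) -> list[bool]:
    """Return one flag per complete frame: True when RMS energy exceeds threshold."""
    flags = []
    for start in range(0, len(samples) - FRAME_SAMPLES + 1, FRAME_SAMPLES):
        frame = samples[start:start + FRAME_SAMPLES]
        flags.append(frame_rms(frame) > threshold)
    return flags


# Synthetic input: one frame of silence followed by one frame of a 440 Hz tone.
silence = [0] * FRAME_SAMPLES
tone = [
    int(8000 * math.sin(2 * math.pi * 440 * n / SAMPLE_RATE_HZ))
    for n in range(FRAME_SAMPLES)
]
flags = speech_frames(silence + tone)
```

Only frames flagged True would be forwarded to speech recognition, which is how silence and low-level room noise are kept out of the downstream pipeline.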

The audio capture stage also raises the first compliance question: where is the audio stored during processing, and when is it deleted? Best-practice implementations process audio in ephemeral memory, generate the transcript, and delete the original audio within minutes — never persisting raw recordings to disk.

Stage 2: Speech-to-Text with Speaker Diarization

The audio stream is transcribed into text using automatic speech recognition (ASR) models trained specifically on medical vocabulary. General-purpose ASR models fail on clinical conversations because they cannot reliably distinguish between "hypertension" and "hypotension," correctly transcribe medication dosages like "Metformin 500mg BID," or handle the rapid code-switching between clinical terminology and patient-friendly language that characterizes real exam room conversations.

Equally important is speaker diarization — the ability to distinguish who said what. The AI must correctly attribute "I have been having chest pain for three days" to the patient and "Let us order a troponin and an EKG" to the physician. Errors in diarization cascade into incorrect note generation, where patient-reported symptoms could be attributed as physician assessments or vice versa. Modern systems achieve 95-98% diarization accuracy in controlled environments, but performance degrades in rooms with three or more speakers (e.g., when a family member or interpreter is present).
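One common way to combine the two outputs is to assign each transcribed word to the speaker turn it overlaps most in time. The sketch below assumes the ASR engine emits word-level timestamps and the diarizer emits labeled turns. The `Word` and `Turn` types and the `attribute` function are hypothetical names for illustration, not any vendor's API.

```python
from dataclasses import dataclass


@dataclass
class Turn:
    speaker: str
    start: float  # seconds
    end: float


@dataclass
class Word:
    text: str
    start: float
    end: float


def overlap(a_start: float, a_end: float, b_start: float, b_end: float) -> float:
    """Length in seconds of the intersection of two time intervals."""
    return max(0.0, min(a_end, b_end) - max(a_start, b_start))


def attribute(words: list[Word], turns: list[Turn]) -> list[tuple[str, str]]:
    """Group words into (speaker, text) utterances by greatest time overlap.

    Assumes `turns` is non-empty. Words that overlap no turn are labeled
    "unknown" so that they can be surfaced for review instead of guessed.
    """
    utterances: list[tuple[str, str]] = []
    for w in words:
        best = max(turns, key=lambda t: overlap(w.start, w.end, t.start, t.end))
        has_overlap = overlap(w.start, w.end, best.start, best.end) > 0
        speaker = best.speaker if has_overlap else "unknown"
        if utterances and utterances[-1][0] == speaker:
            utterances[-1] = (speaker, utterances[-1][1] + " " + w.text)
        else:
            utterances.append((speaker, w.text))
    return utterances


turns = [Turn("patient", 0.0, 2.0), Turn("physician", 2.0, 4.0)]
words = [
    Word("chest", 0.2, 0.6), Word("pain", 0.7, 1.1),
    Word("order", 2.3, 2.7), Word("troponin", 2.8, 3.5),
]
utterances = attribute(words, turns)
```

Labeling unmatched words as "unknown" rather than forcing a speaker is a deliberate choice here: a misattributed symptom is more dangerous than one flagged for review.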

The latency requirement here is strict: the transcript must be generated within 2-3 seconds of each utterance to support real-time note generation. Batch processing after the encounter is technically simpler but creates a delay between visit completion and note availability that reduces physician adoption.

Stage 3: Clinical Natural Language Processing

The raw transcript undergoes medical NLP to extract structured clinical entities: symptoms, diagnoses, medications (with doses, routes, and frequencies), procedures, lab orders, physical exam findings, and clinical reasoning. This stage maps unstructured conversational language to standardized medical terminology — converting "my sugar has been running high" to "hyperglycemia" and linking it to the appropriate ICD-10 code (R73.9).

Named entity recognition (NER) models trained on clinical text identify medical concepts, while relation extraction models determine how those concepts relate — for example, connecting a medication ("lisinopril 10mg") with its indication ("hypertension") and status ("continue current dose"). Negation detection is equally critical: "no chest pain" must be documented as a pertinent negative, not as a positive finding of chest pain.

This stage is where the most clinically dangerous errors occur. A missed negation — documenting "chest pain" instead of "no chest pain" — can trigger inappropriate clinical interventions. An incorrect medication dose — "500mg" instead of "50mg" — has obvious patient safety implications. Clinical NLP accuracy must exceed 99% for safety-critical entities like medications and allergies.
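The negation problem can be illustrated with a small rule-based sketch in the spirit of NegEx-style algorithms: look for a negation cue shortly before the finding, and stop looking if a scope-ending word such as "but" intervenes. The cue lists, the five-token window, and the function names are assumptions chosen for illustration. Clinical-grade systems use far richer lexicons and trained models.

```python
import re

NEGATION_CUES = {"no", "not", "denies", "denied", "without"}
TERMINATORS = {"but", "however", "although"}   # words that end a negation's scope
WINDOW = 5                                     # hypothetical look-back, in tokens


def is_negated(tokens: list[str], index: int) -> bool:
    """Scan backwards from the finding for a cue, stopping at a terminator."""
    for tok in reversed(tokens[max(0, index - WINDOW):index]):
        if tok in TERMINATORS:
            return False
        if tok in NEGATION_CUES:
            return True
    return False


def tag_findings(sentence: str, findings: list[str]) -> dict[str, str]:
    """Label each finding that appears in the sentence as negated or affirmed."""
    tokens = re.findall(r"[a-z]+", sentence.lower())
    result: dict[str, str] = {}
    for finding in findings:
        target = finding.lower().split()
        for i in range(len(tokens) - len(target) + 1):
            if tokens[i:i + len(target)] == target:
                result[finding] = "negated" if is_negated(tokens, i) else "affirmed"
                break
    return result


tags = tag_findings(
    "Patient denies chest pain but reports palpitations.",
    ["chest pain", "palpitations"],
)
```

Without the terminator check, "denies" would wrongly negate "palpitations" as well, which is the kind of scope error that makes this stage safety-critical.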

Stage 4: Structured Note Generation

The extracted clinical entities and their relationships are assembled into a structured clinical note following the documentation format required by the practice — SOAP notes, History and Physical (H&P), or specialty-specific templates. This is where large language models (LLMs) play their most visible role, generating natural-language prose that reads like a physician wrote it.

The note must include not just what was discussed, but also medical decision-making (MDM) documentation that supports the selected evaluation and management (E/M) billing code. Incomplete MDM documentation is the #1 cause of claim denials, making this stage directly revenue-relevant. A well-generated note captures the physician's assessment logic: why a particular diagnosis was considered, what differential diagnoses were ruled out, and what the risk-benefit analysis was for the chosen treatment plan.

Specialty-specific templates add another layer of complexity. A cardiology progress note has different documentation requirements than a psychiatry intake evaluation or a surgical operative report. Production systems must support dozens of template formats, each with specialty-specific sections, terminology, and documentation standards.

Stage 5: EHR Insertion via FHIR

The generated note must flow back into the EHR as a native clinical document — not a text blob pasted into a free-text field. Modern ambient AI systems use the FHIR DocumentReference resource to insert notes as structured clinical documents with proper metadata: author (the supervising physician), encounter reference, document type (progress note, H&P), and creation timestamp.

This is where Voice AI integration meets clinical workflow. A note that appears in a separate application requiring copy-paste adds friction and reduces adoption. Notes must appear in the same EHR workflow the physician already uses — directly in the encounter, ready for review and signature. The difference between a note appearing automatically in the encounter and one that requires three clicks to import can mean the difference between 90% adoption and 30% adoption.

The Vendor Landscape: Who Is Building Ambient AI Scribes in 2026?

The ambient AI scribe market has consolidated around four major platforms, each with distinct technical approaches, EHR integration depth, and target customer profiles. For health system buyers, understanding these differences is essential to selecting the right platform for your organizational context, specialty mix, and technical infrastructure.

DAX Copilot (Microsoft/Nuance)

Microsoft's DAX Copilot, built on the Nuance acquisition, is the incumbent enterprise leader with the deepest EHR integration footprint. DAX integrates natively with Epic, Oracle Health (Cerner), MEDITECH, and over a dozen other EHR systems. Its primary advantage is the Microsoft ecosystem play — organizations already invested in Azure, Teams, and Microsoft 365 get seamless ambient AI that extends across their technology stack.

DAX Copilot processes encounters through a combination of ambient listening and AI-powered note generation, with notes appearing directly in the EHR within minutes of the encounter. It supports over 550,000 clinicians across major health systems and is particularly strong in large, multi-specialty academic medical centers where enterprise-wide licensing makes economic sense.

The trade-off: DAX is priced at an enterprise level that makes it cost-prohibitive for smaller practices, and its dependency on the Microsoft cloud stack may not align with organizations using AWS or GCP infrastructure. Integration complexity is also higher for non-standard EHR configurations.

Abridge

Abridge has emerged as the clinical quality leader, earning Best in KLAS awards in both 2025 and 2026 for Ambient AI. Its proprietary Contextual Reasoning Engine generates notes that go beyond simple transcription — it understands clinical context, identifies documentation gaps, and produces notes that support accurate billing codes.

The customer list reads like a who's who of US academic medicine: Johns Hopkins, Kaiser Permanente, Duke Health, Mayo Clinic, Emory Healthcare, Sutter Health, and UPMC. Abridge integrates deeply within Epic, accessible from Haiku to Hyperdrive workflows without requiring physicians to leave their normal EHR environment.

Documented outcomes include a 78% decrease in cognitive load at Christus Health, 90% of clinicians at Corewell Health reporting more undivided patient attention, a 53% improvement in professional fulfillment at the University of Vermont Health Network, and 86% of clinicians at Lee Health reporting less after-hours documentation work. Abridge also offers dedicated products for nursing documentation and revenue cycle optimization, extending the ambient AI value beyond physician notes.

DeepScribe

DeepScribe has carved a niche as the specialty-focused leader, particularly dominant in oncology where it captures 4 million oncology visits annually. Its KLAS Spotlight Report score of 98.8 out of 100 reflects exceptional specialty-specific accuracy that generalist platforms struggle to match.

What differentiates DeepScribe technically is its Customization Studio — a platform that learns individual provider documentation styles and preferences, adapting note generation to match how each physician writes. This addresses one of the biggest adoption barriers: physicians rejecting AI-generated notes that do not match their personal documentation style. A physician who writes terse, bullet-point notes will get terse, bullet-point notes; one who writes narrative prose will get narrative prose.

DeepScribe also offers AI pre-charting (preparing patient context before the visit including HCC code insights) and AI coding (automated E/M, HCC, and ICD-10 coding with audit-ready documentation). It integrates with athenahealth, eClinicalWorks, Epic, and specialty-specific platforms like Flatiron and Ontada for oncology workflows. Its SOC 2 attestation and HIPAA compliance program provide enterprise-grade security assurance.

Nabla

Nabla has achieved the broadest adoption by clinician count, with over 85,000 clinicians across 150+ health organizations processing more than 20 million patient encounters annually. Its approach emphasizes accessibility and ease of deployment, making it particularly attractive to mid-size practices and health systems that need ambient AI without enterprise-scale implementation projects.

Nabla's documented outcomes show 55% of clinicians saving at least 1 hour daily, a 1.5x increase in monthly appointment capacity, and a 27% reduction in clinician burnout. It supports 50+ medical specialties and integrates with Epic, athenahealth, Oracle Health, NextGen, and Greenway Health.

Its compliance posture is among the strongest: HIPAA and GDPR compliance, SOC 2 Type 2 attestation, and ISO 27001 certification, with a firm commitment to not training models on user data and optional audio retention only in de-identified form. For health systems with international operations or European data processing requirements, Nabla's GDPR compliance is a significant differentiator. As Dr. Amelia Stegeman noted in a published testimonial, Nabla generates notes that are "concise, well-organized, and legally robust," highlighting accuracy without fabricated diagnoses.

FHIR Integration: How AI-Generated Notes Enter the EHR

For CTOs and integration architects, the most technically consequential decision in ambient AI deployment is how the generated note flows back into the EHR. The industry is converging on FHIR R4 as the standard integration protocol, but the implementation details vary significantly across vendors and determine both the clinical utility and the long-term scalability of the deployment.

The FHIR DocumentReference Approach

The FHIR DocumentReference resource is the standard mechanism for inserting AI-generated clinical notes into the EHR. A properly constructed DocumentReference includes:

- status: current (for active notes)

- docStatus: preliminary (for notes awaiting physician review) or final (once the physician has signed)

- type: LOINC code identifying the document type (e.g., 11506-3 for Progress Note, 34117-2 for History and Physical)

- subject: Reference to the Patient resource

- author: Reference to the Practitioner who will sign the note — critically, this must be the physician, not the AI system

- content: The note itself, carried in content.attachment either as inline base64-encoded data or as a URL pointing to the stored document

- context.encounter: Reference to the specific clinical encounter that generated this note

- context.period: The time period of the encounter for accurate chronological ordering
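The fields listed above can be assembled into a FHIR R4 DocumentReference payload roughly as follows. This is a sketch under stated assumptions: the function name and the resource IDs are hypothetical, the note is sent as inline base64 plain text, and the draft state is expressed through the R4 docStatus element, since in R4 status itself only takes current, superseded, or entered-in-error.

```python
import base64
import json


def build_document_reference(
    patient_id: str,
    practitioner_id: str,
    encounter_id: str,
    note_text: str,
    period_start: str,
    period_end: str,
) -> dict:
    """Assemble a FHIR R4 DocumentReference for a draft progress note."""
    encoded = base64.b64encode(note_text.encode("utf-8")).decode("ascii")
    return {
        "resourceType": "DocumentReference",
        "status": "current",
        "docStatus": "preliminary",  # draft awaiting physician review
        "type": {
            "coding": [{
                "system": "http://loinc.org",
                "code": "11506-3",
                "display": "Progress note",
            }]
        },
        "subject": {"reference": f"Patient/{patient_id}"},
        # The author is the physician who will sign, not the AI system.
        "author": [{"reference": f"Practitioner/{practitioner_id}"}],
        "content": [{
            "attachment": {"contentType": "text/plain", "data": encoded}
        }],
        "context": {
            "encounter": [{"reference": f"Encounter/{encounter_id}"}],
            "period": {"start": period_start, "end": period_end},
        },
    }


doc = build_document_reference(
    "123", "456", "789",
    "S: Denies bleeding. Occasional palpitations.",
    "2026-01-15T09:00:00Z", "2026-01-15T09:20:00Z",
)
payload = json.dumps(doc, indent=2)
```

The serialized payload would be sent in a POST to the EHR's DocumentReference endpoint. Individual EHR vendors may require additional profile-specific fields, so this shape should be checked against the target system's documentation.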

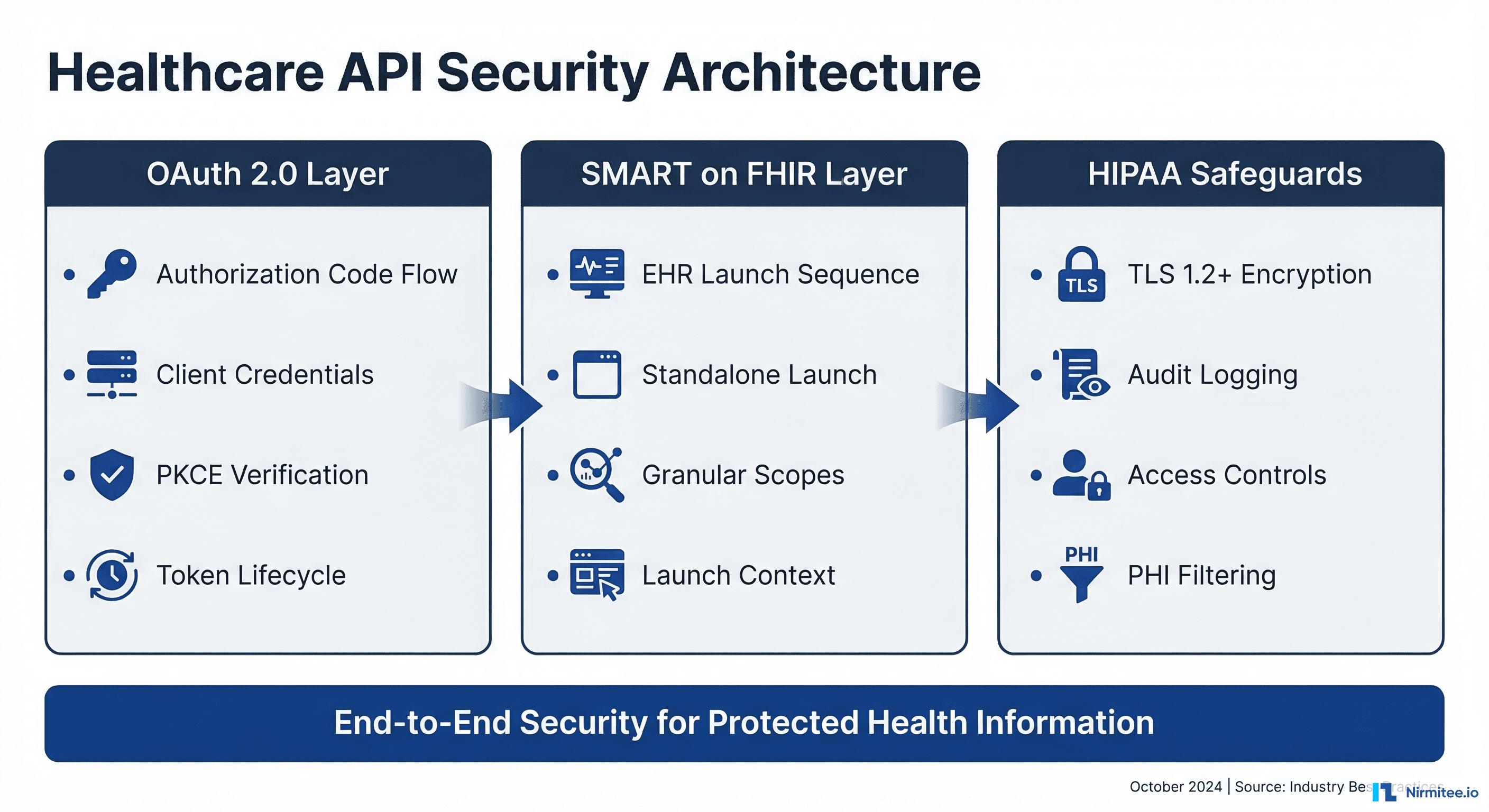

SMART on FHIR Authentication

The ambient AI system must authenticate to the EHR's FHIR API using SMART on FHIR, the OAuth 2.0-based authorization framework adopted by CMS and ONC in rules implementing the 21st Century Cures Act. In practice, the AI scribe acts as a SMART backend service, authorized through the OAuth 2.0 client credentials grant with system-level scopes, with access limited to creating DocumentReference resources for encounters where the authorizing physician is the attending.
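As a rough sketch of the backend-service flow, the client builds a short-lived JWT assertion, signs it with its private key, and exchanges it at the EHR's token endpoint. The code below only constructs the claims and the form-encoded request body. Signing (RS384 or ES384 in the SMART Backend Services specification) is left to a JWT library, and the client ID, token URL, and scope string are placeholder assumptions.

```python
import time
import uuid
from typing import Optional
from urllib.parse import urlencode


def assertion_claims(
    client_id: str,
    token_url: str,
    now: Optional[int] = None,
    lifetime_s: int = 300,
) -> dict:
    """Claims for the client assertion JWT (to be signed separately)."""
    issued = int(time.time()) if now is None else now
    return {
        "iss": client_id,            # issuer and subject are both the client ID
        "sub": client_id,
        "aud": token_url,            # audience is the token endpoint URL
        "exp": issued + lifetime_s,  # keep the assertion short-lived
        "jti": str(uuid.uuid4()),    # unique ID to prevent replay
    }


def token_request_body(
    signed_assertion: str,
    scope: str = "system/DocumentReference.write",
) -> str:
    """Form-encoded body for the client credentials token request."""
    return urlencode({
        "grant_type": "client_credentials",
        "scope": scope,
        "client_assertion_type":
            "urn:ietf:params:oauth:client-assertion-type:jwt-bearer",
        "client_assertion": signed_assertion,
    })


claims = assertion_claims("scribe-client", "https://ehr.example.org/token", now=1_000)
body = token_request_body("header.payload.signature")  # placeholder, not a real JWT
```

Requesting only a write scope on DocumentReference reflects the least-privilege principle described above. The exact scope syntax depends on which SMART version the EHR supports.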

For health systems already building FHIR-native integrations, this is a familiar pattern. For those still relying on HL7v2 ADT feeds and custom EHR APIs, ambient AI adoption may accelerate their broader interoperability modernization journey — providing a tangible clinical use case that justifies the infrastructure investment in modern API-based integration.

The Structured vs. Unstructured Debate

A key architectural decision is whether AI-generated notes should be inserted as unstructured narrative text (a blob of prose in the note field) or as structured FHIR resources — individual Condition, MedicationStatement, Procedure, and Observation resources that populate discrete EHR fields and enable downstream computation.

Structured insertion is technically superior: it enables downstream analytics, clinical decision support, quality measure reporting, and population health management. A diagnosis extracted and coded as a structured Condition resource can trigger care gap alerts, update problem lists, and feed quality dashboards automatically. However, it requires the AI system to extract and codify clinical data with near-perfect accuracy — a standard that current systems approach but have not fully achieved for all entity types.

Most production deployments use a hybrid approach: unstructured narrative for the clinical note plus structured codes for high-confidence entities like primary diagnoses and active medications. This pragmatic middle ground delivers the narrative documentation physicians need while providing structured data for the most impactful downstream use cases.
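The hybrid approach amounts to a routing rule: entities of certain kinds that clear a confidence bar are written as discrete resources, and everything else stays in the narrative only. The sketch below illustrates that rule. The `Entity` type, the 0.95 threshold, and the set of structured kinds are hypothetical values for illustration, not figures from any vendor.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Entity:
    kind: str                 # e.g. "diagnosis", "medication", "symptom"
    text: str
    confidence: float         # extractor's confidence, 0.0 to 1.0
    code: Optional[str] = None


STRUCTURED_KINDS = {"diagnosis", "medication"}  # assumed high-value entity types
THRESHOLD = 0.95                                # hypothetical confidence bar


def split_entities(entities: list[Entity]) -> tuple[list[Entity], list[Entity]]:
    """Partition entities into (write as structured data, keep in narrative only)."""
    structured: list[Entity] = []
    narrative_only: list[Entity] = []
    for e in entities:
        if e.kind in STRUCTURED_KINDS and e.confidence >= THRESHOLD:
            structured.append(e)
        else:
            narrative_only.append(e)
    return structured, narrative_only


entities = [
    Entity("diagnosis", "atrial fibrillation", 0.99, code="I48.91"),
    Entity("medication", "apixaban 5 mg twice daily", 0.97),
    Entity("diagnosis", "hyperglycemia", 0.80, code="R73.9"),
    Entity("symptom", "palpitations", 0.99),
]
structured, narrative_only = split_entities(entities)
```

Every entity still appears in the narrative note. The partition only decides which ones are additionally written to discrete EHR fields.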

Accuracy, Hallucination, and the Trust Problem

The most important question a CMIO should ask any ambient AI vendor is: "What is your hallucination rate, and how do you measure it?"

LLM-based note generation carries an inherent risk of clinical hallucination — the AI generating medically plausible but factually incorrect content. This might manifest as:

- A medication the patient is not taking appearing in the medication reconciliation section

- A symptom discussed in a previous visit being attributed to the current encounter

- A physical exam finding being fabricated when it was never performed

- A diagnosis code being assigned based on clinical reasoning the physician did not express

- Family history details being invented to fill expected template sections

- Vital signs being approximated when they were not actually taken during the encounter

These are not hypothetical scenarios. Every ambient AI vendor has documented instances of hallucination in their clinical validation studies. The question is not whether hallucinations occur, but how the system detects, flags, and prevents them from reaching the signed note.

Production-grade systems employ multiple safeguards:

- Provenance linking: Every statement in the generated note is linked back to the specific portion of the transcript that supports it. If the AI cannot cite a source utterance, the statement is flagged for physician review or omitted entirely.

- Confidence scoring: Each clinical assertion carries a confidence score. Low-confidence items are highlighted visually in the note review interface, drawing the physician's attention to statements that need verification.

- Temporal grounding: The system only generates content from the current encounter transcript, not from historical data, unless explicitly prompted by the physician's reference to prior records. This prevents the common hallucination pattern of incorporating information from previous visits.

- Human-in-the-loop: No note is finalized without physician review and attestation. The AI generates a draft; the physician reviews, edits, and signs. This is the ultimate safety net — but it only works if the review process is efficient enough that physicians actually read the note rather than rubber-stamping it.

- Contradiction detection: Advanced systems flag internal contradictions — for example, a note that states "no chest pain" in the subjective section but lists "chest pain" in the assessment differential.
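Provenance linking and confidence scoring can be combined into a single review pass over the draft note. The sketch below assumes each generated statement carries the IDs of the transcript utterances that support it plus a confidence score. The `Statement` type, the 0.8 threshold, and the flag wording are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class Statement:
    section: str                       # e.g. "Subjective", "Plan"
    text: str
    confidence: float
    source_utterances: list[int] = field(default_factory=list)


def review_flags(
    statements: list[Statement],
    transcript_ids: set[int],
    min_confidence: float = 0.8,
) -> dict[int, list[str]]:
    """Map statement index to the reasons it needs physician attention."""
    flags: dict[int, list[str]] = {}
    for i, s in enumerate(statements):
        reasons = []
        cited = s.source_utterances
        if not cited or any(u not in transcript_ids for u in cited):
            reasons.append("no supporting utterance")
        if s.confidence < min_confidence:
            reasons.append("low confidence")
        if reasons:
            flags[i] = reasons
    return flags


draft = [
    Statement("Subjective", "Denies bleeding episodes.", 0.96, [4]),
    Statement("Objective", "Heart rate regular on exam.", 0.91, []),
    Statement("Plan", "Continue apixaban 5 mg twice daily.", 0.62, [9]),
]
flags = review_flags(draft, transcript_ids={1, 2, 3, 4, 9})
```

In this example the exam finding is flagged because nothing in the transcript supports it, which is the fabricated-finding pattern described earlier.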

Regulatory Considerations: Who Signs the AI-Generated Note?

The legal and regulatory framework for AI-generated clinical documentation is evolving rapidly, but one principle remains clear: the ordering/supervising physician retains full legal, clinical, and billing responsibility for every note they sign, regardless of whether it was generated by a human scribe, dictation software, or an AI agent.

The Attestation Requirement

CMS has not issued specific guidance on AI-generated clinical notes, but existing documentation guidelines are clear: the physician's electronic signature on a note constitutes attestation that the content is accurate and reflects their clinical judgment. This means:

- The physician must review the AI-generated note before signing — rubber-stamping without review does not satisfy the attestation requirement

- Any errors in the AI-generated content become the physician's responsibility once signed

- AI-generated notes used for billing must meet the same documentation requirements as manually written notes — they do not get a lower standard for being AI-generated

- The note must accurately reflect the medical decision-making that occurred during the encounter, including the complexity level that supports the billed E/M code

- False claims liability (under the False Claims Act) applies equally to AI-generated documentation that supports inappropriate billing codes

HIPAA Compliance for Ambient Listening

Ambient AI introduces a novel HIPAA surface area that existing compliance frameworks were not designed to address. When an AI system passively records clinical conversations, several requirements apply:

- Business Associate Agreement (BAA): The AI vendor is a business associate under HIPAA, and a BAA covering audio data, transcripts, and generated notes is mandatory. The BAA must specifically address AI model training — can the vendor use your clinical data to improve their models?

- Minimum Necessary Standard: The system should only capture and process the minimum data necessary for note generation — audio should be deleted after processing unless specifically retained for quality improvement under the BAA terms

- Patient Notification: While HIPAA does not require explicit patient consent for treatment-related documentation, several states (including California and Illinois) have two-party consent laws for audio recording. Most health systems implement an informed consent workflow where patients are notified about AI-assisted documentation and can opt out of ambient recording

- Audit Controls: HIPAA requires audit trails for all access to PHI. Every AI-generated note must be logged with the encounter context, the generating system version, the reviewing physician, and any edits made between the AI draft and the signed note

- Technical Safeguards: End-to-end encryption for audio streams (AES-256 minimum), encrypted storage for any retained data, and role-based access controls that limit who can access AI-generated drafts before physician review
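The audit-control requirement above can be sketched as a record that captures the encounter, the generating system version, the reviewing physician, and the edits between the AI draft and the signed note. The field names and the `audit_record` function are assumptions for illustration. The diff itself contains PHI, so a real implementation would store it under the same safeguards as the note.

```python
import difflib
import hashlib
from datetime import datetime, timezone


def audit_record(
    encounter_id: str,
    system_version: str,
    physician_id: str,
    ai_draft: str,
    signed_note: str,
) -> dict:
    """Build one audit entry for a reviewed and signed AI-generated note."""
    edits = list(difflib.unified_diff(
        ai_draft.splitlines(),
        signed_note.splitlines(),
        fromfile="ai_draft",
        tofile="signed_note",
        lineterm="",
    ))
    return {
        "encounter_id": encounter_id,
        "system_version": system_version,
        "reviewing_physician": physician_id,
        # Hashes let an auditor verify which exact texts were compared.
        "draft_sha256": hashlib.sha256(ai_draft.encode("utf-8")).hexdigest(),
        "signed_sha256": hashlib.sha256(signed_note.encode("utf-8")).hexdigest(),
        "edits": edits,
        "recorded_at": datetime.now(timezone.utc).isoformat(),
    }


record = audit_record(
    "enc-789", "scribe-2.4.1", "prac-456",
    "S: No chest pain.\nP: Continue apixaban 50mg.",
    "S: No chest pain.\nP: Continue apixaban 5mg.",
)
```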

State-Level Considerations

Beyond HIPAA, health systems must navigate a patchwork of state privacy laws. California's CMIA (Confidentiality of Medical Information Act) imposes stricter consent requirements than HIPAA for the use of medical information. Illinois' BIPA (Biometric Information Privacy Act) may apply to voiceprint data collected during ambient listening — creating potential liability if speaker diarization models store voice biometric data. New York's SHIELD Act adds breach notification requirements for AI-processed health data.

A health system operating across multiple states needs a compliance framework that satisfies the most restrictive jurisdiction — typically California — as the baseline for all locations.

Malpractice Liability Considerations

An emerging concern is whether AI-generated documentation creates new vectors for malpractice liability. If a physician signs an AI-generated note containing a hallucinated clinical finding, and a subsequent provider relies on that finding for treatment decisions, the liability chain becomes complex. The signing physician is likely liable, but questions remain about whether the AI vendor shares liability — particularly if the hallucination resulted from a known model deficiency.

Health systems should work with their malpractice carriers to ensure that AI-assisted documentation is covered under existing policies, and should document their AI validation and monitoring processes as evidence of reasonable care in vendor selection and deployment.

Build vs. Buy: A Decision Framework for Health Systems

The build-versus-buy question for ambient AI clinical documentation is fundamentally different from most health IT decisions because the cost of clinical error is measured in patient safety, not just dollars.

When to Buy a Commercial Ambient AI Platform

- You need deployment in under 6 months: Commercial platforms like DAX Copilot and Abridge can be operational in 8-16 weeks with existing EHR integrations. Building from scratch requires 12-18 months minimum.

- Your EHR is Epic, Cerner, or athenahealth: These platforms have pre-built, certified integrations that would take 12-18 months to build from scratch, including the EHR vendor certification process.

- You do not have a dedicated AI/ML engineering team: Building a clinical-grade NLP pipeline requires 5-8 specialized engineers (speech recognition, medical NLP, LLM fine-tuning, EHR integration) at a total compensation cost of $1.5-3 million per year.

- Clinical validation is non-negotiable: Commercial vendors have spent years on clinical validation studies across thousands of encounters. Building equivalent validation takes 6-12 months beyond initial development and requires access to clinical subject matter experts for note quality review.

- Multi-specialty deployment: Supporting 20+ specialties with specialty-specific note templates requires extensive medical knowledge engineering that commercial vendors have already built.

When to Build (or Build on Top of a Platform)

- You have unique documentation workflows that no commercial product supports — for example, team-based documentation in academic settings, highly specialized note formats for niche specialties, or integration with proprietary clinical decision support systems.

- You need deep integration with proprietary clinical systems — home-grown care management platforms, custom quality reporting systems, or non-standard EHR configurations that commercial vendors do not support.

- You are a health tech company building documentation as a feature, not a health system buying a tool. In this case, our guide to building an AI clinical scribe covers the 12 production challenges you will face — from speaker diarization to FHIR write-back.

- Data sovereignty requirements prevent sending audio or clinical data to external cloud services — for example, certain government healthcare facilities or organizations in jurisdictions with strict data localization laws.

- Cost optimization at scale: For very large health systems (500+ physicians), the per-physician licensing cost of commercial platforms may exceed the cost of building and maintaining an internal solution over a 5-year horizon.

The Hybrid Path

An increasingly common approach is to buy a commercial platform and build custom integrations on top. For example: deploy Abridge or DAX for ambient note generation, but build a custom FHIR-based integration layer that routes generated notes through your own clinical decision support rules, quality measure documentation checks, and specialty-specific post-processing before EHR insertion. This gives you commercial-grade AI with institutional-specific workflows — the best of both worlds.

ROI Analysis: The Business Case for Ambient AI Scribes

The financial case for ambient AI scribes is compelling because the costs of the status quo are enormous and measurable. Unlike many health IT investments where ROI is speculative, ambient AI documentation addresses a cost center that every CFO can quantify.

Quantifying the Documentation Burden Cost

For a 50-physician health system where each physician sees an average of 20 patients per day:

- Documentation time: 50 physicians x 3 hours/day x 250 working days = 37,500 physician-hours/year consumed by documentation

- At average physician compensation of $350K/year (~$168/hour over a 2,080-hour work year): 37,500 hours x $168 = $6.3 million annually in physician time spent on documentation rather than patient care

- Burnout-related turnover: With 49% burnout rates and an average physician replacement cost of $500K-$1M (recruitment fees, lost revenue during vacancy, onboarding), even 2-3 departures per year costs $1-3 million

- Human scribe costs: Health systems using human scribes pay $36,000-$55,000 per scribe per year, and a 50-physician practice may employ 15-25 scribes at a total cost of $540K-$1.38M

The 66% Time Reduction

Based on Nirmitee's Voice AI analysis, ambient AI scribes reduce documentation time by approximately 66% — from an average of 15-20 minutes per patient encounter to 2-3 minutes of physician review. For our 50-physician example:

- Time saved: 37,500 hours x 66% = 24,750 hours reclaimed annually

- Dollar value of reclaimed time: 24,750 hours x $168/hour = $4.16 million in reclaimed physician productivity

- Additional revenue potential: Physicians seeing 2-3 additional patients per day = 50 physicians x 2.5 patients x 250 days x $150 avg reimbursement = $4.69 million in incremental revenue capacity

- Reduced scribe costs: Eliminating or reducing human scribe workforce saves $400K-$1M annually

- Burnout reduction value: Even preventing one physician departure saves $500K-$1M in replacement costs
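The arithmetic behind these figures is simple enough to reproduce, which makes it easy to substitute your own organization's numbers. The sketch below uses only the inputs stated in this section (50 physicians, 3 documentation hours per day, 250 working days, $168/hour, a 66% reduction, 2.5 extra visits at $150). The variable names are illustrative.

```python
PHYSICIANS = 50
DOC_HOURS_PER_DAY = 3
WORKING_DAYS = 250
HOURLY_RATE_USD = 168
TIME_REDUCTION_PCT = 66          # documentation time reduction, in percent
EXTRA_VISITS_PER_DAY = 2.5
AVG_REIMBURSEMENT_USD = 150

# Current documentation burden
doc_hours = PHYSICIANS * DOC_HOURS_PER_DAY * WORKING_DAYS
doc_cost = doc_hours * HOURLY_RATE_USD

# Reclaimed time and its dollar value (integer math avoids float rounding)
hours_saved = doc_hours * TIME_REDUCTION_PCT // 100
reclaimed_value = hours_saved * HOURLY_RATE_USD

# Incremental revenue capacity from additional visits
extra_revenue = (
    PHYSICIANS * EXTRA_VISITS_PER_DAY * WORKING_DAYS * AVG_REIMBURSEMENT_USD
)

print(f"Documentation hours/year: {doc_hours:,}")
print(f"Documentation cost/year:  ${doc_cost:,}")
print(f"Hours reclaimed/year:     {hours_saved:,}")
print(f"Reclaimed value/year:     ${reclaimed_value:,}")
print(f"Incremental revenue/year: ${extra_revenue:,.0f}")
```

Note that reclaimed productivity and incremental revenue overlap to some degree, since the same reclaimed hours fund the extra visits. Treat them as alternative framings of the benefit rather than figures to add together without adjustment.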

Total Cost of Ownership

Commercial ambient AI platforms typically price at $15,000-$30,000 per physician per year for enterprise contracts, with implementation costs of $3,000-$6,000 per physician for initial deployment, workflow redesign, and training.

For a 50-physician deployment:

- Annual license: $750K-$1.5M

- Year 1 implementation: $150K-$300K

- Training and change management: $50K-$100K

- Total Year 1 investment: ~$1.2M

- Ongoing annual cost: ~$1M

Against combined savings and revenue gains of $4-8 million annually, the first-year ROI ranges from roughly 200% to 500%, with a payback period of 4-5 months. By Year 2, with one-time implementation costs out of the way, the annual return improves further.
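For readers who want to test the sensitivity of these estimates, ROI and payback can be expressed as two small functions. This is a straight-line sketch that assumes benefits accrue evenly from the first day. A phased rollout, in which benefits ramp up as more physicians come online, lengthens the payback period relative to what the formula returns. The function names and the sample inputs are illustrative.

```python
def first_year_roi(annual_benefit: float, year1_cost: float) -> float:
    """Net first-year return as a fraction of the first-year investment."""
    return (annual_benefit - year1_cost) / year1_cost


def payback_months(annual_benefit: float, year1_cost: float) -> float:
    """Months until cumulative benefit covers the investment, assuming
    benefits accrue evenly across the year (no ramp-up period)."""
    return year1_cost / (annual_benefit / 12)


YEAR1_COST = 1_200_000  # approximate Year 1 investment from the list above

for benefit in (4_000_000, 8_000_000):
    roi = first_year_roi(benefit, YEAR1_COST)
    months = payback_months(benefit, YEAR1_COST)
    print(f"Benefit ${benefit:,}: ROI {roi:.0%}, straight-line payback {months:.1f} months")
```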

Implementation Roadmap: From Pilot to System-Wide Deployment

Successful ambient AI deployment follows a phased approach that balances clinical validation with operational scale. Rushing to system-wide deployment without adequate validation creates adoption risk; moving too slowly allows burnout to continue unchecked.

Phase 1: Pilot (Weeks 1-8)

- Select 5-10 physicians across 2-3 specialties for initial deployment — choose physicians who are technology-forward and willing to provide detailed feedback

- Configure EHR integration in a test environment and validate end-to-end note flow

- Establish baseline documentation time metrics using EHR audit logs

- Deploy ambient AI with mandatory physician review of every generated note

- Conduct weekly accuracy audits comparing AI-generated notes to physician-edited versions, tracking edit rates by section and specialty
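
One way to track edit rates in the weekly audit is to compare the AI draft with the physician-signed version section by section. The sketch below uses Python's standard `difflib.SequenceMatcher` on word sequences as a simple proxy. It is not a validated clinical accuracy metric, and the record keys (`specialty`, `section`, `ai_text`, `final_text`) are hypothetical rather than any vendor's or EHR's export format.

```python
from collections import defaultdict
from difflib import SequenceMatcher


def edit_rate(ai_text, final_text):
    """Share of the text that changed: 0.0 = untouched, 1.0 = fully rewritten.

    Computed as 1 - similarity ratio over whitespace-separated words.
    """
    matcher = SequenceMatcher(None, ai_text.split(), final_text.split(), autojunk=False)
    return 1.0 - matcher.ratio()


def edit_rates_by_group(records):
    """Average edit rate per (specialty, section).

    `records` is an iterable of dicts with the hypothetical keys
    'specialty', 'section', 'ai_text', and 'final_text'.
    """
    buckets = defaultdict(list)
    for rec in records:
        key = (rec["specialty"], rec["section"])
        buckets[key].append(edit_rate(rec["ai_text"], rec["final_text"]))
    return {key: sum(rates) / len(rates) for key, rates in buckets.items()}


# Synthetic placeholder text, not real clinical notes.
sample = [
    {"specialty": "cardiology", "section": "assessment",
     "ai_text": "alpha beta gamma delta", "final_text": "alpha beta gamma delta"},
    {"specialty": "cardiology", "section": "plan",
     "ai_text": "alpha beta gamma delta", "final_text": "alpha beta gamma epsilon"},
]

for group, rate in edit_rates_by_group(sample).items():
    print(group, f"{rate:.2f}")
```

A rising edit rate in one section or specialty is a signal to look at templates or prompts for that group before expanding the rollout.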

Phase 2: Validation (Weeks 9-16)

- Expand to 20-30 physicians across 5-8 specialties

- Measure documentation time reduction, note accuracy, physician satisfaction, and patient satisfaction scores

- Address specialty-specific template requirements identified during the pilot

- Validate billing code accuracy with the coding team — ensure AI-generated MDM documentation supports appropriate E/M levels

- Finalize patient consent workflow and compliance documentation

- Develop physician champion network for peer support during wider rollout
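
The documentation time reduction measured in this phase is a before-and-after comparison against the Phase 1 baseline. A minimal sketch follows, assuming you can extract average documentation minutes per encounter for each physician from EHR audit logs. The physician IDs and minute values are synthetic, and the function names are illustrative.

```python
from statistics import mean


def reduction_percent(baseline_minutes, current_minutes):
    """Percentage drop in documentation time relative to baseline."""
    if baseline_minutes <= 0:
        raise ValueError("baseline_minutes must be positive")
    return (baseline_minutes - current_minutes) / baseline_minutes * 100


def cohort_reduction(samples):
    """Mean per-physician reduction.

    `samples` maps a physician ID to (baseline_minutes, current_minutes).
    """
    return mean(reduction_percent(b, c) for b, c in samples.values())


# Synthetic example values, not measured data.
pilot = {
    "physician_01": (16.0, 4.0),
    "physician_02": (20.0, 10.0),
}

for pid, (before, after) in pilot.items():
    print(pid, f"{reduction_percent(before, after):.1f}%")
print("cohort", f"{cohort_reduction(pilot):.1f}%")
```

Averaging per-physician percentages, rather than pooling all minutes, keeps one high-volume physician from dominating the result. Which of the two is more appropriate depends on what the steering group wants to report.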

Phase 3: Scale (Weeks 17-32)

- Roll out to full physician workforce in waves of 20-30 physicians per week

- Integrate with clinical decision support workflows for automated quality checks

- Deploy analytics dashboard for documentation quality monitoring

- Establish ongoing accuracy monitoring and vendor SLA enforcement

- Extend to advanced practice providers (NPs, PAs) and nursing documentation

- Begin measuring impact on downstream metrics: patient throughput, coding accuracy, physician retention
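
It is worth checking that the wave size fits the Phase 3 window. At 20-30 physicians per week, the 16 weeks from week 17 through week 32 cover roughly 320-480 physicians, so larger systems need bigger waves or a longer phase. The sketch below builds a simple weekly schedule; the function names and the 70-physician example are illustrative.

```python
import math

PHASE3_WEEKS = 32 - 17 + 1  # weeks 17 through 32 inclusive


def weeks_needed(remaining, wave_size):
    """Number of weekly waves required to onboard `remaining` physicians."""
    if wave_size <= 0:
        raise ValueError("wave_size must be positive")
    return math.ceil(remaining / wave_size)


def wave_schedule(remaining, wave_size, start_week=17):
    """Return [(week_number, physicians_in_wave), ...] for the rollout."""
    schedule = []
    week = start_week
    while remaining > 0:
        size = min(wave_size, remaining)
        schedule.append((week, size))
        remaining -= size
        week += 1
    return schedule


# Hypothetical example: 70 physicians still to onboard, 25 per week.
for week, size in wave_schedule(70, 25):
    print(f"week {week}: {size} physicians")

print("fits Phase 3 window:", weeks_needed(70, 25) <= PHASE3_WEEKS)
```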

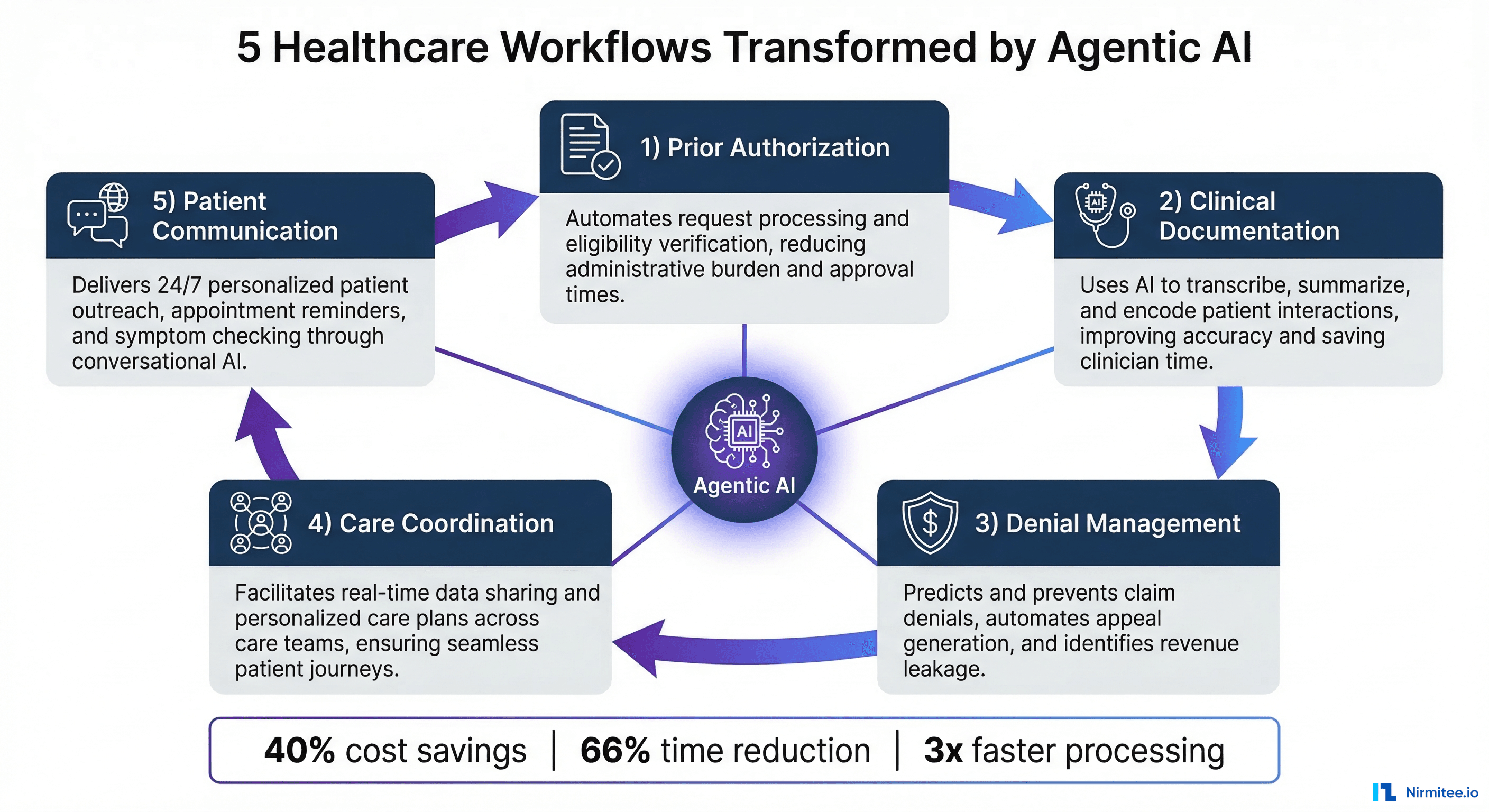

What Comes Next: The Evolution from Scribe to Clinical Agent

Ambient AI scribes represent the first generation of clinical AI agents — systems that observe clinical workflows and take autonomous action. The trajectory from documentation agent to comprehensive clinical workflow agent is already underway, and the organizations deploying ambient AI today are building the trust foundation and technical infrastructure for what comes next.

Near-term capabilities emerging in 2026-2027 include:

- Real-time clinical decision support: The ambient AI listens to the conversation and surfaces relevant clinical decision support — drug interaction alerts, relevant clinical guidelines, or missing screening questions — during the encounter, not after. When the physician prescribes a medication, the AI can immediately flag potential interactions with the patient's current regimen.

- Automated order entry: Based on the physician's verbal orders during the encounter, the AI pre-populates lab orders, imaging requests, and medication prescriptions for physician review and signature — reducing the post-visit order entry time that currently adds 5-10 minutes to every encounter.

- Quality measure documentation: The AI ensures that quality measure documentation requirements (HEDIS, MIPS, CQMs) are captured during the encounter, reducing retrospective chart chasing and improving quality scores without adding to physician workload.

- Post-visit patient communication: Generating patient-facing visit summaries in plain language, automatically sent through the patient portal — improving patient understanding and satisfaction while eliminating the physician time currently spent writing after-visit summaries.

- Referral letter generation: Automatically generating specialist referral letters that include relevant history, current medications, and the reason for referral — extracted directly from the encounter conversation.
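
To make the real-time decision support idea concrete, here is a deliberately simplified sketch of the interaction check: when a new medication is mentioned, look it up against the patient's current regimen and return alerts for physician review. The lookup table, drug names, and warning text are placeholders. A production system would query a maintained drug-interaction knowledge base, and nothing here reflects a specific vendor's implementation.

```python
# Placeholder table keyed by unordered drug pairs. These are NOT real drugs;
# a real system would query a maintained interaction knowledge base.
INTERACTIONS = {
    frozenset({"drug_a", "drug_b"}): "placeholder warning text",
}


def flag_interactions(new_med, current_meds):
    """Return alert strings for known interactions with the current regimen.

    Alerts are surfaced for physician review; nothing is blocked or ordered.
    """
    alerts = []
    for med in current_meds:
        pair = frozenset({new_med.lower(), med.lower()})
        warning = INTERACTIONS.get(pair)
        if warning:
            alerts.append(f"{new_med} + {med}: {warning}")
    return alerts


# Hypothetical encounter: the physician verbally prescribes "Drug_A".
print(flag_interactions("Drug_A", ["drug_b", "drug_c"]))
```

Keying the table on `frozenset` pairs makes the lookup order-independent, so the check works whichever of the two drugs is prescribed second.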

The documentation scribe is the Trojan horse for broader clinical AI adoption. Once physicians trust AI with their notes (the highest-stakes, most personal artifact of their clinical work), the path to trusting AI with order suggestions, care gap identification, and clinical reasoning support becomes dramatically shorter.

Conclusion: Documentation Should Not Be Why Physicians Leave Medicine

The physician burnout crisis is not inevitable. It is a direct consequence of a healthcare system that has prioritized data capture over patient care, transforming healers into data entry clerks. Ambient AI scribes offer a concrete, deployable solution that reclaims the physician-patient relationship by removing the documentation barrier that stands between them.

For health system leaders evaluating ambient AI documentation, the question is no longer "Does this technology work?" — the clinical evidence from Johns Hopkins, Kaiser Permanente, Mayo Clinic, and dozens of other systems confirms that it does. The question is "How quickly can we deploy it, and what is the cost of waiting?"

Every month of delay represents thousands of physician-hours lost to documentation, hundreds of patients receiving divided attention, and a compounding burnout risk that no wellness program or pizza party can offset. The technology is ready. The ROI is proven. The physicians are waiting.

If you are evaluating ambient AI for your health system or building clinical documentation capabilities into your product, explore how Nirmitee helps health systems deploy AI-native clinical workflows — from FHIR integration architecture to production-grade AI agent deployment.