The VP of Engineering signs off on your clinical AI agent. The chief medical officer is cautiously optimistic. Then the compliance team asks one question that kills the project for six months: "What decisions can this system make without a human in the loop?"

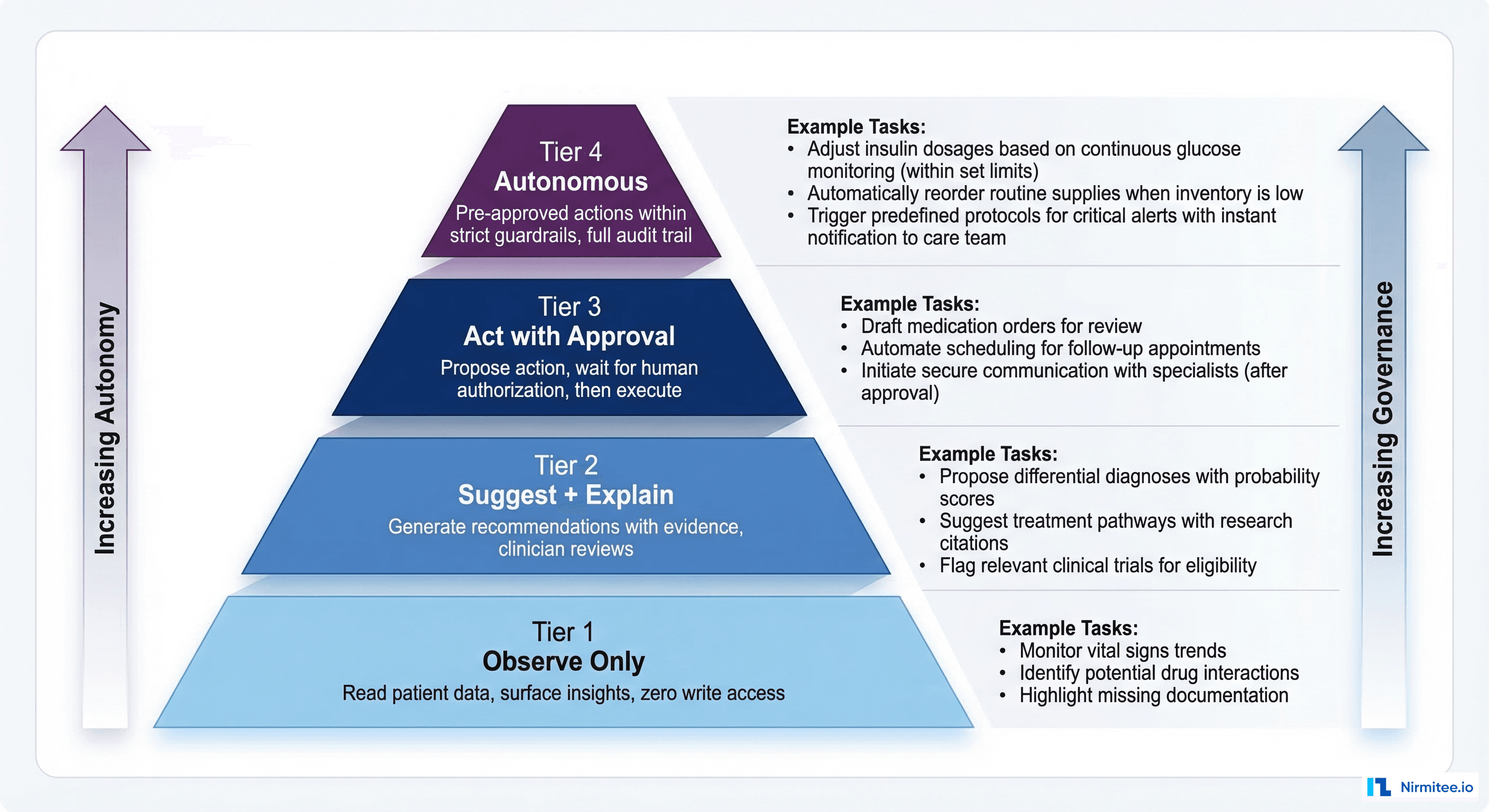

This question derails healthcare AI deployments more than any technical challenge. Not because the answer is complicated, but because most teams lack a structured framework for answering it. Bounded autonomy is that framework. It defines explicit tiers of agent authority — from passive observation to autonomous action — with governance requirements, escalation protocols, and audit trails mapped to each tier. It turns the compliance conversation from "should we allow AI to do this?" into "which tier does this capability fall into, and have we met the governance requirements for that tier?"

Here is the architecture pattern that gets healthcare AI past the compliance team and into production.

Why Compliance Teams Block AI Deployments

The root cause is uncertainty. Compliance officers, CISOs, and legal teams operate under strict liability frameworks. They need to answer three questions for every system that touches patient data:

- What can go wrong? If the AI makes an incorrect recommendation, what is the clinical impact? Can it cause patient harm?

- Who is responsible? When the AI acts, who holds clinical responsibility? The physician who approved the algorithm? The vendor who built it? The hospital that deployed it?

- Can we prove what happened? During an adverse event investigation, malpractice claim, or regulatory audit, can we reconstruct exactly what the AI did, why it did it, and what data it used?

Without a governance framework, each AI capability requires an ad-hoc risk assessment. That is why projects stall. Bounded autonomy provides a pre-approved framework: define the tiers once, classify each AI capability into its tier, and apply the governance requirements for that tier. New capabilities slot into existing tiers instead of requiring new risk assessments from scratch.

According to Deloitte's 2026 healthcare AI report, 61% of healthcare executives are already building agentic AI initiatives. But only those organizations that embed governance into the operating model — rather than bolting it on after deployment — are positioned to scale. Bounded autonomy is the governance model that enables scaling.

The Four Tiers of Bounded Autonomy

Tier 1: Observe Only

Authority: The agent can read patient data, aggregate information, and surface insights. It cannot write to the EHR, generate alerts, or make recommendations that appear in clinical workflows.

Clinical tasks:

- Patient chart summarization for the agent's internal reasoning (not displayed to clinicians)

- Data aggregation for population health dashboards

- Trend analysis on remote monitoring data

- Data quality assessment and gap identification

Governance requirements:

- Read-only SMART on FHIR scopes (e.g., patient/Observation.read, never .write)

- No PHI export outside the clinical environment

- Access logging only — record what was read, not what was concluded

- Standard HIPAA access controls
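The read-only scope requirement can be checked mechanically when an access token is issued. The following is a minimal sketch, assuming the granted scopes arrive as a space-delimited string and follow either the SMART v1 form (`.read`, `.write`, `.*`) or the SMART v2 permission letters (`c`, `r`, `u`, `d`, `s`). The function names `is_read_only` and `violations` are hypothetical, and treating non-resource scopes such as `openid` as harmless is an assumption a real deployment should review.

```python
import re

# SMART v1 scopes look like "patient/Observation.read"; SMART v2 uses
# permission letters, e.g. "patient/Observation.rs" (read + search).
_SCOPE = re.compile(r"^(patient|user|system)/([A-Za-z]+|\*)\.(.+)$")
_READ_ONLY_V2 = set("rs")  # v2 letters that do not modify data


def is_read_only(scope: str) -> bool:
    """Return True if a scope grants no write permission."""
    match = _SCOPE.match(scope)
    if match is None:
        return True  # assumption: non-resource scopes ("openid", "launch") are harmless
    permission = match.group(3).split("?")[0]  # drop v2 query constraints
    if permission == "read":
        return True
    if permission in ("write", "*"):
        return False
    return set(permission) <= _READ_ONLY_V2


def violations(granted_scopes: str) -> list[str]:
    """List every scope in a space-delimited grant that allows writes."""
    return [s for s in granted_scopes.split() if not is_read_only(s)]
```

A Tier 1 agent would be refused a token whenever `violations(...)` returns a non-empty list.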

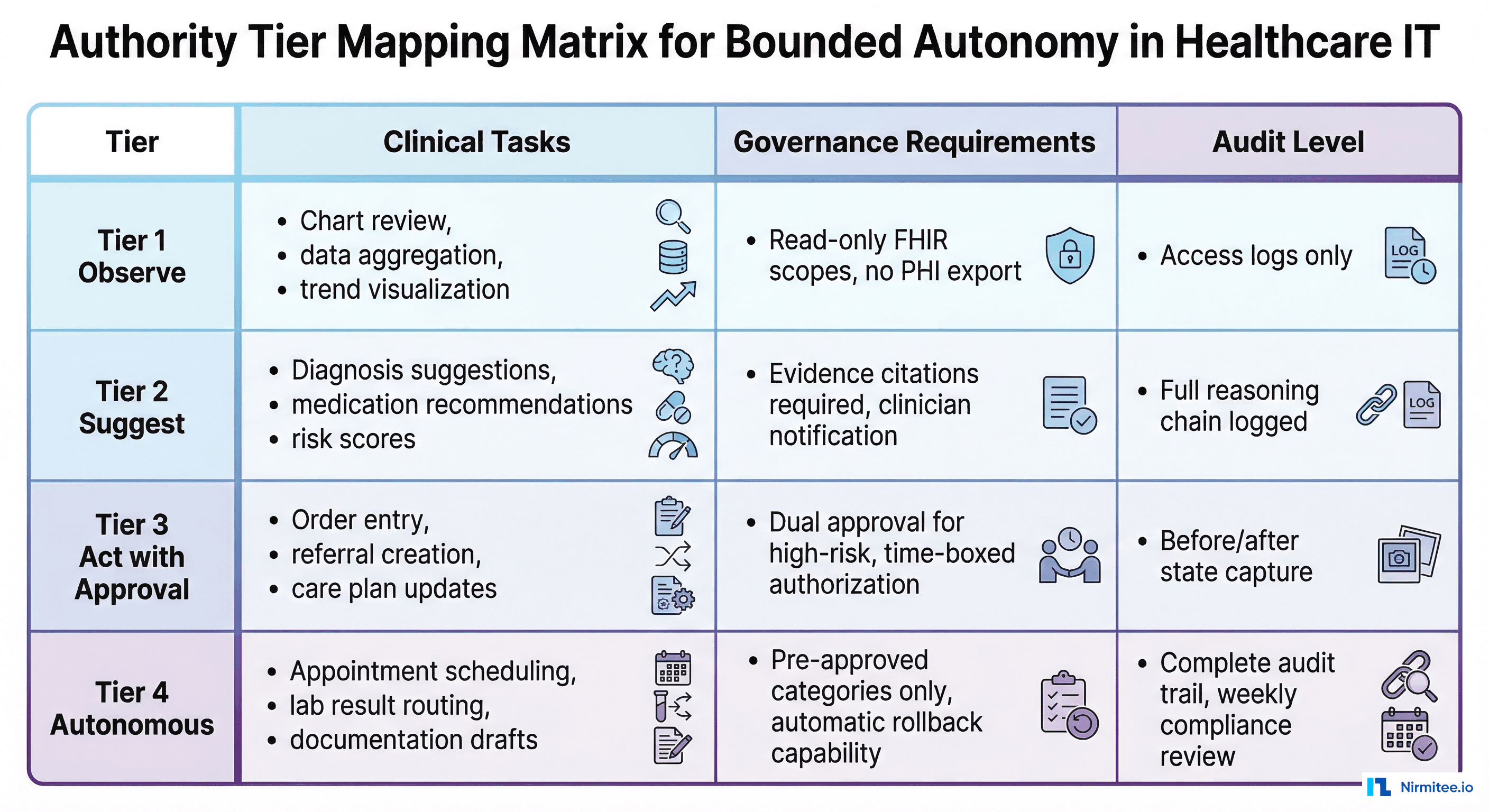

Compliance review: 2 weeks. Standard security review, no clinical validation required.

Tier 2: Suggest and Explain

Authority: The agent can generate recommendations, risk scores, and clinical suggestions — but they are always presented to a clinician for review. The agent cannot execute any action. A human reviews every suggestion before it influences care.

Clinical tasks:

- Diagnosis differential suggestions with supporting evidence

- Sepsis risk scoring displayed in clinical dashboards

- Drug interaction warnings surfaced via CDS Hooks

- Medication dose optimization recommendations

- Care gap identification with suggested interventions

Governance requirements:

- Every recommendation must include evidence citations (linked lab results, guideline references, supporting data)

- The clinician must acknowledge seeing the recommendation before it is marked as "reviewed."

- Full reasoning chain logged per the HL7 AI Transparency on FHIR specification (Level 3 observability)

- FDA SaMD classification review to determine if the suggestion qualifies as clinical decision support

- Explainability requirements: Clinicians must be able to understand why the agent made the suggestion

Compliance review: 4 weeks. Requires clinical validation study + CISO sign-off.
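Two of the Tier 2 governance requirements, evidence citations and clinician acknowledgement, can be enforced in the data model rather than left to convention. The sketch below shows one way to do that. The `Suggestion` class and its field names are hypothetical and are not taken from the FHIR or CDS Hooks specifications.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Optional


@dataclass
class Suggestion:
    """A Tier 2 recommendation awaiting clinician review (hypothetical schema)."""
    agent_id: str
    text: str
    evidence: list[str]                       # citations: lab IDs, guideline refs
    acknowledged_by: Optional[str] = None
    acknowledged_at: Optional[datetime] = None

    def __post_init__(self) -> None:
        # Governance rule: no recommendation without supporting evidence.
        if not self.evidence:
            raise ValueError("Tier 2 suggestions must cite evidence")

    def acknowledge(self, clinician_id: str) -> None:
        """Record which clinician saw the suggestion, and when."""
        self.acknowledged_by = clinician_id
        self.acknowledged_at = datetime.now(timezone.utc)

    @property
    def status(self) -> str:
        # "reviewed" only after an explicit acknowledgement.
        return "reviewed" if self.acknowledged_by else "pending"
```

Because the status is derived from the acknowledgement fields, a suggestion cannot be marked as reviewed without a named clinician on record.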

Tier 3: Act with Approval

Authority: The agent proposes a specific action (place an order, submit a referral, update a care plan) and queues it for human authorization. The action executes only after a physician or authorized clinician approves it. Think of it as a pre-filled form that still requires a signature.

Clinical tasks:

- Prior authorization submission with auto-populated clinical documentation

- Referral order creation with specialist matching

- Care plan updates based on new clinical data

- Medication adjustment recommendations ready for physician e-signature

- Discharge planning task initiation (scheduling, medication reconciliation)

Governance requirements:

- Dual approval for high-risk actions (e.g., both ordering physician and pharmacist for medication changes)

- Time-boxed authorization: if the physician does not approve within X minutes, the proposed action expires and requires re-generation

- Before/after state capture: document the patient's state before the proposed action and the expected state after

- Rollback capability: every action the agent proposes must be reversible within a defined window

- Complete audit trail from agent proposal through physician approval to action execution

Compliance review: 6-8 weeks. Requires CISO + CMO sign-off + clinical validation study.
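The time-boxed authorization and dual-approval rules for Tier 3 amount to a small state check before execution. The following is a sketch under stated assumptions: `ProposedAction`, its fields, and the role names are hypothetical, and the length of the authorization window is left to site policy.

```python
from dataclasses import dataclass, field
from datetime import datetime, timedelta


@dataclass
class ProposedAction:
    """A Tier 3 action queued for human authorization (hypothetical schema)."""
    description: str
    required_roles: frozenset[str]      # e.g. {"physician", "pharmacist"}
    created_at: datetime
    ttl: timedelta                      # authorization window, set by site policy
    approvals: dict[str, str] = field(default_factory=dict)  # role -> approver ID

    def is_expired(self, now: datetime) -> bool:
        return now >= self.created_at + self.ttl

    def approve(self, role: str, approver_id: str, now: datetime) -> None:
        if self.is_expired(now):
            raise TimeoutError("proposal expired; regenerate from current data")
        if role not in self.required_roles:
            raise PermissionError(f"role {role!r} cannot approve this action")
        self.approvals[role] = approver_id

    def can_execute(self, now: datetime) -> bool:
        """Execute only if unexpired and every required role has signed."""
        all_signed = set(self.approvals) == set(self.required_roles)
        return all_signed and not self.is_expired(now)
```

Passing `now` explicitly keeps the expiry logic deterministic and easy to test. In production it would come from a trusted clock.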

Tier 4: Autonomous

Authority: The agent executes pre-approved actions within strictly defined guardrails. No human approves each individual action. Instead, a physician or compliance committee pre-approves the category of actions, and the agent operates within those boundaries with full audit logging.

Clinical tasks (pre-approved categories only):

- Appointment reminder notifications

- Insurance eligibility verification

- Lab result routing to the correct provider inbox

- Documentation draft generation (physician reviews before signing)

- RPM alert triage: filtering out the 70% of alerts that do not require clinical attention

- Routine census updates from ADT events

Governance requirements:

- Pre-approval by a governance committee (CMO + CISO + Legal + Ethics)

- Automatic rollback capability for any action

- Complete audit trail reviewed weekly by the compliance team

- Real-time monitoring dashboard accessible to CMO

- Automatic kill switch: if error rate exceeds threshold, the agent drops to Tier 2 (suggest only)

- Drift detection with automatic de-escalation on performance degradation

- Quarterly re-certification by the governance committee

Compliance review: 10-12 weeks. Full board review, including Ethics Committee + IRB if applicable.
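The Tier 4 kill switch can be as simple as a rolling error-rate check. Here is a minimal sketch, assuming each autonomous action is later labeled as correct or erroneous. The `KillSwitch` class, the window size, and the threshold are hypothetical parameters that the governance committee would set.

```python
from collections import deque


class KillSwitch:
    """Drops an agent from Tier 4 to Tier 2 when its recent error rate is too high."""

    def __init__(self, threshold: float, window: int) -> None:
        self.threshold = threshold            # max tolerated error rate
        self.outcomes = deque(maxlen=window)  # True = error; keeps the last `window` actions
        self.tier = 4

    def record(self, is_error: bool) -> int:
        """Log one action outcome and return the tier now in force."""
        self.outcomes.append(is_error)
        window_full = len(self.outcomes) == self.outcomes.maxlen
        error_rate = sum(self.outcomes) / len(self.outcomes)
        if window_full and error_rate > self.threshold:
            self.tier = 2  # suggest only; restoring Tier 4 is a human decision
        return self.tier
```

The switch only ever lowers the tier. Nothing in `record` can raise it again, which keeps re-certification in human hands.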

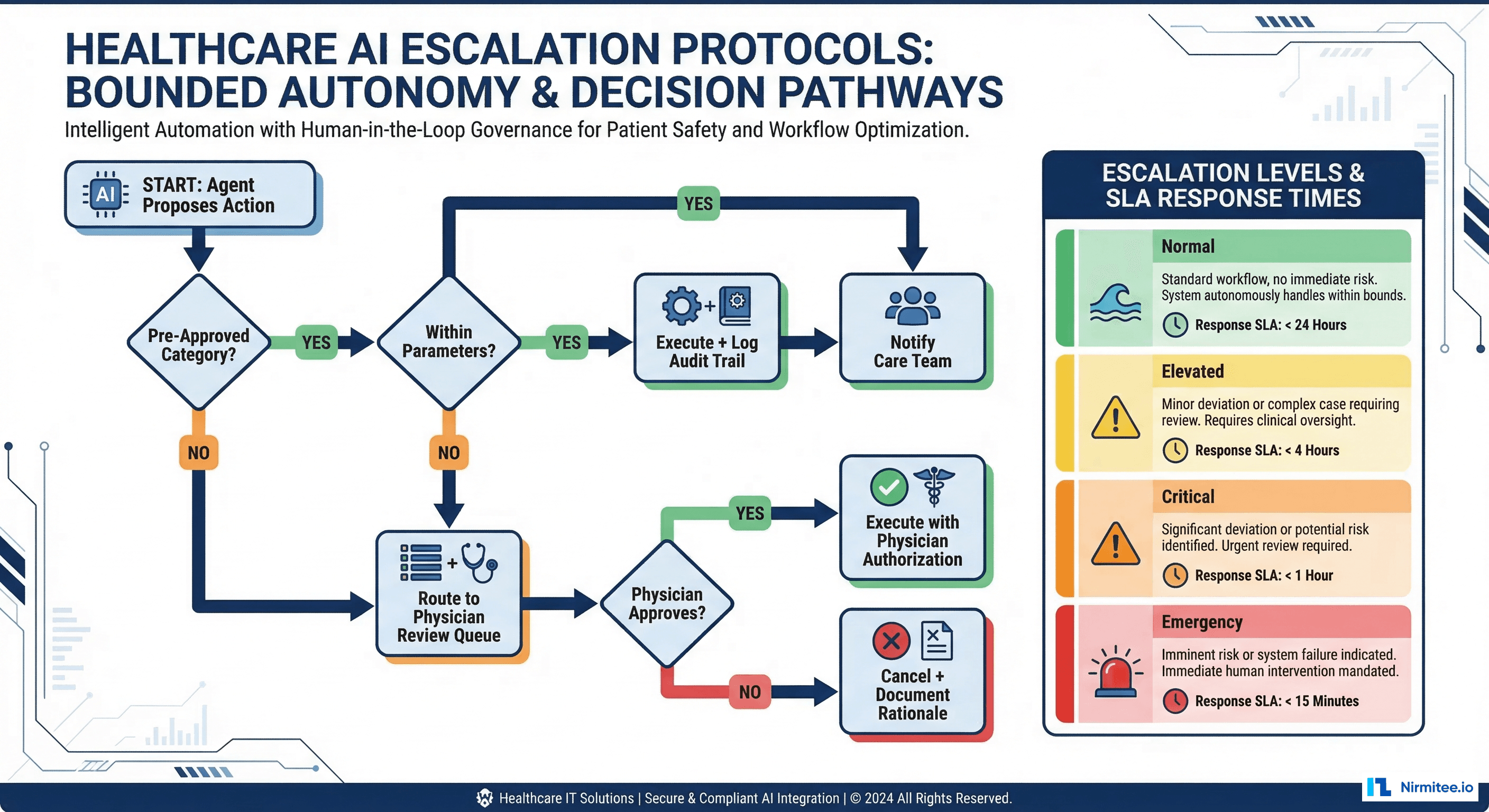

The Escalation Protocol

Bounded autonomy is not just about what an agent can do — it is about what happens when the agent encounters a situation outside its authority. The escalation protocol defines how agents hand off to humans gracefully:

```python
# escalation_protocol.py - Bounded autonomy escalation logic
from enum import IntEnum
from dataclasses import dataclass
from typing import Optional


class Tier(IntEnum):
    OBSERVE = 1
    SUGGEST = 2
    ACT_WITH_APPROVAL = 3
    AUTONOMOUS = 4


@dataclass
class EscalationPolicy:
    tier: Tier
    max_confidence_for_escalation: float  # Below this, escalate
    escalation_target: str                # Who gets the escalation
    response_sla_minutes: int             # How fast they must respond
    fallback_on_timeout: str              # What happens if no response


# Example policies per agent type
POLICIES = {
    "sepsis-screener": EscalationPolicy(
        tier=Tier.SUGGEST,
        max_confidence_for_escalation=0.3,
        escalation_target="attending_physician",
        response_sla_minutes=5,
        fallback_on_timeout="page_charge_nurse",
    ),
    "appointment-scheduler": EscalationPolicy(
        tier=Tier.AUTONOMOUS,
        max_confidence_for_escalation=0.0,  # Never escalates
        escalation_target="scheduling_desk",
        response_sla_minutes=60,
        fallback_on_timeout="add_to_manual_queue",
    ),
    "medication-adjuster": EscalationPolicy(
        tier=Tier.ACT_WITH_APPROVAL,
        max_confidence_for_escalation=0.5,
        escalation_target="prescribing_physician",
        response_sla_minutes=15,
        fallback_on_timeout="expire_and_regenerate",
    ),
}


def should_escalate(agent_id: str, confidence: float) -> Optional[str]:
    policy = POLICIES.get(agent_id)
    if not policy:
        return "compliance_team"  # Unknown agent always escalates
    if confidence < policy.max_confidence_for_escalation:
        return policy.escalation_target
    return None  # No escalation needed
```

Real-World Tier Mapping: What Goes Where

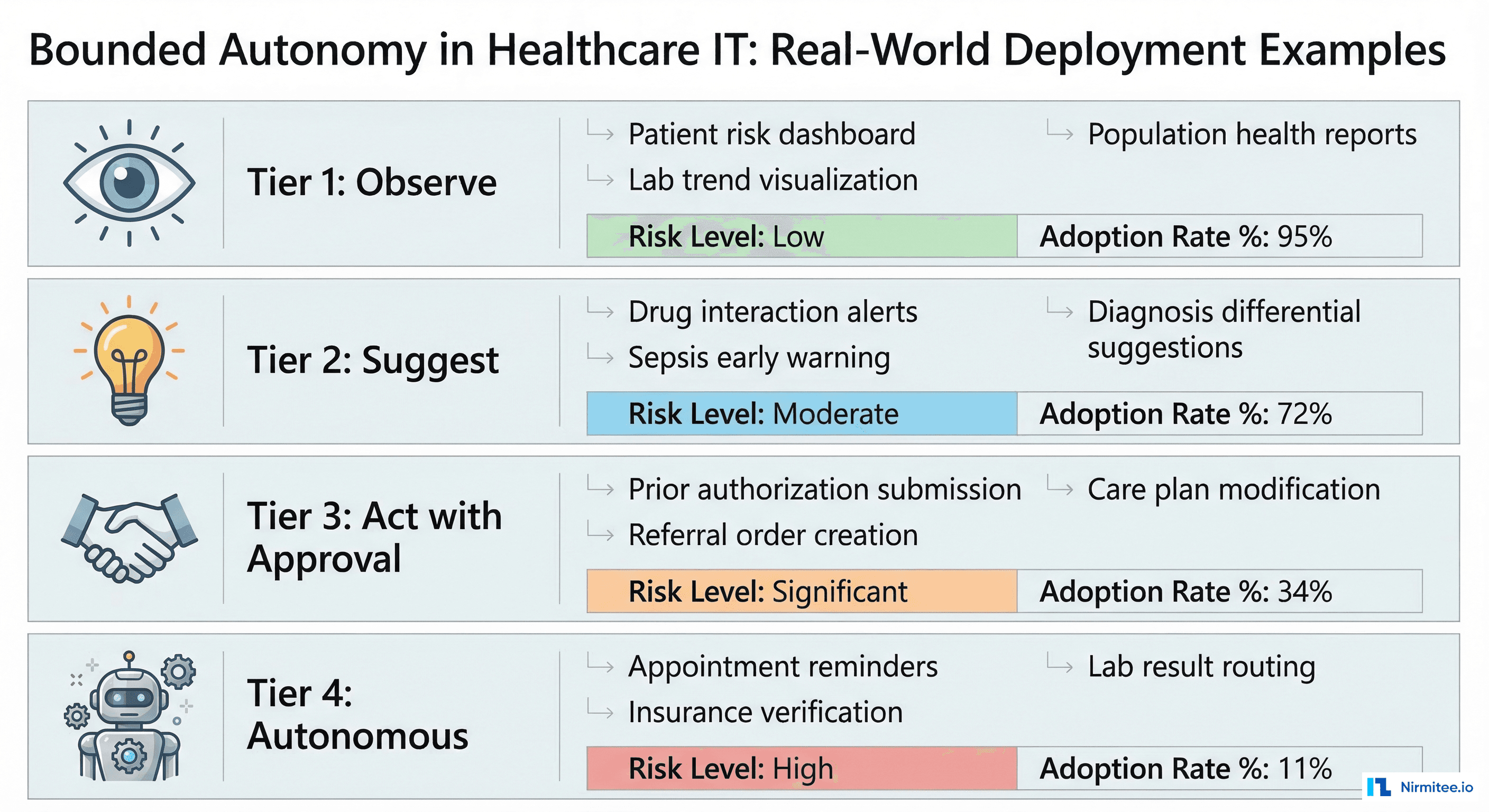

The most common mistake organizations make is putting too many capabilities in Tier 4 (Autonomous) to maximize ROI. This triggers compliance rejection. The opposite mistake — putting everything in Tier 1 (Observe) — delivers negligible value. Here is a realistic mapping based on deployments across US health systems:

| Capability | Recommended Tier | Rationale | Adoption Rate |
|---|---|---|---|
| Patient risk dashboard | Tier 1 | Read-only visualization, no action | 95% |
| Population health reports | Tier 1 | Aggregated data, no patient-level action | 92% |
| Sepsis early warning score | Tier 2 | Clinical decision support, physician reviews | 72% |
| Drug interaction alerts | Tier 2 | Safety-critical but physician decides | 68% |
| Prior auth submission | Tier 3 | Administrative action, physician approves clinical justification | 41% |
| Referral order creation | Tier 3 | Clinical action requiring authorization | 34% |
| Appointment reminders | Tier 4 | Low-risk, pre-approved category, fully audited | 88% |
| Insurance verification | Tier 4 | Administrative, no clinical impact | 76% |
| Lab result routing | Tier 4 | Workflow automation, rules-based | 52% |

Notice the pattern: the highest-adoption Tier 4 capabilities are administrative, not clinical. This is intentional. Start autonomous operations with zero-clinical-risk tasks, build the audit trail and operational confidence, then gradually promote capabilities from Tier 3 to Tier 4 as the governance committee gains trust in the system.

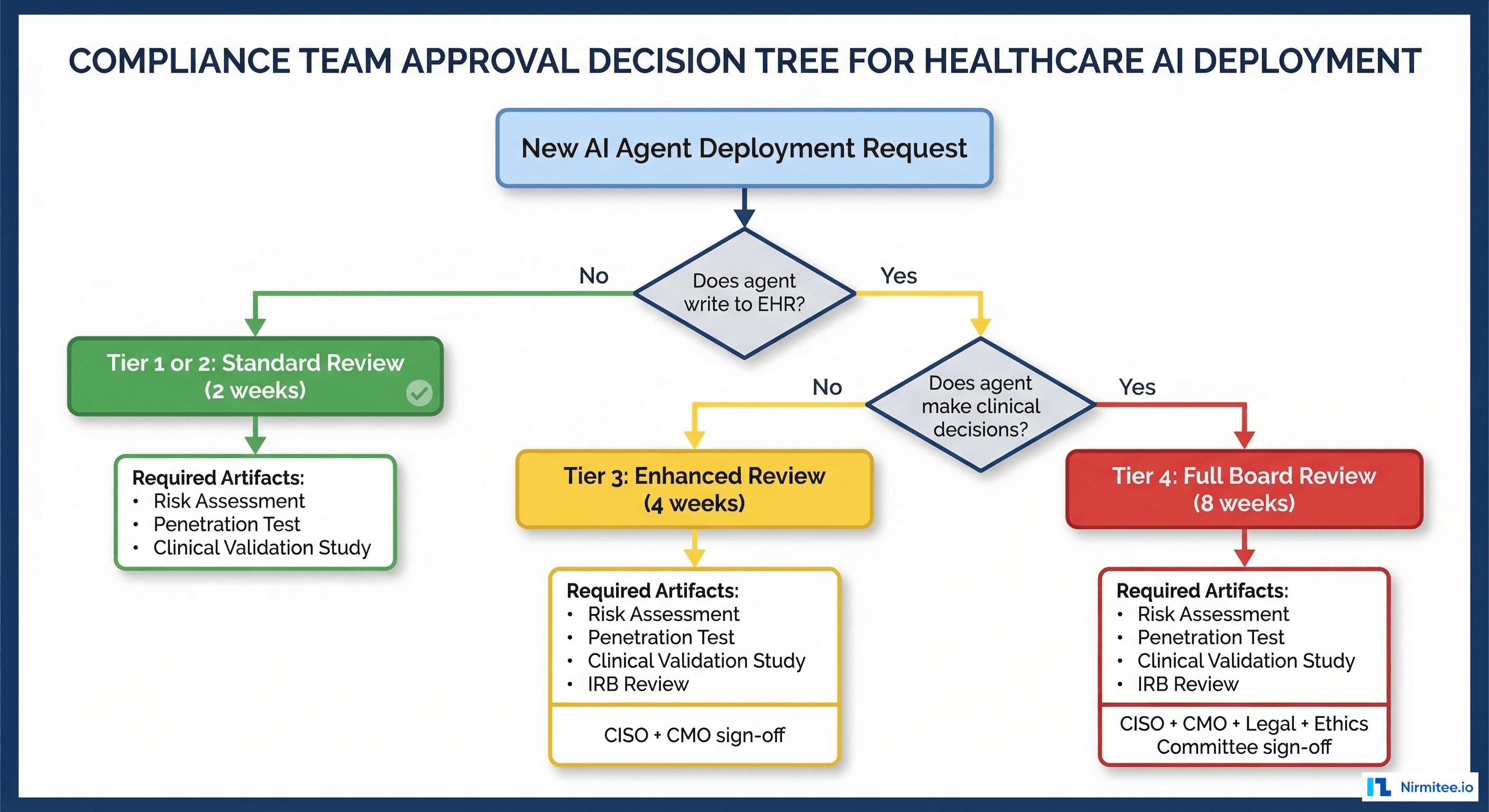

The Compliance Approval Decision Tree

When a new AI capability is proposed, the compliance team needs a structured decision process. Here is the tree:

- Does the agent write to the EHR or clinical system? No: Tier 1 or 2 (standard review, 2-4 weeks). Yes: continue.

- Does the agent make or influence clinical decisions? No: Tier 3 (enhanced review, 6-8 weeks). Yes: continue.

- Does the action execute without per-instance human approval? No: Tier 3 (enhanced review). Yes: Tier 4 (full board review, 10-12 weeks).
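The three questions above can be written down as a short function so that every proposed capability is classified the same way. This is a literal transcription of the tree as stated, offered as a sketch. The `Capability` class and its field names are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Capability:
    writes_to_clinical_system: bool
    influences_clinical_decisions: bool
    executes_without_approval: bool


def classify(cap: Capability) -> tuple[str, str]:
    """Walk the three questions in order; return (tier, review track)."""
    if not cap.writes_to_clinical_system:
        return ("Tier 1 or 2", "standard review, 2-4 weeks")
    if not cap.influences_clinical_decisions:
        return ("Tier 3", "enhanced review, 6-8 weeks")
    if not cap.executes_without_approval:
        return ("Tier 3", "enhanced review, 6-8 weeks")
    return ("Tier 4", "full board review, 10-12 weeks")
```

Read literally, a capability that writes to a clinical system but does not influence clinical decisions stops at the second question. A team adopting this function should confirm that outcome matches how its governance committee treats administrative automations.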

Each tier requires specific artifacts:

- Tier 1: Security assessment, HIPAA BAA review, data flow diagram

- Tier 2: Tier 1 artifacts + clinical validation study (sensitivity/specificity/AUROC on local data) + explainability documentation

- Tier 3: Tier 2 artifacts + penetration test + rollback procedure documentation + error rate thresholds

- Tier 4: Tier 3 artifacts + ethics committee review + automatic de-escalation triggers + quarterly re-certification plan
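Because each tier's artifact list builds on the one below it, the full checklist for a submission can be derived rather than maintained by hand. The sketch below assumes artifacts are tracked as plain strings. `ADDED_ARTIFACTS`, `required_artifacts`, and `missing_artifacts` are hypothetical names.

```python
# Artifacts each tier adds on top of the tier below it (from the list above).
ADDED_ARTIFACTS = {
    1: ["security assessment", "HIPAA BAA review", "data flow diagram"],
    2: ["clinical validation study", "explainability documentation"],
    3: ["penetration test", "rollback procedure documentation",
        "error rate thresholds"],
    4: ["ethics committee review", "automatic de-escalation triggers",
        "quarterly re-certification plan"],
}


def required_artifacts(tier: int) -> list[str]:
    """Return the full checklist for a tier, including all lower tiers."""
    if tier not in ADDED_ARTIFACTS:
        raise ValueError(f"unknown tier: {tier}")
    return [a for t in range(1, tier + 1) for a in ADDED_ARTIFACTS[t]]


def missing_artifacts(tier: int, submitted: set[str]) -> list[str]:
    """List what a submission still lacks for the requested tier."""
    return [a for a in required_artifacts(tier) if a not in submitted]
```

An intake form could call `missing_artifacts` before a proposal reaches the compliance team, so that incomplete submissions are caught early.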

Audit Requirements Per Tier

Each tier has different audit depth requirements. This is where the HL7 AI Transparency on FHIR specification directly applies:

| Tier | Audit Depth | Retention | Review Frequency |
|---|---|---|---|
| Tier 1 (Observe) | Access logs: what data was read, when, by which agent | 6 years (HIPAA) | Annual |
| Tier 2 (Suggest) | Full reasoning chain: input data, model output, confidence score, evidence citations | 6 years | Quarterly |
| Tier 3 (Act with Approval) | Before/after patient state, proposed action, approver identity, time-to-approval, execution confirmation | 10 years (malpractice) | Monthly |
| Tier 4 (Autonomous) | Complete I/O capture, model version, configuration state, all decision factors, rollback availability | 10 years | Weekly + real-time dashboard |

Build the Tier 4 audit infrastructure from the start, even if your first deployment is Tier 1. Upgrading audit capabilities after deployment is significantly more expensive than building them in from day one. Use the centralized logging patterns and OpenTelemetry instrumentation we covered in earlier guides.
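One way to build for Tier 4 from day one is to define a single audit record whose schema already has room for the deeper fields, and leave them empty at lower tiers. The following is a sketch, not a rendering of the HL7 specification. `AuditEvent` and its field names are hypothetical, and the retention values are copied from the table above.

```python
import json
from dataclasses import asdict, dataclass, field
from typing import Any, Optional

RETENTION_YEARS = {1: 6, 2: 6, 3: 10, 4: 10}  # per-tier retention from the table


@dataclass
class AuditEvent:
    """One audit record; optional fields are filled in as the tier rises."""
    agent_id: str
    tier: int
    timestamp: str                               # ISO 8601, UTC
    resources_read: list[str]                    # Tier 1+: what data was accessed
    reasoning: Optional[dict[str, Any]] = None   # Tier 2+: inputs, output, confidence
    approval: Optional[dict[str, Any]] = None    # Tier 3: approver, time-to-approval
    model_version: Optional[str] = None          # Tier 4: model identity
    config: dict[str, Any] = field(default_factory=dict)  # Tier 4: configuration state

    def to_json(self) -> str:
        """Serialize the record and attach its retention period."""
        record = asdict(self)
        record["retention_years"] = RETENTION_YEARS[self.tier]
        return json.dumps(record, sort_keys=True)
```

A Tier 1 agent emits the same record shape as a Tier 4 agent, so promoting a capability later changes which fields are populated, not the logging pipeline.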

Automatic De-Escalation: The Safety Net

The most important feature of bounded autonomy is automatic de-escalation. When an autonomous agent (Tier 4) detects conditions that fall outside its authority, it must automatically drop to a lower tier:

- Confidence below threshold: Agent's confidence score drops below the minimum for its tier. De-escalate to Tier 2 (suggest to human).

- Error rate spike: Agent's error rate exceeds the pre-approved threshold. De-escalate to Tier 2 and alert the governance committee.

- Novel input: Agent encounters a patient scenario not represented in its training data (out-of-distribution detection). De-escalate to Tier 1 (observe only) and flag for review.

- Model drift detected: Performance monitoring shows degradation beyond acceptable bounds. De-escalate to Tier 2 pending model revalidation.

- System dependency failure: A critical data source (lab system, medication database) becomes unavailable. De-escalate because the agent cannot make fully informed decisions without it.

De-escalation must be automatic, immediate, and logged. No human intervention should be required to reduce an agent's authority. Re-escalation (promoting back to the original tier) should require explicit human authorization.
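The asymmetry described above, automatic de-escalation but human-authorized re-escalation, can be captured in a small state holder. This is a sketch under stated assumptions: `Trigger`, `TARGET_TIER`, and `AgentAuthority` are hypothetical names, and the dependency-failure trigger is left out because the text does not name a target tier for it.

```python
from dataclasses import dataclass, field
from enum import Enum


class Trigger(Enum):
    LOW_CONFIDENCE = "confidence_below_threshold"
    ERROR_RATE_SPIKE = "error_rate_spike"
    NOVEL_INPUT = "novel_input"
    MODEL_DRIFT = "model_drift"


# Target tiers as stated in the list above.
TARGET_TIER = {
    Trigger.LOW_CONFIDENCE: 2,
    Trigger.ERROR_RATE_SPIKE: 2,
    Trigger.NOVEL_INPUT: 1,
    Trigger.MODEL_DRIFT: 2,
}


@dataclass
class AgentAuthority:
    approved_tier: int                 # tier granted by the governance committee
    current_tier: int
    log: list[str] = field(default_factory=list)

    def de_escalate(self, trigger: Trigger) -> None:
        """Automatic: lowers authority immediately, never raises it."""
        self.current_tier = min(self.current_tier, TARGET_TIER[trigger])
        self.log.append(f"de-escalated to tier {self.current_tier}: {trigger.value}")

    def re_escalate(self, authorized_by: str) -> None:
        """Manual: restoring the approved tier needs a named human."""
        if not authorized_by:
            raise PermissionError("re-escalation requires human authorization")
        self.current_tier = self.approved_tier
        self.log.append(f"re-escalated to tier {self.current_tier} by {authorized_by}")
```

Using `min` in `de_escalate` means a second, milder trigger cannot accidentally raise an agent that has already dropped to Tier 1.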

Getting Started with Nirmitee

At Nirmitee, we design and implement bounded autonomy frameworks for healthcare AI systems. Our architects work with your compliance, clinical, and engineering teams to define tier classifications, build the governance infrastructure, and deploy agents that satisfy both the CMO and the CISO.

We have implemented this pattern across health systems deploying clinical AI for remote patient monitoring, FHIR-based agents, and event-driven clinical automation. Every deployment uses bounded autonomy as the governance foundation.

Talk to our healthcare AI governance team about implementing bounded autonomy for your clinical AI deployment.
