There is a persistent narrative in healthcare AI that goes something like this: the goal is to remove humans from the loop. Start with human oversight, gradually reduce it, and eventually achieve full automation. Human involvement is framed as a temporary constraint — a necessary evil while the AI "gets good enough."

This narrative is wrong. Not just strategically wrong, but architecturally wrong. The most effective healthcare AI systems we have built are designed around human involvement as a permanent, structural feature. The human is not in the loop because the AI is not ready. The human is in the loop because that is what makes the system work.

After building AI agents across 20+ healthcare applications, we have learned that human-in-the-loop is not a compromise between automation and safety. It is the design principle that delivers both.

The Automation Spectrum — Finding the Sweet Spot

Healthcare workflows exist on a spectrum from fully manual to fully autonomous. Most organizations think about this spectrum as a journey — you start on the left (manual) and march toward the right (autonomous). The goal is to get as far right as possible.

In practice, the sweet spot is in the middle, and it stays there permanently. Here is why:

Fully manual processes are slow, expensive, inconsistent, and hard to scale. A human reviewing every prior authorization request, reading every clinical document, and checking every eligibility response creates bottlenecks that limit your throughput and increase your costs linearly with volume.

Fully autonomous AI is fast and cheap but unacceptable in healthcare. No matter how good the AI gets, there will always be edge cases where the correct action depends on context that the AI cannot fully capture — patient preferences, clinical judgment calls, unusual payer behavior, regulatory nuances. A system that handles these cases autonomously will make errors that are clinically, financially, or legally significant.

Human-augmented AI — where the AI handles the routine work autonomously and escalates uncertain cases to humans with full context — delivers the throughput of automation with the judgment of human oversight. And critically, it gets better over time because every human interaction generates training signal.

The sweet spot is not a waypoint on the path to full automation. It is the destination.

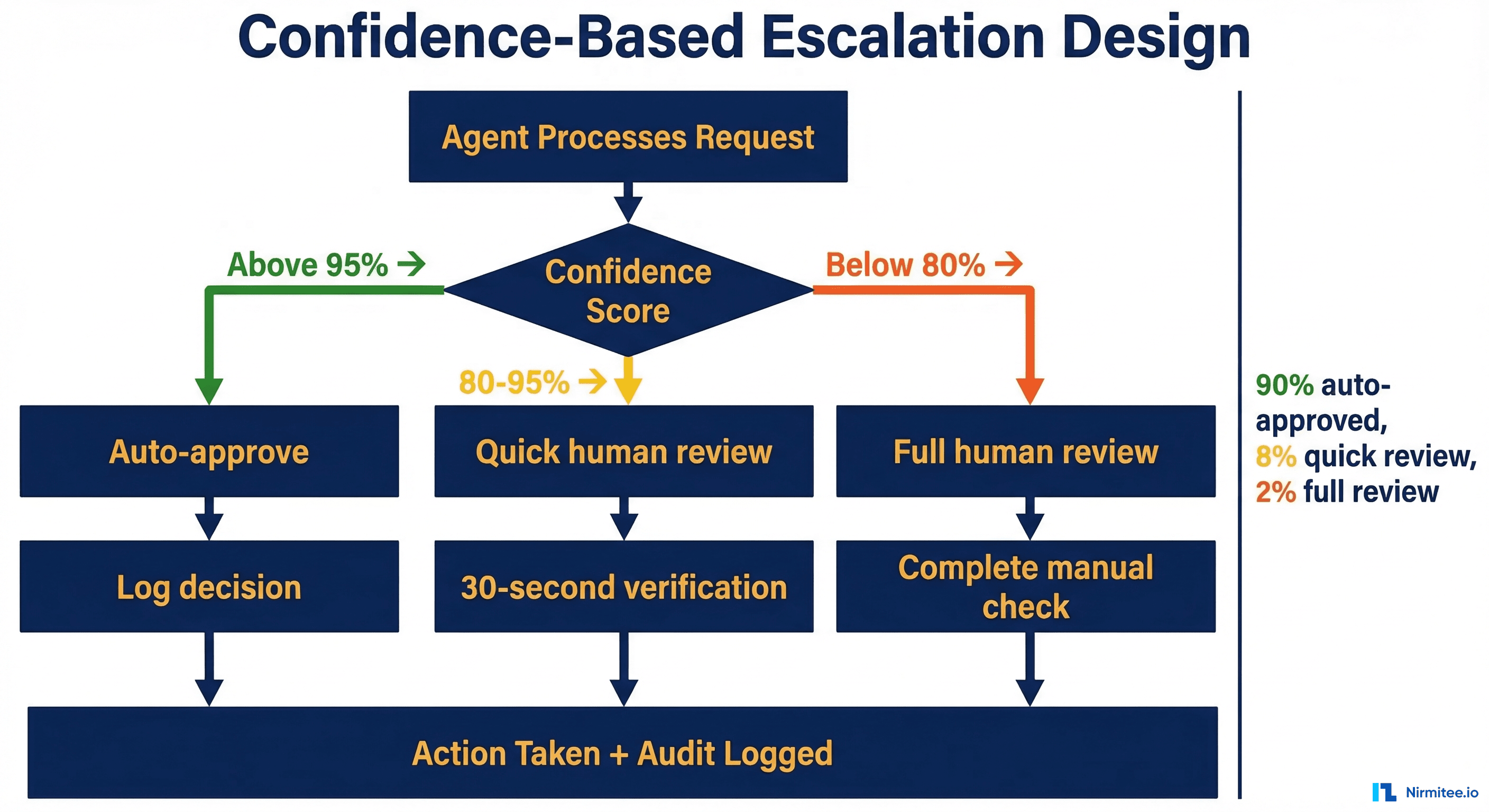

Confidence-Based Escalation Design

The engineering core of effective HITL systems is confidence scoring and escalation routing. Every agent decision gets a confidence score, and the routing logic determines what happens at each confidence level.

Tier 1: High Confidence (Above 95%) — Auto-Approve

The agent has seen this pattern many times, the data is clean, and the decision is straightforward. Examples: standard eligibility verification for a common payer, prior auth for a procedure that the payer always approves for this diagnosis, document classification for a clearly labeled lab result.

The agent proceeds autonomously and logs the full decision chain. No human touches this transaction, but a human could audit it at any time by reviewing the log. These cases represent 60-75% of volume for a well-tuned agent, and they are the source of the efficiency gains.

Tier 2: Medium Confidence (80-95%) — Quick Review

The agent has a recommendation but something is unusual. Maybe the patient's coverage has a rider that changes the auth requirements, or the clinical document has an ambiguous section, or the eligibility response has a warning code the agent has not seen before.

The agent presents its recommendation to a human reviewer along with the reasoning and the specific element that reduced confidence. The reviewer can approve the recommendation with one click (which takes 10-15 seconds) or override it with a corrected action. These cases represent 15-25% of volume.

This tier is where the magic happens. The reviewer is not starting from scratch; they are reviewing a recommendation with full context, which is 5-10x faster than doing the work unaided. And every approval or override is a data point that improves future confidence calibration.

Tier 3: Low Confidence (Below 80%) — Full Review

The agent does not have enough confidence to make a recommendation, or the case involves factors that are inherently subjective. Examples: a prior auth for an unusual procedure where clinical necessity is debatable, a patient record with conflicting data from multiple sources, a claim denial that involves an unusual payer policy.

The agent provides full context: all relevant data, its analysis of the situation, and the factors that prevented it from making a confident recommendation. The human makes the decision, and the agent logs the human's reasoning (captured through structured input fields) for future learning. These cases represent 5-15% of volume.

Crucially, even in Tier 3, the agent adds value. By gathering and organizing all relevant data, it reduces the human's time on the case from 15-20 minutes to 5-8 minutes. The human spends their time on judgment, not data gathering.
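The three tiers reduce to a small routing function. The sketch below is illustrative only: the names `Route`, `Thresholds`, and `route_decision` are hypothetical, confidence is assumed to be a float between 0 and 1, and the 0.95 and 0.80 defaults are the starting points described above rather than fixed values.

```python
from dataclasses import dataclass
from enum import Enum


class Route(Enum):
    AUTO_APPROVE = "auto_approve"   # Tier 1: agent proceeds and logs
    QUICK_REVIEW = "quick_review"   # Tier 2: human confirms a recommendation
    FULL_REVIEW = "full_review"     # Tier 3: human decides with agent context


@dataclass(frozen=True)
class Thresholds:
    # Starting points from the tiers above; assumed to be tuned per workflow.
    auto_approve: float = 0.95
    quick_review: float = 0.80


def route_decision(confidence: float, thresholds: Thresholds = Thresholds()) -> Route:
    """Map a confidence score in [0, 1] to one of the three review tiers."""
    if not 0.0 <= confidence <= 1.0:
        raise ValueError("confidence must be between 0 and 1")
    if confidence > thresholds.auto_approve:      # strictly above 95%
        return Route.AUTO_APPROVE
    if confidence >= thresholds.quick_review:     # 80% up to and including 95%
        return Route.QUICK_REVIEW
    return Route.FULL_REVIEW                      # below 80%
```

Keeping the thresholds in a separate object means they can be swapped per workflow or per customer without touching the routing logic.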

Calibrating Confidence Thresholds

The specific threshold numbers (95% and 80%) are starting points, not constants. They are calibrated per workflow, per customer, and over time based on actual accuracy data.

The calibration process works like this: during the first two weeks, set all thresholds conservatively and route every decision to human review, including those scored above 85%. Measure the override rate at each confidence level. If the agent is correct 99% of the time on decisions scored above 93%, you can safely set the auto-approve threshold at 93%. If the agent is correct only 90% of the time at that level, hold the threshold at a stricter value such as 97% and investigate why the confidence scores are miscalibrated.

This data-driven calibration is one of the reasons HITL systems outperform both fully manual and fully autonomous approaches. You have empirical evidence for every threshold decision, not just engineering intuition.
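One way to turn the review data into a threshold is to look for the lowest cutoff at which observed accuracy still meets the target. This is a minimal sketch, not a description of any particular production pipeline: `lowest_safe_threshold` is a hypothetical helper, "correct" is assumed to mean the reviewer approved the agent's decision without an override, and the `min_samples` guard is an added assumption to avoid trusting tiny samples.

```python
from typing import Iterable, Optional, Tuple


def lowest_safe_threshold(
    reviewed: Iterable[Tuple[float, bool]],
    candidates: Iterable[float],
    target_accuracy: float = 0.99,
    min_samples: int = 100,
) -> Optional[float]:
    """Return the lowest candidate threshold whose reviewed cases meet the target.

    reviewed: (confidence, was_correct) pairs from the human-review period.
    candidates: thresholds to evaluate, e.g. [0.93, 0.95, 0.97].
    Returns None when no candidate has enough data and enough accuracy.
    """
    records = list(reviewed)
    for threshold in sorted(candidates):
        # Outcomes for every case that would be auto-approved at this cutoff.
        outcomes = [correct for conf, correct in records if conf >= threshold]
        if len(outcomes) < min_samples:
            continue  # too little evidence to justify this cutoff
        accuracy = sum(outcomes) / len(outcomes)
        if accuracy >= target_accuracy:
            return threshold
    return None
```

A `None` result is itself a useful signal: either the review period needs to run longer or the confidence scores are miscalibrated and need investigation.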

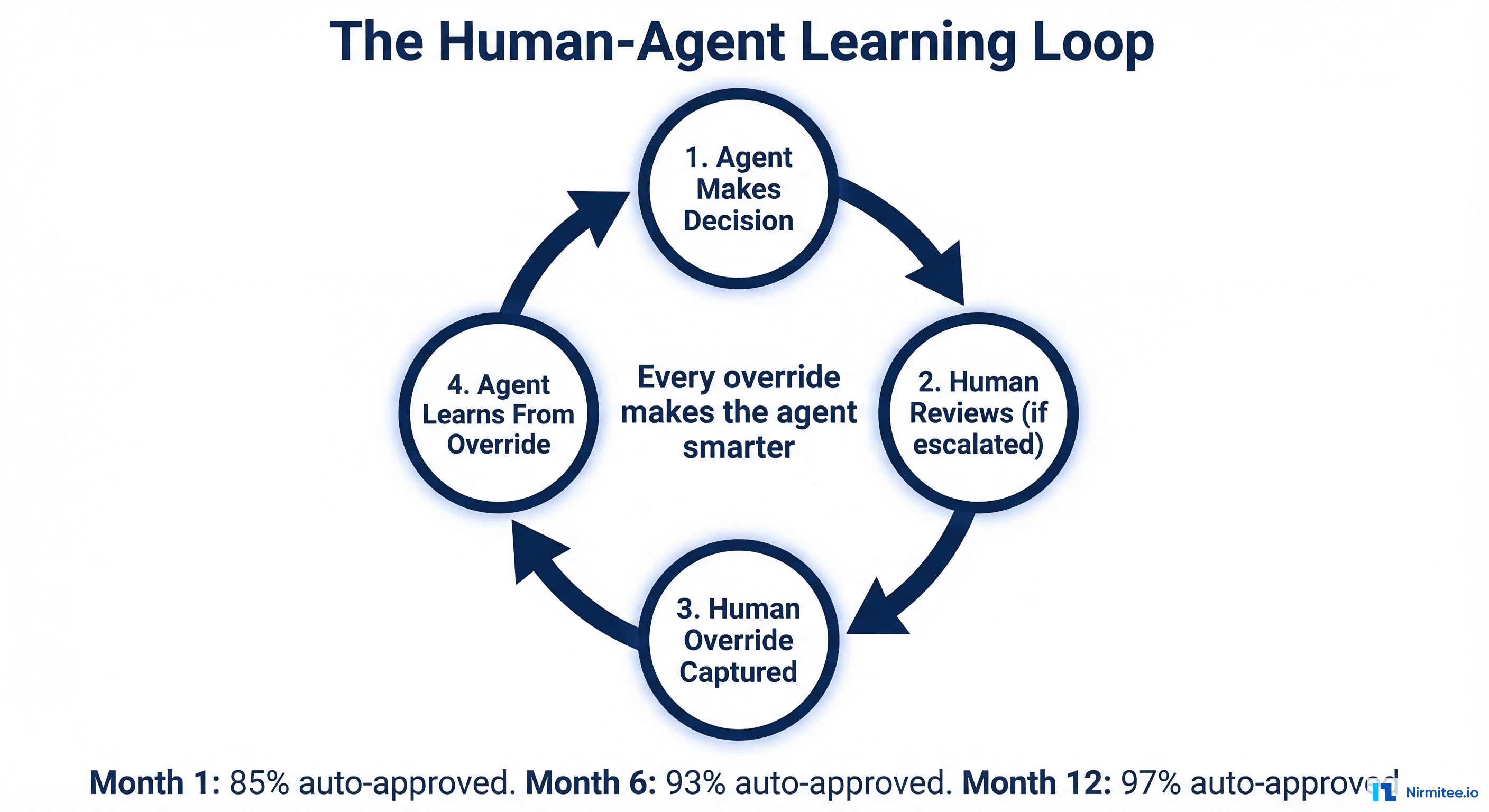

The Feedback Loop — Every Override Makes the Agent Smarter

The most underappreciated aspect of HITL design is the feedback loop. Every human interaction with the agent — every approval, every override, every correction — is structured training data.

Approval signals confirm that the agent's confidence calibration is correct at that confidence level. If humans consistently approve agent recommendations at 90% confidence, the system can gradually lower the auto-approve threshold for that scenario type.

Override signals are the most valuable. When a human changes the agent's decision, the system captures: what the agent decided, what the human decided instead, the confidence level at which the error occurred, and (through structured input fields) why the human made a different choice. This creates a corpus of "correction examples" that are added to the agent's prompt templates.

Escalation patterns reveal systematic gaps. When the agent consistently escalates cases involving a specific payer or procedure type, that signals a gap in the agent's domain knowledge for that area. The team can address it by adding specific rules or examples to the relevant prompt templates.
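Spotting these gaps can be as simple as grouping cases by payer or procedure type and flagging segments with an unusually high escalation rate. The sketch below assumes each case is reduced to a `(segment, was_escalated)` pair; the function name and the `min_cases` and `min_rate` cutoffs are hypothetical values chosen for illustration.

```python
from collections import Counter
from typing import Iterable, List, Tuple


def escalation_hotspots(
    cases: Iterable[Tuple[str, bool]],
    min_cases: int = 20,
    min_rate: float = 0.5,
) -> List[Tuple[str, float]]:
    """Return (segment, escalation_rate) pairs that exceed the cutoffs.

    A segment is any grouping key, such as a payer name or procedure type.
    Results are sorted from the highest escalation rate to the lowest.
    """
    totals: Counter = Counter()
    escalated: Counter = Counter()
    for segment, was_escalated in cases:
        totals[segment] += 1
        if was_escalated:
            escalated[segment] += 1

    hotspots = []
    for segment, total in totals.items():
        rate = escalated[segment] / total
        # Ignore segments with too few cases to say anything reliable.
        if total >= min_cases and rate >= min_rate:
            hotspots.append((segment, rate))
    return sorted(hotspots, key=lambda item: item[1], reverse=True)
```

Segments returned here are candidates for new rules or examples in the prompt templates, not proof of a defect; a human still decides what the gap is.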

The feedback loop creates a system that gets measurably better every week without any model retraining or infrastructure changes. You are not fine-tuning an LLM — you are refining the prompts, calibrating the confidence thresholds, and expanding the library of examples that the orchestrator uses to guide the LLM's reasoning.

HITL Auto-Approval Rates Over Time

The practical result of the feedback loop is a steady increase in the auto-approval rate — the percentage of transactions the agent handles without any human involvement. Here is what we typically see:

- Month 1: 85% auto-approval rate. The agent handles straightforward cases. The team reviews everything in the medium-confidence band. The feedback loop is building its initial corpus of corrections and confirmations.

- Month 3: 90% auto-approval rate. The most common override patterns have been captured and incorporated into prompts. Confidence calibration has been adjusted based on actual accuracy data. The agent handles many cases that previously required quick review.

- Month 6: 94% auto-approval rate. The agent now handles most payer-specific edge cases and common data quality issues. The cases that still require human review are genuinely unusual — new payer rules, rare procedure types, unusual patient circumstances.

- Month 9: 96% auto-approval rate. Improvements slow because the remaining cases are inherently complex or subjective. But each percentage point at this level represents significant volume because the total transaction count has likely grown.

- Month 12: 97% auto-approval rate. The system has reached a stable equilibrium. The 3% that requires human review represents cases that genuinely benefit from human judgment. The agent continues to improve incrementally, but the remaining cases are where human oversight adds real value.

Note what is not in this trajectory: 100%. We do not design for 100% automation, and we counsel our clients against setting it as a goal. The 3-5% of cases that require human review are not a failure of the AI — they are the cases where human judgment is the right tool. Designing the system to handle them autonomously would require accepting error rates that are unacceptable in healthcare.

Clinician Trust Engineering

The most technically brilliant HITL system is useless if clinicians do not trust it. Trust engineering is a distinct discipline from system engineering, and it requires deliberate design decisions:

Transparency of reasoning. When an agent presents a recommendation, it must show its reasoning, not just its conclusion. "Recommend approval" is not enough. "Recommend approval because: CPT 27447 (knee replacement) with ICD-10 M17.11 (primary osteoarthritis, right knee) meets Blue Cross policy PA-2024-ORTHO-12 criteria 2a (conservative treatment failure documented in notes from 2025-06-15 and 2025-09-22)" — this is what builds trust. Clinicians can verify the logic, not just the output.

Graceful disagreement. When a clinician overrides the agent, the system should acknowledge the override without friction. No "Are you sure?" prompts, no multi-step justification requirements. The clinician is the authority. The system captures the override for learning purposes, but the experience should feel like correcting a helpful assistant, not arguing with a bureaucracy.

Visible learning. When the agent improves based on clinician feedback, that improvement should be visible. "Based on your correction last month regarding BlueCross PA requirements for bilateral procedures, the system now correctly handles these cases" — this feedback closes the loop and demonstrates that the clinician's input matters.

Consistent accuracy. Clinicians will tolerate an AI that is right 95% of the time and transparent about its uncertainty. They will not tolerate an AI that is right 99% of the time but occasionally confident and wrong. The confidence scoring and escalation design must be calibrated to never present a confident recommendation that turns out to be incorrect. It is better to escalate unnecessarily than to auto-approve incorrectly.

Gradual autonomy expansion. Let clinicians control the pace of automation. Start with all cases going through review. Let the clinician see the agent's track record and decide when to enable auto-approval for specific case types. This gives the clinician agency over the transition and builds trust through direct experience rather than top-down mandates.

The Regulatory Advantage

There is a strategic dimension to HITL that goes beyond operational efficiency: it is dramatically easier to get regulatory and buyer approval for AI systems that keep humans in the loop.

FDA perspective. The FDA's framework for clinical decision support distinguishes between systems that make recommendations for human review and systems that make autonomous decisions. The former generally face a significantly lighter regulatory pathway. A HITL agent that presents recommendations to clinicians is typically positioned as clinical decision support. A fully autonomous agent that acts without human review may require 510(k) clearance or even PMA approval, depending on the clinical context.

Healthcare buyer perspective. Hospital CIOs and CMIOs are evaluated on patient safety, not innovation speed. When you pitch a fully autonomous AI system, they hear risk. When you pitch an AI system that augments their staff with transparent recommendations and full audit trails, they hear efficiency with safety. The HITL design is not just a technical choice — it is a sales enabler.

HIPAA perspective. HITL systems with comprehensive audit logging demonstrate compliance more effectively than manual processes. Every agent decision is logged with data access records, reasoning chains, and human oversight documentation. Auditors can trace any transaction end-to-end. Compare this to manual processes where audit trails depend on staff remembering to document their reasoning — which in practice means 30-40% of decisions have incomplete audit records.

Malpractice perspective. In the event of an adverse outcome, a HITL system provides clear documentation of what the AI recommended, what confidence level it assigned, whether a human reviewed it, and what the human decided. This is significantly better legal positioning than "the AI made the decision" or "a human made the decision but we do not have documentation of their reasoning."
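The documentation described in the HIPAA and malpractice points can be captured as one record per decision. This is a sketch under stated assumptions: `DecisionAuditRecord` and its field names are hypothetical, and a real deployment would add whatever identifiers and retention rules its compliance team requires.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone
from typing import List, Optional


@dataclass(frozen=True)
class DecisionAuditRecord:
    transaction_id: str
    agent_recommendation: str        # what the AI recommended
    confidence: float                # confidence level it assigned
    reasoning_chain: List[str]       # ordered reasoning steps
    data_accessed: List[str]         # data access record for this decision
    human_reviewed: bool             # whether a human reviewed it
    human_decision: Optional[str] = None   # what the human decided, if reviewed
    logged_at: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )

    def __post_init__(self) -> None:
        # A recorded human decision without a review would be contradictory.
        if self.human_decision is not None and not self.human_reviewed:
            raise ValueError("human_decision requires human_reviewed=True")

    def to_json(self) -> str:
        """Serialize the record as a JSON line for an append-only log."""
        return json.dumps(asdict(self), sort_keys=True)
```

Making the record immutable and validating it on creation keeps the log internally consistent, which matters more for an auditor than any individual field.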

Implementation Principles

If you are designing a HITL system for healthcare, here are the principles we have learned from building them across multiple products:

Design the review experience first. Before writing a single line of agent code, design the human review interface. What information does the reviewer need? How should it be organized? What actions can they take? How long should a review take? The review experience determines whether your HITL system is efficient (15-second reviews) or a bottleneck (5-minute reviews).

Invest in structured override capture. When a human overrides the agent, capture why in a structured format, not free text. Use dropdown menus with specific reason codes: "payer policy changed," "clinical context not captured," "patient preference," "data quality issue." Structured data is usable for automated feedback loop improvement. Free text requires manual analysis.
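A structured override record might look like the following sketch. The four reason codes are the ones named above; the class and field names are hypothetical, and a real system would likely extend the reason list per workflow.

```python
from collections import Counter
from dataclasses import dataclass
from enum import Enum
from typing import Iterable


class OverrideReason(Enum):
    PAYER_POLICY_CHANGED = "payer policy changed"
    CLINICAL_CONTEXT_NOT_CAPTURED = "clinical context not captured"
    PATIENT_PREFERENCE = "patient preference"
    DATA_QUALITY_ISSUE = "data quality issue"


@dataclass(frozen=True)
class OverrideRecord:
    case_id: str
    agent_action: str          # what the agent decided
    human_action: str          # what the human decided instead
    agent_confidence: float    # confidence at which the error occurred
    reason: OverrideReason     # chosen from a dropdown, never free text


def reason_counts(records: Iterable[OverrideRecord]) -> Counter:
    """Count overrides per reason code so recurring causes stand out."""
    return Counter(record.reason for record in records)
```

Because the reason is an enum rather than free text, counting and trending overrides needs no manual analysis, which is the point of capturing them this way.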

Measure human time, not just accuracy. Agent accuracy is important, but the metric that drives ROI is total human time per transaction. A system that is 95% accurate but requires 3-minute reviews for the escalated cases may deliver less value than a system that is 90% accurate with 30-second reviews. Optimize for total workflow efficiency, not just AI accuracy.
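The comparison is easy to check with arithmetic. The sketch below makes one simplifying assumption that the text does not state: each system escalates exactly the share of cases it would otherwise get wrong (5% and 10%). Under that assumption, per 1,000 transactions the 95%-accurate system costs about 150 reviewer minutes and the 90%-accurate system about 50.

```python
def review_minutes(
    transactions: int, escalation_rate: float, minutes_per_review: float
) -> float:
    """Total human review minutes for a batch of transactions."""
    if not 0.0 <= escalation_rate <= 1.0:
        raise ValueError("escalation_rate must be between 0 and 1")
    return transactions * escalation_rate * minutes_per_review


# Hypothetical comparison per 1,000 transactions.
# Assumption: escalation rate equals one minus accuracy for each system.
system_a = review_minutes(1000, escalation_rate=0.05, minutes_per_review=3.0)
system_b = review_minutes(1000, escalation_rate=0.10, minutes_per_review=0.5)
```

The less accurate system consumes roughly a third of the reviewer time in this example, which is why review duration deserves as much design attention as model accuracy.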

Build for queue management, not individual cases. In production, reviewers work through queues of escalated cases. The queue management system — prioritization, assignment, load balancing, SLA tracking — is as important as the agent itself. A brilliant agent with a poorly designed review queue creates a different kind of bottleneck.
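As a minimal illustration of prioritization and SLA tracking, the sketch below orders escalated cases by SLA deadline using Python's `heapq`. The `ReviewQueue` class is hypothetical, and earliest-deadline-first is only one possible policy; assignment and load balancing are left out.

```python
import heapq
import itertools
from datetime import datetime
from typing import List, Optional, Tuple


class ReviewQueue:
    """Escalated cases ordered by SLA deadline, earliest first."""

    def __init__(self) -> None:
        self._heap: List[Tuple[datetime, int, str]] = []
        # Monotonic counter breaks ties so equal deadlines stay first-in, first-out.
        self._counter = itertools.count()

    def push(self, case_id: str, sla_deadline: datetime) -> None:
        heapq.heappush(self._heap, (sla_deadline, next(self._counter), case_id))

    def pop_next(self) -> Optional[str]:
        """Return the case with the earliest deadline, or None if empty."""
        if not self._heap:
            return None
        _, _, case_id = heapq.heappop(self._heap)
        return case_id

    def breached(self, now: datetime) -> List[str]:
        """Case ids still waiting whose SLA deadline has already passed."""
        return sorted(cid for deadline, _, cid in self._heap if deadline < now)

    def __len__(self) -> int:
        return len(self._heap)
```

Reviewers always pull the most urgent case with `pop_next()`, while `breached()` gives a supervisor a quick view of work that has already missed its SLA.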

Plan for the feedback loop from day one. Do not treat the feedback loop as a future enhancement. Build the data capture, the correction pipeline, and the prompt update mechanism into the initial architecture. Retrofitting a feedback loop onto a system that was not designed for it is significantly harder than building it in from the start.

The Bottom Line

Human-in-the-loop is not a limitation of current AI technology. It is a design principle that makes healthcare AI systems effective, trustworthy, compliant, and continuously improving.

The systems that try to remove humans from the loop will face regulatory resistance, buyer skepticism, and the inevitable high-profile error that destroys trust. The systems that embrace human involvement as a structural feature will deliver efficiency gains that compound over time, build trust with clinicians and buyers, and create feedback loops that make them progressively better.

The engineering challenge is not "how do we get the AI good enough to not need humans." It is "how do we design the human-AI interaction to maximize the value of both." That is a harder problem, but it is also the one that produces systems which actually work in the real world of healthcare — where the stakes are too high for fully autonomous AI and too costly for fully manual processes.
