Every Healthtech Founder Is Asking the Same Question

Can we use LLMs in our product? The honest answer: it depends on what zone you're operating in.

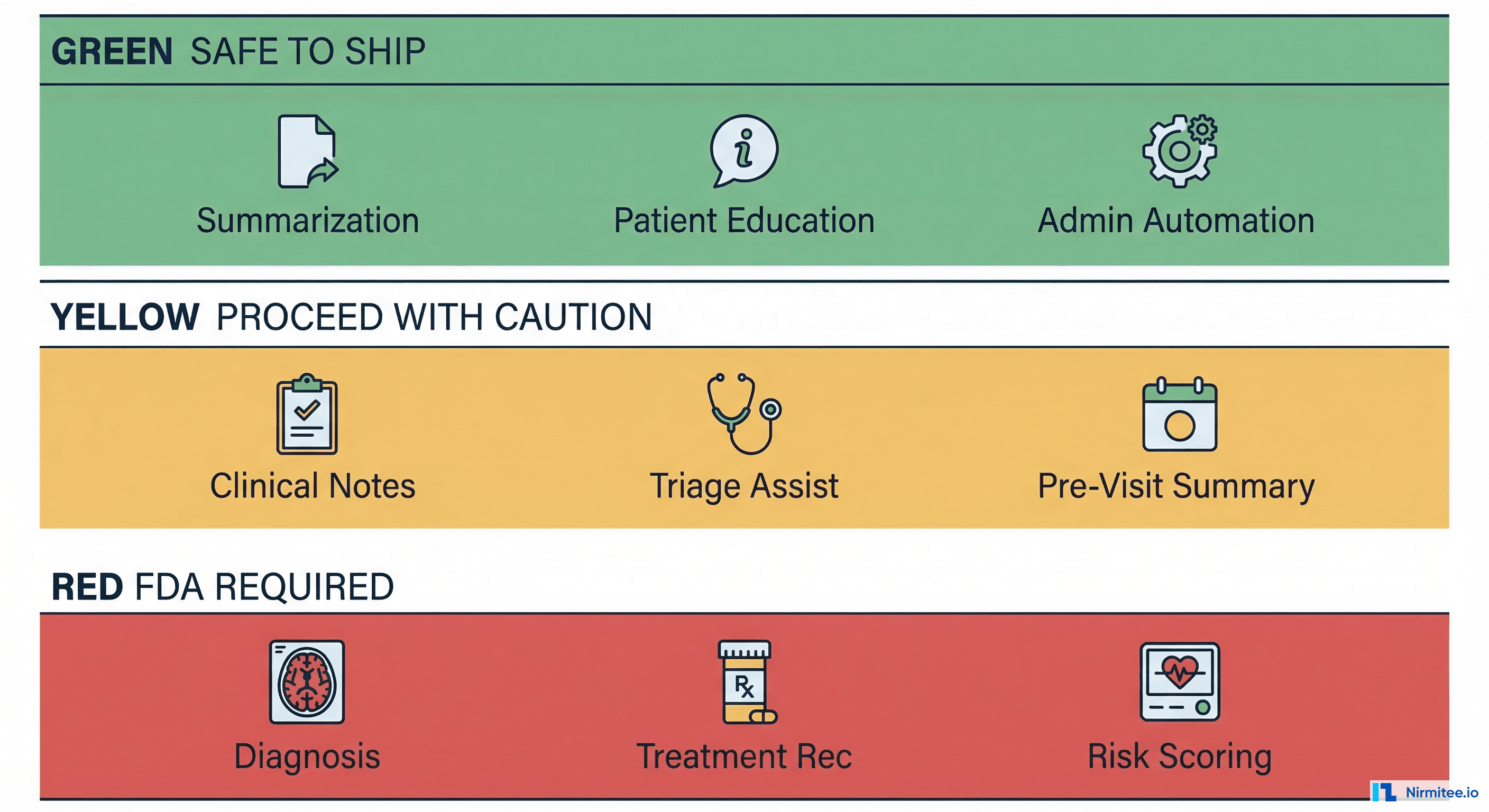

The regulatory landscape for AI in healthcare isn't a single line. It's three distinct zones — Green, Yellow, and Red — each with different risk profiles, compliance requirements, and legal exposure. Get the zone wrong, and you're looking at FDA enforcement actions, malpractice lawsuits, or both.

This guide breaks down exactly what you can ship today, what requires careful engineering, and what will land you in front of regulators. No theory. Real examples, actual regulation references, and dollar figures.

The Green Zone: Ship Now

These are LLM applications you can deploy today without FDA clearance. They share one critical characteristic: they don't make clinical decisions. They assist humans who make clinical decisions.

Summarization

Visit note summarization, discharge summary generation, and clinical document condensation. The LLM takes existing clinical information and restructures it for readability. No new clinical conclusions are drawn.

Example: Abridge raised $212.5M in 2025 for ambient clinical documentation — summarizing physician-patient conversations into structured notes. The physician reviews and signs every note. This is firmly Green Zone.

Patient Education Content

Generating patient-facing educational materials from clinical guidelines. Think: "What to expect after your knee replacement" generated from AAOS guidelines. The content explains existing medical knowledge; it doesn't create new clinical recommendations for a specific patient.

Administrative Automation

Scheduling optimization, billing code suggestions (CPT/ICD-10 lookup assistance), prior authorization letter drafting, and insurance eligibility checks. These are business operations, not clinical decisions.

Dollar impact: McKinsey estimates $200–$360 billion in annual administrative waste in US healthcare. Even capturing 5% of that with LLM automation represents a $10–$18 billion market.

Search and Retrieval

Semantic search across medical records, surfacing relevant prior visits, lab results, or imaging reports. The LLM is a search engine, not a diagnostician. It finds information; the clinician interprets it.

Documentation Assistance

Auto-completing clinical templates, suggesting ICD-10 codes based on note content, and formatting structured data from free text. Again — the clinician reviews, edits, and signs.

Why These Are Safe

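The legal basis comes from two places: the FDA's general wellness policy, a guidance document covering low-risk wellness products, and the 21st Century Cures Act's exclusion of certain Clinical Decision Support (CDS) software. Section 3060(a) of the Cures Act amended section 520(o) of the FD&C Act so that CDS falls outside the definition of a medical device when it meets four criteria: it does not acquire, process, or analyze a medical image or signal; it displays or analyzes medical information about a patient; it supports or provides recommendations to a health care professional; and it lets that professional independently review the basis for each recommendation, so they do not rely primarily on the software. This guide calls software that meets all four criteria Type 1 CDS.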

The key engineering principle: the clinician is always the final decision-maker, and the AI's reasoning is visible.

The Yellow Zone: Proceed With Caution

These applications are not explicitly FDA-regulated (yet), but they directly influence clinical decisions. One wrong implementation detail can push you from Yellow into Red.

Clinical Note Suggestions

AI drafts a clinical note from ambient audio or structured data, and a clinician reviews and edits before signing. Where the draft only restructures what was said, it is Green Zone summarization. It becomes Yellow once the draft proposes content the clinician did not state, because the draft then shapes the clinician's thinking. If the AI omits a critical finding, the clinician might miss it too, a phenomenon called automation bias.

Pre-Visit Summaries

Synthesizing a patient's history into a pre-visit briefing. This is more than search — the LLM is making editorial decisions about what's relevant. If it deprioritizes a critical allergy or past adverse reaction, the consequences are clinical.

Triage Assistance

Helping triage nurses or front-desk staff route patients to appropriate care levels. Not diagnosis — routing. But if the LLM suggests a lower acuity level for a patient having a STEMI, you have a dead patient and a lawsuit.

Care Gap Alerts

Flagging patients overdue for screenings, vaccinations, or follow-ups based on clinical guidelines (USPSTF, HEDIS measures). The alert says "this patient is overdue for a colonoscopy per USPSTF guidelines." It doesn't diagnose colon cancer.

Why Yellow Requires Special Engineering

These applications need specific safeguards to stay out of the Red Zone:

- Clinician-in-the-loop design: The clinician must actively review and modify AI output, not just click "approve." Design for engagement, not rubber-stamping.

- Confidence scores: Every AI-generated suggestion should include a confidence indicator. Low-confidence outputs should be visually distinct and require explicit clinician acknowledgment.

- Audit trails: Log the AI input, output, model version, and the clinician's subsequent action (accepted, modified, rejected). This is your legal defense.

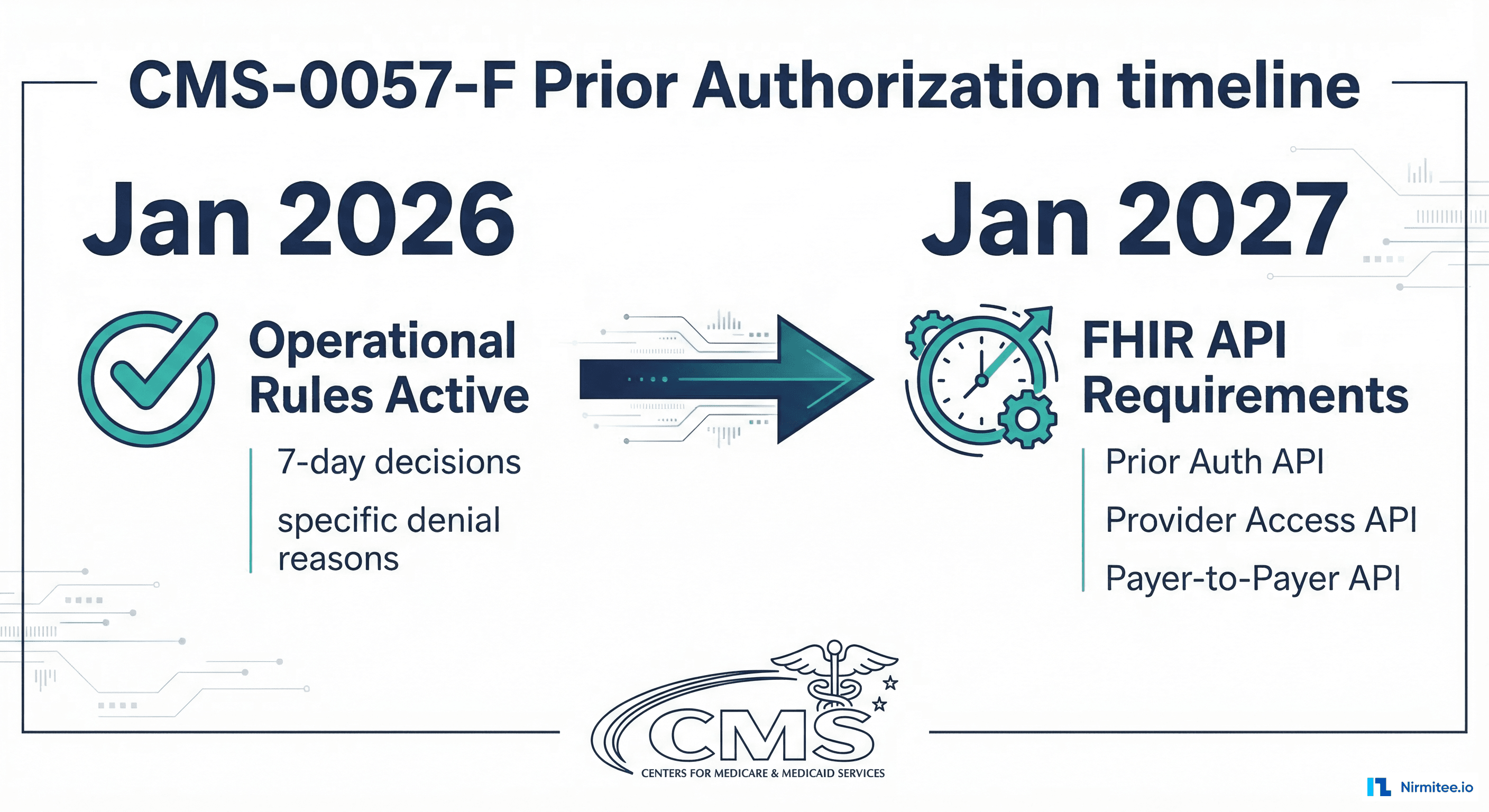

- Clear AI-generated labeling: Every piece of AI-generated content must be unambiguously labeled. ONC's HTI-1 final rule requires source-attribute transparency for Predictive Decision Support Interventions ("Predictive DSI") in certified health IT, with a compliance date of December 31, 2024.
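To make the confidence and labeling safeguards concrete, here is a minimal Python sketch of a sign-off gate. The `Suggestion` type, the `can_sign` function, and the 0.70 cutoff are all assumptions made for illustration; they are not taken from any regulation or product, and the sketch presumes your model emits a calibrated confidence score.

```python
from dataclasses import dataclass

# Assumed cutoff for "low confidence"; a real value should come from
# calibration data for the specific use case.
LOW_CONFIDENCE_THRESHOLD = 0.70


@dataclass
class Suggestion:
    text: str
    confidence: float          # assumed to be a calibrated score in [0, 1]
    ai_generated: bool = True  # carried with the content so the UI can label it

    @property
    def needs_acknowledgment(self) -> bool:
        """Low-confidence output requires an explicit clinician acknowledgment."""
        return self.confidence < LOW_CONFIDENCE_THRESHOLD


def can_sign(suggestion: Suggestion, reviewed: bool, acknowledged: bool) -> bool:
    """Allow sign-off only after review, plus an explicit acknowledgment
    when the suggestion was low-confidence."""
    if not reviewed:
        return False
    if suggestion.needs_acknowledgment and not acknowledged:
        return False
    return True
```

The point of the design is that the acknowledgment requirement is enforced in code rather than left to UI convention, so it also shows up in whatever you log.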

The Red Zone: FDA Approval Required

If your LLM output can directly change a patient's treatment without mandatory clinician review, you're building Software as a Medical Device (SaMD). Full stop.

Diagnostic Suggestions

"This dermoscopic image is consistent with melanoma (confidence: 0.89)." That's a diagnostic claim. It doesn't matter if a clinician sees it — the software is performing a medical device function. The FDA has been clear: software that analyzes medical images to detect, diagnose, or predict disease is a medical device.

Precedent: The FDA has cleared over 950 AI/ML-enabled medical devices as of Q1 2026, the majority in radiology and cardiology. If you're in this space, you're competing with companies that already have clearance.

Treatment Recommendations

"Based on this patient's genotype and tumor markers, consider pembrolizumab 200mg IV q3w." That's a treatment recommendation driven by patient-specific data. This is Type 2 CDS and requires FDA oversight.

Risk Scoring That Drives Actions

A sepsis risk score that automatically triggers a rapid response team. A cardiac risk score that auto-orders an ECG. If the AI output directly initiates a clinical action — even through an automated workflow — it's a device.

Autonomous Clinical Actions

Any output that modifies a treatment plan, adjusts medication dosing, or changes a care pathway without a clinician actively reviewing and approving the specific change. This includes "smart" order sets where the AI selects orders based on its clinical assessment.
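The contrast between an autonomous action and a clinician-approved one can be sketched in code. In this hypothetical Python example, an AI-proposed order cannot execute until a named clinician approves that specific order. `ProposedOrder`, `approve`, and `execute` are illustrative names, not a real EHR API, and an approval gate like this does not by itself settle whether the software is a device; that remains a regulatory question.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class OrderStatus(Enum):
    PROPOSED = "proposed"
    APPROVED = "approved"
    REJECTED = "rejected"


@dataclass
class ProposedOrder:
    description: str
    status: OrderStatus = OrderStatus.PROPOSED
    approved_by: Optional[str] = None


def approve(order: ProposedOrder, clinician_id: str) -> ProposedOrder:
    """Record that a specific clinician approved this specific order."""
    order.status = OrderStatus.APPROVED
    order.approved_by = clinician_id
    return order


def execute(order: ProposedOrder) -> str:
    """Refuse to act on anything a clinician has not explicitly approved."""
    if order.status is not OrderStatus.APPROVED or not order.approved_by:
        raise PermissionError("order requires explicit clinician approval")
    return f"executed: {order.description} (approved by {order.approved_by})"
```

A workflow that calls `execute` directly from a risk score, with no `approve` step in between, is the pattern this section describes as a device function.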

Regulatory Pathway

You'll need a 510(k) (if a predicate device exists) or De Novo classification (for novel devices without a predicate). Budget $500K–$2M and 12–18 months for a 510(k), or $1M–$5M and 18–30 months for De Novo.

The FDA's January 2025 draft guidance on AI-Enabled Device Software Functions takes a Total Product Lifecycle (TPLC) approach, recognizing that AI/ML models change over time. Within that approach, a Predetermined Change Control Plan (PCCP) describes anticipated model modifications and the methodology for validating them, so that each planned update does not require a new submission.

This is actually good news for LLM-based devices: you can plan for model updates (fine-tuning, prompt changes, even base model swaps) as long as you define the validation framework upfront.

The Legal Landmines

Regulatory compliance isn't your only concern. Civil liability, international regulation, and data protection law create overlapping minefields.

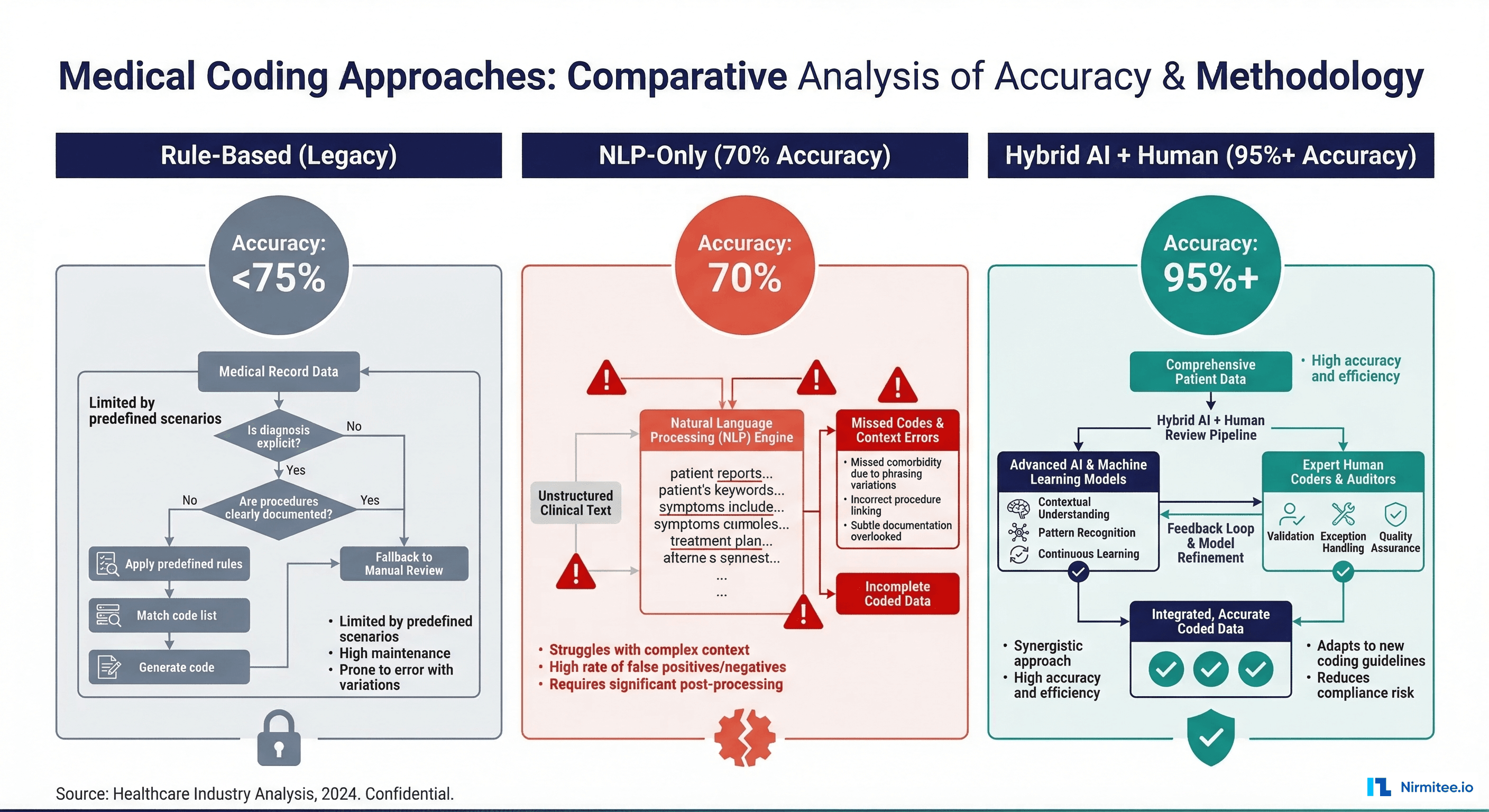

Hallucinations in Clinical Context

LLMs hallucinate. In a consumer chatbot, a hallucination is embarrassing. In a clinical context, a hallucination can be fatal. If your LLM suggests a drug interaction that doesn't exist (or misses one that does), and a patient is harmed, you're facing product liability claims.

Real risk: A 2024 study in Nature Medicine found that GPT-4 produced clinically inaccurate medication information in 35% of tested drug interaction scenarios. Not 3.5% — thirty-five percent.

Malpractice Liability

Who's liable when an AI-generated clinical note contains an error that leads to patient harm? The clinician who signed it? The AI vendor who generated it? The health system that deployed it?

Current case law is evolving, but the trend is toward shared liability. The clinician has a duty to review (and catch errors). The vendor has a duty to provide accurate, validated tools. The health system has a duty to implement appropriate safeguards. Your contracts need to reflect this reality — vague indemnification clauses won't survive litigation.

EU AI Act: High-Risk Classification

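The EU AI Act entered into force in August 2024, with obligations phasing in through August 2027. Much healthcare AI lands in the high-risk tier: AI that is a medical device, or a safety component of one, and needs third-party conformity assessment under the MDR or IVDR is high-risk under Article 6(1) and Annex I, and Annex III separately lists uses such as emergency patient triage. Requirements include: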

- Conformity assessment before market placement

- Risk management system throughout the AI lifecycle

- Data governance — training data must be relevant, representative, and free from bias

- Technical documentation — model architecture, training methodology, performance metrics

- Human oversight — designed to allow human intervention and override

- Accuracy, robustness, and cybersecurity requirements

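Non-compliance penalties: up to 15 million EUR or 3% of global annual turnover for breaching high-risk obligations, and up to 35 million EUR or 7% for prohibited practices, whichever is higher in each case.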

India's DPDP Act and Patient Data

The Digital Personal Data Protection Act (DPDP) 2023 has no separate "sensitive" category: health data is personal data like any other, and processing it generally requires consent that is free, specific, informed, and tied to a stated purpose. If you're training or fine-tuning LLMs on Indian patient data, you need consent for that specific purpose. "Consent for treatment" does not extend to "consent for AI training." Cross-border transfers add another layer: the government can restrict transfers to notified countries, so you can't send patient data to a US-based LLM API without checking DPDP's current transfer requirements.

Engineering Safeguards You Need

Regardless of which zone you're operating in, these engineering patterns are non-negotiable for healthcare LLM deployments.

Comprehensive Audit Trail

Log every LLM inference with full context:

```json
{
  "inference_id": "uuid-v4",
  "timestamp": "2026-03-13T10:30:00Z",
  "model": "gpt-4o-2026-01",
  "model_version": "ft:gpt-4o:org:healthcare-v3:abc123",
  "input_tokens": 2847,
  "output_tokens": 512,
  "confidence_score": 0.87,
  "latency_ms": 1420,
  "clinician_id": "dr-smith-1234",
  "clinician_action": "modified",
  "patient_context": "encounter-789",
  "phi_sent_external": false
}
```

This isn't optional logging. This is your legal defense, your debugging tool, and your compliance evidence.
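One way to produce a record with these fields is sketched below in Python. The function names `build_audit_record` and `append_record` are assumptions made for illustration, and the append-only JSON Lines file stands in for whatever tamper-evident store you actually use.

```python
import json
import uuid
from datetime import datetime, timezone

# The three clinician actions named in this guide.
ALLOWED_ACTIONS = {"accepted", "modified", "rejected"}


def build_audit_record(*, model, model_version, input_tokens, output_tokens,
                       confidence_score, latency_ms, clinician_id,
                       clinician_action, patient_context, phi_sent_external):
    """Build one audit record; reject unknown clinician actions early."""
    if clinician_action not in ALLOWED_ACTIONS:
        raise ValueError(f"unknown clinician_action: {clinician_action!r}")
    return {
        "inference_id": str(uuid.uuid4()),
        # UTC timestamp in ISO 8601 form (ends in +00:00 rather than Z).
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "model": model,
        "model_version": model_version,
        "input_tokens": input_tokens,
        "output_tokens": output_tokens,
        "confidence_score": confidence_score,
        "latency_ms": latency_ms,
        "clinician_id": clinician_id,
        "clinician_action": clinician_action,
        "patient_context": patient_context,
        "phi_sent_external": bool(phi_sent_external),
    }


def append_record(path, record):
    """Append the record as one JSON line; existing lines are never rewritten."""
    with open(path, "a", encoding="utf-8") as f:
        f.write(json.dumps(record) + "\n")
```

Keyword-only arguments make it harder to swap two fields by accident, which matters when the log may later be read as evidence.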

Hallucination Detection

Cross-reference LLM outputs against structured data sources. If the LLM says "patient is on metformin," verify against the medication list in the EHR. If the LLM generates a dosage, validate against drug databases (RxNorm, FDB). Flag discrepancies for clinician review.

Build a grounding layer — a retrieval-augmented generation (RAG) pipeline that anchors LLM outputs to verified clinical data. Measure grounding rates. Set minimum thresholds. Alert when grounding drops below threshold.
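A minimal Python sketch of the medication cross-check and the grounding-rate alert follows. It assumes an upstream step has already extracted medication names from the LLM output, and it compares plain lowercase strings for brevity; a production system would normalize to coded concepts such as RxNorm identifiers. The function names and the 0.95 threshold are illustrative assumptions.

```python
# Assumed minimum share of LLM claims that must match verified data.
MIN_GROUNDING_RATE = 0.95


def ungrounded_medications(claimed, ehr_medications):
    """Return the claimed medications that do not appear on the EHR list."""
    known = {name.strip().lower() for name in ehr_medications}
    return [name for name in claimed if name.strip().lower() not in known]


def grounding_rate(notes):
    """Share of claims across all notes that are backed by EHR data.

    `notes` is a list of (claimed, ehr_medications) pairs.
    """
    total = 0
    grounded = 0
    for claimed, ehr_medications in notes:
        missing = ungrounded_medications(claimed, ehr_medications)
        total += len(claimed)
        grounded += len(claimed) - len(missing)
    # With no claims at all there is nothing ungrounded to report.
    return 1.0 if total == 0 else grounded / total


def should_alert(rate, minimum=MIN_GROUNDING_RATE):
    """True when the aggregate grounding rate falls below the threshold."""
    return rate < minimum
```

Anything returned by `ungrounded_medications` is a candidate for clinician review on that note, while `grounding_rate` is the aggregate metric to monitor over time.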

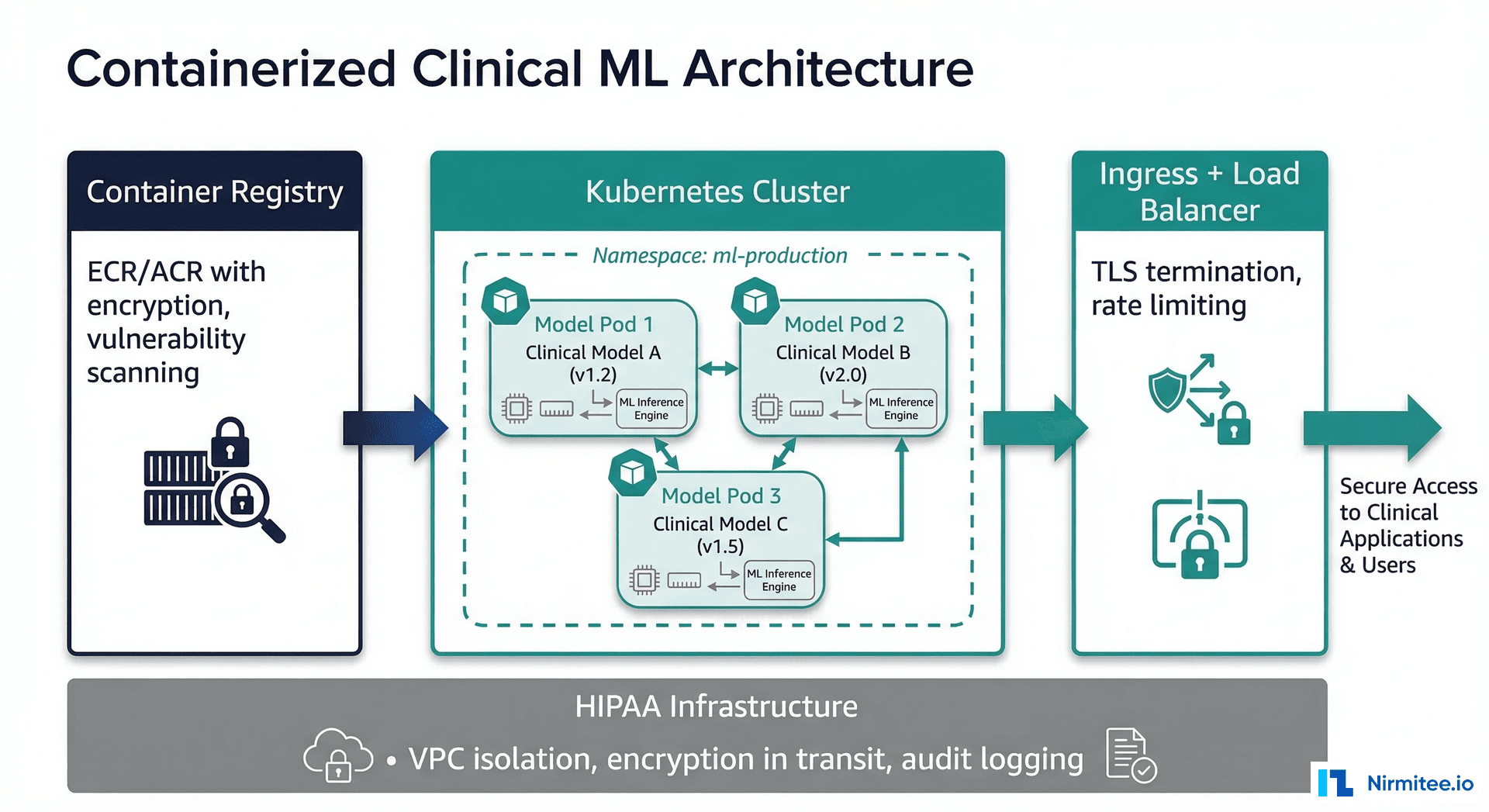

PHI Handling

Do not send Protected Health Information to external LLM APIs without a Business Associate Agreement (BAA). Period.

- BAA-covered options: Azure OpenAI Service, Google Cloud Vertex AI, AWS Bedrock — all offer BAA-covered LLM endpoints

- On-premises options: Llama 3, Mistral, and other open-weight models running on your infrastructure. No BAA with a model vendor is needed because PHI never leaves your environment

- Hybrid approach: De-identify data before sending to external APIs, re-identify on return. Use structured de-identification (Safe Harbor or Expert Determination methods per HIPAA)
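The hybrid approach can be sketched as a token swap around the external call. This Python example replaces identifiers you already know from structured fields (name, MRN) with placeholder tokens and restores them afterward. It is an illustration only: it is not a Safe Harbor implementation, it will miss identifiers that appear only in free text, and the function names and token format are assumptions.

```python
def deidentify(text, identifiers):
    """Replace known identifier strings with placeholder tokens.

    Returns the scrubbed text and the token-to-value mapping, which
    must stay inside your environment.
    """
    mapping = {}
    # Replace longer values first so "Jane Doe" is handled before "Jane".
    ordered = sorted(identifiers, key=len, reverse=True)
    for i, value in enumerate(ordered):
        token = f"[[ID_{i}]]"
        if value and value in text:
            text = text.replace(value, token)
            mapping[token] = value
    return text, mapping


def reidentify(text, mapping):
    """Restore the original values in text returned by the external API."""
    for token, value in mapping.items():
        text = text.replace(token, value)
    return text
```

For example, `deidentify("Jane Doe (MRN 12345678) reports improved glucose control.", ["Jane Doe", "12345678"])` yields text with both values replaced by tokens, and `reidentify` on that text restores the original sentence.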

Model Versioning and Rollback

Treat model deployments like software deployments. Tag every model version (including prompt versions). Maintain rollback capability. Run shadow deployments before production cutover. Track performance metrics per version.

When OpenAI or Anthropic updates a base model, your fine-tuned model's behavior can change. Your validation suite needs to catch regressions before they reach patients.
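A regression gate for model updates might look like the following Python sketch. Here `generate` is a hypothetical callable wrapping whichever model version is under test, and each case lists phrases the output must contain. Phrase matching is a deliberately crude stand-in for a real clinical evaluation, and the 0.01 tolerance is an assumed value.

```python
def evaluate(generate, cases):
    """Fraction of cases whose output contains every required phrase."""
    if not cases:
        raise ValueError("validation suite is empty")
    passed = 0
    for case in cases:
        output = generate(case["prompt"]).lower()
        if all(phrase.lower() in output for phrase in case["must_include"]):
            passed += 1
    return passed / len(cases)


def safe_to_promote(baseline_score, candidate_score, max_drop=0.01):
    """Allow promotion only if the candidate stays within the tolerated drop."""
    return candidate_score >= baseline_score - max_drop
```

Running `evaluate` against both the current and the candidate version on the same cases gives the two scores that `safe_to_promote` compares before any production cutover.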

Human-in-the-Loop Design Patterns

Design for active clinician engagement, not passive approval:

- Require modification: Force clinicians to edit at least one field before signing AI-generated notes. This breaks automation bias.

- Differential display: Show AI-generated content in a visually distinct format (different background color, font, or frame).

- Confidence-gated display: Only show AI suggestions above a confidence threshold. Below threshold, show "insufficient confidence" rather than a potentially wrong answer.

- Periodic calibration: Randomly present clinicians with AI outputs that contain deliberate errors. Track detection rates. If detection drops below 80%, your human-in-the-loop is failing.
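Two of these patterns reduce to small checks, sketched here in Python. The 0.80 detection floor comes from the text above; the 0.75 display threshold, the label format, and the function names are assumptions for illustration.

```python
def render_suggestion(text, confidence, threshold=0.75):
    """Confidence-gated display: hide suggestions below the threshold."""
    if confidence < threshold:
        return "Insufficient confidence"
    return f"[AI-generated] {text}"


def detection_rate(checks):
    """Share of seeded errors that clinicians caught.

    `checks` is a list of booleans, True when the error was detected.
    """
    if not checks:
        raise ValueError("no calibration checks recorded")
    return sum(checks) / len(checks)


def oversight_failing(checks, minimum=0.80):
    """True when the detection rate has dropped below the floor."""
    return detection_rate(checks) < minimum
```

Tracking `detection_rate` per clinician group and per model version helps separate a reviewer problem from a model problem.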

The Practical Path Forward

Here's the strategy we recommend to healthtech companies building with LLMs:

Start in the Green Zone

Deploy summarization, documentation assistance, or administrative automation. Prove ROI. Build clinician trust. Collect real-world performance data. This is your foundation.

Green Zone deployments are also your training ground for engineering practices — audit trails, monitoring, PHI handling, model versioning. Get these right on low-risk applications before you need them for high-risk ones.

Move to Yellow With Safeguards

Once you've proven your engineering maturity and built clinician trust, expand into Yellow Zone applications. Implement every safeguard described above. Hire a regulatory consultant — not to file submissions, but to review your architecture for regulatory boundary violations.

Budget $50K–$100K for a regulatory gap analysis before shipping Yellow Zone features. It's cheap insurance against a $10M+ enforcement action.

Red Zone Only With Strategy and Capital

Don't stumble into the Red Zone. Enter it deliberately with regulatory strategy, clinical validation plans, and capital to fund the 12–30 month clearance timeline. Partner with a regulatory affairs firm experienced in AI/ML SaMD submissions.

Market signal: 62% of digital health funding in H1 2025 went to AI-focused companies. Investors understand the FDA timeline. They're funding companies that have a credible regulatory strategy, not companies that are trying to avoid regulation.

The Bottom Line

LLMs are transforming healthcare — that's not hype, it's math. The efficiency gains are real, the clinical value is demonstrable, and the market is massive. But the margin for error is measured in patient outcomes, not user churn.

Know your zone. Engineer for safety. Build for trust. The companies that get this right won't just avoid lawsuits — they'll build the next generation of healthcare infrastructure.

If you're building healthcare AI and need engineering or compliance guidance, reach out to our team. We've helped healthtech startups navigate from Green Zone MVPs to FDA-cleared products.
