AI Denial Prediction & Auto-Appeals: How ML Pre-Submission Scoring and Automated Appeals Recovered $1.06M Annually

Executive Summary

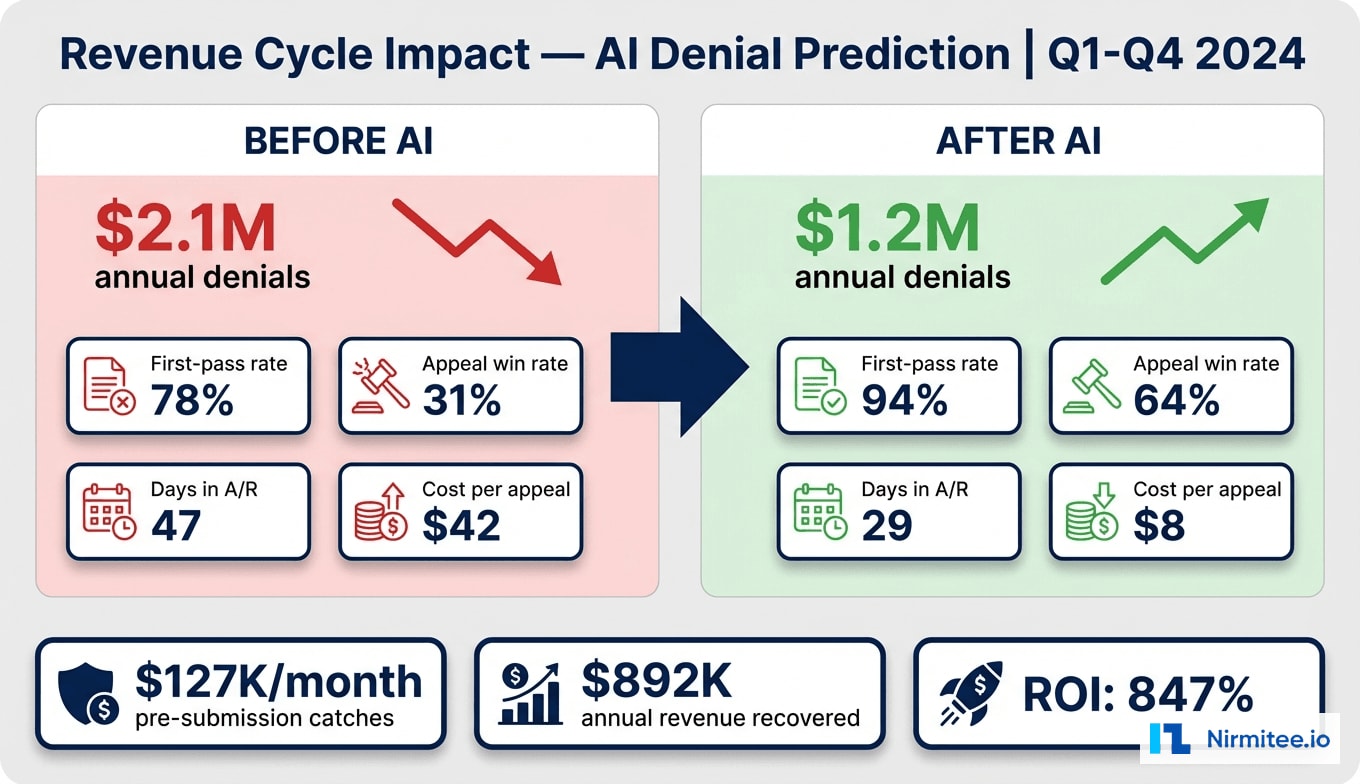

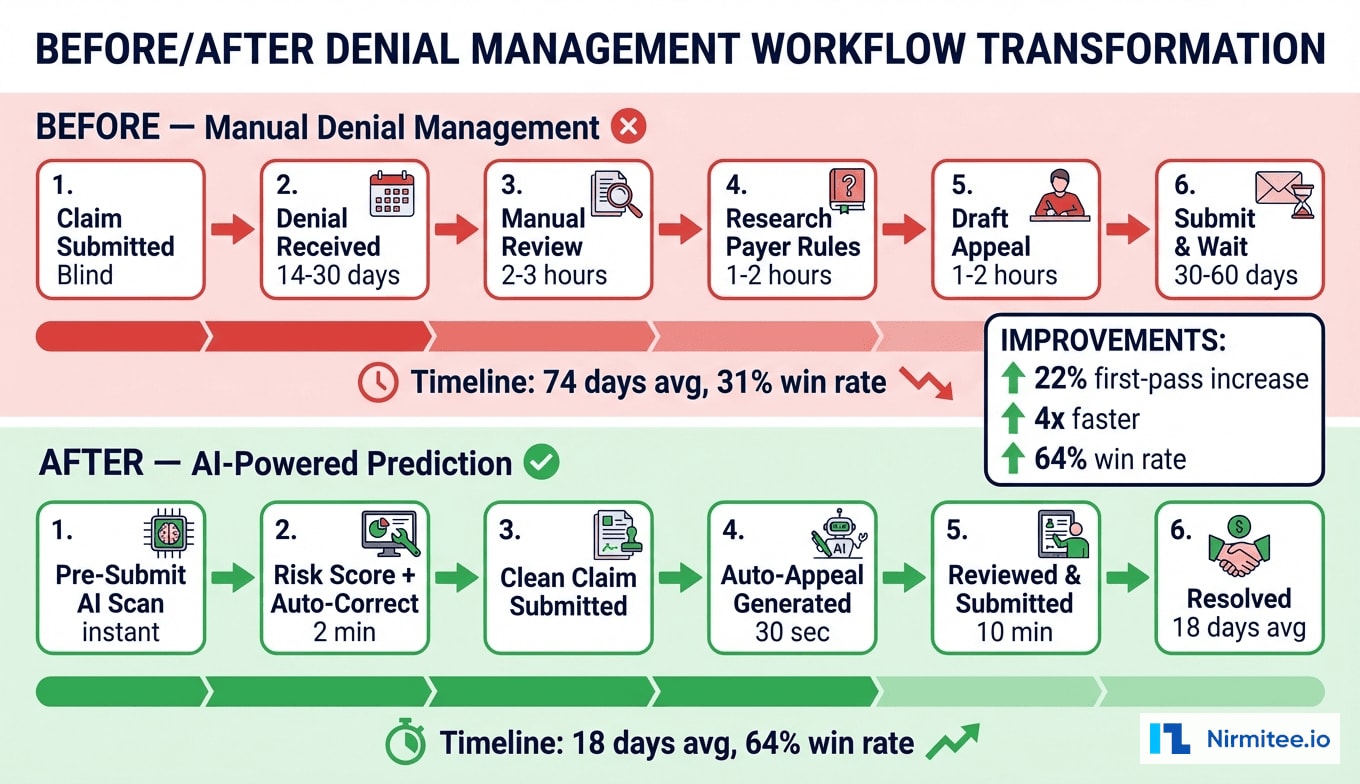

A 340-bed regional health system was losing $2.1 million annually to claim denials, with a first-pass acceptance rate stuck at 78% and appeal success rates below 31%. Their revenue cycle team of 14 specialists spent an average of 5.2 hours per appeal — researching payer-specific rules, compiling clinical documentation, and drafting letters that more often lost than won. With 2,400+ denials per month flowing through a manual, reactive process, the financial and operational strain was unsustainable.

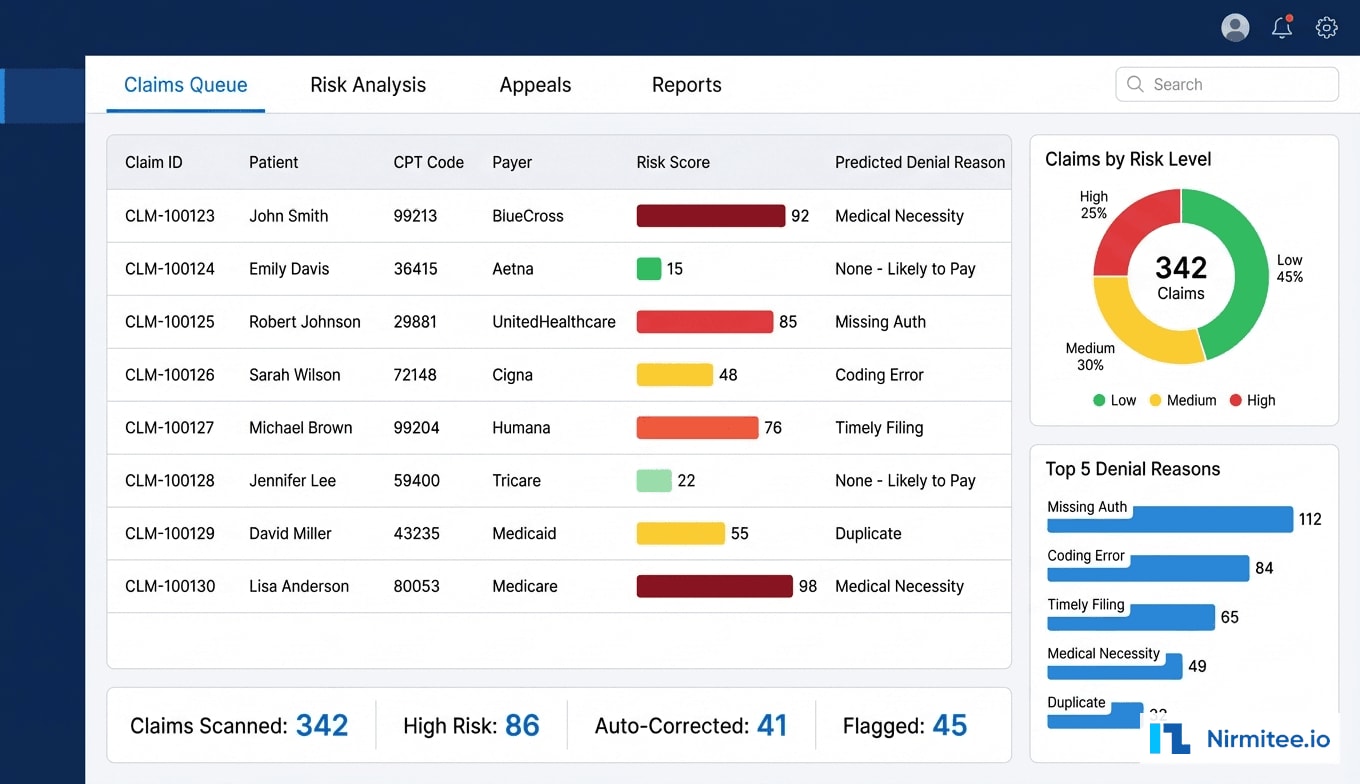

We built an AI-powered denial prediction and auto-appeals platform that fundamentally transformed their revenue cycle from reactive to proactive. The system analyzes every claim before submission, assigns a denial risk score based on 847,000 historical claims, auto-corrects common issues, and generates payer-specific appeal letters for claims that do get denied. The ML ensemble model combines Random Forest and XGBoost algorithms trained on CPT/ICD pair history, payer behavior patterns, provider coding tendencies, and authorization status signals.

Within 6 months of deployment, the health system achieved a 22% improvement in first-pass acceptance rate (78% to 94%), $127K per month in pre-submission catches that would have been denied, and a 64% auto-appeal win rate — more than double the previous manual rate. Total annual revenue recovery exceeded $1.06 million against a $112K implementation investment, delivering an 847% ROI.

The Problem: Reactive Denial Management Hemorrhaging Revenue

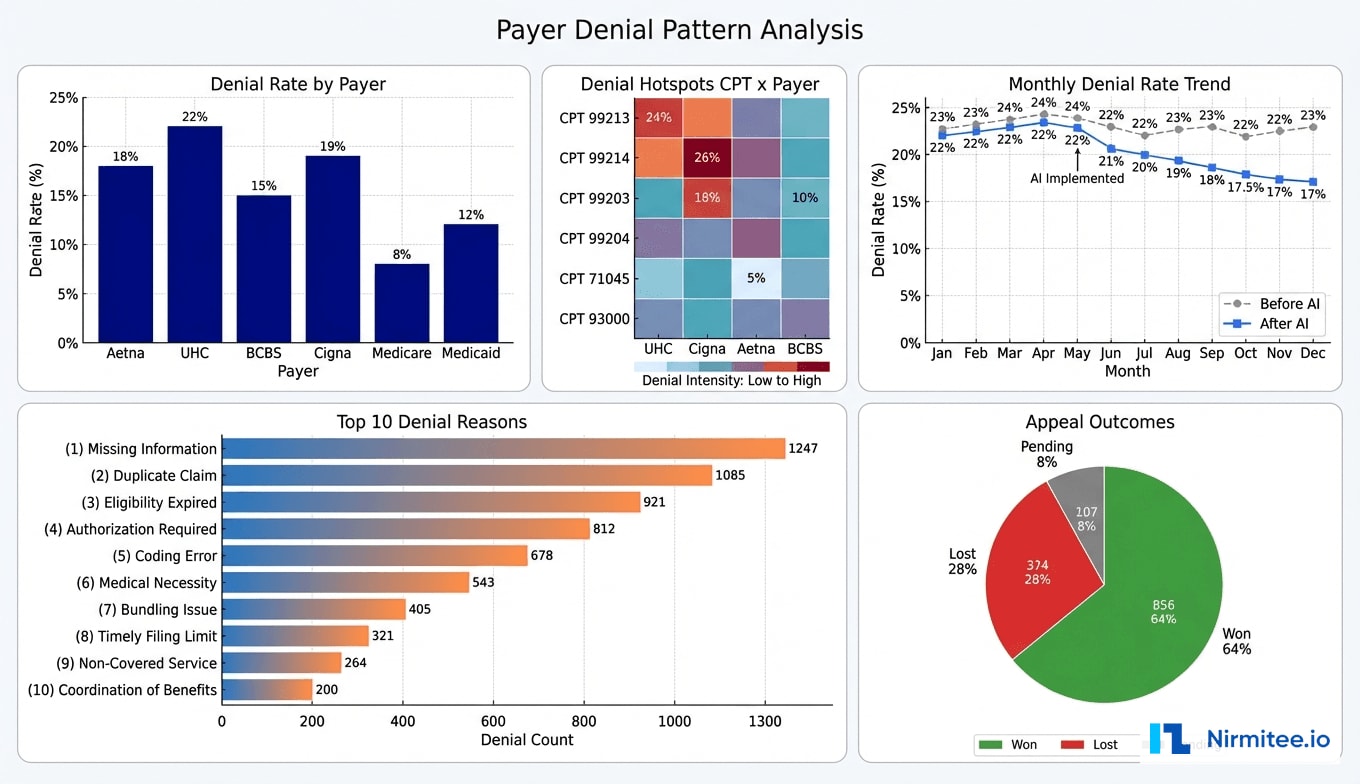

The health system's revenue cycle challenges were multi-layered and deeply entrenched. Their denial rate had been climbing steadily over three years — from 18% to 24% — driven by increasingly complex payer rules, prior authorization requirements, and coding specificity demands that changed faster than their team could track.

Scale of the Denial Problem

Monthly claim volume averaged 11,200 across all payers. At a 22% denial rate, approximately 2,464 claims were denied each month, representing $3.8 million in monthly charges at risk. The breakdown by payer revealed stark differences:

- UnitedHealthcare: 26% denial rate — the most aggressive on prior authorization enforcement

- Aetna: 21% — frequently denied for medical necessity documentation gaps

- BCBS: 18% — primary issues with timely filing and coding specificity

- Medicare: 11% — lower rate but higher per-claim impact due to audit risk

- Medicaid: 15% — eligibility verification gaps driving most denials

The Manual Appeal Bottleneck

The revenue cycle team could realistically work 40-50 appeals per day across 14 staff members. With 2,464 new denials monthly plus a backlog of 800+ pending appeals, they were forced to triage — only appealing claims above $500 and those with perceived high win probability. This meant approximately 35% of denied claims were written off without any appeal attempt, representing $380K in monthly revenue simply abandoned.

Each manual appeal consumed 5.2 hours on average: 1.5 hours identifying the correct denial reason and payer-specific appeal requirements, 2 hours gathering and reviewing clinical documentation, 1.2 hours drafting the appeal letter, and 0.5 hours for submission and tracking. At an average staff cost of $38/hour, each appeal cost $198 to process — and only 31% succeeded.

Root Causes of High Denial Rates

Our analysis of 18 months of denial data revealed that 68% of denials were preventable at the point of submission. The top preventable categories:

| Denial Category | % of Total | Preventable? | Avg. Claim Value |

|---|---|---|---|

| Missing/Invalid Prior Authorization | 23% | Yes — auth check at submission | $1,847 |

| Medical Necessity Documentation | 19% | Partially — NLP analysis | $2,134 |

| Coding Errors (CPT/ICD mismatch) | 16% | Yes — rule engine validation | $892 |

| Timely Filing | 11% | Yes — deadline tracking | $1,456 |

| Duplicate Claims | 8% | Yes — dedup check | $634 |

| Eligibility Issues | 7% | Yes — real-time 270/271 | $1,223 |

| Bundling/Unbundling | 6% | Yes — CCI edit check | $1,567 |

| Other/Non-Preventable | 10% | No | $945 |

Solution: AI-Powered Denial Prediction and Auto-Appeals Platform

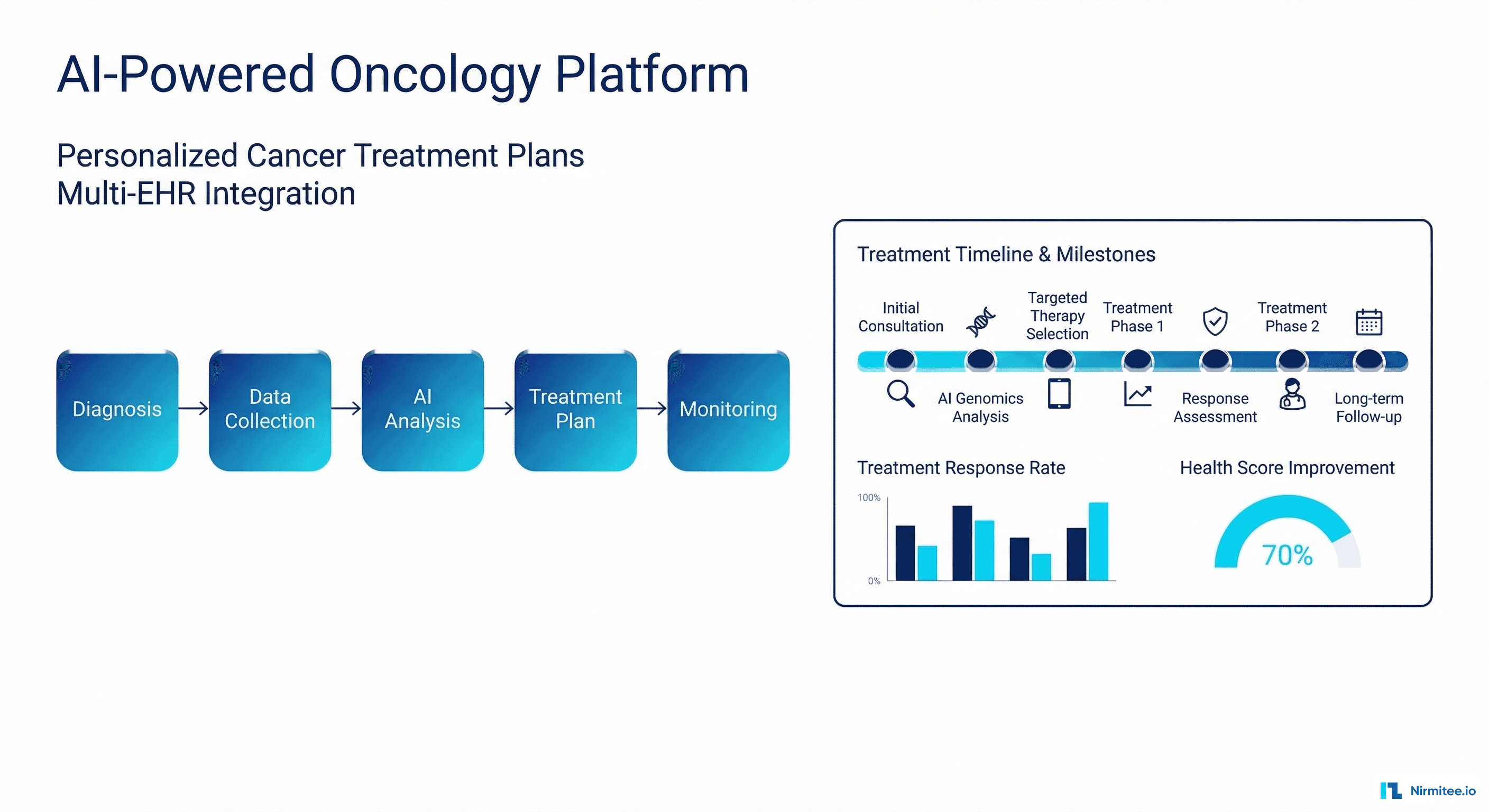

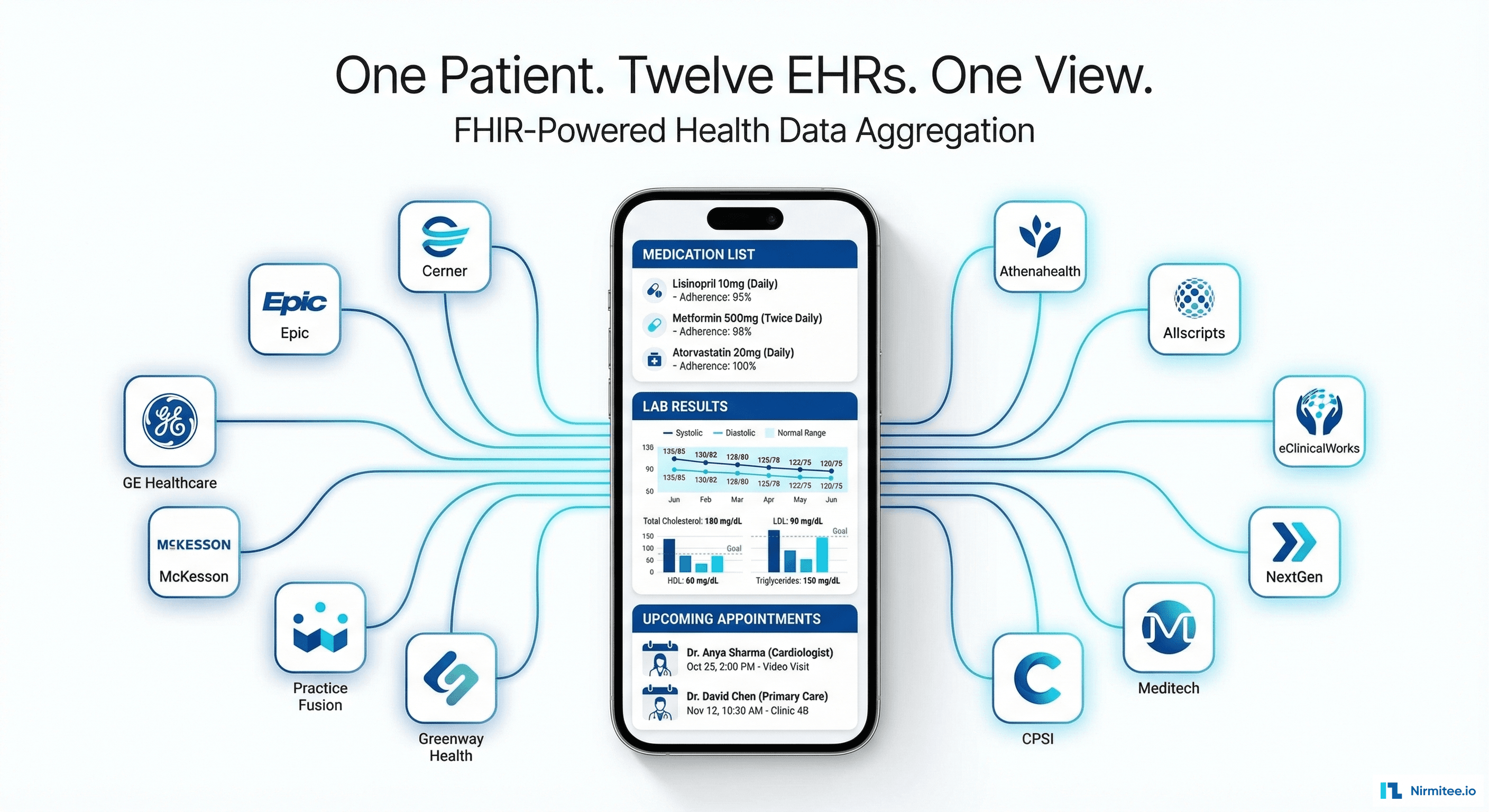

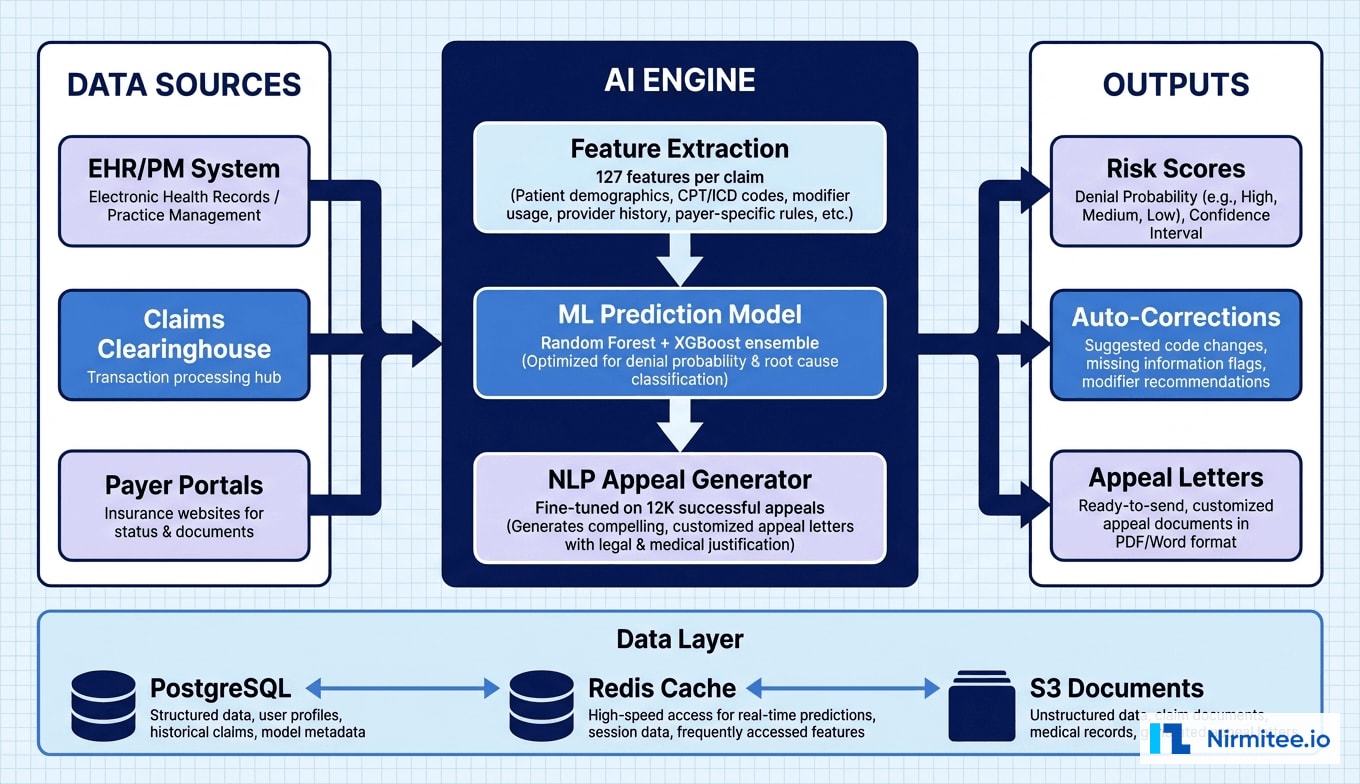

We designed and built a comprehensive AI platform with three integrated modules: Pre-Submission Risk Scoring, Auto-Correction Engine, and Intelligent Appeal Generation. Each module operates on real-time data from the EHR, practice management system, and historical denial records.

Module 1: Pre-Submission Risk Scoring

Every claim passes through the risk scoring engine before submission to the clearinghouse. The ML model evaluates 127 features per claim, organized into five feature categories:

- Claim-level features (32): CPT/ICD code pairs, modifier usage, place of service, units, billed amount, rendering provider specialty

- Payer-specific features (28): Historical denial rate for this payer + CPT combination, payer rule changes in last 90 days, contract-specific carve-outs, authorization requirements by procedure

- Provider features (24): Provider historical denial rate, coding pattern deviations, documentation completeness scores, specialty-specific benchmarks

- Patient features (22): Insurance plan type, eligibility status, benefit coverage verification, prior claims history, coordination of benefits status

- Temporal features (21): Days since date of service, day of week, end-of-month surge patterns, payer processing delays, holiday adjacency

The model outputs a risk score from 0-100 along with the top predicted denial reasons and their individual probabilities. Claims scoring above 60 are flagged for review, and those above 80 are held from submission until corrections are verified. The score distribution in production showed approximately 45% of claims scoring below 30 (low risk), 30% between 30-60 (medium), and 25% above 60 (high risk requiring attention).

Module 2: Auto-Correction Engine

For high-risk claims where the denial reason is correctable, the auto-correction engine applies fixes without manual intervention:

- Authorization attachment: Automatically matches and attaches prior auth numbers from the auth tracking database when the risk flag indicates a missing auth

- Modifier correction: Applies correct modifiers (25, 59, 76, etc.) based on CPT/payer rules when modifier errors are predicted

- CCI edit resolution: Detects bundling conflicts using CCI edit tables and suggests unbundling or correct modifier application

- Documentation sufficiency: NLP analysis of clinical notes to verify medical necessity documentation meets payer-specific requirements — flags insufficient documentation for provider review

- Eligibility verification: Real-time 270/271 eligibility check before submission, catching expired coverage or plan changes

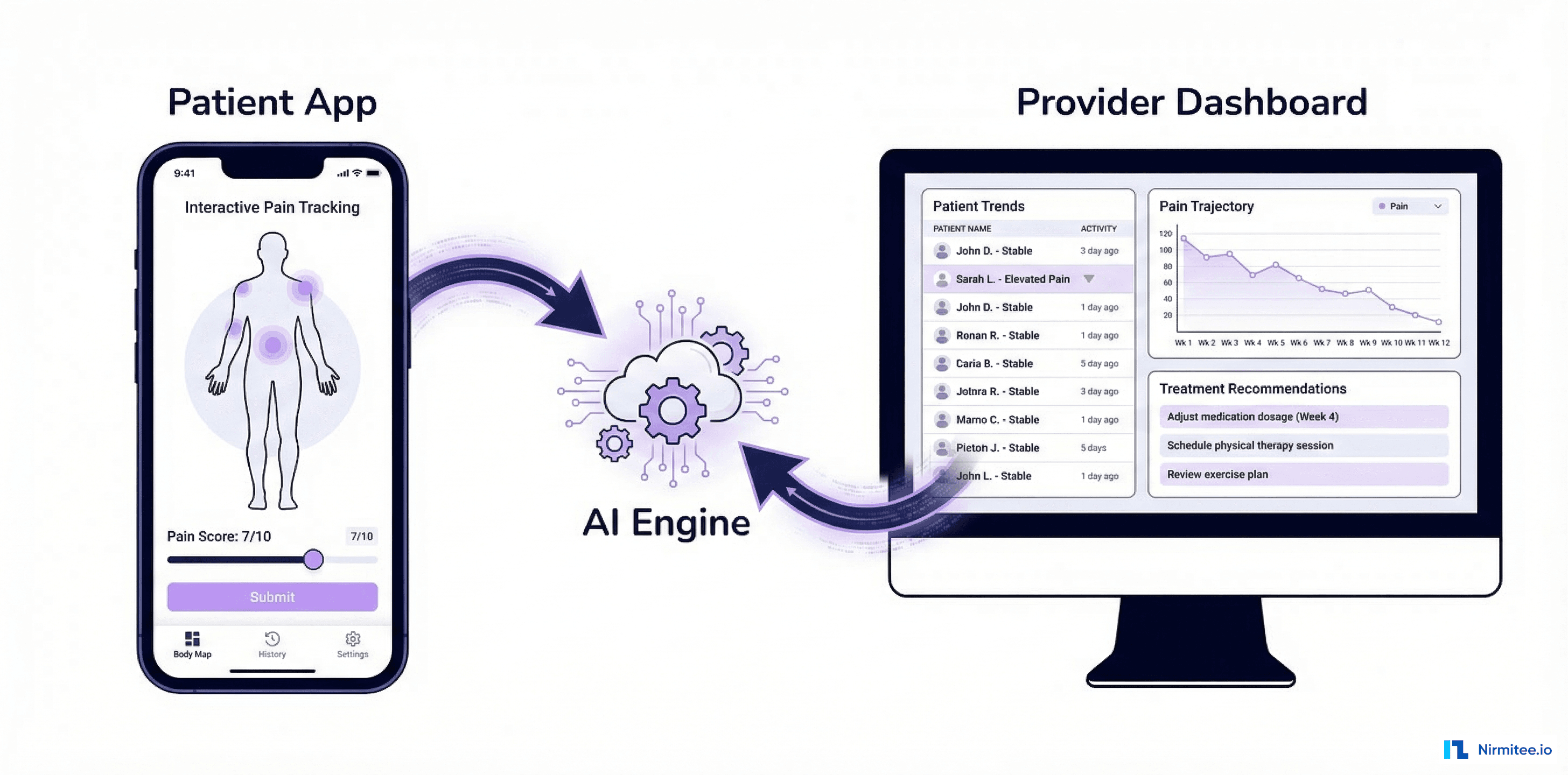

The auto-correction engine resolved 47% of high-risk flags without human intervention. The remaining 53% were escalated to the coding team with specific, actionable instructions — reducing their review time from 45 minutes to 8 minutes per claim.

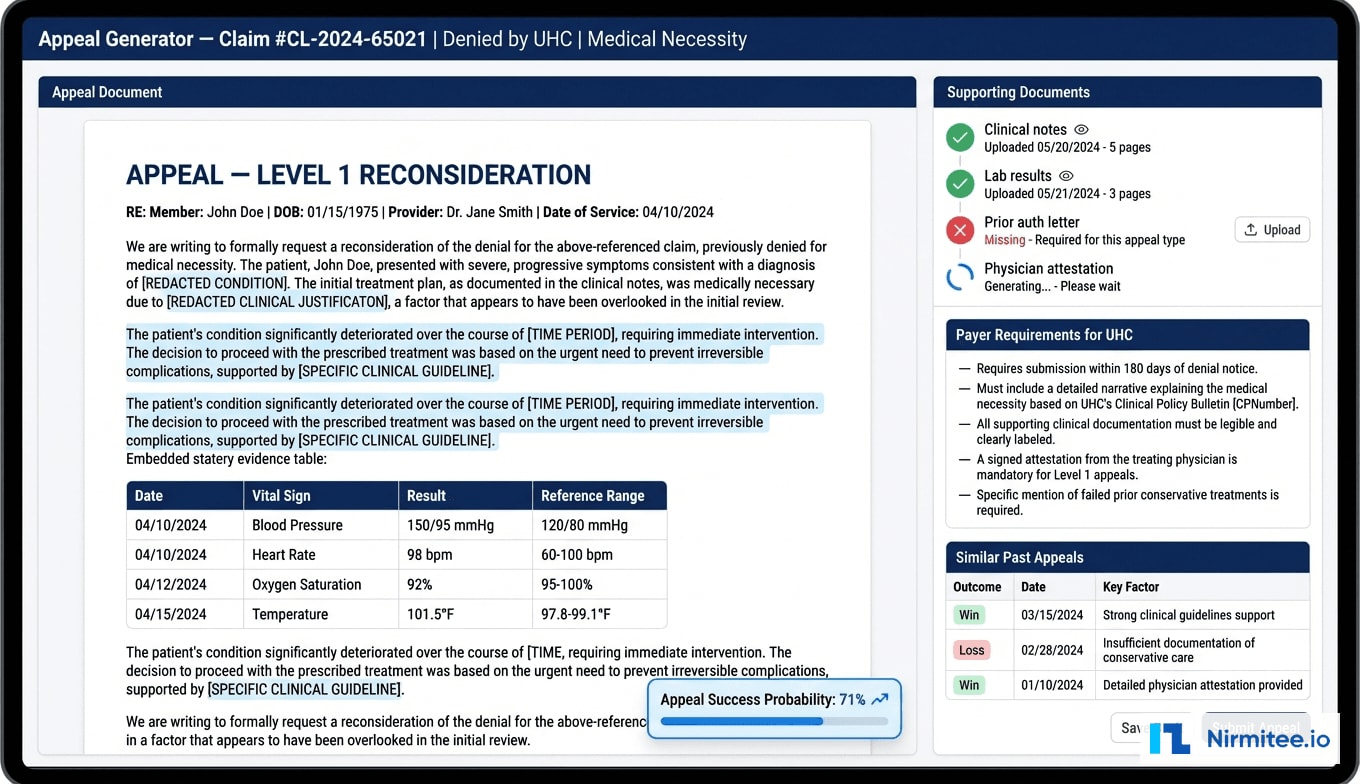

Module 3: Intelligent Appeal Generation

For claims denied despite pre-submission screening, the auto-appeal module generates payer-specific appeal letters within 30 seconds using a fine-tuned language model trained on 12,000 successful appeal letters.

The appeal generator performs four key functions:

- Denial reason classification: Parses the ERA/835 remittance advice to extract CARC/RARC codes, maps to payer appeal requirements, identifies appropriate appeal level

- Clinical evidence compilation: Pulls relevant documentation from the EHR — progress notes, lab results, imaging reports, medication history — selecting evidence supporting medical necessity for the specific CPT/ICD combination

- Letter generation: Creates structured appeal letters following payer-required format, incorporating clinical evidence citations, clinical guidelines (ACR Appropriateness Criteria, AMA CPT guidelines), and peer-reviewed literature

- Success probability estimation: Predicts appeal success based on denial reason, payer, appeal level, and documentation quality — enabling prioritization of high-value, high-probability appeals

Architecture and Technical Implementation

Technology Stack

| Component | Technology | Purpose |

|---|---|---|

| ML Pipeline | Python, scikit-learn, XGBoost | Denial prediction ensemble model |

| Feature Store | Redis + PostgreSQL | Real-time feature serving, historical features |

| NLP Engine | GPT-4 fine-tuned, spaCy | Appeal generation, clinical note analysis |

| API Layer | FastAPI, Python 3.11 | REST API for EHR integration |

| EHR Integration | FHIR R4, HL7v2 ADT/DFT | Clinical data extraction, claim triggers |

| Clearinghouse | Availity API, Change Healthcare | Claim submission, ERA receipt |

| Document Store | AWS S3 + Elasticsearch | Appeal docs, clinical evidence indexing |

| Message Queue | RabbitMQ | Async processing for batch claims |

| Monitoring | Datadog, custom dashboards | Model drift detection, performance |

| Infrastructure | AWS ECS, RDS, ElastiCache | HIPAA-compliant cloud hosting |

ML Model Training and Validation

The denial prediction model was trained on 847,000 historical claims spanning 3 years, with a 70/15/15 train/validation/test split. Key model metrics on the held-out test set:

- AUC-ROC: 0.91 — strong discrimination between denied and accepted claims

- Precision at 80% recall: 0.76 — when the model flags high-risk, it is correct 76% of the time

- F1 Score: 0.82 — balanced performance across precision and recall

- Calibration: Platt-scaled probabilities within 3% of actual denial rates across all deciles

The ensemble combines Random Forest (robustness, interpretability) with XGBoost (complex feature interactions). Top 5 predictors: (1) payer-specific CPT denial history, (2) authorization status, (3) provider coding deviation, (4) documentation completeness, (5) timely filing proximity.

Data Pipeline and Integration

The system integrates with Epic EHR via FHIR R4 APIs and HL7v2 interfaces for real-time claim event triggers:

- Real-time scoring: Individual claims scored within 200ms via FastAPI, triggered by PM system pre-submission workflow. Redis-cached features enable sub-second response.

- Batch processing: End-of-day batch runs score pending claims, generate reports, trigger auto-corrections. RabbitMQ manages the queue, processing 2,000+ claims in under 15 minutes.

Results: Measurable Revenue Cycle Transformation

Key Performance Metrics

| Metric | Before AI | After AI (6-Month) | Improvement |

|---|---|---|---|

| First-Pass Acceptance Rate | 78% | 94% | +22% relative |

| Monthly Pre-Submission Catches | N/A | $127K | New capability |

| Appeal Win Rate | 31% | 64% | +106% relative |

| Appeal Processing Time | 5.2 hours | 22 minutes | -93% |

| Cost per Appeal | $198 | $18 | -91% |

| Days in A/R | 47 days | 29 days | -38% |

| Annual Denial Write-Offs | $2.1M | $840K | -60% |

| Revenue via Appeals | $312K/year | $892K/year | +186% |

| Claims Appealed | 65% | 94% | +45% |

Financial Impact

- Pre-submission catches: $127K/month ($1.52M annualized) in claims corrected before submission

- Improved appeal recovery: $892K/year recovered through auto-appeals, up from $312K manually

- Reduced A/R carrying cost: 18-day reduction freed approximately $1.2M in cash flow

Against $112K implementation cost, the platform delivered an 847% first-year ROI.

Operational Impact

The 14-person team was reallocated: 4 specialists on complex appeals (external review, high-value surgical cases), 3 managing the AI system (model monitoring, exception handling, payer rule updates), and 7 redeployed to charge capture improvement and underpayment recovery — areas previously neglected due to the denial management burden.

Implementation Timeline

| Phase | Duration | Key Activities | Milestone |

|---|---|---|---|

| Discovery and Data Analysis | Weeks 1-3 | Denial data audit, payer pattern analysis, EHR assessment | Root cause analysis report |

| ML Model Development | Weeks 4-9 | Feature engineering, model training, historical validation | Model AUC above 0.88 |

| Auto-Correction Engine | Weeks 7-11 | Payer rule engine, CCI edits, auth matching | 40%+ automated corrections |

| Appeal Generator | Weeks 10-14 | NLP fine-tuning, templates, document assembly | Appeals pass clinical review |

| EHR and PM Integration | Weeks 8-13 | FHIR R4, HL7v2, clearinghouse APIs | End-to-end claim flow |

| Pilot and Validation | Weeks 14-17 | Shadow mode, A/B testing, staff training | 95% concordance |

| Production Rollout | Weeks 18-20 | Phased go-live, monitoring, feedback loops | Full production |

Lessons Learned

1. Payer-Specific Models Outperform Generic Ones

Switching from one universal model (AUC 0.84) to payer-specific sub-models with shared base features improved overall AUC to 0.91 and dramatically improved precision for UHC and Aetna, the two highest-denial-rate payers.

2. Appeal Letter Quality Matters More Than Speed

Training the NLP model on successful appeals only and incorporating payer-specific language patterns raised win rate from 48% to 64%. Appeals referencing specific clinical guidelines and structured evidence tables saw 23% higher win rates than generic language.

3. Model Monitoring Requires Continuous Investment

We built drift detection monitoring weekly accuracy by payer, auto-triggering retraining when AUC drops below 0.87. The model was retrained 4 times in year one — each time incorporating new denial patterns and rule changes.

4. Staff Adoption Hinges on Transparency

Showing specific reasons behind risk scores and providing override-and-explain mechanisms drove adoption. Override data fed back into the model, improving accuracy while giving staff a sense of control and contribution.

5. Prevention Delivers 4x the ROI of Appeals

Preventing a denial costs an API call (200ms). Appealing requires document assembly, submission, tracking, and 18+ days of waiting. The strategic lesson: invest heavily in prevention, treat appeals as the safety net.

Frequently Asked Questions

How long does it take to train the AI model on a new health system's denial data?

Initial training requires 18+ months of historical claims data. Data preparation takes 2-3 weeks, model training 3-5 days on cloud GPU, and validation another week. Clean data systems can be production-ready in 6 weeks; fragmented data may need 8-10 weeks for reconciliation.

Does the auto-appeal system work with all payers, including Medicare and Medicaid?

Yes — all commercial payers, Medicare (Parts A, B, Advantage), and state Medicaid programs. Each payer has dedicated rule sets for appeal requirements, deadlines, documentation, and formats. Medicare follows the 5-level appeal process; Medicaid is configured per state.

What happens when the AI model makes a wrong prediction?

False positives create a minor 2-hour delay for coder review. We accept a 12% false positive rate to maintain 92% recall on actual denials. False negatives are caught by the auto-appeal module downstream. Both error types feed into weekly model calibration.

How does the platform ensure HIPAA compliance?

Deployed in AWS GovCloud with AES-256 encryption at rest and TLS 1.3 in transit. FHIR R4 APIs use EHR-native access controls. NLP processes clinical notes in-memory without persisting PHI. Generated appeals stored in existing DMS. Annual HIPAA security risk assessments cover AI components.

Was this case study helpful?