Executive Summary

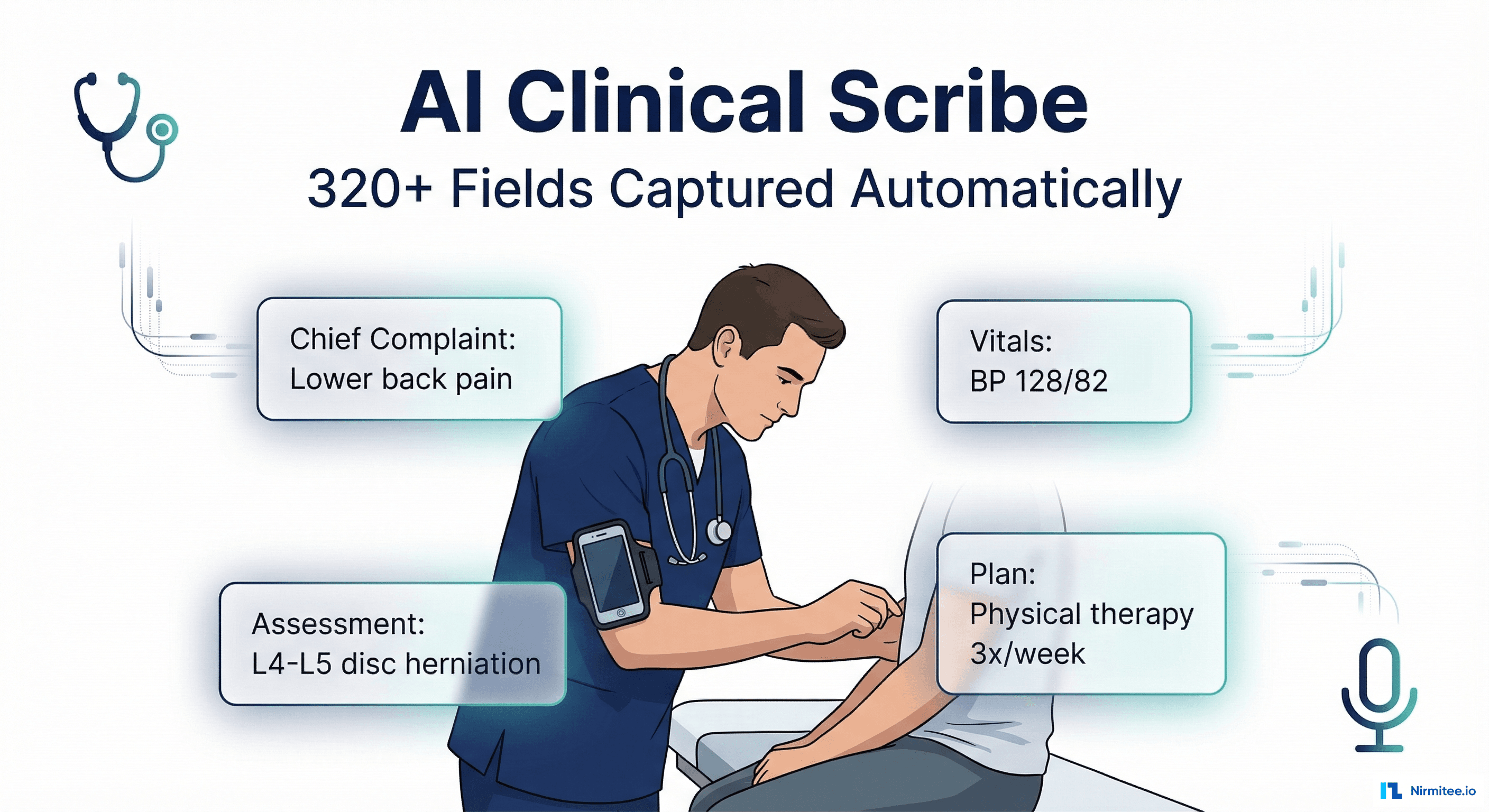

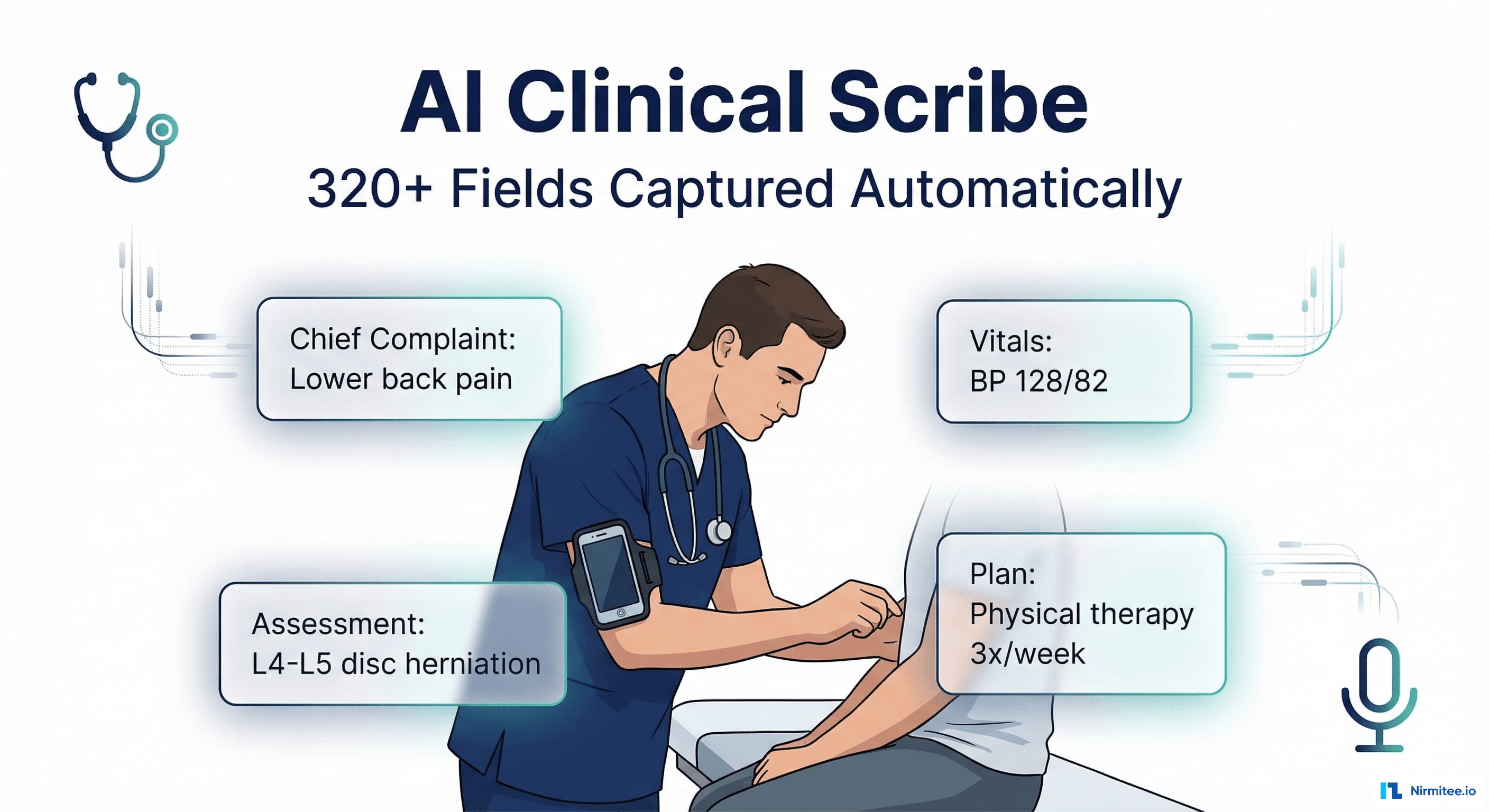

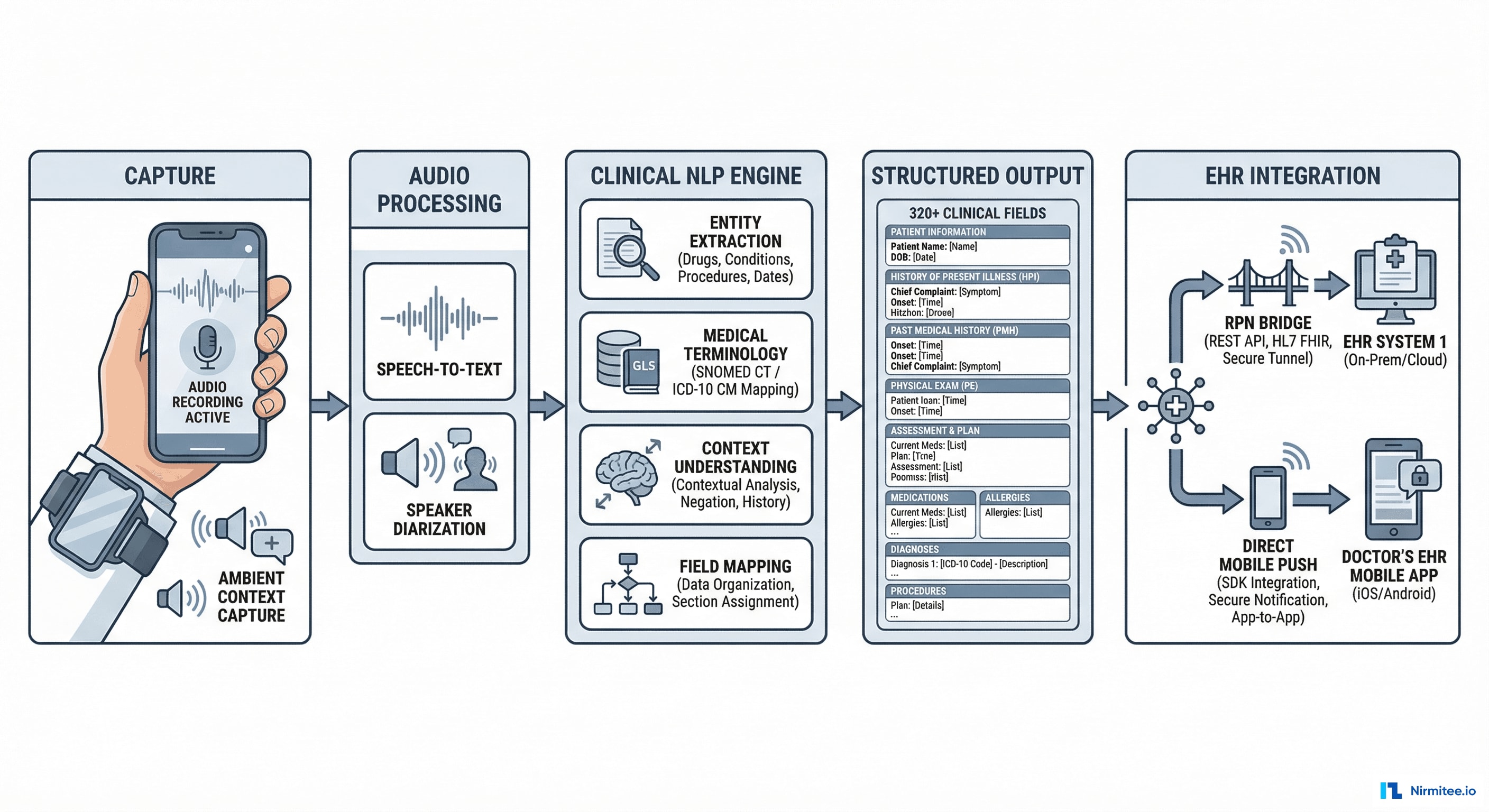

A US-based health tech startup was building the next generation of clinical documentation: a system where doctors don't type, don't dictate into a recorder, and don't hire scribes. Instead, a smartphone mounted on the physician's arm captures the entire patient encounter, and an AI engine extracts 320 clinical fields in real time, generating a complete SOAP note ready for physician review and EHR submission.

We built the complete platform: the mobile capture app (React Native), the clinical NLP engine, the documentation review interface (React), and two EHR integration pathways (an RPN bridge for legacy systems and a direct FHIR push for modern EHRs). The result: documentation time dropped from 12 minutes to 2.3 minutes per encounter, with 96.4% AI accuracy across all captured fields.

The Problem: Doctors Spend More Time Typing Than Treating

Clinical documentation is the #1 driver of physician burnout in the United States. Studies consistently show that for every hour of direct patient care, physicians spend nearly 2 hours on documentation and administrative tasks. The average primary care physician documents 20-30 encounters per day — that's 4-6 hours of typing, clicking, and navigating EHR screens.

The Documentation Tax

- 12 minutes per encounter: average time to document a standard outpatient visit — chief complaint, history, exam findings, assessment, plan, prescriptions, referrals, follow-up

- 2+ hours of after-hours charting: most physicians finish documentation at home ("pajama time"), cutting into family time and personal recovery

- 15% error rate: manual documentation introduces errors — wrong codes, missing diagnoses, incomplete medication lists, copy-paste artifacts from prior notes

- $150,000+ annual cost per scribe: human scribes are effective but expensive, and good ones are hard to find and retain

- Revenue loss: undercoding due to documentation gaps leaves an estimated 10-15% of legitimate revenue uncaptured

Why Existing Solutions Fell Short

- Dragon Medical (Nuance): speech-to-text, but physicians still need to dictate in a structured format and edit the output. It converts speech to text — it doesn't understand clinical context.

- Human scribes: effective, but they cost $150K+/year, are in limited supply, take time to train, and still can't be in every exam room at once.

- Template-based EHR tools: click-heavy, rigid workflows that force physicians to document in the EHR's structure rather than their natural clinical workflow.

Our client wanted something fundamentally different: the doctor practices medicine naturally, and the AI handles the documentation.

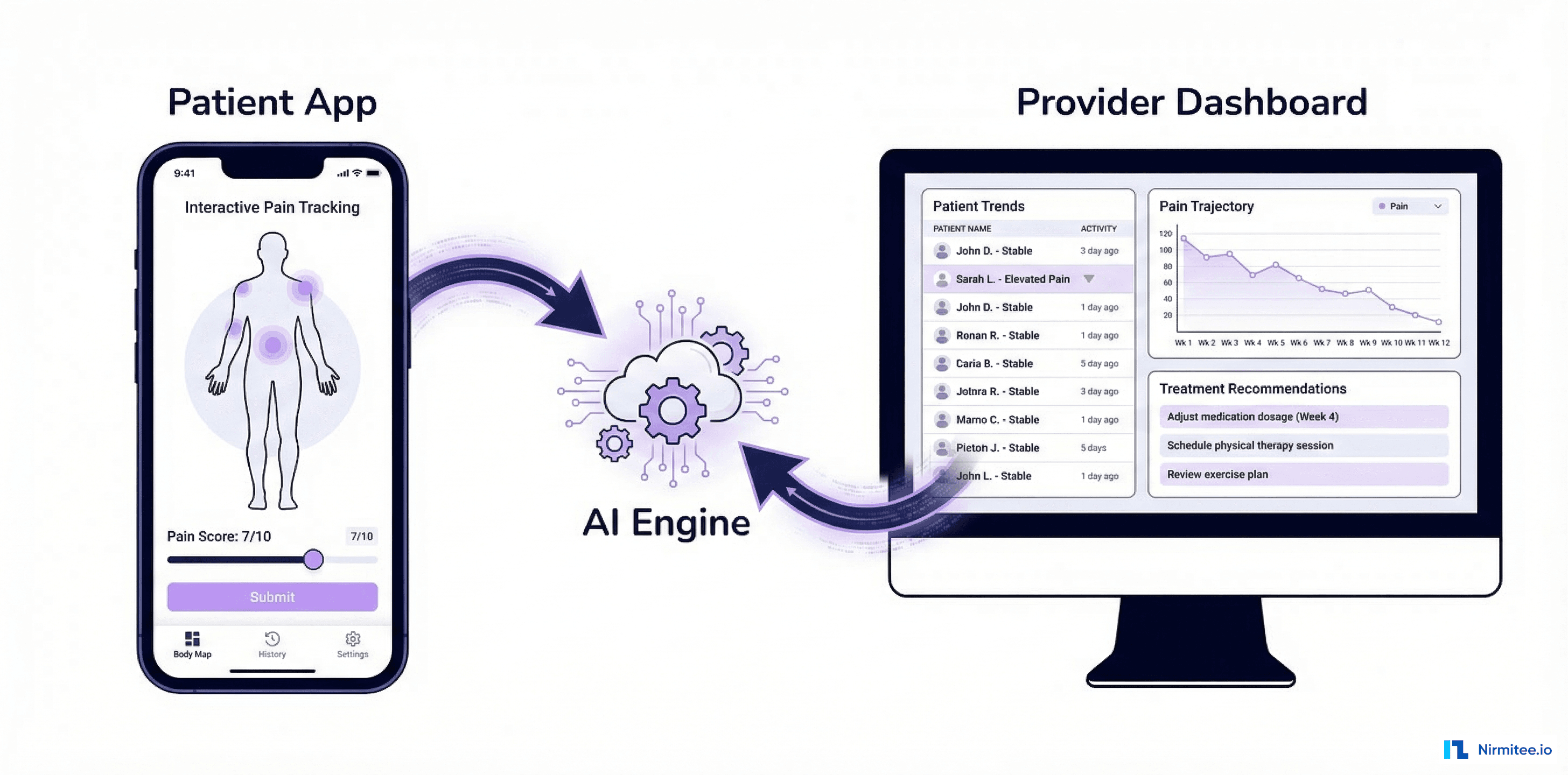

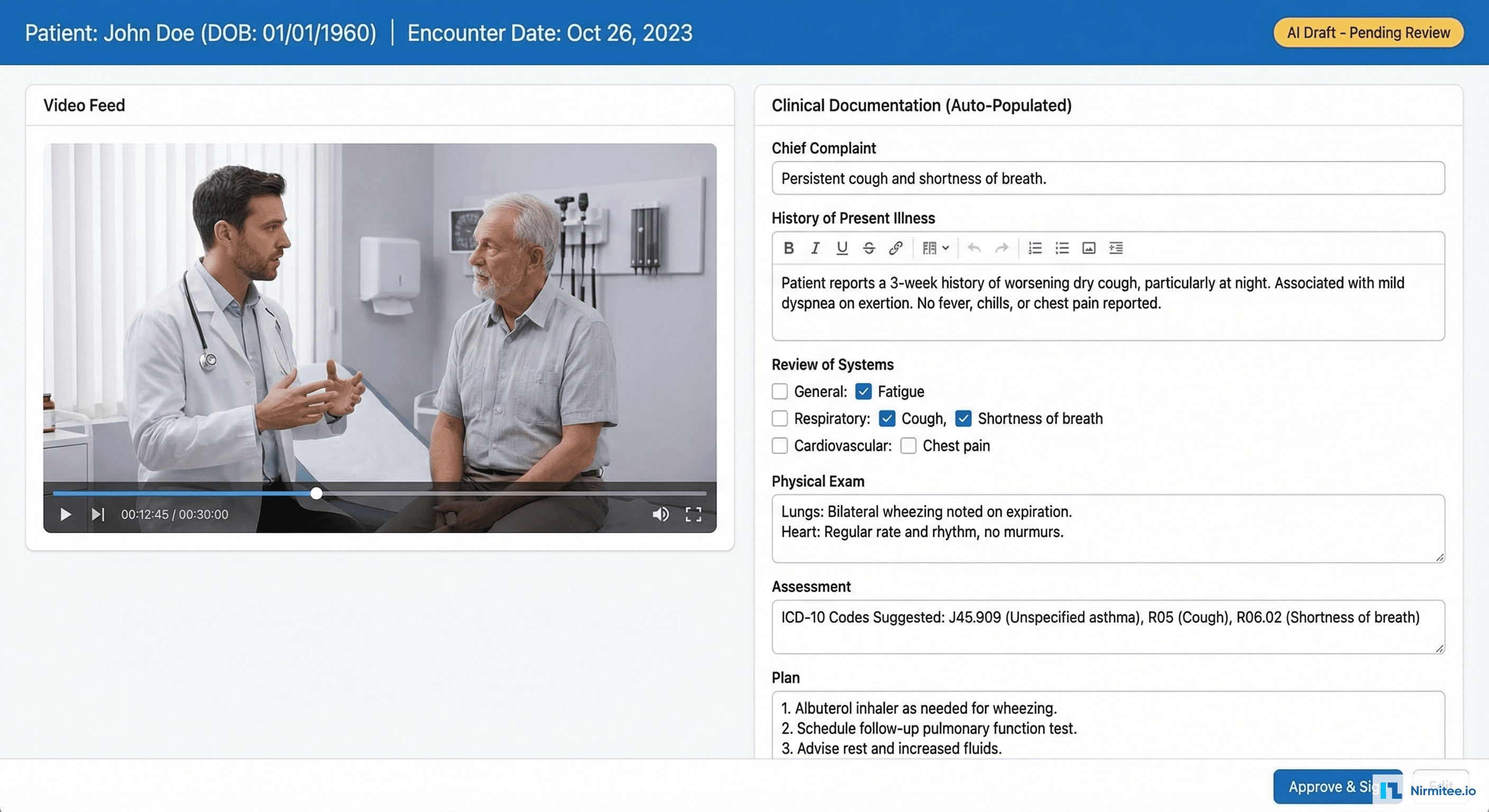

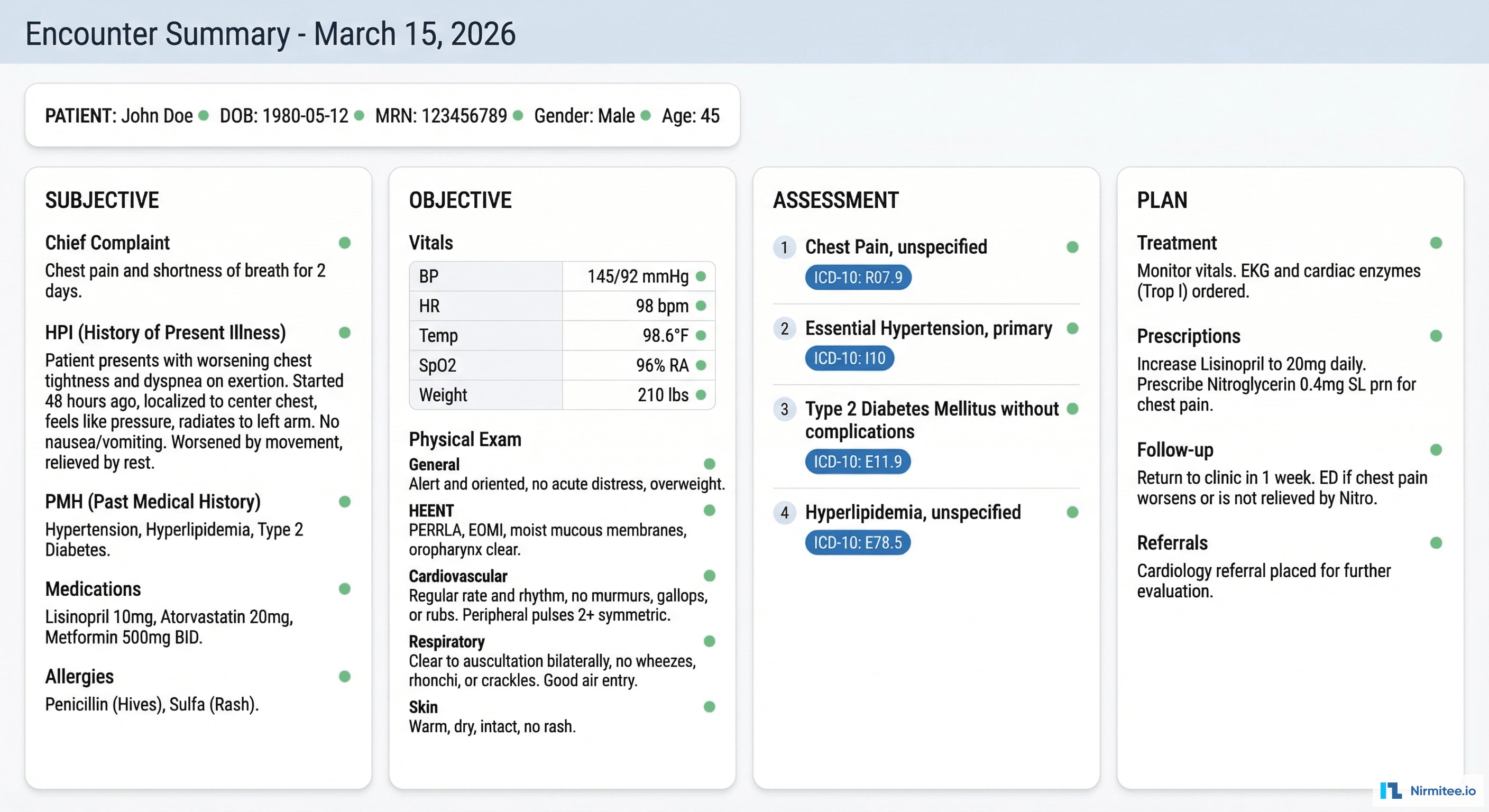

The Clinical Documentation Interface

After each encounter, the physician sees a split-screen interface: video playback on the left (with the ability to jump to any moment in the encounter) and the AI-generated documentation on the right. Every field is pre-populated — the physician reviews, makes any corrections, and signs. Average review time: 2.3 minutes.

Key features of the documentation interface:

- Section-by-section review: Chief Complaint, HPI, ROS, Physical Exam, Assessment, Plan — each section expandable with AI-generated content

- Confidence indicators: green dot (high confidence), yellow dot (needs review) on each auto-populated field — the physician knows exactly where to focus attention

- One-click corrections: tap any field to edit. The system learns from corrections to improve future accuracy for that physician's documentation style

- ICD-10 suggestion: assessment section suggests diagnosis codes based on captured clinical data — reducing coding errors and undercoding

- Approve & Sign: single button to finalize documentation and push to EHR
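
The confidence indicators can be pictured as a simple threshold on a per-field score. The sketch below is illustrative only: the `FieldResult` type, the 0.90 cutoff, and the sample values are assumptions made for this example, not details of the production system.

```python
from dataclasses import dataclass

# Hypothetical cutoff: the real threshold is not stated in this case study.
HIGH_CONFIDENCE = 0.90


@dataclass
class FieldResult:
    name: str
    value: str
    confidence: float  # model score in [0, 1]


def indicator(field: FieldResult) -> str:
    """Return the dot colour shown next to an auto-populated field."""
    return "green" if field.confidence >= HIGH_CONFIDENCE else "yellow"


def needs_review(fields: list[FieldResult]) -> list[str]:
    """Names of the fields the physician should look at first."""
    return [f.name for f in fields if indicator(f) == "yellow"]


# Made-up sample values for illustration.
fields = [
    FieldResult("Systolic BP", "128", 0.99),
    FieldResult("Chief Complaint", "chest pain", 0.95),
    FieldResult("Medication dose", "20 mg", 0.72),
]
print(needs_review(fields))
```

Keeping the cutoff in one named constant would make it easy to tune per section if some sections turn out to need stricter review than others.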

Technical Architecture

Capture Layer

The physician wears a smartphone mounted on their upper arm using a medical-grade armband. The phone captures:

- Audio: continuous recording of the doctor-patient conversation using the phone's microphone

- Video: optional — captures visual context (physical exam maneuvers, skin lesions, range of motion assessments)

- Ambient context: encounter duration, patient identification (via QR code scan at start), physician identification (biometric)

Audio Processing Pipeline

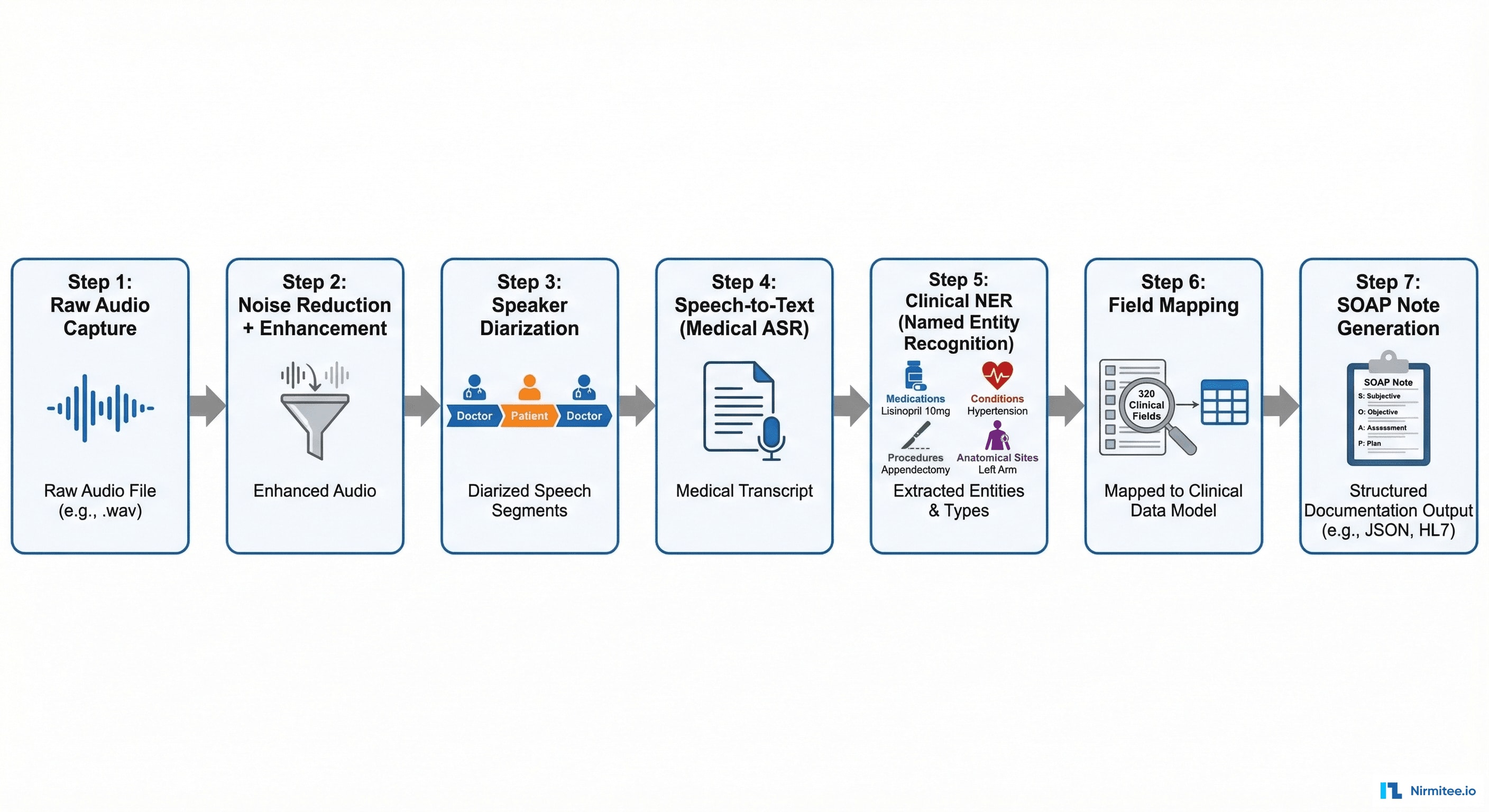

The audio goes through a 7-stage processing pipeline:

- Raw Audio Capture: 16 kHz, 16-bit PCM audio stream from device microphone

- Noise Reduction: ambient noise filtering (other patients, hallway sounds, medical devices beeping) using spectral gating

- Speaker Diarization: separating the doctor's voice from the patient's voice. Critical for understanding who said what: "I have chest pain" means something different coming from the doctor than from the patient

- Medical ASR (Automatic Speech Recognition): speech-to-text optimized for medical terminology — drug names, anatomical terms, procedure names, abbreviations (q.i.d., b.i.d., PRN)

- Clinical Named Entity Recognition: extracting medical entities from the transcript — medications (blue), conditions (red), procedures (green), anatomical sites (purple)

- Field Mapping: mapping extracted entities to the 320-field clinical schema, for example connecting "blood pressure is 128 over 82" to the Systolic BP and Diastolic BP fields

- SOAP Note Generation: assembling all mapped fields into a structured clinical document in SOAP format (Subjective, Objective, Assessment, Plan)
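
To make the Field Mapping stage concrete, here is a minimal sketch that maps the blood pressure phrase from the list above to two schema fields. It uses a regular expression purely for illustration. The production pipeline relies on NER models, and the pattern and function name here are assumptions.

```python
import re

# Illustrative pattern only: matches phrases such as
# "blood pressure is 128 over 82" or "BP 128/82".
BP_PATTERN = re.compile(
    r"\b(?:blood pressure|bp)\b\D{0,15}?(\d{2,3})\s*(?:over|/)\s*(\d{2,3})",
    re.IGNORECASE,
)


def map_blood_pressure(utterance: str) -> dict[str, int]:
    """Map a spoken blood pressure reading to two schema fields."""
    match = BP_PATTERN.search(utterance)
    if match is None:
        return {}  # nothing to map in this utterance
    return {
        "Systolic BP": int(match.group(1)),
        "Diastolic BP": int(match.group(2)),
    }


print(map_blood_pressure("Your blood pressure is 128 over 82 today."))
```

A single spoken phrase fills two discrete fields, which is why field mapping is a separate stage after entity recognition rather than a one-to-one lookup.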

Encounter Summary: The SOAP Note

The AI generates a complete SOAP note organized in four columns:

- Subjective: chief complaint, history of present illness (onset, duration, severity, modifying factors), past medical history, current medications, allergies, social history

- Objective: vital signs (auto-extracted from verbal report or integrated device data), physical examination findings organized by body system

- Assessment: numbered diagnoses with suggested ICD-10 codes, each with an AI confidence score

- Plan: treatment orders, prescriptions (drug, dose, route, frequency), referrals, follow-up scheduling, patient education points
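
The grouping described above can be sketched as a lookup from field category to SOAP section. This is a simplified illustration: the `build_soap_note` function, the tuple layout, and the sample values are assumptions, and only categories named in the section descriptions are included.

```python
from collections import defaultdict

# Category -> SOAP section, following the section descriptions above.
# Categories not named there are left out of this sketch.
SECTION_OF = {
    "Chief Complaint": "Subjective",
    "HPI": "Subjective",
    "Past Medical History": "Subjective",
    "Medications": "Subjective",
    "Allergies": "Subjective",
    "Social History": "Subjective",
    "Vital Signs": "Objective",
    "Physical Exam": "Objective",
    "Assessment": "Assessment",
    "Plan": "Plan",
}
SECTION_ORDER = ["Subjective", "Objective", "Assessment", "Plan"]


def build_soap_note(mapped_fields: list[tuple[str, str, str]]) -> str:
    """Group (category, field, value) triples into SOAP sections as text."""
    sections = defaultdict(list)
    for category, field, value in mapped_fields:
        sections[SECTION_OF[category]].append(f"{field}: {value}")
    lines = []
    for section in SECTION_ORDER:
        lines.append(section.upper())
        lines.extend(f"  {entry}" for entry in sections[section])
    return "\n".join(lines)


# Made-up sample values for illustration.
mapped = [
    ("Chief Complaint", "Primary complaint", "chest pain"),
    ("Vital Signs", "Systolic BP", "128"),
    ("Vital Signs", "Diastolic BP", "82"),
    ("Assessment", "Diagnosis 1", "atypical chest pain"),
    ("Plan", "Follow-up", "2 weeks"),
]
print(build_soap_note(mapped))
```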

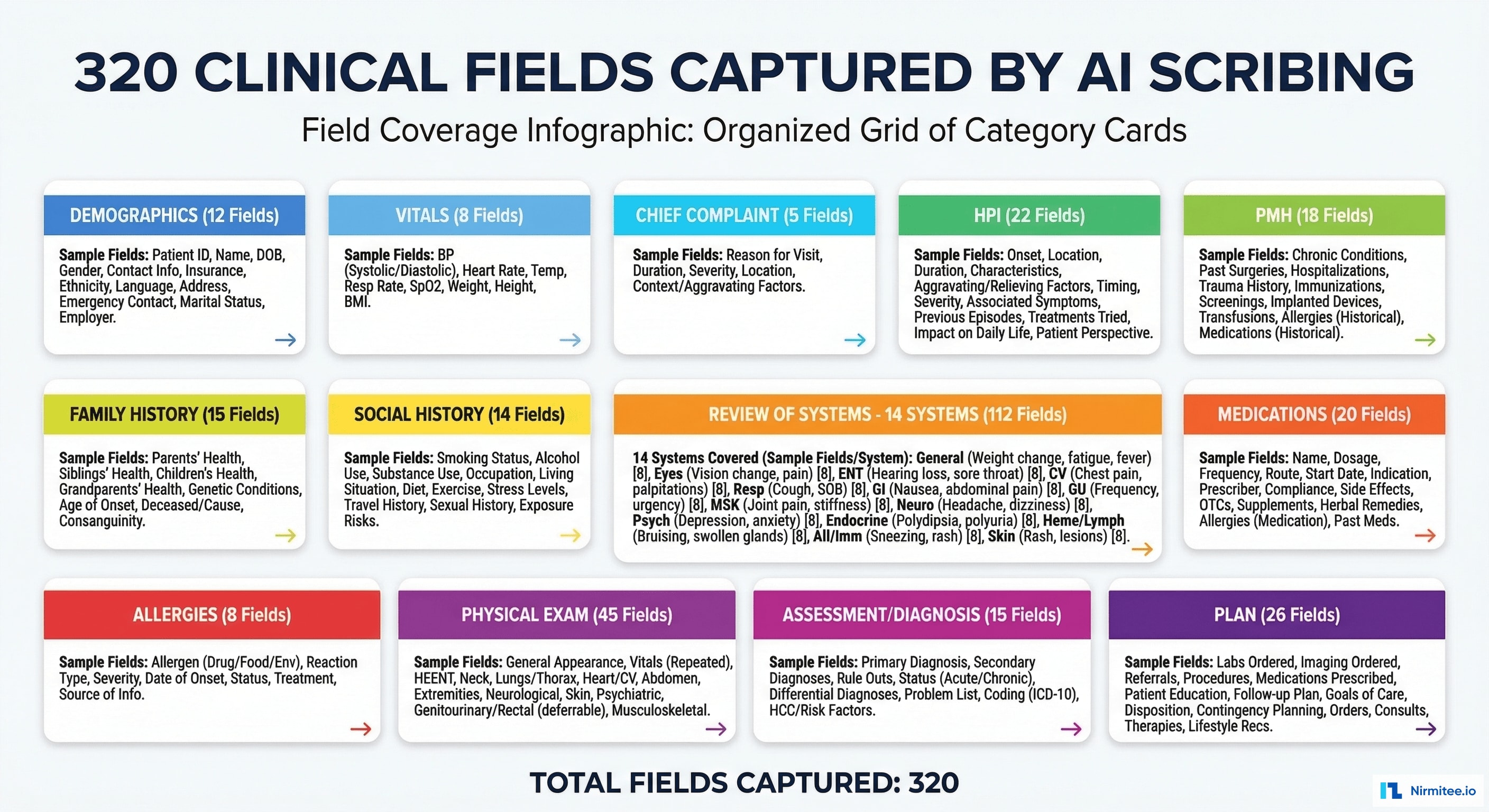

320 Clinical Fields

The system captures and maps 320 discrete clinical fields across 13 categories:

| Category | Fields | Examples |
|---|---|---|
| Demographics | 12 | Age, gender, ethnicity, preferred language, emergency contact |
| Vital Signs | 8 | BP systolic/diastolic, HR, temp, SpO2, RR, weight, BMI |
| Chief Complaint | 5 | Primary complaint, duration, severity, location, onset type |
| HPI | 22 | Onset, location, duration, character, severity, timing, modifying factors, associated symptoms |
| Past Medical History | 18 | Conditions, surgeries, hospitalizations, psychiatric history |
| Family History | 15 | Cancer, cardiac, diabetes, mental health (per family member) |
| Social History | 14 | Smoking, alcohol, drugs, exercise, occupation, living situation |
| Medications | 20 | Drug name, dose, route, frequency, indication, prescriber, start date |
| Allergies | 8 | Allergen, reaction type, severity, onset date |
| Review of Systems | 112 | 14 body systems × 8 avg symptoms each (constitutional, HEENT, cardiovascular, respiratory, GI, GU, MSK, neuro, skin, psych, endo, heme/lymph, immunologic, eye) |
| Physical Exam | 45 | General appearance, HEENT findings, cardiac, lungs, abdomen, extremities, neurological, skin, MSK |
| Assessment | 15 | Diagnoses (up to 5), ICD-10 codes, severity, chronicity, clinical reasoning |
| Plan | 26 | Medications prescribed, labs ordered, imaging ordered, referrals, procedures scheduled, follow-up, patient education |
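
As a quick consistency check, the per-category counts in the table can be summed in a few lines. The dictionary simply restates the table, and the variable names are ours.

```python
# Field counts per category, copied from the table above.
FIELD_COUNTS = {
    "Demographics": 12,
    "Vital Signs": 8,
    "Chief Complaint": 5,
    "HPI": 22,
    "Past Medical History": 18,
    "Family History": 15,
    "Social History": 14,
    "Medications": 20,
    "Allergies": 8,
    "Review of Systems": 14 * 8,  # 14 body systems x 8 symptoms on average
    "Physical Exam": 45,
    "Assessment": 15,
    "Plan": 26,
}

total_fields = sum(FIELD_COUNTS.values())
print(len(FIELD_COUNTS), "categories,", total_fields, "fields")
```

The counts add up to the 13 categories and 320 fields stated above, with Review of Systems alone accounting for 112 of them.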

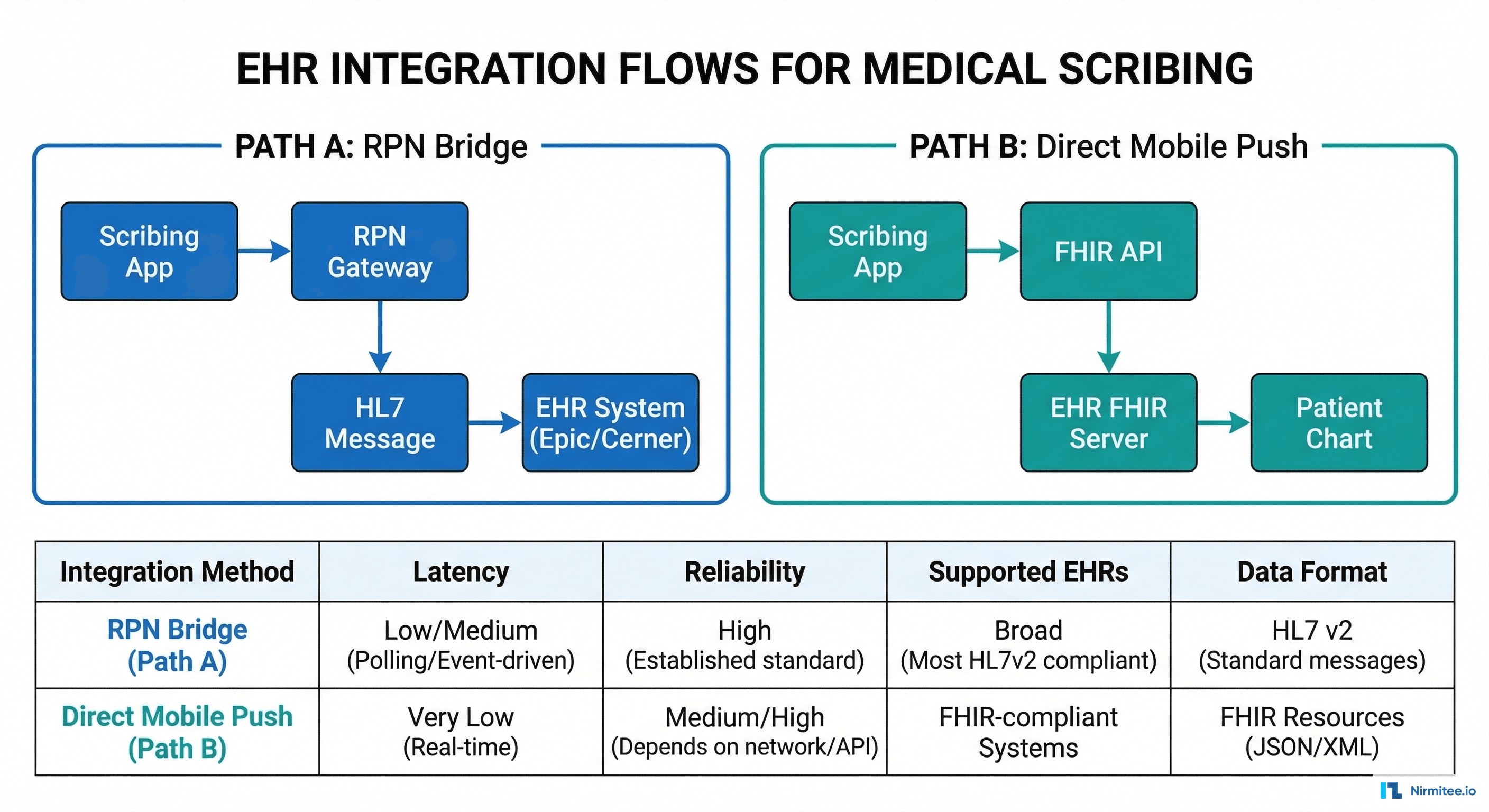

EHR Integration: Two Pathways

Documentation needs to flow into the physician's EHR seamlessly. We built two integration pathways to cover both modern and legacy EHR systems:

Path A: RPN Bridge (Legacy EHRs)

For EHR systems without robust FHIR APIs (older Epic installations, legacy Allscripts, proprietary systems), we use a Robotic Process Navigation (RPN) bridge. The RPN agent navigates the EHR's UI programmatically — opening the patient chart, clicking into each documentation section, and populating fields as if a human user were typing. This works with any EHR that has a desktop client, regardless of API availability.

Path B: Direct FHIR Push (Modern EHRs)

For EHRs with FHIR R4 APIs (Epic with Open.Epic, Cerner Ignite, athenahealth), we push structured documentation directly via FHIR resources: DocumentReference (clinical note), Encounter (visit context), Condition (diagnoses), MedicationRequest (prescriptions), ServiceRequest (orders). This is faster, more reliable, and maintains data structure end-to-end.
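
As a rough illustration of what the clinical note looks like on the wire, the sketch below builds a FHIR R4 `DocumentReference` as a plain dictionary. The function name, the IDs, and the choice of LOINC code 11506-3 (Progress note) are assumptions for the example. The actual payload depends on the note type and on each EHR's implementation guide.

```python
import base64
import json


def build_document_reference(patient_id: str, encounter_id: str, note_text: str) -> dict:
    """Wrap a plain-text SOAP note in a FHIR R4 DocumentReference resource."""
    encoded = base64.b64encode(note_text.encode("utf-8")).decode("ascii")
    return {
        "resourceType": "DocumentReference",
        "status": "current",
        # Assumed document type: LOINC 11506-3, "Progress note".
        "type": {
            "coding": [
                {
                    "system": "http://loinc.org",
                    "code": "11506-3",
                    "display": "Progress note",
                }
            ]
        },
        "subject": {"reference": f"Patient/{patient_id}"},
        # In R4, context.encounter is a list of references.
        "context": {"encounter": [{"reference": f"Encounter/{encounter_id}"}]},
        # Attachment data is carried as base64-encoded bytes.
        "content": [{"attachment": {"contentType": "text/plain", "data": encoded}}],
    }


# Hypothetical IDs and note text.
doc = build_document_reference("example-patient", "example-encounter", "S: chest pain ...")
print(json.dumps(doc, indent=2))
```

Sending the resource would then be a `POST` to the server's `DocumentReference` endpoint with an `application/fhir+json` content type and whatever authorization the EHR requires. That step is omitted here.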

| Feature | RPN Bridge | FHIR Direct Push |
|---|---|---|
| Latency | 30-60 seconds | 2-5 seconds |
| Reliability | 95% (UI changes can break navigation) | 99.9% (API-based) |
| Supported EHRs | Any with desktop client | Epic, Cerner, athena, NextGen |
| Data structure | Loses some structure (flat text) | Full FHIR structure preserved |
| Setup time | 1-2 weeks per EHR | 2-4 weeks per EHR (API registration) |

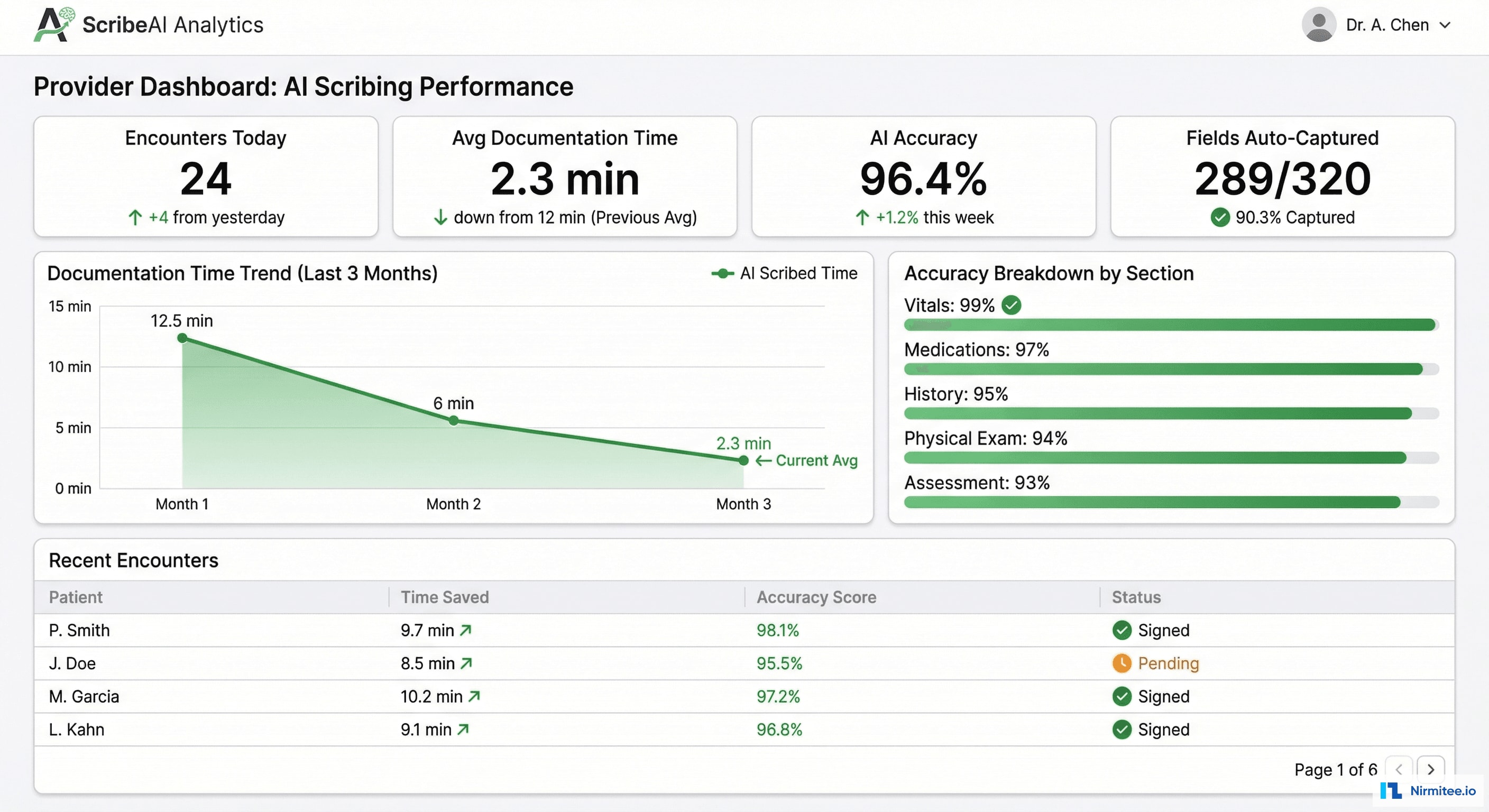

Provider Analytics Dashboard

Each physician has a personal analytics dashboard showing their documentation efficiency:

- Encounters documented today with average time per note

- AI accuracy trend: overall and by section (Vitals 99%, Medications 97%, History 95%, Physical Exam 94%, Assessment 93%)

- Time saved: cumulative hours reclaimed from manual documentation

- Learning curve: as the AI learns each physician's documentation patterns, accuracy improves over time — typically reaching peak accuracy within 2-3 weeks

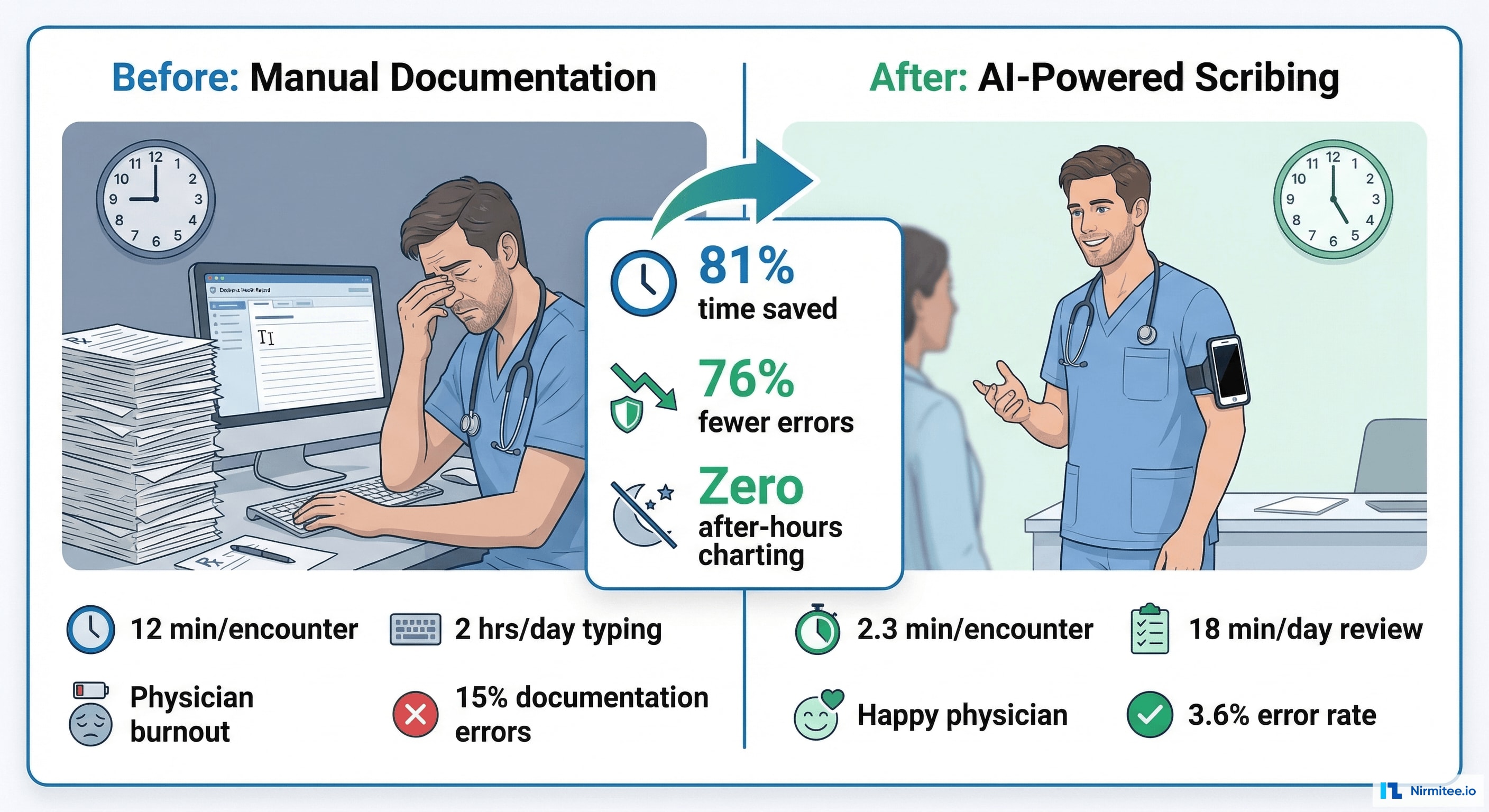

Results and Impact

| Metric | Before | After | Impact |
|---|---|---|---|
| Documentation time per encounter | 12 minutes | 2.3 minutes | 81% reduction |
| After-hours charting | 2+ hours/day | Zero | Eliminated pajama time |
| Documentation error rate | 15% | 3.6% | 76% fewer errors |
| Clinical fields captured | ~180 (manual average) | 320 (AI captures all) | 78% more complete notes |
| ICD-10 coding accuracy | 82% | 94.7% | 15% improvement → revenue uplift |
| Physician satisfaction (burnout index) | 3.2/5 (moderate burnout) | 4.4/5 (engaged) | Dramatic quality of life improvement |
| Revenue per encounter | $142 avg | $158 avg | $16/encounter uplift from better coding |
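
The percentages in the Impact column are relative changes between the Before and After values. A short sketch of that arithmetic follows. The helper name is ours, and the inputs are copied from the table.

```python
def pct_change(before: float, after: float) -> float:
    """Relative change from before to after, in percent."""
    return (after - before) / before * 100


# (metric, before, after) copied from the results table.
rows = [
    ("Documentation time (min)", 12, 2.3),
    ("Error rate (%)", 15, 3.6),
    ("Fields captured", 180, 320),
    ("ICD-10 coding accuracy (%)", 82, 94.7),
]

for metric, before, after in rows:
    print(f"{metric}: {pct_change(before, after):+.1f}%")

# Revenue per encounter is reported as an absolute difference in dollars.
revenue_uplift = 158 - 142
print("Revenue uplift per encounter:", revenue_uplift)
```

Note that the coding accuracy figure is a relative improvement of about 15%. In absolute terms the gain is 12.7 percentage points.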

Financial Impact

For a 5-physician primary care practice seeing 25 patients each per day:

- Time saved: 5 physicians × 25 encounters × 9.7 min saved = 20+ hours/day reclaimed

- Revenue uplift from coding: 125 encounters/day × $16/encounter = $2,000/day → $500,000/year

- Scribe cost avoided: $150,000/year per scribe × 5 = $750,000/year not spent

- Error reduction: fewer denied claims from documentation errors = est. $80,000/year

- Total annual value: $1.33M for a 5-physician practice
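
The figures above can be reproduced with a few lines of arithmetic. One input is not stated in the text: the $2,000/day to $500,000/year step implies roughly 250 working days per year, which the sketch treats as an assumption.

```python
PHYSICIANS = 5
ENCOUNTERS_PER_PHYSICIAN = 25
MINUTES_SAVED = 12 - 2.3        # 9.7 minutes saved per encounter
UPLIFT_PER_ENCOUNTER = 16       # dollars, from the results table
SCRIBE_COST = 150_000           # dollars per scribe per year
DENIED_CLAIM_SAVINGS = 80_000   # dollars per year, estimate from the text
WORKING_DAYS = 250              # assumption implied by $2,000/day -> $500,000/year

encounters_per_day = PHYSICIANS * ENCOUNTERS_PER_PHYSICIAN
hours_saved_per_day = encounters_per_day * MINUTES_SAVED / 60
coding_uplift = encounters_per_day * UPLIFT_PER_ENCOUNTER * WORKING_DAYS
scribe_savings = SCRIBE_COST * PHYSICIANS
total_value = coding_uplift + scribe_savings + DENIED_CLAIM_SAVINGS

print(f"Hours saved per day: {hours_saved_per_day:.1f}")
print(f"Coding uplift per year: ${coding_uplift:,}")
print(f"Scribe cost avoided per year: ${scribe_savings:,}")
print(f"Total annual value: ${total_value:,}")
```

The scribe line assumes one scribe per physician, as in the list above. A practice that would not otherwise hire scribes should drop that term from the total.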

Technology Stack

| Layer | Technology | Purpose |
|---|---|---|
| Mobile App | React Native | Audio/video capture, patient encounter management |
| Web Dashboard | React + TypeScript | Documentation review, analytics, admin |
| Backend | Node.js + Python | API gateway (Node), NLP pipeline (Python) |
| ASR | Custom medical ASR model | Speech-to-text optimized for medical terminology |
| NLP | spaCy + custom NER models | Clinical entity extraction, field mapping |
| Database | PostgreSQL + Redis | Encounter data, field mappings, real-time processing |
| EHR Integration | FHIR R4 + RPN bridge | Modern API + legacy UI automation |
| Video | WebRTC + HLS | Real-time capture, on-demand playback |
| Infrastructure | AWS (HIPAA) | GPU instances for NLP, encrypted storage |

Compliance

- HIPAA: all audio/video encrypted at rest (AES-256) and in transit (TLS 1.3). Audio retained per client policy (typically 30-90 days), then securely deleted. Access audit logs for every encounter.

- Patient Consent: verbal consent captured at the start of each encounter. Consent flag stored with the encounter record. Patients can request audio deletion at any time.

- De-identification: all AI model training uses de-identified transcripts. No PHI in training data.

- State Recording Laws: configurable consent requirements per state (one-party vs. two-party consent states).
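
One way to picture the per-state consent configuration is a lookup table with a strict default. The sketch below is hypothetical: the names are ours, only two example states are listed, and it models the recording-law check alone, not HIPAA or practice policy. A real table would cover every state and be maintained with legal review.

```python
from enum import Enum


class ConsentRule(Enum):
    ONE_PARTY = "one_party"
    TWO_PARTY = "two_party"


# Two illustrative entries only, not a complete or authoritative list.
STATE_RULES = {
    "NY": ConsentRule.ONE_PARTY,
    "CA": ConsentRule.TWO_PARTY,
}


def recording_allowed(state: str, patient_consented: bool) -> bool:
    """Check the recording-law requirement before capture starts."""
    # States missing from the table fall back to the stricter rule.
    rule = STATE_RULES.get(state, ConsentRule.TWO_PARTY)
    if rule is ConsentRule.TWO_PARTY:
        return patient_consented
    return True


print(recording_allowed("CA", patient_consented=False))
print(recording_allowed("NY", patient_consented=False))
```

Defaulting unknown states to the two-party rule means a gap in the table blocks recording rather than silently allowing it.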

Project Timeline

| Phase | Duration | Deliverables |
|---|---|---|
| Phase 1 | 4 weeks | Mobile capture app, audio pipeline, basic ASR integration |
| Phase 2 | 6 weeks | Clinical NLP engine, 320-field schema, speaker diarization, SOAP note generation |
| Phase 3 | 4 weeks | Documentation review UI, EHR integration (FHIR + RPN), provider analytics dashboard |
| Phase 4 | 4 weeks | Accuracy tuning, physician onboarding with 3 pilot practices, compliance audit, production hardening |

Total: about 4.5 months (18 weeks across the four phases) with a team of 4 engineers + 1 NLP specialist.

Lessons Learned

- Speaker diarization is the hardest NLP problem. Distinguishing doctor from patient in a noisy exam room with overlapping speech was our biggest technical challenge. We achieved 94% diarization accuracy — high enough for clinical use, but still requiring physician review of edge cases.

- Physicians don't want perfection — they want speed. 96% accuracy with 2-minute review is vastly preferred over 99% accuracy with 5-minute review. The time savings matter more than the last few percentage points of accuracy.

- The 320-field schema was designed with physicians. We didn't pick 320 fields arbitrarily. We worked with 15 physicians across primary care, orthopedics, and cardiology to identify every field they document. The schema covers 98% of outpatient documentation needs.

- RPN is a bridge, not a destination. The RPN integration works for legacy EHRs today, but it's fragile — EHR UI updates break the navigation. FHIR is the long-term path. We built both because providers need solutions now, not after their EHR vendor ships a FHIR API.

- Revenue uplift sells the platform. Physicians love the time savings. Practice managers love the revenue uplift from better coding. Together, the ROI case is overwhelming.

Share

Related Case Studies

AI-Powered Personalized Oncology Treatment Platform: A Technical Case Study

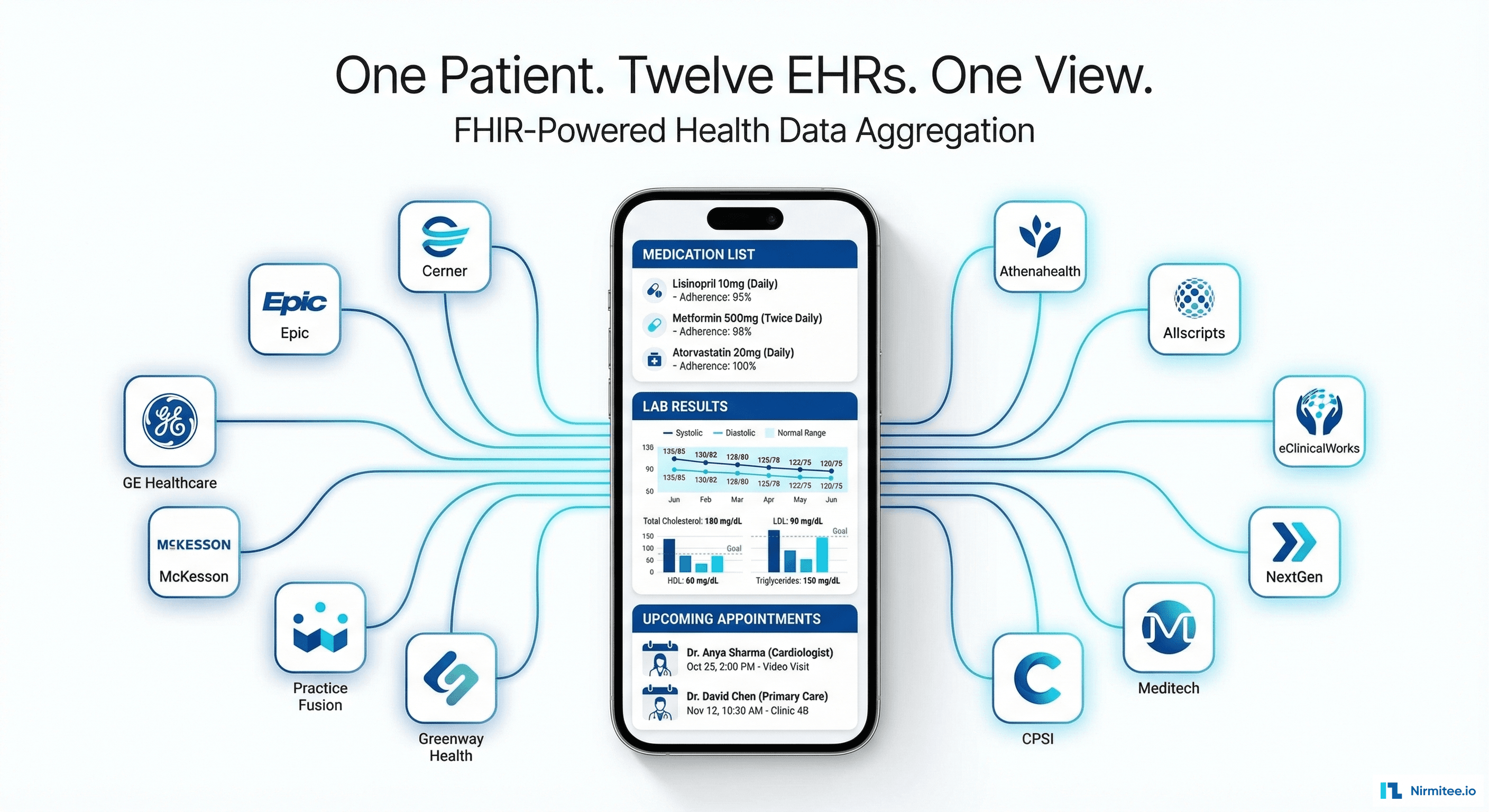

Building a Patient-First Health Record Platform: Connecting 12 US EMRs Into One View