Executive Summary

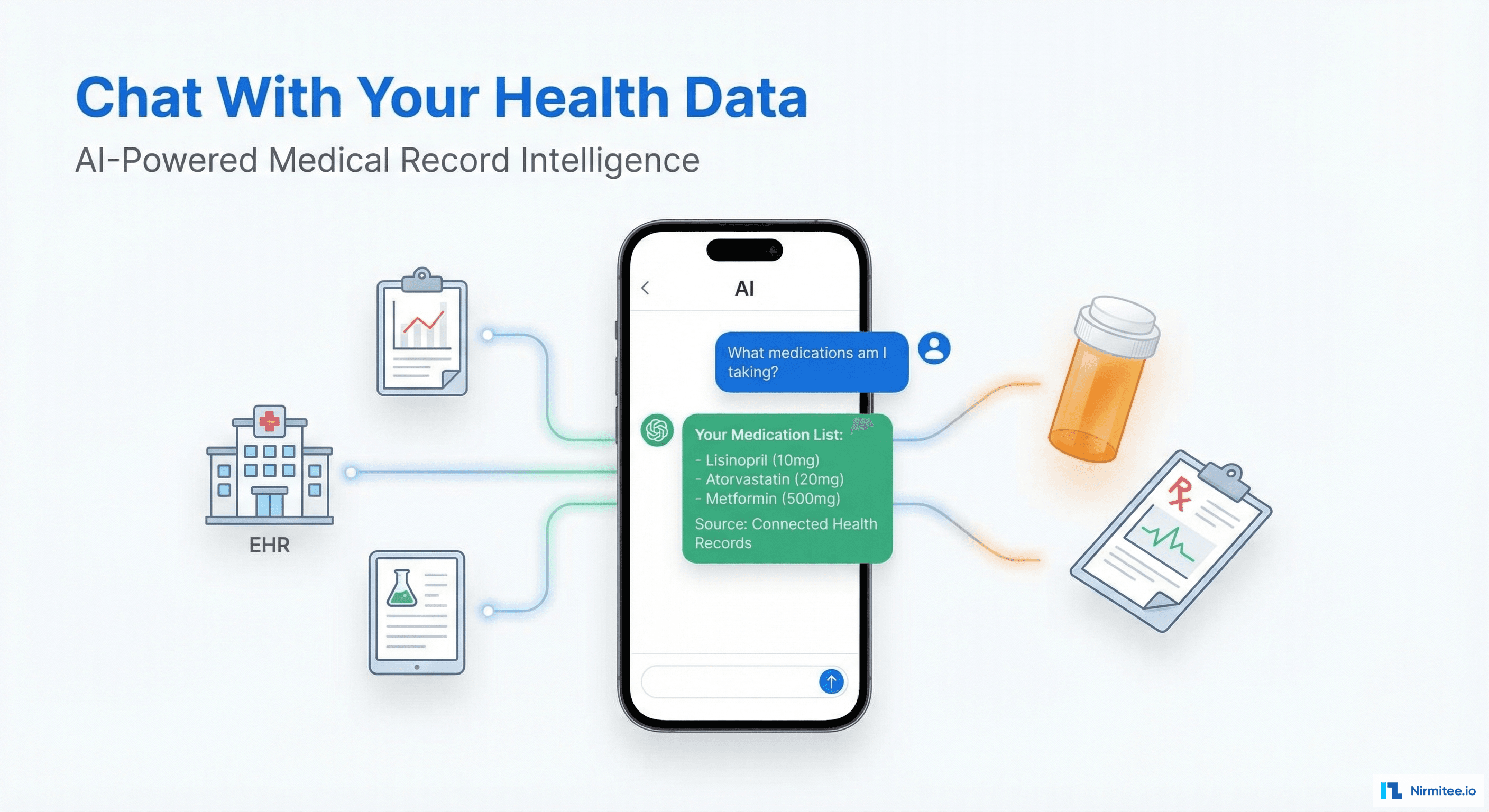

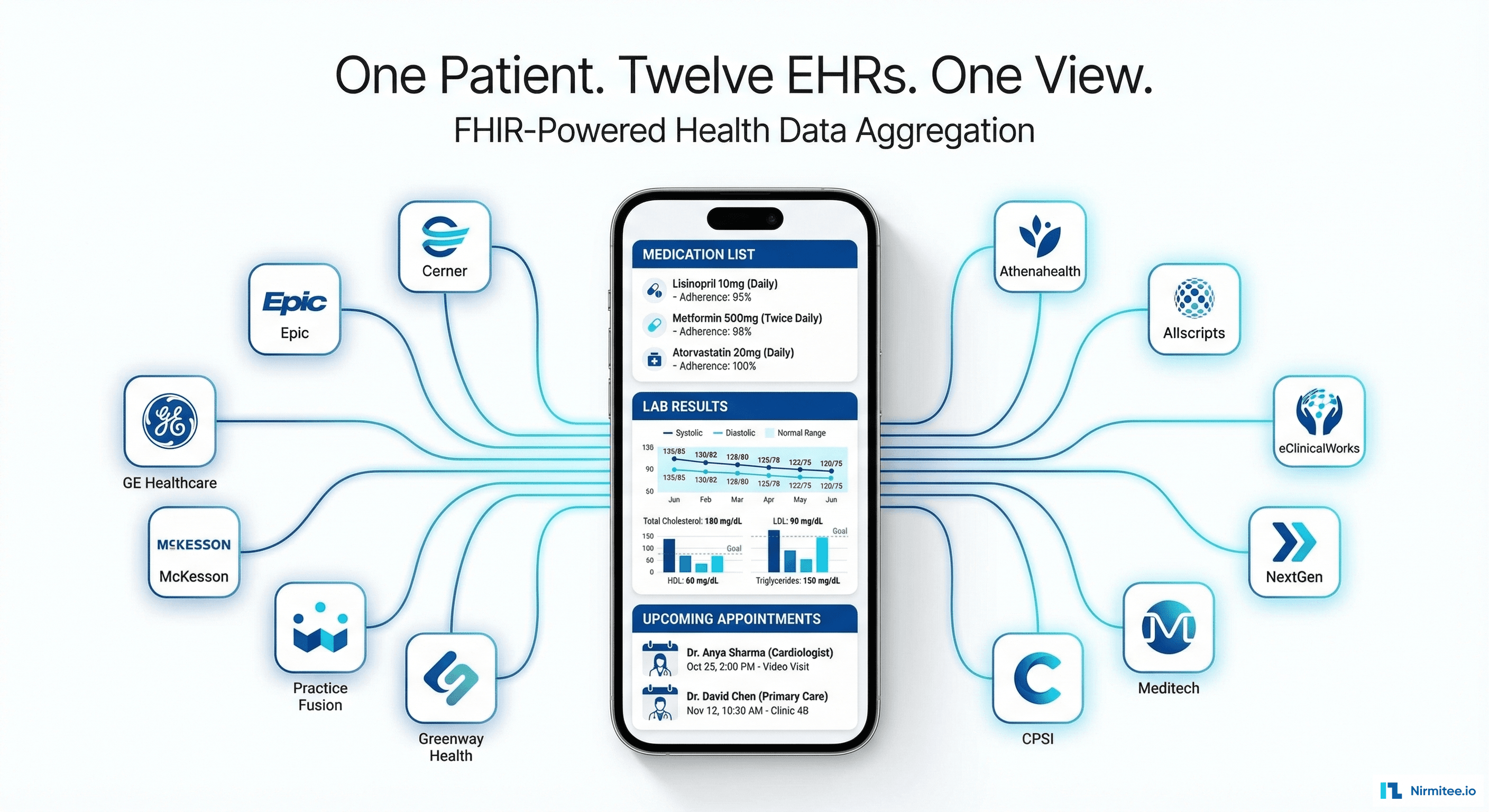

A US health tech startup wanted to give patients something that no existing product offered: the ability to ask questions about their own health records in plain English and get accurate, sourced answers drawn from their actual clinical data across multiple providers.

We built a conversational AI health data platform using RAG (Retrieval-Augmented Generation) architecture. The system connects to patients' EHRs via FHIR R4, ingests clinical data (medications, lab results, conditions, procedures, visit notes), embeds it in a vector store, and uses GPT-4 with medical prompt engineering to answer questions like "What medications am I taking?", "Show me my blood pressure trend", or "Do any of my medications interact with each other?"

The platform also searches external medical databases — clinical guidelines, drug information, clinical trials — to provide personalized health intelligence. Every AI answer includes source citations (which EHR, which date, which document) so patients and providers can verify.

Results: 94.2% answer accuracy verified by clinical review, cross-provider drug interactions that no single provider had detected in 23% of patients, and 68% weekly active usage, roughly 6x the engagement of traditional patient portals.

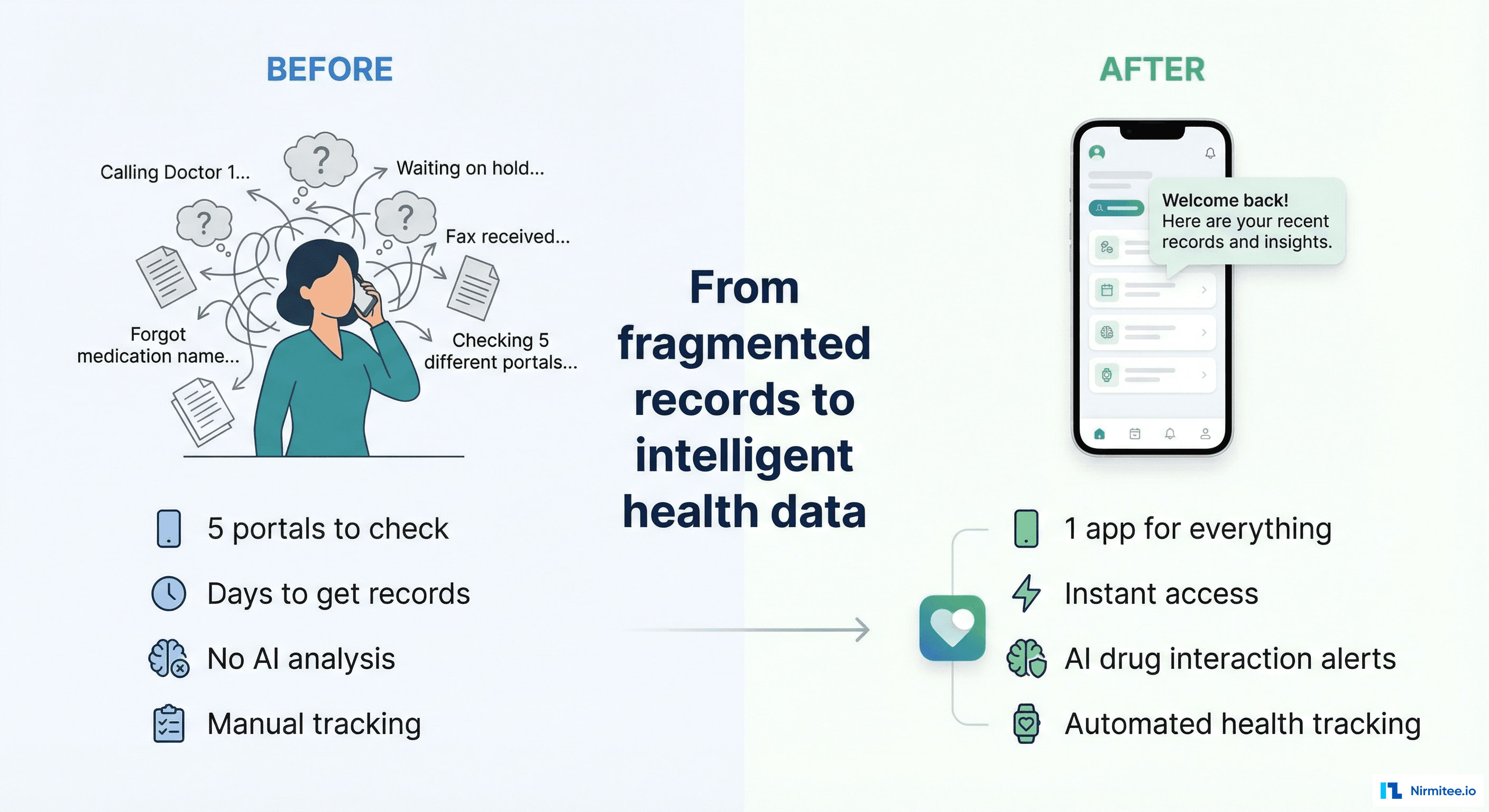

The Problem: Your Health Records Are Unreadable

Patients technically have the right to access their health records. In practice, that access is close to useless:

- Multiple portals: the average patient needs to log into 3-5 different patient portals to see all their records (PCP, specialists, labs, hospital)

- Medical jargon: records are written for clinicians, not patients. "Elevated ALT" means nothing to most people. "Your liver enzyme is higher than normal and may need monitoring" is what they need.

- No cross-provider analysis: each provider only sees their own data. Nobody is looking at the complete picture — all medications from all providers, all lab results from all labs, all conditions from all specialists.

- No proactive intelligence: existing patient portals are passive — they show data but don't analyze it. They won't tell you about drug interactions, missed screenings, or worrying trends.

The Conversational Interface

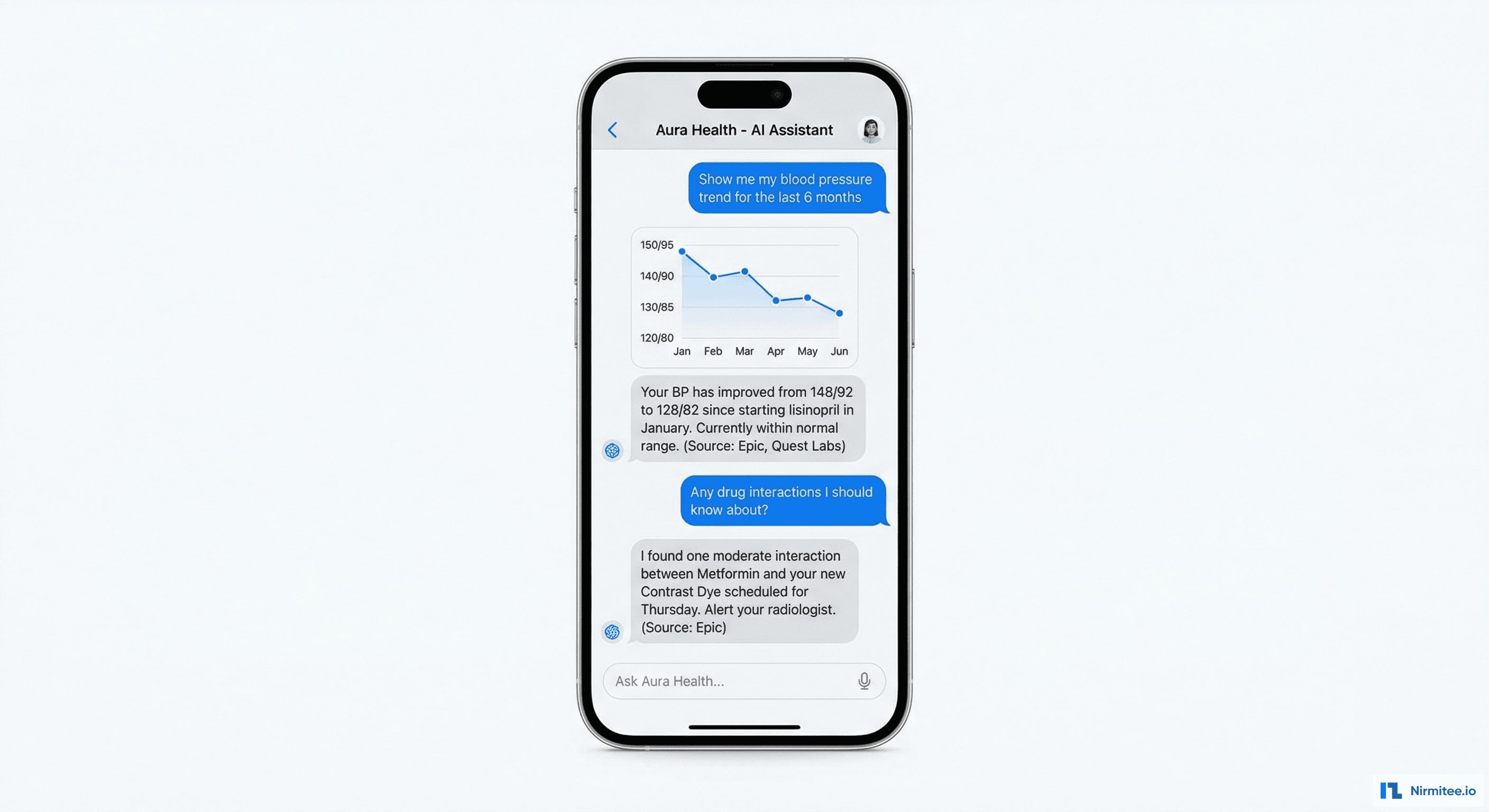

The chat interface lets patients interact with their health data naturally:

- "What medications am I taking?" → formatted list from all providers, with prescriber, dose, frequency, and purpose. Flags if the same medication appears from two different providers (potential duplication).

- "Show me my blood pressure trend for the last 6 months" → inline chart with readings from all sources, annotated with medication changes. AI commentary: "Your BP improved from 148/92 to 128/82 after starting lisinopril."

- "Do any of my medications interact?" → cross-references all active medications against interaction databases. Cites specific interactions with severity and clinical significance.

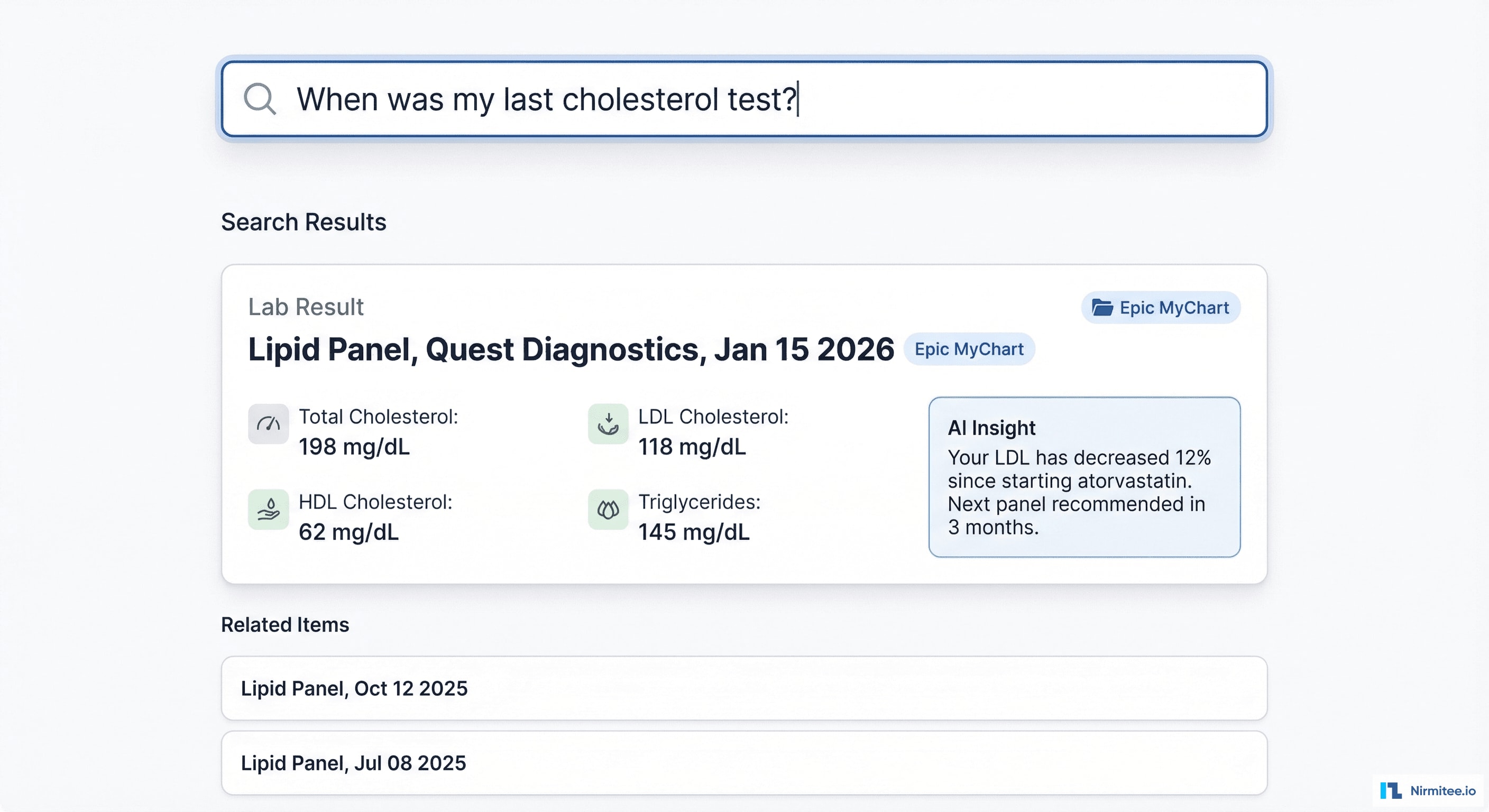

- "When was my last cholesterol test?" → finds the most recent lipid panel, shows results with normal ranges, compares to previous values.

- "Am I overdue for any screenings?" → checks age, gender, conditions, and family history against USPSTF guidelines. Lists overdue and upcoming preventive care.

Every answer cites its source: "Source: Epic MyChart, Lab Result from Quest Diagnostics, January 15, 2026"

Health Data Search

Beyond chat, the platform offers structured search across all connected health data. Search for any medical term, medication, or test name — the system returns relevant records across all providers with AI-generated context and insights.

Architecture: RAG for Medical Records

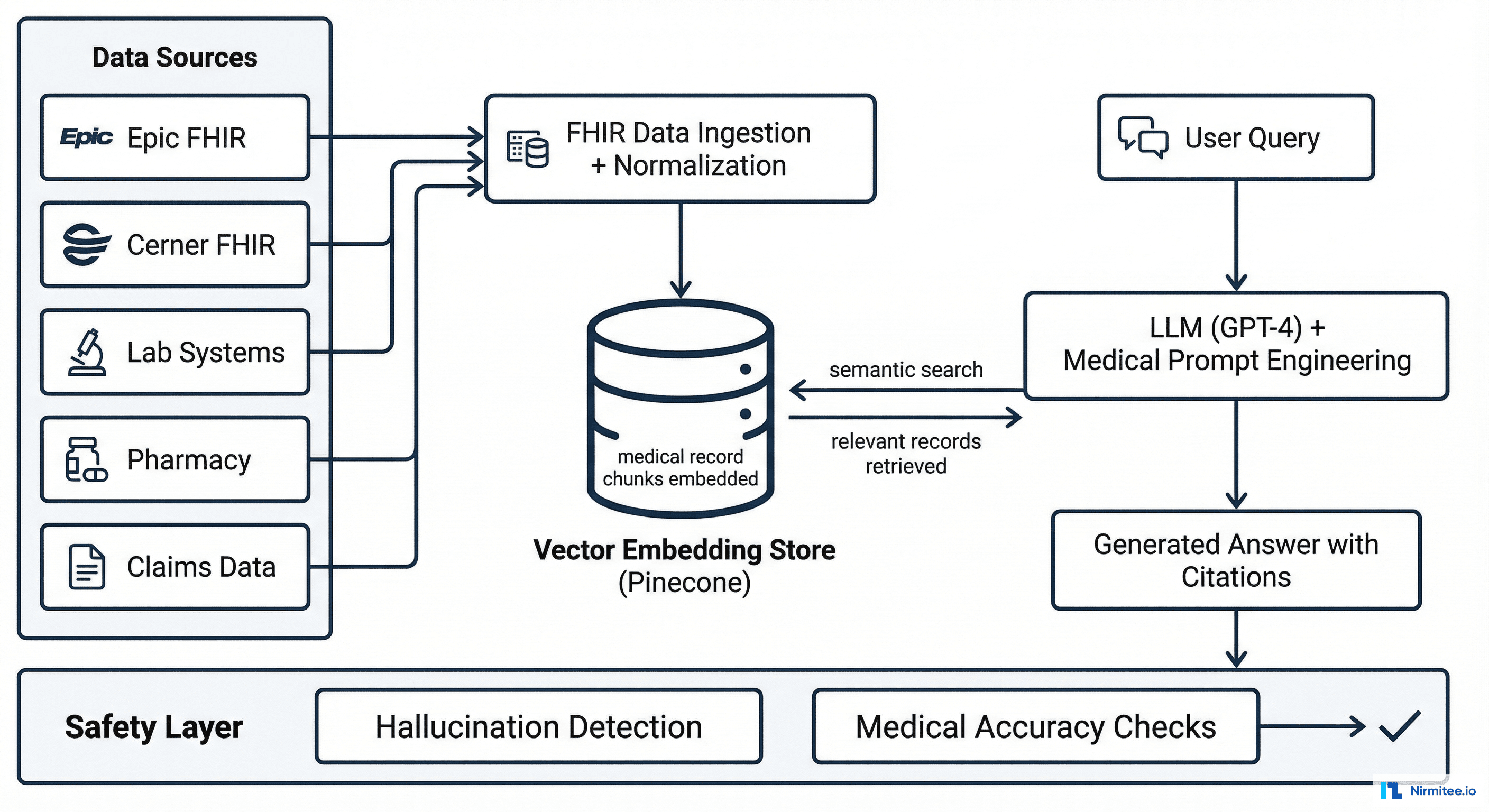

How RAG Works for Health Data

- Data ingestion: the patient connects their EHR accounts via SMART on FHIR. Clinical data (medications, conditions, labs, encounters, notes) is pulled and stored as FHIR resources.

- Chunking and embedding: each clinical record is chunked into semantic units (one medication record = one chunk, one lab panel = one chunk) and converted to vector embeddings using a medical-domain embedding model.

- Vector storage: embeddings are stored in Pinecone with metadata (source EHR, date, resource type, patient ID) for filtered semantic search.

- Query processing: patient asks a question → medical NLP preprocesses it (expanding abbreviations, identifying clinical intent) → semantic search retrieves the most relevant record chunks.

- LLM generation: GPT-4 with custom medical system prompts generates a response using ONLY the retrieved records as context. The prompt explicitly instructs: "If the information is not in the provided context, say you don't have that data. Never hallucinate clinical information."

- Citation and safety: every generated answer includes source citations. A safety layer checks for potential hallucinations by verifying that key clinical claims (medication names, lab values) appear in the source context.
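The ingestion, retrieval, and prompting steps above can be sketched in a few functions. This is a minimal illustration, not the production pipeline: `chunk_resource`, `retrieve`, `build_prompt`, and the sample records are hypothetical names and data, an in-memory list stands in for Pinecone, and `toy_embed` is a word-count placeholder for the medical-domain embedding model. Only the FHIR R4 field names (`medicationCodeableConcept`, `dosageInstruction`, `valueQuantity`, `effectiveDateTime`, `authoredOn`) come from the standard.

```python
import math
import re
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Chunk:
    text: str
    metadata: dict
    vector: list = field(default_factory=list)


def chunk_resource(resource: dict, patient_id: str, source: str) -> Chunk:
    """Turn one FHIR R4 resource into exactly one chunk with filterable metadata."""
    rtype = resource["resourceType"]
    if rtype == "MedicationRequest":
        name = resource["medicationCodeableConcept"]["text"]
        dose = "; ".join(d.get("text", "") for d in resource.get("dosageInstruction", []))
        text = f"Medication: {name}. Dosage: {dose}"
        date = resource.get("authoredOn", "")
    elif rtype == "Observation":
        qty = resource["valueQuantity"]
        text = f"Lab: {resource['code']['text']} = {qty['value']} {qty['unit']}"
        date = resource.get("effectiveDateTime", "")
    else:
        raise ValueError(f"unsupported resource type: {rtype}")
    metadata = {"patient_id": patient_id, "source": source,
                "resource_type": rtype, "date": date}
    return Chunk(text, metadata)


# Assumption: a tiny vocabulary so the example runs without a real model.
VOCAB = ["medication", "lisinopril", "dosage", "lab", "ldl", "cholesterol"]


def toy_embed(text: str) -> list:
    """Stand-in embedder: word counts over VOCAB (not a medical-domain model)."""
    tokens = re.findall(r"[a-z0-9]+", text.lower())
    return [float(tokens.count(word)) for word in VOCAB]


def cosine(a: list, b: list) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def retrieve(question: str, chunks: list, embed: Callable[[str], list],
             patient_id: str, top_k: int = 3) -> list:
    """Filter by patient ID first, then rank the remaining chunks by similarity."""
    query_vector = embed(question)
    own = [c for c in chunks if c.metadata["patient_id"] == patient_id]
    return sorted(own, key=lambda c: cosine(query_vector, c.vector), reverse=True)[:top_k]


def build_prompt(question: str, retrieved: list) -> str:
    """Assemble a context-only prompt in which every record carries its citation."""
    context = "\n".join(
        f"[{i}] {c.text} (Source: {c.metadata['source']}, {c.metadata['date']})"
        for i, c in enumerate(retrieved, 1)
    )
    return ("Answer using ONLY the records below. If the information is not in "
            "the provided context, say you don't have that data.\n\n"
            f"Records:\n{context}\n\nQuestion: {question}")


# Made-up sample records for illustration only.
records = [
    {"resourceType": "MedicationRequest",
     "medicationCodeableConcept": {"text": "Lisinopril 10 mg tablet"},
     "dosageInstruction": [{"text": "Take one tablet daily"}],
     "authoredOn": "2026-01-10"},
    {"resourceType": "Observation",
     "code": {"text": "LDL cholesterol"},
     "valueQuantity": {"value": 128, "unit": "mg/dL"},
     "effectiveDateTime": "2026-01-15"},
]
chunks = [chunk_resource(r, "p1", "Epic MyChart") for r in records]
for chunk in chunks:
    chunk.vector = toy_embed(chunk.text)

top = retrieve("What medication am I taking?", chunks, toy_embed, "p1", top_k=1)
print(build_prompt("What medication am I taking?", top))
```

Filtering on patient ID before ranking matters more than the ranking itself: a retrieval bug that surfaces another patient's record is a privacy incident, whereas a weak ranking only costs answer quality.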

Technology Stack

| Layer | Technology | Purpose |
|---|---|---|
| Patient App | React Native | Chat interface, health dashboard, data connections |
| Web Portal | React + TypeScript | Provider-facing view, admin panel |
| Backend | Node.js + Python | API gateway (Node), RAG pipeline (Python) |
| LLM | GPT-4 (via Azure OpenAI) | Answer generation with medical prompts |
| Vector Store | Pinecone | Medical record embeddings + semantic search |
| Embeddings | Medical-domain model (PubMedBERT-based) | Clinical text → vector embeddings |
| EHR Integration | FHIR R4 (Epic, Cerner, athena + 9 more) | Patient health record access |
| Clinical DB | PostgreSQL | Patient profiles, conversation history, consent |
| Drug Database | FDB (First Databank) + RxNorm | Drug interaction checking, medication info |
| Infrastructure | AWS (HIPAA BAA) + Azure OpenAI | HIPAA-compliant compute + LLM inference |

AI Health Insights

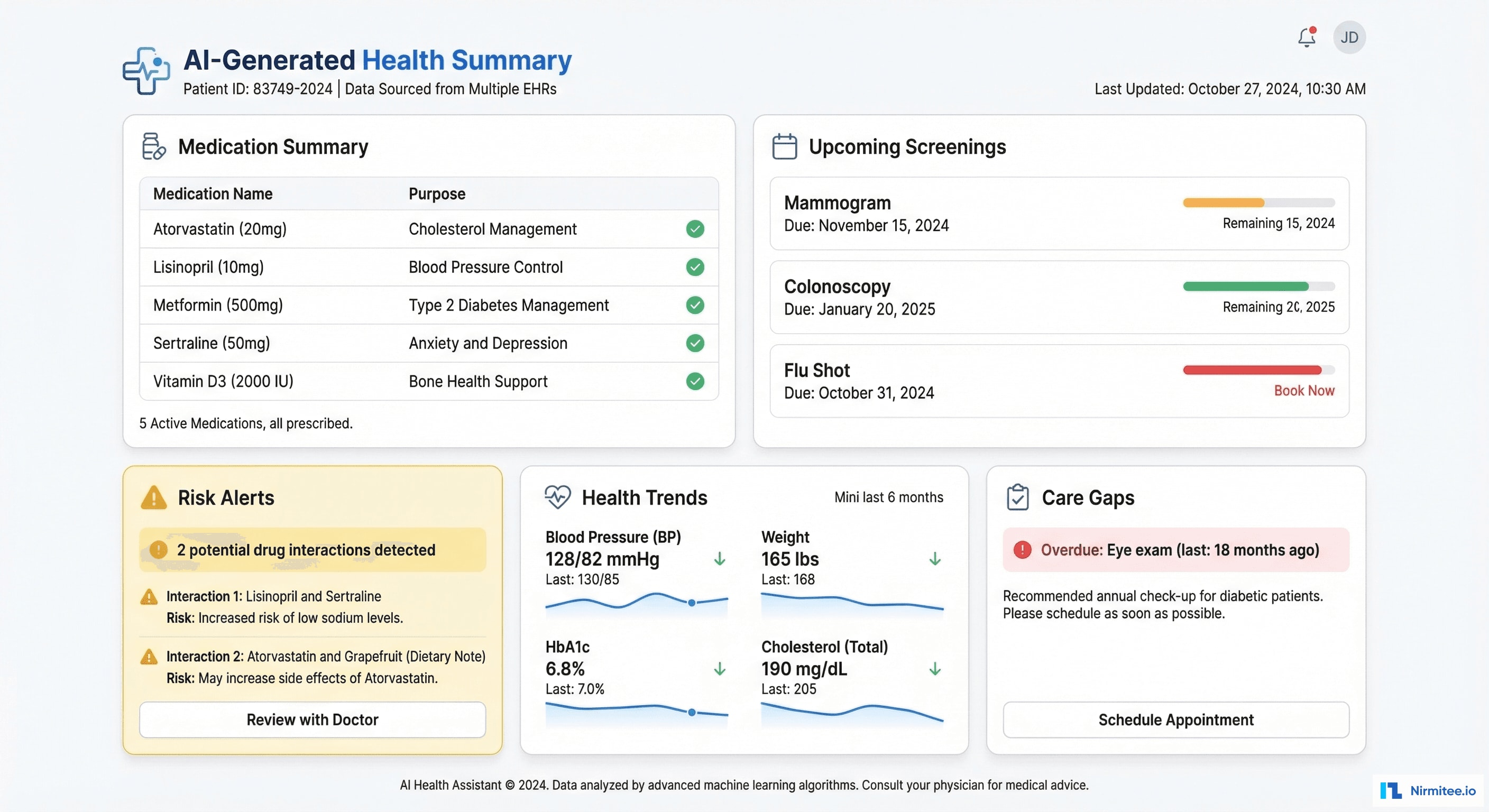

The platform proactively generates health insights without the patient needing to ask:

- Medication summary: unified list from all providers with purposes explained in plain language

- Drug interaction alerts: cross-provider medication analysis flagging interactions that no single provider can see

- Health trends: auto-generated sparklines for key metrics (BP, weight, HbA1c, cholesterol) with AI commentary on direction

- Care gap detection: overdue screenings and preventive care based on age, conditions, and guidelines

- Risk stratification: cardiovascular risk, diabetes risk, kidney risk — calculated from the unified clinical picture
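The cross-provider drug interaction alert described above comes down to pooling active medications from every source and checking each pair. The sketch below assumes medication names are already normalized to ingredient level (for example via RxNorm). `INTERACTIONS` is a hypothetical two-entry table standing in for a licensed database such as FDB; its severity labels are placeholders, not clinical reference data.

```python
from itertools import combinations

# Illustrative stand-in for a real interaction database; severity labels are
# placeholders and must not be used as clinical guidance.
INTERACTIONS = {
    frozenset({"warfarin", "aspirin"}): ("major", "increased bleeding risk"),
    frozenset({"lisinopril", "spironolactone"}): ("moderate", "risk of high potassium"),
}


def find_interactions(medications: list) -> list:
    """Check every pair of active medications, regardless of which provider prescribed them.

    Each medication is a dict with a normalized 'name' and the 'source' it came from.
    """
    alerts = []
    for first, second in combinations(medications, 2):
        pair = frozenset({first["name"].lower(), second["name"].lower()})
        hit = INTERACTIONS.get(pair)
        if hit is None:
            continue
        severity, effect = hit
        alerts.append({
            "pair": tuple(sorted(pair)),
            "severity": severity,
            "effect": effect,
            # True when no single provider's chart contains both drugs.
            "cross_provider": first["source"] != second["source"],
            "sources": (first["source"], second["source"]),
        })
    return alerts


# Made-up medication list pooled from two hypothetical connections.
active = [
    {"name": "Warfarin", "source": "Cardiology (Epic)"},
    {"name": "Aspirin", "source": "Primary care (athena)"},
    {"name": "Metformin", "source": "Primary care (athena)"},
]
for alert in find_interactions(active):
    print(alert)
```

The `cross_provider` flag is what separates this from the check an individual EHR already runs: it marks pairs that span two sources and so would not appear together in any one provider's medication list.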

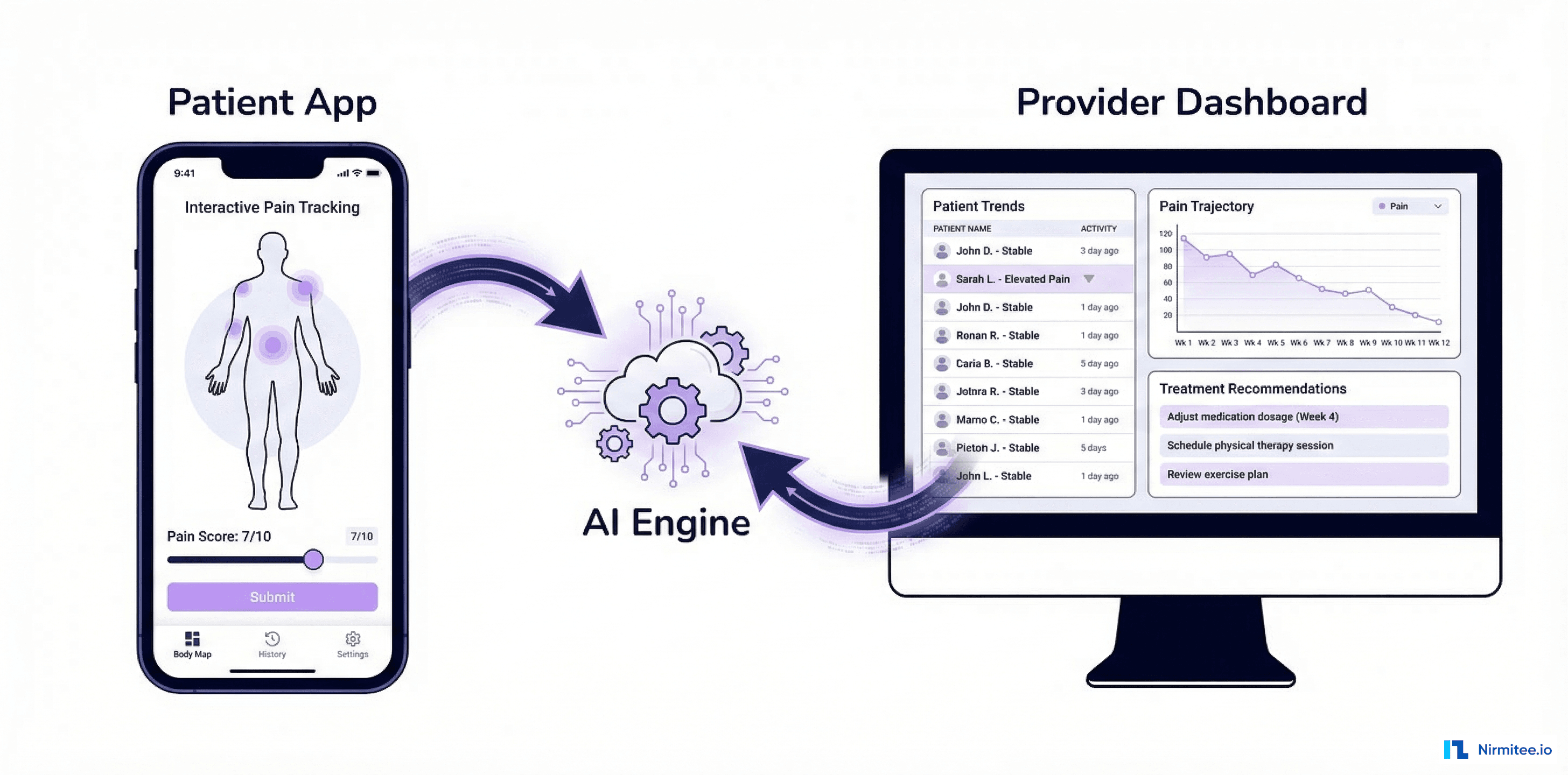

Treatment Plan Tracking

Patients can track their treatment plans with progress indicators for each clinical goal. Medication adherence tracking shows how consistently they're taking prescribed medications.

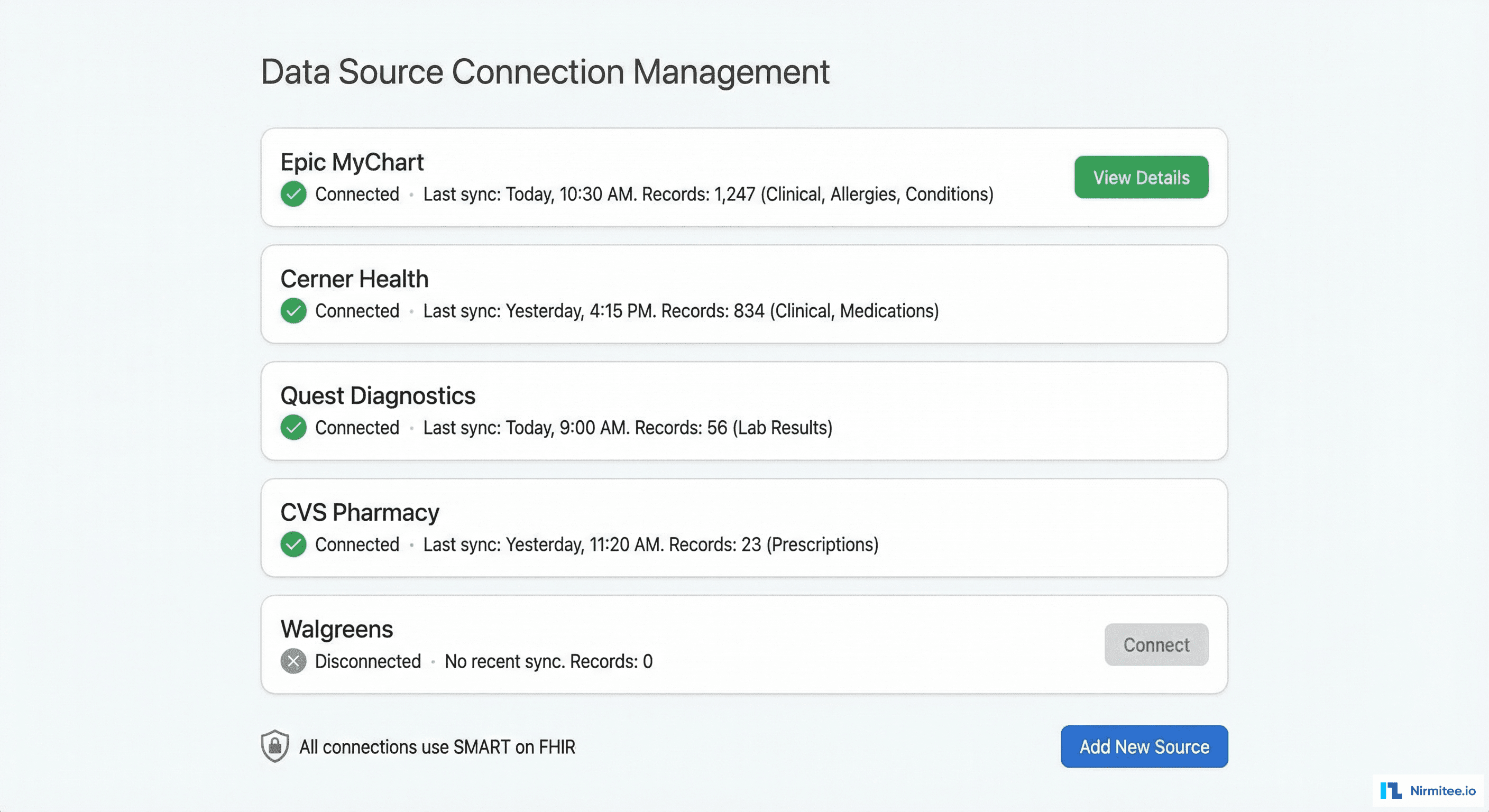

Data Source Management

Patients control exactly which data sources are connected. Each connection uses SMART on FHIR authorization — revocable at any time. The system shows how many records are synced from each source and when the last sync occurred.
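As a rough illustration of what each connection involves, the sketch below builds the authorization request for a SMART on FHIR standalone launch with PKCE. The parameter names (`response_type`, `client_id`, `redirect_uri`, `scope`, `state`, `aud`, `code_challenge`, `code_challenge_method`) follow the SMART App Launch specification. Everything else is an assumption: the endpoint URLs, client ID, and scopes are placeholders, and real scopes depend on what each EHR supports. In practice the authorize endpoint is discovered from the server's `.well-known/smart-configuration` document.

```python
import base64
import hashlib
import secrets
from urllib.parse import parse_qs, urlencode, urlparse


def make_pkce_pair() -> tuple:
    """Return (code_verifier, code_challenge) using the S256 method from RFC 7636."""
    verifier = secrets.token_urlsafe(64)  # 86 URL-safe characters, within the 43-128 limit
    digest = hashlib.sha256(verifier.encode("ascii")).digest()
    challenge = base64.urlsafe_b64encode(digest).rstrip(b"=").decode("ascii")
    return verifier, challenge


def build_authorize_url(authorize_endpoint: str, fhir_base_url: str,
                        client_id: str, redirect_uri: str, scopes: list) -> tuple:
    """Build the URL the patient is sent to in order to grant access to one data source."""
    verifier, challenge = make_pkce_pair()
    state = secrets.token_urlsafe(16)  # echoed back on redirect; guards against CSRF
    params = {
        "response_type": "code",
        "client_id": client_id,
        "redirect_uri": redirect_uri,
        "scope": " ".join(scopes),
        "state": state,
        "aud": fhir_base_url,  # the FHIR server the resulting token will be used against
        "code_challenge": challenge,
        "code_challenge_method": "S256",
    }
    # The caller keeps state and verifier server-side for the token exchange.
    return f"{authorize_endpoint}?{urlencode(params)}", state, verifier


# Placeholder endpoints and client ID for illustration only.
url, state, verifier = build_authorize_url(
    authorize_endpoint="https://ehr.example.org/oauth2/authorize",
    fhir_base_url="https://ehr.example.org/fhir/R4",
    client_id="health-chat-app",
    redirect_uri="https://app.example.org/callback",
    scopes=["launch/patient", "patient/MedicationRequest.read",
            "patient/Observation.read", "offline_access"],
)
print(parse_qs(urlparse(url).query)["scope"])
```

Requesting narrow, per-resource scopes instead of a wildcard keeps each connection limited to the data the platform actually reads, which fits the patient-controlled model described above.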

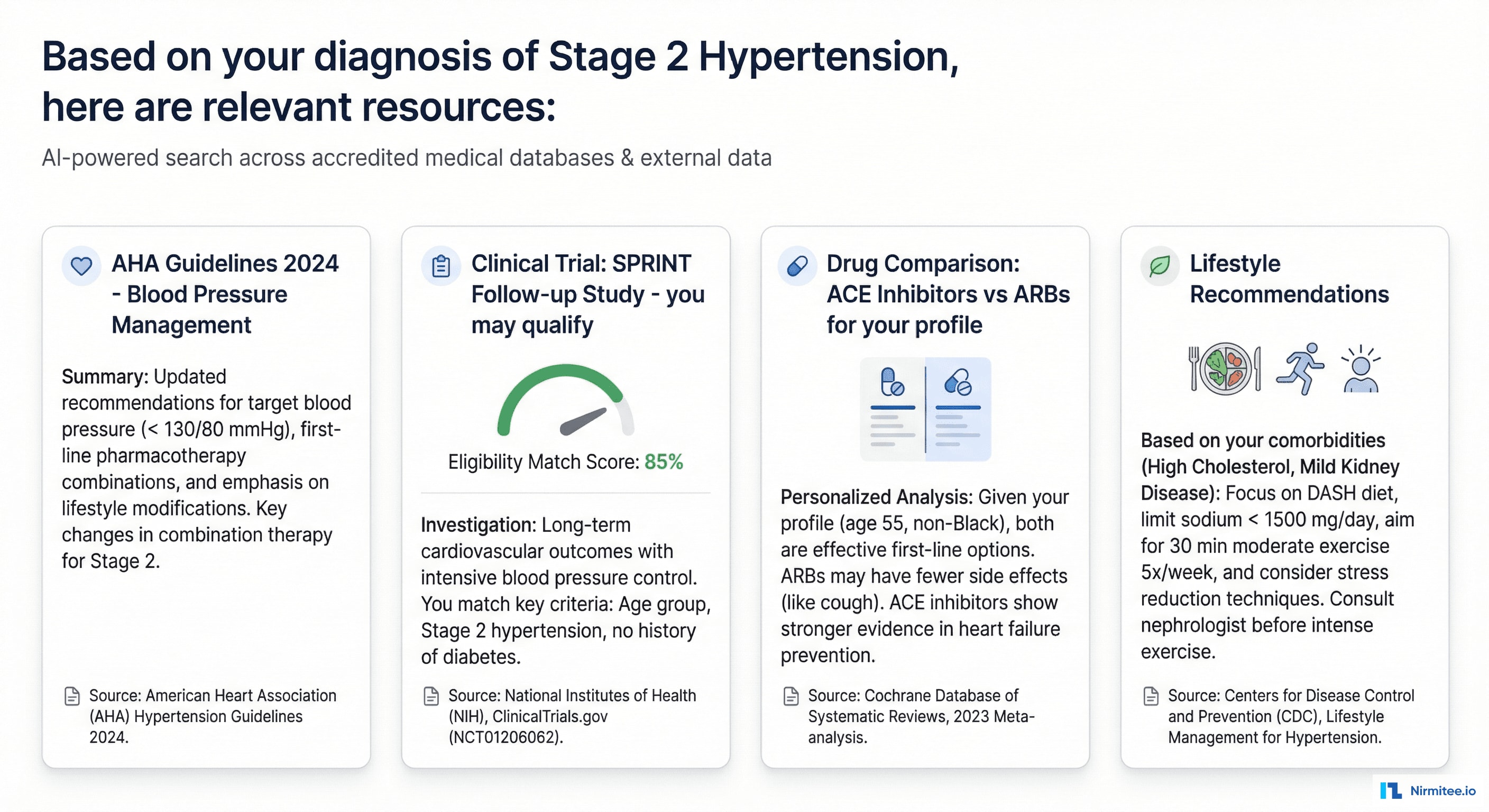

External Medical Intelligence

Beyond the patient's own records, the AI searches external medical databases to provide personalized health intelligence:

- Clinical guidelines: AHA, ADA, USPSTF recommendations matched to the patient's specific conditions

- Clinical trial matching: "Based on your Stage 2 hypertension and diabetes, you may qualify for the SPRINT Follow-up Study" — with eligibility scoring

- Drug information: personalized drug comparisons based on the patient's actual medication list and conditions

- Lifestyle recommendations: AI-generated suggestions tailored to the patient's specific health profile, not generic advice

Results

| Metric | Result |
|---|---|
| Answer accuracy | 94.2% (verified by clinical review panel) |
| Hallucination rate | 0.3% (safety layer catches most before delivery) |
| Drug interactions detected | 23% of patients had at least one cross-provider interaction invisible to any single doctor |
| Care gaps identified | 40% of patients had overdue preventive screenings |
| Weekly active users | 68% (vs. 11% for typical patient portals; 6x engagement) |
| Average questions per user/week | 4.7 |
| Patient satisfaction | 4.6/5.0 |
| EHRs connected | 12 major US systems via FHIR R4 |
| Average response time | 3.2 seconds (question to cited answer) |

Safety and Compliance

- Hallucination prevention: every AI-generated clinical claim is verified against the source context. If the LLM generates a medication name that is not in the patient's records, the safety layer catches it and reformulates the answer.

- Medical disclaimer: every response includes "This is informational only. Consult your healthcare provider for medical decisions."

- HIPAA: all data encrypted, processed in HIPAA-compliant infrastructure (AWS + Azure OpenAI with BAA), no PHI in LLM training data

- Audit trail: every question and answer logged with source records used, for clinical and legal traceability

- SOC 2 Type II: certified
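The hallucination check and disclaimer described in this list can be approximated with a simple grounding pass. This is a minimal sketch and not the platform's actual safety layer: `find_ungrounded_claims` and `finalize` are hypothetical names, `known_drug_names` stands in for a drug vocabulary such as RxNorm, and plain string and number matching is a deliberate simplification of clinical claim extraction.

```python
import re

DISCLAIMER = ("This is informational only. Consult your healthcare provider "
              "for medical decisions.")


def extract_numbers(text: str) -> set:
    """Collect numeric tokens (lab values, doses) as strings."""
    return set(re.findall(r"\d+(?:\.\d+)?", text))


def mentions(term: str, text: str) -> bool:
    """Whole-word, case-insensitive match."""
    return re.search(rf"\b{re.escape(term)}\b", text, flags=re.IGNORECASE) is not None


def find_ungrounded_claims(answer: str, context: str, known_drug_names: list) -> list:
    """List medication names and numeric values in the answer that are absent from the context."""
    issues = []
    for drug in known_drug_names:
        if mentions(drug, answer) and not mentions(drug, context):
            issues.append(f"medication not in records: {drug}")
    for number in sorted(extract_numbers(answer) - extract_numbers(context)):
        issues.append(f"value not in records: {number}")
    return issues


def finalize(answer: str, context: str, known_drug_names: list) -> tuple:
    """Return (deliverable_text, issues); the text is None when the answer must be regenerated."""
    issues = find_ungrounded_claims(answer, context, known_drug_names)
    if issues:
        return None, issues
    return f"{answer}\n\n{DISCLAIMER}", []


# Made-up context and answers for illustration only.
context = "Medication: Lisinopril 10 mg daily. Lab: LDL cholesterol = 128 mg/dL"
vocabulary = ["lisinopril", "metformin"]

print(finalize("You take lisinopril 10 mg daily.", context, vocabulary))
print(finalize("You take metformin 500 mg daily.", context, vocabulary))
```

A check like this is intentionally conservative: it rejects answers that contain any unsupported drug name or number, accepting some false alarms in exchange for not delivering an invented medication or lab value.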

Timeline

| Phase | Duration | Deliverables |
|---|---|---|
| Phase 1 | 6 weeks | FHIR integration (4 EHRs), data ingestion pipeline, vector embedding, basic chat MVP |
| Phase 2 | 6 weeks | RAG pipeline optimization, medical prompt engineering, drug interaction engine, +8 EHRs |
| Phase 3 | 4 weeks | Proactive insights, external data search, treatment tracking, safety layer hardening |
| Phase 4 | 4 weeks | Clinical validation (100 patients, reviewed by physicians), SOC 2 audit, production launch |

Total: 20 weeks (about 5 months) with 3 engineers, 1 ML engineer, and 1 clinical advisor.

Lessons Learned

- RAG beats fine-tuning for medical records. We tested fine-tuning GPT-4 on clinical data vs. RAG with retrieval. RAG won decisively — it's more accurate (grounded in actual records), more auditable (you can see which records informed the answer), and doesn't require retraining when new data arrives.

- The safety layer is the most important component. A 0.3% hallucination rate sounds low until you realize that a single wrong medication or lab value could cause harm. The safety layer that verifies clinical claims against source data is non-negotiable.

- Drug interaction detection is the killer feature. 23% of patients had cross-provider drug interactions. This single feature drives both patient engagement and physician endorsement of the platform.

- Plain language matters more than completeness. Patients don't want their full medical record. They want answers they can understand. "Your kidney function is stable and normal" is more valuable than "eGFR 92 mL/min/1.73m²". The AI's job is translation, not transcription.

Share

Related Case Studies

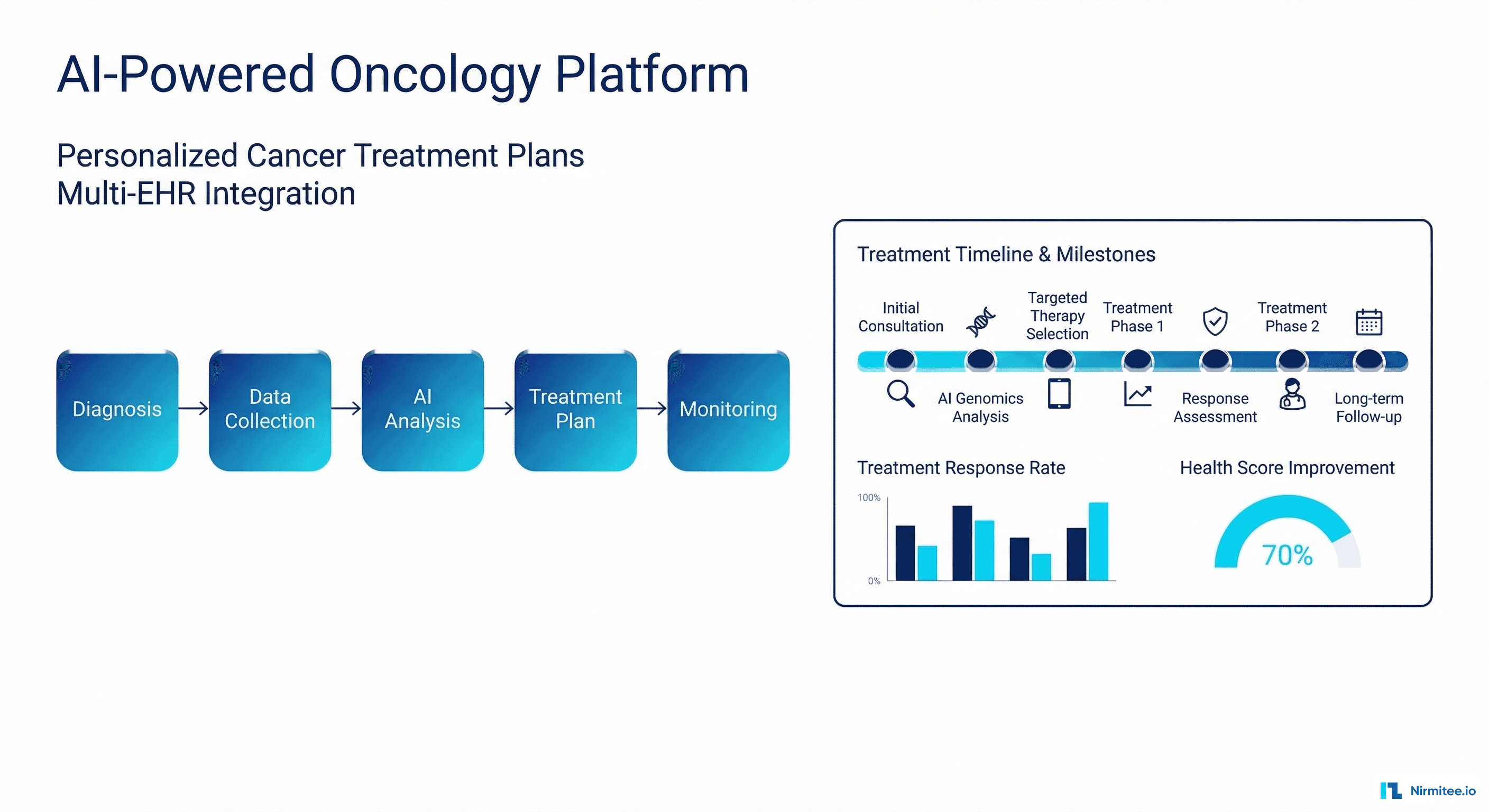

AI-Powered Personalized Oncology Treatment Platform: A Technical Case Study

Building a Patient-First Health Record Platform: Connecting 12 US EMRs Into One View