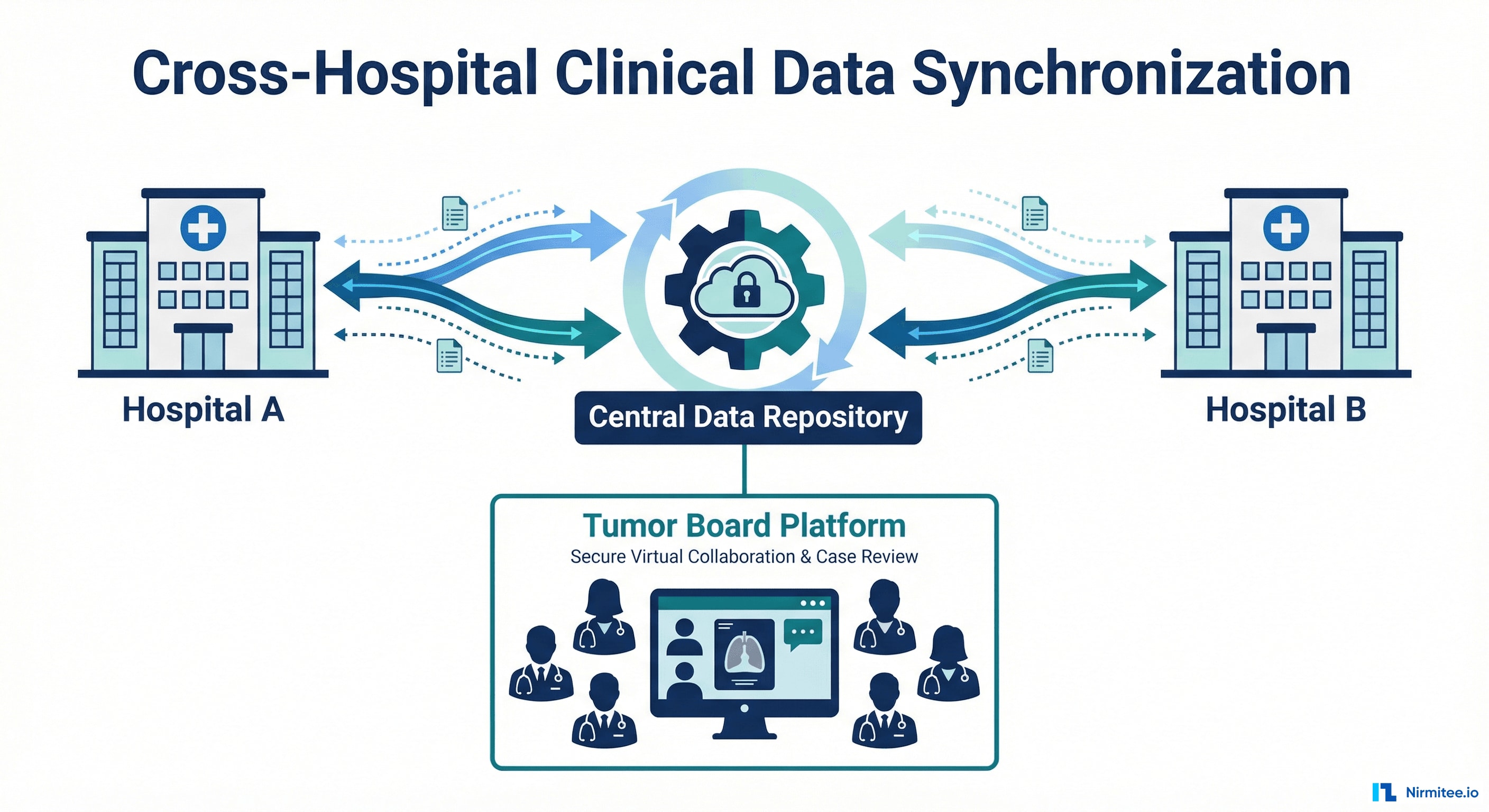

Case Study: Cross-Hospital Clinical Data Synchronization for a Regional Telemedical Network

Executive Summary

A regional hospital network in Northern Germany needed two independent hospitals to collaborate on cancer treatment decisions — but their clinical data was trapped in isolated systems that couldn't talk to each other.

We built a production-grade data synchronization platform that connects three Clinical Data Repositories, two Hospital Information Systems, and a Tumor Board application into a single network, so oncologists from both hospitals can make joint treatment decisions on complete patient data that is synchronized within 15 minutes.

| Aspect | Summary |
|---|---|
| Problem | Clinicians manually compiled patient records from two separate hospital systems for tumor board meetings, which led to missing data, delayed decisions, and duplicated effort |
| Solution | Real-time bidirectional data sync using FHIR R4 + openEHR standards, with CDC pipeline and zero-data-loss architecture |
| Timeline | 18 months (April 2024 to November 2025) |
| Team | 6 engineers (2 senior backend, 1 DevOps/SRE, 1 solution architect, 1 QA, 1 project lead) |
| Standards | HL7 FHIR R4, openEHR, HL7v2 |
| Stack | Spring Boot, Temporal, PostgreSQL, Debezium, Kafka, Kubernetes, Keycloak, Prometheus, Grafana |

Results at a Glance

- < 15 min sync latency (down from hours of manual data compilation)

- Zero data loss in production — fault-tolerant at every pipeline layer

- 13+ FHIR resource types synchronized bidirectionally across 3 CDR instances

- 20-field structured tumor board decisions flow back automatically — no more PDFs or fax

- 99.9%+ sync success rate with auto-retry and proactive alerting

- Scalable to N hospitals — adding a new facility requires a config file, not code changes

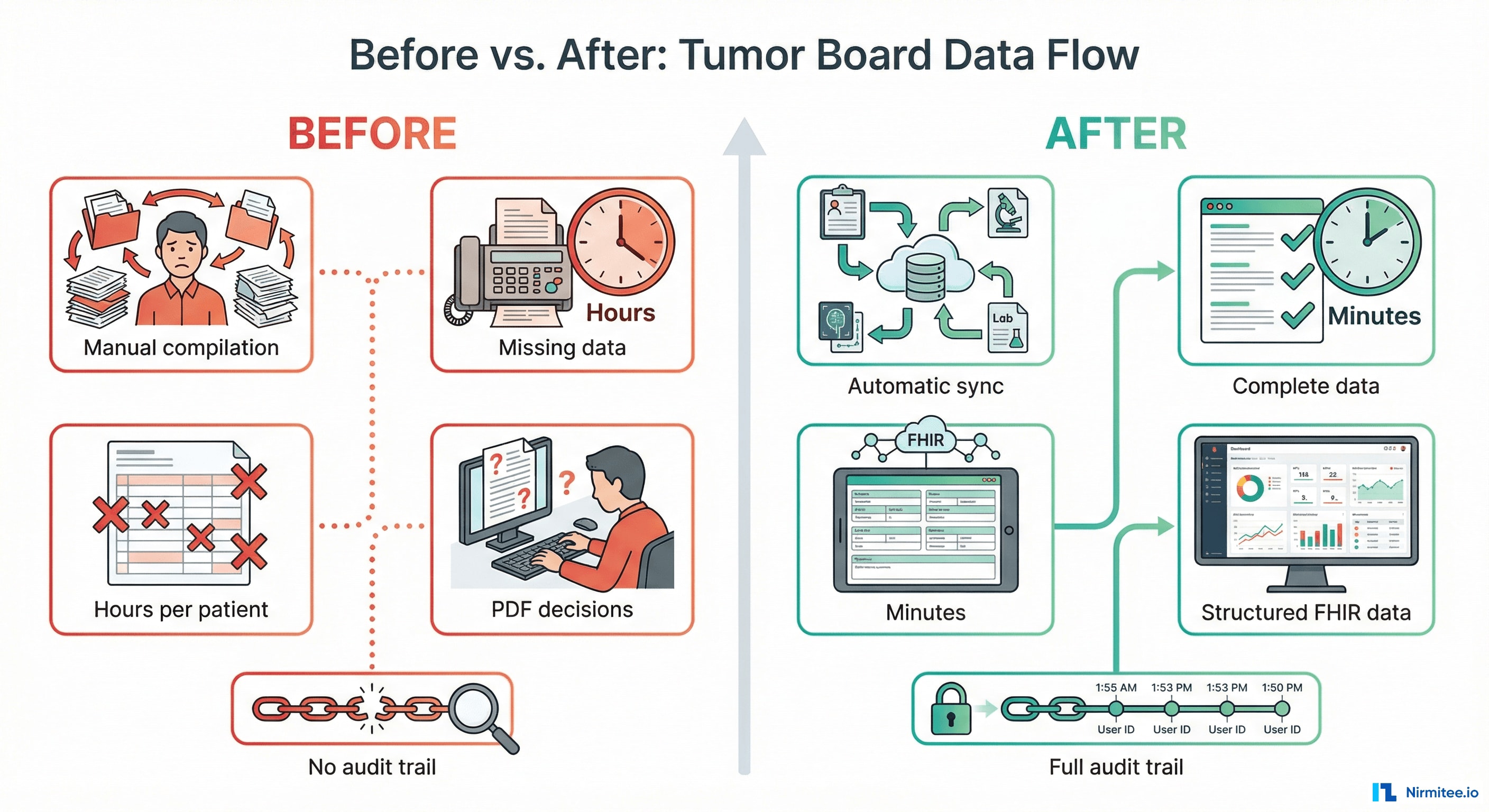

The Before: What Clinicians Were Dealing With

Before this system existed, here's what happened when a neuro-oncology patient needed a multidisciplinary tumor board review across two hospitals:

The Manual Process

- A physician at Hospital A identifies a patient who needs a tumor board discussion

- The physician manually searches their local HIS for the patient's imaging reports, pathology results, lab values, and prior treatments

- If the same patient has records at Hospital B, someone manually exports or faxes that data — often incomplete, often delayed

- A coordinator manually compiles all data into a presentation or printed packet for the board

- During the meeting, clinicians often discover missing information — a recent CT scan at the other hospital that nobody knew about

- After the board makes a recommendation, someone manually types the decision into each hospital's system — or distributes a PDF that sits in an email inbox

The Pain Points

| Problem | Clinical Impact |
|---|---|
| Data scattered across two isolated systems | Treatment decisions based on incomplete data |
| Manual compilation takes hours per patient | Fewer patients discussed per board; delays in starting treatment |
| No structured format for decisions | Recommendations lost in free-text notes or PDFs; not queryable |
| No automatic back-flow of decisions | Referring physicians don't see recommendations; follow-up delayed |
| No audit trail | Compliance risk; no way to verify what data the board reviewed |
| Adding a third hospital = more manual work | The process doesn't scale |

The After: What Clinicians Experience Now

- A physician opens their HIS and checks a single box to flag a case for the tumor board

- Within minutes, the Transfer Service automatically syncs the complete clinical record to the Central CDR

- If Hospital B also has records (matched via national insurance number), their data flows in too — merged automatically

- The Tumor Board application shows the complete, unified patient record from both hospitals

- The board decision is recorded as a structured 20-field clinical form — not free text

- Within minutes of signing, the decision flows back to both hospitals' local systems as a queryable record

| Before | After |
|---|---|
| Hours of manual data compilation | Automatic sync within 15 minutes |
| Incomplete data at tumor boards | Complete patient record from all hospitals |
| Free-text decisions in PDFs | Structured 20-field FHIR resource |
| Manual re-entry into each HIS | Automatic back-transfer to all hospitals |
| No audit trail | Full chain of custody per transfer |
| Adding a hospital = months | Adding a hospital = one config file |

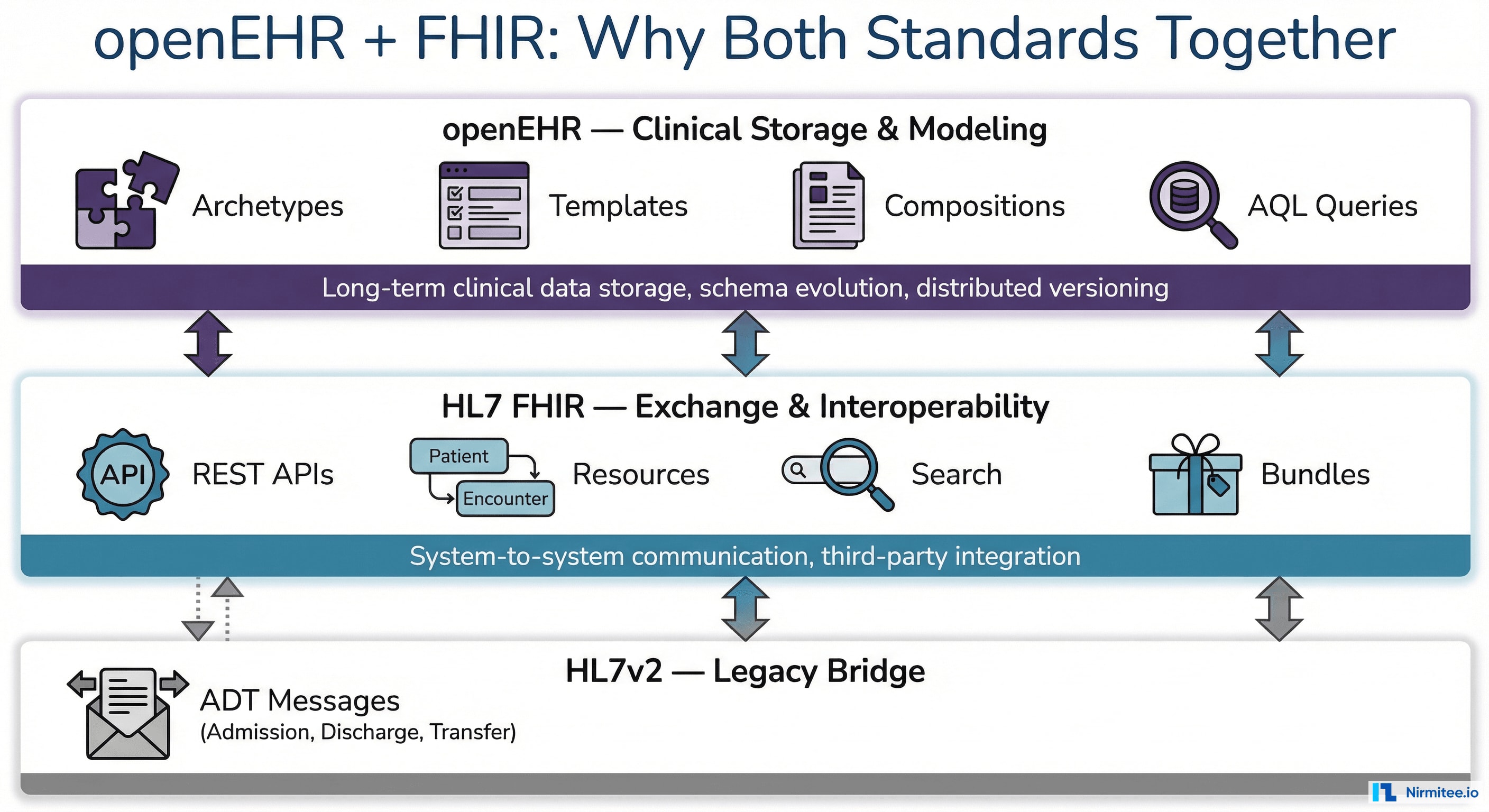

Understanding the Standards: Why openEHR + FHIR Together

This project required two seemingly overlapping healthcare data standards — and each one alone would have been insufficient.

What is openEHR?

openEHR is an open standard for the long-term storage, structure, and querying of electronic health records. Unlike traditional database schemas designed by developers, openEHR separates the clinical knowledge model from the technical storage:

- Archetypes — Clinician-defined, reusable data models for clinical concepts (blood pressure, diagnosis, medication order). Maintained by an international clinical community.

- Templates — Hospital-specific compositions of archetypes defining what a clinical form captures (e.g., a tumor board registration combining diagnosis, staging, and imaging archetypes).

- Compositions — Actual patient data instances. Each is a complete clinical document.

- AQL (Archetype Query Language) — A SQL-like language for querying clinical data across the entire EHR, regardless of template evolution.

| openEHR Capability | Why It Mattered |
|---|---|
| Schema evolution without migration | Tumor board templates evolved during the project with no database migrations needed |
| Vendor-neutral storage | Both hospitals used different CDR implementations; openEHR made data structurally identical |
| Clinical governance | Data structures follow internationally validated models for TNM staging, Karnofsky, ECOG |
| Distributed versioning | Built-in IMPORTED_VERSION semantics track data origin across CDRs, much like Git for clinical records |
| Cross-template queries (AQL) | Query "all compositions for this patient" without knowing exact template structure |

What is HL7 FHIR?

HL7 FHIR (Fast Healthcare Interoperability Resources) is the modern standard for exchanging healthcare data between systems via REST APIs:

- Resources — 150+ standardized data objects (Patient, Encounter, Observation, DiagnosticReport, QuestionnaireResponse)

- RESTful API — Standard HTTP methods (GET, POST, PUT, DELETE) with JSON/XML payloads

- Search Parameters — Standardized queries for finding resources across systems

- Bundles & Transactions — Atomic multi-resource operations for data consistency

| FHIR Capability | Why It Mattered |
|---|---|
| API-first interoperability | Third-party Tumor Board app consumed data exclusively via FHIR APIs |
| Multi-system identity | Track the same patient across Hospital A, Hospital B, and Central RTN using FHIR identifiers |
| Flag resource for triggers | Clinician checks a box → FHIR Flag created → Transfer Service activates |
| QuestionnaireResponse | The 20-field tumor board decision maps directly onto this structured FHIR resource |
| HL7v2 bridge | Legacy HIS messages normalized into structured FHIR resources |

Why Both Together?

openEHR is the clinical brain — rich, versioned data with clinical governance. FHIR is the communication backbone — clean REST APIs for system-to-system exchange. HL7v2 bridges the legacy world. Our Transfer Service speaks all three.

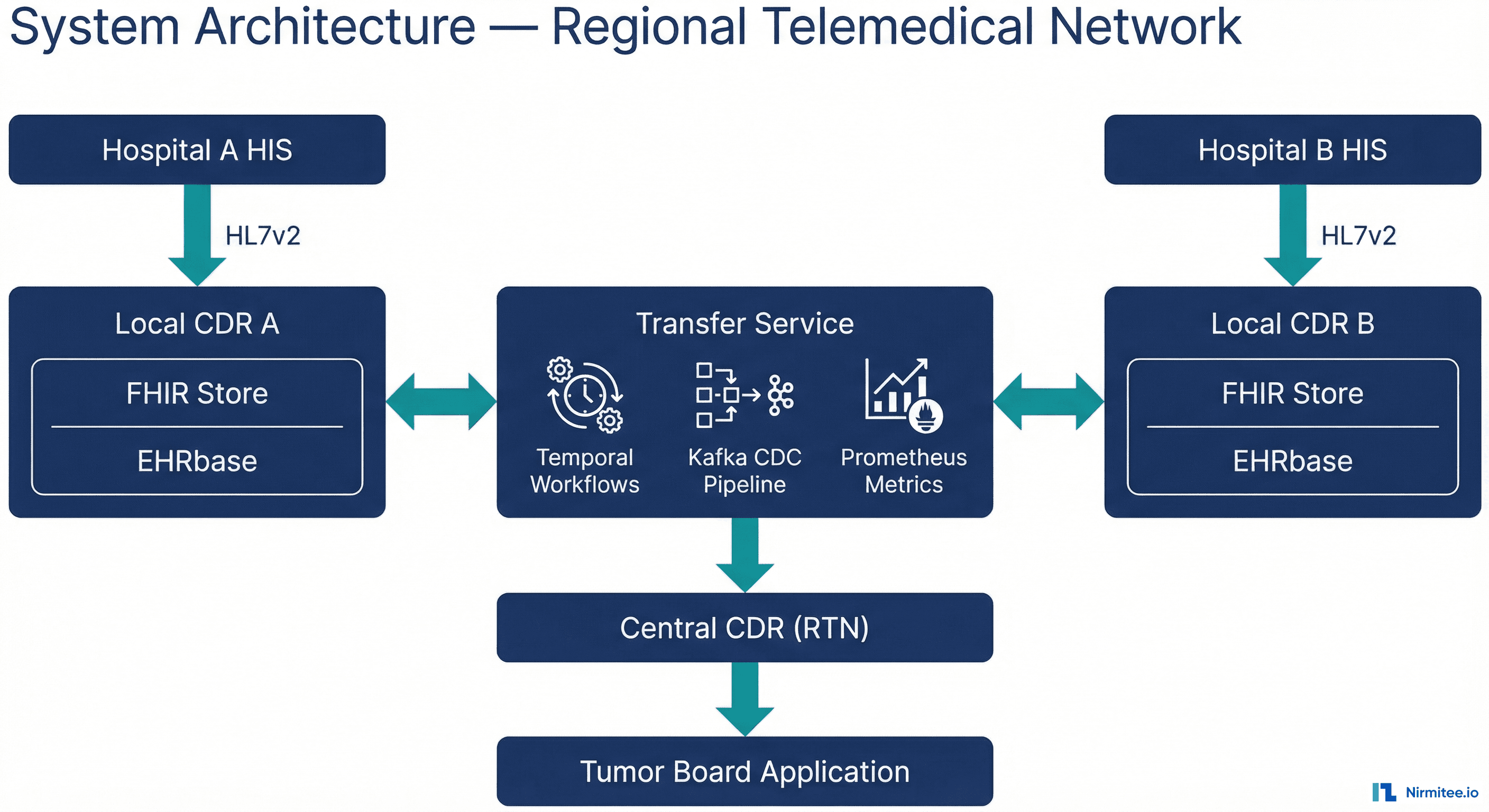

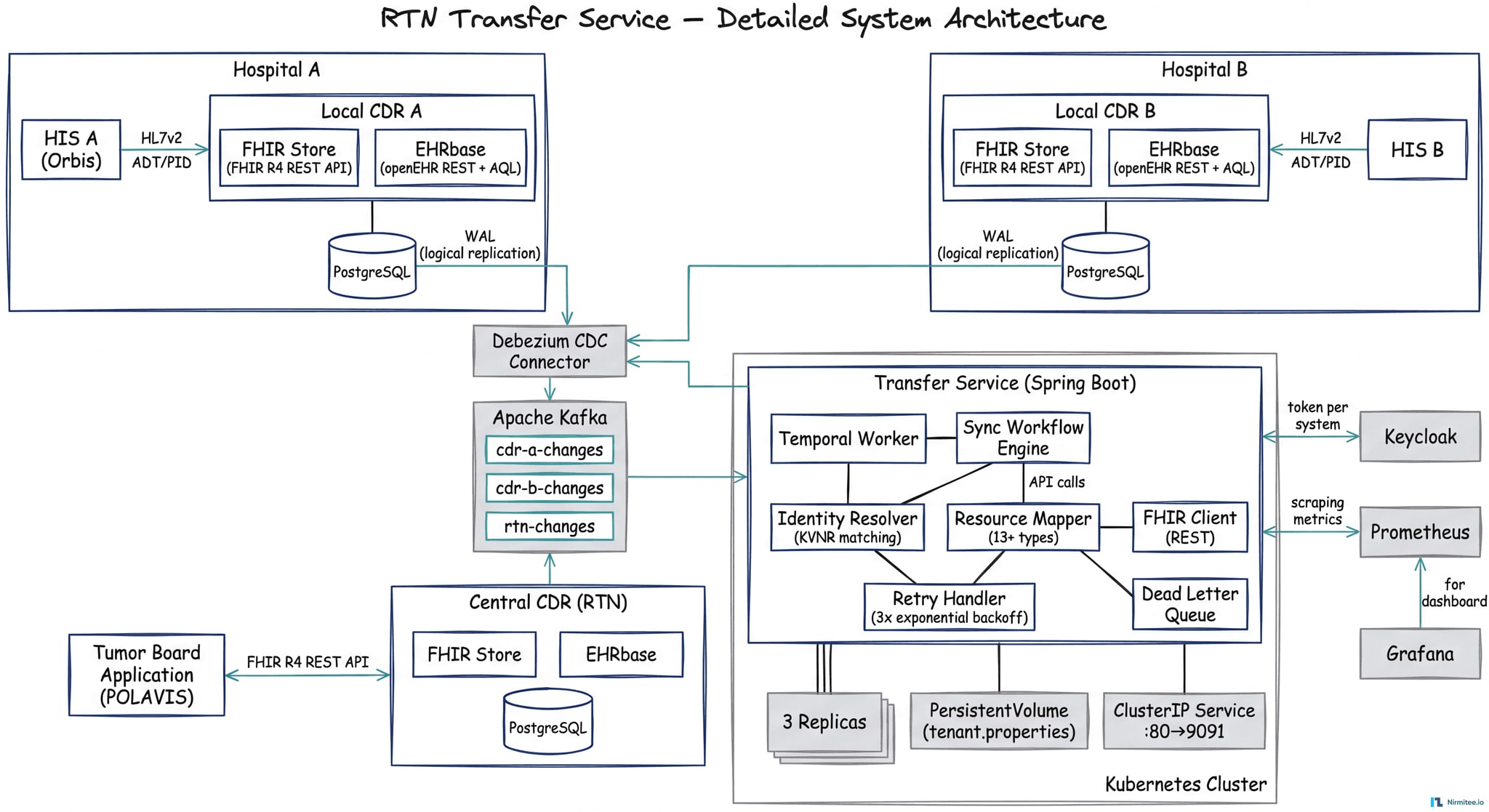

Technical Architecture

The Transfer Service is a Spring Boot microservice that orchestrates FHIR-based data synchronization between distributed CDR instances. It connects two local CDRs (Hospital A and Hospital B) through a central CDR for the Regional Telemedical Network (RTN), with a third-party Tumor Board application consuming data via the central FHIR API.

Detailed Component View

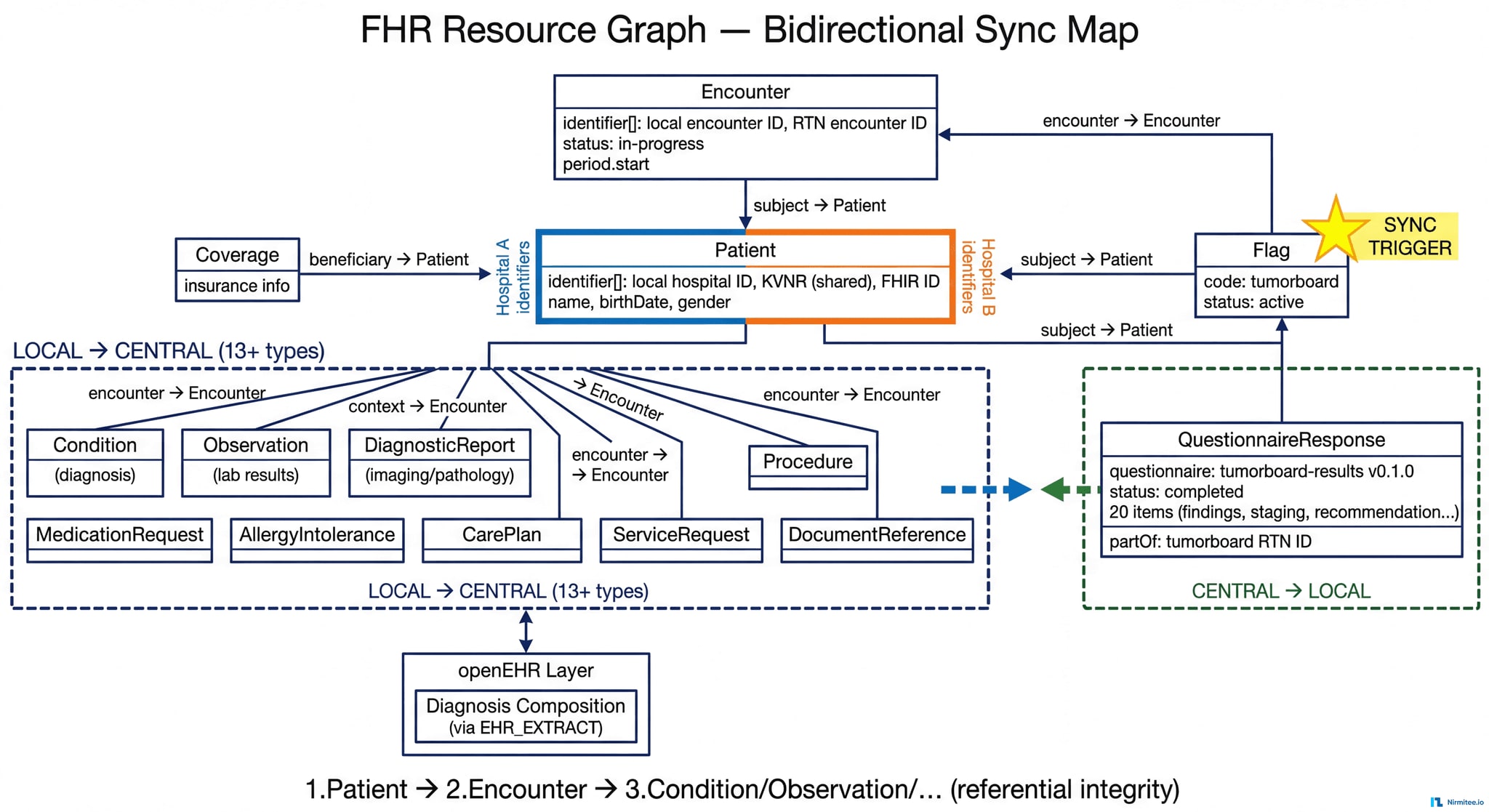

FHIR Resource Dependency Graph

The Transfer Service synchronizes 13+ FHIR resource types with strict referential integrity. The Flag resource acts as the sync trigger, and resources are created in dependency order to prevent orphaned references.
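One way to derive such a creation order is a topological sort over a "references" map. The sketch below is illustrative only: the `DependencyOrder` class and the contents of `DEPENDS_ON` are assumptions made for this example, not the Transfer Service's actual dependency graph.

```java
import java.util.ArrayList;
import java.util.LinkedHashMap;
import java.util.LinkedHashSet;
import java.util.List;
import java.util.Map;
import java.util.Set;

/** Orders resource types so that each type comes after the types it references. */
final class DependencyOrder {

    // Hypothetical map: resource type -> resource types it references.
    static final Map<String, List<String>> DEPENDS_ON = new LinkedHashMap<>();
    static {
        DEPENDS_ON.put("Patient", List.of());
        DEPENDS_ON.put("Encounter", List.of("Patient"));
        DEPENDS_ON.put("Flag", List.of("Patient", "Encounter"));
        DEPENDS_ON.put("Condition", List.of("Patient", "Encounter"));
        DEPENDS_ON.put("Observation", List.of("Patient", "Encounter"));
        DEPENDS_ON.put("DiagnosticReport", List.of("Patient", "Encounter", "Observation"));
    }

    /** Depth-first topological sort; throws if the map contains a cycle. */
    static List<String> creationOrder(Map<String, List<String>> dependsOn) {
        Set<String> done = new LinkedHashSet<>();
        Set<String> inProgress = new LinkedHashSet<>();
        for (String type : dependsOn.keySet()) {
            visit(type, dependsOn, done, inProgress);
        }
        return new ArrayList<>(done);
    }

    private static void visit(String type, Map<String, List<String>> dependsOn,
                              Set<String> done, Set<String> inProgress) {
        if (done.contains(type)) {
            return;
        }
        if (!inProgress.add(type)) {
            throw new IllegalStateException("Cyclic reference involving " + type);
        }
        // Emit everything this type references before the type itself.
        for (String dependency : dependsOn.getOrDefault(type, List.of())) {
            visit(dependency, dependsOn, done, inProgress);
        }
        inProgress.remove(type);
        done.add(type);
    }
}
```

Calling `DependencyOrder.creationOrder(DependencyOrder.DEPENDS_ON)` yields a list in which `Patient` precedes `Encounter`, and both precede the clinical resources that reference them.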

Marker-Based Trigger System

When a clinician flags a patient case in the HIS, an HL7v2 message creates a FHIR Flag resource in the local CDR with code tumorboard and status active. The Transfer Service monitors for these flags and triggers the full encounter data transfer to the central RTN.
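A minimal sketch of the trigger check, assuming a simplified in-memory `Flag` record rather than a real FHIR client model. The class, record, and method names are hypothetical; only the `tumorboard` code and the `active` status come from the description above.

```java
import java.util.List;
import java.util.stream.Collectors;

final class FlagTrigger {

    /** Simplified stand-in for a FHIR Flag; a real Flag carries a CodeableConcept and references. */
    record Flag(String id, String code, String status, String patientId, String encounterId) {}

    static final String TRIGGER_CODE = "tumorboard"; // code named in the text
    static final String ACTIVE = "active";           // status named in the text

    /** A flag triggers a transfer only if it has the tumor board code and is active. */
    static boolean triggersTransfer(Flag flag) {
        return TRIGGER_CODE.equals(flag.code()) && ACTIVE.equals(flag.status());
    }

    /** Returns the distinct encounter ids whose data should be transferred. */
    static List<String> encountersToTransfer(List<Flag> flags) {
        return flags.stream()
                .filter(FlagTrigger::triggersTransfer)
                .map(Flag::encounterId)
                .distinct()
                .collect(Collectors.toList());
    }
}
```

Inactive flags and flags with other codes are ignored, so unrelated alerts in the local CDR never start a transfer.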

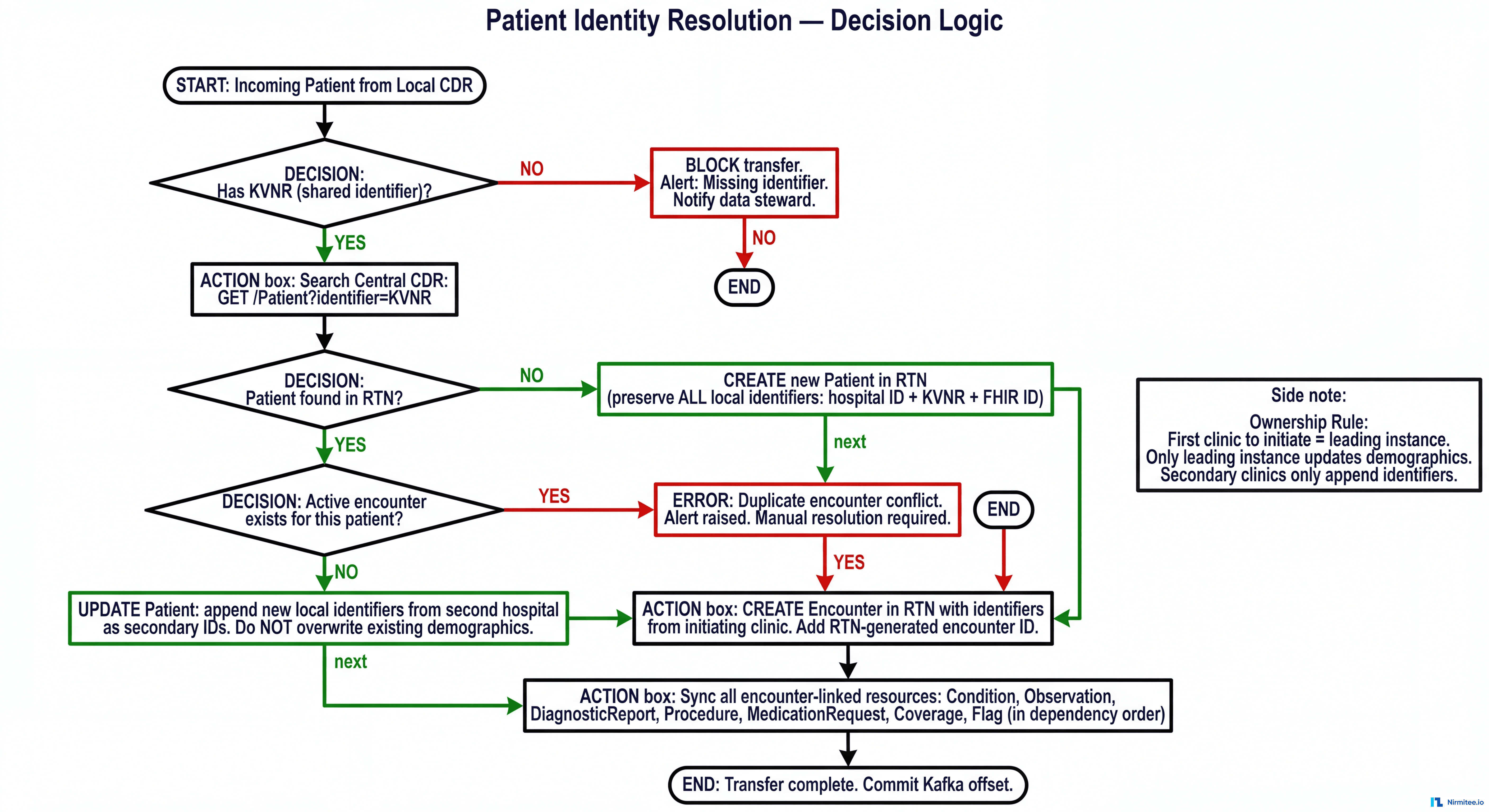

Patient Identity Resolution

Each patient carries multiple identifiers. The Transfer Service uses a configurable shared identifier for cross-system matching; in this deployment it is the national insurance number. Before any transfer, the service validates that the shared identifier exists. If it is missing, the transfer is blocked and an alert is raised; the record is never silently skipped.

Identity Resolution Decision Logic
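The validation and matching steps described above can be sketched as follows. This is an assumption-laden illustration: the record types, the exception class, and the `urn:example:...` identifier systems used in the usage note are hypothetical, not the service's real model.

```java
import java.util.List;
import java.util.Optional;

final class SharedIdentifierCheck {

    record Identifier(String system, String value) {}
    record Patient(String id, List<Identifier> identifiers) {}

    /** Thrown instead of skipping, so the caller can block the transfer and raise an alert. */
    static final class MissingSharedIdentifierException extends RuntimeException {
        MissingSharedIdentifierException(String patientId, String system) {
            super("Patient " + patientId + " has no identifier in system " + system);
        }
    }

    /** Finds the first non-blank identifier value for the configured shared system. */
    static Optional<String> sharedIdentifier(Patient patient, String sharedSystem) {
        return patient.identifiers().stream()
                .filter(id -> sharedSystem.equals(id.system()))
                .map(Identifier::value)
                .filter(value -> value != null && !value.isBlank())
                .findFirst();
    }

    /** Returns the shared identifier or fails loudly when it is missing. */
    static String requireSharedIdentifier(Patient patient, String sharedSystem) {
        return sharedIdentifier(patient, sharedSystem)
                .orElseThrow(() -> new MissingSharedIdentifierException(patient.id(), sharedSystem));
    }

    /** Two local records describe the same person if their shared identifiers are equal. */
    static boolean samePatient(Patient a, Patient b, String sharedSystem) {
        return requireSharedIdentifier(a, sharedSystem)
                .equals(requireSharedIdentifier(b, sharedSystem));
    }
}
```

For example, with a shared system of `urn:example:insurance-number`, a patient that only carries a hospital-local record number causes `requireSharedIdentifier` to throw, which the caller would translate into a blocked transfer and a "Missing Identifiers" alert.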

Encounter Merging

When Hospital A initiates a tumor case, the Transfer Service creates the patient and encounter centrally. When Hospital B flags the same patient (matched via insurance number), the service adds Hospital B's identifiers as secondary identifiers — without overwriting Hospital A's data. The initiating hospital retains ownership of core demographics.
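The merge rule can be illustrated with a small sketch. The types below are hypothetical simplifications; the only FHIR detail relied on is that `Identifier.use` allows the value `secondary`. Demographics are reduced to two fields for brevity.

```java
import java.util.ArrayList;
import java.util.List;

final class PatientMerge {

    record Identifier(String system, String value, String use) {}

    /** Central record; demographics belong to the hospital that initiated the case. */
    record CentralPatient(String familyName, String birthDate, List<Identifier> identifiers) {}

    /**
     * Adds identifiers from a second hospital with use "secondary".
     * Existing identifiers and demographics are left exactly as they were.
     */
    static CentralPatient addSecondaryIdentifiers(CentralPatient central, List<Identifier> incoming) {
        List<Identifier> merged = new ArrayList<>(central.identifiers());
        for (Identifier candidate : incoming) {
            boolean alreadyKnown = merged.stream().anyMatch(existing ->
                    existing.system().equals(candidate.system())
                            && existing.value().equals(candidate.value()));
            if (!alreadyKnown) {
                merged.add(new Identifier(candidate.system(), candidate.value(), "secondary"));
            }
        }
        return new CentralPatient(central.familyName(), central.birthDate(), List.copyOf(merged));
    }
}
```

The method returns a new record instead of mutating the existing one, which makes it straightforward to show that nothing from the initiating hospital was overwritten.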

Bidirectional FHIR Synchronization

Local → Central (13+ resource types): Patient, Encounter, Coverage, Flag, Condition, Observation, DiagnosticReport, Procedure, MedicationRequest, AllergyIntolerance, CarePlan, ServiceRequest, DocumentReference

Central → Local: QuestionnaireResponse (structured tumor board decisions)

The list of synced resource types is configurable — new types can be added without code changes. All source-system identifiers are preserved during transfer.

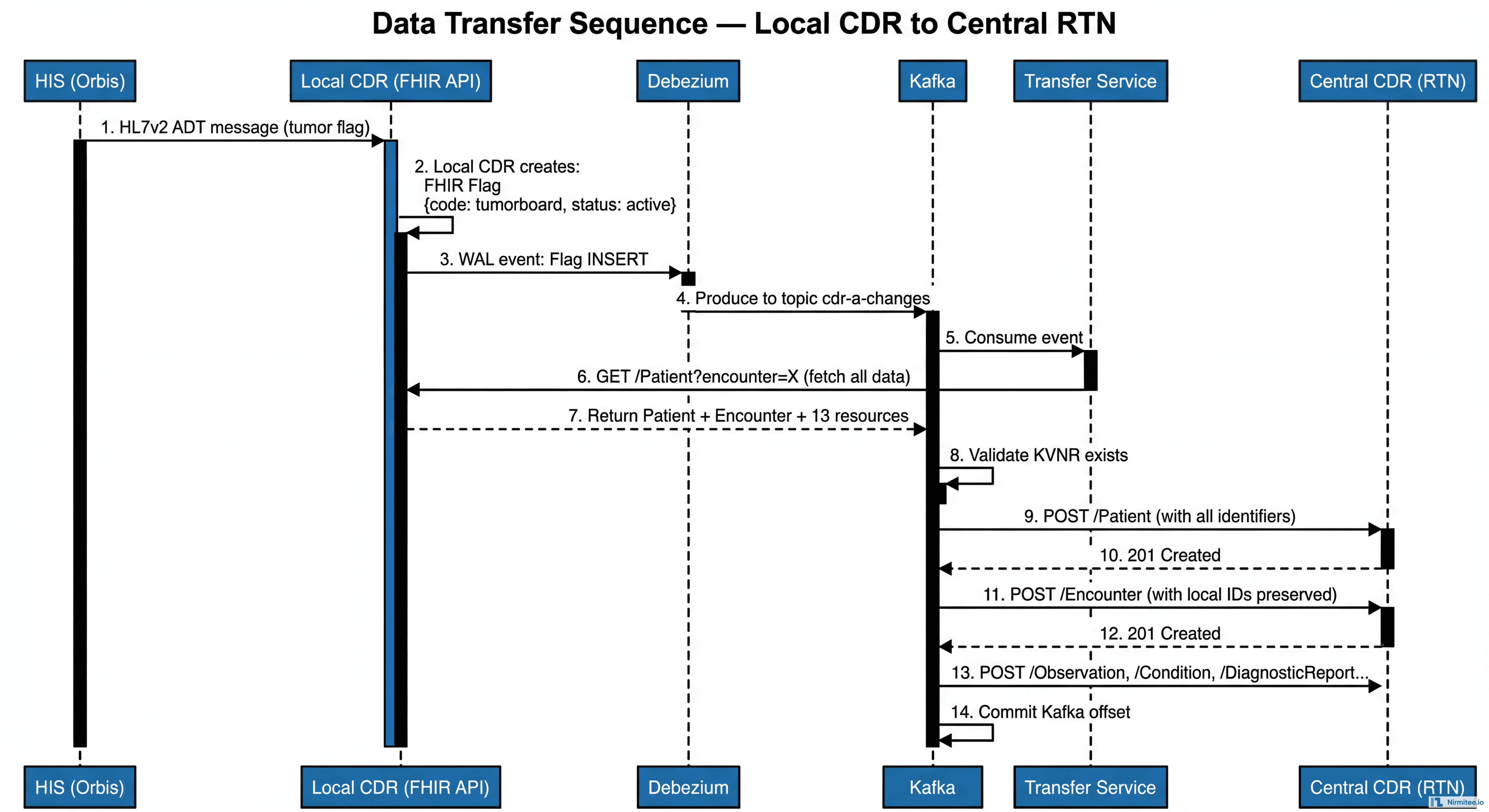

Data Transfer Sequence

The complete sequence from clinician flagging a case to data arriving in the Central CDR:

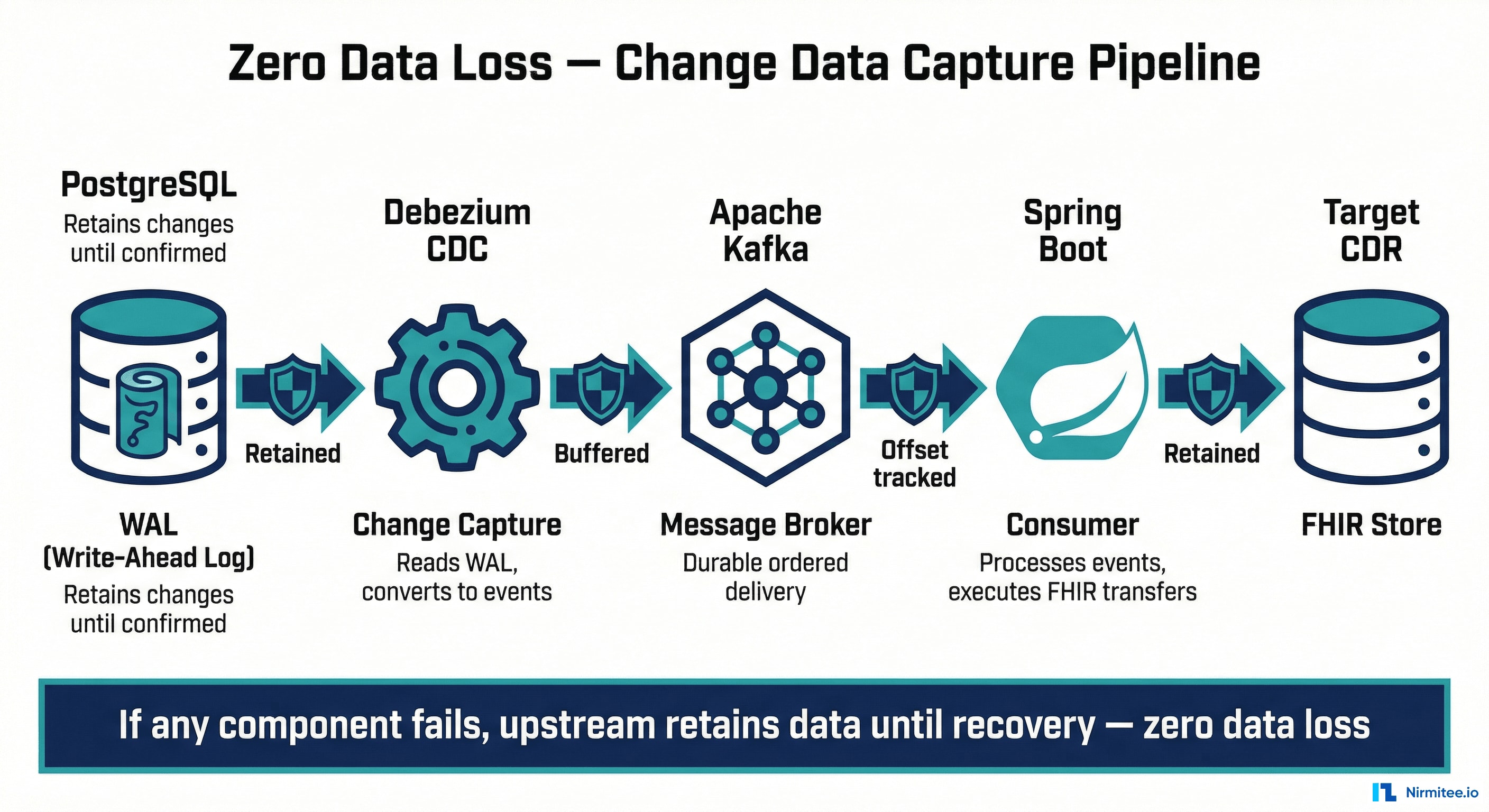

Zero Data Loss Architecture

This is a clinical system — a missed sync could mean a doctor doesn't see a pathology report at a cancer tumor board. We engineered for zero data loss at every layer.

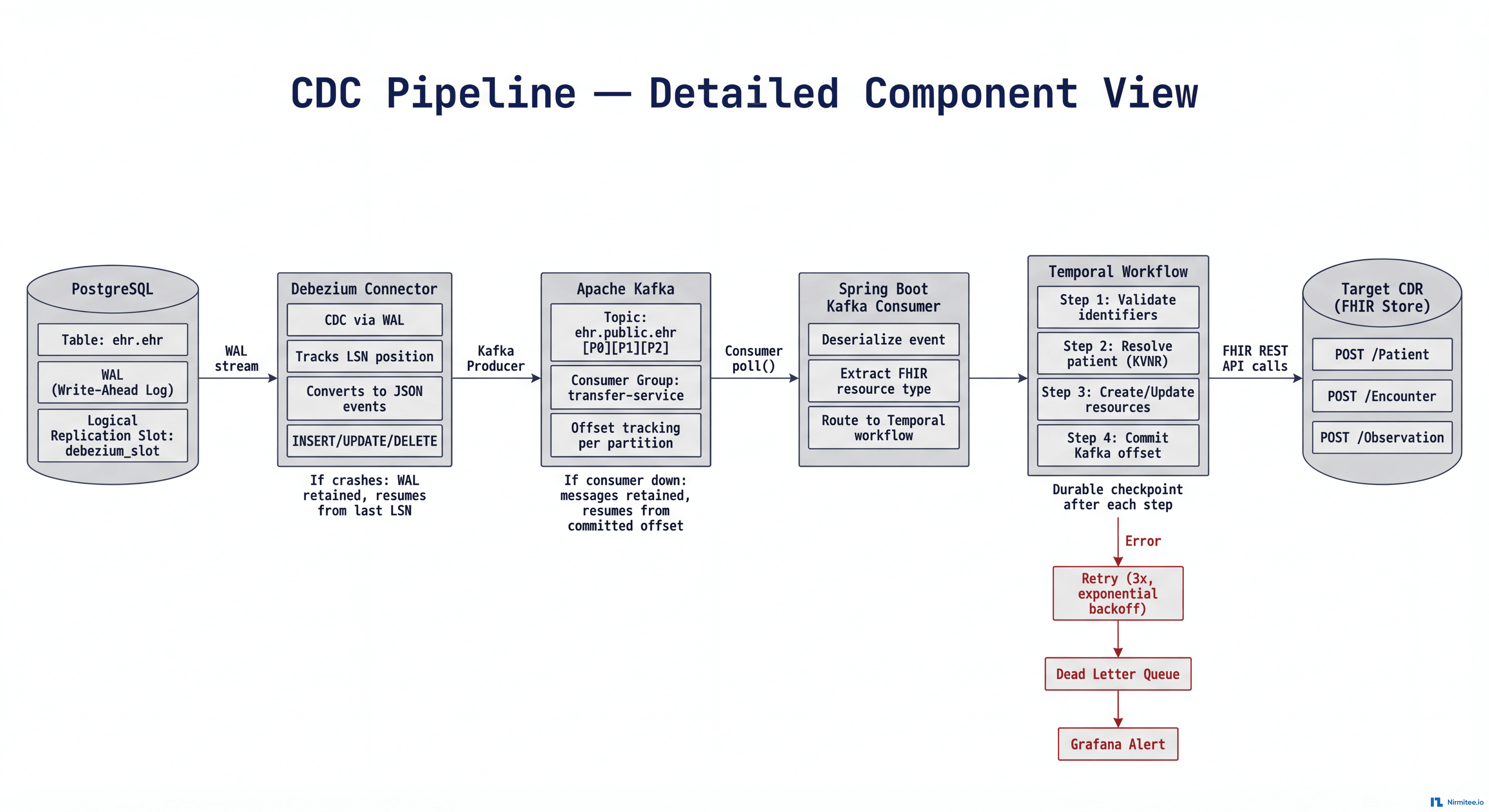

Change Data Capture Pipeline

The real-time pipeline flows: PostgreSQL (WAL) → Debezium (CDC) → Apache Kafka (message broker) → Spring Boot consumers (FHIR transfers) → Target CDR.

At every boundary, the sender retains data until the receiver acknowledges:

| Component Fails | What Happens | Data Lost? |
|---|---|---|
| Debezium crashes | PostgreSQL retains WAL segments until confirmed | No |
| Kafka goes down | Debezium pauses; changes held in WAL/memory | No |
| Consumer down | Kafka retains events until consumer resumes from last offset | No |
| Target CDR unreachable | Retries 3x with exponential backoff; then alerts + dead letter queue | No |
| Transfer Service pod crashes | Kubernetes auto-restarts; Temporal resumes from exact checkpoint | No |
| Network partition | Retries indefinitely with backoff; alert fires after threshold | No |
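The "sender retains until the receiver acknowledges" rule amounts to advancing a read position only after a successful delivery. The sketch below models that idea with an in-memory list and a hypothetical `AckedConsumer` class; it does not use the Kafka client API and is not the production consumer.

```java
import java.util.List;
import java.util.function.Predicate;

/** Advances its offset only after the target acknowledges an event. */
final class AckedConsumer {

    private int committedOffset = 0; // index of the next event to deliver

    /**
     * Delivers events starting at the committed offset and stops at the first
     * event the target does not acknowledge. Returns how many were delivered.
     */
    int poll(List<String> log, Predicate<String> deliver) {
        int delivered = 0;
        while (committedOffset < log.size()) {
            String event = log.get(committedOffset);
            if (!deliver.test(event)) {
                break; // offset unchanged: the same event is retried on the next poll
            }
            committedOffset++; // commit only after acknowledgement
            delivered++;
        }
        return delivered;
    }

    int committedOffset() {
        return committedOffset;
    }
}
```

Because an event can be delivered more than once if a crash happens between delivery and commit, this pattern gives at-least-once semantics, so writes to the target should be idempotent.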

Temporal Workflow Orchestration

Multi-step syncs use Temporal for durable workflow orchestration. If the service crashes mid-sync (after creating Patient but before Encounter), Temporal resumes from exactly where it left off — no partial syncs, no orphaned resources. Every workflow is inspectable, with configurable timeouts and compensation logic for cleanup.
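To illustrate the resume behavior without depending on the Temporal SDK, the following sketch records each completed step in a history set and skips recorded steps on the next run. `CheckpointedSync` is a hypothetical, in-memory simplification; Temporal persists workflow history durably and replays it, which this example only imitates.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Map;
import java.util.Set;

/** Runs named steps in order and remembers which ones finished. */
final class CheckpointedSync {

    // Stand-in for durable workflow history; in-memory here for illustration.
    private final Set<String> completedSteps;

    CheckpointedSync(Set<String> completedSteps) {
        this.completedSteps = completedSteps;
    }

    /**
     * Executes every step not yet recorded as complete, in map iteration order.
     * If a step throws, it is not recorded, so a later run starts from it.
     * Returns the names of the steps executed in this run.
     */
    List<String> run(Map<String, Runnable> steps) {
        List<String> executed = new ArrayList<>();
        for (Map.Entry<String, Runnable> step : steps.entrySet()) {
            if (completedSteps.contains(step.getKey())) {
                continue; // finished before the crash; do not repeat
            }
            step.getValue().run();
            completedSteps.add(step.getKey());
            executed.add(step.getKey());
        }
        return executed;
    }
}
```

Passing an insertion-ordered map such as `LinkedHashMap` keeps the Patient step ahead of the Encounter step, so a crash after the Patient step leads to a second run that begins at the Encounter step.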

Sequential Sync with Referential Integrity

Resources are created in strict dependency order: Patient → Encounter → Condition/Observation/DiagnosticReport/Procedure. This prevents orphaned references. Failed transfers retry up to 3 times with exponential backoff; permanent failures route to a dead letter queue for manual investigation.
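A hedged sketch of the retry policy follows. It assumes "retry up to 3 times" means one initial attempt plus at most three retries, and it uses a one-second base delay; both are assumptions, as are the class and method names. The sleep is injected so the example can run without waiting.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.function.LongConsumer;
import java.util.function.Predicate;

final class RetryingTransfer {

    static final int MAX_RETRIES = 3;         // from the text
    static final long BASE_DELAY_MS = 1_000L; // assumption: base delay is not stated

    /** Permanent failures end up here for manual investigation. */
    final List<String> deadLetterQueue = new ArrayList<>();

    private final LongConsumer sleeper; // injected so callers control how waiting happens

    RetryingTransfer(LongConsumer sleeper) {
        this.sleeper = sleeper;
    }

    /** Delay before retry n (1-based): base * 2^(n-1). */
    static long backoffMillis(int retry) {
        return BASE_DELAY_MS << (retry - 1);
    }

    /** Sends once, retries with growing delays, and dead-letters the resource if all attempts fail. */
    boolean transfer(String resourceId, Predicate<String> send) {
        for (int attempt = 0; attempt <= MAX_RETRIES; attempt++) {
            if (attempt > 0) {
                sleeper.accept(backoffMillis(attempt));
            }
            if (send.test(resourceId)) {
                return true;
            }
        }
        deadLetterQueue.add(resourceId);
        return false;
    }
}
```

With these assumed values the waits are 1 s, 2 s, and 4 s. A resource that still fails after the third retry is appended to `deadLetterQueue` rather than dropped.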

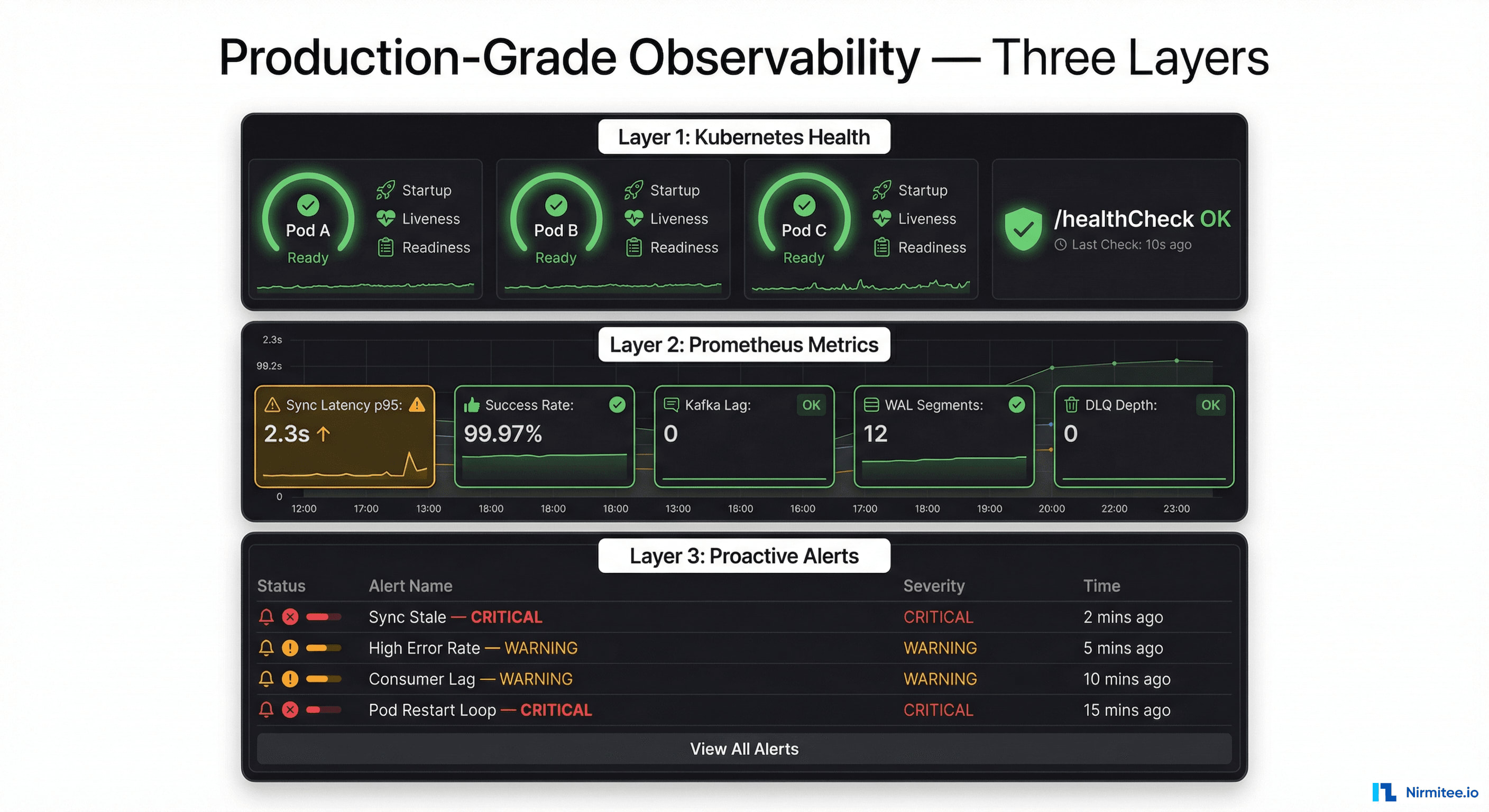

Production-Grade Observability

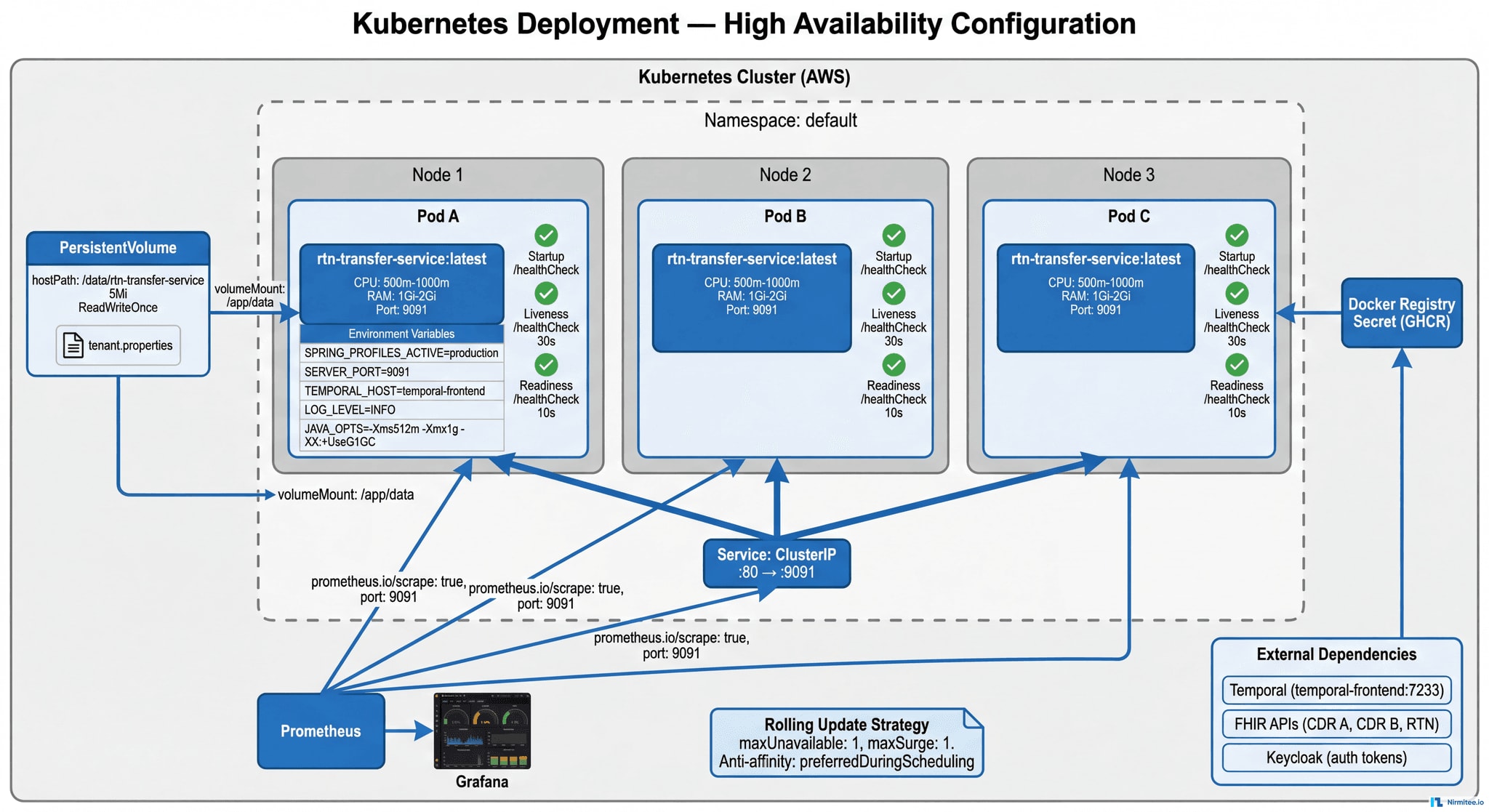

Kubernetes Deployment Architecture

Layer 1: Kubernetes Infrastructure

- 3 replicas with pod anti-affinity — spread across nodes for fault tolerance

- Health probes: Startup (up to 30 failed checks tolerated over 5 min), Liveness (every 30s), Readiness (every 10s)

- Rolling updates — zero-downtime deployments with instant rollback capability

- Security: Non-root containers, read-only filesystem, dropped capabilities

- Resource limits: 1 CPU / 2Gi RAM per pod, which caps each pod's usage so a runaway pod cannot starve its node

Layer 2: Prometheus Application Metrics

- Sync operation latency (p50, p95, p99) per source → target pair

- Success/failure counters per tenant and resource type

- Time since last successful sync (SLA: < 15 min)

- Kafka consumer lag, Debezium connector status, WAL segment count

- Error counters by type: auth failure, timeout, missing identifier, conflict

- Dead letter queue depth for permanent failures

Layer 3: Proactive Grafana Alerts

| Alert | Condition | Severity |
|---|---|---|
| Sync Stale | No successful sync > 15 min | CRITICAL |
| High Error Rate | > 5% failures in 10 min | WARNING |
| Consumer Lag Growing | Kafka lag increasing > 5 min | WARNING |
| WAL Segments High | PostgreSQL WAL > threshold | CRITICAL |
| Dead Letter Queue | Any message in DLQ | WARNING |
| Pod Restart Loop | > 3 restarts in 10 min | CRITICAL |
| Auth Token Failure | Keycloak refresh failing | CRITICAL |
| CDR Unreachable | FHIR API returning 5xx | CRITICAL |
| Disk Pressure | Volume > 80% | WARNING |
| Missing Identifiers | Patient missing shared ID | WARNING |
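In production these conditions would normally be expressed as alert rules in the monitoring stack rather than in application code. Purely to make the threshold semantics of the first two rows concrete, here is a small sketch; `AlertRules` and its method names are hypothetical, and the strict "greater than" comparisons are an assumption based on how the table is worded.

```java
import java.time.Duration;
import java.time.Instant;

final class AlertRules {

    static final Duration STALE_AFTER = Duration.ofMinutes(15); // "Sync Stale" row
    static final double MAX_FAILURE_RATIO = 0.05;               // "High Error Rate" row

    /** True when the last successful sync is more than 15 minutes old. */
    static boolean syncStale(Instant lastSuccess, Instant now) {
        return Duration.between(lastSuccess, now).compareTo(STALE_AFTER) > 0;
    }

    /** True when more than 5% of the operations in the evaluation window failed. */
    static boolean highErrorRate(long failures, long total) {
        return total > 0 && (double) failures / total > MAX_FAILURE_RATIO;
    }
}
```

A sync that succeeded exactly 15 minutes ago does not fire the stale alert under this reading, and an empty window never counts as a high error rate.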

Grafana Dashboard Suite

- Sync Overview — real-time data flow map with green/yellow/red status per connection

- Pipeline Health — Debezium status, Kafka lag, WAL count, latency distributions

- Error Analysis — error rates by type, retry patterns, DLQ depth

- Resource Breakdown — volume and latency per FHIR resource type

- Tenant Dashboard — per-hospital sync status and pending transfers

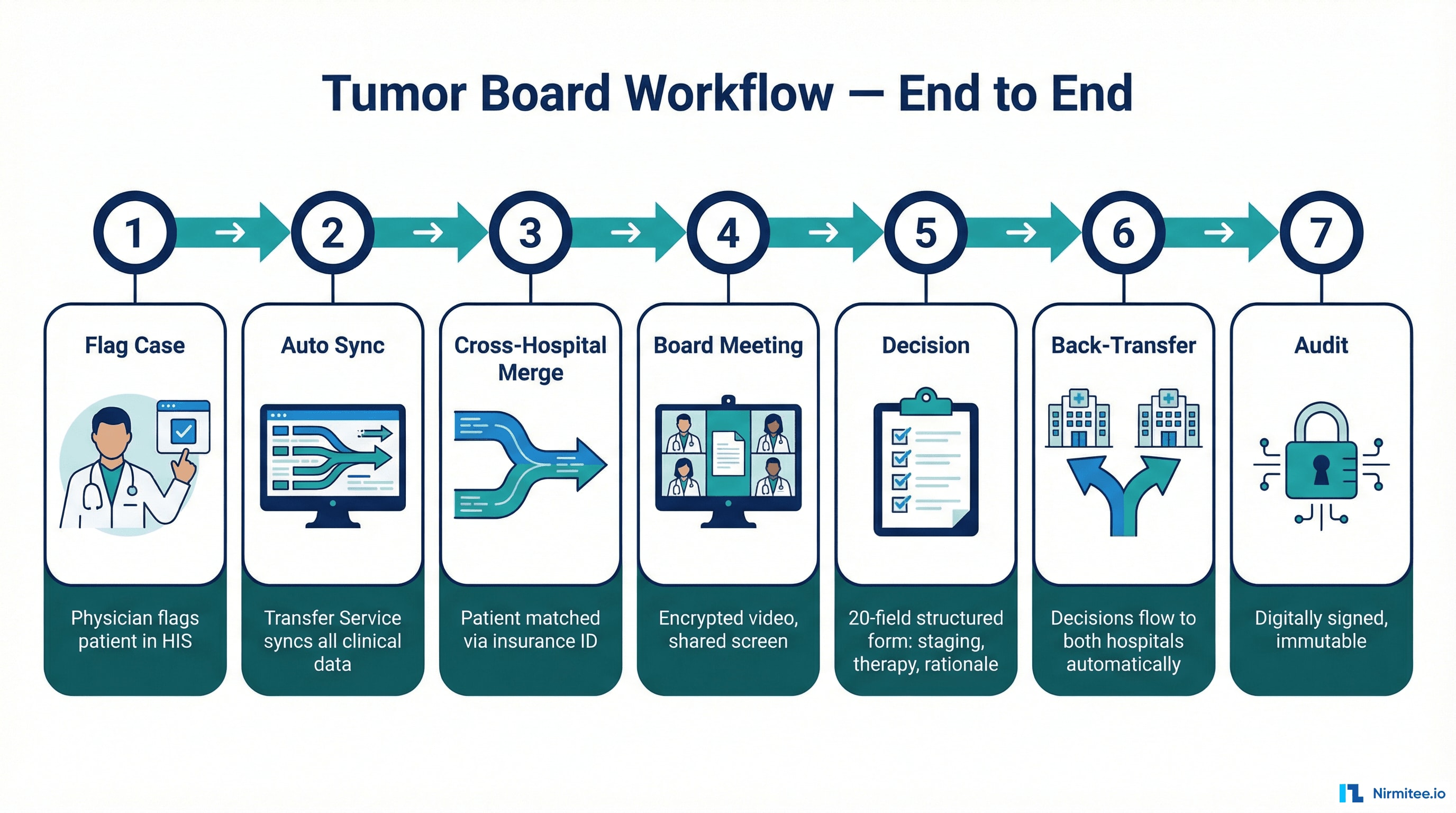

Tumor Board Workflow — End to End

| Phase | Clinician Experience | Under the Hood |
|---|---|---|
| 1. Flag Case | Physician checks a box in HIS | HL7v2 → CDR → FHIR Flag → Transfer Service triggered |
| 2. Auto Sync | Nothing; it's automatic | 13+ FHIR resources synced to Central CDR |
| 3. Cross-Hospital Merge | Nothing; it's automatic | Second hospital's data matched via insurance ID, merged |
| 4. Board Meeting | Complete patient data on screen | Tumor Board app reads Central CDR FHIR API |
| 5. Decision | Minute-taker fills structured form | FHIR QuestionnaireResponse with 20 clinical fields |
| 6. Back-Transfer | Decision appears in referring physician's HIS | Central CDR → Transfer Service → Local CDRs |
| 7. Audit | Protocol immutable after digital signing | Full chain of custody; encrypted PDF for externals |

What the Structured Decision Captures

The tumor board recommendation is a 20-field FHIR QuestionnaireResponse rather than free text. A selection of those fields, with example values:

| Field | Example |
|---|---|
| Relevant Findings | MRI Breast: 3.2 cm irregular mass, BIRADS 5 |
| Medical History | 58-year-old, premenopausal, no family cancer history |
| TNM Stage | cT2 cN0 cM0, Stage IIA |
| Pathology Summary | Invasive ductal carcinoma, G2, ER 80%, PR 60%, HER2-negative, Ki67 25% |
| Lab Values | Hb 12.8, WBC 6200, Plt 245k, CEA 2.1, CA 15-3 18 |
| Recommendation | Breast-conserving surgery + sentinel biopsy. Adjuvant TC chemo (4 cycles) then tamoxifen 5-10y. |
| Rationale | Good operability. Ki67 25% + G2 justify chemo. HR+/HER2- supports endocrine therapy. |
| Participants | 6 specialists: Gynecology, Oncology, Radiology, Pathology, Radiation Therapy, Breast Care Nurse |

This flows back to both hospitals as structured, queryable data — not a PDF attachment.
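To show what "structured and queryable" means in practice, the sketch below assembles a QuestionnaireResponse-shaped map and looks up a single answer by its `linkId`. The element names (`resourceType`, `status`, `subject`, `item`, `linkId`, `answer`, `valueString`) follow FHIR R4; the class, the `linkId` values, and the use of plain maps instead of a FHIR library are assumptions made for this example.

```java
import java.util.ArrayList;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;
import java.util.Optional;

final class DecisionResponse {

    /** Builds a QuestionnaireResponse-shaped map with one string answer per linkId. */
    static Map<String, Object> build(String patientReference, Map<String, String> answersByLinkId) {
        List<Map<String, Object>> items = new ArrayList<>();
        answersByLinkId.forEach((linkId, value) -> {
            Map<String, Object> item = new LinkedHashMap<>();
            item.put("linkId", linkId);
            item.put("answer", List.of(Map.of("valueString", value)));
            items.add(item);
        });
        Map<String, Object> response = new LinkedHashMap<>();
        response.put("resourceType", "QuestionnaireResponse");
        response.put("status", "completed");
        response.put("subject", Map.of("reference", patientReference));
        response.put("item", items);
        return response;
    }

    /** Reads one field by linkId, which free text or a PDF would not allow. */
    @SuppressWarnings("unchecked")
    static Optional<String> answer(Map<String, Object> response, String linkId) {
        for (Map<String, Object> item : (List<Map<String, Object>>) response.get("item")) {
            if (linkId.equals(item.get("linkId"))) {
                List<Map<String, String>> answers = (List<Map<String, String>>) item.get("answer");
                return Optional.of(answers.get(0).get("valueString"));
            }
        }
        return Optional.empty();
    }
}
```

With a hypothetical `linkId` of `tnm-stage`, a receiving system can read the staging value directly instead of parsing a narrative document.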

Technology Stack

| Layer | Technology | Purpose |
|---|---|---|
| Application | Spring Boot (Java) | Transfer Service microservice |
| Workflow | Temporal | Durable multi-step sync orchestration |
| Data Standards | HL7 FHIR R4 | REST API-based data exchange |
| Data Standards | openEHR | Clinical data storage, versioning, AQL queries |
| Data Standards | HL7v2 | Legacy HIS integration |
| Messaging | Apache Kafka | Durable, ordered event delivery |
| CDC | Debezium | Real-time PostgreSQL change capture |
| Database | PostgreSQL | CDR storage with logical replication |
| Auth | Keycloak | RBAC with per-system tokens |
| Infrastructure | Kubernetes (AWS) | HA container orchestration |
| Monitoring | Prometheus + Grafana | Metrics, dashboards, proactive alerts |

Why This Project Is Hard — And Why That Matters

Most healthcare integration projects move data between two systems using one standard. This project required:

- Dual-standard mastery (FHIR + openEHR) — deep expertise in both, plus bridging them through a unified Transfer Service that speaks FHIR REST, openEHR REST, and HL7v2

- Clinical safety engineering — a missed sync means a doctor doesn't see a pathology report at a cancer tumor board. Every design decision was evaluated through this lens.

- Multi-system identity resolution — the same patient has different IDs at each hospital. Merging into a coherent central record without data loss required deterministic matching with clear ownership rules.

- Production discipline — Kubernetes HA, Prometheus metrics, Grafana alerts, Temporal durability, dead letter queues, exponential backoff, comprehensive runbooks. A system that runs 24/7 where downtime has clinical consequences.

- Scalable by design — adding Hospital C, D, or E means adding a config file. The entire architecture scales without structural changes.

Engagement: April 2024 — November 2025 | Team: 6 engineers | Methodology: Agile with weekly delivery
