Executive Summary

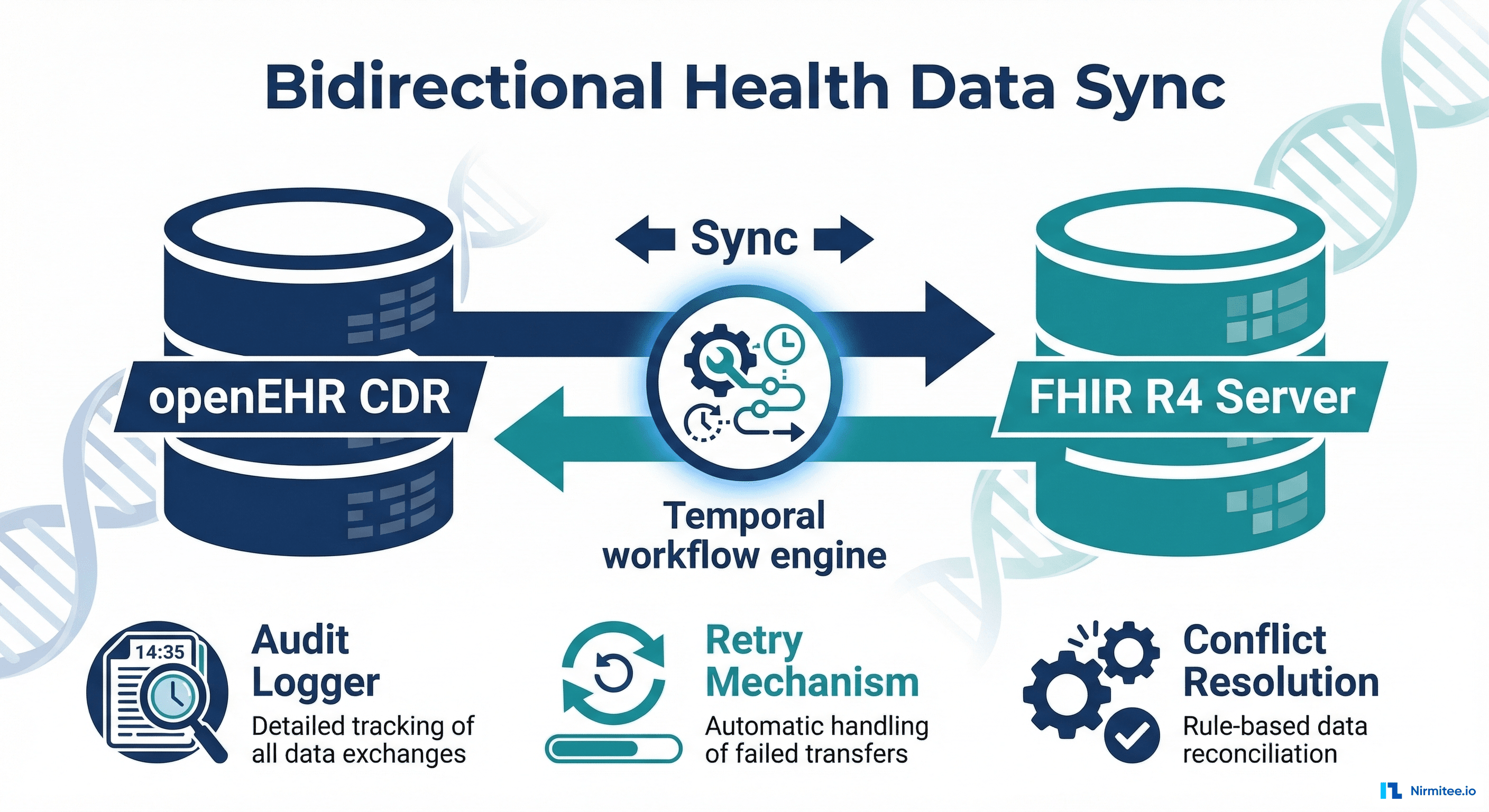

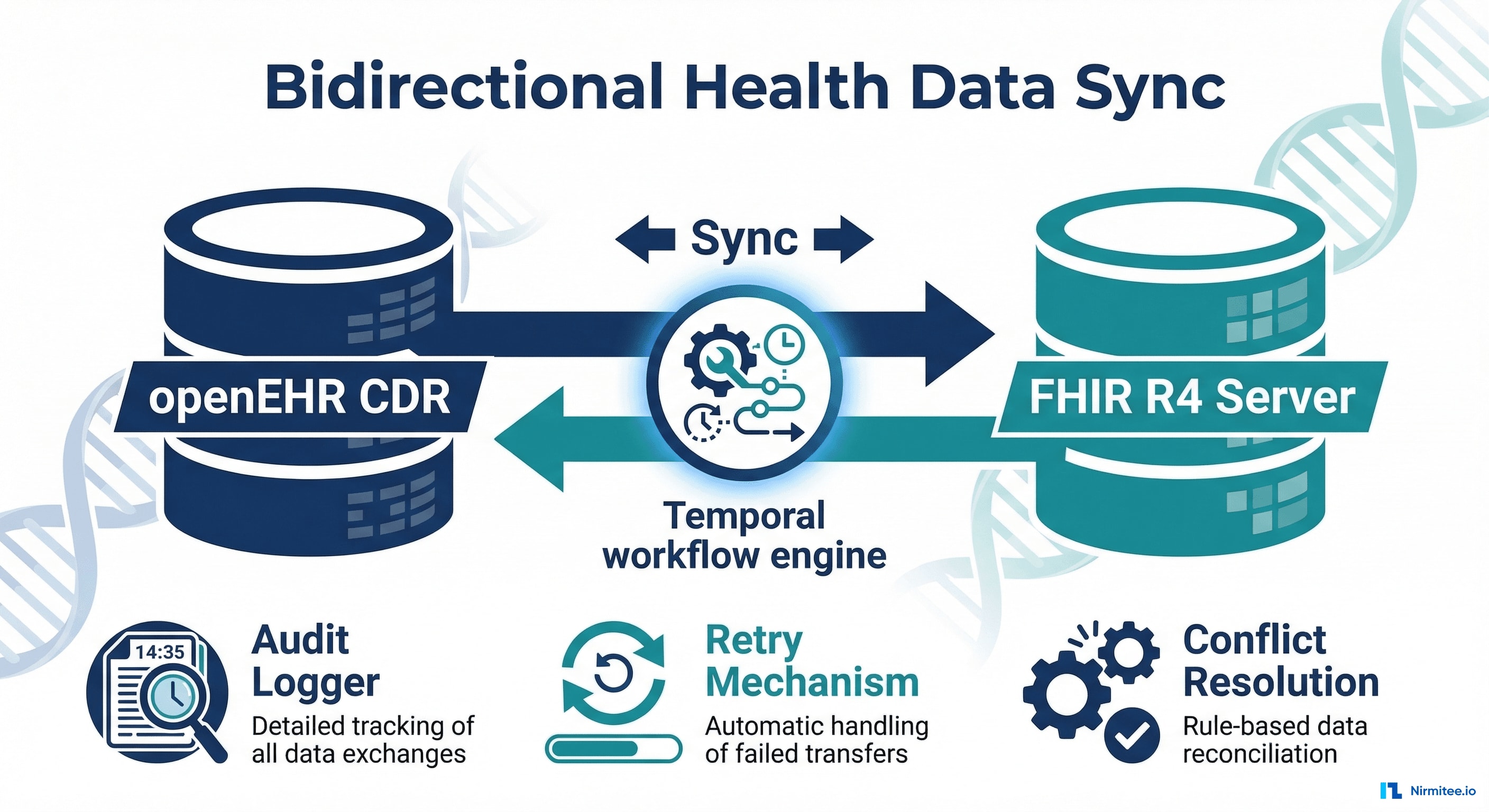

A cancer care network operating across 5 clinical facilities needed to synchronize patient data between the local openEHR Clinical Data Repositories (CDRs) at each facility and a central FHIR R4 server. The challenge: bidirectional sync with zero data loss, complete audit trails, and automatic retry for failed operations, across systems built on fundamentally different data models.

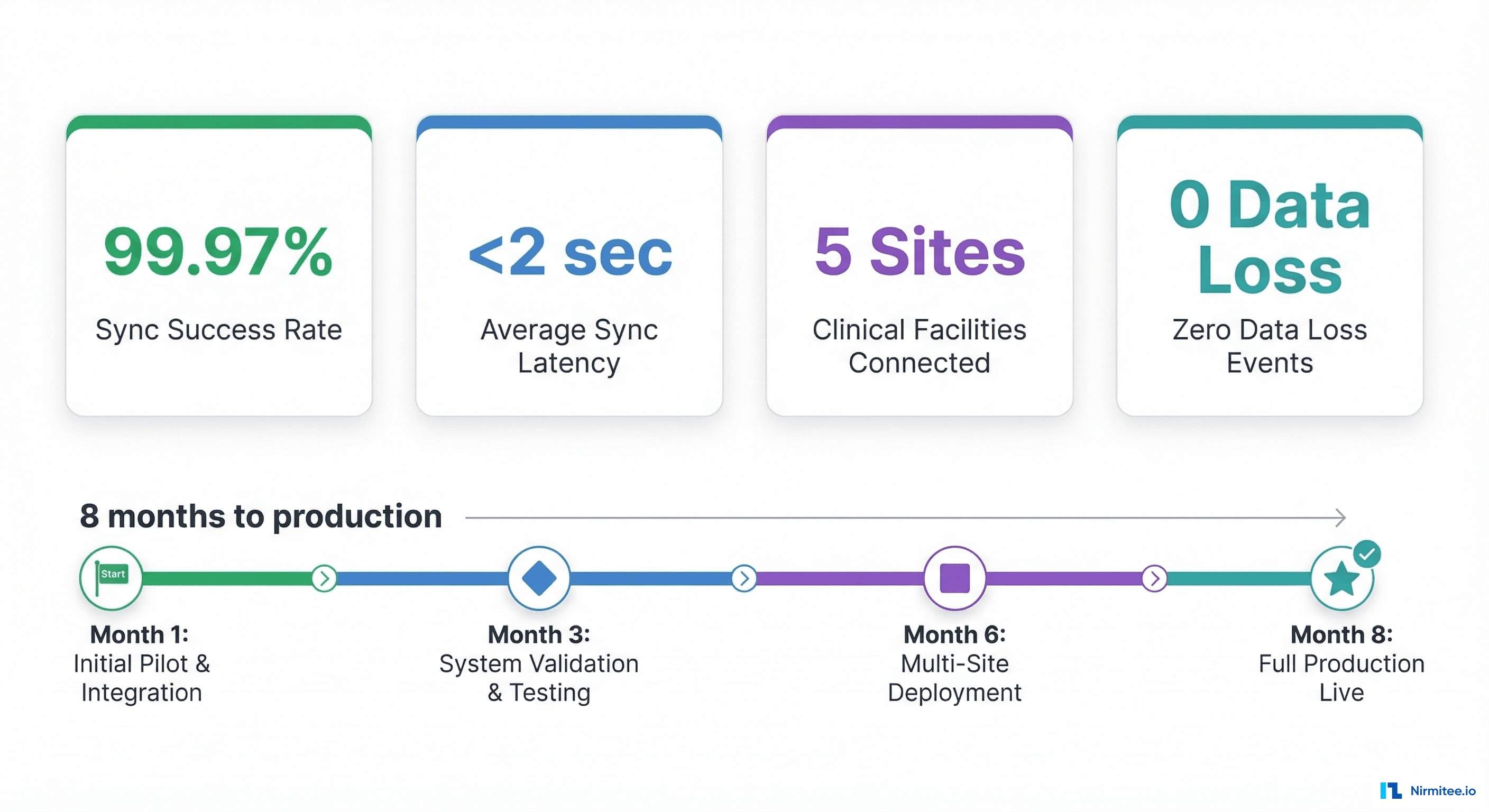

We built a Temporal-powered workflow engine that translates between openEHR archetypes and FHIR R4 resources, handles bidirectional sync with conflict detection and clinician-reviewed resolution, and guarantees delivery through exponential backoff retries and dead-letter queuing. The result: a 99.97% sync success rate and an average sync latency of 1.8 seconds (change detection to destination write) across all 5 sites.

The Problem: Two Standards, Five Sites, One Patient

openEHR and FHIR R4 are both healthcare data standards — but they solve different problems. openEHR excels at clinical data modeling with rich archetypes and templates. FHIR R4 excels at interoperability and API access with standardized RESTful resources.

This cancer care network used openEHR at each local facility for clinical documentation — capturing detailed oncology data using domain-specific archetypes. But they needed FHIR R4 at the central level for:

- Cross-site analytics: Population health metrics, treatment outcome comparison across facilities

- External integrations: Lab systems, imaging platforms, and referring providers that speak FHIR

- Regulatory reporting: Quality measures and cancer registry submissions that require FHIR format

- Research: Clinical trial eligibility screening and cohort identification

What Made This Hard

- Data model mismatch: openEHR uses deeply nested archetypes with rich clinical semantics. FHIR uses comparatively flat resources, with extensions for anything the base resources do not cover. Mapping between them is non-trivial and loses information if done carelessly.

- Bidirectional requirement: Data flows both ways. A new lab result at a local site pushes to central FHIR. A treatment plan created centrally pushes back to local openEHR. Conflict resolution is critical.

- Zero tolerance for data loss: This is oncology. A lost lab result or treatment update could directly impact patient care. Every sync must be guaranteed.

- Network reliability: Five sites across different geographic locations with varying network quality. Sync must handle disconnections, timeouts, and partial failures gracefully.

System Architecture

Architecture Components

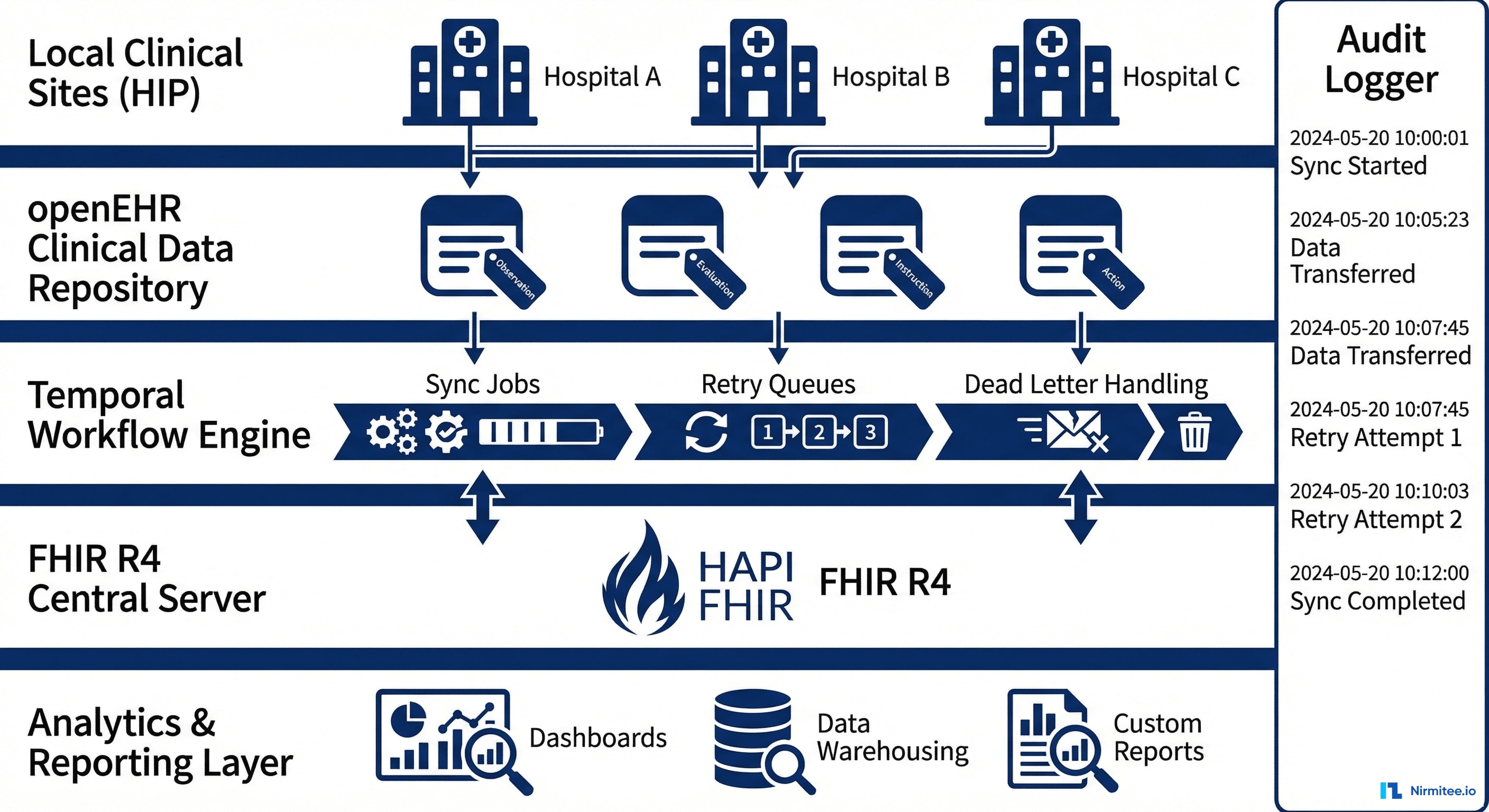

Local Clinical Sites (HIP — Health Information Provider): Each of the 5 facilities runs an openEHR Clinical Data Repository (CDR). Clinicians document patient encounters using openEHR templates designed for oncology — tumor staging, treatment protocols, adverse events, follow-up assessments.

Change Detection Layer: openEHR has no native webhooks, so the local CDRs do not push change notifications. Instead, a polling layer checks each CDR every 30 seconds for clinical data created or updated since the previous check, using timestamps, and each detected change triggers a sync workflow.
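The timestamp-based polling described above can be sketched as a watermark comparison. This is a minimal illustration under stated assumptions, not the network's actual implementation: `ChangeRecord`, `PollResult`, and `detectChanges` are hypothetical names, and the in-memory array stands in for whatever query a real poller would run against the CDR every 30 seconds.

```typescript
// Hypothetical shape of one row returned by a "what changed?" query on a CDR.
interface ChangeRecord {
  compositionId: string;
  committedAt: string; // ISO 8601 UTC timestamp
}

interface PollResult {
  changes: ChangeRecord[];
  nextWatermark: string;
}

// Returns the records committed strictly after `watermark`, oldest first,
// together with the watermark to use on the next poll.
function detectChanges(records: ChangeRecord[], watermark: string): PollResult {
  const since = Date.parse(watermark);
  const changes = records
    .filter((r) => Date.parse(r.committedAt) > since)
    .sort((a, b) => Date.parse(a.committedAt) - Date.parse(b.committedAt));
  const nextWatermark =
    changes.length > 0 ? changes[changes.length - 1].committedAt : watermark;
  return { changes, nextWatermark };
}

// One poll cycle over three sample records with the watermark at 00:00:00.
const poll = detectChanges(
  [
    { compositionId: "c2", committedAt: "2024-01-01T00:00:20Z" },
    { compositionId: "c1", committedAt: "2024-01-01T00:00:10Z" },
    { compositionId: "c0", committedAt: "2024-01-01T00:00:00Z" },
  ],
  "2024-01-01T00:00:00Z"
);
```

A strictly-greater comparison can miss two commits that share a timestamp. One common mitigation is to poll with a small overlap and skip records that have already been marked as synced.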

Temporal Workflow Engine: The brain of the sync system. Temporal orchestrates every sync operation as a durable workflow that survives crashes, retries on failure, and maintains complete execution history. Each sync is a workflow with defined steps, timeouts, and failure handling.

Transform Layer: Bidirectional mapping between openEHR archetypes and FHIR R4 resources. This is where the hard work happens — preserving clinical semantics while converting between fundamentally different data models.

Central FHIR R4 Server (HAPI FHIR): Stores the canonical, aggregated view of all patient data across 5 sites. Exposes standard FHIR APIs for external integrations, analytics, and research.

Audit Logger: Every sync operation — success, failure, retry, conflict resolution — is logged with timestamps, source/destination, data hashes, and the user/system that triggered it. Complete chain of custody for every piece of clinical data.

Data Mapping: openEHR Archetypes to FHIR Resources

Mapping Rules

| openEHR Archetype | FHIR R4 Resource | Mapping Complexity |
|---|---|---|
| COMPOSITION (encounter) | Encounter + Composition | Medium: nested content requires flattening |
| OBSERVATION (lab result) | Observation | Low: direct mapping with unit conversion |
| OBSERVATION (vital signs) | Observation (vital-signs profile) | Low: LOINC codes match well |
| INSTRUCTION (medication order) | MedicationRequest | High: dosing instructions differ structurally |
| ACTION (procedure performed) | Procedure | Medium: status mapping required |
| EVALUATION (diagnosis) | Condition | Medium: staging data needs extensions |
| EVALUATION (risk assessment) | RiskAssessment | High: custom archetypes need FHIR extensions |
| CLUSTER (tumor staging) | Condition + Observation (TNM) | High: openEHR cluster maps to multiple FHIR resources |
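To make the "low complexity" row concrete, here is a minimal sketch of a lab-result mapping. The `OpenEhrLabResult` input shape and the `labResultToFhir` function are assumptions made for illustration (a real openEHR OBSERVATION is far more deeply nested), the output covers only a subset of the FHIR R4 Observation resource, and the sketch assumes the source units are already UCUM strings, so it performs no unit conversion.

```typescript
// Hypothetical, heavily simplified view of an openEHR lab-result OBSERVATION.
interface OpenEhrLabResult {
  loincCode: string;
  analyteName: string;
  magnitude: number;
  units: string; // assumed to already be a UCUM unit string, e.g. "g/dL"
  time: string; // ISO 8601 timestamp
}

// Subset of the FHIR R4 Observation resource used by this sketch.
interface FhirObservation {
  resourceType: "Observation";
  status: "final";
  code: { coding: { system: string; code: string; display: string }[] };
  subject: { reference: string };
  effectiveDateTime: string;
  valueQuantity: { value: number; unit: string; system: string; code: string };
}

// Maps one simplified lab result onto an Observation for the given patient.
// Setting status to "final" is an assumption of this sketch.
function labResultToFhir(src: OpenEhrLabResult, patientId: string): FhirObservation {
  return {
    resourceType: "Observation",
    status: "final",
    code: {
      coding: [
        { system: "http://loinc.org", code: src.loincCode, display: src.analyteName },
      ],
    },
    subject: { reference: `Patient/${patientId}` },
    effectiveDateTime: src.time,
    valueQuantity: {
      value: src.magnitude,
      unit: src.units,
      system: "http://unitsofmeasure.org",
      code: src.units,
    },
  };
}

// Sample input: a hemoglobin result (LOINC 718-7) for a made-up patient id.
const hemoglobin = labResultToFhir(
  {
    loincCode: "718-7",
    analyteName: "Hemoglobin [Mass/volume] in Blood",
    magnitude: 13.2,
    units: "g/dL",
    time: "2024-01-01T08:30:00Z",
  },
  "123"
);
```

The higher-complexity rows would follow the same pattern but emit several resources per source entry, which is where most of the mapping effort tends to go.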

Handling Lossy Conversions

openEHR archetypes often contain richer clinical detail than FHIR resources can represent natively. Our approach:

- FHIR Extensions: For clinically significant data that doesn't fit standard FHIR resources, we defined custom FHIR extensions. These are documented and published for downstream consumers.

- Provenance tracking: Every synced FHIR resource is accompanied by a Provenance resource that links back to the original openEHR composition. If downstream systems need the full clinical detail, they can request the source composition.

- Round-trip fidelity testing: Automated tests that sync data openEHR → FHIR → openEHR and verify no clinical information is lost. Any mapping that fails round-trip testing is flagged for manual review.
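The three techniques above can be combined in one small sketch: an extension carries a field with no standard FHIR home, a Provenance resource points back to the source composition, and a round-trip check reports any field that does not survive. Everything named here is hypothetical (`OpenEhrDiagnosis`, `siteSpecificDetail`, the `example.org` extension URL, the `sync-engine` agent label), and the FHIR types are deliberately partial subsets of the R4 resources.

```typescript
// Hypothetical, simplified diagnosis entry. `siteSpecificDetail` stands in for
// any archetype element that has no standard FHIR counterpart.
interface OpenEhrDiagnosis {
  compositionUid: string;
  diagnosisName: string;
  siteSpecificDetail: string;
}

// Subsets of FHIR R4 Condition and Provenance. Required elements such as
// Condition.subject are omitted to keep the sketch short.
interface FhirCondition {
  resourceType: "Condition";
  id: string;
  code: { text: string };
  extension: { url: string; valueString: string }[];
}

interface FhirProvenance {
  resourceType: "Provenance";
  target: { reference: string }[];
  recorded: string;
  agent: { who: { display: string } }[];
  entity: { role: "source"; what: { identifier: { value: string } } }[];
}

interface SyncedDiagnosis {
  condition: FhirCondition;
  provenance: FhirProvenance;
}

// Placeholder URL, not a published extension definition.
const DETAIL_EXT = "https://example.org/fhir/StructureDefinition/site-specific-detail";

function toFhir(d: OpenEhrDiagnosis, id: string, recorded: string): SyncedDiagnosis {
  return {
    condition: {
      resourceType: "Condition",
      id: id,
      code: { text: d.diagnosisName },
      extension: [{ url: DETAIL_EXT, valueString: d.siteSpecificDetail }],
    },
    provenance: {
      resourceType: "Provenance",
      target: [{ reference: `Condition/${id}` }],
      recorded: recorded,
      agent: [{ who: { display: "sync-engine" } }],
      entity: [{ role: "source", what: { identifier: { value: d.compositionUid } } }],
    },
  };
}

function fromFhir(s: SyncedDiagnosis): OpenEhrDiagnosis {
  const detail = s.condition.extension.filter((e) => e.url === DETAIL_EXT)[0];
  return {
    compositionUid: s.provenance.entity[0].what.identifier.value,
    diagnosisName: s.condition.code.text,
    siteSpecificDetail: detail ? detail.valueString : "",
  };
}

// Names of the fields that do not survive openEHR -> FHIR -> openEHR.
function lostFields(d: OpenEhrDiagnosis): (keyof OpenEhrDiagnosis)[] {
  const restored = fromFhir(toFhir(d, "roundtrip-check", "2024-01-01T00:00:00Z"));
  const keys: (keyof OpenEhrDiagnosis)[] = [
    "compositionUid",
    "diagnosisName",
    "siteSpecificDetail",
  ];
  return keys.filter((k) => restored[k] !== d[k]);
}

// Sample entry with made-up values; an empty result means nothing was lost.
const lost = lostFields({
  compositionUid: "example-composition-uid::example.system::1",
  diagnosisName: "Example diagnosis",
  siteSpecificDetail: "free-text detail kept via extension",
});
```

In a test suite, a non-empty `lostFields` result would be the signal to flag that mapping for manual review.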

Temporal Workflows: Guaranteed Delivery

Why Temporal

We chose Temporal over alternatives (message queues, cron jobs, custom retry logic) because:

- Durable execution: Workflows survive process crashes, server restarts, and network failures. If a sync is interrupted, it resumes exactly where it left off.

- Built-in retry: Exponential backoff with configurable max retries. No custom retry logic needed.

- Execution history: Complete, queryable history of every workflow execution — when it started, what steps ran, what failed, how it was resolved. Critical for healthcare audit requirements.

- Visibility: Temporal's UI shows real-time workflow status across all 5 sites, so the operations team can see sync health at a glance.

Sync Workflow Steps

- Detect change: the polling layer identifies new or updated data at a local site

- Validate archetype: ensure the openEHR data conforms to the expected template structure

- Transform: convert the openEHR data to one or more FHIR R4 resources using the mapping rules

- Validate FHIR: run FHIR profile validation on the output resource

- Check conflicts: compare with existing data on the destination. If a conflict is detected, apply the resolution strategy (clinical data is flagged for clinician review rather than auto-resolved)

- Write to destination: create or update the FHIR resource on the central server

- Log audit trail: record the complete sync event with timestamps, hashes, and provenance

- Acknowledge: mark the source change as synced to prevent re-processing
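The step sequence above can be sketched as a single function that runs one detected change through the pipeline. This is plain TypeScript, not the Temporal SDK: `SyncSteps`, `SyncOutcome`, and `runSync` are hypothetical names, each injected step stands in for what would typically be a Temporal activity, and the choice to skip the write and the acknowledgement when a conflict is found is an assumption of this sketch.

```typescript
// Each step is injected so the sketch runs without real CDR or FHIR servers.
interface SyncSteps<S, D> {
  validateSource(src: S): Promise<void>;
  transform(src: S): Promise<D>;
  validateDestination(dst: D): Promise<void>;
  hasConflict(dst: D): Promise<boolean>;
  write(dst: D): Promise<void>;
  audit(event: string): Promise<void>;
  acknowledge(src: S): Promise<void>;
}

type SyncOutcome = "synced" | "conflict";

// Runs one detected change through the steps in order. Any step that throws
// aborts the run; retrying is left to the caller (Temporal, in the real system).
async function runSync<S, D>(src: S, steps: SyncSteps<S, D>): Promise<SyncOutcome> {
  await steps.validateSource(src);
  const dst = await steps.transform(src);
  await steps.validateDestination(dst);
  if (await steps.hasConflict(dst)) {
    // Assumption: a conflict is logged and held for review, nothing is written.
    await steps.audit("conflict-flagged");
    return "conflict";
  }
  await steps.write(dst);
  await steps.audit("synced");
  await steps.acknowledge(src);
  return "synced";
}
```

Keeping the acknowledgement last means a crash before that point leaves the change unacknowledged, so it is picked up again rather than silently dropped. That in turn requires the write step to be safe to repeat.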

Failure Handling

- Transient failures (network timeout, server 503): automatic retry with exponential backoff (1s → 2s → 4s → 8s → 16s → 32s), max 6 retries

- Validation failures (malformed data): routed to dead-letter queue for manual review. Clinical staff notified via email.

- Conflict failures (same record updated at two sites): flagged for clinical review with both versions preserved. No auto-resolution for clinical data.
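The retry schedule and the three failure routes above can be expressed in a few lines. This is an illustrative sketch with hypothetical names (`backoffDelayMs`, `routeFailure`); it also assumes that a transient failure which exhausts its 6 retries goes to the dead-letter queue, which the text implies but does not state outright.

```typescript
type FailureKind = "transient" | "validation" | "conflict";
type Route = "retry" | "dead-letter" | "clinical-review";

const MAX_RETRIES = 6;
const INITIAL_DELAY_MS = 1000;

// Delay before retry number `attempt` (1-based), doubling each time.
function backoffDelayMs(attempt: number): number {
  return INITIAL_DELAY_MS * Math.pow(2, attempt - 1);
}

// Decides where a failed sync goes next.
function routeFailure(kind: FailureKind, retriesSoFar: number): Route {
  switch (kind) {
    case "transient":
      // Assumption: once retries are exhausted, fall back to the dead-letter queue.
      return retriesSoFar < MAX_RETRIES ? "retry" : "dead-letter";
    case "validation":
      return "dead-letter";
    case "conflict":
      return "clinical-review";
  }
}

// Delays for retries 1 through 6, in milliseconds.
const retrySchedule = [1, 2, 3, 4, 5, 6].map(backoffDelayMs);
// retrySchedule is [1000, 2000, 4000, 8000, 16000, 32000]
```

In Temporal itself this schedule would normally be expressed through an activity retry policy instead of hand-written code: an initial interval of 1 second, a backoff coefficient of 2, and a maximum of 7 attempts, since Temporal counts the first attempt along with the retries.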

Results

| Metric | Result |
|---|---|
| Sync success rate | 99.97% (remaining 0.03% resolved via dead-letter review within 4 hours) |
| Average sync latency | 1.8 seconds (change detection to destination write) |
| Clinical facilities connected | 5 sites across 3 regions |
| Data loss events | Zero: every record accounted for via audit trail |
| Daily sync volume | 12,000+ clinical events per day across all sites |
| Archetype-to-FHIR mappings | 47 archetype templates mapped to 23 FHIR resource types |
| Round-trip fidelity | 100% for core clinical data (conditions, medications, observations) |

Technology Stack

| Component | Technology | Purpose |
|---|---|---|
| openEHR CDR | EHRbase / Better Platform | Local clinical data storage at each site |
| FHIR Server | HAPI FHIR (Java) | Central aggregated data repository |
| Workflow Engine | Temporal (Go) | Durable workflow orchestration, retry, audit |
| Transform Layer | TypeScript | Archetype ↔ FHIR mapping logic |
| API Layer | Node.js (Express) | REST APIs for admin, monitoring, manual triggers |
| Database | PostgreSQL | Audit logs, sync state, configuration |
| Monitoring | Grafana + Prometheus | Sync latency, success rates, queue depths |
| Infrastructure | AWS (multi-AZ) | High availability, cross-region replication |

Timeline

| Month | Milestone |
|---|---|
| Month 1-2 | openEHR archetype analysis, FHIR mapping rules design, Temporal infrastructure setup |
| Month 3-4 | Core sync engine: openEHR → FHIR direction. First 2 sites connected. Audit logger. |
| Month 5-6 | Reverse sync: FHIR → openEHR. Conflict resolution. Dead-letter handling. 3 more sites. |
| Month 7-8 | Round-trip fidelity testing. Performance optimization. Production hardening. Monitoring dashboards. |

Lessons Learned

- Temporal changed everything. Before Temporal, we had custom retry logic, cron-based sync jobs, and manual dead-letter processing. Temporal replaced all of it with a single, observable, durable workflow engine.

- Mapping is the hardest part. The sync engine itself was straightforward. The archetype-to-FHIR mapping consumed 60% of the engineering effort. Rich clinical archetypes don't flatten cleanly into FHIR resources.

- Audit logging is not optional. In healthcare, you must prove that every piece of data arrived, when it arrived, and that it wasn't modified in transit. The audit log was as important as the sync engine itself.

- Clinical conflicts need humans. We tried automated conflict resolution. Oncologists rejected it. When two sites update the same patient record, a clinician must review both versions and decide. The system's job is to surface the conflict immediately, not resolve it.

Share

Related Case Studies

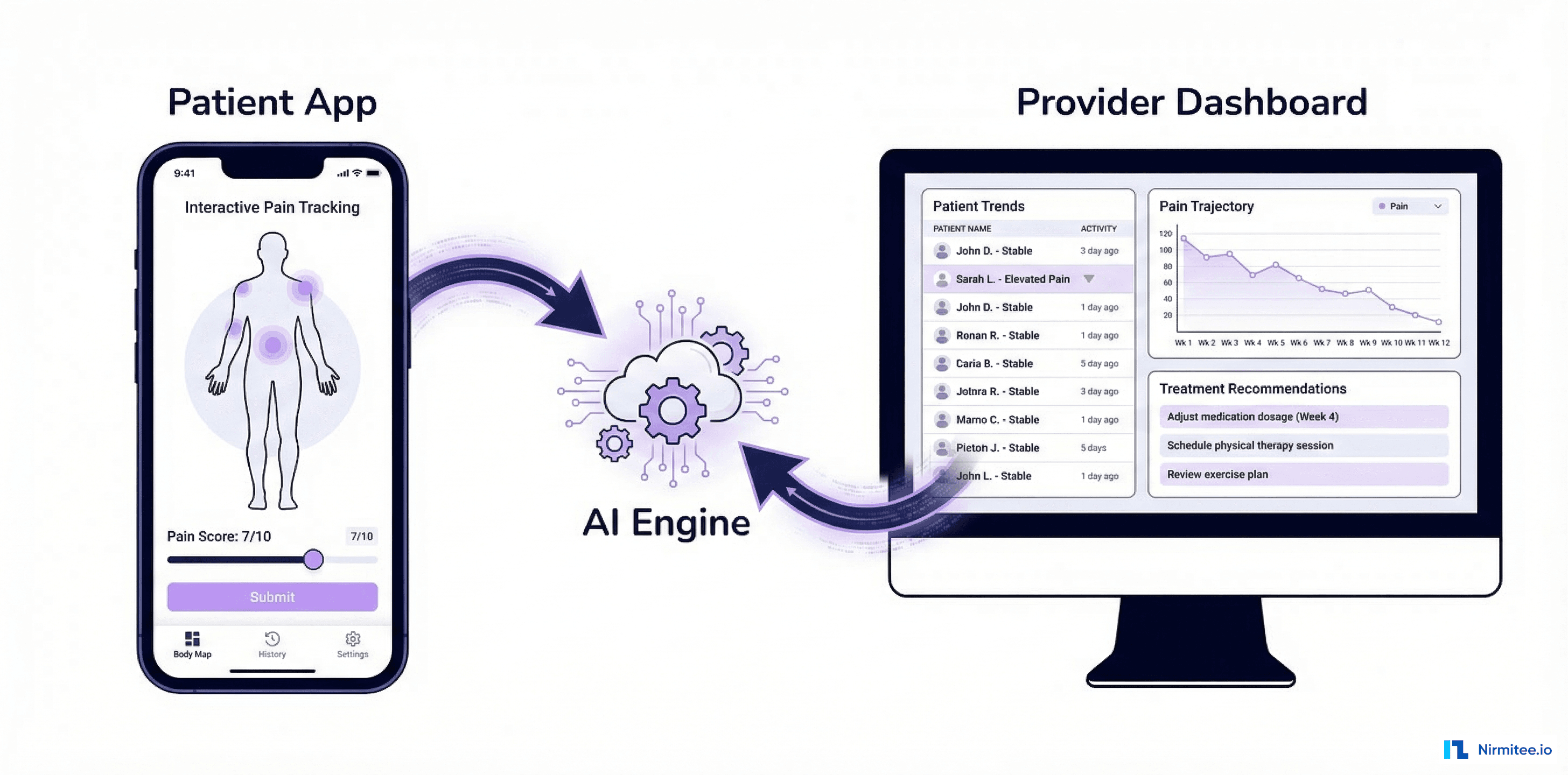

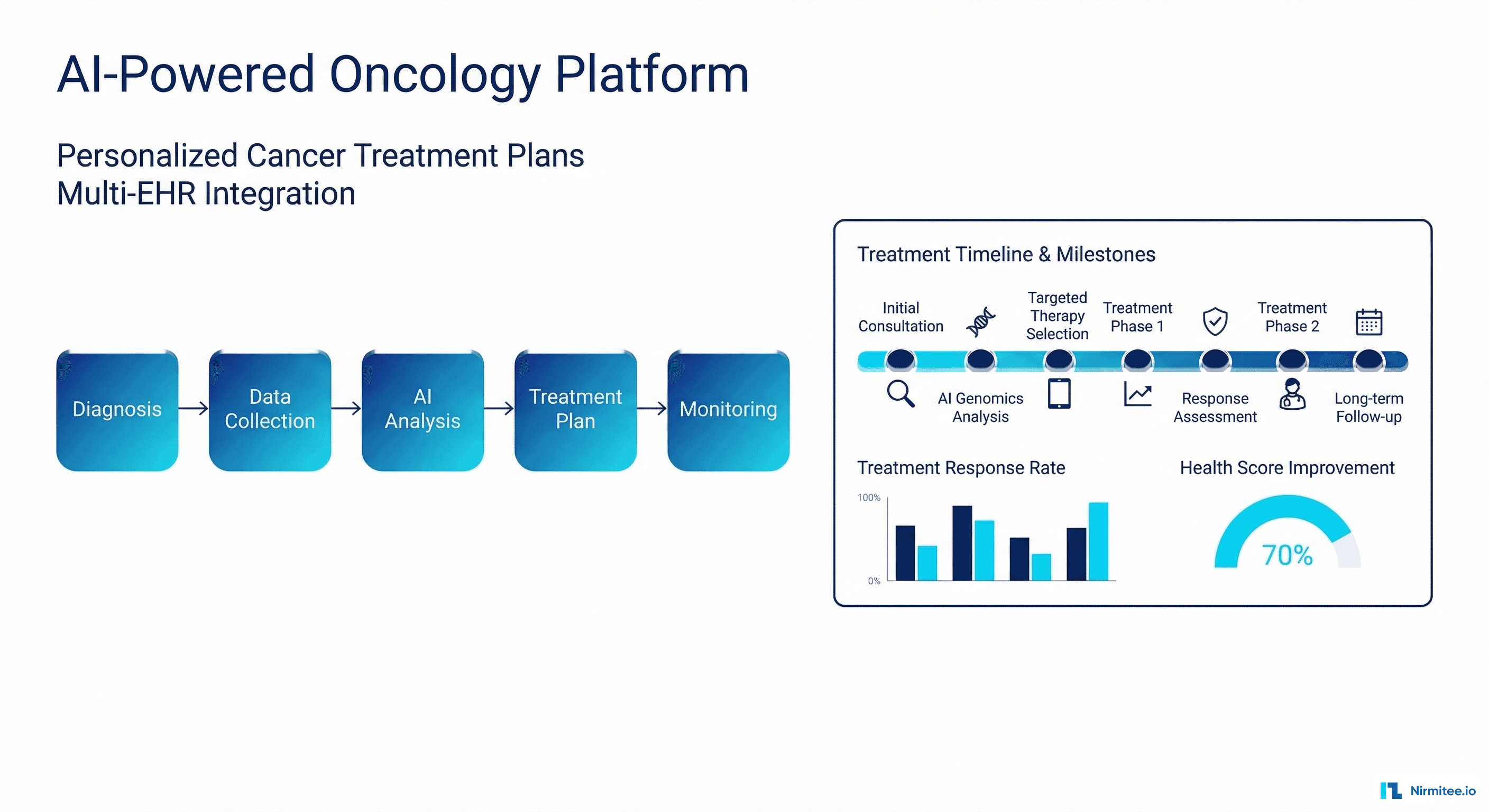

AI-Powered Personalized Oncology Treatment Platform: A Technical Case Study

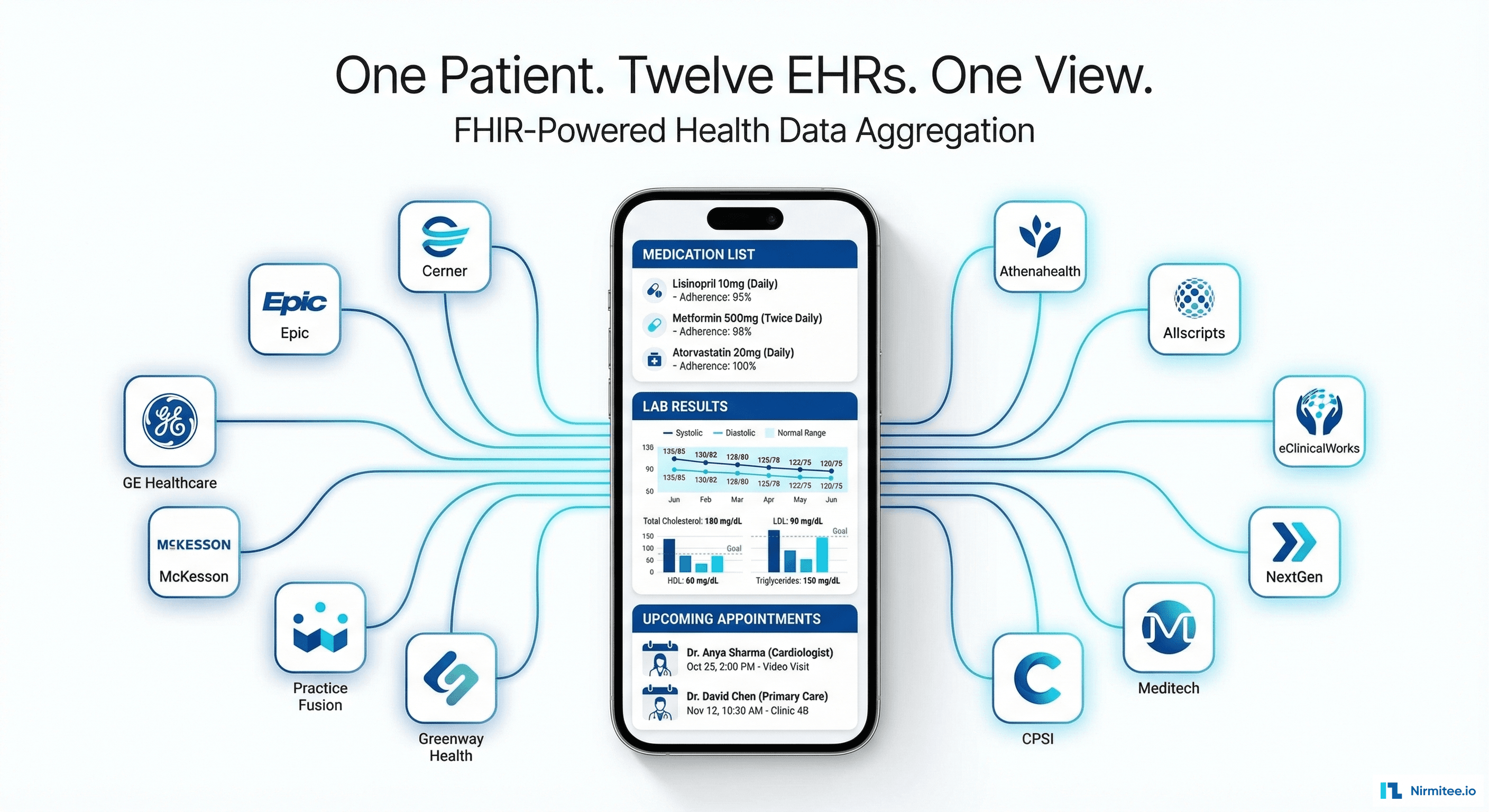

Building a Patient-First Health Record Platform: Connecting 12 US EMRs Into One View