At 2:47 AM on a Tuesday, your PagerDuty fires: "FHIR Server 5xx rate exceeds 5%." The on-call engineer -- three months into the job, never seen this alert before -- opens their laptop, stares at the Grafana dashboard, and starts guessing. They check the application logs. They restart the server. They check the database. Twenty minutes later, they discover the root cause was a connection pool leak triggered by a specific query pattern. But those twenty minutes translated to 400 failed lab result deliveries that now need manual reconciliation.

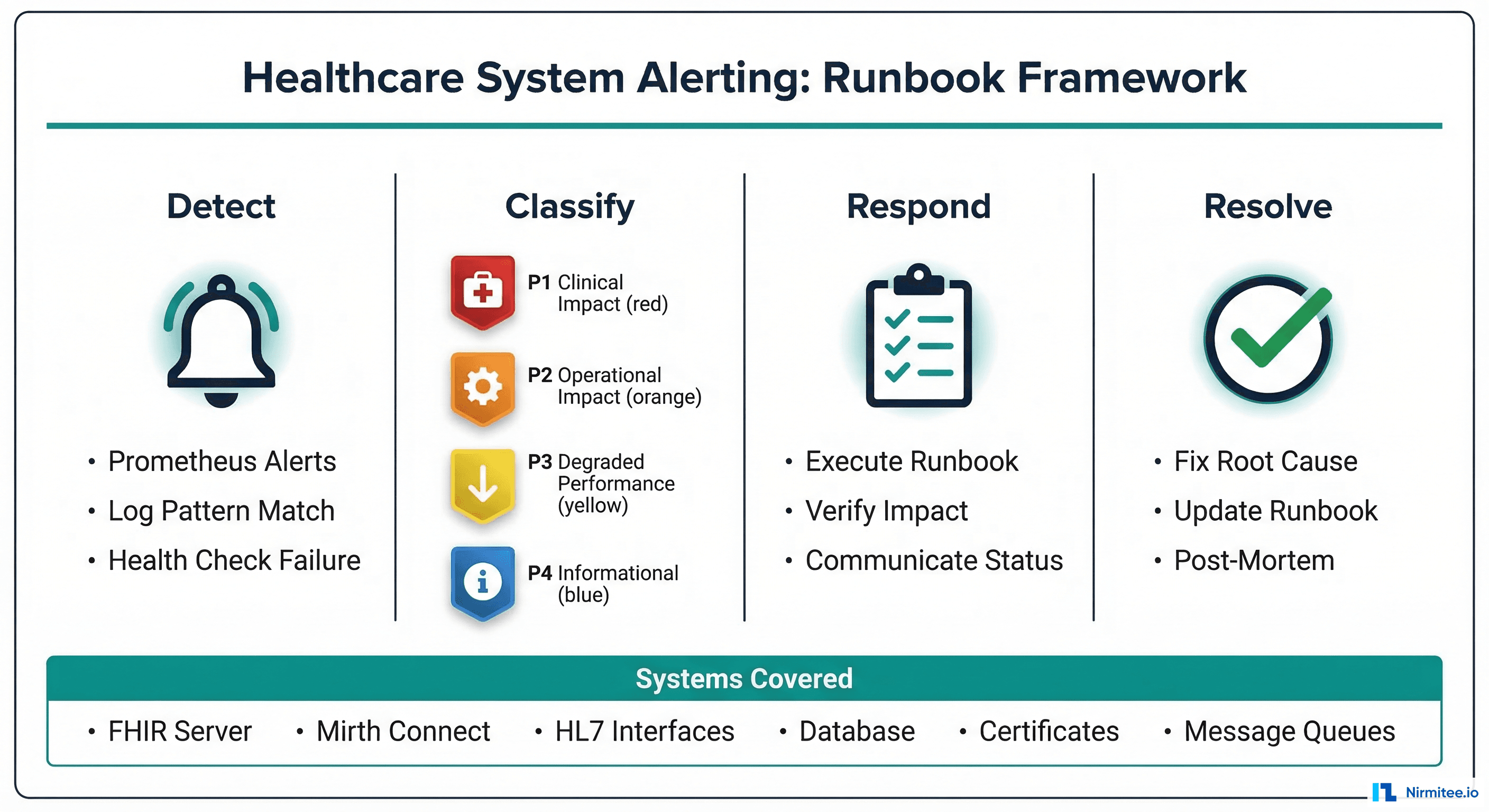

This scenario plays out at health systems every week. The alert fired correctly. The engineer responded quickly. But without a runbook -- a documented, step-by-step diagnostic and resolution procedure for that specific alert -- the response was improvised instead of systematic. In healthcare, improvised incident response carries clinical risk.

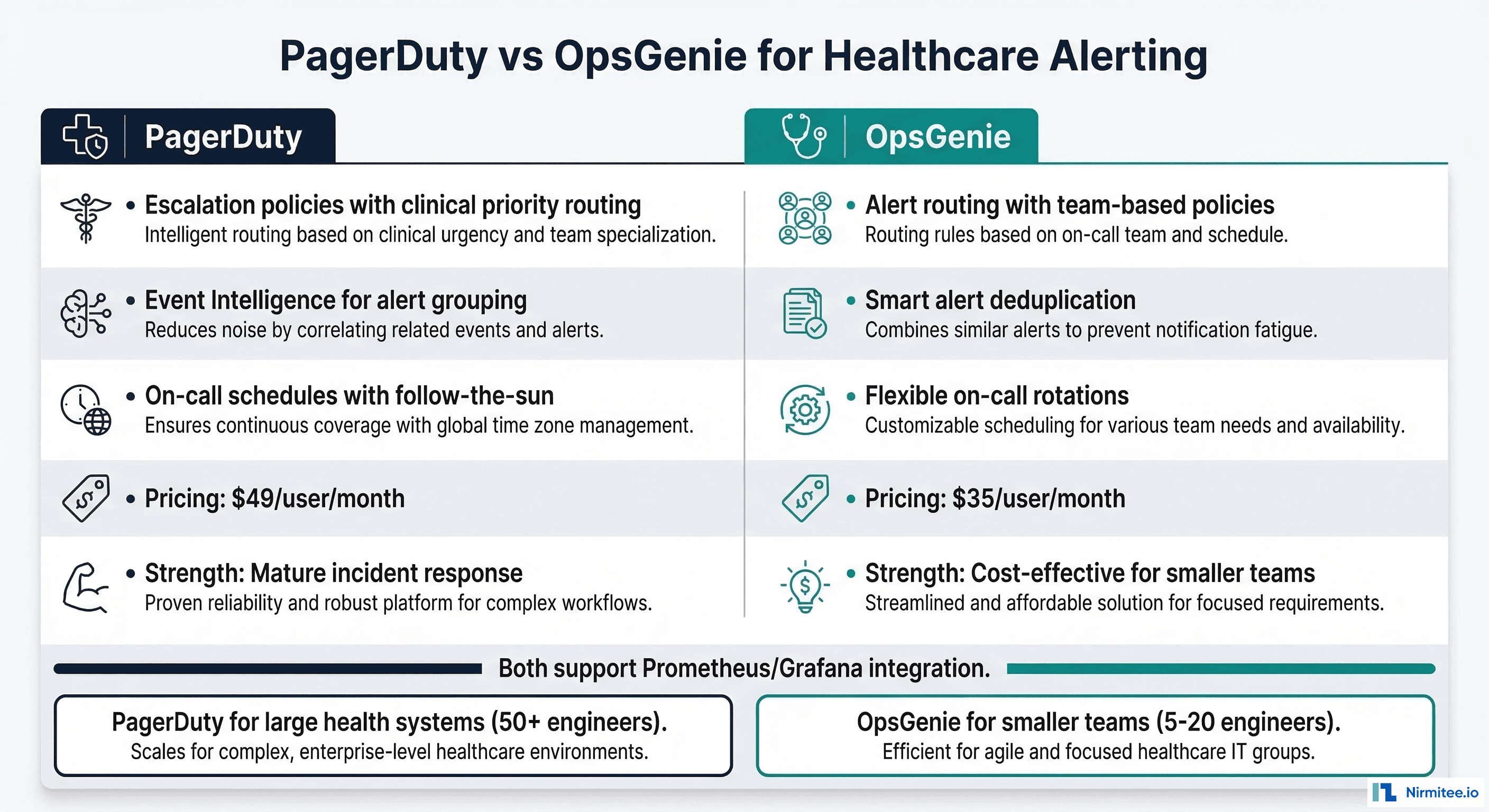

This guide provides production-ready runbook templates for the six most common healthcare system alerts, severity classification frameworks adapted for clinical impact, and PagerDuty/OpsGenie configuration to route alerts correctly. Every runbook has been tested in production environments processing millions of healthcare messages daily.

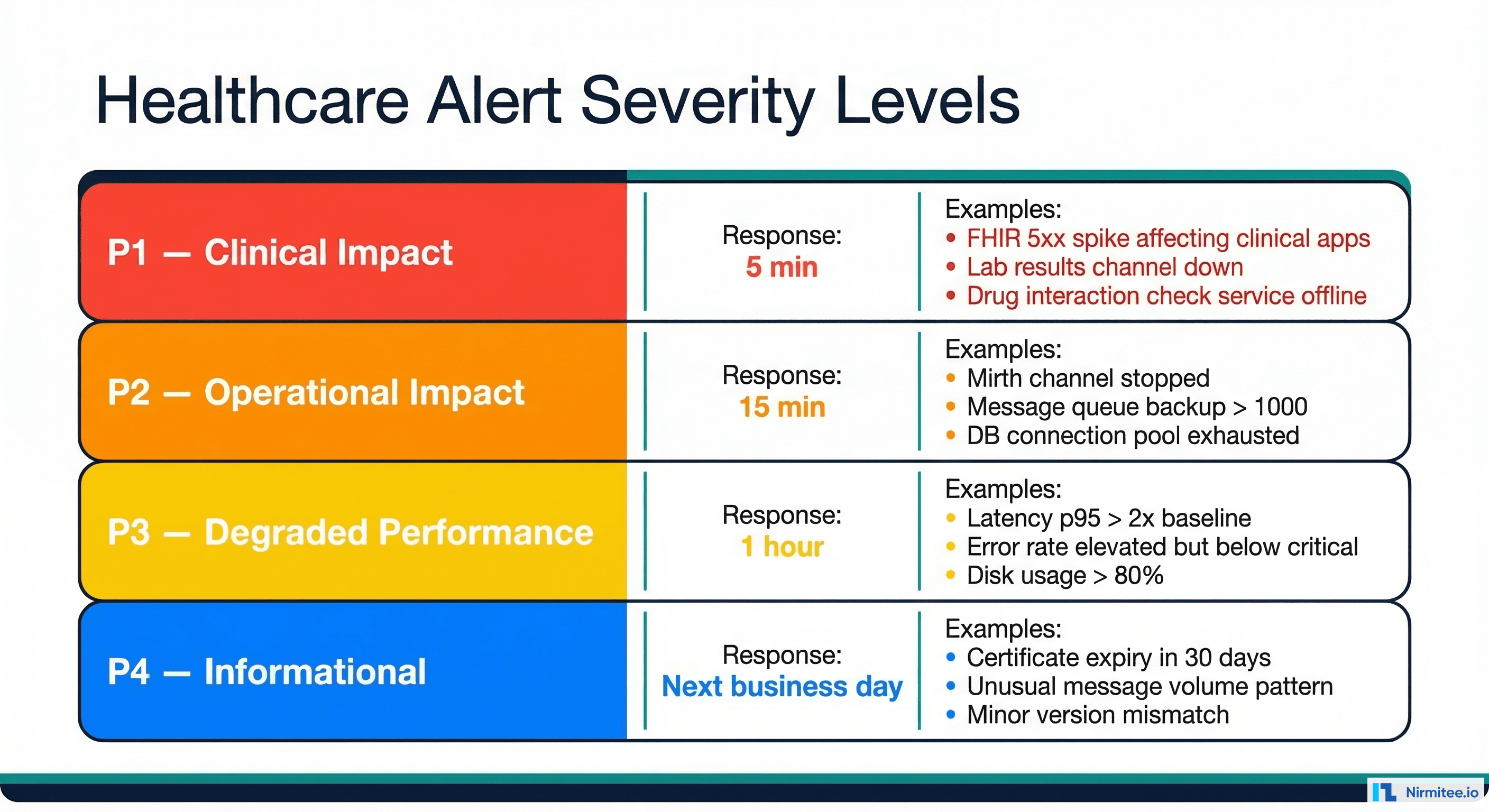

Severity Classification for Healthcare Systems

Standard IT severity levels (Sev1-Sev4) do not account for the unique dimension of healthcare alerting: clinical impact. A database running at 95% CPU is a Sev2 in most industries. In healthcare, it depends entirely on what that database serves. If it backs a drug interaction checking service, it is a clinical safety issue. If it backs a reporting system, it can wait until morning.

Healthcare-Adapted Severity Levels

| Level | Name | Response Time | Definition | Escalation |
|---|---|---|---|---|
| P1 | Clinical Impact | 5 minutes | System failure directly affecting patient care delivery, clinical decision-making, or medication safety | Immediate page to on-call + engineering lead + clinical informatics |
| P2 | Operational Impact | 15 minutes | System degradation affecting healthcare operations but no immediate clinical safety risk | Page on-call engineer, Slack alert to team |
| P3 | Degraded Performance | 1 hour | Performance degradation, elevated error rates, or capacity concerns that may escalate if unaddressed | Slack notification, ticket created |
| P4 | Informational | Next business day | Planned maintenance notifications, certificate expiry warnings, capacity trend alerts | Email notification, backlog ticket |
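The table can be read as a lookup driven by clinical impact rather than by the technical symptom. The following is a minimal Python sketch of that idea. The three boolean inputs (`affects_patient_care`, `affects_operations`, `is_degraded`) are hypothetical; in practice they would come from your own service catalog.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Severity:
    level: str
    name: str
    response_minutes: Optional[int]  # None means "next business day"


# Values copied from the severity table.
P1 = Severity("P1", "Clinical Impact", 5)
P2 = Severity("P2", "Operational Impact", 15)
P3 = Severity("P3", "Degraded Performance", 60)
P4 = Severity("P4", "Informational", None)


def classify(affects_patient_care: bool,
             affects_operations: bool,
             is_degraded: bool) -> Severity:
    """Pick the highest applicable level; clinical impact always wins."""
    if affects_patient_care:
        return P1
    if affects_operations:
        return P2
    if is_degraded:
        return P3
    return P4


# A busy database behind a drug interaction checker is a clinical issue.
drug_interaction_db = classify(affects_patient_care=True,
                               affects_operations=True,
                               is_degraded=True)
# A certificate expiry warning with no current impact is informational.
cert_warning = classify(affects_patient_care=False,
                        affects_operations=False,
                        is_degraded=False)
print(drug_interaction_db.level, cert_warning.level)
```

Checking clinical impact first means a technically minor symptom cannot mask a patient-safety problem.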

Symptom-Based vs Cause-Based Alerting

A critical distinction in healthcare alerting: alert on symptoms, not causes. Symptoms are what users experience. Causes are what engineers investigate.

- Bad (cause-based): "PostgreSQL CPU at 95%." This fires every time the database is busy, but does not tell you if anything is actually broken.

- Good (symptom-based): "FHIR search p95 latency exceeds 2 seconds for 5 minutes." This tells you users are experiencing slow responses, regardless of the underlying cause.

Cause-based alerts generate noise. Symptom-based alerts generate action. The runbooks below are organized around symptoms, with diagnostic steps that identify the underlying cause.
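To make the symptom-based rule concrete, here is a rough Python sketch of "p95 latency exceeds 2 seconds for 5 minutes". It assumes latency samples are already grouped into one list per minute. The function names and the nearest-rank percentile are illustrative choices, not how Prometheus computes quantiles.

```python
from typing import List, Sequence


def p95(samples: Sequence[float]) -> float:
    """Nearest-rank 95th percentile of a non-empty sample list."""
    ordered = sorted(samples)
    rank = (95 * len(ordered) + 99) // 100  # ceil(0.95 * n) in integer math
    return ordered[rank - 1]


def should_fire(per_minute_latencies: List[List[float]],
                threshold_s: float = 2.0,
                sustained_minutes: int = 5) -> bool:
    """Fire only if every one of the last N minutes breached the threshold."""
    recent = per_minute_latencies[-sustained_minutes:]
    if len(recent) < sustained_minutes:
        return False  # not enough history yet
    return all(len(window) > 0 and p95(window) > threshold_s
               for window in recent)


# Five consecutive minutes where 10% of requests take 3 seconds.
slow_minute = [0.5] * 18 + [3.0] * 2
print(should_fire([slow_minute] * 5))
# Four slow minutes followed by a healthy one: the alert stays quiet.
print(should_fire([slow_minute] * 4 + [[0.5] * 20]))
```

Requiring every window to breach filters out short blips, which is the role the `for:` clause plays in the alert definitions in this guide.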

PagerDuty Service Configuration

# PagerDuty service configuration for healthcare alerting
services:
  - name: "FHIR Server - Clinical"
    description: "FHIR server supporting clinical applications"
    escalation_policy: "clinical-systems-escalation"
    alert_creation: "create_alerts_and_incidents"
    auto_resolve_timeout: 14400  # 4 hours
    acknowledge_timeout: 1800    # 30 min re-alert if not ack'd
    integrations:
      - type: "prometheus"
        name: "Prometheus Alertmanager"
    incident_urgency_rule:
      type: "use_support_hours"
      during_support_hours:
        type: "constant"
        urgency: "high"
      outside_support_hours:
        type: "constant"
        urgency: "high"  # Healthcare = always high for clinical
  - name: "Integration Engine - Operational"
    description: "Mirth Connect and message processing"
    escalation_policy: "integration-team-escalation"
    alert_creation: "create_alerts_and_incidents"
    incident_urgency_rule:
      type: "use_support_hours"
      during_support_hours:
        type: "constant"
        urgency: "high"
      outside_support_hours:
        type: "constant"
        urgency: "low"  # Non-clinical can wait for business hours
escalation_policies:
  - name: "clinical-systems-escalation"
    rules:
      - escalation_delay_in_minutes: 5
        targets:
          - type: "user"
            id: "on-call-engineer"
      - escalation_delay_in_minutes: 15
        targets:
          - type: "user"
            id: "engineering-lead"
      - escalation_delay_in_minutes: 30
        targets:
          - type: "user"
            id: "vp-engineering"
          - type: "user"
            id: "clinical-informatics-lead"

Runbook 1 — FHIR Server 5xx Error Spike

Alert Definition

- alert: FhirServer5xxSpike

expr: |

sum(rate(http_server_requests_seconds_count{status=~"5.."}[5m])) /

sum(rate(http_server_requests_seconds_count[5m])) * 100 > 5

for: 5m

labels:

severity: P1

service: fhir-server

runbook: "https://wiki.internal/runbooks/fhir-5xx-spike"

annotations:

summary: "FHIR server 5xx rate at {{ $value | printf "%.1f" }}%"

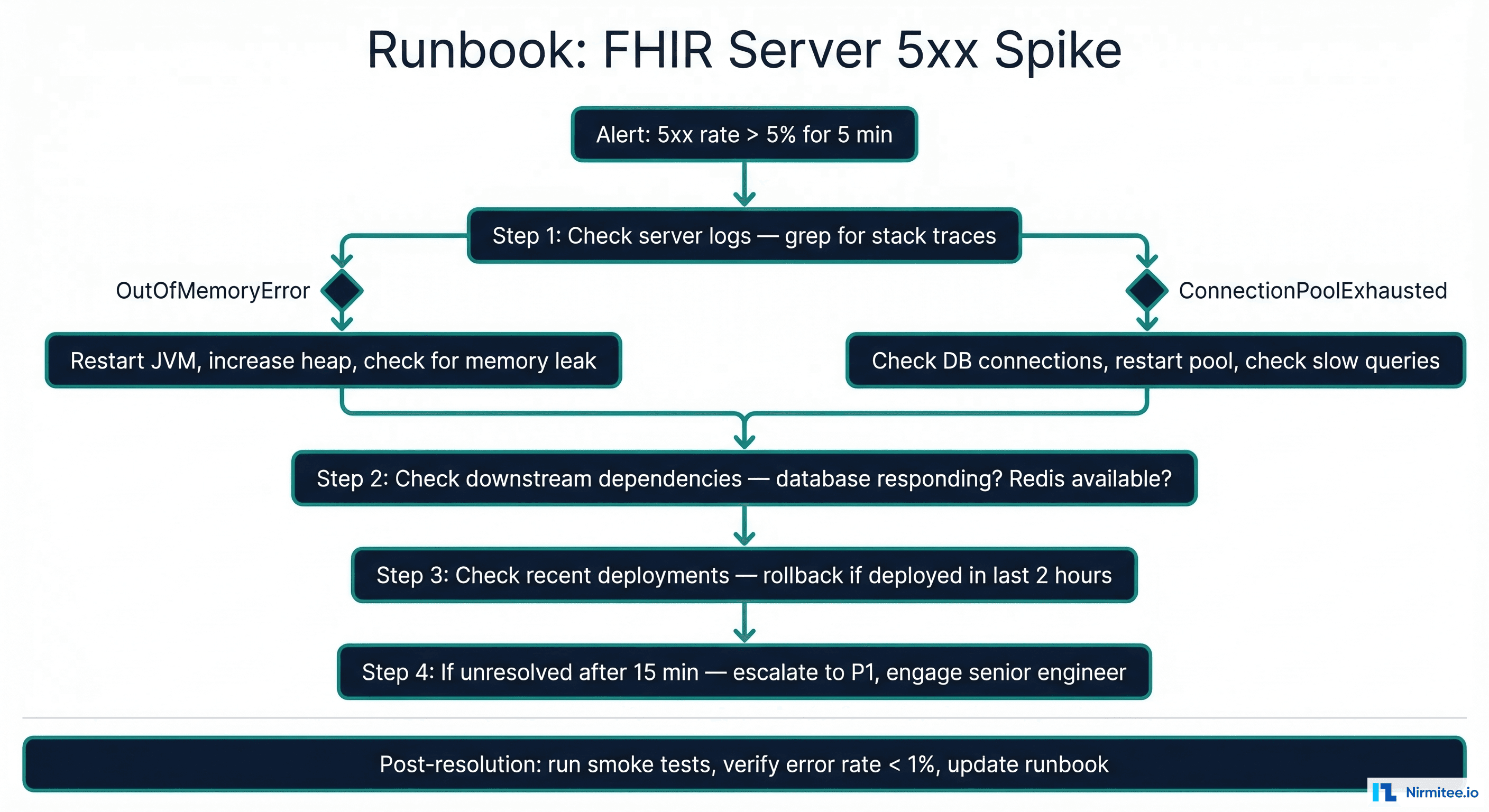

impact: "Clinical applications unable to read/write patient data"Diagnostic Steps

Step 1: Identify the error pattern (2 minutes)

# Check which endpoints are failing
curl -s http://localhost:8080/actuator/metrics/http.server.requests | jq '.availableTags[] | select(.tag=="uri") | .values[]'

# Check application logs for stack traces
journalctl -u hapi-fhir --since "5 minutes ago" | grep -E "ERROR|Exception" | head -20

# Check JVM health
curl -s http://localhost:8080/actuator/health | jq '.components'

Step 2: Classify the root cause

| Log Pattern | Likely Cause | Immediate Action |
|---|---|---|
| java.lang.OutOfMemoryError | Heap exhaustion from large queries or memory leak | Restart JVM with increased heap; investigate query patterns |
| HikariPool-1 - Connection is not available | Database connection pool exhausted | See Runbook 4 (DB Connection Pool) |
| PSQLException: FATAL: too many connections | PostgreSQL max_connections reached | Kill idle connections; increase max_connections temporarily |
| SocketTimeoutException | Downstream service (terminology server, external FHIR) timeout | Check downstream service health; increase timeout temporarily |
| SearchCoordinatorSvcImpl - Failed to load | Corrupted search result cache | Clear search cache; restart if persistent |
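The classification table lends itself to a small triage helper that counts which pattern dominates a batch of log lines. This is only a sketch: the regular expressions are transcribed from the table, and the function name `classify_log_lines` is made up for illustration.

```python
import re
from collections import Counter
from typing import Iterable, List, Tuple

# (pattern, likely cause) pairs transcribed from the classification table.
RULES = [
    (re.compile(r"java\.lang\.OutOfMemoryError"), "Heap exhaustion"),
    (re.compile(r"HikariPool-\d+ - Connection is not available"),
     "Database connection pool exhausted"),
    (re.compile(r"PSQLException: FATAL: too many connections"),
     "PostgreSQL max_connections reached"),
    (re.compile(r"SocketTimeoutException"), "Downstream service timeout"),
    (re.compile(r"SearchCoordinatorSvcImpl - Failed to load"),
     "Corrupted search result cache"),
]


def classify_log_lines(lines: Iterable[str]) -> List[Tuple[str, int]]:
    """Return (cause, count) pairs, most frequent first."""
    counts = Counter()
    for line in lines:
        for pattern, cause in RULES:
            if pattern.search(line):
                counts[cause] += 1
                break  # first matching rule wins for this line
    return counts.most_common()


sample = [
    "ERROR HikariPool-1 - Connection is not available, request timed out",
    "ERROR HikariPool-1 - Connection is not available, request timed out",
    "ERROR java.lang.OutOfMemoryError: Java heap space",
    "INFO request completed",
]
for cause, count in classify_log_lines(sample):
    print(f"{count:3d}  {cause}")
```

One way to use it is to pipe the `journalctl` output from Step 1 into a script built around this function, so the on-call engineer sees the dominant cause first.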

Step 3: Apply the fix (varies by cause)

# Emergency JVM restart (if OOM or unresponsive)
sudo systemctl restart hapi-fhir

# Wait for startup health check (typically 30-60 seconds)
until curl -sf http://localhost:8080/fhir/metadata > /dev/null; do
  sleep 5
  echo "Waiting for FHIR server to start..."
done

# Verify error rate has dropped
# (COUNT is cumulative since startup, so it should stop increasing;
#  the URL is quoted so the shell does not interpret the "?")
for i in $(seq 1 5); do
  sleep 30
  curl -s "http://localhost:8080/actuator/metrics/http.server.requests?tag=status:500" | jq '.measurements[] | select(.statistic=="COUNT") | .value'
done

Step 4: Verify and close

- Confirm 5xx rate below 1% for 15 consecutive minutes

- Run smoke tests against critical endpoints (Patient search, Observation read, CapabilityStatement)

- Check for backlogged messages in integration engine queues

- Notify the clinical informatics team of the resolution and any data gap window
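The first closing criterion, a 5xx rate below 1% for 15 consecutive minutes, is easy to misjudge from a dashboard. Here is a small sketch of the check, assuming one error-rate sample per minute, oldest first; the function name is illustrative.

```python
from typing import Sequence


def recovered(error_rates_pct: Sequence[float],
              threshold_pct: float = 1.0,
              required_minutes: int = 15) -> bool:
    """True only if the most recent N per-minute samples are all below threshold."""
    if len(error_rates_pct) < required_minutes:
        return False  # not enough observation time since the fix
    return all(rate < threshold_pct
               for rate in error_rates_pct[-required_minutes:])


# A single bad minute inside the window resets the clock.
history = [6.2, 4.0] + [0.3] * 10 + [1.5] + [0.2] * 14
print(recovered(history))
print(recovered(history + [0.2]))
```

Here the 1.5% sample falls inside the 15-minute window for the first call, so the incident should stay open. One more clean minute pushes it out of the window.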

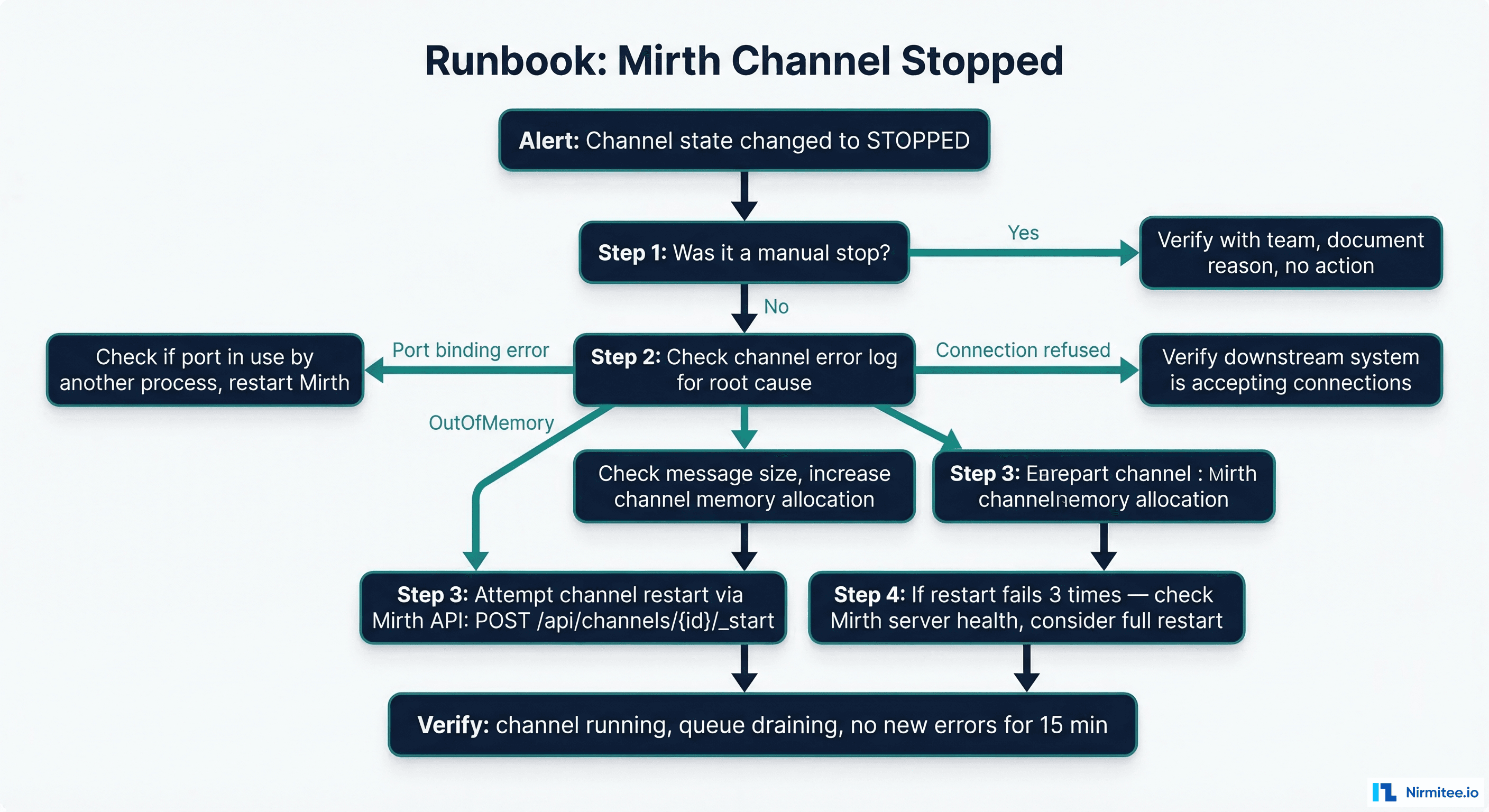

Runbook 2 — Mirth Channel Stopped

Alert Definition

- alert: MirthChannelStopped
  expr: mirth_channel_state{state="STOPPED"} == 1
  for: 2m
  labels:
    severity: P2
    service: mirth-connect
    runbook: "https://wiki.internal/runbooks/mirth-channel-stopped"
  annotations:
    summary: "Mirth channel {{ $labels.channel_name }} is STOPPED"
    impact: "Messages for this channel are queuing and not being processed"

Diagnostic Steps

Step 1: Determine if the stop was intentional (1 minute)

# Check Mirth channel status via API (replace admin:admin with real credentials)
# The Python snippet is single-quoted so its inner double quotes survive the shell
curl -s -u admin:admin https://mirth:8443/api/channels/statuses | python3 -c 'import sys, xml.etree.ElementTree as ET; root = ET.fromstring(sys.stdin.read()); [print(ch.findtext("name"), ch.findtext("state"), sep=": ") for ch in root.findall(".//dashboardStatus")]'

# Check deployment history for recent changes
curl -s -u admin:admin https://mirth:8443/api/channels/{channel_id}/history

Step 2: Check the channel error log

# Get recent errors for the stopped channel
# (JSON is requested explicitly because the snippet parses JSON; the API may otherwise return XML)
curl -s -u admin:admin -H "Accept: application/json" "https://mirth:8443/api/channels/{channel_id}/messages?status=ERROR&limit=10" | python3 -c 'import sys, json; msgs = json.load(sys.stdin); [print("Error:", m.get("errors", {}).get("content", "unknown")) for m in msgs.get("messages", [])]'

Step 3: Attempt a restart

# Start the channel via Mirth API
curl -s -X POST -u admin:admin "https://mirth:8443/api/channels/{channel_id}/_start"

# Verify channel state changed to STARTED
sleep 10
curl -s -u admin:admin "https://mirth:8443/api/channels/{channel_id}/status" | grep "state"

# Monitor for 5 minutes to ensure it stays running
for i in $(seq 1 10); do
  sleep 30
  STATE=$(curl -s -u admin:admin "https://mirth:8443/api/channels/{channel_id}/status" | grep -o "STARTED\|STOPPED")
  echo "$(date): Channel state = $STATE"
  [ "$STATE" = "STOPPED" ] && echo "Channel stopped again -- investigate root cause" && break
done

Common Root Causes and Fixes

| Symptom | Root Cause | Fix |
|---|---|---|
| Channel stops immediately after start | Port already in use by another channel or process | Check netstat -tlnp \| grep PORT; resolve conflict |
| Channel stops after processing N messages | Memory leak in custom transformer/filter code | Review custom JavaScript; add memory limits |
| Channel stops with SSL error | Certificate expired or trust store outdated | Update certificate; see Runbook 5 (Certificate Expiry) |
| Channel stops with database error | Mirth internal DB (Derby/PostgreSQL) issue | Check Mirth DB connectivity; restart Mirth service |

Runbook 3 — HL7 Parsing Failures

Alert Definition

- alert: HL7ParsingFailureSpike
  expr: |
    sum(rate(mirth_messages_errored_total{error_type="PARSE"}[5m])) by (channel_name) /
    sum(rate(mirth_messages_received_total[5m])) by (channel_name) * 100 > 2
  for: 10m
  labels:
    severity: P2
    service: mirth-connect
    runbook: "https://wiki.internal/runbooks/hl7-parsing-failures"
  annotations:
    # Single-quoted so the inner "%.1f" does not terminate the YAML string
    summary: 'HL7 parsing failure rate at {{ $value | printf "%.1f" }}% on {{ $labels.channel_name }}'

Diagnostic Steps

Step 1: Get sample failed messages

# Extract the raw content of recent parsing failures
# (JSON is requested explicitly because the snippet parses JSON)
curl -s -u admin:admin -H "Accept: application/json" "https://mirth:8443/api/channels/{channel_id}/messages?status=ERROR&limit=5" | python3 -c "
import sys, json
msgs = json.loads(sys.stdin.read())
for m in msgs.get('messages', []):
    raw = m.get('rawData', '')
    print('--- Message ---')
    print(raw[:500])
    print()
"

Step 2: Identify the parsing failure pattern

| Pattern | Cause | Fix |
|---|---|---|
| Missing MSH segment | Upstream sending partial messages (TCP fragmentation) | Increase MLLP receive timeout; enable message reassembly |
| Wrong field separator | Upstream changed encoding characters | Update channel source encoding settings |
| Invalid date format in fields | Upstream sending non-HL7 date formats | Add pre-processing transformer to normalize dates |
| Unexpected segment order | Upstream EHR version upgrade changed message structure | Update channel filter/transformer for new structure |
| Binary content in message | Base64-encoded PDF/image in OBX-5 not properly encoded | Add binary content handler in transformer |
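Several of these patterns can be spotted mechanically in the sample messages from Step 1. The sketch below checks only the MSH header (segment presence, field separator, MSH-7 timestamp, MSH-9 message type). It is a rough triage aid under those assumptions, not an HL7 parser, and `triage_hl7` is a hypothetical helper name.

```python
import re
from typing import List

# HL7 v2 timestamp: YYYY[MM[DD[HH[MM[SS[.fraction]]]]]] plus optional +/-ZZZZ offset.
HL7_TS = re.compile(
    r"\d{4}(\d{2}(\d{2}(\d{2}(\d{2}(\d{2}(\.\d{1,4})?)?)?)?)?)?([+-]\d{4})?"
)


def triage_hl7(raw: str) -> List[str]:
    """Return a list of findings for one raw message; empty means the header looks sane."""
    findings = []
    body = raw.strip("\x0b\x1c\r\n ")  # drop MLLP framing bytes and whitespace
    segments = [s for s in re.split(r"[\r\n]+", body) if s]
    if not segments or not segments[0].startswith("MSH") or len(segments[0]) < 4:
        return ["Missing MSH segment"]

    msh = segments[0]
    separator = msh[3]  # MSH-1 is the field separator itself
    if separator != "|":
        findings.append("Unexpected field separator: %r" % separator)

    # Because MSH-1 is the separator, MSH-n lands at index n-1 after splitting.
    fields = msh.split(separator)
    timestamp = fields[6] if len(fields) > 6 else ""
    if timestamp and not HL7_TS.fullmatch(timestamp):
        findings.append("Invalid date format in MSH-7")
    message_type = fields[8] if len(fields) > 8 else ""
    if not message_type:
        findings.append("Empty MSH-9 (message type)")
    return findings


partial = "PID|1||12345\rOBX|1|TX"
bad_date = "MSH|^~\\&|LAB|HOSP|EHR|HOSP|01/02/2024 12:00||ORU^R01|1|P|2.5"
print(triage_hl7(partial))
print(triage_hl7(bad_date))
```

A separator other than `|` is legal in HL7 v2 but rare in practice, so the sketch reports it as a finding instead of rejecting the message.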

Step 3: Temporary mitigation

# Route failing messages to error queue for manual review

# Add this to the channel source transformer:

if (msg['MSH']['MSH.9']['MSH.9.1'].toString() === '') {

// Message has no message type -- route to error queue

destinationSet.removeAll();

destinationSet.add('error-queue');

logger.error('Missing message type in MSH.9 -- routed to error queue');

}

// For bulk reprocessing after fix is deployed:

// Mirth API: POST /api/channels/{id}/messages/_reprocess

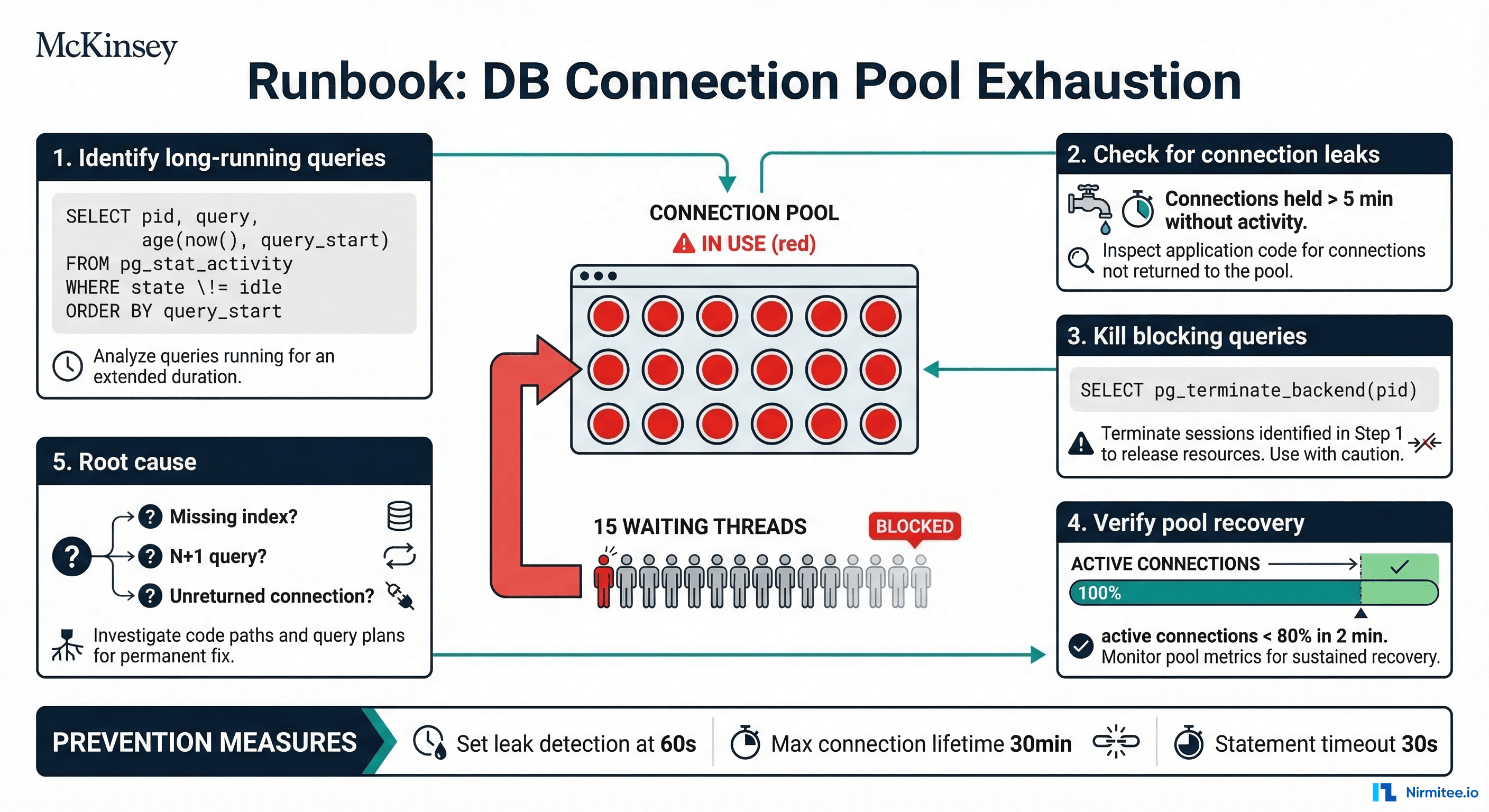

// with body containing message IDs to reprocessRunbook 4 — DB Connection Pool Exhaustion

Alert Definition

- alert: DBConnectionPoolExhausted
  expr: |
    hikaricp_connections_active / hikaricp_connections_max > 0.9
  for: 5m
  labels:
    severity: P2
    service: database
    runbook: "https://wiki.internal/runbooks/db-pool-exhausted"
  annotations:
    # $value is a 0-1 ratio here, so humanizePercentage renders it as a percentage
    summary: "Connection pool at {{ $value | humanizePercentage }} capacity"

Diagnostic Steps

Step 1: Identify what is holding connections (2 minutes)

# Find long-running queries holding connections
psql -h db-host -U hapi -d hapi -c "
SELECT pid,
       now() - pg_stat_activity.query_start AS duration,
       query,
       state,
       wait_event_type,
       wait_event
FROM pg_stat_activity
WHERE (now() - pg_stat_activity.query_start) > interval '30 seconds'
  AND state != 'idle'
ORDER BY duration DESC
LIMIT 10;
"

Step 2: Identify blocking locks

# Find blocked and blocking queries
psql -h db-host -U hapi -d hapi -c "
SELECT blocked_locks.pid AS blocked_pid,
       blocked_activity.usename AS blocked_user,
       blocking_locks.pid AS blocking_pid,
       blocking_activity.usename AS blocking_user,
       blocked_activity.query AS blocked_statement,
       blocking_activity.query AS blocking_statement
FROM pg_catalog.pg_locks blocked_locks
JOIN pg_catalog.pg_stat_activity blocked_activity ON blocked_activity.pid = blocked_locks.pid
JOIN pg_catalog.pg_locks blocking_locks
  ON blocking_locks.locktype = blocked_locks.locktype
  AND blocking_locks.relation IS NOT DISTINCT FROM blocked_locks.relation
  AND blocking_locks.page IS NOT DISTINCT FROM blocked_locks.page
  AND blocking_locks.tuple IS NOT DISTINCT FROM blocked_locks.tuple
  AND blocking_locks.pid != blocked_locks.pid
JOIN pg_catalog.pg_stat_activity blocking_activity ON blocking_activity.pid = blocking_locks.pid
WHERE NOT blocked_locks.granted;
"

Step 3: Emergency relief

# Kill the longest-running non-idle queries (CAUTION: may lose in-progress transactions)

psql -h db-host -U hapi -d hapi -c "

SELECT pg_terminate_backend(pid)

FROM pg_stat_activity

WHERE (now() - pg_stat_activity.query_start) > interval '5 minutes'

AND state != 'idle'

AND pid != pg_backend_pid();

"

# Verify connections freed

psql -h db-host -U hapi -d hapi -c "

SELECT count(*) as active_connections,

(SELECT setting::int FROM pg_settings WHERE name='max_connections') as max_connections

FROM pg_stat_activity

WHERE state != 'idle';

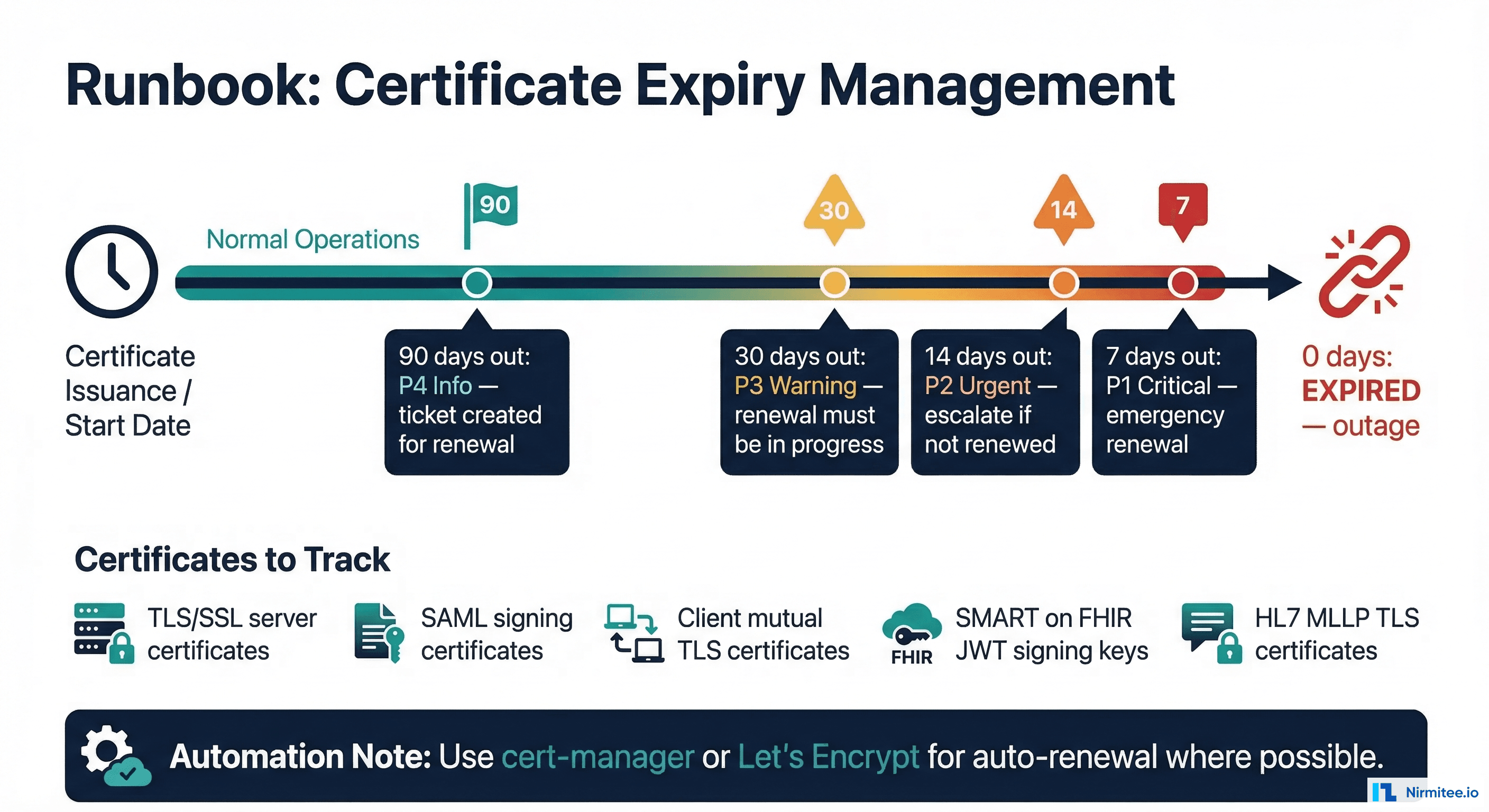

"Runbook 5 — Certificate Expiry

Alert Definition

- alert: CertificateExpiringSoon
  expr: probe_ssl_earliest_cert_expiry - time() < 86400 * 30
  for: 1h
  labels:
    severity: P3
    service: certificates
    runbook: "https://wiki.internal/runbooks/cert-expiry"
  annotations:
    summary: "Certificate for {{ $labels.instance }} expires in {{ $value | humanizeDuration }}"

- alert: CertificateExpiringCritical
  expr: probe_ssl_earliest_cert_expiry - time() < 86400 * 7
  for: 10m
  labels:
    severity: P1
    service: certificates
    runbook: "https://wiki.internal/runbooks/cert-expiry"
  annotations:
    summary: "CRITICAL: Certificate for {{ $labels.instance }} expires in {{ $value | humanizeDuration }}"

Certificate Inventory

Healthcare systems typically manage certificates across several endpoints. The following script checks the expiry date of each one:

#!/bin/bash
# Automated certificate check script (uses GNU date for -d)

ENDPOINTS=(
  "fhir.hospital.internal:443"
  "mirth.hospital.internal:8443"
  "auth.hospital.internal:443"
  "api.hospital.internal:443"
)

for endpoint in "${ENDPOINTS[@]}"; do
  EXPIRY=$(echo | openssl s_client -servername "${endpoint%%:*}" -connect "$endpoint" 2>/dev/null | openssl x509 -noout -enddate 2>/dev/null | cut -d= -f2)
  # Skip endpoints that could not be reached instead of reporting a bogus day count
  if [ -z "$EXPIRY" ]; then
    echo "UNKNOWN: could not read certificate from $endpoint"
    continue
  fi
  DAYS=$(( ($(date -d "$EXPIRY" +%s) - $(date +%s)) / 86400 ))
  if [ "$DAYS" -lt 7 ]; then
    echo "CRITICAL: $endpoint expires in $DAYS days ($EXPIRY)"
  elif [ "$DAYS" -lt 30 ]; then
    echo "WARNING: $endpoint expires in $DAYS days ($EXPIRY)"
  else
    echo "OK: $endpoint expires in $DAYS days ($EXPIRY)"
  fi
done

Renewal Process

- Generate CSR with the same Subject Alternative Names (SANs) as the current certificate

- Submit to CA (internal PKI or public CA like DigiCert)

- Test the new certificate in the staging environment before production deployment

- Deploy during maintenance window -- coordinate with clinical operations for any service restart

- Verify that all dependent systems can connect with the new certificate

- Update trust stores on all clients if the CA chain changed
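When scheduling a renewal, it helps to have the 7-day and 30-day thresholds from this runbook as a pure function that can be unit-tested without touching the network. The sketch below assumes the `notAfter` string format printed by `openssl x509 -enddate`; the function name `classify_expiry` is illustrative.

```python
import ssl
from typing import Tuple

SECONDS_PER_DAY = 86400


def classify_expiry(not_after: str, now_epoch: float) -> Tuple[str, int]:
    """Map a certificate notAfter string to (status, whole days remaining)."""
    # ssl.cert_time_to_seconds parses e.g. "Jan  4 00:00:00 2030 GMT" into epoch seconds.
    expires_epoch = ssl.cert_time_to_seconds(not_after)
    days_left = int((expires_epoch - now_epoch) // SECONDS_PER_DAY)
    if days_left < 7:
        status = "CRITICAL"
    elif days_left < 30:
        status = "WARNING"
    else:
        status = "OK"
    return status, days_left


# A fixed "now" keeps the example deterministic.
now = ssl.cert_time_to_seconds("Jan  1 00:00:00 2030 GMT")
for not_after in ("Jan  4 00:00:00 2030 GMT",
                  "Jan 21 00:00:00 2030 GMT",
                  "Mar  1 00:00:00 2030 GMT"):
    print(not_after, classify_expiry(not_after, now))
```

Passing `now_epoch` explicitly, instead of reading the clock inside the function, is what makes the thresholds testable.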

Runbook 6 — Message Queue Backup

Alert Definition

- alert: MessageQueueBackup
  expr: |
    rabbitmq_queue_messages > 5000 OR
    mirth_channel_queue_depth > 1000
  for: 15m
  labels:
    severity: P2
    service: message-queue
    runbook: "https://wiki.internal/runbooks/queue-backup"
  annotations:
    summary: "Message queue backup: {{ $value }} messages pending on {{ $labels.queue }}"

Diagnostic Steps

# Step 1: Identify which queue is backed up
rabbitmqctl list_queues name messages consumers | sort -k2 -rn | head -10

# Step 2: Check consumer health
rabbitmqctl list_consumers | grep -v "running"

# Step 3: Check message processing rate
# If consumers are connected but not processing, check for poison messages
rabbitmqctl list_queues name messages_ready messages_unacknowledged

# Step 4: If unacknowledged count is high, consumers are stuck
# Possible causes: downstream timeout, processing loop, resource exhaustion

Recovery Procedure

# If consumers are stuck, restart consumer processes
sudo systemctl restart mirth-connect

# If queue contains poison messages (messages that crash consumers):
# Move to dead letter queue for analysis
# (this eval calls an internal broker function; confirm it against your
#  RabbitMQ version in a test environment before running it in production)
rabbitmqctl eval 'rabbit_amqqueue:deliver([<<"dead-letter-exchange">>], <<"queue-name">>, 100).'

# For large backlogs (10K+ messages), increase consumer concurrency temporarily
# In Mirth: increase channel thread count from default 1 to 5-10
# In RabbitMQ: add more consumer instances
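Before raising concurrency, a quick estimate of how long the backlog will take to drain helps decide how many consumers to add. This is back-of-the-envelope arithmetic, assuming steady arrival and per-consumer processing rates; all the numbers in the example are invented for illustration.

```python
from typing import Optional


def drain_minutes(backlog: int,
                  arrival_per_min: float,
                  per_consumer_per_min: float,
                  consumers: int) -> Optional[float]:
    """Minutes until the queue is empty, or None if it will never drain."""
    net_rate = per_consumer_per_min * consumers - arrival_per_min
    if net_rate <= 0:
        return None  # consumers cannot keep up; the backlog keeps growing
    return backlog / net_rate


# 10,000 queued messages, 200 arriving per minute, 100 processed per consumer per minute.
for n in (1, 2, 5, 10):
    eta = drain_minutes(10_000, 200, 100, n)
    label = "never" if eta is None else f"{eta:.1f} min"
    print(f"{n:2d} consumers -> {label}")
```

With these assumed rates, one or two consumers never catch up. That is the situation where restarting the consumer without adding concurrency only delays the next alert.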

OpsGenie Alternative Configuration

For teams using OpsGenie instead of PagerDuty, here is the equivalent alert routing configuration:

# OpsGenie team and routing configuration
teams:
  - name: "Healthcare Integration"
    members:
      - user: "oncall-engineer@hospital.org"
        role: "admin"
      - user: "integration-lead@hospital.org"
        role: "admin"
    routing_rules:
      - name: "Clinical P1 Alerts"
        conditions:
          - field: "tags"
            operation: "contains"
            expectedValue: "P1"
        notify:
          type: "escalation"
          name: "clinical-escalation"
      - name: "Operational P2 Alerts"
        conditions:
          - field: "tags"
            operation: "contains"
            expectedValue: "P2"
        time_restrictions:
          type: "weekday-and-time-of-day"
          restrictions:
            - startDay: "monday"
              endDay: "friday"
              startHour: 7
              endHour: 19
        notify:
          type: "schedule"
          name: "integration-oncall"
escalations:
  - name: "clinical-escalation"
    rules:
      - delay: 0
        notify:
          - type: "schedule"
            name: "integration-oncall"
      - delay: 10
        notify:
          - type: "user"
            name: "engineering-lead@hospital.org"
      - delay: 25
        notify:
          - type: "user"
            name: "vp-engineering@hospital.org"

Building a Runbook Culture

Writing the initial runbooks is the easy part. Keeping them accurate and useful requires discipline:

- Every incident updates a runbook. After resolving any P1 or P2 incident, the on-call engineer updates the relevant runbook with what they learned. New failure modes get new runbooks.

- Monthly runbook review. The interface team reviews all runbooks monthly, removing outdated procedures and verifying that alert thresholds still match current system behavior.

- Runbook drill. Once per quarter, simulate each P1 alert and have a team member walk through the runbook. Time the response. If it takes longer than the target response time, simplify the runbook.

- Link runbooks to alerts. Every PagerDuty/OpsGenie alert annotation must include a direct URL to the relevant runbook. An alert without a runbook link is an alert that will be responded to with guesswork.
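The last rule is the easiest to enforce mechanically. A minimal sketch of a lint check follows. It assumes the alert rules have already been loaded into Python dictionaries (for example by a YAML parser) and that the link lives in `labels.runbook`, as in the alert definitions in this guide. The alert names and URL in the example are placeholders.

```python
from typing import Dict, List


def alerts_missing_runbook(rules: List[Dict]) -> List[str]:
    """Return the names of alert rules that have no usable runbook URL."""
    missing = []
    for rule in rules:
        url = rule.get("labels", {}).get("runbook", "")
        if not url.startswith(("http://", "https://")):
            missing.append(rule.get("alert", "<unnamed>"))
    return missing


# Placeholder rules for illustration only.
example_rules = [
    {"alert": "ExampleWithRunbook",
     "labels": {"severity": "P1",
                "runbook": "https://wiki.internal/runbooks/example"}},
    {"alert": "ExampleWithoutRunbook",
     "labels": {"severity": "P2"}},
]
print(alerts_missing_runbook(example_rules))
```

Running a check like this in CI for the alert rules repository turns the "every alert links a runbook" policy into a failing build instead of a 3 AM surprise.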

FAQ

How many runbooks should a healthcare integration team maintain?

Start with 6-8 runbooks covering the most common alert types (the ones in this guide). Expand as you encounter new failure modes. A mature team with 20+ integration channels typically maintains 15-20 runbooks.

Should runbooks be automated or manual?

Start manual, then automate the diagnostic steps. A runbook that automatically collects diagnostic data (logs, metrics, connection states) and presents it alongside the alert saves the most time. Automate remediation only for well-understood, low-risk actions like restarting a stopped Mirth channel. Never automate actions that could cause data loss without human approval.

How do I handle alerts for systems I do not own (e.g., vendor-hosted EHR)?

Create runbooks that focus on what you can control: verify the issue is not on your side (network, firewall, credentials), document the vendor's support contact and escalation path, capture diagnostic evidence (timestamps, error messages, network traces) to share with the vendor, and implement workarounds (queue messages for replay when the vendor system recovers).

What is the right on-call rotation for a healthcare integration team?

Weekly rotations with a primary and secondary on-call. The primary handles P1/P2 alerts. The secondary handles P3 if the primary is engaged with a higher-severity incident. Ensure at least 2 people can respond to any runbook -- single points of knowledge failure are as dangerous as single points of system failure. For teams under 4 engineers, consider a managed NOC for overnight coverage with escalation to the on-call engineer for P1 events.
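As an illustration of the primary/secondary pattern, the sketch below generates a weekly round-robin schedule in which next week's primary serves as this week's secondary. The engineer names and start date are placeholders, and real schedules would also need to handle holidays and swaps.

```python
from datetime import date, timedelta
from typing import List, Tuple


def weekly_rotation(engineers: List[str],
                    start: date,
                    weeks: int) -> List[Tuple[date, str, str]]:
    """Return (week_start, primary, secondary) for each week."""
    if len(engineers) < 2:
        raise ValueError("need at least two engineers for primary and secondary")
    schedule = []
    for week in range(weeks):
        primary = engineers[week % len(engineers)]
        # The secondary is the next person in line, so they become primary next week.
        secondary = engineers[(week + 1) % len(engineers)]
        schedule.append((start + timedelta(weeks=week), primary, secondary))
    return schedule


for week_start, primary, secondary in weekly_rotation(
        ["ana", "ben", "chi"], date(2025, 1, 6), 4):
    print(week_start.isoformat(), "primary:", primary, "secondary:", secondary)
```

Having the secondary shadow the week before taking primary is one simple way to spread runbook knowledge across the team.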