In February 2026, the U.S. Department of Health and Human Services announced that nearly 500 million health records had been exchanged through TEFCA, the Trusted Exchange Framework and Common Agreement. That number was roughly 10 million in January 2025. In just over a year, the national interoperability network achieved a 4,900% increase in health data exchange. By any measure, healthcare connectivity is no longer a dream. It is an operational reality.

Yet ask any clinician receiving those records whether the data they receive is useful, and you will hear a different story entirely. A cardiologist pulls up a patient's transferred records and finds 200 pages of C-CDA documents — lab results without clinical context, medication lists that may or may not be current, diagnosis codes from three different terminology systems, and no indication of what is actually relevant to the chest pain presentation in front of them. The data arrived. But it cannot be acted upon.

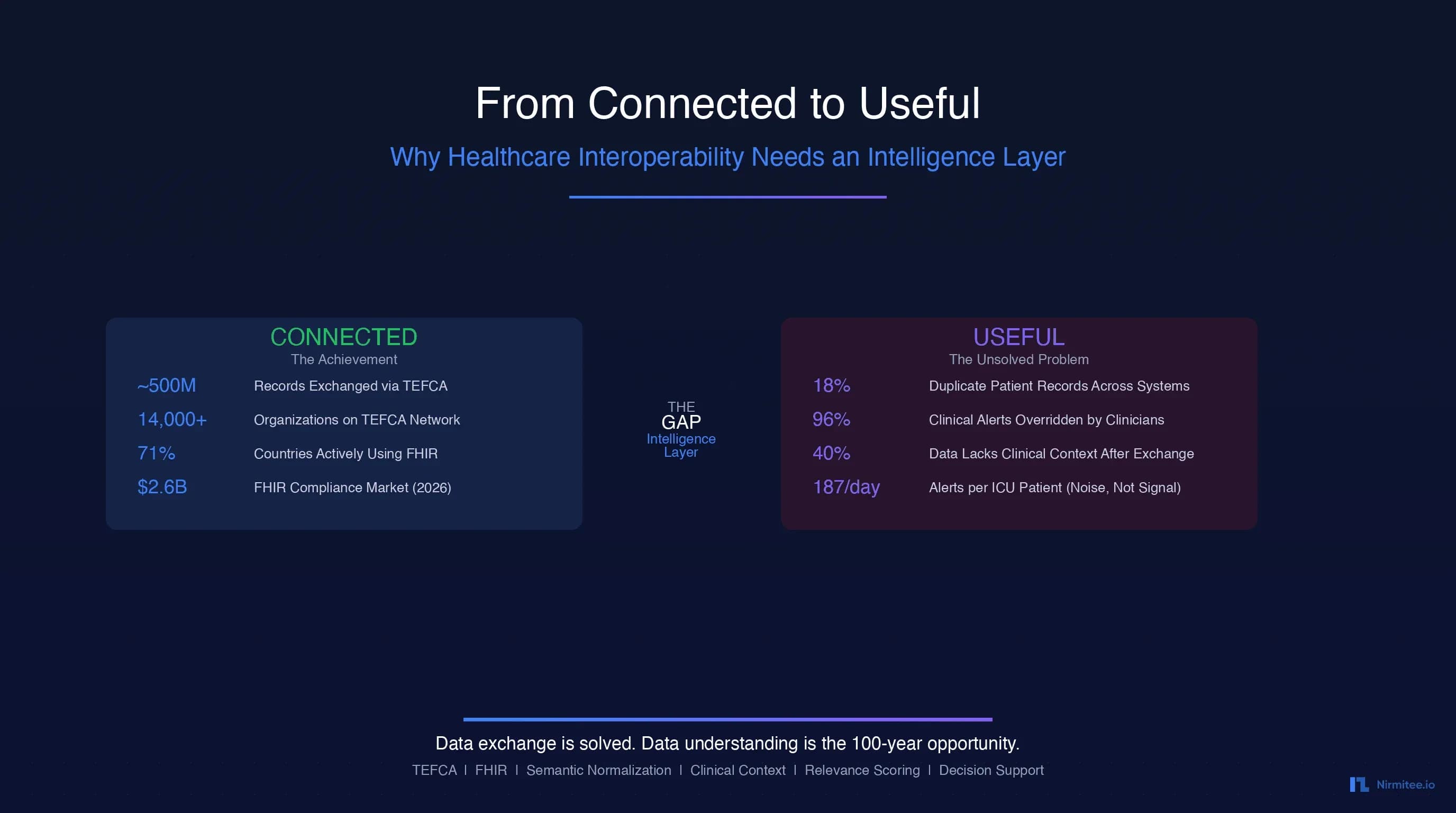

This is the paradox at the center of healthcare's next decade: we have solved the connectivity problem, but we have not solved the usefulness problem. The data flows. It just doesn't make sense when it gets there.

This essay argues that the next great infrastructure layer in healthcare is not another exchange protocol or another API standard. It is an intelligence layer — a set of capabilities that sit between raw data exchange and clinical decision-making, transforming exchanged data into understood, contextualized, prioritized, and actionable information. The company or ecosystem that builds this intelligence layer will not just build a product. It will build infrastructure that healthcare depends on for the next hundred years.

The Achievement: Healthcare Is Now Connected

Before we discuss what is missing, we should acknowledge what has been accomplished. The scale of healthcare connectivity in 2026 is historically unprecedented.

TEFCA: The National Network Is Real

TEFCA went live in December 2023 and has since connected thousands of organizations through Qualified Health Information Networks (QHINs). As of early 2026, the network has facilitated nearly 500 million record exchanges — a volume that would have been unimaginable even five years ago. The White House convened over 60 technology companies, payers, providers, and app vendors who signed commitments to interoperability objectives in Q1 2026.

FHIR Adoption Has Hit Critical Mass

According to the 2025 State of FHIR survey, 71% of respondents report FHIR is actively used in their country for at least a few use cases, up from 66% in 2024. In the United States, FHIR app adoption in outpatient settings climbed from 49% in 2021 to 64% in 2024. The HL7 FHIR compliance market is valued at $2.6 billion in 2026 and is projected to reach $8.6 billion by 2036. Meanwhile, 78% of surveyed countries now have regulations governing electronic health data exchange, and 73% explicitly mandate or recommend FHIR usage — up from 56% in 2023.

Regulatory Force Has Ended Information Blocking

The ONC Information Blocking Rule, effective since 2021, made it illegal for healthcare organizations to unreasonably restrict the exchange of electronic health information. CMS interoperability rules now require FHIR-based APIs for prior authorization and patient access by 2026, pushing payers to modernize their data platforms. ASTP/ONC released draft USCDI v7 in January 2026, proposing 29 new data elements to strengthen nationwide interoperability.

The bottom line: healthcare is connected. The pipes are built. The data flows. HL7v2 interfaces connect legacy systems, FHIR APIs connect modern ones, and TEFCA provides the national governance layer. This is a genuine achievement — the product of decades of standards development, billions of dollars in investment, and hard-fought regulatory change.

But connected does not mean useful.

The Problem Nobody's Talking About: Connected Does Not Mean Useful

Here is the uncomfortable truth that the interoperability community has been slow to confront: the mere exchange of health data does not produce better clinical outcomes. It produces more data — data that arrives in incompatible formats, stripped of clinical context, riddled with duplicates, and presented to clinicians who are already drowning in information.

Consider the current reality:

- 18% of patient EHR records are duplicates across systems, according to Verato research. Some organizations report duplication rates as high as 30%. Duplicate records account for nearly 2,000 preventable deaths and cost $1.7 billion in malpractice costs annually.

- Clinicians receive 187 alerts per ICU patient per day from physiologic monitors alone, per AHRQ PSNet research. In primary care, physicians experience a median of 63 alerts daily. The result: 96% of clinical alerts are overridden, creating a dangerous environment where critical alerts are lost in the noise.

- Traditional standardization leads to information loss. According to JMIR Medical Informatics research, the standardization process itself can lead to loss or distortion of original information, diminished adaptability, and increased cost of data exchange.

The healthcare industry has spent 15 years and billions of dollars building pipes. Now the pipes are flowing, and we are discovering that the water coming through them is not clean enough to drink.

If you are building on FHIR and TEFCA infrastructure, our technical guide to TEFCA for healthcare developers and CTOs covers the exchange layer in depth. What follows in this essay is the layer that must be built on top of that infrastructure.

The 5 Reasons Exchanged Data Fails to Be Useful

Through our work building healthcare integration systems and studying the interoperability landscape, we have identified five fundamental reasons why exchanged data fails to produce the clinical value it should. These are not implementation bugs. They are architectural gaps — problems that no amount of better pipes can solve.

Reason 1: Context Loss

When a lab result travels from one system to another, it typically arrives as a discrete data element: a LOINC code, a numeric value, a reference range. What does not travel with it is the clinical context that gives the result meaning. Why was this test ordered? What clinical question was the ordering physician trying to answer? What was the patient's presentation that prompted the workup?

A potassium level of 5.8 mEq/L means something very different for a patient in the emergency department with acute renal failure than it does for a patient with chronic kidney disease whose baseline potassium runs high. Without clinical context, the receiving system cannot distinguish between an emergency requiring immediate intervention and a known chronic condition requiring only monitoring.

This is not a theoretical problem. Healthcare IT Today reports that organizations consistently struggle with data from disparate sources precisely because of context loss during exchange. The data arrives, but the meaning stays behind.

Reason 2: Semantic Mismatch

Healthcare uses multiple overlapping terminology systems: SNOMED CT (over 350,000 clinical concepts), ICD-10-CM (over 70,000 diagnosis codes), LOINC (over 90,000 observation identifiers), CPT (over 10,000 procedure codes), RxNorm for medications, plus countless local code systems maintained by individual organizations.

The same clinical concept — "type 2 diabetes mellitus" — can be represented as SNOMED CT 44054006, ICD-10 E11.9, or any number of local codes. According to a Nature Scientific Reports study analyzing terminology system comprehensiveness, differences in granularity between terminologies have significant implications for data interoperability, causing information loss when mapping between systems. A systematic literature review in PMC found that SNOMED CT (32%) and LOINC (26%) were the most commonly used terminologies in semantic interoperability research, but mapping between them remains incomplete and imperfect.

FHIR helps by providing a common transport format, but as research published in PMC notes, because FHIR resources lack explicit contextual constraints, institutions define their own extensions for the same healthcare service, creating semantic ambiguity that undermines the purpose of standardization.

Reason 3: Volume Overwhelm

More data does not mean better care. In many cases, it means worse care — because the signal is lost in the noise.

A PMC study on alert burden reduction found that clinicians in electronic health record systems face such extreme alert volumes that alert fatigue has become a patient safety concern. JMIR research published in 2026 describes how cognitive overload results from not just system alerts but also pagers, emails, phone calls, and verbal prompts — all contributing to fatigue and dismissal of system alerts.

When TEFCA enables the exchange of a patient's complete longitudinal record — potentially thousands of documents from dozens of sources — the receiving clinician does not get a comprehensive picture. They get an overwhelming data dump with no indication of what matters right now. The sheer volume of exchanged data, without intelligent filtering, makes the problem of clinical decision-making harder, not easier.

Reason 4: Temporal Irrelevance

Exchanged health data often lacks temporal intelligence. A medication list from six months ago arrives and is presented alongside today's medication list with no clear indication of which is current. A diagnosis that was considered, investigated, and ruled out appears as an active condition because the resolution was never formally coded. A patient's blood pressure readings from three different visits are presented without trend analysis or context about what changed and why.

Temporal irrelevance is particularly dangerous because it can lead to clinical decisions based on outdated information. A medication that was discontinued due to an adverse reaction, if presented as current, could be re-prescribed. A resolved condition, if presented as active, could trigger unnecessary workups. The exchange layer moves data through time without understanding time.

Reason 5: Trust Deficit

When three different systems report three different blood pressures for the same patient on the same date, which one is correct? When a patient's allergy list from their primary care EHR conflicts with the allergy list from a hospital system, which should the receiving clinician trust?

Healthcare data exchange currently operates without a robust trust framework for data provenance and authority. Verato's research shows that the average healthcare organization has an 18% duplicate record rate, and matching errors are driven by inconsistencies in basic identifying fields — middle names, Social Security numbers, and demographic data. When the receiving system cannot confidently identify whether two records refer to the same patient, the entire premise of interoperability breaks down.

The trust deficit extends beyond patient matching. It encompasses source credibility (is this data from a clinician or an automated system?), recency confidence (when was this data last verified?), and completeness assurance (does this record represent the full picture or a partial view?). Without these trust signals, clinicians rationally discount exchanged data in favor of what they can directly verify — undermining the entire investment in interoperability.

What an Intelligence Layer Looks Like

The five problems above share a common root cause: the exchange layer moves data without understanding it. The solution is not better exchange — it is a new architectural layer that sits between exchange and application, transforming raw data into useful information.

We call this the intelligence layer. Its architecture follows a five-stage transformation pipeline:

Raw Exchange -> Semantic Normalization -> Clinical Contextualization -> Relevance Prioritization -> Actionable Intelligence

Each stage progressively increases the clinical utility of the data. Raw exchanged data is approximately 20% usable — it has the right information somewhere, but finding it, understanding it, and trusting it requires enormous manual effort. By the time data passes through all five stages of the intelligence layer, it reaches 95%+ usability — information that can be directly acted upon by clinicians, decision support systems, and population health platforms.

For teams building the data infrastructure to support this vision, our guide on healthcare data lake architecture for EHR and AI-ready analytics provides the foundational patterns for storing and processing the data that feeds the intelligence layer.

Component 1: Semantic Normalization Engine

The first component of the intelligence layer addresses semantic mismatch. Its job is to take clinical data expressed in any terminology system and map it to a unified, consistent representation.

What It Does

The semantic normalization engine performs four core functions:

- Terminology mapping: Translates between SNOMED CT, ICD-10-CM, LOINC, CPT, RxNorm, and local codes. When a system sends a local code for "diabetes," the engine maps it to the canonical SNOMED CT concept 44054006.

- Concept alignment: Identifies when different codes from different systems refer to the same clinical concept. "Type 2 diabetes mellitus" in ICD-10 (E11.9) and SNOMED CT (44054006) are recognized as semantically equivalent.

- Granularity reconciliation: Handles cases where one system uses a specific code and another uses a general code. If System A sends "vertebrogenic low back pain" (M54.51) and System B sends "low back pain" (M54.5), the engine recognizes the relationship and preserves the most specific available code.

- Deduplication: When the same clinical concept arrives from multiple sources in different terminologies, the engine deduplicates to a single canonical representation, maintaining provenance for all source entries.

Why It Matters

Without semantic normalization, every downstream process — clinical decision support, quality measurement, population health analytics, research — operates on inconsistent data. A quality measure that counts patients with diabetes will miss patients coded in a non-standard terminology. A clinical decision support rule for drug interactions cannot fire if the medications are coded in incompatible systems.

The Annals of Laboratory Medicine found that terminology standards are essential for data-driven artificial intelligence in healthcare, but most legacy systems still use incompatible terminologies or unstructured data, requiring manual transformations. The normalization engine automates this transformation at scale.

Component 2: Clinical Context Engine

The second component addresses context loss — the most critical gap in current interoperability. Its job is to attach clinical meaning to discrete data elements.

What It Does

The clinical context engine enriches raw data with the context needed for clinical interpretation:

- Clinical question attachment: Links lab results, imaging orders, and referrals to the clinical questions that prompted them. A troponin test is connected to the differential diagnosis of acute coronary syndrome. An MRI order is linked to the presenting symptom of progressive weakness.

- Encounter contextualization: Associates data elements with the clinical encounters that generated them, including the type of encounter (emergency, inpatient, outpatient, telehealth), the presenting complaint, and the clinical assessment at the time.

- Problem list association: Maps every data point to the problem list entries it relates to. A hemoglobin A1c result is associated with the patient's diabetes diagnosis. A PT/INR result is linked to their anticoagulation therapy for atrial fibrillation.

- Care episode mapping: Groups related encounters, orders, results, and documentation into coherent care episodes — the cancer treatment journey, the post-surgical recovery, the chronic disease management cycle — providing narrative coherence to what would otherwise be disconnected data points.

Why It Matters

Context is what transforms data into information. The same vital sign means different things in different clinical contexts. The same lab result demands different actions depending on why it was ordered and what question it was meant to answer. Without context, clinicians must spend time reconstructing the clinical story from scattered data — time that should be spent on clinical decision-making.

As the World Economic Forum noted in January 2026, the era of simple data movement is ending. Integration without intelligence cannot keep pace with the complexity of healthcare requirements. The context engine is what makes integration intelligent.

Component 3: Relevance Scoring

The third component addresses volume overwhelm. Its job is to rank exchanged data by clinical relevance to the current encounter, presenting the most important information first and filtering noise.

The Scoring Framework

The relevance scoring engine evaluates every piece of incoming data across five weighted dimensions:

| Dimension | Weight | What It Measures | Example |

|---|---|---|---|

| Clinical Acuity | 30% | How urgent or critical is this data point? | Critical potassium = 100; routine vitals = 20 |

| Temporal Recency | 25% | How recent is the data? | Today's labs = 100; 6 months ago = 20 |

| Encounter Relevance | 20% | How related to the current clinical question? | Same diagnosis = 100; unrelated = 10 |

| Specialty Match | 15% | Does the data match the requesting specialty? | Cardiac data for cardiologist = 100; cross-specialty = 40 |

| Source Authority | 10% | How trustworthy is the source system? | Primary EHR = 100; HIE aggregate = 50 |

Scoring in Practice

Consider an emergency department physician evaluating a patient with chest pain. The patient's longitudinal record contains hundreds of data points from multiple sources. The relevance scoring engine processes each data point and produces a ranked list:

- Score 98: Troponin result from 2 hours ago — critical lab, fresh, directly relevant to chest pain evaluation

- Score 94: ECG report from today — cardiac test, same encounter

- Score 72: Blood pressure history from the past 30 days — related vitals, moderately recent

- Score 65: Prior echocardiogram from 3 months ago — relevant cardiology data, aging

- Score 48: Lipid panel from 6 months ago — related but not acute

- Score 8: Dental records from 1 year ago — unrelated specialty

Data scoring above 70 is surfaced immediately. Data scoring 40-69 is available on demand. Data scoring below 40 is archived but accessible if specifically requested. The result: the clinician sees what matters, when it matters, without being buried in noise.

Component 4: Longitudinal Intelligence

The fourth component addresses temporal irrelevance. Its job is to assemble a coherent patient timeline from fragmented data and detect clinically meaningful changes over time.

What It Does

- Patient timeline assembly: Constructs a unified chronological view of the patient's health journey from all available sources — encounters, diagnoses, medications, procedures, lab results, imaging — resolving conflicts and establishing temporal relationships.

- Trend detection: Identifies statistically significant trends in longitudinal data. A gradually rising creatinine over 18 months signals progressive kidney disease. A declining hemoglobin across three visits suggests chronic blood loss. These trends are invisible in individual data points but become obvious when plotted over time.

- Change detection: Flags clinically significant changes from baseline. When a patient's blood pressure shifts from well-controlled to consistently elevated, or when a previously normal lab value crosses into an abnormal range, the longitudinal intelligence engine alerts the care team to the change rather than just the current value.

- Medication reconciliation: Maintains a temporally accurate medication history that distinguishes between current medications, recently discontinued medications (with reasons), medications that were prescribed but never filled, and historical medications no longer relevant. This temporal medication intelligence prevents the dangerous scenario of restarting a medication that was stopped due to an adverse event.

- Gap analysis: Identifies missing elements in the expected care timeline. If a diabetic patient has no A1c result in the past 12 months, or a cancer patient is overdue for surveillance imaging, the engine flags the gap for clinical follow-up.

Why It Matters

Healthcare is inherently longitudinal. Diseases evolve. Treatments change. Patients move between providers. A single-point-in-time snapshot of exchanged data misses the narrative that makes clinical decision-making possible. The longitudinal intelligence engine transforms disconnected data points into a coherent clinical story with a beginning, middle, and current chapter.

Component 5: Decision Support Integration

The fifth component closes the loop from data to action. Its job is to translate the normalized, contextualized, prioritized, longitudinal intelligence into specific clinical recommendations.

What It Does

- Evidence-based alerting: Generates alerts that are clinically meaningful because they are informed by context, relevance, and longitudinal trends — not just threshold crossings. Instead of alerting on every out-of-range potassium, it alerts on potassium levels that are out of range in the context of the patient's medications, renal function, and recent trend.

- Clinical pathway matching: Maps the patient's current clinical state to evidence-based care pathways, identifying where the patient is in their treatment journey and what the next recommended steps are. A newly diagnosed heart failure patient is matched to guideline-directed medical therapy protocols.

- Risk stratification: Uses the enriched, contextualized data to calculate risk scores that are more accurate than those based on raw exchange data. A readmission risk model informed by medication adherence, social determinants, and longitudinal trend data produces meaningfully better predictions than one based on diagnosis codes alone.

- Closed-loop feedback: Tracks whether intelligence-driven recommendations were accepted or overridden, and uses this feedback to continuously improve the relevance and accuracy of future recommendations.

For a deeper look at how these intelligent workflows translate into real clinical processes, see our analysis of 5 healthcare workflows that agentic AI will transform by 2027.

The Role of AI and LLMs as the Intelligence Layer

Until recently, building the intelligence layer described above would have required an army of knowledge engineers manually encoding clinical logic, terminology mappings, and context rules. LLMs and modern AI have fundamentally changed this equation.

What LLMs Can Do That Rules-Based Systems Cannot

LLMs bring four breakthrough capabilities to the intelligence layer:

1. Read and understand clinical narratives. Approximately 80% of clinical data exists in unstructured form — progress notes, discharge summaries, operative reports, radiology reads, pathology findings. Rules-based NLP systems have struggled with clinical text for decades, limited by the ambiguity, abbreviation, and domain specificity of medical language. Modern LLMs trained on clinical corpora can extract structured information from unstructured notes with accuracy rates of 89% to 100% on clinical data extraction tasks, as demonstrated in recent studies.

2. Map between terminologies without explicit crosswalks. Traditional terminology mapping requires manually curated crosswalk tables — a process that is expensive, incomplete, and always outdated. LLMs understand the semantic meaning of clinical concepts and can map between terminologies by understanding what the concept means rather than relying on explicit table lookups. A local code for "sugar disease" can be mapped to SNOMED CT's concept for type 2 diabetes mellitus because the model understands the semantic relationship, not because someone created a mapping entry.

3. Infer clinical context from surrounding documentation. The clinical context that is lost during data exchange often exists in the clinical notes that accompany the structured data. An LLM can read the clinical note that says "ordering troponin to rule out ACS in the setting of acute chest pain onset 3 hours ago" and attach that context to the troponin lab result — something a rules-based system cannot do without predefined context extraction patterns for every possible clinical scenario.

4. Synthesize information across sources into coherent narratives. Rather than presenting clinicians with raw data from five different systems, an LLM-powered intelligence layer can synthesize that data into a coherent clinical summary: "Patient presents with 3-year history of progressively worsening heart failure (EF declined from 45% in 2023 to 32% in 2025), on guideline-directed medical therapy including sacubitril/valsartan 97/103mg BID and carvedilol 25mg BID, with recent troponin elevation suggesting possible ischemic etiology." This synthesis — combining data from multiple sources, identifying trends, and constructing a clinical narrative — is the core capability of the intelligence layer.

The Guardrails Question

Deploying LLMs in clinical settings demands rigorous safety frameworks. The intelligence layer must incorporate:

- Explainability: Every recommendation, every context inference, every relevance score must be traceable back to the source data and reasoning that produced it. "Black box" intelligence is unacceptable in clinical care. As CU Anschutz researchers note, LLMs must be able to present output that lets humans understand why they are presenting the output they are.

- Confidence scoring: Not all intelligence is equally certain. A terminology mapping with 99% confidence should be treated differently than one with 72% confidence. The intelligence layer must communicate uncertainty explicitly.

- Human-in-the-loop: Critical clinical decisions must always involve clinician judgment. The intelligence layer recommends; the clinician decides. The system must never present AI-generated insights as authoritative clinical truth without human validation.

- Continuous validation: The intelligence layer must measure its own performance against clinical outcomes, flagging degradation and incorporating feedback to improve over time.

Who Is Building This

The honest answer is that nobody has built the complete intelligence layer yet. But pieces exist, and the landscape is rapidly evolving.

The Current Landscape

EHR vendors (Epic, Oracle Health) are adding AI capabilities within their platforms — ambient documentation, clinical summarization, inbox management. But these capabilities are siloed within each vendor's ecosystem. Epic's intelligence does not extend to data from Cerner systems, and vice versa. This is intelligence within a walled garden, not an intelligence layer for the ecosystem.

Interoperability platforms (Redox, InterSystems, Health Gorilla) are adding normalization and data quality capabilities to their exchange products. Onyx acquired InteropX in 2026 to strengthen its position in large-scale payer interoperability. Edifecs launched its Healthcare Interoperability Cloud in 2025. But these platforms primarily focus on transport and format normalization, not clinical intelligence.

Healthcare AI companies (Viz.ai, Tempus, Aidoc) are building vertical intelligence solutions for specific clinical domains — stroke detection, oncology, radiology. These are valuable but narrow — they solve the intelligence problem for one domain, not for the healthcare ecosystem.

Data platform companies (Arcadia, Health Catalyst, Innovaccer) are building analytics platforms that aggregate and normalize data for population health and value-based care. These come closest to the intelligence layer vision, but they are typically oriented toward retrospective analytics rather than real-time clinical decision support.

AI infrastructure companies are building general-purpose clinical NLP and LLM capabilities. Viz.ai's work on unlocking healthcare data for LLM-powered innovation represents this emerging category.

The Gap

What is missing is a horizontal intelligence layer — one that works across all data sources (not just one EHR vendor), across all clinical domains (not just radiology or oncology), in real time (not just retrospective analytics), and with the full five-component architecture (normalization + context + relevance + longitudinal + decision support). This is the gap. This is the opportunity.

The 100-Year Opportunity

In every major industry, the company that builds the intelligence layer between raw data and human decision-making becomes infrastructure — becomes something the industry cannot function without.

- Google built the intelligence layer between the raw web (billions of unorganized pages) and human information needs. The web existed before Google. But Google made it useful.

- Bloomberg built the intelligence layer between raw financial data (market feeds, filings, news) and financial decision-making. Financial data existed before Bloomberg. But Bloomberg made it actionable.

- Stripe built the intelligence layer between raw payment infrastructure (banks, card networks, ACH) and commerce. Payment rails existed before Stripe. But Stripe made them usable for developers.

Healthcare is in the same position today that the web was in 1997, that financial data was in 1982, that payment infrastructure was in 2010. The raw infrastructure exists. The data flows. But nobody has built the layer that makes it useful.

Three Eras of Healthcare Data

We see the evolution of healthcare data infrastructure as three distinct eras:

Era 1: Connectivity (2009-2024) — Achieved. The HITECH Act mandated EHR adoption. HL7 FHIR created a modern API standard. ONC's Information Blocking Rule required data sharing. TEFCA created the national network. The healthcare interoperability solutions market reached $5.04 billion in 2025. This era is complete. The dominant players — Epic, InterSystems, Redox — are established.

Era 2: Intelligence (2025-2035) — Emerging. This is where we are now. The intelligence layer — semantic normalization, clinical context, relevance scoring, longitudinal intelligence, decision support — is being built in fragments by different companies. No dominant player has emerged. The market is projected to grow to $8.6 billion by 2030, driven by the convergence of AI capabilities and interoperability infrastructure. This is the window of opportunity.

Era 3: Infrastructure (2030+) — Future. The company that builds the intelligence layer in Era 2 becomes infrastructure in Era 3. When every clinical decision, every quality measure, every research query depends on the intelligence layer, the layer becomes as essential as the EHR itself — perhaps more so, because it sits between all EHRs and all applications. The projected market for healthcare AI and intelligence infrastructure exceeds $20 billion by 2035.

Why This Is a 100-Year Problem

Healthcare will always generate more data than humans can process. Clinical complexity will always exceed human cognitive capacity. The number of medical literature published doubles every 73 days. New diagnostic modalities, new treatment options, new drugs, new devices — the clinical knowledge base grows exponentially while the human brain remains constant.

This means the intelligence layer is not a feature. It is not a product cycle. It is a permanent infrastructure requirement — a capability that healthcare will need for as long as healthcare exists. The company that builds it is not building for a product market fit window. It is building for the century.

Conclusion: The Thesis

Healthcare has spent 15 years and billions of dollars solving the connectivity problem. That problem is solved. Nearly 500 million records flow through TEFCA. FHIR is adopted globally. Information blocking is illegal. The pipes are built.

The next problem — the problem that will define healthcare technology for the next decade — is making that exchanged data useful. Useful means normalized across terminologies. Useful means enriched with clinical context. Useful means prioritized by relevance. Useful means assembled into longitudinal narratives. Useful means translated into actionable clinical intelligence.

The intelligence layer is not optional. It is the inevitable next chapter. The question is not whether it will be built, but who will build it — and whether they will build it as a horizontal platform that serves the entire ecosystem, or as a vertical capability locked inside a walled garden.

At Nirmitee, we believe healthcare's intelligence layer must be open, interoperable, and standards-based — built on the same principles of openness that made FHIR successful, applied to the next level of the stack. We are building the components described in this essay because we believe this is not just a business opportunity. It is healthcare's most important technical challenge for the next generation.

The data flows. Now it is time to make it useful.

Frequently Asked Questions

What is a healthcare intelligence layer and how does it differ from interoperability?

A healthcare intelligence layer is a set of capabilities — semantic normalization, clinical contextualization, relevance scoring, longitudinal intelligence, and decision support integration — that sit between data exchange infrastructure (like TEFCA and FHIR APIs) and clinical applications (like EHRs and CDS systems). While interoperability solves the problem of moving data between systems, the intelligence layer solves the problem of making that data understandable and actionable. Interoperability ensures the data arrives; the intelligence layer ensures the data is useful when it gets there. Think of it as the difference between having a library (connectivity) and having a librarian who can find exactly the right information for your specific question (intelligence).

Why is TEFCA's 500 million record exchange milestone not enough to improve clinical outcomes?

TEFCA's 500 million record milestone demonstrates that healthcare connectivity is achieved at national scale. However, volume of exchange does not equal quality of use. The exchanged data suffers from five fundamental problems: context loss (lab results without clinical meaning), semantic mismatch (same concept coded differently across systems), volume overwhelm (too much data, not enough signal), temporal irrelevance (outdated information presented as current), and trust deficit (no framework for determining which source is authoritative). Until these problems are addressed by an intelligence layer, more data exchange actually makes the clinician's job harder, not easier, by increasing the noise they must filter through to find clinically relevant information.

How can LLMs improve healthcare interoperability beyond what FHIR and HL7 standards provide?

FHIR and HL7 standards provide the transport format and API specifications for moving data between systems — they define the envelope and the address format, but they do not understand the letter inside. LLMs add four critical capabilities: reading and extracting structured information from unstructured clinical narratives (80% of clinical data is unstructured); mapping between terminologies by understanding semantic meaning rather than relying on static crosswalk tables; inferring clinical context from documentation to attach meaning to discrete data elements; and synthesizing information from multiple sources into coherent clinical summaries. Studies show LLMs achieve 89-100% accuracy on clinical data extraction tasks, making them viable for production use when deployed with appropriate guardrails including explainability, confidence scoring, and human-in-the-loop validation.

What are the five components of a healthcare intelligence layer architecture?

The five components are: (1) Semantic Normalization Engine, which maps and aligns clinical concepts across terminology systems like SNOMED CT, ICD-10, LOINC, and RxNorm to create a unified vocabulary; (2) Clinical Context Engine, which attaches clinical meaning to data by linking results to the questions that prompted them, encounters to presenting complaints, and data points to relevant problems; (3) Relevance Scoring Engine, which ranks all available data by clinical relevance to the current encounter using weighted dimensions of acuity, recency, encounter relevance, specialty match, and source authority; (4) Longitudinal Intelligence Engine, which assembles patient timelines, detects trends, flags clinically significant changes, and identifies care gaps; and (5) Decision Support Integration, which translates enriched data into evidence-based alerts, pathway matching, risk stratification, and closed-loop recommendations. Each component incrementally increases data usability from approximately 20% (raw exchange) to 95%+ (actionable intelligence).

Which companies are currently building healthcare intelligence layer capabilities in 2026?

No single company has built the complete horizontal intelligence layer, but several are building important pieces: EHR vendors like Epic and Oracle Health are adding AI-powered clinical summarization and ambient documentation within their platforms, though these are siloed. Interoperability platforms like Redox, InterSystems, and Onyx (which acquired InteropX in 2026) focus on transport and normalization. Healthcare AI companies like Viz.ai, Tempus, and Aidoc build domain-specific intelligence for radiology, oncology, and other specialties. Data platform companies like Arcadia, Health Catalyst, and Innovaccer build analytics-oriented intelligence for population health. The gap that remains is a horizontal intelligence layer that works across all EHR vendors, all clinical domains, in real time, with the full five-component architecture from semantic normalization through decision support integration.