Your health system is running a $200 million EHR that stores 15 years of clinical data across 47 facilities. The board has approved a cloud migration. The CTO says "cloud-first for all new analytics." The CISO wants HIPAA-compliant everything. The CFO wants predictable costs. The CMO wants AI-powered clinical decision support by Q3. And you — the architect — need to pick a cloud provider that satisfies all four stakeholders for the next decade.

Here is the problem: there is no independent, technically rigorous comparison of AWS, Azure, and GCP for healthcare workloads. What exists online is vendor marketing dressed as analysis, or surface-level blog posts that compare three bullet points and call it a day. None of them cover FHIR data store performance, real pricing at scale, DICOM routing architectures, or the compliance certifications that actually matter for US healthcare.

This post is the comparison we wished existed when we started building healthcare data platforms. It covers the core healthcare services, pricing models, compliance certifications, and architectural patterns across all three providers, with the depth required to make a $5-10 million infrastructure decision.

The Healthcare Cloud Landscape in 2026

Healthcare cloud infrastructure is no longer optional. It is the operating environment. The market reflects this shift: Grand View Research estimates the global healthcare cloud computing market at $89.4 billion in 2025, growing at a 17.8% CAGR through 2030. In the US alone, healthcare cloud spending exceeded $55 billion in 2025.

Why Healthcare Cloud Is Different

Healthcare is not a standard enterprise workload. The regulatory, data, and integration requirements create constraints that eliminate most generic cloud architectures:

- HIPAA requires a Business Associate Agreement (BAA): Every cloud service that touches PHI must be covered under a signed BAA. Not every service within a cloud provider is BAA-eligible — AWS covers roughly 150 services under its BAA, Azure covers over 80, and GCP covers approximately 100. Using a non-covered service for PHI processing is a compliance violation, regardless of how you encrypt the data.

- Data gravity is extreme: A mid-size health system generates 50-100 TB of clinical data annually across FHIR resources, DICOM imaging, HL7v2 messages, PDF documents, and genomic files. Once that data lands in a cloud provider, egress costs and migration complexity create vendor lock-in within 18-24 months.

- Interoperability mandates are non-negotiable: The 21st Century Cures Act and the CMS Interoperability and Patient Access rules require FHIR R4 APIs, including patient access APIs, and TEFCA is moving nationwide exchange toward FHIR as well. Your cloud architecture must natively support FHIR, not bolt it on as an afterthought.

- Clinical AI requires specialized pipelines: Training models on de-identified clinical data, running NLP on unstructured notes, and deploying inference at the point of care all demand healthcare-specific ML services — not generic SageMaker or Vertex instances configured from scratch.

The Three Contenders

AWS, Azure, and GCP each entered healthcare with different strategies and different strengths. AWS built HealthLake as a purpose-built FHIR data store with integrated NLP. Azure built on partnerships, most notably with Epic, and created Health Data Services as a unified FHIR/DICOM/MedTech platform. GCP leveraged its BigQuery analytics engine and DeepMind's clinical AI research to create the Cloud Healthcare API with best-in-class analytics integration.

The right choice depends on your existing EHR vendor, your analytics ambitions, your team's cloud expertise, and your budget. Let us break down each one.

AWS for Healthcare: HealthLake, Comprehend Medical, and the Breadth Play

Amazon Web Services approaches healthcare the way it approaches every vertical: with the broadest service catalog and the expectation that you will assemble the pieces yourself. AWS has more BAA-eligible services than any other provider, and its healthcare-specific offerings have matured significantly since HealthLake's general availability in 2021.

Core Healthcare Services

Amazon HealthLake is a FHIR R4-compliant data store built on top of AWS infrastructure. Unlike a generic FHIR server, HealthLake provides:

- Managed FHIR R4 store: Full CRUD operations, search, and $everything operations on FHIR R4 resources. Supports US Core Implementation Guide profiles.

- Integrated NLP tagging: Automatic extraction of medical entities (conditions, medications, procedures, test results) from unstructured text within FHIR DocumentReference resources, powered by Comprehend Medical.

- Analytics export: Native integration with AWS Lake Formation and Athena for SQL-based analytics over FHIR data, exported in Apache Parquet format.

- SMART on FHIR: Supports SMART on FHIR authorization for third-party application access.

- Import/Export: Bulk FHIR import from S3 (ndjson format) and export to S3 for downstream processing.
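HealthLake's bulk import reads newline-delimited JSON (ndjson) from S3: one complete FHIR resource per line. A minimal sketch of preparing such files follows. `to_ndjson_by_type` is a hypothetical helper, not an AWS utility; it writes one payload per resource type, following the FHIR Bulk Data convention, and uploading to S3 and starting the import job are left out.

```python
import json
from collections import defaultdict


def to_ndjson_by_type(resources):
    """Group FHIR resources by resourceType and serialize each group as ndjson.

    Returns {"Patient": "<ndjson text>", ...}. Each line holds one compact JSON
    resource, because ndjson does not allow pretty-printed, multi-line records.
    """
    groups = defaultdict(list)
    for resource in resources:
        rtype = resource.get("resourceType")
        if not rtype:
            raise ValueError("every FHIR resource needs a resourceType")
        # separators=(",", ":") keeps each record compact and on one line
        groups[rtype].append(json.dumps(resource, separators=(",", ":")))
    return {rtype: "\n".join(lines) + "\n" for rtype, lines in groups.items()}


resources = [
    {"resourceType": "Patient", "id": "p1", "gender": "female"},
    {"resourceType": "Observation", "id": "o1", "status": "final",
     "code": {"text": "Heart rate"}, "subject": {"reference": "Patient/p1"}},
    {"resourceType": "Patient", "id": "p2", "gender": "male"},
]
for rtype, body in to_ndjson_by_type(resources).items():
    count = body.count("\n")
    print(f"{rtype}.ndjson: {count} resources")
```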

Amazon Comprehend Medical is an NLP service specifically trained on clinical text. It extracts entities across five categories: medication (drug names, dosages, routes), medical conditions (diagnoses, symptoms, signs), test/treatment/procedure (lab tests, surgical procedures), anatomy (body systems, organs), and protected health information (PHI for de-identification). The service also performs ICD-10-CM, RxNorm, and SNOMED CT code inference, mapping free-text clinical notes to standardized terminologies.
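To show what working with Comprehend Medical output looks like, the sketch below calls the boto3 `detect_entities_v2` operation and then post-processes the response. The filtering policy (a score threshold and dropping entities that carry a NEGATION trait) is an illustrative assumption, not AWS guidance, and the sample response is hand-written in the documented shape rather than captured from the service.

```python
from collections import Counter


def detect_entities(text, region="us-east-1"):
    """Call Comprehend Medical DetectEntitiesV2 (needs boto3 and AWS credentials)."""
    import boto3  # imported lazily so the parsing helper below works offline

    client = boto3.client("comprehendmedical", region_name=region)
    return client.detect_entities_v2(Text=text)


def summarize_entities(response, min_score=0.8):
    """Keep confident, non-negated entities and count them per category."""
    kept = []
    for entity in response.get("Entities", []):
        if entity["Score"] < min_score:
            continue
        trait_names = {trait["Name"] for trait in entity.get("Traits", [])}
        if "NEGATION" in trait_names:
            continue  # "denies chest pain" should not count as a finding
        kept.append((entity["Category"], entity["Text"]))
    return kept, Counter(category for category, _ in kept)


# Hand-written sample in the DetectEntitiesV2 response shape (not real output).
sample = {
    "Entities": [
        {"Text": "metformin", "Category": "MEDICATION", "Type": "GENERIC_NAME",
         "Score": 0.99, "Traits": []},
        {"Text": "chest pain", "Category": "MEDICAL_CONDITION", "Type": "DX_NAME",
         "Score": 0.95, "Traits": [{"Name": "NEGATION", "Score": 0.97}]},
        {"Text": "type 2 diabetes", "Category": "MEDICAL_CONDITION", "Type": "DX_NAME",
         "Score": 0.97, "Traits": [{"Name": "DIAGNOSIS", "Score": 0.9}]},
    ]
}
kept, counts = summarize_entities(sample)
print(kept)
print(counts)
```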

Amazon SageMaker provides the ML platform for custom clinical model training and deployment. While not healthcare-specific, SageMaker's integration with HealthLake data exports enables end-to-end pipelines from clinical data to deployed prediction models. AWS also offers Amazon Bedrock for generative AI applications, with foundation models from Anthropic, Meta, and others, some of which can be fine-tuned on clinical data under BAA coverage.

AWS Healthcare Architecture

A typical AWS healthcare data platform looks like this: HL7v2 messages arrive via Amazon MQ or a custom integration engine, are transformed to FHIR R4 by AWS Lambda functions, and land in HealthLake. DICOM imaging typically flows through S3 with metadata indexed in a custom DynamoDB catalog; AWS HealthImaging offers managed imaging storage, but validate its DICOMweb coverage before treating it as equivalent to Azure's or GCP's DICOM services. Clinical notes in HealthLake trigger Comprehend Medical for NLP enrichment. Analytics consumers query via Athena on Parquet exports, and ML pipelines pull from Lake Formation into SageMaker.
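The Lambda transformation step is where most of the custom work lives. As a minimal sketch (a hypothetical helper, not an AWS-provided converter), the function below maps four fields of an HL7v2 PID segment to a FHIR R4 Patient. A production mapping would also handle escape sequences, every identifier repetition, and many more fields; in a Lambda deployment it would be called from your handler.

```python
import json

# HL7v2 administrative sex (PID-8) mapped to FHIR AdministrativeGender codes.
SEX_TO_GENDER = {"M": "male", "F": "female", "O": "other", "U": "unknown"}


def pid_to_patient(message):
    """Map a few PID fields to a FHIR R4 Patient: PID-3, PID-5, PID-7, PID-8."""
    # HL7v2 separates segments with carriage returns; tolerate \n as well.
    segments = message.replace("\r\n", "\r").replace("\n", "\r").split("\r")
    pid = next((s for s in segments if s.startswith("PID|")), None)
    if pid is None:
        raise ValueError("message has no PID segment")
    fields = pid.split("|")  # for segments other than MSH, PID-n is fields[n]

    def field(n):
        return fields[n] if len(fields) > n else ""

    patient = {"resourceType": "Patient"}
    mrn = field(3).split("~")[0].split("^")[0]  # first repetition, ID component
    if mrn:
        patient["identifier"] = [{"value": mrn}]
    name = field(5).split("~")[0].split("^")  # family^given^middle
    if name[0]:
        given = [part for part in name[1:3] if part]
        patient["name"] = [{"family": name[0], "given": given}]
    dob = field(7)[:8]  # YYYYMMDD, possibly followed by a time
    if len(dob) == 8 and dob.isdigit():
        patient["birthDate"] = f"{dob[:4]}-{dob[4:6]}-{dob[6:]}"
    gender = SEX_TO_GENDER.get(field(8))
    if gender:
        patient["gender"] = gender
    return patient


msg = ("MSH|^~\\&|ADT|HOSP|CLOUD|HOSP|20240101120000||ADT^A01|MSG00001|P|2.5\r"
       "PID|1||12345^^^HOSP^MR||DOE^JANE^Q||19800102|F")
print(json.dumps(pid_to_patient(msg)))
```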

AWS Healthcare Pricing

HealthLake pricing operates on a consumption model:

| Component | Pricing (US East, 2026) | Notes |
|---|---|---|
| FHIR resource storage | $4.16 per 10,000 FHIR resources/month | Includes versioning |
| Read requests | $0.06 per 1,000 requests | GET, search, $everything |
| Write requests | $0.20 per 1,000 requests | POST, PUT, PATCH, DELETE |
| Data import (bulk) | $6.00 per GB imported | From S3, ndjson format |
| Comprehend Medical | $0.01 per 100 characters (NER) | Volume discounts after 5M characters |

Cost at scale: For a health system with 10 million FHIR resources, 5 million monthly read requests, and 500,000 monthly writes, the HealthLake bill alone runs approximately $4,560/month ($4,160 storage, $300 reads, $100 writes) before analytics, ML, and infrastructure costs. Add Comprehend Medical processing for 1 million clinical notes at an average of 5,000 characters each (5 billion characters), and NLP adds $500,000/month at list price before volume discounts, a significant line item that requires careful volume estimation.
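The HealthLake and Comprehend Medical figures can be reproduced from the list prices in the pricing table. This is a sketch for sanity-checking estimates, not a quote: it assumes Comprehend Medical bills each note in whole 100-character units and ignores volume discounts, CloudTrail, S3, and support costs.

```python
# List prices from the HealthLake pricing table (US East, 2026).
STORAGE_PER_10K_RESOURCES = 4.16
READ_PER_1K = 0.06
WRITE_PER_1K = 0.20
NLP_PER_100_CHARS = 0.01  # Comprehend Medical NER, before volume discounts


def healthlake_monthly(resources, reads, writes):
    """Monthly HealthLake cost: per-resource storage plus split read/write pricing."""
    storage = resources / 10_000 * STORAGE_PER_10K_RESOURCES
    requests = reads / 1_000 * READ_PER_1K + writes / 1_000 * WRITE_PER_1K
    return storage + requests


def comprehend_medical_monthly(notes, avg_chars):
    """Monthly NLP cost, rounding each note up to whole 100-character units (assumed)."""
    units_per_note = -(-avg_chars // 100)  # ceiling division on integers
    return notes * units_per_note * NLP_PER_100_CHARS


fhir = healthlake_monthly(10_000_000, 5_000_000, 500_000)
nlp = comprehend_medical_monthly(1_000_000, 5_000)
print(f"HealthLake: ${fhir:,.2f}/month")
print(f"Comprehend Medical: ${nlp:,.2f}/month")
```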

AWS Strengths and Limitations

Strengths: Broadest BAA-eligible service catalog (~150 services). Deepest infrastructure primitives. Best for organizations with strong cloud engineering teams that want maximum control. HealthLake's integrated NLP tagging is unique — no other provider tags FHIR resources with NLP entities at the storage layer. Healthcare data lake architectures benefit from AWS's mature Lake Formation and Glue ecosystem.

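Limitations: No DICOMweb service directly comparable to Azure's or GCP's. AWS HealthImaging manages medical imaging data, but if its DICOMweb support does not cover your integrations, you must build or buy a DICOM management layer on S3. HealthLake does not support FHIR R5. No native HL7v2 message ingestion: it requires custom transformation logic. The NLP pricing model becomes expensive at scale for high-volume clinical note processing.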

Azure for Healthcare: Health Data Services, Epic Integration, and the Enterprise Play

Microsoft Azure's healthcare strategy is built on two pillars: a unified health data platform and deep EHR vendor partnerships. If your organization runs Epic (which covers roughly 38% of the US acute care market) or Cerner/Oracle Health, Azure offers integration pathways that no other cloud provider can match.

Core Healthcare Services

Azure Health Data Services is a unified platform containing three interconnected services:

- FHIR service: Fully managed FHIR R4 server with SMART on FHIR v1 and v2 support, FHIR search parameter customization, $convert-data operations (C-CDA to FHIR, HL7v2 to FHIR using Liquid templates), and $export for bulk data access.

- DICOM service: DICOMweb-compliant medical imaging management with STOW-RS (store), WADO-RS (retrieve), and QIDO-RS (query). Supports extended query tags for custom metadata indexing. GCP's Cloud Healthcare API also offers DICOMweb stores, so Azure's distinctive advantage is not DICOM support itself but running it in the same workspace as FHIR and device data.

- MedTech service (IoMT): Ingestion and normalization of IoT medical device data (wearables, patient monitors, infusion pumps), with configurable device-to-FHIR mapping templates that generate FHIR Observation resources.

The critical differentiator is that these three services share a single workspace, identity layer (Microsoft Entra ID, formerly Azure AD), and compliance boundary. A patient's FHIR resources, DICOM studies, and device telemetry exist within one governance perimeter.
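The $convert-data operation mentioned above takes a FHIR Parameters resource as its request body. The sketch below builds one for an HL7v2 ADT message. The parameter names follow Microsoft's documentation as we understand it; the default template collection reference and the `ADT_A01` root template are assumptions to verify against the current docs. As we understand it, the operation returns the converted FHIR Bundle rather than storing it, so persisting the result is a separate request.

```python
import json


def convert_data_request(hl7v2_message, root_template="ADT_A01",
                         template_collection="microsofthealth/hl7v2templates:default"):
    """Build the Parameters body for POST {fhir-base-url}/$convert-data."""
    return {
        "resourceType": "Parameters",
        "parameter": [
            {"name": "inputData", "valueString": hl7v2_message},
            {"name": "inputDataType", "valueString": "Hl7v2"},
            # Assumed default collection; custom Liquid templates live in your own registry.
            {"name": "templateCollectionReference", "valueString": template_collection},
            {"name": "rootTemplate", "valueString": root_template},
        ],
    }


message = ("MSH|^~\\&|ADT|HOSP|FHIR|HOSP|20240101120000||ADT^A01|MSG00001|P|2.5\r"
           "PID|1||12345^^^HOSP^MR||DOE^JANE||19800102|F")
body = convert_data_request(message)
print(json.dumps([p["name"] for p in body["parameter"]]))
```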

Azure AI Health Insights provides clinical NLP capabilities including radiology report summarization, clinical trial matching, and cancer biomarker inference. Text Analytics for Health extracts medical entities, relations, and assertions (negation, conditional, associated-subject) from clinical text with healthcare-specific entity linking to UMLS, ICD-10, SNOMED CT, and RxNorm.

The Epic Factor

In November 2022, Epic announced that it was migrating its hosting infrastructure to Microsoft Azure. This partnership means:

- Epic on Azure: Epic's hosted environment runs on Azure infrastructure, giving Azure-native organizations lower-latency data access to Epic's backend.

- Microsoft Fabric integration: Epic data can flow into Microsoft Fabric (the successor to Azure Synapse) for analytics, with pre-built connectors and data models.

- Azure AI integration: Epic uses Azure OpenAI Service for generative AI features inside Epic workflows (such as drafting replies to patient messages), and Microsoft's DAX Copilot, built by Nuance, brings ambient clinical documentation into Epic.

For organizations running Epic as their primary EHR, Azure offers a uniquely streamlined data pipeline. When the Epic instance is hosted on Azure, its FHIR data can flow directly into Azure Health Data Services without crossing cloud boundaries, reducing latency, egress costs, and compliance complexity.

Azure Healthcare Pricing

| Component | Pricing (US East, 2026) | Notes |
|---|---|---|
| FHIR service: structured storage | $0.378 per GB/month | GB-based, not per-resource |
| FHIR service: API requests | $0.715 per 10,000 API calls | All CRUD and search operations |
| DICOM service: storage | $0.189 per GB/month | DICOMweb storage |
| DICOM service: transactions | $0.715 per 10,000 transactions | STOW/WADO/QIDO |
| MedTech service | $0.429 per 10,000 messages | Device data ingestion |
| Text Analytics for Health | $25.00 per 1,000 text records | Volume discounts available |

Cost at scale: Azure's GB-based FHIR pricing model is more predictable than AWS's per-resource model. For 10 million FHIR resources (approximately 50 GB of structured data), storage costs roughly $19/month. The same 5.5 million monthly API calls as the AWS example (5 million reads plus 500,000 writes) add about $393/month. The total FHIR service cost for that workload comes to approximately $412/month, roughly an order of magnitude less than HealthLake for FHIR storage and access. Azure's NLP services are billed per text record rather than per 100-character unit, and a text record covers up to 1,000 characters, so at these list prices very short notes (about 200 characters or fewer) cost more than on Comprehend Medical while longer documents cost less.
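The difference between the two NLP billing units is easiest to see per note. The sketch below uses the list prices from the AWS and Azure tables; the 1,000-characters-per-record definition and whole-unit rounding on both sides are assumptions to confirm against each provider's current pricing page.

```python
import math

AWS_PER_100_CHARS = 0.01        # Comprehend Medical NER list price (AWS table)
AZURE_PER_1K_RECORDS = 25.00    # Text Analytics for Health list price (Azure table)
AZURE_CHARS_PER_RECORD = 1_000  # assumption: one text record = up to 1,000 characters


def aws_note_cost(chars):
    """Comprehend Medical: billed in whole 100-character units (assumed)."""
    return math.ceil(chars / 100) * AWS_PER_100_CHARS


def azure_note_cost(chars):
    """Text Analytics for Health: billed per text record, rounded up (assumed)."""
    records = math.ceil(chars / AZURE_CHARS_PER_RECORD)
    return records * AZURE_PER_1K_RECORDS / 1_000


for chars in (150, 1_000, 5_000):
    print(f"{chars:>5} chars: AWS ${aws_note_cost(chars):.3f}  Azure ${azure_note_cost(chars):.3f}")
```

At these prices a 150-character note costs $0.02 on Comprehend Medical and $0.025 on Azure, while a 5,000-character note costs $0.50 and $0.125 respectively; for the 1 million 5,000-character notes in the AWS example, that is $500,000 versus $125,000 per month.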

Azure Strengths and Limitations

Strengths: Native DICOM service, with FHIR, DICOM, and device data in one unified workspace. Epic partnership creates a compelling story for the 38% of US hospitals running Epic. Microsoft ecosystem integration (Teams, Power Platform, Fabric) appeals to organizations already invested in the Microsoft stack. FHIR storage and access cost roughly an order of magnitude less than HealthLake's per-resource model at scale.

Limitations: Fewer BAA-eligible services than AWS (~80 vs ~150). Health Data Services is available in fewer regions than AWS's and GCP's comparable services. The dependency on Microsoft Entra ID (formerly Azure AD) for identity can be a constraint for organizations with non-Microsoft identity providers. The default $convert-data templates cover common cases, but customizing HL7v2-to-FHIR conversion requires Liquid template authoring, which has a learning curve.

GCP for Healthcare: Cloud Healthcare API, BigQuery, and the Analytics Play

Google Cloud Platform's healthcare strategy reflects Google's DNA: data and analytics. The Cloud Healthcare API provides a solid FHIR, HL7v2, and DICOM foundation, but GCP's true differentiator is what happens after the data lands — BigQuery for analytics, Vertex AI for ML, and Looker for visualization create the most powerful healthcare analytics pipeline available from any single cloud provider.

Core Healthcare Services

Cloud Healthcare API is a single API surface that manages three data types:

- FHIR stores: FHIR R4-compliant data stores with full search, CRUD, $everything, $export, and $purge operations. Supports configurable FHIR profiles, search parameter tuning, and referential integrity enforcement. Native SMART on FHIR support.

- HL7v2 stores: Native ingestion and storage of HL7v2 messages — the only major cloud provider with a managed HL7v2 message store. Messages can be parsed, filtered, and routed to FHIR stores via configurable mapping pipelines.

- DICOM stores: DICOMweb-compliant imaging data management with STOW-RS, WADO-RS, and QIDO-RS. Includes de-identification operations for PHI removal from DICOM metadata and burned-in pixel annotations.
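Because the HL7v2 store is a managed API rather than an integration engine you operate, ingestion is one REST call per message. The sketch below builds the request for the `messages.ingest` method; the body shape (`message.data` holding the base64-encoded message) follows the Cloud Healthcare API v1 reference as we understand it, the project, location, dataset, and store names are placeholders, and OAuth authentication is omitted.

```python
import base64


def ingest_url(project, location, dataset, store):
    """REST endpoint for ingesting one HL7v2 message into an HL7v2 store."""
    return ("https://healthcare.googleapis.com/v1/"
            f"projects/{project}/locations/{location}/datasets/{dataset}"
            f"/hl7V2Stores/{store}/messages:ingest")


def hl7v2_ingest_body(message):
    """Base64-encode the message after normalizing segment separators to carriage returns."""
    normalized = message.replace("\r\n", "\r").replace("\n", "\r")
    encoded = base64.b64encode(normalized.encode("utf-8")).decode("ascii")
    return {"message": {"data": encoded}}


msg = ("MSH|^~\\&|LAB|HOSP|EHR|HOSP|20240101120000||ORU^R01|MSG00002|P|2.5\n"
       "PID|1||12345^^^HOSP^MR||DOE^JANE||19800102|F")
body = hl7v2_ingest_body(msg)
print(ingest_url("my-project", "us-central1", "clinical", "adt-feed"))
print(len(body["message"]["data"]), "base64 characters")
```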

Healthcare Natural Language API provides medical entity extraction from clinical text, mapping to ICD-10, SNOMED CT, RxNorm, and LOINC. It also provides entity relation extraction and contextual features (negation, temporality, certainty).

BigQuery is GCP's analytical engine, and for healthcare, it is the single strongest differentiator. The Cloud Healthcare API can stream FHIR resources directly into BigQuery as structured, queryable tables. This means a FHIR Patient resource becomes a BigQuery row with typed columns — no ETL pipeline, no Parquet export, no Athena configuration. The result is real-time SQL analytics over live FHIR data at petabyte scale.
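The streaming integration is configured on the FHIR store itself. The sketch below builds a PATCH body for the Cloud Healthcare API's `fhirStores.patch` method, sent with `updateMask=streamConfigs`. Field names follow the v1 REST reference as we understand it; the project and dataset names are placeholders, and the depth of 3 is an illustrative choice. Authentication and the HTTP call are omitted.

```python
import json


def fhir_stream_patch(project, bq_dataset, resource_types=None):
    """Build a FHIR store PATCH body that streams resources into BigQuery.

    Send as PATCH .../fhirStores/{store}?updateMask=streamConfigs.
    schemaType ANALYTICS flattens resources into SQL-friendly columns;
    recursiveStructureDepth bounds how deep recursive structures are expanded.
    """
    stream = {
        "bigqueryDestination": {
            "datasetUri": f"bq://{project}.{bq_dataset}",
            "schemaConfig": {"schemaType": "ANALYTICS", "recursiveStructureDepth": 3},
        }
    }
    if resource_types:
        stream["resourceTypes"] = list(resource_types)  # omitted means all types
    return {"streamConfigs": [stream]}


body = fhir_stream_patch("my-project", "fhir_analytics", ["Patient", "Encounter"])
print(json.dumps(body, indent=2))
```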

The BigQuery Advantage

The FHIR-to-BigQuery streaming integration deserves specific attention because it fundamentally changes healthcare analytics architecture:

- Zero-ETL analytics: FHIR resources are available in BigQuery within seconds of being written to the FHIR store. No batch export. No nightly sync. No Parquet conversion.

- Standard SQL: Analysts query FHIR data using familiar SQL syntax, not FHIR search parameters. This dramatically lowers the skill barrier for data teams.

- Petabyte scale: BigQuery's serverless architecture handles petabytes without capacity planning. A health system with 500 million FHIR resources can typically run complex cohort queries in seconds to minutes, depending on how much data each query scans.

- ML integration: BigQuery ML enables model training directly within BigQuery using SQL — predict readmission risk, identify coding gaps, or flag sepsis indicators without moving data to a separate ML platform.

- Cost efficiency: BigQuery's on-demand pricing ($6.25/TB scanned) means you pay only for queries executed, not for always-on compute clusters.

For organizations where the primary goal of cloud migration is building a healthcare data lake for AI-ready analytics, GCP's FHIR-to-BigQuery pipeline is the most direct path available.

GCP Healthcare Pricing

| Component | Pricing (US Multi-Region, 2026) | Notes |
|---|---|---|
| FHIR store: structured storage | $0.259 per GB/month | GB-based pricing |
| FHIR store: operations | $0.50 per 10,000 operations | All CRUD, search, bundles |
| HL7v2 store: storage | $0.259 per GB/month | Same rate as FHIR |
| HL7v2 store: operations | $0.50 per 10,000 messages | Ingest, parse, list |
| DICOM store: storage | $0.259 per GB/month | DICOMweb operations |
| BigQuery: storage | $0.02 per GB/month (active) | $0.01/GB for long-term (90+ days) |
| BigQuery: queries | $6.25 per TB scanned | On-demand pricing |
| Healthcare NLP API | $0.05 per 1,000 characters | Entity extraction + linking |

Cost at scale: For our reference workload of 10 million FHIR resources (~50 GB), GCP FHIR storage runs about $13/month. The same 5.5 million monthly operations as the AWS example (5 million reads plus 500,000 writes) cost $275/month. BigQuery storage for the streamed FHIR tables adds $1/month. The total FHIR + analytics baseline comes to approximately $289/month, the lowest raw infrastructure cost among the three providers. The real cost advantage emerges at analytics scale: organizations running 100+ queries per day across the full dataset pay BigQuery on-demand rates per query rather than provisioning always-on compute such as a Redshift cluster or a dedicated Synapse SQL pool. (Athena is also serverless and billed per TB scanned, so against Athena the comparison is per-query price and integration effort, not idle capacity.)
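The GCP figures can be checked the same way from the table's list prices. Query cost depends on bytes scanned, so the sketch compares a worst case in which every query reads the full ~50 GB dataset with an assumed case in which column pruning and partition filters cut each scan to 10%. That 10% figure is illustrative, and the sketch ignores BigQuery's monthly free query allowance.

```python
# GCP list prices from the pricing table (US multi-region, 2026).
FHIR_STORAGE_PER_GB = 0.259
FHIR_OPS_PER_10K = 0.50
BQ_STORAGE_PER_GB = 0.02  # active storage
BQ_PER_TB_SCANNED = 6.25  # on-demand queries, per TB as quoted in the table


def gcp_fhir_monthly(storage_gb, operations):
    """FHIR storage + FHIR operations + BigQuery storage for the streamed tables."""
    return (storage_gb * FHIR_STORAGE_PER_GB
            + operations / 10_000 * FHIR_OPS_PER_10K
            + storage_gb * BQ_STORAGE_PER_GB)


def bigquery_query_monthly(queries_per_day, tb_scanned_per_query, days=30):
    """On-demand query cost is driven purely by bytes scanned."""
    return queries_per_day * days * tb_scanned_per_query * BQ_PER_TB_SCANNED


baseline = gcp_fhir_monthly(50, 5_500_000)     # 5M reads + 500K writes
full_scans = bigquery_query_monthly(100, 0.05)  # worst case: each query reads all 50 GB
pruned = bigquery_query_monthly(100, 0.005)     # assumed: each query reads ~10% of bytes
print(f"FHIR + BigQuery storage: ${baseline:,.2f}/month")
print(f"100 full-scan queries/day: ${full_scans:,.2f}/month")
print(f"100 pruned queries/day: ${pruned:,.2f}/month")
```

Even the worst case stays in the hundreds of dollars per month for a dataset this size, but scan volume, not storage, is the line item that grows with analytics usage.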

GCP Strengths and Limitations

Strengths: Best-in-class analytics pipeline (FHIR to BigQuery streaming). Only provider with native HL7v2 message stores. Most cost-effective at scale for analytics-heavy workloads. Strong DICOM de-identification capabilities. Vertex AI integration for clinical ML.

Limitations: Smallest healthcare-specific partner ecosystem. No EHR vendor partnerships comparable to Azure-Epic. The Cloud Healthcare API documentation, while technically accurate, is less comprehensive than Azure's. GCP has the smallest overall market share in healthcare (~10-12% vs Azure's ~25% and AWS's ~30-33%), which means fewer healthcare-specific solution architects and implementation partners.

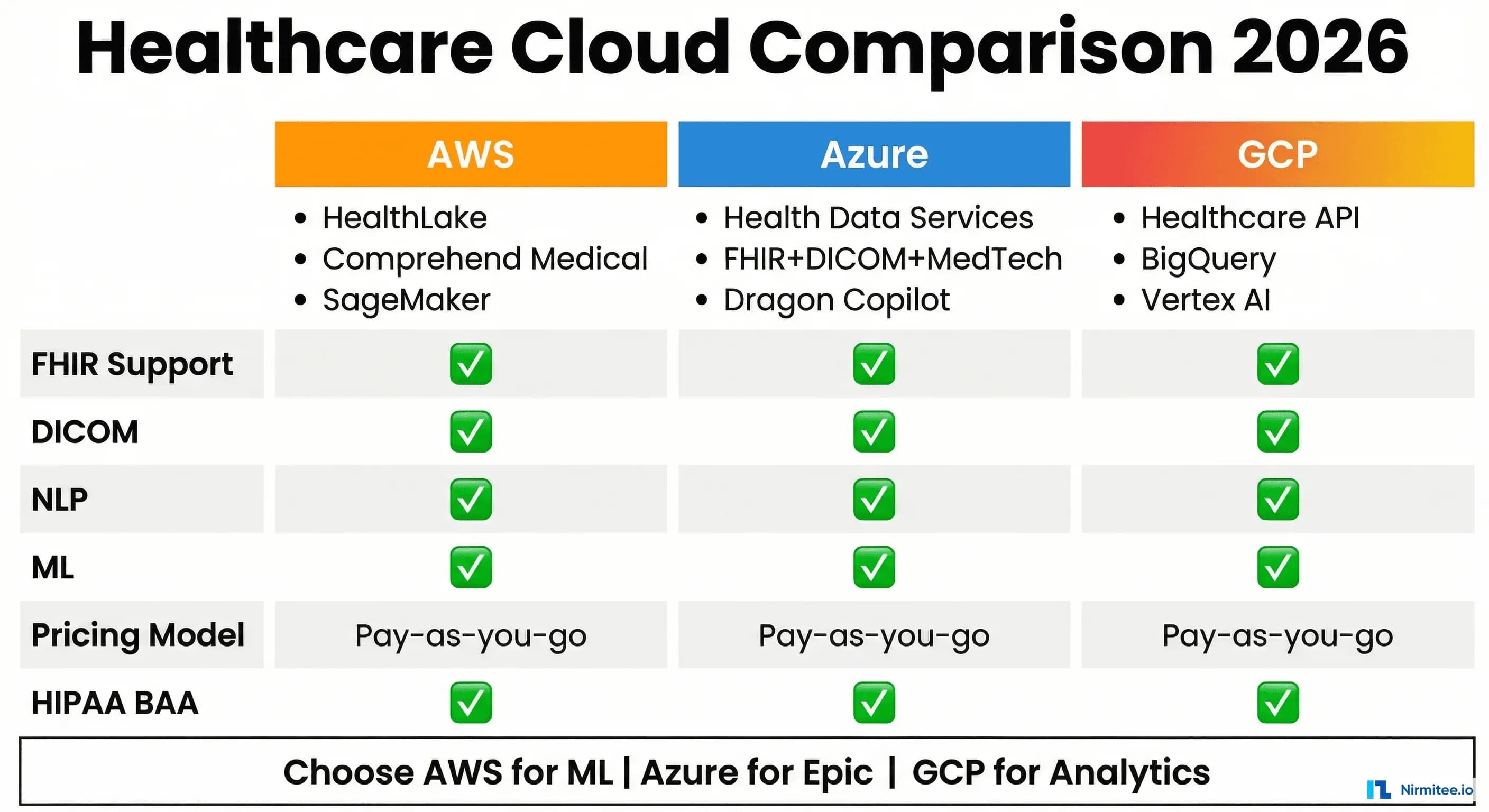

Head-to-Head Feature Comparison

The following table compares 40 capabilities across FHIR data management, medical imaging, HL7v2 and legacy integration, AI/ML, analytics, compliance, and pricing.

| Capability | AWS | Azure | GCP |
|---|---|---|---|
| FHIR Data Management | | | |
| FHIR version support | R4 | R4 | R4 (DSTU2 and STU3 also supported) |
| Managed FHIR server | HealthLake | Health Data Services FHIR service | Cloud Healthcare API FHIR stores |
| SMART on FHIR | Yes (v1) | Yes (v1 and v2) | Yes (v1) |
| Bulk Data ($export) | Yes (to S3) | Yes (to Blob Storage) | Yes (to GCS, BigQuery) |
| Bulk Import | Yes (from S3, ndjson) | Yes ($import operation) | Yes (from GCS, ndjson) |
| $convert-data (C-CDA/HL7v2 to FHIR) | No (requires custom Lambda) | Yes (Liquid templates) | Partial (HL7v2 mapping engine) |
| Custom search parameters | Limited | Yes | Yes |
| FHIR subscriptions | No (use CloudWatch Events) | Yes (FHIR R4 Subscriptions) | Yes (via Pub/Sub notifications) |
| US Core IG support | Yes | Yes | Yes |
| Medical Imaging (DICOM) | | | |
| Managed DICOM service | Partial (AWS HealthImaging, or S3 + custom solution) | Yes (Health Data Services DICOM service) | Yes (Cloud Healthcare API DICOM stores) |
| DICOMweb support (STOW/WADO/QIDO) | Verify (HealthImaging DICOMweb APIs) | Yes | Yes |
| DICOM de-identification | Custom (Lambda + pydicom) | Partial (metadata only) | Yes (metadata + burned-in pixel redaction) |
| Extended query tags | N/A | Yes | Yes (custom search attributes) |
| HL7v2 and Legacy Integration | | | |
| Native HL7v2 message store | No | No | Yes |
| HL7v2 message parsing | Custom (Lambda) | Custom (Azure Functions) | Yes (built-in parser) |
| HL7v2 to FHIR mapping | Custom | Yes ($convert-data with Liquid) | Yes (configurable mapping engine) |
| C-CDA ingestion | Custom | Yes ($convert-data) | Partial (via custom mapping) |
| IoMT/device data ingestion | AWS IoT Core (generic) | MedTech service (healthcare-specific) | Pub/Sub (Cloud IoT Core was retired in 2023) |
| AI/ML and NLP | | | |
| Clinical NLP service | Comprehend Medical | Text Analytics for Health / AI Health Insights | Healthcare Natural Language API |
| Medical entity extraction | Yes (5 entity types) | Yes (8+ entity types, relations, assertions) | Yes (entity types + relations + contextual features) |
| Terminology mapping (ICD-10, SNOMED, RxNorm) | ICD-10-CM, RxNorm, SNOMED CT | ICD-10, SNOMED CT, RxNorm, LOINC, MeSH | ICD-10, SNOMED CT, RxNorm, LOINC |
| PHI de-identification | Comprehend Medical DetectPHI | Text Analytics for Health + FHIR de-ID | Cloud DLP + Healthcare NLP API de-identification |
| ML platform | SageMaker | Azure Machine Learning | Vertex AI |
| Generative AI (LLM) | Bedrock (Claude, Llama, Titan) | Azure OpenAI Service (GPT-4, GPT-4o) | Vertex AI (Gemini, Med-PaLM 2) |
| Healthcare-specific foundation model | No (general-purpose via Bedrock) | No (GPT-4 fine-tuned for health via partners) | Med-PaLM 2 (research preview) |
| Analytics | | | |
| FHIR-to-analytics pipeline | HealthLake export to S3, query via Athena | $export to Synapse/Fabric | Streaming export to BigQuery (zero-ETL) |
| Real-time analytics over FHIR data | No (batch export required) | Near-real-time via Fabric | Yes (BigQuery streaming) |
| SQL-based FHIR querying | Athena (on exported Parquet) | Synapse / Fabric | BigQuery (native) |
| Compliance and Security | | | |
| HIPAA BAA available | Yes | Yes | Yes |
| BAA-eligible services count | ~150 | ~80+ | ~100 |
| HITRUST CSF certified | Yes | Yes | Yes |
| SOC 2 Type II | Yes | Yes | Yes |
| FedRAMP High | Yes (GovCloud) | Yes (Azure Government) | Yes (Assured Workloads) |
| Encryption at rest | AES-256 (KMS, HSM-backed) | AES-256 (Key Vault, HSM-backed) | AES-256 (Cloud KMS, HSM-backed) |
| Customer-managed encryption keys | Yes (KMS CMK) | Yes (Key Vault CMK) | Yes (Cloud KMS CMEK) |
| Audit logging | CloudTrail + CloudWatch | Azure Monitor + Diagnostic Logs | Cloud Audit Logs |
| Pricing Model | | | |
| FHIR storage model | Per-resource | Per-GB | Per-GB |
| FHIR API pricing model | Per-request (read/write split) | Per-API call (unified) | Per-operation (unified) |
| Free tier | HealthLake: No free tier | FHIR: No free tier (trial available) | FHIR: No free tier |
| Reserved/committed pricing | Yes (Savings Plans) | Yes (Reserved capacity) | Yes (Committed Use Discounts) |

Architecture Patterns for Healthcare Cloud

Choosing a cloud provider is only the first decision. How you architect the data platform determines whether you get a compliant FHIR store or a transformative clinical intelligence platform. Here are three patterns we see across health systems of different sizes and maturity levels.

Pattern 1: FHIR-Native Data Platform

Best for: Health systems building greenfield analytics platforms, digital health startups, organizations pursuing FHIR-first AI strategies.

In this pattern, the cloud FHIR store is the system of record for all clinical data. EHR data flows in via FHIR APIs, third-party apps connect via SMART on FHIR, and analytics run directly against the FHIR data.

- AWS implementation: HealthLake as primary FHIR store, Comprehend Medical for NLP enrichment, Lake Formation for analytics export, SageMaker for ML.

- Azure implementation: Health Data Services FHIR as primary store, DICOM service for imaging, Text Analytics for Health for NLP, Microsoft Fabric for analytics.

- GCP implementation: Cloud Healthcare API FHIR stores as primary, BigQuery streaming for real-time analytics, Vertex AI for ML, Looker for visualization.

Recommendation: GCP wins this pattern. The zero-ETL FHIR-to-BigQuery streaming pipeline eliminates the most complex and failure-prone component of any healthcare data platform — the ETL layer. For organizations where analytics is the primary cloud use case, GCP delivers the fastest time-to-insight.

Pattern 2: Hybrid — On-Prem EHR with Cloud Analytics

Best for: Large health systems with significant on-premises EHR investments (Epic, Oracle Health, MEDITECH) that want cloud-based analytics and AI without disrupting clinical workflows.

This is the most common pattern in US healthcare today. The EHR remains on-premises (or vendor-hosted), and the cloud serves as the analytics and AI layer. Data flows via HL7v2 feeds, FHIR API subscriptions, or batch extracts.

- AWS implementation: Amazon MQ or MSK for HL7v2 ingestion, Lambda for HL7v2-to-FHIR transformation, HealthLake for FHIR storage, Redshift or Athena for analytics.

- Azure implementation: Azure Event Hubs for HL7v2 ingestion, $convert-data for HL7v2-to-FHIR transformation (built-in Liquid templates), Health Data Services FHIR for storage, Fabric for analytics.

- GCP implementation: Cloud Healthcare API HL7v2 stores for native ingestion (no separate integration engine needed to receive and store messages), configurable mapping to FHIR stores, BigQuery for analytics.

Recommendation: Azure wins this pattern if you run Epic (data stays in the same cloud). GCP wins if HL7v2 is your primary data feed (native HL7v2 stores eliminate an entire integration layer). AWS is the choice if you need maximum flexibility in how you handle the transformation — at the cost of building the pipeline yourself.

Pattern 3: Multi-Cloud Healthcare Platform

Best for: Large IDNs (Integrated Delivery Networks), health plans with diverse provider networks, organizations with regulatory requirements for data residency or redundancy.

Multi-cloud in healthcare is not about avoiding vendor lock-in for its own sake — it is about placing workloads where each provider excels. A viable multi-cloud architecture might use Azure Health Data Services for FHIR + DICOM management (leveraging the Epic partnership and native DICOM), GCP BigQuery for analytics (leveraging the streaming FHIR export), and AWS for ML workloads (leveraging SageMaker's broader model framework support).

The critical challenge is data governance across cloud boundaries. PHI moving between providers requires BAAs with each provider, encryption in transit, audit logging at both endpoints, and a unified identity layer — typically implemented via a cloud-agnostic identity provider (Okta, Ping Identity) federated to each cloud's IAM.

Recommendation: In our experience, multi-cloud adds 40-60% infrastructure complexity and 25-35% cost overhead. Pursue it only when a specific workload genuinely requires a specific provider's unique capability (e.g., Azure's DICOM service, GCP's BigQuery analytics, AWS's breadth of BAA-eligible services). Do not pursue multi-cloud for abstract "vendor independence": for most health systems under 20 facilities, the cost does not justify the benefit.

HIPAA Compliance Deep-Dive: What Each Provider Actually Delivers

Every cloud provider will tell you they are "HIPAA compliant." The technically accurate statement is that no cloud provider is inherently HIPAA compliant — HIPAA compliance is a shared responsibility between the covered entity and the business associate. What varies is how much each provider does to make your compliance posture achievable. For a detailed treatment of HIPAA compliance automation and continuous monitoring, see our dedicated guide.

Business Associate Agreements

| Aspect | AWS | Azure | GCP |
|---|---|---|---|
| BAA activation | Accept via AWS Artifact (self-service) | Included by default in the Microsoft Products and Services Data Protection Addendum | Accept via amendment to Cloud agreement |
| BAA cost | Free | Free | Free |
| BAA scope | Covers ~150 specific services listed | Covers ~80+ services in compliance documentation | Covers ~100 services in BAA documentation |
| Non-covered service risk | Customer responsibility to restrict PHI to BAA-covered services | Same | Same |
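Because restricting PHI to covered services is the customer's job on every provider, it is worth turning into an automated check. A minimal sketch, assuming your architecture is described in a manifest that flags which services handle PHI: compare it against an allowlist built from the provider's BAA documentation and fail the design review (or CI job) on any gap. The allowlist and manifest below are illustrative, not authoritative.

```python
# Illustrative allowlist only. Build the real one from the provider's current
# BAA documentation; this short list is an example, not a statement of coverage.
BAA_COVERED = {"healthlake", "s3", "lambda", "comprehendmedical", "athena"}


def uncovered_phi_services(architecture, covered=BAA_COVERED):
    """Return PHI-handling services that are missing from the BAA allowlist."""
    return sorted(
        service for service, handles_phi in architecture.items()
        if handles_phi and service not in covered
    )


# Hypothetical manifest: service name -> does it store or process PHI?
architecture = {
    "healthlake": True,
    "s3": True,
    "lambda": True,
    "experimental-vector-db": True,  # hypothetical service not on the allowlist
    "cloudfront": False,             # assumed to serve only PHI-free static assets
}
problems = uncovered_phi_services(architecture)
if problems:
    print("PHI routed to non-BAA services:", ", ".join(problems))
```

In a CI pipeline, a non-empty result would fail the build rather than just print.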

Encryption Requirements

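The HIPAA Security Rule treats encryption of PHI at rest and in transit as an "addressable" implementation specification: you must implement it where reasonable and appropriate, or document why not and adopt an equivalent safeguard. It does not mandate specific algorithms. All three providers exceed the minimum: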

- At rest: All three use AES-256 with provider-managed keys by default. All three support customer-managed keys (CMEK) for organizations that require key custody. AWS and Azure support HSM-backed keys (CloudHSM, Azure Dedicated HSM). GCP supports Cloud HSM within Cloud KMS.

- In transit: All three enforce TLS 1.2+ for all API calls. All three support VPC/VNet private endpoints to eliminate public internet traversal for PHI data flows.

- Key rotation: AWS KMS supports automatic rotation (annual by default, with a configurable rotation period) as well as on-demand rotation. Azure Key Vault supports configurable rotation policies. GCP Cloud KMS supports automatic rotation with configurable periods.

Audit Logging

HIPAA requires audit controls that record who accessed what PHI and when. Implementation differs significantly:

- AWS: CloudTrail captures all API calls across all services. For HealthLake specifically, data access events (FHIR reads) require enabling CloudTrail data events, which incur additional charges ($0.10 per 100,000 events). CloudWatch Logs provides real-time log analysis. AWS Config tracks configuration changes for compliance drift detection.

- Azure: Azure Monitor captures platform metrics and diagnostic logs. Health Data Services generates diagnostic logs for FHIR, DICOM, and MedTech operations. Azure Policy provides compliance dashboards with built-in HIPAA policy initiatives (pre-configured rule sets that check 100+ HIPAA-relevant controls).

- GCP: Cloud Audit Logs automatically captures Admin Activity logs (free, always-on) and Data Access logs (must be enabled, charged per volume). Access Transparency logs (available to premium customers) show when Google staff access your data for support purposes — a unique feature for organizations concerned about insider access.

Access Control

Granular access control over PHI is where the providers diverge most:

- AWS: IAM policies control access at the HealthLake datastore level. Finer-grained access (e.g., restricting by FHIR resource type or patient compartment) requires application-layer enforcement — AWS IAM cannot natively restrict "Practitioner A can only access Patient B's resources."

- Azure: Azure RBAC integrates with Health Data Services. Microsoft Entra ID (formerly Azure AD) Conditional Access policies can enforce location-based, device-based, and risk-based access controls. FHIR-level access control is managed via SMART on FHIR scopes.

- GCP: Cloud IAM controls access at the dataset and data store level. The Cloud Healthcare API supports consent management via the FHIR Consent resource framework, enabling patient-level consent enforcement — the most granular native consent implementation among the three.
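SMART on FHIR scopes are plain strings, and the core of evaluating them is simple enough to sketch. The function below is a hypothetical application-layer check, not any provider's API: it treats a SMART v1 `.read`/`.write`/`.*` suffix as the equivalent SMART v2 permission letters and checks whether a granted scope set allows one interaction. It ignores v2 query-parameter scopes and does not enforce the patient compartment, which a real server must also do.

```python
import re

# SMART scopes look like "patient/Observation.read" (v1) or "patient/Observation.rs" (v2).
SCOPE_RE = re.compile(r"^(patient|user|system)/(\*|[A-Za-z]+)\.([a-z*]+)$")
V1_TO_V2 = {"read": "rs", "write": "cud", "*": "cruds"}


def allowed(scopes, context, resource_type, interaction):
    """Check whether a space-separated scope string permits one interaction.

    interaction is a SMART v2 letter: c(reate), r(ead), u(pdate), d(elete), s(earch).
    """
    for scope in scopes.split():
        match = SCOPE_RE.match(scope)
        if not match:
            continue  # skips openid, launch/patient, offline_access, etc.
        ctx, rtype, perms = match.groups()
        perms = V1_TO_V2.get(perms, perms)  # normalize v1 suffixes to v2 letters
        if ctx == context and rtype in ("*", resource_type) and interaction in perms:
            return True
    return False


granted = "openid launch/patient patient/Observation.rs patient/MedicationRequest.read"
print(allowed(granted, "patient", "Observation", "r"))        # True
print(allowed(granted, "patient", "Observation", "c"))        # False
print(allowed(granted, "patient", "MedicationRequest", "s"))  # True
```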

Migration Strategy: The 4-Phase Approach

Migrating healthcare data to the cloud is not an infrastructure project — it is a clinical operations project with infrastructure components. A failed migration does not just cause downtime; it disrupts patient care. Here is the 4-phase approach we recommend for health systems of any size.

Phase 1: Assessment and Workload Classification (Weeks 1-6)

Before selecting a provider, classify your workloads:

- Data inventory: Catalog all clinical data sources — EHR databases, PACS servers, lab information systems, pharmacy systems, device integration engines. Quantify data volume (GB/TB), data velocity (transactions per second), and data variety (FHIR, HL7v2, DICOM, proprietary formats).

- Workload classification: Categorize workloads as (a) FHIR-native analytics, (b) imaging/DICOM, (c) real-time clinical (latency-sensitive), (d) batch/research, (e) AI/ML training, (f) patient-facing applications.

- Dependency mapping: Identify which systems depend on which data sources. An EHR-to-cloud migration that breaks a downstream lab interface is a clinical safety event.

- Compliance audit: Document current HIPAA controls and identify gaps that the cloud migration must address — do not assume the cloud will automatically improve your compliance posture.
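Dependency mapping is a graph problem: once each system's consumers are recorded, a breadth-first search lists everything that could break, directly or transitively, when one source moves. The system names below are hypothetical.

```python
from collections import deque

# Hypothetical dependency map: each system -> systems that consume its data.
CONSUMERS = {
    "ehr": ["interface-engine", "data-warehouse"],
    "interface-engine": ["lab-system", "pharmacy", "bed-board"],
    "lab-system": ["reference-lab-portal"],
    "data-warehouse": ["quality-reporting"],
    "pharmacy": [],
}


def downstream_of(system, consumers):
    """Breadth-first search for every system that directly or transitively consumes `system`."""
    seen, queue = set(), deque([system])
    while queue:
        current = queue.popleft()
        for nxt in consumers.get(current, []):
            if nxt not in seen:
                seen.add(nxt)
                queue.append(nxt)
    return sorted(seen)


# Everything that must be retested if the interface engine moves to the cloud.
print(downstream_of("interface-engine", CONSUMERS))
```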

Phase 2: Provider Selection and Proof of Concept (Weeks 7-14)

Run a focused proof of concept with your top-choice provider:

- POC scope: Select a single, bounded data domain — one department's FHIR data, one facility's DICOM studies, or one HL7v2 feed. Do not attempt a full data migration as a POC.

- Performance benchmarking: Measure FHIR query latency, bulk import throughput, and analytics query performance against your SLAs. For FHIR stores, test search operations with realistic query patterns — _include, _revinclude, chained parameters, date ranges.

- Cost modeling: Run the POC for at least 30 days to capture realistic usage patterns. Extrapolate to full production volume. Add a 30% buffer for unexpected costs (egress, logging, support).

- Compliance validation: Verify BAA coverage for every service used in the POC. Conduct a penetration test against the POC environment. Validate audit logging captures all required access events.
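The cost-modeling step reduces to a simple formula. The sketch below assumes costs scale linearly with data volume, which is only an approximation. Tiered storage pricing, fixed per-environment costs, and volume discounts all bend the curve, so treat the result as a first estimate. The example figures are illustrative, not real provider pricing.

```python
def projected_monthly_cost(poc_cost_30d: float, poc_volume_tb: float,
                           production_volume_tb: float, buffer: float = 0.30) -> float:
    """Scale a 30-day POC bill linearly to production volume, then add a contingency buffer."""
    if poc_volume_tb <= 0:
        raise ValueError("poc_volume_tb must be positive")
    scaled = poc_cost_30d * (production_volume_tb / poc_volume_tb)
    # The buffer covers costs the POC under-samples: egress, logging, support.
    return scaled * (1 + buffer)


# Illustrative only: a $4,000 POC month on 2 TB, extrapolated to 50 TB.
estimate = projected_monthly_cost(4_000, 2, 50)
print(f"${estimate:,.0f} per month")
```

With these inputs, the linear projection is $100,000 per month, or $130,000 once the 30% buffer is added.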

Phase 3: Production Build and Staged Migration (Weeks 15-30)

- Infrastructure as Code: Define all cloud resources via Terraform, Bicep (Azure), or Pulumi. Never provision healthcare cloud infrastructure manually — manual provisioning leads to configuration drift, which leads to compliance gaps.

- Data migration: Migrate in stages: (1) historical data via bulk import during off-hours, (2) cutover live feeds with parallel running for 2-4 weeks, (3) decommission legacy connections only after validation.

- Integration testing: Test every downstream consumer of migrated data. If 47 applications consume HL7v2 ADT feeds from your integration engine, all 47 must be tested against the cloud-based feed.

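- Disaster recovery: Implement cross-region replication and test failover before go-live. For clinical systems, target an RPO (Recovery Point Objective) under 1 hour and an RTO (Recovery Time Objective) under 4 hours. HIPAA requires a contingency plan with data backup and disaster recovery procedures but does not prescribe these numbers, so set them with your clinical leadership.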
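During the 2-4 week parallel run, one practical check is that every message delivered by the legacy feed also arrived through the cloud feed. Below is a minimal Python sketch. It assumes both feeds can be captured as raw pipe-delimited HL7v2 messages and keyed by MSH-10 (the message control ID). It uses plain string splitting rather than a full HL7 parser, so it does not handle escape sequences or non-default delimiters.

```python
def control_id(message: str) -> str:
    """Return MSH-10 (message control ID) from a pipe-delimited HL7v2 message."""
    # HL7v2 segments end in \r; splitlines() also accepts \n from files that were rewritten.
    msh = message.splitlines()[0] if message else ""
    fields = msh.split("|")
    if fields[0] != "MSH" or len(fields) < 10:
        raise ValueError("message does not start with a complete MSH segment")
    # MSH-1 is the field separator itself, so MSH-n lands at index n - 1 after splitting.
    return fields[9]


def reconcile(legacy_messages: list[str], cloud_messages: list[str]) -> dict[str, list[str]]:
    """Compare the control IDs seen on the legacy feed and on the cloud feed."""
    legacy_ids = {control_id(m) for m in legacy_messages}
    cloud_ids = {control_id(m) for m in cloud_messages}
    return {
        "missing_in_cloud": sorted(legacy_ids - cloud_ids),
        "unexpected_in_cloud": sorted(cloud_ids - legacy_ids),
    }
```

Because the comparison uses sets, resent messages that share a control ID collapse into one entry. If your senders reuse control IDs, key on a combination such as MSH-10 plus MSH-7 instead.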

Phase 4: Optimization and Operationalization (Weeks 31+)

- Cost optimization: After 60-90 days of production usage data, purchase reserved capacity (AWS Savings Plans, Azure Reserved Instances, GCP committed use discounts) for predictable base workloads, and use auto-scaling for variable analytics workloads.

- Performance tuning: Optimize FHIR search parameters based on actual query patterns. Implement caching layers (ElastiCache/Redis, Azure Cache for Redis, Memorystore) for frequently accessed resources.

- Team enablement: Train clinical informatics, data engineering, and DevOps teams on the specific healthcare services. Each provider also runs healthcare partner programs (AWS Healthcare Competency, Microsoft Healthcare Partner, Google Cloud Healthcare specialization). These validate implementation partners rather than train your own staff, so treat them as a vetting signal when you bring in outside help.

- Continuous compliance: Implement automated compliance scanning — AWS Config Rules, Azure Policy, GCP Security Command Center — with alerting for any drift from HIPAA baseline configurations.
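The drift-alerting idea behind automated compliance scanning can be sketched in a few lines. This is not how AWS Config, Azure Policy, or Security Command Center express their rules. The sketch assumes a hypothetical, provider-neutral `HIPAA_BASELINE` of boolean settings and a resource configuration that has already been flattened into a dictionary.

```python
# Hypothetical, provider-neutral baseline; real scanners use each provider's own resource schemas.
HIPAA_BASELINE: dict[str, bool] = {
    "encryption_at_rest": True,
    "audit_logging_enabled": True,
    "public_access": False,
    "backup_enabled": True,
}


def find_drift(resource_config: dict, baseline: dict = HIPAA_BASELINE) -> dict:
    """Return {setting: (expected, actual)} for every baseline setting the resource violates."""
    drift = {}
    for setting, expected in baseline.items():
        actual = resource_config.get(setting)  # a missing setting shows up as None, i.e. drift
        if actual != expected:
            drift[setting] = (expected, actual)
    return drift


config = {"encryption_at_rest": True, "audit_logging_enabled": False,
          "public_access": False, "backup_enabled": True}
for setting, (expected, actual) in find_drift(config).items():
    print(f"ALERT {setting}: expected {expected}, found {actual}")
```

A managed scanner performs the same comparison continuously against real resource schemas. Writing the baseline down explicitly has a practical benefit: it can live in the same repository as your Terraform, Bicep, or Pulumi code.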

Decision Framework: Which Provider for Which Organization

After analyzing the services, pricing, compliance posture, and architectural patterns, here is our decision framework. This is not a generic "it depends" — it is specific guidance based on organizational profile.

Choose AWS If:

- Your team has deep AWS expertise and you want maximum control over every component. AWS rewards organizations that invest in cloud engineering talent.

- You need the broadest set of BAA-eligible services for complex, multi-service architectures. If your platform uses 50+ AWS services, the BAA coverage breadth matters.

- NLP-enriched FHIR storage is a priority. HealthLake's integrated Comprehend Medical tagging is unique — clinical entities are indexed at the storage layer, enabling NLP-powered search without a separate processing pipeline.

- You are building custom ML pipelines and want SageMaker's flexibility with model framework support (PyTorch, TensorFlow, Hugging Face, JAX).

- You operate in AWS GovCloud for government healthcare workloads (VA, DoD, CMS).

Choose Azure If:

- You run Epic as your primary EHR. Epic's partnership with Microsoft creates a data pipeline that is difficult to replicate on other clouds. Data stays within the same cloud boundary, reducing latency, egress, and compliance overhead.

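- Medical imaging (DICOM) is a primary workload. All three providers offer managed imaging storage (AWS HealthImaging, Azure's DICOM service, GCP's DICOM stores). Azure, however, deploys its DICOM service in the same Health Data Services workspace as the FHIR service, so imaging and clinical data share one managed boundary. If you manage PACS data, radiology workflows, or pathology imaging, that co-location simplifies the build-vs-buy decision.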

- Your organization is Microsoft-native. If you use Azure AD, Microsoft 365, Teams, Power BI, and Dynamics 365, Azure Health Data Services integrates seamlessly into your existing governance and productivity stack.

- IoMT/device data ingestion is a requirement. The MedTech service provides device-to-FHIR mapping that neither AWS nor GCP offers natively.

- You want the lowest FHIR storage cost. Azure's per-GB pricing is the most economical for large FHIR datasets.

Choose GCP If:

- Analytics and AI are the primary reason for cloud migration. The FHIR-to-BigQuery streaming pipeline is GCP's killer feature. No other provider offers zero-ETL, real-time SQL analytics over FHIR data at this scale.

- HL7v2 feeds are your primary data source. GCP is the only provider with native HL7v2 message stores. If your integration engine sends thousands of ADT, ORM, and ORU messages per hour, GCP ingests them without transformation.

- Cost efficiency at scale is critical. GCP's on-demand BigQuery pricing delivers the best unit economics for analytics-heavy workloads.

- You want the strongest DICOM de-identification. GCP's de-identification pipeline handles both metadata and burned-in pixel redaction — critical for research datasets derived from clinical imaging.

- Research and population health analytics are key use cases. BigQuery's petabyte-scale, serverless SQL engine is purpose-built for the cohort analysis, quality measurement, and predictive modeling that drive population health programs.
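The three lists above can be encoded as a simple tally. This helps an evaluation committee make its assumptions explicit. The sketch below is a simplification: the criterion names are our shorthand for the bullets, and every criterion is weighted equally. Hard constraints, such as a GovCloud requirement, should override the tally rather than be counted alongside it.

```python
# Shorthand for each bullet in the decision framework, mapped to the provider it favors.
CRITERIA: dict[str, str] = {
    "deep_aws_expertise": "AWS",
    "needs_broadest_baa_coverage": "AWS",
    "nlp_enriched_fhir_storage": "AWS",
    "custom_ml_pipelines": "AWS",
    "govcloud_required": "AWS",
    "epic_primary_ehr": "Azure",
    "dicom_primary_workload": "Azure",
    "microsoft_native": "Azure",
    "iomt_ingestion": "Azure",
    "lowest_fhir_storage_cost": "Azure",
    "analytics_ai_primary": "GCP",
    "hl7v2_primary_source": "GCP",
    "analytics_cost_efficiency": "GCP",
    "dicom_deidentification": "GCP",
    "population_health_research": "GCP",
}


def tally(profile: set[str]) -> dict[str, int]:
    """Count how many of an organization's criteria point to each provider (equal weights)."""
    scores = {"AWS": 0, "Azure": 0, "GCP": 0}
    for criterion in profile:
        if criterion not in CRITERIA:
            raise ValueError(f"unknown criterion: {criterion}")
        scores[CRITERIA[criterion]] += 1
    return scores


print(tally({"epic_primary_ehr", "dicom_primary_workload", "analytics_ai_primary"}))
```

An Epic shop with a heavy imaging workload and some analytics ambitions lands on Azure here. The GCP point is a reminder that the analytics layer may deserve a closer look.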

Frequently Asked Questions

Can I use multiple cloud providers for different healthcare workloads?

Yes, but proceed with caution. Multi-cloud healthcare architectures are viable when each provider serves a distinct workload (e.g., Azure for DICOM, GCP for analytics). However, multi-cloud adds 40-60% infrastructure complexity, requires BAAs with each provider, demands a cross-cloud identity solution, and increases egress costs. For organizations under 20 facilities, single-cloud is almost always the better choice. For large IDNs, multi-cloud can be justified when specific workload requirements genuinely require different provider capabilities.

Which cloud provider is cheapest for healthcare workloads?

GCP delivers the lowest unit costs for analytics. Azure offers the most cost-effective FHIR service when storage and API transactions are combined. AWS is generally the most expensive for healthcare-specific services but offers aggressive volume discounts through Enterprise Discount Programs (EDPs) and Savings Plans. The true answer depends on your workload mix: if 80% of your cloud spend is analytics, GCP wins. If 80% is FHIR + DICOM storage, Azure wins. If you need 100+ BAA-covered services, AWS's breadth may justify the premium.

Do I need a separate HIPAA compliance effort for each cloud provider?

Yes. Each provider's BAA is a separate agreement. Each provider has different BAA-eligible service lists, different audit logging mechanisms, different encryption key management, and different compliance reporting tools. Your HIPAA risk assessment must evaluate each provider independently. However, you can unify your compliance monitoring by feeding audit logs from all providers into a single SIEM (Splunk, Microsoft Sentinel, Chronicle) for consolidated access monitoring and breach detection.
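Before audit logs from several providers can be correlated in a SIEM, they need a common shape. Below is a minimal Python sketch of that normalization step. It assumes JSON records from AWS CloudTrail and GCP Cloud Audit Logs, and the field paths in `FIELD_MAP` should be verified against your own exports. Azure is left out because its field names depend on which diagnostic log categories you route. Add an Azure mapping after checking your schema.

```python
from typing import Any

# Dotted paths to each normalized field. AWS paths follow CloudTrail record fields;
# GCP paths follow Cloud Audit Logs entries. Verify both against your own exports.
FIELD_MAP: dict[str, dict[str, str]] = {
    "aws": {"time": "eventTime", "actor": "userIdentity.arn", "action": "eventName"},
    "gcp": {
        "time": "timestamp",
        "actor": "protoPayload.authenticationInfo.principalEmail",
        "action": "protoPayload.methodName",
    },
}


def get_path(record: dict, path: str) -> Any:
    """Follow a dotted path through nested dicts, returning None if any step is missing."""
    value: Any = record
    for part in path.split("."):
        if not isinstance(value, dict):
            return None
        value = value.get(part)
    return value


def normalize(source: str, record: dict) -> dict:
    """Map one provider-specific audit record onto a common {time, actor, action, source} shape."""
    event = {field: get_path(record, path) for field, path in FIELD_MAP[source].items()}
    event["source"] = source
    return event
```

Keeping the mapping as data rather than code means a new log source only needs a new `FIELD_MAP` entry, not a new parser.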

How do I migrate FHIR data between cloud providers?

All three providers support FHIR bulk data export in NDJSON (newline-delimited JSON), the de facto portability standard. Export from the source FHIR store to that provider's object storage (S3, Blob Storage, GCS), transfer the NDJSON files to the target provider's object storage, and bulk import them into the target FHIR store. For large datasets (10+ million resources), expect the export-transfer-import cycle to take 24-72 hours. The critical risk is not data loss but reference integrity. FHIR resources reference each other via relative URLs (for example, Patient/123), so your import process must preserve or correctly re-map these references.
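You can check reference integrity before import. Below is a minimal Python sketch. It assumes the export's NDJSON lines (one resource per line, across all resource-type files) are available as an iterable of strings. It flags relative `Type/id` references whose target is missing from the export, and it skips contained (`#...`), absolute-URL, and `urn:uuid:` references. The sketch keeps every resource in memory. For exports in the tens of millions, you would stream the files twice instead: once to collect IDs and once to check references.

```python
import json
from typing import Any, Iterable, Iterator


def iter_references(node: Any) -> Iterator[str]:
    """Yield every Reference.reference string nested anywhere inside a FHIR resource."""
    if isinstance(node, dict):
        reference = node.get("reference")
        if isinstance(reference, str):
            yield reference
        for value in node.values():
            yield from iter_references(value)
    elif isinstance(node, list):
        for item in node:
            yield from iter_references(item)


def dangling_references(ndjson_lines: Iterable[str]) -> list[tuple[str, str]]:
    """Return (source resource, reference) pairs whose relative target is absent from the export."""
    resources = [json.loads(line) for line in ndjson_lines if line.strip()]
    known = {f"{r['resourceType']}/{r['id']}" for r in resources}
    dangling = []
    for resource in resources:
        source = f"{resource['resourceType']}/{resource['id']}"
        for reference in iter_references(resource):
            target = reference.split("/_history/")[0]  # ignore version suffixes
            # Skip contained (#id), absolute-URL and urn:uuid references; check only Type/id.
            if target.startswith("#") or ":" in target or target.count("/") != 1:
                continue
            if target not in known:
                dangling.append((source, reference))
    return dangling
```

An empty result does not prove the target store will resolve references the same way. It does confirm that the export itself is closed under relative references, which is the precondition for a clean import.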

Which provider has the best healthcare AI capabilities?

It depends on the AI workload. For clinical NLP (entity extraction, de-identification), Text Analytics for health in Azure AI Language offers the most comprehensive entity model with the broadest terminology mapping. For analytics-driven ML (readmission prediction, risk stratification), GCP's BigQuery ML enables model training in SQL without data movement. For custom ML with maximum framework flexibility, AWS SageMaker supports the widest range of model architectures. For generative AI applications, all three offer competitive foundation model access: AWS via Bedrock, Azure via Azure OpenAI Service, and GCP via Vertex AI with Gemini.

How long does a healthcare cloud migration take?

For a mid-size health system (5-15 facilities, 50-100 TB of clinical data), expect 30-40 weeks from assessment to optimized production. Phase 1 (assessment) takes 4-6 weeks. Phase 2 (POC) takes 6-8 weeks. Phase 3 (build and migrate) takes 12-16 weeks. Phase 4 (optimization) is ongoing but reaches stability around week 30-40. The most common cause of timeline overrun is underestimating integration testing complexity — every downstream system that consumes clinical data must be validated post-migration.
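A small Python sketch makes the timeline arithmetic explicit. It uses the low and high week counts from this answer.

```python
# (low, high) duration in weeks for each phase, taken from the estimate above.
PHASE_WEEKS: dict[str, tuple[int, int]] = {
    "assessment": (4, 6),
    "proof_of_concept": (6, 8),
    "build_and_migrate": (12, 16),
}


def cutover_window(phases: dict[str, tuple[int, int]]) -> tuple[int, int]:
    """Earliest and latest week by which Phases 1-3 finish, i.e. the production cutover window."""
    earliest = sum(low for low, _ in phases.values())
    latest = sum(high for _, high in phases.values())
    return earliest, latest


print(cutover_window(PHASE_WEEKS))  # (22, 30)
```

Production cutover therefore lands between week 22 and week 30. The upper end matches the Weeks 15-30 window given for Phase 3, and Phase 4 stabilization follows from there.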

The Architecture Decision That Defines the Next Decade

Choosing a healthcare cloud provider is a 7-10 year commitment. Data gravity, team expertise, and integration depth make provider switches increasingly expensive over time. The wrong choice does not manifest immediately. It shows up 18 months later, when your analytics team cannot get real-time FHIR data without building a $500,000 ETL pipeline. Or it shows up when your imaging team discovers that the provider's managed imaging service does not fit their DICOM workflow, and they have to build one from object-storage primitives.

The right approach is to match provider strengths to organizational priorities: Azure for Epic shops and imaging-heavy organizations, GCP for analytics-driven and research-focused organizations, AWS for organizations that need maximum infrastructure breadth and custom ML pipelines. The comparison table and decision framework in this post should give your architecture team the data to make that decision with confidence.

At Nirmitee, we build healthcare data platforms on all three providers. We have implemented FHIR data stores on HealthLake, Health Data Services, and Google Cloud Healthcare API. We have built the migration pipelines, the analytics layers, and the compliance frameworks. If your organization is evaluating cloud providers for healthcare workloads — or struggling with a migration already in progress — talk to our healthcare cloud architecture team. We will help you make the decision that your clinical operations, data teams, and CFO can all support.