High availability (HA) is essential for modern healthcare systems. When Mirth Connect goes down, clinical workflows stop, alerts fail, and patient safety is at risk. Since Mirth Connect often handles HL7, FHIR, DICOM, XML, and other healthcare messages, ensuring a zero-downtime, fault-tolerant setup is critical.

This guide explains how to design and implement a complete HA architecture for Mirth Connect, including database clustering, load balancing, shared storage, session management, failover testing, and long-term maintenance.

Why High Availability Matters in Healthcare

Mirth Connect is the backbone of many interoperability workflows. When it goes offline:

- Clinical workflows are delayed

- Critical alerts fail to trigger

- Duplicate or lost patient data increases risk

- HIPAA compliance issues arise

- Financial losses occur due to downtime

Healthcare systems typically aim for 99.99% uptime, which allows about 52.6 minutes of downtime per year.
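The downtime budget behind an availability target can be checked with a few lines of arithmetic. The sketch below assumes a 365-day year; the function name is illustrative.

```python
MINUTES_PER_YEAR = 365 * 24 * 60  # 525,600 minutes, ignoring leap years


def downtime_minutes_per_year(availability_percent: float) -> float:
    """Return the yearly downtime budget, in minutes, for an uptime target."""
    if not 0 <= availability_percent <= 100:
        raise ValueError("availability must be between 0 and 100")
    return MINUTES_PER_YEAR * (1 - availability_percent / 100)


for target in (99.9, 99.99, 99.999):
    print(f"{target}% uptime -> {downtime_minutes_per_year(target):.1f} min/year")
```

Each additional "nine" cuts the budget by a factor of ten, which is why the jump from 99.9% to 99.99% usually requires automated rather than manual failover.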

Understanding HA Requirements — RTO and RPO

Before designing the architecture, define your business expectations:

- RTO (Recovery Time Objective): Maximum acceptable downtime

- RPO (Recovery Point Objective): Maximum acceptable data loss

Typical healthcare requirements:

- RTO: 5-30 minutes

- RPO: 0-5 minutes

These values directly shape your HA design, especially database clustering and failover mechanisms.

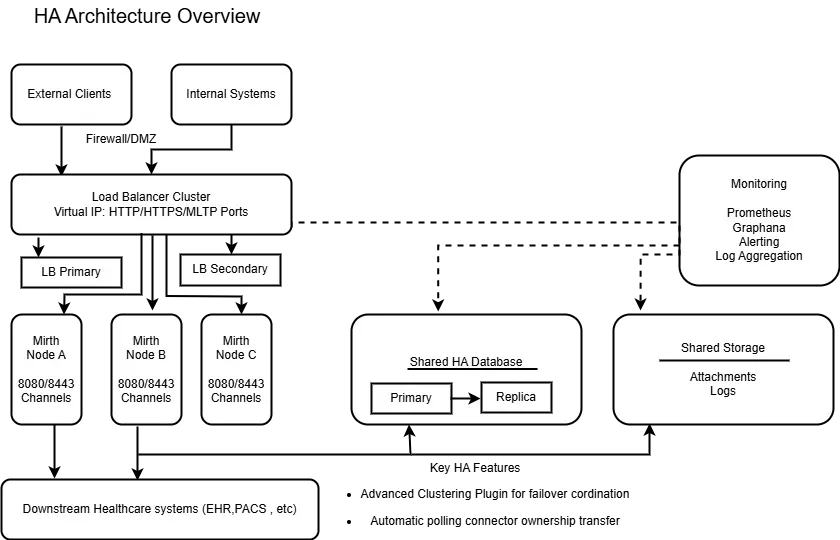

High Availability Architecture Overview

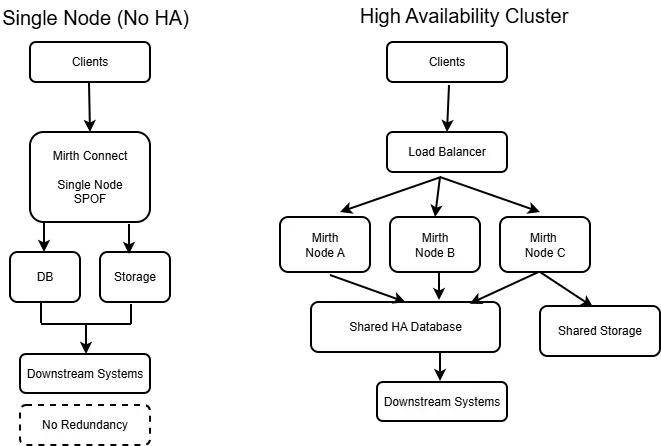

A single-server Mirth setup has multiple failure points: application crashes, hardware failures, network issues, or database downtime.

A proper HA design removes these risks with layered redundancy:

- Load Balancer Cluster: HAProxy or NGINX

- Multiple Mirth Instances: active-active or active-passive

- Clustered Database: PostgreSQL + Patroni or MySQL Galera

- Shared Storage: NFS or GlusterFS

- Redundant Networking: VLANs, redundant switches, dual NICs

A front-end load balancer distributes traffic across active Mirth nodes, ensuring that clients connect through a virtual service or VIP rather than directly to individual nodes.

The load balancer can operate at Layer 4 (TCP) or Layer 7 (HTTP reverse proxy). A Layer 7 reverse proxy is the recommended method for Mirth Connect's HTTP-based traffic because of its performance and implementation flexibility. Raw TCP listeners, such as HL7 over MLLP, are not HTTP and have to be balanced at Layer 4.

Note that certain behaviors, such as header insertion or transparency, may not be active by default and require specific configuration. Because the load balancer directs traffic only to healthy nodes, it provides high availability; because it spreads requests across nodes, it also helps absorb increased traffic and improve response times for healthcare applications.

Mirth Connect's architecture supports various load-balancing configurations to meet different deployment needs.

Together, these layers ensure that no single failure can stop message processing.
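The routing behavior described above, distributing requests across nodes while skipping unhealthy ones, can be sketched in a few lines. This is only an illustration of the idea, not how HAProxy or NGINX implement it; the class and node names are hypothetical.

```python
from typing import Iterable, List, Set


class HealthyRoundRobin:
    """Round-robin selection that skips nodes marked unhealthy."""

    def __init__(self, nodes: Iterable[str]) -> None:
        self._nodes: List[str] = list(nodes)
        if not self._nodes:
            raise ValueError("at least one node is required")
        self._healthy: Set[str] = set(self._nodes)
        self._index = 0

    def mark(self, node: str, healthy: bool) -> None:
        """Record the latest health-check result for a node."""
        if node not in self._nodes:
            raise KeyError(node)
        if healthy:
            self._healthy.add(node)
        else:
            self._healthy.discard(node)

    def next_node(self) -> str:
        """Return the next healthy node, or raise if none remain."""
        for _ in range(len(self._nodes)):
            node = self._nodes[self._index]
            self._index = (self._index + 1) % len(self._nodes)
            if node in self._healthy:
                return node
        raise RuntimeError("no healthy nodes available")


# Hypothetical node names, for illustration only.
balancer = HealthyRoundRobin(["mirth-1", "mirth-2", "mirth-3"])
balancer.mark("mirth-2", healthy=False)
print([balancer.next_node() for _ in range(4)])
```

With `mirth-2` marked unhealthy, traffic alternates between the two remaining nodes until a later health check marks it healthy again.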

Prerequisites and Planning

Hardware Requirements

- Mirth Servers: 4-8 vCPU, 8-16 GB RAM

- Database Nodes: 8-32 vCPU, 16-32 GB RAM

- Load Balancers: 2 nodes minimum

- Shared Storage: RAID 10 SSD/NVMe

Software Requirements

- Linux (RHEL, CentOS, Ubuntu)

- Java 11+

- Mirth Connect 4.0+

- PostgreSQL 12+ or MySQL 8.0+

- HAProxy 2.0+ or NGINX

Network Requirements

- 1 Gbps internal bandwidth

- < 5 ms latency to the database

- Correct DNS resolution and NTP clock synchronization across all server nodes, to prevent database conflicts and avoid processing delays

- Open ports for Mirth, DB, HAProxy

Setting Up the Database Cluster

Implementing Mirth Connect for high availability involves clustering multiple Mirth Connect instances that share one database. A single database server would itself be a single point of failure, so a clustered database is mandatory, because Mirth stores:

- Channels

- Metadata

- Message logs

- User sessions

The recommended method for ensuring high availability is to use a clustered database configuration that includes replication and automatic failover. PostgreSQL is recommended as a highly available database for Mirth Connect, though Oracle, SQL Server, and MySQL are also supported.

This approach ensures data integrity and reliable failover, which are critical for maintaining continuous operations in healthcare environments.

Option 1 — PostgreSQL + Patroni

- Automatic failover

- Synchronous replication

- Quorum and split-brain protection

Option 2 — MySQL/MariaDB Galera Cluster

- Synchronous multi-master replication

- High write throughput

- Automatic cluster recovery

Connection pooling must be configured on every Mirth node, with pool sizes chosen so that the nodes together cannot exceed the database's connection limit.
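One way to pick a pool size is to divide the database's connection limit among the Mirth nodes after setting some connections aside. The sketch below assumes each node's `database.max-connections` value caps that node's pool; the reserve of 10 connections for administration, replication, and monitoring is an illustrative assumption, not a recommendation from Mirth or PostgreSQL.

```python
def max_pool_size_per_node(
    db_max_connections: int,
    mirth_nodes: int,
    reserved_connections: int = 10,  # assumed headroom for admin/replication/monitoring
) -> int:
    """Largest per-node pool size that keeps the cluster under the DB limit."""
    if mirth_nodes < 1:
        raise ValueError("mirth_nodes must be at least 1")
    available = db_max_connections - reserved_connections
    if available < mirth_nodes:
        raise ValueError("database limit is too low for this many nodes")
    return available // mirth_nodes


# Example: a database limited to 100 connections, shared by 2 Mirth nodes.
print(max_pool_size_per_node(db_max_connections=100, mirth_nodes=2))
```

Under these assumptions, a per-node pool of 40 on a two-node cluster fits comfortably inside a 100-connection database limit.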

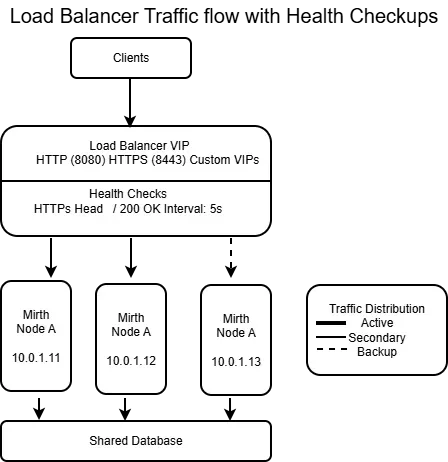

Load Balancer Configuration

Utilize HAProxy or NGINX to distribute traffic and ensure high availability. A Layer 7 Reverse Proxy is recommended to handle SSL termination and manage X-Forwarded-For headers for Mirth Connect, ensuring accurate client IP recognition and secure connections.

Key responsibilities:

- Routing incoming traffic across nodes

- Health checking Mirth instances

- SSL termination

- Failover handling

- Session stickiness when required

The load balancer should be configured to log client IP addresses and health checks for monitoring and security. Its configuration files can be downloaded via API or scripts for backup and migration, which supports automation and disaster recovery. If a primary node fails, a heartbeat mechanism lets a secondary node take over the Virtual IP automatically and resume processing queued messages.

Correct health checks ensure nodes are only used when fully healthy.
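The simplest form of health check is a TCP connection attempt against a node's listener port. The sketch below uses only the Python standard library; the function name is illustrative. A successful connect shows only that the port accepts connections, so it is a weaker signal than a check that confirms channels are deployed and processing.

```python
import socket


def tcp_port_open(host: str, port: int, timeout: float = 2.0) -> bool:
    """Return True if a TCP connection to host:port succeeds within the timeout."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts, and name-resolution failures.
        return False
```

A monitoring loop could call, for example, `tcp_port_open("mirth-node-1", 8443)` for each node (the host name here is hypothetical) and take a node out of rotation after several consecutive failures rather than after a single one.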

Mirth Connect HA Node Configuration

Each node must have identical configurations except for node IDs.

Critical settings:

- Cluster mode enabled

- Database sessions activated

- Centralized logs

- Consistent channel deployment

- Cache synchronization scripts

If you are achieving high availability without the Advanced Clustering plugin, more manual configuration is required. For example, you need to disable auto-start for polling channels on each node to prevent duplicate processing.

Tools like Ansible should manage configuration consistency across nodes.

Shared Storage Configuration

Shared storage is required for:

- Logs

- Channel exports

- Certificates

- Custom libraries

Secure access to shared storage and credentials is essential for automation and seamless data exchange, ensuring that only authorized systems and users can retrieve or integrate sensitive healthcare data.

Common options:

- NFS Server

- GlusterFS Cluster (replicated)

Use SSD or NVMe storage for improved throughput and reduced latency.

Session and State Management

For proper failover:

- Enable database-backed sessions

- Synchronize caches periodically

- Use idempotent channel logic to avoid duplicate processing

- Store critical queues in the database

This ensures users remain authenticated and messages are not reprocessed after a failover.
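Idempotent processing usually comes down to recognizing a message that has already been handled. The sketch below keys on the HL7 v2 message control ID (MSH-10) and assumes the standard `|` field separator. The in-memory set is a stand-in: in a real cluster the seen IDs would have to live in the shared database so that every node sees them. All names are illustrative.

```python
def message_control_id(hl7_message: str) -> str:
    """Extract MSH-10 from an HL7 v2 message that uses '|' as field separator."""
    first_segment = hl7_message.splitlines()[0]
    fields = first_segment.split("|")
    # MSH-1 is the separator itself, so list index n holds field MSH-(n+1).
    if fields[0] != "MSH" or len(fields) < 10:
        raise ValueError("message does not start with a complete MSH segment")
    return fields[9]


class DuplicateFilter:
    """Remembers processed IDs (in memory here; a shared table in a real cluster)."""

    def __init__(self) -> None:
        self._seen = set()

    def should_process(self, message_id: str) -> bool:
        """Return True the first time an ID is seen, False on every repeat."""
        if message_id in self._seen:
            return False
        self._seen.add(message_id)
        return True


sample = r"MSH|^~\&|SENDAPP|SENDFAC|RECVAPP|RECVFAC|202401011200||ADT^A01|MSG00001|P|2.5"
dedupe = DuplicateFilter()
control_id = message_control_id(sample)
print(control_id, dedupe.should_process(control_id), dedupe.should_process(control_id))
```

If a sender retries after a failover, the second delivery carries the same control ID and is skipped instead of being written downstream twice.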

NextGen Healthcare Integration

NextGen Healthcare Integration, powered by NextGen Connect, gives healthcare organizations an architecture built for high availability, scalability, and interoperability.

The platform processes hundreds of millions of clinical documents annually, supporting mission-critical workflows that demand zero downtime and reliable message processing. Its high availability features are backed by support from the NextGen Healthcare team and an active open-source community, which contribute ongoing improvements, security verification, and responses to changing industry needs.

Recent licensing changes have altered what is available in the free version, but community-driven development and enterprise-grade support keep NextGen Connect a common choice for secure, scalable healthcare integration.

This collaboration helps organizations meet growing demands for secure data exchange, regulatory compliance, and resilient system performance.

Configuration Best Practices

- Use connection pooling

- Set correct timeouts (HTTP + DB)

- Use retry & backoff strategies

- Implement circuit breakers

- Isolate instance-specific directories

These prevent cascading failures and ensure stable HA behavior.
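A retry with exponential backoff, one of the practices listed above, can be sketched as follows. The parameter names and default values are illustrative assumptions; the `sleep` argument exists so the delay can be replaced in tests.

```python
import time
from typing import Callable, TypeVar

T = TypeVar("T")


def retry_with_backoff(
    operation: Callable[[], T],
    attempts: int = 5,
    base_delay: float = 0.5,
    max_delay: float = 30.0,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Call operation until it succeeds, doubling the wait after each failure."""
    if attempts < 1:
        raise ValueError("attempts must be at least 1")
    for attempt in range(attempts):
        try:
            return operation()
        except Exception:
            if attempt == attempts - 1:
                raise  # out of attempts: surface the last error to the caller
            sleep(min(max_delay, base_delay * 2 ** attempt))
    raise AssertionError("unreachable")
```

Capping the delay with `max_delay` keeps a long outage from producing very long waits, and re-raising the final error leaves room for a circuit breaker or alert further up the call chain.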

Common Pitfalls and How to Avoid Them

- Split brain: Fix with proper quorum (Patroni/Galera)

- DB connection exhaustion: Enforce pooling & connection limits

- File locking issues: Separate instance-specific directories

- Version mismatch: Use automation (Ansible, CI/CD)

- Configuration drift: Validate config checksums
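Checksum validation for configuration drift can be as simple as hashing each node's configuration and flagging any node that differs from the majority. The sketch below assumes node-specific settings, such as node IDs, are kept out of the compared text; the function and node names are illustrative.

```python
import hashlib
from collections import Counter
from typing import List, Mapping


def config_checksum(config_text: str) -> str:
    """SHA-256 hex digest of a configuration file's contents."""
    return hashlib.sha256(config_text.encode("utf-8")).hexdigest()


def drifted_nodes(configs: Mapping[str, str]) -> List[str]:
    """Return nodes whose config differs from the most common version."""
    digests = {node: config_checksum(text) for node, text in configs.items()}
    if not digests:
        return []
    baseline, _count = Counter(digests.values()).most_common(1)[0]
    return sorted(node for node, digest in digests.items() if digest != baseline)


# Hypothetical node names and settings, for illustration only.
print(drifted_nodes({
    "mirth-1": "database.max-connections = 40\n",
    "mirth-2": "database.max-connections = 40\n",
    "mirth-3": "database.max-connections = 20\n",
}))
```

Running a check like this from the same automation that deploys configuration makes drift visible before it causes inconsistent behavior between nodes.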

Testing and Validation

Perform structured testing:

- Failover test (shutdown one node)

- Database failover simulation

- Network partition testing

- Load testing (JMeter)

- End-to-end message testing

Automated test suites should run after each deployment.
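A failover test is easier to judge when the outage is measured rather than estimated. The sketch below takes a series of timestamped probe results, for example from polling the cluster once per second during the test, and returns the longest continuous outage, which can then be compared with the RTO. The data format and names are assumptions made for illustration.

```python
from typing import Optional, Sequence, Tuple


def longest_outage_seconds(samples: Sequence[Tuple[float, bool]]) -> float:
    """Longest continuous outage in (timestamp_seconds, is_up) samples sorted by time."""
    longest = 0.0
    outage_start: Optional[float] = None
    for timestamp, is_up in samples:
        if not is_up and outage_start is None:
            outage_start = timestamp  # first failed probe of a new outage
        elif is_up and outage_start is not None:
            longest = max(longest, timestamp - outage_start)
            outage_start = None
    if outage_start is not None:
        # Still down at the last sample: count the outage up to that point.
        longest = max(longest, samples[-1][0] - outage_start)
    return longest


probes = [(0, True), (5, False), (10, False), (15, True), (20, True)]
rto_seconds = 5 * 60  # the 5-minute lower bound of the RTO range given earlier
outage = longest_outage_seconds(probes)
print(f"longest outage: {outage:.0f}s, within RTO: {outage <= rto_seconds}")
```

The measured outage is bounded by the probe interval, so a shorter interval gives a tighter estimate of the real failover time.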

Monitoring and Maintenance

Monitor:

- Mirth node health

- Database replication lag

- Error rates

- Message throughput

- Load balancer status

Use tools like Prometheus, Alertmanager, and ELK for visibility.

Maintenance tasks:

- Daily health checks

- Weekly DB maintenance

- Monthly failover drills

- Regular certificate rotation

Conclusion

Mirth Connect HA requires much more than running multiple servers. Proper planning, database clustering, load balancing, shared storage, synchronized configuration, and continuous testing all contribute to a reliable HA environment.

With the right architecture, healthcare organizations can achieve:

- Zero-downtime failover

- Consistent message processing

- Audit-ready compliance

- Resilience against hardware and network faults

A well-architected Mirth HA setup ensures safe, uninterrupted patient data flow across the entire healthcare ecosystem. Mirth Connect is widely adopted, with thousands of organizations using it, and is supported by active forums and a dedicated website for resources and support.

Mirth Connect offers a cost-effective alternative to more costly proprietary solutions, making it accessible for organizations seeking robust interoperability without excessive expenses.

Sample Configuration

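A minimal Mirth Connect clustering configuration might look like the following. The `database.*` keys are standard `mirth.properties` settings; the `cluster.*` key names depend on the clustering extension in use and should be checked against its documentation: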

database.url = jdbc:postgresql://db-primary:5432/mirthdb

database.max-connections = 40

server.mirthconnect.cluster.enabled = true

server.mirthconnect.cluster.name = mirth-ha-cluster