Your FHIR server was down for 47 minutes. The team heroically restored service. Everyone is exhausted. The natural instinct is to move on. Don't.

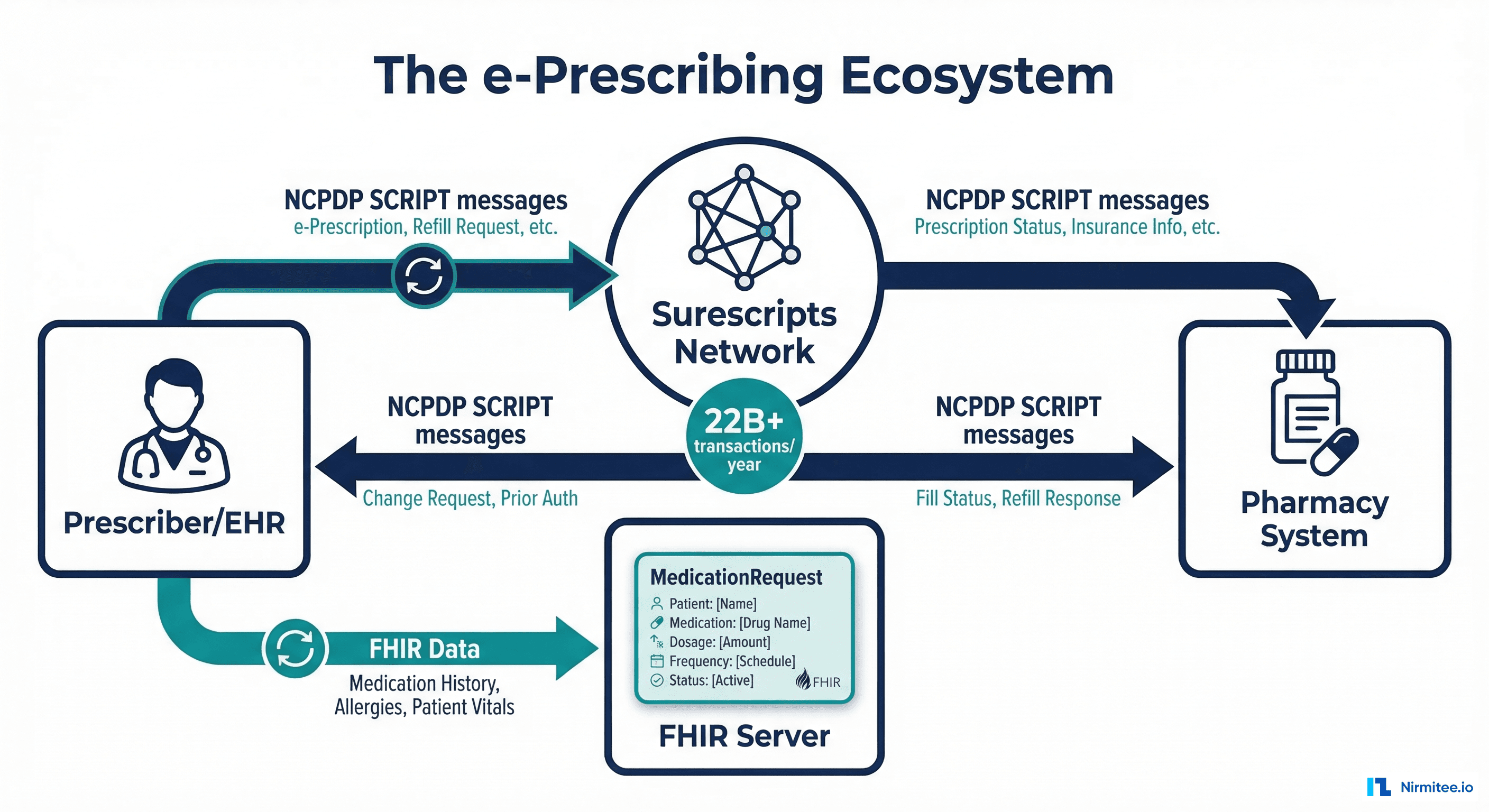

The post-incident review (PIR) is where incidents become investments. Every outage teaches you something about your system that no amount of design review, code review, or load testing would have revealed. But standard tech-company postmortem templates miss what healthcare needs most: clinical impact assessment. Did patient care degrade? Were clinical workflows disrupted? Was PHI exposed? Were clinical decisions made with incomplete data?

This guide provides a complete PIR template designed for healthcare systems, including the clinical impact scoring matrix, HIPAA breach assessment checklist, and a sample completed PIR that shows what excellent looks like.

Why Standard Postmortems Fall Short for Healthcare

Google's postmortem template (from the SRE book) is excellent for SaaS companies. It covers timeline, root cause, action items, and lessons learned. But it assumes the blast radius is measured in user impact and revenue. Healthcare needs three additional dimensions:

- Clinical impact: Which clinical workflows were disrupted? How many patients were affected? Were workarounds effective? Did any clinician make a decision without information they would normally have had?

- PHI exposure: Was protected health information accessed, disclosed, or made unavailable? If so, HIPAA breach notification requirements may apply with strict timelines.

- Regulatory assessment: Does this incident trigger any reporting requirements? State health department notification? Joint Commission reporting? CMS reporting for meaningful use?

Adding these dimensions transforms the postmortem from an engineering exercise into a patient safety tool.

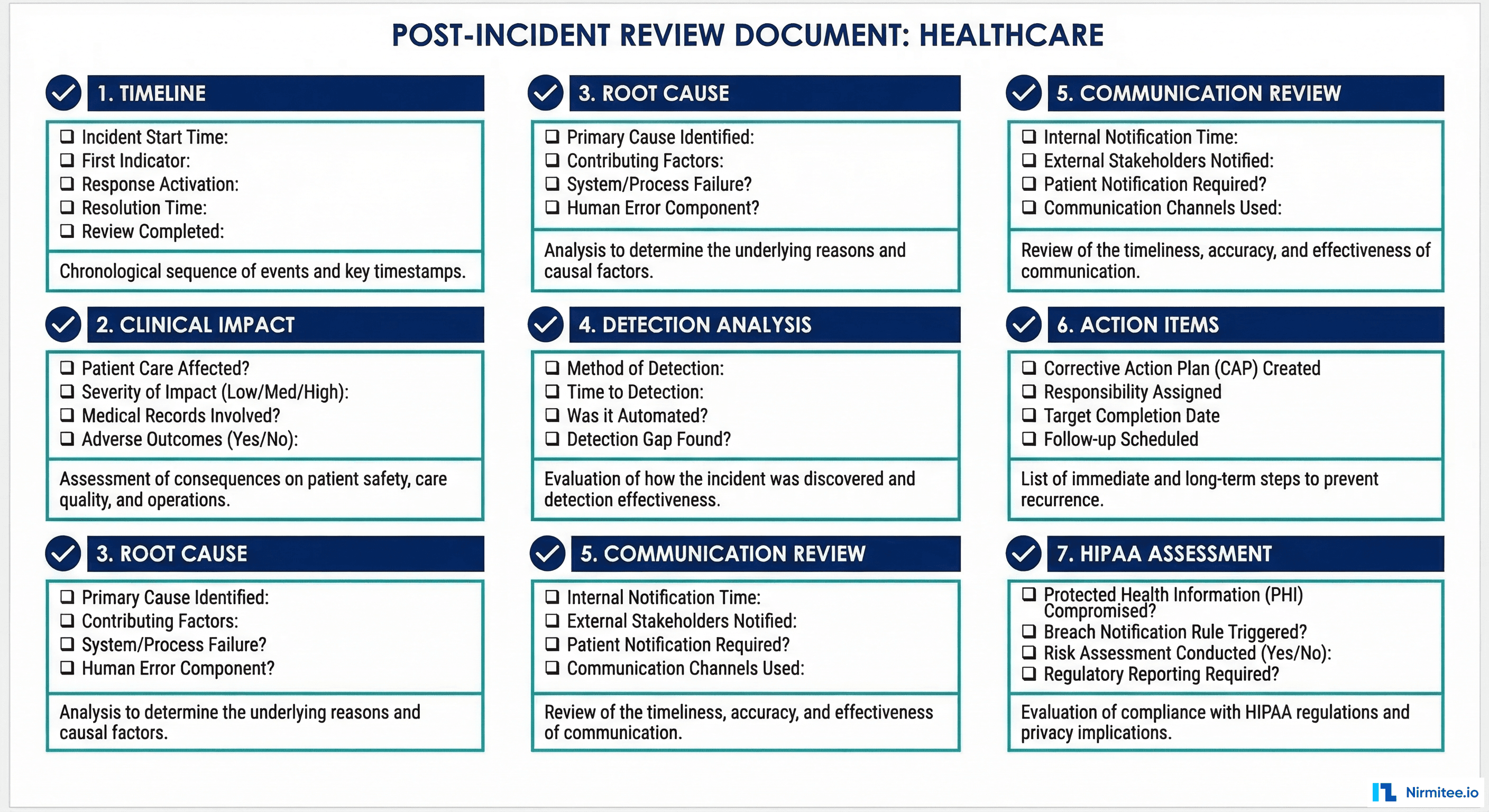

The Seven-Section Healthcare PIR Template

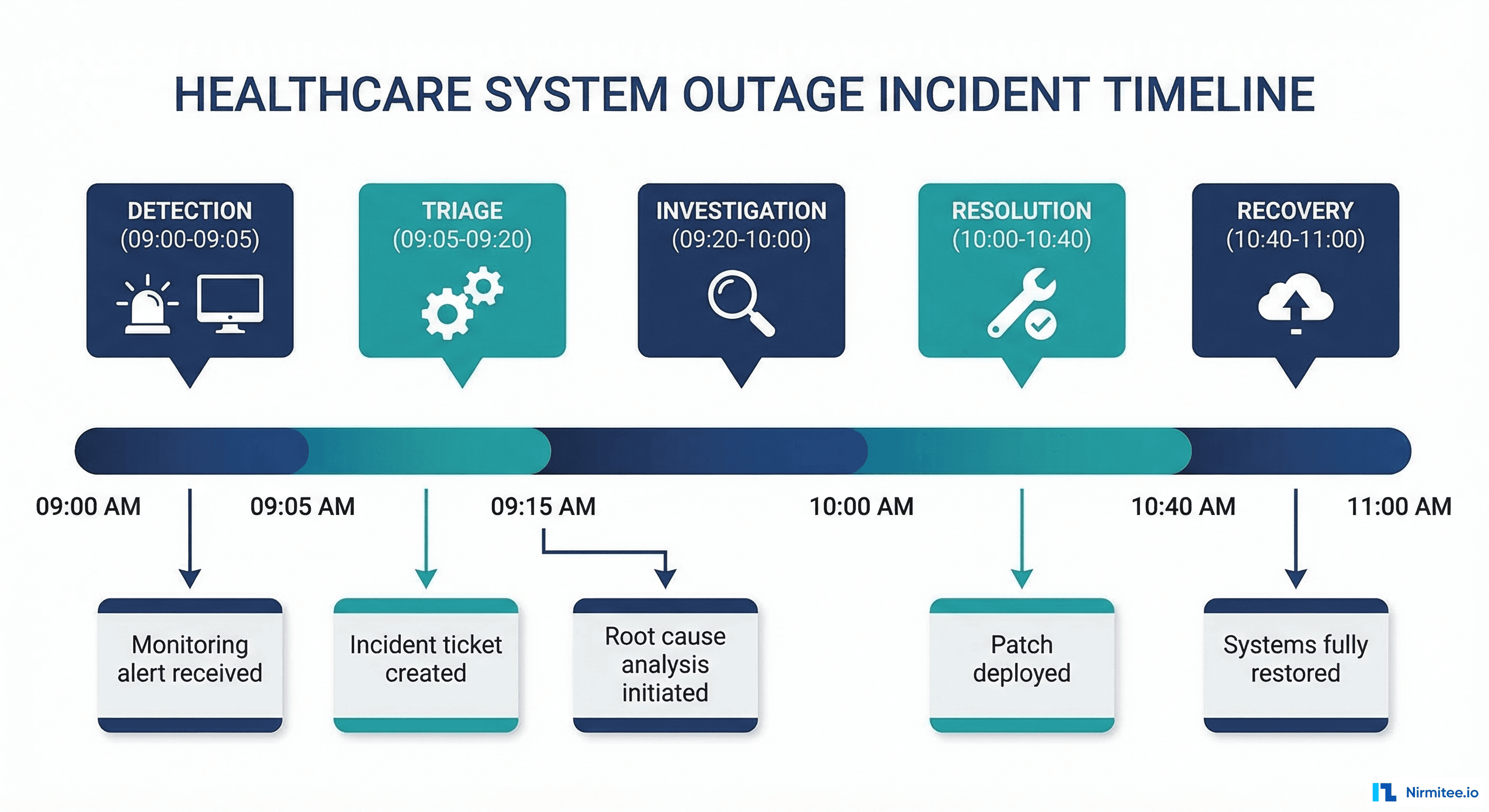

Section 1: Incident Timeline

The timeline is the backbone of the PIR. Every event gets a timestamp, an actor, and a category (detection, response, investigation, communication, resolution, or recovery).

# Incident Timeline Template

# Copy this template for every PIR

## Incident: [INC-2026-0342] — FHIR Server Outage

## Date: 2026-03-14

## Duration: 47 minutes (02:13 - 03:00 ET)

## Severity: P1

## Incident Commander: [Name]

### Timeline

| Time (ET) | Event | Actor | Category |
|-----------|-------|-------|----------|
| 02:13 | Prometheus alert: FHIR server error rate > 5% | Automated | Detection |
| 02:14 | PagerDuty pages primary on-call (Engineer A) | Automated | Detection |
| 02:16 | Engineer A acknowledges page | Engineer A | Response |
| 02:18 | Engineer A checks FHIR server pods: 2 of 3 in CrashLoopBackOff | Engineer A | Investigation |
| 02:20 | Engineer A checks logs: OutOfMemoryError in FHIR server | Engineer A | Investigation |
| 02:22 | Slack #incident-active channel created, P1 notification sent | Engineer A | Communication |
| 02:25 | Clinical IT Lead notified, confirms ED staff reporting slow chart access | Clinical IT | Communication |
| 02:28 | Engineer A identifies cause: bulk export query consuming all heap | Engineer A | Investigation |
| 02:30 | Engineer A kills bulk export process, restarts FHIR pods | Engineer A | Resolution |
| 02:35 | 2 of 3 pods healthy, error rate dropping | Automated | Recovery |
| 02:40 | All 3 pods healthy, error rate < 0.1% | Automated | Recovery |
| 02:45 | Clinical IT Lead confirms ED staff can access charts normally | Clinical IT | Recovery |
| 03:00 | Incident declared resolved, monitoring period begins | Engineer A | Resolution |
| 04:00 | 1-hour monitoring period complete, no recurrence | Engineer A | Recovery |

Section 2: Clinical Impact Assessment

This is the section that separates a healthcare PIR from a standard postmortem. It answers the question that matters most to clinical leadership: "What happened to patient care?"

# Clinical Impact Assessment Template

## Clinical Impact Summary

- **Clinical workflows affected:** EHR chart access, medication reconciliation, lab result viewing

- **Departments impacted:** Emergency Department, ICU, Medical-Surgical floors

- **Patient count impacted:** ~45 patients with active encounters during outage window

- **Duration of clinical impact:** 47 minutes

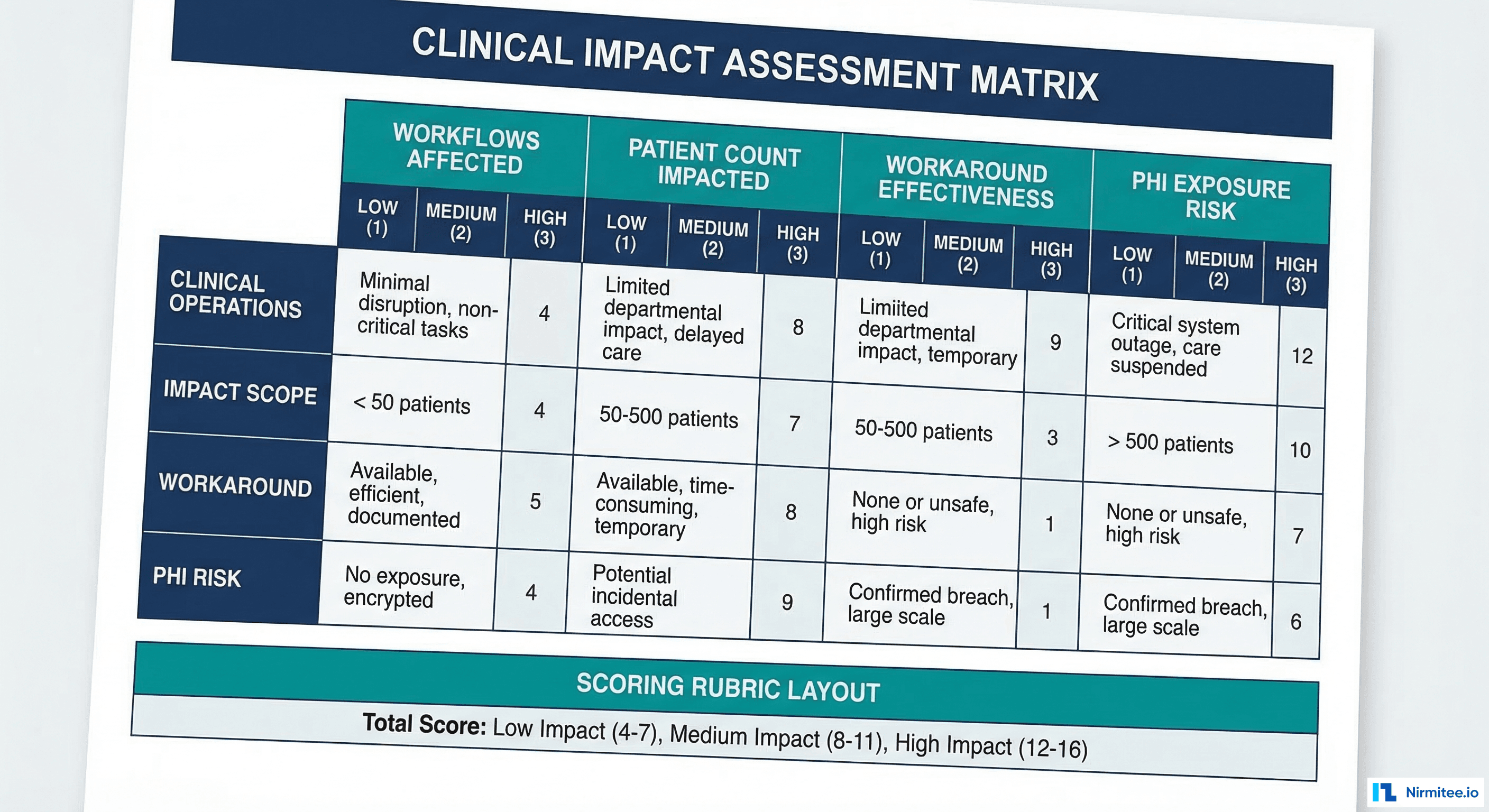

## Clinical Impact Scoring

| Dimension | Score (1-5) | Details |
|-----------|-------------|----------|
| Workflow disruption severity | 4 | Clinicians unable to access patient charts for 47 min |
| Patient volume affected | 3 | ~45 active encounters; ED had 12 patients |
| Workaround effectiveness | 3 | Paper-based workaround available but slow |
| Decision-making impact | 2 | No known clinical decisions made without data |
| Duration | 3 | 47 minutes: significant but under 1 hour |
| **Overall Clinical Impact Score** | **15/25** | **Moderate (top of the 11-15 band)** |

## Scoring Guide

- 5-10: Low clinical impact — minor inconvenience, no patient care degradation

- 11-15: Moderate clinical impact — workflow disruption with effective workarounds

- 16-20: High clinical impact — significant care degradation, workarounds insufficient

- 21-25: Critical clinical impact — direct patient safety risk, care decisions affected
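
The arithmetic behind the overall score is simple enough to automate, which helps keep the label consistent with the guide. The following is a minimal sketch, assuming the five dimensions are summed with equal weight; the function name, dictionary keys, and band labels as code constants are illustrative and not part of the template.

```python
# Score bands copied from the scoring guide above.
BANDS = [
    (5, 10, "Low"),
    (11, 15, "Moderate"),
    (16, 20, "High"),
    (21, 25, "Critical"),
]

# Illustrative keys for the five dimensions in the scoring table.
DIMENSIONS = (
    "workflow_disruption",
    "patient_volume",
    "workaround_effectiveness",
    "decision_making_impact",
    "duration",
)


def overall_clinical_impact(scores):
    """Sum the five 1-5 dimension scores and map the total to a band."""
    missing = set(DIMENSIONS) - set(scores)
    if missing:
        raise ValueError(f"missing dimensions: {sorted(missing)}")
    for name in DIMENSIONS:
        if not 1 <= scores[name] <= 5:
            raise ValueError(f"{name} must be between 1 and 5")
    total = sum(scores[name] for name in DIMENSIONS)
    for low, high, label in BANDS:
        if low <= total <= high:
            return total, label
    raise AssertionError("unreachable: total is always between 5 and 25")


# Scores from the sample incident.
sample = {
    "workflow_disruption": 4,
    "patient_volume": 3,
    "workaround_effectiveness": 3,
    "decision_making_impact": 2,
    "duration": 3,
}
print(overall_clinical_impact(sample))  # (15, 'Moderate')
```

Under the guide's bands, the sample total of 15 sits at the top of the 11-15 moderate range. A team that wants a "moderate-high" label would need to add that band to the guide explicitly.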

## Clinical Questions

1. Were any clinical decisions made with incomplete information? **No** — clinicians deferred non-urgent decisions until system restored

2. Were any medication administrations delayed? **Possible** — 2 medication reconciliations in ED were delayed ~30 min

3. Were any test results unavailable when needed? **Yes** — 3 lab results posted during outage were not visible until restoration

4. Were clinical alerts (sepsis, deterioration) delayed? **No** — bedside monitors continued functioning independently

5. Was any patient discharge delayed? **No.** The outage occurred during a low-discharge window (2-3 AM).

Section 3: Technical Root Cause (5 Whys)

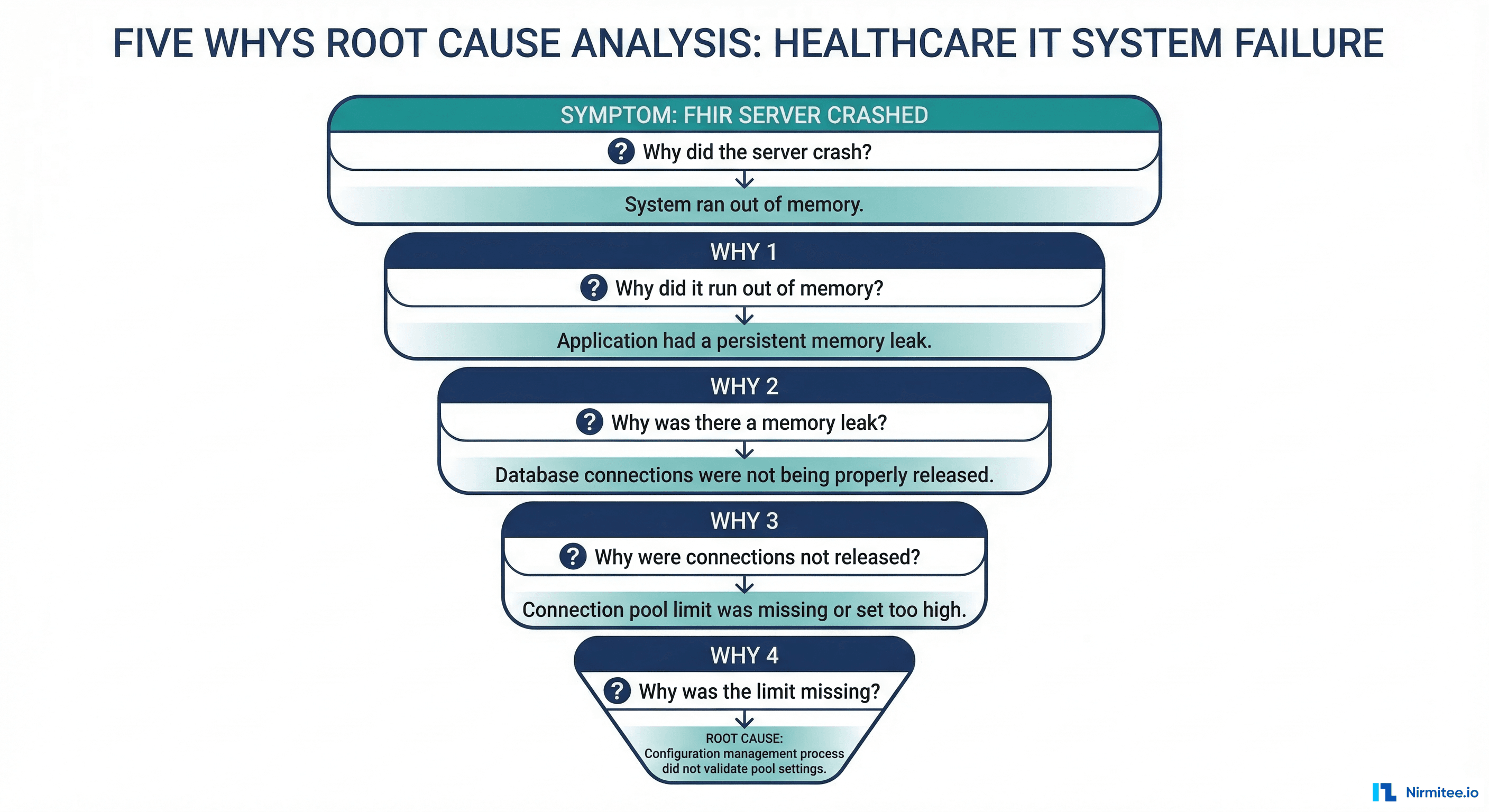

The 5 Whys technique drills past symptoms to reach the systemic root cause. For healthcare incidents, extend the analysis to ask why the clinical impact was as severe as it was.

# 5 Whys Analysis

## Technical Root Cause

**Why 1:** Why did the FHIR server pods crash?

→ OutOfMemoryError: Java heap space exhausted.

**Why 2:** Why was the heap exhausted?

→ A bulk FHIR export request ($export operation) loaded the entire result set into memory before streaming.

**Why 3:** Why was the export loading everything into memory?

→ The export implementation used a naive approach (collect all, then stream) rather than cursor-based streaming.

**Why 4:** Why wasn't this caught in testing?

→ Load tests used small datasets (1,000 patients). Production has 85,000 patients. The export only fails at scale.

**Why 5:** Why weren't there memory limits preventing one request from crashing the server?

→ No per-request memory budget was configured. The FHIR server's JVM heap is shared across all concurrent requests without isolation.

**Root Cause:** Missing per-request resource limits on the FHIR server's bulk export operation, combined with insufficient load testing at production scale.
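
To make Why 3 concrete, here is a minimal sketch contrasting the "collect all, then stream" pattern with a cursor-based generator. It does not reflect the actual FHIR server's code: `PATIENTS`, `fetch_page`, and both export functions are hypothetical stand-ins, and the output is written as newline-delimited JSON on the assumption that the export produces NDJSON.

```python
import json
from typing import Iterator, List, Optional, Tuple

# Hypothetical stand-in for the patient store; a real server would query its database.
PATIENTS = [{"resourceType": "Patient", "id": str(i)} for i in range(10)]


def fetch_page(cursor: int, page_size: int) -> Tuple[List[dict], Optional[int]]:
    """Return one page of resources plus the next cursor (None when exhausted)."""
    page = PATIENTS[cursor:cursor + page_size]
    end = cursor + page_size
    return page, (end if end < len(PATIENTS) else None)


def export_collect_all(page_size: int = 4) -> str:
    """Naive approach: materialize the whole result set, then serialize it."""
    everything: List[dict] = []
    cursor: Optional[int] = 0
    while cursor is not None:
        page, cursor = fetch_page(cursor, page_size)
        everything.extend(page)  # memory grows with the full dataset
    return "\n".join(json.dumps(r) for r in everything)


def export_streaming(page_size: int = 4) -> Iterator[str]:
    """Cursor-based approach: yield one NDJSON line at a time, page by page."""
    cursor: Optional[int] = 0
    while cursor is not None:
        page, cursor = fetch_page(cursor, page_size)
        for resource in page:
            yield json.dumps(resource)  # only the current page is held in memory


# Both approaches produce the same output; only peak memory differs.
lines = list(export_streaming())
assert "\n".join(lines) == export_collect_all()
print(len(lines))  # 10
```

With 1,000 test patients the two versions behave alike, which is why the difference only showed up at production scale: peak memory for the first grows with the dataset, while the second is bounded by the page size.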

## Clinical Impact Root Cause

**Why 1:** Why did a FHIR server OOM cause clinical workflow disruption?

→ The EHR's clinical apps depend on the FHIR server for chart data retrieval.

**Why 2:** Why did all clinical apps fail when 2 of 3 pods crashed?

→ The remaining pod was overwhelmed by traffic that normally distributes across 3 pods.

**Why 3:** Why didn't autoscaling kick in?

→ HPA was configured with a 5-minute stabilization window. The remaining pod crashed before new pods could scale up.

**Root Cause for clinical impact:** Insufficient autoscaling aggressiveness for critical clinical-facing services.

Section 4: Detection and Response Analysis

This section evaluates how quickly the incident was detected and whether the detection was fast enough to minimize clinical impact.

# Detection & Response Analysis

## Detection

- **How was the incident detected?** Prometheus alert on FHIR error rate > 5%

- **Time from start to detection:** ~2 minutes (first OOM at ~02:11, alert at 02:13)

- **Was detection automated?** Yes

- **Was this the first signal?** Yes — no user reports before the alert

- **Detection assessment:** GOOD — automated detection caught this before clinical staff noticed

## Response

- **Time from detection to acknowledgment (MTTA):** 3 minutes

- **Time from acknowledgment to resolution (repair time):** 14 minutes

- **Total MTTR:** 17 minutes from alert to fix (47 minutes from alert to declared resolution)

- **Were runbooks available?** Partial — "FHIR Server 5xx" runbook existed but didn't cover OOM specifically

- **Was the right person paged?** Yes — platform engineer with FHIR server knowledge
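
The response figures can be derived directly from the Section 1 timeline rather than estimated from memory. A minimal sketch, assuming all events fall on the same night and that every interval is measured from the 02:13 alert; the event keys and helper name are illustrative.

```python
from datetime import datetime

FMT = "%H:%M"

# Timestamps taken from the sample timeline (ET, same night).
events = {
    "alert": "02:13",
    "acknowledged": "02:16",
    "mitigated": "02:30",  # bulk export killed, pods restarted
    "resolved": "03:00",
}


def minutes_between(start: str, end: str) -> int:
    """Whole minutes between two same-day HH:MM timestamps."""
    delta = datetime.strptime(end, FMT) - datetime.strptime(start, FMT)
    return int(delta.total_seconds() // 60)


mtta = minutes_between(events["alert"], events["acknowledged"])
repair = minutes_between(events["acknowledged"], events["mitigated"])
alert_to_fix = minutes_between(events["alert"], events["mitigated"])
alert_to_resolved = minutes_between(events["alert"], events["resolved"])
print(mtta, repair, alert_to_fix, alert_to_resolved)  # 3 14 17 47
```

Computing the intervals from recorded timestamps also makes the starting point of each metric explicit, so "17 minutes" and "47 minutes" cannot silently refer to different clocks.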

## Response Assessment

- **What went well:** Fast detection, fast acknowledgment, correct diagnosis

- **What could improve:** Runbook should cover OOM scenario; autoscaling should be more aggressive

- **Was communication timely?** Yes. Clinical IT was notified within 10 minutes of detection.

Section 5: Communication Review

Evaluate whether the right people were notified through the right channels at the right time.

# Communication Review

| Stakeholder | Notified? | Time | Channel | Assessment |
|-------------|-----------|------|---------|------------|
| Primary on-call | Yes | 02:14 | PagerDuty | GOOD: 1 min after detection |
| Clinical IT Lead | Yes | 02:22 | Phone | GOOD: 9 min after detection |
| Nursing (ED) | Yes | 02:30 | Clinical IT relay | OK: 17 min (could be faster) |
| Help Desk | Yes | 02:25 | Slack | GOOD: script updated |
| CIO | No | n/a | n/a | Not required (resolved in < 1 hour) |
| Engineering Manager | No | n/a | n/a | Not escalated (primary resolved) |

## Communication Gaps

1. ED nursing was notified 17 minutes after detection. For a P1 affecting chart access, nursing should be notified within 10 minutes.

2. No automated clinical notification mechanism — relied on Clinical IT Lead making manual phone calls.
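
The first gap can be checked mechanically once notification times are recorded. A minimal sketch, assuming detection at 02:13 and using only the one target stated in the review (nursing within 10 minutes); targets for other stakeholders are not given, so none are invented here. The function and variable names are illustrative.

```python
from datetime import datetime

DETECTED = "02:13"

# (stakeholder, notified-at) pairs from the communication review table.
notifications = [
    ("Primary on-call", "02:14"),
    ("Clinical IT Lead", "02:22"),
    ("Help Desk", "02:25"),
    ("Nursing (ED)", "02:30"),
]

# Only the nursing target is stated in the review; others are left undefined.
TARGET_MINUTES = {"Nursing (ED)": 10}


def delay_minutes(notified_at: str, detected_at: str = DETECTED) -> int:
    """Minutes between detection and notification (same-day HH:MM values)."""
    fmt = "%H:%M"
    delta = datetime.strptime(notified_at, fmt) - datetime.strptime(detected_at, fmt)
    return int(delta.total_seconds() // 60)


def late_notifications(rows):
    """Return (stakeholder, delay, target) for every notification past its target."""
    late = []
    for who, at in rows:
        target = TARGET_MINUTES.get(who)
        delay = delay_minutes(at)
        if target is not None and delay > target:
            late.append((who, delay, target))
    return late


print(late_notifications(notifications))  # [('Nursing (ED)', 17, 10)]
```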

## Improvement

- Add automated Slack notification to #clinical-it-alerts for P1 incidents

- Create clinical notification automation that pages nursing informatics for P1 incidents affecting chart access

Section 6: Action Items

Action items without owners and deadlines are wishes, not commitments. Every action item must have an owner, a deadline, and a tracking mechanism.
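
The owner, deadline, and tracking rule is easy to enforce with a small check before a PIR is published. The following is a sketch only: the `ActionItem` class, the `problems` helper, and the `"Done"` status value are assumptions for illustration, and item 99 is a made-up counterexample.

```python
from dataclasses import dataclass
from datetime import date
from typing import List, Optional


@dataclass
class ActionItem:
    number: int
    action: str
    owner: Optional[str]
    deadline: Optional[date]
    ticket: Optional[str]
    status: str = "Open"  # "Done" is an assumed closed state


def problems(item: ActionItem, today: date) -> List[str]:
    """List what keeps an action item from being a real commitment."""
    found = []
    if not item.owner:
        found.append("no owner")
    if item.deadline is None:
        found.append("no deadline")
    elif item.status != "Done" and item.deadline < today:
        found.append("overdue")
    if not item.ticket:
        found.append("no tracking ticket")
    return found


items = [
    ActionItem(2, "Add per-request memory limits", "Engineer A", date(2026, 3, 28), "PLAT-1235"),
    ActionItem(99, "Improve monitoring", None, None, None),  # hypothetical bad example
]
for item in items:
    print(item.number, problems(item, today=date(2026, 4, 1)))
# 2 ['overdue']
# 99 ['no owner', 'no deadline', 'no tracking ticket']
```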

# Action Items

## Prevention (prevent this incident from recurring)

| # | Action | Owner | Deadline | Status | Ticket |
|---|--------|-------|----------|--------|--------|
| 1 | Implement streaming export (cursor-based) for FHIR $export | Engineer B | 2026-04-01 | Open | PLAT-1234 |
| 2 | Add per-request memory limits to FHIR server (256MB max per request) | Engineer A | 2026-03-28 | Open | PLAT-1235 |
| 3 | Add production-scale load tests for bulk operations (85K patients) | Engineer C | 2026-04-15 | Open | PLAT-1236 |

## Detection (detect faster next time)

| # | Action | Owner | Deadline | Status | Ticket |
|---|--------|-------|----------|--------|--------|
| 4 | Add JVM heap usage alert (> 80% for 2 minutes) | Engineer A | 2026-03-21 | Open | PLAT-1237 |
| 5 | Add FHIR bulk export duration alert (> 5 min) | Engineer A | 2026-03-21 | Open | PLAT-1238 |

## Response (respond better next time)

| # | Action | Owner | Deadline | Status | Ticket |
|---|--------|-------|----------|--------|--------|
| 6 | Update FHIR runbook with OOM-specific steps | Engineer A | 2026-03-21 | Open | PLAT-1239 |
| 7 | Reduce HPA stabilization window to 60s for FHIR deployment | Engineer B | 2026-03-21 | Open | PLAT-1240 |
| 8 | Create automated clinical notification for P1 chart-access incidents | SRE Lead | 2026-04-01 | Open | PLAT-1241 |

## Process

| # | Action | Owner | Deadline | Status | Ticket |
|---|--------|-------|----------|--------|--------|
| 9 | Add production-scale dataset to staging environment | DevOps | 2026-04-15 | Open | PLAT-1242 |

Section 7: HIPAA Breach Assessment

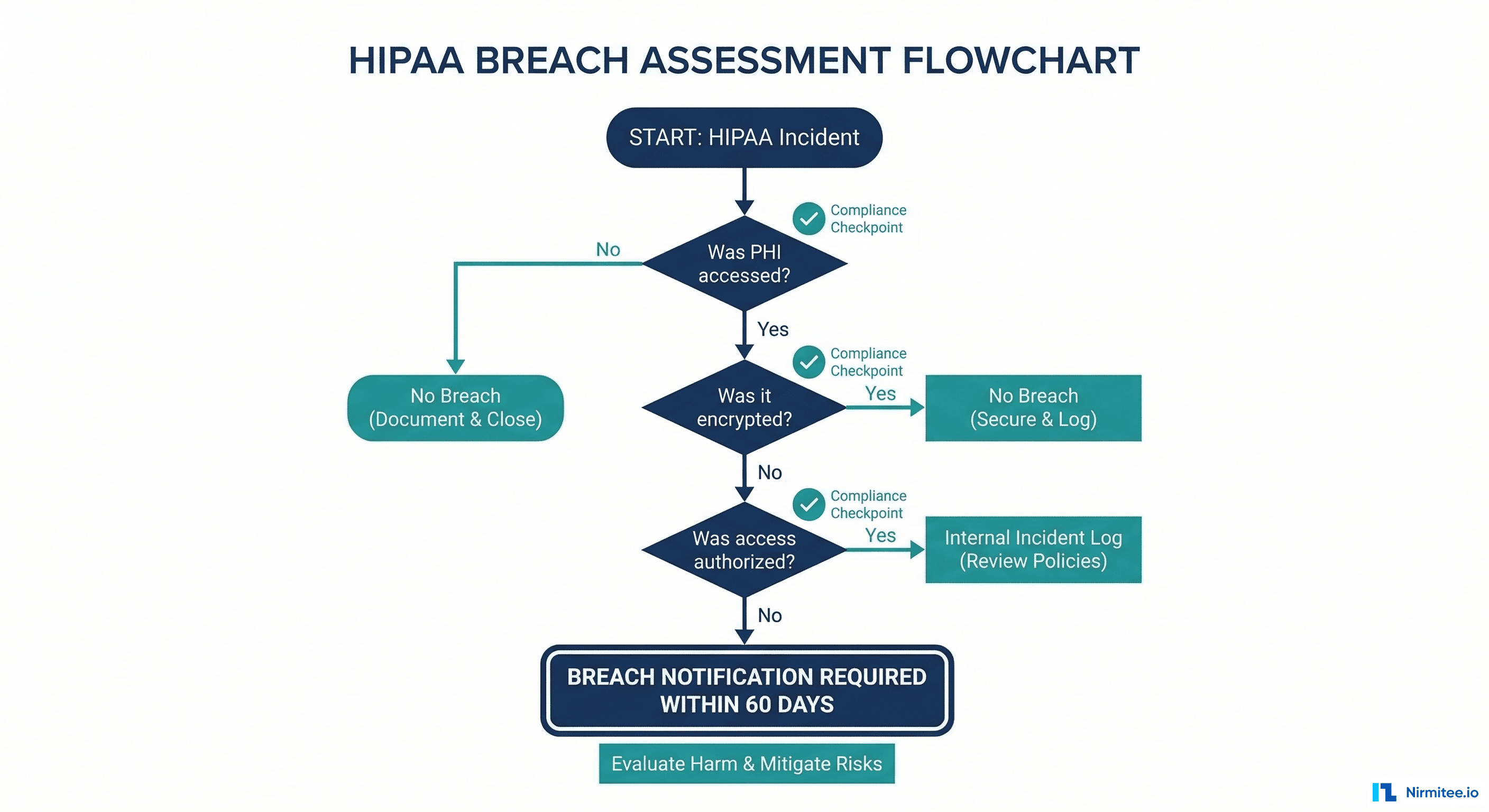

Every P1 and P2 incident must include a HIPAA breach assessment. Even if the answer is "no breach," documenting the assessment demonstrates due diligence.

# HIPAA Breach Assessment

## PHI Access Evaluation

1. **Was PHI accessed by unauthorized individuals?** No

- The incident was an availability failure, not an access control failure

- No unauthorized access to the FHIR server or database was detected

2. **Was PHI disclosed to unauthorized parties?** No

- Error logs do not contain PHI (confirmed: FHIR server logs redact patient data)

- No data was transmitted to unintended recipients

3. **Was PHI rendered unavailable?** Yes

- Patient records were inaccessible for 47 minutes

- This constitutes an availability impact under HIPAA

4. **Was the PHI encrypted at rest and in transit?** Yes

- Database encryption at rest: AES-256

- TLS 1.3 for all API traffic

## Breach Determination

- **Is this a reportable breach?** No

- Reason: Availability disruption without unauthorized access or disclosure

- The HIPAA Breach Notification Rule (definition at 45 CFR 164.402) defines a breach as the acquisition, access, use, or disclosure of PHI in a manner not permitted by the Privacy Rule that compromises the security or privacy of the PHI

- Temporary unavailability without unauthorized access does not meet the breach definition

## Documentation

- This assessment was completed by: [Privacy Officer name]

- Date of assessment: 2026-03-15

- Assessment retained in incident management system for 6 years per HIPAA retention requirements

## Note

If the incident had involved unauthorized access, the following timelines would apply:

- Internal assessment: Immediately

- Individual notification: Within 60 days of discovery

- HHS notification: Within 60 days if 500+ individuals affected; annual log if fewer

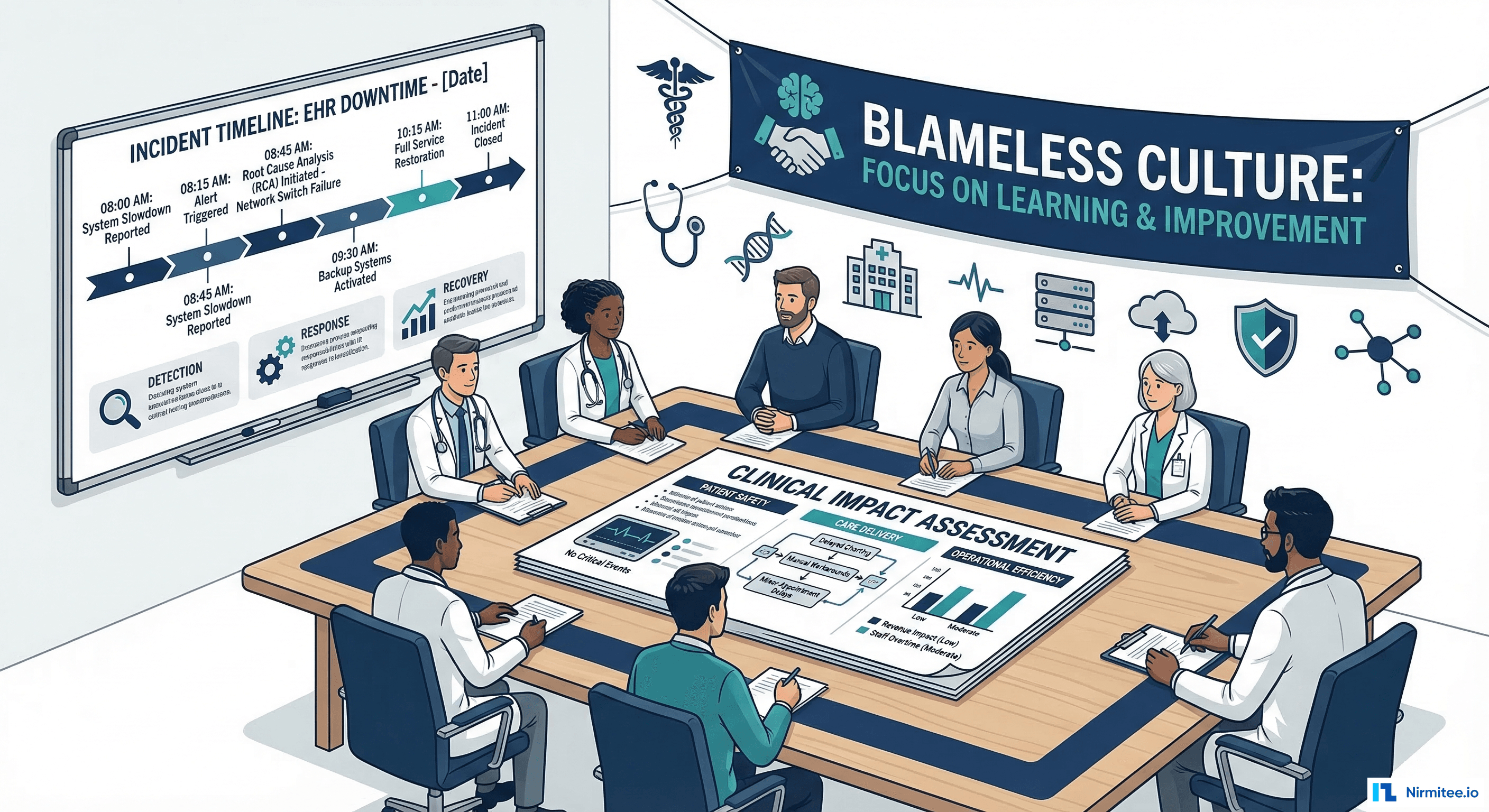

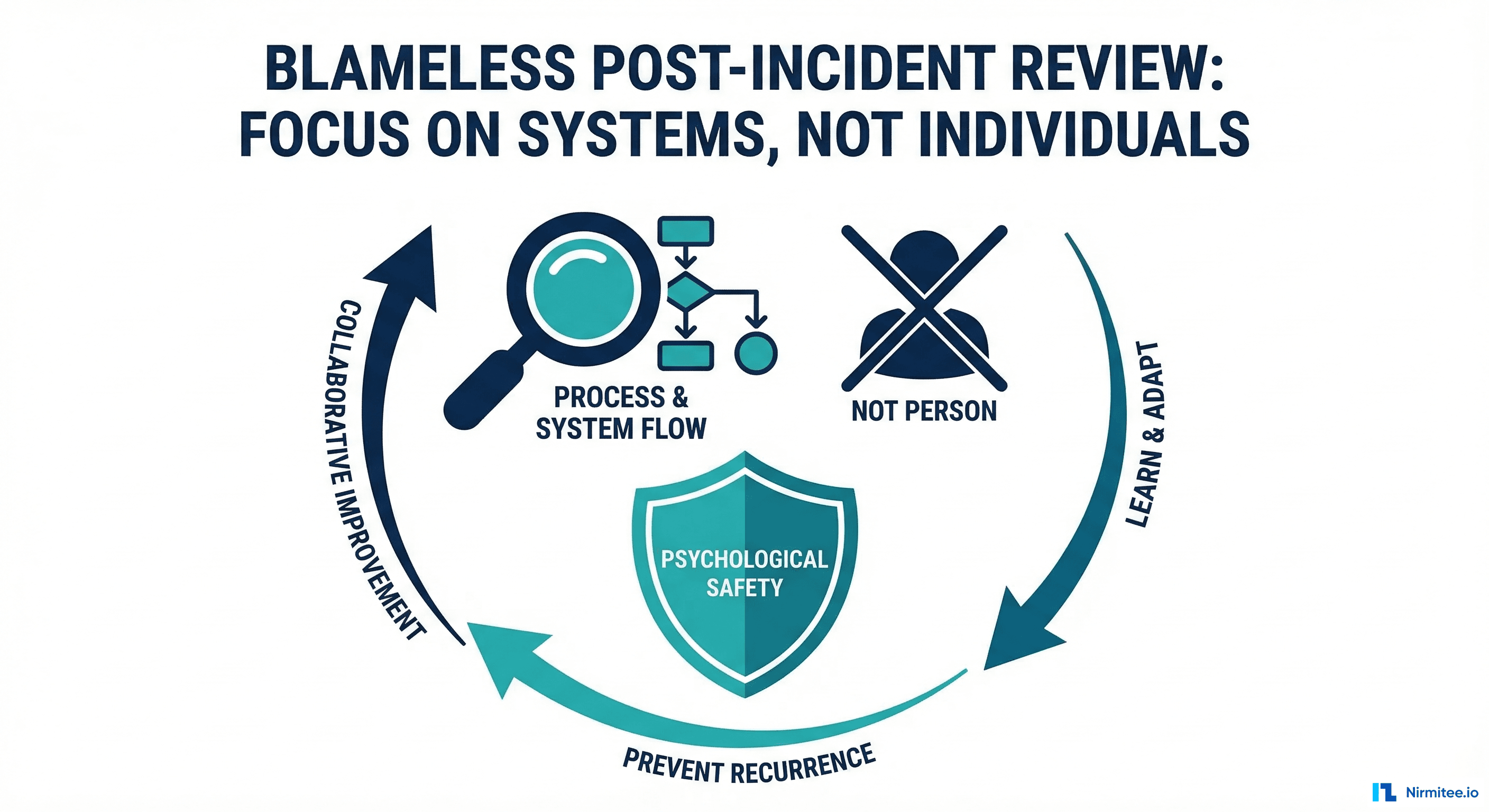

- Media notification: Within 60 days if 500+ individuals in a single state/jurisdictionBlameless Culture: The Foundation

None of this works if people are afraid. If Engineer A fears punishment for the OOM incident, they'll hide information. If clinical staff fear blame for workaround failures, they won't share what happened at the bedside. Blameless culture has specific rules:

- No blame language in the PIR document. Replace "Engineer A caused the outage by deploying bad code" with "The deployment at 14:30 introduced a code path that consumed excessive memory under production load conditions." Systems cause outages, not people.

- Mandatory participation for all involved parties. Everyone who responded joins the PIR meeting. No one is called in for punishment; everyone is called in for learning.

- PIR facilitator is not the incident commander. The facilitator's role is to keep the discussion focused on systems, not individuals. A separate person from the incident provides fresh perspective.

- Action items are systemic. "Retrain Engineer A" is not a valid action item. "Add guardrails that prevent any engineer from deploying bulk export without memory limits" is. The system should prevent the error, not rely on human perfection.

PIR Metrics: Measuring Your Review Practice

Track these metrics to ensure your PIR practice is producing results, not just documents:

| Metric | Target | Why It Matters |
|---|---|---|
| PIR completion rate | 100% of P1/P2 incidents | Every significant incident gets reviewed |
| PIR timeliness | Within 5 business days | Context degrades rapidly; review while memories are fresh |
| Action item completion | > 90% within deadline | Reviews without follow-through are theater |
| Repeat incident rate | < 5% | Same root cause should not cause multiple incidents |
| Clinical impact inclusion | 100% of P1 PIRs | Every major incident includes clinical assessment |
| HIPAA assessment inclusion | 100% of P1/P2 PIRs | Regulatory due diligence on every significant incident |
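
Two of these metrics can be computed from a plain list of incident records. This is a minimal sketch under stated assumptions: the `IncidentRecord` fields are hypothetical, the sample data is invented for illustration, and "repeat incident rate" is taken to mean the share of incidents whose root cause already appeared in an earlier incident, which is one possible definition.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class IncidentRecord:
    severity: str        # "P1", "P2", ...
    pir_completed: bool
    root_cause: str      # label or ticket key identifying the root cause


def pir_completion_rate(incidents: List[IncidentRecord]) -> float:
    """Fraction of P1/P2 incidents that have a completed PIR."""
    significant = [i for i in incidents if i.severity in ("P1", "P2")]
    if not significant:
        return 1.0
    return sum(i.pir_completed for i in significant) / len(significant)


def repeat_incident_rate(incidents: List[IncidentRecord]) -> float:
    """Fraction of incidents whose root cause was seen in an earlier incident."""
    seen, repeats = set(), 0
    for inc in incidents:
        if inc.root_cause in seen:
            repeats += 1
        seen.add(inc.root_cause)
    return repeats / len(incidents) if incidents else 0.0


# Invented sample data, in chronological order.
incidents = [
    IncidentRecord("P1", True, "export-oom"),
    IncidentRecord("P2", True, "cert-expiry"),
    IncidentRecord("P2", False, "export-oom"),
    IncidentRecord("P3", False, "dns-timeout"),
]
print(f"{pir_completion_rate(incidents):.0%} {repeat_incident_rate(incidents):.0%}")  # 67% 25%
```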

Frequently Asked Questions

How soon after the incident should we conduct the PIR?

Within 3-5 business days. Sooner is better because memories are fresher, but not the same day because people need rest after a P1 incident. Schedule the PIR meeting before closing the incident so it doesn't get deprioritized. The maximum acceptable delay is 2 weeks; beyond that, key details are forgotten and the review loses most of its value.

Who should attend the PIR meeting?

Everyone who participated in the incident response: on-call engineer, incident commander, clinical IT liaison, anyone who investigated or communicated. For P1 incidents, invite the clinical stakeholder who was affected (e.g., the ED nursing informatics lead). Their perspective on clinical impact is irreplaceable. Do not invite management unless they participated in the response; their presence can inhibit candid discussion.

How do we handle PIRs for incidents caused by vendor systems (Epic, Oracle)?

Your PIR still has value even when you don't control the root cause. Focus on: How quickly did you detect the vendor issue? How effectively did you escalate with the vendor? How well did you communicate clinical impact to affected departments? What can you do to reduce dependency on this vendor system or improve failover? Track the vendor action item ("Epic to fix bug X") and follow up until resolved. For teams managing the on-call response to these incidents, see our On-Call for Healthcare IT guide.

Should we share PIR documents with clinical leadership?

Yes, with an executive summary. Clinical leadership doesn't need the full 5 Whys technical analysis, but they need: what happened (in clinical terms), the clinical impact assessment, the HIPAA breach determination, and the action items that prevent recurrence. Create a one-page executive summary that accompanies the full technical PIR. This transparency builds trust between IT and clinical teams.

How do we prevent PIR action items from being deprioritized by sprint work?

Allocate a fixed percentage of sprint capacity (15-20%) for reliability work, including PIR action items. If action items consistently miss deadlines, escalate to engineering leadership with data: "We have 12 open PIR action items. 4 are overdue. The risk of recurrence for the incidents behind those items is X." Frame it as risk management, not engineering preference. Many teams use a "reliability budget" that is protected from sprint negotiations, just like the incident management framework protects SLO budgets. For building resilience that prevents recurring incidents, see our Chaos Engineering for Healthcare guide.

Conclusion

Every incident is a gift — an unplanned experiment that reveals how your system actually behaves under failure conditions. The post-incident review is how you unwrap that gift. Without it, you paid the cost of the outage (clinical disruption, team stress, reputational impact) without extracting the value (systemic improvement, better detection, faster response).

The healthcare-specific additions — clinical impact assessment, HIPAA breach evaluation, clinical communication review — transform the standard tech postmortem into a patient safety tool. When you can tell the CMO "Our PIR process assessed clinical impact on 45 patients, confirmed no care decisions were affected, documented the HIPAA evaluation, and produced 9 action items that will prevent recurrence," you've demonstrated the operational maturity that healthcare demands.

Use the templates in this guide as a starting point. Customize them for your organization's regulatory requirements and clinical workflows. The most important thing is to start: conduct your first PIR within a week of your next P1 incident, and iterate from there. For the monitoring that feeds into detection analysis, see our guide on Alert Fatigue in Healthcare IT.