In most industries, a few minutes of downtime means lost revenue or frustrated customers. In healthcare, downtime can mean a clinician cannot access a patient's medication list during an emergency, a STAT lab result sits in a queue while a physician waits, or a prescription never reaches the pharmacy. Site Reliability Engineering (SRE) practices — Service Level Indicators, Service Level Objectives, error budgets, incident management — are not optional for healthcare IT. They are a patient safety discipline.

Yet most healthcare organizations still operate with vague uptime targets ("we aim for 99.9%"), no formal error budgets, and incident processes borrowed from general IT that do not account for clinical impact. This article covers how to build a healthcare-specific SRE practice: defining SLIs that matter clinically, setting SLOs with the right granularity for clinical vs. administrative systems, managing error budgets against patient safety requirements, running incident response with clinical severity levels, and conducting post-incident reviews that assess both technical root cause and clinical impact.

Service Level Indicators for Healthcare Systems

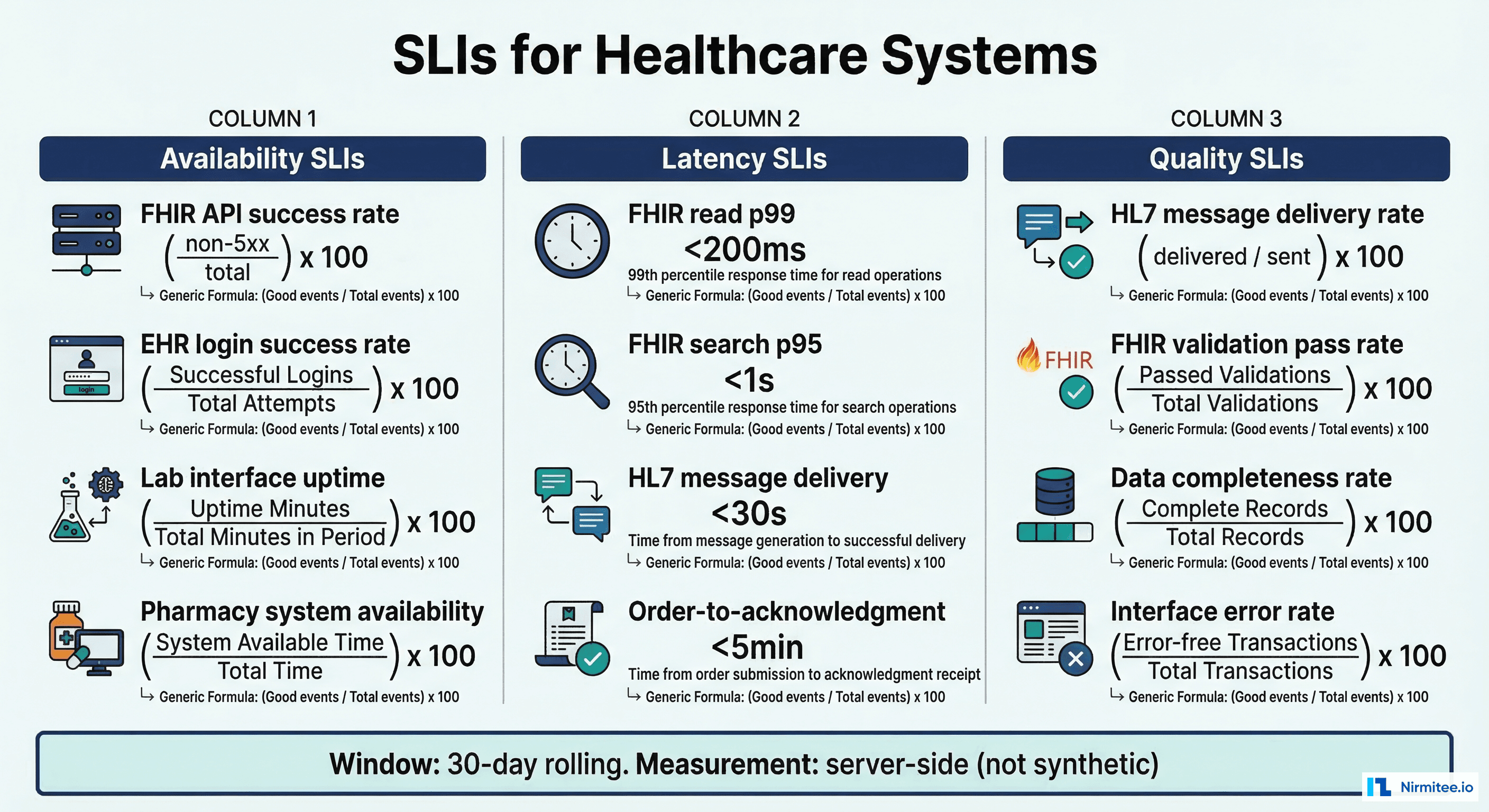

An SLI is a quantitative measure of some aspect of the level of service that is provided. For healthcare IT, choosing the right SLIs is the difference between measuring what matters to clinicians versus measuring what is easy to collect. The foundational SLIs for healthcare platforms fall into four categories.

Availability SLIs

Availability measures whether the system responds to requests at all. For healthcare, this needs to be more nuanced than a simple up/down check:

- FHIR API availability: Percentage of FHIR API requests that return a non-5xx response within the timeout window. Formula: (total_requests - server_errors) / total_requests. This is your primary availability SLI for any system exposing FHIR endpoints.

- EHR login success rate: Percentage of clinician authentication attempts that succeed within 3 seconds. A system that is "up" but takes 30 seconds to authenticate is effectively down for a physician between patients.

- Integration engine throughput: Percentage of HL7 messages processed without entering an error state. A Mirth Connect channel that is running but dropping 5% of ADT messages is not "available" in any meaningful clinical sense.
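The FHIR availability formula can be sketched in a few lines. This is a minimal illustration, not a production collector: the `RequestRecord` type, the sample data, and the 2-second timeout are assumptions chosen for the example.

```python
from dataclasses import dataclass


@dataclass
class RequestRecord:
    status_code: int   # HTTP status returned by the FHIR server
    duration_s: float  # time taken to respond, in seconds


def availability_sli(records: list[RequestRecord], timeout_s: float = 2.0) -> float:
    """Fraction of requests that returned a non-5xx response within the timeout."""
    if not records:
        raise ValueError("no requests in window; SLI is undefined")
    good = sum(
        1 for r in records
        if r.status_code < 500 and r.duration_s <= timeout_s
    )
    return good / len(records)


# Hypothetical sample window of four requests.
sample = [
    RequestRecord(200, 0.12),
    RequestRecord(404, 0.08),  # client error: still counts as available
    RequestRecord(503, 0.05),  # server error: counts against the SLI
    RequestRecord(200, 4.70),  # succeeded, but too slow to count as good
]
print(f"{availability_sli(sample):.2%}")
```

Note that the slow 200 response is counted as bad, which is what distinguishes this SLI from a simple up/down check.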

Latency SLIs

Latency in healthcare has direct clinical workflow implications. A FHIR search that takes 8 seconds instead of 800 milliseconds means a physician waits during a patient encounter — compounding across dozens of interactions per shift:

- FHIR query response time (p50, p95, p99): Separate by interaction type. A Patient read should be under 200ms at p95. A complex Observation?patient=X&category=laboratory&date=gt2025-01-01 search might have a 2-second p95 target.

- HL7 message delivery latency: Time from message receipt at the integration engine to acknowledgment from the destination system. STAT orders should have a p99 under 30 seconds.

- Clinical document rendering time: Time to render a CCD/C-CDA document for a patient summary view. This directly affects clinical workflow during care transitions.
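To make "separate by interaction type" concrete, the sketch below groups latency samples by interaction and reports p50, p95, and p99 for each. It uses the nearest-rank percentile definition, which is one of several common definitions; the sample data and function names are invented for illustration.

```python
from collections import defaultdict


def percentile(values: list[float], p: int) -> float:
    """Nearest-rank percentile for an integer p between 1 and 100."""
    if not values:
        raise ValueError("no samples")
    ordered = sorted(values)
    rank = max(1, -(-p * len(ordered) // 100))  # integer ceiling of p% of n
    return ordered[rank - 1]


def latency_by_interaction(samples: list[tuple[str, float]]) -> dict[str, dict[str, float]]:
    """Group (interaction, latency_ms) samples and compute p50/p95/p99 per group."""
    grouped: dict[str, list[float]] = defaultdict(list)
    for interaction, latency_ms in samples:
        grouped[interaction].append(latency_ms)
    return {
        name: {f"p{p}": percentile(vals, p) for p in (50, 95, 99)}
        for name, vals in grouped.items()
    }


# Hypothetical data: 20 reads between 10 and 200 ms, and 4 slower searches.
samples = [("read", float(ms)) for ms in range(10, 210, 10)]
samples += [("search", 400.0), ("search", 900.0), ("search", 1800.0), ("search", 2500.0)]
report = latency_by_interaction(samples)
print(report)
```

With this data the read p95 (190 ms) is inside the 200 ms target while the search p95 (2500 ms) misses a 2-second target, a difference that a single blended percentile would hide.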

Correctness SLIs

Healthcare systems must not just respond — they must respond correctly. Data integrity failures in healthcare can have severe consequences:

- FHIR validation pass rate: Percentage of resources that pass FHIR profile validation. A system returning malformed resources is worse than one returning errors, because downstream systems may silently misinterpret the data.

- Message delivery completeness: Percentage of HL7 messages that are delivered without data loss or corruption. An ADT message that arrives with a truncated allergy list is a patient safety issue.

- Data reconciliation accuracy: For systems that replicate or transform data, the percentage of records that match between source and destination after transformation.
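Data reconciliation accuracy can be approximated by comparing keyed records on both sides. The sketch below assumes records can be compared for exact equality after transformation and that every source record is expected downstream; the patient identifiers and allergy data are made up.

```python
def reconciliation_accuracy(source: dict[str, dict], destination: dict[str, dict]) -> float:
    """Fraction of source records present in the destination with identical content."""
    if not source:
        raise ValueError("source is empty; accuracy is undefined")
    matched = sum(
        1 for key, record in source.items()
        if destination.get(key) == record
    )
    return matched / len(source)


source = {
    "pt-1": {"allergies": ["penicillin", "latex"]},
    "pt-2": {"allergies": []},
    "pt-3": {"allergies": ["sulfa"]},
    "pt-4": {"allergies": ["aspirin"]},
}
destination = {
    "pt-1": {"allergies": ["penicillin"]},  # truncated allergy list: mismatch
    "pt-2": {"allergies": []},
    "pt-3": {"allergies": ["sulfa"]},
    # pt-4 never arrived: also counted as a mismatch
}
accuracy = reconciliation_accuracy(source, destination)
print(f"{accuracy:.0%}")
```

Both the truncated record and the missing record reduce the score, so this one number covers corruption and loss together. A real pipeline would likely report them separately.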

Freshness SLIs

In healthcare, stale data can be as dangerous as missing data. A medication list that is 6 hours out of date might not reflect a newly prescribed anticoagulant:

- Data synchronization lag: Time between a change in the source system and its availability in the consuming system. For clinical data, this should be measured in seconds, not minutes.

- Cache staleness: If you cache FHIR resources or terminology lookups, the maximum age of cached data that is served to users.

- Report generation delay: Time between data availability and report/dashboard update. Quality measure dashboards that lag by days miss time-sensitive clinical quality interventions.
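A freshness SLI follows the same good-events-over-total pattern as availability, with lag as the test. In this sketch the 60-second threshold and the event timestamps are assumptions for illustration only.

```python
from datetime import datetime, timedelta, timezone


def sync_lag_seconds(changed_at: datetime, visible_at: datetime) -> float:
    """Seconds between a change in the source and its visibility downstream."""
    return (visible_at - changed_at).total_seconds()


def freshness_sli(lags_s: list[float], threshold_s: float) -> float:
    """Fraction of changes that became visible within the threshold."""
    if not lags_s:
        raise ValueError("no changes observed; SLI is undefined")
    return sum(1 for lag in lags_s if lag <= threshold_s) / len(lags_s)


t0 = datetime(2025, 1, 1, 8, 0, 0, tzinfo=timezone.utc)
events = [
    (t0, t0 + timedelta(seconds=2)),
    (t0, t0 + timedelta(seconds=45)),
    (t0, t0 + timedelta(hours=6)),  # the stale medication list case
]
lags = [sync_lag_seconds(changed, visible) for changed, visible in events]
sli = freshness_sli(lags, threshold_s=60.0)
print(lags, round(sli, 3))
```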

Setting SLOs with Clinical Context

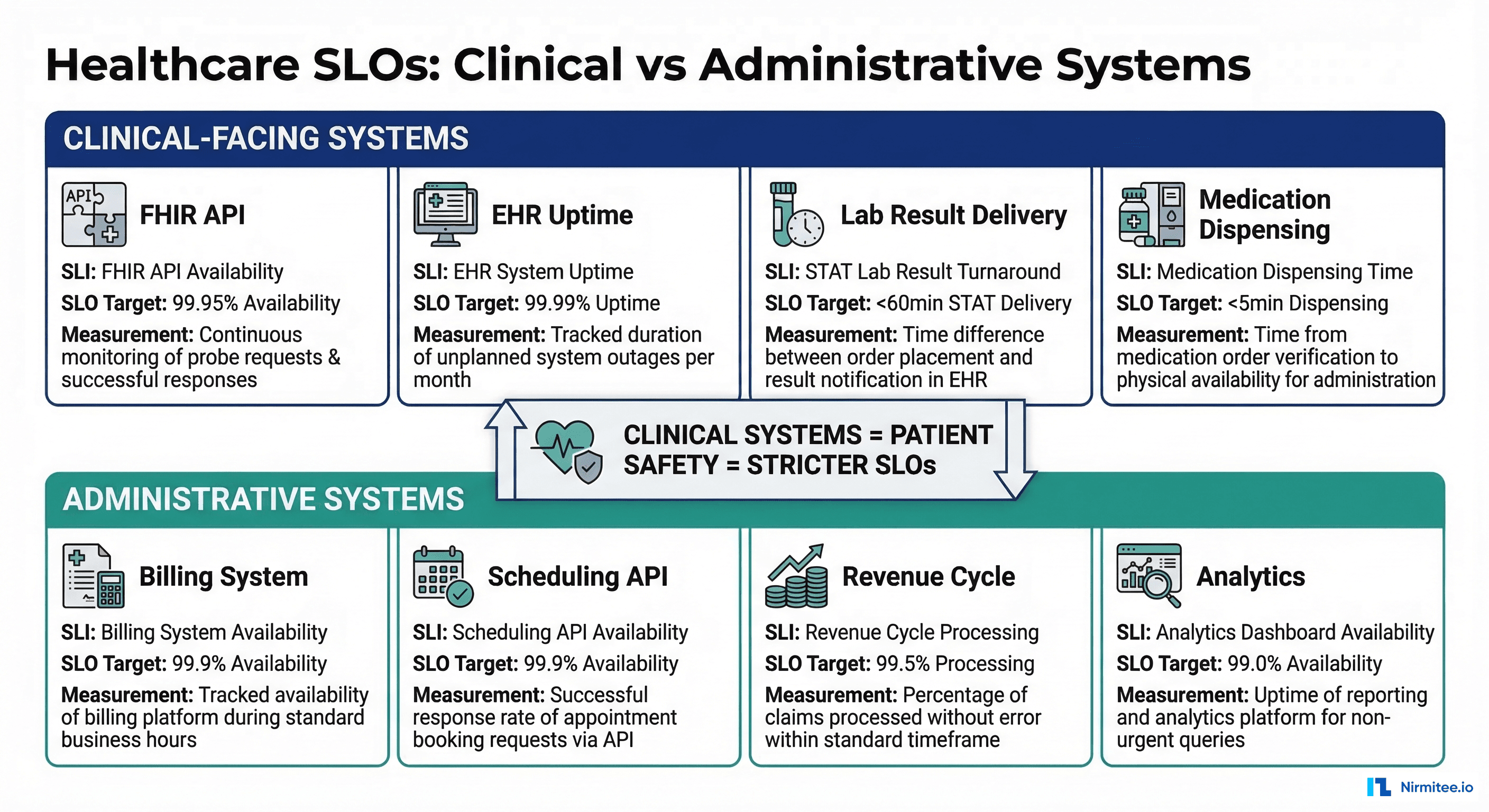

An SLO attaches a target to an SLI: "99.95% of FHIR API requests will return a non-5xx response within 2 seconds, measured over a rolling 30-day window." The critical point in healthcare SRE is that one SLO does not fit all. Clinical-facing systems need different targets than administrative systems, and the consequences of missing each target are fundamentally different.

Tiered SLO Framework for Healthcare

We recommend a three-tier approach:

Tier 1 — Clinical-Facing (99.95% availability, <500ms p95 latency): Systems that clinicians use during patient care. Includes: EHR read APIs, clinical decision support, medication ordering, lab result delivery, and patient-facing portals for telehealth. The 99.95% target allows approximately 22 minutes of downtime per month — which sounds generous until you consider that 22 minutes during morning rounds at a 500-bed hospital means hundreds of disrupted clinical workflows.

Tier 2 — Clinical-Supporting (99.9% availability, <2s p95 latency): Systems that support clinical operations but are not in the direct path of patient care. Includes: scheduling systems, referral management, administrative HL7 feeds (ADT, SIU), and data pipeline infrastructure. The 99.9% target allows approximately 43 minutes of downtime per month.

Tier 3 — Administrative (99.5% availability, <5s p95 latency): Systems used for billing, reporting, compliance, and back-office operations. Includes: claims processing, quality measure calculation, analytics dashboards, and batch integration feeds. The 99.5% target allows approximately 3.6 hours of downtime per month.
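The downtime allowances quoted for each tier follow directly from the availability target and a 30-day window. A short sketch of the arithmetic, with the `TIERS` mapping and function name introduced here for illustration:

```python
def allowed_downtime_minutes(availability_target: float, window_days: int = 30) -> float:
    """Minutes of full downtime permitted by an availability target over the window."""
    return (1 - availability_target) * window_days * 24 * 60


# Availability targets from the three tiers described above.
TIERS = {
    "Tier 1 (clinical-facing)": 0.9995,
    "Tier 2 (clinical-supporting)": 0.999,
    "Tier 3 (administrative)": 0.995,
}

for name, target in TIERS.items():
    minutes = allowed_downtime_minutes(target)
    print(f"{name}: {minutes:.1f} min ({minutes / 60:.2f} h) per 30 days")
```

The results are 21.6 minutes, 43.2 minutes, and 216 minutes (3.6 hours) respectively.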

SLO Definitions in Practice

    # Healthcare SLO definitions (YAML format for SLO tooling)
    slos:
      - name: fhir-api-availability-clinical
        tier: 1
        description: "Clinical FHIR API availability"
        sli:
          type: availability
          metric: |
            sum(rate(http_requests_total{
              service="fhir-server",
              code!~"5.."
            }[5m]))
            /
            sum(rate(http_requests_total{
              service="fhir-server"
            }[5m]))
        target: 0.9995
        window: 30d
        alerting:
          burn_rate_1h: 14.4  # 1-hour burn rate for page
          burn_rate_6h: 6.0   # 6-hour burn rate for ticket

      - name: fhir-api-latency-clinical
        tier: 1
        description: "Clinical FHIR API latency (p95)"
        sli:
          type: latency
          metric: |
            histogram_quantile(0.95,
              sum(rate(http_request_duration_seconds_bucket{
                service="fhir-server"
              }[5m])) by (le)
            )
        target: 0.5  # 500ms
        window: 30d

      - name: hl7-message-delivery
        tier: 2
        description: "HL7 message delivery success rate"
        sli:
          type: availability
          metric: |
            sum(rate(mirth_messages_sent_total{
              status="success"
            }[5m]))
            /
            sum(rate(mirth_messages_received_total[5m]))
        target: 0.999
        window: 30d

      - name: stat-order-turnaround
        tier: 1
        description: "STAT lab order turnaround time"
        sli:
          type: latency
          metric: |
            histogram_quantile(0.95,
              sum(rate(clinical_order_turnaround_seconds_bucket{
                priority="STAT",
                order_type="laboratory"
              }[5m])) by (le)
            )
        target: 3600  # 60 minutes
        window: 30d

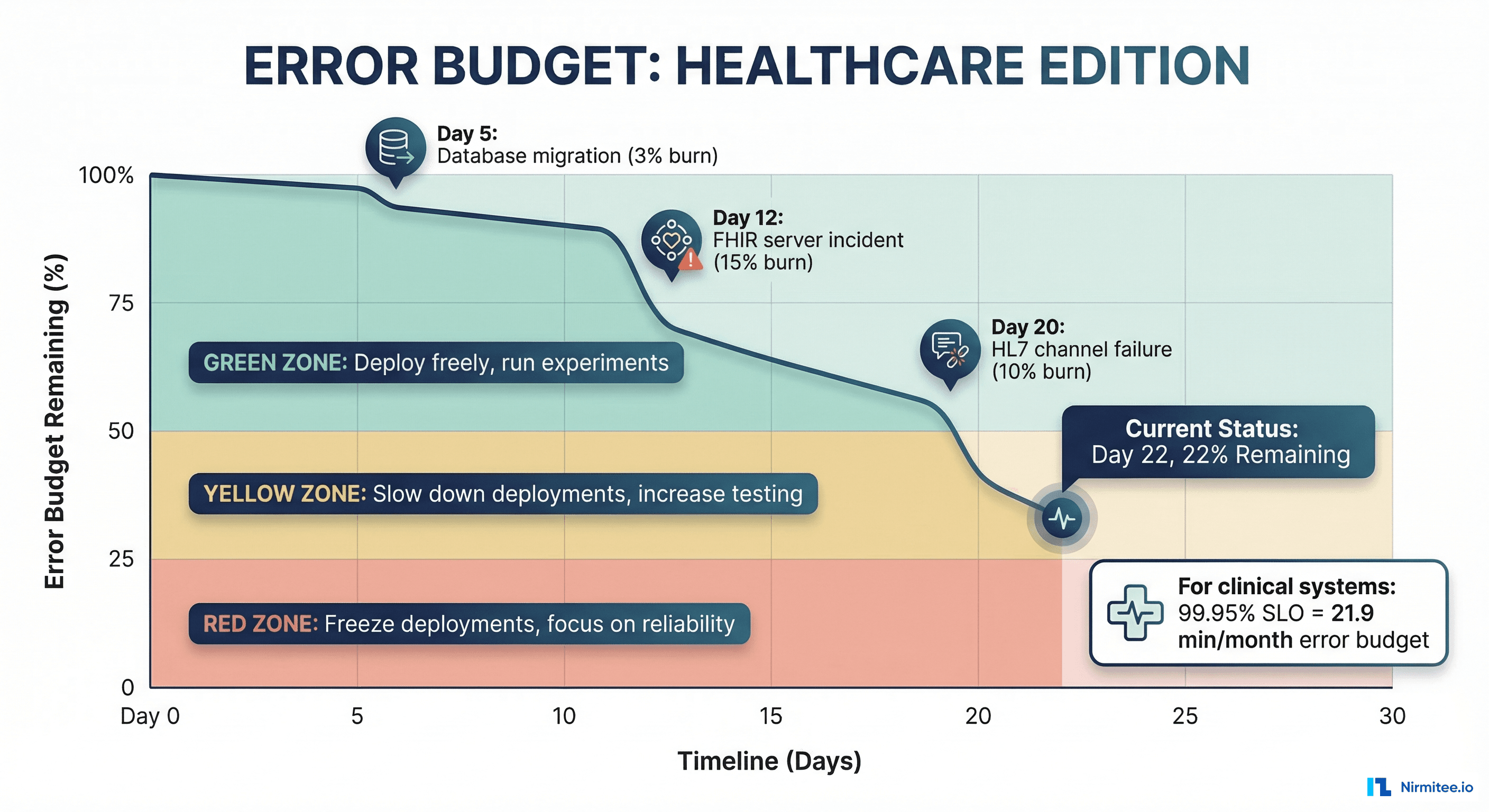

Error Budgets: Quantifying Acceptable Risk in Healthcare

An error budget is the complement of an SLO: the fraction of the window in which the service is allowed to miss its target. If your SLO is 99.95% availability over 30 days, your error budget is 0.05%, or approximately 21.6 minutes of downtime. The error budget is not a target to hit; it is an allowance that funds the velocity of change. If you have budget remaining, you can deploy faster and take more risks. If your budget is exhausted, you slow down and focus on reliability.

Why Error Budgets Matter More in Healthcare

In a typical SaaS product, exhausting your error budget means unhappy customers and possible SLA credit payouts. In healthcare, exhausting your error budget means clinicians cannot access critical information during the budget-exceeded window. The stakes reshape how you manage the budget:

- No borrowing from next month: Unlike revenue targets, you cannot make up for lost clinical availability. A 2-hour outage during the first week of the month is a 2-hour outage — it does not matter that you had 99.99% availability the rest of the month.

- Context-sensitive consumption: 10 minutes of downtime at 3 AM on a Sunday consumes error budget but has minimal clinical impact. 10 minutes of downtime at 8 AM on a Monday during shift change is catastrophic. Some SRE teams apply a clinical impact multiplier to error budget consumption during peak clinical hours (7 AM to 7 PM weekdays).

- Regulatory floor: HIPAA, ONC, and CMS regulations create minimum reliability requirements that exist independent of your error budget. You cannot choose to "spend" budget on changes that create compliance gaps.
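The clinical impact multiplier mentioned above could be implemented by weighting each minute of downtime according to when it occurs. The sketch below is one possible interpretation: the peak window (7 AM to 7 PM on weekdays) comes from the text, while the multiplier value of 2.0 and the function names are assumptions.

```python
from datetime import datetime, timedelta

PEAK_MULTIPLIER = 2.0  # assumed weight; the right value is a local policy decision


def is_peak(ts: datetime) -> bool:
    """True during peak clinical hours: 7 AM to 7 PM, Monday through Friday."""
    return ts.weekday() < 5 and 7 <= ts.hour < 19


def weighted_downtime_minutes(start: datetime, minutes: int,
                              multiplier: float = PEAK_MULTIPLIER) -> float:
    """Budget minutes charged for an outage, weighting peak minutes more heavily."""
    total = 0.0
    for offset in range(minutes):
        minute = start + timedelta(minutes=offset)
        total += multiplier if is_peak(minute) else 1.0
    return total


sunday_3am = datetime(2025, 1, 5, 3, 0)   # a Sunday
monday_8am = datetime(2025, 1, 6, 8, 0)   # a Monday
print(weighted_downtime_minutes(sunday_3am, 10))
print(weighted_downtime_minutes(monday_8am, 10))
```

With these assumptions, the same 10-minute outage is charged 10 budget minutes on Sunday night and 20 on Monday morning.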

Error Budget Policy for Healthcare

A formal error budget policy defines what happens at different consumption levels:

| Budget Remaining | Status | Actions |
|---|---|---|
| >50% | Healthy | Normal development velocity. Feature deployments proceed as planned. Experimentation allowed. |
| 25-50% | Caution | Reduce deployment frequency. All changes require additional review. Begin root cause investigation of top budget consumers. |
| 10-25% | Warning | Freeze non-critical deployments. Engineering focus shifts to reliability improvements. Daily error budget review with engineering leadership. |
| <10% | Critical | Full deployment freeze except security patches and reliability fixes. Incident review for all budget-consuming events. Escalation to CTO/CMIO for clinical impact assessment. |
| 0% (exhausted) | Budget Exceeded | Complete change freeze. All engineering effort on reliability. Post-mortem required. Executive communication to clinical leadership on risk posture. |
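A policy like this is easiest to enforce when the status lookup is automated, for example as a gate in a deployment pipeline. The sketch below maps a remaining-budget ratio to the status names in the table. The table leaves the exact boundaries ambiguous, so assigning exactly 25% to Caution and exactly 10% to Warning is an assumption.

```python
def budget_status(remaining_ratio: float) -> str:
    """Map the fraction of error budget remaining to a policy status."""
    if remaining_ratio <= 0:
        return "Budget Exceeded"
    if remaining_ratio < 0.10:
        return "Critical"
    if remaining_ratio < 0.25:
        return "Warning"
    if remaining_ratio <= 0.50:
        return "Caution"
    return "Healthy"


for ratio in (0.80, 0.40, 0.20, 0.05, 0.0):
    print(f"{ratio:.0%} remaining -> {budget_status(ratio)}")
```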

Tracking Error Budget Consumption

    # Prometheus recording rules for error budget tracking
    groups:
      - name: error_budget
        interval: 1m
        rules:
          # Total error budget in seconds (30 days)
          # = 1296 seconds = 21.6 minutes
          - record: slo:error_budget_total_seconds
            expr: |
              (1 - 0.9995) * 30 * 24 * 3600
            labels:
              slo: "fhir-api-clinical"

          # Consumed error budget in seconds (rolling 30 days):
          # sum the per-minute error ratio, then convert minutes to seconds
          - record: slo:error_budget_consumed_seconds
            expr: |
              sum_over_time(
                (1 - (
                  sum(rate(http_requests_total{service="fhir-server",code!~"5.."}[1m]))
                  /
                  sum(rate(http_requests_total{service="fhir-server"}[1m]))
                ))[30d:1m]
              ) * 60
            labels:
              slo: "fhir-api-clinical"

          # Remaining budget as a ratio (1 = untouched, 0 = exhausted)
          - record: slo:error_budget_remaining_ratio
            expr: |
              1 - (
                slo:error_budget_consumed_seconds{slo="fhir-api-clinical"}
                /
                slo:error_budget_total_seconds{slo="fhir-api-clinical"}
              )
            labels:
              slo: "fhir-api-clinical"

          # Burn rate over the last hour: observed error ratio divided by the
          # error ratio the SLO allows. A value of 1 spends exactly the full
          # budget over the 30-day window.
          - record: slo:error_budget_burn_rate
            expr: |
              (
                1 - (
                  sum(rate(http_requests_total{service="fhir-server",code!~"5.."}[1h]))
                  /
                  sum(rate(http_requests_total{service="fhir-server"}[1h]))
                )
              ) / (1 - 0.9995)
            labels:
              slo: "fhir-api-clinical"

Incident Severity for Clinical Systems

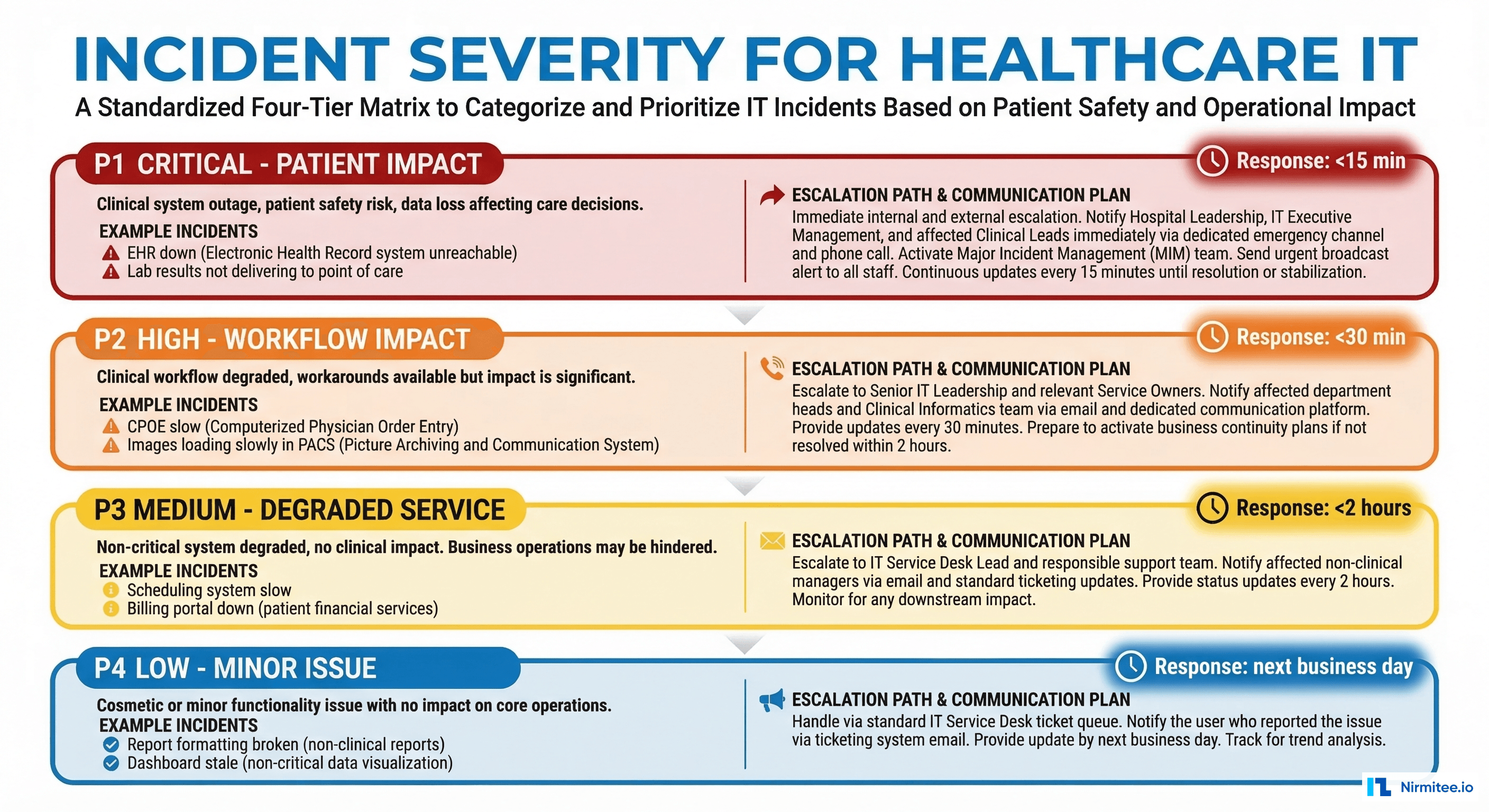

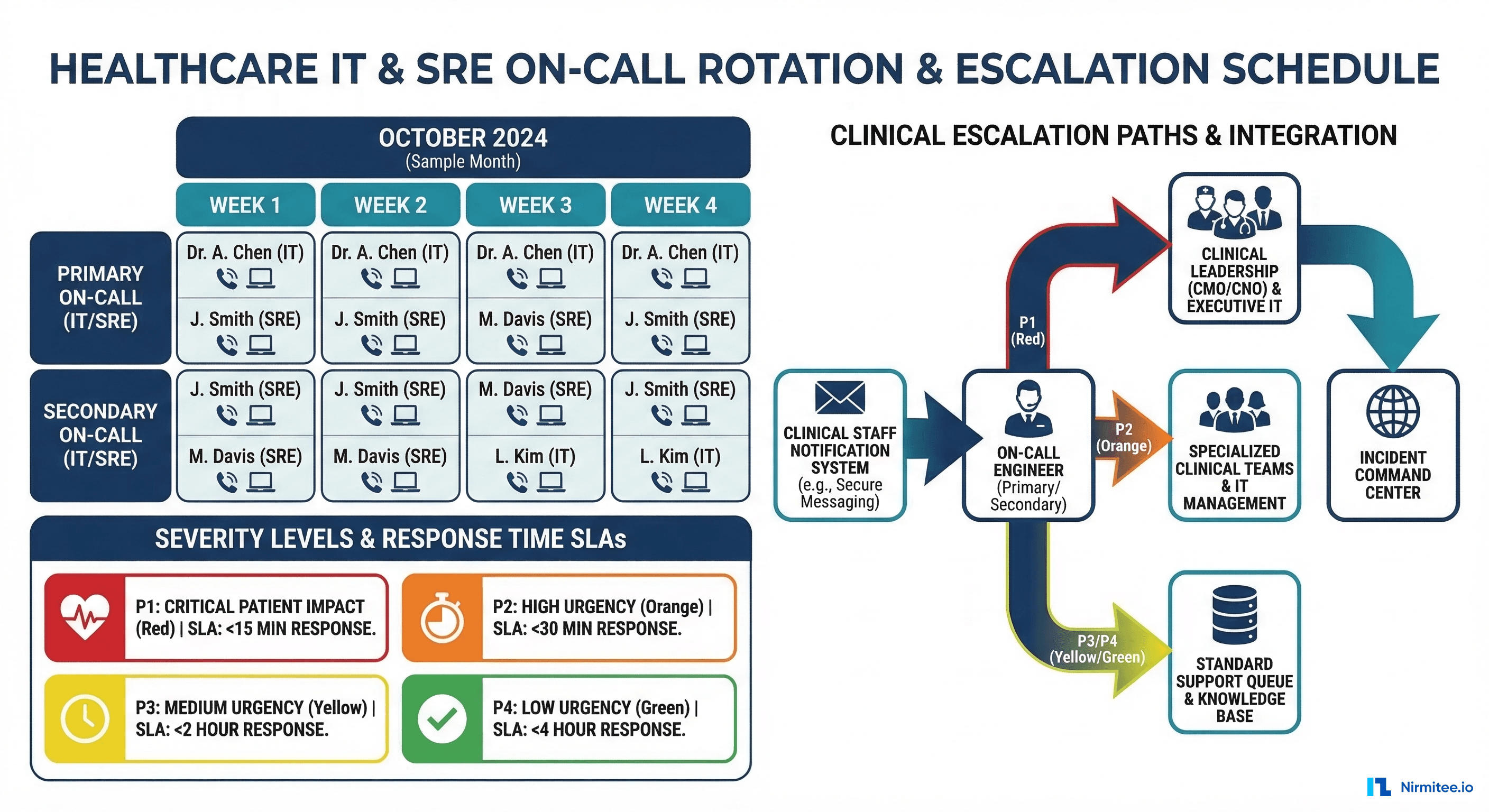

Standard incident severity frameworks (SEV1 through SEV4 or P1 through P4) classify incidents by business impact: revenue loss, customer count affected, data loss. Healthcare needs a severity framework that adds clinical impact as the primary classification dimension.

Healthcare Incident Severity Matrix

P1 — Patient Safety Impact: Any incident where clinical data is unavailable, incorrect, or delayed in a way that could affect patient care decisions. Examples: EHR is completely down, medication data is returning incorrect results, STAT lab results are not being delivered, clinical decision support is producing wrong recommendations. Response: immediate page to on-call SRE + engineering lead + clinical informatics. All hands on deck. Target acknowledgment in 5 minutes, mitigation in 30 minutes.

P2 — Clinical Workflow Degradation: Clinical systems are functioning but significantly degraded. Clinicians can work around the issue, but with reduced efficiency or missing some data. Examples: FHIR search latency above 5 seconds, patient portal is down (patients cannot access results), scheduling system is unavailable (clinicians use manual workarounds). Response: page on-call SRE. Target acknowledgment in 15 minutes, mitigation in 2 hours.

P3 — Operational Impact: Non-clinical systems are degraded or down. Impact is on administrative efficiency, reporting, or non-urgent workflows. Examples: billing feed is delayed, analytics dashboard is stale, batch data pipelines are failing. Response: alert via Slack/Teams. Target acknowledgment in 1 hour, resolution in 24 hours.

P4 — Minor Issue: Cosmetic issues, minor bugs, or issues affecting non-production environments. Examples: staging environment instability, documentation errors, minor UI rendering bugs. Response: ticket created. Resolution within sprint or next maintenance window.
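The matrix above can be encoded as a small decision function so that triage tooling and humans apply the same ordering: clinical risk is checked before anything else in production. This is a simplified sketch. The `IncidentFacts` fields are hypothetical inputs that a responder would fill in, and real classification will need clinical judgment that a boolean cannot capture.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Severity(Enum):
    P1 = "Patient Safety Impact"
    P2 = "Clinical Workflow Degradation"
    P3 = "Operational Impact"
    P4 = "Minor Issue"


# Acknowledgment targets in minutes, taken from the matrix (P4 has none).
ACK_TARGET_MINUTES: dict[Severity, Optional[int]] = {
    Severity.P1: 5,
    Severity.P2: 15,
    Severity.P3: 60,
    Severity.P4: None,
}


@dataclass
class IncidentFacts:
    production: bool                   # False for staging and other non-production
    care_decisions_at_risk: bool       # clinical data unavailable, incorrect, or delayed
    clinical_workflow_degraded: bool   # clinicians can work around it, less efficiently
    operational_system_degraded: bool  # billing, reporting, batch pipelines


def classify(facts: IncidentFacts) -> Severity:
    """Return the highest applicable severity, checking clinical impact first."""
    if not facts.production:
        return Severity.P4
    if facts.care_decisions_at_risk:
        return Severity.P1
    if facts.clinical_workflow_degraded:
        return Severity.P2
    if facts.operational_system_degraded:
        return Severity.P3
    return Severity.P4


stat_results_stuck = IncidentFacts(True, True, True, False)
severity = classify(stat_results_stuck)
print(severity.name, ACK_TARGET_MINUTES[severity])
```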

The Clinical Impact Assessment

For every P1 and P2 incident, the incident commander must answer these questions within the first 15 minutes:

- Is patient data availability affected? Can clinicians access the information they need for patient care?

- Is data integrity compromised? Could any clinical data have been corrupted, lost, or delivered to the wrong patient?

- What is the blast radius? How many clinical users, patients, or facilities are affected?

- Is there a clinical workaround? Can clinicians access the data through an alternative path (downtime procedures)?

- Do we need clinical leadership notification? Should the CMIO, CNO, or department chairs be informed?

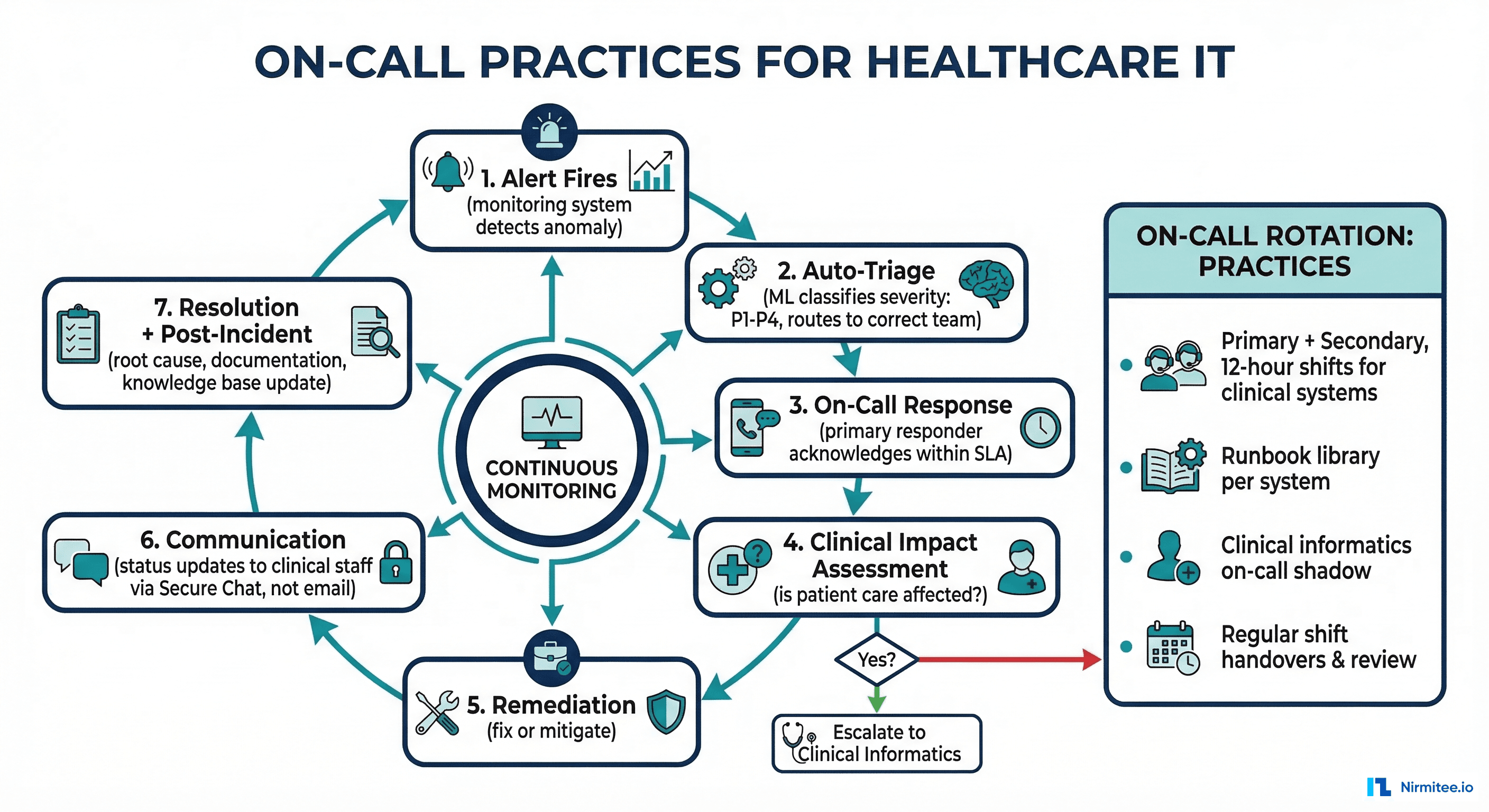

On-Call Practices for Healthcare IT

On-call for healthcare systems carries unique requirements. Healthcare operates 24/7/365 — there are no "off-peak hours" in an ICU. Your on-call practices need to reflect this reality.

On-Call Structure

Primary on-call: The first responder for all alerts. Should be an engineer with deep system knowledge and the ability to mitigate most incidents independently. Rotation: weekly, with handoffs on Monday mornings (not Fridays — you do not want a fresh on-call engineer inheriting weekend issues without context).

Secondary on-call: Backup for the primary. Engaged when the primary does not acknowledge within 10 minutes, or when the primary requests additional help. Should be a senior engineer or team lead.

Clinical escalation path: For P1 incidents, the on-call SRE must have a direct escalation path to clinical informatics (a physician or nurse informaticist who can assess clinical impact). This is the single most important difference from standard SRE on-call. A clinical informaticist can determine whether an issue is genuinely patient-safety-affecting or merely inconvenient.

Alert Design for Healthcare

Healthcare on-call engineers suffer from the same alert fatigue as everyone else — but the consequences of ignoring a critical alert are different. Alert design principles:

- SLO-based alerting, not threshold-based: Alert on error budget burn rate, not on individual metric thresholds. A single 5xx error should not page anyone. A burn rate that would exhaust your error budget in 1 hour should.

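- Multi-window burn rates: Use the multi-window approach from Google's SRE guidance: a fast burn (a burn rate of 14.4 over a 1-hour window, which spends about 2% of a 30-day budget in that hour) triggers a page, and a slow burn (a burn rate of 6 over a 6-hour window, about 5% of the budget) creates a ticket. A burn rate of 1 means the budget would be exactly exhausted at the end of the window.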

- Clinical context in alerts: Every alert should include: which SLO is affected, current error budget remaining, estimated clinical impact, and suggested first response action. Do not make the on-call engineer figure out whether an alert matters — tell them.

- Suppress during planned maintenance: Healthcare systems have maintenance windows (typically Saturday nights). Suppress non-critical alerts during these windows, but never suppress P1-eligible alerts.
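The burn-rate thresholds above reduce to simple arithmetic. The sketch below shows how an observed error ratio becomes a burn rate, how the two windows map to a page or a ticket, and what share of the 30-day budget each threshold represents. The function names are illustrative and the error ratios passed in are invented inputs.

```python
def burn_rate(error_ratio: float, slo_target: float = 0.9995) -> float:
    """Observed error ratio divided by the error ratio the SLO allows."""
    return error_ratio / (1 - slo_target)


def alert_action(error_ratio_1h: float, error_ratio_6h: float,
                 slo_target: float = 0.9995) -> str:
    """Page on a fast burn, open a ticket on a slow burn, otherwise do nothing."""
    if burn_rate(error_ratio_1h, slo_target) >= 14.4:
        return "page"
    if burn_rate(error_ratio_6h, slo_target) >= 6.0:
        return "ticket"
    return "none"


def budget_fraction_spent(rate: float, window_hours: float, slo_window_days: int = 30) -> float:
    """Share of the total budget consumed by sustaining a burn rate for a window."""
    return rate * window_hours / (slo_window_days * 24)


print(alert_action(0.01, 0.01))      # 1% errors in the last hour
print(alert_action(0.002, 0.004))    # slower, sustained errors
print(alert_action(0.0005, 0.0005))  # errors at exactly the budgeted rate
```

Production alerting rules usually pair each long window with a short confirmation window so that alerts clear quickly after recovery. That refinement is omitted here.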

On-Call Health Metrics

Track the health of your on-call process itself:

- Alert volume per on-call shift: More than 2 pages per 12-hour shift indicates alert quality issues.

- Time to acknowledge: Target under 5 minutes for P1, 15 minutes for P2. Track this religiously.

- Escalation rate: How often does the primary need to escalate to secondary or clinical? High rates indicate training or tooling gaps.

- On-call satisfaction survey: Monthly survey of on-call engineers. Burnout in healthcare IT on-call leads to attrition, and replacing healthcare-domain engineers takes months.

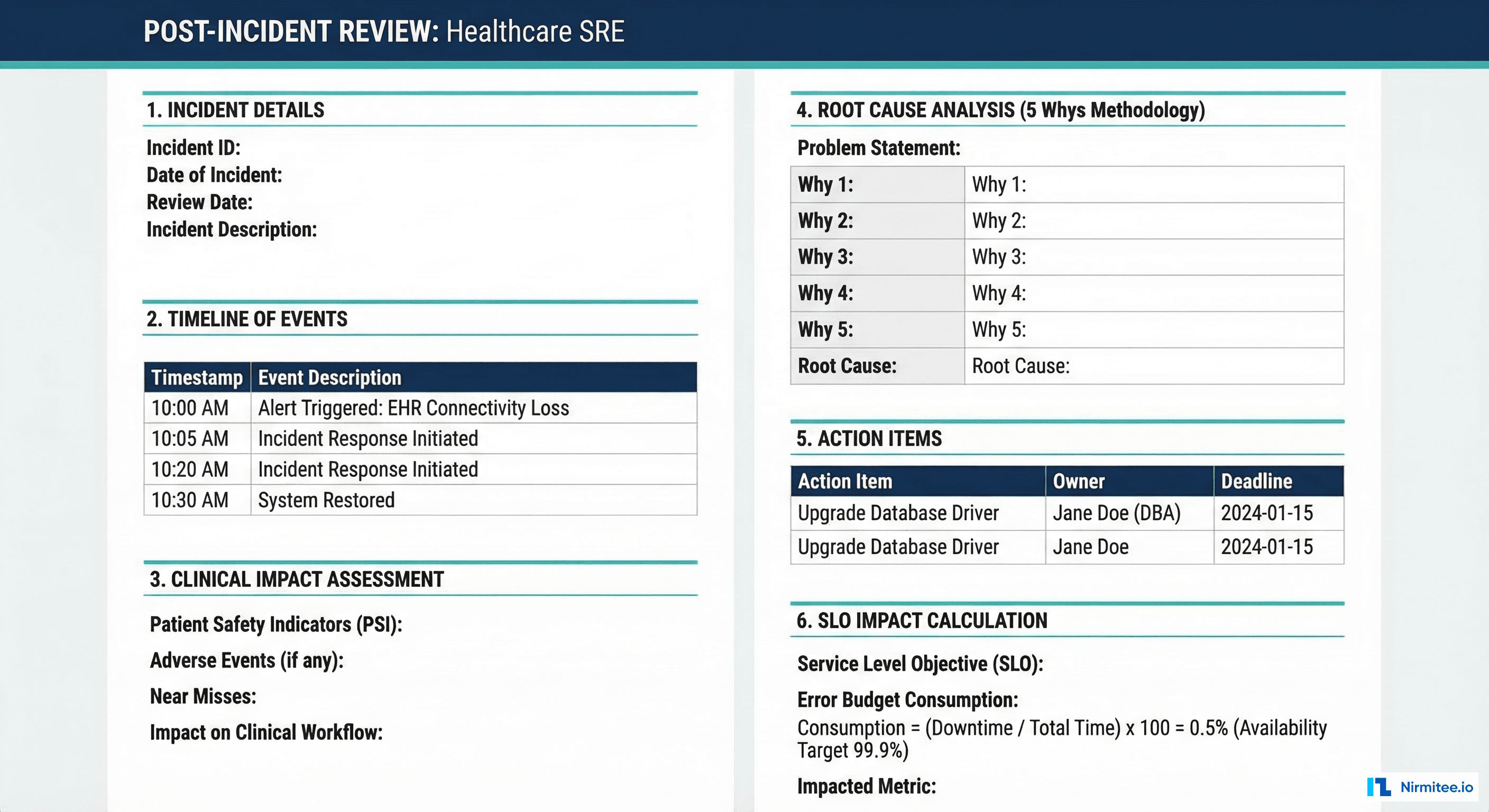

Post-Incident Reviews with Clinical Impact Assessment

Post-incident reviews (PIRs) in healthcare must go beyond the standard blameless postmortem format. They need a dedicated clinical impact assessment section and involvement from clinical informatics.

Healthcare PIR Template

Section 1: Incident Summary

- Incident ID, severity, duration

- Systems affected

- Timeline of events (detection, acknowledgment, mitigation, resolution)

- Who was involved (on-call engineers, escalations, clinical staff)

Section 2: Clinical Impact Assessment

- Number of clinical users affected

- Number of patients whose care could have been impacted

- Was patient data unavailable, delayed, or incorrect?

- Were downtime procedures activated? If so, for how long?

- Were any patient safety events reported related to this incident?

Section 3: Root Cause Analysis (5 Whys)

- Work through the causal chain from symptom to root cause

- Distinguish between proximate cause (what broke) and contributing factors (why it could break)

- Identify whether existing monitoring detected the issue, and if not, why not

Section 4: SLO Impact

- Error budget consumed by this incident (minutes/percentage)

- Remaining error budget for the current window

- Does this incident trigger any error budget policy actions?

Section 5: Action Items

- Each action item has an owner, a due date, and a priority

- Distinguish between: immediate fixes (days), medium-term improvements (weeks), and long-term systemic changes (quarters)

- Track action item completion in your project management system — PIR action items that are not tracked do not get done
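The figures for Section 4 of the template can be computed mechanically once the incident duration and error ratio are known. The sketch below uses a time-based approximation that assumes roughly uniform traffic during the incident; the function name, parameters, and example numbers are illustrative.

```python
def incident_budget_impact(duration_min: float, error_ratio: float,
                           prior_consumed_min: float = 0.0,
                           slo_target: float = 0.9995,
                           window_days: int = 30) -> dict[str, float]:
    """Estimate the error budget consumed by one incident and what remains."""
    budget_min = (1 - slo_target) * window_days * 24 * 60
    incident_min = duration_min * error_ratio  # equivalent minutes of full outage
    remaining_min = budget_min - prior_consumed_min - incident_min
    return {
        "budget_minutes": budget_min,
        "incident_minutes": incident_min,
        "incident_share": incident_min / budget_min,
        "remaining_share": remaining_min / budget_min,
    }


# Hypothetical incident: 12 minutes with half of requests failing,
# after 4 budget minutes were already spent earlier in the window.
impact = incident_budget_impact(duration_min=12, error_ratio=0.5, prior_consumed_min=4.0)
print(f"consumed {impact['incident_share']:.1%} of budget, "
      f"{impact['remaining_share']:.1%} remaining")
```

In this example the incident consumes about 28% of the Tier 1 budget and leaves about 54%, which would keep the service in the Healthy band of the error budget policy.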

Running Effective PIRs

Three rules for healthcare PIRs:

- Blameless, but not accountability-free: The goal is to improve the system, not punish individuals. But action items need owners, and systemic issues need executive sponsors.

- Include clinical perspective: For P1 and P2 incidents, a clinical informaticist should attend the PIR. They bring context about how the incident affected clinical workflows that the engineering team may not have visibility into.

- Close the loop: Schedule a follow-up review (2-4 weeks later) to verify that action items are completed and that the changes actually improve the relevant SLI. An action item without verification is wishful thinking.

Putting It All Together: The Healthcare SRE Maturity Model

Building a healthcare SRE practice is a multi-quarter journey. Here is a pragmatic maturity model:

Level 1 — Foundations (Month 1-2): Define SLIs for your top 3 clinical systems. Set initial SLOs based on historical performance data. Implement basic availability and latency monitoring. Establish an on-call rotation with documented escalation paths.

Level 2 — Error Budgets (Month 3-4): Implement error budget tracking dashboards. Define and ratify an error budget policy with engineering and clinical leadership. Move from threshold-based to SLO-based alerting. Begin conducting PIRs for all P1 and P2 incidents.

Level 3 — Clinical Integration (Month 5-6): Add clinical impact assessment to PIR process. Implement tiered SLOs (clinical, clinical-supporting, administrative). Build clinical escalation paths into on-call procedures. Start tracking freshness and correctness SLIs alongside availability and latency.

Level 4 — Optimization (Ongoing): Implement distributed tracing for end-to-end workflow visibility. Build automated error budget reporting for clinical and executive leadership. Continuously refine SLOs based on clinical feedback and incident learnings. Contribute to healthcare observability frameworks that incorporate AI-driven anomaly detection.

Frequently Asked Questions

What does "five nines" (99.999%) actually mean for a healthcare system?

Five nines means approximately 26 seconds of downtime per month, or 5 minutes and 15 seconds per year. Very few healthcare systems need this level of availability — it requires redundancy at every layer, automated failover, and a significant infrastructure investment. For most healthcare organizations, 99.95% (Tier 1 clinical systems) is the right target: it acknowledges that 22 minutes of downtime per month is acceptable with proper downtime procedures in place, while being achievable with modern cloud infrastructure.

How do you measure SLOs across multiple vendors (Epic, Cerner, lab systems)?

You cannot control vendor system reliability, but you can measure the SLIs at your integration boundaries. Measure FHIR API response times at your API gateway, HL7 message delivery at your integration engine, and end-to-end workflow latency across systems. Your SLO covers the end-to-end experience — if a vendor system is slow, it still consumes your error budget. This creates the right incentive to negotiate vendor SLAs that support your SLOs.

Should error budgets be shared across teams?

For healthcare systems, error budgets should be shared across all teams that contribute to a given SLO. If the FHIR API availability SLO is shared by the platform team, the database team, and the infrastructure team, all three teams share the error budget. This prevents finger-pointing and creates shared accountability for reliability.

How do you handle planned maintenance with SLOs?

Planned maintenance consumes error budget — there is no free pass. This is intentional: it forces you to minimize maintenance windows and invest in zero-downtime deployment practices (rolling updates, blue-green deployments, database migrations without downtime). If your monthly maintenance window consumes half your error budget, that is a signal to invest in better deployment automation.

Conclusion

SRE for healthcare is not a different discipline than SRE for other industries — it is the same discipline applied with clinical context. The SLIs measure clinical impact, not just technical metrics. The SLOs reflect the tiered nature of healthcare systems. The error budgets account for the reality that downtime affects patient care. The incident process includes clinical impact assessment. And the post-incident reviews close the loop between technical root causes and clinical outcomes.

For organizations building healthcare platforms or managing health system IT infrastructure, investing in SRE practices is not an engineering luxury — it is a patient safety requirement. At Nirmitee, we help healthcare technology teams build the reliability engineering foundations that clinical operations depend on — from SLO frameworks and observability infrastructure to incident management processes designed for clinical environments.