An AI Medication Suggestion Gone Wrong Is Not a Hallucination. It Is a Patient Safety Event.

In January 2025, a hospital in the Midwest ran a pilot program: an LLM-powered clinical decision support tool that suggested medications based on patient history and chief complaint. Within three weeks, the tool made three errors that ended the pilot immediately.

The first: it suggested amoxicillin for a patient with a documented penicillin allergy. The allergy was recorded in the EHR, but the agent processed the note as free text and missed it. The second: it recommended an adult dose of metformin for a 9-year-old with Type 2 diabetes — 2,000mg instead of the weight-adjusted 500mg. The third: it suggested adding ciprofloxacin to a patient already on warfarin, a well-known interaction that increases bleeding risk by 2-3x (Baillargeon et al., 2012).

None of these were exotic edge cases. They were the kind of mistakes a second-year pharmacy student would catch. But the LLM had no guardrails — it generated free-text suggestions, and the only check was a busy clinician glancing at the output between patients.

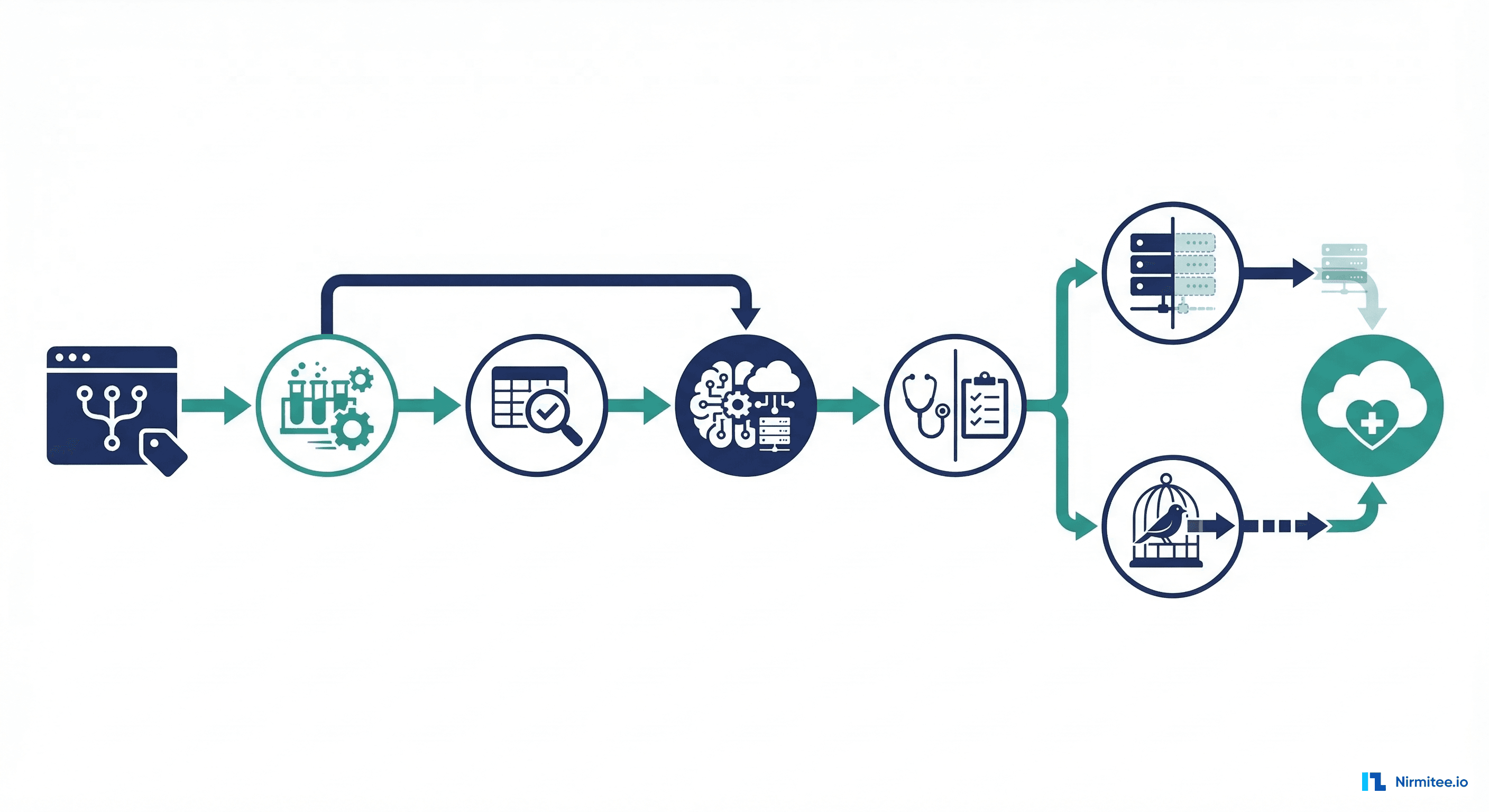

If you are building clinical-facing AI agents that touch medication decisions, this article is your engineering blueprint. We will walk through a six-layer guardrail architecture that makes it structurally difficult for your agent to recommend a dangerous medication. Not impossible — nothing is impossible to break — but difficult enough that failures become auditable, catchable, and rare.

Layer 1: Output Schema Enforcement — Kill Free Text

The single most impactful change you can make is eliminating free-text medication output entirely. When your agent returns "I'd suggest starting amoxicillin, maybe around 500mg three times a day," you have lost the ability to programmatically validate anything. "Maybe around 500mg" is not a dosage — it is a liability.

Force every medication suggestion through a strict schema. If the LLM cannot fill every required field, the recommendation fails safely — it does not reach the clinician.

```python
from enum import Enum
from typing import Optional

# Pydantic v2 API: Field(pattern=...) and field_validator
from pydantic import BaseModel, Field, field_validator


class RouteOfAdministration(str, Enum):
    ORAL = "oral"
    IV = "intravenous"
    IM = "intramuscular"
    SC = "subcutaneous"
    TOPICAL = "topical"
    INHALED = "inhaled"
    RECTAL = "rectal"
    SUBLINGUAL = "sublingual"


class FrequencyCode(str, Enum):
    QD = "QD"      # Once daily
    BID = "BID"    # Twice daily
    TID = "TID"    # Three times daily
    QID = "QID"    # Four times daily
    Q4H = "Q4H"    # Every 4 hours
    Q6H = "Q6H"    # Every 6 hours
    Q8H = "Q8H"    # Every 8 hours
    Q12H = "Q12H"  # Every 12 hours
    PRN = "PRN"    # As needed
    ONCE = "ONCE"  # Single dose


class MedicationRecommendation(BaseModel):
    """Structured medication recommendation."""

    drug_name: str = Field(..., description="Generic drug name")
    # RxCUIs are numeric strings of varying length. Ingredient-level CUIs
    # can be as short as three digits (Layer 4 uses "723" and "161"),
    # so the pattern must not demand four or more.
    rxnorm_cui: str = Field(..., pattern=r"^\d{1,8}$",
                            description="RxNorm Concept Unique Identifier")
    dose_value: float = Field(..., gt=0, description="Numeric dose value")
    dose_unit: str = Field(..., pattern=r"^(mg|mcg|g|mL|units|mEq)$")
    route: RouteOfAdministration
    frequency: FrequencyCode
    indication: str = Field(..., min_length=10,
                            description="Clinical indication for this medication")
    duration_days: Optional[int] = Field(None, gt=0, le=365)
    requires_renal_adjustment: bool = False
    requires_hepatic_adjustment: bool = False
    confidence_score: float = Field(..., ge=0.0, le=1.0)

    @field_validator("rxnorm_cui")
    @classmethod
    def validate_rxnorm_format(cls, v: str) -> str:
        # Defence in depth alongside the pattern constraint above
        if not v.isdigit():
            raise ValueError(f"Invalid RxNorm CUI format: {v}")
        return v
```

This is not optional polish. This is the foundation that makes every subsequent layer possible. Without a structured CUI, you cannot check drug interactions. Without a numeric dose, you cannot validate ranges. Without a coded route, you cannot catch "oral methotrexate prescribed as IV" errors.

Use your LLM framework's structured output mode (OpenAI's response_format, Anthropic's tool use, or LangChain's with_structured_output) to enforce this at the generation layer, not as a post-hoc parse.
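Whatever framework enforces the schema, the calling code still has to fail closed when the model returns something unusable. The sketch below illustrates that behavior with the standard library only. It is not the Pydantic model above; `REQUIRED_FIELDS` and `parse_or_reject` are hypothetical names chosen for this example.

```python
import json
from typing import Optional

# Hypothetical subset of the required schema fields, for illustration only.
REQUIRED_FIELDS = {
    "drug_name", "rxnorm_cui", "dose_value",
    "dose_unit", "route", "frequency",
}


def parse_or_reject(raw_output: str) -> Optional[dict]:
    """Return the parsed recommendation, or None if it must not be shown."""
    try:
        data = json.loads(raw_output)
    except json.JSONDecodeError:
        return None  # free text or truncated JSON: fail closed
    if not isinstance(data, dict):
        return None
    if not REQUIRED_FIELDS.issubset(data):
        return None  # a missing field means no recommendation at all
    dose = data["dose_value"]
    # bool is a subclass of int in Python, so exclude it explicitly
    if isinstance(dose, bool) or not isinstance(dose, (int, float)):
        return None
    if dose <= 0:
        return None
    return data
```

A `None` return means the suggestion never reaches the clinician; the caller logs the rejection and moves on rather than trying to salvage partial output.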

Layer 2: Drug-Drug Interaction Checking via RxNorm

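Once you have a structured RxNorm CUI, you can check it against the patient's current medication list with a drug interaction service. The code below follows the request and response shape of NLM's RxNav Drug Interaction API, which was free to use but which NLM retired in January 2024; the same logic applies to whichever maintained interaction knowledge base your organization licenses. There is no reason to skip this step.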

```python
from dataclasses import dataclass

import httpx


@dataclass
class DrugInteraction:
    severity: str  # "high", "moderate", "low", or "n/a"
    description: str
    source: str
    drug_a: str
    drug_b: str


async def check_drug_interactions(
    new_drug_cui: str,
    current_med_cuis: list[str],
) -> list[DrugInteraction]:
    """
    Check a proposed medication against the patient's current
    medication list using the NLM Drug Interaction API.
    """
    if not current_med_cuis:
        return []  # nothing to interact with

    interactions = []
    all_cuis = [new_drug_cui] + current_med_cuis
    cui_param = "+".join(all_cuis)
    # NOTE: NLM retired this endpoint in January 2024. Point the URL at
    # your own interaction service and adapt the parsing below to it.
    url = (
        "https://rxnav.nlm.nih.gov/REST/interaction/"
        f"list.json?rxcuis={cui_param}"
    )

    async with httpx.AsyncClient(timeout=10.0) as client:
        resp = await client.get(url)
        resp.raise_for_status()  # an upstream failure must not read as "no interactions"
        data = resp.json()

    full_interactions = data.get("fullInteractionTypeGroup", [])
    for group in full_interactions:
        source = group.get("sourceName", "unknown")
        for itype in group.get("fullInteractionType", []):
            for pair in itype.get("interactionPair", []):
                severity = pair.get("severity", "N/A").lower()
                description = pair.get("description", "")
                concepts = pair.get("interactionConcept", [])
                drug_names = [
                    c.get("minConceptItem", {}).get("name", "unknown")
                    for c in concepts
                ]
                interactions.append(DrugInteraction(
                    severity=severity,
                    description=description,
                    source=source,
                    drug_a=drug_names[0] if drug_names else "unknown",
                    drug_b=drug_names[1] if len(drug_names) > 1 else "unknown",
                ))
    return interactions


def evaluate_interaction_risk(
    interactions: list[DrugInteraction],
) -> tuple[str, list[DrugInteraction]]:
    """
    Returns a decision: 'block', 'warn', or 'pass',
    plus the relevant interactions.
    """
    high = [i for i in interactions if i.severity == "high"]
    # Moderate, "n/a", and any unrecognized severity are surfaced for
    # review; only an explicit "low" is allowed to pass silently.
    needs_review = [i for i in interactions
                    if i.severity not in ("high", "low")]
    if high:
        return "block", high
    elif needs_review:
        return "warn", needs_review
    else:
        return "pass", []
```

A critical implementation detail: the RxNorm API uses specific CUI formats. "Warfarin" is RxCUI 11289, but "Warfarin Sodium 5mg Oral Tablet" is RxCUI 855332. Use the RxNorm name lookup endpoint to resolve the correct CUI for your agent's output, and check interactions at both the ingredient level and the specific product level.
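Checking at both levels means turning a product CUI into its ingredient CUIs. One way to do that, as a sketch, is RxNav's related-concepts lookup (`/REST/rxcui/{rxcui}/related.json?tty=IN`). The functions below only parse a payload of that general shape; `ingredient_cuis`, `cuis_to_check`, and the abridged `SAMPLE_RELATED` payload are illustrative assumptions, so verify the field names against a live response before relying on them.

```python
# Abridged, hand-written sample shaped like a related.json response.
# The two CUIs are the warfarin identifiers quoted in the text above.
SAMPLE_RELATED = {
    "relatedGroup": {
        "rxcui": "855332",
        "conceptGroup": [
            {
                "tty": "IN",
                "conceptProperties": [
                    {"rxcui": "11289", "name": "warfarin", "tty": "IN"},
                ],
            },
        ],
    },
}


def ingredient_cuis(related_payload: dict) -> list[str]:
    """Extract ingredient-level (tty == "IN") CUIs, preserving order."""
    groups = related_payload.get("relatedGroup", {}).get("conceptGroup") or []
    cuis: list[str] = []
    for group in groups:
        if group.get("tty") != "IN":
            continue
        for concept in group.get("conceptProperties") or []:
            cui = concept.get("rxcui")
            if cui and cui not in cuis:
                cuis.append(cui)
    return cuis


def cuis_to_check(product_cui: str, related_payload: dict) -> list[str]:
    """Product CUI first, then its ingredients, without duplicates."""
    result = [product_cui]
    for cui in ingredient_cuis(related_payload):
        if cui not in result:
            result.append(cui)
    return result
```

Running the interaction and allergy checks over every CUI returned by `cuis_to_check` keeps a product-level identifier from slipping past a table keyed on ingredients.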

Layer 3: Dosage Range Validation

LLMs are notoriously bad at numbers. A model that correctly identifies metformin as the right drug for Type 2 diabetes may suggest 5,000mg daily — a dose that would cause severe lactic acidosis. Dosage validation is non-negotiable.

Build a dosage reference table from DailyMed (FDA-approved labeling data) and flag anything outside 0.5x to 2x the standard adult range. For pediatric patients, require weight-based dosing calculations.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class DosageRange:
    drug_name: str
    rxnorm_cui: str
    min_dose_mg: float   # Minimum single dose
    max_dose_mg: float   # Maximum single dose
    max_daily_mg: float  # Maximum total daily dose
    pediatric_mg_per_kg: Optional[float] = None
    requires_renal_adjustment: bool = False
    requires_weight_based: bool = False
    source: str = "DailyMed FDA Label"


# Reference table (production: load from database)
DOSAGE_RANGES: dict[str, DosageRange] = {
    "311670": DosageRange(  # Metformin 500mg
        drug_name="Metformin", rxnorm_cui="311670",
        min_dose_mg=500, max_dose_mg=1000, max_daily_mg=2550,
        pediatric_mg_per_kg=None,
        requires_renal_adjustment=True,
    ),
    "308182": DosageRange(  # Amoxicillin 500mg
        drug_name="Amoxicillin", rxnorm_cui="308182",
        min_dose_mg=250, max_dose_mg=500, max_daily_mg=3000,
        pediatric_mg_per_kg=25.0,  # 25-45 mg/kg/day
        requires_weight_based=True,
    ),
    "855332": DosageRange(  # Warfarin 5mg
        drug_name="Warfarin", rxnorm_cui="855332",
        min_dose_mg=1, max_dose_mg=10, max_daily_mg=10,
    ),
}


@dataclass
class DosageValidationResult:
    is_valid: bool
    decision: str  # "pass", "warn", "block"
    reason: Optional[str] = None
    suggested_range: Optional[str] = None


def validate_dosage(
    rxnorm_cui: str,
    proposed_dose_mg: float,
    frequency: str,
    patient_weight_kg: Optional[float] = None,
    patient_age_years: Optional[float] = None,
    egfr: Optional[float] = None,
) -> DosageValidationResult:
    """Validate proposed dosage (in mg) against known safe ranges."""
    ref = DOSAGE_RANGES.get(rxnorm_cui)
    if not ref:
        return DosageValidationResult(
            is_valid=False, decision="warn",
            reason=f"No dosage reference for RxCUI {rxnorm_cui}. "
                   f"Requires manual pharmacist review.",
        )

    # Doses per day. PRN is assumed to be taken up to four times daily.
    freq_multipliers = {
        "QD": 1, "BID": 2, "TID": 3, "QID": 4,
        "Q4H": 6, "Q6H": 4, "Q8H": 3, "Q12H": 2,
        "ONCE": 1, "PRN": 4,
    }
    multiplier = freq_multipliers.get(frequency, 1)
    daily_dose = proposed_dose_mg * multiplier

    # Single dose exceeds 2x maximum
    if proposed_dose_mg > ref.max_dose_mg * 2:
        return DosageValidationResult(
            is_valid=False, decision="block",
            reason=f"Single dose {proposed_dose_mg}mg exceeds 2x max "
                   f"({ref.max_dose_mg}mg) for {ref.drug_name}",
            suggested_range=f"{ref.min_dose_mg}-{ref.max_dose_mg}mg",
        )

    # Daily dose exceeds maximum
    if daily_dose > ref.max_daily_mg:
        return DosageValidationResult(
            is_valid=False, decision="block",
            reason=f"Daily dose {daily_dose}mg exceeds max daily "
                   f"({ref.max_daily_mg}mg) for {ref.drug_name}",
        )

    # Pediatric: require weight-based dosing.
    # Compare against None so an infant with age 0 is still checked.
    if patient_age_years is not None and patient_age_years < 18:
        if ref.requires_weight_based and not patient_weight_kg:
            return DosageValidationResult(
                is_valid=False, decision="block",
                reason=f"Pediatric patient requires weight-based "
                       f"dosing for {ref.drug_name}.",
            )
        if ref.pediatric_mg_per_kg and patient_weight_kg:
            max_ped = ref.pediatric_mg_per_kg * patient_weight_kg
            if daily_dose > max_ped * 1.5:
                return DosageValidationResult(
                    is_valid=False, decision="block",
                    reason=f"Pediatric daily dose {daily_dose}mg "
                           f"exceeds weight-based max "
                           f"({max_ped:.0f}mg for "
                           f"{patient_weight_kg}kg patient)",
                )

    # Renal adjustment required
    if ref.requires_renal_adjustment and egfr is not None and egfr < 30:
        return DosageValidationResult(
            is_valid=False, decision="block",
            reason=f"{ref.drug_name} requires renal dose adjustment. "
                   f"eGFR is {egfr} (< 30).",
        )

    # Single dose below 0.5x the minimum: flag, since it often signals
    # a unit or decimal-point error rather than a deliberate low dose
    if proposed_dose_mg < ref.min_dose_mg * 0.5:
        return DosageValidationResult(
            is_valid=False, decision="warn",
            reason=f"Single dose {proposed_dose_mg}mg is below 0.5x min "
                   f"({ref.min_dose_mg}mg) for {ref.drug_name}",
            suggested_range=f"{ref.min_dose_mg}-{ref.max_dose_mg}mg",
        )

    return DosageValidationResult(is_valid=True, decision="pass")
```

In production, load your dosage reference from DailyMed's structured product label (SPL) data or the FDA Drugs@FDA database. The hardcoded table above is for illustration; a production system needs thousands of entries, updated quarterly.
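The validation above works in milligrams, while the Layer 1 schema also allows `mcg`, `g`, `mL`, `units`, and `mEq`. A small conversion step in front of the validator avoids comparing a dose in grams against a milligram range. The helper below is a sketch; `to_mg` is a hypothetical name, and it deliberately refuses units that cannot be converted without knowing the drug or its concentration.

```python
from typing import Optional

# Mass units only. "mL", "units", and "mEq" depend on the specific drug
# or concentration, so they are intentionally absent from this table.
_MG_PER_UNIT = {"mg": 1.0, "mcg": 0.001, "g": 1000.0}


def to_mg(dose_value: float, dose_unit: str) -> Optional[float]:
    """Convert a mass dose to milligrams, or return None if not convertible."""
    factor = _MG_PER_UNIT.get(dose_unit)
    if factor is None:
        return None  # caller should route to pharmacist review
    return dose_value * factor
```

Without this step, a recommendation of `dose_value=2, dose_unit="g"` would be validated as 2 mg and sail through the upper-bound checks. A `None` result is best treated like a missing dosage reference: flag it for manual review instead of guessing.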

Layer 4: Allergy Cross-Reference via FHIR AllergyIntolerance

Allergy checking seems simple until you realize patients rarely have allergies coded to specific RxNorm CUIs. A patient's allergy list might say "penicillin" (the drug class), not "amoxicillin" (the specific drug). Your system must handle three levels of matching: exact drug match, drug class match, and known cross-reactivity.

```python
from dataclasses import dataclass
from typing import Optional

import httpx

# Drug class membership: RxNorm ingredient CUIs to classes
DRUG_CLASS_MAP: dict[str, list[str]] = {
    "penicillins": ["723", "733", "7984", "1043553"],
    "cephalosporins": ["2231", "2348", "20481", "2193"],
    "fluoroquinolones": ["2551", "82122", "140587"],
    "sulfonamides": ["10831", "36437"],
    "nsaids": ["5640", "161", "7646"],
    "statins": ["36567", "301542", "83367"],
}

# Known cross-reactivity between drug classes
CROSS_REACTIVITY: dict[str, list[str]] = {
    "penicillins": ["cephalosporins"],  # ~2% cross-reactivity
    "cephalosporins": ["penicillins"],
    "sulfonamides": [],
}


@dataclass
class AllergyCheckResult:
    decision: str    # "block", "warn", "pass"
    match_type: str  # "exact", "class", "cross_reactivity", "none"
    details: str
    allergy_source: str


def get_drug_classes(rxnorm_cui: str) -> list[str]:
    """Find which drug classes a given CUI belongs to."""
    return [cls for cls, members in DRUG_CLASS_MAP.items()
            if rxnorm_cui in members]


async def fetch_patient_allergies(
    fhir_base: str, patient_id: str, auth_token: str,
) -> list[dict]:
    """Fetch active AllergyIntolerance resources from FHIR."""
    url = (
        f"{fhir_base}/AllergyIntolerance"
        f"?patient={patient_id}&clinical-status=active"
    )
    async with httpx.AsyncClient(timeout=10.0) as client:
        resp = await client.get(
            url, headers={"Authorization": f"Bearer {auth_token}",
                          "Accept": "application/fhir+json"}
        )
        resp.raise_for_status()
        bundle = resp.json()

    allergies = []
    for entry in bundle.get("entry", []):
        resource = entry.get("resource", {})
        # "coding" may be missing or an empty list; avoid an IndexError
        codings = resource.get("code", {}).get("coding") or [{}]
        coding = codings[0]
        allergies.append({
            "display": coding.get("display", ""),
            "code": coding.get("code", ""),
            "system": coding.get("system", ""),
            "text": resource.get("code", {}).get("text", ""),
        })
    return allergies


def check_allergy_match(
    drug_rxnorm_cui: str,
    drug_name: str,
    patient_allergies: list[dict],
) -> AllergyCheckResult:
    """
    Three-level allergy check:
    1. Exact match (specific drug CUI)
    2. Drug class match (e.g., penicillin class)
    3. Cross-reactivity (e.g., cephalosporin + penicillin allergy)
    """
    drug_classes = set(get_drug_classes(drug_rxnorm_cui))
    # A cross-reactivity warning is held until every allergy has been
    # checked, so a later exact or class match can still block.
    pending_warn: Optional[AllergyCheckResult] = None

    for allergy in patient_allergies:
        allergy_code = allergy.get("code", "")
        allergy_text = (
            allergy.get("display", "") or allergy.get("text", "")
        ).lower()

        # Level 1: Exact CUI match
        if allergy_code and allergy_code == drug_rxnorm_cui:
            return AllergyCheckResult(
                decision="block", match_type="exact",
                details=f"Patient has documented allergy to "
                        f"{drug_name} (exact RxNorm match)",
                allergy_source=allergy_text,
            )

        # Level 2: Drug class match
        allergy_classes = set(get_drug_classes(allergy_code))
        for cls in DRUG_CLASS_MAP:
            # Match on the singular stem so an allergy recorded as
            # "penicillin" hits the "penicillins" class (Python 3.9+)
            if cls.removesuffix("s") in allergy_text:
                allergy_classes.add(cls)
        overlap = drug_classes & allergy_classes
        if overlap:
            return AllergyCheckResult(
                decision="block", match_type="class",
                details=f"{drug_name} belongs to class "
                        f"{sorted(overlap)}; patient allergic "
                        f"to {allergy_text}",
                allergy_source=allergy_text,
            )

        # Level 3: Cross-reactivity
        if pending_warn is None:
            for allergy_cls in sorted(allergy_classes):
                cross = CROSS_REACTIVITY.get(allergy_cls, [])
                if drug_classes & set(cross):
                    pending_warn = AllergyCheckResult(
                        decision="warn",
                        match_type="cross_reactivity",
                        details=f"{drug_name} has known "
                                f"cross-reactivity with "
                                f"{allergy_cls} (~2% rate). "
                                f"Patient allergic to {allergy_text}.",
                        allergy_source=allergy_text,
                    )
                    break

    if pending_warn is not None:
        return pending_warn

    return AllergyCheckResult(
        decision="pass", match_type="none",
        details="No allergy matches found",
        allergy_source="",
    )
```

The FHIR AllergyIntolerance resource is your source of truth here. If your health system uses a different EHR data model, map it to FHIR first; this standardization is what makes the class-level matching possible. For a deeper dive on building FHIR-based clinical data pipelines, see our guide on FHIR for AI/ML pipelines.
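Free-text allergy entries rarely use the class name verbatim. Clinicians write "PCN" or "sulfa" far more often than "penicillins" or "sulfonamides". The sketch below shows one way to normalize such text to class names before the class-level match. The `ALLERGY_SYNONYMS` table and `classes_from_text` are hypothetical and deliberately tiny; a real synonym list should come from your pharmacy informatics team.

```python
import re

# Hypothetical, intentionally small synonym table for illustration.
ALLERGY_SYNONYMS: dict[str, list[str]] = {
    "penicillins": ["penicillin", "pcn"],
    "cephalosporins": ["cephalosporin"],
    "sulfonamides": ["sulfonamide", "sulfa"],
    "nsaids": ["nsaid"],
}


def classes_from_text(allergy_text: str) -> set[str]:
    """Map a free-text allergy entry to zero or more drug class names."""
    text = allergy_text.lower()
    found: set[str] = set()
    for cls, terms in ALLERGY_SYNONYMS.items():
        for term in terms:
            # \b anchors the term at a word start, so "pcn" does not match
            # inside an unrelated word; trailing characters (plural "s",
            # "sulfamethoxazole") are still allowed.
            if re.search(rf"\b{re.escape(term)}", text):
                found.add(cls)
                break
    return found
```

An entry that maps to no class is not evidence of safety. Unrecognized allergy text is better surfaced to the reviewer than silently treated as a pass.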

Layer 5: The "Never Do" List — Hardcoded Rules Outside the LLM

Some rules should never be subject to LLM judgment. These are deterministic, non-negotiable safety rules that exist in code, not in prompts. No amount of prompt engineering makes these safe to delegate to a language model.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class HardRuleResult:
    blocked: bool
    rule_name: str
    reason: str
    requires_specialist: Optional[str] = None
    requires_human_review: bool = True


# Identifiers in these sets are illustrative. Verify every CUI and its
# label against RxNav before relying on them.

# DEA Schedule II controlled substances (partial list)
SCHEDULE_II_CUIS = {
    "7052",  # Morphine
    "5489",  # Hydrocodone
    "7804",  # Oxycodone
    "6813",  # Methylphenidate
    "725",   # Amphetamine (723 is amoxicillin and is in the Layer 4 penicillin list)
    "3423",  # Fentanyl
}

INSULIN_CUIS = {
    "5856", "253182", "261542", "274783", "400008",
}

ANTICOAGULANT_CUIS = {
    "11289", "1364430", "1114195", "1232082", "67108",
}

CHEMOTHERAPY_CUIS = {
    "3002", "12574", "5101", "51499", "224905",
}


def apply_hard_rules(
    rxnorm_cui: str,
    drug_name: str,
    patient_age_years: Optional[float] = None,
    patient_weight_kg: Optional[float] = None,
    is_off_label: bool = False,
    action: str = "prescribe",
) -> Optional[HardRuleResult]:
    """Non-negotiable safety rules in application code."""
    if rxnorm_cui in SCHEDULE_II_CUIS:
        return HardRuleResult(
            blocked=True,
            rule_name="controlled_substance_gate",
            reason=f"{drug_name} is Schedule II. Requires "
                   f"physician review and DEA authorization.",
            requires_specialist="attending_physician",
        )

    if rxnorm_cui in INSULIN_CUIS and action == "modify":
        return HardRuleResult(
            blocked=True,
            rule_name="insulin_modification_gate",
            reason=f"Insulin dosing modification for {drug_name} "
                   f"requires endocrinologist review.",
            requires_specialist="endocrinologist",
        )

    if rxnorm_cui in ANTICOAGULANT_CUIS and action == "discontinue":
        return HardRuleResult(
            blocked=True,
            rule_name="anticoagulant_discontinuation_block",
            reason=f"Cannot recommend discontinuing {drug_name}. "
                   f"Requires hematology/cardiology review.",
            requires_specialist="hematologist",
        )

    # Compare against None so an infant with age 0 is still covered
    if (patient_age_years is not None and patient_age_years < 18
            and not patient_weight_kg):
        return HardRuleResult(
            blocked=True,
            rule_name="pediatric_weight_required",
            reason=f"Pediatric patient ({patient_age_years}y) "
                   f"requires documented weight for dosing.",
        )

    if is_off_label:
        return HardRuleResult(
            blocked=True,
            rule_name="off_label_disclosure_required",
            reason=f"{drug_name} off-label use requires "
                   f"disclosure and physician authorization.",
            requires_specialist="attending_physician",
        )

    if rxnorm_cui in CHEMOTHERAPY_CUIS:
        return HardRuleResult(
            blocked=True,
            rule_name="chemotherapy_block",
            reason=f"{drug_name} is a chemotherapy agent. "
                   f"Requires oncology protocol and BSA calc.",
            requires_specialist="oncologist",
        )

    return None  # No hard rules triggered
```

These rules are not in the prompt. They are not "guidelines" the LLM is asked to follow. They are if statements in your application code that run after every single recommendation. The LLM does not get a vote.

This pattern is directly relevant to why human-in-the-loop is the entire point of healthcare AI — certain decisions should never be fully automated, regardless of model accuracy.
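Because hard rules live in hand-maintained tables, a single mistyped identifier can silently attach the wrong rule to the wrong drug. A cheap startup check is one way to catch that before it reaches production. The sketch below is an assumption about how such a check might look; `validate_rule_tables` is a hypothetical helper, and the identifiers in the usage note are placeholders, not real RxCUIs.

```python
def validate_rule_tables(tables: dict[str, set[str]]) -> list[str]:
    """Return human-readable problems found in CUI rule tables.

    Flags entries that are not numeric strings and identifiers that
    appear in more than one table (tables are assumed to be mutually
    exclusive, which is an assumption of this sketch).
    """
    problems: list[str] = []
    seen: dict[str, str] = {}  # cui -> first table it appeared in
    for name in sorted(tables):
        for cui in sorted(tables[name]):
            if not cui.isdigit():
                problems.append(f"{name}: {cui!r} is not a numeric RxCUI")
            if cui in seen:
                problems.append(
                    f"{cui} appears in both {seen[cui]} and {name}"
                )
            else:
                seen[cui] = name
    return problems
```

Calling it at import time with something like `validate_rule_tables({"schedule_ii": {"1001", "1002"}, "penicillins": {"1002"}})` and refusing to start when the returned list is non-empty turns a latent data error into a loud deployment failure.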

Layer 6: Confidence Gating — When in Doubt, Route to a Human

Even after all five layers pass, the agent's own confidence matters. If the LLM is uncertain about its recommendation — low logit scores, hedging language in its reasoning chain, or a clinical scenario outside its training distribution — the recommendation should not reach the clinician as a suggestion. It should be routed to the pharmacy team for manual review.

```python
from dataclasses import dataclass
from typing import Optional

# MedicationRecommendation is the Pydantic model defined in Layer 1.


@dataclass
class ConfidenceGateResult:
    passed: bool
    confidence: float
    action: str  # "show", "flag", "suppress"
    reason: Optional[str] = None


def apply_confidence_gate(
    recommendation: MedicationRecommendation,
    show_threshold: float = 0.85,
    flag_threshold: float = 0.70,
) -> ConfidenceGateResult:
    """
    Three-tier confidence gating:
    >= 0.85: Show recommendation to clinician
    0.70-0.84: Show with prominent uncertainty flag
    < 0.70: Suppress and route to pharmacist review
    """
    score = recommendation.confidence_score
    if score >= show_threshold:
        return ConfidenceGateResult(
            passed=True, confidence=score, action="show"
        )
    elif score >= flag_threshold:
        return ConfidenceGateResult(
            passed=True, confidence=score, action="flag",
            reason=f"Confidence {score:.0%} below standard threshold."
        )
    else:
        return ConfidenceGateResult(
            passed=False, confidence=score, action="suppress",
            reason=f"Confidence {score:.0%} below minimum "
                   f"({flag_threshold:.0%}). Routing to pharmacist."
        )
```

Putting It All Together: The Guardrail Chain

Here is the complete pipeline that chains all six layers. Every medication recommendation runs through this before it reaches a clinician's screen.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class GuardrailResult:
    safe: bool
    decision: str  # "approved", "flagged", "blocked"
    blocked_at_layer: Optional[str] = None
    details: list[str] = field(default_factory=list)
    requires_human_review: bool = False
    specialist_required: Optional[str] = None


async def run_medication_guardrails(
    recommendation: MedicationRecommendation,
    patient_id: str,
    current_med_cuis: list[str],
    patient_allergies: list[dict],
    patient_age_years: Optional[float] = None,
    patient_weight_kg: Optional[float] = None,
    egfr: Optional[float] = None,
) -> GuardrailResult:
    """Six-layer medication safety guardrail chain."""
    result = GuardrailResult(safe=True, decision="approved")

    # Layer 1: Schema (enforced by Pydantic at parse time)
    result.details.append(
        f"L1 PASS: {recommendation.drug_name} "
        f"{recommendation.dose_value}{recommendation.dose_unit} "
        f"{recommendation.frequency.value} "
        f"(RxCUI: {recommendation.rxnorm_cui})"
    )

    # Layer 2: Drug-drug interactions
    interactions = await check_drug_interactions(
        recommendation.rxnorm_cui, current_med_cuis
    )
    decision, flagged = evaluate_interaction_risk(interactions)
    if decision == "block":
        result.safe = False
        result.decision = "blocked"
        result.blocked_at_layer = "drug_interaction"
        result.details.append(
            f"L2 BLOCK: {flagged[0].description}"
        )
        return result
    if decision == "warn":
        # A warning must not be reported as a pass
        result.details.append(f"L2 WARN: {flagged[0].description}")
        result.requires_human_review = True
    else:
        result.details.append(f"L2 PASS: {len(interactions)} checked")

    # Layer 3: Dosage validation
    # NOTE: validate_dosage expects milligrams; convert dose_value first
    # when recommendation.dose_unit is not "mg".
    dosage = validate_dosage(
        recommendation.rxnorm_cui,
        recommendation.dose_value,
        recommendation.frequency.value,
        patient_weight_kg=patient_weight_kg,
        patient_age_years=patient_age_years,
        egfr=egfr,
    )
    if dosage.decision == "block":
        result.safe = False
        result.decision = "blocked"
        result.blocked_at_layer = "dosage_validation"
        result.details.append(f"L3 BLOCK: {dosage.reason}")
        return result
    if dosage.decision == "warn":
        # e.g. no dosage reference exists for this CUI
        result.details.append(f"L3 WARN: {dosage.reason}")
        result.requires_human_review = True
    else:
        result.details.append("L3 PASS: Dosage within range")

    # Layer 4: Allergy cross-reference
    allergy = check_allergy_match(
        recommendation.rxnorm_cui,
        recommendation.drug_name,
        patient_allergies,
    )
    if allergy.decision == "block":
        result.safe = False
        result.decision = "blocked"
        result.blocked_at_layer = "allergy_check"
        result.details.append(f"L4 BLOCK: {allergy.details}")
        return result
    if allergy.decision == "warn":
        result.details.append(f"L4 WARN: {allergy.details}")
        result.requires_human_review = True
    else:
        result.details.append("L4 PASS: No allergy matches")

    # Layer 5: Hard rules
    hard = apply_hard_rules(
        recommendation.rxnorm_cui,
        recommendation.drug_name,
        patient_age_years=patient_age_years,
        patient_weight_kg=patient_weight_kg,
    )
    if hard:
        result.safe = False
        result.decision = "blocked"
        result.blocked_at_layer = hard.rule_name
        result.details.append(f"L5 BLOCK: {hard.reason}")
        result.specialist_required = hard.requires_specialist
        return result
    result.details.append("L5 PASS: No hard rules triggered")

    # Layer 6: Confidence gate
    conf = apply_confidence_gate(recommendation)
    if not conf.passed:
        result.safe = False
        result.decision = "blocked"
        result.blocked_at_layer = "confidence_gate"
        result.details.append(f"L6 BLOCK: {conf.reason}")
        return result
    if conf.action == "flag":
        result.details.append(f"L6 FLAG: {conf.reason}")
        result.requires_human_review = True
    else:
        result.details.append(
            f"L6 PASS: Confidence {conf.confidence:.0%}"
        )

    if result.requires_human_review:
        result.decision = "flagged"
    return result
```

Deployment Considerations

This guardrail chain adds latency — roughly 200-400ms per recommendation (dominated by the RxNorm API call). In a clinical workflow where a physician is waiting for suggestions, this is acceptable. Medication safety is not a use case where you optimize for speed over correctness.
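If latency does become a concern, the network-bound checks are independent of one another and can run concurrently instead of back to back. The sketch below shows that idea with `asyncio.gather` and a per-check timeout. `run_checks_concurrently` and the three stub coroutines are hypothetical stand-ins, not the functions defined earlier, and treating a timeout or upstream error as a block is an assumption that follows the fail-safe stance of this article.

```python
import asyncio
from typing import Awaitable, Callable

CheckFn = Callable[[], Awaitable[str]]


async def run_checks_concurrently(
    checks: dict[str, CheckFn], timeout_s: float = 2.0
) -> dict[str, str]:
    """Run independent async checks in parallel and collect decisions.

    A check that times out or raises is recorded as "block" so that an
    unavailable upstream service can never look like a clean result.
    """
    async def guarded(name: str, fn: CheckFn) -> tuple[str, str]:
        try:
            return name, await asyncio.wait_for(fn(), timeout=timeout_s)
        except Exception:  # includes asyncio.TimeoutError
            return name, "block"

    pairs = await asyncio.gather(
        *(guarded(name, fn) for name, fn in checks.items())
    )
    return dict(pairs)


# Stub checks standing in for real network calls.
async def fast_pass() -> str:
    await asyncio.sleep(0.01)
    return "pass"


async def too_slow() -> str:
    await asyncio.sleep(1.0)
    return "pass"


async def upstream_error() -> str:
    raise RuntimeError("upstream returned 500")
```

Total wall-clock time is then bounded by the slowest check (or the timeout) rather than the sum of all checks, while the deterministic layers can still run in a fixed order afterwards.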

Three additional production concerns worth addressing:

Logging and Auditability

Every guardrail evaluation must be logged with the full chain result: which layers passed, which warned, which blocked, and why. This is not optional. The HIPAA Security Rule's audit controls standard (45 CFR 164.312(b)) already requires mechanisms that record and examine activity in systems handling ePHI, and AI-assisted clinical decisions fall squarely within that activity. Build structured logging from day one, not as a compliance afterthought. For a deeper discussion of how agent audit trails simplify HIPAA compliance, see our analysis of agent audit trails for HIPAA.
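As a sketch of what a structured log entry could look like, the helper below builds one JSON-serializable record per evaluation and attaches a SHA-256 checksum over its canonical form. The field names and the `build_audit_record` and `verify_audit_record` helpers are assumptions for illustration. A plain checksum only detects accidental corruption; real tamper evidence needs a keyed MAC or an append-only store.

```python
import hashlib
import json
from datetime import datetime, timezone
from typing import Optional


def _canonical(body: dict) -> bytes:
    # Sorted keys and fixed separators give a stable byte representation
    return json.dumps(body, sort_keys=True, separators=(",", ":")).encode("utf-8")


def build_audit_record(
    patient_ref: str,
    rxnorm_cui: str,
    decision: str,
    blocked_at_layer: Optional[str],
    details: list[str],
    now: Optional[datetime] = None,
) -> dict:
    """Build one audit entry for a single guardrail evaluation."""
    record = {
        "timestamp": (now or datetime.now(timezone.utc)).isoformat(),
        "patient_ref": patient_ref,
        "rxnorm_cui": rxnorm_cui,
        "decision": decision,
        "blocked_at_layer": blocked_at_layer,
        "details": list(details),
    }
    record["checksum"] = hashlib.sha256(_canonical(record)).hexdigest()
    return record


def verify_audit_record(record: dict) -> bool:
    """Recompute the checksum over everything except the checksum itself."""
    body = {k: v for k, v in record.items() if k != "checksum"}
    return hashlib.sha256(_canonical(body)).hexdigest() == record.get("checksum")
```

Writing each record as one JSON line keeps the log greppable and lets a reviewer reconstruct exactly which layer stopped a given recommendation.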

Keeping Reference Data Current

RxNorm publishes a full release monthly, with weekly updates in between. Your dosage reference table must track FDA label changes. Your drug class membership maps need updating when new drugs are approved. Refresh all reference data at least quarterly, and subscribe to the RxNorm release notifications.
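A refresh cycle is easier to keep when the system itself reports stale data. The sketch below compares each source's last refresh date against a maximum age. The source names and the age limits in `MAX_AGE` are assumptions picked to mirror the cadence described above, not values prescribed by any of these data sources.

```python
from datetime import date, timedelta

# Hypothetical freshness budget per reference source.
MAX_AGE: dict[str, timedelta] = {
    "rxnorm": timedelta(days=31),
    "dosage_table": timedelta(days=92),
    "drug_class_map": timedelta(days=92),
}


def stale_sources(last_refreshed: dict[str, date], today: date) -> list[str]:
    """Return the names of sources that are missing or older than allowed."""
    stale = []
    for name, max_age in MAX_AGE.items():
        refreshed = last_refreshed.get(name)
        # A source with no recorded refresh date is treated as stale
        if refreshed is None or today - refreshed > max_age:
            stale.append(name)
    return sorted(stale)
```

Wiring the result into a health check or a startup warning means an overdue refresh shows up on a dashboard instead of in an incident review.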

Testing with Adversarial Cases

Your test suite should include every failure mode described in this article: a drug the patient is allergic to, a pediatric overdose, a contraindicated combination, a controlled substance, a low-confidence recommendation. If any of these cases gets through without a block or a flag, your guardrails are broken. If your suite never produces a block at all, the suite is too weak. Real clinical data will always surface edge cases you did not anticipate, so your test suite should be growing continuously.
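One lightweight way to organize such cases is a table of scenarios paired with the decision each must produce, run against whatever guardrail entry point you expose. The harness below is a sketch. The payload format, the `ADVERSARIAL_CASES` table, and the `always_approve` stub are hypothetical and exist only to show the harness catching a guardrail that blocks nothing.

```python
from typing import Callable

# (case name, hypothetical input payload, decision the guardrails must return)
ADVERSARIAL_CASES: list[tuple[str, dict, str]] = [
    ("allergy", {"drug": "amoxicillin", "allergies": ["penicillin"]}, "blocked"),
    ("pediatric_overdose", {"drug": "metformin", "dose_mg": 2000, "age": 9}, "blocked"),
    ("interaction", {"drug": "ciprofloxacin", "current_meds": ["warfarin"]}, "blocked"),
    ("controlled_substance", {"drug": "oxycodone"}, "blocked"),
    ("low_confidence", {"drug": "amoxicillin", "confidence": 0.40}, "blocked"),
]


def run_adversarial_suite(
    guardrail: Callable[[dict], str],
    cases: list[tuple[str, dict, str]],
) -> list[str]:
    """Return one message per case whose decision differs from the expectation."""
    failures = []
    for name, payload, expected in cases:
        actual = guardrail(payload)
        if actual != expected:
            failures.append(f"{name}: expected {expected}, got {actual}")
    return failures


def always_approve(payload: dict) -> str:
    """Deliberately unsafe stub: a guardrail that never blocks anything."""
    return "approved"
```

Against `always_approve`, every case fails, which is exactly the signal the suite exists to give. Each new incident or near miss becomes another row in the table.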

Building Clinical AI That Earns Trust

The six-layer architecture described here is not theoretical. It is the minimum viable safety layer for any AI agent that touches medication decisions. Every layer can be implemented with open, freely available tools — RxNorm, DailyMed, FHIR AllergyIntolerance — and none of them require a PhD in machine learning. They require software engineering discipline applied to a domain where errors have consequences measured in patient harm, not conversion rate drops.

At Nirmitee, we build healthcare software where agent orchestration and clinical safety are engineering problems, not afterthoughts. If you are building clinical-facing AI and need the kind of guardrail infrastructure described here — integrated with FHIR, tested against real clinical scenarios, and designed for regulatory compliance — we should talk.
