Introduction

Every U.S. health system is trying to solve the same equation — and failing.

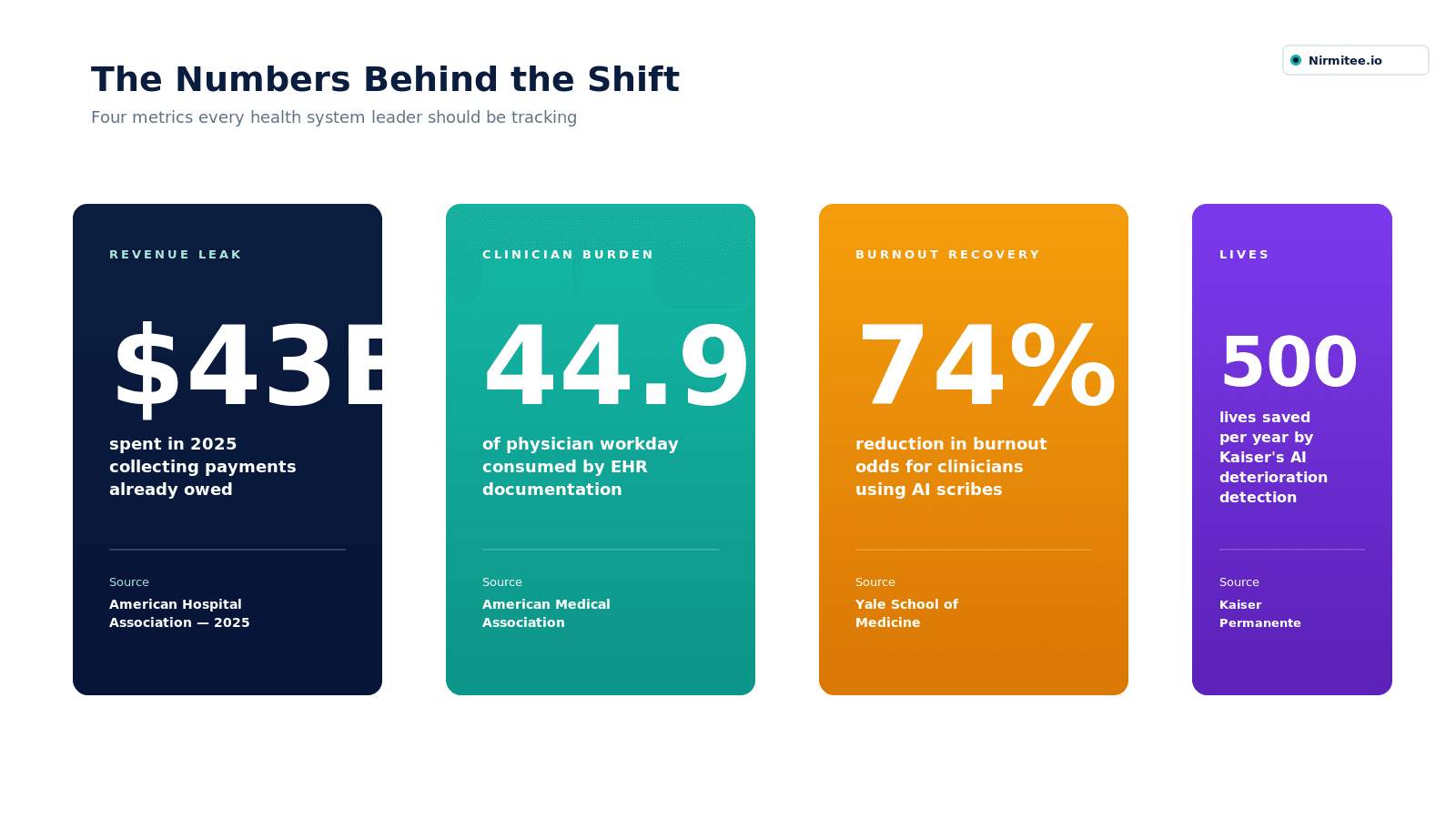

Physicians now spend 44.9% of their workday inside the EHR, with nearly a quarter doing additional documentation after hours. At the same time, hospitals spent $43 billion in 2025 chasing payments that were already contractually owed.

This is not a tooling problem. It is a workflow problem.

The industry has already tried incremental fixes:

- hiring scribes

- redesigning workflows

- layering point solutions on top of legacy systems

None of it has changed the underlying equation.

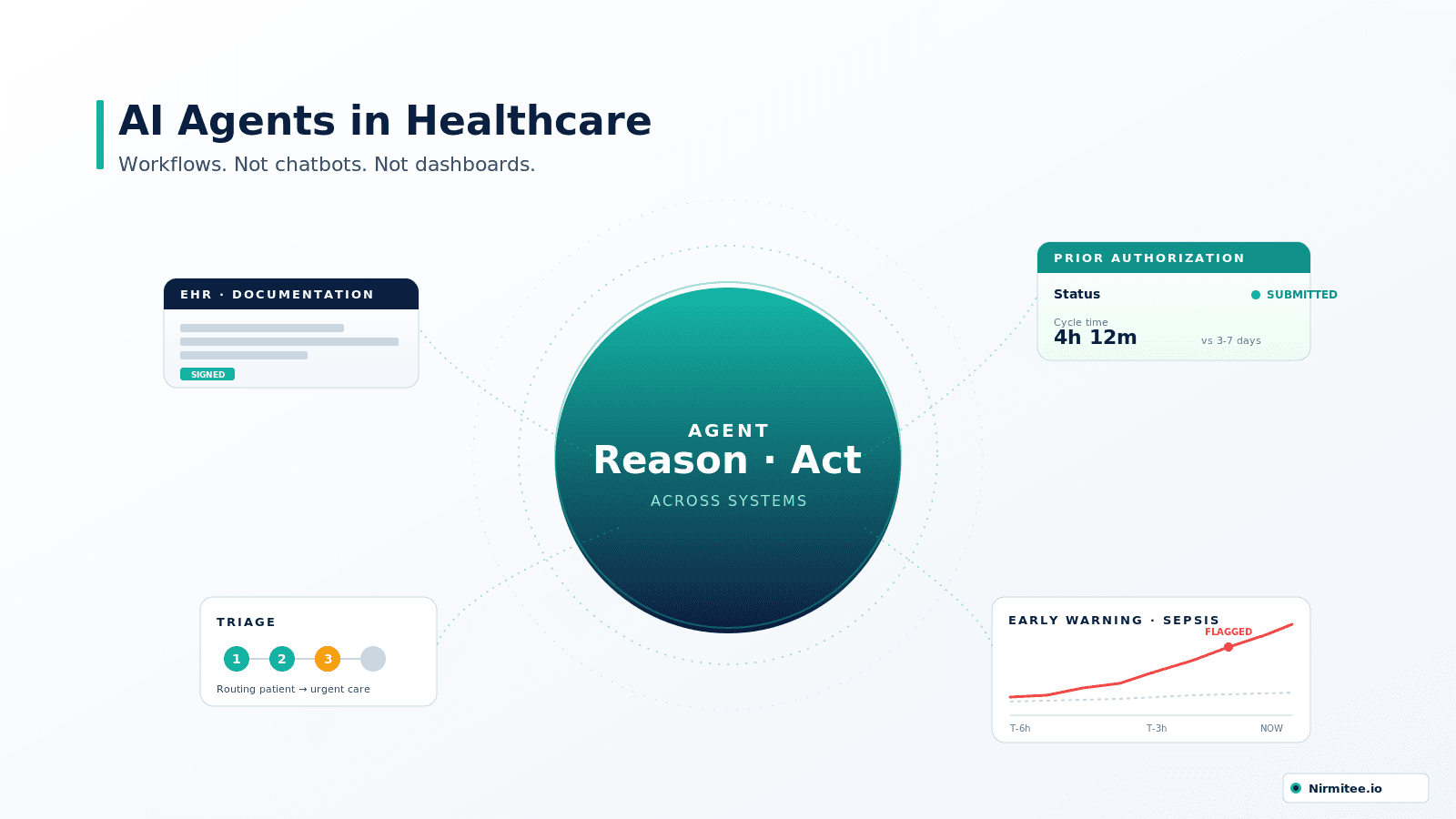

AI agents represent a structural shift — not because they are "more intelligent," but because they operate differently. They don't just surface information. They take action across systems.

That distinction is what separates another wave of healthcare AI hype from a category that is already delivering measurable clinical and financial outcomes in production environments.

This guide is written for healthcare leaders who are past the experimentation phase — and need a clear, data-backed understanding of where AI agents actually work, where they fail, and how to evaluate them without relying on vendor narratives.

What Is an AI Agent in Healthcare?

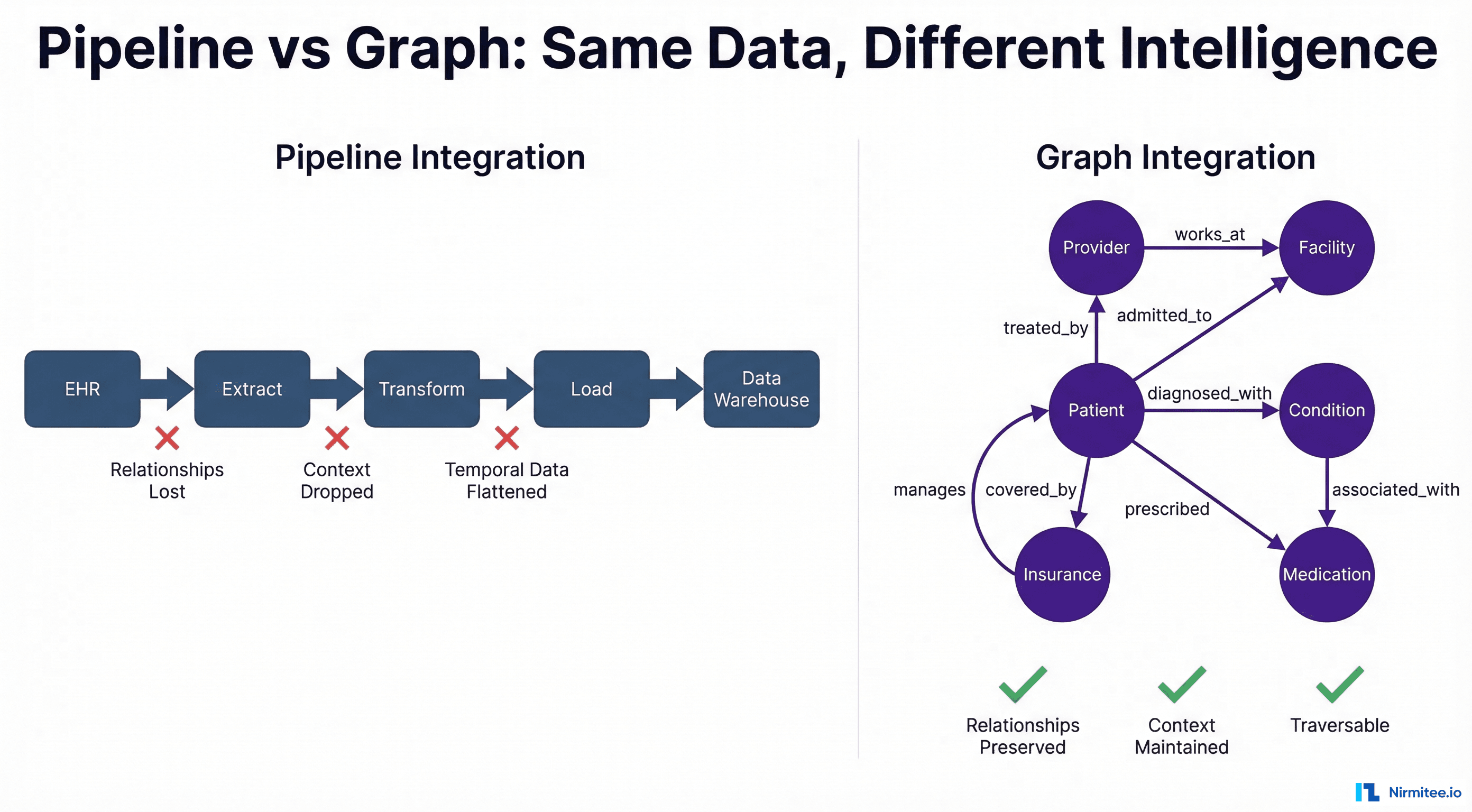

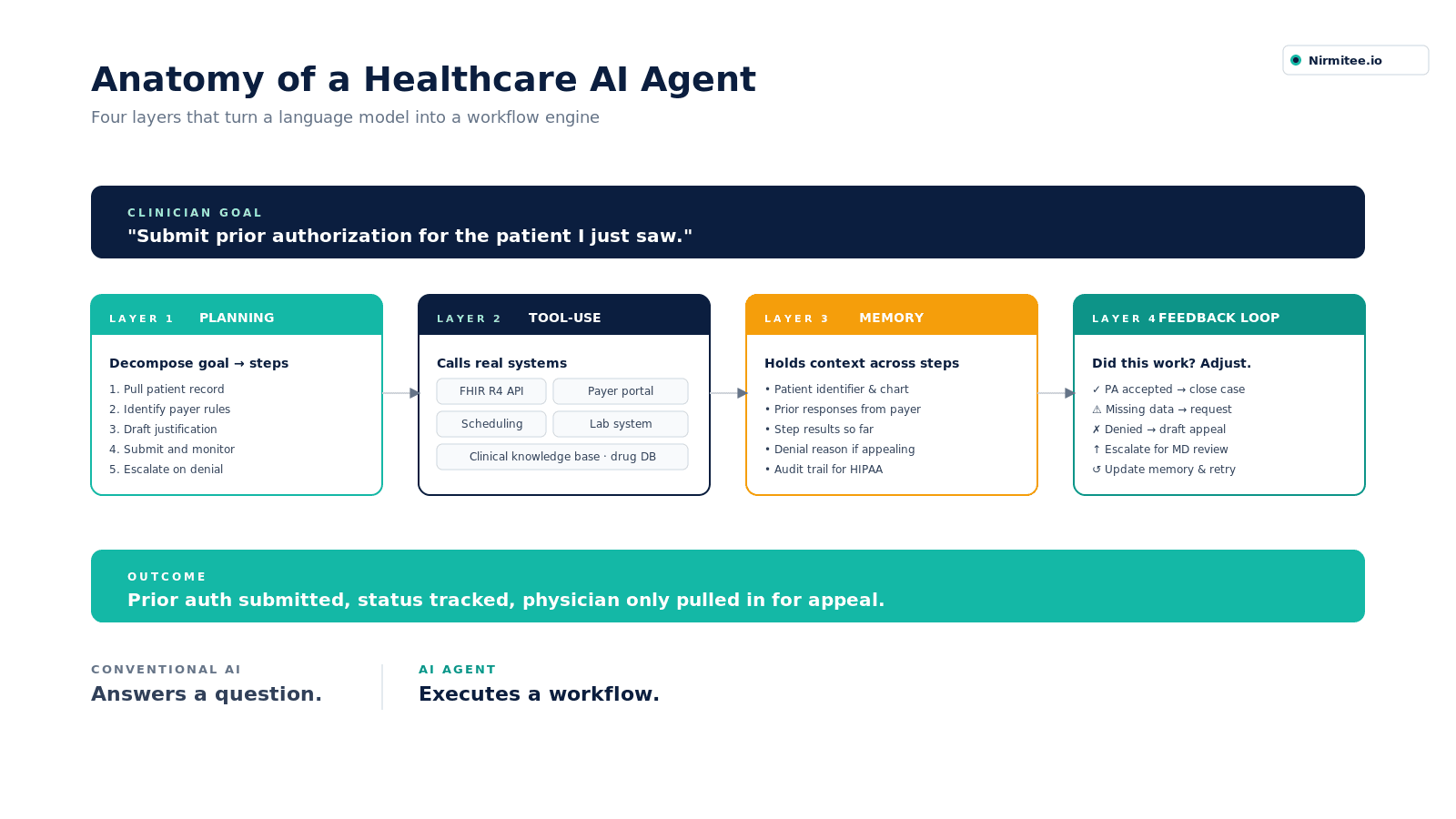

An AI agent is a software system that uses a large language model (or specialized AI model) as its reasoning engine, connected to external tools — EHR APIs, scheduling systems, payer portals, clinical databases — to complete multi-step tasks autonomously.

The distinction from a standard AI model is not subtle. A conventional AI answers a question. An agent executes a workflow. It can query a patient's medication list, identify a contraindication, draft a clinical note, flag it for physician review, and file a prior authorization — in sequence, without a human managing each handoff.

The architecture typically consists of four layers:

- Planning layer: breaks a goal into executable sub-steps

- Tool-use layer: calls external systems (FHIR APIs, databases, communication tools)

- Memory layer: maintains context across steps in the workflow

- Feedback loop: evaluates whether actions achieved the intended outcome and adjusts
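A minimal sketch of how the four layers above might fit together, in Python. The tool names (`get_medications`, `check_interactions`), the fixed plan, and the returned data are hypothetical placeholders. A production agent would use an LLM for planning and real EHR or FHIR calls for tool use.

```python
from dataclasses import dataclass, field
from typing import Callable

# Hypothetical stand-ins for real integrations (EHR API, drug database).
def get_medications(patient_id: str) -> list[str]:
    return ["warfarin", "aspirin"]

def check_interactions(meds: list[str]) -> list[str]:
    risky = {frozenset({"warfarin", "aspirin"})}
    return ["warfarin + aspirin"] if frozenset(meds) in risky else []

@dataclass
class Agent:
    tools: dict[str, Callable]
    memory: dict = field(default_factory=dict)  # memory layer: context across steps

    def plan(self, goal: str) -> list[str]:
        # Planning layer: a fixed plan here; an LLM would generate this.
        return ["get_medications", "check_interactions"]

    def run(self, goal: str, patient_id: str) -> dict:
        for step in self.plan(goal):
            # Tool-use layer: the input is the patient id or a prior step's output.
            if step == "get_medications":
                arg = patient_id
            else:
                arg = self.memory["get_medications"]
            self.memory[step] = self.tools[step](arg)
        # Feedback loop: inspect the outcome and decide whether to escalate.
        findings = self.memory["check_interactions"]
        return {"needs_physician_review": bool(findings), "findings": findings}

agent = Agent(tools={"get_medications": get_medications,
                     "check_interactions": check_interactions})
result = agent.run("medication safety check", "patient-123")
print(result)
```

The point of the sketch is the shape, not the logic: each layer is a separate seam where validation, logging, or a human checkpoint can be attached.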

AI Agents in Healthcare — Market Growth

- 📈 2025: $1.11 billion (current market value)

- 📈 2030: $6.92 billion (CAGR 44.1%)

- 📈 2035: $33.66 billion (CAGR 45.6%)

North America leads with a 55% market share, and 70% of organizations use AI agents to support clinical workflows.
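As a sanity check on the figures above, the growth rate implied by the 2025 and 2030 values can be recomputed with the standard compound annual growth rate formula. The 2035 projection appears to come from a separate source and may use a different base value, so it is not recomputed here.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate as a fraction (0.44 means 44%)."""
    return (end_value / start_value) ** (1 / years) - 1

# Values in billions of USD, taken from the market figures above.
growth_2025_2030 = cagr(1.11, 6.92, 5)
print(f"{growth_2025_2030:.1%}")
```

The result lands within rounding distance of the quoted 44.1%.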

Why Traditional Clinical Decision Support Has Failed

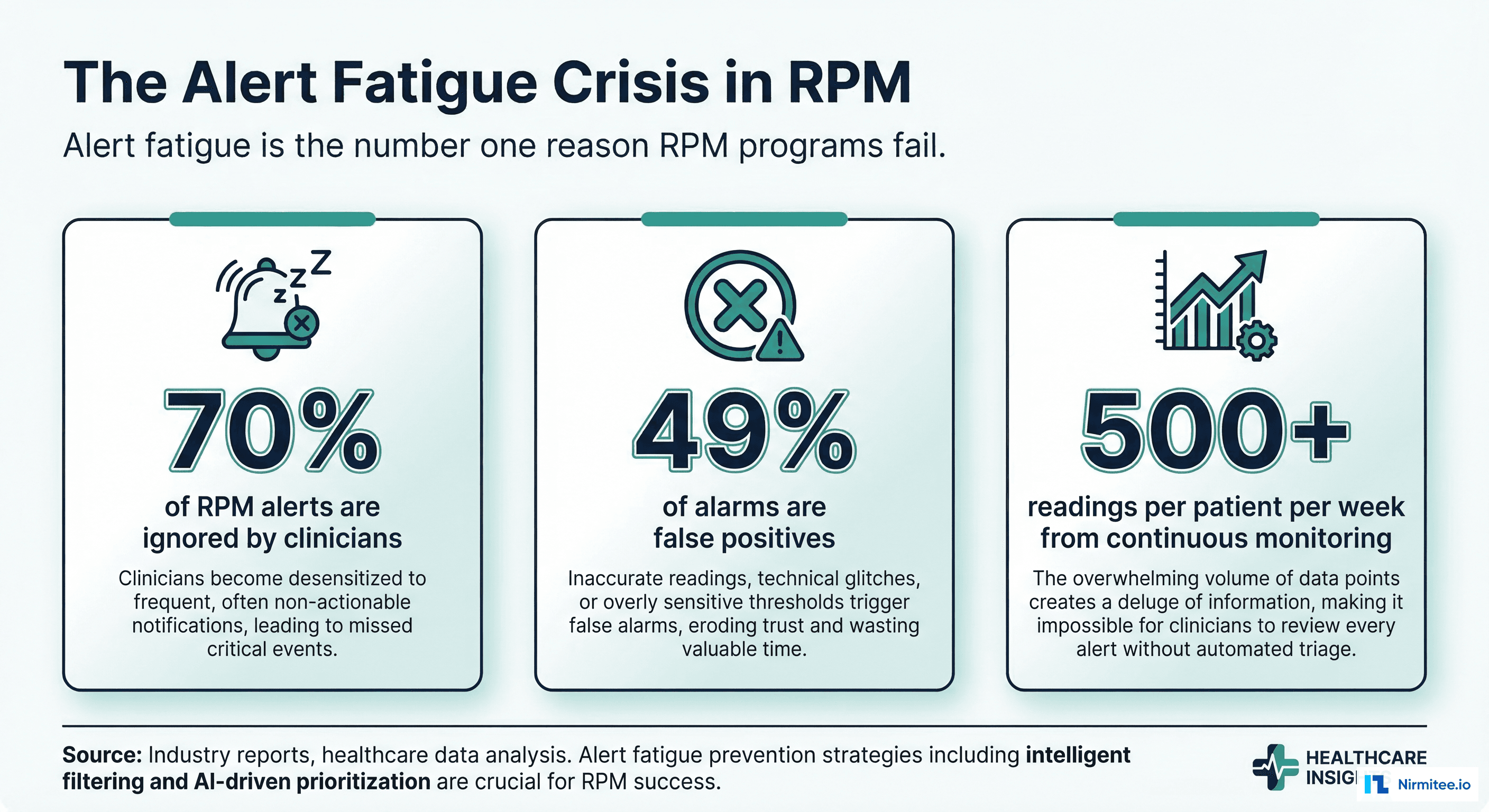

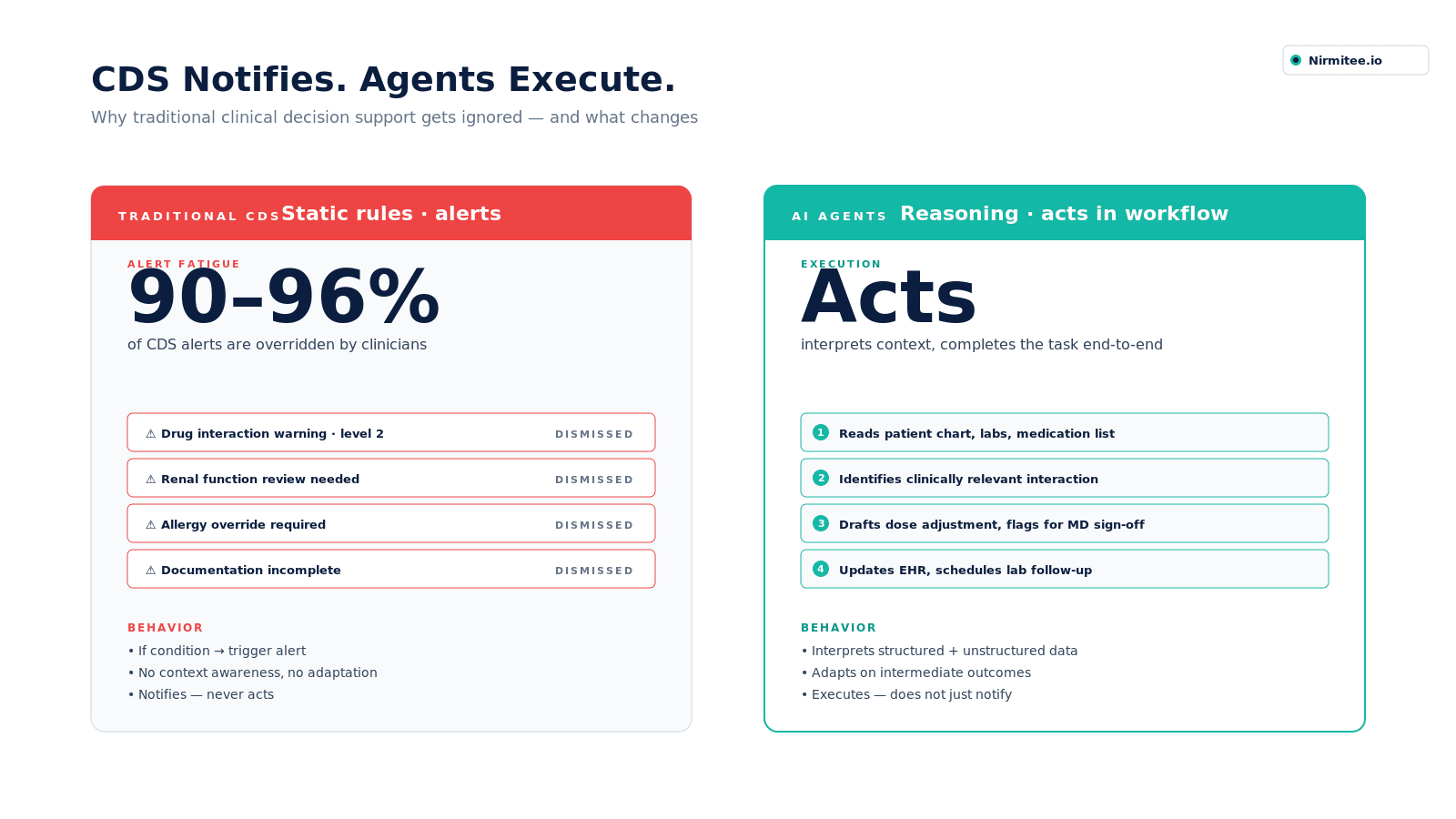

Clinical decision support (CDS) was supposed to improve care quality. At scale, it has done the opposite.

Modern EHR systems generate thousands of alerts — drug interactions, abnormal labs, missed protocols. In theory, this should reduce clinical risk. In practice, 90–96% of these alerts are overridden.

This is not a usability issue. It is a design failure. CDS systems operate on static rules:

- If condition → trigger alert

- If threshold crossed → notify clinician

They do not understand context. They do not adapt. And they do not act. The result is predictable: alert fatigue. Clinicians are trained — correctly — to ignore most of what the system tells them. A system that is ignored is not decision support. It is noise.
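To make the static-rule pattern concrete, here is a small Python sketch. The threshold, the field names, and the patient records are illustrative assumptions, not clinical guidance or any vendor's rule set.

```python
# Hypothetical static rule: alert whenever potassium exceeds a fixed threshold.
POTASSIUM_ALERT_THRESHOLD = 5.0  # mmol/L, illustrative value only

def static_rule_alert(potassium: float) -> bool:
    # The rule sees one number and nothing else about the patient.
    return potassium > POTASSIUM_ALERT_THRESHOLD

patients = [
    {"id": "A", "potassium": 5.2, "already_treated": True},
    {"id": "B", "potassium": 5.3, "already_treated": False},
    {"id": "C", "potassium": 4.1, "already_treated": False},
]

alerts = [p["id"] for p in patients if static_rule_alert(p["potassium"])]
print(alerts)
```

The rule fires for patient A even though the record already shows the finding was addressed. Repeat that across thousands of rules and the override rates described above follow naturally.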

AI agents change the architecture entirely. Instead of interrupting workflows, they operate within them:

- Interpreting structured and unstructured clinical data together

- Reasoning across multiple sources simultaneously

- Taking action — not just generating recommendations

- Adapting based on intermediate outcomes rather than fixed logic

This is the critical shift: CDS systems notify. AI agents execute. And in a system where clinicians are already overloaded, execution matters more than notification.

Where AI Agents Are Transforming Healthcare: Real Applications Across the Care Continuum

These are the workflows where agentic AI creates the highest measurable impact — across clinical, administrative, and operational functions.

1. Clinical Documentation — Giving Physicians Their Time Back

Every physician-patient encounter generates a documentation obligation that has nothing to do with medicine. Subjective findings, assessment, plan, billing codes, follow-up instructions — all of it typed after the patient leaves the room.

An AI agent deployed in clinical documentation listens to the encounter, interprets clinical language in real time, structures it into the correct note format for the specialty and EHR, cross-references the patient's existing record for context, and presents a completed draft for physician review before the next patient walks in.

What this changes operationally:

- Physicians review and sign notes instead of authoring them from scratch

- After-hours documentation time drops significantly — restoring personal time and reducing burnout (74% reduction in burnout odds — Yale Medicine)

- Note quality improves because the agent captures the full encounter, not just what the physician remembers to type at 9 PM

- Specialty-specific documentation — behavioral health progress notes, home health OASIS assessments, pediatric therapy sessions — can be handled with the same workflow, adapted to each regulatory format

The broader implication: a health system where documentation is handled by an AI agent is a health system where the physician's cognitive capacity is reserved for clinical judgment, not administrative transcription.

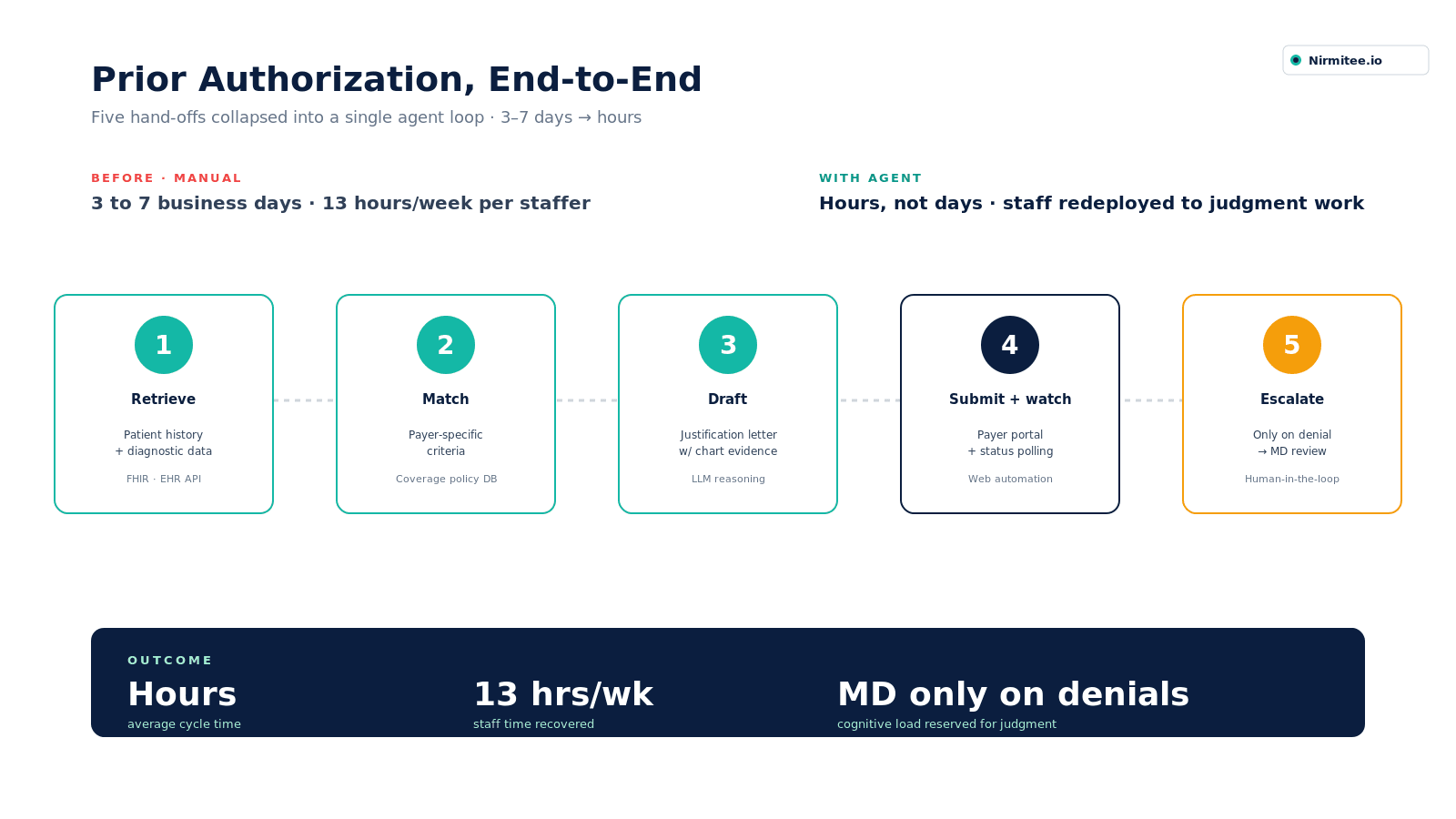

2. Prior Authorization — Closing the Loop on a Broken Process

Prior authorization requires a clinical professional to locate patient records, extract the clinically relevant information, match it against payer-specific criteria, draft a justification, submit it through a payer portal, monitor for a response, and initiate an appeal if denied. That sequence currently requires human action at every step (AMA — Prior Authorization Physician Survey).

An AI agent handles the entire cycle:

- Retrieves the patient's clinical history and relevant diagnostic data from the EHR

- Identifies the applicable payer criteria for the procedure or medication being requested

- Drafts the clinical justification letter using the patient's own record as evidence

- Submits through the payer portal and monitors status

- Flags for clinical review only when a denial requires physician-level judgment to appeal

The outcome: prior authorizations that currently take 3–7 business days can be completed in hours. Staff who spent 13 hours per week on prior auth work can be redeployed to tasks that require human judgment.
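One way to keep that cycle auditable is to model it as an explicit state machine, so the agent can only move a request along permitted paths. The sketch below is an assumption about how this could be structured. The state names are illustrative, and for simplicity it routes every denial to physician review rather than only the ones that need it.

```python
from enum import Enum, auto

class PAState(Enum):
    RECORDS_RETRIEVED = auto()
    CRITERIA_MATCHED = auto()
    JUSTIFICATION_DRAFTED = auto()
    SUBMITTED = auto()
    APPROVED = auto()
    DENIED = auto()
    NEEDS_PHYSICIAN_REVIEW = auto()

# Allowed transitions; illustrative names, not a payer or EHR standard.
TRANSITIONS = {
    PAState.RECORDS_RETRIEVED: {PAState.CRITERIA_MATCHED},
    PAState.CRITERIA_MATCHED: {PAState.JUSTIFICATION_DRAFTED},
    PAState.JUSTIFICATION_DRAFTED: {PAState.SUBMITTED},
    PAState.SUBMITTED: {PAState.APPROVED, PAState.DENIED},
    PAState.DENIED: {PAState.NEEDS_PHYSICIAN_REVIEW},
}

def advance(current: PAState, nxt: PAState) -> PAState:
    """Move to the next state, rejecting any step that skips the sequence."""
    if nxt not in TRANSITIONS.get(current, set()):
        raise ValueError(f"illegal transition {current.name} -> {nxt.name}")
    return nxt

state = PAState.RECORDS_RETRIEVED
for step in (PAState.CRITERIA_MATCHED, PAState.JUSTIFICATION_DRAFTED,
             PAState.SUBMITTED, PAState.DENIED, PAState.NEEDS_PHYSICIAN_REVIEW):
    state = advance(state, step)
print(state.name)
```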

3. Revenue Cycle Management — Preventing Denials Before They Happen

Claim denials in healthcare are largely predictable — and largely preventable. Most denials result from coding errors, missing documentation, eligibility gaps, or mismatched clinical criteria that could have been caught before submission (Experian Health — State of Claims 2025).

AI agents applied to the revenue cycle operate at every stage of the claims workflow:

Before submission: The agent reviews the claim against payer rules, flags documentation gaps, suggests correct coding based on clinical notes, and verifies patient eligibility — before the claim leaves the system.

At submission: The agent routes claims intelligently based on payer-specific requirements, reducing technical denials from misformatting or missing fields.

After denial: The agent analyzes the denial reason, identifies whether it's appealable, retrieves the supporting documentation, and drafts the appeal — presenting it to the billing team for review rather than creating work from scratch.

Pattern recognition at scale: Across thousands of claims, the agent identifies systemic denial patterns — a particular payer consistently denying a specific code combination, for example — and surfaces those patterns for proactive correction.
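The pattern-recognition step can be sketched as a simple aggregation over denied claims. The payer names, code pairs, and the `min_count` cutoff below are placeholders chosen for illustration. A real pipeline would read from the claims system and use a statistically grounded threshold.

```python
from collections import Counter

# Hypothetical denied-claim records; payers and codes are placeholders.
denials = [
    {"payer": "PayerA", "codes": ("99213", "96372"), "reason": "bundling"},
    {"payer": "PayerA", "codes": ("99213", "96372"), "reason": "bundling"},
    {"payer": "PayerB", "codes": ("99214",), "reason": "eligibility"},
    {"payer": "PayerA", "codes": ("99213", "96372"), "reason": "bundling"},
]

def systemic_patterns(records, min_count=3):
    """Return (payer, codes, reason) combinations denied at least min_count times."""
    counts = Counter((r["payer"], r["codes"], r["reason"]) for r in records)
    return [key for key, n in counts.most_common() if n >= min_count]

patterns = systemic_patterns(denials)
print(patterns)
```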

4. Patient Triage and Care Navigation — Matching Patients to the Right Setting, Faster

The first interaction a patient has with a health system is often the most inefficient. Patients call, explain their symptoms to someone who isn't clinically trained, get routed based on availability rather than acuity, and either end up in a care setting that's too intensive for their needs, or not intensive enough.

An AI triage agent changes this at the point of first contact:

- Gathers structured symptom information through a guided conversation (web, SMS, or voice)

- Applies validated clinical triage protocols — the same criteria a nurse triage line uses — to assess urgency

- Recommends the appropriate care setting: self-care, telehealth, urgent care, primary care, or emergency

- Books the appointment directly into the scheduling system, with clinical context pre-populated

- Escalates immediately when red-flag symptoms are detected
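The routing logic in the list above can be sketched as follows. This is not a validated triage protocol. The red-flag list, the severity scale, and the cutoffs are invented for illustration, and a real deployment would encode the protocol its nurse triage line already uses.

```python
# Illustrative red flags only; a real system would use a validated protocol.
RED_FLAGS = {"chest pain", "difficulty breathing", "stroke symptoms"}

def triage(symptoms: set[str], severity: int) -> str:
    """Recommend a care setting. severity: patient-reported 1 (mild) to 10 (severe)."""
    if symptoms & RED_FLAGS:
        return "emergency"  # escalate immediately, skip scoring
    if severity >= 7:
        return "urgent care"
    if severity >= 4:
        return "primary care"
    if severity >= 2:
        return "telehealth"
    return "self-care"

print(triage({"chest pain"}, 1))
print(triage({"sore throat"}, 5))
```

Note that the red-flag check runs first and ignores the severity score, which mirrors the "escalates immediately" behavior described above.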

For patients with chronic diseases in particular, AI agents can reach out proactively rather than only respond. A diabetic patient who hasn't had a recent HbA1c check receives an outreach message, answers a few questions, and is scheduled for a lab visit, without a care coordinator manually making the call.

5. Clinical Early Warning — Detecting Deterioration Before It Becomes a Crisis

In inpatient and emergency settings, the gap between a patient's initial deterioration and clinical intervention is often measured in hours. Those hours determine outcomes. Johns Hopkins' TREWS sepsis detection system is a benchmark for what continuous, agent-driven monitoring can do at scale.

AI agents designed for early warning continuously monitor patient data streams — vital signs, lab values, medication administration records, nursing notes — and identify patterns that precede clinical events:

- Sepsis onset: falling blood pressure trending alongside rising lactate and altered mental status, hours before the picture becomes obvious to a clinician reviewing individual data points in sequence

- Respiratory failure: subtle changes in respiratory rate, oxygen saturation trends, and ventilator parameters that individually appear within normal range but collectively signal deterioration

- Cardiac events: rhythm changes and hemodynamic patterns that precede acute decompensation

- Post-surgical complications: abnormal recovery trajectories flagged in the hours after a procedure, before complications become emergencies

Critically, these agents don't fire a generic alert and hope someone reads it. They escalate through the appropriate clinical channel, surface the specific data pattern that triggered the flag, and recommend the response protocol — giving the clinician context, not just a notification.
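A toy version of this idea: look at trends across several signals at once and return the evidence along with the flag. The thresholds and readings are illustrative assumptions with no clinical validity, and this is not how TREWS or any specific product works.

```python
def slope(values: list[float]) -> float:
    """Average change per reading across the window."""
    return (values[-1] - values[0]) / (len(values) - 1)

def sepsis_pattern(window: dict[str, list[float]]) -> dict:
    # Illustrative thresholds, not clinically validated.
    bp_falling = slope(window["systolic_bp"]) <= -3.0
    lactate_rising = slope(window["lactate"]) >= 0.3
    triggered = bp_falling and lactate_rising
    # Return the data that triggered the flag, not just a yes/no.
    return {"escalate": triggered, "evidence": dict(window) if triggered else {}}

deteriorating = {"systolic_bp": [118, 112, 107, 101], "lactate": [1.4, 1.9, 2.3, 2.8]}
stable = {"systolic_bp": [118, 118, 117, 118], "lactate": [1.4, 1.4, 1.5, 1.4]}

flag = sepsis_pattern(deteriorating)
no_flag = sepsis_pattern(stable)
print(flag["escalate"], no_flag["escalate"])
```

Each reading in the deteriorating window could pass an individual threshold check. It is the combined direction of travel that triggers the escalation.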

6. Population Health Management — Proactive Care at Scale

Managing a population of 50,000 attributed patients for chronic disease outcomes is operationally impossible for a care team working from manual lists and spreadsheets. An AI agent changes the economics of proactive care.

Running continuously against the attributed population, the agent:

- Identifies patients who have crossed risk thresholds based on claims data, EHR data, and social determinants

- Prioritizes outreach by likelihood of benefit — not alphabetically or by last contact date

- Generates individualized care gap summaries for care coordinators: this patient is overdue for these screenings, missed their last medication refill, and has two recent ED visits for the same complaint

- Triggers automated outreach for routine tasks — appointment reminders, medication adherence check-ins, post-discharge follow-up — reserving human care coordinator time for patients who need a conversation

The result: a care team of ten coordinators can actively manage a population that previously required thirty, with better coverage of the patients most likely to benefit.
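Prioritizing by likelihood of benefit can be as simple as filter, rank, and cap to coordinator capacity. In this sketch the `risk` and `expected_benefit` scores are assumed to come from upstream models, and the threshold is a placeholder.

```python
# Hypothetical scores; in practice these come from risk and uplift models.
patients = [
    {"id": "P1", "risk": 0.80, "expected_benefit": 0.10},
    {"id": "P2", "risk": 0.55, "expected_benefit": 0.40},
    {"id": "P3", "risk": 0.20, "expected_benefit": 0.05},
    {"id": "P4", "risk": 0.65, "expected_benefit": 0.30},
]
RISK_THRESHOLD = 0.5  # assumed cutoff

def outreach_queue(population, capacity):
    """Patients over the risk threshold, ranked by expected benefit of outreach."""
    eligible = [p for p in population if p["risk"] >= RISK_THRESHOLD]
    ranked = sorted(eligible, key=lambda p: p["expected_benefit"], reverse=True)
    return [p["id"] for p in ranked[:capacity]]

queue = outreach_queue(patients, capacity=2)
print(queue)
```

The highest-risk patient (P1) does not make the queue, because risk and likelihood of benefit are different questions.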

7. Administrative Operations — Eliminating the Work That Shouldn't Require a Human

Beyond clinical workflows, AI agents are closing the administrative gap across health system operations:

Scheduling optimization: Agents fill appointment gaps in real time, matching cancellations to waitlisted patients based on clinical urgency, provider preference, and patient availability, without a scheduler making manual calls.

Insurance eligibility and benefits verification: Real-time verification at the point of scheduling, with automatic flagging of coverage gaps before the patient arrives.

Staff communication and handoff documentation: Shift handoffs, care transition summaries, and discharge instruction generation — drafted automatically from the clinical record, reviewed and signed by the responsible clinician.

Compliance and audit documentation: AI agents that monitor clinical documentation for regulatory completeness — flagging missing elements in real time rather than catching gaps in a quarterly audit.
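As one example from this group, the waitlist matching described under scheduling optimization could be sketched like this. The waitlist entries, the urgency scale, and the tie-breaking rule are assumptions made for illustration.

```python
# Hypothetical waitlist; urgency is on an assumed 1 (low) to 5 (high) scale.
waitlist = [
    {"patient": "W1", "urgency": 2, "provider": "Dr. Lee", "available": {"Mon", "Tue"}},
    {"patient": "W2", "urgency": 5, "provider": "Dr. Lee", "available": {"Wed"}},
    {"patient": "W3", "urgency": 4, "provider": "Dr. Shah", "available": {"Tue"}},
]

def fill_slot(slot_day, slot_provider, candidates):
    """Pick a waitlisted patient for a cancelled slot, or None if nobody is free."""
    free = [c for c in candidates if slot_day in c["available"]]
    if not free:
        return None
    # Highest urgency first; provider preference only breaks ties.
    best = max(free, key=lambda c: (c["urgency"], c["provider"] == slot_provider))
    return best["patient"]

print(fill_slot("Tue", "Dr. Lee", waitlist))
```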

The Risks Healthcare Leaders Cannot Afford to Minimize

Clinical Hallucinations Are a Patient Safety Issue

Large language models confabulate. They produce plausible-sounding outputs that are factually wrong. In a product description, that is correctable. In an AI-generated medication reconciliation summary before surgery, it is a potential sentinel event.

The FDA has now authorized 1,451 AI/ML medical devices, with 295 cleared in 2025 alone — 62% falling under Software as a Medical Device (SaMD). Any AI that informs or executes clinical decisions must meet evidence standards commensurate with clinical risk. If a vendor cannot produce validation data on a population resembling yours, that is a disqualifying gap — not a roadmap item.

HIPAA Compliance in Agentic Workflows

When an AI agent queries your EHR, calls an external API, logs its reasoning, and stores patient context in a vector database — each step creates a potential HIPAA compliance surface that traditional Business Associate Agreement frameworks were not designed to address.

Before any deployment, your compliance team must answer:

- Where is PHI stored during the agent's active working memory?

- Who is the Business Associate when the agent calls a third-party tool mid-workflow?

- What are the audit trail requirements for agent-initiated clinical actions?
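For the audit trail question, one possible design is an append-only log in which each entry carries a hash of the previous one, so later tampering is detectable. This is a sketch of one option, not a statement of what HIPAA requires. The field names are assumptions, and the log stores resource identifiers rather than PHI.

```python
import hashlib
import json
from datetime import datetime, timezone

def append_audit_entry(log: list[dict], actor: str, action: str, resource: str) -> dict:
    prev_hash = log[-1]["hash"] if log else "0" * 64
    entry = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "actor": actor,        # which agent or tool acted
        "action": action,      # e.g. "read", "draft", "submit"
        "resource": resource,  # an identifier, never the PHI itself
        "prev_hash": prev_hash,
    }
    payload = json.dumps(entry, sort_keys=True).encode()
    entry["hash"] = hashlib.sha256(payload).hexdigest()
    log.append(entry)
    return entry

def verify(log: list[dict]) -> bool:
    """Recompute every hash and check that the chain is unbroken."""
    prev = "0" * 64
    for e in log:
        body = {k: v for k, v in e.items() if k != "hash"}
        digest = hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()
        if e["prev_hash"] != prev or e["hash"] != digest:
            return False
        prev = e["hash"]
    return True

audit_log: list[dict] = []
append_audit_entry(audit_log, "pa-agent", "read", "Patient/123")
append_audit_entry(audit_log, "pa-agent", "submit", "Claim/456")
print(verify(audit_log))
```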

Algorithmic Bias in Clinical Populations

A landmark 2019 Science study demonstrated that a widely deployed commercial risk prediction algorithm systematically underestimated illness severity in Black patients. AI agents trained on EHR data from academic medical centers serving primarily white, commercially insured populations will reproduce those disparities at scale.

Mitigation requires: diverse training data, prospective bias auditing, and performance monitoring stratified by race, ethnicity, payer type, and geography.
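Stratified monitoring means computing the same performance metric per subgroup and watching the gap. A minimal sketch using sensitivity (the share of true cases the model caught) follows. The records and the `payer` grouping are made-up examples.

```python
from collections import defaultdict

def sensitivity_by_group(records, group_key):
    """Share of truly positive cases the model caught, per subgroup."""
    caught = defaultdict(int)
    total = defaultdict(int)
    for r in records:
        if r["actual"]:  # only truly positive cases count toward sensitivity
            total[r[group_key]] += 1
            caught[r[group_key]] += int(r["predicted"])
    return {g: caught[g] / total[g] for g in total}

# Hypothetical labeled outcomes.
records = [
    {"payer": "commercial", "actual": True, "predicted": True},
    {"payer": "commercial", "actual": True, "predicted": True},
    {"payer": "medicaid", "actual": True, "predicted": True},
    {"payer": "medicaid", "actual": True, "predicted": False},
    {"payer": "medicaid", "actual": False, "predicted": False},
]

rates = sensitivity_by_group(records, "payer")
gap = max(rates.values()) - min(rates.values())
print(rates, gap)
```

An aggregate sensitivity of 75% would hide the fact that one subgroup sits at 50%, which is exactly what stratification is meant to expose.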

Liability and Accountability Gaps

Malpractice law has not kept pace with agentic AI. When an AI agent makes an error and a physician signs off on its output without full review, liability is genuinely unresolved in most U.S. states. Risk management and legal must be at the table before go-live — not after the first adverse event.

How to Get Started with Implementing Agentic AI in Healthcare

Most healthcare organizations don't fail at AI because they chose the wrong vendor. They fail because they started in the wrong place — picking a tool before defining the problem, or deploying broadly before validating narrowly. Here is the implementation sequence that works.

Step 1 — Define One Workflow Problem, Not an AI Strategy

The organizations that get results start with a specific, measurable workflow failure. Not "we want to improve efficiency." Something like:

- Prior authorizations are taking 5 days on average and our denial rate is 14%

- Physicians are spending 3.5 hours per day on documentation after clinic ends

- Our care coordinators have 4,000 attributed patients and are actively managing 200

That specificity determines everything downstream — which architecture fits, what success looks like, and how you prove ROI to a skeptical CFO. If you can't define the baseline metric today, you are not ready to deploy AI.

Step 2 — Audit Your Data Infrastructure Before Talking to Vendors

Agentic AI is only as good as the data it can access. Before any vendor conversation, your team needs to answer:

- Is your EHR exposing FHIR R4 APIs, or is data access limited to exports and reports?

- What percentage of your clinical data is structured vs. locked in free-text notes?

- Do you have a reliable patient identity matching layer across systems?

- How clean is your claims data — and how current is it?

Vendors will tell you their system integrates with everything. The integration question is not about the vendor's capability — it is about the state of your data environment. A sophisticated AI agent running against incomplete or poorly structured data produces sophisticated wrong answers.
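The first question in the list above can often be answered from the server's FHIR CapabilityStatement, which FHIR servers publish at the `metadata` endpoint. The sketch below parses such a document and summarizes version and read/write support. The `sample` payload is a hand-written stand-in, and `fhir_summary` is a hypothetical helper. In practice you would fetch the real document from your EHR's FHIR base URL.

```python
def fhir_summary(capability: dict) -> dict:
    """Summarize FHIR version and per-resource read/write support."""
    version = capability.get("fhirVersion", "")
    resources = {
        res["type"]: {i["code"] for i in res.get("interaction", [])}
        for rest in capability.get("rest", [])
        for res in rest.get("resource", [])
    }
    return {
        "is_r4": version.startswith("4.0"),
        "readable": sorted(t for t, ops in resources.items() if "read" in ops),
        "writable": sorted(t for t, ops in resources.items()
                           if {"create", "update"} & ops),
    }

# Hand-written sample in the shape of an R4 CapabilityStatement.
sample = {
    "resourceType": "CapabilityStatement",
    "fhirVersion": "4.0.1",
    "rest": [{"mode": "server", "resource": [
        {"type": "Patient",
         "interaction": [{"code": "read"}, {"code": "search-type"}]},
        {"type": "DocumentReference",
         "interaction": [{"code": "read"}, {"code": "create"}]},
    ]}],
}

summary = fhir_summary(sample)
print(summary)
```

A resource that is readable but not writable tells you the agent could draft from it but not file back to it, which matters for any workflow that ends in an EHR update.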

Step 3 — Start Narrow, Validate Rigorously, Then Expand

The pilot-to-production failure rate in healthcare AI is high — roughly 80% of projects don't scale beyond the pilot phase. The consistent reason: the pilot ran in a controlled environment on curated data, and production exposed the gap. The implementation sequence that avoids this:

Phase 1 — Shadow deployment (4–8 weeks). Run the AI agent in parallel with your existing workflow. The agent produces outputs; your staff produces outputs independently. Compare accuracy, completeness, and edge case handling on your actual patient population — not the vendor's demo set. This is where the real performance picture emerges.

Phase 2 — Supervised deployment (6–12 weeks). The agent handles the workflow. Human staff review every output before it takes effect. Track error rates, flag categories, and the types of cases where the agent underperforms. Use this data to set your go-live thresholds.

Phase 3 — Production with monitoring. Full deployment with defined human review checkpoints for high-risk outputs. Continuous performance monitoring stratified by patient demographics, diagnosis type, and payer. A rollback trigger defined before go-live — not after the first adverse event.
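The Phase 1 comparison can be sketched as an agreement rate between agent and staff outputs, broken out by case category, with a floor that feeds the go-live decision. The case data, category labels, and the 0.9 floor are assumptions for illustration.

```python
def shadow_report(cases):
    """Agreement rate between agent and staff outputs, per case category."""
    by_category = {}
    for c in cases:
        stats = by_category.setdefault(c["category"], {"n": 0, "agree": 0})
        stats["n"] += 1
        stats["agree"] += int(c["agent_output"] == c["staff_output"])
    return {cat: s["agree"] / s["n"] for cat, s in by_category.items()}

# Hypothetical shadow-phase results.
cases = [
    {"category": "routine", "agent_output": "approve", "staff_output": "approve"},
    {"category": "routine", "agent_output": "approve", "staff_output": "approve"},
    {"category": "edge", "agent_output": "approve", "staff_output": "deny"},
    {"category": "edge", "agent_output": "deny", "staff_output": "deny"},
]

GO_LIVE_FLOOR = 0.9  # assumed threshold; set it from your own supervised-phase data
agreement = shadow_report(cases)
below_floor = [cat for cat, rate in agreement.items() if rate < GO_LIVE_FLOOR]
print(agreement, below_floor)
```

The same check, run continuously in production, is one way to implement the rollback trigger: if a category drops below the floor, that category reverts to human handling.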

Step 4 — Build Your Governance Structure Before You Deploy

Governance is not a compliance checkbox. It is the operational infrastructure that determines whether your AI deployment stays safe and effective at month 18, not just month 2. At a minimum, your governance structure needs:

- A named clinical owner — a physician or clinical informaticist accountable for the agent's clinical performance, not just the IT team

- A defined review cadence — monthly performance review against baseline metrics, quarterly bias audit across demographic subgroups

- An escalation protocol — what happens when the agent produces an output that triggers a clinical concern? Who reviews it, how fast, and what is the documentation trail?

- A BAA map — a documented record of every third-party system the agent touches, with confirmed Business Associate Agreements in place for each PHI exposure point
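The BAA map in particular lends itself to a machine-checkable record. A minimal sketch, with hypothetical system names and fields:

```python
# Hypothetical inventory of every system the agent touches.
touchpoints = [
    {"system": "EHR FHIR API", "handles_phi": True, "baa_signed": True},
    {"system": "Vector database", "handles_phi": True, "baa_signed": False},
    {"system": "Public drug reference API", "handles_phi": False, "baa_signed": False},
]

def missing_baas(points):
    """Systems that receive PHI without a confirmed Business Associate Agreement."""
    return [p["system"] for p in points if p["handles_phi"] and not p["baa_signed"]]

gaps = missing_baas(touchpoints)
print(gaps)
```

Running a check like this as a deployment gate means a new tool integration cannot reach production until its entry is complete.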

Step 5 — Measure What Changes, Not What the Vendor Reports

Your vendor will report metrics that make their system look good. Your job is to track metrics that show whether your organization is actually better. Before go-live, establish your baseline on:

| Metric | How to Measure |
|---|---|
| Documentation time per encounter | EHR audit logs, time-motion study |
| Prior auth cycle time | Days from submission to decision |
| Claim denial rate | Monthly denial report by payer and code |
| Staff time on administrative tasks | Time tracking by role |
| Patient access wait time | Scheduling system data |
| Clinician satisfaction | Pre/post survey, standardized burnout instrument |

At 90 days post-deployment, compare against baseline. If the numbers haven't moved, the problem is either the tool, the implementation, or the workflow design — and you need to know which one before investing further.
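The 90-day comparison is simple arithmetic, sketched below. The baseline values reuse the example figures from Step 1. The day-90 values and the 5% "unmoved" tolerance are invented for illustration.

```python
# Baseline from the Step 1 examples; day-90 values are hypothetical.
baseline = {"prior_auth_cycle_days": 5.0, "claim_denial_rate": 0.14,
            "after_hours_doc_hours": 3.5}
day_90 = {"prior_auth_cycle_days": 2.0, "claim_denial_rate": 0.14,
          "after_hours_doc_hours": 2.1}

def relative_change(before, after):
    """Fractional change per metric; negative means the metric went down."""
    return {m: (after[m] - before[m]) / before[m] for m in before}

changes = relative_change(baseline, day_90)
unmoved = [m for m, delta in changes.items() if abs(delta) < 0.05]
print(changes, unmoved)
```

Any metric that lands in `unmoved` is the one to investigate before investing further.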

How to Evaluate an AI Agent Before You Deploy

Use this framework before signing a contract, and apply it in your own environment rather than a vendor's curated demo environment.

- Clinical validation on your population — demand performance data on a patient cohort that resembles yours. A demo on curated cases is sales material, not clinical evidence.

- EHR integration depth — ask specifically which modules, whether access is bidirectional, and whether the vendor uses FHIR R4 APIs or screen scraping. Screen scraping is a disqualifier.

- Human-in-the-loop design — every clinical workflow must have defined physician review checkpoints. Fully autonomous clinical action is inconsistent with current regulatory and liability frameworks.

- Explainability — the agent must articulate why it made a recommendation. Black-box outputs are incompatible with CMS quality documentation requirements.

- Bias monitoring plan — ask how the vendor monitors for performance disparities across patient subgroups. Performance must be stratified by race, ethnicity, age, payer, and geography.

A Final Word

AI agents are no longer a future concept in healthcare. They are already operating inside health systems — reducing documentation burden, improving revenue cycle performance, and, in some cases, directly impacting patient outcomes. The question is not whether this category will exist. It already does.

The real question is who will implement it correctly. Most AI initiatives in healthcare do not fail at the model level. They fail at the workflow level — where integration, clinical validation, and change management determine whether a system is used or ignored.

The organizations that will extract lasting value from AI agents are not the ones moving the fastest. They are the ones applying the most discipline:

- Clear, high-impact use cases — not broad experimentation

- Clinical validation that holds up under real-world conditions

- Deep integration into systems like Epic and Oracle Health (formerly Cerner), not superficial overlays

- Governance frameworks that treat AI as a clinical capability, not a feature

For founders, the opportunity is real — but so is the complexity. Healthcare does not reward speed without rigor. It punishes it.

For health system leaders, the window to act is open — but not indefinitely. Peer organizations are already deploying these systems in production environments. The gap between early adopters and laggards will not remain theoretical. It will show up in operational efficiency, clinician satisfaction, and financial performance.

AI agents will not replace clinicians. But they will redefine how clinical and administrative work gets done. And over time, the systems that adopt them with discipline will not just operate more efficiently — they will make better decisions, faster, at scale.

The real risks now are non-adoption and adoption without the rigor the environment demands.

Talk to Nirmitee.io

Nirmitee.io builds production-grade AI agents and healthcare platforms for U.S. health systems and digital-health founders — with FHIR-native integrations, HIPAA-compliant infrastructure, and the clinical-validation discipline this category demands. Schedule a working session with our team or explore our AI development and healthcare engineering capabilities.

Sources

- MarketsandMarkets — AI Agents in Healthcare Market (2025–2030)

- Towards Healthcare — Agentic AI in Healthcare Market Sizing (2025–2035)

- Experian Health — State of Claims 2025

- American Hospital Association — Costs of Caring 2025

- FDA — AI-Enabled Medical Devices (2025)

- KLAS Research — Ambient AI Adoption Projections

- Science (2019) — Racial Bias in Commercial Risk Prediction Algorithms

- HHS OCR — HIPAA for Professionals