The Data Reproducibility Crisis in Healthcare ML

When the FDA asks "which data was this model trained on?" you need an exact answer, not "the data we had in the database around February." Healthcare ML models are regulated software, and regulatory frameworks like the FDA's Software as a Medical Device (SaMD) guidance require complete traceability from a deployed model back to its exact training data, preprocessing steps, and validation datasets. Git handles code versioning well, but it was never designed to track 10GB+ clinical datasets, evolving patient cohorts, or versioned feature tables that change with every ETL run.

The consequences of poor data versioning in healthcare are severe. If a data quality issue is discovered six months after a model was trained, you need to determine exactly which patients were in the training set, what their feature values were at training time, and whether the issue affected model performance. Without data versioning, this investigation becomes a forensic exercise that may never produce a definitive answer—which means the model's regulatory standing is compromised.

This guide covers three production-grade data versioning tools—DVC, LakeFS, and Delta Lake—with hands-on setup, healthcare-specific use cases, and a comparison to help you choose the right tool for your clinical ML pipeline.

Why Git Fails for Clinical Datasets

Git is optimized for text files under a few megabytes. Clinical ML datasets break Git in several ways that make it unsuitable as a standalone data versioning solution.

| Problem | Git Behavior | Impact on Healthcare ML |
|---|---|---|
| Large files | Keeps every version of every file in .git/ history | 10GB dataset × 50 versions can approach a 500GB repository |
| Binary files | Cannot diff binary formats (Parquet, HDF5) | No meaningful change tracking for data files |
| Clone time | Downloads entire history including all data versions | New team members wait hours to clone |
| Push/pull speed | Transfers over Git protocol, not optimized for large files | Slow CI/CD pipelines, frustrated developers |
| Access control | All-or-nothing repository access | Cannot restrict PHI access at file level |
| Storage cost | Git hosting (GitHub/GitLab) charges per GB | Object storage (S3/GCS) is typically far cheaper for bulk data |

Git LFS (Large File Storage) partially addresses the size problem by storing large files in external storage and tracking pointers in Git. However, Git LFS lacks branching semantics for data, has no built-in data quality validation, does not support querying specific data versions without downloading them, and adds complexity to the Git workflow. For serious healthcare ML projects, purpose-built data versioning tools provide a much better experience.

DVC: Git-Like Commands for Data Version Control

DVC (Data Version Control) extends Git with data versioning capabilities while keeping the Git workflow developers already know. DVC stores lightweight metadata files (.dvc files) in Git and the actual data in remote storage (S3, GCS, Azure Blob, HDFS). This separation means your Git repository stays small while your data is fully versioned with the same branch-and-merge workflow.
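The pointer-plus-remote-storage split can be illustrated with a minimal sketch. This is not DVC's implementation: the `track` helper, the cache layout, and the sample CSV contents are hypothetical, chosen only to show how a content hash lets a small pointer stand in for a large file.

```python
import hashlib
import shutil
import tempfile
from pathlib import Path


def file_md5(path: Path, chunk_size: int = 1 << 20) -> str:
    """Hash a file in chunks so a large dataset never has to fit in memory."""
    digest = hashlib.md5()
    with path.open("rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def track(data_file: Path, cache_dir: Path) -> dict:
    """Hypothetical helper: store the file under its hash and return a pointer."""
    md5 = file_md5(data_file)
    cached = cache_dir / md5[:2] / md5[2:]
    if not cached.exists():  # identical content is stored only once
        cached.parent.mkdir(parents=True, exist_ok=True)
        shutil.copyfile(data_file, cached)
    return {"md5": md5, "size": data_file.stat().st_size, "path": data_file.name}


with tempfile.TemporaryDirectory() as tmp:
    root = Path(tmp)
    cache = root / "cache"
    data = root / "training_data.csv"

    data.write_text("encounter_id,readmitted\n1,0\n2,1\n")
    pointer_v1 = track(data, cache)
    pointer_v1_again = track(data, cache)  # same bytes, so no new cache entry

    data.write_text("encounter_id,readmitted\n1,0\n2,1\n3,0\n")
    pointer_v2 = track(data, cache)

    cached_files = [p for p in cache.rglob("*") if p.is_file()]
    print(pointer_v1)
    print(pointer_v2)
    print(f"objects in cache: {len(cached_files)}")
```

Only the pointer dictionaries would need to live in Git; the cache directory plays the role of the remote bucket. Because the sketch hashes whole files, any change to a file produces a new cached object.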

DVC Setup for a Healthcare ML Project

# Install DVC with S3 support (quotes stop shells such as zsh from expanding the brackets)
pip install "dvc[s3]"

# Initialize DVC in your Git repository
cd readmission-model/
git init
dvc init

# Configure remote storage (HIPAA-compliant S3 bucket)
dvc remote add -d clinical-data s3://your-hipaa-bucket/ml-data/
dvc remote modify clinical-data region us-east-1

# Enable server-side encryption (required for PHI)
dvc remote modify clinical-data sse AES256

# Track your training data
dvc add data/processed/training_data.csv

# DVC creates a .dvc file (small metadata pointer)
# data/processed/training_data.csv.dvc contains:
# outs:
# - md5: abc123def456...
#   size: 2147483648   (2 GiB)
#   path: training_data.csv

# Commit the pointer and the remote settings (.dvc/config) to Git
git add data/processed/training_data.csv.dvc data/processed/.gitignore .dvc/config
git commit -m "Track training data v1: 50,000 encounters, 2024-01 to 2025-12"

# Push data to remote storage
dvc push

Versioning Workflow: Update Data, Track Changes

# New data arrives: updated training set with 2026 Q1 data
# Replace the training data file
cp /data/exports/training_data_2026q1.csv data/processed/training_data.csv

# DVC detects the change
dvc status
# Output:
# data/processed/training_data.csv.dvc:
#     changed outs:
#         modified: data/processed/training_data.csv

# Track the new version
dvc add data/processed/training_data.csv
git add data/processed/training_data.csv.dvc
git commit -m "Update training data: add 2026 Q1, now 62,000 encounters"
dvc push

# Now you have two versions of the data:
# v1: 50,000 encounters (git show HEAD~1:data/processed/training_data.csv.dvc)
# v2: 62,000 encounters (current)

# Switch back to v1 data (for debugging or retraining)
git checkout HEAD~1 -- data/processed/training_data.csv.dvc
dvc checkout
# training_data.csv is now the v1 version
# (run dvc pull first if v1 is no longer in the local cache)

# Return to latest
git checkout main -- data/processed/training_data.csv.dvc
dvc checkout

DVC Pipelines: Reproducible Training

# dvc.yaml: define the ML pipeline stages
stages:
  validate:
    cmd: python src/data_validation.py --data data/processed/training_data.csv
    deps:
      - src/data_validation.py
      - data/processed/training_data.csv
    outs:
      - reports/validation_report.json
  train:
    cmd: python src/train.py --config config/model_config.json
    deps:
      - src/train.py
      - config/model_config.json
      - data/processed/training_data.csv
    params:
      - config/model_config.json:
          - hyperparameters
          - features
          - random_seed
    outs:
      - models/xgb_readmission.joblib
    metrics:
      - reports/metrics.json:
          cache: false
  evaluate:
    cmd: python src/evaluate.py --model models/xgb_readmission.joblib
    deps:
      - src/evaluate.py
      - models/xgb_readmission.joblib
      - data/processed/test_data.csv
    metrics:
      - reports/evaluation.json:
          cache: false
    plots:
      - reports/calibration_plot.csv:
          x: mean_predicted
          y: fraction_positive

# Run the full pipeline
dvc repro

# Compare metrics across experiments
dvc metrics diff
# Output:
# Path                  Metric  Old    New    Change
# reports/metrics.json  auroc   0.789  0.812  0.023
# reports/metrics.json  auprc   0.421  0.456  0.035

DVC's pipeline definition makes each training run reproducible from a recorded set of inputs: dvc repro writes the hashes of every stage's dependencies and outputs to dvc.lock, which you commit to Git alongside dvc.yaml. If you change the training data, DVC knows to re-run validation, training, and evaluation. If you only change a hyperparameter, DVC skips the data validation stage and re-runs only training and evaluation. This dependency tracking addresses the "I can't reproduce last month's results" problem that plagues healthcare ML teams. DVC integrates directly into the CI/CD pipeline via dvc pull and dvc repro commands in GitHub Actions workflows.
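The skip-or-rerun decision described above can be sketched in a few lines. This is a simplified model, not DVC's algorithm: the `STAGES` table, the in-memory `files` dictionary, and both helper functions are hypothetical, and the sketch assumes that any upstream re-run makes downstream stages stale (DVC itself compares the hashes of the regenerated outputs).

```python
import hashlib

# Hypothetical pipeline mirroring the three stages above. "after" lists the
# upstream stages whose outputs a stage consumes.
STAGES = {
    "validate": {"deps": ["training_data.csv", "data_validation.py"], "after": []},
    "train": {"deps": ["training_data.csv", "train.py", "model_config.json"], "after": []},
    "evaluate": {"deps": ["evaluate.py", "test_data.csv"], "after": ["train"]},
}


def fingerprint(files: dict, deps: list) -> str:
    """Hash the names and contents of a stage's dependencies."""
    digest = hashlib.sha256()
    for name in sorted(deps):
        digest.update(name.encode() + b"\0" + files[name].encode() + b"\0")
    return digest.hexdigest()


def record_lock(files: dict) -> dict:
    """Snapshot every stage's fingerprint, playing the role of a lock file."""
    return {name: fingerprint(files, spec["deps"]) for name, spec in STAGES.items()}


def stale_stages(files: dict, lock: dict) -> list:
    """Return the stages that must re-run, in pipeline order."""
    stale = []
    for name, spec in STAGES.items():  # dict order is already topological here
        deps_changed = lock.get(name) != fingerprint(files, spec["deps"])
        upstream_rerun = any(parent in stale for parent in spec["after"])
        if deps_changed or upstream_rerun:
            stale.append(name)
    return stale


files = {
    "training_data.csv": "encounter_id,readmitted\n1,0\n",
    "test_data.csv": "encounter_id,readmitted\n9,1\n",
    "data_validation.py": "# validation code",
    "train.py": "# training code",
    "evaluate.py": "# evaluation code",
    "model_config.json": '{"max_depth": 4}',
}
lock = record_lock(files)

nothing_changed = stale_stages(files, lock)
after_config_change = stale_stages({**files, "model_config.json": '{"max_depth": 6}'}, lock)
after_data_change = stale_stages({**files, "training_data.csv": "encounter_id,readmitted\n1,0\n2,1\n"}, lock)

print(nothing_changed)
print(after_config_change)
print(after_data_change)
```

Changing only the config leaves the validation stage untouched, while changing the training data invalidates all three stages, matching the behavior described in the paragraph above.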

LakeFS: Git-Like Branching for Data Lakes

LakeFS takes a fundamentally different approach: instead of tracking pointers to data, it provides Git-like branching and versioning directly on your data lake. LakeFS sits between your applications and your object storage (S3, GCS, Azure), exposing a versioned, branched view of the data through an S3-compatible API. Your existing tools (Spark, Pandas, DuckDB, Presto) can read versioned data by pointing at the LakeFS endpoint and adding the branch or commit to the path, with no other code changes.

LakeFS Setup and Branching

# Install LakeFS (Docker)
# Note: --local-settings is meant for local evaluation; an s3:// storage
# namespace needs LakeFS configured with an S3 blockstore
docker run -d --name lakefs \
  -p 8000:8000 \
  treeverse/lakefs:latest \
  run --local-settings

# Install lakectl CLI (macOS build shown; asset names vary by platform and release)
curl -sL https://github.com/treeverse/lakeFS/releases/latest/download/lakeFS_darwin_amd64.tar.gz | tar xz
sudo mv lakectl /usr/local/bin/

# Point lakectl at the server (prompts for access key, secret key, and endpoint)
lakectl config

# Create a repository for clinical ML data
lakectl repo create lakefs://clinical-ml s3://your-hipaa-bucket/lakefs-data/

# Upload training data to main branch
lakectl fs upload lakefs://clinical-ml/main/training/encounters.parquet \
  --source data/processed/encounters.parquet
lakectl fs upload lakefs://clinical-ml/main/training/features.parquet \
  --source data/processed/features.parquet

# Commit the initial data
lakectl commit lakefs://clinical-ml/main \
  -m "Initial training data: 50,000 encounters, features v1"

# Create a branch for data experimentation (zero-copy, instant)
lakectl branch create lakefs://clinical-ml/experiment-new-features \
  --source lakefs://clinical-ml/main
# The branch is a zero-copy snapshot: no data is duplicated

# Modify data on the branch without affecting main
lakectl fs upload lakefs://clinical-ml/experiment-new-features/training/features_v2.parquet \
  --source data/processed/features_v2.parquet
lakectl commit lakefs://clinical-ml/experiment-new-features \
  -m "Add social determinants of health features"

# Compare branches
lakectl diff lakefs://clinical-ml/main lakefs://clinical-ml/experiment-new-features

Reading Versioned Data with Standard Tools

# Python: read a specific data version via the LakeFS S3 gateway
import pandas as pd
import s3fs

# Configure S3 client to point to LakeFS
fs = s3fs.S3FileSystem(
    key="your-lakefs-access-key",
    secret="your-lakefs-secret-key",
    client_kwargs={"endpoint_url": "http://localhost:8000"},
)

# Read from main branch (current production data)
# Paths follow s3://<repository>/<branch or commit>/<object path>
# (the filesystem= argument requires pandas 2.1 or later)
df_prod = pd.read_parquet(
    "s3://clinical-ml/main/training/features.parquet",
    filesystem=fs,
)

# Read from experiment branch (new features)
df_experiment = pd.read_parquet(
    "s3://clinical-ml/experiment-new-features/training/features_v2.parquet",
    filesystem=fs,
)

# Read from a specific commit (exact data version for reproducibility)
df_v1 = pd.read_parquet(
    "s3://clinical-ml/abc123def456/training/features.parquet",
    filesystem=fs,
)

print(f"Production: {len(df_prod)} rows, {len(df_prod.columns)} features")
print(f"Experiment: {len(df_experiment)} rows, {len(df_experiment.columns)} features")

LakeFS's zero-copy branching is particularly powerful for healthcare ML because you can create isolated experiment environments without duplicating terabytes of clinical data. A data scientist can branch from production, add new features or clean data quality issues, validate the changes, and merge back. Until that merge, nothing on the branch touches the production dataset that serves live models.
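To make the zero-copy idea concrete, here is a toy model of branching over content-addressed objects. It is a sketch under simplifying assumptions, not how LakeFS is implemented: the `ToyVersionedStore` class and its methods are hypothetical, and the byte strings stand in for Parquet files.

```python
import hashlib


class ToyVersionedStore:
    """Toy model only: objects are stored once by hash; branches map paths to hashes."""

    def __init__(self) -> None:
        self.objects = {}                # content hash -> bytes
        self.branches = {"main": {}}     # branch name -> {path: content hash}

    def upload(self, branch: str, path: str, content: bytes) -> None:
        key = hashlib.sha256(content).hexdigest()
        self.objects.setdefault(key, content)
        self.branches[branch][path] = key

    def create_branch(self, name: str, source: str) -> None:
        # Copies only the path -> hash mapping; no object bytes are duplicated.
        self.branches[name] = dict(self.branches[source])

    def read(self, branch: str, path: str) -> bytes:
        return self.objects[self.branches[branch][path]]

    def diff(self, left: str, right: str) -> dict:
        """Report paths added, removed, or changed going from left to right."""
        a, b = self.branches[left], self.branches[right]
        changes = {}
        for path in sorted(a.keys() | b.keys()):
            if path not in a:
                changes[path] = "added"
            elif path not in b:
                changes[path] = "removed"
            elif a[path] != b[path]:
                changes[path] = "changed"
        return changes


store = ToyVersionedStore()
store.upload("main", "training/features.parquet", b"features v1")

store.create_branch("experiment-new-features", "main")
objects_after_branch = len(store.objects)  # branching added no new objects

store.upload(
    "experiment-new-features",
    "training/features_v2.parquet",
    b"features v2 with social determinants",
)
branch_diff = store.diff("main", "experiment-new-features")

print(f"objects after branching: {objects_after_branch}")
print(f"objects after experiment upload: {len(store.objects)}")
print(branch_diff)
```

The experiment branch can still read the original features file through the shared object, while the main branch never sees the new file until a merge copies the mapping entry across.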

Delta Lake: Versioned Tables with Time Travel

Delta Lake adds ACID transactions and time travel to data lakes built on Parquet. Unlike DVC (which versions files) and LakeFS (which versions object storage paths), Delta Lake versions individual tables: every write, update, or merge is recorded as a new table version in a transaction log, and row-level changes rewrite only the affected data files. This makes it well suited to scenarios where your training data is stored in a data warehouse or lakehouse and you need to query specific historical snapshots.

# Python: Delta Lake time travel for healthcare ML
from delta.tables import DeltaTable
from pyspark.sql import SparkSession

spark = SparkSession.builder \
    .config("spark.jars.packages", "io.delta:delta-spark_2.12:3.1.0") \
    .config("spark.sql.extensions", "io.delta.sql.DeltaSparkSessionExtension") \
    .config("spark.sql.catalog.spark_catalog", "org.apache.spark.sql.delta.catalog.DeltaCatalog") \
    .getOrCreate()

# Write training data as Delta table
df = spark.read.parquet("data/processed/features.parquet")
df.write.format("delta").save("data/delta/training_features")

# Each write creates a new version automatically
# Version 0: initial write
# Version 1: after appending Q1 2026 data
# Version 2: after fixing data quality issue

# Time travel: query the exact data a model was trained on (version 0 here)
df_v0 = spark.read.format("delta") \
    .option("versionAsOf", 0) \
    .load("data/delta/training_features")

# Query by timestamp
df_jan = spark.read.format("delta") \
    .option("timestampAsOf", "2026-01-15") \
    .load("data/delta/training_features")

# View table history
delta_table = DeltaTable.forPath(spark, "data/delta/training_features")
history = delta_table.history()
history.select("version", "timestamp", "operation", "operationMetrics").show()
# Illustrative output (appends are logged as WRITE operations; metrics abbreviated):
# +-------+-------------------+---------+--------------------+
# |version|          timestamp|operation|    operationMetrics|
# +-------+-------------------+---------+--------------------+
# |      2|2026-03-15 10:30:00|   UPDATE|{numUpdatedRows: 42}|
# |      1|2026-02-01 08:00:00|    WRITE|{numOutputRows: 12k}|
# |      0|2026-01-01 00:00:00|    WRITE|{numOutputRows: 50k}|
# +-------+-------------------+---------+--------------------+

Rollback When Data Issues Are Discovered

# Scenario: You discover that data from February had a coding error
# that assigned wrong diagnosis codes to 500 patients.
# The three options below are alternatives; pick one.
from delta.tables import DeltaTable

delta_table = DeltaTable.forPath(spark, "data/delta/training_features")

# Option 1: Restore the entire table to pre-error state
delta_table.restoreToVersion(0)  # Back to version 0

# Option 2: Restore to a specific timestamp
delta_table.restoreToTimestamp("2026-01-31")

# Option 3: Selectively fix by reading the clean version and merging corrections
# Clean pre-error snapshot, kept for comparison against the corrected rows
df_clean = spark.read.format("delta") \
    .option("versionAsOf", 0) \
    .load("data/delta/training_features")
df_corrections = spark.read.parquet("data/fixes/corrected_diagnoses.parquet")

# Merge corrections into current table (this creates a new table version)
delta_table.alias("target").merge(
    df_corrections.alias("source"),
    "target.patient_id = source.patient_id AND target.encounter_id = source.encounter_id"
).whenMatchedUpdateAll().execute()
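Time travel and restore both rest on a transaction log that records which data files each commit adds and removes. The following is a simplified model of that idea rather than Delta Lake's actual log format: the `Commit` dataclass, the `snapshot` and `restore` helpers, and the file names are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class Commit:
    """One table version: the data files it adds and removes (simplified)."""
    operation: str
    add: list = field(default_factory=list)
    remove: list = field(default_factory=list)


def snapshot(log: list, version: int) -> set:
    """Replay commits 0..version to find the data files live at that version."""
    if not 0 <= version < len(log):
        raise ValueError(f"version {version} is not in the log")
    live = set()
    for commit in log[: version + 1]:
        live -= set(commit.remove)
        live |= set(commit.add)
    return live


def restore(log: list, version: int) -> int:
    """Append a commit that makes the table match an older version."""
    target = snapshot(log, version)
    current = snapshot(log, len(log) - 1)
    log.append(
        Commit("RESTORE", add=sorted(target - current), remove=sorted(current - target))
    )
    return len(log) - 1  # history is extended, never rewritten


log = [
    Commit("WRITE", add=["part-000.parquet"]),   # version 0: initial write
    Commit("WRITE", add=["part-001.parquet"]),   # version 1: append
    Commit("UPDATE", add=["part-002.parquet"],   # version 2: copy-on-write update
           remove=["part-001.parquet"]),
]

files_v0 = snapshot(log, 0)
files_v2 = snapshot(log, 2)
restored_version = restore(log, 0)
files_after_restore = snapshot(log, restored_version)

print(sorted(files_v0))
print(sorted(files_v2))
print(restored_version, sorted(files_after_restore))
```

Because a restore is itself a new commit, the versions written before it remain queryable, which is what lets an investigation compare the table before and after a correction.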

Tool Comparison: DVC vs LakeFS vs Delta Lake

Choosing the right data versioning tool depends on your data architecture, team size, and specific healthcare ML requirements. Here is a detailed comparison across the dimensions that matter most for clinical ML projects.

| Dimension | DVC | LakeFS | Delta Lake |
|---|---|---|---|
| Versioning unit | Files | Object storage paths | Tables (transaction log over data files) |
| Branching | Via Git branches | Native zero-copy branches | No branching (time travel only) |
| Storage overhead | Deduplicated (content-addressable) | Zero-copy (no duplication) | Copy-on-write (new files per version) |
| Query integration | Must download to query | S3-compatible API (Spark, Pandas, etc.) | Native Spark, Pandas via delta-rs |
| Infrastructure | CLI only (no server needed) | Server + S3 gateway | Requires Spark or delta-rs |
| Learning curve | Low (Git-like commands) | Medium (S3 gateway concepts) | Medium (Spark/lakehouse concepts) |
| Best for | Small-medium teams, file-based ML | Large data lakes, multi-team | Data warehouse/lakehouse environments |
| HIPAA compliance | Data stays in your storage | Data stays in your storage | Data stays in your storage |
| CI/CD integration | Excellent (dvc pull/push in pipelines) | Good (lakectl in pipelines) | Good (via Spark jobs) |
| Cost | Free + storage costs | Free (open source) or managed | Free + Spark compute costs |

Why Versioning Matters for FDA SaMD Compliance

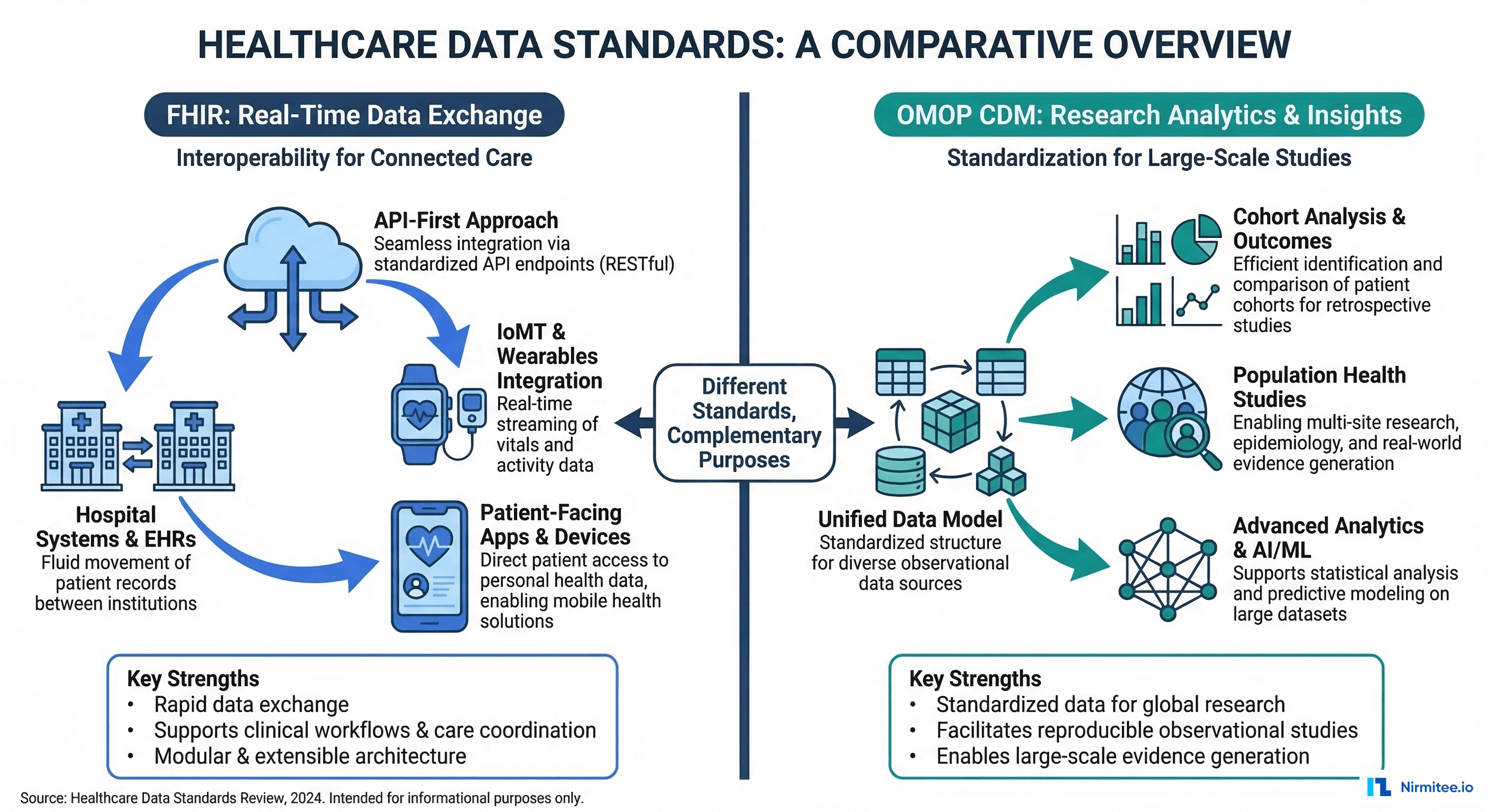

The FDA's guidance on Software as a Medical Device establishes that organizations must maintain complete documentation of the data used to develop, train, and validate ML-based medical devices. Specifically, the FDA expects evidence of data provenance (where did the data come from?), data integrity (was the data modified between collection and model training?), data representativeness (does the training data represent the intended patient population?), and reproducibility (can the model training be exactly replicated with the same results?).

Data versioning tools provide the technical foundation for meeting these requirements. When the DVC hash of the training data is recorded in a Git commit, and that commit is linked to an MLflow run, you have a complete chain of evidence: this specific model (MLflow run ID) was trained on this specific data (DVC hash) using this specific code (Git commit SHA). This is the kind of traceability chain that supports a SaMD 510(k) or De Novo submission.

# Traceability record linking code, data, and model
import json
import os
from datetime import datetime, timezone

def create_training_record(git_sha, dvc_hash, mlflow_run_id, metrics):
    """Create an audit record for FDA SaMD compliance."""
    record = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "code_version": {
            "git_sha": git_sha,
            "repository": "github.com/org/readmission-model",
            "branch": "main"
        },
        "data_version": {
            "dvc_hash": dvc_hash,
            "data_source": "ehr-fhir-export",
            "record_count": metrics["data_rows"],
            "date_range": metrics["date_range"],
            "population": "adult inpatients, all payers"
        },
        "model_version": {
            "mlflow_run_id": mlflow_run_id,
            "framework": "xgboost",
            "model_type": "binary_classification"
        },
        "validation_results": {
            "auroc": metrics["auroc"],
            "sensitivity": metrics["sensitivity"],
            "specificity": metrics["specificity"],
            "bias_audit_passed": metrics["bias_passed"]
        },
        "regulatory": {
            "intended_use": "30-day readmission risk prediction",
            "risk_class": "Class II (SaMD)",
            "predicate_device": "K123456"
        }
    }

    # Write once: mode "x" refuses to overwrite an existing record.
    # True immutability needs storage-level controls (e.g., S3 Object Lock).
    os.makedirs("audit", exist_ok=True)
    filepath = f"audit/training_record_{mlflow_run_id}.json"
    with open(filepath, "x") as f:
        json.dump(record, f, indent=2)
    return record

This traceability pattern integrates with the broader healthcare ML CI/CD pipeline, where every stage produces audit artifacts that collectively document the model's development history. Organizations building clinical ML models should implement data versioning from day one: retrofitting versioning onto an existing unversioned data pipeline is significantly harder than building it in from the start.
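An audit record is only useful as evidence if later changes to it can be detected. One option, sketched here as an assumption rather than a regulatory requirement, is to store a digest of each record separately from the record itself; the `record_digest` and `verify_record` helpers and the placeholder values are hypothetical.

```python
import hashlib
import hmac
import json


def record_digest(record: dict) -> str:
    """SHA-256 over a canonical JSON encoding, so key order does not matter."""
    canonical = json.dumps(record, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def verify_record(record: dict, expected_digest: str) -> bool:
    """Return True only if the record still matches the digest taken at creation."""
    return hmac.compare_digest(record_digest(record), expected_digest)


# Placeholder values standing in for a real training record
record = {
    "code_version": {"git_sha": "0000000"},
    "data_version": {"dvc_hash": "abc123def456"},
    "model_version": {"mlflow_run_id": "run-001"},
}
digest = record_digest(record)

# Simulate someone editing the data hash after the fact
tampered = json.loads(json.dumps(record))
tampered["data_version"]["dvc_hash"] = "ffffffffffff"

print(verify_record(record, digest))
print(verify_record(tampered, digest))
```

The digest could be written to a separate append-only log or attached to the MLflow run, so that the record file and its checksum are not stored in the same mutable location.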

Practical Recommendations

Choose DVC If...

- Your team is small (1-5 data scientists)

- You work primarily with file-based datasets (CSV, Parquet, HDF5)

- You want the lowest setup overhead and learning curve

- Your CI/CD pipeline already uses Git and can add dvc pull commands

- You need to version model artifacts alongside data

Choose LakeFS If...

- You have a large data lake (100GB+ with many tables/files)

- Multiple teams need isolated data environments

- You want zero-copy branching to avoid storage duplication

- Your tools already read from S3/GCS and you want transparent versioning

- You need atomic commits across multiple files/tables

Choose Delta Lake If...

- You use Spark or a lakehouse architecture (Databricks, etc.)

- You need row-level updates (UPDATE, DELETE, MERGE) combined with time travel queries

- Your training data is managed as structured tables, not raw files

- You want ACID transactions on your data lake

- You need to merge corrections into a table while keeping earlier versions queryable

Frequently Asked Questions

Can I use Git LFS instead of DVC for healthcare data?

Git LFS works for simple cases where you have a few large files that change infrequently. However, DVC offers significant advantages for ML workflows: pipeline definitions that track data-code-model dependencies, built-in metrics comparison across experiments, content-addressable storage that deduplicates identical data across branches, and native integration with ML experiment tracking tools. For any project beyond a proof of concept, DVC is worth the minimal additional setup.

How do these tools handle PHI and HIPAA compliance?

All three tools store data in your own infrastructure (S3, GCS, Azure, on-premises). When self-hosted, none of them transmit data to third-party services. DVC stores metadata pointers in Git and data in your remote storage. LakeFS runs as a gateway in front of your storage. Delta Lake writes versioned Parquet files to your storage. HIPAA compliance depends on configuring the underlying storage correctly: encryption at rest (AES-256), encryption in transit (TLS), access logging, and IAM policies. The versioning tools do not move PHI outside that boundary, but any server you add, such as the LakeFS gateway, needs the same access controls and logging as the storage behind it.

What about versioning unstructured clinical data like imaging or notes?

DVC and LakeFS handle unstructured data well because they version files and objects of any format. A 500MB chest X-ray DICOM file or a collection of clinical note PDFs can be versioned the same way as a CSV. Delta Lake is less suited for unstructured data because it is designed for tabular data. For imaging ML projects, DVC or LakeFS are the better choices.

How much storage overhead does data versioning add?

DVC uses content-addressable storage, so identical files across versions are stored once; deduplication is per file, so a file that changes is stored again in full. LakeFS uses zero-copy branching, so branches share the same underlying objects until a path is modified. Delta Lake uses copy-on-write, creating new Parquet files for the data that changes in each version while retaining unchanged files. As a rough guide, expect 20-50% storage overhead for DVC and LakeFS, and 50-100% for Delta Lake, depending on how often and how much the data changes. The cost is small compared to the regulatory and operational risks of unversioned data.
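Actual overhead depends mostly on how much of the dataset changes between versions. A back-of-the-envelope sketch makes the relationship explicit; the function names and the `changed_fraction` values are illustrative assumptions, not measurements from any of the three tools.

```python
def full_copies_gb(dataset_gb: float, versions: int) -> float:
    """Storage if every version is kept as a complete copy."""
    return dataset_gb * versions


def deduplicated_gb(dataset_gb: float, versions: int, changed_fraction: float) -> float:
    """Storage if the first version is kept in full and each later version
    adds only the share of data (by size) that differs from its predecessor."""
    if not 0.0 <= changed_fraction <= 1.0:
        raise ValueError("changed_fraction must be between 0 and 1")
    return dataset_gb * (1 + (versions - 1) * changed_fraction)


def overhead_pct(stored_gb: float, dataset_gb: float) -> float:
    """Extra storage relative to keeping a single copy of the dataset."""
    return 100 * (stored_gb - dataset_gb) / dataset_gb


dataset_gb, versions = 10, 50
print(f"full copies: {full_copies_gb(dataset_gb, versions):.0f} GB")
for changed_fraction in (0.01, 0.05, 1.0):  # assumed values for illustration
    stored = deduplicated_gb(dataset_gb, versions, changed_fraction)
    print(
        f"changed_fraction={changed_fraction:.2f}: "
        f"{stored:.1f} GB stored, {overhead_pct(stored, dataset_gb):.0f}% overhead"
    )
```

A `changed_fraction` of 1.0 models a single monolithic file that is rewritten on every update, in which case file-level deduplication saves nothing. Splitting a dataset into partitioned files is what lets deduplication and copy-on-write pay off.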

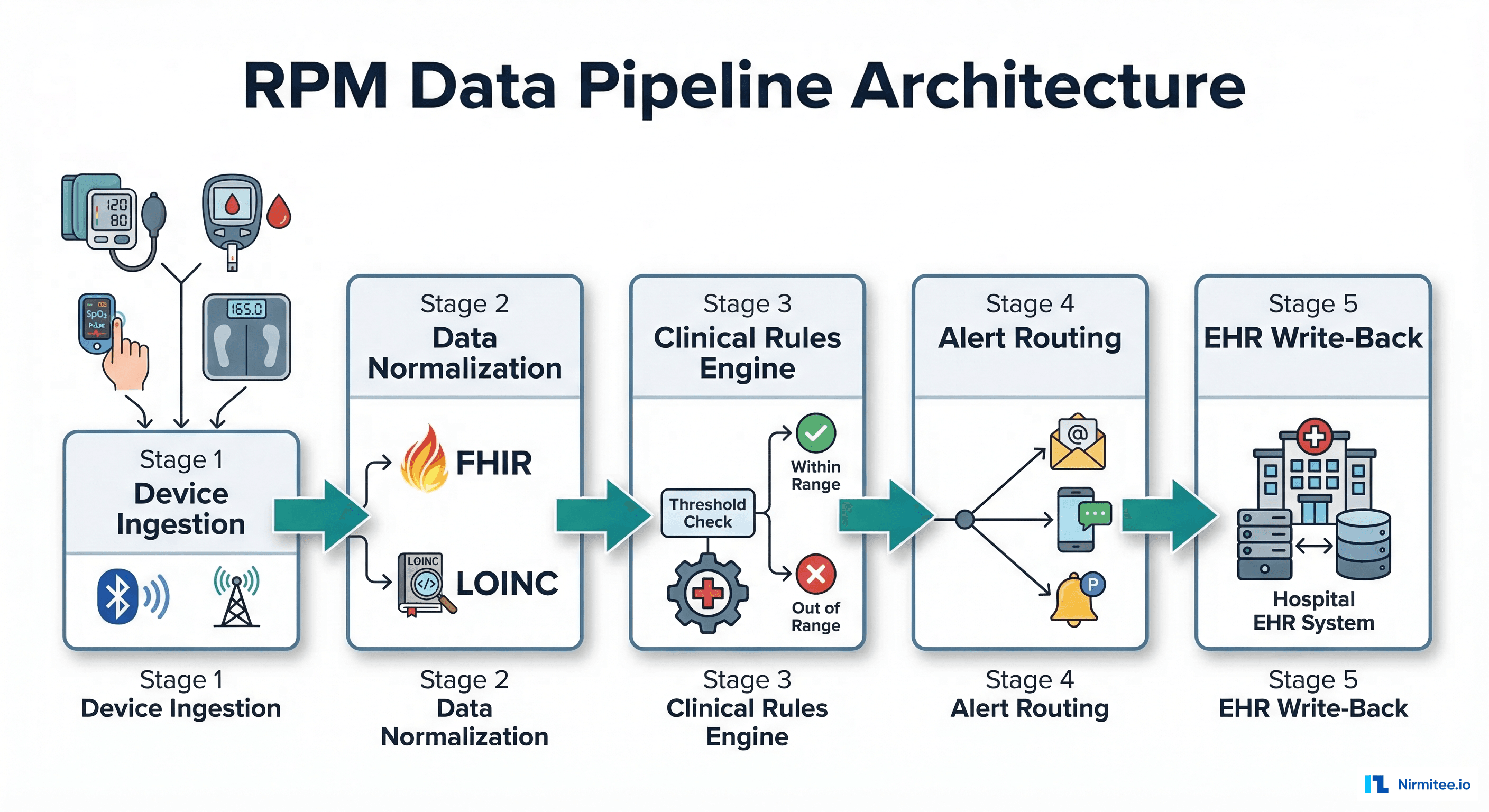

Can these tools integrate with FHIR data pipelines?

Yes. FHIR Bulk Data Export produces NDJSON files that can be tracked by DVC as regular data files, stored in LakeFS-managed object storage, or loaded into Delta Lake tables. The typical pattern is: export FHIR data via $export, run feature engineering scripts to produce training datasets, and version the resulting feature tables with your chosen tool. The raw FHIR exports and the processed features should both be versioned to maintain complete data lineage.
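Before handing a raw export to a versioning tool, it can help to record what the export contains. The sketch below parses NDJSON (one JSON resource per line) and builds a small manifest; the `summarize_export` helper and the sample resources are hypothetical, and real exports typically produce one file per resource type rather than the mixed sample used here for brevity.

```python
import hashlib
import json
from collections import Counter


def summarize_export(ndjson_text: str) -> dict:
    """Count resources by type and fingerprint the raw export text."""
    counts = Counter()
    for line in ndjson_text.splitlines():
        if not line.strip():
            continue  # tolerate blank lines between resources
        resource = json.loads(line)
        counts[resource["resourceType"]] += 1
    return {
        "sha256": hashlib.sha256(ndjson_text.encode("utf-8")).hexdigest(),
        "resource_counts": dict(counts),
    }


# Hypothetical sample: three minimal FHIR resources, one per line
sample_export = "\n".join(
    [
        json.dumps({"resourceType": "Patient", "id": "p1"}),
        json.dumps({"resourceType": "Encounter", "id": "e1",
                    "subject": {"reference": "Patient/p1"}}),
        json.dumps({"resourceType": "Encounter", "id": "e2",
                    "subject": {"reference": "Patient/p1"}}),
    ]
)

manifest = summarize_export(sample_export)
print(json.dumps(manifest["resource_counts"], indent=2))
```

Committing a manifest like this next to the versioned export gives reviewers a quick check that the feature tables were derived from the expected raw data.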