Why Databricks Is Winning the Healthcare Data Platform Race

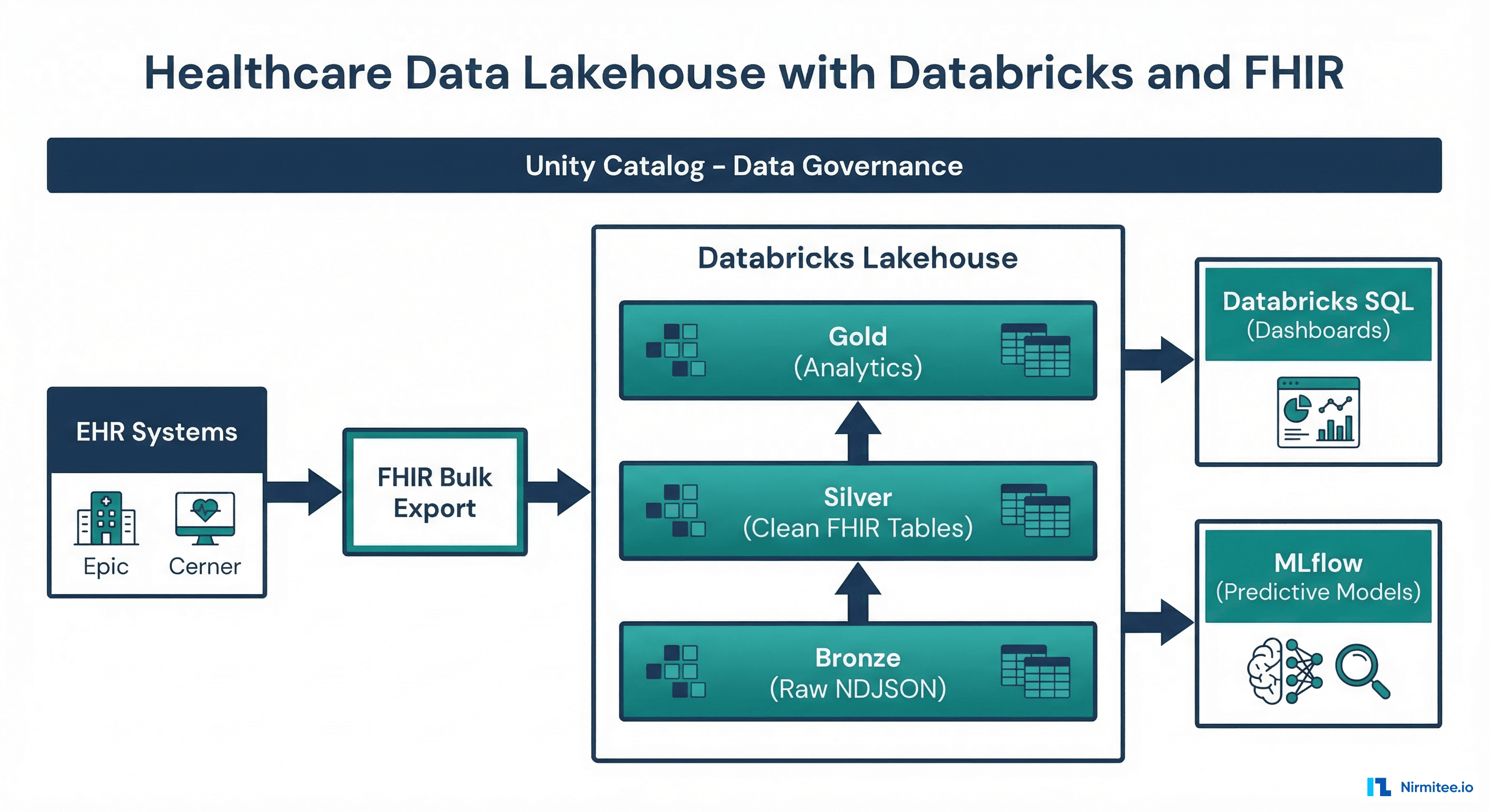

The healthcare data platform market is consolidating around lakehouse architectures, and Databricks is leading the charge. Innovaccer, one of the largest healthcare data platforms serving 70+ health systems, migrated to Databricks. Health Catalyst built their next-gen analytics on the lakehouse pattern. Microsoft's Cloud for Healthcare recommends Databricks as the analytics engine.

The reason is straightforward: healthcare organizations need to run SQL dashboards for clinical operations, train ML models for risk prediction, and maintain HIPAA-compliant data governance — all on the same platform. Databricks' lakehouse architecture handles all three workloads without the data duplication and governance fragmentation of separate warehouse + lake architectures.

This guide walks through building a production healthcare lakehouse on Databricks — from FHIR Bulk $export ingestion through medallion transformations to serving clinical dashboards and ML models.

Step 1: FHIR Bulk Data Export to Delta Lake

Understanding FHIR Bulk Data Access

FHIR Bulk Data Access ($export) is the standard mechanism for extracting large volumes of clinical data from EHR systems. Instead of querying individual patient records via REST, $export produces NDJSON files — one JSON object per line, one file per resource type — that can be downloaded in bulk.

Most major EHR vendors now support Bulk Data: Epic (since 2020), Cerner/Oracle Health (since 2021), and Meditech (since 2023). The CMS Interoperability Rules effectively mandate this capability.

Auto Loader: Incremental FHIR Ingestion

Databricks Auto Loader is the recommended ingestion mechanism for FHIR NDJSON files. It provides exactly-once processing guarantees, automatic schema detection, and file-level checkpointing — meaning if a pipeline fails mid-batch, it resumes from the exact file where it stopped.

from pyspark.sql.functions import (

current_timestamp, current_date, input_file_name,

get_json_object, lit, regexp_extract

)

def configure_auto_loader(source_path, checkpoint_path, source_system):

"""

Configure Auto Loader for FHIR NDJSON ingestion.

Uses file notification mode for S3 (faster than directory listing).

"""

return (

spark.readStream

.format("cloudFiles")

.option("cloudFiles.format", "text")

.option("cloudFiles.schemaLocation", f"{checkpoint_path}/schema")

.option("cloudFiles.useNotifications", "true") # S3 SNS/SQS

.option("cloudFiles.maxFilesPerTrigger", "1000")

.option("cloudFiles.maxBytesPerTrigger", "1g")

.load(source_path)

.withColumn("raw_json", col("value"))

.withColumn("resource_type",

get_json_object("value", "$.resourceType"))

.withColumn("resource_id",

get_json_object("value", "$.id"))

.withColumn("source_system", lit(source_system))

.withColumn("source_file", input_file_name())

.withColumn("resource_type_from_path",

regexp_extract(input_file_name(), r"/([A-Z][a-z]+)\.ndjson", 1))

.withColumn("ingestion_ts", current_timestamp())

.withColumn("ingestion_date", current_date())

)

def write_to_bronze(stream_df, checkpoint_path):

"""Write Auto Loader stream to Bronze Delta table."""

return (

stream_df

.select("raw_json", "resource_type", "resource_id",

"source_system", "source_file",

"ingestion_ts", "ingestion_date")

.writeStream

.format("delta")

.outputMode("append")

.option("checkpointLocation", checkpoint_path)

.partitionBy("ingestion_date", "resource_type")

.trigger(availableNow=True)

.toTable("healthcare_prod.bronze.fhir_resources")

)

# Run ingestion

stream = configure_auto_loader(

source_path="s3://acme-health-fhir/exports/",

checkpoint_path="s3://acme-health-fhir/_checkpoints/bronze",

source_system="epic-prod"

)

query = write_to_bronze(stream, "s3://acme-health-fhir/_checkpoints/bronze")

query.awaitTermination()

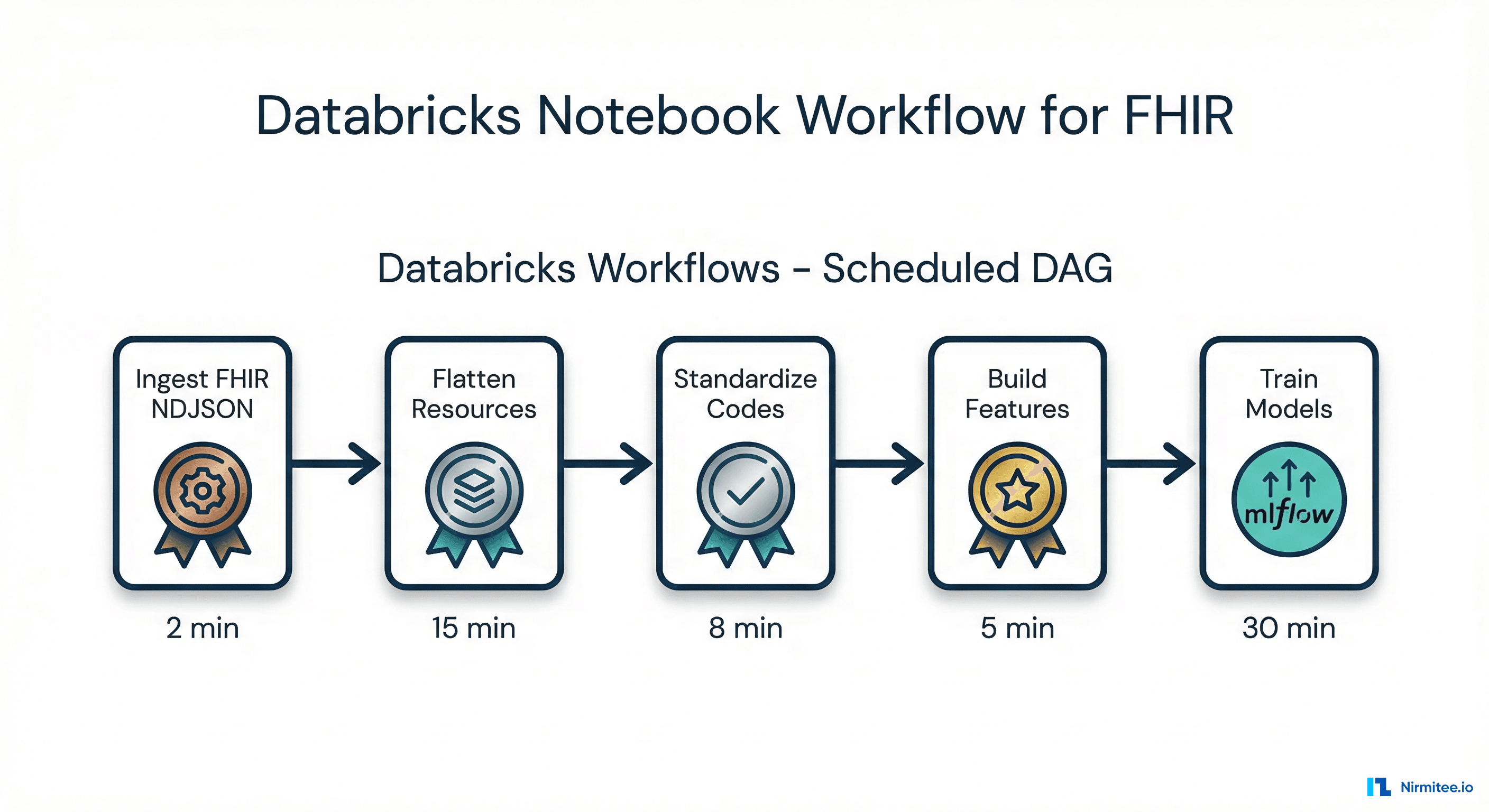

Step 2: Medallion Transformations in Databricks Notebooks

Notebook Architecture

In production, each medallion transformation runs as a separate Databricks notebook orchestrated by a Databricks Workflow (their managed DAG scheduler). This provides clear separation of concerns, independent failure handling, and granular monitoring.

Silver Layer: FHIR Resource Flattening

The Silver notebook transforms nested FHIR JSON into relational Delta tables. Here's the core transformation for the five most common clinical resources:

from pyspark.sql.functions import col, get_json_object, current_timestamp, coalesce, lit

from delta.tables import DeltaTable

def transform_patients_to_silver():

"""Flatten FHIR Patient resources into silver.patients Delta table."""

bronze = spark.table("healthcare_prod.bronze.fhir_resources") \

.filter(col("resource_type") == "Patient")

silver_df = bronze.select(

get_json_object("raw_json", "$.id").alias("patient_id"),

get_json_object("raw_json", "$.meta.lastUpdated").cast("timestamp").alias("last_updated"),

get_json_object("raw_json", "$.name[0].family").alias("last_name"),

get_json_object("raw_json", "$.name[0].given[0]").alias("first_name"),

get_json_object("raw_json", "$.birthDate").cast("date").alias("birth_date"),

get_json_object("raw_json", "$.gender").alias("gender"),

get_json_object("raw_json", "$.address[0].line[0]").alias("address_line"),

get_json_object("raw_json", "$.address[0].city").alias("city"),

get_json_object("raw_json", "$.address[0].state").alias("state"),

get_json_object("raw_json", "$.address[0].postalCode").alias("zip_code"),

get_json_object("raw_json", "$.telecom[0].value").alias("phone"),

get_json_object("raw_json", "$.maritalStatus.coding[0].code").alias("marital_status"),

col("source_system"),

current_timestamp().alias("silver_ts")

)

# MERGE for upsert (handle updated patient records)

if spark.catalog.tableExists("healthcare_prod.silver.patients"):

target = DeltaTable.forName(spark, "healthcare_prod.silver.patients")

target.alias("t").merge(

silver_df.alias("s"),

"t.patient_id = s.patient_id AND t.source_system = s.source_system"

).whenMatchedUpdateAll(

condition="s.last_updated > t.last_updated"

).whenNotMatchedInsertAll().execute()

else:

silver_df.write.format("delta") \

.saveAsTable("healthcare_prod.silver.patients")

def transform_observations_to_silver():

"""Flatten FHIR Observation resources with code standardization."""

bronze = spark.table("healthcare_prod.bronze.fhir_resources") \

.filter(col("resource_type") == "Observation")

silver_df = bronze.select(

get_json_object("raw_json", "$.id").alias("observation_id"),

get_json_object("raw_json", "$.subject.reference").alias("patient_ref"),

get_json_object("raw_json", "$.encounter.reference").alias("encounter_ref"),

get_json_object("raw_json", "$.code.coding[0].system").alias("code_system"),

get_json_object("raw_json", "$.code.coding[0].code").alias("code"),

get_json_object("raw_json", "$.code.coding[0].display").alias("code_display"),

get_json_object("raw_json", "$.valueQuantity.value").cast("double").alias("value_numeric"),

get_json_object("raw_json", "$.valueQuantity.unit").alias("value_unit"),

get_json_object("raw_json", "$.valueCodeableConcept.coding[0].code").alias("value_code"),

get_json_object("raw_json", "$.valueString").alias("value_string"),

get_json_object("raw_json", "$.effectiveDateTime").cast("timestamp").alias("effective_dt"),

get_json_object("raw_json", "$.status").alias("status"),

get_json_object("raw_json", "$.category[0].coding[0].code").alias("category"),

col("source_system"),

current_timestamp().alias("silver_ts")

)

# Clean patient reference (remove "Patient/" prefix)

silver_df = silver_df.withColumn(

"patient_id",

regexp_replace(col("patient_ref"), "^Patient/", "")

)

silver_df.write.format("delta").mode("overwrite") \

.option("overwriteSchema", "true") \

.saveAsTable("healthcare_prod.silver.observations")Step 3: Unity Catalog for Healthcare Data Governance

Unity Catalog is Databricks' unified governance layer — and for healthcare, it solves the critical problem of PHI access control at the column and row level.

Setting Up Healthcare-Specific Governance

-- Create catalog and schemas for healthcare lakehouse

CREATE CATALOG IF NOT EXISTS healthcare_prod;

USE CATALOG healthcare_prod;

CREATE SCHEMA IF NOT EXISTS bronze COMMENT 'Raw ingested data - append only';

CREATE SCHEMA IF NOT EXISTS silver COMMENT 'Cleaned and standardized data';

CREATE SCHEMA IF NOT EXISTS gold COMMENT 'Analytics-ready aggregated data';

CREATE SCHEMA IF NOT EXISTS reference COMMENT 'Terminology maps and lookup tables';

CREATE SCHEMA IF NOT EXISTS quality COMMENT 'Data quality metrics and reports';

-- Column-level security: mask PHI for non-privileged users

CREATE FUNCTION IF NOT EXISTS mask_ssn(ssn STRING)

RETURNS STRING

RETURN CASE

WHEN is_member('phi_authorized') THEN ssn

ELSE CONCAT('***-**-', RIGHT(ssn, 4))

END;

CREATE FUNCTION IF NOT EXISTS mask_dob(dob DATE)

RETURNS STRING

RETURN CASE

WHEN is_member('phi_authorized') THEN CAST(dob AS STRING)

ELSE CONCAT(YEAR(dob), '-XX-XX')

END;

-- Apply masking to Silver patient table

ALTER TABLE silver.patients

ALTER COLUMN birth_date SET MASK mask_dob;

-- Row-level security: filter by facility for multi-tenant access

CREATE FUNCTION IF NOT EXISTS facility_filter(facility_id STRING)

RETURNS BOOLEAN

RETURN CASE

WHEN is_member('all_facilities') THEN TRUE

ELSE facility_id = current_user_facility()

END;

ALTER TABLE silver.encounters SET ROW FILTER facility_filter ON (facility_id);

-- Tag PHI columns for discovery and audit

ALTER TABLE silver.patients ALTER COLUMN birth_date SET TAGS ('phi' = 'true');

ALTER TABLE silver.patients ALTER COLUMN last_name SET TAGS ('phi' = 'true');

ALTER TABLE silver.patients ALTER COLUMN zip_code SET TAGS ('phi' = 'true');

ALTER TABLE silver.patients ALTER COLUMN phone SET TAGS ('phi' = 'true');Step 4: MLflow Integration for Healthcare Models

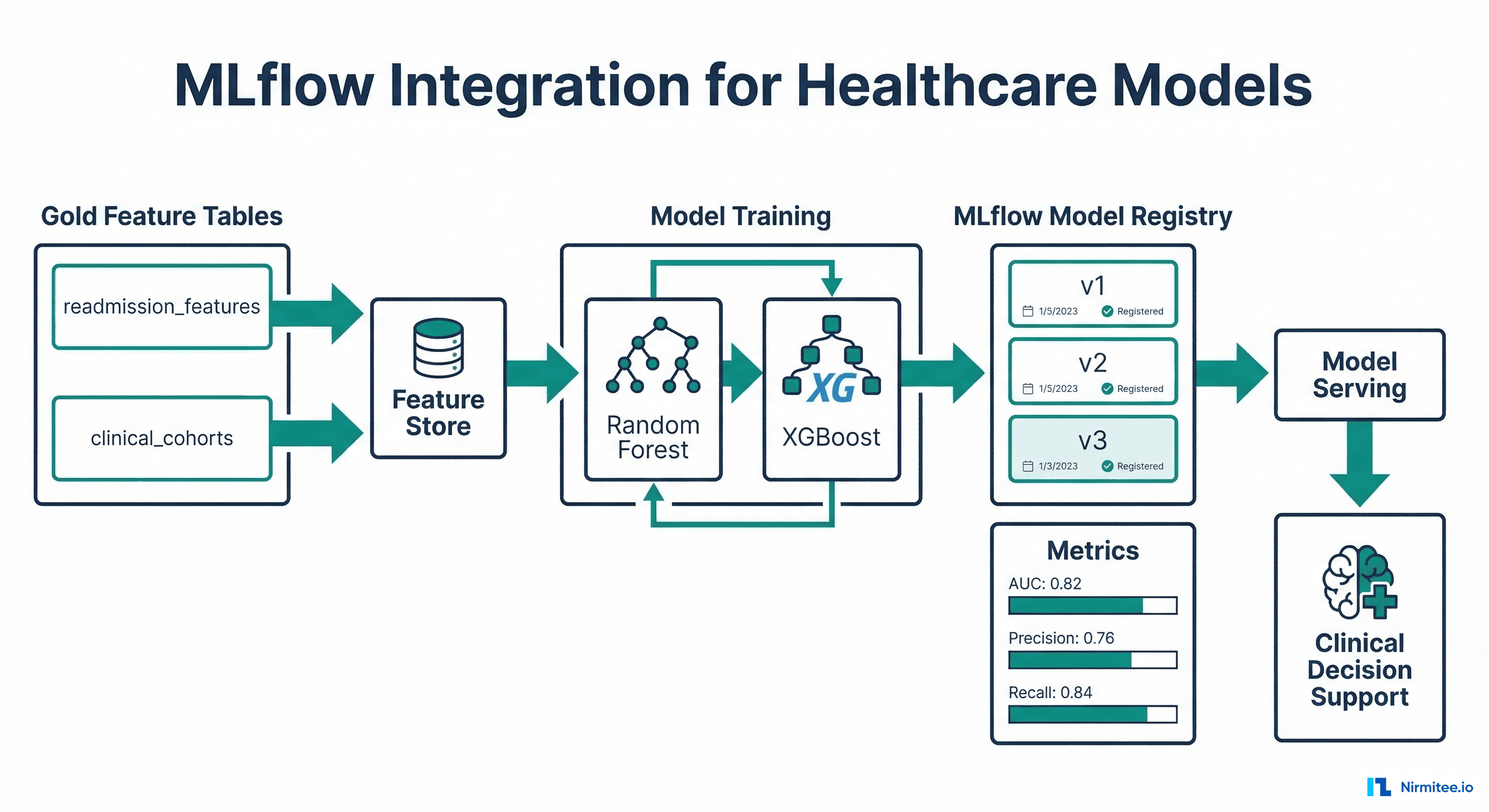

The lakehouse advantage for ML is that training data lives in the same platform as the feature store and model registry. No ETL between systems — Gold tables serve as feature sources, and MLflow tracks experiments, models, and deployments.

import mlflow

from mlflow.tracking import MlflowClient

from sklearn.ensemble import GradientBoostingClassifier

from sklearn.model_selection import train_test_split

from sklearn.metrics import roc_auc_score, precision_score, recall_score

# Load features from Gold table

features_df = spark.table("healthcare_prod.gold.readmission_features").toPandas()

# Prepare training data

feature_cols = [

"total_encounters", "ed_visits_total", "inpatient_admits_12m",

"avg_los_days", "unique_conditions", "has_diabetes", "has_chf"

]

X = features_df[feature_cols].fillna(0)

y = features_df["readmitted_30d"].fillna(0)

X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=0.2, random_state=42)

# Train with MLflow tracking

mlflow.set_experiment("/healthcare/readmission-prediction")

with mlflow.start_run(run_name="gbm-readmission-v3") as run:

model = GradientBoostingClassifier(

n_estimators=200, max_depth=5, learning_rate=0.1,

min_samples_leaf=20, subsample=0.8

)

model.fit(X_train, y_train)

# Evaluate

y_pred_proba = model.predict_proba(X_test)[:, 1]

y_pred = model.predict(X_test)

auc = roc_auc_score(y_test, y_pred_proba)

precision = precision_score(y_test, y_pred)

recall = recall_score(y_test, y_pred)

# Log metrics and model

mlflow.log_params({"n_estimators": 200, "max_depth": 5, "lr": 0.1})

mlflow.log_metrics({"auc": auc, "precision": precision, "recall": recall})

mlflow.sklearn.log_model(model, "readmission_model",

registered_model_name="healthcare-readmission-predictor")

print(f"AUC: {auc:.3f}, Precision: {precision:.3f}, Recall: {recall:.3f}")Step 5: Databricks SQL for Clinical Dashboards

Databricks SQL provides a serverless SQL warehouse that clinical analysts can query directly. Gold tables power dashboards without requiring Spark knowledge.

-- Dashboard query: Real-time ED census

SELECT

facility_name,

COUNT(*) AS current_census,

AVG(TIMESTAMPDIFF(MINUTE, arrival_time, CURRENT_TIMESTAMP)) AS avg_wait_minutes,

SUM(CASE WHEN acuity = 'ESI-1' OR acuity = 'ESI-2' THEN 1 ELSE 0 END) AS critical_patients,

SUM(CASE WHEN disposition IS NULL THEN 1 ELSE 0 END) AS awaiting_disposition

FROM gold.ed_census

WHERE status = 'active'

GROUP BY facility_name

ORDER BY current_census DESC;

-- Dashboard query: 30-day readmission trend

SELECT

DATE_TRUNC('week', discharge_date) AS week,

COUNT(*) AS total_discharges,

SUM(CASE WHEN readmitted_30d = TRUE THEN 1 ELSE 0 END) AS readmissions,

ROUND(100.0 * SUM(CASE WHEN readmitted_30d = TRUE THEN 1 ELSE 0 END) / COUNT(*), 1)

AS readmission_rate_pct

FROM gold.discharge_outcomes

WHERE discharge_date >= DATE_SUB(CURRENT_DATE, 180)

GROUP BY DATE_TRUNC('week', discharge_date)

ORDER BY week;

-- Dashboard query: High-risk patients for care management

SELECT

p.patient_id, p.first_name, p.last_name,

f.readmission_risk_score,

f.ed_visits_total, f.inpatient_admits_12m,

f.has_diabetes, f.has_chf,

f.unique_conditions AS comorbidity_count

FROM gold.readmission_features f

JOIN silver.patients p ON f.patient_id = p.patient_id

WHERE f.readmission_risk_score > 0.7

ORDER BY f.readmission_risk_score DESC

LIMIT 50;Cost Model: Databricks vs. Alternatives

| Component | Snowflake | Databricks Lakehouse | Open Source (Iceberg + Spark) |

|---|---|---|---|

| Storage (50TB) | $48,000/yr | $14,000/yr (S3) | $14,000/yr (S3) |

| Compute | $150,000/yr (credits) | $72,000/yr (DBUs) | $45,000/yr (EMR) |

| Governance | Included | $30,000/yr (Unity Catalog) | $15,000/yr (Glue + custom) |

| ML Platform | $40,000/yr (Snowpark ML) | Included (MLflow) | $20,000/yr (SageMaker) |

| Engineering FTEs | 2.0 ($300K) | 2.0 ($300K) | 3.5 ($525K) |

| Total Annual | $538K | $416K | $619K |

Databricks provides the best total cost of ownership for healthcare organizations that need both analytics and ML. Open source is cheaper in compute but more expensive in engineering labor. Snowflake excels for pure SQL analytics but costs more for ML workloads.

FAQ: Databricks Healthcare Lakehouse

Is Databricks HIPAA-compliant?

Yes. Databricks offers HIPAA-compliant deployments on AWS, Azure, and GCP. They sign Business Associate Agreements (BAAs) and provide HITRUST CSF certification. Data remains in your cloud account — Databricks manages the compute layer only. Your HIPAA compliance checklist should include Databricks workspace configuration.

Can I use Databricks with Epic or Cerner?

Yes. Epic supports FHIR Bulk Data Export, and many Epic customers use Databricks to ingest Caboodle (Epic's data warehouse) extracts or FHIR $export NDJSON. Cerner (Oracle Health) similarly supports bulk FHIR export. The Auto Loader pipeline shown above works with any EHR that produces FHIR-compliant NDJSON.

What about real-time clinical data?

For near-real-time needs (sub-minute latency), use Delta Lake Structured Streaming instead of batch Auto Loader. Configure the trigger as processingTime="30 seconds" instead of availableNow=True. For true real-time (<1 second), complement the lakehouse with a streaming layer (Kafka + Flink) for clinical alerting, while batch analytics continue through the medallion pipeline.

How does Unity Catalog compare to AWS Lake Formation?

Unity Catalog is more tightly integrated with the Databricks compute layer, providing automatic data lineage, column-level security that works with Spark jobs (not just SQL), and cross-cloud support. Lake Formation is AWS-native and works well with Glue/Athena but lacks the ML-aware governance features. For a healthcare data architecture centered on Databricks, Unity Catalog is the better choice.

Should I use Delta Lake or Apache Iceberg?

If you are committed to Databricks, use Delta Lake — it has the deepest integration with the Databricks runtime, Unity Catalog, and MLflow. If you want to avoid vendor lock-in, Apache Iceberg provides comparable features with broader ecosystem support (Snowflake, AWS EMR, Trino). Both support ACID transactions, time travel, and schema evolution. Databricks now also supports reading Iceberg tables via UniForm.

Conclusion: The Healthcare Lakehouse Stack in 2026

The production healthcare lakehouse stack is: FHIR Bulk Export for ingestion, Databricks Auto Loader for Bronze, PySpark for Silver transforms, Unity Catalog for PHI governance, MLflow for model lifecycle, and Databricks SQL for dashboards. This stack handles the full spectrum of healthcare analytics needs — from regulatory reporting to predictive modeling — on a single, governed platform.

The Innovaccer-Databricks partnership and Microsoft's healthcare data foundations both validate this architecture pattern. For healthcare organizations currently running separate data warehouses and ML platforms, the lakehouse consolidation offers 20-40% cost reduction with better governance.

At Nirmitee, we implement healthcare lakehouses end-to-end — from EHR integration through FHIR export to production analytics. Contact our team to discuss your lakehouse migration.