Why intake is the right first build

If you are an engineer evaluating where to ship your first healthcare AI agent, patient intake is one of the cleanest entry points. The data model is well-defined, the failure surface is bounded, and the staff who own the workflow already know what good looks like. Done well, an intake agent collapses the 12 minutes the front desk spends per patient into 3 minutes of staff review on top of self-service capture — and produces structured FHIR resources rather than unstructured form fields.

This guide walks through the three-stage architecture, the FHIR resources you need to write, the failure modes that bite in production, and a working code skeleton you can adapt. It assumes you have read our pillar guide on AI agents in healthcare and are familiar with FHIR R4 at the conceptual level.

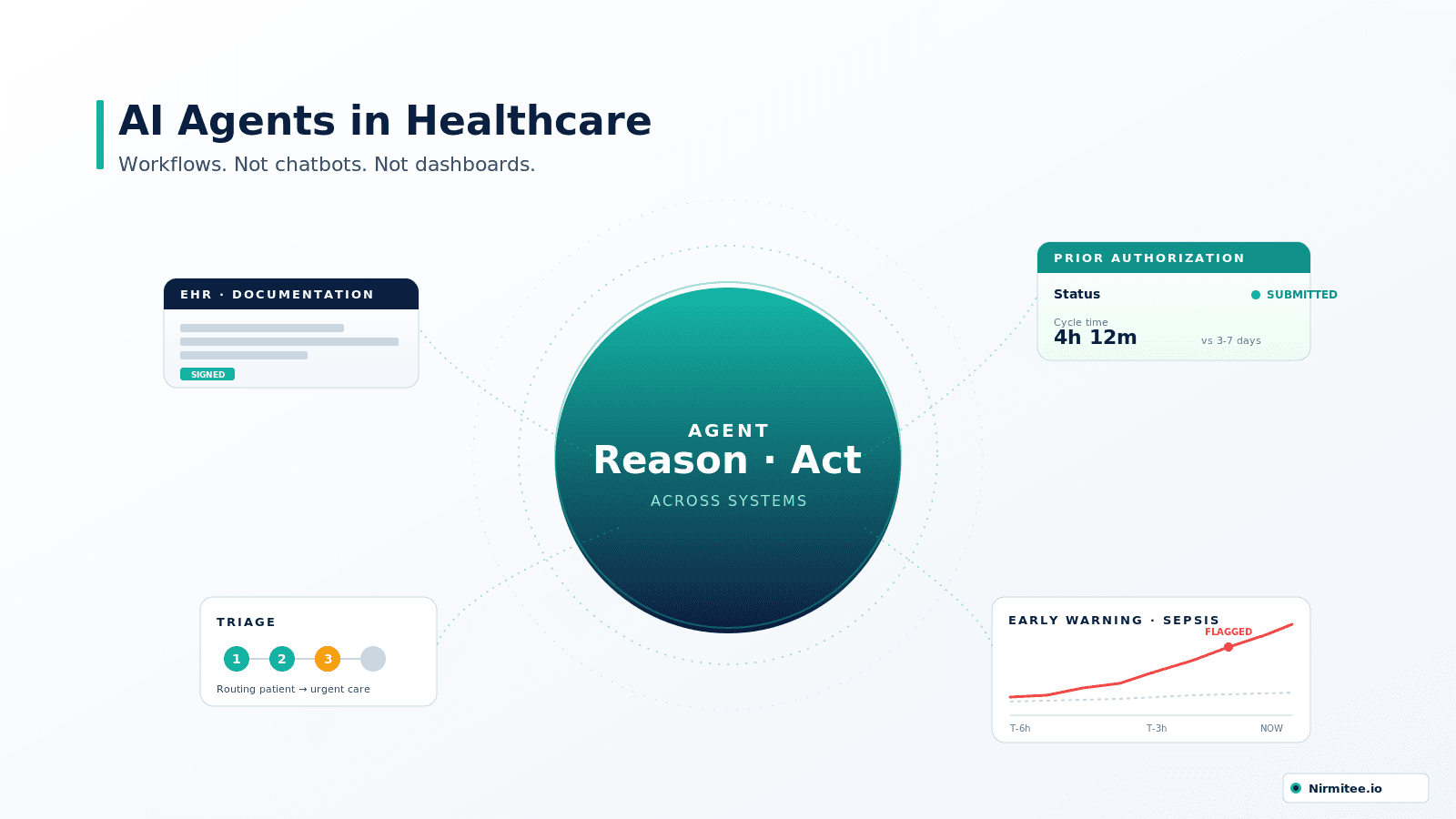

What is a patient-intake AI agent?

A patient-intake AI agent is a software workflow that captures, validates, and writes patient intake data into the EHR autonomously, with a human reviewer in the loop. It is not a chatbot. The chatbot answers; the agent acts. Three things distinguish an agent from a smart form: (1) it adapts the conversation to channel and history, (2) it produces structured FHIR resources rather than free-text fields, and (3) it issues real downstream calls — eligibility verification, scheduling, EHR writes — without staff retyping.

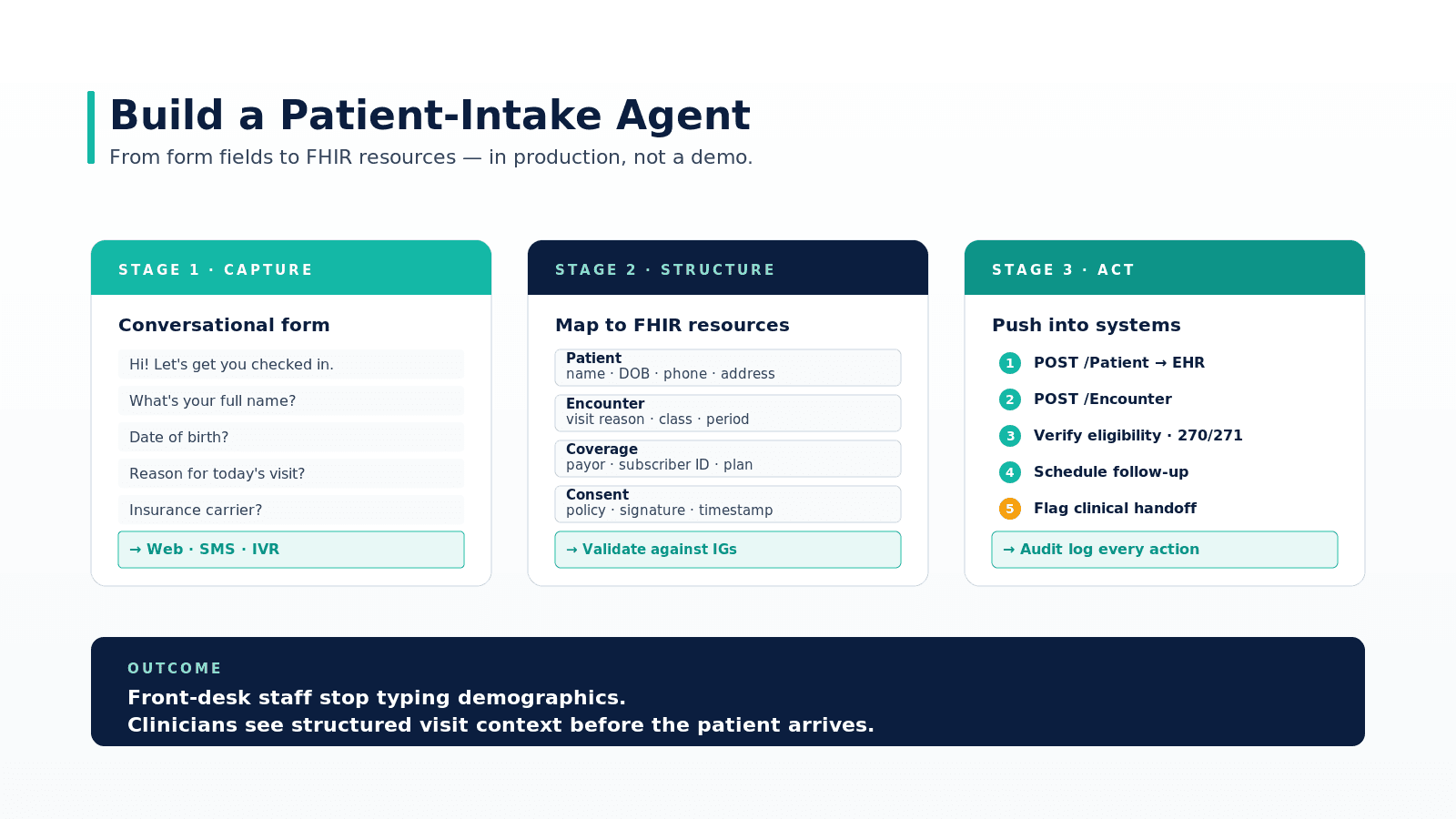

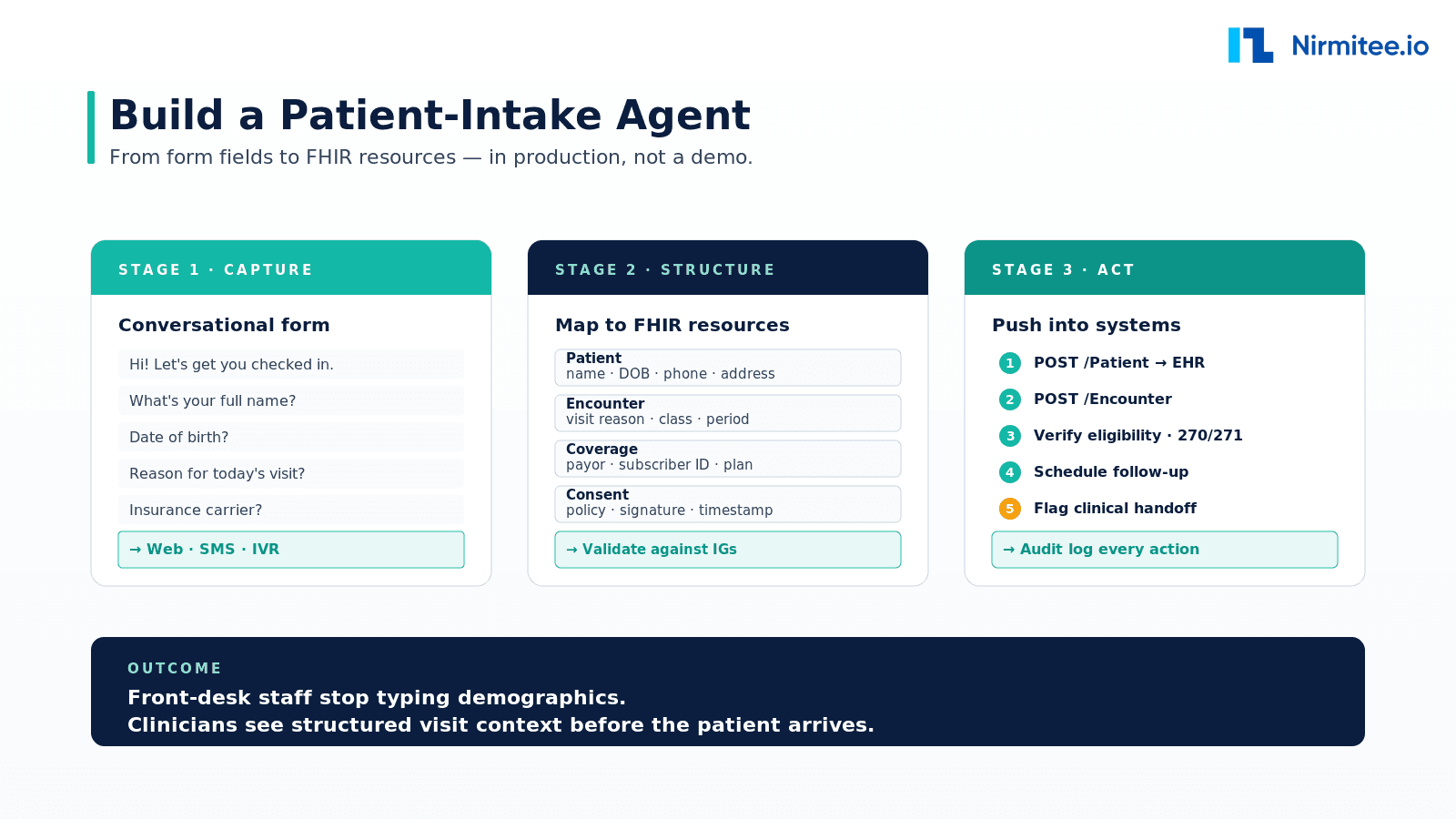

What the agent has to do, end to end

An intake agent is not a chatbot. It is a workflow engine that sits between the patient (web, SMS, voice) and the EHR. It captures the structured data the front desk would otherwise type, validates it, and writes it into the systems that downstream clinical and billing workflows depend on.

Stage 1 — Capture

The agent collects the same data your front desk asks for, but adapts the conversation to the channel and the patient. A returning patient does not need to re-enter their address; a new patient may need to confirm pronouns and language preference. The capture layer should be channel-agnostic: web embed, SMS bot, IVR, or voice in a kiosk. Where you draw the line on what to ask is a clinical-administrative decision — not a model decision.
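One way to keep the question list out of the model's hands is to compute it deterministically from what is already on file. The sketch below is illustrative only: the field names, `REQUIRED_FIELDS`, and `ALWAYS_CONFIRM` are assumptions, and the real list is whatever your clinical and administrative owners decide.

```python
from typing import Any, Dict, List, Optional

# Hypothetical field list; the real one is a clinical-administrative decision.
REQUIRED_FIELDS = ["name", "birth_date", "phone", "address", "language", "visit_reason"]

# Fields asked on every visit, even when a value is already on file.
ALWAYS_CONFIRM = {"visit_reason"}


def fields_to_ask(known: Optional[Dict[str, Any]]) -> List[str]:
    """Return the fields the agent still needs, in a stable order.

    `known` is whatever the EHR already holds for a returning patient,
    or None for a new patient.
    """
    known = known or {}
    return [
        field
        for field in REQUIRED_FIELDS
        if field in ALWAYS_CONFIRM or not known.get(field)
    ]
```

A new patient gets the full list; a returning patient with a complete record is only asked for the reason for this visit.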

Stage 2 — Structure

Every conversation field maps to a FHIR resource. Name, date of birth, and phone become a Patient resource. The reason for visit becomes an Encounter resource. Insurance details become a Coverage resource. Consent for telehealth or treatment becomes a Consent resource. The agent must validate these against your implementation guides (IGs), such as US Core or your hospital's own profiles, before they go anywhere.
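As a rough illustration of the mapping, here is a sketch that turns captured fields into minimal FHIR R4 Patient and Coverage resources as plain dictionaries. The input keys (`family_name`, `subscriber_id`, and so on) are assumptions for this example, and the only check performed is a date parse; it is not a substitute for validating against US Core or your own profiles.

```python
from datetime import date
from typing import Any, Dict


def build_patient(fields: Dict[str, str]) -> Dict[str, Any]:
    """Map captured demographics to a minimal FHIR R4 Patient resource."""
    # Raises ValueError if the date is not a valid ISO 8601 calendar date.
    birth_date = date.fromisoformat(fields["birth_date"])
    return {
        "resourceType": "Patient",
        "name": [{"family": fields["family_name"], "given": [fields["given_name"]]}],
        "birthDate": birth_date.isoformat(),
        "telecom": [{"system": "phone", "value": fields["phone"]}],
    }


def build_coverage(fields: Dict[str, str], patient_ref: str) -> Dict[str, Any]:
    """Map captured insurance details to a minimal FHIR R4 Coverage resource."""
    return {
        "resourceType": "Coverage",
        "status": "active",
        "subscriberId": fields["subscriber_id"],
        "beneficiary": {"reference": patient_ref},
        "payor": [{"display": fields["carrier"]}],
    }
```

Keeping these builders as pure functions means they can be unit-tested without an EHR sandbox, and profile validation can run on their output before any write.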

Stage 3 — Act

This is where most home-grown intake forms stop and most production agents earn their keep. The agent issues real writes:

- POST /Patient if no match, otherwise resolves to existing patient via MPI

- POST /Encounter with the visit reason and timing

- POST /Coverage with carrier, plan, subscriber ID

- Eligibility check (270/271) routed through your EDI clearinghouse

- POST /Appointment if scheduling is part of the flow

- Audit log entry for each action with actor, timestamp, scope
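The list above describes individual POSTs. If your EHR's FHIR endpoint accepts transaction Bundles (an assumption; check its CapabilityStatement), a variation is to group the new-patient writes into one Bundle so they succeed or fail together. The sketch below only builds the Bundle for the no-match path; `build_intake_bundle` is a hypothetical helper, and sending the Bundle is left to your FHIR client.

```python
import uuid
from typing import Any, Dict


def new_urn() -> str:
    """Temporary identifier the server replaces with a real resource id."""
    return f"urn:uuid:{uuid.uuid4()}"


def build_intake_bundle(
    patient: Dict[str, Any],
    encounter: Dict[str, Any],
    coverage: Dict[str, Any],
) -> Dict[str, Any]:
    """Wrap Patient, Encounter, and Coverage in one FHIR transaction Bundle."""
    patient_urn = new_urn()
    # Point the dependent resources at the not-yet-created Patient.
    encounter = {**encounter, "subject": {"reference": patient_urn}}
    coverage = {**coverage, "beneficiary": {"reference": patient_urn}}
    entries = [(patient_urn, patient), (new_urn(), encounter), (new_urn(), coverage)]
    return {
        "resourceType": "Bundle",
        "type": "transaction",
        "entry": [
            {
                "fullUrl": urn,
                "resource": resource,
                "request": {"method": "POST", "url": resource["resourceType"]},
            }
            for urn, resource in entries
        ],
    }
```

The trade-off is that a single rejected resource fails the whole write, which is usually what you want for intake: a Coverage without its Patient is worse than no write at all.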

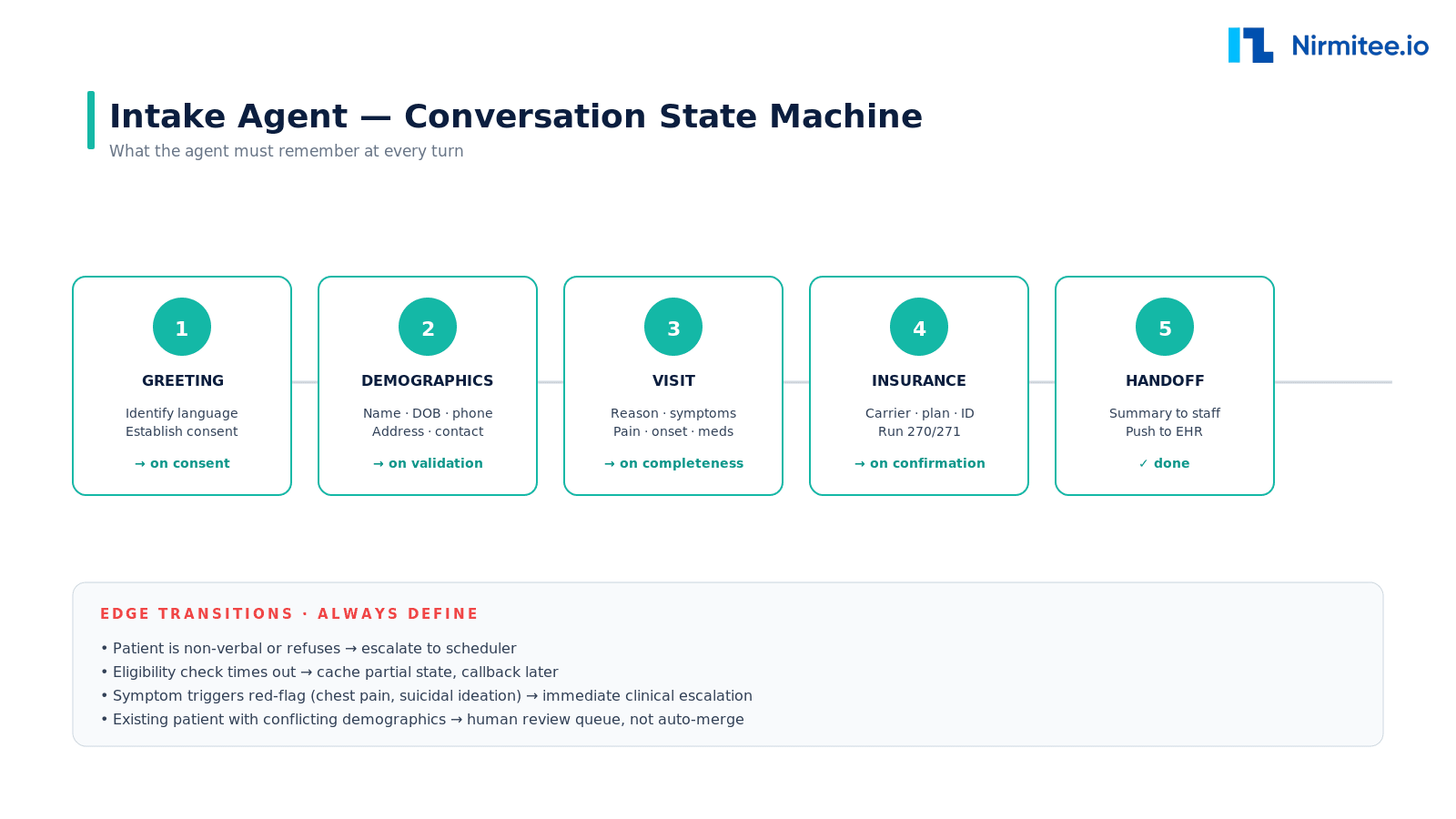

Conversation state machine

The single most important design decision is the state machine. Without one, your agent will lose the plot mid-conversation and your patients will repeat themselves into a void.

Five states cover most of intake: greeting, demographics, visit, insurance, handoff. What kills naive implementations is the edge transitions — non-verbal patients, conflicting demographics that should not auto-merge, eligibility timeouts, red-flag symptoms that warrant immediate clinical escalation. Define every one of these before you ship.
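A minimal sketch of such a state machine follows. The five states come from the text; the `ESCALATED` state, the event names, and the choice to hand off to staff on an eligibility timeout are assumptions made for illustration, and your own edge transitions will differ.

```python
from enum import Enum


class State(Enum):
    GREETING = "greeting"
    DEMOGRAPHICS = "demographics"
    VISIT = "visit"
    INSURANCE = "insurance"
    HANDOFF = "handoff"
    ESCALATED = "escalated"  # assumption: extra terminal state for human takeover


HAPPY_PATH = {
    State.GREETING: State.DEMOGRAPHICS,
    State.DEMOGRAPHICS: State.VISIT,
    State.VISIT: State.INSURANCE,
    State.INSURANCE: State.HANDOFF,
}

# Hypothetical event names for the edge cases that go straight to a human.
ESCALATE_EVENTS = {"patient_unresponsive", "identity_conflict", "red_flag_symptom"}
TERMINAL = {State.HANDOFF, State.ESCALATED}


def next_state(current: State, event: str) -> State:
    """Return the next state, or raise if the transition is undefined."""
    if current in TERMINAL:
        raise ValueError(f"{current.value} is terminal")
    if event in ESCALATE_EVENTS:
        return State.ESCALATED
    if event == "eligibility_timeout" and current is State.INSURANCE:
        # Assumption: staff resolve eligibility during review instead of blocking.
        return State.HANDOFF
    if event == "step_complete":
        return HAPPY_PATH[current]
    raise ValueError(f"no transition for {event!r} from {current.value}")
```

Raising on an undefined transition is deliberate: an event nobody planned for should stop the session loudly instead of letting the model improvise a next step.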

Implementation skeleton

Here is the agent loop written as a tool-calling pattern. The pattern is model-agnostic: it works with Claude, GPT-4-class models, or open-weights inference behind your firewall. The key insight is that the LLM is a planner; the FHIR work is deterministic.

    # intake_agent.py: minimal tool-calling skeleton
    from typing import Any, Dict

    from fhir_client import FHIRClient
    from llm import LLM

    # is_phone, edi, build_resources, notify_staff, and audit_log are
    # project-specific helpers assumed to be defined or imported elsewhere.

    fhir = FHIRClient(base_url="https://your-ehr/api/FHIR/R4", auth=...)
    llm = LLM(model="claude-3-7-sonnet")

    # Every tool takes the session state first. Lookup results are written back
    # into state so the planner can see them on the next turn.
    TOOLS = {
        "set_patient_field": lambda state, key, value: state.update({key: value}),
        "validate_phone": lambda state, ph: state.update({"phone_valid": is_phone(ph)}),
        "lookup_patient": lambda state, n, dob: state.update(
            {"patient_matches": fhir.search("Patient", {"name": n, "birthdate": dob})}
        ),
        "check_eligibility": lambda state, plan, member_id: state.update(
            {"eligibility": edi.x12_270(plan, member_id)}
        ),
        "create_resources": lambda state: [fhir.create(r) for r in build_resources(state)],
        "escalate": lambda state, reason: notify_staff(state, reason),
    }


    def run(channel, session_id):
        state: Dict[str, Any] = {"session_id": session_id}
        while not state.get("done"):
            user_msg = channel.read()
            # The LLM only plans; each tool call below runs deterministic code.
            plan = llm.plan(state=state, user_message=user_msg, available_tools=list(TOOLS))
            for tool_name, args in plan.tool_calls:
                TOOLS[tool_name](state, **args)
            channel.send(plan.assistant_message)
            if plan.next_state == "handoff":
                TOOLS["create_resources"](state)
                state["done"] = True
        audit_log(state)
        return state["session_id"]

This is intentionally naive. In production you will add: Pydantic schemas on every tool argument, a deterministic fallback for fields the LLM keeps tripping on (DOB parsing especially), retry with backoff on payor APIs, and an offline replay harness so a regression in your prompt does not silently break a clinic at 7am.
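Two of those additions are small enough to sketch with the standard library alone. `parse_dob` is a deterministic fallback for date-of-birth parsing, and `with_backoff` retries a call that times out. The accepted formats (month-first, US style), the 1900 lower bound, the retry counts, and the use of `TimeoutError` as the retryable error are all assumptions; adapt them to your patient population and your clearinghouse client.

```python
import time
from datetime import date, datetime
from typing import Callable, Optional, TypeVar

T = TypeVar("T")

# Assumption: US-style, month-first input. Extend for your locale.
DOB_FORMATS = ("%Y-%m-%d", "%m/%d/%Y", "%m-%d-%Y", "%B %d, %Y", "%b %d, %Y")


def parse_dob(text: str, today: Optional[date] = None) -> Optional[date]:
    """Parse a date of birth without the LLM; return None if unsure."""
    today = today or date.today()
    for fmt in DOB_FORMATS:
        try:
            parsed = datetime.strptime(text.strip(), fmt).date()
        except ValueError:
            continue
        # Reject implausible dates instead of guessing.
        return parsed if date(1900, 1, 1) <= parsed <= today else None
    return None


def with_backoff(
    call: Callable[[], T],
    attempts: int = 4,
    base_delay: float = 0.5,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Run `call`, retrying on TimeoutError with exponential backoff."""
    if attempts < 1:
        raise ValueError("attempts must be at least 1")
    for attempt in range(attempts):
        try:
            return call()
        except TimeoutError:
            if attempt == attempts - 1:
                raise  # out of retries; let the caller escalate
            sleep(base_delay * 2 ** attempt)
    raise AssertionError("unreachable")
```

When `parse_dob` returns None, the agent should ask again or escalate; when `with_backoff` finally raises, that is the eligibility-timeout edge your state machine needs a handler for.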

What measurably changes

The right way to defend the build to a CFO or COO is to set baseline metrics first and revisit them at 30, 60, and 90 days. Intake time per patient, field-level error rate, eligibility resolution lag, and no-show rate are the four numbers that move. We cover how to verify these in more depth in our companion pieces on EHR integration patterns and on where AI agents deliver ROI.
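The comparison itself is simple arithmetic, sketched below for the four metrics. The 12-minute and 3-minute intake times are the figures used earlier in this guide; every other number is a placeholder, and `IntakeMetrics` and `percent_change` are hypothetical names.

```python
from dataclasses import asdict, dataclass
from typing import Dict, Optional


@dataclass(frozen=True)
class IntakeMetrics:
    intake_minutes_per_patient: float
    field_error_rate: float
    eligibility_lag_hours: float
    no_show_rate: float


def percent_change(
    baseline: IntakeMetrics, current: IntakeMetrics
) -> Dict[str, Optional[float]]:
    """Percent change per metric; None where the baseline is zero."""
    before, after = asdict(baseline), asdict(current)
    changes: Dict[str, Optional[float]] = {}
    for name, old in before.items():
        new = after[name]
        changes[name] = None if old == 0 else round((new - old) / old * 100, 1)
    return changes


# Only the intake minutes come from this guide; the rest are placeholders.
baseline = IntakeMetrics(12.0, 0.08, 24.0, 0.15)
day_90 = IntakeMetrics(3.0, 0.02, 1.0, 0.12)
changes = percent_change(baseline, day_90)
```

Capture the baseline before the pilot starts; a baseline reconstructed afterwards is much harder to defend.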

Production-grade considerations

- Identity matching. Never auto-merge demographics. Conflicting matches go to a human review queue. The cost of a misjoined chart dwarfs any time saved.

- Consent and minor handling. Bake your jurisdiction's rules into the state machine. Pediatric flows, surrogate consent, and proxy access can all be modeled in FHIR, for example with the Consent and RelatedPerson resources.

- Language and accessibility. Multilingual NLU is table stakes. So is graceful degradation: when the model is uncertain, route to a human, do not guess.

- Observability. Tag every tool call with the session id. The first time an intake agent silently drops a Coverage write, you will want to find it in 30 seconds, not 30 minutes. See our framework on observability for agentic AI in healthcare.

- HIPAA posture. Whoever operates the agent on behalf of a covered entity is a Business Associate, and so is any LLM provider that receives PHI. Map the data flow, sign the BAAs, and revisit when the LLM provider's terms change. Our HIPAA compliance checklist walks through it.
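Two of the considerations above, identity matching and observability, lend themselves to short sketches. `route_match` never merges: it creates, links on a single exact match, or sends everything else to review. `traced` wraps a tool so every call is logged with the session id. The match keys, decision labels, and log format are assumptions, and real MPI matching is considerably more involved than an exact comparison.

```python
import functools
import logging
from typing import Any, Callable, Dict, List, Optional, Tuple

log = logging.getLogger("intake.tools")

# Hypothetical keys; a real MPI uses its own matching rules.
MATCH_KEYS = ("family_name", "given_name", "birth_date", "phone")


def route_match(
    captured: Dict[str, Any], candidates: List[Dict[str, Any]]
) -> Tuple[str, Optional[str]]:
    """Return ('create' | 'link' | 'review', patient_id or None)."""
    if not candidates:
        return ("create", None)
    if len(candidates) == 1:
        only = candidates[0]
        exact = all(
            captured.get(k) is not None and only.get(k) == captured.get(k)
            for k in MATCH_KEYS
        )
        if exact:
            return ("link", only["id"])
    # Multiple candidates or any conflicting field: a human decides.
    return ("review", None)


def traced(name: str, tool: Callable[..., Any]) -> Callable[..., Any]:
    """Wrap a tool so each call is logged with the session id."""

    @functools.wraps(tool)
    def wrapper(state: Dict[str, Any], *args: Any, **kwargs: Any) -> Any:
        session_id = state.get("session_id")
        log.info("tool=%s session=%s status=start", name, session_id)
        try:
            result = tool(state, *args, **kwargs)
        except Exception:
            log.exception("tool=%s session=%s status=error", name, session_id)
            raise
        log.info("tool=%s session=%s status=ok", name, session_id)
        return result

    return wrapper
```

With a tool table like the one in the skeleton, wrapping everything is one line: `TOOLS = {name: traced(name, tool) for name, tool in TOOLS.items()}`. Keep tool arguments out of the log line unless your logging pipeline is cleared to hold PHI.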

Common ways this build fails

The patterns we see go wrong:

- Agent that "asks" instead of "acts." If the staff still has to retype data into the EHR, you have built a chatbot, not an agent. The FHIR write is the deliverable.

- State held in the LLM context only. Persist state in a database. The model will forget; the database will not.

- One model, every step. Use the LLM for ambiguity (interpretation, extraction). Use deterministic code for everything else.

- No rollback. A regression detector should freeze the agent before it spreads the regression to thousands of patients.
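For the second failure in that list, here is a minimal sketch of persisting session state outside the LLM context, using SQLite from the standard library. `SessionStore` is a hypothetical class; in production you would likely use your existing database, and because the state contains PHI, encryption at rest and access controls are assumed to be handled by that layer.

```python
import json
import sqlite3
from typing import Any, Dict


class SessionStore:
    """Persist intake session state as JSON, keyed by session id."""

    def __init__(self, path: str = ":memory:") -> None:
        self.conn = sqlite3.connect(path)
        self.conn.execute(
            "CREATE TABLE IF NOT EXISTS sessions ("
            "session_id TEXT PRIMARY KEY, state TEXT NOT NULL)"
        )

    def save(self, session_id: str, state: Dict[str, Any]) -> None:
        # The connection context manager commits on success, rolls back on error.
        with self.conn:
            self.conn.execute(
                "INSERT OR REPLACE INTO sessions (session_id, state) VALUES (?, ?)",
                (session_id, json.dumps(state)),
            )

    def load(self, session_id: str) -> Dict[str, Any]:
        """Return saved state, or a fresh state for an unknown session."""
        row = self.conn.execute(
            "SELECT state FROM sessions WHERE session_id = ?", (session_id,)
        ).fetchone()
        return json.loads(row[0]) if row else {"session_id": session_id}
```

Saving after every turn means a dropped SMS thread or a restarted worker resumes from the last confirmed field instead of starting the patient over.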

What to build first

If you are starting from zero, ship a single-clinic pilot that handles new patient intake only — not returning, not check-in, not scheduling. Hit the metrics. Then expand to returning patients, then to scheduling, then to multi-clinic. Each new scope re-uses the resources and tools you already built. Trying to ship "the whole intake experience" in v1 is the failure mode that turns a 4-month pilot into an 18-month rebuild.

Going deeper? Read the full pillar on AI agents in healthcare, then the practical guide to how AI agents integrate with EHR systems, and the FHIR API practical overview for the underlying primitives.

Real-world example

Mayo Clinic's published work on conversational digital intake is a useful reference point: the program reduced new-patient enrollment time and front-desk calls by structuring intake conversationally and pushing validated data into Epic. Geisinger and Kaiser Permanente have published similar patterns for digital front-door intake. The architecture in this guide mirrors those production deployments — channel-agnostic capture, FHIR-validated structure, deterministic write-through, audit log on every action. The metrics in the before/after diagram earlier reflect typical outcome ranges across multi-clinic deployments of this pattern.

Key takeaways

- The deliverable is the FHIR write, not the transcript. If staff still retype data into the EHR, you have built a chatbot, not an agent.

- The LLM is a planner; the FHIR work is deterministic. Wrap every external call as a typed tool with strict argument schemas.

- Define every edge transition before launch. Non-verbal patients, conflicting demographics, eligibility timeouts, red-flag symptoms — all need explicit handlers.

- Never auto-merge identities. Conflicting demographic matches go to human review, regardless of model confidence.

- Ship one workflow, then expand. A single-clinic new-patient pilot in 8–12 weeks beats a year-long all-of-intake rebuild every time.

