When your Mirth Connect instance goes down, HL7 messages stop flowing. Lab results don't reach clinicians. ADT events don't trigger downstream pharmacy, billing, or bed management systems. Radiology reports sit in queues. Medication reconciliation stalls. This isn't a minor IT inconvenience — it's a patient safety issue that can cascade across every department in your hospital.

Yet most healthcare organizations treat their integration engine as a utility that will always be there, like electricity. They invest heavily in EHR redundancy and network failover but leave Mirth Connect — the single point through which hundreds of thousands of daily messages flow — running on a single server with nightly database backups and no documented recovery procedure.

This guide covers everything integration team leads and hospital IT operations teams need to build a production-grade disaster recovery strategy for Mirth Connect: failover architectures, backup procedures, in-flight message behavior, and a tested runbook you can use starting today.

Why Disaster Recovery Matters for Integration Engines

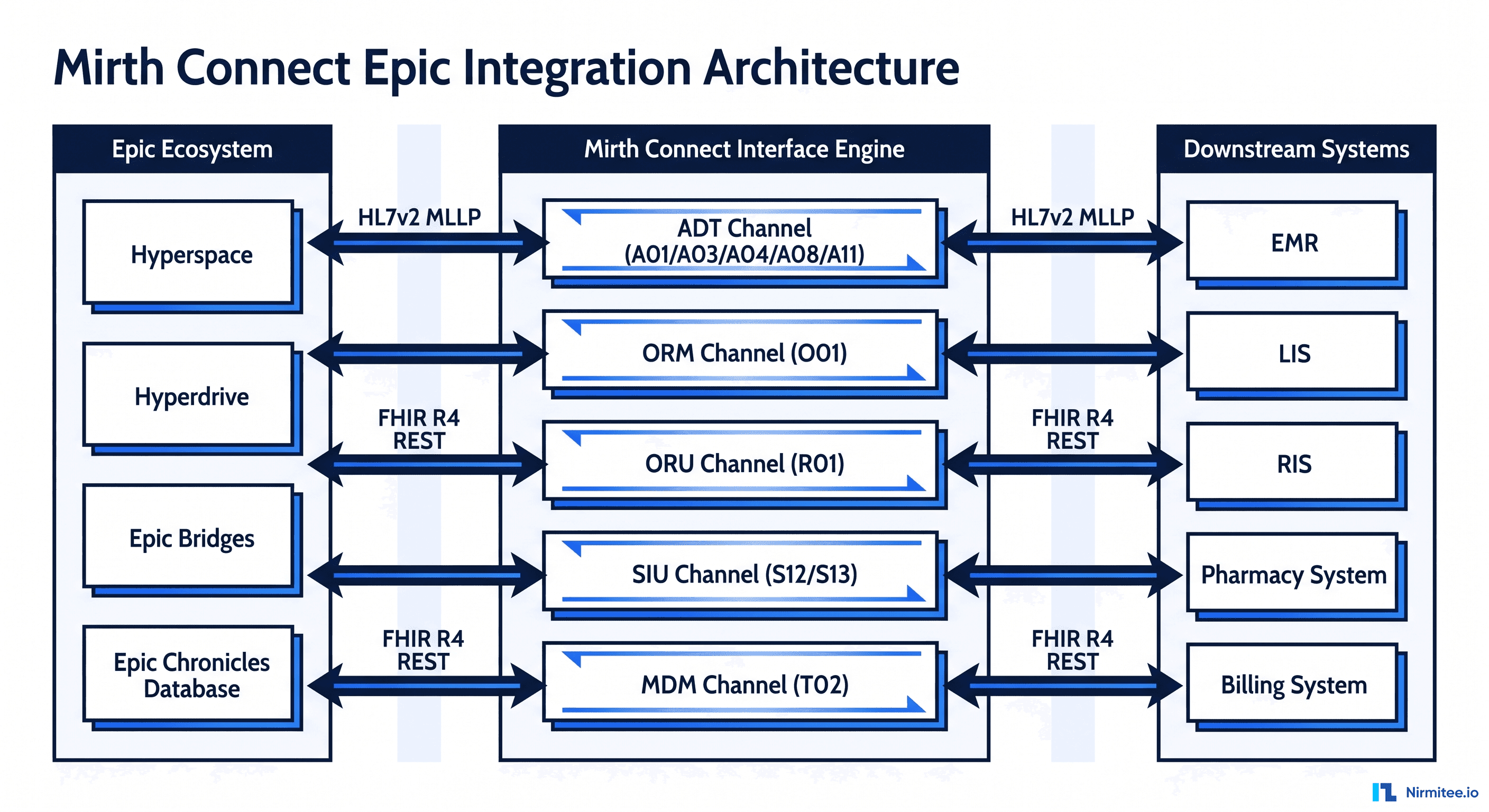

A mid-size hospital processes between 500,000 and 2 million HL7 messages per day through its integration engine. These messages represent real clinical events: patient admissions (ADT_A01), lab results (ORU_R01), medication orders (RDE_O11), discharge summaries, radiology reports, and billing triggers. When Mirth goes down for even 30 minutes, the downstream impact is measurable:

- Lab results delayed — clinicians waiting on critical values (troponin, lactate, blood cultures) don't receive them. This directly impacts treatment decisions in the ED and ICU.

- ADT events lost — downstream systems (pharmacy, dietary, transport, bed management) don't know about new admissions, transfers, or discharges. Bed boards go stale. Pharmacy doesn't receive medication reconciliation triggers.

- Orders don't route — CPOE orders for labs, imaging, and medications don't reach ancillary systems. Clinicians place orders that appear to succeed but never arrive.

- Billing gaps — charge capture messages (DFT_P03) don't flow to the revenue cycle system, creating reconciliation headaches that persist for weeks.

A widely cited 2013 Ponemon Institute study put the average cost of unplanned data center downtime at roughly $7,900 per minute across industries. For integration engines specifically, the cost compounds because every connected system is affected simultaneously. If you manage Mirth Connect in production, you need a DR strategy that's documented, tested, and can execute under pressure.

Defining RPO and RTO for Healthcare Integration

Before designing your failover architecture, you need two numbers that drive every decision: your Recovery Point Objective (RPO) and Recovery Time Objective (RTO).

Recovery Point Objective (RPO): How Much Data Can You Lose?

RPO defines the maximum acceptable amount of data loss measured in time. An RPO of 15 minutes means you can tolerate losing up to 15 minutes of messages. For Mirth Connect, this translates to:

- Database RPO — how far behind is your standby PostgreSQL database? With streaming replication, this is typically seconds, not minutes.

- Configuration RPO — when was your last channel configuration backup? If you deployed a new channel yesterday and your last config backup was last week, your RPO for configuration is 7 days.

- Message RPO — MLLP itself only frames HL7 messages over TCP; retries are up to the sender. In practice, most HL7 source systems keep a message queued and resend it until they receive an ACK, which means your effective message RPO for TCP sources is often zero. Confirm the retry behavior of each source rather than assuming it.

Recommended RPO for healthcare integration: 15 minutes for database state, near-zero for message data (relying on source system retry behavior).

Recovery Time Objective (RTO): How Fast Must You Recover?

RTO is the maximum acceptable time from failure to full service restoration. During the RTO window, no messages are being processed. For a hospital running Mirth Connect:

- Under 5 minutes — critical care environments (ICU, ED) where delayed lab results can impact treatment decisions

- Under 30 minutes — standard inpatient care where brief delays are tolerable but must be resolved within a shift

- Under 4 hours — administrative interfaces (billing, scheduling) where same-day recovery is acceptable

Recommended RTO for healthcare integration: 30 minutes for most hospital environments. If your channels include critical care lab results or real-time clinical alerting, target 5 minutes with automated failover.

Active-Passive Failover: The Standard Approach

Active-passive is the most common and well-understood failover model for Mirth Connect. A primary server handles all message processing while a standby server stays synchronized and ready to take over.

Architecture Components

A production active-passive deployment requires four components working together:

1. Shared PostgreSQL with Streaming Replication

Mirth Connect stores channel configurations, message history, and queue state in its database. Your standby server needs an up-to-date copy. PostgreSQL streaming replication provides continuous WAL (Write-Ahead Log) shipping from primary to standby with sub-second lag:

# On primary postgresql.conf

wal_level = replica

max_wal_senders = 3

wal_keep_size = '1GB'

# On standby recovery.conf (PostgreSQL 12+: standby.signal + postgresql.conf)

primary_conninfo = 'host=primary-db port=5432 user=replicator password=<secure_password>'

restore_command = 'cp /var/lib/pgsql/wal_archive/%f %p'

2. Shared Filesystem for Channel Configurations

Channel XML files, code templates, and custom scripts should be accessible to both nodes. Options include NFS (on-premise), Amazon EFS (AWS), or Azure Files (Azure). Alternatively, use Git-based synchronization (covered in the backup section below).

3. Heartbeat Monitor

A monitoring service that checks the primary server's health every 10-15 seconds. When the primary fails consecutive health checks (typically 3-5 failures), it triggers the failover procedure. Tools like Keepalived, Corosync/Pacemaker, or a custom script using the Mirth Connect REST API work here:

#!/bin/bash

# Simple heartbeat check using Mirth REST API

MIRTH_URL="https://primary-mirth:8443/api"

MAX_FAILURES=3

failure_count=0

while true; do

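# --max-time keeps a hung server from stalling the check; replace admin:admin with a dedicated monitoring account

response=$(curl -s -o /dev/null -w "%{http_code}" -k --max-time 5 "$MIRTH_URL/system/stats" -H "X-Requested-With: XMLHttpRequest" -u admin:admin)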

if [ "$response" != "200" ]; then

failure_count=$((failure_count + 1))

echo "$(date) - Health check failed ($failure_count/$MAX_FAILURES)"

if [ $failure_count -ge $MAX_FAILURES ]; then

echo "$(date) - TRIGGERING FAILOVER"

/opt/mirth-dr/promote-standby.sh

break

fi

else

failure_count=0

fi

sleep 15

done

4. VIP/DNS Failover

Source and destination systems should connect to Mirth through a Virtual IP address or DNS name — never directly to a server's IP. When failover occurs, the VIP moves to the standby server or the DNS record updates. This means no reconfiguration of connected systems during failover.
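The heartbeat script above calls /opt/mirth-dr/promote-standby.sh without showing it. A minimal sketch of what such a script might contain follows. The data directory, VIP, interface, and the `mirthconnect` systemd unit name are assumptions, and the `run` helper is hypothetical. It defaults to a dry run that only prints the commands, and it does not fence the failed primary, which a production setup would also need to handle.

```shell
#!/usr/bin/env bash
# Sketch of a promote-standby script. Paths, the VIP, the interface, and the
# systemd unit name are assumptions; adjust them for your hosts.
set -euo pipefail

PGDATA="${PGDATA:-/var/lib/pgsql/data}"
VIP_CIDR="${VIP_CIDR:-10.0.1.100/24}"
VIP_DEV="${VIP_DEV:-eth0}"
MIRTH_UNIT="${MIRTH_UNIT:-mirthconnect}"
DRY_RUN="${DRY_RUN:-1}"   # 1 = print commands only, 0 = execute them

# Run a command, or just print it when DRY_RUN=1.
run() {
  if [ "$DRY_RUN" = "1" ]; then
    echo "DRY-RUN: $*"
  else
    "$@"
  fi
}

promote_standby() {
  # pg_ctl normally has to run as the postgres OS user.
  run pg_ctl promote -D "$PGDATA"             # standby database becomes writable
  run systemctl start "$MIRTH_UNIT"           # start Mirth against the promoted DB
  run ip addr add "$VIP_CIDR" dev "$VIP_DEV"  # claim the virtual IP
}

promote_standby
```

Because of `set -e`, a failed promotion stops the script before Mirth starts or the VIP moves, so traffic is not redirected to a node whose database is still read-only. Set `DRY_RUN=0` only after rehearsing the output in a drill.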

Active-Active: Load-Balanced Mirth Deployment

Active-active deployments run two or more Mirth instances simultaneously, both processing messages. This provides better throughput and eliminates the cold-start delay of promoting a standby server. However, it introduces complexity that most healthcare organizations underestimate.

Dedicated Channel Assignment

Each Mirth node is responsible for a specific set of channels. Node A handles ADT and lab interfaces (channels 1-50), Node B handles pharmacy and radiology (channels 51-100). The load balancer routes connections based on port or path.

- Advantage: No locking contention, simpler debugging (you always know which node processes which channel), easier capacity planning.

- Disadvantage: Uneven load distribution, manual rebalancing required when adding channels, single-node failure still affects a subset of interfaces.

Shared Channel Processing

Both nodes deploy and run all channels. The load balancer distributes incoming connections across nodes. The shared database uses row-level locking to prevent duplicate processing.

- Advantage: Automatic load distribution, any node can handle any channel, true horizontal scaling.

- Disadvantage: Risk of duplicate message processing, database locking overhead, harder debugging, and a need for careful channel design (idempotent processing).

For most healthcare organizations, dedicated channel assignment is the safer choice. It's easier to operate, debug, and reason about. Shared processing only makes sense at very high volumes (10M+ messages/day) where a single Mirth node cannot keep up. Understanding common Mirth Connect integration failures helps you design channels that survive failover cleanly.

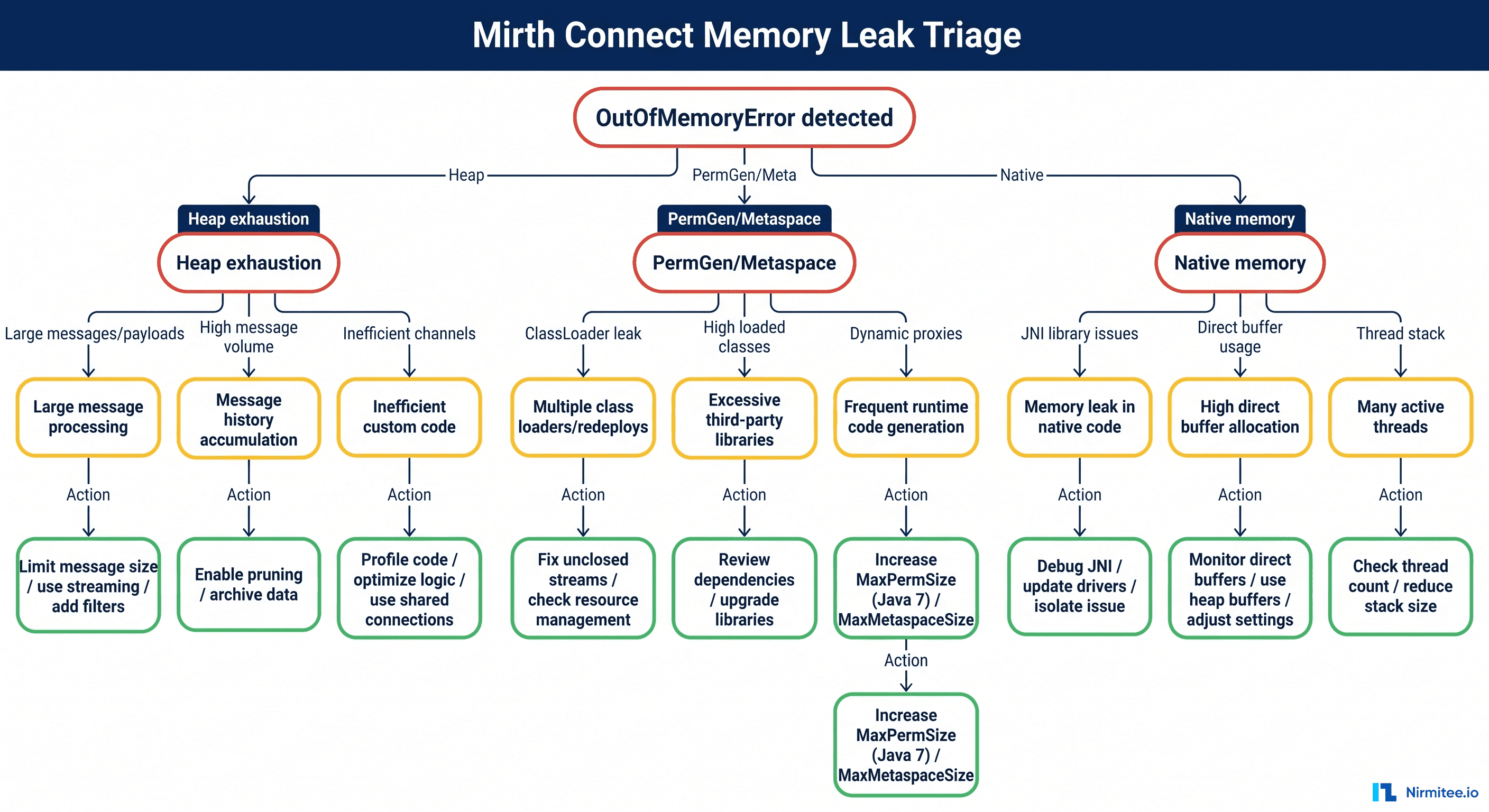

What Happens to In-Flight Messages When Mirth Crashes

This is the question that keeps integration engineers up at night. A message is mid-processing when Mirth crashes — what happens to it? The answer depends on where in the processing pipeline the crash occurs.

Scenario 1: TCP HL7 Listener (MLLP) — Source Retries Automatically

When a source system sends an HL7 message over TCP (MLLP), it expects an ACK response. If Mirth crashes before sending the ACK, the source sees the connection drop or the ACK time out and keeps the message in its outbound queue. Retransmission is sender behavior rather than part of the MLLP framing itself, but most sending systems and interface engines resend unacknowledged messages. Retry counts and backoff intervals vary by vendor, so confirm them for each source.

Result: Messages are not lost. The source retains them and retransmits after Mirth recovers. However, if Mirth crashes after processing but before sending the ACK, the source will retry — potentially creating a duplicate. Your channels should handle duplicate detection (check MSH-10 Message Control ID).
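To make the MSH-10 check concrete, here is a small sketch that extracts the Message Control ID from a raw HL7 v2 message and tracks which IDs have been seen. It is an illustration only: in production this logic would normally live in a Mirth channel filter, and `SEEN_FILE`, `msh10`, and `is_duplicate` are hypothetical names.

```shell
#!/usr/bin/env bash
# Illustration only. SEEN_FILE and both function names are hypothetical.
SEEN_FILE="${SEEN_FILE:-/tmp/mirth_seen_control_ids.txt}"

# Print MSH-10 (Message Control ID) from an HL7 v2 message on stdin.
# Segments end with a carriage return. MSH-1 is the field separator itself,
# so splitting on '|' puts MSH-10 in the tenth field.
msh10() {
  tr '\r' '\n' | awk -F'|' '$1 == "MSH" { print $10; exit }'
}

# Return 0 if the control ID was already seen; otherwise record it and return 1.
is_duplicate() {
  local id="$1"
  touch "$SEEN_FILE"
  if grep -Fxq -- "$id" "$SEEN_FILE"; then
    return 0
  fi
  printf '%s\n' "$id" >> "$SEEN_FILE"
  return 1
}

# Example:
# id="$(msh10 < message.hl7)"
# if is_duplicate "$id"; then echo "duplicate $id, skipping"; fi
```

A flat file is enough to show the idea. A real deployment would want a store with expiry, since control IDs accumulate indefinitely and some senders reuse them over long periods.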

Scenario 2: File Reader — Files Survive on Disk

If a File Reader channel picks up a file and Mirth crashes mid-transform, the file is still on disk. On restart, the File Reader will reprocess it (assuming "Move to error directory on failure" is configured, not "Delete after read"). This is one reason File Reader channels are inherently more resilient than TCP listeners for DR scenarios.

Result: Messages are not lost. Files persist on the filesystem and are reprocessed on restart.

Scenario 3: Destination Queue — Messages Survive in Database

When a message has been processed by the source connector and transformer but hasn't yet been delivered by the destination connector, it sits in the destination queue. Mirth stores queued messages in its PostgreSQL database. When Mirth restarts, it picks up queued messages and attempts delivery.

Result: Messages are not lost. The database queue persists across restarts. Messages will be delivered once the destination is reachable.

Scenario 4: HTTP/REST Endpoints — Callers Get 5xx

If Mirth exposes HTTP listener channels and crashes, callers receive connection refused or timeout errors, or a 502/503 if a load balancer or reverse proxy sits in front of Mirth. Unlike most MLLP senders, HTTP callers may not retry automatically; this depends on the calling application's implementation. Your upstream systems need retry logic for HTTP-based interfaces.

Result: Messages may be lost unless the calling application implements retry logic. This is a design consideration when building healthcare integration architectures with Mirth and Kafka — Kafka can serve as a durable buffer in front of HTTP channels.
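For callers you control, the retry logic can be quite small. The following is a sketch of a generic retry wrapper with exponential backoff. `retry_with_backoff` is a hypothetical helper, and the endpoint and payload file in the example are placeholders.

```shell
#!/usr/bin/env bash
# Hypothetical helper: retry a command with exponential backoff.
# Usage: retry_with_backoff MAX_ATTEMPTS BASE_DELAY_SECONDS command [args...]
retry_with_backoff() {
  local max_attempts="$1" delay="$2" attempt=1
  shift 2
  until "$@"; do
    if [ "$attempt" -ge "$max_attempts" ]; then
      echo "giving up after $attempt attempts: $*" >&2
      return 1
    fi
    echo "attempt $attempt failed; retrying in ${delay}s" >&2
    sleep "$delay"
    delay=$((delay * 2))       # 2s, 4s, 8s, ...
    attempt=$((attempt + 1))
  done
}

# Example (URL and payload file are placeholders):
# retry_with_backoff 5 2 curl -sf --max-time 10 \
#   -H "Content-Type: application/json" --data @message.json \
#   https://mirth.example.org/inbound
```

Note that `curl -f` also fails on 4xx responses, which a retry will not fix, so a real caller would likely distinguish client errors from connection failures. Retried requests can arrive twice, so the receiving channel still needs duplicate handling.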

Database Backup Strategy for Mirth Connect

Your Mirth Connect database contains everything: channel configurations, message history, queue state, user accounts, and audit logs. Losing it means losing your entire integration engine state.

Scheduled pg_dump

Run pg_dump on a schedule that matches your RPO target. For a 15-minute RPO:

# Cron: every 15 minutes, full logical backup

*/15 * * * * pg_dump -U mirth -Fc mirthdb > /backup/mirth_$(date +\%Y\%m\%d_\%H\%M).dump

# Retain 7 days of backups

0 2 * * * find /backup -name "mirth_*.dump" -mtime +7 -delete

Point-in-Time Recovery with WAL Archiving

For RPOs tighter than 15 minutes, enable continuous WAL archiving. This lets you recover the database to any point in time, not just the last backup:

# postgresql.conf

archive_mode = on

# Refuse to overwrite a segment that is already archived

archive_command = 'test ! -f /wal_archive/%f && cp %p /wal_archive/%f'

# Restore to a specific point in time (PostgreSQL 12+: set these in postgresql.conf and create recovery.signal in the data directory)

restore_command = 'cp /wal_archive/%f %p'

recovery_target_time = '2026-03-16 14:30:00'

Test Your Restores

A backup you've never tested is not a backup — it's a hope. Schedule monthly restore tests on a non-production server. Document the restore time (this becomes your baseline RTO for the database component). Verify that channels deploy and process messages correctly after restore.
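A restore test can be scripted so the monthly run is repeatable and timed. The sketch below assumes the dump naming from the cron example, a scratch database called `mirth_restore_test`, and that the Mirth schema contains a `channel` table to use as a sanity check. All three are assumptions to verify against your own environment.

```shell
#!/usr/bin/env bash
# Sketch of a restore drill. The scratch database name, backup directory, and
# the "channel" table used for the sanity check are assumptions.
BACKUP_DIR="${BACKUP_DIR:-/backup}"
SCRATCH_DB="${SCRATCH_DB:-mirth_restore_test}"

# Print the newest dump in a directory. Names like mirth_YYYYMMDD_HHMM.dump
# sort chronologically, so a plain lexical sort is enough.
newest_dump() {
  ls "$1"/mirth_*.dump 2>/dev/null | sort | tail -n 1
}

# Restore into a scratch database and report how long it took.
restore_test() {
  local dump start
  dump="$(newest_dump "$BACKUP_DIR")"
  [ -n "$dump" ] || { echo "no dump found in $BACKUP_DIR" >&2; return 1; }
  start=$SECONDS
  dropdb --if-exists "$SCRATCH_DB" &&
    createdb "$SCRATCH_DB" &&
    pg_restore --no-owner -d "$SCRATCH_DB" "$dump" &&
    psql -d "$SCRATCH_DB" -Atc "SELECT count(*) FROM channel" || return 1
  echo "restore of $dump took $((SECONDS - start))s"
}

# Run on a non-production server only:
# restore_test
```

The elapsed time it prints is the number to record as the database component of your RTO. A row count alone does not prove the channels work, so the deploy-and-process check described above still applies.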

Channel State Backup: Version Control for Integration

Beyond the database, you need to back up your channel definitions, code templates, and configuration independently. This gives you the ability to redeploy channels to a clean Mirth instance if needed.

What to Back Up

- Channel XML files — every channel's full definition, including source/destination connectors, transformers, filters, and response settings

- Code Template Libraries — shared JavaScript functions referenced across channels

- Global Scripts — deploy, undeploy, preprocessor, and postprocessor scripts

- Configuration Map — key-value pairs used across channels (connection strings, endpoints, credentials)

- Server Settings — global Mirth configuration including TLS certificates, LDAP settings, and resource configurations

Automating Backup to Git

Use the Mirth Connect REST API to export everything and commit to a Git repository. This gives you a versioned, auditable history of every channel change:

#!/bin/bash

# Automated Mirth channel backup to Git

MIRTH_URL="https://localhost:8443/api"

BACKUP_DIR="/opt/mirth-backup/channels"

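# Replace the default admin:admin with a dedicated backup account

AUTH="-u admin:admin"

# Recent Mirth versions reject API calls that lack an X-Requested-With header

XRW="X-Requested-With: XMLHttpRequest"

# Export all channels

curl -sk $AUTH -H "$XRW" "$MIRTH_URL/channels" -H "Accept: application/xml" > "$BACKUP_DIR/all-channels.xml"

# Export code template libraries, including the templates themselves

curl -sk $AUTH -H "$XRW" "$MIRTH_URL/codeTemplateLibraries?includeCodeTemplates=true" -H "Accept: application/xml" > "$BACKUP_DIR/code-templates.xml"

# Export global scripts

curl -sk $AUTH -H "$XRW" "$MIRTH_URL/server/globalScripts" -H "Accept: application/xml" > "$BACKUP_DIR/global-scripts.xml"

# Export configuration map

curl -sk $AUTH -H "$XRW" "$MIRTH_URL/server/configurationMap" -H "Accept: application/xml" > "$BACKUP_DIR/config-map.xml"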

# Commit to Git

cd "$BACKUP_DIR"

git add -A

git commit -m "Mirth backup $(date +%Y-%m-%d_%H:%M)"

git push origin main

Run this via cron (hourly for active environments, daily for stable ones). For organizations managing large-scale deployments, this backup strategy pairs well with building a robust HL7 interface engine using Mirth Connect.

DR Runbook Template

A runbook eliminates decision-making under pressure. When Mirth is down at 2 AM and the on-call engineer is groggy, they follow the runbook step by step. Here's a template you can adapt:

Phase 1: Detection and Assessment (0-5 minutes)

- Confirm the alert is real — check the monitoring dashboard, attempt to reach the Mirth Administrator Console

- Determine scope — is it a full outage (server unreachable) or partial (specific channels down)?

- Check dependencies — is the database reachable? Is it a network issue, not a Mirth issue?

- If a full outage is confirmed, proceed to Phase 2

Phase 2: Failover Execution (5-15 minutes)

- Verify that standby PostgreSQL is caught up:

SELECT pg_last_wal_receive_lsn(), pg_last_wal_replay_lsn();

- Promote the standby database:

pg_ctl promote -D /var/lib/pgsql/data

- Start Mirth Connect on the standby server

- Update VIP/DNS to point to standby (move the VIP as shown, or update the DNS record):

ip addr add 10.0.1.100/24 dev eth0

- Verify channels are deployed and started
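The two LSNs returned by the catch-up query are hexadecimal positions in the WAL stream, which are hard to compare by eye at 2 AM. The sketch below converts them to byte offsets and prints the gap. The function names are hypothetical. PostgreSQL can do the same arithmetic server-side with `pg_wal_lsn_diff`. A gap of zero means the standby has replayed everything it received, not necessarily everything the failed primary wrote.

```shell
#!/usr/bin/env bash
# Convert a PostgreSQL LSN such as "16/B374D848" to a byte position.
# An LSN is two hexadecimal numbers: the high and low 32 bits.
lsn_to_bytes() {
  local hi="${1%%/*}" lo="${1##*/}"
  echo $(( (16#$hi << 32) + 16#$lo ))
}

# Bytes received by the standby but not yet replayed.
# Usage: replay_gap_bytes RECEIVE_LSN REPLAY_LSN
replay_gap_bytes() {
  echo $(( $(lsn_to_bytes "$1") - $(lsn_to_bytes "$2") ))
}

# Example wiring on the standby (psql -A separates columns with '|'):
# IFS='|' read -r received replayed < <(psql -Atc \
#   "SELECT pg_last_wal_receive_lsn(), pg_last_wal_replay_lsn()")
# replay_gap_bytes "$received" "$replayed"
```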

Phase 3: Verification (15-25 minutes)

- Send a test HL7 message through each critical channel

- Check destination queues — are messages flowing to downstream systems?

- Verify source systems have reconnected (check MLLP connections)

- Check for duplicate messages from source retries — review MSH-10 Control IDs

- Confirm dashboard metrics are populating

Phase 4: Communication (throughout)

- Notify clinical IT: "Integration engine failover in progress. Expect brief delays in lab results, ADT events, and order routing."

- Notify vendor contacts for critical interfaces

- Escalate to management if the RTO target is at risk

- Send all-clear once verification is complete

Phase 5: Post-Incident (within 24 hours)

- Root cause analysis — why did the primary fail?

- Timeline documentation — when was the failure detected, when was failover triggered, when was service restored?

- Measure actual RTO vs target — did you meet your 30-minute objective?

- Document lessons learned and update the runbook

- Plan a failback to the primary once the root cause is resolved

Testing Your Failover: Quarterly DR Drills

DR drills verify recovery from declared disasters; the complementary practice is continuously testing smaller failure modes — a slow destination, a dropped connection, a saturated queue — before they compound into one. That discipline is chaos engineering for healthcare integration, and it pairs naturally with the quarterly drill cadence below.

A disaster recovery plan that hasn't been tested is a disaster recovery fantasy. Schedule quarterly DR drills during planned maintenance windows. Here's what to test and measure:

DR Drill Checklist

| Step | Action | Target | Actual |
|---|---|---|---|
| 1 | Schedule a maintenance window with clinical IT | 30 min window | ___ |
| 2 | Stop primary Mirth service (simulate crash) | — | ___ |
| 3 | The heartbeat monitor detects failure | < 60 seconds | ___ |
| 4 | The failover procedure completes | < 5 minutes | ___ |
| 5 | Standby processing messages | < 10 minutes | ___ |
| 6 | All source systems are reconnected | < 15 minutes | ___ |
| 7 | In-flight messages recovered | 0 messages lost | ___ |
| 8 | Actual RTO (failure to full service) | < 30 minutes | ___ |
| 9 | Document findings and gaps | — | ___ |
| 10 | Failback to primary | < 15 minutes | ___ |
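Filling in the "Actual" column is easier if you capture timestamps during the drill and let a script do the arithmetic. This is a small sketch of that idea. `check_step` is a hypothetical helper, and times are epoch seconds as produced by `date +%s`.

```shell
#!/usr/bin/env bash
# Hypothetical helper for the "Actual" column of the drill checklist.
# Times are epoch seconds, e.g. captured with: date +%s

# Print elapsed time and PASS or FAIL for one drill step.
# Usage: check_step "label" START_EPOCH END_EPOCH TARGET_SECONDS
check_step() {
  local label="$1" elapsed=$(( $3 - $2 )) target="$4" verdict=FAIL
  [ "$elapsed" -lt "$target" ] && verdict=PASS
  printf '%s: %dm%02ds (target < %dm) %s\n' \
    "$label" $((elapsed / 60)) $((elapsed % 60)) $((target / 60)) "$verdict"
}

# Example:
# t_fail="$(date +%s)"      # taken when the primary is stopped
# t_restored="$(date +%s)"  # taken when verification completes
# check_step "Actual RTO" "$t_fail" "$t_restored" 1800
```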

What You'll Discover

Every organization that runs its first DR drill discovers the same things: the runbook has gaps, the standby server has a different Mirth version, the DNS TTL is too long, and someone changed a channel on the primary without updating the standby. This is exactly why you drill. Fix what you find, update the runbook, and drill again next quarter.

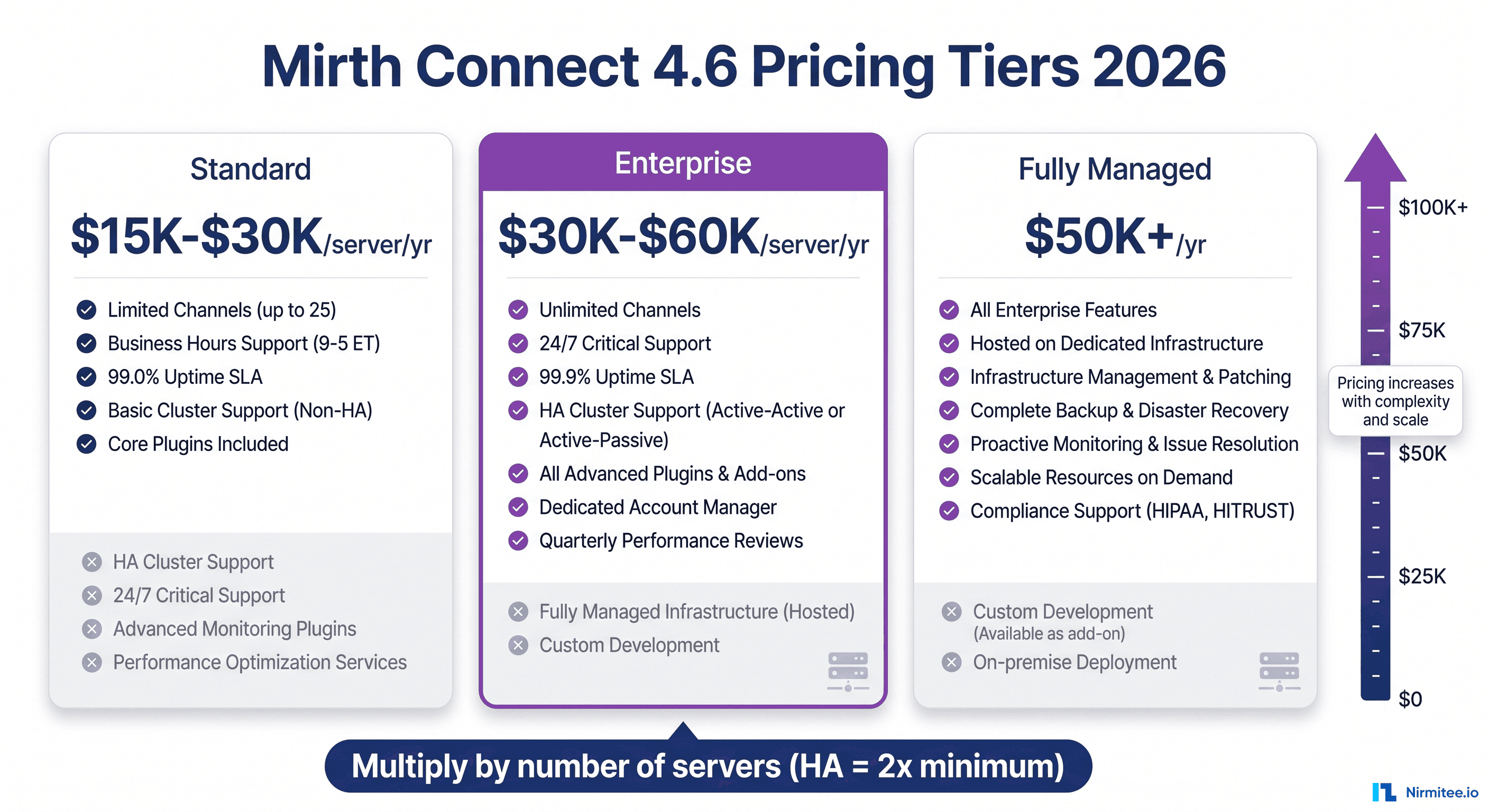

If your team is also navigating Mirth's licensing changes, our Mirth Connect 4.6 commercial transition guide covers migration strategies that affect how you plan your DR architecture.

Monitoring Checklist for DR Readiness

Your monitoring must cover both normal operations and DR readiness. Here's what to watch:

- PostgreSQL replication lag — alert if the standby is more than 60 seconds behind the primary

- Mirth channel status — alert on any channel in the STOPPED or ERROR state

- Message queue depth — alert if queued messages exceed threshold (indicates destination issues)

- Heartbeat check — alert if primary health check fails 2+ consecutive times

- Backup freshness — alert if the last pg_dump is older than the RPO target (15 min)

- Git backup age — alert if the last channel config commit is older than 24 hours

- Standby Mirth version — alert if standby is running a different version than primary

- Disk space on both nodes — alert at 80% (message store grows fast)

- WAL archive size — alert if WAL files are accumulating (replication may be broken)

- Certificate expiration — alert 30 days before TLS certs expire on either node
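Two of these checks are simple enough to sketch as shell functions that a cron job or monitoring agent could call. The function names, directories, and thresholds here are assumptions, and the alerting itself is left as an `echo`.

```shell
#!/usr/bin/env bash
# Sketch of two readiness checks from the list above. Function names,
# directories, and thresholds are assumptions.

# Succeed if at least one dump in DIR was modified within MAX_AGE_MIN minutes.
backup_is_fresh() {
  local dir="$1" max_age_min="$2"
  find "$dir" -name 'mirth_*.dump' -mmin "-$max_age_min" | grep -q .
}

# Succeed if disk usage (a percentage, digits only) is below the limit.
disk_ok() {
  local used_pct="$1" limit_pct="${2:-80}"
  [ "$used_pct" -lt "$limit_pct" ]
}

# Example wiring:
# backup_is_fresh /backup 15 || echo "ALERT: no pg_dump in the last 15 minutes"
# used="$(df -P /var/lib/pgsql | awk 'NR == 2 { gsub("%", "", $5); print $5 }')"
# disk_ok "$used" 80 || echo "ALERT: database volume at ${used}%"
```

Checking freshness by file modification time also catches a cron job that silently stopped running, which a check on the job's own exit code would miss.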

Building DR Into Your Integration Culture

Disaster recovery for Mirth Connect isn't a one-time project. It's an ongoing practice that requires investment, testing, and organizational commitment. The integration engine is the nervous system of your hospital IT — when it fails, every connected system feels it.

Start with the basics: define your RPO and RTO, set up active-passive failover with PostgreSQL streaming replication, automate your channel backups to Git, and run your first DR drill. You'll find gaps — that's the point. Each drill makes your recovery faster, your runbook better, and your team more confident.