Your healthcare AI agent nailed the demo. It pulled relevant patient history, summarized clinical notes in seconds, and even flagged a potential drug interaction the attending physician missed. Everyone in the room was impressed. You pushed the notebook to GitHub feeling like you'd just solved healthcare.

Then someone asked: "When can we put this in front of real patients?"

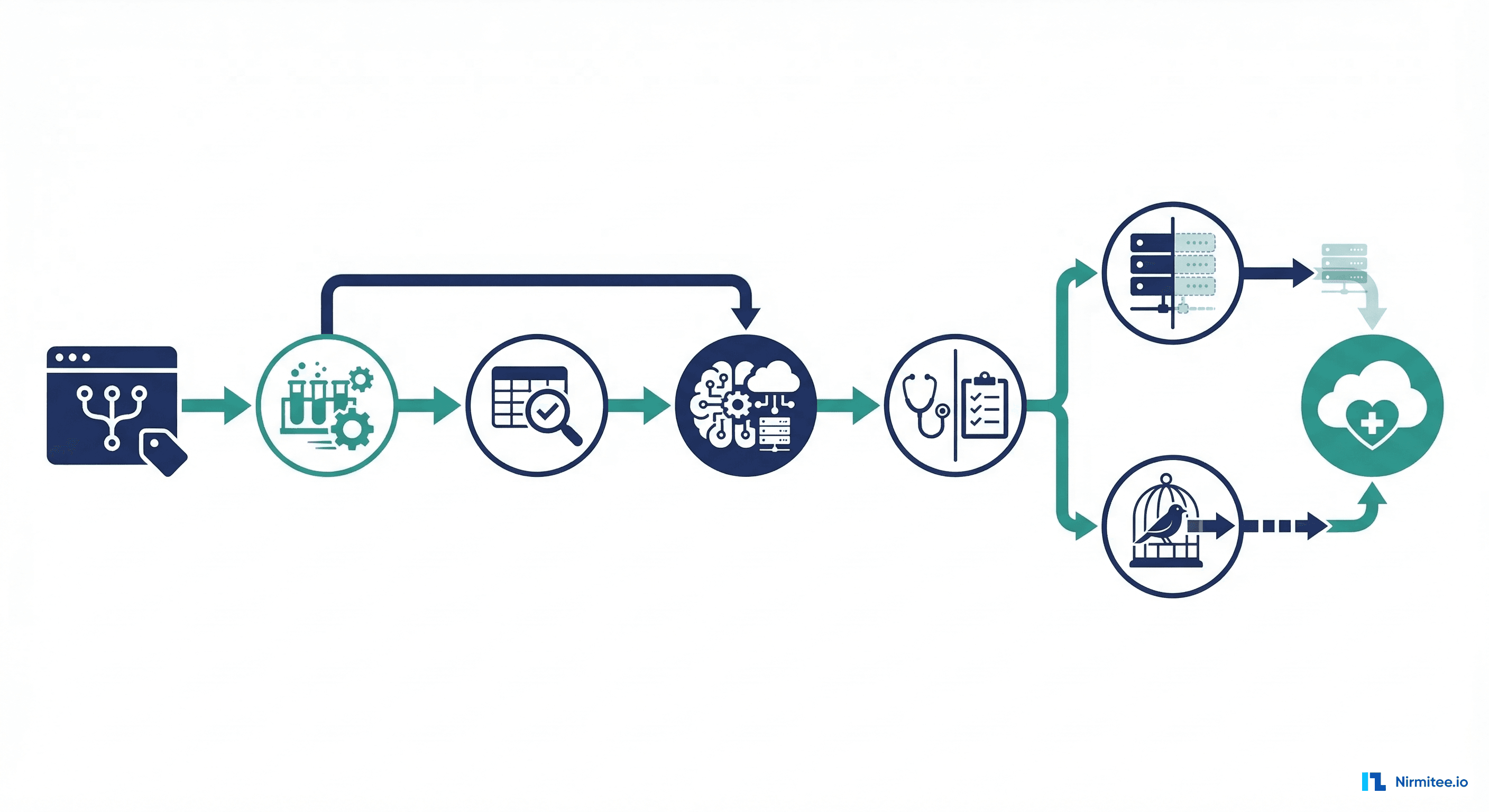

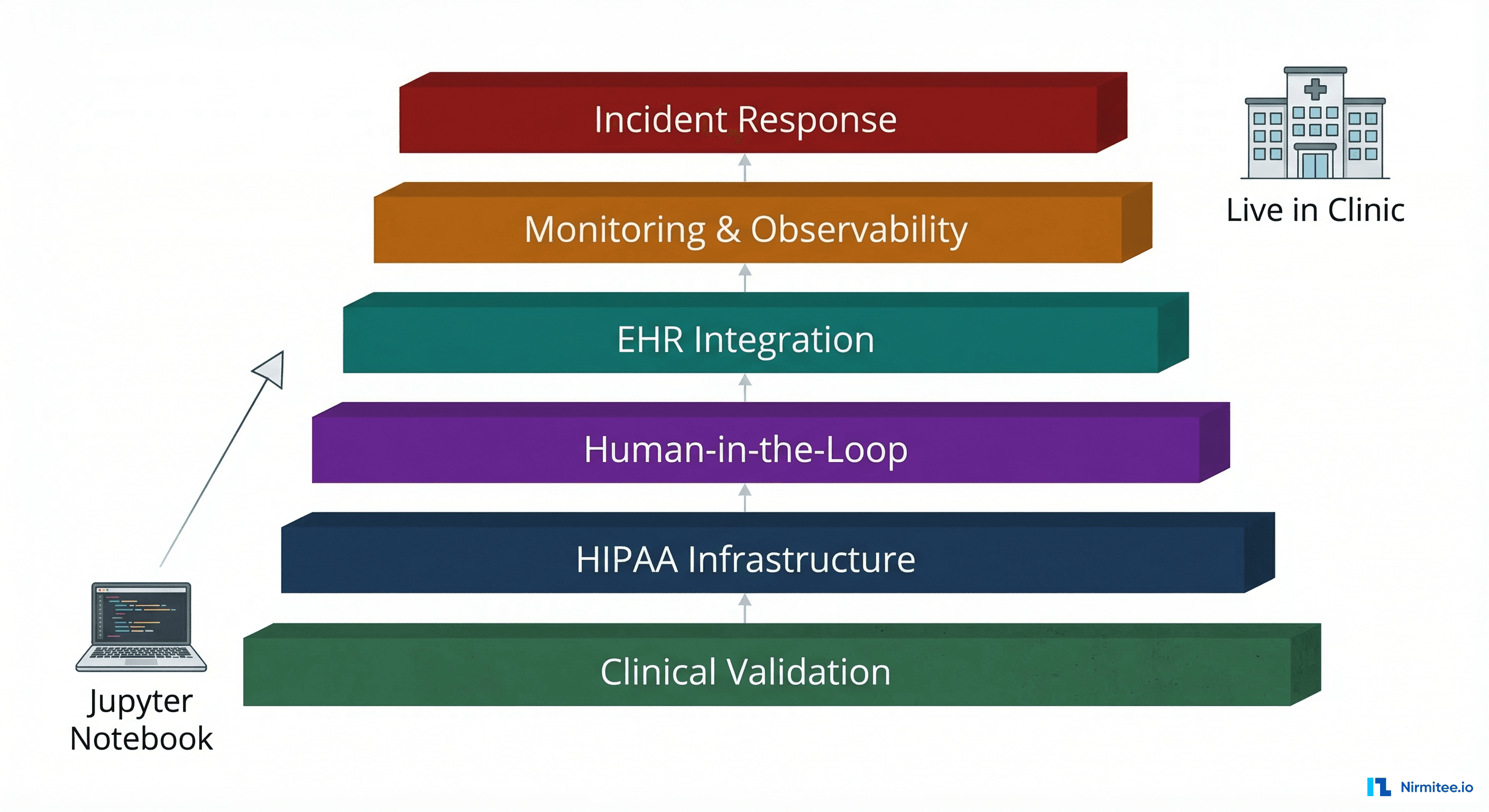

That question is where the real engineering begins. Between a working Jupyter notebook and an agent running in a live clinic, there are exactly six layers of infrastructure, compliance, and operational maturity that nobody warns you about. Each one has killed promising healthcare AI projects. Each one is solvable, but only if you know it exists before your go-live date.

This is the field guide we wish someone had given us.

Layer 1: Clinical Validation — Your 94% Accuracy Is a Liability

Here is the math that should terrify every healthcare AI engineer: a 94% accuracy rate on drug interaction detection sounds impressive until you realize it means the agent is wrong on roughly 6 of every 100 checks. In a busy urban ED seeing 200 patients a day, at one check per patient, that is about 12 patients receiving incorrect information, each one a potential adverse event. Every. Single. Day.

Standard ML evaluation metrics — accuracy, F1 score, AUC — were designed for ad clicks and product recommendations. They are dangerously insufficient for clinical applications. The FDA's framework for AI/ML-based Software as a Medical Device (SaMD) demands a fundamentally different evaluation approach.

Building a Clinical Test Suite That Actually Protects Patients

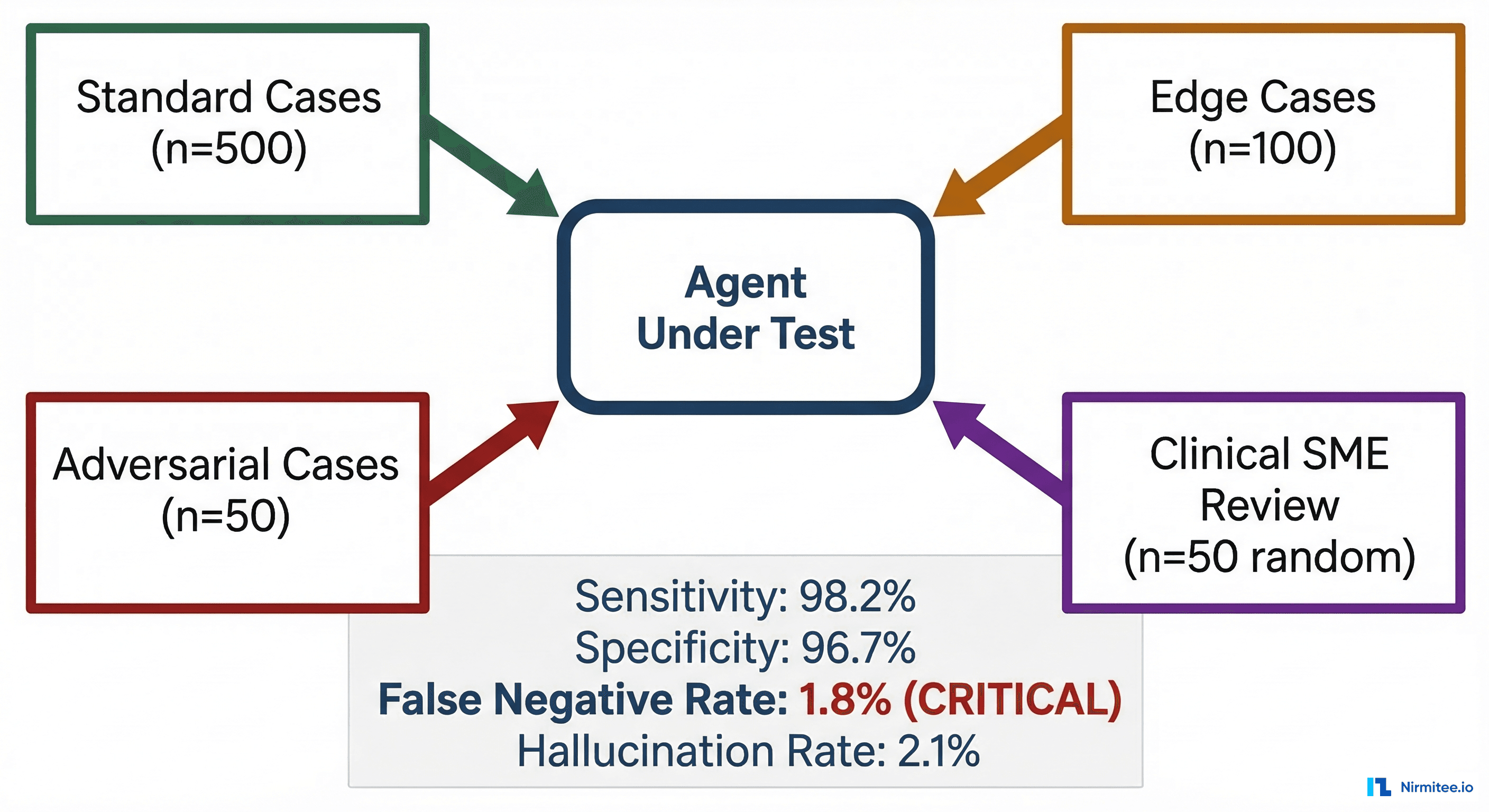

Your test suite needs four distinct tiers, each designed to catch different failure modes:

Tier 1: Standard Clinical Cases (n=500+). These are the bread-and-butter scenarios your agent will encounter daily. Hypertension management, diabetes monitoring, common medication reconciliation. Source these from de-identified clinical datasets like MIMIC-IV or partner with a clinical institution under a data use agreement. Your agent should score above 98% sensitivity here — not 94%. If it cannot handle routine cases near-perfectly, it has no business touching edge cases.

Tier 2: Edge Cases (n=100+). Polypharmacy patients on 12+ medications. Pediatric dosing calculations. Renal-adjusted dosing for elderly patients with eGFR below 30. Pregnant patients where half the standard formulary is contraindicated. These cases represent maybe 15% of real clinical volume but cause 60% of adverse drug events, according to a WHO patient safety analysis.

Tier 3: Adversarial Cases (n=50+). Intentionally craft prompts designed to break your agent. Feed it contradictory lab results. Give it patients with documented allergies and then ask about the allergen drug. Present it with off-label use scenarios where the "correct" answer is nuanced. This tier exists to find your agent's failure boundaries before a real patient does.

Tier 4: Clinical SME Blind Review (n=50 random). Randomly sample 50 outputs and have a board-certified physician review them without knowing they came from an AI. This is your ground truth. Their agreement rate with your agent's recommendations is your real accuracy number — not whatever your test harness reports.

The Metrics That Matter

For clinical AI, you need to track metrics the ML community rarely discusses:

- False Negative Rate for critical findings: If your agent misses a dangerous drug interaction, that is a potential patient death. Your false negative rate on critical alerts must be below 1%. Period. This is non-negotiable with every clinical informatics team we have worked with.

- Hallucination rate on clinical claims: Every factual assertion your agent makes — drug names, dosages, contraindications — must be traceable to a source document. Measure hallucination rate by checking a random sample of 200+ outputs against DailyMed, UpToDate, or your institution's formulary. Anything above 2% is a deployment blocker.

- Sensitivity vs. specificity tradeoff: In healthcare, you almost always want to bias toward sensitivity (catching true positives) at the expense of specificity (tolerating more false positives). A false alarm is an inconvenience; a missed critical finding is a lawsuit, or worse. Design your confidence thresholds accordingly.
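To make these metrics concrete, here is a minimal sketch of computing them from confusion-matrix counts. The `ConfusionCounts` type, the function names, and the sample counts are illustrative assumptions, not data from a real evaluation; the 1% gate mirrors the false negative threshold above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ConfusionCounts:
    """Agent alerts compared against clinician-labelled ground truth."""
    true_pos: int
    false_pos: int
    true_neg: int
    false_neg: int


def accuracy(c: ConfusionCounts) -> float:
    total = c.true_pos + c.false_pos + c.true_neg + c.false_neg
    return (c.true_pos + c.true_neg) / total


def sensitivity(c: ConfusionCounts) -> float:
    # Share of real critical findings the agent caught.
    return c.true_pos / (c.true_pos + c.false_neg)


def specificity(c: ConfusionCounts) -> float:
    # Share of non-findings the agent correctly left alone.
    return c.true_neg / (c.true_neg + c.false_pos)


def false_negative_rate(c: ConfusionCounts) -> float:
    # Share of real critical findings the agent missed.
    return c.false_neg / (c.true_pos + c.false_neg)


def passes_critical_gate(c: ConfusionCounts, max_fnr: float = 0.01) -> bool:
    return false_negative_rate(c) < max_fnr


# Made-up counts: 200 true interactions among 1,000 test cases.
counts = ConfusionCounts(true_pos=194, false_pos=40, true_neg=760, false_neg=6)
print(f"accuracy={accuracy(counts):.3f} "
      f"sensitivity={sensitivity(counts):.3f} "
      f"fnr={false_negative_rate(counts):.3f} "
      f"gate_passed={passes_critical_gate(counts)}")
```

With these sample counts the overall accuracy is above 95%, yet the agent still misses 3% of critical findings and fails the gate, which is why accuracy alone cannot be the release criterion.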

If you are building clinical AI agents and need a deeper understanding of how observability frameworks apply specifically to agentic AI in healthcare, we have covered the monitoring patterns extensively.

Layer 2: HIPAA Infrastructure — Your Mock Data World Just Ended

The agent that worked beautifully with synthetic FHIR bundles on your laptop now needs to process real Protected Health Information. This is not a configuration change. It is an architectural transformation.

The BAA Question Nobody Asks Early Enough

If your agent sends any prompt containing PHI to an LLM provider, that provider is a Business Associate under HIPAA. You need a signed Business Associate Agreement (BAA) before a single real patient record touches their API. As of March 2026, the major providers offering HIPAA-eligible APIs with BAAs include Azure OpenAI Service, Google Cloud Vertex AI, and Amazon Bedrock. OpenAI's direct API offers a BAA only on request and subject to approval, so do not assume the account you prototyped on is covered.

The practical implication: you may need to re-architect your agent to use a different LLM provider than the one you prototyped with. We have seen teams build entire proof-of-concepts on GPT-4 via the OpenAI API, only to discover at compliance review that they need to migrate to Azure OpenAI — same model, different infrastructure, different auth patterns, different rate limits.

PHI Isolation Architecture

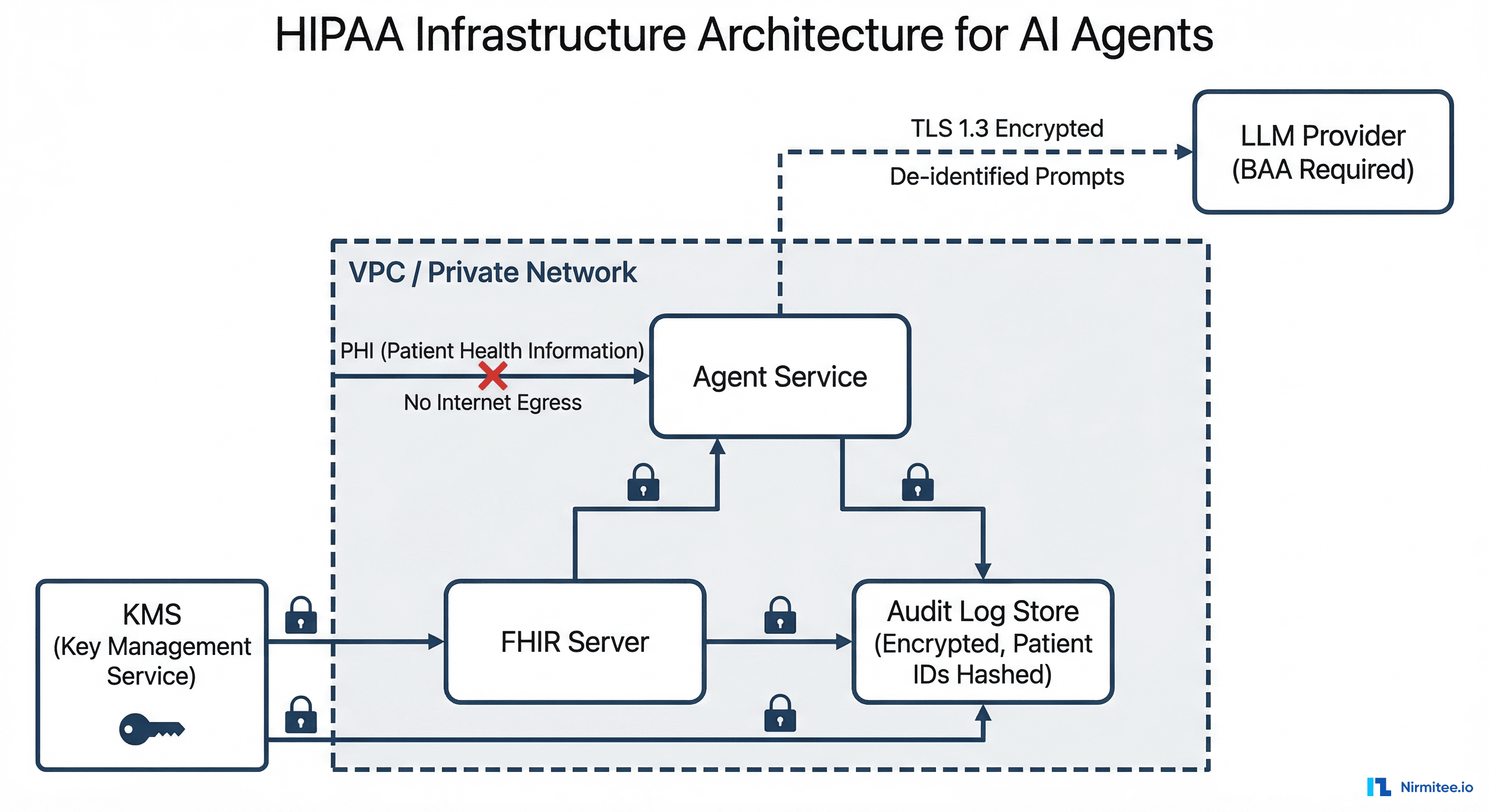

Your production architecture needs these components:

- VPC with no internet egress for PHI paths. Your agent service, FHIR server, and audit logs live inside a private network. The only outbound connection is to your BAA-covered LLM provider via a private endpoint or VPN tunnel. PHI never traverses the public internet unencrypted.

- Prompt sanitization pipeline. Before any prompt leaves your VPC, strip or hash direct patient identifiers. Replace "John Smith, DOB 03/15/1982, MRN 4471829" with "Patient [hash:a3f7], Age 44, Male." The clinical context survives; the PHI does not.

- Encrypted audit log store. Every prompt sent and every response received must be logged for compliance — but the logs themselves contain PHI. Encrypt at rest with KMS-managed keys. Hash patient identifiers in log entries so you can correlate events without exposing PHI to anyone reviewing logs.

- Separate compute for PHI and non-PHI workloads. Your agent's clinical reasoning (PHI-touching) and its UI serving (non-PHI) should run on different compute instances with different security groups. A vulnerability in your frontend should not expose your PHI processing layer.
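The prompt sanitization step can be sketched as follows. This is a minimal illustration that only handles identifiers you already hold in structured form (name, DOB, MRN); catching identifiers buried in free-text notes needs a dedicated de-identification step. The key, function names, and token format are assumptions. A keyed hash (HMAC) is used instead of a bare hash because MRNs are short enough to brute-force.

```python
import hashlib
import hmac
import re
from datetime import date

# Hypothetical key for illustration; in practice it would come from your KMS.
HASH_KEY = b"demo-key-do-not-use"


def patient_token(mrn: str) -> str:
    """Keyed hash so the same patient maps to the same token across prompts."""
    digest = hmac.new(HASH_KEY, mrn.encode("utf-8"), hashlib.sha256).hexdigest()
    return f"[hash:{digest[:8]}]"


def age_on(dob: date, today: date) -> int:
    had_birthday = (today.month, today.day) >= (dob.month, dob.day)
    return today.year - dob.year - (0 if had_birthday else 1)


def sanitize(prompt: str, name: str, dob: date, mrn: str, today: date) -> str:
    """Replace known structured identifiers before the prompt leaves the VPC."""
    out = prompt.replace(name, f"Patient {patient_token(mrn)}")
    out = re.sub(r",?\s*MRN\s*" + re.escape(mrn), "", out)
    out = out.replace(f"DOB {dob.strftime('%m/%d/%Y')}", f"Age {age_on(dob, today)}")
    return out


raw = "John Smith, DOB 03/15/1982, MRN 4471829 reports chest pain."
clean = sanitize(raw, "John Smith", date(1982, 3, 15), "4471829", date(2026, 3, 20))
print(clean)
```

Because the token is derived from the MRN with a secret key, audit log entries for the same patient can still be correlated without storing the MRN itself.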

This architecture is not theoretical. The HHS breach portal shows that in 2025 alone, over 170 million patient records were exposed in healthcare data breaches. Your agent cannot become the next entry on that wall of shame. For teams building on FHIR infrastructure, our guide on implementing SMART on FHIR with local-first storage covers the auth patterns that keep PHI contained.

Layer 3: Human-in-the-Loop — Not Every Output Deserves Auto-Delivery

The most dangerous misconception in healthcare AI: that the goal is full automation. It is not. The goal is appropriate automation — auto-delivering high-confidence outputs while routing uncertain ones to human reviewers. The ACM's principles on algorithmic transparency and the FDA's evolving guidance both point toward human oversight as a requirement, not a nice-to-have.

Confidence-Based Routing

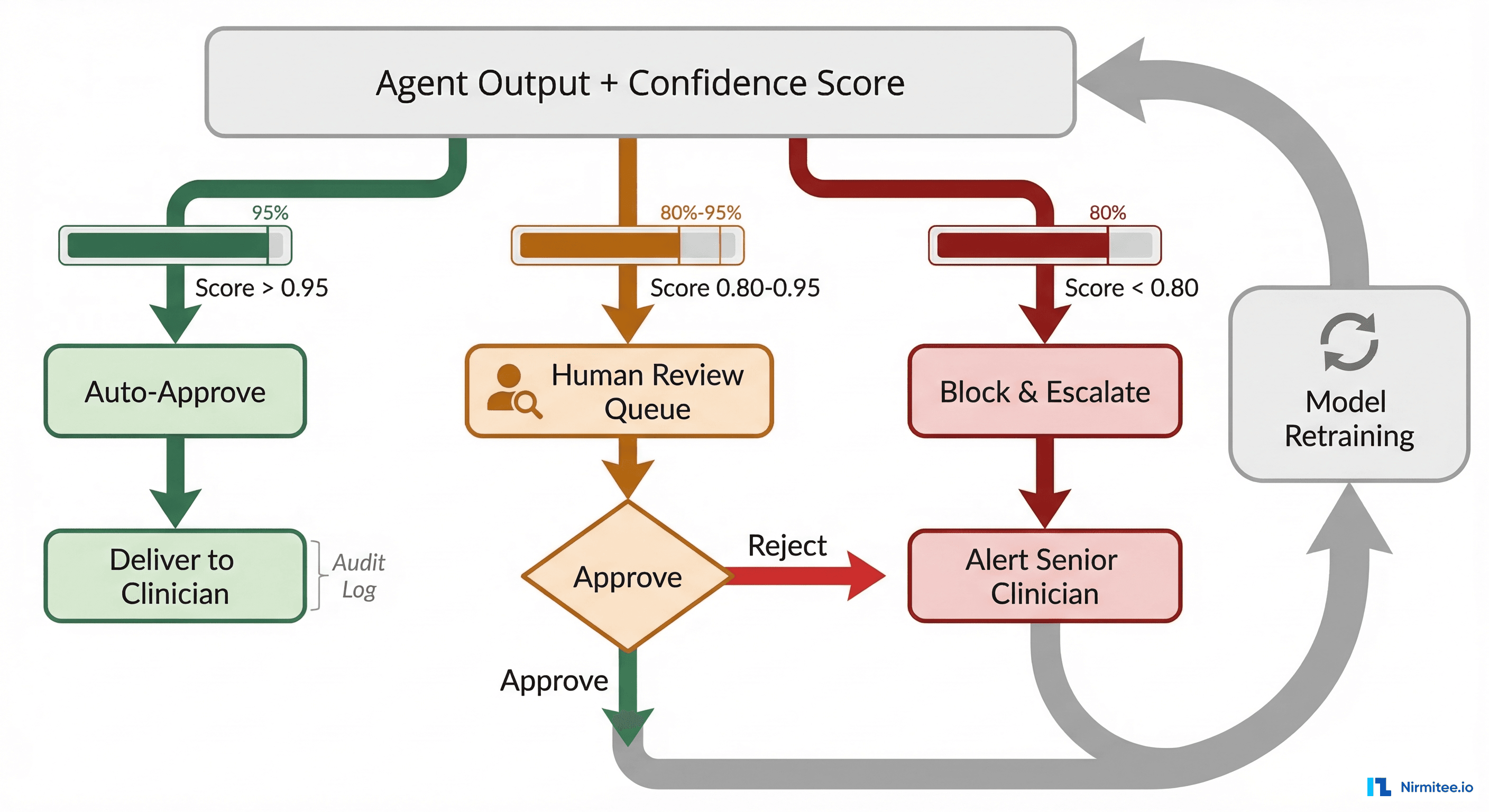

Every agent output needs a confidence score, and that score drives a three-tier routing decision:

Auto-approve (confidence > 0.95): The agent is highly certain, the output matches known patterns, and the clinical risk is low. Examples: summarizing a routine lab panel, pulling medication history, generating a standard referral letter. These go directly to the requesting clinician with an audit log entry. Even auto-approved outputs get spot-checked: randomly sample 5% for retrospective human review.

Human review queue (confidence 0.80–0.95): The agent produced an answer but flagged internal uncertainty. Maybe the patient's medication list has potential interactions the agent is not fully confident about. Maybe the clinical note contains ambiguous abbreviations. These go into a reviewer queue with the agent's output, its confidence breakdown, and the source documents it referenced. A trained reviewer (nurse informaticist, pharmacist, or physician) approves, modifies, or rejects.

Block and escalate (confidence < 0.80): The agent does not have enough information or the scenario is outside its training distribution. Instead of guessing, it explicitly declines and alerts the appropriate clinician. This is the hardest behavior to engineer because LLMs are trained to always produce an answer. You need explicit confidence calibration and refusal training.
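The three tiers above reduce to a small routing function. The sketch below uses the thresholds from the text; the `Route` enum, function names, and the use of a seeded random generator for the 5% spot check are illustrative assumptions.

```python
import random
from enum import Enum


class Route(Enum):
    AUTO_APPROVE = "auto_approve"
    HUMAN_REVIEW = "human_review"
    BLOCK_AND_ESCALATE = "block_and_escalate"


AUTO_APPROVE_ABOVE = 0.95
ESCALATE_BELOW = 0.80
SPOT_CHECK_RATE = 0.05


def route(confidence: float) -> Route:
    # Reject NaN and out-of-range scores instead of silently routing them.
    if not 0.0 <= confidence <= 1.0:
        raise ValueError(f"confidence must be in [0, 1], got {confidence!r}")
    if confidence > AUTO_APPROVE_ABOVE:
        return Route.AUTO_APPROVE
    if confidence < ESCALATE_BELOW:
        return Route.BLOCK_AND_ESCALATE
    return Route.HUMAN_REVIEW


def needs_spot_check(rng: random.Random, rate: float = SPOT_CHECK_RATE) -> bool:
    """Randomly select auto-approved outputs for retrospective review."""
    return rng.random() < rate


for score in (0.97, 0.95, 0.80, 0.42):
    print(score, route(score).value)
```

Scores of exactly 0.95 and 0.80 land in the human review queue, so both boundaries fall on the cautious side.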

The Feedback Loop That Makes Your Agent Smarter

Every human review decision is training data. When a reviewer corrects an agent output, that correction flows back into your evaluation dataset. Over 3–6 months, you will see the review queue shrink as the agent learns the patterns reviewers are correcting. Track your "review-to-auto-approve migration rate" as a key operational metric. If it is not improving, your feedback loop is broken.

This connects directly to the broader challenge of designing AI-driven clinical decision support systems that augment rather than replace clinical judgment.

Layer 4: EHR Integration — Where Your Agent Meets the Real World

Your agent needs to read patient data from and potentially write recommendations back to Epic, Cerner (now Oracle Health), athenahealth, or whichever EHR the clinic runs. This is not a REST API call. It is a political, technical, and regulatory negotiation that can take 6–18 months.

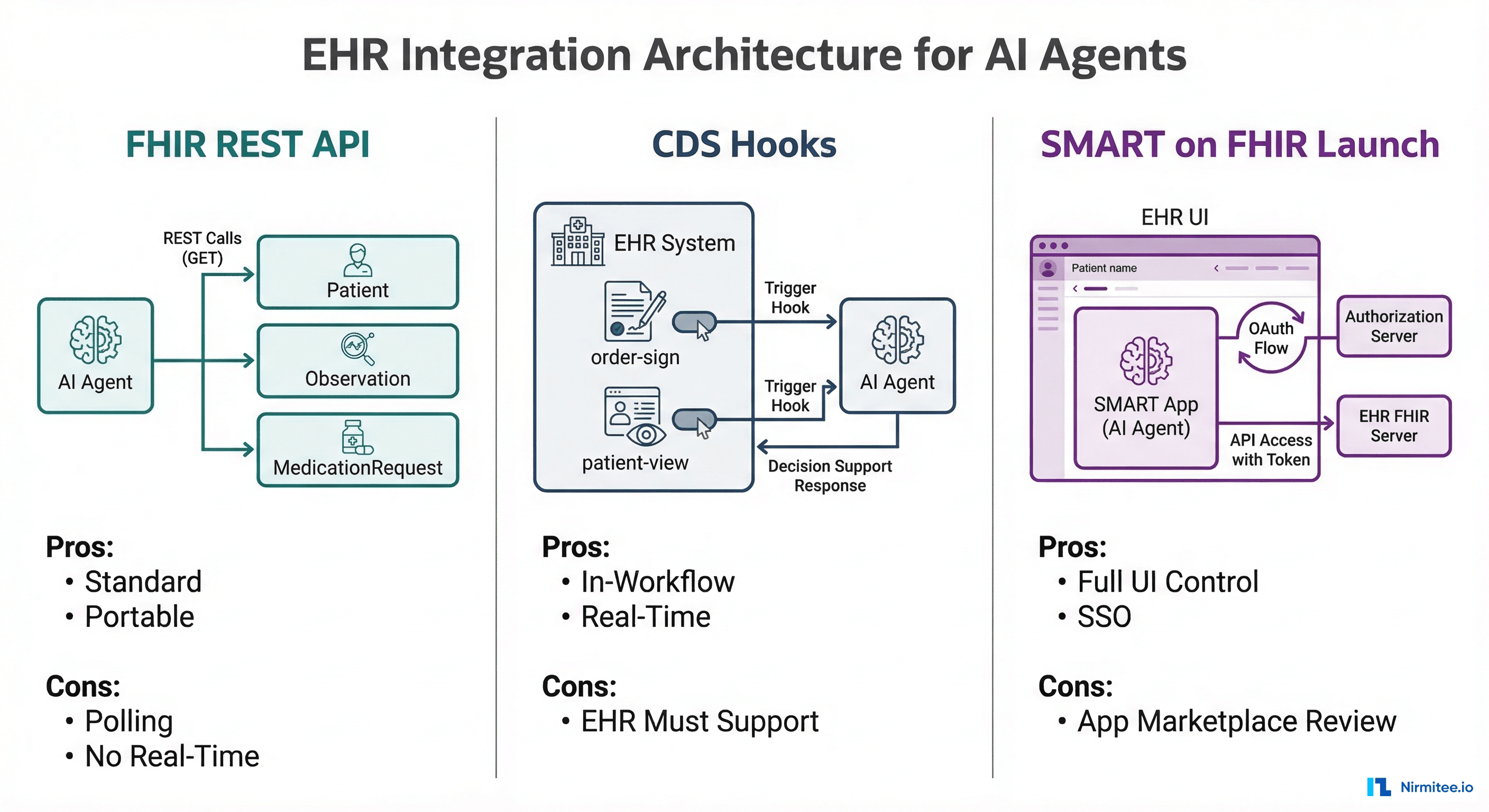

Three Integration Patterns, Each With Tradeoffs

Pattern 1: FHIR REST API. Your agent calls standard HL7 FHIR endpoints to read Patient, Observation, MedicationRequest, Condition, and other resources. This is the most portable approach — it works across any EHR that exposes FHIR R4 endpoints (which, post-21st Century Cures Act, is all certified EHRs in the US). The limitation: FHIR APIs are read-heavy. Writing back clinical recommendations requires navigating each EHR's specific write-back APIs, which are far less standardized.
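As a rough illustration of the read path, the sketch below builds a FHIR R4 search URL for a patient's active medication requests and pulls medication names out of the returned Bundle. The base URL and the sample Bundle are made up, and the HTTP call and OAuth token handling are deliberately left out.

```python
from urllib.parse import urlencode

# Hypothetical FHIR R4 base URL; each EHR publishes its own.
FHIR_BASE = "https://fhir.example-clinic.org/r4"


def medication_request_url(patient_id: str) -> str:
    query = urlencode({"patient": patient_id, "status": "active"})
    return f"{FHIR_BASE}/MedicationRequest?{query}"


def medication_names(bundle: dict) -> list[str]:
    """Collect display names from MedicationRequest entries in a search Bundle."""
    names = []
    for entry in bundle.get("entry", []):
        resource = entry.get("resource", {})
        if resource.get("resourceType") != "MedicationRequest":
            continue
        concept = resource.get("medicationCodeableConcept", {})
        text = concept.get("text")
        if text is None:
            codings = concept.get("coding", [])
            text = codings[0].get("display") if codings else None
        if text:
            names.append(text)
    return names


sample_bundle = {
    "resourceType": "Bundle",
    "entry": [
        {"resource": {"resourceType": "MedicationRequest",
                      "medicationCodeableConcept": {"text": "Lisinopril 10 mg tablet"}}},
        {"resource": {"resourceType": "MedicationRequest",
                      "medicationCodeableConcept": {
                          "coding": [{"display": "Metformin 500 mg tablet"}]}}},
        {"resource": {"resourceType": "OperationOutcome"}},
    ],
}

print(medication_request_url("123"))
print(medication_names(sample_bundle))
```

Requests that point to a separate Medication resource (`medicationReference`) are skipped here; a production reader would need to resolve those references as well.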

Pattern 2: CDS Hooks. The CDS Hooks specification lets the EHR trigger your agent at specific clinical decision points: when a physician opens a patient chart (patient-view), when they sign an order (order-sign), or when they select a medication (order-select). Your agent receives the clinical context and returns "cards" with recommendations displayed inline in the EHR. This is the most clinically integrated pattern, but it requires EHR-side configuration and IT department buy-in.
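For a sense of what the agent sends back, here is a minimal sketch of a CDS Hooks response body with one card. The `summary`, `indicator`, and `source.label` fields come from the CDS Hooks specification; the helper names, source label, and card text are illustrative assumptions.

```python
import json

MAX_SUMMARY_CHARS = 140  # the specification asks for a short one-line summary
ALLOWED_INDICATORS = {"info", "warning", "critical"}


def interaction_card(drug_a: str, drug_b: str, detail: str,
                     indicator: str = "warning") -> dict:
    if indicator not in ALLOWED_INDICATORS:
        raise ValueError(f"unsupported indicator: {indicator}")
    summary = f"Possible interaction: {drug_a} + {drug_b}"
    return {
        "summary": summary[:MAX_SUMMARY_CHARS],
        "indicator": indicator,
        "detail": detail,
        # Hypothetical label; the EHR shows it so clinicians know who is speaking.
        "source": {"label": "Medication safety agent"},
    }


def cds_response(cards: list) -> dict:
    """An empty card list is a valid 'nothing to report' response."""
    return {"cards": cards}


response = cds_response([
    interaction_card("warfarin", "ibuprofen",
                     "Concurrent use may increase bleeding risk. Review before signing."),
])
print(json.dumps(response, indent=2))
```

Returning an empty `cards` list when the agent has nothing useful to add keeps low-value alerts out of the clinician's workflow.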

Pattern 3: SMART on FHIR Launch. Your agent runs as a SMART on FHIR application embedded within the EHR's UI. The clinician launches it from within their workflow, it authenticates via OAuth 2.0, and it has access to the patient context. This gives you full UI control but requires going through the EHR vendor's app marketplace review process, which for Epic's Showroom (formerly App Orchard) or Oracle Health's marketplace can take 3–12 months.

The Write-Back Problem

Reading from EHRs is straightforward. Writing back is where things get complicated. If your agent generates a clinical recommendation, it cannot just POST it as a new resource. It needs to go through a clinical review gate: a physician must explicitly approve the recommendation before it becomes part of the medical record. This is not just best practice — it is a CMS regulatory requirement for clinical documentation.

We covered the full spectrum of integration architecture in our guide to the mental model every engineer needs for healthcare integrations, including how FHIR, CDS Hooks, and SMART on FHIR fit together in real production systems.

Layer 5: Monitoring & Observability — You Cannot Grep Your Way Through Agent Failures

Traditional application monitoring — CPU usage, memory, HTTP error rates — tells you nothing about whether your healthcare agent is actually performing well. An agent can return 200 OK on every request while hallucinating drug names in 8% of responses. You need a purpose-built observability stack.

The Four Pillars of Agent Observability

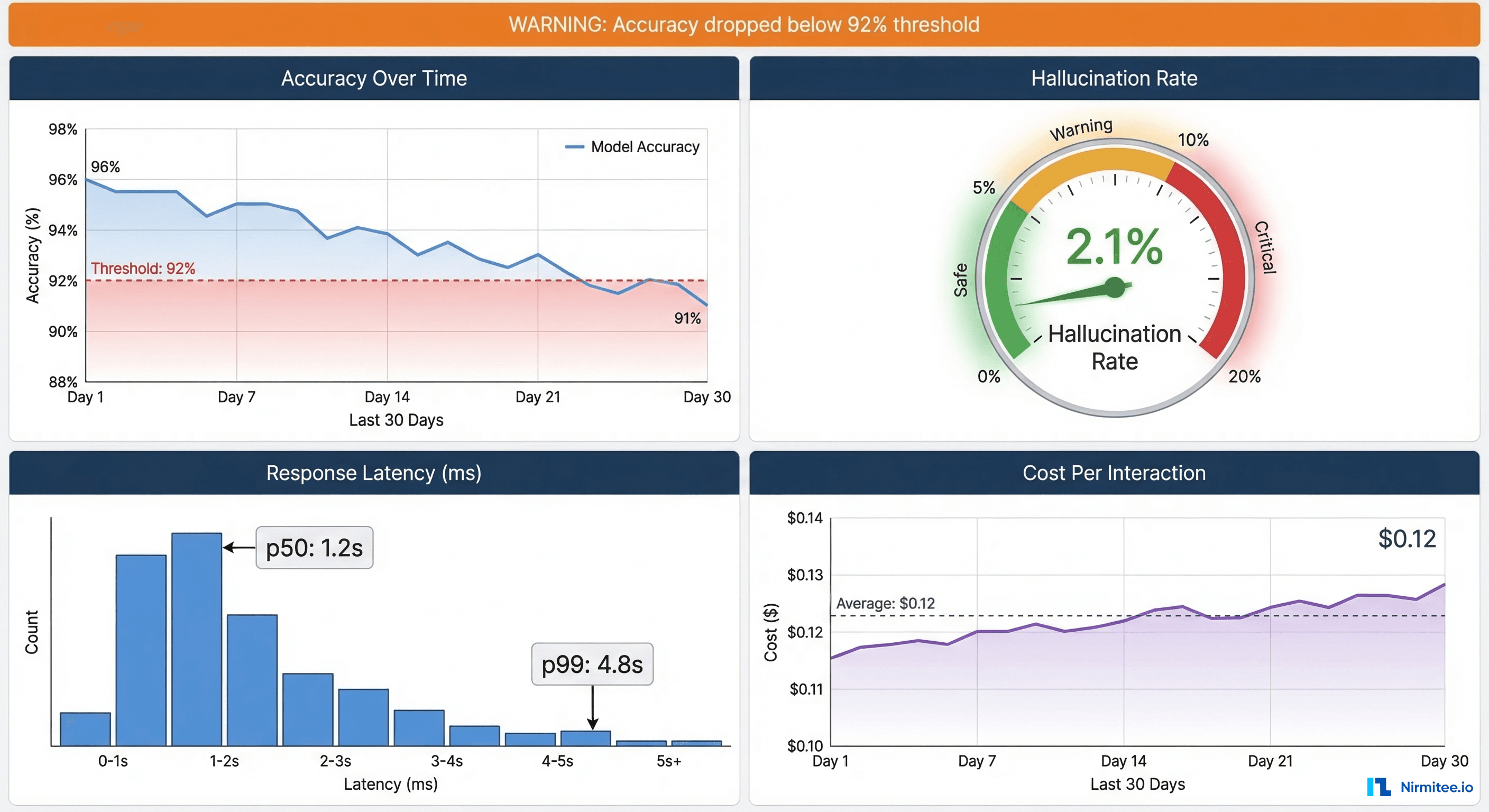

Pillar 1: Accuracy drift detection. Your agent's performance will degrade over time. New medications enter the market. Clinical guidelines get updated. The underlying LLM gets silently updated by the provider. Set up a canary test suite — 200 fixed clinical scenarios that run against your agent on a nightly cron job. Track accuracy over a 30-day rolling window. If it drops below your threshold (we recommend 92% as the alerting threshold, 90% as the deployment-block threshold), you need to investigate immediately. Gartner research indicates that model drift is the leading cause of AI system failures in production, ahead of infrastructure issues.
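A rolling-window check like the one described can be sketched in a few lines. The 30-day window and the 92% and 90% thresholds come from the text; the `DriftMonitor` class and its return values are illustrative assumptions, and the nightly scheduler that feeds it is out of scope.

```python
from collections import deque
from statistics import mean

ALERT_BELOW = 0.92
BLOCK_BELOW = 0.90
WINDOW_DAYS = 30


class DriftMonitor:
    """Keeps the most recent nightly canary accuracies and classifies the trend."""

    def __init__(self, window: int = WINDOW_DAYS) -> None:
        self.runs = deque(maxlen=window)  # old runs fall off automatically

    def record(self, passed: int, total: int) -> str:
        self.runs.append(passed / total)
        rolling = mean(self.runs)
        if rolling < BLOCK_BELOW:
            return "block"
        if rolling < ALERT_BELOW:
            return "alert"
        return "ok"


monitor = DriftMonitor(window=3)  # short window only to keep the demo small
for passed in (190, 180, 176, 170):
    print(passed, monitor.record(passed, total=200))
```

A rolling mean smooths out a single bad night, so it can make sense to also alert on any individual run that falls below the block threshold.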

Pillar 2: Hallucination detection. For every agent response that includes clinical facts, compute a semantic similarity score between the agent's claims and the source documents it was supposed to reference. Use embedding-based similarity (not keyword matching) with a threshold of 0.85. Any response where the agent makes claims that do not semantically align with its sources gets flagged for human review. Track your hallucination rate as a daily metric. At Nirmitee, we have found that a sustained rate above 3% usually indicates a prompt engineering problem, while sudden spikes above 5% suggest a model provider change or data pipeline issue.
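The similarity check itself is simple once embeddings exist. The sketch below assumes each claim and each source passage has already been turned into a vector by whatever embedding model you use; the three-dimensional vectors are toy values chosen only to exercise the 0.85 threshold.

```python
import math

SIMILARITY_THRESHOLD = 0.85


def cosine_similarity(a: list, b: list) -> float:
    if len(a) != len(b):
        raise ValueError("vectors must have the same length")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0  # treat empty embeddings as unsupported
    return dot / (norm_a * norm_b)


def is_supported(claim_vec: list, source_vecs: list,
                 threshold: float = SIMILARITY_THRESHOLD) -> bool:
    """A claim counts as supported if any source passage is close enough."""
    return any(cosine_similarity(claim_vec, s) >= threshold for s in source_vecs)


sources = [[0.9, 0.1, 0.0]]           # toy embedding of a retrieved passage
grounded_claim = [1.0, 0.0, 0.0]      # points the same way as the source
ungrounded_claim = [0.0, 1.0, 0.0]    # nearly orthogonal to the source

for claim in (grounded_claim, ungrounded_claim):
    print(is_supported(claim, sources))
```

High similarity only shows that a claim is about the same topic as a source, not that a dose or drug name matches, so flagged and unflagged samples both still belong in the human spot-check pool.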

Pillar 3: Latency tracking per tool call. Healthcare AI agents are multi-step: they read from FHIR, call an LLM, possibly query a drug database, and format a response. Track latency at each step, not just end-to-end. A p99 latency of 8 seconds might be acceptable for a background summarization task, but for a CDS Hooks integration where a physician is waiting for an order-sign recommendation, anything above 3 seconds breaks the clinical workflow. Set per-tool-call latency budgets and alert when any single step exceeds its allocation.
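One lightweight way to get per-step timings is a context manager around each tool call. In this sketch the step names and budget values are hypothetical, chosen so the steps add up to less than the 3-second CDS Hooks ceiling mentioned above.

```python
import time
from contextlib import contextmanager

# Hypothetical per-step budgets in seconds for an order-sign hook.
STEP_BUDGETS_SECONDS = {"fhir_read": 0.8, "llm_call": 1.8, "format_cards": 0.2}


class LatencyTracker:
    def __init__(self, budgets: dict) -> None:
        self.budgets = budgets
        self.elapsed = {}

    @contextmanager
    def step(self, name: str):
        start = time.perf_counter()
        try:
            yield
        finally:
            # Recorded even if the step raises, so failures still show up.
            self.elapsed[name] = time.perf_counter() - start

    def over_budget(self) -> list:
        return [name for name, seconds in self.elapsed.items()
                if seconds > self.budgets.get(name, float("inf"))]


tracker = LatencyTracker(STEP_BUDGETS_SECONDS)
with tracker.step("fhir_read"):
    pass  # fetch patient resources here
with tracker.step("llm_call"):
    pass  # call the model here
with tracker.step("format_cards"):
    pass  # build the response here
print(tracker.elapsed, tracker.over_budget())
```

In a real service the `elapsed` values would be emitted to your metrics backend per request, with alerts driven by `over_budget()`.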

Pillar 4: Cost per interaction. LLM API calls are expensive, and healthcare agents often require large context windows (full medication lists, lab histories, clinical notes). Track cost per interaction across all API calls. A clinical summarization agent we built saw costs spike from $0.08 to $0.34 per interaction when a prompt engineering change accidentally included 3x the necessary context. Without cost monitoring, that change would have burned through the monthly API budget in 10 days.
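Cost tracking mostly comes down to counting tokens per call and multiplying by your provider's rates. The prices and token counts below are placeholder assumptions, not any provider's actual pricing; the point is how quickly extra context shows up in the per-interaction figure.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Pricing:
    """Hypothetical USD prices per 1,000 tokens; substitute your provider's rates."""
    input_per_1k: float
    output_per_1k: float


def call_cost(pricing: Pricing, input_tokens: int, output_tokens: int) -> float:
    return (input_tokens / 1000 * pricing.input_per_1k
            + output_tokens / 1000 * pricing.output_per_1k)


def interaction_cost(pricing: Pricing, calls: list) -> float:
    """Sum the cost of every LLM call made while serving one clinician request."""
    return sum(call_cost(pricing, i, o) for i, o in calls)


def exceeds_threshold(cost: float, threshold: float) -> bool:
    return cost > threshold


pricing = Pricing(input_per_1k=0.01, output_per_1k=0.03)
baseline = interaction_cost(pricing, [(5000, 1000)])
bloated = interaction_cost(pricing, [(15000, 1000)])  # same call, 3x the context
print(f"baseline=${baseline:.2f} bloated=${bloated:.2f} "
      f"alert={exceeds_threshold(bloated, threshold=0.12)}")
```

Alerting on the per-interaction figure rather than the monthly bill surfaces a context regression on the day it ships instead of at invoice time.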

For a comprehensive framework on building this observability stack, our deep dive on observability for agentic AI in healthcare covers implementation patterns, alerting rules, and dashboard design in detail.

Layer 6: Incident Response — Your Agent Will Fail. Have a Runbook.

It is not a question of if your healthcare agent will produce a harmful output. It is a question of when. A Health Affairs study found that even FDA-cleared clinical decision support tools experience clinically significant errors in 3–7% of edge cases. Your agent, running on a general-purpose LLM, will have a higher baseline error rate.

The difference between a recoverable incident and a catastrophe is the speed and quality of your response.

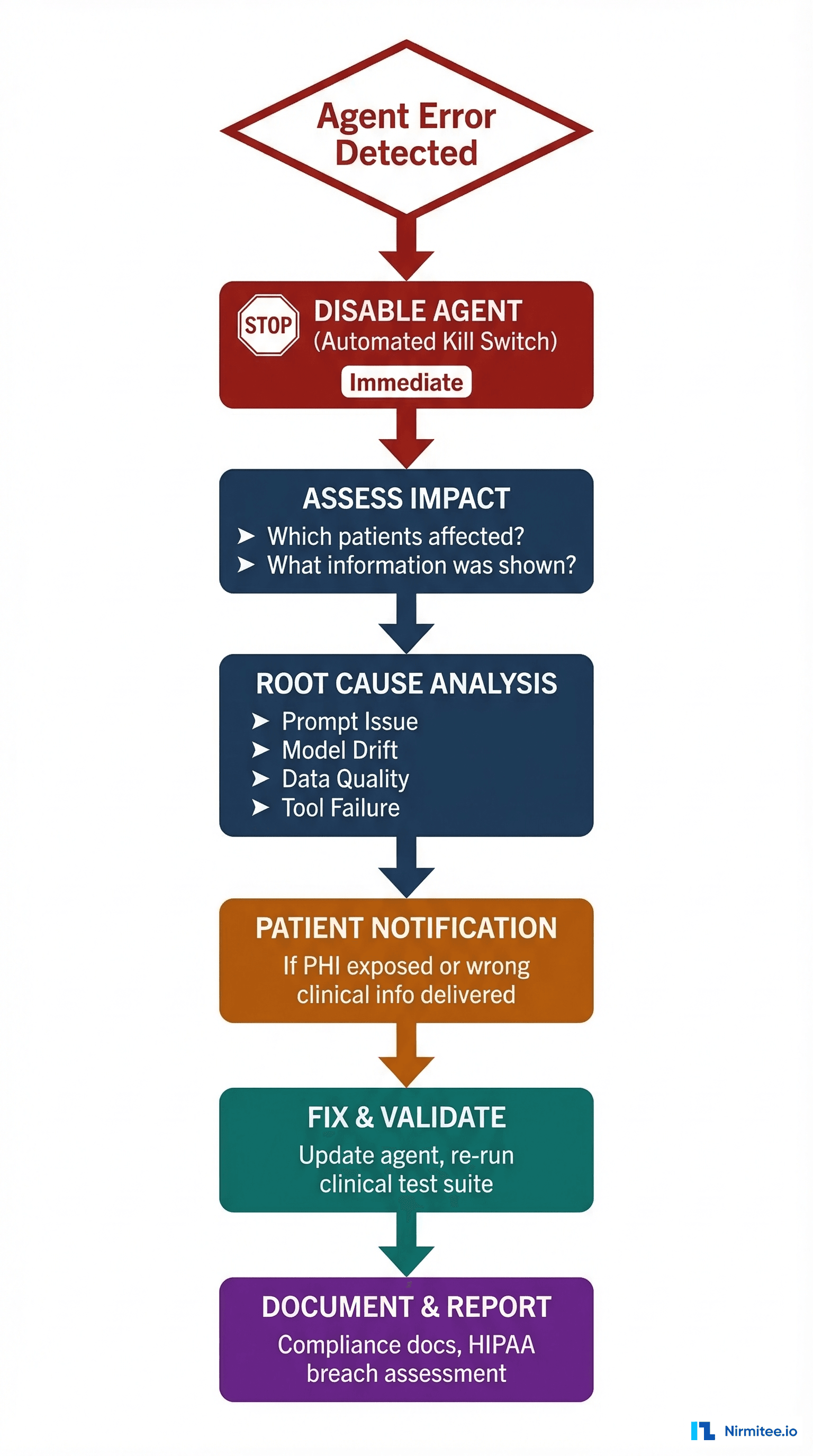

The Six-Step Runbook

Step 1: Immediate disable (automated). Your agent must have a kill switch — a single API call or feature flag that immediately stops it from serving responses to clinicians. This is not "graceful degradation." This is an emergency stop. Implement it as a Redis flag check at the top of every request handler. When the flag is set, the agent returns a standardized message: "This tool is temporarily unavailable. Please proceed with standard clinical workflow." The kill switch must be triggerable by your on-call engineer, your clinical informatics team, and your compliance officer. Test it monthly.
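The flag check can be sketched as a wrapper around each request handler. To keep the example runnable without a Redis server, it uses an in-memory stand-in that exposes the same `get` and `set` calls a Redis client offers. The key name and helper names are assumptions, as is the choice to fail closed (treat the agent as disabled) when the flag store cannot be reached.

```python
from typing import Callable

UNAVAILABLE_MESSAGE = ("This tool is temporarily unavailable. "
                       "Please proceed with standard clinical workflow.")
KILL_SWITCH_KEY = "agent:kill_switch"  # hypothetical key name


class InMemoryFlags:
    """Stand-in for a Redis client; only get/set are needed here."""

    def __init__(self) -> None:
        self._data = {}

    def set(self, key: str, value: str) -> None:
        self._data[key] = value

    def get(self, key: str):
        return self._data.get(key)


def kill_switch_on(flags) -> bool:
    try:
        value = flags.get(KILL_SWITCH_KEY)
    except Exception:
        return True  # fail closed: if we cannot read the flag, do not serve
    # Compare explicitly: a Redis client may return bytes, and b"0" is truthy.
    return value in ("1", b"1")


def guarded(handler: Callable[[str], str], flags) -> Callable[[str], str]:
    def wrapper(request: str) -> str:
        if kill_switch_on(flags):
            return UNAVAILABLE_MESSAGE
        return handler(request)
    return wrapper


flags = InMemoryFlags()
summarize = guarded(lambda request: f"summary for {request}", flags)
print(summarize("patient-123"))
flags.set(KILL_SWITCH_KEY, "1")
print(summarize("patient-123"))
```

Failing closed trades availability for safety; whichever direction you choose, decide it with your clinical informatics team and include it in the monthly test.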

Step 2: Impact assessment. Within 1 hour of disabling, answer: How many patients were affected? What specific outputs did they receive? Were any clinical decisions made based on the incorrect output? This requires the comprehensive audit logging from Layer 2 — you need to reconstruct every interaction for the affected time window.

Step 3: Root cause analysis. The four most common root causes we have seen:

- Prompt regression: A prompt engineering change introduced an unintended behavior.

- Model drift: The LLM provider updated the model version without notice.

- Data quality: The FHIR data source returned malformed or incomplete records.

- Tool failure: An external API (drug database, clinical guidelines) returned unexpected results.

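Step 4: Patient notification. Under HIPAA's Breach Notification Rule, if unsecured PHI was breached, the covered entity must notify affected individuals without unreasonable delay and no later than 60 days after discovering the breach. Breaches affecting 500 or more individuals must be reported to HHS within that same window; smaller breaches can be logged and reported to HHS within 60 days after the end of the calendar year in which they were discovered. If you are a vendor operating as a business associate, your obligation is to notify the covered entity, again no later than 60 days after discovery. Even if no PHI breach occurred, if a patient received clinically incorrect information that could affect their care, ethical and legal obligations may still require notification through the treating physician.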

Step 5: Fix and validate. Do not simply revert the change and re-enable. Add the failure scenario to your Tier 3 adversarial test suite (Layer 1). Re-run the full clinical validation suite. Only re-enable when the test suite passes and the specific failure scenario is confirmed fixed.

Step 6: Document and report. Create a formal incident report covering: timeline, root cause, patient impact, corrective actions, and preventive measures. If you operate under Joint Commission accreditation, a sentinel event involving clinical decision support calls for a comprehensive systematic analysis (typically a root cause analysis) and a corrective action plan; reporting the event to the Joint Commission itself is strongly encouraged but voluntary. Even without regulatory requirements, this documentation builds the institutional knowledge that prevents repeat incidents.

Understanding the prerequisites for building healthcare AI agents before you start helps you design these incident response capabilities into your architecture from day one, rather than bolting them on after the first failure.

Production Readiness Checklist

Before your healthcare AI agent sees its first real patient, every item on this list should be a confirmed "yes":

Clinical Validation

- Clinical test suite with 500+ standard cases, 100+ edge cases, 50+ adversarial cases

- False negative rate on critical findings below 1%

- Hallucination rate on clinical claims below 2%

- Clinical SME blind review completed with documented agreement rate

- Sensitivity/specificity thresholds defined and met for each clinical domain

HIPAA & Security

- BAA signed with LLM provider

- PHI isolation architecture deployed (VPC, no internet egress for PHI paths)

- Prompt sanitization pipeline stripping/hashing direct identifiers

- Encrypted audit logs with KMS-managed keys

- Penetration test completed on agent infrastructure

Human-in-the-Loop

- Confidence scoring calibrated and tested

- Three-tier routing (auto-approve, review, escalate) implemented

- Reviewer workflow UI built and user-tested with clinical staff

- Feedback loop from reviewer corrections to evaluation dataset active

EHR Integration

- FHIR read access tested against production EHR (not sandbox)

- Write-back flow includes physician approval gate

- SMART on FHIR or CDS Hooks integration tested end-to-end

- EHR vendor app marketplace review initiated (if applicable)

Monitoring & Observability

- Nightly canary test suite running with accuracy drift alerting

- Hallucination detection pipeline active with daily rate tracking

- Per-tool-call latency tracking with budget alerts

- Cost-per-interaction monitoring with budget thresholds

Incident Response

- Kill switch implemented and tested monthly

- Incident response runbook documented and tabletop-exercised

- Patient notification process defined and legal-reviewed

- Post-incident test suite expansion process documented

The Path Forward

Every one of these six layers represents real engineering work that does not show up in demos or pitch decks. But they are the difference between a healthcare AI agent that impresses investors and one that actually helps patients without harming them.

The good news: none of this is impossible. Teams are shipping production healthcare agents today. They are just doing it with the rigor and infrastructure these layers demand.

At Nirmitee, we have been building healthcare software — EHR integrations, FHIR infrastructure, clinical AI systems — long enough to know that the gap between "it works" and "it is safe for patients" is where the real engineering happens. If you are navigating these layers and want to talk architecture, we are here.

From architecture to production, our Healthcare Software Product Development team builds healthcare platforms that perform at scale. We also offer specialized Agentic AI for Healthcare services. Talk to our team to get started.

Frequently Asked Questions

How long does it take to go from a working healthcare AI prototype to production deployment?

Expect 6–18 months depending on the clinical domain, EHR integration complexity, and your organization's regulatory posture. The clinical validation and EHR marketplace review stages are typically the longest. Teams that build compliance infrastructure (Layers 2 and 6) in parallel with clinical validation (Layer 1) can compress the timeline significantly.

Do I need a BAA with my LLM provider even if I de-identify data before sending it?

In almost every real deployment, yes. The HIPAA Safe Harbor de-identification standard requires removal of 18 specific identifier types, plus no actual knowledge that the remaining data could identify the patient. Data that genuinely meets that standard is no longer PHI, but if there is any possibility that your prompts contain residual PHI, and in clinical contexts there almost always is, a BAA is required. Clinical narratives frequently contain identifiers in unexpected places (physician names, facility names, dates that narrow re-identification). A BAA is your safety net even with a sanitization pipeline.

What confidence scoring approach works best for healthcare AI agents?

We have found that a combination of LLM self-reported confidence (calibrated via temperature scaling), semantic similarity between the response and source documents, and coverage analysis (what percentage of the query is addressed by retrieved sources) produces the most reliable confidence scores. No single signal is sufficient. Calibrate your thresholds using your clinical SME review data from Layer 1.
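One possible way to combine the three signals is a weighted average capped by the weakest signal, so that a single strong score cannot mask a weak one. The weights and the cap margin below are hypothetical starting points, not values from our deployments; they would need to be tuned against your SME review data.

```python
# Hypothetical weights: self-reported confidence, source similarity, coverage.
DEFAULT_WEIGHTS = (0.3, 0.4, 0.3)
WEAKEST_SIGNAL_MARGIN = 0.15  # how far the result may exceed the weakest signal


def combined_confidence(self_reported: float, source_similarity: float,
                        coverage: float,
                        weights: tuple = DEFAULT_WEIGHTS) -> float:
    signals = (self_reported, source_similarity, coverage)
    for value in signals:
        if not 0.0 <= value <= 1.0:
            raise ValueError(f"signals must be in [0, 1], got {value!r}")
    weighted = sum(w * s for w, s in zip(weights, signals)) / sum(weights)
    # Cap the score so one weak signal pulls the result down.
    return min(weighted, min(signals) + WEAKEST_SIGNAL_MARGIN)


# The model sounds sure and covers the query, but the sources barely back it up.
score = combined_confidence(self_reported=0.99, source_similarity=0.60, coverage=0.95)
print(round(score, 2))
```

In this example the plain weighted average would sit above 0.80, but the cap pulls the score down to the block-and-escalate range because the source similarity is weak.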

Can I use CDS Hooks and SMART on FHIR simultaneously?

Yes, and many production systems do. CDS Hooks handle in-workflow triggers (alerting a physician during order entry), while SMART on FHIR provides the deep-dive interface when a clinician wants to explore the agent's full analysis. Think of CDS Hooks as the notification layer and SMART on FHIR as the application layer.

How often should I run my clinical validation test suite in production?

Nightly for the canary suite (200 fixed scenarios). Weekly for the full suite (500+ standard, 100+ edge, 50+ adversarial). Immediately after any change to prompts, model version, or data pipeline configuration. LLM providers occasionally update model weights without customer notification, so nightly canary runs are your early warning system for unexpected model drift.