Every healthcare AI project follows the same trajectory: six months of model development, two months of integration engineering, and then — on the day the model connects to real clinical data — the team discovers that 30% of patient records have incomplete demographics, the same diagnosis exists as three different codes across departments, and 8% of patients appear twice in the system. The model's accuracy in production is 15 points below what it achieved on clean training data.

Data quality is not a nice-to-have. It is the prerequisite that determines whether your AI model, analytics dashboard, or population health program will produce trustworthy results. Yet it is the step most teams skip. They assume FHIR data is clean because it is structured. It is not.

This guide covers the specific data quality problems in healthcare, FHIR-based quality validation, building a scoring framework, and automated remediation. Everything includes working Python code for a FHIR resource quality scoring pipeline that you can deploy against your own data.

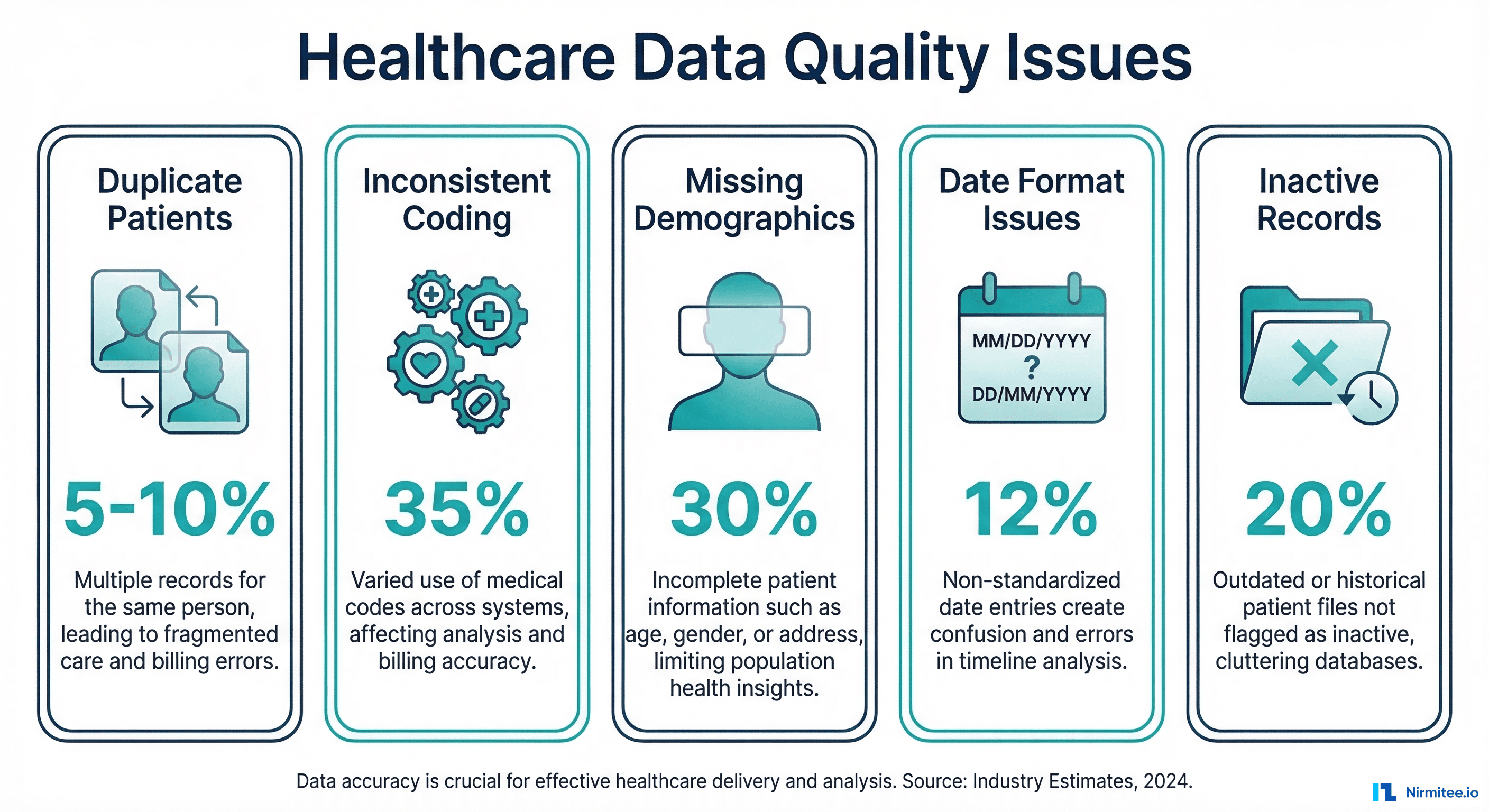

The Five Data Quality Problems That Break Everything

1. Duplicate Patients (5-10% in Most Systems)

Patient duplication is the most dangerous data quality problem in healthcare. AHIMA estimates that the average hospital has a 5-10% duplicate rate in their master patient index. Some systems exceed 20%. Duplicates cause:

- Fragmented clinical records — Half the patient's history is on one record, half on another. Your AI model sees an incomplete picture.

- Incorrect population counts — A diabetic patient counted twice inflates your prevalence statistics.

- Medication safety risks — Drug interaction checks fail when medications are split across duplicate records.

- Billing errors — Duplicate claims, duplicate eligibility checks, and reconciliation failures.

Duplicates arise from registration errors ("John Smith" vs "Johnathan Smith"), system migrations, mergers, and patients using different names or addresses at different visits.

2. Inconsistent Coding (35% of Records)

The same clinical concept coded differently across records. Type 2 diabetes appears as:

- ICD-10:

E11.9(Type 2 diabetes mellitus without complications) - SNOMED CT:

44054006(Type 2 diabetes mellitus) - ICD-10:

E11(truncated code, technically invalid) - Free text: "DM2", "diabetes", "Type II DM", "NIDDM"

- Local code:

DIAB-002(facility-specific coding system)

When you query "how many patients have diabetes?" the answer depends entirely on which codes you search for. An AI model trained on SNOMED-coded conditions will miss ICD-10-only records. For deep coverage of this problem, see our guide on FHIR Terminology Services.

3. Missing Demographics (30% Incomplete)

FHIR Patient resources with missing or partial data. Common gaps include:

| Field | % Missing | Impact |

|---|---|---|

| Race/Ethnicity | 40-60% | Health equity analytics impossible |

| Preferred Language | 50-70% | Communication barriers undetected |

| Address (complete) | 25-35% | Social determinants, geographic analysis fail |

| Phone/Email | 15-25% | Patient engagement/outreach blocked |

| Insurance/Coverage | 10-20% | Eligibility verification failures |

| Emergency Contact | 30-50% | Care coordination gaps |

Missing demographics are not just a data completeness issue. They are an equity issue. If 60% of minority patients lack race/ethnicity data, your population health analytics cannot identify disparities.

4. Date and Format Inconsistencies

FHIR specifies date formats (ISO 8601: YYYY-MM-DD), but real-world data arrives in many formats:

2026-03-16(FHIR standard)03/16/2026(US format)16-Mar-2026(narrative format)2026-03(month precision only)2026(year only, common for historical conditions)

More critically, temporal consistency matters: a lab result dated after the patient's death, a medication start date after the end date, or a diagnosis date before the patient's birth date. These are not hypothetical — they occur in 2-5% of records due to data entry errors and system clock issues.

5. Inactive Records Mixed With Active

FHIR resources have status fields (active, inactive, entered-in-error), but many systems do not maintain them correctly. Common issues:

- Conditions marked

activethat were resolved years ago - Medications with status

activethat were discontinued - Patient records marked

activefor deceased patients - Duplicate records where neither is marked

entered-in-error

An AI model that includes inactive medications in its drug interaction analysis will generate false alerts. An analytics dashboard that counts resolved conditions as active will overestimate disease prevalence.

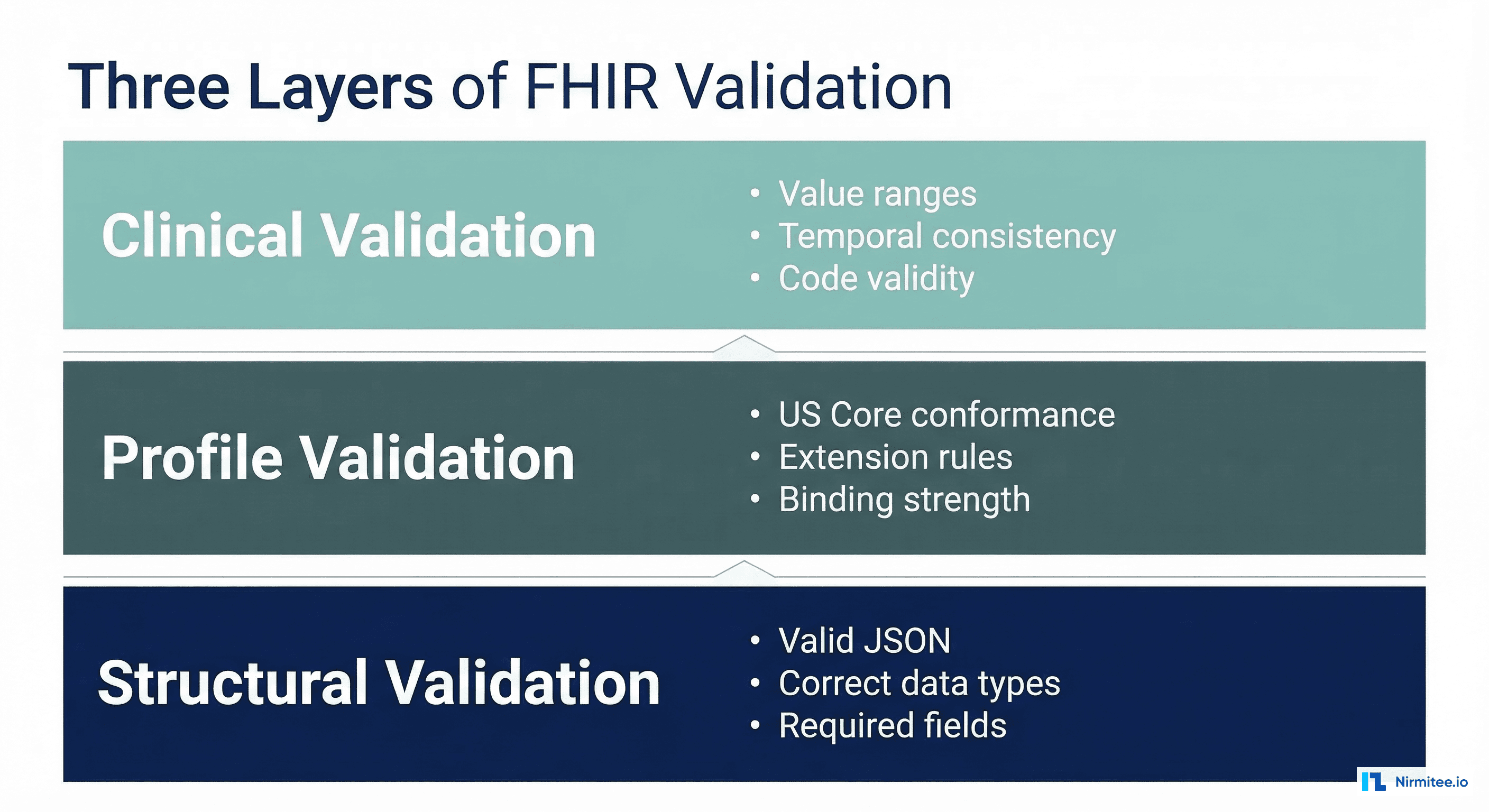

FHIR-Based Data Quality Checks

Here is a comprehensive data quality scoring framework for FHIR resources:

# fhir_quality_scorer.py

from dataclasses import dataclass, field

from datetime import datetime, date

from typing import Optional

import re

@dataclass

class QualityScore:

resource_type: str

resource_id: str

completeness: float = 0.0 # 0-1: are required fields present?

accuracy: float = 0.0 # 0-1: are values valid?

consistency: float = 0.0 # 0-1: do values agree?

timeliness: float = 0.0 # 0-1: is data current?

overall: float = 0.0 # weighted average

issues: list = field(default_factory=list)

def calculate_overall(self):

"""Calculate weighted overall score."""

weights = {

"completeness": 0.30,

"accuracy": 0.30,

"consistency": 0.25,

"timeliness": 0.15

}

self.overall = (

self.completeness * weights["completeness"] +

self.accuracy * weights["accuracy"] +

self.consistency * weights["consistency"] +

self.timeliness * weights["timeliness"]

)

class PatientQualityScorer:

"""Score the data quality of a FHIR Patient resource."""

REQUIRED_FIELDS = [

"name", "birthDate", "gender", "identifier"

]

RECOMMENDED_FIELDS = [

"address", "telecom", "communication",

"maritalStatus", "contact"

]

US_CORE_EXTENSIONS = [

"us-core-race", "us-core-ethnicity", "us-core-birthsex"

]

def score(self, patient: dict) -> QualityScore:

qs = QualityScore(

resource_type="Patient",

resource_id=patient.get("id", "unknown")

)

# Completeness

required_present = sum(

1 for f in self.REQUIRED_FIELDS if patient.get(f)

)

recommended_present = sum(

1 for f in self.RECOMMENDED_FIELDS if patient.get(f)

)

extensions = patient.get("extension", [])

ext_urls = [e.get("url", "") for e in extensions]

us_core_present = sum(

1 for ext in self.US_CORE_EXTENSIONS

if any(ext in url for url in ext_urls)

)

total_fields = (len(self.REQUIRED_FIELDS) +

len(self.RECOMMENDED_FIELDS) +

len(self.US_CORE_EXTENSIONS))

qs.completeness = (

required_present + recommended_present + us_core_present

) / total_fields

if required_present < len(self.REQUIRED_FIELDS):

missing = [f for f in self.REQUIRED_FIELDS if not patient.get(f)]

qs.issues.append(f"Missing required fields: {', '.join(missing)}")

# Accuracy

accuracy_checks = []

# Valid birth date

birth_date = patient.get("birthDate", "")

if birth_date:

try:

bd = datetime.strptime(birth_date, "%Y-%m-%d").date()

if bd > date.today():

qs.issues.append("Birth date is in the future")

accuracy_checks.append(0)

elif bd < date(1900, 1, 1):

qs.issues.append("Birth date before 1900 — likely error")

accuracy_checks.append(0.5)

else:

accuracy_checks.append(1.0)

except ValueError:

qs.issues.append(f"Invalid birth date format: {birth_date}")

accuracy_checks.append(0)

# Valid gender

gender = patient.get("gender", "")

if gender in ("male", "female", "other", "unknown"):

accuracy_checks.append(1.0)

elif gender:

qs.issues.append(f"Non-standard gender value: {gender}")

accuracy_checks.append(0.5)

# Valid name

names = patient.get("name", [])

if names:

name = names[0]

if name.get("family") and name.get("given"):

accuracy_checks.append(1.0)

else:

qs.issues.append("Name missing family or given component")

accuracy_checks.append(0.5)

# Valid identifiers (at least one with system)

identifiers = patient.get("identifier", [])

if identifiers:

valid_ids = [i for i in identifiers if i.get("system") and i.get("value")]

accuracy_checks.append(len(valid_ids) / len(identifiers))

qs.accuracy = sum(accuracy_checks) / len(accuracy_checks) if accuracy_checks else 0

# Consistency

consistency_checks = []

# Check deceased consistency

if patient.get("deceasedBoolean") or patient.get("deceasedDateTime"):

if patient.get("active", True):

qs.issues.append("Patient marked active but has deceased indicator")

consistency_checks.append(0)

else:

consistency_checks.append(1.0)

else:

consistency_checks.append(1.0)

# Address consistency

for addr in patient.get("address", []):

if addr.get("postalCode") and addr.get("state"):

consistency_checks.append(1.0)

elif addr.get("postalCode") or addr.get("state"):

qs.issues.append("Address partially complete")

consistency_checks.append(0.5)

qs.consistency = (

sum(consistency_checks) / len(consistency_checks)

if consistency_checks else 1.0

)

# Timeliness

meta = patient.get("meta", {})

last_updated = meta.get("lastUpdated", "")

if last_updated:

try:

updated = datetime.fromisoformat(

last_updated.replace("Z", "+00:00")

)

days_since = (datetime.now(updated.tzinfo) - updated).days

if days_since < 90:

qs.timeliness = 1.0

elif days_since < 365:

qs.timeliness = 0.7

elif days_since < 730:

qs.timeliness = 0.4

else:

qs.timeliness = 0.2

qs.issues.append(f"Record not updated in {days_since} days")

except (ValueError, TypeError):

qs.timeliness = 0.5

else:

qs.timeliness = 0.5

qs.issues.append("No lastUpdated timestamp")

qs.calculate_overall()

return qs

class ConditionQualityScorer:

"""Score the data quality of a FHIR Condition resource."""

STANDARD_SYSTEMS = [

"http://snomed.info/sct",

"http://hl7.org/fhir/sid/icd-10-cm",

"http://hl7.org/fhir/sid/icd-10"

]

def score(self, condition: dict) -> QualityScore:

qs = QualityScore(

resource_type="Condition",

resource_id=condition.get("id", "unknown")

)

# Completeness

completeness_checks = 0

total_checks = 6

if condition.get("code"):

completeness_checks += 1

else:

qs.issues.append("Missing condition code")

if condition.get("subject"):

completeness_checks += 1

if condition.get("clinicalStatus"):

completeness_checks += 1

else:

qs.issues.append("Missing clinicalStatus")

if condition.get("verificationStatus"):

completeness_checks += 1

if condition.get("onsetDateTime") or condition.get("onsetPeriod"):

completeness_checks += 1

else:

qs.issues.append("Missing onset date")

if condition.get("category"):

completeness_checks += 1

qs.completeness = completeness_checks / total_checks

# Accuracy - check coding

accuracy_checks = []

code = condition.get("code", {})

codings = code.get("coding", [])

if codings:

has_standard = any(

c.get("system") in self.STANDARD_SYSTEMS for c in codings

)

if has_standard:

accuracy_checks.append(1.0)

else:

qs.issues.append("No standard coding system (SNOMED/ICD-10)")

accuracy_checks.append(0.3)

has_display = all(c.get("display") for c in codings)

accuracy_checks.append(1.0 if has_display else 0.5)

elif code.get("text"):

qs.issues.append("Free-text only condition — no structured code")

accuracy_checks.append(0.2)

else:

accuracy_checks.append(0)

qs.accuracy = (

sum(accuracy_checks) / len(accuracy_checks)

if accuracy_checks else 0

)

# Consistency

clinical_status = condition.get("clinicalStatus", {})

status_code = ""

for coding in clinical_status.get("coding", []):

status_code = coding.get("code", "")

if status_code == "active" and condition.get("abatementDateTime"):

qs.issues.append("Status is active but abatement date exists")

qs.consistency = 0.3

else:

qs.consistency = 1.0

qs.timeliness = 0.8 # Conditions are less time-sensitive

qs.calculate_overall()

return qsBuilding the Data Quality Dashboard

Aggregate individual resource scores into an organizational quality dashboard:

# quality_dashboard.py

from collections import defaultdict

import json

class DataQualityDashboard:

"""Aggregate data quality metrics across a FHIR data store."""

def __init__(self):

self.scores_by_type = defaultdict(list)

self.issues_by_type = defaultdict(lambda: defaultdict(int))

def add_score(self, score: QualityScore):

"""Add a resource quality score to the dashboard."""

self.scores_by_type[score.resource_type].append(score)

for issue in score.issues:

self.issues_by_type[score.resource_type][issue] += 1

def generate_report(self) -> dict:

"""Generate comprehensive quality report."""

report = {

"generated_at": datetime.now().isoformat(),

"summary": {},

"by_resource_type": {},

"top_issues": [],

"remediation_priorities": []

}

all_scores = []

for resource_type, scores in self.scores_by_type.items():

if not scores:

continue

avg = lambda field: sum(

getattr(s, field) for s in scores

) / len(scores)

type_report = {

"count": len(scores),

"completeness": round(avg("completeness"), 3),

"accuracy": round(avg("accuracy"), 3),

"consistency": round(avg("consistency"), 3),

"timeliness": round(avg("timeliness"), 3),

"overall": round(avg("overall"), 3),

"below_threshold": sum(

1 for s in scores if s.overall < 0.7

),

"top_issues": sorted(

self.issues_by_type[resource_type].items(),

key=lambda x: x[1], reverse=True

)[:10]

}

report["by_resource_type"][resource_type] = type_report

all_scores.extend(scores)

if all_scores:

report["summary"] = {

"total_resources": len(all_scores),

"overall_quality": round(

sum(s.overall for s in all_scores) / len(all_scores), 3

),

"resources_below_threshold": sum(

1 for s in all_scores if s.overall < 0.7

),

"percentage_below_threshold": round(

sum(1 for s in all_scores if s.overall < 0.7)

/ len(all_scores) * 100, 1

)

}

# Generate remediation priorities

report["remediation_priorities"] = self._prioritize_remediation()

return report

def _prioritize_remediation(self) -> list:

"""Generate prioritized list of remediation actions."""

priorities = []

for resource_type, issues in self.issues_by_type.items():

for issue, count in sorted(

issues.items(), key=lambda x: x[1], reverse=True

)[:5]:

total = len(self.scores_by_type[resource_type])

impact = count / total if total else 0

priorities.append({

"resource_type": resource_type,

"issue": issue,

"affected_count": count,

"impact_percentage": round(impact * 100, 1),

"priority": "high" if impact > 0.2 else (

"medium" if impact > 0.05 else "low"

),

"suggested_action": self._suggest_action(issue)

})

return sorted(priorities, key=lambda x: x["affected_count"], reverse=True)

def _suggest_action(self, issue: str) -> str:

"""Suggest remediation action based on issue type."""

if "missing" in issue.lower():

return "Run data enrichment pipeline to fill missing fields"

elif "coding" in issue.lower() or "code" in issue.lower():

return "Map to standard terminology using ConceptMap"

elif "date" in issue.lower():

return "Validate and correct date formats"

elif "duplicate" in issue.lower():

return "Run patient matching/merge workflow"

elif "status" in issue.lower() or "active" in issue.lower():

return "Review and update resource status flags"

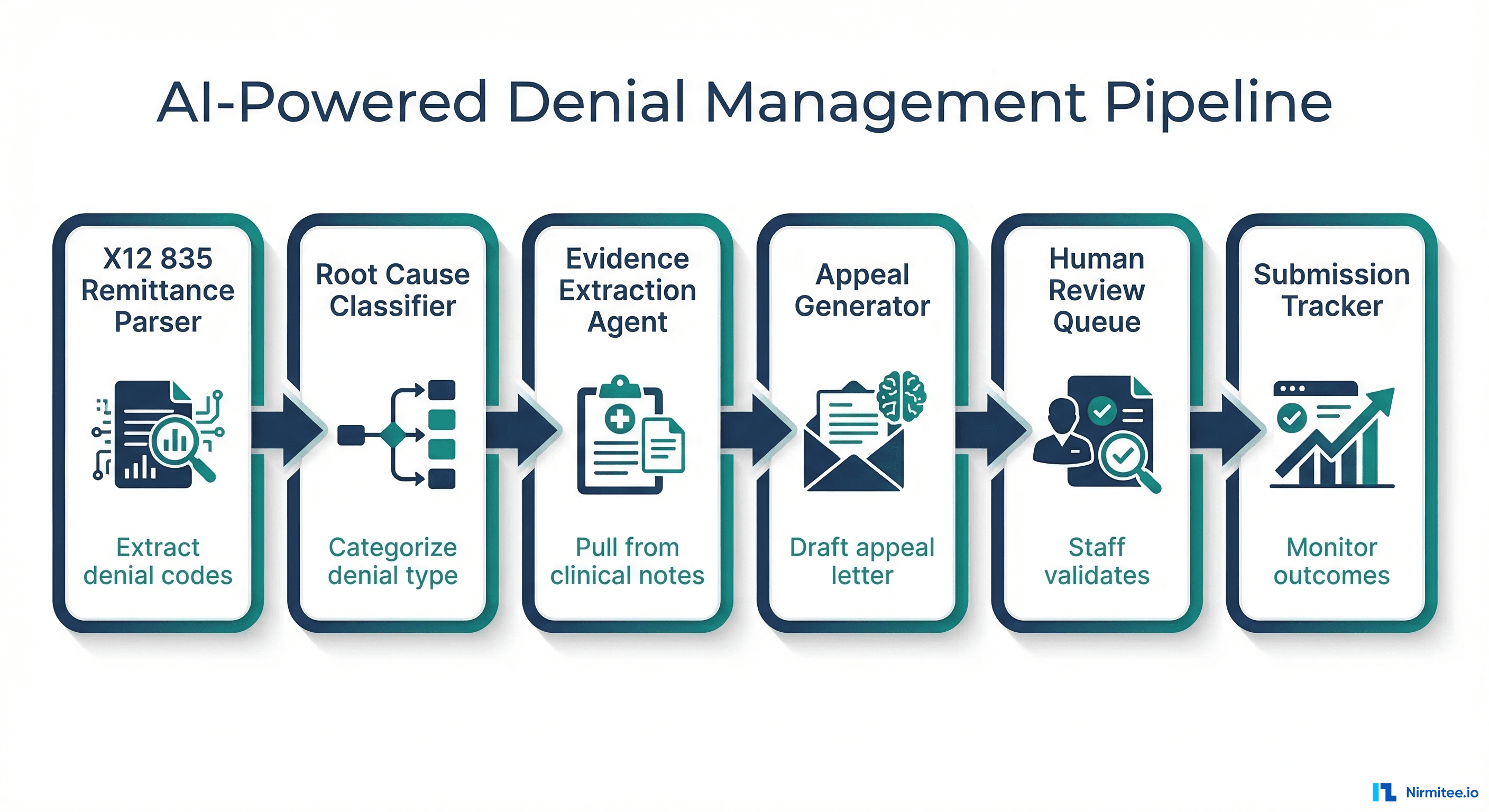

return "Manual review required"Automated Remediation Strategies

Data quality is not a one-time audit — it is a continuous process. Here are the automated remediation patterns that work in production:

Auto-Fixable Issues

| Issue | Automated Fix | Risk Level |

|---|---|---|

| Missing display name on coded values | $lookup against terminology server | Low |

| Date format inconsistencies | Parse and normalize to ISO 8601 | Low |

| Missing US Core extensions | Enrich from registration system data | Medium |

| Truncated ICD-10 codes (E11 vs E11.9) | Expand to most common specific code | Medium |

| Missing coding system URIs | Infer from code pattern and add system | Medium |

Requires Human Review

| Issue | Review Process | Reviewer |

|---|---|---|

| Potential duplicate patients | Present match candidates for merge/split | HIM staff |

| Free-text conditions with no codes | Present NLP suggestions for confirmation | Clinical coder |

| Inconsistent clinical status | Review clinical history and update | Clinician |

| Conflicting data across systems | Present conflicting values for resolution | Data analyst |

Critical Alerts

- Deceased patient with active encounters — Immediate data integrity concern

- Medication interactions on split records — Patient safety issue requiring urgent merge

- Missing allergies on records with medications — Clinical risk flagged for pharmacist review

For the systems that maintain these quality checks continuously, see our guide on Observability for Agentic AI in Healthcare.

Data Quality's Impact on AI Performance

The relationship between data quality and model performance is not linear — it is exponential at the extremes. Based on production deployments:

| Data Quality Score | AI Model Impact | Production Readiness |

|---|---|---|

| Below 60% | Models produce unreliable outputs. False positive rates exceed 30%. | Not deployable |

| 60-70% | Models work for broad pattern detection but fail on edge cases | Research only |

| 70-80% | Models achieve acceptable accuracy for non-critical use cases | Limited production with human oversight |

| 80-90% | Models match published benchmark performance | Production-ready for most use cases |

| Above 90% | Models exceed benchmarks due to high-quality training signal | Full production deployment |

The practical implication: investing one month in data quality improvement will deliver more AI performance gain than three months of model tuning. If your data quality score is below 80%, stop building models and start fixing data. For the architecture that makes this data pipeline work, see our guide on Medallion Architecture for Healthcare Data.

Setting Up Continuous Data Quality Monitoring

# continuous_monitoring.py

import schedule

import time

from datetime import datetime, timedelta

class DataQualityMonitor:

"""Continuous monitoring of FHIR data quality."""

def __init__(self, fhir_client, scorers: dict, alert_callback=None):

self.fhir_client = fhir_client

self.scorers = scorers # {"Patient": PatientQualityScorer(), ...}

self.alert_callback = alert_callback

self.baseline_scores = {}

def run_quality_check(self, sample_size: int = 100):

"""Run quality checks on a sample of recent resources."""

dashboard = DataQualityDashboard()

since = (datetime.now() - timedelta(days=1)).strftime("%Y-%m-%d")

for resource_type, scorer in self.scorers.items():

resources = self.fhir_client.search(

resource_type,

params={"_lastUpdated": f"ge{since}", "_count": sample_size}

)

for resource in resources:

score = scorer.score(resource)

dashboard.add_score(score)

# Alert on critical issues

if score.overall < 0.5 and self.alert_callback:

self.alert_callback(

f"Critical data quality issue: {resource_type}/{resource.get('id')} "

f"scored {score.overall:.2f}. Issues: {score.issues}"

)

report = dashboard.generate_report()

# Check for quality degradation

current_overall = report["summary"].get("overall_quality", 0)

if self.baseline_scores:

baseline = self.baseline_scores.get("overall_quality", 0)

if current_overall < baseline - 0.05: # 5% degradation

if self.alert_callback:

self.alert_callback(

f"Data quality degradation detected: "

f"{baseline:.3f} -> {current_overall:.3f}"

)

self.baseline_scores = report["summary"]

return report

def start_monitoring(self, interval_hours: int = 24):

"""Start scheduled quality monitoring."""

schedule.every(interval_hours).hours.do(self.run_quality_check)

while True:

schedule.run_pending()

time.sleep(60)This monitor runs daily quality checks, detects degradation trends, and alerts on critical issues. In production, integrate this with your existing monitoring stack (Prometheus metrics, PagerDuty alerts, Slack notifications).

Frequently Asked Questions

How do I get started with data quality when I have millions of FHIR resources?

Start with sampling. Run the quality scorer against a random 1% sample of each resource type. The results will be statistically representative. Focus remediation on the resource types and issues with the highest impact. Patient and Condition resources are typically the highest priority because they affect the most downstream analytics.

What data quality score should I target before deploying AI?

For clinical decision support: target 85% or above. For population health analytics: 75% is acceptable for trend detection, 85% for actionable insights. For research: 90% or above. These thresholds assume your quality scoring covers completeness, accuracy, consistency, and timeliness. A high completeness score with low accuracy is worse than moderate scores across all dimensions.

How do I handle data quality across multiple EHR sources?

Run quality checks per-source first. Identify which source has the worst data for each dimension. Then run cross-source consistency checks: does the same patient have the same conditions in both systems? Cross-source inconsistencies often reveal source-specific data entry practices that need standardization. See our guide on Multi-EHR Integration for the architecture.

Should I fix data quality at the source or in the pipeline?

Both. Fix at the source for new data going forward (better registration forms, mandatory coding fields, real-time validation). Fix in the pipeline for historical data (batch normalization, terminology mapping, duplicate resolution). Source fixes are more durable; pipeline fixes are faster to implement.

How do I measure ROI of data quality improvement?

Track three metrics: (1) reduction in downstream errors (fewer false positive alerts, fewer billing rejections), (2) improvement in AI model accuracy (measured against a held-out test set before and after quality improvement), (3) reduction in manual review time (fewer data issues requiring human intervention). Most organizations see 3-5x ROI within the first year of systematic data quality improvement.

Conclusion

Data quality is the foundation that every healthcare AI project, analytics initiative, and interoperability effort builds on. The tools and techniques covered here — FHIR resource scoring, automated validation pipelines, continuous monitoring, and prioritized remediation — give you a practical framework to measure, track, and improve the quality of your clinical data.

Do not wait until your AI model underperforms in production to discover your data problems. Build quality checks into your data pipeline from day one. Measure baseline quality scores, set improvement targets, and automate the remediation workflow. The organizations that invest in data quality consistently outperform those that skip it and go straight to model building.

For the broader context of building reliable healthcare data infrastructure, see our guides on The Mental Model for Healthcare Integrations and Prerequisites Before Building an AI Agent for Healthcare.