Every HIPAA guide on the internet was written by a lawyer. You can tell because they spend 2,000 words explaining what "reasonable and appropriate" means and zero words explaining how to actually implement envelope encryption with KMS key rotation. This guide is different. It is written by engineers, for engineers, and it maps every HIPAA technical safeguard to a concrete architecture component you can deploy this quarter.

The timing matters. The proposed HIPAA Security Rule update -- the most significant revision in over a decade -- is edging closer to finalization as of March 2026. Over 100 hospital systems pushed back on the proposed changes, arguing the compliance costs were too high. At HIMSS26, OCR Director Melanie Fontes Rainer defended the rule directly: "The cost of doing nothing is very high." She is right. IBM's 2023 Cost of a Data Breach report put the average healthcare breach at $10.93 million, nearly double the next most expensive industry. And 41.2% of healthcare breaches trace to third-party vendors, which is exactly why the new rule mandates vendor security assessments.

Whether the final rule drops in Q2 or Q3 of 2026, the engineering requirements are clear. Mandatory MFA. Encryption at rest and in transit with no exceptions. Network segmentation. Annual technical risk assessments. 72-hour breach notification. These are not aspirational guidelines -- they are testable, auditable technical controls. This guide gives you the architecture to implement every one of them.

The 2026 HIPAA Security Rule: What Is Changing

The proposed rule, published in the Federal Register on January 6, 2025, rewrites major portions of 45 CFR Part 164. For engineers, the critical shift is the elimination of "addressable" versus "required" implementation specifications. Under the current rule, organizations could document why a particular control was not "reasonable and appropriate" and implement an alternative. Under the new rule, every specification is required. Period.

Here is what the proposed changes mean in engineering terms:

| Proposed Requirement | Current State | New Mandate | Engineering Translation |
|---|---|---|---|
| Multi-factor authentication | Not specified | Required for all ePHI access | MFA on every system touching PHI -- EHR, database, VPN, cloud console, API gateway |
| Encryption at rest | Addressable | Required, no exceptions | AES-256 for all storage: databases, file systems, backups, archives, removable media |
| Encryption in transit | Addressable | Required, no exceptions | TLS 1.2+ minimum on all connections carrying ePHI, including internal service-to-service |
| Network segmentation | Not specified | Required | Separate network zones for clinical, administrative, IoT/biomedical devices, and guest traffic |
| Risk assessment frequency | "Regular" (undefined) | Annual minimum | Automated vulnerability scanning + annual penetration testing + continuous risk scoring |
| Breach notification | 60 days | 72 hours | Automated detection pipeline, pre-staged notification templates, incident response runbooks |
| Vendor security assessment | BAA only | Written verification of vendor controls | Vendor security questionnaire automation, continuous third-party risk monitoring |
| Technology asset inventory | Not specified | Required, maintained current | CMDB with automated discovery, tagging ePHI-touching systems, drift detection |
| Vulnerability management | Implicit | Patch critical vulnerabilities within 15 days | Automated patch management, vulnerability prioritization, SLA-based remediation tracking |
| Backup and recovery | Required but vague | Specific RPO/RTO requirements, annual restore testing | Immutable backups, automated restore testing, documented recovery procedures per system |

The 72-hour breach notification window alone demands architectural changes. You cannot manually investigate a breach, determine its scope, and notify HHS in 72 hours without automated detection, pre-built forensic tooling, and well-rehearsed incident response runbooks. The current 60-day window allowed organizations to investigate at a measured pace. Seventy-two hours means your SIEM needs to surface the alert, your IR team needs to triage it, and your legal team needs to have notification templates ready, all within three calendar days, weekends and holidays included.

HIPAA Technical Safeguards to Architecture Components: The Complete Mapping

Section 164.312 of the HIPAA Security Rule defines five technical safeguard categories. Every compliance guide lists them. Almost none map them to deployable architecture components. This table does.

| HIPAA Safeguard | Regulation Reference | Specification | Architecture Component | Implementation |
|---|---|---|---|---|
| Access Control (164.312(a)) | 164.312(a)(2)(i) | Unique user identification | Identity Provider (IdP) | Okta, Azure AD, Keycloak with unique user IDs, no shared accounts |
| | 164.312(a)(2)(ii) | Emergency access procedure | Break-glass mechanism | Time-limited emergency role elevation with mandatory post-access audit |
| | 164.312(a)(2)(iii) | Automatic logoff | Session management | Token expiration (15-min idle for web, 1-hour absolute), refresh token rotation |
| | 164.312(a)(2)(iv) | Encryption and decryption | KMS + envelope encryption | AWS KMS / Azure Key Vault / HashiCorp Vault with per-tenant data encryption keys |
| Audit Controls (164.312(b)) | 164.312(b) | Record and examine activity | Centralized logging pipeline | Application logs to Fluentd/Vector to Elasticsearch/CloudWatch to SIEM with 6-year retention |
| | 164.312(b) | Log review and alerting | SIEM + alerting | Splunk/Elastic SIEM with automated anomaly detection and PagerDuty/Opsgenie integration |
| Integrity (164.312(c)) | 164.312(c)(2) | Authenticate ePHI | Data integrity verification | HMAC checksums on stored records, database triggers on PHI tables, file integrity monitoring (OSSEC/Wazuh) |
| Person/Entity Auth (164.312(d)) | 164.312(d) | Verify identity of person/entity | MFA + certificate-based auth | TOTP/FIDO2 for users, mTLS for service-to-service, OAuth 2.0 + SMART on FHIR for applications |
| Transmission Security (164.312(e)) | 164.312(e)(2)(i) | Integrity controls | TLS + message signing | TLS 1.3 on all external connections, TLS 1.2+ internal, HMAC on message payloads |
| | 164.312(e)(2)(ii) | Encryption in transit | Transport encryption | mTLS for internal services, TLS 1.3 for external, IPsec for site-to-site VPN |

This is not academic. Each row in this table is a deployable unit of work. Your sprint backlog should have a ticket for each specification, with the architecture component as the deliverable and the regulation reference as the acceptance criteria.

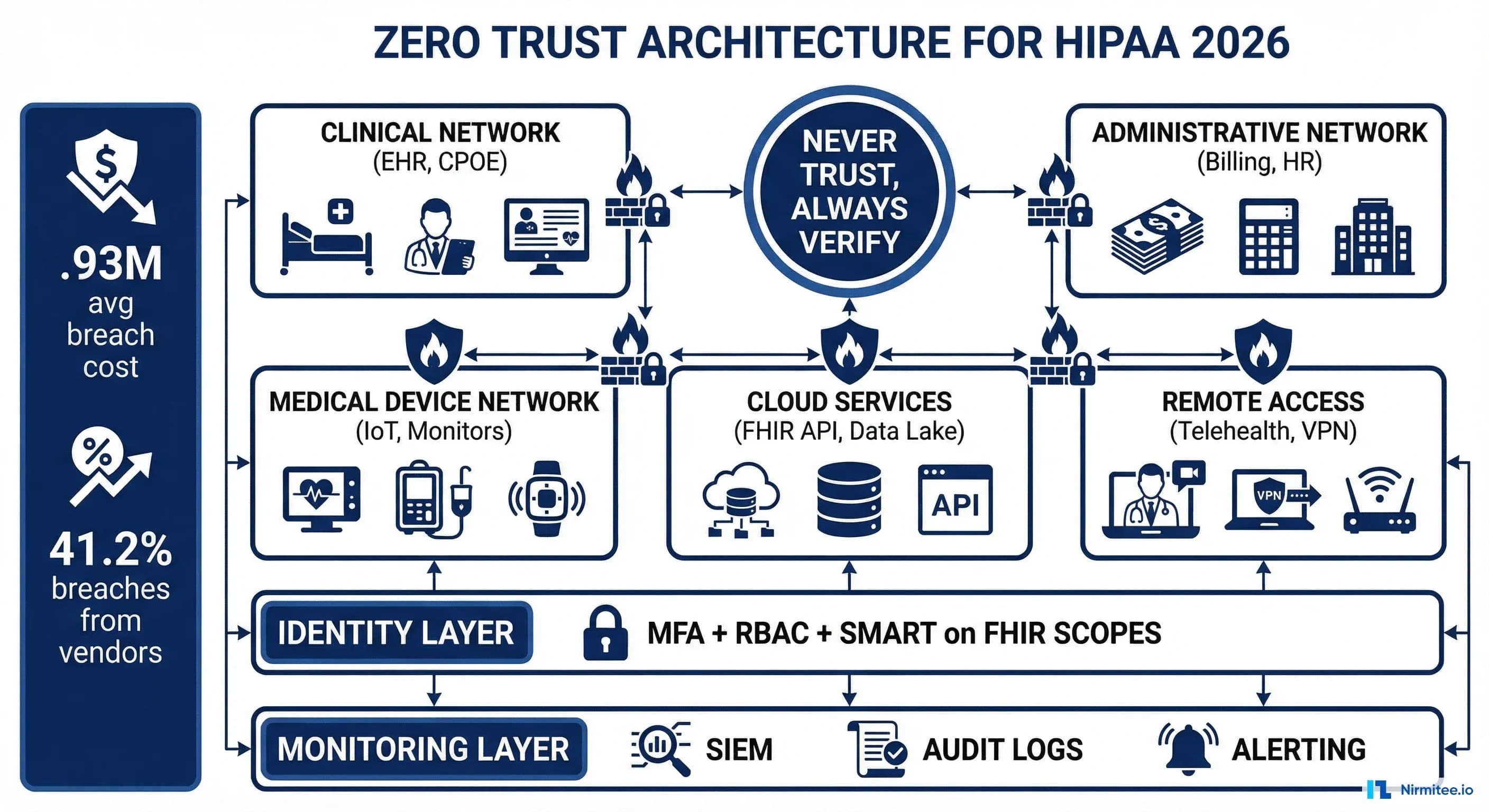

Zero Trust Architecture for HIPAA

The proposed rule does not use the phrase "zero trust," but the requirements it mandates -- MFA everywhere, network segmentation, continuous verification, vendor security assessment -- are zero trust by another name. If you are building a HIPAA-compliant system in 2026, zero trust is not optional. It is the architecture that satisfies the most requirements with the least complexity.

A zero trust architecture for healthcare rests on four pillars:

1. Identity-Based Access

Every request is authenticated and authorized based on the identity of the requester, not their network location. A clinician on the hospital Wi-Fi gets the same authentication challenge as one connecting from home. This eliminates the "trusted network" assumption that VPN-only architectures create -- and that attackers exploit after gaining an initial foothold.

In practice, this means:

- IdP as the single source of truth -- Okta, Azure AD, or Keycloak federating all applications

- Token-based access -- OAuth 2.0 access tokens with scoped claims for every API call

- No long-lived credentials -- session tokens expire in minutes, refresh tokens rotate on use

- Context-aware policies -- device posture, location, time of day, and risk score factor into access decisions
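Here is a minimal sketch of a per-request decision that ignores network location entirely. It assumes the OAuth 2.0 access token has already been signature-verified by a JWT library or by token introspection. It also assumes device posture and a 0-100 risk score are supplied by your MDM/EDR and risk engine. The `AccessContext` fields and the threshold of 70 are illustrative, not any vendor's schema.

```python
import time
from dataclasses import dataclass
from typing import Optional, Tuple


@dataclass
class AccessContext:
    """Inputs to one access decision (hypothetical field names)."""
    token_claims: dict      # OAuth 2.0 claims, already signature-verified
    device_compliant: bool  # result of an MDM/EDR posture check
    risk_score: int         # 0 (low) to 100 (high), from a risk engine


RISK_THRESHOLD = 70  # illustrative cut-off; tune to your risk engine


def decide(ctx: AccessContext, required_scope: str,
           now: Optional[float] = None) -> Tuple[bool, str]:
    """Return (allowed, reason). Network location is deliberately not an input."""
    now = time.time() if now is None else now
    if ctx.token_claims.get("exp", 0) <= now:
        return False, "token expired"
    if required_scope not in ctx.token_claims.get("scope", "").split():
        return False, f"missing scope {required_scope}"
    if not ctx.device_compliant:
        return False, "device fails posture check"
    if ctx.risk_score >= RISK_THRESHOLD:
        return False, "risk too high; require step-up authentication"
    return True, "allowed"
```

A high-risk denial would usually trigger step-up MFA rather than a hard failure. The sketch only returns the reason so that the caller can choose.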

2. Microsegmentation

Network segmentation in a zero trust model goes beyond VLANs. Microsegmentation enforces policy at the workload level -- each service can only communicate with the specific services it needs, on the specific ports it needs, using the specific protocols it needs. A compromised lab results service cannot pivot to the billing database because there is no network path between them.
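Microsegmentation's default-deny semantics can be sketched as an explicit allowlist of (source, destination, port, protocol) tuples. The service names below are hypothetical. In practice, enforcement lives in Kubernetes NetworkPolicy, service-mesh authorization policies, or cloud security groups rather than in application code. The sketch only shows why the lab results service has no path to the billing database.

```python
from typing import NamedTuple


class Flow(NamedTuple):
    src: str
    dst: str
    port: int
    protocol: str


# Explicit allowlist: any flow not listed here is denied (hypothetical services)
ALLOWED_FLOWS = frozenset({
    Flow("api-gateway", "lab-results", 8443, "tcp"),
    Flow("lab-results", "lab-db", 5432, "tcp"),
    Flow("billing-api", "billing-db", 5432, "tcp"),
})


def is_allowed(flow: Flow) -> bool:
    """Default deny: permit a flow only if it exactly matches an allowlist entry."""
    return flow in ALLOWED_FLOWS
```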

3. Device Attestation

The proposed rule's technology asset inventory requirement maps directly to device attestation. Every device accessing ePHI must be known (registered in your CMDB), healthy (meeting minimum security posture requirements), and verified (presenting a valid device certificate or passing an endpoint detection check).

4. Continuous Verification

Authentication is not a one-time event. Zero trust requires continuous evaluation of the access decision throughout the session. If a user's risk score changes (anomalous access pattern, impossible travel, compromised credential detected), the session is downgraded or terminated in real time.
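Impossible-travel detection, one of the risk signals mentioned above, reduces to a distance-over-time check between consecutive logins. This is a minimal sketch that assumes GeoIP has already resolved each login to coordinates. The 900 km/h ceiling (roughly airliner speed) and the 50 km tolerance for simultaneous logins are illustrative thresholds; tune them against your GeoIP accuracy.

```python
import math
from dataclasses import dataclass
from datetime import datetime

EARTH_RADIUS_KM = 6371.0
MAX_SPEED_KMH = 900.0  # assumption: roughly airliner cruising speed


@dataclass
class LoginEvent:
    user_id: str
    at: datetime
    lat: float
    lon: float


def haversine_km(lat1: float, lon1: float, lat2: float, lon2: float) -> float:
    """Great-circle distance between two points, in kilometres."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = math.radians(lat2 - lat1)
    dlmb = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlmb / 2) ** 2
    return 2 * EARTH_RADIUS_KM * math.asin(math.sqrt(a))


def is_impossible_travel(prev: LoginEvent, curr: LoginEvent) -> bool:
    distance = haversine_km(prev.lat, prev.lon, curr.lat, curr.lon)
    hours = (curr.at - prev.at).total_seconds() / 3600
    if hours <= 0:
        # Simultaneous logins: flag only if far apart (50 km allows for GeoIP error)
        return distance > 50
    return distance / hours > MAX_SPEED_KMH
```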

Encryption Architecture

The elimination of "addressable" encryption means every byte of ePHI must be encrypted at rest and in transit. No exceptions for legacy systems. No exceptions for internal networks. Here is the encryption architecture that satisfies the requirement comprehensively.

Encryption at Rest: Envelope Encryption with KMS

Envelope encryption is the standard pattern for data-at-rest encryption at scale. The concept is straightforward: you encrypt your data with a Data Encryption Key (DEK), then encrypt the DEK with a Key Encryption Key (KEK) managed by your KMS. This gives you the performance of symmetric encryption (AES-256-GCM) with the key management benefits of a centralized KMS.

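```python
# Envelope encryption flow (sketch: boto3 for AWS KMS, `cryptography` for AES-GCM)
# tenant_id, record_id, patient_record (bytes), and store() come from your application.
import os

import boto3
from cryptography.hazmat.primitives.ciphers.aead import AESGCM

kms = boto3.client("kms")

# 1. Generate a data encryption key (DEK) per record/tenant
dek = kms.generate_data_key(KeyId="alias/phi-master-key", KeySpec="AES_256")
plaintext_dek = bytearray(dek["Plaintext"])  # Used to encrypt data
encrypted_dek = dek["CiphertextBlob"]        # Stored alongside encrypted data

# 2. Encrypt the PHI payload with the DEK (AES-256-GCM)
nonce = os.urandom(12)                     # 96-bit nonce; never reuse one with the same DEK
aad = f"{tenant_id}:{record_id}".encode()  # Additional authenticated data
ciphertext = AESGCM(bytes(plaintext_dek)).encrypt(nonce, patient_record, aad)

# 3. Store encrypted data, nonce, and encrypted DEK together
store(record_id, encrypted_data=ciphertext, nonce=nonce, encrypted_key=encrypted_dek)

# 4. Overwrite the plaintext DEK as soon as possible. In Python this is best
#    effort: immutable copies (such as dek["Plaintext"]) may linger in memory.
plaintext_dek[:] = bytes(len(plaintext_dek))

# Decryption: retrieve the encrypted DEK, call kms.decrypt(CiphertextBlob=...)
# to recover it, then decrypt the data. KMS never exposes the master key.
```

This architecture means: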

- Key compromise is limited -- compromising a single DEK exposes one record or one tenant, not the entire database

- Key rotation is non-disruptive -- rotating the KEK only requires re-encrypting DEKs, not re-encrypting all data

- Audit is centralized -- every KMS call (GenerateDataKey, Decrypt) is logged and auditable

- Multi-cloud portable -- the same pattern applies across AWS KMS, Azure Key Vault, GCP Cloud KMS, and HashiCorp Vault, even though their APIs differ

Key rotation policy for HIPAA: rotate KEKs annually at minimum (the proposed rule implies annual review), rotate DEKs when the data is modified, and implement automatic rotation with your KMS provider.
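You can check locally that KEK rotation touches only the DEKs. This sketch uses the `cryptography` package's `AESGCM` together with an in-memory `LocalKeyring` class, which is hypothetical and stands in for a KMS, so it runs without cloud credentials. Real deployments would call KMS (for example `Decrypt`/`Encrypt`, or AWS KMS `ReEncrypt`) and would never hold KEKs in application memory.

```python
import os
from dataclasses import dataclass

from cryptography.hazmat.primitives.ciphers.aead import AESGCM


@dataclass
class EncryptedRecord:
    ciphertext: bytes   # PHI encrypted under the DEK
    data_nonce: bytes
    wrapped_dek: bytes  # DEK encrypted under a KEK
    dek_nonce: bytes
    kek_id: str


class LocalKeyring:
    """Hypothetical stand-in for a KMS: holds KEKs by id, for illustration only."""

    def __init__(self) -> None:
        self.keks = {}
        self.current_id = None

    def rotate(self) -> str:
        kek_id = f"kek-{len(self.keks) + 1}"
        self.keks[kek_id] = AESGCM.generate_key(bit_length=256)
        self.current_id = kek_id
        return kek_id

    def wrap(self, dek: bytes):
        nonce = os.urandom(12)
        kek_id = self.current_id
        return kek_id, nonce, AESGCM(self.keks[kek_id]).encrypt(nonce, dek, kek_id.encode())

    def unwrap(self, kek_id: str, nonce: bytes, wrapped: bytes) -> bytes:
        return AESGCM(self.keks[kek_id]).decrypt(nonce, wrapped, kek_id.encode())


def encrypt_record(keyring: LocalKeyring, plaintext: bytes, aad: bytes) -> EncryptedRecord:
    dek = AESGCM.generate_key(bit_length=256)  # fresh DEK per record
    data_nonce = os.urandom(12)
    ciphertext = AESGCM(dek).encrypt(data_nonce, plaintext, aad)
    kek_id, dek_nonce, wrapped = keyring.wrap(dek)
    return EncryptedRecord(ciphertext, data_nonce, wrapped, dek_nonce, kek_id)


def decrypt_record(keyring: LocalKeyring, rec: EncryptedRecord, aad: bytes) -> bytes:
    dek = keyring.unwrap(rec.kek_id, rec.dek_nonce, rec.wrapped_dek)
    return AESGCM(dek).decrypt(rec.data_nonce, rec.ciphertext, aad)


def rewrap_after_rotation(keyring: LocalKeyring, rec: EncryptedRecord) -> EncryptedRecord:
    """Re-encrypt only the DEK under the current KEK; the data ciphertext is untouched."""
    dek = keyring.unwrap(rec.kek_id, rec.dek_nonce, rec.wrapped_dek)
    kek_id, dek_nonce, wrapped = keyring.wrap(dek)
    return EncryptedRecord(rec.ciphertext, rec.data_nonce, wrapped, dek_nonce, kek_id)
```

Because the AAD binds each ciphertext to its tenant and record, decrypting with a different `tenant:record` string fails with `InvalidTag` instead of returning data.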

Encryption in Transit: TLS 1.3 and mTLS

External traffic gets TLS 1.3. Internal service-to-service traffic gets mutual TLS (mTLS), where both the client and server present certificates. This satisfies both the transmission security safeguard and the person/entity authentication safeguard for machine-to-machine communication.
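Outside a service mesh, the same transport rules can be applied in application code. This minimal sketch uses only Python's standard `ssl` module. The client context verifies the server and, by default, refuses anything below TLS 1.3. Supplying a client certificate turns the connection into mutual TLS. The function names and file paths are hypothetical.

```python
import ssl
from typing import Optional


def make_phi_client_context(
    cafile: Optional[str] = None,
    certfile: Optional[str] = None,
    keyfile: Optional[str] = None,
    minimum: ssl.TLSVersion = ssl.TLSVersion.TLSv1_3,
) -> ssl.SSLContext:
    """Client context that verifies the server and refuses anything below `minimum`.

    Passing certfile/keyfile makes the connection mutual TLS: the client
    presents its own certificate during the handshake.
    """
    # SERVER_AUTH enables hostname checking and CERT_REQUIRED verification
    ctx = ssl.create_default_context(ssl.Purpose.SERVER_AUTH, cafile=cafile)
    ctx.minimum_version = minimum
    if certfile:
        ctx.load_cert_chain(certfile=certfile, keyfile=keyfile)
    return ctx


def make_mtls_server_context(certfile: str, keyfile: str, client_ca: str) -> ssl.SSLContext:
    """Server context that rejects clients lacking a certificate signed by client_ca."""
    ctx = ssl.create_default_context(ssl.Purpose.CLIENT_AUTH)
    ctx.minimum_version = ssl.TLSVersion.TLSv1_2  # TLS 1.2 floor for internal traffic
    ctx.verify_mode = ssl.CERT_REQUIRED
    ctx.load_cert_chain(certfile=certfile, keyfile=keyfile)
    ctx.load_verify_locations(cafile=client_ca)
    return ctx
```

To use it, a client would call `ctx.wrap_socket(sock, server_hostname=host)` on a socket from `socket.create_connection((host, 443))`. The handshake fails outright if the server cannot negotiate TLS 1.3.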

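```yaml
# Istio service mesh mTLS configuration (strict mode)
# Enforces mTLS for all service-to-service traffic in the healthcare-platform
# namespace (place it in the root namespace, usually istio-system, to apply mesh-wide)
apiVersion: security.istio.io/v1
kind: PeerAuthentication
metadata:
  name: default
  namespace: healthcare-platform
spec:
  mtls:
    mode: STRICT
---
# Destination rule: originate mutual TLS to an external FHIR server
# (assumes a ServiceEntry for fhir.partnerhospital.org exists and the
# certificate files are mounted into the sidecar)
apiVersion: networking.istio.io/v1
kind: DestinationRule
metadata:
  name: external-fhir-server
  namespace: healthcare-platform
spec:
  host: fhir.partnerhospital.org
  trafficPolicy:
    tls:
      mode: MUTUAL
      clientCertificate: /etc/certs/client.pem
      privateKey: /etc/certs/client-key.pem
      caCertificates: /etc/certs/partner-ca.pem
      # Client-side TLS settings have no minProtocolVersion field (it exists
      # only on server-side settings such as a Gateway), so the TLS 1.3 floor
      # must be enforced on the partner's server and verified by scanning it.
```

Encryption in Use: Confidential Computing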

The next frontier -- and one the proposed rule does not yet require but forward-looking organizations are adopting -- is confidential computing. Technologies like AWS Nitro Enclaves, Azure Confidential Computing, and Intel SGX/TDX allow you to process ePHI in hardware-isolated enclaves where even the cloud provider cannot access the data in memory. This is particularly relevant for AI workloads processing PHI, where model inference happens over decrypted patient data.

Access Control and Identity Management

HIPAA requires the "minimum necessary" standard -- users should only access the ePHI they need for their specific job function. Translating this into engineering means building a layered authorization system that combines role-based access control (RBAC), attribute-based access control (ABAC), and application-level scoping.

RBAC + ABAC: Defense in Layers

Pure RBAC is insufficient for healthcare. A nurse in the cardiac ICU and a nurse in the psychiatric unit both have the role "nurse," but they should not have access to the same patient records. ABAC adds contextual attributes -- department, care team membership, patient assignment, time of day -- to the authorization decision.

| Authorization Layer | Mechanism | Example Policy | Enforcement Point |
|---|---|---|---|
| Coarse-grained | RBAC | Role "physician" can access /fhir/Patient, /fhir/Observation | API gateway / reverse proxy |
| Fine-grained | ABAC | Physician can only access patients on their active care team | Application middleware / policy engine |
| Record-level | SMART on FHIR scopes | patient/Observation.read limited to launch context patient | FHIR server authorization |
| Field-level | Data masking | Billing staff cannot see clinical notes, only diagnosis codes | Database view / API response filter |
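The coarse-grained, fine-grained, and field-level layers in the table can be sketched as successive checks. Record-level SMART scopes are enforced by the FHIR server and are omitted here. The role names, resource sets, and masked fields are hypothetical. Billing is exempt from the care-team check only to keep the sketch short; a real system would apply a billing-specific attribute such as account assignment. The point is that each layer can only narrow what the previous one allowed.

```python
from dataclasses import dataclass, field
from typing import Dict, Set

# Coarse-grained (RBAC): FHIR resource types each role may call (hypothetical)
ROLE_RESOURCES: Dict[str, Set[str]] = {
    "physician": {"Patient", "Observation", "Condition"},
    "nurse": {"Patient", "Observation"},
    "billing": {"Patient", "Claim"},
}
# Fine-grained (ABAC): roles limited to patients on their care team
CARE_TEAM_ROLES = {"physician", "nurse"}
# Field-level: fields each role must never see (hypothetical)
MASKED_FIELDS: Dict[str, Set[str]] = {"billing": {"clinical_notes"}}


@dataclass
class User:
    user_id: str
    role: str
    care_team: Set[str] = field(default_factory=set)  # patient IDs on the active care team


def authorize(user: User, resource_type: str, patient_id: str) -> bool:
    # Coarse-grained RBAC: the check an API gateway would apply
    if resource_type not in ROLE_RESOURCES.get(user.role, set()):
        return False
    # Fine-grained ABAC: clinical roles need a care-team relationship
    if user.role in CARE_TEAM_ROLES and patient_id not in user.care_team:
        return False
    return True


def mask_fields(user: User, record: dict) -> dict:
    # Field-level: strip fields the role may not see before the response leaves the API
    hidden = MASKED_FIELDS.get(user.role, set())
    return {key: value for key, value in record.items() if key not in hidden}
```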

Policy-as-Code with Open Policy Agent

Hardcoding authorization logic in application code is an audit nightmare. Policy-as-code with Open Policy Agent (OPA) externalizes your access control decisions into versioned, testable, auditable Rego policies. Here is an example HIPAA access control policy:

```rego
# hipaa_access.rego: HIPAA minimum necessary access control
package hipaa.access

import rego.v1

default allow := false

# Rule 1: Users can access patients on their active care team
allow if {
	input.action == "read"
	input.resource.type == "Patient"
	input.resource.patient_id in input.user.care_team
}

# Rule 2: Break-glass override with mandatory audit trail
allow if {
	input.action == "read"
	input.break_glass == true
	valid_break_glass_justification(input.justification)
	# This access will trigger an immediate audit alert
}

# Rule 3: Substance use disorder records require explicit consent
# (42 CFR Part 2, aligned with HIPAA as of the Feb 16, 2026 compliance date)
allow if {
	input.resource.type == "Condition"
	input.resource.category == "substance-use-disorder"
	has_valid_part2_consent(input.resource.patient_id, input.user.id)
}

# Multi-value rules must use `contains` under rego.v1
deny_reason contains "No care team relationship" if {
	input.action == "read"
	input.resource.type == "Patient"
	not input.resource.patient_id in input.user.care_team
	not input.break_glass
}

# Validate break-glass justification is one of the approved categories
valid_break_glass_justification(j) if {
	j in {"emergency_treatment", "danger_to_self_others", "public_health"}
}

# Check 42 CFR Part 2 consent registry
has_valid_part2_consent(patient_id, user_id) if {
	consent := data.consent_registry[patient_id]
	user_id in consent.authorized_users
	time.now_ns() < consent.expiration_ns
}
```

This policy is testable (OPA has a built-in test framework), versionable (store it in Git), and auditable (with decision logging enabled, every policy evaluation is logged with the input context and the decision). When an OCR auditor asks "how do you enforce minimum necessary access?", you point them to the Git repository, the policy test results, and the evaluation logs.
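Applications typically consult a policy like this through OPA's REST Data API. A POST to `/v1/data/hipaa/access/allow`, with the request context under an `input` key, returns `{"result": true}` or `{"result": false}`. This is a minimal Python client sketch. It assumes an OPA sidecar on its default port 8181 with `hipaa_access.rego` loaded. The `opener` parameter exists only so the function can be tested without a server.

```python
import json
import urllib.request
from typing import Any, Callable

# Assumption: OPA on its default port with the hipaa.access package loaded
OPA_ALLOW_URL = "http://localhost:8181/v1/data/hipaa/access/allow"


def build_opa_request(input_doc: dict, url: str = OPA_ALLOW_URL) -> urllib.request.Request:
    """POST {"input": ...} to OPA's Data API, which evaluates the rule named in `url`."""
    body = json.dumps({"input": input_doc}).encode("utf-8")
    return urllib.request.Request(
        url, data=body, headers={"Content-Type": "application/json"}, method="POST"
    )


def is_allowed(
    input_doc: dict,
    opener: Callable[..., Any] = urllib.request.urlopen,
    url: str = OPA_ALLOW_URL,
) -> bool:
    """Fail closed: anything other than an explicit `true` result is a deny."""
    request = build_opa_request(input_doc, url)
    with opener(request, timeout=2) as response:
        result = json.load(response).get("result")
    return result is True
```

Network errors raise an exception. Callers should treat that as a deny, just as the function does for any result other than `true`.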

Break-Glass Procedures

HIPAA's emergency access procedure requirement (164.312(a)(2)(ii)) is the one control that every compliance guide mentions and almost no one implements correctly. Break-glass must simultaneously allow emergency access and create an audit trail that ensures the access was justified. The architecture:

- User requests break-glass through a dedicated UI flow -- not by calling IT to reset permissions

- User selects justification category (emergency treatment, danger to self/others, public health emergency)

- System grants temporary elevated access (time-limited: 4-8 hours)

- System immediately fires an audit event to the SIEM with user ID, patient ID, justification, timestamp, and access scope

- Privacy officer is notified within 24 hours to review and validate the justification

- Access automatically revokes when the time window expires
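The flow above can be sketched as a single function that validates the justification, issues a time-boxed grant, and emits the audit event. This minimal sketch makes three assumptions: `emit_audit` stands in for your SIEM client, the 4-hour default sits at the low end of the 4-8 hour window, and all field names are hypothetical.

```python
import uuid
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import Callable, Optional

APPROVED_JUSTIFICATIONS = {"emergency_treatment", "danger_to_self_others", "public_health"}
PRIVACY_REVIEW_WINDOW = timedelta(hours=24)


@dataclass(frozen=True)
class BreakGlassGrant:
    grant_id: str
    user_id: str
    patient_id: str
    justification: str
    granted_at: datetime
    expires_at: datetime

    def is_active(self, now: datetime) -> bool:
        # Access revokes itself: no cleanup job is needed for this check to fail
        return self.granted_at <= now < self.expires_at


def request_break_glass(
    user_id: str,
    patient_id: str,
    justification: str,
    emit_audit: Callable[[dict], None],
    hours: int = 4,
    now: Optional[datetime] = None,
) -> BreakGlassGrant:
    if justification not in APPROVED_JUSTIFICATIONS:
        raise ValueError(f"unapproved justification: {justification}")
    if not 4 <= hours <= 8:
        raise ValueError("break-glass window must be 4-8 hours")
    now = now or datetime.now(timezone.utc)
    grant = BreakGlassGrant(str(uuid.uuid4()), user_id, patient_id, justification,
                            now, now + timedelta(hours=hours))
    # Emit the audit event before returning the grant: if the SIEM write fails,
    # the exception propagates and no elevated access is handed out.
    emit_audit({
        "event": "break_glass_activated",
        "grant_id": grant.grant_id,
        "user_id": user_id,
        "patient_id": patient_id,
        "justification": justification,
        "timestamp": now.isoformat(),
        "access_scope": "read",
        "expires_at": grant.expires_at.isoformat(),
        "privacy_review_due": (now + PRIVACY_REVIEW_WINDOW).isoformat(),
    })
    return grant
```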

Audit Logging Pipeline

HIPAA audit controls (164.312(b)) require that you record and examine activity in information systems containing ePHI. The proposed rule goes further, requiring specific log content (who accessed what, when, from where) and specific retention periods. Building a compliant audit logging pipeline is not about installing Splunk -- it is about designing a data flow that captures the right events, enriches them with the right context, stores them immutably, and surfaces anomalies before they become breaches.

The Pipeline Architecture

```text
┌──────────────────┐     ┌──────────────────┐     ┌──────────────────┐
│ Application      │     │ Log Shipper      │     │ Log Storage      │
│                  │     │                  │     │                  │
│ EHR Server       │────▶│ Fluentd /        │────▶│ Elasticsearch    │
│ FHIR API         │     │ Vector           │     │ (hot: 90 days)   │
│ Auth Service     │     │                  │     │                  │
│ Database         │     │ Enrichment:      │     │ S3 / Glacier     │
│ File Storage     │     │ - GeoIP          │     │ (cold: 6 years)  │
│                  │     │ - User context   │     │                  │
│                  │     │ - PHI tagging    │     │ WORM storage     │
└──────────────────┘     └──────────────────┘     └────────┬─────────┘
                                                           │
                                                           ▼
                                                  ┌──────────────────┐
                                                  │ SIEM / Analytics │
                                                  │                  │
                                                  │ Anomaly detect   │
                                                  │ Correlation      │
                                                  │ Dashboards       │
                                                  │                  │
                                                  │ ──▶ PagerDuty    │
                                                  │ ──▶ Slack        │
                                                  │ ──▶ Incident     │
                                                  │     Response     │
                                                  └──────────────────┘
```

HIPAA-Specific Log Events

Not all log events are equal under HIPAA. These are the events you must capture:

| Event Category | Specific Events | Required Fields | Retention |
|---|---|---|---|
| Authentication | Login success/failure, MFA challenge, token refresh, session timeout | User ID, IP, device fingerprint, timestamp, MFA method, result | 6 years |
| Authorization | Access granted/denied, policy evaluation, scope check, break-glass activation | User ID, resource type, resource ID, action, policy decision, justification | 6 years |
| Data access | PHI read, search, export, print, download, copy | User ID, patient ID, resource type, fields accessed, access purpose | 6 years |
| Data modification | PHI create, update, delete, merge, unmerge | User ID, patient ID, resource type, before/after values (redacted), change reason | 6 years |
| Disclosure | PHI shared externally, patient portal access, FHIR API response to third-party apps | Disclosing user, recipient, patient ID, purpose, legal basis, data scope | 6 years |
| System | Config changes, user provisioning, role assignment, encryption key operations | Admin user ID, change type, before/after, approval reference | 6 years |

The six-year retention comes from HIPAA's requirement (164.316(b)(2)(i)) to retain documentation of security policies and procedures for six years from the date of creation or the date when it was last in effect, whichever is later. HIPAA does not state an audit-log retention period explicitly, so applying the same six years to audit logs is the safest interpretation.
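Because the required fields differ by category, a pipeline can flag incomplete events before they reach storage. This minimal sketch assumes events carry a `hipaa_event_category` field, like the one set by the log enrichment config in the next subsection. The snake_case field names are hypothetical transcriptions of the table. The authorization row's `justification` really applies only to break-glass events, so you may want to relax it for routine decisions.

```python
from typing import List

# Required fields per event category, transcribed from the table above
# (hypothetical snake_case names)
REQUIRED_FIELDS = {
    "authentication": {"user_id", "ip", "device_fingerprint", "timestamp", "mfa_method", "result"},
    "authorization": {"user_id", "resource_type", "resource_id", "action",
                      "policy_decision", "justification"},
    "data_access": {"user_id", "patient_id", "resource_type", "fields_accessed", "access_purpose"},
    "data_modification": {"user_id", "patient_id", "resource_type",
                          "before_after_redacted", "change_reason"},
    "disclosure": {"disclosing_user", "recipient", "patient_id", "purpose",
                   "legal_basis", "data_scope"},
    "system": {"admin_user_id", "change_type", "before_after", "approval_reference"},
}


def missing_fields(event: dict) -> List[str]:
    """Return the required fields this event lacks; an empty list means complete."""
    category = event.get("hipaa_event_category")
    if category not in REQUIRED_FIELDS:
        raise ValueError(f"unknown HIPAA event category: {category!r}")
    present = {key for key, value in event.items() if value is not None}
    return sorted(REQUIRED_FIELDS[category] - present)
```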

Vector Configuration for Healthcare Log Enrichment

```toml
# vector.toml: Healthcare-specific log enrichment pipeline

# GeoIP enrichment table used by the remap transform below
# (placeholder path; point it at your MaxMind database)
[enrichment_tables.geoip]
type = "geoip"
path = "/etc/vector/GeoLite2-City.mmdb"

[sources.app_logs]
type = "kafka"
bootstrap_servers = "kafka:9092"
group_id = "vector-hipaa-audit"
topics = ["ehr.audit", "fhir.access", "auth.events"]

[transforms.enrich_phi_context]
type = "remap"
inputs = ["app_logs"]
source = '''
# Tag events that involve PHI access
if includes(["Patient", "Observation", "Condition", "MedicationRequest",
             "DiagnosticReport", "DocumentReference"], .resource_type) {
  .phi_access = true
  .hipaa_event_category = "data_access"
}

# Enrich with GeoIP for impossible-travel detection
.geo = get_enrichment_table_record("geoip", {"ip": .client_ip}) ?? {}

# Classify sensitivity level
if .resource_type == "Condition" && .resource_category == "substance-use-disorder" {
  .sensitivity = "42cfr_part2"
  .hipaa_event_category = "restricted_data_access"
} else if .phi_access == true {
  .sensitivity = "phi"
} else {
  .sensitivity = "standard"
}

# Ensure no PHI leaks into log fields
del(.request_body)
del(.response_body)
# Redact SSN-shaped values that were mistakenly used as patient IDs
if is_string(.patient_id) {
  .patient_id = redact(string!(.patient_id), filters: [r'\d{3}-\d{2}-\d{4}'])
}
'''

[sinks.elasticsearch_hot]
type = "elasticsearch"
inputs = ["enrich_phi_context"]
endpoints = ["https://es-hipaa-cluster:9200"]
bulk.index = "hipaa-audit-%Y-%m-%d"
bulk.action = "create"  # Never overwrite an existing document

[sinks.s3_cold_archive]
type = "aws_s3"
inputs = ["enrich_phi_context"]
region = "us-east-1"  # placeholder; use your archive bucket's region
bucket = "hipaa-audit-archive"
key_prefix = "audit/year=%Y/month=%m/day=%d/"
encoding.codec = "json"
compression = "gzip"
```

Note the bulk.action = "create" setting. With the create action, Elasticsearch rejects any write whose document ID already exists, so this pipeline can append audit records but never overwrite them. Create alone does not stop another client from updating or deleting documents. To make the hot tier effectively append-only, pair it with an Elasticsearch role that grants only the create_doc privilege on the audit indices. For long-term storage, the S3 sink combined with S3 Object Lock in compliance mode provides true WORM (Write Once Read Many) storage for the six-year retention period.
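Object Lock must be configured on the archive bucket itself; the Vector sink does not do this. This minimal sketch uses boto3's `put_object_lock_configuration` and assumes the bucket already has versioning enabled, which Object Lock requires. In `COMPLIANCE` mode, no user, including the account root user, can delete a locked object version or shorten its retention until the period ends.

```python
from typing import Any


def object_lock_configuration(years: int = 6, mode: str = "COMPLIANCE") -> dict:
    """Default retention applied to every new object version in the bucket."""
    return {
        "ObjectLockEnabled": "Enabled",
        "Rule": {"DefaultRetention": {"Mode": mode, "Years": years}},
    }


def apply_object_lock(s3_client: Any, bucket: str, years: int = 6) -> None:
    # s3_client is a boto3 S3 client, e.g. boto3.client("s3")
    s3_client.put_object_lock_configuration(
        Bucket=bucket,
        ObjectLockConfiguration=object_lock_configuration(years),
    )
```

The softer alternative is `GOVERNANCE` mode, which users with the `s3:BypassGovernanceRetention` permission can override. That is convenient for testing but weaker as audit evidence.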

Network Segmentation for Healthcare

The proposed rule explicitly requires network segmentation -- a first for HIPAA. For healthcare organizations that have been running flat networks (and there are more than you would think), this is the most expensive infrastructure change in the proposed rule. For organizations that already segment, the rule requires documentation and regular validation of segmentation effectiveness.

Healthcare Network Zones

Healthcare networks have unique segmentation requirements because of the diversity of devices on the network. A cardiac ICU has infusion pumps, patient monitors, and ventilators that were designed in 2012 and cannot be patched -- they share network infrastructure with clinician workstations running modern browsers and tablets accessing cloud-based EHRs. Segmentation is the only defense for unpatchable devices.

| Zone | Contents | Security Posture | Allowed Flows |
|---|---|---|---|
| Clinical | EHR servers, FHIR API, clinical databases, PACS | Highest: MFA, mTLS, encrypted storage, full audit logging | Inbound: authenticated API calls from DMZ. Outbound: audit logs to logging zone, backups to storage zone |
| Administrative | Billing systems, scheduling, HR, email, productivity apps | High: MFA, TLS, encrypted storage | Inbound: user sessions from endpoints. Outbound: claims to payers (via DMZ), limited clinical queries |
| IoT/Biomedical | Infusion pumps, patient monitors, ventilators, imaging devices | Isolated: no internet, no user access, device allowlisting | Inbound: firmware updates from management server only. Outbound: telemetry to clinical zone via protocol gateway |
| DMZ | API gateway, load balancers, patient portal, FHIR facade | Hardened: WAF, rate limiting, DDoS protection | Inbound: internet traffic. Outbound: proxied requests to clinical/admin zones |
| Management | SIEM, log servers, vulnerability scanners, CMDB, patch management | Restricted: admin-only access, MFA + jump box | Inbound: logs from all zones. Outbound: scan traffic to all zones, alerts to external services |
| Guest | Patient and visitor Wi-Fi | Untrusted: internet-only, no internal access | Outbound: internet only. No inbound. Complete isolation from all other zones |
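The rule's requirement to validate segmentation can be partly automated by checking exported firewall rules against the zone matrix in the table. This minimal sketch makes two assumptions: rules are exported as dicts with hypothetical `id`, `action`, `src_zone`, and `dst_zone` keys, and `internet` stands for external addresses. It checks zone pairs only, not ports, hosts, or the protocol gateway between IoT and clinical. Guest is left out of the management pairs to honor its complete isolation.

```python
from typing import Iterable, List, Tuple

# Zone pairs permitted by the table; any other "allow" rule is a violation.
# This encoding is an illustrative simplification of the table's flows.
MONITORED_ZONES = ("clinical", "administrative", "iot", "dmz")
ALLOWED_ZONE_FLOWS = (
    {
        ("internet", "dmz"),
        ("dmz", "clinical"),
        ("dmz", "administrative"),
        ("administrative", "dmz"),       # claims to payers via the DMZ
        ("administrative", "clinical"),  # limited clinical queries
        ("iot", "clinical"),             # telemetry via protocol gateway
        ("management", "internet"),      # alerts to external services
        ("guest", "internet"),
    }
    | {(zone, "management") for zone in MONITORED_ZONES}  # logs inbound
    | {("management", zone) for zone in MONITORED_ZONES}  # scans and firmware outbound
)


def segmentation_violations(rules: Iterable[dict]) -> List[Tuple[str, str, str]]:
    """Return (rule_id, src_zone, dst_zone) for each allow rule outside the policy."""
    violations = []
    for rule in rules:
        if rule.get("action") != "allow":
            continue
        pair = (rule["src_zone"], rule["dst_zone"])
        if pair not in ALLOWED_ZONE_FLOWS:
            violations.append((rule["id"], *pair))
    return violations
```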

Remote Access: ZTNA Over VPN

Traditional VPNs grant full network-level access once authenticated -- a single compromised VPN credential gives an attacker a foothold in your clinical network. Zero Trust Network Access (ZTNA) replaces this with application-level access: remote clinicians authenticate to a ZTNA broker, which proxies their connection to specific applications (the EHR, the PACS viewer) without granting any network-level access. Products like Zscaler Private Access, Cloudflare Access, or Tailscale implement this pattern.

For healthcare SRE teams managing five-nines patient safety systems, ZTNA also simplifies the operational model. You do not need to manage VPN concentrators, split tunneling policies, or VPN client compatibility across every device type clinicians use.

The 42 CFR Part 2 Integration

February 16, 2026 was the compliance date for the 2024 final rule that aligns 42 CFR Part 2, the federal regulation governing confidentiality of substance use disorder (SUD) treatment records, with HIPAA. This is not a minor administrative change. It fundamentally alters how you handle a specific category of PHI and has immediate engineering implications.

What Changed

Previously, Part 2 records had stricter consent requirements than HIPAA: a patient had to provide specific written consent before their SUD treatment records could be shared for any purpose, including treatment. Under the new integrated framework:

- Single consent can cover all future uses and disclosures for treatment, payment, and healthcare operations (TPO), aligning Part 2 with how HIPAA handles TPO

- Breach notification for Part 2 records now follows HIPAA's breach notification rule (previously Part 2 had no breach notification requirement)

- Legal-proceedings protections are strengthened -- absent patient consent or a court order, Part 2 records cannot be used or disclosed in civil, criminal, administrative, or legislative proceedings against the patient

- Redisclosure notice is still required on all Part 2 records when shared

Engineering Impact

The integration creates a two-tier PHI system within your architecture. SUD records carry additional metadata (consent status, redisclosure notice) and additional access controls (even within HIPAA's framework, they have heightened protections). Your system must:

- Tag SUD records at the data layer -- FHIR Condition resources with ICD-10 codes in the F10-F19 range (mental and behavioural disorders due to psychoactive substance use), excluding tobacco (F17) and caffeine, which Part 2's definition of substance use disorder leaves out, plus related Encounter, Procedure, and MedicationRequest resources

- Enforce consent checks on SUD record access -- the OPA policy example above demonstrates this pattern

- Attach redisclosure notices when SUD records are shared via API, export, or disclosure

- Track consent provenance -- who consented, when, what scope, and expiration

- Support data segmentation in your FHIR server -- the DS4P (Data Segmentation for Privacy) FHIR implementation guide provides the standard for tagging sensitive resources with security labels
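The tagging step can be sketched as a function that inspects a FHIR Condition's ICD-10-CM codings and adds security labels to `meta.security`. The specific label codes below are assumptions to confirm against the DS4P implementation guide: confidentiality `R` (restricted) and the v3 ActCode `ETH` (substance abuse information sensitivity). Code-prefix matching is also only a heuristic, because Part 2 applies to records from Part 2 programs rather than to every SUD diagnosis.

```python
from typing import Tuple

ICD10_CM = "http://hl7.org/fhir/sid/icd-10-cm"
# F10-F19 minus F17 (nicotine). Part 2 also excludes caffeine, which falls under
# F15 and needs code-level handling that this prefix check does not attempt.
SUD_PREFIXES: Tuple[str, ...] = tuple(f"F{n}" for n in range(10, 20) if n != 17)

# Security labels to attach (assumed codes; confirm against the DS4P IG)
SUD_LABELS = (
    {"system": "http://terminology.hl7.org/CodeSystem/v3-Confidentiality", "code": "R"},
    {"system": "http://terminology.hl7.org/CodeSystem/v3-ActCode", "code": "ETH"},
)


def is_sud_condition(condition: dict) -> bool:
    for coding in condition.get("code", {}).get("coding", []):
        code = coding.get("code", "").upper()
        if coding.get("system") == ICD10_CM and code.startswith(SUD_PREFIXES):
            return True
    return False


def tag_sud_condition(condition: dict) -> dict:
    """Add DS4P-style labels to meta.security without duplicating existing ones."""
    if not is_sud_condition(condition):
        return condition
    security = condition.setdefault("meta", {}).setdefault("security", [])
    present = {(label.get("system"), label.get("code")) for label in security}
    for label in SUD_LABELS:
        if (label["system"], label["code"]) not in present:
            security.append(dict(label))
    return condition
```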

This is not hypothetical. If your system serves behavioral health, addiction medicine, or any facility that provides SUD treatment, you must implement these controls now. The integration is already in effect.

Implementation Checklist

This is the engineering checklist for HIPAA Security Rule compliance mapped to the 2026 proposed requirements. Each item is a deployable control, not a policy document.

Access Controls (164.312(a))

- Deploy centralized identity provider -- Okta, Azure AD, or Keycloak with SAML/OIDC federation for all PHI-touching applications. Eliminate local accounts and shared credentials.

- Implement MFA on all ePHI access points -- FIDO2/WebAuthn preferred, TOTP acceptable. Cover: EHR login, database admin consoles, cloud provider consoles, VPN/ZTNA, API developer portals.

- Configure session management -- 15-minute idle timeout for web sessions, 1-hour absolute timeout, refresh token rotation on every use, token binding to device fingerprint.

- Build break-glass procedure -- dedicated UI flow, justification capture, 4-8 hour time-limited elevation, automatic audit event and privacy officer notification.

- Deploy policy-as-code (OPA/Cedar) -- externalized ABAC policies for minimum-necessary enforcement, versioned in Git, with automated policy testing in CI/CD.
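The session-management item combines two mechanisms, sketched here with an in-memory store and hypothetical names. The timeout check applies the 15-minute idle and 1-hour absolute limits. `RefreshTokenStore` rotates refresh tokens on every use and revokes the whole session if a superseded token is replayed. That is a common reuse-detection approach, not something HIPAA itself specifies.

```python
import secrets
from datetime import datetime, timedelta
from typing import Dict

IDLE_TIMEOUT = timedelta(minutes=15)
ABSOLUTE_TIMEOUT = timedelta(hours=1)


def session_expired(created_at: datetime, last_activity: datetime, now: datetime) -> bool:
    """Expired after 15 idle minutes or 1 hour total, whichever comes first."""
    return now - last_activity >= IDLE_TIMEOUT or now - created_at >= ABSOLUTE_TIMEOUT


class RefreshTokenStore:
    """In-memory rotation with reuse detection (illustrative; use a shared store in production)."""

    def __init__(self) -> None:
        self._family_of: Dict[str, str] = {}  # every token ever issued -> session family
        self._current: Dict[str, str] = {}    # session family -> the one valid token

    def issue(self, family_id: str) -> str:
        token = secrets.token_urlsafe(32)
        self._family_of[token] = family_id
        self._current[family_id] = token
        return token

    def rotate(self, token: str) -> str:
        family_id = self._family_of.get(token)
        if family_id is None:
            raise PermissionError("unknown refresh token")
        if self._current.get(family_id) != token:
            # A superseded token was replayed: assume theft and revoke the family
            self._current.pop(family_id, None)
            raise PermissionError("refresh token reuse detected; session revoked")
        return self.issue(family_id)
```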

Audit Controls (164.312(b))

- Implement centralized logging pipeline -- application logs through Vector/Fluentd, enriched with user context and PHI flags, stored in append-only Elasticsearch (hot) and S3 with Object Lock (cold, 6-year retention).

- Deploy SIEM with healthcare-specific rules -- anomalous PHI access patterns, impossible travel, bulk data export, after-hours access to restricted records, failed MFA attempts.

- Build disclosure tracking -- log every instance of PHI shared externally (API responses to third-party apps, patient portal access, printed records, faxes) with recipient, purpose, and legal basis.

Integrity Controls (164.312(c))

- Implement data integrity verification -- HMAC checksums on stored PHI records, database triggers capturing before/after states on PHI table modifications, file integrity monitoring (Wazuh/OSSEC) on file-based PHI storage.

- Deploy immutable audit logs -- WORM storage for all audit records, cryptographic hash chaining to detect tampering, separation of duties (log admins cannot modify log storage).
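Hash chaining folds each entry's predecessor hash into its own hash, so editing any past entry breaks the chain from that point onward. This minimal sketch uses SHA-256 over canonical JSON; the function names are hypothetical. A chain alone cannot detect deletion of the most recent entries. Periodically writing the latest hash to WORM storage closes that gap.

```python
import hashlib
import json
from typing import List, Optional

GENESIS_HASH = "0" * 64


def _entry_hash(prev_hash: str, event: dict) -> str:
    # Canonical JSON so the same event always hashes to the same value
    payload = json.dumps(event, sort_keys=True, separators=(",", ":")).encode("utf-8")
    return hashlib.sha256(prev_hash.encode("ascii") + payload).hexdigest()


def append_event(chain: List[dict], event: dict) -> None:
    prev_hash = chain[-1]["hash"] if chain else GENESIS_HASH
    chain.append({"event": event, "prev_hash": prev_hash, "hash": _entry_hash(prev_hash, event)})


def first_broken_link(chain: List[dict]) -> Optional[int]:
    """Index of the first entry that fails verification, or None if the chain is intact."""
    prev_hash = GENESIS_HASH
    for i, entry in enumerate(chain):
        if entry["prev_hash"] != prev_hash or entry["hash"] != _entry_hash(prev_hash, entry["event"]):
            return i
        prev_hash = entry["hash"]
    return None
```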

Authentication Controls (164.312(d))

- Implement mTLS for service-to-service -- Istio/Linkerd service mesh with STRICT mTLS mode, short-lived certificates (24-hour expiry) with automatic rotation via cert-manager.

- Deploy OAuth 2.0 + SMART on FHIR for API access -- scoped access tokens with patient/user launch context, PKCE for public clients, token introspection for resource servers.

Transmission Security (164.312(e))

- Enforce TLS 1.2+ on all connections -- TLS 1.3 preferred for external, TLS 1.2 minimum for internal. Disable TLS 1.0/1.1 and all SSL. Monitor certificate expiration with automated alerting.

- Implement network-level encryption -- IPsec or WireGuard for site-to-site connections, encrypted VPC peering for cloud-to-cloud, no unencrypted traffic carrying ePHI on any network segment.

Network and Infrastructure

- Implement network segmentation -- minimum six zones (clinical, administrative, IoT, DMZ, management, guest) with firewall rules enforcing least-privilege traffic flows between zones.

- Deploy technology asset inventory -- CMDB with automated discovery (ServiceNow, Snipe-IT, or cloud-native tools), tagging all ePHI-touching assets, drift detection alerting when new devices appear on clinical network segments.

- Implement vulnerability management SLAs -- critical: 15 days (proposed rule), high: 30 days, medium: 90 days. Automated scanning (Qualys, Tenable, or Wazuh), ticket generation, SLA tracking dashboard.
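SLA tracking is mostly date arithmetic. This minimal sketch assumes findings arrive as dicts with hypothetical `id`, `severity`, `detected_on`, and optional `resolved` keys. Severities outside the three tiers are ignored, and a finding is on time through its due date.

```python
from datetime import date, timedelta
from typing import Iterable, List

# Remediation windows in calendar days: 15 for critical is the proposed rule's
# window; the high and medium windows are this checklist's own targets
SLA_DAYS = {"critical": 15, "high": 30, "medium": 90}


def remediation_due(severity: str, detected_on: date) -> date:
    return detected_on + timedelta(days=SLA_DAYS[severity.lower()])


def overdue_findings(findings: Iterable[dict], today: date) -> List[str]:
    """IDs of open findings past their due date."""
    return [
        f["id"]
        for f in findings
        if not f.get("resolved")
        and f["severity"].lower() in SLA_DAYS
        and today > remediation_due(f["severity"], f["detected_on"])
    ]
```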

Encryption

- Deploy envelope encryption for data at rest -- AES-256-GCM with per-tenant DEKs, KEKs managed by KMS (AWS KMS, Azure Key Vault, or HashiCorp Vault), annual KEK rotation, DEK rotation on data modification.

- Encrypt all backups -- encrypted at the backup level (not just underlying storage), stored in separate region/account, monthly restore testing documented, immutable backup copies (ransomware protection).

Incident Response

- Build 72-hour breach notification pipeline -- automated breach detection (SIEM alerts), pre-staged investigation runbooks, pre-approved notification templates for HHS/OCR and affected individuals, quarterly tabletop exercises to validate the 72-hour SLA.

Compliance Automation: From Checklist to Continuous

A checklist is a point-in-time artifact. The proposed rule's annual assessment requirement is far easier to meet with continuous compliance validation than with a yearly scramble. Organizations that build automated compliance monitoring spend far less effort on audit preparation than those that rely on annual manual assessments.

The compliance-as-code pattern works like this:

```python
# compliance_checks.py: automated HIPAA control validation
# Run daily via CI/CD or a scheduled job with read-only AWS credentials.
import boto3

# Protocol versions below TLS 1.2, as named in ELBv2 SSL policy descriptions
LEGACY_PROTOCOLS = {"SSLv3", "TLSv1", "TLSv1.1"}
# CloudWatch Logs only accepts fixed retention values; 2192 days is its 6-year option
REQUIRED_RETENTION_DAYS = 2192


class HIPAAComplianceChecker:
    def __init__(self, session=None):
        session = session or boto3.Session()
        self.rds = session.client("rds")
        self.iam = session.client("iam")
        self.logs = session.client("logs")
        self.elbv2 = session.client("elbv2")

    def check_encryption_at_rest(self):
        """164.312(a)(2)(iv): verify all RDS instances have storage encryption enabled."""
        violations = []
        for page in self.rds.get_paginator("describe_db_instances").paginate():
            for db in page["DBInstances"]:
                if not db.get("StorageEncrypted", False):
                    violations.append({
                        "control": "164.312(a)(2)(iv)",
                        "resource": db["DBInstanceIdentifier"],
                        "finding": "Storage encryption disabled",
                        "severity": "CRITICAL",
                        "remediation": "Enable encryption (requires snapshot + encrypted restore)",
                    })
        return violations

    def check_mfa_enforcement(self):
        """164.312(d): verify every IAM user with console access has an MFA device."""
        violations = []
        for page in self.iam.get_paginator("list_users").paginate():
            for user in page["Users"]:
                name = user["UserName"]
                try:
                    # Raises NoSuchEntity when the user has no console password
                    self.iam.get_login_profile(UserName=name)
                except self.iam.exceptions.NoSuchEntityException:
                    continue
                if not self.iam.list_mfa_devices(UserName=name)["MFADevices"]:
                    violations.append({
                        "control": "164.312(d)",
                        "resource": name,
                        "finding": "Console access without MFA",
                        "severity": "CRITICAL",
                        "remediation": "Enable MFA or remove console access",
                    })
        return violations

    def check_log_retention(self):
        """164.312(b): verify audit log groups keep 6 years of data (the 164.316(b)(2) period)."""
        violations = []
        for page in self.logs.get_paginator("describe_log_groups").paginate():
            for lg in page["logGroups"]:
                name = lg["logGroupName"]
                if "hipaa" not in name.lower() and "audit" not in name.lower():
                    continue
                # A missing retentionInDays means "never expire", which is compliant
                days = lg.get("retentionInDays")
                if days is not None and days < REQUIRED_RETENTION_DAYS:
                    violations.append({
                        "control": "164.312(b)",
                        "resource": name,
                        "finding": f"Retention {days}d < required {REQUIRED_RETENTION_DAYS}d",
                        "severity": "HIGH",
                        "remediation": f"Set retention to {REQUIRED_RETENTION_DAYS} days",
                    })
        return violations

    def check_tls_versions(self):
        """164.312(e)(2)(ii): verify only TLS 1.2+ is offered on load balancer listeners."""
        violations = []
        for page in self.elbv2.get_paginator("describe_load_balancers").paginate():
            for lb in page["LoadBalancers"]:
                listeners = self.elbv2.describe_listeners(
                    LoadBalancerArn=lb["LoadBalancerArn"])["Listeners"]
                for listener in listeners:
                    policy_name = listener.get("SslPolicy")
                    if not policy_name:
                        continue  # HTTP/TCP listener: no TLS policy to inspect
                    policy = self.elbv2.describe_ssl_policies(Names=[policy_name])["SslPolicies"][0]
                    legacy = LEGACY_PROTOCOLS.intersection(policy["SslProtocols"])
                    if legacy:
                        violations.append({
                            "control": "164.312(e)(2)(ii)",
                            "resource": lb["LoadBalancerName"],
                            "finding": f"Legacy protocols enabled: {', '.join(sorted(legacy))}",
                            "severity": "CRITICAL",
                            "remediation": "Update SSL policy to ELBSecurityPolicy-TLS13-1-2-2021-06",
                        })
        return violations
```

Run these checks daily. Push violations to your ticketing system. Track remediation SLAs. When the OCR auditor arrives, your evidence is not a spreadsheet from last year's assessment -- it is a live dashboard showing continuous control validation with historical compliance trends.
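One way to produce the historical trend data is to persist a daily summary of the checker's output. A minimal sketch, assuming violations use the dictionary shape returned by the `HIPAAComplianceChecker` methods and that a JSON Lines file is an acceptable input for your dashboard; `daily_snapshot` and `append_history` are hypothetical names.

```python
import json
from collections import Counter
from datetime import date
from pathlib import Path


def daily_snapshot(violations: list[dict], day: date) -> dict:
    """Summarize one day's findings for a compliance-trend dashboard."""
    by_severity = Counter(v["severity"] for v in violations)
    by_control = Counter(v["control"] for v in violations)
    return {
        "date": day.isoformat(),
        "total": len(violations),
        "by_severity": dict(sorted(by_severity.items())),
        "by_control": dict(sorted(by_control.items())),
    }


def append_history(path: Path, snapshot: dict) -> None:
    """Append one snapshot per line so history is never rewritten in place."""
    with path.open("a", encoding="utf-8") as fh:
        fh.write(json.dumps(snapshot, sort_keys=True) + "\n")
```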

Frequently Asked Questions

When does the 2026 HIPAA Security Rule take effect?

As of April 2026, the proposed rule has not been finalized. The NPRM (Notice of Proposed Rulemaking) was published January 6, 2025, and the comment period closed March 7, 2025. In March 2026, HIPAA Journal described the final rule as "edging closer," with HHS still reviewing the 4,000+ public comments it received. Most industry analysts expect finalization in mid-to-late 2026, with a 180-day compliance window for most provisions. Engineering teams should still be building now: the technical requirements are clear from the NPRM, and the required controls (MFA, encryption, segmentation) are security best practices regardless of the rule's effective date.

Does the new rule apply to cloud-hosted healthcare applications?

Yes. The proposed rule applies to all covered entities and business associates that create, receive, maintain, or transmit ePHI, regardless of where the infrastructure is hosted. Cloud providers operating under a BAA (Business Associate Agreement) share compliance obligations. The proposed rule adds explicit requirements for vendor security verification -- you must obtain written confirmation that your cloud provider implements the required technical controls, not just rely on the BAA. AWS, Azure, and GCP all publish HIPAA eligibility documentation, but you are responsible for configuring their services in a compliant manner (shared responsibility model).

How does 42 CFR Part 2 integration affect my FHIR server?

If your FHIR server stores any data from substance use disorder treatment programs, you must implement data segmentation. This means tagging SUD-related FHIR resources (Condition, Encounter, MedicationRequest, Procedure) with security labels per the DS4P (Data Segmentation for Privacy) FHIR implementation guide, verifying consent before granting access to tagged resources, and attaching redisclosure notices to any API response that includes Part 2 data. The OPA policy example in this guide demonstrates the consent verification pattern. Most commercial FHIR servers (HAPI FHIR, Smile CDR, Google Cloud Healthcare API) support security labels, but you still need to implement the classification and enforcement logic.
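A minimal sketch of that classification and enforcement logic over plain FHIR JSON dictionaries. Assumptions: SUD resources are labeled with the HL7 v3 ActCode sensitivity code `ETH` (substance abuse information sensitivity) and the v3 Confidentiality code `R` (restricted); verify the exact codes against the DS4P guide for your FHIR version. `tag_sud`, `is_part2`, and `release` are hypothetical helpers, and the `has_part2_consent` decision is assumed to come from your consent service or policy engine.

```python
ACT_CODE = "http://terminology.hl7.org/CodeSystem/v3-ActCode"
CONFIDENTIALITY = "http://terminology.hl7.org/CodeSystem/v3-Confidentiality"

SUD_SENSITIVITY = {"system": ACT_CODE, "code": "ETH"}  # substance abuse info sensitivity
RESTRICTED = {"system": CONFIDENTIALITY, "code": "R"}  # restricted confidentiality


def _has_label(resource: dict, label: dict) -> bool:
    return any(
        sec.get("system") == label["system"] and sec.get("code") == label["code"]
        for sec in resource.get("meta", {}).get("security", [])
    )


def tag_sud(resource: dict) -> dict:
    """Add security labels to a resource classified as Part 2 data (idempotent)."""
    labels = resource.setdefault("meta", {}).setdefault("security", [])
    for label in (SUD_SENSITIVITY, RESTRICTED):
        if not _has_label(resource, label):
            labels.append(dict(label))
    return resource


def is_part2(resource: dict) -> bool:
    return _has_label(resource, SUD_SENSITIVITY)


def release(resources: list[dict], has_part2_consent: bool) -> dict:
    """Withhold Part 2 resources without consent; flag when a notice must be attached."""
    released = [r for r in resources if has_part2_consent or not is_part2(r)]
    return {
        "resources": released,
        "redisclosure_notice_required": any(is_part2(r) for r in released),
    }
```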

What is the penalty for non-compliance with the new requirements?

HIPAA penalties have four tiers based on culpability, ranging from $141 to $2,134,831 per violation (2024 adjusted amounts), with a calendar-year maximum of $2,134,831 for violations of an identical provision. However, the real financial exposure is breach cost: the average healthcare breach cost $10.93 million in IBM's 2023 Cost of a Data Breach report, counting detection, notification, post-breach response, and lost business. OCR has been increasingly aggressive with enforcement -- 2024 saw record settlement amounts, and the proposed rule's elimination of "addressable" specifications removes the most common defense organizations used to justify missing controls.

Can I use a service mesh instead of traditional network segmentation?

A service mesh (Istio, Linkerd) provides microsegmentation at the application layer through mTLS and authorization policies. This satisfies the spirit of network segmentation for containerized workloads but should be combined with network-level controls (VPC security groups, firewalls) for defense in depth. The proposed rule does not specify the implementation mechanism -- it requires that ePHI systems are segmented from other systems and that the segmentation is documented and tested. A service mesh with strict mTLS, combined with network policies (Kubernetes NetworkPolicy or Calico), provides a robust implementation. Document both layers for your risk assessment.
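For Kubernetes, a common baseline is a namespace-wide default-deny NetworkPolicy, narrow allow rules, and Istio's `PeerAuthentication` in `STRICT` mode. The sketch below emits those manifests as JSON (which `kubectl apply -f` accepts) so they can be generated and reviewed in CI. The namespace names, the `app: fhir-server` label, and port 8443 are hypothetical; `security.istio.io/v1beta1` is used because a wide range of Istio releases accept it, though newer releases also serve `v1`.

```python
import json


def default_deny(namespace: str) -> dict:
    """Empty podSelector selects every pod; no rules listed means deny all."""
    return {
        "apiVersion": "networking.k8s.io/v1",
        "kind": "NetworkPolicy",
        "metadata": {"name": "default-deny-all", "namespace": namespace},
        "spec": {"podSelector": {}, "policyTypes": ["Ingress", "Egress"]},
    }


def allow_from_namespace(namespace: str, app: str, source_ns: str, port: int) -> dict:
    """Allow ingress to pods labeled app=<app> only from one source namespace."""
    return {
        "apiVersion": "networking.k8s.io/v1",
        "kind": "NetworkPolicy",
        "metadata": {"name": f"allow-{source_ns}-to-{app}", "namespace": namespace},
        "spec": {
            "podSelector": {"matchLabels": {"app": app}},
            "policyTypes": ["Ingress"],
            "ingress": [{
                "from": [{"namespaceSelector": {
                    "matchLabels": {"kubernetes.io/metadata.name": source_ns}}}],
                "ports": [{"protocol": "TCP", "port": port}],
            }],
        },
    }


def strict_mtls(namespace: str) -> dict:
    """Reject plaintext traffic to sidecar-injected workloads in the namespace."""
    return {
        "apiVersion": "security.istio.io/v1beta1",
        "kind": "PeerAuthentication",
        "metadata": {"name": "default", "namespace": namespace},
        "spec": {"mtls": {"mode": "STRICT"}},
    }


if __name__ == "__main__":
    items = [default_deny("ephi"),
             allow_from_namespace("ephi", "fhir-server", "clinical-apps", 8443),
             strict_mtls("ephi")]
    print(json.dumps({"apiVersion": "v1", "kind": "List", "items": items}, indent=2))
```

A default-deny policy that includes `Egress` also blocks DNS, so real deployments need an explicit egress allowance to the cluster DNS service on port 53.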

How do I handle legacy systems that cannot support modern encryption or MFA?

The elimination of "addressable" specifications means you cannot simply document why a legacy system does not support encryption. The proposed rule requires compensating controls for systems that cannot directly implement a specification. For legacy systems: place them in an isolated network segment with a protocol gateway that terminates TLS and authenticates on behalf of the legacy device. All traffic to and from the legacy system passes through the gateway, which enforces encryption and authentication. This is particularly common for IoT/biomedical devices. Document the compensating control, the risk assessment that justified it, and the timeline for replacing the legacy system.
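The gateway pattern can be sketched with Python's standard `asyncio` and `ssl` modules: terminate TLS 1.2+ with mandatory client certificates at the gateway, then relay bytes to the legacy device over the isolated segment. This is a minimal sketch, not a hardened proxy: `build_mtls_context`, `start_gateway`, and `main` are hypothetical names, the certificate paths, addresses, and ports are placeholders, and a production gateway would also need audit logging, timeouts, and connection limits.

```python
import asyncio
import ssl


def build_mtls_context(cert_file: str, key_file: str, client_ca_file: str) -> ssl.SSLContext:
    ctx = ssl.SSLContext(ssl.PROTOCOL_TLS_SERVER)
    ctx.minimum_version = ssl.TLSVersion.TLSv1_2
    ctx.load_cert_chain(cert_file, key_file)
    ctx.load_verify_locations(client_ca_file)
    ctx.verify_mode = ssl.CERT_REQUIRED  # clients must present a cert from this CA
    return ctx


async def _pipe(reader: asyncio.StreamReader, writer: asyncio.StreamWriter) -> None:
    """Copy bytes one way until EOF, then close the other side."""
    try:
        while data := await reader.read(65536):
            writer.write(data)
            await writer.drain()
    except (ConnectionResetError, BrokenPipeError):
        pass  # peer dropped; fall through and close
    finally:
        writer.close()


async def start_gateway(listen_host, listen_port, legacy_host, legacy_port, tls_context):
    async def handle(client_reader, client_writer):
        try:
            legacy_reader, legacy_writer = await asyncio.open_connection(legacy_host, legacy_port)
        except OSError:
            client_writer.close()  # legacy device unreachable; refuse the session
            return
        await asyncio.gather(_pipe(client_reader, legacy_writer),
                             _pipe(legacy_reader, client_writer))

    return await asyncio.start_server(handle, listen_host, listen_port, ssl=tls_context)


async def main() -> None:
    # Placeholder paths and addresses; run with asyncio.run(main())
    ctx = build_mtls_context("gateway.crt", "gateway.key", "clinical-ca.pem")
    server = await start_gateway("0.0.0.0", 2576, "10.50.0.12", 2575, ctx)
    async with server:
        await server.serve_forever()
```

Because asyncio completes the server-side TLS handshake before invoking the connection handler, clients without a certificate signed by the configured CA never reach the legacy device. Passing `None` as the context disables TLS, which is useful only for local testing.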

The Bottom Line for Engineering Teams

The 2026 HIPAA Security Rule update is not a compliance exercise. It is an architecture project. The proposed changes -- mandatory MFA, universal encryption, network segmentation, 72-hour breach notification -- are engineering deliverables that require infrastructure investment, not just policy documents.

The organizations that treat this as a security engineering initiative will build systems that are not only compliant but genuinely secure. The organizations that treat it as a checkbox exercise will spend more money, take longer, and end up with controls that look good on paper but crumble under a real attack.

Start with the highest-impact controls: MFA on all ePHI access points, encryption at rest and in transit, and a centralized audit logging pipeline. These three controls address the majority of breach vectors and satisfy the most critical proposed requirements. Then build outward: network segmentation, policy-as-code authorization, 42 CFR Part 2 data segmentation, and automated compliance monitoring.

The cost of doing nothing is an average of $10.93 million per breach. The OCR director is right -- that number makes the implementation cost look trivial.

Nirmitee builds HIPAA-compliant healthcare infrastructure -- from FHIR servers with SMART on FHIR authorization to zero trust architectures with automated compliance monitoring. If your engineering team is preparing for the 2026 Security Rule, let's talk architecture.