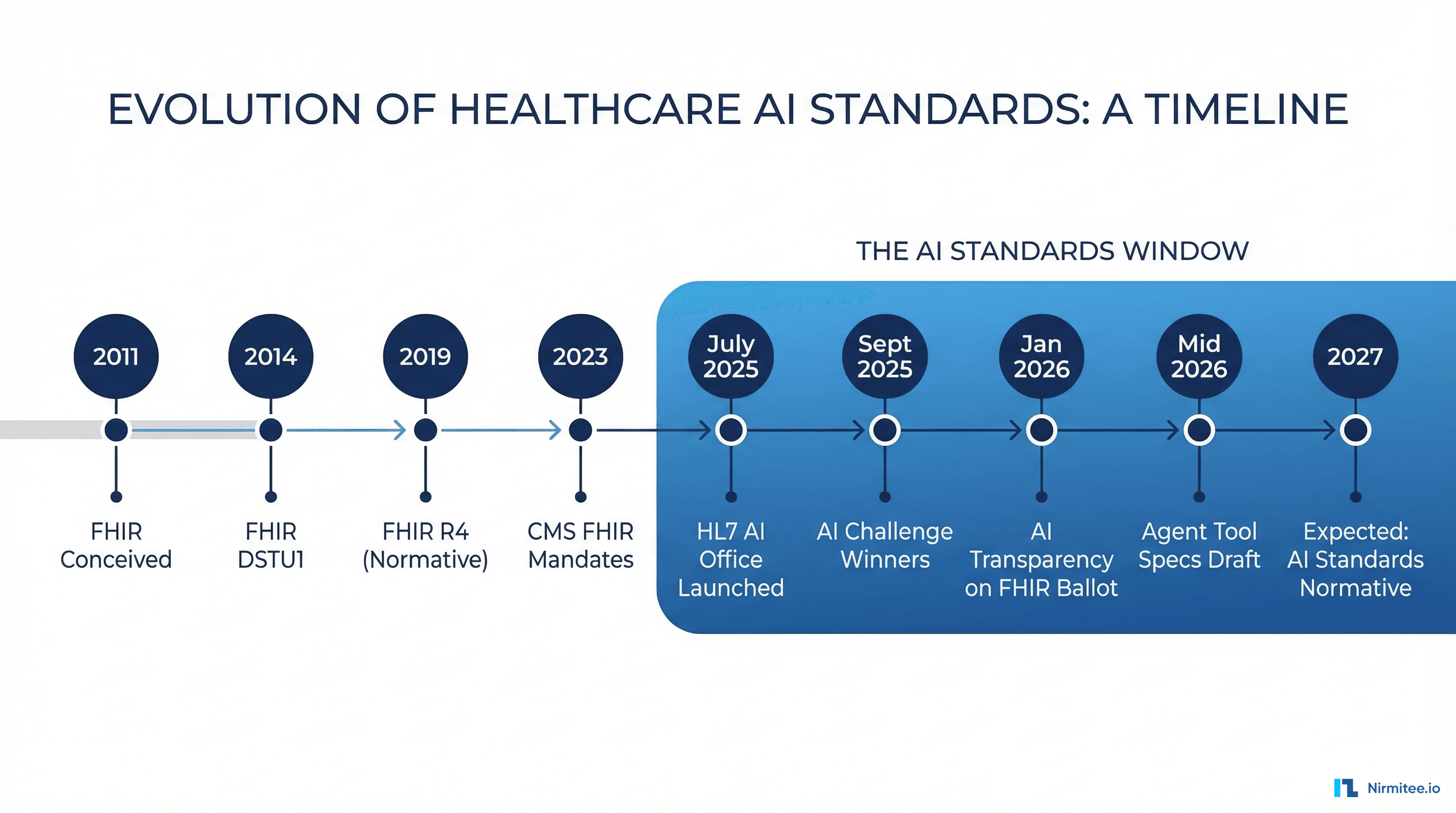

In July 2025, HL7 International made the most consequential organizational move in its 38-year history: it launched a dedicated AI Office with a mandate to build the standards infrastructure for healthcare AI. Daniel Vreeman, HL7's chief standards development officer, was appointed as the organization's first-ever chief AI officer. Six months later, the AI Transparency on FHIR implementation guide entered its first ballot cycle. If you build healthcare software, this changes your roadmap.

This is not a committee that publishes white papers. The AI Office is shipping specifications that will define how AI-generated clinical data is tagged, traced, and trusted across every EHR, payer system, and clinical decision support tool in production. Here is what they are building, what the first standards look like, and exactly how to prepare your systems.

Why HL7 Launched an AI Office Now

The timing is not accidental. Three converging forces made 2025 the inflection point:

- Regulatory pressure: The ONC HTI-1 final rule requires algorithm transparency in certified EHR technology. CMS's 2026 interoperability rules reference AI-generated content. The EU AI Act classifies medical AI as high-risk. Standards bodies need to provide the technical specifications regulators reference.

- Production reality: Over 80% of health care executives expect agentic AI to deliver moderate-to-significant value in 2026, per Deloitte's State of AI survey. AI agents are already writing clinical notes, generating prior authorization requests, and flagging sepsis risk from remote monitoring data. Without standards, every vendor implements transparency differently.

- The FHIR foundation: FHIR R4, the first release with normative content, is widely deployed. FHIR R6 is on the horizon. The Provenance resource, AuditEvent, and extension mechanisms provide the technical building blocks to represent AI metadata. HL7 realized it could build AI transparency on top of FHIR rather than creating a parallel standard.

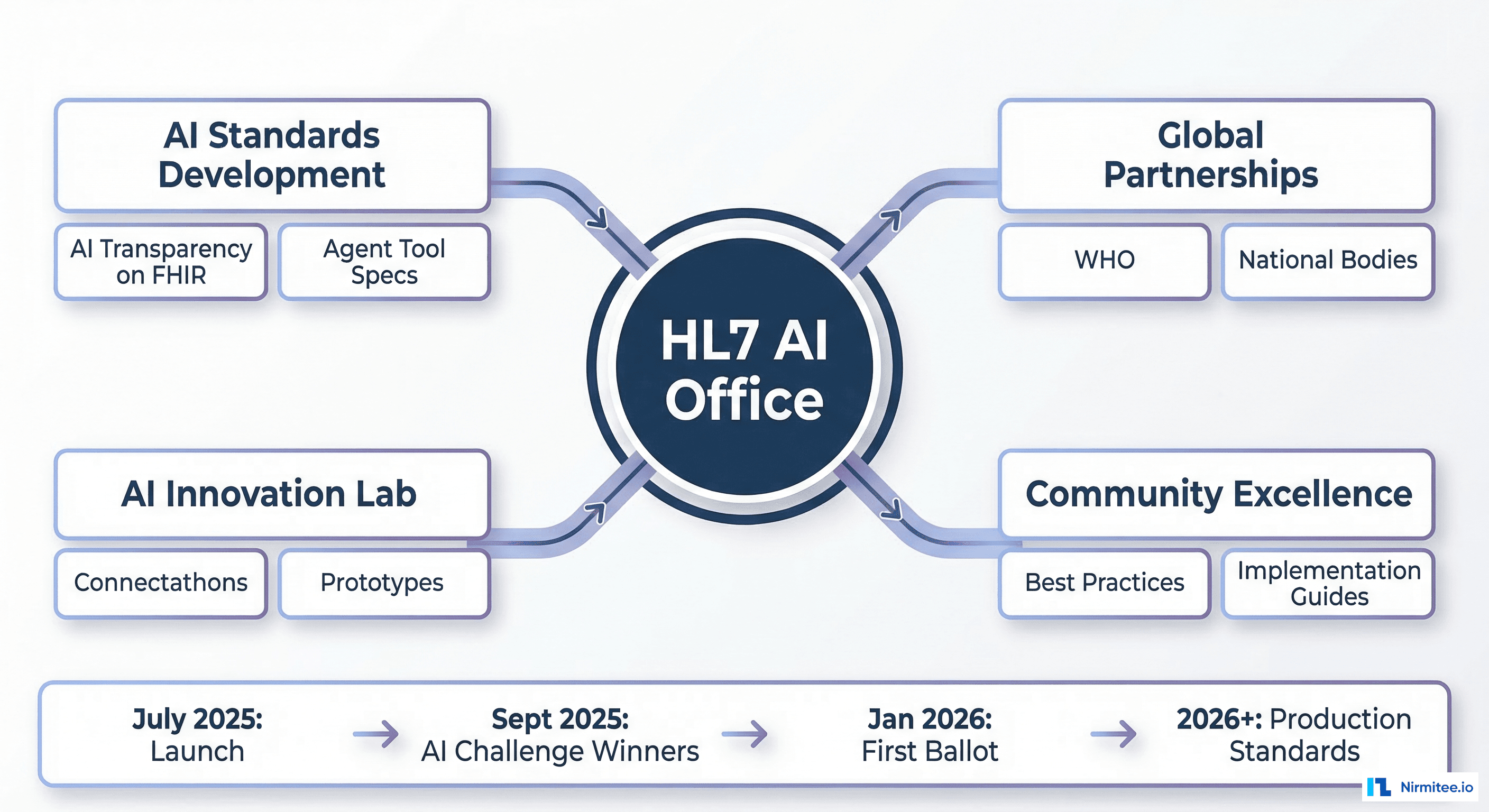

The result: four strategic workstreams that cover everything from specification development to global policy alignment.

The Four Strategic Workstreams

The AI Office operates through four parallel tracks, each with distinct deliverables:

1. Standards Development: The AI-Ready Interoperability Stack

This is the core technical track. Two specifications are in active development:

- AI Transparency on FHIR Implementation Guide (IG): Entered Draft Standard ballot in January 2026. Defines how to document when AI has created, modified, or influenced a FHIR resource. Based on FHIR 4.0.1. Package ID: hl7.fhir.uv.aitransparency#1.0.0-ballot.

- Agent Tool Specifications: Early-stage work on defining standardized interfaces for AI agents that interact with clinical systems. Think of it as CDS Hooks for the agentic era.

The standards track also includes extensions to the FHIR Provenance resource for AI lineage tracking and model card profiles that capture algorithm metadata in a FHIR-native format.

2. Global Leadership and Partnerships

HL7 is convening cross-SDO alignment with IHE, DICOM (for AI in imaging), and national health IT bodies. The goal: prevent fragmentation where every country builds its own AI transparency standard. The EU AI Act, US ONC rules, and WHO guidelines should all reference the same underlying FHIR profiles.

3. AI Innovation Lab

This is the proving ground. The Innovation Lab runs connectathons where implementers test AI specifications against real systems. The January 2026 FHIR Connectathon included the first-ever AI Transparency track, where teams validated the IG against production-like scenarios.

4. Community Excellence

Implementation guides, best practice libraries, and developer training. This track ensures that when the standards are published, the community has the tools to adopt them without a two-year learning curve.

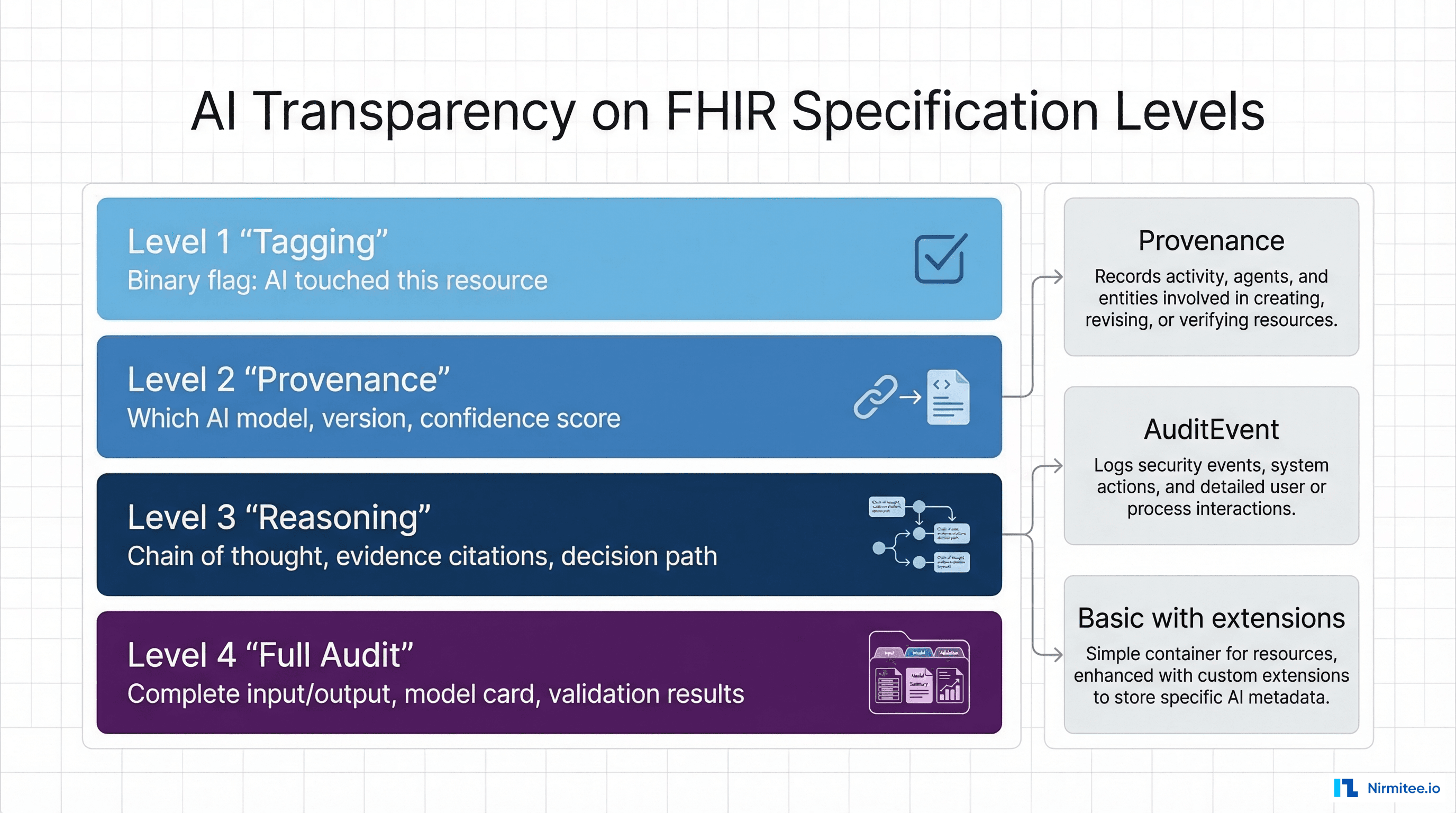

AI Transparency on FHIR: What the Specification Actually Says

The AI Transparency on FHIR IG is the first concrete deliverable. It establishes four levels of observability for AI-influenced health data:

Level 1: Tagging

The minimum requirement. A binary flag on any FHIR resource that has been created or modified by AI. Implemented via Resource.meta.tag with a standardized code from the IG's value set. This answers the question: Was AI involved in producing this data?

```json
{
  "resourceType": "Observation",
  "meta": {
    "tag": [
      {
        "system": "http://hl7.org/fhir/uv/aitransparency/CodeSystem/ai-involvement",
        "code": "ai-generated",
        "display": "AI Generated"
      }
    ]
  },
  "status": "preliminary",
  "code": {
    "coding": [{ "system": "http://loinc.org", "code": "59408-5", "display": "Oxygen saturation" }]
  },
  "valueQuantity": { "value": 94, "unit": "%", "system": "http://unitsofmeasure.org", "code": "%" }
}
```

Level 2: Provenance

Which AI model produced or influenced the data? What version? What was the confidence score? This uses the FHIR Provenance resource with extensions for model identification.

```json
{
  "resourceType": "Provenance",
  "target": [{ "reference": "Observation/ai-spo2-reading" }],
  "recorded": "2026-03-15T14:30:00Z",
  "agent": [
    {
      "type": {
        "coding": [{
          "system": "http://hl7.org/fhir/uv/aitransparency/CodeSystem/agent-type",
          "code": "ai-algorithm"
        }]
      },
      "who": { "display": "Sepsis Risk Model v3.2" }
    }
  ],
  "extension": [
    {
      "url": "http://hl7.org/fhir/uv/aitransparency/StructureDefinition/ai-confidence",
      "valueDecimal": 0.94
    },
    {
      "url": "http://hl7.org/fhir/uv/aitransparency/StructureDefinition/ai-algorithm-type",
      "valueCode": "non-deterministic"
    }
  ]
}
```

Level 3: Reasoning

The chain of thought. What evidence did the AI consider? What was the decision path? This is critical for clinical decision support where a physician needs to evaluate why the AI reached a conclusion. The IG provides guidance on representing reasoning chains through linked Provenance resources and the entity element.
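To make the idea of linked Provenance resources concrete, here is a minimal Python sketch. It assumes each reasoning step gets its own Provenance resource, that evidence is listed in the standard `entity` element with role `source`, and that a step points back to the previous step with role `derivation`. The function name, resource IDs, and this particular chaining convention are illustrative assumptions, not something taken from the IG.

```python
from datetime import datetime, timezone


def reasoning_step(target_ref, evidence_refs, model_display, previous_step=None):
    """Build one Provenance resource describing a single reasoning step.

    evidence_refs: references to the resources the model consulted.
    previous_step: optional reference to the Provenance of the prior step.
    """
    # Each piece of evidence becomes an entity with the FHIR R4 role "source".
    entities = [{"role": "source", "what": {"reference": ref}} for ref in evidence_refs]
    if previous_step:
        # Link back to the prior step so a reviewer can walk the chain (assumed convention).
        entities.append({"role": "derivation", "what": {"reference": previous_step}})
    return {
        "resourceType": "Provenance",
        "target": [{"reference": target_ref}],
        "recorded": datetime.now(timezone.utc).strftime("%Y-%m-%dT%H:%M:%SZ"),
        "agent": [{"who": {"display": model_display}}],
        "entity": entities,
    }


# Hypothetical two-step chain: risk score first, then a recommended order.
step1 = reasoning_step(
    "RiskAssessment/sepsis-1",
    ["Observation/lactate-1", "Observation/heart-rate-1"],
    "Sepsis Risk Model v3.2",
)
step2 = reasoning_step(
    "ServiceRequest/blood-culture-1",
    ["RiskAssessment/sepsis-1"],
    "Sepsis Risk Model v3.2",
    previous_step="Provenance/step-1",
)
```

A clinician-facing tool could then follow the `derivation` links backward from any recommendation to the observations that led to it.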

Level 4: Full Audit

Complete input/output capture, model card documentation, and validation results. Required for FDA SaMD compliance and high-risk clinical applications. The IG defines profiles for model cards that capture training data characteristics, performance metrics, known limitations, and intended use populations.

Algorithm Classification

The specification distinguishes three algorithm types, each with different transparency requirements:

| Algorithm Type | Description | Minimum Observability | Example |
|---|---|---|---|
| Deterministic | Same input always produces same output | Level 1 (Tagging) | Rule-based sepsis screening |
| Non-deterministic | Output may vary; includes LLMs and neural networks | Level 2 (Provenance) | GPT-based clinical note generation |
| Hybrid | Deterministic logic with non-deterministic components | Level 2 (Provenance) | Rules engine + ML risk scorer |
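The classification table translates naturally into a lookup that a CI check or deployment gate could use. The sketch below is one way to encode it in Python; the `Observability` enum and the `meets_minimum` helper are names made up for this example, and the mapping simply mirrors the table rather than any machine-readable artifact from the IG.

```python
from enum import IntEnum


class Observability(IntEnum):
    """The four observability levels, ordered so they can be compared."""
    TAGGING = 1
    PROVENANCE = 2
    REASONING = 3
    FULL_AUDIT = 4


# Minimum level per algorithm type, copied from the table above.
MINIMUM_LEVEL = {
    "deterministic": Observability.TAGGING,
    "non-deterministic": Observability.PROVENANCE,
    "hybrid": Observability.PROVENANCE,
}


def meets_minimum(algorithm_type, implemented_level):
    """Return True if the implemented level satisfies the minimum for this type."""
    if algorithm_type not in MINIMUM_LEVEL:
        raise ValueError(f"unknown algorithm type: {algorithm_type!r}")
    return implemented_level >= MINIMUM_LEVEL[algorithm_type]


# A hybrid system that only tags its output falls short of Level 2.
print(meets_minimum("hybrid", Observability.TAGGING))            # False
print(meets_minimum("deterministic", Observability.PROVENANCE))  # True
```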

The HL7 Global AI Challenge: What Won and Why It Matters

In parallel with the AI Office launch, HL7 ran its first-ever Global AI Challenge. It drew thirty entries from every inhabited continent, and the winners were announced at the 39th Annual Plenary in Pittsburgh in September 2025. The results signal where the standards community sees the highest-value applications:

- Clinical Data Quality: Health Samurai's Aidbox Forms — an AI assistant for FHIR SDC (Structured Data Capture) and analytics. This directly addresses the data quality problem that undermines every downstream AI model.

- AI Transparency and Trust: Trisotech — demonstrating that transparency tooling is a first-class category, not an afterthought.

- Interoperability Leadership: Whitefox FHIR Converter — automating the translation layer between legacy formats and FHIR, which remains the largest bottleneck for AI adoption in hospitals running HL7v2 interfaces.

- Pioneer Innovation: Ignyte Group and Appian — agentic AI for clinical workflow automation, validating that agents on FHIR is the emerging architecture pattern.

The pattern across winners: standards-based AI that is transparent, interoperable, and integrated into existing clinical workflows. None of the winners were standalone AI models. Every one was an AI system built on open health data standards.

Agent Tool Specifications: CDS Hooks for the Agentic Era

The least-discussed but potentially most impactful work: HL7 is developing standardized interfaces for AI agents that interact with clinical systems. Today, every agent framework defines its own tool contracts. One agent uses custom REST endpoints. Another uses MCP (Model Context Protocol). A third wraps FHIR operations in proprietary tool definitions.

HL7's agent tool specifications aim to standardize:

- Tool discovery: How an agent discovers what actions it can perform against a clinical system (analogous to FHIR CapabilityStatement for agents)

- Input/output contracts: Standardized schemas for tool inputs and outputs, built on FHIR resource types

- Authorization scoping: How SMART on FHIR scopes map to agent tool permissions

- Audit requirements: What must be logged when an agent invokes a tool, linked to the AI Transparency IG

This is early-stage work: expect a Standard for Trial Use (STU) in late 2026 or early 2027. But the direction is clear: the same organization that standardized CDS Hooks for point-of-care decision support is building the equivalent for autonomous clinical agents.
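Since no draft of the agent tool specifications has been published, any code here is speculative. Still, the four goals (discovery, input/output contracts, authorization scoping, audit) suggest a rough shape. The Python sketch below is purely hypothetical: `ToolDescriptor`, its fields, the tool names, and the exact-string scope matching are all invented for illustration and should not be read as the eventual HL7 design.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ToolDescriptor:
    """Hypothetical machine-readable description of one agent tool."""
    name: str
    description: str
    input_resource_types: tuple    # FHIR resource types the tool accepts
    output_resource_types: tuple   # FHIR resource types the tool returns
    required_scopes: frozenset     # SMART scopes needed to invoke the tool
    audit_required: bool = True    # whether each invocation must be logged


def discover_tools(catalog, granted_scopes):
    """Return the tools an agent may call, given the scopes it was granted."""
    granted = set(granted_scopes)
    # A tool is available only if every scope it needs was granted (exact match).
    return [tool for tool in catalog if tool.required_scopes <= granted]


CATALOG = [
    ToolDescriptor(
        name="read_vitals",
        description="Read vital-sign observations for the patient in context",
        input_resource_types=("Patient",),
        output_resource_types=("Observation",),
        required_scopes=frozenset({"patient/Observation.rs"}),
    ),
    ToolDescriptor(
        name="draft_prior_auth",
        description="Draft a prior authorization request",
        input_resource_types=("Coverage",),
        output_resource_types=("Claim",),
        required_scopes=frozenset({"patient/Claim.c", "patient/Coverage.rs"}),
    ),
]

available = discover_tools(CATALOG, ["patient/Observation.rs", "patient/Coverage.rs"])
print([tool.name for tool in available])  # ['read_vitals']
```

The point of the exercise is the separation of concerns: the descriptor is data, so it can be published, versioned, and checked against granted scopes before an agent ever makes a call.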

The Standards Timeline: What Ships When

Here is the current timeline based on HL7 publications and working group schedules:

| Date | Milestone | Impact |
|---|---|---|
| July 2025 | AI Office launched | Organizational commitment, chief AI officer appointed |
| September 2025 | AI Challenge winners announced | Community validation of standards-based AI approach |
| January 2026 | AI Transparency on FHIR: first ballot | Specification available for implementer testing |
| January 2026 | First AI Transparency Connectathon track | Real-world validation against production scenarios |
| Mid 2026 | Ballot reconciliation + STU publication | Standard for Trial Use; production implementation begins |
| Late 2026 | Agent Tool Specifications: initial draft | Standardized agent-to-EHR interfaces |
| 2027+ | Normative status (if adoption warrants) | Candidate for citation in EHR certification criteria |

The window between now and mid-2026 is the preparation period. Organizations that implement early will shape the final standard through ballot comments and connectathon feedback.

How to Prepare Your Systems: A Developer Checklist

Whether you are building AI agents, maintaining an EHR, or integrating clinical decision support, here are the concrete steps to take now:

1. Instrument AI Provenance on Every Write

Every time your AI creates or modifies a FHIR resource, record that involvement. Start with Level 1 tagging (the meta.tag approach), then attach a Level 2 Provenance resource as the IG stabilizes. Both steps are backward-compatible: existing FHIR consumers that ignore the tag or the Provenance resource keep working unchanged.

```python
# Python: Adding AI provenance to a FHIR resource write
from datetime import datetime, timezone


def create_ai_provenance(target_ref, model_name, version, confidence):
    """Build a Provenance resource attributing target_ref to an AI model."""
    return {
        "resourceType": "Provenance",
        "target": [{"reference": target_ref}],
        # UTC timestamp in FHIR instant format, e.g. 2026-03-15T14:30:00Z
        "recorded": datetime.now(timezone.utc).strftime("%Y-%m-%dT%H:%M:%SZ"),
        "activity": {
            "coding": [{
                "system": "http://terminology.hl7.org/CodeSystem/v3-DataOperation",
                "code": "CREATE",
                "display": "Create"
            }]
        },
        "agent": [{
            "type": {
                "coding": [{
                    "system": "http://hl7.org/fhir/uv/aitransparency/CodeSystem/agent-type",
                    "code": "ai-algorithm"
                }]
            },
            "who": {"display": f"{model_name} v{version}"}
        }],
        "extension": [{
            "url": "http://hl7.org/fhir/uv/aitransparency/StructureDefinition/ai-confidence",
            "valueDecimal": confidence
        }]
    }


# Usage
provenance = create_ai_provenance(
    "Observation/sepsis-risk-123", "SepsisRiskModel", "3.2", 0.94
)
# POST to /fhir/Provenance alongside the Observation
```

2. Build Model Cards as FHIR Resources

Document every AI model your system uses in a structured format. The IG's model card profile captures: algorithm type (deterministic / non-deterministic / hybrid), training data characteristics, performance metrics (sensitivity, specificity, AUROC), known limitations, and intended use populations. Start building this documentation now — it will map directly to the IG profiles.
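Until the IG's model card profile is final, a plain structured record is enough to start collecting the right information. The Python sketch below captures the fields listed above in a dataclass and runs a few sanity checks. The `ModelCard` class, its field names, and the validation rules are assumptions for illustration, not the IG profile, and the metric values are placeholders rather than real results.

```python
from dataclasses import dataclass, field, asdict
import json

ALGORITHM_TYPES = {"deterministic", "non-deterministic", "hybrid"}


@dataclass
class ModelCard:
    """Interim model card; field names are illustrative, not from the IG."""
    name: str
    version: str
    algorithm_type: str
    training_data: str
    intended_population: str
    metrics: dict = field(default_factory=dict)           # e.g. sensitivity, AUROC
    known_limitations: list = field(default_factory=list)

    def problems(self):
        """Return a list of human-readable issues; empty means the card looks complete."""
        issues = []
        if self.algorithm_type not in ALGORITHM_TYPES:
            issues.append(f"algorithm_type must be one of {sorted(ALGORITHM_TYPES)}")
        for metric, value in self.metrics.items():
            if not 0.0 <= value <= 1.0:
                issues.append(f"{metric} should be a proportion between 0 and 1")
        if not self.known_limitations:
            issues.append("list at least one known limitation")
        return issues


card = ModelCard(
    name="SepsisRiskModel",
    version="3.2",
    algorithm_type="non-deterministic",
    training_data="Adult inpatient encounters (placeholder description)",
    intended_population="Adult inpatients",
    metrics={"sensitivity": 0.88, "specificity": 0.91, "auroc": 0.93},  # placeholder values
    known_limitations=["Not evaluated on pediatric patients"],
)

print(card.problems())                     # []
print(json.dumps(asdict(card), indent=2))  # serializable for storage or later mapping
```

Keeping the card as structured data now means the later mapping to a FHIR profile is a transformation step, not a documentation project.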

3. Implement Audit Infrastructure

Every AI inference that touches patient data should generate an AuditEvent resource. This is not just good practice — it is the foundation for Level 4 compliance. Use the patterns from our HIPAA-compliant logging guide and extend them with AI-specific fields.
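As a starting point, the Python sketch below builds a FHIR R4 AuditEvent for a single inference using only the resource's required elements plus the entities involved. The function name and arguments are made up for this example, and the choice of the generic `rest` event type is an assumption; the IG may define more specific codes or profiles for AI inference events.

```python
from datetime import datetime, timezone


def ai_inference_audit_event(model_display, patient_ref, output_ref, observer_display):
    """Build a minimal R4 AuditEvent recording one AI inference."""
    return {
        "resourceType": "AuditEvent",
        # Generic event type from the standard FHIR code system (assumed choice).
        "type": {
            "system": "http://terminology.hl7.org/CodeSystem/audit-event-type",
            "code": "rest",
            "display": "RESTful Operation",
        },
        "action": "E",    # E = execute
        "recorded": datetime.now(timezone.utc).strftime("%Y-%m-%dT%H:%M:%SZ"),
        "outcome": "0",   # 0 = success
        # The model is the acting agent; it did not originate the request itself.
        "agent": [{"who": {"display": model_display}, "requestor": False}],
        # The system that observed and logged the event.
        "source": {"observer": {"display": observer_display}},
        # What was touched: the patient record and the resource the model produced.
        "entity": [
            {"what": {"reference": patient_ref}},
            {"what": {"reference": output_ref}},
        ],
    }


event = ai_inference_audit_event(
    "SepsisRiskModel v3.2",
    "Patient/example-123",
    "Observation/sepsis-risk-123",
    "inference-gateway",  # hypothetical service name
)
```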

4. Test Against the IG

The AI Transparency on FHIR IG is available at build.fhir.org. Validate your resources against its profiles using the FHIR Validator. Better yet, participate in the next HL7 Connectathon. Use Inferno for automated conformance testing once test suites are published.
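For a repeatable check in CI, you can wrap the HL7 FHIR Validator command line in a small helper. The Python sketch below only assembles the command; it assumes you have already downloaded `validator_cli.jar`, that Java is on the path, and that the ballot package ID quoted earlier in this article can be resolved by the validator. The helper name and file names are illustrative.

```python
import shlex


def validator_command(resource_paths, ig_package, fhir_version="4.0.1",
                      jar="validator_cli.jar"):
    """Assemble a FHIR Validator CLI invocation as an argument list."""
    return [
        "java", "-jar", jar,
        *resource_paths,            # one or more resource files to validate
        "-version", fhir_version,   # FHIR version the IG is based on
        "-ig", ig_package,          # implementation guide package to validate against
    ]


cmd = validator_command(
    ["observation.json", "provenance.json"],
    "hl7.fhir.uv.aitransparency#1.0.0-ballot",
)
# shlex.join quotes arguments that need it (the '#' in the package ID, for instance),
# so the printed line can be pasted into a shell.
print(shlex.join(cmd))
# To run it from Python: subprocess.run(cmd, check=True)
```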

5. Map Your SMART Scopes to Agent Permissions

If you are building FHIR-based agents, review your SMART App Launch v2 scope definitions. The agent tool specifications will likely build on SMART scopes for authorization. Agents that already use granular FHIR scopes will have less refactoring when the specs land.
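A useful first step is making scope checks explicit in code instead of scattering string comparisons. The Python sketch below parses SMART App Launch v2 resource scopes of the form `context/ResourceType.cruds` and answers whether a granted scope set covers a given action. It is deliberately simplified: scopes with search-parameter constraints are skipped rather than evaluated, and the helper names are made up for this example.

```python
import re

# context / resource type (or *) . permissions (subset of c, r, u, d, s) [?constraint]
SCOPE_PATTERN = re.compile(r"^(patient|user|system)/(\*|[A-Za-z]+)\.([cruds]+)(\?.*)?$")


def parse_scope(scope):
    """Split a SMART v2 resource scope into its parts, or return None if it is not one."""
    match = SCOPE_PATTERN.match(scope)
    if not match:
        return None  # e.g. "launch/patient" or "openid"
    context, resource_type, actions, constraint = match.groups()
    return context, resource_type, set(actions), constraint


def scope_allows(granted_scopes, context, resource_type, action):
    """True if any unconstrained granted scope covers the requested action."""
    for scope in granted_scopes:
        parsed = parse_scope(scope)
        if parsed is None:
            continue
        g_context, g_type, g_actions, g_constraint = parsed
        if g_constraint:
            continue  # constrained scopes need per-resource checks; out of scope here
        if g_context == context and g_type in ("*", resource_type) and action in g_actions:
            return True
    return False


granted = ["launch/patient", "patient/Observation.rs", "patient/Provenance.c"]
print(scope_allows(granted, "patient", "Observation", "r"))  # True
print(scope_allows(granted, "patient", "Observation", "c"))  # False
print(scope_allows(granted, "patient", "Provenance", "c"))   # True
```

An agent that can read Observations but only create Provenance, as in the example, is a reasonable least-privilege baseline for a read-and-annotate workflow.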

6. Monitor for Model Drift

The AI Transparency IG implicitly requires ongoing monitoring — if you tag a resource as AI-generated with a confidence score of 0.94, but your model has drifted and actual accuracy is 0.71, the provenance data is misleading. Implement the drift detection patterns we covered previously and update provenance metadata when model performance changes.
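One simple way to catch the situation described above is to compare the confidence your model states with how often it turns out to be right over a rolling window. The Python sketch below does exactly that; the class name, window size, and tolerance are illustrative assumptions, and it presumes you can eventually label each prediction as correct or not.

```python
from collections import deque


class ConfidenceDriftMonitor:
    """Track the gap between stated confidence and observed accuracy."""

    def __init__(self, window=200, tolerance=0.10):
        # Each entry is (stated_confidence, was_correct); old entries fall off the window.
        self.outcomes = deque(maxlen=window)
        self.tolerance = tolerance

    def record(self, stated_confidence, was_correct):
        self.outcomes.append((stated_confidence, bool(was_correct)))

    def gap(self):
        """Mean stated confidence minus observed accuracy over the window."""
        if not self.outcomes:
            return 0.0
        count = len(self.outcomes)
        mean_confidence = sum(conf for conf, _ in self.outcomes) / count
        accuracy = sum(correct for _, correct in self.outcomes) / count
        return mean_confidence - accuracy

    def drifted(self):
        """True when the model is over- or under-confident beyond the tolerance."""
        return abs(self.gap()) > self.tolerance


# The scenario from the text: confidence 0.94 reported, 71 of 100 predictions correct.
monitor = ConfidenceDriftMonitor(window=100)
for i in range(100):
    monitor.record(0.94, was_correct=(i < 71))

print(round(monitor.gap(), 2))  # 0.23
print(monitor.drifted())        # True
```

When `drifted()` fires, that is the signal to recalibrate the model or stop publishing the stale confidence value in new Provenance resources.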

What This Means for EHR Vendors

For Epic, Oracle Health, athenahealth, and other major EHR platforms: the AI Transparency IG will eventually become a certification requirement. ONC's HTI-1 rule already references algorithm transparency. When the IG reaches normative status, expect it to be cited in the certification criteria.

The practical impact:

- Resource tagging: EHRs must support meta.tag values from the AI Transparency value set on stored resources

- Provenance storage: FHIR servers must accept and return Provenance resources linked to AI-generated content

- API surface: FHIR search must support filtering by AI involvement tags

- Display requirements: Clinical UIs must visually distinguish AI-generated content from human-authored content
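The search requirement maps onto the standard FHIR `_tag` search parameter, which takes a `system|code` token. The Python sketch below builds such a query URL; the base URL is a placeholder, the helper name is made up, and the tag system and code are the ones used in this article's tagging example, which may change before the IG is published.

```python
from urllib.parse import urlencode

# Tag system as used in this article's Level 1 example (subject to change in the IG).
AI_TAG_SYSTEM = "http://hl7.org/fhir/uv/aitransparency/CodeSystem/ai-involvement"


def ai_tagged_search_url(base_url, resource_type, code="ai-generated"):
    """Build a FHIR search URL for resources carrying the given AI involvement tag."""
    # _tag is a token parameter: system and code are joined with '|', then URL-encoded.
    query = urlencode({"_tag": f"{AI_TAG_SYSTEM}|{code}"})
    return f"{base_url.rstrip('/')}/{resource_type}?{query}"


url = ai_tagged_search_url("https://ehr.example.org/fhir", "Observation")
print(url)
```

A clinical UI could run the same query to decide which results need an "AI-generated" indicator.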

Vendors that build this infrastructure now will be ahead of the certification timeline. Those that wait will face compressed implementation schedules when the rule drops.

What This Means for Agent Builders

If you are building AI agents that interact with clinical data — whether through CDS Hooks, direct FHIR API access, or event-driven architectures — the agent tool specifications will define how your agents are discovered, authorized, and audited.

Start building with these patterns now:

- Declare capabilities: Every agent should expose a machine-readable description of what it does, what data it needs, and what actions it can take.

- Use SMART scopes: Authorize agents via SMART App Launch v2 with the most granular scopes possible.

- Log everything: Every agent action, every data access, every clinical recommendation. Build the logging infrastructure now.

- Tag your outputs: Every resource your agent creates or modifies gets the AI Transparency tag. Non-negotiable.
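Tagging is the easiest of these patterns to automate: route every agent write through one helper so no output escapes untagged. The Python sketch below adds the AI involvement tag to any resource dictionary without duplicating it. The helper name is illustrative, and the tag system and code follow this article's Level 1 example rather than a finalized value set.

```python
import copy

AI_TAG = {
    "system": "http://hl7.org/fhir/uv/aitransparency/CodeSystem/ai-involvement",
    "code": "ai-generated",
    "display": "AI Generated",
}


def tag_as_ai_generated(resource):
    """Return a copy of the resource that carries the AI tag exactly once."""
    tagged = copy.deepcopy(resource)  # leave the caller's object untouched
    tags = tagged.setdefault("meta", {}).setdefault("tag", [])
    already_tagged = any(
        tag.get("system") == AI_TAG["system"] and tag.get("code") == AI_TAG["code"]
        for tag in tags
    )
    if not already_tagged:
        tags.append(dict(AI_TAG))
    return tagged


note = {"resourceType": "DocumentReference", "status": "current"}
tagged_note = tag_as_ai_generated(note)
# Applying the helper twice does not add a second tag.
assert tag_as_ai_generated(tagged_note) == tagged_note
```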

The Bigger Picture: Standards as Competitive Advantage

The organizations that shaped early FHIR adoption — through connectathon participation, early implementation, and ballot feedback — are the ones that dominate health IT interoperability today. The same dynamic is playing out with AI standards.

Participating in the AI Transparency on FHIR ballot process, testing at connectathons, and implementing the draft specifications gives you three advantages:

- Influence: Ballot comments directly shape the final specification. Your production edge cases become the spec's test scenarios.

- Speed: When standards become mandatory, you are already compliant. No scramble, no retrofit.

- Trust: Healthcare buyers increasingly require evidence of standards compliance. Early adoption signals maturity and reliability — the qualities that win RFPs and pass security reviews.

The window for early-mover advantage is roughly 18 months: from now through mid-2027, the earliest point at which normative status is plausible on the timeline above. After that, compliance becomes table stakes.

Getting Started with Nirmitee

At Nirmitee, we build healthcare AI systems that are standards-compliant from day one. Our teams implement FHIR-native agent architectures with full AI provenance tracking, SMART-scoped authorization, and audit infrastructure that satisfies both the emerging HL7 standards and current HIPAA requirements.

Whether you need to instrument AI transparency in an existing system or design a greenfield agent architecture aligned with the HL7 AI Office specifications, our healthcare engineering team has the deep standards expertise to get it right.

Talk to our healthcare AI team about building standards-ready clinical AI systems.
