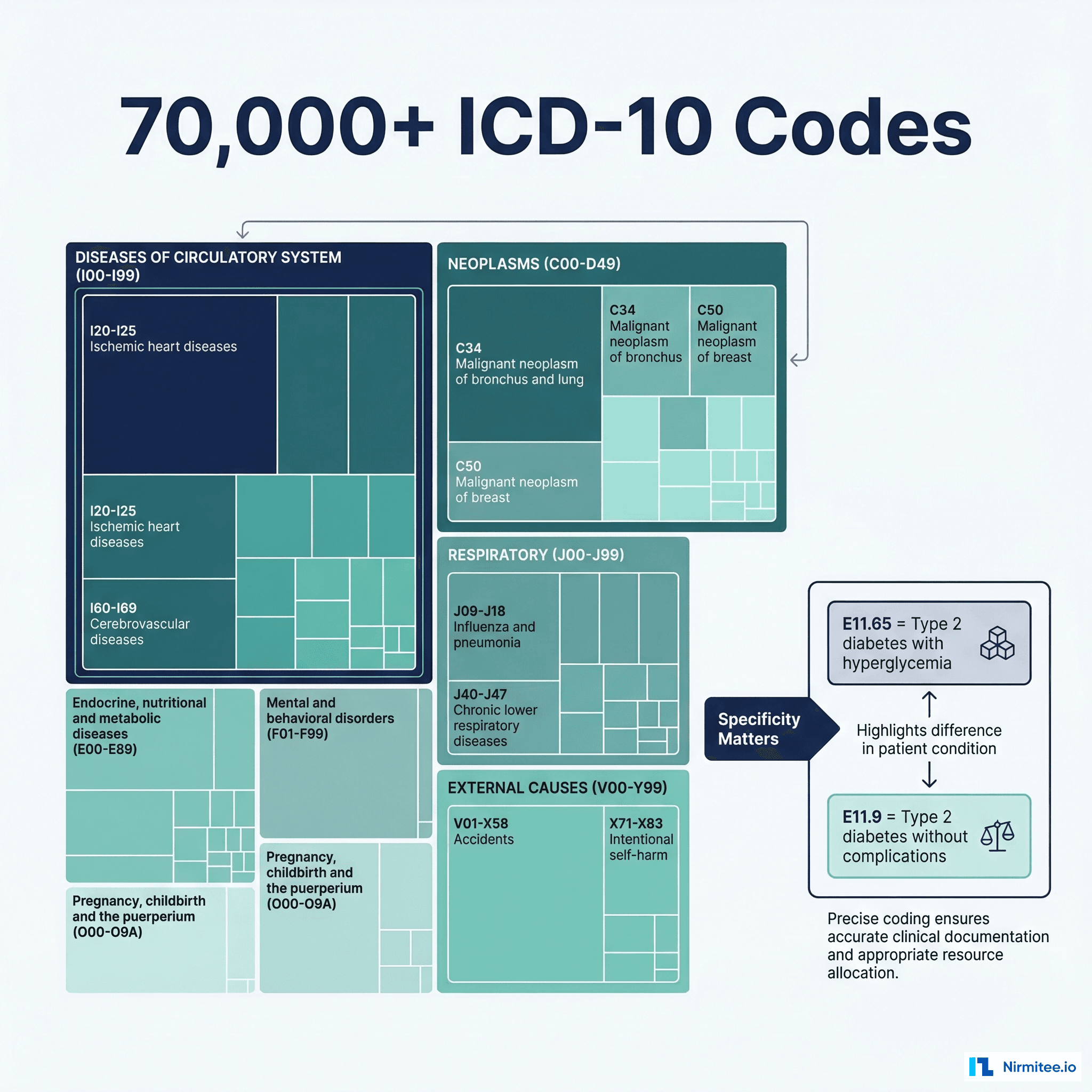

Medical coding is the financial backbone of every hospital and health system in the United States. Each year, coders translate millions of clinical encounters into standardized codes — ICD-10-CM for diagnoses, CPT for procedures, and HCPCS for supplies and services. These codes determine how much a hospital gets paid, whether a claim gets denied, and whether an audit triggers a compliance investigation.

The numbers are staggering. The ICD-10-CM codeset contains over 72,000 diagnosis codes. CPT has more than 10,000 procedure codes. HCPCS Level II adds another 7,000+. And every year, CMS updates these code sets — in October 2025 alone, 1,174 new ICD-10 codes were added, 40 were revised, and 26 were deleted. Keeping up is a full-time job, and getting it wrong costs the US healthcare system an estimated $36 billion annually in coding errors, according to the American Health Information Management Association (AHIMA).

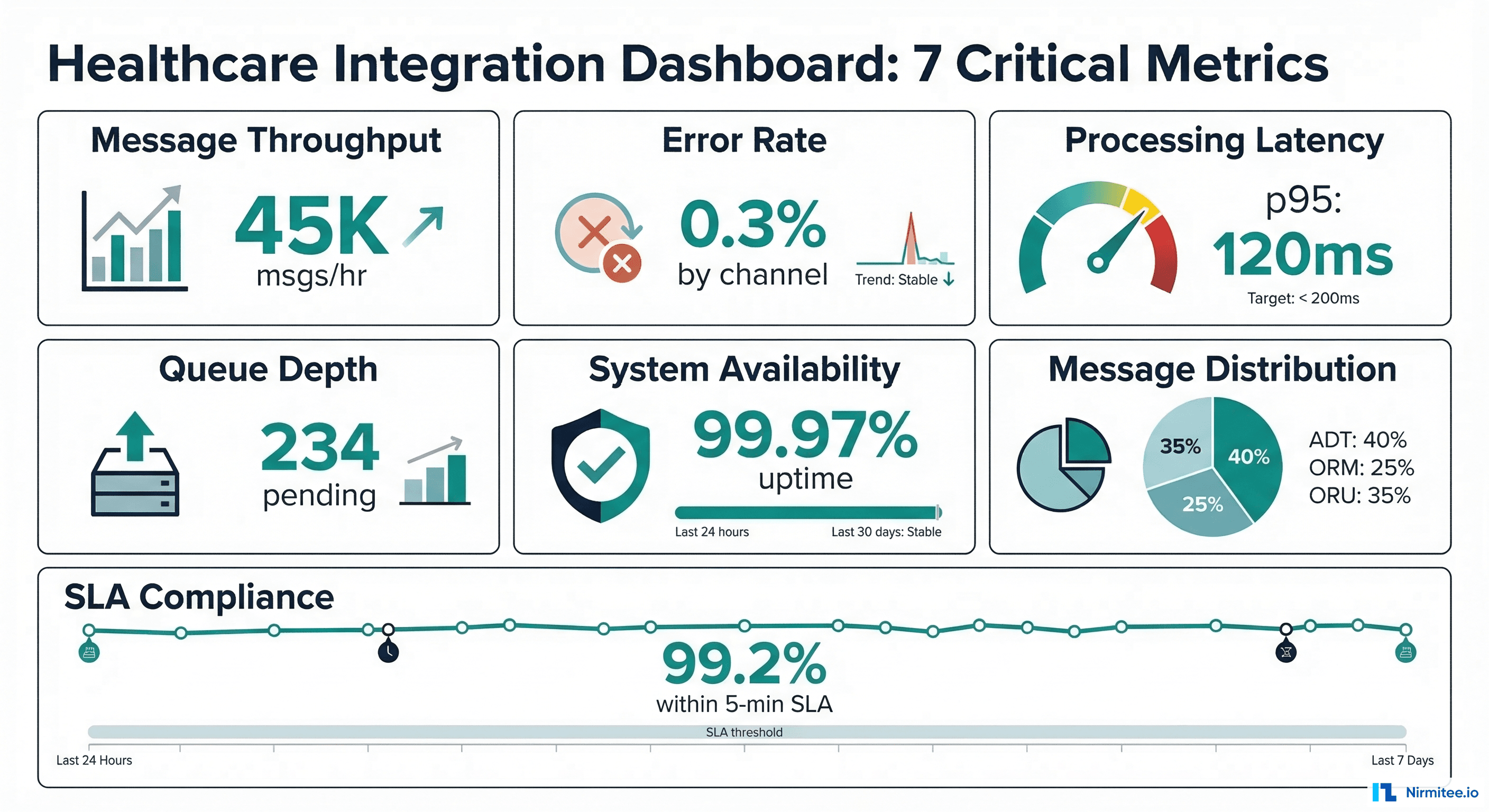

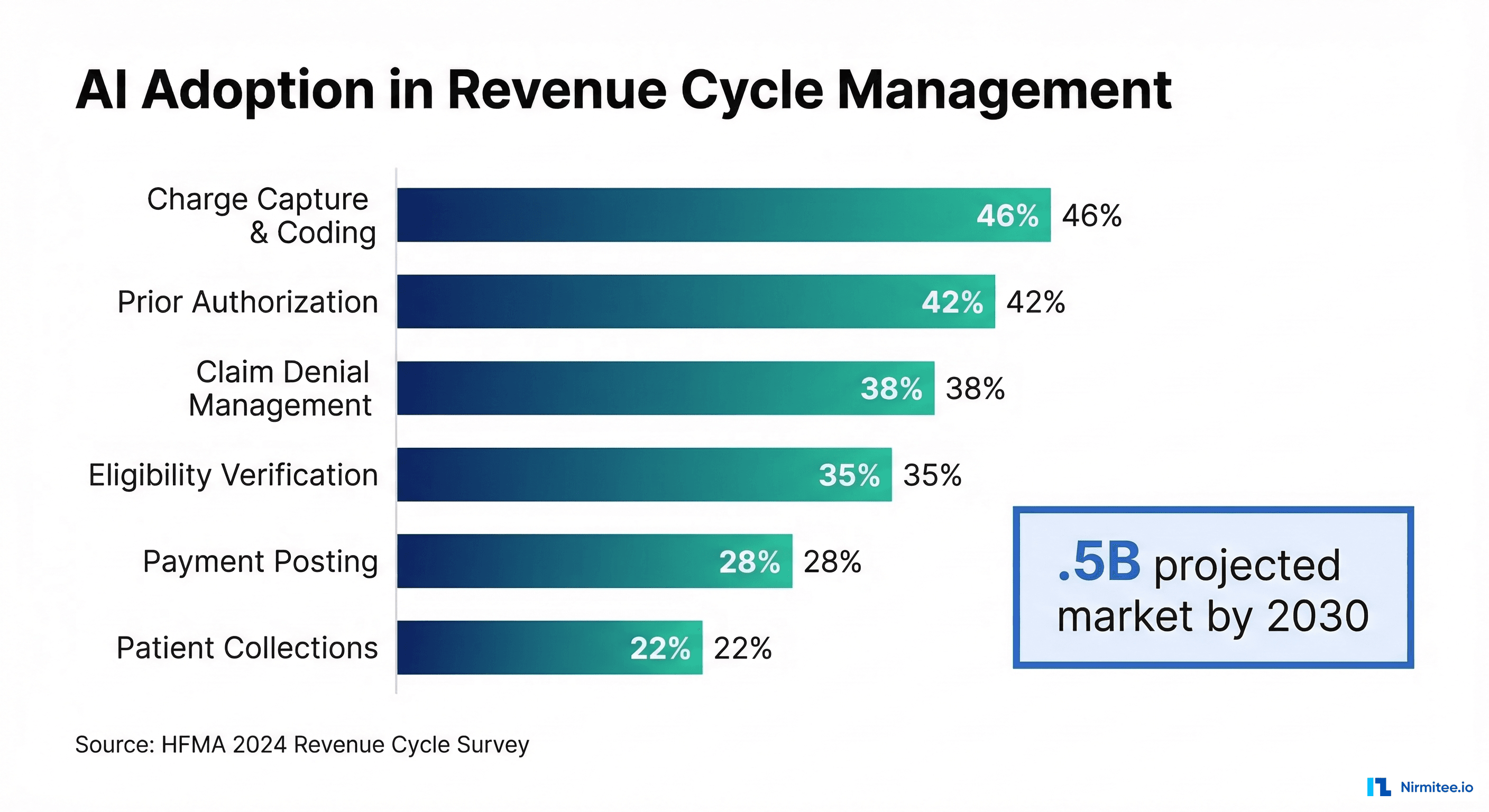

So naturally, the industry has turned to AI. According to a 2024 HFMA survey, 46% of hospitals now use some form of AI in their revenue cycle operations, with charge capture and coding being the most common application. But here is the uncomfortable truth that vendors rarely advertise: NLP-only approaches to medical coding achieve roughly 70% accuracy on complex cases — far below the 95%+ threshold required for production use.

This article examines why medical coding is so hard for machines, where NLP falls short, and what a production-grade AI coding pipeline actually looks like. If you are building or buying AI coding technology, this is the technical foundation you need.

The Complexity of Medical Code Sets: Why This Is Not a Simple Classification Problem

To understand why AI struggles with medical coding, you first need to appreciate the sheer complexity of the code sets themselves.

ICD-10-CM: 72,000+ Diagnosis Codes with Clinical Nuance

ICD-10-CM is not just a list of diseases. It is a hierarchical classification system where specificity determines reimbursement. Consider diabetes coding alone:

- E11.9 — Type 2 diabetes mellitus without complications

- E11.65 — Type 2 diabetes mellitus with hyperglycemia

- E11.621 — Type 2 diabetes mellitus with foot ulcer

- E11.3211 — Type 2 diabetes mellitus with mild nonproliferative diabetic retinopathy with macular edema, right eye

The difference between E11.9 and E11.3211 can mean thousands of dollars in reimbursement. A coder — or an AI system — must extract the specific complication, laterality, severity, and associated conditions from the clinical documentation to assign the correct code. Assigning the "unspecified" code when a more specific code is documented is a compliance risk and a revenue loss.

CPT Codes: Procedure Specificity and Modifier Logic

CPT codes, maintained by the AMA, encode not just what was done but how. A simple office visit is coded differently based on medical decision-making complexity:

| CPT Code | Description | MDM Level | Avg Reimbursement |
|---|---|---|---|
| 99213 | Established patient, low complexity | Low | $92 |
| 99214 | Established patient, moderate complexity | Moderate | $131 |
| 99215 | Established patient, high complexity | High | $182 |

Add modifiers — 25 (significant, separately identifiable E/M), 59 (distinct procedural service), 76 (repeat procedure by same physician) — and the combinatorial complexity explodes. A 2023 study in the Journal of AHIMA found that modifier errors account for 15-20% of claim denials across major commercial payers.
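Much of modifier logic is rule-driven, so production systems typically pair their models with deterministic checks. The sketch below shows the shape of one such check, under simplifying assumptions: it flags an E/M line (99213-99215) billed on the same date as a minor procedure without modifier 25, so that a coder can decide whether the visit really was significant and separately identifiable. The `ClaimLine` structure, the `missing_modifier_25` helper, and the one-code procedure list (20610, arthrocentesis of a major joint) are hypothetical; real payer edits such as NCCI are far more extensive.

```python
from dataclasses import dataclass, field

# Hypothetical, deliberately tiny rule inputs for illustration only.
EM_CODES = {"99213", "99214", "99215"}
MINOR_PROCEDURES = {"20610"}  # arthrocentesis, major joint, without ultrasound guidance


@dataclass
class ClaimLine:
    cpt: str
    service_date: str  # ISO date string, e.g. "2025-03-04"
    modifiers: set[str] = field(default_factory=set)


def missing_modifier_25(lines: list[ClaimLine]) -> list[ClaimLine]:
    """Return E/M lines billed on a procedure day that lack modifier 25."""
    procedure_days = {line.service_date for line in lines if line.cpt in MINOR_PROCEDURES}
    return [
        line
        for line in lines
        if line.cpt in EM_CODES
        and line.service_date in procedure_days
        and "25" not in line.modifiers
    ]


claim = [ClaimLine("99214", "2025-03-04"), ClaimLine("20610", "2025-03-04")]
for flagged in missing_modifier_25(claim):
    # A flag means "needs coder review", not "append 25 automatically".
    print(f"{flagged.cpt} on {flagged.service_date}: review for modifier 25")
```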

HCPCS Level II: The Forgotten Code Set

HCPCS Level II codes cover durable medical equipment, drugs, ambulance services, and supplies. These codes are maintained by CMS (not the AMA) and follow entirely different update cycles and documentation requirements. Most AI coding systems ignore HCPCS entirely, leaving a significant gap in automated revenue capture.

Why Context Determines the Code: The Fundamental NLP Challenge

The single biggest reason NLP-only approaches fail at medical coding is that the same clinical finding can map to entirely different codes depending on context. This is not a vocabulary problem — it is a reasoning problem.

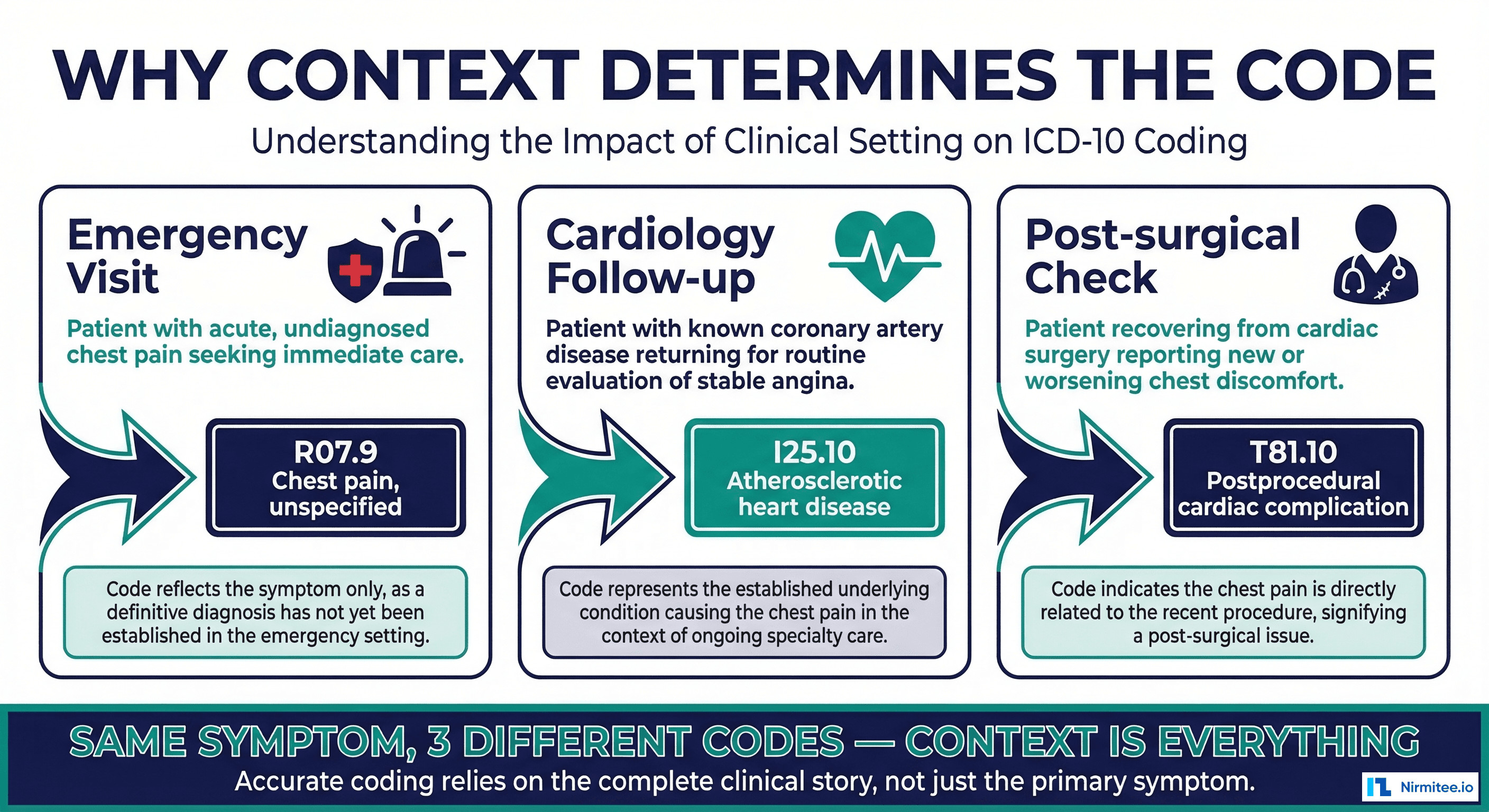

Context Type 1: Encounter Setting

A patient presenting with chest pain in the emergency department gets coded differently than the same patient with chest pain at a cardiology follow-up:

- ED visit: R07.9 (Chest pain, unspecified) — because the workup is in progress

- Cardiology follow-up: I25.10 (Atherosclerotic heart disease of native coronary artery) — because the underlying condition is known

- Post-surgical check: G89.18 (Other acute postprocedural pain), if the chest pain is a consequence of the recent procedure

An NLP system that simply extracts "chest pain" from the note and suggests R07.9 will be wrong two-thirds of the time in this scenario. The correct code requires understanding the purpose of the encounter, the patient's history, and the clinical reasoning documented by the provider.

Context Type 2: Negation and Uncertainty

Clinical notes are full of negation: "no evidence of metastasis," "denies chest pain," "ruled out PE." A naive NLP system sees the terms "metastasis," "chest pain," and "PE" and may suggest codes for conditions the patient does not have. Research from the 2024 Journal of the American Medical Informatics Association (JAMIA) found that negation detection errors account for 12-18% of false-positive code suggestions in production NLP systems.
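Negation detection is often introduced with a cue-and-window heuristic in the spirit of NegEx: if a negation cue appears within a few tokens before a concept, the concept is treated as negated. The sketch below assumes an illustrative cue list and a five-token window. It ignores scope terminators such as "but" and cues that follow the concept, which is exactly why production systems supplement or replace this kind of heuristic with trained models.

```python
import re

# Illustrative subset of pre-concept negation cues; real cue lists are much longer.
NEGATION_CUES = ("no evidence of", "denies", "ruled out", "negative for", "no")
WINDOW = 5  # max tokens between cue and concept (assumed value)


def tokenize(text: str) -> list[str]:
    return re.findall(r"[a-z0-9]+", text.lower())


def is_negated(sentence: str, concept: str) -> bool:
    """True if a negation cue occurs within WINDOW tokens before the concept."""
    tokens = tokenize(sentence)
    target = tokenize(concept)
    n = len(target)
    for start in range(len(tokens) - n + 1):
        if tokens[start:start + n] != target:
            continue
        # Look back at most WINDOW tokens before this occurrence of the concept.
        left_text = " " + " ".join(tokens[max(0, start - WINDOW):start]) + " "
        if any(f" {cue} " in left_text for cue in NEGATION_CUES):
            return True
    return False


print(is_negated("No evidence of metastasis.", "metastasis"))
print(is_negated("Chest pain radiating to the left arm.", "chest pain"))
```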

Context Type 3: Historical vs. Active Conditions

When a physician documents "history of breast cancer, currently in remission," the correct code is Z85.3 (Personal history of malignant neoplasm of breast), not a code from category C50 (Malignant neoplasm of breast). Coding an active malignancy when the patient is in remission is not just an error: it can trigger unnecessary prior authorizations, inflate risk scores, and create compliance exposure under the False Claims Act.
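The historical-versus-active distinction can be illustrated with a cue-based status check. This is a hypothetical sketch, not a coding rule set: the cue patterns are assumptions, active cues are checked first so that a documented recurrence under treatment outranks a "history of" mention, and a sentence with no temporal cue is routed to a human rather than guessed. The active branch returns only the C50 category because a billable code still needs site and laterality.

```python
import re

# Illustrative cue patterns; a real system would learn these from annotated notes.
ACTIVE_CUES = re.compile(
    r"\b(currently receiving|on chemotherapy|newly diagnosed|undergoing)\b", re.IGNORECASE
)
HISTORY_CUES = re.compile(r"\b(history of|h/o|in remission)\b", re.IGNORECASE)


def breast_cancer_code(sentence: str) -> str:
    """Choose between the history code and the active-malignancy category."""
    if ACTIVE_CUES.search(sentence):
        return "C50"  # category only: site and laterality are still required
    if HISTORY_CUES.search(sentence):
        return "Z85.3"  # personal history of malignant neoplasm of breast
    return "REVIEW"  # no temporal cue: route to a coder instead of guessing


print(breast_cancer_code("History of breast cancer, currently in remission."))
print(breast_cancer_code("History of breast cancer, now undergoing chemotherapy for recurrence."))
```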

Context Type 4: Sequencing Rules

ICD-10 has strict rules about which code is listed first (the "principal diagnosis"). For inpatient stays, the principal diagnosis is the condition established after study to be chiefly responsible for the admission. This requires understanding the entire clinical narrative, not just extracting entities. The Official ICD-10-CM Coding Guidelines run to 131 pages — and every rule affects reimbursement.

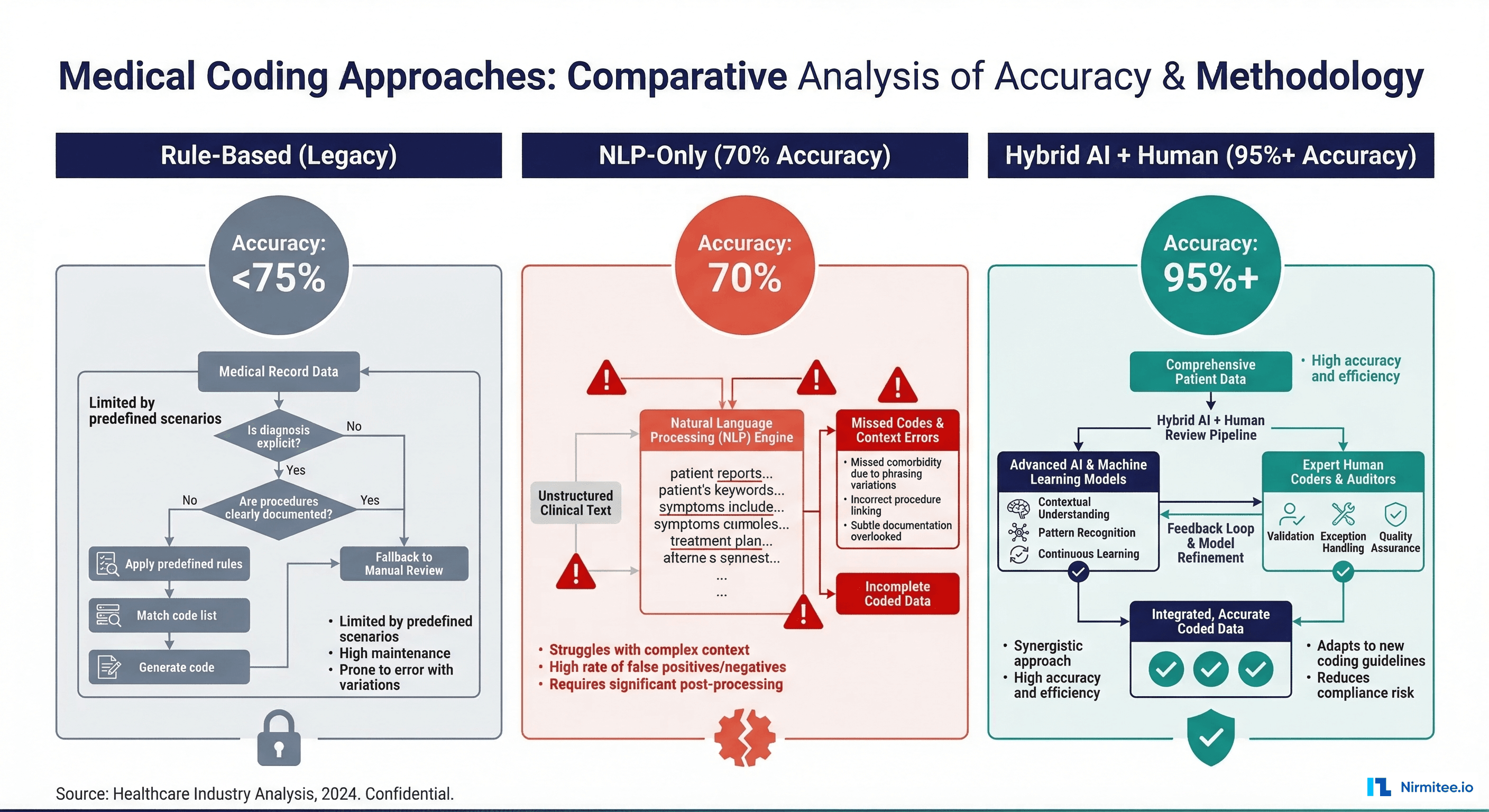

The Three Generations of AI Medical Coding

The evolution of AI in medical coding has followed a clear progression, each generation addressing (but not fully solving) the challenges above.

Generation 1: Rule-Based Systems (1990s-2010s)

The earliest automated coding systems used decision trees and keyword matching. Products like 3M's CodeFinder and Optum's EncoderPro used structured rules: if a note contains "fracture" and "femur" and "left," suggest S72.92XA (Unspecified fracture of left femur, initial encounter for closed fracture). These systems were highly precise for straightforward cases but brittle: any variation in documentation language caused them to miss codes entirely. Typical accuracy: 40-55% on unstructured notes.
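A Generation 1 rule of this kind fits in a few lines, and so do its failure modes. In this hypothetical sketch the rule maps the keywords "fracture", "femur" and "left" to S72.92XA. Abbreviations slip straight past it, while a note describing a right femur fracture and a separate left knee complaint triggers it anyway, because the rule sees words, not relationships.

```python
import re

# One Generation 1 style rule (hypothetical): all keywords present -> suggest the code.
RULES = [
    ({"fracture", "femur", "left"}, "S72.92XA"),
]


def suggest(note: str) -> list[str]:
    words = set(re.findall(r"[a-z]+", note.lower()))
    return [code for keywords, code in RULES if keywords <= words]


print(suggest("Closed fracture of the left femur."))     # matches
print(suggest("L femur fx, closed."))                    # missed: abbreviations
print(suggest("Right femur fracture. Left knee pain."))  # false match: wrong side
```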

Generation 2: NLP-Only Systems (2015-2022)

The arrival of clinical NLP — powered first by rule-based NLP tools like cTAKES and MetaMap, then by transformer models like ClinicalBERT — promised to solve the documentation variability problem. These systems could extract medical entities from free text and map them to codes. But as we have discussed, entity extraction without contextual reasoning leads to the 70% accuracy ceiling. Key failure modes:

- Over-coding: Suggesting codes for negated or historical conditions (12-18% error rate)

- Under-coding: Missing codes that require inference from multiple sections of the note (20-25% of missed opportunities)

- Wrong specificity: Defaulting to "unspecified" codes when the documentation supports a more specific code (30% of suggestions)

- Modifier blindness: Ignoring CPT modifiers entirely, which payers use to adjudicate bundling and unbundling

The 70% figure comes from multiple sources. A 2020 study in npj Digital Medicine found that state-of-the-art NLP achieved 69.8% F1 score on ICD coding from discharge summaries. A 2023 AAPC benchmark of commercial NLP products showed 65-73% agreement with human coders on complex multi-code encounters.

Generation 3: Hybrid AI + Human-in-the-Loop (2023-Present)

The current state of the art combines multiple AI techniques with structured human review. This is where the industry is heading, and it is the only approach that achieves 95%+ accuracy in production. We will examine this architecture in detail.

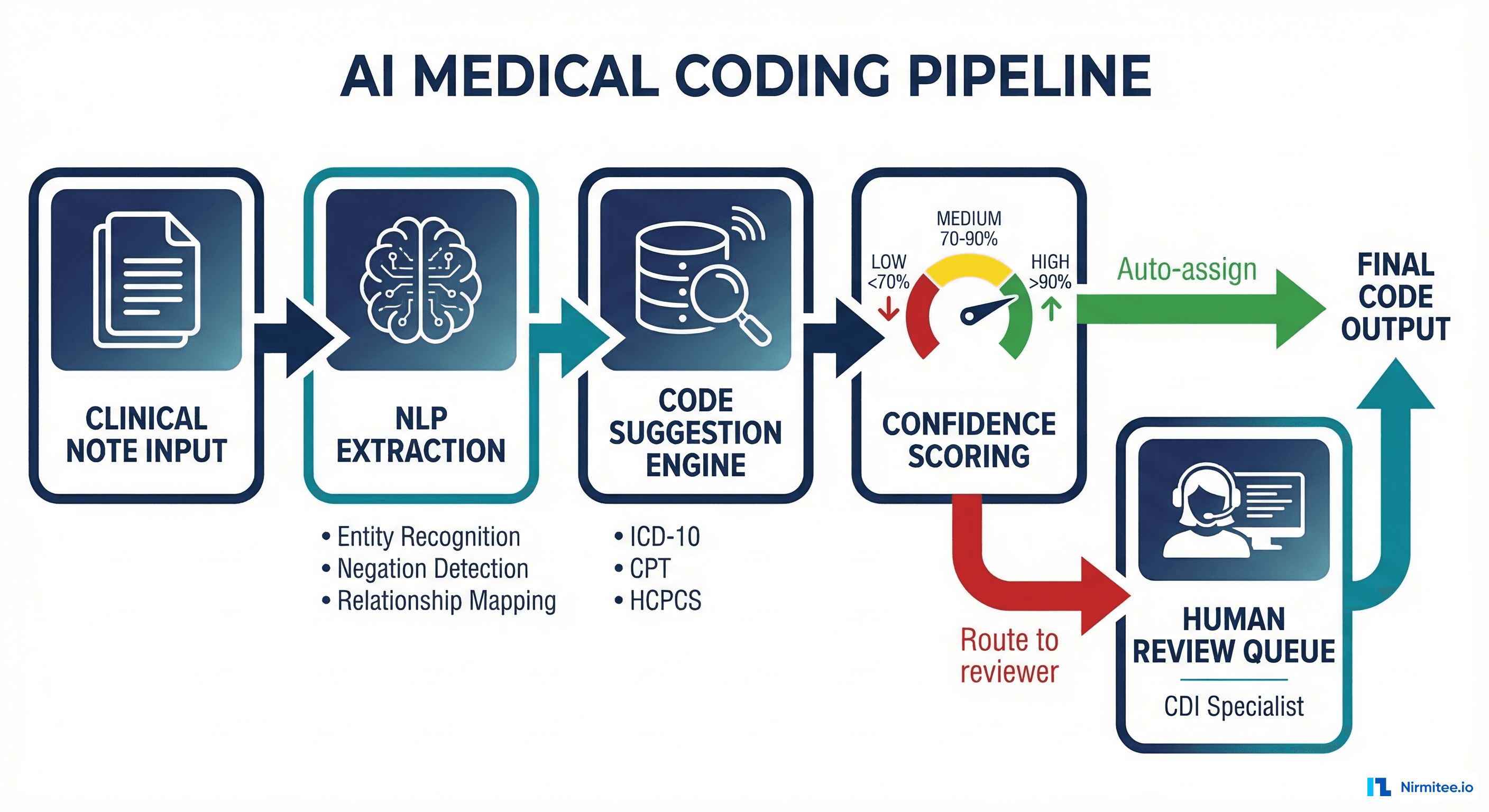

Building a Production-Grade AI Coding Pipeline

A production AI coding system is not a single model — it is a pipeline of specialized components, each handling a different aspect of the coding challenge. Here is the architecture that leading health systems and vendors are converging on.

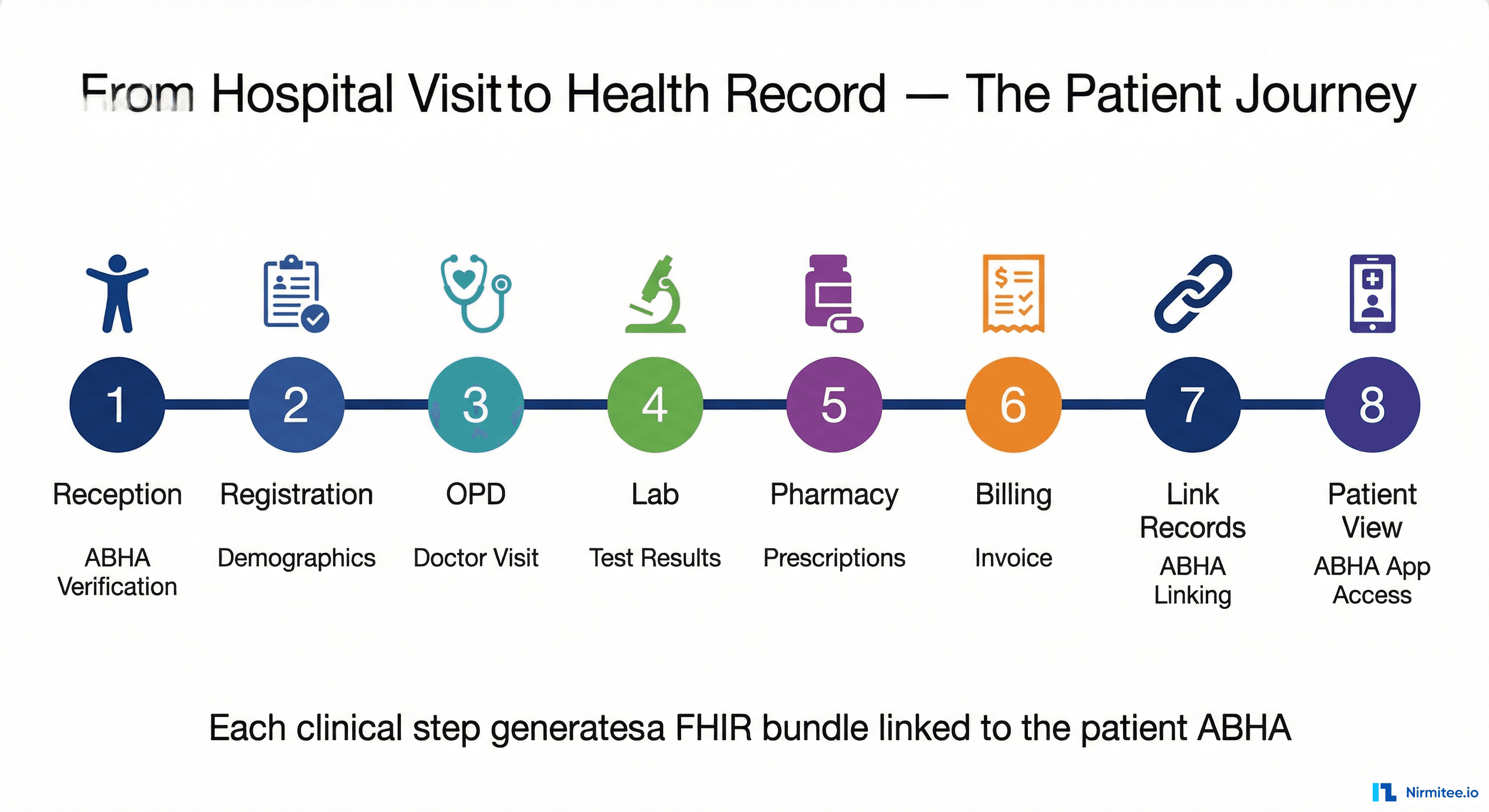

Stage 1: Clinical Document Ingestion

Before any NLP runs, the system must handle the messy reality of clinical documentation. Notes come from multiple sources — Epic, Cerner, Meditech, paper scans via OCR — in different formats. The ingestion layer normalizes these into a common representation, handles deduplication (the same note appearing in multiple systems), and segments the document into clinically meaningful sections: chief complaint, history of present illness, assessment, and plan.

This segmentation matters enormously. The assessment section carries a different coding weight than the review of systems. A well-designed ingestion layer improves downstream coding accuracy by 8-12 percentage points, according to internal benchmarks published by Optum's coding analytics team.
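A minimal sketch of the segmentation step, assuming notes arrive as plain text with "Header:" style section labels. The alias table and the `segment` function are hypothetical; real notes vary by EHR template and author, so production systems typically combine header patterns with a trained section classifier. Keeping sections separate is what lets later stages weight an assessment mention differently from a review-of-systems mention.

```python
from typing import Optional

# Hypothetical header aliases; extend per EHR template.
SECTION_ALIASES = {
    "chief_complaint": {"chief complaint", "cc"},
    "hpi": {"history of present illness", "hpi"},
    "ros": {"review of systems", "ros"},
    "assessment": {"assessment", "impression"},
    "plan": {"plan"},
}


def match_header(label: str) -> Optional[str]:
    """Return the canonical section name for a header label, or None."""
    label = label.strip().lower()
    for name, aliases in SECTION_ALIASES.items():
        if label in aliases:
            return name
    return None


def segment(note: str) -> dict[str, str]:
    """Split a note into sections keyed by canonical name."""
    sections: dict[str, list[str]] = {}
    current = "preamble"  # text before the first recognized header
    for line in note.splitlines():
        label, sep, rest = line.partition(":")
        name = match_header(label) if sep else None
        if name:
            current, line = name, rest
        # Lines like "BP: 142/88" are not headers, so they stay in the current section.
        sections.setdefault(current, []).append(line.strip())
    return {name: " ".join(part for part in parts if part) for name, parts in sections.items()}


note = """CC: chest pain
HPI: 58-year-old with two days of exertional chest pain.
BP: 142/88
ROS: Denies fever.
Assessment: Stable angina.
Plan: Stress test."""
print(segment(note)["assessment"])
```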

Stage 2: Multi-Model NLP Extraction

Rather than relying on a single NLP model, production systems use an ensemble approach:

- Entity recognition: Identifying medical concepts (diseases, procedures, medications, anatomy) using models fine-tuned on clinical text. ClinicalBERT and BiomedBERT are common base models.

- Negation detection: Separate model specifically trained on clinical negation patterns ("no," "denies," "rules out," "unlikely").

- Temporal reasoning: Determining whether a condition is current, historical, or planned — critical for history-of codes (Z85-Z87 range).

- Relationship extraction: Linking findings to body sites, severities, and lateralities. "Mild nonproliferative diabetic retinopathy, right eye" requires extracting four separate attributes and combining them.
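Once relationship extraction has produced the attributes, composing the final code is mechanical. The hypothetical sketch below covers only type 2 diabetes with nonproliferative retinopathy (the E11.32- to E11.34- subcategories), where the fifth character encodes severity, the sixth encodes macular edema, and the seventh encodes laterality. Proliferative retinopathy (E11.35-) uses a different sixth-character scheme and is deliberately left out; the hard part, extracting the attributes from text, is assumed to have happened upstream.

```python
from dataclasses import dataclass

# 5th character: NPDR severity within E11.3- (type 2 diabetes, ophthalmic complications).
NPDR_SEVERITY = {"mild": "2", "moderate": "3", "severe": "4"}
# 6th character: 1 = with macular edema, 9 = without macular edema.
MACULAR_EDEMA = {True: "1", False: "9"}
# 7th character: laterality.
LATERALITY = {"right": "1", "left": "2", "bilateral": "3", "unspecified": "9"}


@dataclass
class RetinopathyFinding:
    severity: str        # "mild" | "moderate" | "severe"
    macular_edema: bool
    laterality: str      # "right" | "left" | "bilateral" | "unspecified"


def type2_npdr_code(finding: RetinopathyFinding) -> str:
    """Compose an E11.3xxx code; raises KeyError rather than guessing on unknown values."""
    return (
        "E11.3"
        + NPDR_SEVERITY[finding.severity]
        + MACULAR_EDEMA[finding.macular_edema]
        + LATERALITY[finding.laterality]
    )


print(type2_npdr_code(RetinopathyFinding("mild", True, "right")))
```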

Stage 3: Code Suggestion Engine

The extracted clinical concepts feed into a code mapping engine that considers:

- Direct concept-to-code mapping: Using UMLS (Unified Medical Language System) and SNOMED-to-ICD-10 crosswalks

- Coding guidelines logic: Applying the 131 pages of ICD-10-CM guidelines as computational rules — excludes, includes, code-first notes, use-additional-code instructions

- Payer-specific rules: Medicare, Medicaid, and commercial payers have different medical necessity requirements. A code that is payable under Medicare Part B may require a different modifier or supporting diagnosis for UnitedHealthcare.

- Historical patterns: What codes were previously assigned for similar encounters by this provider, in this specialty, at this facility? Pattern matching against coded encounter databases improves accuracy by identifying facility-specific documentation habits.
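Guideline logic is usually encoded as a rule layer that runs after the models. The sketch below implements two rules of that kind under stated assumptions: E11.9 (type 2 diabetes without complications) is dropped when an E11 complication code is also present, since the two statements contradict each other, and E11.621 triggers a reminder that the ulcer site needs an additional code. The `USE_ADDITIONAL` table is a hypothetical, simplified stand-in for the Tabular List instructions, not an extract from them.

```python
# Hypothetical, simplified "use additional code" table: code -> (required prefix, reason).
USE_ADDITIONAL = {"E11.621": ("L97", "ulcer site")}


def apply_coding_rules(codes: list[str]) -> tuple[list[str], list[str]]:
    """Return (cleaned codes, notes for the coder)."""
    kept = list(codes)
    notes = []
    complications = [c for c in kept if c.startswith("E11.") and c != "E11.9"]
    if "E11.9" in kept and complications:
        # "Without complications" cannot stand alongside a complication code.
        kept.remove("E11.9")
        notes.append("removed E11.9: contradicts " + ", ".join(complications))
    for code, (prefix, reason) in USE_ADDITIONAL.items():
        if code in kept and not any(c.startswith(prefix) for c in kept):
            notes.append(f"{code}: add a code from {prefix}- for the {reason}")
    return kept, notes


print(apply_coding_rules(["E11.9", "E11.621"]))
```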

Stage 4: Confidence Scoring and Routing

This is where the "human-in-the-loop" architecture becomes concrete. Every code suggestion gets a confidence score:

- High confidence (>90%): Auto-assigned, queued for post-coding audit sampling (typically 5-10% sample rate)

- Medium confidence (70-90%): Presented to a coder with the AI's suggestion pre-filled, supporting evidence highlighted in the note. Coder accepts, modifies, or rejects.

- Low confidence (<70%): Routed to a Clinical Documentation Improvement (CDI) specialist who may query the physician for additional documentation before coding.

The routing thresholds are critical and must be calibrated per specialty. Orthopedic coding — with its extensive laterality, fracture type, and encounter-type variations — may need a higher confidence threshold than straightforward primary care visits. Organizations that implement adaptive thresholds see 12-15% fewer denials compared to those using fixed cutoffs.
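The three-tier routing reduces to a small function once thresholds are configuration rather than code. In this sketch the default cutoffs mirror the tiers described (above 0.90 auto-assign, 0.70 to 0.90 coder review, below 0.70 CDI), while the orthopedics override and the 5% audit sample rate are illustrative assumptions; in practice both would be calibrated per specialty against audit results.

```python
import random

DEFAULT_THRESHOLDS = (0.70, 0.90)  # (low, high) cutoffs from the tiers above
SPECIALTY_THRESHOLDS = {"orthopedics": (0.80, 0.95)}  # hypothetical stricter override
AUDIT_SAMPLE_RATE = 0.05  # post-coding audit sample, within the 5-10% range


def route(confidence: float, specialty: str, rng: random.Random) -> str:
    """Map a code suggestion's confidence to a work queue."""
    low, high = SPECIALTY_THRESHOLDS.get(specialty, DEFAULT_THRESHOLDS)
    if confidence > high:
        # Auto-assigned, but a random sample is still pulled for audit.
        return "auto_assign_audit" if rng.random() < AUDIT_SAMPLE_RATE else "auto_assign"
    if confidence >= low:
        return "coder_review"
    return "cdi_review"


rng = random.Random(42)
print(route(0.92, "primary_care", rng))  # auto-assigned (possibly sampled for audit)
print(route(0.92, "orthopedics", rng))   # coder_review under the stricter override
print(route(0.55, "primary_care", rng))  # cdi_review
```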

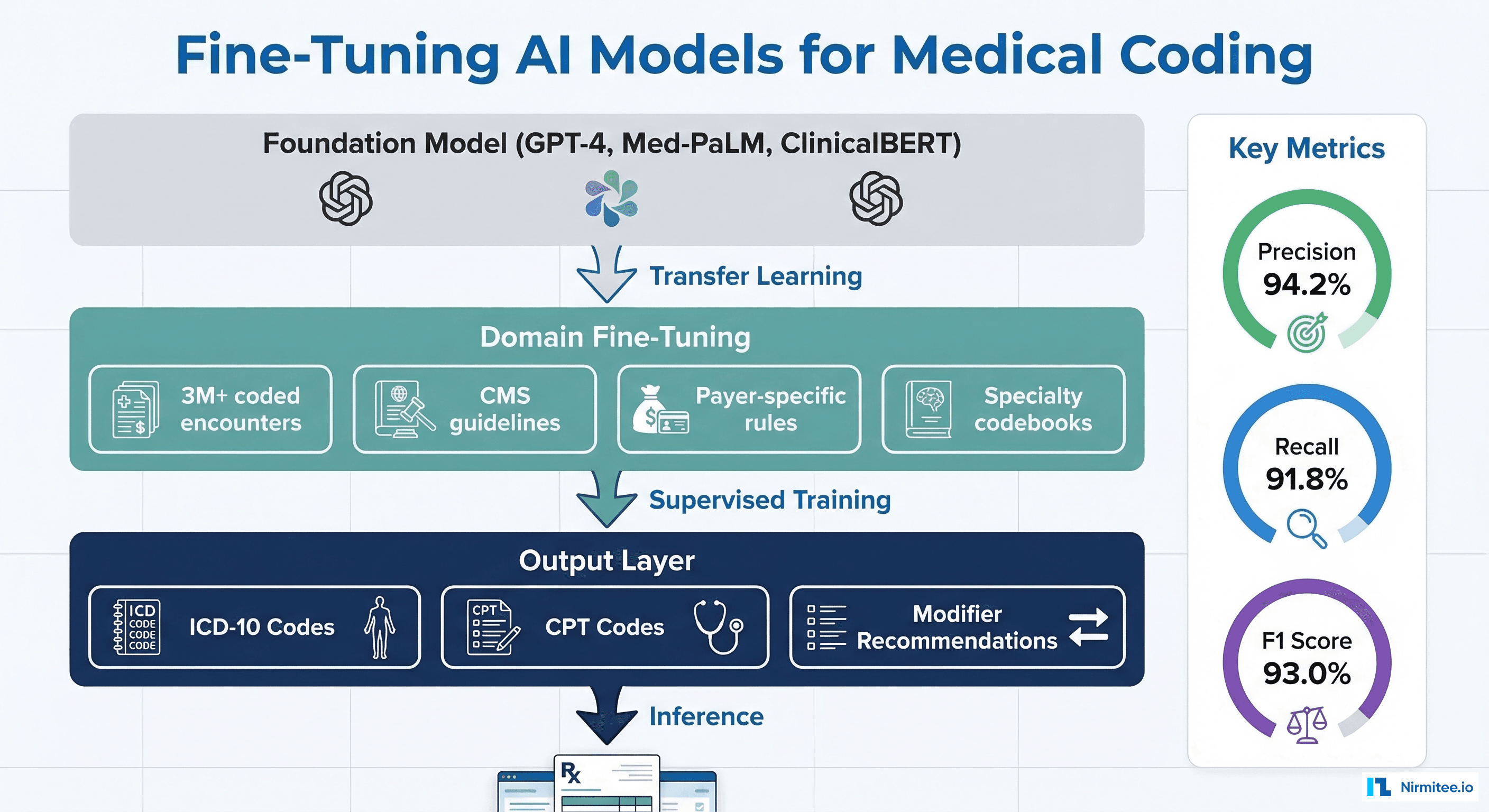

Fine-Tuning AI Models on Coding Data

Off-the-shelf language models, even clinical ones, perform poorly on medical coding without domain-specific fine-tuning. The reason is that coding requires a very specific type of knowledge that general medical training does not provide: the mapping between clinical language and billing codes, including all the regulatory logic that governs which codes are valid together.

Training Data Requirements

Effective fine-tuning requires:

- Volume: Minimum 500,000 coded encounters, ideally 3M+, spanning multiple specialties and payer types

- Quality: Encounters coded by certified coders (CCS, CPC) and validated through audit, not just accepted claims (which may include coding errors that were never caught)

- Diversity: Multi-facility data that captures documentation style variations across Epic, Cerner, and other EHR platforms

- Recency: Annual code set changes mean training data older than 2 years includes deprecated codes and outdated guidelines
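The quality and recency requirements translate naturally into a filter applied before fine-tuning. This sketch assumes a hypothetical `CodedEncounter` record with an `audited` flag and a set of codes valid in the current release; the two-year cutoff is approximated as 730 days. Logging a reason for every rejection makes it easier to notice when the filter is discarding an entire specialty or facility, which would undercut the diversity requirement.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class CodedEncounter:
    encounter_id: str
    encounter_date: date
    codes: list[str]
    audited: bool  # validated by a certified coder, not merely paid


def select_training_set(encounters, valid_codes, as_of, max_age_days=730):
    """Keep audited, recent encounters whose labels are all valid in the current release."""
    cutoff = as_of - timedelta(days=max_age_days)
    kept, rejected = [], {}
    for enc in encounters:
        invalid = [c for c in enc.codes if c not in valid_codes]
        if not enc.audited:
            rejected[enc.encounter_id] = "not audited"
        elif enc.encounter_date < cutoff:
            rejected[enc.encounter_id] = "older than cutoff"
        elif invalid:
            rejected[enc.encounter_id] = "codes not in current release: " + ", ".join(invalid)
        else:
            kept.append(enc)
    return kept, rejected


valid = {"E11.9", "E11.65", "I10"}
encounters = [
    CodedEncounter("a", date(2025, 6, 1), ["E11.65", "I10"], True),
    CodedEncounter("b", date(2025, 6, 1), ["E11.9"], False),
    CodedEncounter("c", date(2022, 1, 15), ["I10"], True),
    CodedEncounter("d", date(2025, 6, 1), ["E11.XX"], True),
]
kept, rejected = select_training_set(encounters, valid, as_of=date(2025, 10, 1))
print([e.encounter_id for e in kept], rejected)
```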

Model Architecture Choices

The field has largely converged on two approaches:

- Fine-tuned encoder models (BERT-family): Best for classification tasks where you need to assign one or more codes from a fixed set. Models like PLM-ICD (a PubMedBERT variant fine-tuned specifically for ICD coding) achieve state-of-the-art results on the MIMIC-III benchmark.

- Retrieval-augmented generation (RAG): Using a large language model with a retrieval layer that pulls relevant coding guidelines and similar past encounters. This approach handles novel documentation patterns better but requires more infrastructure and has higher latency. For organizations building AI systems in healthcare, the RAG approach offers more flexibility when guidelines change.
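The retrieval half of a RAG pipeline can be sketched without committing to any particular model. Below, a bag-of-words cosine similarity stands in for the dense embeddings a production system would use, the three guideline snippets are paraphrased placeholders rather than official guideline text, and the code stops at building the prompt: which LLM receives it, and how its answer is validated, are left open.

```python
import math
import re
from collections import Counter

# Paraphrased placeholder snippets standing in for an indexed guideline corpus.
GUIDELINE_SNIPPETS = [
    "Personal history codes (Z85-Z87) are used when a condition no longer exists "
    "and the patient is not receiving treatment for it.",
    "Conditions documented as ruled out are not coded.",
    "Assign the most specific code the documentation supports, including laterality.",
]


def vectorize(text: str) -> Counter:
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(count * b[token] for token, count in a.items())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(query: str, k: int = 2) -> list[str]:
    q = vectorize(query)
    return sorted(GUIDELINE_SNIPPETS, key=lambda s: cosine(q, vectorize(s)), reverse=True)[:k]


def build_prompt(note_excerpt: str, k: int = 2) -> str:
    context = "\n".join(f"- {s}" for s in retrieve(note_excerpt, k))
    return (
        f"Coding guidance:\n{context}\n\nNote excerpt:\n{note_excerpt}\n\n"
        "Suggest ICD-10-CM codes and cite the supporting text."
    )


print(build_prompt("History of breast cancer, in remission.", k=1))
```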

Key Metrics for Model Evaluation

| Metric | What It Measures | Target |
|---|---|---|
| Precision (per code) | Of codes suggested, how many are correct? | >94% |
| Recall (per code) | Of codes that should be assigned, how many were found? | >91% |
| F1 Score | Harmonic mean of precision and recall | >92% |
| Exact Match Ratio | % of encounters where the full code set matches exactly | >78% |
| Specificity Rate | % of codes assigned at highest valid specificity level | >88% |

Note the distinction between per-code metrics and exact match. A system can have 94% precision per code but only 78% exact match because complex encounters carry 5-12 codes, and every one of them must be right at once. For intuition: if each of eight codes on an encounter were independently correct 97% of the time, all eight would be correct only about 78% of the time (0.97^8 ≈ 0.78). This is why human review remains essential: the AI does the heavy lifting, but a certified coder validates the complete code set.
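The table's metrics are straightforward to compute once gold and predicted code sets are aligned per encounter. This sketch micro-averages precision, recall and F1 by pooling every code assignment across encounters, and counts an encounter as an exact match only when the predicted set equals the gold set. The toy data is invented purely to exercise the function.

```python
def coding_metrics(gold: list[set[str]], pred: list[set[str]]) -> dict[str, float]:
    """Micro-averaged precision/recall/F1 over codes, plus exact-match ratio over encounters."""
    if len(gold) != len(pred):
        raise ValueError("gold and pred must cover the same encounters")
    tp = sum(len(g & p) for g, p in zip(gold, pred))  # correct codes
    fp = sum(len(p - g) for g, p in zip(gold, pred))  # suggested but wrong
    fn = sum(len(g - p) for g, p in zip(gold, pred))  # missed
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    exact = sum(g == p for g, p in zip(gold, pred)) / len(gold) if gold else 0.0
    return {"precision": precision, "recall": recall, "f1": f1, "exact_match": exact}


gold = [{"E11.65", "I10"}, {"Z85.3"}, {"E11.3211", "E11.65", "I10"}]
pred = [{"E11.65", "I10"}, {"C50.911"}, {"E11.3211", "E11.9", "I10"}]
print(coding_metrics(gold, pred))
```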

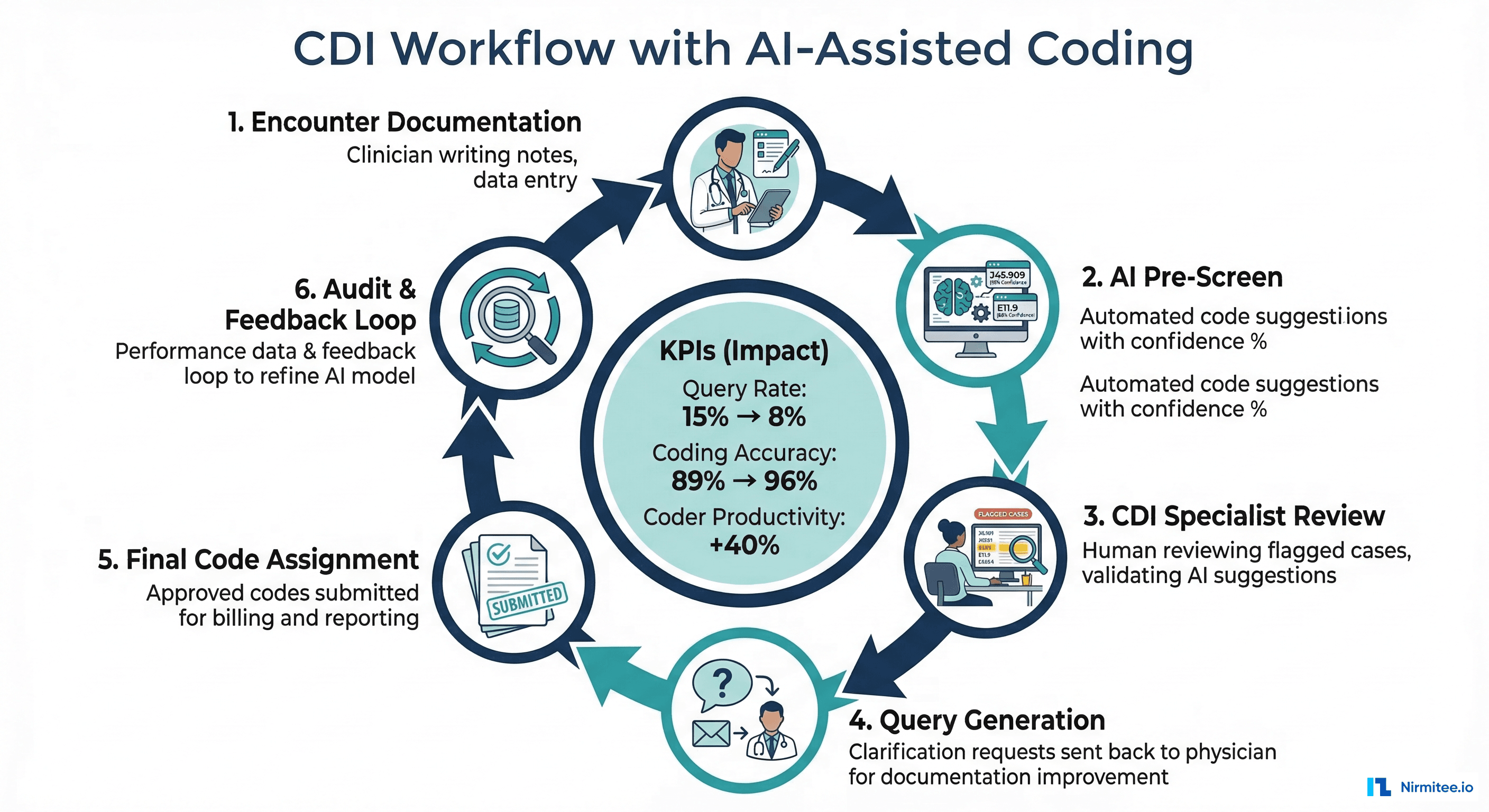

The CDI Workflow: Where Human Expertise Meets AI

Clinical Documentation Improvement (CDI) is the process of ensuring that clinical documentation accurately reflects the patient's clinical status and supports the assigned codes. AI has transformed CDI from a retrospective audit function into a concurrent, real-time workflow.

Concurrent CDI with AI

In a modern CDI workflow:

- Day 0 (Admission): AI scans the H&P and initial orders, generates preliminary DRG prediction for inpatient cases

- Day 1-2: CDI specialist reviews AI-flagged documentation gaps — e.g., "Clinical indicators suggest sepsis (WBC 18K, lactate 4.2, tachycardia) but physician documented 'infection' — query recommended."

- Day 3+: Physician responds to query, documentation updated, AI recalculates DRG impact

- Discharge: Final code set generated by AI, reviewed by coder, submitted to payer
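The sepsis example above is the kind of documentation gap a concurrent CDI rule can surface. The sketch below flags a query when the physician has documented "infection" but not "sepsis" while at least two simplified SIRS criteria are met and lactate is elevated. Treat it as an illustrative trigger for human review, not a clinical definition: the lactate cutoff is an assumed parameter, the SIRS check omits PaCO2 and band counts, and organizations differ on whether they use SIRS-based or Sepsis-3 criteria.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Vitals:
    temp_c: float
    heart_rate: int
    resp_rate: int
    wbc_k: float                     # white cells, thousands per microliter
    lactate: Optional[float] = None  # mmol/L


LACTATE_FLAG = 2.0  # assumed threshold for illustration


def sirs_count(v: Vitals) -> int:
    """Count simplified SIRS criteria (PaCO2 and bands omitted)."""
    return sum([
        v.temp_c > 38.0 or v.temp_c < 36.0,
        v.heart_rate > 90,
        v.resp_rate > 20,
        v.wbc_k > 12.0 or v.wbc_k < 4.0,
    ])


def sepsis_query_needed(v: Vitals, documented_terms: set[str]) -> bool:
    """Flag a CDI query when indicators suggest sepsis that is not documented."""
    if "sepsis" in documented_terms:
        return False
    lactate_high = v.lactate is not None and v.lactate > LACTATE_FLAG
    return "infection" in documented_terms and sirs_count(v) >= 2 and lactate_high


print(sepsis_query_needed(Vitals(38.4, 112, 22, 18.0, 4.2), {"infection"}))
```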

Health systems using AI-assisted concurrent CDI report query rates dropping from 15% to 8% (because the AI can identify which queries will actually change the DRG assignment), coding accuracy improving from 89% to 96%, and coder productivity increasing by 40% (measured in relative value units processed per hour).

The Compliance Dimension

AI coding must operate within strict regulatory guardrails. The Office of Inspector General (OIG) has explicitly stated that AI-assisted coding does not absolve providers of responsibility for code accuracy. Key compliance considerations include:

- Audit trails: Every AI suggestion must be logged with the evidence that supported it, the confidence score, and whether a human accepted, modified, or rejected it

- Upcoding detection: The system must flag cases where AI suggestions systematically shift the case mix index (CMI) upward — a red flag for auditors

- Coder attestation: Certified coders must attest that they reviewed the AI output and agree with the final code assignment. "Rubber-stamping" AI suggestions without review is a compliance violation.

- Regular validation: Monthly comparison of AI-suggested codes vs. external audit findings, with model retraining when accuracy drops below thresholds
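Two of these guardrails lend themselves to a short sketch: a structured audit record for each suggestion, and a case mix index check that compares current coding against a baseline period. CMI here is the mean DRG relative weight across discharges; the `SuggestionAudit` fields and the 3% alert threshold are assumptions. A rising CMI is not proof of upcoding, since real acuity can change, so the alert should open a review rather than settle one.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from statistics import mean
from typing import Optional


@dataclass
class SuggestionAudit:
    encounter_id: str
    suggested_code: str
    confidence: float
    evidence: list[str]          # note excerpts that supported the suggestion
    decision: str                # "accepted", "modified", or "rejected"
    reviewer_id: str
    final_code: Optional[str] = None
    logged_at: datetime = field(default_factory=lambda: datetime.now(timezone.utc))


def case_mix_index(drg_weights: list[float]) -> float:
    """CMI: average DRG relative weight across discharges."""
    return mean(drg_weights)


def cmi_drift(baseline: list[float], current: list[float], max_increase: float = 0.03):
    """Return (alert, relative_change) comparing current CMI with a baseline period."""
    base = case_mix_index(baseline)
    change = (case_mix_index(current) - base) / base
    return change > max_increase, change


record = SuggestionAudit("enc-1", "E11.65", 0.93, ["glucose 312 mg/dL"], "accepted", "coder-7", "E11.65")
print(cmi_drift([1.0, 1.2, 1.4], [1.1, 1.3, 1.6]))
```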

For organizations navigating HIPAA compliance requirements, AI coding systems add another layer of complexity — the training data, model inputs, and audit logs all contain PHI and must be secured accordingly.

The Economics: ROI of AI Medical Coding

The business case for AI coding is compelling, but the numbers depend heavily on implementation quality.

Direct Cost Savings

- Coder labor: A certified coder earns $55,000-$80,000/year. AI augmentation increases productivity by 35-45%, meaning a 10-coder team can handle the volume that previously required roughly 13.5-14.5 coders. Valued at salary alone, those 3.5-4.5 avoided hires are worth about $190,000-$360,000 per year per 10-coder team.

- Denial prevention: Coding-related denials cost $25-$50 per denial in rework (appeal, resubmission, staff time). A 200-bed hospital with 5,000 coding-related denials/year saves $125,000-$250,000 in rework costs alone.

- Revenue capture: Under-coding is more common than over-coding. AI systems that capture missed specificity codes typically increase net revenue by 2-4% of total coded revenue.
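The savings arithmetic is simple enough to rerun with local inputs. In this sketch, freed capacity is team size times productivity gain, valued at salary only (a simplification that ignores benefits and overhead), and rework savings are denials avoided times the rework cost per denial. The function names are illustrative.

```python
def freed_coder_capacity(team_size: int, productivity_gain: float) -> float:
    """Coder FTEs of extra volume the same team can absorb."""
    return team_size * productivity_gain


def labor_savings(team_size: int, productivity_gain: float, salary: float) -> float:
    """Avoided hiring cost, valued at salary only (no benefits or overhead)."""
    return freed_coder_capacity(team_size, productivity_gain) * salary


def rework_savings(denials_avoided: int, cost_per_denial: float) -> float:
    return denials_avoided * cost_per_denial


# Low and high ends of the input ranges quoted above.
for gain, salary in [(0.35, 55_000), (0.45, 80_000)]:
    print(f"gain {gain:.0%}, salary ${salary:,}: ${labor_savings(10, gain, salary):,.0f}")
for cost in (25, 50):
    print(f"rework at ${cost}/denial: ${rework_savings(5_000, cost):,.0f}")
```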

Implementation Costs

Reality check: AI coding is not cheap to implement well.

- Vendor licensing: $150,000-$500,000/year for enterprise solutions (3M, Optum, Fathom Health, Nym Health)

- Integration: 3-6 months of EHR integration work, typically $100,000-$300,000 in professional services

- Training data preparation: 2-4 months of coding team involvement to validate training data and calibrate confidence thresholds

- Ongoing model management: Annual code set updates require model retraining — budget $50,000-$100,000/year for model operations

For organizations building custom solutions — particularly those that need to integrate coding AI with their existing EHR integration infrastructure — the development cost is higher but the long-term total cost of ownership can be lower. Health systems with strong engineering teams are increasingly building proprietary coding AI using open-source models and their own coded encounter databases.

What Is Coming Next: LLMs and Medical Coding

Large language models (GPT-4, Med-PaLM 2, Llama 3) have introduced a new paradigm for medical coding. Unlike previous approaches that treat coding as a classification problem, LLMs can reason about clinical documentation in natural language, apply complex guidelines, and explain their code assignments.

Early results are promising but indirect. Google's Med-PaLM 2 scored 86.5% on MedQA, a benchmark of USMLE-style medical exam questions; that demonstrates clinical knowledge rather than coding accuracy, and it says little about performance against the 95% threshold needed for production coding. The key advantage of LLMs is in handling complex cases that require multi-step reasoning: sequencing rules, Excludes/Includes logic, and documentation interpretation.

The key challenges for LLM-based coding remain:

- Hallucination: LLMs can confidently suggest codes that do not exist or do not apply. This is a non-negotiable failure mode in a compliance-regulated domain.

- Latency: Production coding requires sub-second response times for concurrent CDI. Current LLMs are too slow for real-time coding at scale without significant infrastructure investment.

- Cost: Running GPT-4-class models on millions of encounters is expensive — $0.50-$2.00 per encounter versus $0.02-$0.05 for fine-tuned BERT models.

- PHI exposure: Sending clinical notes to external LLM APIs raises HIPAA concerns. On-premise deployment of large models requires substantial GPU infrastructure.

The most likely near-term architecture is a hybrid: fine-tuned encoder models for high-volume straightforward cases, with LLM-powered reasoning for complex cases that fall below the confidence threshold. This mirrors the human workflow — most coding is routine, but the hard cases require deep thinking. Organizations already investing in fine-tuning AI for clinical applications are well-positioned to extend those capabilities to coding.
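A minimal sketch of that hybrid, assuming two hypothetical model callables: an `encoder` that returns (code, confidence) pairs and an `llm` that returns candidate codes. High-confidence encoder output passes straight through; otherwise the LLM is consulted, any code it proposes that is absent from the current code set is rejected as a hallucination, and the merged result goes to human review rather than being auto-assigned. The 0.90 threshold is an assumption.

```python
from typing import Callable

# Hypothetical model interfaces: any callables with these shapes will do.
EncoderFn = Callable[[str], list[tuple[str, float]]]  # note -> [(code, confidence)]
LLMFn = Callable[[str], list[str]]                    # note -> [code]


def code_encounter(note: str, encoder: EncoderFn, llm: LLMFn,
                   valid_codes: set[str], threshold: float = 0.90) -> dict:
    suggestions = encoder(note)
    confident = [code for code, p in suggestions if p >= threshold]
    if all(p >= threshold for _, p in suggestions):
        return {"codes": sorted(confident), "route": "encoder", "rejected": []}
    # Low-confidence case: ask the LLM, but never trust a code outside the code set.
    llm_codes = llm(note)
    rejected = [code for code in llm_codes if code not in valid_codes]
    accepted = [code for code in llm_codes if code in valid_codes]
    return {
        "codes": sorted(set(confident) | set(accepted)),
        "route": "llm_then_human_review",
        "rejected": rejected,
    }


valid = {"E11.65", "I25.10"}
encoder = lambda note: [("E11.65", 0.97), ("I25.10", 0.62)]
llm = lambda note: ["E11.65", "I25.10", "E11.999"]
print(code_encounter("...", encoder, llm, valid))
```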


Frequently Asked Questions

Can AI completely replace human medical coders?

No, not with current technology. AI can automate 60-75% of routine coding tasks and significantly augment coder productivity, but complex cases, compliance review, and coding quality oversight require certified human coders. The role is shifting from "code every encounter" to "validate AI output, handle exceptions, and ensure compliance." AHIMA projects that coder roles will evolve but not disappear through at least 2030.

What accuracy level is needed for production AI coding?

Most health systems require 95%+ accuracy (measured as code-level F1 score) before allowing AI to auto-assign codes. Below that threshold, AI is used as a suggestion engine with mandatory human review. The 95% threshold is not arbitrary — it reflects the point at which AI-introduced errors are fewer than human-only coding errors (which run 5-7% in most organizations).

How does AI coding handle annual code set updates?

Annual ICD-10 updates (effective October 1) and CPT updates (effective January 1) require model retraining. Best practices include: (1) pre-release training on new code descriptions 60 days before go-live, (2) parallel running of old and new models during a 30-day transition period, and (3) increased human review rates for encounters involving new codes during the first 90 days.
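The transition steps start with knowing exactly what changed. This sketch diffs two code-set releases represented as hypothetical `{code: description}` dictionaries into added, deleted, and revised codes, then applies an assumed review policy: during the first 90 days after go-live, any encounter touching a new or revised code is sent for human review, while everything else keeps the base audit rate. The "ZZ" codes are placeholders, not real ICD-10-CM codes.

```python
def diff_code_sets(old: dict[str, str], new: dict[str, str]) -> dict[str, set[str]]:
    """Compare two releases keyed by code, valued by description."""
    shared = old.keys() & new.keys()
    return {
        "added": set(new.keys() - old.keys()),
        "deleted": set(old.keys() - new.keys()),
        "revised": {code for code in shared if old[code] != new[code]},
    }


def review_rate(codes, changed, days_since_go_live, base_rate=0.05, window_days=90):
    """Hypothetical policy: review every encounter touching changed codes during the window."""
    if days_since_go_live < window_days and any(code in changed for code in codes):
        return 1.0
    return base_rate


old = {"ZZ1": "Condition A", "ZZ2": "Condition B", "ZZ3": "Condition C"}
new = {"ZZ1": "Condition A", "ZZ2": "Condition B, revised", "ZZ4": "Condition D"}
diff = diff_code_sets(old, new)
changed = diff["added"] | diff["revised"]
print(diff, review_rate(["ZZ4"], changed, days_since_go_live=10))
```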

Does AI coding work for all specialties?

Accuracy varies significantly by specialty. Primary care and general medicine coding, which involves a relatively constrained set of high-frequency codes, achieves the highest AI accuracy (92-97%). Surgical specialties with complex modifier logic (orthopedics, cardiothoracic) run lower (85-91%). Behavioral health coding, which depends heavily on subjective clinical assessments, remains the most challenging (78-85%).

What is the ROI timeline for AI coding implementation?

Most organizations see positive ROI within 12-18 months, with the caveat that the first 6 months are typically a net cost (integration, training, calibration). The fastest ROI comes from denial reduction (measurable within 90 days of go-live) and revenue capture improvements from better code specificity (measurable within 6 months). Full coder productivity gains take 9-12 months as staff adapt to the new workflow.

Conclusion: The Path to Production AI Coding

Medical coding with AI is not a solved problem — it is a rapidly maturing one. The key insight is that NLP alone is necessary but insufficient. Production-grade AI coding requires a pipeline architecture: document ingestion, multi-model NLP extraction, guideline-aware code suggestion, confidence-based routing, and structured human review. Organizations that treat AI coding as a technology deployment miss the point — it is a workflow transformation that requires changes to coder roles, CDI processes, compliance programs, and physician documentation practices.

The organizations seeing the best results are those that invest in three things simultaneously: (1) high-quality training data from their own coded encounter history, (2) tight EHR integration that gives the AI system real-time access to the complete clinical picture, and (3) a CDI program that uses AI-generated insights to improve documentation at the point of care, not just code it better after the fact.

For technology teams building in the healthcare revenue cycle space, the architecture described in this article is the current state of the art. The future will bring better models, faster inference, and potentially autonomous coding for routine encounters — but the need for domain expertise, regulatory awareness, and human oversight is not going away anytime soon.