In January 2024, a 40-person health tech company deployed their third clinical AI agent into production. It shared a codebase with their first two agents, a patient intake summarizer and a lab result interpreter. Within six weeks, a memory leak in the lab agent started crashing the intake agent during peak hours. Three agents, one process, zero fault isolation. They spent the next four months extracting services under pressure, a migration that would have taken six weeks if planned from the start.

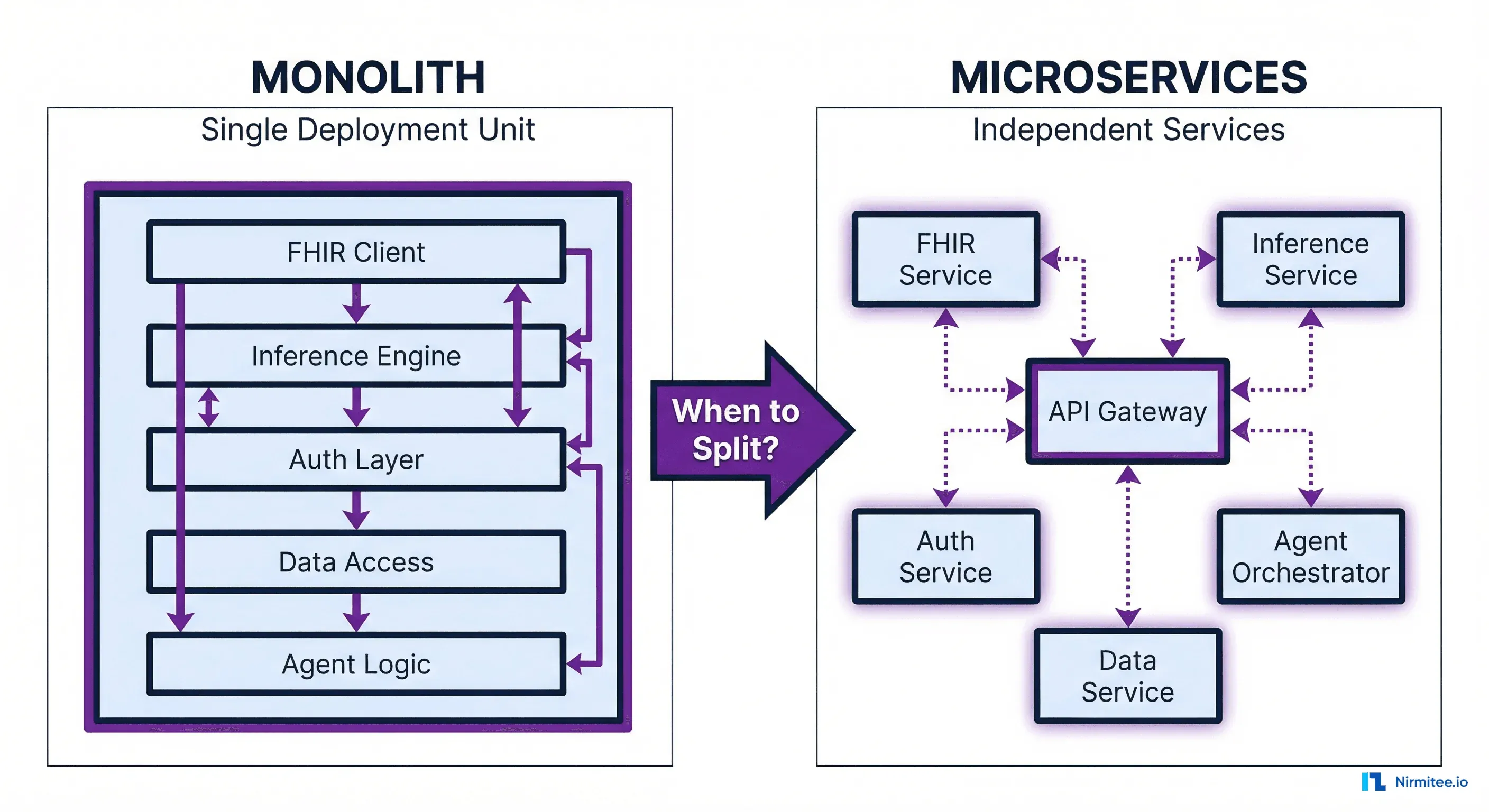

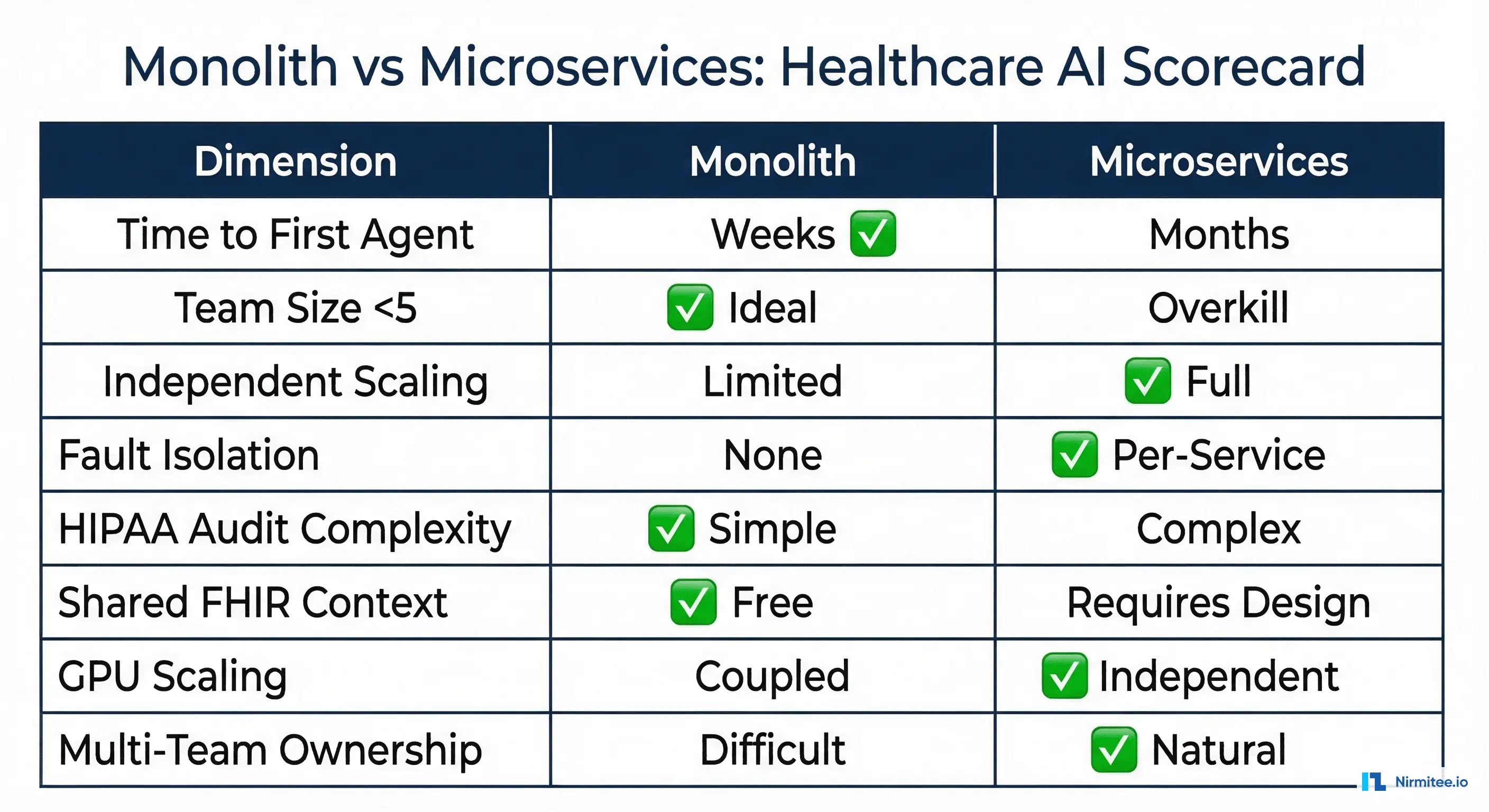

The microservices vs. monolith debate is decades old. But healthcare AI adds constraints that generic architecture advice ignores entirely: HIPAA audit trails across service boundaries, shared FHIR client context for patient data, GPU scaling for inference workloads, and clinical safety requirements that make fault isolation a patient safety concern rather than just an engineering preference.

This guide gives you the decision framework we use with healthcare engineering teams: start with a monolith, go modular, then split selectively, with specific signals for when each transition makes sense.

Why Healthcare AI Architecture Is Different

Generic microservices guidance from Martin Fowler or Sam Newman assumes your services are processing orders or rendering web pages. Healthcare AI agents operate under constraints that fundamentally change the architecture calculus.

Constraint 1: HIPAA Audit Trails Across Service Boundaries

Every time an AI agent accesses Protected Health Information (PHI), that access must be logged with the requesting user, purpose, timestamp, and data elements accessed. In a monolith, this is one audit log. In microservices, every inter-service call that touches PHI needs its own audit entry, correlation IDs across services, and a way to reconstruct the full access chain during HHS audits.

This is not optional complexity. It is a regulatory requirement that adds real engineering cost to every service boundary you create.

Constraint 2: Shared FHIR Client Context

Clinical AI agents rarely operate on isolated data. A medication review agent needs the patient's current MedicationRequest resources, their Condition list, recent Observation values (labs, vitals), and AllergyIntolerance records. If your intake agent and your clinical decision support agent are separate services, they both need FHIR access, and they both need the same patient context.

In a monolith, the FHIR client is shared. In microservices, you either duplicate the client (maintenance burden), create a shared FHIR data service (now you have a distributed system), or pass FHIR bundles between services (serialization overhead and staleness risk).

Constraint 3: Inference Scaling Is GPU-Bound

Your FHIR data layer scales horizontally on commodity hardware. Your inference layer runs on GPUs that cost 10-50x more per hour. When these live in the same process, you scale them together, paying for GPU capacity even when the bottleneck is database queries. Separating inference into its own service lets you scale GPU resources independently, often reducing infrastructure costs by 40-60%.

Constraint 4: Clinical Safety Requires Fault Isolation

When a scheduling agent crashes, patients get inconvenienced. When a clinical decision support agent crashes and takes down the medication ordering workflow, patient safety is at risk. Fault isolation in healthcare is not an optimization; it is a clinical safety requirement that FDA SaMD guidance increasingly expects.

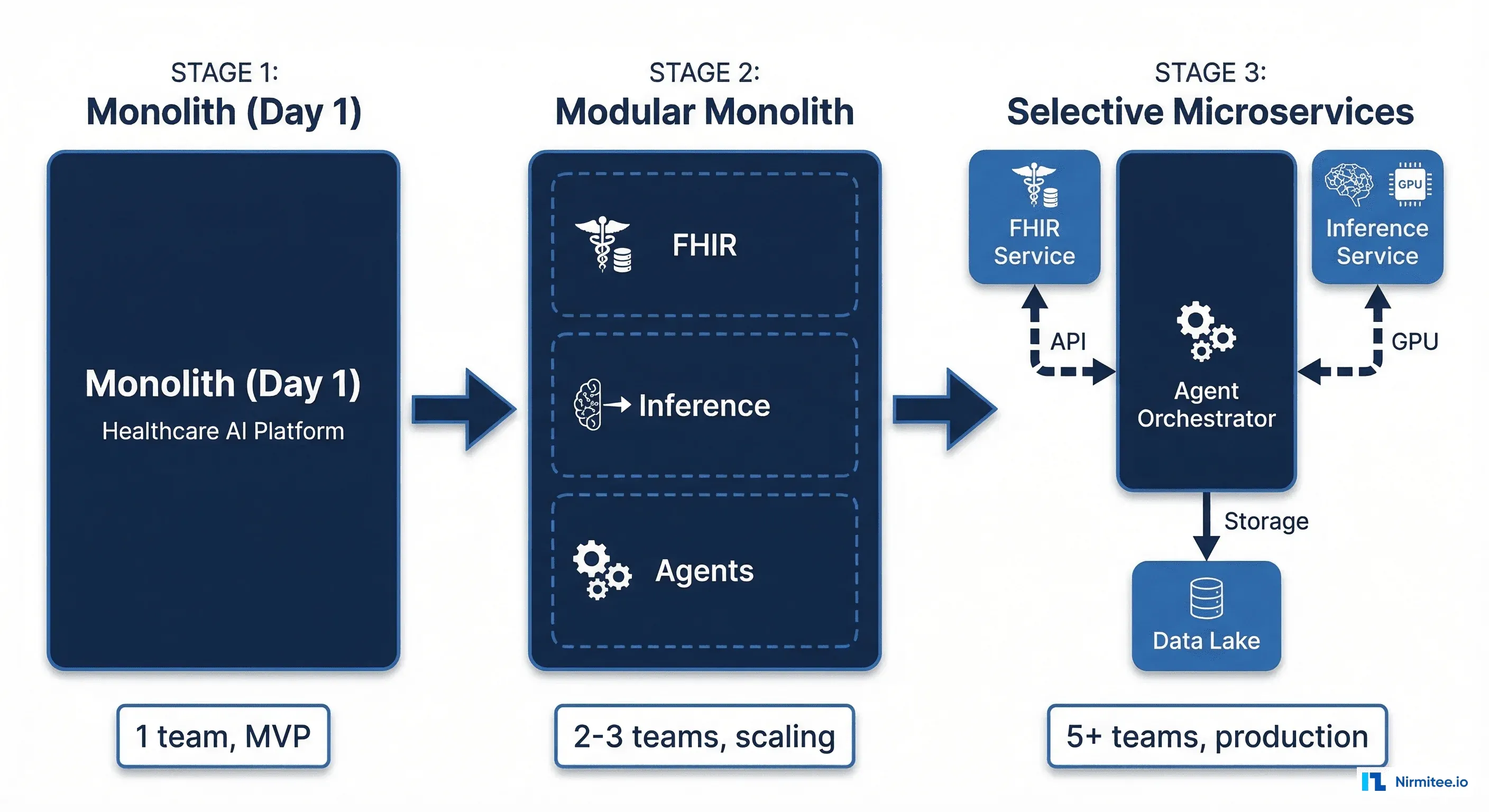

Phase 1: Start With a Monolith (Weeks 1-12)

Every healthcare AI system should start as a monolith. Not because monoliths are better, but because you do not yet know where the service boundaries should be. Drawing service boundaries before you understand your domain produces the wrong boundaries, and moving them later is the most expensive refactoring in software engineering.

What Your Day-1 Monolith Looks Like

```go
// cmd/agent-server/main.go
package main

import (
	"os"

	"agent-platform/internal/agents/intake"
	"agent-platform/internal/agents/labreviewer"
	"agent-platform/internal/fhir"
	"agent-platform/internal/inference"
	"agent-platform/internal/platform"
	"agent-platform/internal/platform/audit"
)

func main() {
	// Single FHIR client shared across all agents
	fhirClient := fhir.NewClient(fhir.Config{
		BaseURL:    os.Getenv("FHIR_SERVER_URL"),
		AuthMethod: "smart-backend",
		Scopes:     []string{"system/*.read", "system/*.write"},
	})

	// Single inference engine
	inferenceEngine := inference.NewEngine(inference.Config{
		ModelPath:  "/models/clinical-bert-v2",
		DeviceType: "cuda",
		MaxBatch:   32,
	})

	// Audit logger: one instance, one log stream
	auditLog := audit.NewHIPAALogger(audit.Config{
		Destination:    "postgresql",
		RetentionYears: 6,
	})

	// Register agents: they share everything
	intakeAgent := intake.New(fhirClient, inferenceEngine, auditLog)
	labAgent := labreviewer.New(fhirClient, inferenceEngine, auditLog)

	server := platform.NewServer(intakeAgent, labAgent)
	server.ListenAndServe(":8080")
}
```

This is the right architecture for a team of 2-5 engineers building their first 1-3 agents. The FHIR client is shared, the audit log is unified, deployment is one binary, and debugging is straightforward. You can ship your first agent to production in weeks instead of months.

When the Monolith Works Perfectly

- Team of 5 or fewer: One team can own the entire codebase without coordination overhead

- 3 or fewer agents: The cognitive load of understanding the full system is manageable

- Single deployment environment: One cloud account, one Kubernetes namespace, one CI/CD pipeline

- Shared scaling profile: All agents have similar compute and memory requirements

- Early-stage product: Requirements are changing weekly, and service boundaries would shift with them

Phase 2: The Modular Monolith (Months 3-9)

Before splitting into services, make your monolith modular. Shopify famously documented this step, and roughly 42% of organizations that rushed to microservices are reportedly now returning to it.

Module Boundaries in Healthcare AI

Draw your module boundaries around these domains:

| Module | Responsibility | Key Dependencies |
|---|---|---|
| FHIR Data | All FHIR read/write operations, resource caching, bundle management | FHIR server, PostgreSQL |
| Inference | Model loading, batching, prediction, confidence scoring | GPU runtime, model registry |
| Agent Core | Agent orchestration, prompt management, tool execution | FHIR Data, Inference |
| Audit | HIPAA logging, access tracking, compliance reporting | PostgreSQL, log aggregator |
| Auth | SMART on FHIR auth, token management, scope enforcement | Identity provider, JWKS |

The key discipline is enforcing module boundaries through Go packages (or Java modules, or Python namespace packages) with explicit public interfaces. No module reaches into another module's internals.

```go
// internal/fhirdata/module.go (public interface)
package fhirdata

import (
	"context"
	"net/url"

	"agent-platform/internal/fhir"
)

type Module interface {
	// GetPatientContext returns all FHIR resources needed for agent reasoning
	GetPatientContext(ctx context.Context, patientID string) (*PatientContext, error)

	// WriteObservation creates a new Observation resource
	WriteObservation(ctx context.Context, obs *fhir.Observation) error

	// SearchResources performs a FHIR search with audit logging
	SearchResources(ctx context.Context, resourceType string, params url.Values) (*fhir.Bundle, error)
}
```

```go
// internal/inference/module.go (public interface)
package inference

import "context"

type Module interface {
	// Predict runs inference on clinical text, returns structured output
	Predict(ctx context.Context, input ClinicalInput) (*PredictionResult, error)

	// BatchPredict handles multiple inputs efficiently on GPU
	BatchPredict(ctx context.Context, inputs []ClinicalInput) ([]*PredictionResult, error)

	// ModelHealth returns current model status and latency percentiles
	ModelHealth(ctx context.Context) (*HealthStatus, error)
}
```

Why Modular Monolith Before Microservices

The modular monolith gives you 80% of the organizational benefits of microservices (clear ownership, independent development, testable boundaries) with none of the distributed systems complexity (network failures, eventual consistency, distributed tracing). When you eventually split, each module becomes a service with a well-defined API that has already been tested in production.

Phase 3: Selective Microservices (Month 9+)

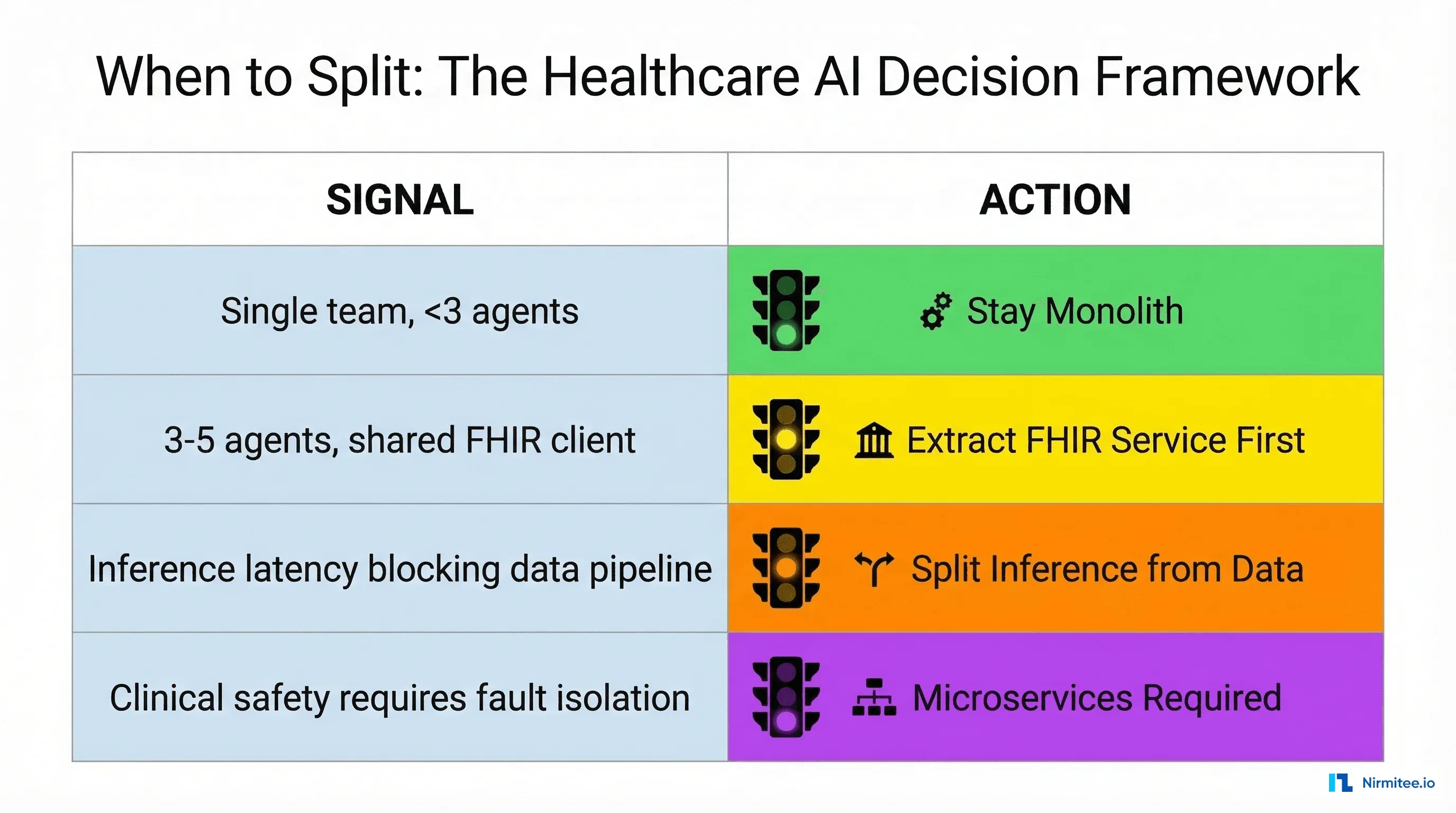

You do not migrate to microservices. You extract specific modules into services when concrete signals tell you to. Here are the five signals we watch for.

Signal 1: Inference and Data Have Different Scaling Curves

Your FHIR data service handles 10,000 reads per minute on a $200/month VM. Your inference engine maxes out its GPU at 500 predictions per minute and needs a $3,000/month GPU instance. Scaling them together means paying for GPU capacity during off-peak data-only workloads. When your GPU bill exceeds $5,000/month, extracting inference into its own service typically saves 40-60% on infrastructure.

Signal 2: Agent Failures Are Cascading

When the lab review agent's model update causes an out-of-memory error that crashes the process, taking down the medication verification agent that was handling active orders, you have a clinical safety problem. Extract the high-risk agent into its own service with independent health checks, circuit breakers, and failure isolation.

Signal 3: Multiple Teams Need Independent Deployment

Two teams working in the same codebase with a single deployment pipeline create merge conflicts, deployment coordination overhead, and the "we can't deploy because team B's feature isn't ready" problem. When you have 3+ teams contributing agents, independent deployment becomes a productivity requirement.

Signal 4: Compliance Requires Physical Isolation

Some healthcare customers require that AI inference happens in specific geographic regions, or that certain agent capabilities run in isolated network segments. A physically separate service with its own infrastructure-as-code definition is the only way to meet these requirements.

Signal 5: FHIR Client Is the Bottleneck

When 5+ agents all share a single FHIR client and connection pool, the slowest FHIR query (a complex $everything operation on a patient with 10 years of history) blocks the fastest (a simple Patient read). Extracting the FHIR layer into a dedicated service with request prioritization, connection pooling, and caching solves this without making every agent team manage their own FHIR client.

The Extraction Playbook: FHIR Service First

When you are ready to extract your first service, start with the FHIR data layer. Here is why: every agent depends on it, it has clear request/response semantics, it benefits from centralized caching, and it does not require GPU resources.

Step 1: Define the gRPC Contract

```protobuf
// proto/fhirdata/v1/service.proto
syntax = "proto3";

package fhirdata.v1;

service FHIRDataService {
  // Patient context for agent reasoning
  rpc GetPatientContext(PatientContextRequest) returns (PatientContextResponse);

  // FHIR search with built-in audit logging
  rpc SearchResources(SearchRequest) returns (SearchResponse);

  // Write-back with optimistic concurrency
  rpc WriteResource(WriteResourceRequest) returns (WriteResourceResponse);
}

message PatientContextRequest {
  string patient_id = 1;
  repeated string resource_types = 2; // e.g., ["Condition", "MedicationRequest"]
  string requesting_agent = 3;        // For HIPAA audit trail
  string correlation_id = 4;          // Cross-service trace ID
}

// Remaining request and response messages are omitted here.
```

Step 2: Run Both Paths in Parallel

Before cutting over, run the new service alongside the monolith module. Every request goes to both the old in-process call and the new gRPC service. Compare results, measure latency delta, catch discrepancies. This shadow deployment pattern is essential for healthcare systems where silent data discrepancies can affect clinical decisions.

Step 3: Migrate Agent by Agent

Switch one agent at a time from the in-process module to the gRPC service. Start with the lowest-risk agent (scheduling, documentation), validate for a week, then move the next agent. Never big-bang migrate all agents simultaneously.

Healthcare-Specific Microservices Patterns

Pattern 1: The Audit Sidecar

Instead of each service implementing its own HIPAA audit logging, deploy an audit sidecar container alongside every service. The sidecar intercepts all requests, logs PHI access, and forwards to a centralized HIPAA-compliant log store. This keeps audit logic consistent and removes it from the service team's responsibility.

Pattern 2: FHIR Context Cache

Clinical agents frequently need the same patient context within a single encounter. A Redis-backed FHIR context cache at the API gateway level reduces redundant FHIR server calls by 60-80%. The cache TTL should match your clinical workflow duration (typically 15-30 minutes for an outpatient visit) with immediate invalidation on any write-back.

Pattern 3: Clinical Circuit Breaker

Standard circuit breakers trip on error rate thresholds. Clinical circuit breakers add a safety dimension: if the inference service is degraded, the agent should fall back to a "no recommendation" state rather than providing potentially incorrect clinical guidance. This is a regulatory expectation for SaMD systems.

Real-World Cost Comparison

Here is what the infrastructure cost difference looks like for a healthcare AI platform running 5 agents, based on actual client deployments:

| Dimension | Monolith (5 agents) | Microservices (5 agents) |
|---|---|---|
| Compute (monthly) | $8,400 (2x GPU instances, always on) | $5,200 (GPU scales to zero off-peak) |
| Engineering overhead | 0.5 FTE (single deploy pipeline) | 1.5 FTE (service mesh, distributed tracing) |
| Deployment frequency | Weekly (coordination required) | Daily per service (independent) |
| Mean time to recovery | 45 min (full restart) | 5 min (single service restart) |
| HIPAA audit complexity | Low (single log stream) | High (distributed trace correlation) |
| Break-even point | Immediate | ~6 months (once infra savings and velocity gains offset eng cost) |

The microservices approach saves $3,200/month on compute but costs an additional 1.0 FTE in engineering overhead. At a fully loaded engineer cost of $15,000/month, compute savings alone cover only about a fifth of that overhead; the remaining $11,800/month has to come from deployment velocity gains and faster recovery. For most teams, that point arrives around 5-7 agents with 3+ teams.
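The arithmetic behind that comparison is worth running with your own numbers. This small sketch uses the figures from the table and paragraph above; `NetMonthlyGap` is a hypothetical helper, not part of any tooling.

```go
package main

import "fmt"

// NetMonthlyGap returns the engineering overhead left uncovered by compute
// savings. A positive result must be justified by other gains, such as
// deployment velocity or shorter recovery time.
func NetMonthlyGap(monolithCompute, microCompute, extraFTE, costPerFTE float64) float64 {
	savings := monolithCompute - microCompute
	overhead := extraFTE * costPerFTE
	return overhead - savings
}

func main() {
	// $8,400 vs $5,200 compute, 1.0 extra FTE at $15,000/month.
	gap := NetMonthlyGap(8400, 5200, 1.0, 15000)
	fmt.Printf("uncovered overhead: $%.0f/month\n", gap)
}
```

With the figures above, the gap is $11,800/month. If a team cannot point to that much value from faster deployments and shorter outages, the split is not yet paying for itself.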

The Decision Framework

After working with healthcare engineering teams scaling from 1 to 20+ agents, this is the framework that consistently produces good outcomes:

- 1-3 agents, 1 team: Monolith. Do not even consider microservices. Ship fast, learn your domain.

- 3-5 agents, 2 teams: Modular monolith. Enforce module boundaries, prepare for extraction, but do not split yet.

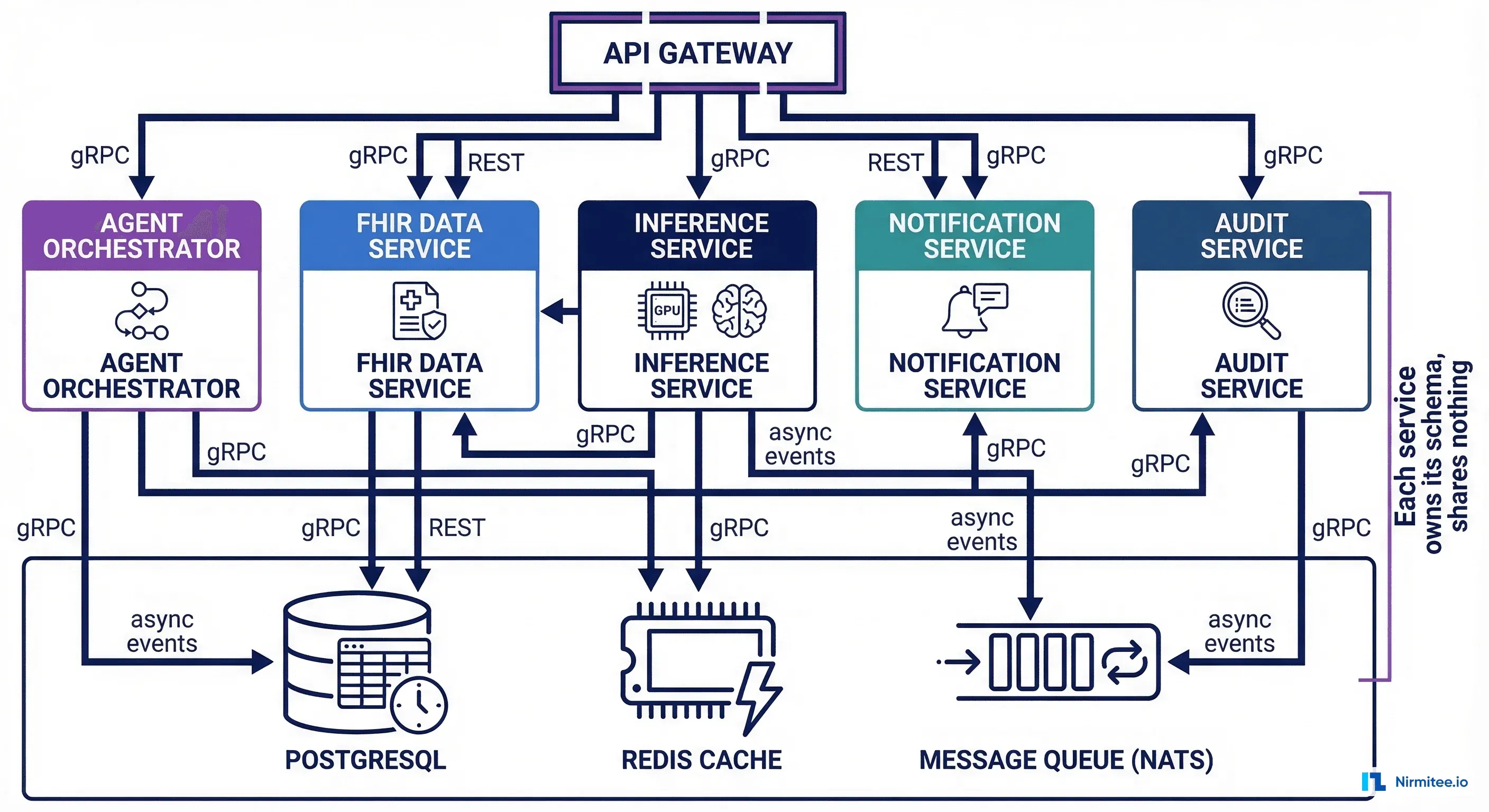

- 5-10 agents, 3+ teams: Extract FHIR data service and inference service. Keep agent orchestration as a modular monolith.

- 10+ agents, 5+ teams: Full microservices with service mesh, distributed tracing, and dedicated platform engineering team.

The organizations that get this wrong almost always err on the side of splitting too early, not too late. A monolith that is well-structured can be split in weeks. A distributed system with wrong boundaries takes months to fix.

Getting Started

If you are building your first healthcare AI agent today, start with a monolith. Use Go packages or Python namespace packages to enforce clean boundaries from day one. Plan for extraction by keeping your FHIR client, inference engine, and audit logger behind well-defined interfaces.

When the signals tell you it is time to split, extract the FHIR data layer first, then inference, then let each agent team own their service. The organizations that follow this progression consistently ship faster and spend less than those that start with microservices on day one.

At Nirmitee, we help healthcare engineering teams design agent architectures that start simple and scale intentionally. Whether you are building your first clinical AI agent or untangling a monolith that has outgrown its architecture, our team has built the patterns that work.