Why You Cannot A/B Test Clinical AI Like a Website Button

In consumer tech, A/B testing is simple: show 50% of users a blue button and 50% a green button, measure which gets more clicks, ship the winner. In clinical AI, the "button" is a sepsis prediction that determines whether a patient gets antibiotics. You cannot randomly assign half your patients to a potentially inferior algorithm and wait to see who dies.

Yet clinical AI models need validation in real-world settings before full deployment. The gap between retrospective accuracy (tested on historical data) and prospective performance (tested on live patients) is often significant — a 2020 Nature Medicine study found that 93% of clinical AI studies were retrospective only, and models frequently underperformed when deployed prospectively. The challenge is validating new models without risking patient safety.

Healthcare-safe deployment strategies solve this by creating controlled environments where new models can be evaluated against real clinical data without ever influencing patient care until their safety is proven.

This guide covers four deployment strategies — shadow mode, champion/challenger, canary deployment, and staged rollout — with implementation code, statistical analysis methods, and decision frameworks for choosing the right strategy based on your model's clinical risk level. For monitoring these deployments in production, see our guide on model monitoring for healthcare AI.

Strategy 1: Shadow Deployment

Shadow deployment is the safest possible validation strategy. The new model runs alongside the existing clinical workflow, processes the same inputs, and generates predictions — but those predictions are never shown to clinicians or patients. They are logged for offline comparison against actual clinical decisions and outcomes.

When to Use Shadow Mode

- First deployment of any clinical AI model — always shadow first

- High-risk models — sepsis prediction, drug interaction alerting, diagnostic imaging

- Models replacing human judgment — need evidence of equivalence before substitution

- Regulatory requirements — FDA SaMD guidance recommends prospective silent studies

Shadow Deployment Framework

```python
import logging
import time
import uuid
from dataclasses import asdict, dataclass
from datetime import datetime, timezone
from typing import Any, Optional


@dataclass
class ShadowPrediction:
    prediction_id: str
    patient_id: str
    encounter_id: str
    timestamp: str
    model_version: str
    model_prediction: float  # probability
    model_label: str  # predicted class
    model_confidence: float
    model_latency_ms: float
    clinician_decision: Optional[str] = None
    actual_outcome: Optional[str] = None
    outcome_timestamp: Optional[str] = None


class ShadowDeploymentFramework:
    """Run ML model in shadow mode alongside clinical workflow."""

    def __init__(self, model, model_version: str,
                 log_store: Any, metrics_store: Any):
        self.model = model
        self.model_version = model_version
        self.log_store = log_store
        self.metrics = metrics_store
        self.logger = logging.getLogger("shadow")

    def predict_shadow(self, patient_data: dict,
                       encounter_id: str) -> ShadowPrediction:
        """Generate shadow prediction. NEVER surface the result for clinical use."""
        start = time.perf_counter()
        try:
            result = self.model.predict(patient_data)
            latency = (time.perf_counter() - start) * 1000
            prediction = ShadowPrediction(
                prediction_id=str(uuid.uuid4()),
                patient_id=patient_data["patient_id"],
                encounter_id=encounter_id,
                # Timezone-aware UTC keeps timestamps comparable across sites
                timestamp=datetime.now(timezone.utc).isoformat(),
                model_version=self.model_version,
                model_prediction=result["probability"],
                model_label=result["label"],
                model_confidence=result["confidence"],
                model_latency_ms=round(latency, 2),
            )
            # Log to persistent store (NEVER return to clinical system)
            self.log_store.write(asdict(prediction))
            self.metrics.increment("shadow_predictions_total")
            # Returned for tests and offline analysis only
            return prediction
        except Exception:
            self.logger.exception("Shadow prediction failed")
            self.metrics.increment("shadow_prediction_errors")
            # Re-raised for the caller; the clinical request path should catch
            # this so a shadow failure never blocks care.
            raise

    def record_clinician_decision(self, encounter_id: str,
                                  decision: str):
        """Record what the clinician actually decided."""
        self.log_store.update(
            {"encounter_id": encounter_id},
            {"clinician_decision": decision}
        )

    def record_outcome(self, encounter_id: str,
                       outcome: str):
        """Record actual patient outcome for comparison."""
        self.log_store.update(
            {"encounter_id": encounter_id},
            {"actual_outcome": outcome,
             "outcome_timestamp": datetime.now(timezone.utc).isoformat()}
        )
```

Strategy 2: Champion/Challenger

In champion/challenger deployment, both the existing model (champion) and the new model (challenger) generate predictions, and both are shown to the clinician. The clinician sees two recommendations side by side and chooses which to follow — maintaining full clinical autonomy while generating comparison data.

Key Metrics to Track

| Metric | Definition | Target | Action if Missed |
|---|---|---|---|
| Agreement Rate | % of cases where both models agree | >85% | Investigate discordant cases for systematic patterns |
| Challenger Win Rate | % of disagreements where clinician chose challenger | >55% | Below 50% means challenger is worse; do not promote |
| Override Rate | % of cases where clinician rejected both models | <10% | High override rate means both models are insufficient |
| Decision Time Delta | Additional time clinician spent with two options | <15 seconds | Too much cognitive load; simplify presentation |
| Clinician Satisfaction | Survey score on usefulness of comparison | >7/10 | Below 5 means clinicians find it annoying, not helpful |
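The first three metrics in the table can be computed directly from logged comparison cases. The following is a minimal sketch, assuming a hypothetical `ComparisonCase` record with one row per encounter; the field names and the `clinician_choice` values are illustrative and not part of any existing logging schema.

```python
from dataclasses import dataclass
from typing import Iterable, Optional


@dataclass
class ComparisonCase:
    """One encounter where both models made a recommendation (hypothetical schema)."""
    champion_label: str
    challenger_label: str
    # Which recommendation the clinician followed: "champion", "challenger",
    # "both" (the models agreed and were accepted), or "neither" (override).
    clinician_choice: str


def summarize_cases(cases: Iterable[ComparisonCase]) -> dict:
    """Compute agreement, challenger win, and override rates as defined in the table."""
    cases = list(cases)
    if not cases:
        raise ValueError("no cases to summarize")

    total = len(cases)
    agreements = sum(c.champion_label == c.challenger_label for c in cases)
    overrides = sum(c.clinician_choice == "neither" for c in cases)

    # Win rate uses disagreements as the denominator, per the table's definition.
    disagreements = [c for c in cases if c.champion_label != c.challenger_label]
    challenger_wins = sum(c.clinician_choice == "challenger" for c in disagreements)
    win_rate: Optional[float] = (
        challenger_wins / len(disagreements) if disagreements else None
    )

    agreement_rate = agreements / total
    override_rate = overrides / total
    return {
        "agreement_rate": agreement_rate,
        "challenger_win_rate": win_rate,
        "override_rate": override_rate,
        # Targets taken from the table: >85%, >55%, <10%.
        "meets_targets": {
            "agreement_rate": agreement_rate > 0.85,
            "challenger_win_rate": win_rate is not None and win_rate > 0.55,
            "override_rate": override_rate < 0.10,
        },
    }
```

With few disagreements the win rate is very noisy, so it is worth reporting the disagreement count alongside the rate before drawing conclusions.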

Strategy 3: Canary Deployment

Canary deployment routes a small percentage of predictions (typically 5-10%) through the new model while the rest continue using the existing model. In healthcare, this must be limited to non-critical use cases — you can canary a chart summarization model or a scheduling optimizer, but never a diagnostic or treatment-affecting model without the safeguards of shadow or champion/challenger first.

```python
import hashlib
from typing import Callable, Optional


class ClinicalCanaryRouter:
    """Route predictions between champion and canary models."""

    def __init__(self, champion_model: Callable,
                 canary_model: Callable,
                 canary_percentage: float = 0.05,
                 excluded_categories: Optional[list] = None):
        self.champion = champion_model
        self.canary = canary_model
        self.canary_pct = canary_percentage
        self.excluded = excluded_categories or [
            "critical_care", "emergency", "pediatric"
        ]

    def route(self, patient_data: dict,
              clinical_context: dict) -> dict:
        """Route prediction to champion or canary model."""
        # NEVER canary critical categories
        if clinical_context.get("category") in self.excluded:
            return {
                "prediction": self.champion(patient_data),
                "model": "champion",
                "reason": "excluded_category"
            }

        # Deterministic routing by patient ID (consistent experience).
        # MD5 is used only for stable bucketing, not for security.
        hash_val = int(hashlib.md5(
            patient_data["patient_id"].encode()
        ).hexdigest(), 16)
        # 100 buckets, so the canary share is resolved in whole percentage points
        use_canary = (hash_val % 100) < (self.canary_pct * 100)

        if use_canary:
            return {
                "prediction": self.canary(patient_data),
                "model": "canary",
                # A plain callable may not carry a version attribute
                "canary_version": getattr(self.canary, "version", "unknown")
            }
        else:
            return {
                "prediction": self.champion(patient_data),
                "model": "champion"
            }
```

Strategy 4: Staged Rollout

Staged rollout progressively expands the model's deployment scope from a single nursing unit to an entire health system, with validation gates between each stage. This is the standard approach recommended by the American Hospital Association for clinical technology deployment.

| Stage | Scope | Duration | Sample Size | Gate Criteria |
|---|---|---|---|---|
| Stage 1: Unit | One nursing unit (30 beds) | 2 weeks | ~150 predictions | No safety events, >80% clinician acceptance |
| Stage 2: Floor | One hospital floor (120 beds) | 2 weeks | ~600 predictions | Accuracy within 2% of validation, no fairness issues |
| Stage 3: Hospital | Full hospital (500 beds) | 4 weeks | ~2,500 predictions | Statistical significance on primary metrics |
| Stage 4: System | All facilities | Ongoing | 10,000+ predictions | Continuous monitoring thresholds met |
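The gate criteria for the first two stages are concrete enough to check in code. The following is a rough sketch, assuming a hypothetical `StageMetrics` record; the field names are illustrative, and "within 2% of validation" is interpreted here as live accuracy being no more than 0.02 below validation accuracy, which is an assumption rather than something the table specifies.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class StageMetrics:
    """Metrics collected during one rollout stage (hypothetical schema)."""
    predictions: int
    safety_events: int
    clinician_acceptance: float  # fraction of predictions accepted, 0.0 to 1.0
    live_accuracy: float
    validation_accuracy: float
    fairness_issue_found: bool


def unit_gate_failures(m: StageMetrics, min_predictions: int = 150) -> List[str]:
    """Stage 1 gate: no safety events and more than 80% clinician acceptance."""
    failures = []
    if m.predictions < min_predictions:
        failures.append("not enough predictions to judge the stage")
    if m.safety_events > 0:
        failures.append("safety event recorded")
    if m.clinician_acceptance <= 0.80:
        failures.append("clinician acceptance not above 80%")
    return failures


def floor_gate_failures(m: StageMetrics, min_predictions: int = 600,
                        tolerance: float = 0.02) -> List[str]:
    """Stage 2 gate: accuracy close to validation and no fairness issues."""
    failures = []
    if m.predictions < min_predictions:
        failures.append("not enough predictions to judge the stage")
    # Assumption: only a drop below validation accuracy counts against the gate.
    if m.validation_accuracy - m.live_accuracy > tolerance:
        failures.append("accuracy more than 0.02 below validation")
    if m.fairness_issue_found:
        failures.append("fairness issue found")
    return failures
```

Returning the list of failed criteria, rather than a single boolean, gives the review committee the reason a stage did not advance.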

Statistical Analysis for Clinical A/B Testing

Standard A/B testing statistics assume independent observations, fixed sample sizes, and binary outcomes. Clinical AI testing violates most of these assumptions: patients have repeated encounters, sample sizes are constrained by clinical volume, and outcomes are often continuous or delayed. Here is the statistical toolkit adapted for healthcare. For teams already tracking model performance, this connects to our guide on model monitoring dashboards.

```python
from typing import Optional

import numpy as np
from scipy import stats


class ClinicalABTestAnalyzer:
    """Statistical analysis for clinical model comparison."""

    def __init__(self, alpha: float = 0.05,
                 power: float = 0.80,
                 min_clinically_significant_delta: float = 0.03):
        self.alpha = alpha
        self.power = power
        self.mcs_delta = min_clinically_significant_delta

    def sample_size_needed(self, baseline_rate: float,
                           expected_improvement: float) -> int:
        """Calculate required sample size per arm."""
        if expected_improvement == 0:
            raise ValueError("expected_improvement must be non-zero")
        p1 = baseline_rate
        p2 = baseline_rate + expected_improvement

        # Two-proportion z-test sample size (two-sided alpha)
        z_alpha = stats.norm.ppf(1 - self.alpha / 2)
        z_beta = stats.norm.ppf(self.power)
        p_bar = (p1 + p2) / 2
        n = ((z_alpha * np.sqrt(2 * p_bar * (1 - p_bar)) +
              z_beta * np.sqrt(p1 * (1 - p1) + p2 * (1 - p2)))
             / (p2 - p1)) ** 2
        return int(np.ceil(n))

    def compare_models(self, champion_correct: int,
                       champion_total: int,
                       challenger_correct: int,
                       challenger_total: int) -> dict:
        """Two-proportion z-test for model comparison (assumes independent arms)."""
        p1 = champion_correct / champion_total
        p2 = challenger_correct / challenger_total
        p_pool = (champion_correct + challenger_correct) / \
                 (champion_total + challenger_total)

        # Pooled standard error under the null hypothesis of equal accuracy
        se = np.sqrt(p_pool * (1 - p_pool) *
                     (1 / champion_total + 1 / challenger_total))
        z_stat = (p2 - p1) / se if se > 0 else 0.0
        p_value = float(2 * (1 - stats.norm.cdf(abs(z_stat))))

        # 95% confidence interval for the difference (unpooled standard error)
        se_diff = np.sqrt(
            p1 * (1 - p1) / champion_total +
            p2 * (1 - p2) / challenger_total
        )
        ci_lower = (p2 - p1) - 1.96 * se_diff
        ci_upper = (p2 - p1) + 1.96 * se_diff

        return {
            "champion_accuracy": round(p1, 4),
            "challenger_accuracy": round(p2, 4),
            "absolute_improvement": round(p2 - p1, 4),
            # Undefined when the champion has zero accuracy
            "relative_improvement": (round((p2 - p1) / p1 * 100, 2)
                                     if p1 > 0 else None),
            "z_statistic": round(float(z_stat), 4),
            "p_value": round(p_value, 6),
            "ci_95": [round(float(ci_lower), 4), round(float(ci_upper), 4)],
            "significant": p_value < self.alpha,
            "clinically_significant": abs(p2 - p1) >= self.mcs_delta,
            "recommendation": self._recommend(p_value, p2 - p1)
        }

    def _recommend(self, p_value: float, delta: float) -> str:
        if p_value >= self.alpha:
            return "INCONCLUSIVE: insufficient evidence to reject null"
        elif delta > 0 and abs(delta) >= self.mcs_delta:
            return "PROMOTE CHALLENGER: statistically and clinically significant improvement"
        elif delta > 0:
            return "HOLD: statistically significant but clinically marginal"
        else:
            return "KEEP CHAMPION: challenger is worse"

    def bayesian_comparison(self, champion_successes: int,
                            champion_trials: int,
                            challenger_successes: int,
                            challenger_trials: int,
                            n_simulations: int = 100000,
                            seed: Optional[int] = None) -> dict:
        """Bayesian A/B test, better suited to small samples and continuous monitoring."""
        # Beta-Binomial model with uniform Beta(1, 1) prior.
        # Pass a seed to make the Monte Carlo estimate reproducible.
        rng = np.random.default_rng(seed)
        champion_samples = rng.beta(
            champion_successes + 1,
            champion_trials - champion_successes + 1,
            n_simulations
        )
        challenger_samples = rng.beta(
            challenger_successes + 1,
            challenger_trials - challenger_successes + 1,
            n_simulations
        )

        # Posterior draws of the accuracy difference, reused below
        diff = challenger_samples - champion_samples
        prob_challenger_better = float(np.mean(diff > 0))
        expected_improvement = float(np.mean(diff))

        if prob_challenger_better > 0.95:
            recommendation = "PROMOTE"
        elif prob_challenger_better > 0.80:
            recommendation = "HOLD"
        else:
            recommendation = "KEEP CHAMPION"

        return {
            "prob_challenger_better": round(prob_challenger_better, 4),
            "expected_improvement": round(expected_improvement, 4),
            "ci_95_improvement": [
                round(float(np.percentile(diff, 2.5)), 4),
                round(float(np.percentile(diff, 97.5)), 4)
            ],
            "recommendation": recommendation
        }
```

Model Comparison Dashboard

The comparison dashboard aggregates all shadow/champion-challenger data into a single view for the clinical review committee. This is distinct from the day-to-day model monitoring dashboard — it focuses specifically on the comparison between two models rather than ongoing health of a single model.

```python
import pandas as pd


class ModelComparisonReport:
    """Generate comparison report for clinical review committee."""

    def __init__(self, shadow_logs: pd.DataFrame):
        self.logs = shadow_logs

    def generate_report(self) -> dict:
        df = self.logs

        # Core agreement metrics, limited to encounters with a recorded decision
        decided = df.dropna(subset=["clinician_decision"])
        agreement = (
            (decided["model_label"] == decided["clinician_decision"]).mean()
            if not decided.empty else None
        )

        # Performance vs outcomes (where outcomes are available)
        outcome_df = df.dropna(subset=["actual_outcome"])
        if not outcome_df.empty:
            model_correct = (
                outcome_df["model_label"] == outcome_df["actual_outcome"]
            ).mean()
        else:
            model_correct = None

        clinician_df = outcome_df.dropna(subset=["clinician_decision"])
        if not clinician_df.empty:
            clinician_correct = (
                clinician_df["clinician_decision"] == clinician_df["actual_outcome"]
            ).mean()
        else:
            clinician_correct = None

        # Timestamps are logged as ISO 8601 strings; parse before subtracting
        timestamps = pd.to_datetime(df["timestamp"], utc=True)

        def fmt(value):
            # Explicit None check so a legitimate 0.0 is not reported as "N/A"
            return round(float(value), 4) if value is not None else "N/A"

        return {
            "total_predictions": len(df),
            "agreement_with_clinician": fmt(agreement),
            "model_accuracy_vs_outcome": fmt(model_correct),
            "clinician_accuracy_vs_outcome": fmt(clinician_correct),
            "mean_latency_ms": round(df["model_latency_ms"].mean(), 1),
            "p95_latency_ms": round(df["model_latency_ms"].quantile(0.95), 1),
            "evaluation_period_days": (timestamps.max() - timestamps.min()).days,
        }
```

The Clinical AI Validation Lifecycle

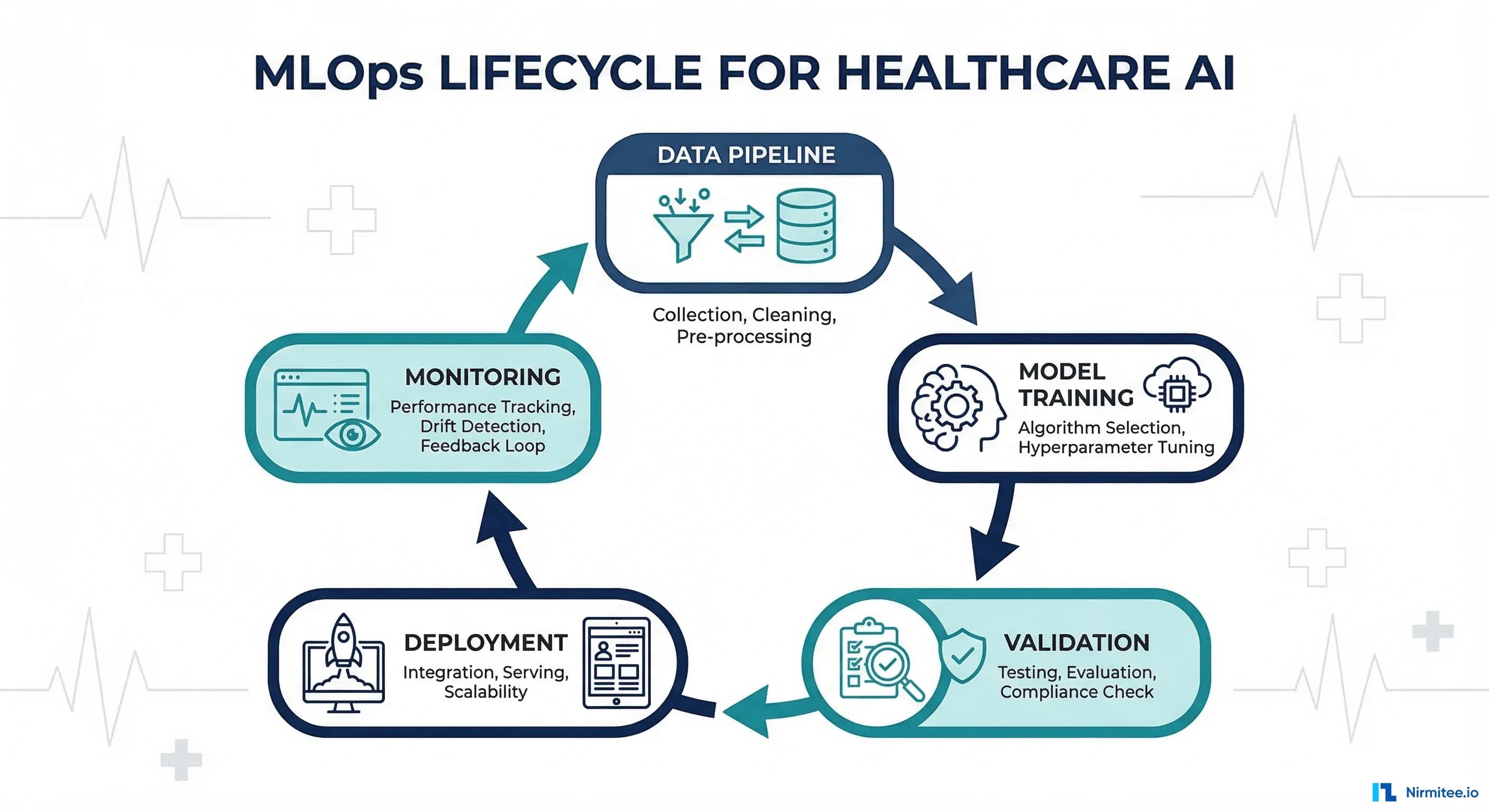

The four deployment strategies are not alternatives — they are stages in a validation lifecycle. Every clinical AI model should progress through this sequence, with the duration of each stage determined by the model's clinical risk level. This aligns with the SRE practices for healthcare that treat safety as a non-negotiable prerequisite.

Risk-Based Deployment Decision Framework

| Clinical Risk Level | Example Models | Required Strategy Sequence | Minimum Validation Duration |
|---|---|---|---|
| Critical (life-threatening) | Sepsis prediction, drug dosing, ventilator management | Shadow (90 days) + Champion/Challenger (60 days) + Staged | 6 months minimum |
| High (significant harm) | Readmission prediction, diagnostic imaging triage | Shadow (60 days) + Canary (30 days) + Staged | 3 months minimum |
| Medium (moderate impact) | Scheduling optimization, resource allocation | Shadow (30 days) + Canary (14 days) | 6 weeks minimum |
| Low (informational only) | Chart summarization, documentation assistance | Canary (14 days) + Full deployment | 2 weeks minimum |
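One way to keep a deployment pipeline from skipping a required stage is to encode the table as data and check progress against it. The following is a minimal sketch; the `REQUIRED_SEQUENCE` mapping and the `next_required_step` helper are hypothetical names, and the strategy identifiers are illustrative spellings of the table entries.

```python
from typing import Dict, List, Optional, Tuple

Step = Tuple[str, Optional[int]]  # (strategy, minimum days)

# Transcribed from the table above. None means the table gives no fixed
# duration for that step.
REQUIRED_SEQUENCE: Dict[str, List[Step]] = {
    "critical": [("shadow", 90), ("champion_challenger", 60), ("staged_rollout", None)],
    "high": [("shadow", 60), ("canary", 30), ("staged_rollout", None)],
    "medium": [("shadow", 30), ("canary", 14)],
    "low": [("canary", 14), ("full_deployment", None)],
}


def next_required_step(risk_level: str, completed: List[str]) -> Optional[Step]:
    """Return the next step for a model, or None when the sequence is finished.

    Raises ValueError if the completed steps skip or reorder the sequence.
    """
    sequence = REQUIRED_SEQUENCE[risk_level]
    expected = [name for name, _ in sequence[:len(completed)]]
    if completed != expected:
        raise ValueError(
            f"completed steps {completed} do not match required order {expected}"
        )
    if len(completed) == len(sequence):
        return None
    return sequence[len(completed)]
```

For example, `next_required_step("high", ["shadow"])` returns `("canary", 30)`, while passing `["canary"]` for a high-risk model raises an error because shadow mode was skipped.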

Frequently Asked Questions

How long should shadow deployment run before promoting a model?

Duration depends on clinical risk level and data volume. For critical and high-risk models (sepsis prediction, diagnostic imaging triage, readmission prediction), run shadow mode for at least 60-90 days so the data covers weekday and weekend patterns, some seasonal variation, and enough outcomes to evaluate. For medium-risk models (scheduling optimization, resource allocation), 30 days may suffice if you reach statistical significance. The key constraint is often not time but outcome availability: if patient outcomes take 30 days to materialize (e.g., 30-day readmission), your shadow period must be long enough to collect outcomes for your earliest predictions.
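The interaction between outcome lag, prediction volume, and the policy minimum can be worked out with simple arithmetic. The sketch below assumes a constant daily prediction volume and that every prediction eventually receives an outcome label; both are simplifications, and `min_shadow_days` is a hypothetical helper name.

```python
import math


def min_shadow_days(required_outcomes: int, predictions_per_day: float,
                    outcome_lag_days: int, policy_minimum_days: int) -> int:
    """Estimate the shortest shadow period that yields enough labelled outcomes.

    The last prediction needed is made after ceil(required / daily volume) days,
    and its outcome only becomes known outcome_lag_days later. The result is
    never shorter than the risk-based policy minimum.
    """
    if required_outcomes <= 0 or predictions_per_day <= 0:
        raise ValueError("required_outcomes and predictions_per_day must be positive")
    collection_days = math.ceil(required_outcomes / predictions_per_day)
    return max(collection_days + outcome_lag_days, policy_minimum_days)
```

For instance, collecting 1,000 labelled outcomes at 20 predictions per day with a 30-day outcome lag gives 50 + 30 = 80 days, which exceeds a 60-day policy minimum.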

Can shadow deployment affect model performance due to lack of feedback?

No. Shadow deployment is purely observational: the model receives the same inputs it would in production, and the only difference is that its outputs are not acted upon. However, if the model is designed to learn from clinician feedback (for example, reinforcement learning from human feedback), shadow mode will not provide that signal. In that case, use champion/challenger mode, where clinician interactions with the model generate the feedback signal.

How do you handle class imbalance in clinical A/B testing?

Clinical datasets are inherently imbalanced (e.g., 2% sepsis prevalence, 15% readmission rate). Use metrics robust to imbalance: AUC-ROC, precision-recall curves, and calibration plots rather than raw accuracy. For statistical testing, ensure both champion and challenger groups have comparable class distributions. Stratified randomization by acuity level helps.
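A small example shows why raw accuracy misleads at low prevalence. The sketch below uses only the standard library; `confusion_metrics` and `roc_auc` are illustrative helper names, and the AUC is computed by direct pairwise comparison, which is fine for small samples but slow for large ones.

```python
from typing import Dict, Optional, Sequence


def confusion_metrics(y_true: Sequence[int],
                      y_pred: Sequence[int]) -> Dict[str, Optional[float]]:
    """Accuracy, sensitivity, specificity, and PPV for binary labels (1 = positive)."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(t == 1 and p == 1 for t, p in pairs)
    tn = sum(t == 0 and p == 0 for t, p in pairs)
    fp = sum(t == 0 and p == 1 for t, p in pairs)
    fn = sum(t == 1 and p == 0 for t, p in pairs)

    def ratio(num: int, den: int) -> Optional[float]:
        # None marks a metric that is undefined for this sample
        return num / den if den else None

    return {
        "accuracy": ratio(tp + tn, len(pairs)),
        "sensitivity": ratio(tp, tp + fn),
        "specificity": ratio(tn, tn + fp),
        "ppv": ratio(tp, tp + fp),
    }


def roc_auc(y_true: Sequence[int], scores: Sequence[float]) -> float:
    """AUC as the probability that a positive case outscores a negative one."""
    pos = [s for t, s in zip(y_true, scores) if t == 1]
    neg = [s for t, s in zip(y_true, scores) if t == 0]
    if not pos or not neg:
        raise ValueError("need at least one positive and one negative case")
    # Ties count as half a win
    wins = sum((p > n) + 0.5 * (p == n) for p in pos for n in neg)
    return wins / (len(pos) * len(neg))
```

At 2% prevalence, a model that predicts "negative" for all 100 patients scores 98% accuracy with zero sensitivity, which is exactly the failure that accuracy alone hides.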

What happens if the challenger model causes a patient safety event during canary deployment?

Immediate rollback to champion model, full incident investigation, and clinical review. This is why canary deployment should only be used for non-critical models unless preceded by extensive shadow validation. Your deployment pipeline must include automated rollback triggers — if any safety metric crosses a predefined threshold, the canary is automatically killed and all traffic returns to the champion within seconds.
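An automated rollback trigger can be as simple as a latching guard that the router consults before sending traffic to the canary. The following is a rough sketch under that assumption; `CanaryKillSwitch`, its metric names, and its limits are all hypothetical, and a production version would also need alerting and persistence.

```python
from typing import Dict, Optional


class CanaryKillSwitch:
    """Latching guard: once any safety metric exceeds its limit, the canary stays off."""

    def __init__(self, limits: Dict[str, float]):
        # Maximum tolerated value per safety metric (illustrative names)
        self.limits = dict(limits)
        self.tripped_by: Optional[str] = None

    @property
    def canary_enabled(self) -> bool:
        return self.tripped_by is None

    def observe(self, metric: str, value: float) -> bool:
        """Record a metric reading; return True while the canary may keep serving."""
        limit = self.limits.get(metric)
        if self.canary_enabled and limit is not None and value > limit:
            self.tripped_by = metric
        return self.canary_enabled

    def effective_canary_pct(self, configured_pct: float) -> float:
        """Traffic share the router should use: zero once the switch has tripped."""
        return configured_pct if self.canary_enabled else 0.0

    def reset_after_review(self) -> None:
        """Re-enable the canary only after the incident review signs off."""
        self.tripped_by = None
```

The switch deliberately does not re-enable itself when the metric recovers, so returning traffic to the canary remains a human decision made after the incident investigation.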

How do you ensure informed consent for patients in A/B testing?

Shadow mode does not require patient consent because model outputs do not influence care. Champion/challenger requires IRB review because clinicians see two recommendations (informed consent may be waived under the Common Rule if the comparison is between two standard-of-care approaches). Canary deployment with clinical impact typically requires IRB approval and may require patient notification. Consult your institution's IRB and legal team before implementing any strategy that affects clinical decisions.

Can these strategies work with federated learning or multi-site deployments?

Yes. Shadow mode is particularly well-suited to federated settings — run the model at each site independently, collect comparison metrics locally, and aggregate results centrally without sharing patient data. Staged rollout naturally maps to multi-site deployment: start at one facility, validate, expand to the next. The statistical analysis must account for site-level effects (use mixed-effects models or stratified analysis). See our guide on healthcare data quality for data consistency considerations across sites.
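As an illustration of stratified analysis, each site can report only its aggregate counts, and the central team can pool the per-site accuracy differences with inverse-variance weights. This sketch is a fixed-effect pooling, not a mixed-effects model; it assumes independent champion and challenger arms at each site, and `pooled_site_difference` is a hypothetical helper name.

```python
import math
from statistics import NormalDist
from typing import Dict, List, Tuple

# (champion_correct, champion_total, challenger_correct, challenger_total)
SiteCounts = Tuple[int, int, int, int]


def pooled_site_difference(sites: List[SiteCounts],
                           alpha: float = 0.05) -> Dict[str, object]:
    """Inverse-variance pooled accuracy difference (challenger minus champion)."""
    weight_sum = 0.0
    weighted_diff = 0.0
    per_site = []
    for champ_ok, champ_n, chall_ok, chall_n in sites:
        p1 = champ_ok / champ_n
        p2 = chall_ok / chall_n
        variance = p1 * (1 - p1) / champ_n + p2 * (1 - p2) / chall_n
        if variance == 0:
            raise ValueError("site has zero variance; cannot weight it")
        weight = 1 / variance  # more precise sites count for more
        weight_sum += weight
        weighted_diff += weight * (p2 - p1)
        per_site.append(p2 - p1)

    pooled = weighted_diff / weight_sum
    se = math.sqrt(1 / weight_sum)
    z = NormalDist().inv_cdf(1 - alpha / 2)
    return {
        "pooled_difference": pooled,
        "standard_error": se,
        "ci": (pooled - z * se, pooled + z * se),
        "per_site_differences": per_site,
    }
```

Only counts leave each site, so no patient-level data is shared. If the per-site differences vary widely, a fixed-effect pool is not appropriate and a random-effects or mixed-effects analysis is the better choice.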