The honest reason most hospital AI programs stall

It is not the model. It is rarely the data. It is almost always governance and sequencing.

Hospital leaders evaluating agentic AI usually have access to vendors with credible technology. The differentiator over an 18-month horizon is not whose model is best. It is which organizations adopt with structure — picking the right workflow first, defining exit gates between phases, and naming who owns clinical performance after go-live. This framework is built for COOs and CMIOs who have read our guide to AI agents in healthcare and need to translate it into an operating plan.

What is a strategic adoption framework?

A strategic adoption framework is a documented sequence of phases, exit gates, and named owners that takes a hospital from "we are evaluating AI agents" to "we are operating AI agents at scale." It supplies the discipline that separates the systems compounding returns at month 18 from the systems still running 12-week pilots. It is not a vendor selection process. It is the operating model that sits underneath whichever vendor or build path you choose.

Phase 1 — Discovery (Month 1–2)

The single most important output of Phase 1 is not a vendor shortlist. It is a ranked, scoped list of three workflows that meet four criteria: high ROI, low clinical risk, an existing baseline metric, and a willing operational owner. If you cannot find three, build one and stop.

Anchor the discovery on a workflow scan led by your COO and a clinical lead, not by IT. The scan should produce: total volume per week, current cycle time, current denial/error rate (if applicable), staff hours consumed, downstream rework cost, and the name of the human who owns the workflow today. This is the data you need to defend the project to your board, and it is the data you need to compare a vendor's pitch against reality.
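As a rough sketch of what the scan output and the ranking could look like in code, the example below stores one record per workflow and shortlists up to three. The field names, the 1-to-5 `clinical_risk` scale, the `hourly_rate` default, and the use of weekly staff-plus-rework cost as a stand-in for ROI are all assumptions made for illustration, and the sample numbers are invented.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class WorkflowScan:
    """One row of the Phase 1 workflow scan (field names are hypothetical)."""
    name: str
    owner: str                      # human who owns the workflow today ("" if nobody)
    weekly_volume: int
    cycle_time_minutes: float
    error_rate: Optional[float]     # denial/error rate; None when not applicable
    staff_hours_per_week: float
    rework_cost_per_week: float
    clinical_risk: int              # assumed scale: 1 (low) to 5 (high)
    has_baseline: bool
    owner_willing: bool


def weekly_cost(scan: WorkflowScan, hourly_rate: float) -> float:
    """Rough ROI proxy (an assumption): staff time plus downstream rework per week."""
    return scan.staff_hours_per_week * hourly_rate + scan.rework_cost_per_week


def shortlist(scans: List[WorkflowScan], hourly_rate: float = 45.0,
              max_risk: int = 2, top_n: int = 3) -> List[WorkflowScan]:
    """Keep workflows that are low risk, baselined, and owned; rank by weekly cost."""
    eligible = [
        s for s in scans
        if s.clinical_risk <= max_risk and s.has_baseline
        and s.owner_willing and s.owner
    ]
    eligible.sort(key=lambda s: weekly_cost(s, hourly_rate), reverse=True)
    return eligible[:top_n]


# Illustrative, made-up numbers only.
scans = [
    WorkflowScan("eligibility verification", "owner_a", 4000, 12.0, 0.08,
                 320.0, 6000.0, 1, True, True),
    WorkflowScan("prior authorization", "owner_b", 900, 95.0, 0.15,
                 410.0, 9000.0, 2, True, True),
    WorkflowScan("sepsis early warning", "owner_c", 300, 30.0, None,
                 60.0, 0.0, 4, True, True),
    WorkflowScan("patient intake", "", 2500, 10.0, None,
                 200.0, 1500.0, 1, False, False),
]

ranked = shortlist(scans)
print([s.name for s in ranked])
```

With these sample values only two workflows qualify: one is dropped for clinical risk and one for lacking a baseline and an owner. That is the situation where the shortlist comes up short of three.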

The exit gate from Phase 1 is simple: three ranked use cases, baseline metrics set in writing, and a clinical-operational sponsor who has signed up to own the result. Without this, do not move to Phase 2.

Phase 2 — Pilot (Month 3–4)

Pick one of the three workflows. Run it shadow first, then supervised. Shadow means the agent produces outputs that no one acts on — your existing staff continues to do the work — and you measure the agent's output against theirs. Supervised means the agent's outputs go into the workflow but every output is reviewed by a human before it has effect.

The exit gate is two things: greater than 95% accuracy on a representative cohort of cases (not the vendor's curated set) and a clean bias audit across the demographic subgroups your population actually contains. If either fails, you stay in Phase 2 and rework the pilot with the vendor. You do not move forward.
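The gate can be sketched as a small check over shadow-mode results. This is a minimal illustration, not a complete audit: it assumes staff output is the reference answer, it treats "clean bias audit" as "no subgroup's accuracy falls more than `max_subgroup_gap` below the overall figure", and the 3-point gap is an invented threshold. A real bias audit would look at more than accuracy.

```python
from collections import defaultdict


def phase2_gate(cases, min_accuracy=0.95, max_subgroup_gap=0.03):
    """Evaluate shadow-mode cases against the Phase 2 exit gate.

    cases: list of (subgroup, agent_output, staff_output) tuples.
    Staff output is treated as the reference answer (an assumption).
    """
    if not cases:
        raise ValueError("no cases to evaluate")

    hits = defaultdict(int)
    totals = defaultdict(int)
    for subgroup, agent_out, staff_out in cases:
        totals[subgroup] += 1
        hits[subgroup] += int(agent_out == staff_out)

    overall = sum(hits.values()) / sum(totals.values())
    by_group = {g: hits[g] / totals[g] for g in totals}

    accuracy_ok = overall > min_accuracy
    # Simplified stand-in for a bias audit: no subgroup lags the overall
    # accuracy by more than the allowed gap.
    bias_ok = all(overall - acc <= max_subgroup_gap for acc in by_group.values())

    return {
        "overall": overall,
        "by_group": by_group,
        "accuracy_ok": accuracy_ok,
        "bias_ok": bias_ok,
        "passed": accuracy_ok and bias_ok,
    }


def make_cases(subgroup, total, correct):
    """Build synthetic cases: `correct` matches, the rest mismatches."""
    return ([(subgroup, "approve", "approve")] * correct
            + [(subgroup, "approve", "deny")] * (total - correct))


# Synthetic cohort: high overall accuracy, but subgroup B lags badly.
cohort = make_cases("A", 900, 890) + make_cases("B", 100, 88)
result = phase2_gate(cohort)
print(result["overall"], result["by_group"], result["passed"])
```

The synthetic cohort clears the accuracy bar overall yet fails the gate, because the smaller subgroup's accuracy sits well below the aggregate. Reporting accuracy per subgroup is what makes that visible.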

Phase 3 — Scale (Month 5–8)

Multi-site rollout begins here, not earlier. The vendor's deployment playbook will not survive contact with your second site; the differences in clinical workflows between two hospitals in the same system are larger than most vendors expect. Plan for that.

The exit gate is two things: the production SLA is met (uptime, latency, error rate) and adoption is above 70%. Adoption is the one that surprises hospitals, because having a tool live and having clinicians use it are different things. Track active usage by user, not by license.
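One way to measure adoption by user rather than by license is sketched below. The 30-day activity window, the event shape `(user_id, date)`, and the function name are assumptions made for illustration; the 70% threshold comes from the exit gate above.

```python
from datetime import date, timedelta


def adoption_rate(eligible_users, usage_events, as_of, window_days=30):
    """Share of eligible users with at least one usage event in the window.

    usage_events: iterable of (user_id, event_date) pairs.
    Licenses are deliberately ignored; only observed activity counts.
    """
    eligible = set(eligible_users)
    if not eligible:
        return 0.0
    cutoff = as_of - timedelta(days=window_days)
    active = {
        user for user, day in usage_events
        if user in eligible and cutoff < day <= as_of
    }
    return len(active) / len(eligible)


# Illustrative data: 10 licensed clinicians, 8 of them active in the window.
eligible = [f"u{i}" for i in range(10)]
events = [(f"u{i}", date(2024, 6, 15)) for i in range(8)]
events.append(("u0", date(2024, 6, 20)))          # repeat use counts once
events.append(("u8", date(2024, 4, 1)))           # outside the window
events.append(("contractor", date(2024, 6, 20)))  # not an eligible user

rate = adoption_rate(eligible, events, as_of=date(2024, 6, 30))
print(f"adoption: {rate:.0%}, gate met: {rate > 0.70}")
```

Counting distinct active users keeps a handful of heavy users from masking the fact that most of the licensed population never opens the tool.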

Phase 4 — Optimize (Month 9–12)

Phase 4 is where ROI is realized at the portfolio level — not at a single workflow level. By month 12, the question shifts from "is the agent working?" to "where do we deploy the next agent, and how do we reuse what we built?" If your team has not internalized the architecture by now, the next workflow will cost as much as the first.

Phase 5 — Compound (Year 2+)

Compound returns come from the agent platform, not the agent. Hospitals that win this category in year two are the ones that built reusable identity matching, FHIR write libraries, audit logging, and observability infrastructure during Phases 2 and 3. By year two, deploying a new workflow takes 6 weeks instead of 6 months because most of the infrastructure already exists.

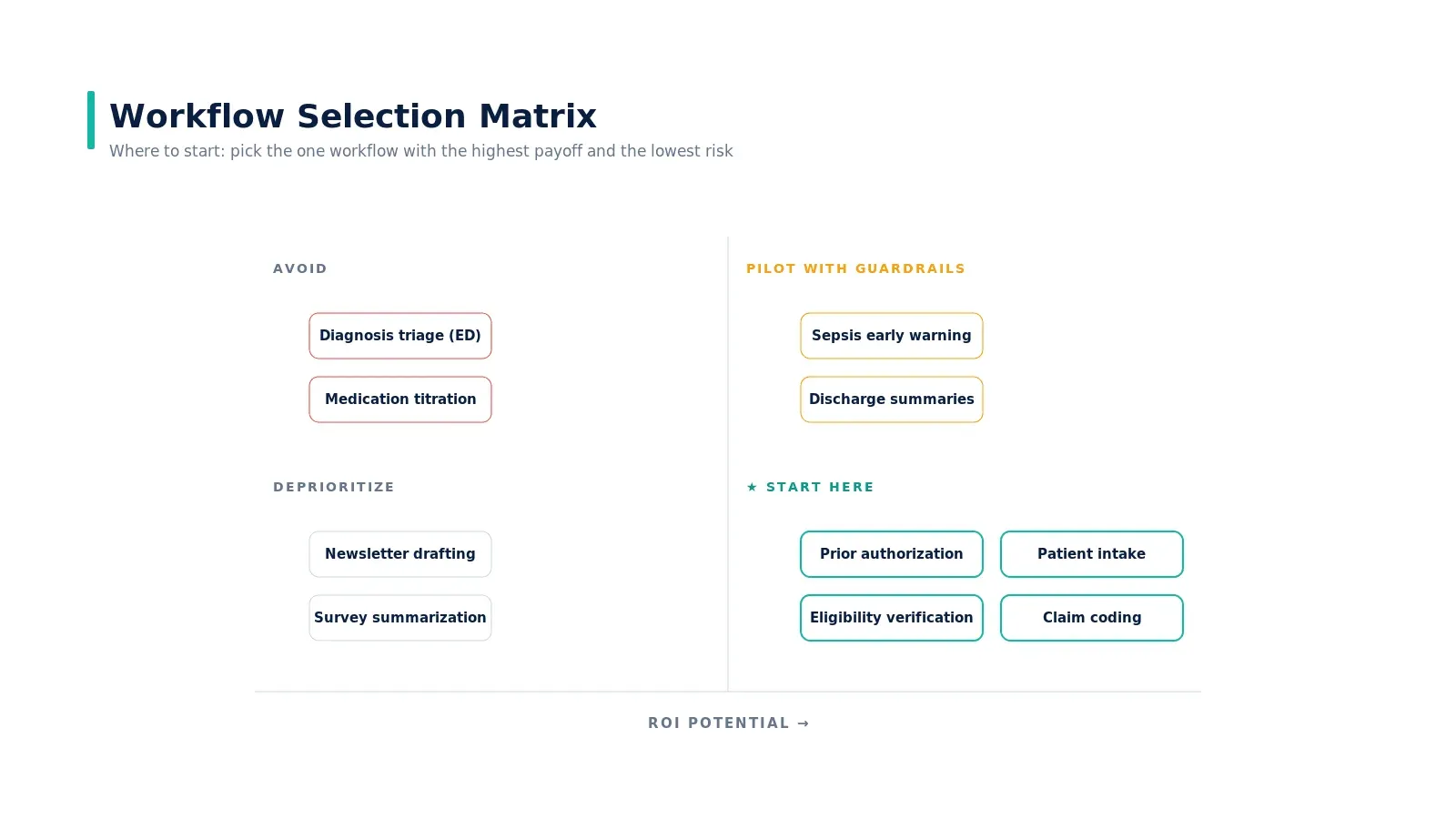

Where to start: the workflow selection matrix

The decision comes down to two questions: how big is the financial or operational return, and how much clinical risk does the workflow carry? Eligibility verification, prior authorization, patient intake, and claim coding sit firmly in the start-here quadrant. Sepsis early warning sits in pilot-with-guardrails. Diagnosis from imaging alone sits in avoid for now, not because it cannot be done, but because the liability and reimbursement environment is not yet aligned. We expand on this in top use cases of AI agents in healthcare and why prior authorization is broken.

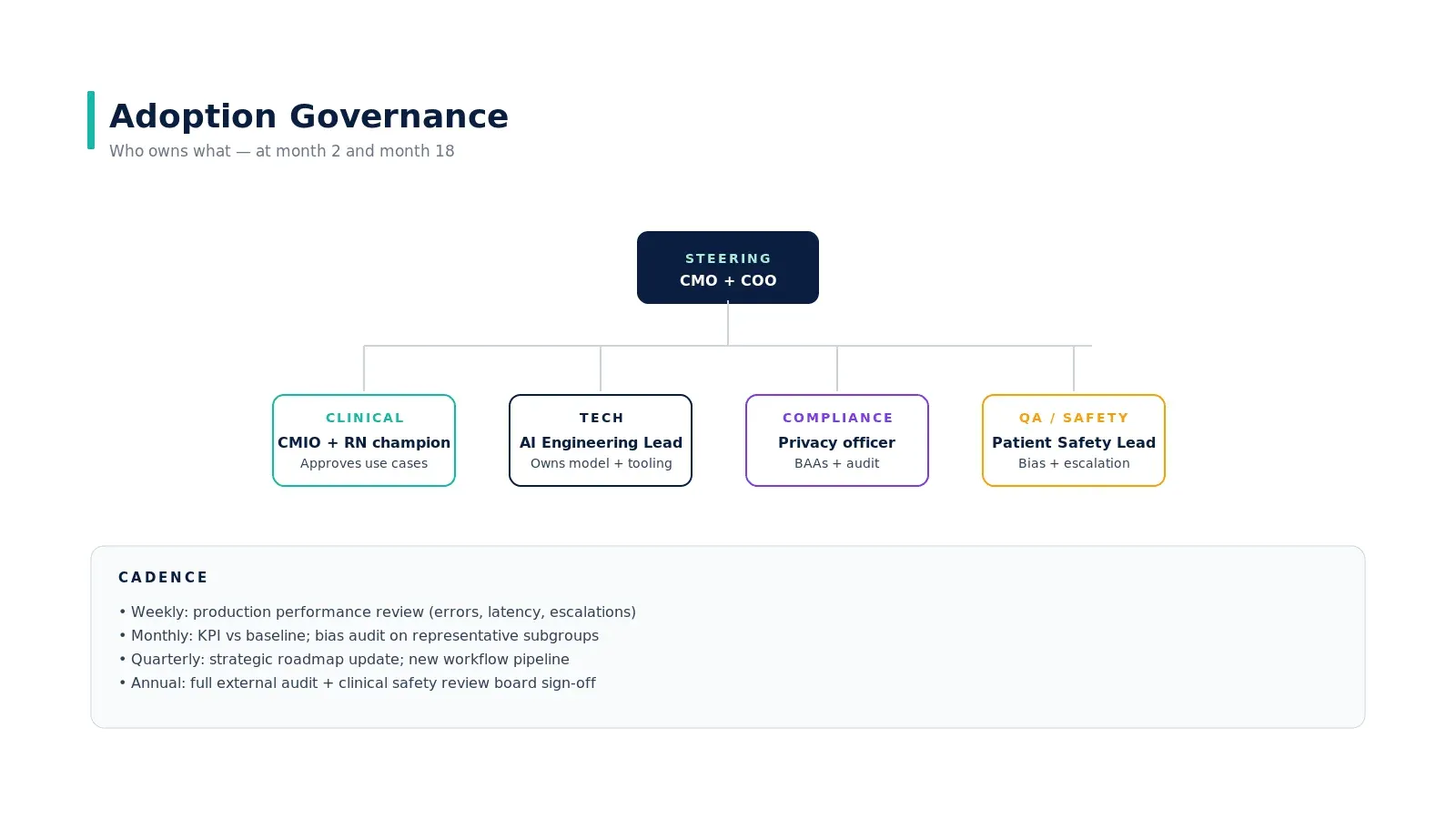

The governance you build now matters more in year two

Governance is operational infrastructure, not a compliance checkbox. The structure that holds up at month 18 has a steering committee that includes both the CMO and the COO, four functional lanes (clinical, tech, compliance, QA/safety), and four cadences (weekly performance, monthly KPIs and bias audits, quarterly strategy, annual external audit).
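The lanes and cadences can be written down as plain configuration so the review calendar is explicit rather than tribal knowledge. The sketch below is one hypothetical encoding: the scheduling rules (weekly on Mondays, monthly on the 1st, quarterly on the first day of each quarter, annual on January 1) are assumptions, not part of the framework.

```python
from datetime import date

LANES = ("clinical", "tech", "compliance", "QA/safety")

CADENCES = {
    "weekly": ["performance review"],
    "monthly": ["KPI review", "bias audit"],
    "quarterly": ["strategy review"],
    "annual": ["external audit"],
}


def reviews_due(day: date):
    """Return the governance reviews that fall on a given day.

    Scheduling rules are illustrative assumptions: weekly on Mondays,
    monthly on the 1st, quarterly on the 1st of Jan/Apr/Jul/Oct,
    annual on January 1.
    """
    due = []
    if day.weekday() == 0:  # Monday
        due += CADENCES["weekly"]
    if day.day == 1:
        due += CADENCES["monthly"]
        if day.month in (1, 4, 7, 10):
            due += CADENCES["quarterly"]
        if day.month == 1:
            due += CADENCES["annual"]
    return due


print(reviews_due(date(2024, 10, 1)))
print(reviews_due(date(2024, 10, 7)))
```

Keeping the schedule in one small structure also makes it easy to see when a cadence has quietly been dropped.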

The named clinical owner is the most consequential role. Without one, the agent's clinical performance will degrade and no one will catch it until a patient event. The clinical owner is a physician or clinical informaticist who reviews the agent's outputs against clinical reality, not against vendor metrics.

What success looks like at month 18

Eight metrics any hospital board will recognize as outcomes:

- After-hours documentation time per encounter

- Percent of prior authorizations submitted in under four hours

- First-pass claim denial rate

- Patient access Net Promoter Score (NPS)

- Appointments per FTE per week

- Front-office overtime spend

- Maslach Burnout Inventory (MBI) emotional exhaustion score

- Months to net positive ROI

None of these depend on the vendor. All depend on whether you tracked the baseline before go-live. If you cannot state the number on day one, the project is not ready to start.
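A day-one readiness check can be as simple as refusing to start while any baseline is blank. The sketch below assumes baselines are kept in a dictionary keyed by metric; the key names are hypothetical labels for the eight metrics listed above, and the sample values are invented.

```python
MONTH_18_METRICS = [
    "after_hours_documentation_time_per_encounter",
    "pct_prior_auths_submitted_under_4h",
    "first_pass_claim_denial_rate",
    "patient_access_nps",
    "appointments_per_fte_per_week",
    "front_office_overtime_spend",
    "mbi_emotional_exhaustion_score",
    "months_to_net_positive_roi",
]


def missing_baselines(baselines, required=MONTH_18_METRICS):
    """List the required metrics that have no recorded baseline value."""
    return [m for m in required if baselines.get(m) is None]


def ready_to_start(baselines, required=MONTH_18_METRICS):
    """The project is ready only when every required baseline is recorded."""
    return not missing_baselines(baselines, required)


# Illustrative, made-up values; two baselines have not been captured yet.
baselines = {
    "after_hours_documentation_time_per_encounter": 6.5,
    "pct_prior_auths_submitted_under_4h": 22.0,
    "first_pass_claim_denial_rate": 0.11,
    "patient_access_nps": 31,
    "appointments_per_fte_per_week": 58,
    "front_office_overtime_spend": None,
}

print(ready_to_start(baselines))
print(missing_baselines(baselines))
```

A metric that is absent from the dictionary is treated the same as one recorded as `None`, so forgetting to add a row cannot pass the check by accident.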

What this framework does not do

It does not pick the vendor for you. It does not solve clinical change management. It does not eliminate the political work of getting a CFO and CMIO to agree on a single workflow. What it does is force the structure that distinguishes a credible 18-month plan from a 12-week pilot that nobody can build on.

For the operating muscle behind this — the pattern observed across the hospitals that have actually compounded — read our piece on how AI agents actually work in production systems and the case study on eliminating the prior authorization bottleneck.

Real-world example

Mass General Brigham's published deployment of ambient documentation across 18,000 clinicians offers a useful reference point. Their rollout used phased exit gates, a clinical informatics lead as named owner, and KPI tracking against burnout instruments — the same pattern this framework describes. Mayo Clinic's revenue cycle deployment of agentic automation followed an analogous structure: shadow → supervised → production with rollback triggers defined upfront. Hospitals that ship this pattern publicly tend to share two things — they started with a high-ROI, low-risk workflow, and they kept governance ahead of scale, not behind it.

Key takeaways

- Pick one workflow first. A specific, measurable target — not "an AI strategy."

- Audit your data infrastructure before talking to vendors. The vendor's pitch comes second; FHIR availability, structured data ratio, and identity matching come first.

- Shadow → Supervised → Production with explicit exit gates. Skipping a gate produces technical debt you are still paying down at month 18.

- Name a clinical owner before go-live. Without one, performance degrades unnoticed until a patient event.

- Measure what changes, not what the vendor reports. Set baselines on documentation time, prior authorization cycle time, denial rate, and clinician satisfaction before the agent goes live.

Want to deploy an AI Agent inside your hospital or healthcare product? Get in touch with our team — we will scope the workflow, governance, and 90-day rollout plan against your own baseline metrics.

Learn more about AI Agents in Healthcare → read the full pillar guide.
