Here is a sentence that will get you fired from any payer's engineering team: "The AI said the patient probably qualifies for coverage."

Probably is not a reimbursement decision. Coverage determinations, clinical protocol adherence, and compliance validations must be deterministic. Same input, same output, every single time, with a complete audit trail that a CMS auditor can follow three years later. This is the non-negotiable contract that healthcare automation must honor.

Yet the industry is racing to deploy agentic AI systems that are, by mathematical design, probabilistic. Large language models do not guarantee the same output for the same input. They hallucinate. They drift. They cannot explain their reasoning in the structured, reproducible format that HIPAA, CMS, and state regulators demand.

The answer is not to abandon AI. The answer is to contain it within a deterministic execution framework — using BPMN for workflow orchestration, DMN for rules-based decisions, and AI agents only at the specific judgment points where unstructured reasoning genuinely adds value.

This article lays out the architecture that makes this work. Not theory — production patterns used by organizations deploying agentic AI in healthcare workflows today.

The Fundamental Problem: LLMs Are Probabilistic. Healthcare Compliance Is Not.

Every large language model — GPT-4, Claude, Gemini, Llama — works by predicting the next most likely token in a sequence. Even with temperature set to zero, the outputs are non-deterministic across model versions, infrastructure changes, and context window variations. According to the American Medical Association, nearly 1 in 4 physicians report that prior authorization delays have led to a serious adverse event for a patient. The stakes of getting automation wrong in this domain are clinical, not just financial.

Consider what "deterministic" means in healthcare billing and compliance:

- Prior authorization: Given payer X, procedure code Y, and diagnosis Z, the answer to "Is prior auth required?" must be the same every time. It is a lookup, not a judgment call.

- Fee schedule validation: CPT 99214 with modifier 25 for payer Aetna in Texas has one allowed amount. Not a range. Not an estimate.

- Clinical protocol adherence: CMS Sepsis Bundle (SEP-1) requires lactate measurement within 3 hours of severe sepsis identification.

- Compliance checks: HIPAA minimum necessary standard, 42 CFR Part 2 substance use disorder protections, state consent laws — all binary rule evaluations.

An LLM cannot guarantee binary consistency. A clinical decision support system built purely on LLM agents will eventually produce different outputs for identical inputs. In healthcare, that inconsistency is a compliance violation, a denied claim, or a patient safety event.
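To make "binary rule evaluation" concrete, the clinical protocol bullet above can be written as a pure function. This is a minimal Go sketch that assumes the rule is exactly as stated in this article (lactate measured within 3 hours after identification). The names `lactateWithinWindow` and `at` are hypothetical, and the real SEP-1 specification carries more conditions than this.

```go
package main

import (
	"fmt"
	"time"
)

// lactateWindow is the 3-hour window cited in the article.
const lactateWindow = 3 * time.Hour

// lactateWithinWindow reports whether the lactate was measured at or after
// identification and no later than 3 hours afterwards. Measurements taken
// before identification are treated as failing in this simplified sketch.
func lactateWithinWindow(identifiedAt, measuredAt time.Time) bool {
	elapsed := measuredAt.Sub(identifiedAt)
	return elapsed >= 0 && elapsed <= lactateWindow
}

// at builds a timestamp on a fixed, arbitrary example date.
func at(hour, minute int) time.Time {
	return time.Date(2026, time.March, 1, hour, minute, 0, 0, time.UTC)
}

func main() {
	fmt.Println(lactateWithinWindow(at(10, 0), at(12, 30))) // inside the window
	fmt.Println(lactateWithinWindow(at(10, 0), at(13, 1)))  // one minute late
}
```

The function has no hidden state and no randomness, so identical timestamps always produce the identical answer, which is the property the rest of this article depends on.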

BPMN: The Visual Language for Healthcare Process Orchestration

Business Process Model and Notation (BPMN) is an ISO standard (ISO/IEC 19510:2013) for defining executable business processes as visual diagrams. In healthcare, it provides something no agent framework offers: a single, auditable, version-controlled definition of how a process should execute.

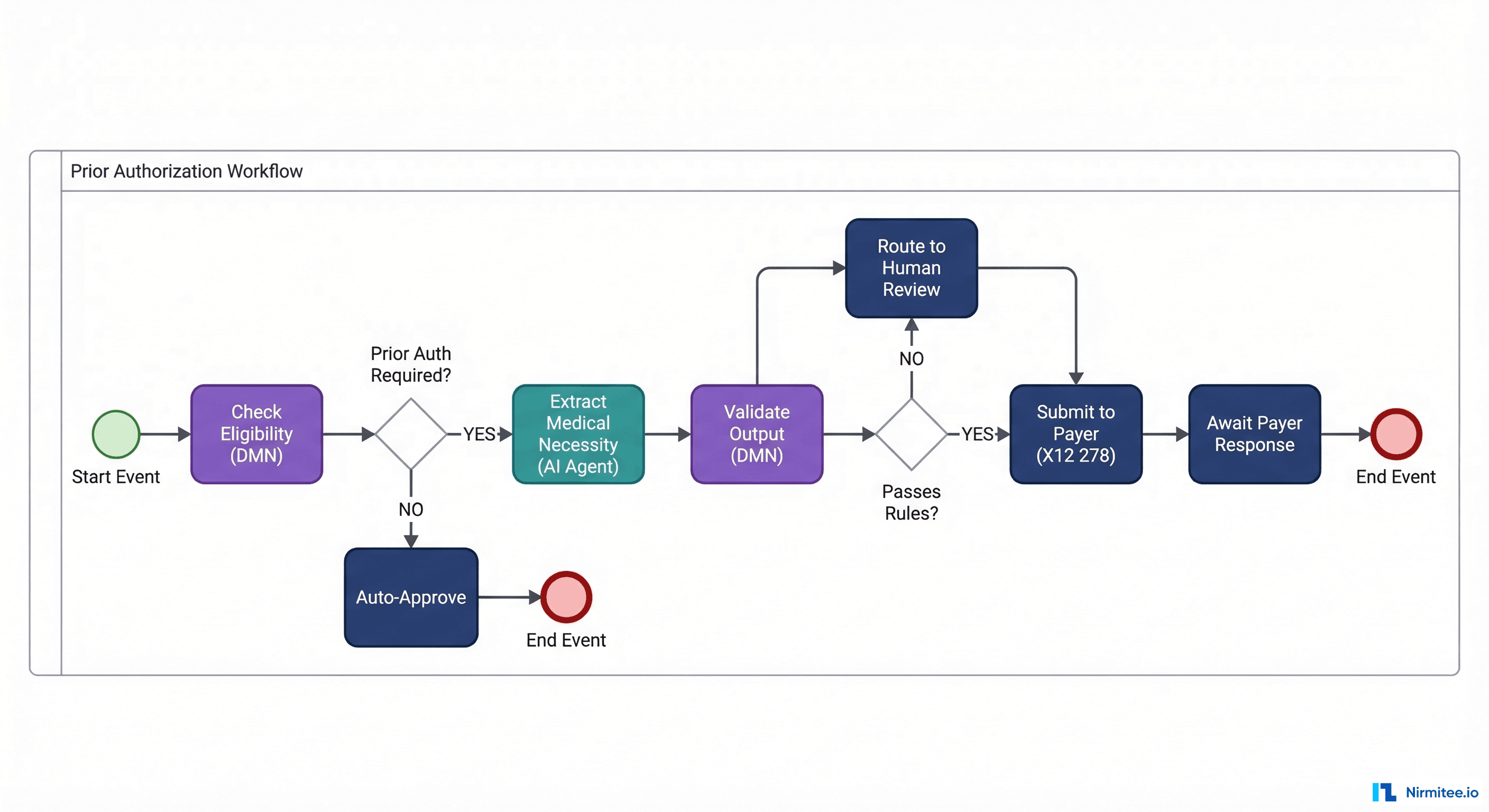

A BPMN diagram for prior authorization defines every step, every gateway decision, every exception path, and every human review point — before a single line of code runs. The diagram is the execution. Not documentation of the execution. The actual runtime artifact.

Why BPMN Matters for Healthcare Specifically

- Regulatory transparency: When CMS or a state regulator asks, "How do you determine prior authorization requirements?", you show them the BPMN diagram. It is human-readable. A compliance officer, a clinician, and an engineer can all read the same artifact.

- Version-controlled process changes: When Aetna updates its prior auth rules on January 1, you update the BPMN definition and deploy a new version. The old version continues running for in-flight cases. Full audit trail of what process was in effect when.

- Exception handling by design: Healthcare processes have more exception paths than happy paths. BPMN models these explicitly — timeout escalations, human review loops, retry policies, compensation flows for partial failures.

- Durable execution: BPMN engines like Camunda (used by 3 of the top 6 US healthcare companies) persist process state. If a server crashes mid-workflow, execution resumes from the last checkpoint. Prior auth workflows that span days — waiting for payer responses — survive infrastructure failures.
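The version-control point above (in-flight cases stay on the version they started with) can be illustrated with a small sketch. This is not how Camunda stores deployments; `Registry`, `ProcessDefinition`, and `Instance` are hypothetical names used only to show the idea of pinning an instance to a definition version.

```go
package main

import "fmt"

// ProcessDefinition is a hypothetical stand-in for one deployed BPMN version.
type ProcessDefinition struct {
	Key     string
	Version int
}

// Registry keeps every deployed version; deploying never overwrites.
type Registry struct {
	versions map[string][]ProcessDefinition
}

func NewRegistry() *Registry {
	return &Registry{versions: map[string][]ProcessDefinition{}}
}

// Deploy appends a new version for the key and returns it.
func (r *Registry) Deploy(key string) ProcessDefinition {
	def := ProcessDefinition{Key: key, Version: len(r.versions[key]) + 1}
	r.versions[key] = append(r.versions[key], def)
	return def
}

// Instance records the definition version it was started on.
type Instance struct {
	ID         string
	Definition ProcessDefinition
}

// Start pins a new instance to the latest version at start time.
func (r *Registry) Start(id, key string) (Instance, error) {
	vs := r.versions[key]
	if len(vs) == 0 {
		return Instance{}, fmt.Errorf("no deployed definition for %q", key)
	}
	return Instance{ID: id, Definition: vs[len(vs)-1]}, nil
}

func main() {
	reg := NewRegistry()
	reg.Deploy("prior-auth")
	inFlight, _ := reg.Start("case-1", "prior-auth")
	reg.Deploy("prior-auth") // payer rules change; a new version is deployed
	fresh, _ := reg.Start("case-2", "prior-auth")
	fmt.Println(inFlight.Definition.Version, fresh.Definition.Version)
}
```

Because each instance carries its own version number, an auditor can ask which process definition governed a given case and get a single answer.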

Healthcare Workflows That Map Naturally to BPMN

| Workflow | Why BPMN Fits | Key BPMN Elements Used |
|---|---|---|
| Prior Authorization | Multi-step, multi-day, multiple decision points | Service tasks, gateways, timer events, human tasks |
| Discharge Planning | Cross-department coordination, checklist completion | Parallel gateways, sub-processes, signal events |
| Referral Routing | Rules-based routing with escalation paths | Business rule tasks, exclusive gateways, escalation events |
| Claims Adjudication | Sequential validation with branching logic | Decision tasks (DMN), error boundary events |
| Care Gap Closure | Patient outreach with retry and escalation | Timer events, loop markers, message events |

DMN: Decision Tables That Encode Payer Rules Without Ambiguity

Decision Model and Notation (DMN) is the companion standard to BPMN, designed specifically for encoding business decisions as structured, testable, version-controlled tables. Where BPMN defines how a process flows, DMN defines what decisions are made at each decision point.

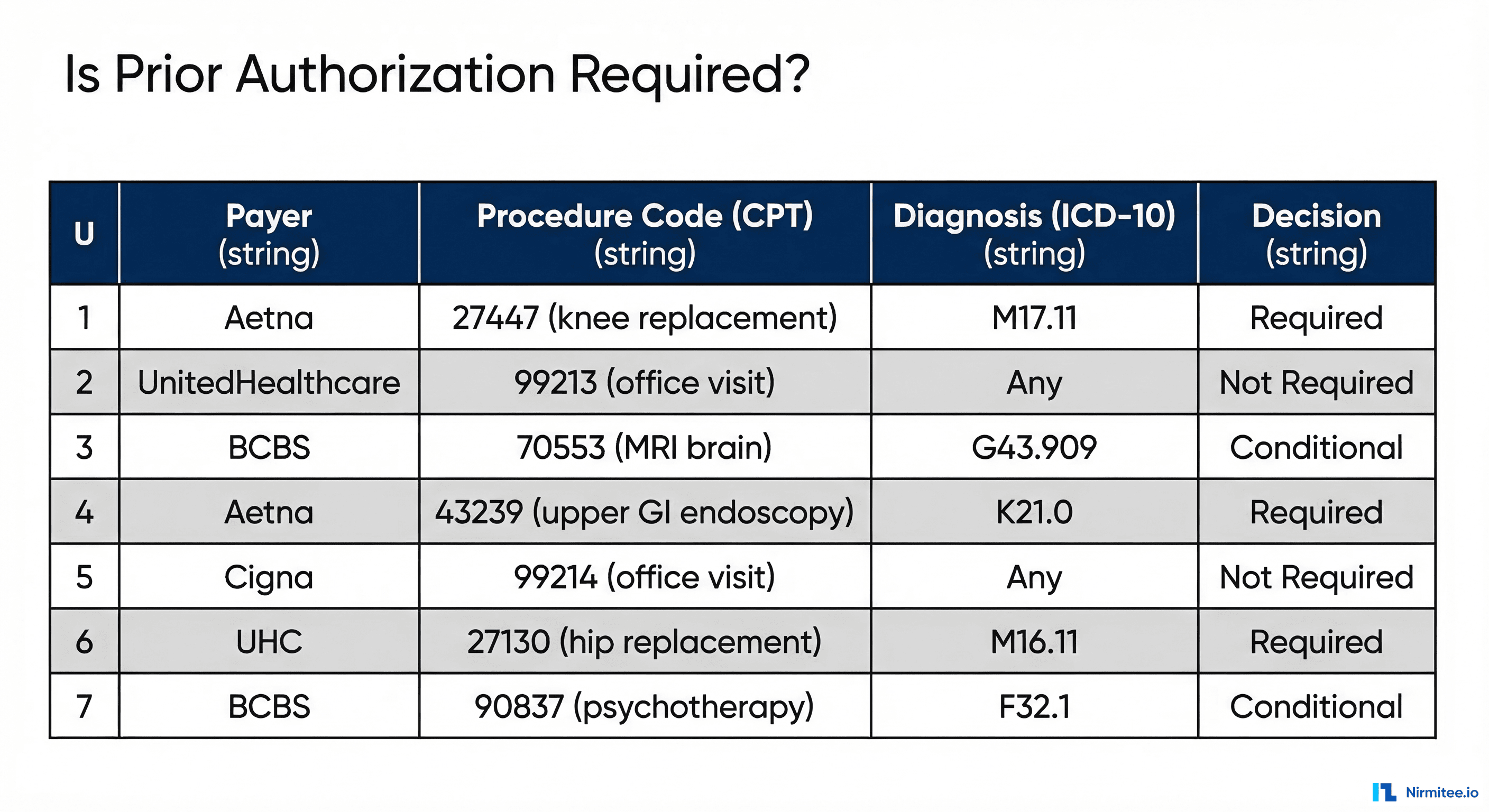

A DMN decision table for prior authorization requirements might look like this:

Hit policy: U (Unique). Inputs: Payer, CPT Code, ICD-10 Category. Output: Decision.

| Rule | Payer | CPT Code | ICD-10 Category | Decision |
|---|---|---|---|---|
| 1 | Aetna | 27447 | M17.* | Required |
| 2 | UnitedHealthcare | 99213 | Any | Not Required |
| 3 | BCBS | 70553 | G43.* | Conditional |
| 4 | Aetna | 43239 | K21.* | Required |
| 5 | Cigna | 99214 | Any | Not Required |
| 6 | UnitedHealthcare | 27130 | M16.* | Required |

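The hit policy "U" (Unique) requires that rules never overlap, so at most one rule can match any given input combination. No ambiguity. No probability. If the input is Aetna + CPT 27447 + ICD-10 M17.11, the output is "Required": today, tomorrow, and when the auditor reviews it in 2029. An input that matches no rule yields no result, which the workflow should treat as an explicit exception rather than a guess.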
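The table above can be read as code. The following is a minimal Go sketch of a Unique hit policy over those six example rows, not a DMN engine. `Rule`, `Evaluate`, and the two error values are hypothetical names, and matching `M17.*` as the string prefix `M17.` is an assumption made for the illustration.

```go
package main

import (
	"errors"
	"fmt"
	"strings"
)

// Rule mirrors one row of the example table. ICDPrefix "" means "Any".
type Rule struct {
	ID        int
	Payer     string
	CPT       string
	ICDPrefix string
	Decision  string
}

var rules = []Rule{
	{1, "Aetna", "27447", "M17.", "Required"},
	{2, "UnitedHealthcare", "99213", "", "Not Required"},
	{3, "BCBS", "70553", "G43.", "Conditional"},
	{4, "Aetna", "43239", "K21.", "Required"},
	{5, "Cigna", "99214", "", "Not Required"},
	{6, "UnitedHealthcare", "27130", "M16.", "Required"},
}

var (
	ErrNoMatch = errors.New("no rule matched")
	ErrOverlap = errors.New("unique hit policy violated: more than one rule matched")
)

// Evaluate returns the single matching rule. It scans every row so that an
// overlapping table is reported as an error instead of silently picking one.
func Evaluate(payer, cpt, icd10 string) (Rule, error) {
	var hit *Rule
	for i := range rules {
		r := &rules[i]
		if r.Payer != payer || r.CPT != cpt || !strings.HasPrefix(icd10, r.ICDPrefix) {
			continue
		}
		if hit != nil {
			return Rule{}, ErrOverlap
		}
		hit = r
	}
	if hit == nil {
		return Rule{}, ErrNoMatch
	}
	return *hit, nil
}

func main() {
	r, err := Evaluate("Aetna", "27447", "M17.11")
	fmt.Println(r.ID, r.Decision, err)
}
```

Returning an error for the no-match case, rather than a default decision, keeps unknown payer and code combinations visible so they can be routed to a person.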

What DMN Handles in Healthcare

- Payer-specific prior auth rules: Each payer's requirements encoded as decision tables, updated when contracts change

- Clinical protocol validation: Sepsis bundle criteria, stroke protocol timelines, medication interaction checks

- Fee schedule lookups: Allowed amounts by payer, geography, modifier, and place of service

- Eligibility determination: Coverage verification logic that varies by plan type, state, and benefit design

- AI output validation: Rules that check whether an AI agent's extracted data meets minimum criteria before proceeding

The critical insight: DMN tables are maintainable by non-engineers. A revenue cycle analyst can update payer rules in a DMN editor without touching code, deploying containers, or understanding Python. This is how rules should be managed in healthcare — by the domain experts who understand them.

Where Agentic AI Fits: The Judgment Calls Between Structured Steps

If BPMN handles the "how" and DMN handles the "what," agentic AI handles the "interpret." There are specific points in healthcare workflows where structured rules cannot operate because the input is unstructured, ambiguous, or requires clinical reasoning that no decision table can encode.

These are the only points where AI agents should operate:

- Medical necessity extraction: Reading a physician's clinical note — free-text, dictated, full of abbreviations — and extracting the structured medical necessity justification a payer requires. This is NLP, not a rule.

- Appeal letter generation: When a prior auth is denied, generating a clinically-grounded appeal that references the patient's specific clinical history, relevant clinical guidelines, and the payer's stated denial reason. This requires reasoning over unstructured data.

- Clinical summarization: Condensing a 200-page medical record into a 2-page summary for a utilization review nurse. No rule table can do this.

- Coding suggestion: Given a clinical encounter note, suggest the most appropriate CPT and ICD-10 codes. The final code assignment is validated by DMN rules, but the initial suggestion requires understanding clinical language.

- Anomaly detection: Identifying patterns in claims data that suggest upcoding, unbundling, or other billing anomalies. Pattern recognition across unstructured signals — an AI strength.

Notice the pattern: AI operates on unstructured inputs and produces structured outputs that are then validated by deterministic rules. The agent never makes the final decision. It provides input to a decision framework that guarantees consistency.

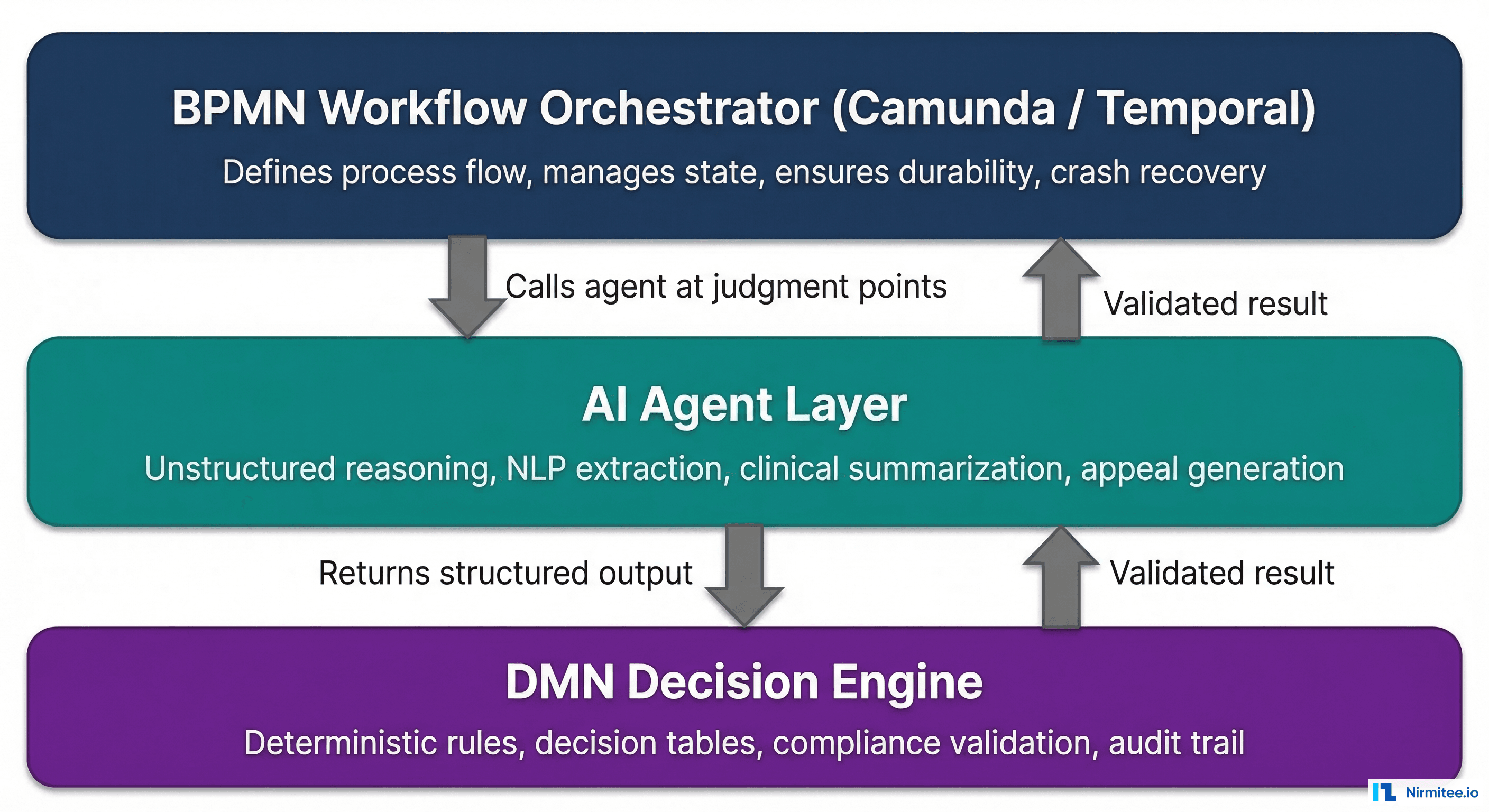

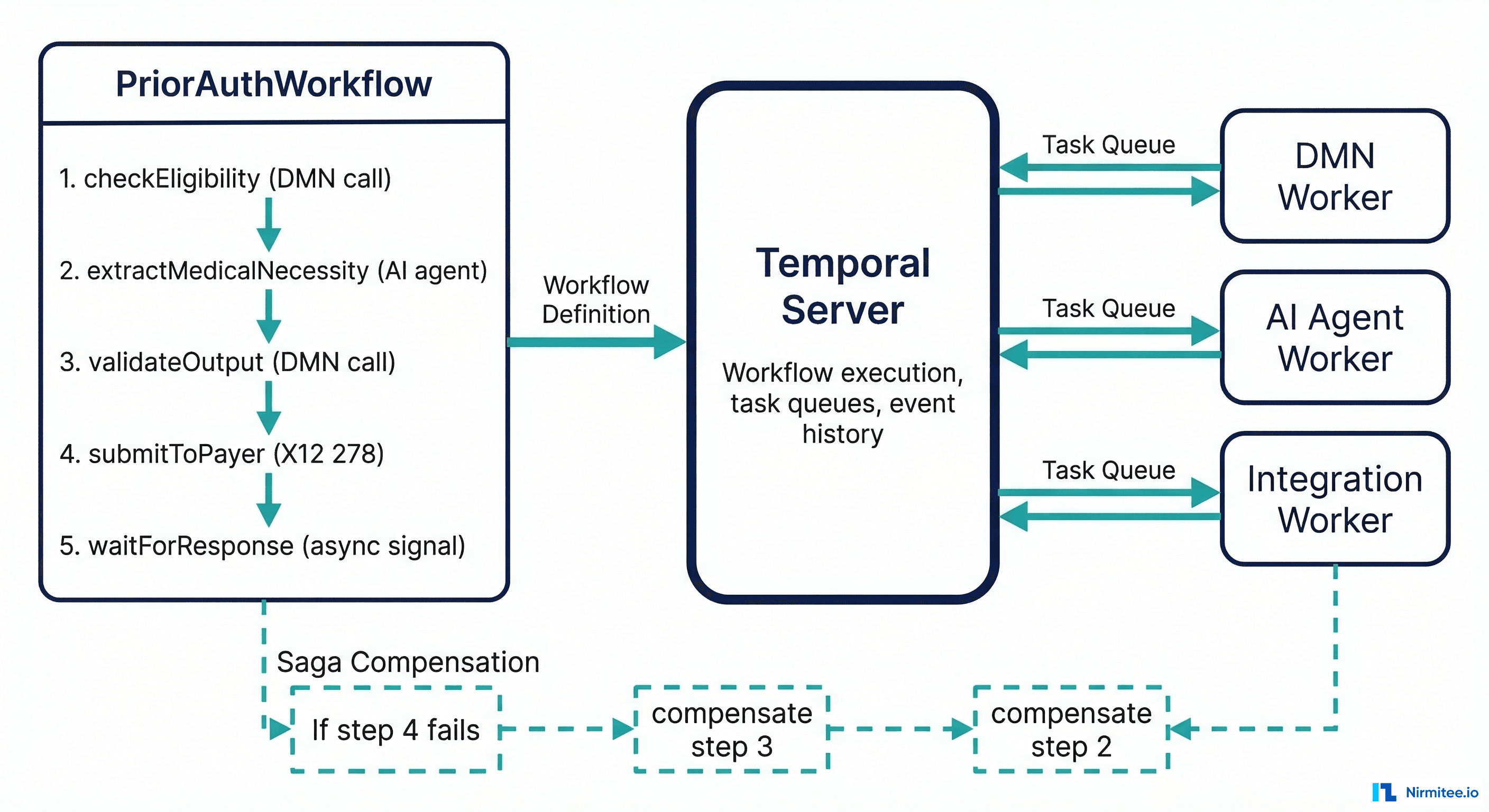

The Architecture: BPMN Orchestrator + AI Agents + DMN Validation

Here is the reference architecture that combines all three layers:

- BPMN orchestrator (Camunda 8 or Temporal) manages the end-to-end workflow. It defines the sequence of steps, persists state, handles timeouts, and manages human task assignments.

- At judgment points, the BPMN workflow calls an AI agent as a service task. The agent receives structured input (patient ID, note ID, payer context) and returns structured output (extracted medical necessity, confidence score, supporting evidence).

- The AI agent's output flows to a DMN decision table that validates it against deterministic rules. Does the extracted diagnosis match a covered condition? Does the confidence score meet the minimum threshold? Are all required fields populated?

- If DMN validation passes, the workflow continues to the next step (payer submission, code assignment, etc.).

- If DMN validation fails, the workflow routes to a human review task. A clinician or coder reviews the AI's output, corrects it if needed, and the workflow resumes.

This architecture ensures that no AI output reaches a payer, a patient record, or a billing system without passing through deterministic validation. The AI is powerful but contained. The rules are rigid but only applied to structured decisions. The workflow engine ensures nothing falls through the cracks.
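The validation gate in the third and fifth bullets can be sketched as a plain function. This is a minimal Go illustration under stated assumptions: `MedicalNecessity`, `Validate`, and the `Route` values are hypothetical names, the covered-diagnosis set is passed in as a map, and the confidence threshold is a parameter rather than a value taken from any real payer rule.

```go
package main

import "fmt"

// MedicalNecessity is a hypothetical typed result from the AI agent.
type MedicalNecessity struct {
	DiagnosisCode string
	Summary       string
	Evidence      []string
	Confidence    float64
}

// Route is where the workflow goes after validation.
type Route string

const (
	RouteContinue    Route = "continue"
	RouteHumanReview Route = "human_review"
)

// Validate applies three deterministic checks to the agent output and
// returns the route plus every failed check, so the reviewer sees all
// reasons at once.
func Validate(n MedicalNecessity, covered map[string]bool, minConfidence float64) (Route, []string) {
	var reasons []string
	if n.Summary == "" || len(n.Evidence) == 0 {
		reasons = append(reasons, "required fields missing")
	}
	if !covered[n.DiagnosisCode] {
		reasons = append(reasons, "diagnosis not in covered set")
	}
	if n.Confidence < minConfidence {
		reasons = append(reasons, "confidence below threshold")
	}
	if len(reasons) > 0 {
		return RouteHumanReview, reasons
	}
	return RouteContinue, nil
}

func main() {
	covered := map[string]bool{"M17.11": true}
	out := MedicalNecessity{
		DiagnosisCode: "M17.11",
		Summary:       "Severe osteoarthritis limiting ADLs",
		Evidence:      []string{"clinical-note-789"},
		Confidence:    0.94,
	}
	route, reasons := Validate(out, covered, 0.85)
	fmt.Println(route, reasons)
}
```

The agent's confidence score is only one input to the gate. The routing decision itself is made by code that gives the same answer for the same inputs.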

Camunda 8 Implementation: BPMN with AI Service Tasks

Camunda 8 is a cloud-native BPMN engine that supports event-driven healthcare integration architectures. Here is a simplified BPMN XML definition for a prior authorization workflow with an AI service task:

```xml
<?xml version="1.0" encoding="UTF-8"?>
<bpmn:definitions xmlns:bpmn="http://www.omg.org/spec/BPMN/20100524/MODEL"
                  xmlns:zeebe="http://camunda.org/schema/zeebe/1.0">
  <!-- Simplified: sequence flows, gateway conditions, and diagram layout are omitted -->
  <bpmn:process id="prior-auth-workflow" name="Prior Authorization" isExecutable="true">

    <!-- Start: Receive prior auth request -->
    <bpmn:startEvent id="start" name="PA Request Received" />

    <!-- Step 1: Check eligibility via DMN -->
    <bpmn:businessRuleTask id="check-eligibility" name="Check Eligibility">
      <bpmn:extensionElements>
        <zeebe:calledDecision decisionId="prior-auth-required"
                              resultVariable="eligibilityResult" />
      </bpmn:extensionElements>
    </bpmn:businessRuleTask>

    <!-- Gateway: Is prior auth required? -->
    <bpmn:exclusiveGateway id="pa-required-gw" name="Prior Auth Required?" />

    <!-- Step 2: AI Agent extracts medical necessity -->
    <bpmn:serviceTask id="extract-necessity" name="Extract Medical Necessity (AI)">
      <bpmn:extensionElements>
        <zeebe:taskDefinition type="ai-medical-necessity-extraction" />
        <zeebe:taskHeaders>
          <zeebe:header key="model" value="clinical-extraction-v3" />
          <zeebe:header key="minConfidence" value="0.85" />
        </zeebe:taskHeaders>
      </bpmn:extensionElements>
    </bpmn:serviceTask>

    <!-- Step 3: Validate AI output via DMN -->
    <bpmn:businessRuleTask id="validate-output" name="Validate AI Output">
      <bpmn:extensionElements>
        <zeebe:calledDecision decisionId="validate-necessity-output"
                              resultVariable="validationResult" />
      </bpmn:extensionElements>
    </bpmn:businessRuleTask>

    <!-- Gateway: Passes validation? -->
    <bpmn:exclusiveGateway id="validation-gw" name="Passes Rules?" />

    <!-- Human review if validation fails -->
    <bpmn:userTask id="human-review" name="Clinical Review Required">
      <bpmn:extensionElements>
        <zeebe:assignmentDefinition assignee="clinical-review-team" />
      </bpmn:extensionElements>
    </bpmn:userTask>

    <!-- Step 4: Submit to payer -->
    <bpmn:serviceTask id="submit-to-payer" name="Submit X12 278">
      <bpmn:extensionElements>
        <zeebe:taskDefinition type="x12-278-submission" />
      </bpmn:extensionElements>
    </bpmn:serviceTask>

    <bpmn:endEvent id="end" name="PA Complete" />

  </bpmn:process>
</bpmn:definitions>
```

The key elements: the businessRuleTask calls DMN decision tables directly. The serviceTask with ai-medical-necessity-extraction type routes to a Zeebe job worker that calls the LLM endpoint. The userTask creates a human review assignment when AI output fails DMN validation. All of this is version-controlled, auditable, and survives crashes.

Temporal Implementation: Workflow-as-Code with AI Activities

For teams that prefer workflow-as-code over visual BPMN modeling, Temporal offers the same durability guarantees with a developer-first approach. Here is the same prior authorization workflow in Temporal (Go SDK):

```go
package workflows

import (
	"time"

	"go.temporal.io/sdk/temporal"
	"go.temporal.io/sdk/workflow"
)

// AuditEntry, EligibilityResult, MedicalNecessity, ValidationResult,
// HumanReviewResult, SubmissionResult, and the *Activity functions are
// assumed to be defined elsewhere in this package.

type PriorAuthRequest struct {
	PatientID      string
	PayerID        string
	ProcedureCode  string
	DiagnosisCode  string
	ClinicalNoteID string
}

type PriorAuthResult struct {
	Approved       bool
	DecisionRuleID string
	AuditTrail     []AuditEntry
}

func PriorAuthWorkflow(ctx workflow.Context, req PriorAuthRequest) (*PriorAuthResult, error) {
	// Default options for the short, automated activities.
	opts := workflow.ActivityOptions{
		StartToCloseTimeout: 30 * time.Second,
		RetryPolicy: &temporal.RetryPolicy{
			MaximumAttempts: 3,
		},
	}
	ctx = workflow.WithActivityOptions(ctx, opts)
	audit := []AuditEntry{}

	// Step 1: Check eligibility via DMN engine
	var eligibility EligibilityResult
	err := workflow.ExecuteActivity(ctx, CheckEligibilityActivity, req).Get(ctx, &eligibility)
	if err != nil {
		return nil, err
	}
	audit = append(audit, AuditEntry{
		Step:   "eligibility_check",
		Rule:   eligibility.MatchedRule,
		Result: eligibility.Decision,
	})
	if !eligibility.PriorAuthRequired {
		return &PriorAuthResult{Approved: true, AuditTrail: audit}, nil
	}

	// Step 2: AI agent extracts medical necessity from clinical note
	var necessity MedicalNecessity
	err = workflow.ExecuteActivity(ctx, ExtractMedicalNecessityActivity,
		req.ClinicalNoteID, req.DiagnosisCode).Get(ctx, &necessity)
	if err != nil {
		return nil, err
	}
	audit = append(audit, AuditEntry{
		Step:       "ai_extraction",
		Result:     necessity.Summary,
		Confidence: necessity.Confidence,
	})

	// Step 3: Validate AI output against DMN rules
	var validation ValidationResult
	err = workflow.ExecuteActivity(ctx, ValidateNecessityActivity,
		necessity, req.PayerID).Get(ctx, &validation)
	if err != nil {
		return nil, err
	}

	// Step 4: Route to human review if validation fails
	if !validation.Passed {
		// A reviewer needs far longer than the 30-second default above, so the
		// review gets its own options. The 72-hour limit is an assumed SLA;
		// waiting on a signal is a common alternative.
		reviewCtx := workflow.WithActivityOptions(ctx, workflow.ActivityOptions{
			StartToCloseTimeout: 72 * time.Hour,
		})
		var reviewResult HumanReviewResult
		err = workflow.ExecuteActivity(reviewCtx, RequestHumanReviewActivity,
			req, necessity, validation.FailureReasons).Get(reviewCtx, &reviewResult)
		if err != nil {
			return nil, err
		}
		necessity = reviewResult.CorrectedNecessity
	}

	// Step 5: Submit to payer via X12 278
	var submission SubmissionResult
	longOpts := workflow.ActivityOptions{
		StartToCloseTimeout: 24 * time.Hour, // Raise this if payer responses take days
		HeartbeatTimeout:    1 * time.Hour,
	}
	ctx = workflow.WithActivityOptions(ctx, longOpts)
	err = workflow.ExecuteActivity(ctx, SubmitToPayer278Activity,
		req, necessity).Get(ctx, &submission)
	if err != nil {
		return nil, err
	}

	return &PriorAuthResult{
		Approved:       submission.Approved,
		DecisionRuleID: eligibility.MatchedRule,
		AuditTrail:     audit,
	}, nil
}
```

The Temporal approach gives you the same guarantees: durable execution (survives server crashes), deterministic replay (every workflow can be replayed exactly), structured audit trail (every activity result is persisted), and human task management (via activity timeouts and signals). The AI activity worker calls the LLM endpoint, but the workflow engine, not the LLM, controls the process flow.

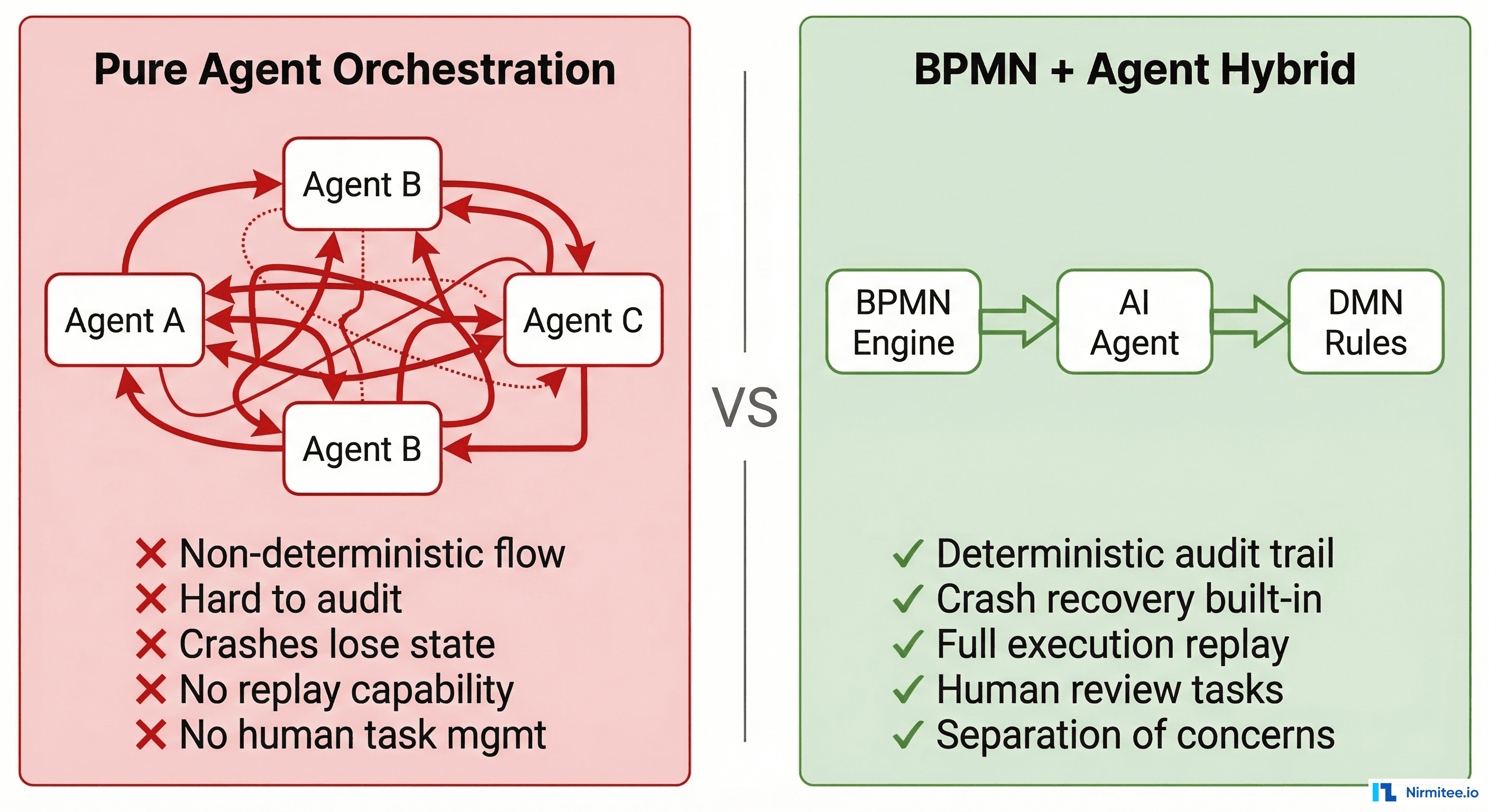

Why This Is Better Than Pure Agent Orchestration

Frameworks like LangGraph, CrewAI, and AutoGen enable agents to orchestrate other agents. This works for research prototypes. It fails catastrophically for production healthcare systems for five specific reasons:

1. Durable Execution

Agent frameworks typically keep state in process memory unless you add a persistence layer yourself. If the process crashes mid-execution, that state is lost. A prior auth workflow that spans 5 days waiting for a payer response cannot live in a Python process's memory. BPMN engines and Temporal persist state to a database by design. Execution survives node failures, deployments, and infrastructure changes.

2. Deterministic Audit Trail

Agent frameworks log "agent called tool X and returned Y." BPMN engines log "workflow instance #4521 executed business rule task 'check-eligibility,' evaluated DMN decision 'prior-auth-required' with inputs {payer: Aetna, CPT: 27447, ICD: M17.11}, matched rule 1, output: Required." The difference matters when a payer disputes a claim or a regulator audits your process.

3. Replay and Debugging

Temporal's killer feature: deterministic replay. You can take any completed workflow execution and replay it step-by-step to see exactly what happened. Replay reads each activity result from the recorded event history instead of calling the LLM again, so you get the same inputs, same outputs, and same decision paths. Try debugging an agent chain that ran two weeks ago with temperature-based token sampling. You cannot reproduce it.
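The idea behind replay can be shown with a toy model. This is not Temporal's implementation or API. It is a minimal Go sketch in which `Runner`, `Event`, and `priorAuthWorkflow` are hypothetical names: activity results are recorded on the first run and read back from history on replay, so a non-deterministic activity is never invoked a second time.

```go
package main

import "fmt"

// Event is one recorded activity result.
type Event struct {
	Activity string
	Result   string
}

// Runner executes activities live, or serves them from history when replaying.
type Runner struct {
	history []Event
	replay  bool
	next    int
}

// Execute runs the activity and records its result, or, in replay mode,
// returns the recorded result without calling the activity at all.
func (r *Runner) Execute(name string, activity func() string) (string, error) {
	if r.replay {
		if r.next >= len(r.history) || r.history[r.next].Activity != name {
			return "", fmt.Errorf("replay diverged at step %d (%q)", r.next, name)
		}
		res := r.history[r.next].Result
		r.next++
		return res, nil
	}
	res := activity()
	r.history = append(r.history, Event{Activity: name, Result: res})
	return res, nil
}

// priorAuthWorkflow is deterministic: its only outside input comes via Execute.
func priorAuthWorkflow(r *Runner, extract func() string) (string, error) {
	summary, err := r.Execute("extract_necessity", extract)
	if err != nil {
		return "", err
	}
	return "submitted: " + summary, nil
}

func main() {
	calls := 0
	// Stand-in for an LLM call: returns something different on every call.
	llm := func() string { calls++; return fmt.Sprintf("summary-%d", calls) }

	live := &Runner{}
	first, _ := priorAuthWorkflow(live, llm)
	replayed, _ := priorAuthWorkflow(&Runner{history: live.history, replay: true}, llm)
	fmt.Println(first == replayed, calls) // prints: true 1
}
```

The replayed run matches the original even though the stand-in LLM would have answered differently, because the workflow code only ever sees the recorded result.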

4. Human Task Management

Healthcare workflows require human-in-the-loop at specific points — clinical review, supervisor approval, and exception handling. BPMN engines have native userTask support for assignment, escalation, delegation, and SLA tracking. Agent frameworks treat humans as an afterthought — a tool the agent can call, rather than a first-class participant in the process.

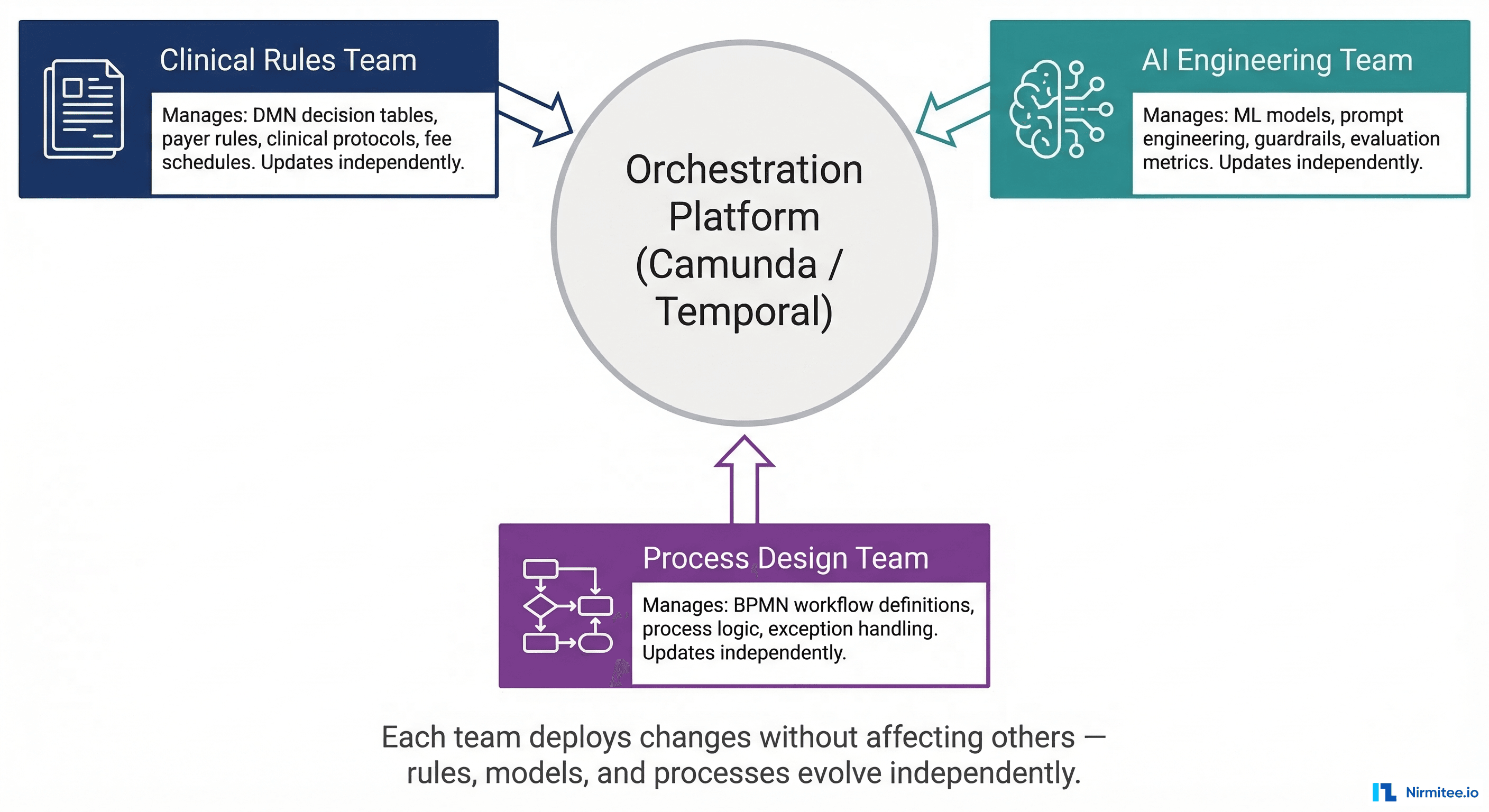

5. Separation of Concerns

This is the organizational argument, and it may be the most important one. In a BPMN + DMN + Agent architecture:

- The clinical rules team manages DMN decision tables. They update payer rules, clinical protocols, and fee schedules without touching code.

- The AI engineering team manages models, prompts, and guardrails. They improve extraction accuracy without changing the workflow.

- The process design team manages BPMN workflow definitions. They add new steps, change routing logic, or add exception handling without affecting rules or models.

Each team deploys changes independently. A payer rule update does not require retraining an AI model. A model improvement does not require changing the workflow definition. This is how scalable healthcare engineering organizations actually operate.

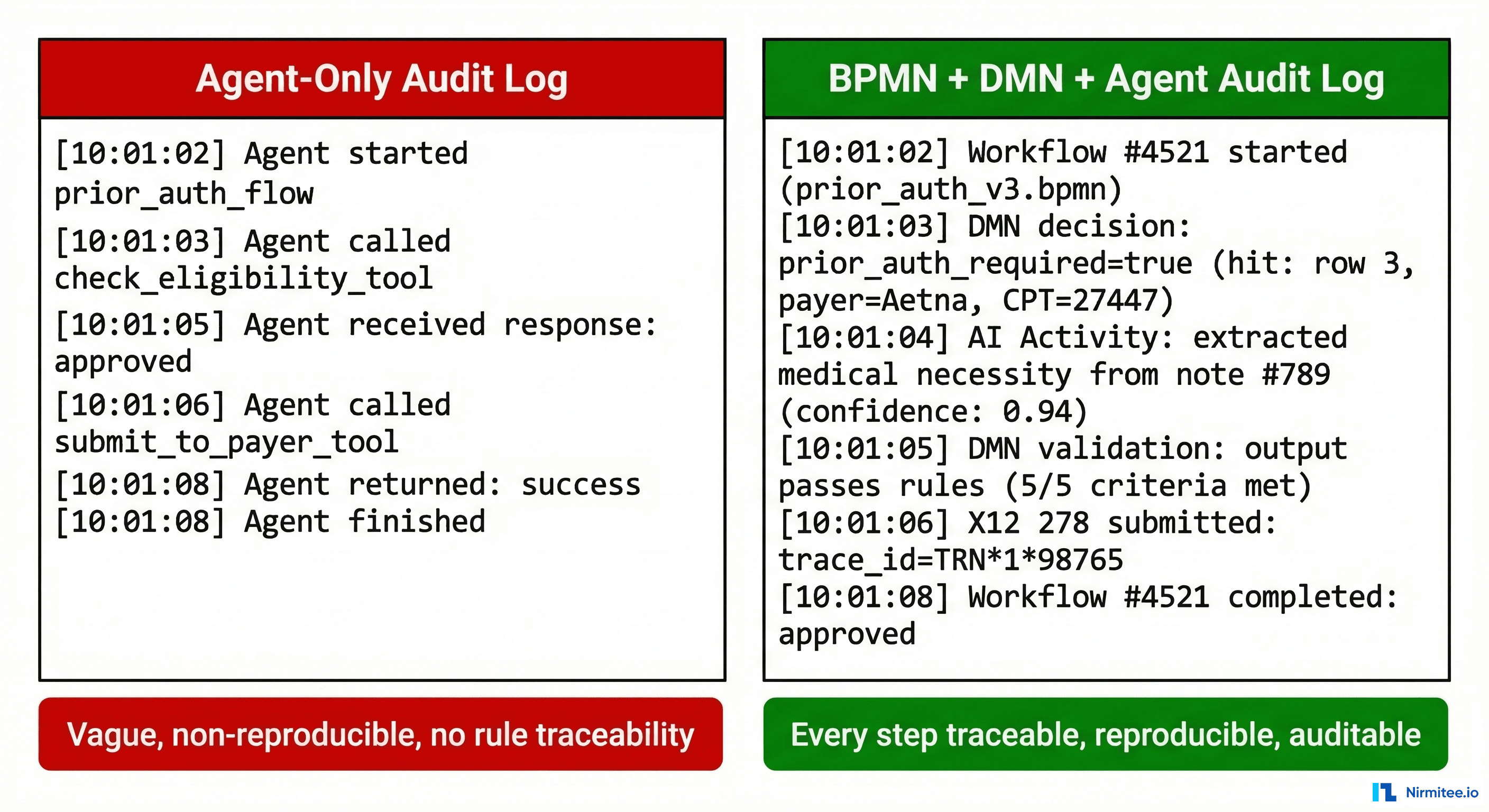

The Audit Trail Difference

Audit trails are not a feature. In healthcare, they are a regulatory requirement. Here is what the difference looks like in practice:

Agent-Only Audit

```text
[10:01:02] Agent started prior_auth_flow
[10:01:03] Agent called check_eligibility tool → result: required
[10:01:05] Agent called extract_necessity tool → result: medical necessity found
[10:01:06] Agent called submit_to_payer tool → result: submitted
[10:01:08] Agent finished → outcome: approved
```

BPMN + DMN + Agent Audit

```text
[10:01:02] Workflow instance #4521 started (process: prior-auth-v3.bpmn, version: 12)
[10:01:03] DMN evaluation: decision=prior-auth-required
           Inputs: {payer: Aetna, cpt: 27447, icd10: M17.11}
           Hit: rule 1, output: {decision: Required, review_level: Standard}
[10:01:04] AI activity: extract_medical_necessity
           Model: clinical-extraction-v3 (version: 2026.03.1)
           Input: note_id=clinical-note-789
           Output: {necessity: "Severe osteoarthritis limiting ADLs", confidence: 0.94}
           Token usage: 1,847 input / 312 output
[10:01:05] DMN evaluation: decision=validate-necessity-output
           Inputs: {confidence: 0.94, fields_complete: true, diagnosis_match: true}
           Hit: rule 1, output: {valid: true, requires_review: false}
[10:01:06] Service task: submit_x12_278
           Payer: Aetna, TRN: 1*98765*NIRMITEE
           Response: 278 accepted, reference: AUTH-2026-44521
[10:01:08] Workflow #4521 completed: approved (duration: 6.2s)
```

The second log tells a regulator exactly which rule was applied, which model version produced the output, what the confidence score was, and how the output was validated. Three years later, you can reproduce the entire decision. That is the difference between a system that passes an audit and one that does not.
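One way to produce entries like these is to emit each decision as a typed record rather than a free-text log line. The following is a minimal Go sketch, assuming a JSON-lines audit store. `DecisionAudit`, its field names, `EncodeAuditLine`, and `DecodeAuditLine` are all hypothetical and do not reflect the log format of Camunda or Temporal.

```go
package main

import (
	"encoding/json"
	"fmt"
	"time"
)

// DecisionAudit is a hypothetical record of one DMN evaluation.
type DecisionAudit struct {
	Timestamp      time.Time         `json:"timestamp"`
	WorkflowID     string            `json:"workflow_id"`
	ProcessVersion int               `json:"process_version"`
	DecisionID     string            `json:"decision_id"`
	Inputs         map[string]string `json:"inputs"`
	MatchedRule    int               `json:"matched_rule"`
	Output         string            `json:"output"`
}

// EncodeAuditLine serializes the record as a single JSON line.
// encoding/json writes map keys in sorted order, so the line is stable.
func EncodeAuditLine(a DecisionAudit) (string, error) {
	b, err := json.Marshal(a)
	if err != nil {
		return "", err
	}
	return string(b), nil
}

// DecodeAuditLine parses a line written by EncodeAuditLine.
func DecodeAuditLine(line string) (DecisionAudit, error) {
	var a DecisionAudit
	err := json.Unmarshal([]byte(line), &a)
	return a, err
}

func main() {
	entry := DecisionAudit{
		Timestamp:      time.Date(2026, time.March, 1, 10, 1, 3, 0, time.UTC),
		WorkflowID:     "4521",
		ProcessVersion: 12,
		DecisionID:     "prior-auth-required",
		Inputs:         map[string]string{"payer": "Aetna", "cpt": "27447", "icd10": "M17.11"},
		MatchedRule:    1,
		Output:         "Required",
	}
	line, err := EncodeAuditLine(entry)
	fmt.Println(line, err)
}
```

Typed records can be queried by decision ID, rule, or process version years later, which free-text log lines make much harder.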

Getting Started: A Practical Roadmap

If you are building healthcare automation and want to adopt this architecture, here is the sequencing that works:

- Map your processes in BPMN first. Before writing any code, diagram your prior auth, claims, or referral workflows in BPMN. Identify every decision point, exception path, and human review step. Tools: Camunda Modeler (free), bpmn.io (open source).

- Encode rules in DMN. For every decision gateway in your BPMN diagram, create a DMN decision table. Start with payer-specific prior auth rules — they have the clearest input/output structure. Have your revenue cycle team review and validate the tables.

- Identify AI insertion points. Look for tasks that require unstructured input processing: clinical note extraction, document summarization, coding suggestion. These are your AI service tasks. Everything else should be deterministic.

- Build AI activities with structured I/O. Your AI agents should accept typed inputs (patient ID, note ID, payer context) and return typed outputs (extracted fields, confidence scores, supporting evidence). No free-form agent "reasoning" — structured contracts only.

- Add DMN validation after every AI step. Every AI output passes through a DMN validation table before it can influence downstream steps. This is your safety net. If the AI's confidence is below threshold, if required fields are missing, if the output contradicts known rules — DMN catches it and routes to human review.

- Instrument observability from day one. Log every DMN evaluation, every AI activity input/output, every workflow state transition. This is not optional. It is how you pass audits, debug production issues, and prove compliance.
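The "structured contracts only" step can be enforced at the boundary where the agent's response enters the workflow. This is a minimal Go sketch that assumes the agent returns a JSON object. `ExtractionOutput`, its field names, and `ParseExtraction` are hypothetical. Rejecting unknown fields is one way to keep free-form "reasoning" text out of downstream steps.

```go
package main

import (
	"encoding/json"
	"errors"
	"fmt"
	"strings"
)

// ExtractionOutput is a hypothetical typed contract for the agent's response.
type ExtractionOutput struct {
	NoteID     string   `json:"note_id"`
	Necessity  string   `json:"necessity"`
	Confidence float64  `json:"confidence"`
	Evidence   []string `json:"evidence"`
}

// ParseExtraction decodes the raw agent response and rejects anything that
// does not fit the contract: unknown fields, missing required fields, or a
// confidence outside [0, 1].
func ParseExtraction(raw string) (ExtractionOutput, error) {
	var out ExtractionOutput
	dec := json.NewDecoder(strings.NewReader(raw))
	dec.DisallowUnknownFields()
	if err := dec.Decode(&out); err != nil {
		return ExtractionOutput{}, fmt.Errorf("malformed agent output: %w", err)
	}
	if out.NoteID == "" || out.Necessity == "" {
		return ExtractionOutput{}, errors.New("agent output missing required fields")
	}
	if out.Confidence < 0 || out.Confidence > 1 {
		return ExtractionOutput{}, errors.New("confidence outside [0, 1]")
	}
	return out, nil
}

func main() {
	raw := `{"note_id":"clinical-note-789","necessity":"Severe osteoarthritis limiting ADLs","confidence":0.94,"evidence":["progress note"]}`
	out, err := ParseExtraction(raw)
	fmt.Println(out.Confidence, err)

	_, err = ParseExtraction(`{"note_id":"n1","necessity":"x","confidence":0.9,"reasoning":"free text"}`)
	fmt.Println(err != nil) // the extra "reasoning" field is rejected
}
```

A parse failure here is a natural trigger for the human review path: the workflow never has to interpret a response that does not match the contract.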

Frequently Asked Questions

Can I use BPMN with any AI model or framework?

Yes. BPMN engines call AI models through service tasks or activity workers. The AI model is an external service — GPT-4, Claude, a fine-tuned open-source model, or a custom ML pipeline. The BPMN engine does not care what model you use. It sends input, receives output, and validates it via DMN. You can swap models without changing the workflow.

Is Camunda or Temporal better for healthcare?

Camunda 8 is better when you need visual BPMN modeling, non-technical stakeholder involvement, and native DMN support. Temporal is better when your engineering team prefers workflow-as-code, you need extreme scalability, and you want full control over the execution environment. Both provide durable execution and deterministic audit trails. Many organizations use Camunda for business-facing processes and Temporal for high-throughput technical workflows.

Does this architecture add latency?

DMN evaluation typically adds 1-5ms per decision. BPMN orchestration adds 10-50ms per step for state persistence. The AI service task itself — the LLM call — dominates latency at 500ms-5s. The deterministic wrapper adds negligible overhead compared to the AI processing time. Camunda reports that healthcare organizations have reduced prior authorization timelines from 7 days to under 24 hours using this pattern.

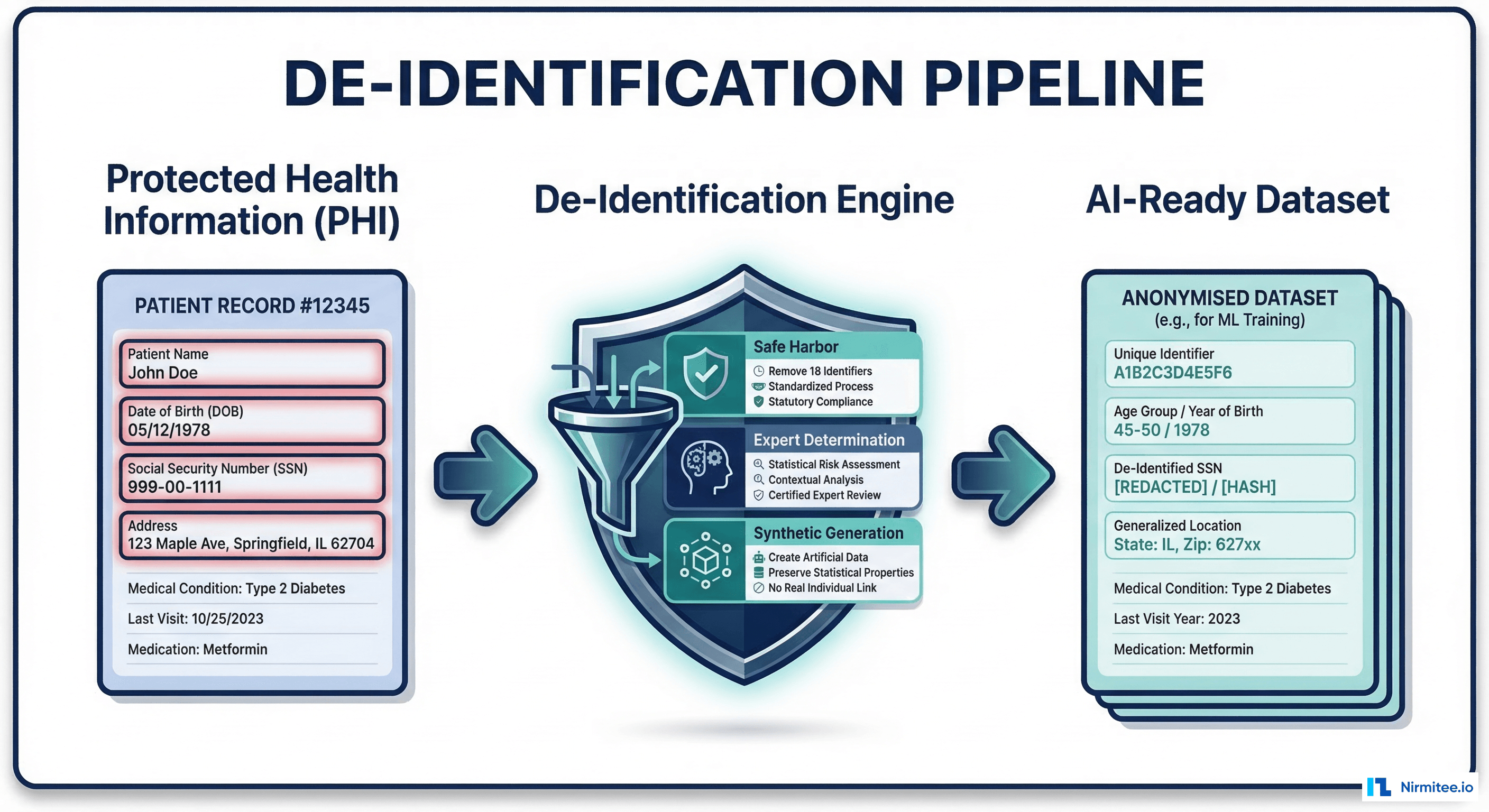

How does this handle HIPAA compliance?

BPMN engines can be deployed in HIPAA-compliant environments (both Camunda and Temporal offer self-hosted options). PHI flows through the workflow as process variables with encryption at rest and in transit. DMN decision tables do not contain PHI — they contain rules. AI activities process PHI within your security boundary. The audit trail itself becomes part of your HIPAA compliance documentation.

The Bottom Line

Agentic AI is transformative for healthcare — at the right insertion points. But the moment you let a probabilistic system make a deterministic decision, you have built a compliance risk, not an automation.

The architecture is clear: BPMN for orchestration, DMN for decisions, AI for judgment. Each layer does what it does best. The workflow engine ensures durability and auditability. The decision engine ensures consistency and compliance. The AI agent handles the unstructured reasoning that no rule table can encode.

At Nirmitee, we build healthcare platforms with this exact architecture — deterministic workflow engines with AI agents at the judgment points, not the decision points. Because in healthcare, "the AI said probably" is never an acceptable answer.
