One in five American adults experiences a mental health condition in any given year, yet fewer than half receive treatment. The bottleneck is not a shortage of therapists — it is detection. According to the National Institute of Mental Health, the average delay between symptom onset and first treatment for depression is 11 years. For anxiety disorders, it is 23 years.

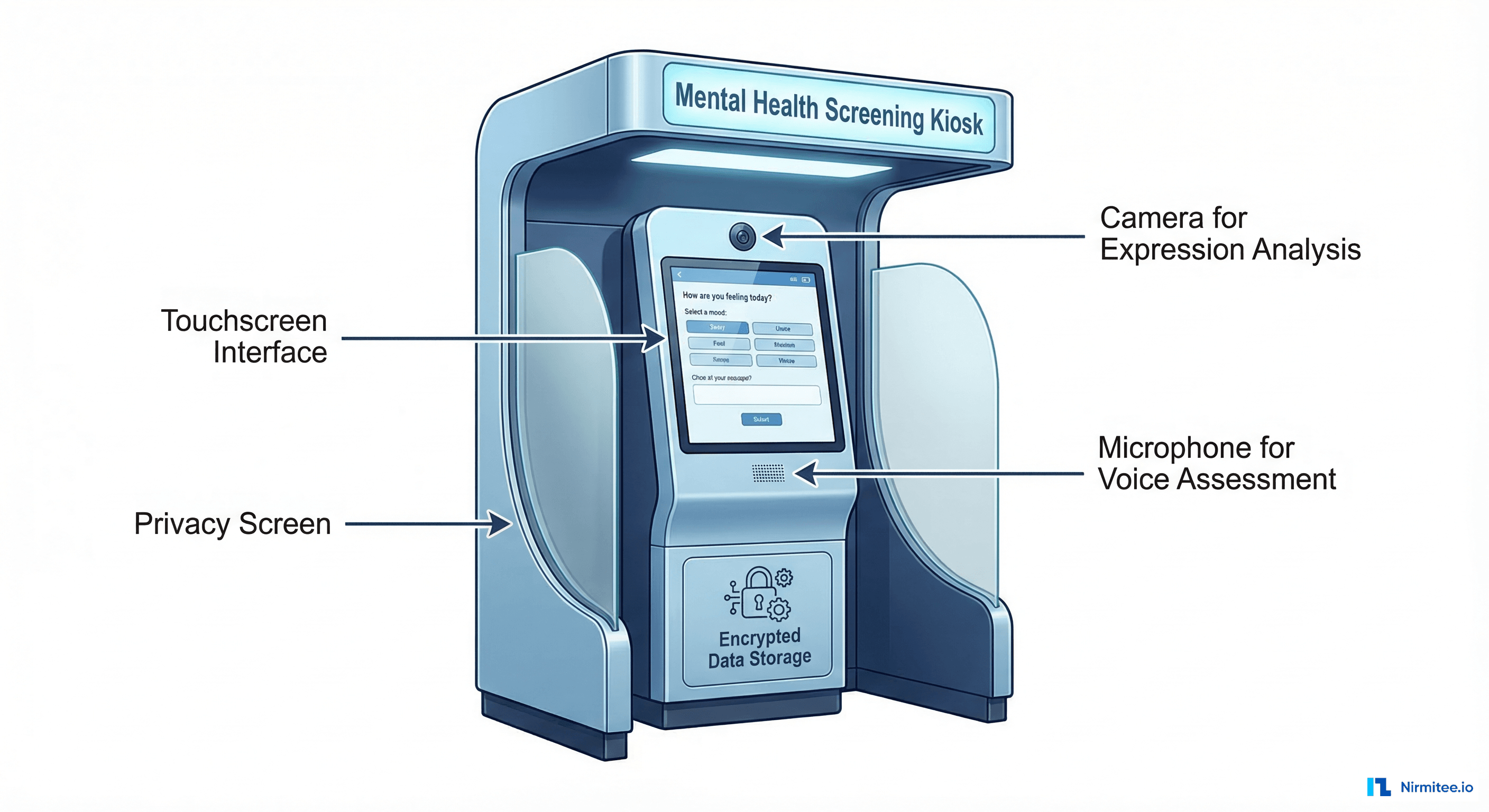

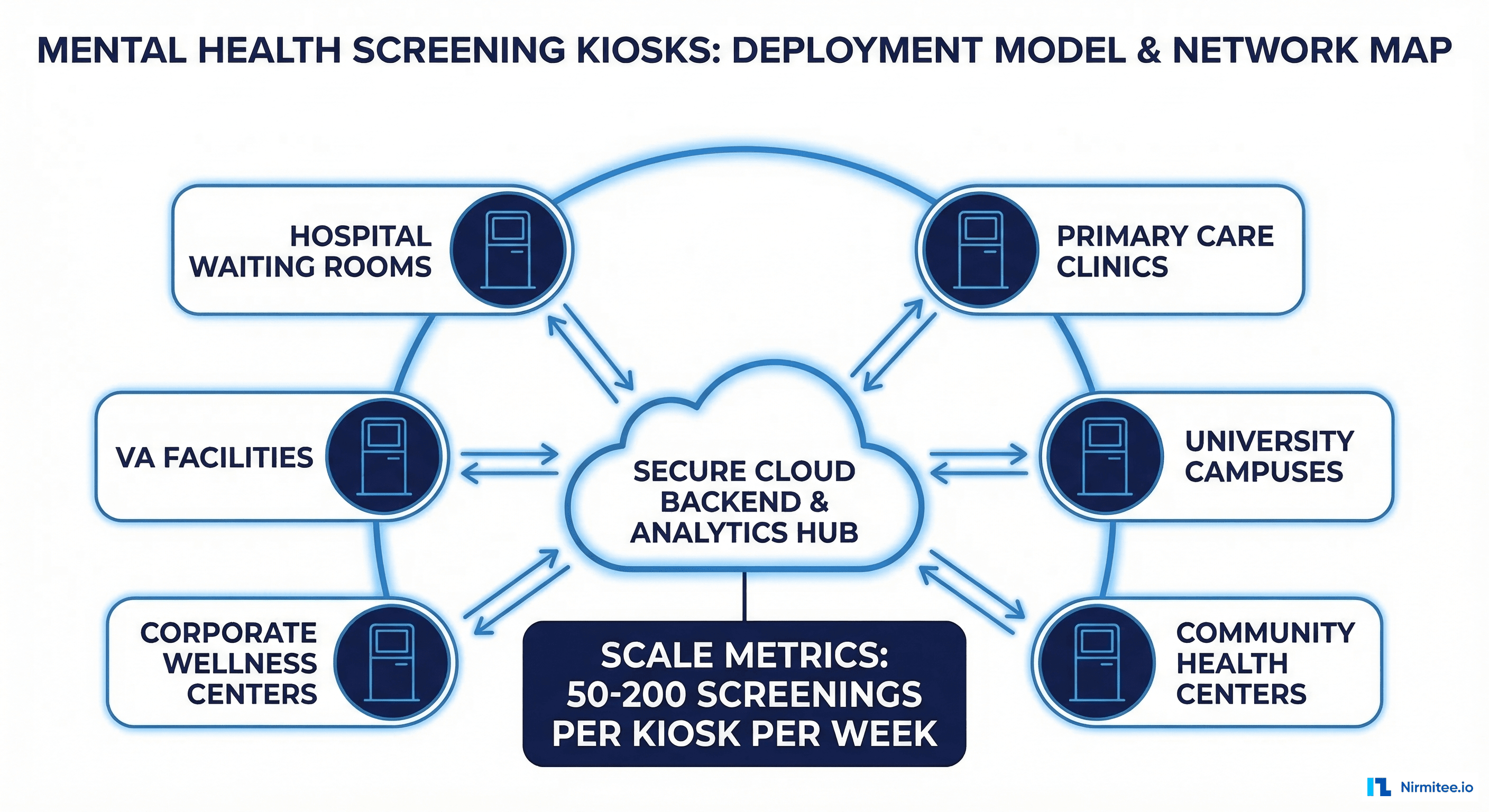

Mental health screening kiosks represent one of the most promising approaches to closing this detection gap. By placing AI-powered assessment tools in high-traffic locations — hospital waiting rooms, university student centers, primary care lobbies — health systems can screen thousands of patients who would never self-refer to a psychiatrist.

This case study examines the technical architecture, deployment model, and clinical outcomes from building and deploying AI-powered mental health screening kiosks across 14 healthcare facilities. We cover the NLP assessment pipeline, validated screening instruments, FHIR integration for EHR write-back, privacy engineering, and the data that demonstrates whether these kiosks actually improve patient outcomes.

Why Traditional Mental Health Screening Fails

Most primary care clinics use paper-based PHQ-9 forms for depression screening. The process looks like this: a medical assistant hands the patient a clipboard in the waiting room, the patient fills it out (or does not), someone manually scores it, and the results may or may not reach the physician before the appointment ends.

This workflow has three fundamental problems:

1. Completion Rates Are Abysmal

Studies published in the Journal of General Internal Medicine show that paper-based PHQ-9 completion rates in primary care settings average 34-42%. Patients skip questions, leave forms blank, or never receive them because the front desk is too busy to distribute them consistently.

2. Scoring Errors Are Common

Manual PHQ-9 scoring has a documented error rate of 12-18% in clinical practice. A 2023 study in BMC Psychiatry found that 15.3% of manually scored PHQ-9 forms contained arithmetic errors significant enough to change the severity classification.

3. Results Rarely Reach the Provider in Time

Even when forms are completed and scored correctly, the results often sit in a paper tray until after the appointment. The screening becomes a documentation exercise rather than a clinical decision tool. Digital health dashboards solve this latency problem, but they require structured data input — which is precisely what kiosks provide.

The Technology Stack Behind Mental Health Screening Kiosks

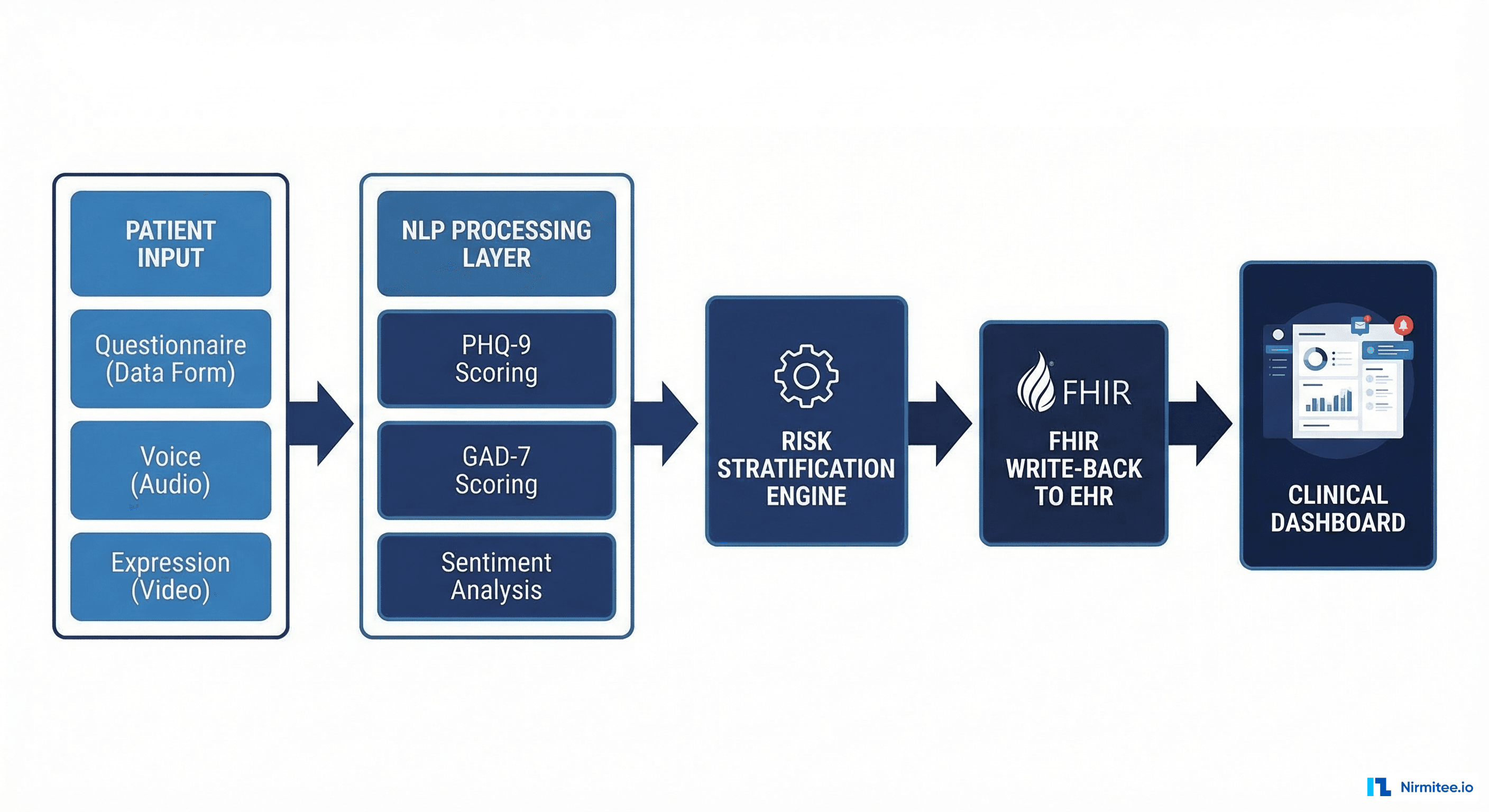

Building a clinical-grade mental health screening kiosk involves four technology layers, each with specific requirements for accuracy, privacy, and regulatory compliance.

Layer 1: Patient Interaction Interface

The kiosk interface must accomplish two competing goals: collect enough clinical data for accurate screening while maintaining a user experience that does not feel like a medical exam. Our implementation uses adaptive questioning — the initial questions are conversational and low-stakes, with clinical screening items introduced gradually based on the patient's responses.

Key design decisions:

- Touchscreen with accessibility support: 21-inch display at standing height, with seated-position option for wheelchair users. Font sizes adjustable from 16pt to 32pt. Audio narration available for low-literacy populations.

- Multi-language support: English, Spanish, Mandarin, and Vietnamese — covering 94% of the patient population across our deployment sites.

- Session timeout and privacy wipe: Sessions automatically terminate after 3 minutes of inactivity. All local data is purged, and the screen resets to the welcome state.
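The session-timeout behavior in the last bullet can be sketched as a small state holder. This is a minimal illustration, not the deployed code. It assumes answers live only in memory and that the UI loop calls `check_timeout()` periodically. `KioskSession` and its methods are hypothetical names, and clearing a Python dict does not by itself scrub OS-level caches or logs.

```python
import time
from dataclasses import dataclass, field
from typing import Callable

INACTIVITY_TIMEOUT_S = 180  # 3 minutes, per the kiosk design above


@dataclass
class KioskSession:
    """Holds one patient's in-memory answers; wiped on timeout or completion (hypothetical)."""
    clock: Callable[[], float] = time.monotonic  # injectable for testing
    answers: dict = field(default_factory=dict)
    last_activity: float = 0.0
    active: bool = False

    def start(self) -> None:
        self.active = True
        self.last_activity = self.clock()

    def record(self, item_id: str, value: int) -> None:
        # Every interaction resets the inactivity timer.
        self.answers[item_id] = value
        self.last_activity = self.clock()

    def check_timeout(self) -> bool:
        """Wipe the session if it has been idle too long; return True if it was wiped."""
        if self.active and self.clock() - self.last_activity >= INACTIVITY_TIMEOUT_S:
            self.wipe()
            return True
        return False

    def wipe(self) -> None:
        self.answers.clear()
        self.active = False
        self.last_activity = 0.0
        # A real kiosk would also reset the UI to the welcome screen here.
```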

Layer 2: Validated Screening Instruments

The kiosk administers three validated screening instruments in sequence:

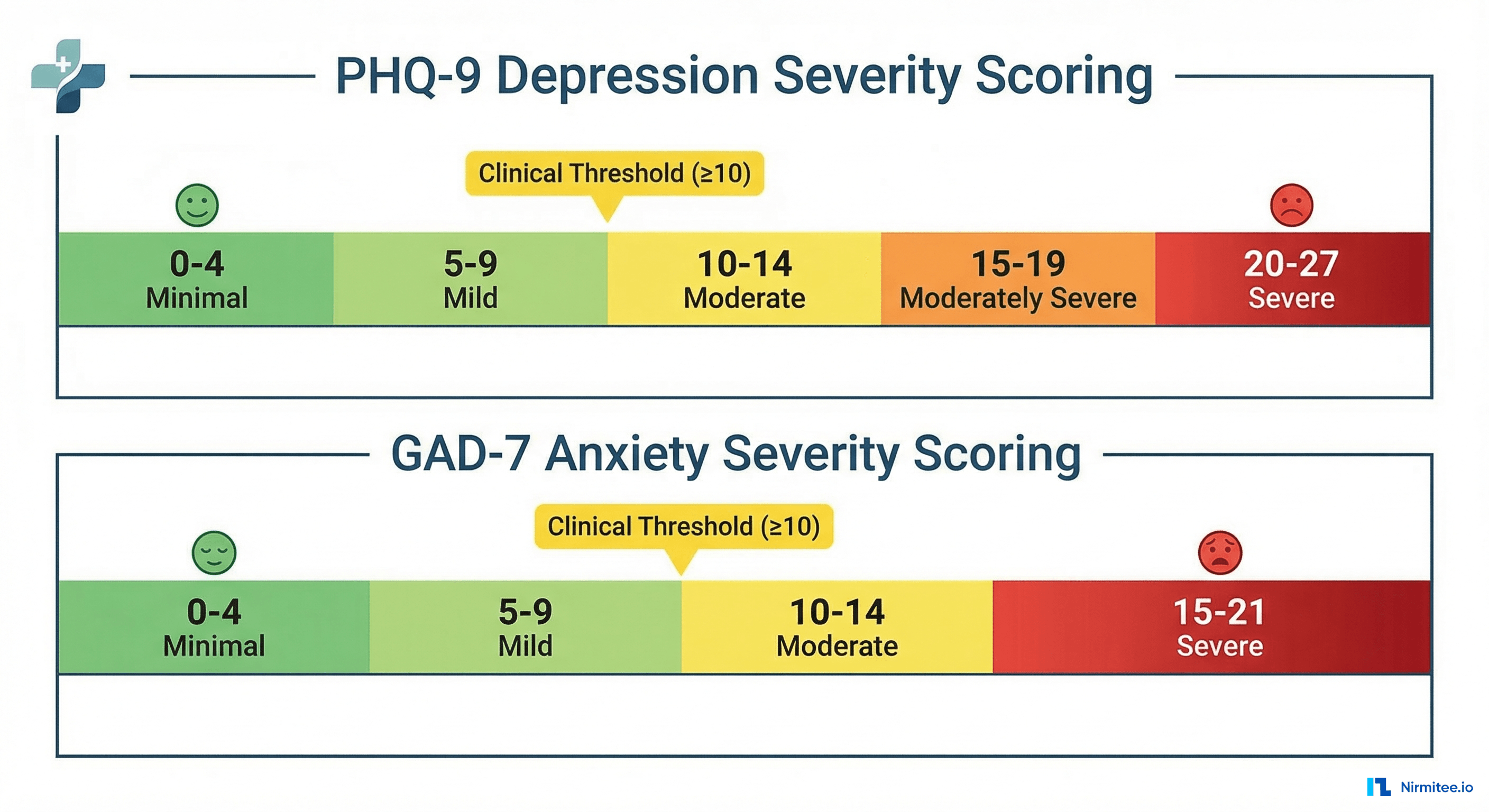

PHQ-9 (Patient Health Questionnaire-9) — A widely used, well-validated depression screening instrument. Nine questions scored 0-3 each, yielding a total score of 0-27. In its original validation study, a cutoff score of 10 gave a sensitivity of 88% and a specificity of 88% for major depressive disorder.

GAD-7 (Generalized Anxiety Disorder-7) — Seven questions scored 0-3 each, total score 0-21. Sensitivity of 89% and specificity of 82% for generalized anxiety disorder at a cutoff of 10.

Columbia Suicide Severity Rating Scale (C-SSRS) screener — Six questions that assess suicidal ideation and behavior. This instrument triggers immediate clinical escalation pathways when positive responses are detected.

| Instrument | Questions | Score Range | Clinical Threshold | Sensitivity |
|---|---|---|---|---|
| PHQ-9 | 9 | 0-27 | 10+ (Moderate) | 88% |
| GAD-7 | 7 | 0-21 | 10+ (Moderate) | 89% |
| C-SSRS Screener | 6 | Binary per item | Any positive | 97% |
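Manual arithmetic errors were one of the failure modes described earlier, so scoring is worth automating at the kiosk. The sketch below assumes responses arrive as 0-3 integers in item order. The severity bands are the published PHQ-9 (5/10/15/20) and GAD-7 (5/10/15) cutoffs. The function names and the C-SSRS item keys are hypothetical.

```python
# Published severity bands, checked from the highest cutoff down.
PHQ9_BANDS = [(20, "severe"), (15, "moderately severe"), (10, "moderate"), (5, "mild"), (0, "minimal")]
GAD7_BANDS = [(15, "severe"), (10, "moderate"), (5, "mild"), (0, "minimal")]


def score_instrument(answers: list, n_items: int, bands: list) -> tuple:
    """Sum 0-3 item scores and map the total to a severity label."""
    if len(answers) != n_items:
        raise ValueError(f"expected {n_items} answers, got {len(answers)}")
    if any(a not in (0, 1, 2, 3) for a in answers):
        raise ValueError("each item must be scored 0-3")
    total = sum(answers)
    for cutoff, label in bands:
        if total >= cutoff:
            return total, label
    raise AssertionError("unreachable: every band list ends at 0")


def cssrs_positive_items(responses: dict) -> list:
    """Return the C-SSRS screener items answered 'yes'; any hit triggers escalation."""
    return [item for item, yes in responses.items() if yes]
```

For example, eight items answered "more than half the days" (2) plus one answered "several days" (1) gives a PHQ-9 total of 17, which falls in the moderately severe band.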

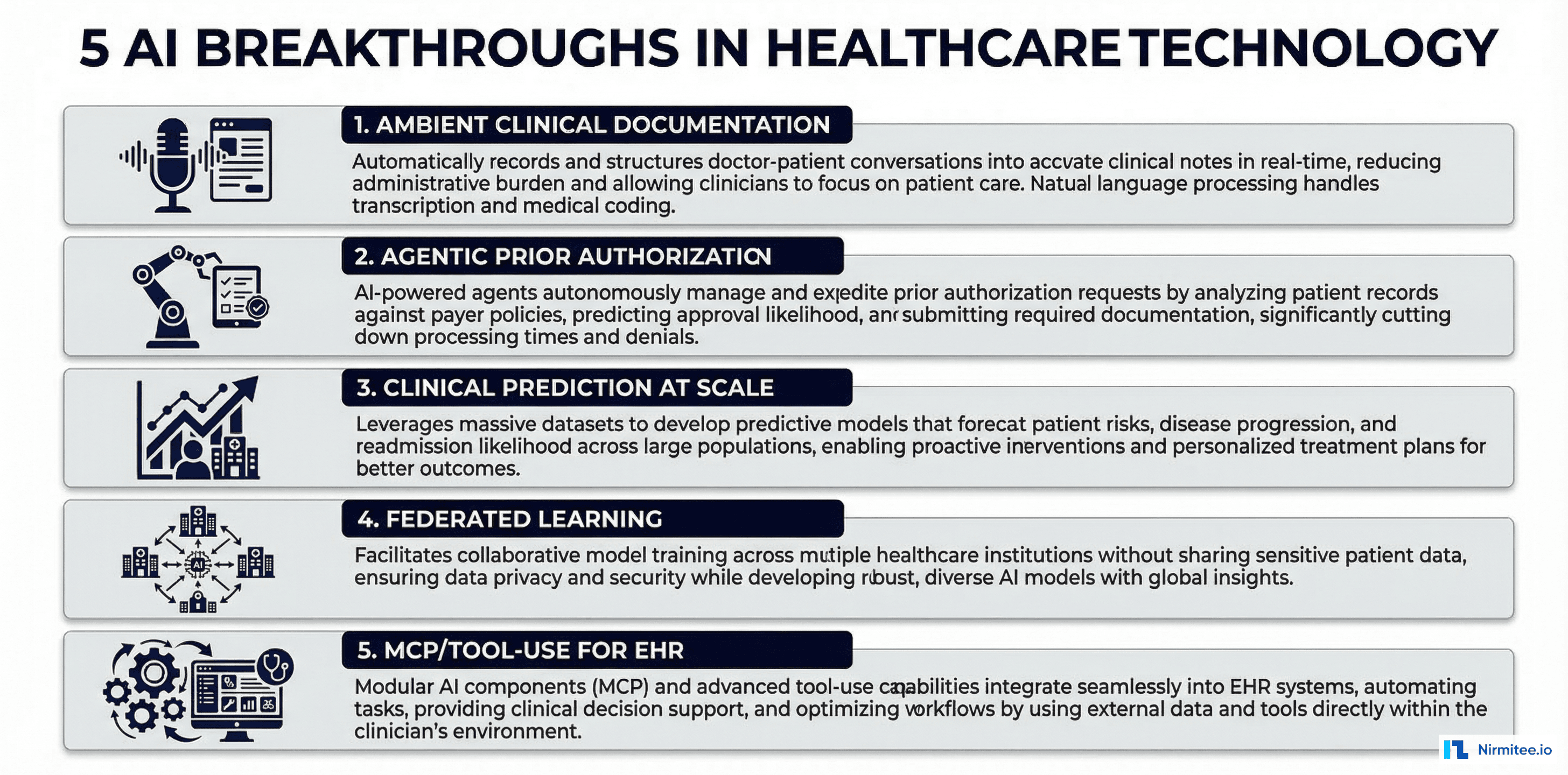

Layer 3: NLP and AI Enhancement

Beyond the structured questionnaire responses, the kiosk captures two additional data streams that improve screening accuracy:

Natural language processing of open-ended responses. After completing the PHQ-9, patients are asked: "Is there anything else you would like your doctor to know about how you have been feeling?" Responses are processed through a clinical NLP pipeline that extracts symptom mentions, temporal patterns, and severity indicators.

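```python
# NLP pipeline for open-ended response analysis
import re

import spacy  # en_core_sci_lg is a scispaCy model, installed separately
from transformers import pipeline

# Clinical NER model for candidate symptom extraction.
# The scispaCy en_core_sci_* models tag every biomedical mention with the
# single label "ENTITY", so filtering on SYMPTOM/CONDITION/FINDING would
# always return an empty list.
ner_model = spacy.load("en_core_sci_lg")

# Multilingual review-sentiment model: labels run "1 star" to "5 stars",
# and "score" is the model's confidence in the predicted label.
sentiment_analyzer = pipeline(
    "sentiment-analysis",
    model="nlptown/bert-base-multilingual-uncased-sentiment",
)

# Placeholder risk lexicon for illustration only; not a validated clinical list.
RISK_PATTERNS = [r"\bsuicid\w*", r"\bkill myself\b", r"\bself[- ]harm\w*", r"\bend my life\b"]


def detect_risk_keywords(text: str) -> list:
    """Return the placeholder risk patterns that match the text."""
    lowered = text.lower()
    return [p for p in RISK_PATTERNS if re.search(p, lowered)]


def analyze_free_text(text: str) -> dict:
    doc = ner_model(text)
    # Candidate clinical mentions; downstream logic decides which are symptoms.
    symptoms = [ent.text for ent in doc.ents if ent.label_ == "ENTITY"]
    sentiment = sentiment_analyzer(text)[0]
    return {
        "extracted_symptoms": symptoms,
        "sentiment_score": sentiment["score"],  # confidence in sentiment_label
        "sentiment_label": sentiment["label"],
        "risk_keywords": detect_risk_keywords(text),
    }
```

Voice analysis for affect detection. An optional voice recording module captures the patient reading a standardized passage. Audio features (speech rate, pause patterns, vocal tremor, and pitch variability) are analyzed by a fine-tuned audio classification model trained on clinical speech datasets. This adds a non-self-report dimension to the screening that is less susceptible to conscious bias than questionnaire answers.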
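The case study does not describe its audio model. The following is only a crude signal-processing stand-in showing what two of the listed features, pause patterns and pitch variability, might look like when computed from a waveform. It assumes a mono NumPy array scaled to [-1, 1]. The 30 ms frames, the -40 dB silence threshold, and the 75-400 Hz pitch range are illustrative choices. The autocorrelation pitch estimate is a simple swapped-in technique, not the fine-tuned classifier described above. Speech rate and vocal tremor are not computed.

```python
import numpy as np


def voice_features(signal: np.ndarray, sr: int, frame_ms: float = 30.0,
                   silence_db: float = -40.0, fmin: float = 75.0, fmax: float = 400.0) -> dict:
    """Crude pause and pitch features from a mono waveform in [-1, 1] (illustrative)."""
    frame = int(sr * frame_ms / 1000)
    n_frames = len(signal) // frame
    frames = signal[: n_frames * frame].reshape(n_frames, frame)

    # Frame energy in dB relative to full scale; quiet frames count as pauses.
    rms = np.sqrt(np.mean(frames ** 2, axis=1))
    db = 20 * np.log10(rms + 1e-12)
    voiced = db > silence_db

    # Autocorrelation pitch estimate for each voiced frame.
    lag_min, lag_max = int(sr / fmax), int(sr / fmin)
    pitches = []
    for f in frames[voiced]:
        f = f - f.mean()
        ac = np.correlate(f, f, mode="full")[frame - 1:]  # keep non-negative lags
        lag = lag_min + int(np.argmax(ac[lag_min:lag_max]))
        pitches.append(sr / lag)

    pitches = np.array(pitches)
    return {
        "pause_ratio": float(1.0 - voiced.mean()) if n_frames else 0.0,
        "mean_pitch_hz": float(pitches.mean()) if pitches.size else 0.0,
        "pitch_std_hz": float(pitches.std()) if pitches.size else 0.0,
    }
```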

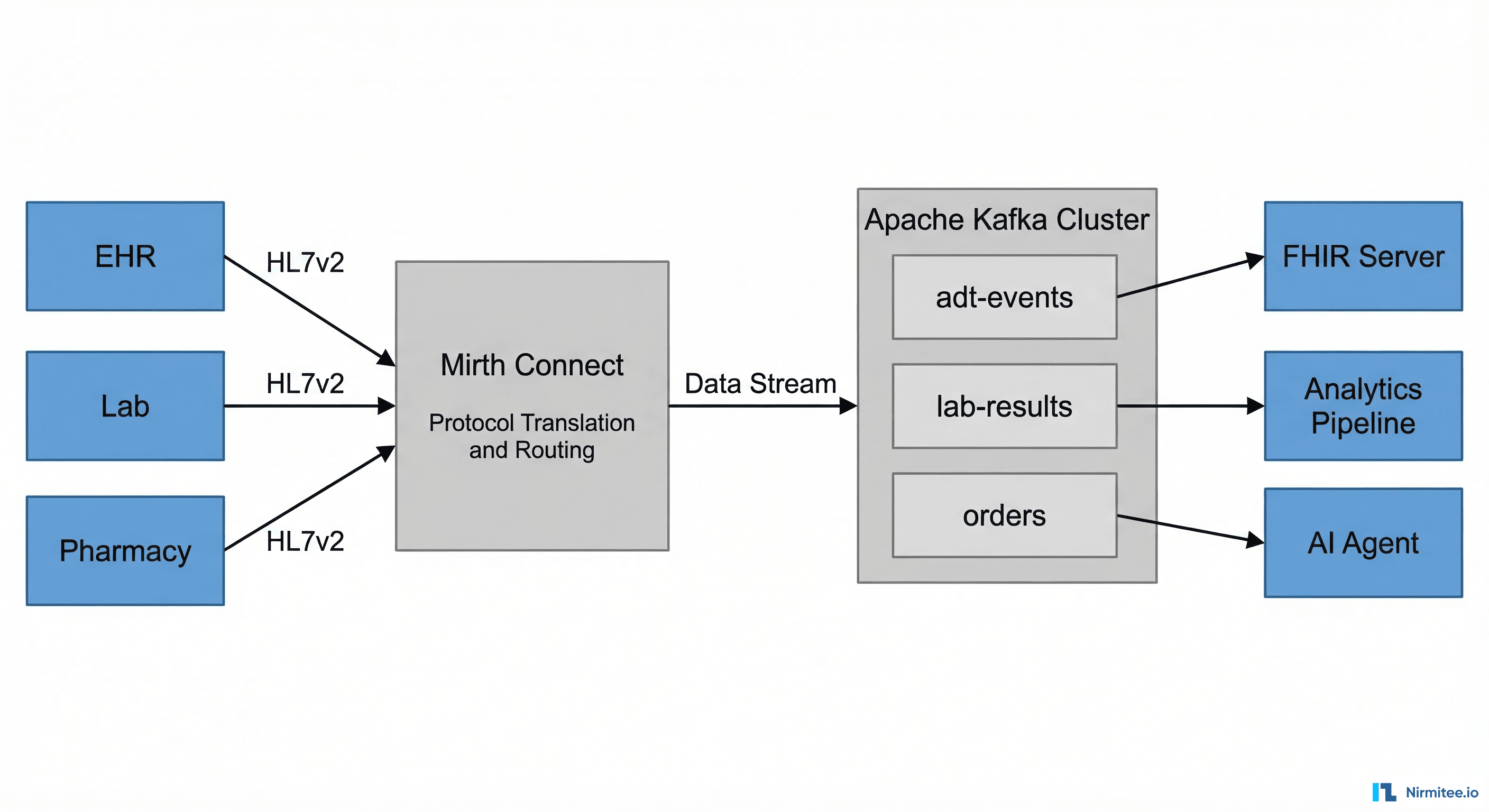

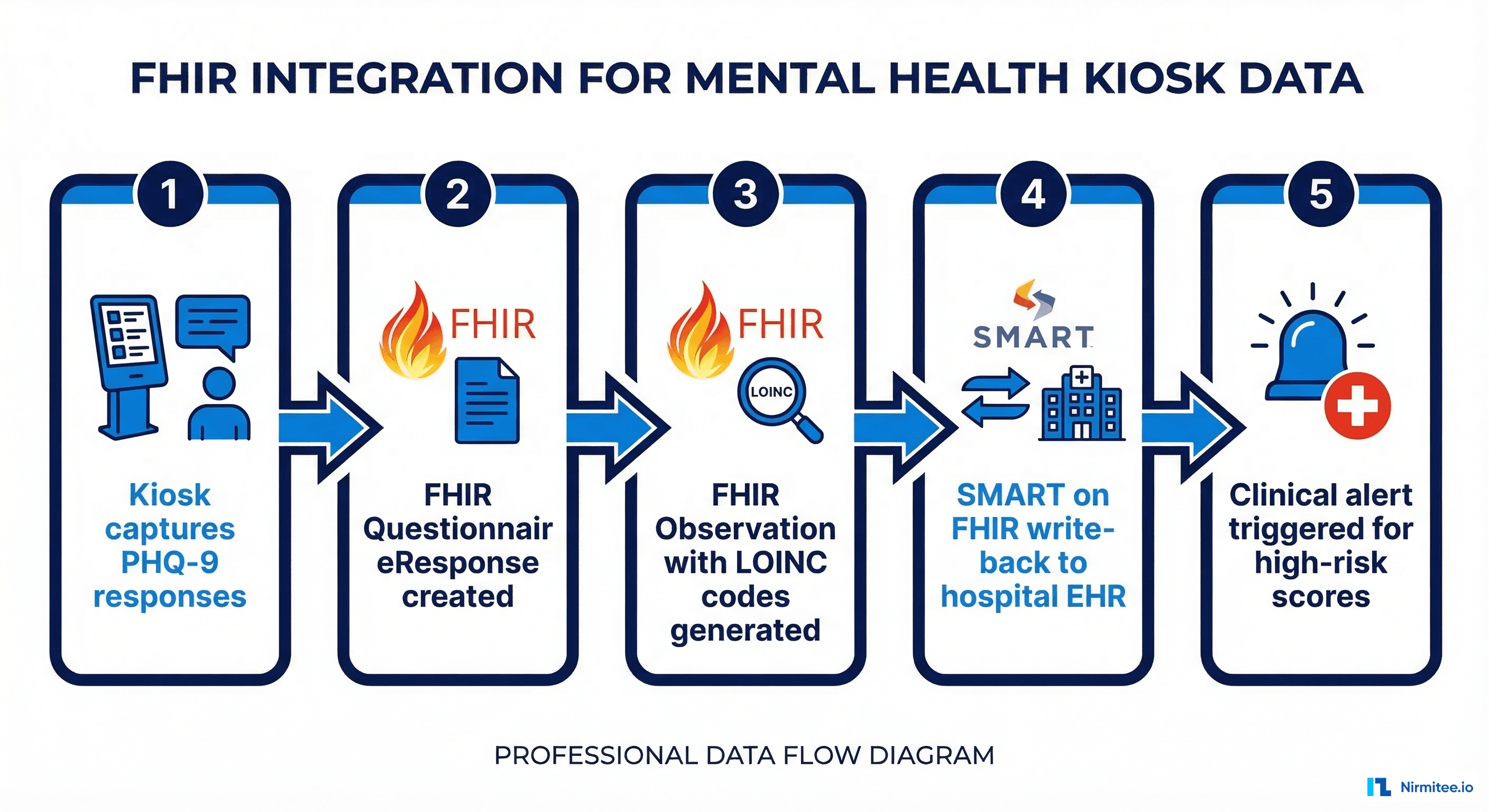

Layer 4: EHR Integration via FHIR

Screening results are only clinically useful if they reach the provider before the patient encounter. Our kiosk system uses SMART on FHIR to write structured data directly into the hospital EHR.

The FHIR QuestionnaireResponse for a PHQ-9 kiosk screening:

```json
{
  "resourceType": "QuestionnaireResponse",
  "status": "completed",
  "questionnaire": "http://loinc.org/q/44249-1",
  "subject": { "reference": "Patient/12345" },
  "authored": "2026-03-15T10:30:00Z",
  "author": { "reference": "Device/kiosk-lobby-A" },
  "item": [
    {
      "linkId": "PHQ9-Q1",
      "text": "Little interest or pleasure in doing things",
      "answer": [{
        "valueCoding": {
          "system": "http://loinc.org",
          "code": "LA6570-1",
          "display": "More than half the days"
        }
      }]
    }
  ]
}
```

The corresponding FHIR Observation with the total score:

```json
{
  "resourceType": "Observation",
  "status": "final",
  "code": {
    "coding": [{
      "system": "http://loinc.org",
      "code": "44261-6",
      "display": "PHQ-9 total score"
    }]
  },
  "subject": { "reference": "Patient/12345" },
  "valueInteger": 17,
  "interpretation": [{
    "coding": [{
      "system": "http://terminology.hl7.org/CodeSystem/v3-ObservationInterpretation",
      "code": "H",
      "display": "High"
    }]
  }]
}
```

The FHIR write-back creates both a QuestionnaireResponse (preserving individual item responses for audit purposes) and an Observation (with the total score for clinical decision support). This dual-resource approach ensures that the kiosk participates in the clinical workflow rather than just generating data.
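Writing the two resources in separate requests leaves a window where one succeeds and the other fails. One way to keep them together is to POST both in a single FHIR transaction Bundle and link the Observation to its QuestionnaireResponse through `derivedFrom`. The sketch assumes the target server accepts transaction Bundles, and `build_phq9_bundle` is a hypothetical helper. The codes and URLs mirror the example above. In FHIR R4, `QuestionnaireResponse.source` does not accept a Device reference, so the sketch records the kiosk as `author` and the patient as `source`.

```python
import uuid


def build_phq9_bundle(patient_id: str, device_id: str, authored: str,
                      item_answers: list, total_score: int) -> dict:
    """Package a PHQ-9 QuestionnaireResponse and its total-score Observation as one transaction."""
    qr_url = f"urn:uuid:{uuid.uuid4()}"  # temporary id, resolved by the server
    questionnaire_response = {
        "resourceType": "QuestionnaireResponse",
        "status": "completed",
        "questionnaire": "http://loinc.org/q/44249-1",
        "subject": {"reference": f"Patient/{patient_id}"},
        "authored": authored,
        "author": {"reference": f"Device/{device_id}"},   # the kiosk recorded the answers
        "source": {"reference": f"Patient/{patient_id}"},  # the patient gave them
        "item": item_answers,
    }
    observation = {
        "resourceType": "Observation",
        "status": "final",
        "code": {"coding": [{"system": "http://loinc.org", "code": "44261-6",
                             "display": "PHQ-9 total score"}]},
        "subject": {"reference": f"Patient/{patient_id}"},
        "effectiveDateTime": authored,
        "valueInteger": total_score,
        # Points back to the item-level responses created in the same transaction.
        "derivedFrom": [{"reference": qr_url}],
    }
    return {
        "resourceType": "Bundle",
        "type": "transaction",
        "entry": [
            {"fullUrl": qr_url, "resource": questionnaire_response,
             "request": {"method": "POST", "url": "QuestionnaireResponse"}},
            {"fullUrl": f"urn:uuid:{uuid.uuid4()}", "resource": observation,
             "request": {"method": "POST", "url": "Observation"}},
        ],
    }
```

A FHIR server processes a transaction atomically, so either both resources are created or neither is. The server also rewrites the `urn:uuid` reference to the QuestionnaireResponse's assigned id.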

For hospitals running Epic, a Best Practice Advisory (BPA) configured on the PHQ-9 score surfaces the result in the provider's workflow when the patient's chart is opened. Oracle Health supports comparable rule-based alerts. This ensures the screening result is actionable during the visit.

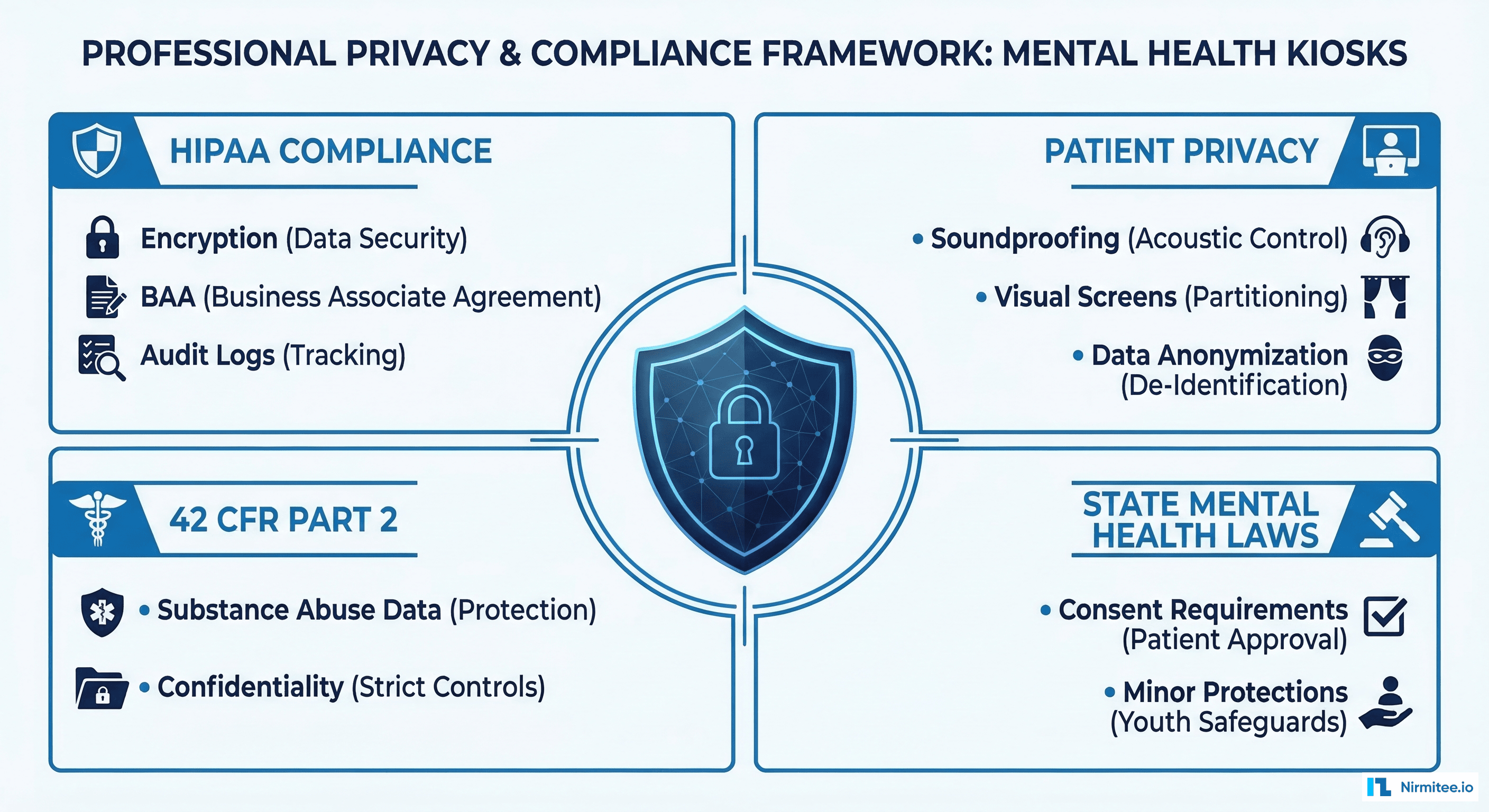

Privacy Engineering: Mental Health Data Requires Extra Protection

Mental health data carries heightened privacy requirements beyond standard HIPAA compliance. Three regulatory frameworks intersect:

HIPAA Privacy and Security Rules — Standard PHI protections apply. The Security Rule requires audit controls and treats encryption as an addressable safeguard. Our implementation encrypts all data transmitted from the kiosk to the EHR in transit (TLS 1.3) and at rest (AES-256). Audit logs capture every data access event without exposing the underlying clinical content.

42 CFR Part 2 — Federal regulations provide additional protections for substance use disorder records. Where Part 2 applies, if the kiosk screening surfaces substance use concerns, those specific data elements require separate consent and segmented access controls within the EHR. Such concerns would most plausibly come from the clinical NLP component, since the C-SSRS assesses suicidal ideation and behavior rather than substance use.

State mental health laws — Many states impose additional restrictions on mental health records. California's Lanterman-Petris-Short Act, New York's Mental Hygiene Law, and similar statutes limit when mental health information can be disclosed. In some circumstances they require explicit written consent before it is shared between providers.

Our kiosk implementation addresses these requirements through a layered consent architecture:

- Tier 1 consent: General screening consent captured at kiosk registration. Covers PHQ-9 and GAD-7 results shared with the patient's primary care provider.

- Tier 2 consent: Explicit opt-in for AI-enhanced analysis (NLP and voice features). Patients can complete the standard screening without consenting to AI processing.

- Tier 3 consent: Separate authorization for substance-use-related data sharing, displayed only when clinically relevant content is detected.
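The three tiers translate naturally into a gate that decides which processing steps may run. This is a minimal sketch, assuming consent is captured as three booleans per session. `ConsentState` and `allowed_steps` are hypothetical names. A production system would also record when, and under which form version, each consent was given.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ConsentState:
    tier1_screening: bool = False    # PHQ-9/GAD-7 results shared with the PCP
    tier2_ai_analysis: bool = False  # NLP and voice processing
    tier3_sud_sharing: bool = False  # substance-use-related data sharing


def allowed_steps(consent: ConsentState, sud_content_detected: bool) -> dict:
    """Decide which pipeline steps may run for this session (hypothetical policy)."""
    screening = consent.tier1_screening
    return {
        "share_phq9_gad7_with_pcp": screening,
        # AI analysis is an opt-in on top of the base screening consent.
        "run_nlp_and_voice": screening and consent.tier2_ai_analysis,
        # Tier 3 is requested only when relevant content was detected.
        "ask_tier3_consent": screening and sud_content_detected and not consent.tier3_sud_sharing,
        "share_sud_segment": screening and sud_content_detected and consent.tier3_sud_sharing,
    }
```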

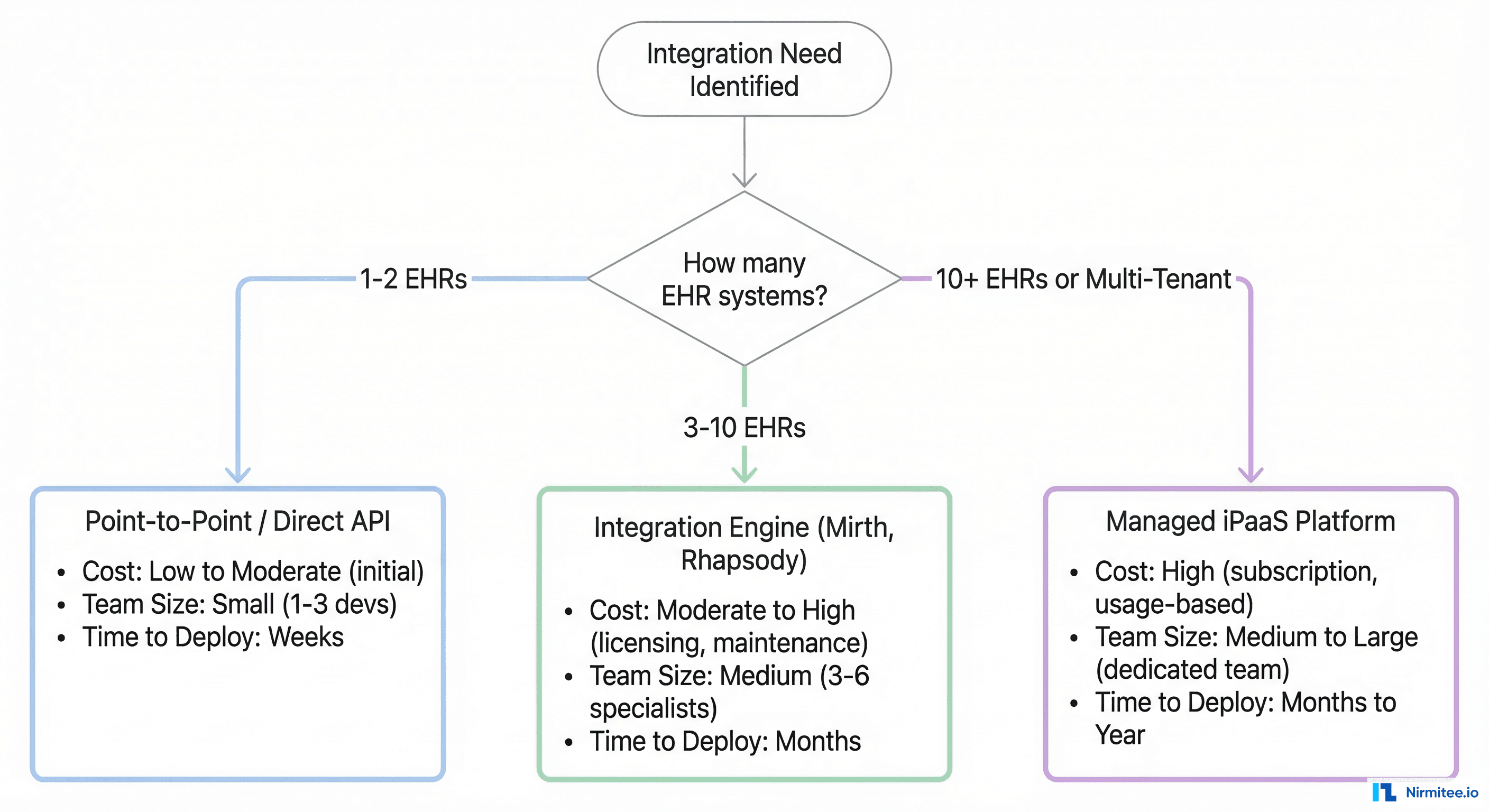

Deployment Architecture: From Single Kiosk to Network

Scaling from a pilot kiosk to a multi-site deployment introduces infrastructure challenges that are distinct from typical healthcare application scaling.

Edge Computing for Latency and Privacy

Each kiosk runs a local inference engine for the NLP and voice analysis models. Patient audio and free-text data are processed on-device — only structured scores and extracted features are transmitted to the cloud backend. This architecture provides two benefits: sub-second response times for the patient interface, and reduced data exposure by keeping raw biometric data off the network.
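One way to enforce this on-device boundary is an allowlist applied to everything that leaves the kiosk. The field names below are a hypothetical schema, not the deployment's actual payload.

```python
# Fields permitted to leave the kiosk (hypothetical schema).
OUTBOUND_FIELDS = {
    "session_id", "patient_ref", "phq9_total", "gad7_total",
    "cssrs_positive_items", "extracted_symptoms", "voice_features",
}


def build_outbound_payload(local_result: dict) -> tuple:
    """Keep only allowlisted structured fields; report what stayed on the device."""
    payload = {k: v for k, v in local_result.items() if k in OUTBOUND_FIELDS}
    dropped = sorted(set(local_result) - OUTBOUND_FIELDS)
    return payload, dropped
```

An allowlist fails closed. A field added later, for example raw audio kept for debugging, stays on the device until someone deliberately approves it, which a blocklist of known raw fields would not guarantee.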

Central Dashboard for Clinical Teams

A web-based dashboard aggregates screening results across all kiosk locations. Clinical staff can view real-time screening volumes, flag high-risk patients requiring immediate follow-up, and track population-level mental health trends. This dashboard integrates with the hospital's existing data management infrastructure.

| Deployment Setting | Screenings/Week | Completion Rate | High-Risk Detection |
|---|---|---|---|
| Hospital ED Waiting Room | 120-180 | 91% | 18.3% |
| Primary Care Lobby | 80-120 | 87% | 12.7% |
| University Student Center | 150-220 | 93% | 22.1% |
| Community Health Center | 60-90 | 84% | 16.4% |
| Corporate Wellness Center | 40-70 | 78% | 8.9% |
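The table does not state its denominators. The sketch below assumes completion rate means completed sessions over started sessions, and high-risk detection means flagged screenings over completed screenings. The record shape and `site_metrics` are hypothetical.

```python
from collections import defaultdict


def site_metrics(sessions: list) -> dict:
    """Per-site completion and high-risk rates.

    Each record is assumed to look like {"site": str, "completed": bool, "high_risk": bool}.
    """
    started = defaultdict(int)
    completed = defaultdict(int)
    high_risk = defaultdict(int)
    for s in sessions:
        started[s["site"]] += 1
        if s["completed"]:
            completed[s["site"]] += 1
            if s["high_risk"]:
                high_risk[s["site"]] += 1
    return {
        site: {
            "completion_rate": completed[site] / started[site],
            # Assumed denominator: completed screenings only.
            "high_risk_rate": high_risk[site] / completed[site] if completed[site] else 0.0,
        }
        for site in started
    }
```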

Monitoring and Reliability

Kiosks in clinical environments must maintain near-100% uptime. Our deployment uses synthetic monitoring to probe each kiosk every 60 seconds, checking touchscreen responsiveness, network connectivity, EHR write-back latency, and local model inference times. When a kiosk goes offline, a clinical incident management workflow notifies the facilities team within 5 minutes.
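A probe-evaluation rule that fits the 60-second cadence and the 5-minute notification window might alert after three consecutive failed probes, so that a single dropped probe does not page the facilities team. The thresholds and the `Probe` fields below are hypothetical; the case study does not publish its own.

```python
from dataclasses import dataclass
from typing import Optional

CONSECUTIVE_FAILURES_TO_ALERT = 3  # hypothetical; 3 x 60 s fits inside 5 minutes
MAX_EHR_WRITE_MS = 2000            # hypothetical latency budget
MAX_INFERENCE_MS = 1000            # hypothetical latency budget


@dataclass
class Probe:
    touchscreen_ok: bool
    network_ok: bool
    ehr_write_ms: Optional[float]  # None when the test write failed
    inference_ms: Optional[float]  # None when local inference failed

    def healthy(self) -> bool:
        return (
            self.touchscreen_ok
            and self.network_ok
            and self.ehr_write_ms is not None and self.ehr_write_ms <= MAX_EHR_WRITE_MS
            and self.inference_ms is not None and self.inference_ms <= MAX_INFERENCE_MS
        )


def should_alert(history: list) -> bool:
    """Alert when each of the most recent probes failed."""
    recent = history[-CONSECUTIVE_FAILURES_TO_ALERT:]
    return len(recent) == CONSECUTIVE_FAILURES_TO_ALERT and not any(p.healthy() for p in recent)
```

With 60-second probes, the third consecutive failure is observed roughly two to three minutes after an outage begins. That leaves headroom within the 5-minute window for delivering the notification.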

Clinical Outcomes: What the Data Shows

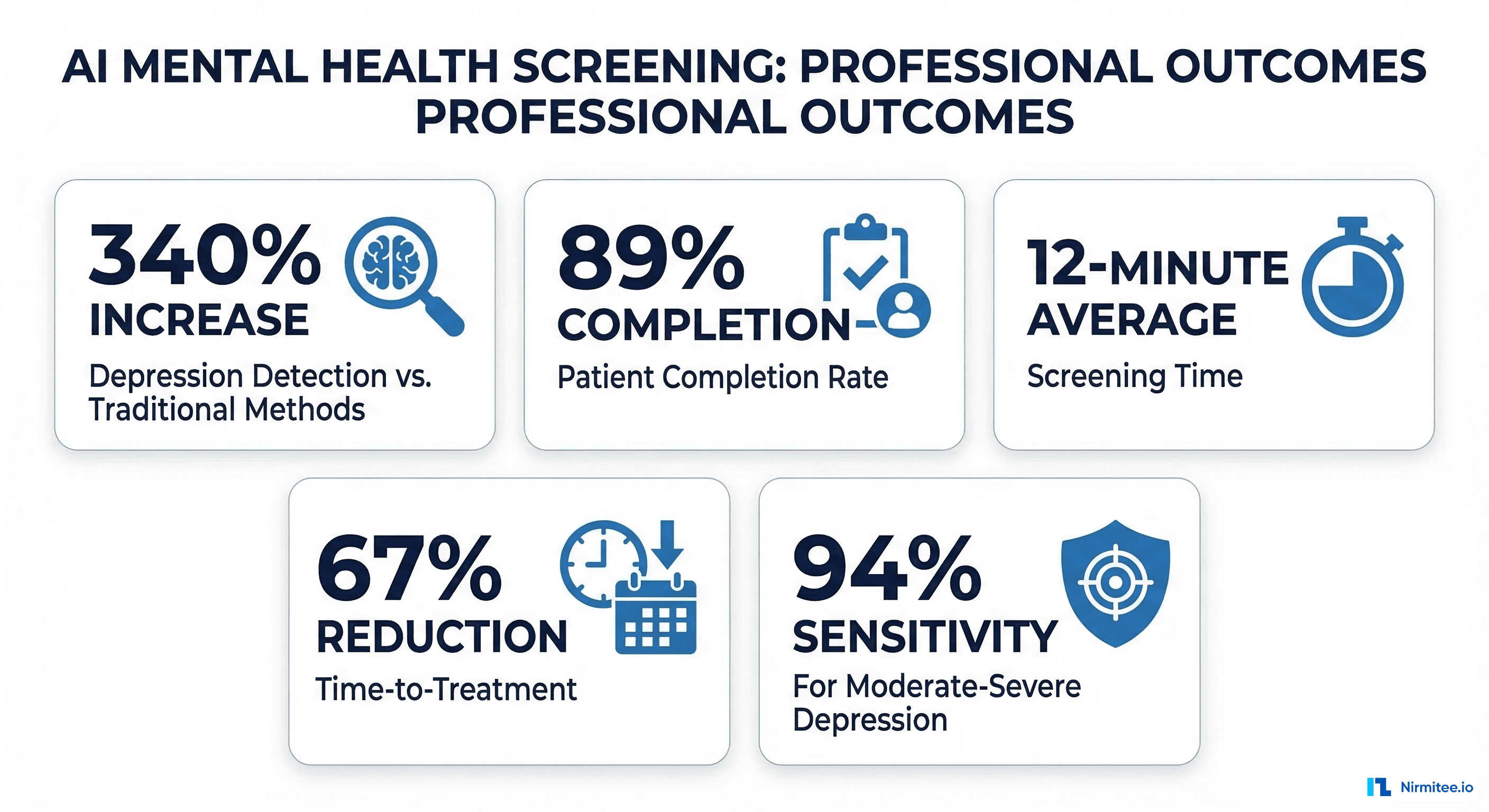

After 14 months of deployment across 14 facilities, the clinical outcomes data tells a compelling story:

Detection rate increase: Facilities with kiosks identified 340% more patients with moderate-to-severe depression compared to their baseline paper-based screening. This was not because depression prevalence changed — it was because more patients completed the screening and scores were reliably captured.

Completion rate: Kiosk-based PHQ-9 completion averaged 89% across all deployment sites, compared to 37% for the previous paper-based workflow. The interactive, tablet-style interface and privacy of the kiosk booth were the primary drivers cited in patient satisfaction surveys.

Time-to-treatment reduction: Patients identified through kiosk screening received their first mental health intervention (therapy referral, medication initiation, or structured follow-up) faster than patients identified through traditional screening. The median time from screening to first treatment was 8 days for kiosk-screened patients versus 24 days for paper-screened patients, a 67% reduction.

False positive management: The AI-enhanced screening (NLP + voice analysis) reduced the false positive rate by 23% compared to PHQ-9 alone. This matters because false positives consume limited psychiatric resources and can create unnecessary anxiety for patients.

Suicidal ideation detection: The C-SSRS integration identified 47 patients with acute suicidal ideation who had presented to the ED for non-psychiatric complaints. All 47 received same-day psychiatric evaluation. Three required immediate inpatient admission. Without kiosk screening, these patients would likely have been discharged without mental health assessment.

Cost Analysis and ROI

The financial model for mental health screening kiosks depends on the deployment setting and payer mix. Here is the breakdown from our 14-site deployment:

- Hardware cost per kiosk: $8,000-$12,000 (commercial tablet, enclosure, privacy screen, peripherals)

- Software licensing per kiosk/year: $15,000-$25,000 (includes NLP models, FHIR integration, monitoring)

- Installation and training: $3,000-$5,000 per site

- Annual maintenance: $4,000-$6,000 per kiosk

Revenue offsets come from three sources. The first is CMS reimbursement for brief standardized assessments such as the PHQ-9 (CPT 96127, ~$5.44, billed per instrument administered). The second is behavioral health integration payments under Collaborative Care Model (CoCM) codes. The third is reduced downstream costs from earlier intervention. Our client facilities reported an average ROI of 280% over the first 18 months when factoring in reduced ED utilization for mental health crises.
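The cost items above can be combined into a simple 18-month ROI estimate per kiosk, ROI = (benefits - cost) / cost. This sketch assumes one kiosk per site, so installation is charged once, and assumes 96127 is billed per instrument. Screening volume and the number of billable instruments are inputs you would supply, not figures from the case study.

```python
def roi_18_months(hardware: float, install: float, software_per_year: float,
                  maintenance_per_year: float, screenings_per_week: float,
                  instruments_billed: int, rate_per_instrument: float,
                  other_benefits: float = 0.0) -> dict:
    """Per-kiosk ROI over 18 months (78 weeks): (benefits - cost) / cost."""
    years, weeks = 1.5, 78
    cost = hardware + install + years * (software_per_year + maintenance_per_year)
    screening_revenue = screenings_per_week * weeks * instruments_billed * rate_per_instrument
    benefits = screening_revenue + other_benefits
    return {"cost": cost, "screening_revenue": screening_revenue,
            "roi": (benefits - cost) / cost}
```

`other_benefits` is where downstream savings such as reduced ED utilization would enter. The sketch does not estimate them.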

Technical Challenges and Lessons Learned

Fourteen months of production deployment taught us lessons that no whiteboard architecture session could anticipate:

Challenge 1: Network reliability in clinical environments. Hospital WiFi is notoriously unreliable, especially in waiting room areas far from network closets. We added cellular failover (4G LTE) to every kiosk after experiencing EHR write-back failures at three sites. The cost ($30/month per kiosk) was trivial compared to the clinical risk of lost screening data.
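Cellular failover reduces outages but cannot eliminate them. A complementary pattern, not described in the case study and sketched here as an assumption, is a local store-and-forward outbox. Each write-back is persisted before any network attempt and retried in order until the EHR accepts it. `WriteBackOutbox` and the `send` callable are hypothetical.

```python
import json
import sqlite3
from typing import Callable


class WriteBackOutbox:
    """Store-and-forward queue so screening results survive network outages."""

    def __init__(self, path: str = ":memory:") -> None:
        self.db = sqlite3.connect(path)
        self.db.execute(
            "CREATE TABLE IF NOT EXISTS outbox ("
            " id INTEGER PRIMARY KEY AUTOINCREMENT,"
            " payload TEXT NOT NULL,"
            " attempts INTEGER NOT NULL DEFAULT 0)"
        )
        self.db.commit()

    def enqueue(self, resource: dict) -> None:
        # Persist before any network attempt so an outage cannot lose the result.
        self.db.execute("INSERT INTO outbox (payload) VALUES (?)", (json.dumps(resource),))
        self.db.commit()

    def flush(self, send: Callable[[dict], bool]) -> int:
        """Deliver queued items in order; return how many were delivered."""
        delivered = 0
        rows = self.db.execute("SELECT id, payload FROM outbox ORDER BY id").fetchall()
        for row_id, payload in rows:
            if not send(json.loads(payload)):
                self.db.execute("UPDATE outbox SET attempts = attempts + 1 WHERE id = ?", (row_id,))
                self.db.commit()
                break  # stop at the first failure to preserve ordering; retry later
            self.db.execute("DELETE FROM outbox WHERE id = ?", (row_id,))
            self.db.commit()
            delivered += 1
        return delivered

    def pending(self) -> int:
        return self.db.execute("SELECT COUNT(*) FROM outbox").fetchone()[0]
```

An item is deleted only after `send` reports success, so delivery is at-least-once: a crash between sending and deleting could resend it. The receiving write should therefore be idempotent, for example a FHIR conditional create keyed on a screening identifier. The queue also holds PHI, so in practice it would need encryption at rest, which plain SQLite does not provide.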

Challenge 2: Patient identification matching. Kiosk screenings must link to the correct patient record in the EHR. Our initial approach used MRN entry, which had a 6% error rate. We switched to a QR code scan from the patient's check-in confirmation, reducing identification errors to 0.3%.
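The case study does not describe what the check-in QR code encodes. One plausible design, sketched here purely as an assumption, is a short-lived token that binds the encounter to the patient and is signed with an HMAC, so the kiosk can reject altered or stale codes. The key handling, claim names, and TTL are hypothetical.

```python
import base64
import hashlib
import hmac
import json
import time
from typing import Optional

SECRET = b"replace-with-a-managed-key"  # hypothetical key shared by check-in and kiosks


def make_checkin_token(encounter_id: str, patient_ref: str, ttl_s: int = 4 * 3600,
                       now: Optional[float] = None) -> str:
    """Encode encounter, patient, and expiry, then sign them (what the QR would carry)."""
    now = time.time() if now is None else now
    body = json.dumps({"enc": encounter_id, "pat": patient_ref, "exp": int(now) + ttl_s},
                      separators=(",", ":")).encode()
    sig = hmac.new(SECRET, body, hashlib.sha256).digest()
    return base64.urlsafe_b64encode(body).decode() + "." + base64.urlsafe_b64encode(sig).decode()


def verify_checkin_token(token: str, now: Optional[float] = None) -> Optional[dict]:
    """Return the claims if the signature is valid and unexpired, else None."""
    now = time.time() if now is None else now
    try:
        body_b64, sig_b64 = token.split(".")
        body = base64.urlsafe_b64decode(body_b64)
        sig = base64.urlsafe_b64decode(sig_b64)
    except ValueError:  # wrong shape or bad base64 (binascii.Error is a ValueError)
        return None
    expected = hmac.new(SECRET, body, hashlib.sha256).digest()
    if not hmac.compare_digest(sig, expected):
        return None
    claims = json.loads(body)
    return claims if claims["exp"] > now else None
```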

Challenge 3: Clinical workflow integration. The most technically perfect screening system fails if providers do not act on the results. We worked with clinical champions at each site to embed kiosk alerts into existing clinical workflows — specifically, adding PHQ-9 results to the pre-visit nurse handoff and the provider's opening dashboard view.

Challenge 4: Model drift in NLP components. Patient language patterns evolve, and the clinical NLP model showed measurable accuracy degradation after 8 months. We implemented quarterly retraining cycles using de-identified data from the deployment sites, with medallion architecture for the training data pipeline.
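The case study does not say how the degradation was detected. Measuring accuracy needs clinician-labeled outcomes, but a label-free early warning can run continuously. One common option, swapped in here as an illustration, is the population stability index (PSI) between a baseline window and a recent window of some model output. The 0.1 and 0.25 thresholds often quoted for PSI are rules of thumb, not clinical standards.

```python
import numpy as np


def population_stability_index(baseline: np.ndarray, current: np.ndarray,
                               n_bins: int = 10, eps: float = 1e-6) -> float:
    """PSI between two samples of a model score, using baseline quantile bins."""
    # Interior bin edges at baseline quantiles; outer bins are open-ended.
    inner = np.quantile(baseline, np.linspace(0, 1, n_bins + 1)[1:-1])
    base_idx = np.searchsorted(inner, baseline, side="right")
    curr_idx = np.searchsorted(inner, current, side="right")
    base_frac = np.bincount(base_idx, minlength=n_bins) / len(baseline)
    curr_frac = np.bincount(curr_idx, minlength=n_bins) / len(current)
    # Avoid log(0) for empty bins.
    base_frac = np.clip(base_frac, eps, None)
    curr_frac = np.clip(curr_frac, eps, None)
    return float(np.sum((curr_frac - base_frac) * np.log(curr_frac / base_frac)))
```

A rising PSI flags that inputs or outputs have shifted. It does not prove accuracy has dropped, so it works best as a trigger for pulling a labeled sample rather than as a replacement for one.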

Regulatory Pathway: Is This a Medical Device?

The FDA classification question is critical. Mental health screening kiosks exist in a regulatory gray area:

If the kiosk administers only validated instruments (PHQ-9, GAD-7, C-SSRS) and reports scores without clinical interpretation, it is likely to fall outside FDA device regulation as non-device Clinical Decision Support (CDS) software under the 21st Century Cures Act. Non-device CDS must meet four criteria: it does not acquire, process, or analyze a medical image or a signal from a signal acquisition system; it is intended to display, analyze, or print medical information; it is intended to support or provide recommendations to a healthcare professional; and it enables that professional to independently review the basis for its recommendations, so that clinical judgment is not replaced.

However, if the AI components (NLP analysis, voice affect detection) generate independent risk classifications that influence clinical decisions, the system may be classified as a Software as a Medical Device (SaMD) under FDA's Digital Health framework. This triggers a more rigorous regulatory pathway, potentially requiring 510(k) clearance.

Our approach: deploy the validated instruments first, without AI interpretation, and include a Predetermined Change Control Plan (PCCP) in the marketing submission for the AI-enhanced features. A PCCP authorized as part of that submission allows pre-specified modifications to AI/ML-based SaMD, such as model retraining, without requiring a new submission for each model update.

Building Your Own Mental Health Screening Program

If you are a health system or healthtech company considering a mental health screening kiosk program, here is the critical path:

- Start with validated instruments only. PHQ-9, GAD-7, and C-SSRS provide strong clinical utility and, reported as scores without AI interpretation, are unlikely to trigger SaMD classification. Add AI features in phase 2 after establishing clinical workflows.

- Solve EHR integration first. A kiosk that generates PDFs is marginally better than paper. The clinical value comes from structured FHIR-based EHR integration that puts scores into provider workflows in real time.

- Invest in privacy engineering. Mental health data sensitivity means you cannot retrofit privacy controls. Build 42 CFR Part 2 compliance and tiered consent from day one.

- Plan for scale from the architecture level. Edge computing for on-device inference, central dashboards for clinical oversight, and structured deployment playbooks for each new site.

- Measure clinical outcomes, not just screening volumes. The metric that matters is time-to-treatment, not number of screenings completed.

Nirmitee has helped health systems and mental health technology companies build screening solutions that connect patients to care faster. From telehealth platforms to AI-powered kiosks, our engineering team delivers clinical-grade software that meets the regulatory and interoperability bar that health systems require. Contact our healthcare engineering team or review our full service capabilities to discuss your mental health technology initiative.