The pattern every hospital ops leader has seen

You buy a portal, the patient experience improves for six months. You add an RPA bot, claim throughput jumps for a quarter. You hire two more coders, denials drop until the next payer policy change. The workflow does not stay fixed because the patches address symptoms, not structure.

This piece is for the hospital operations leader who has spent two years adding automation and is watching denial rates, prior auth cycle times, and front-desk overtime drift back to baseline. We will name the five structural causes — and identify what AI agents change about each. If you have read our pillar guide on AI agents in healthcare, this is the layer underneath.

What does "broken workflow" actually mean?

A broken workflow is one that produces the wrong output, the right output too slowly, or the right output at unsustainable cost — even after every layer of automation has been applied. In healthcare, the symptoms are universal: rising overtime in front-office and revenue cycle teams, denial rates that drift back to baseline after every Six Sigma project, and clinician burnout that reads as "more EMR clicks per encounter, year over year." The workflow is not failing because the staff are bad. It is failing because the structure underneath it has not changed.

The five structural causes

Cause 1 — Fragmented data

The same patient lives in three systems. Demographic mismatches block deduplication. Staff become the integration layer: they look up the same patient in three tabs and reconcile the differences in their heads. The workflow looks like it works, but the rework cost is hidden in staff time.

What agents change: the agent reads from all three systems and joins them at the moment a decision is needed. It does not need to wait for a master data management initiative to complete. It performs the join itself, per workflow, per encounter.
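A minimal sketch of what "joining at the moment a decision is needed" could look like. The system names, field names, and the `join_at_read` helper are all hypothetical, and real patient matching is far more involved than a case-insensitive comparison. The point is only that the merge happens on read, and disagreements are surfaced rather than silently resolved.

```python
from dataclasses import dataclass


@dataclass
class PatientView:
    """Merged view of one patient, built on demand for a single decision."""
    fields: dict      # field name -> value all systems agree on
    conflicts: dict   # field name -> {system name: value} where systems disagree


def join_at_read(records: dict) -> PatientView:
    """Merge per-system records for one patient without writing anything back.

    `records` maps a system name (hypothetical, e.g. "ehr") to its field dict.
    """
    seen = {}
    for system, record in records.items():
        for name, value in record.items():
            if value is None or value == "":
                continue  # a missing value is not a disagreement
            seen.setdefault(name, {})[system] = str(value).strip().lower()

    fields, conflicts = {}, {}
    for name, by_system in seen.items():
        distinct = set(by_system.values())
        if len(distinct) == 1:
            fields[name] = distinct.pop()
        else:
            conflicts[name] = by_system  # left for a human or a later step
    return PatientView(fields=fields, conflicts=conflicts)


# Hypothetical records for the same patient in three systems.
view = join_at_read({
    "ehr": {"last_name": "Rivera", "dob": "1984-03-12", "member_id": "A1001"},
    "scheduling": {"last_name": "rivera ", "dob": "1984-03-12"},
    "billing": {"last_name": "Rivera", "dob": "1984-03-21", "member_id": "A1001"},
})
print(view.fields)
print(view.conflicts)
```

In this illustration the transposed birth date in billing shows up as a conflict at decision time, instead of living in someone's head until a denial arrives.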

Cause 2 — Hand-offs without context

The chart moves between scheduling, intake, the clinician, the coder, and the biller. The reasoning behind each decision does not. Three weeks after the encounter, the biller is asking why a procedure was ordered, and by that point nobody remembers.

What agents change: the agent carries the reasoning. When the coder asks "why this code?" the agent points at the specific note section. When the biller asks "why this procedure?" the agent surfaces the diagnostic evidence. Context becomes a first-class resource.
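One way to picture "context as a first-class resource" is a decision trail that travels with the encounter. The sketch below is an assumption about how such a record might be shaped, not a description of any specific product; the class names, the action label, and the note excerpt are invented for illustration.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Evidence:
    source: str    # hypothetical document type, e.g. "progress_note"
    section: str   # section of that document
    excerpt: str   # the text that supports the decision


@dataclass
class Decision:
    stage: str
    action: str
    evidence: list = field(default_factory=list)


@dataclass
class EncounterTrail:
    encounter_id: str
    decisions: list = field(default_factory=list)

    def record(self, stage, action, *evidence):
        """Store a decision together with the evidence behind it."""
        self.decisions.append(Decision(stage, action, list(evidence)))

    def why(self, action):
        """Return the evidence recorded for an action, or an empty list."""
        for decision in self.decisions:
            if decision.action == action:
                return decision.evidence
        return []


# Hypothetical encounter: the order is recorded with its supporting note section.
trail = EncounterTrail("enc-001")
trail.record(
    "clinician",
    "order:mri_lumbar",
    Evidence("progress_note", "Assessment", "Radicular pain persisting after 6 weeks of PT"),
)

# Weeks later, the biller's question has an answer attached to the chart.
for item in trail.why("order:mri_lumbar"):
    print(f"{item.source} / {item.section}: {item.excerpt}")
```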

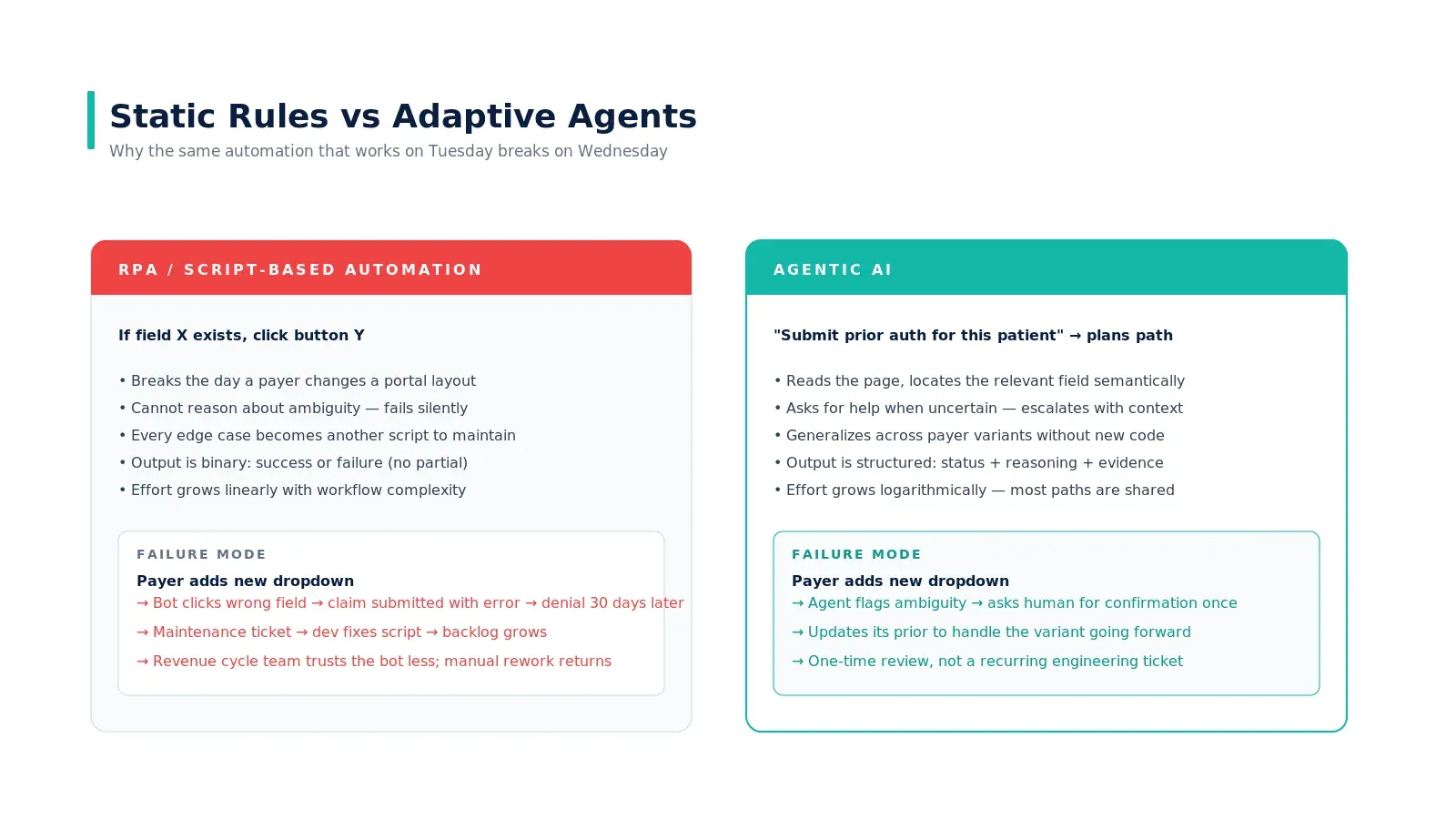

Cause 3 — Static rules versus real cases

Your existing automation is brittle because the rules are static. If the payer changes a portal layout, the bot breaks. If a new diagnosis appears in your population, the rule misses it. Staff override the rules quietly, and the override patterns become invisible institutional knowledge.

What agents change: the agent reasons about the case rather than matching it against a fixed rule. Edge cases are handled by the same architecture as common cases — the cost of an exception is not 100x the cost of a normal case.

Cause 4 — Error-prone manual entry

Re-keying ICD codes, demographics, eligibility, dosages: at a fixed error rate, the number of errors scales linearly with volume. Quality programs reduce the rate but not the floor. A 1% error rate at 50,000 encounters per month is 500 errors. Most produce silent denials weeks later.

What agents change: the agent generates the structured fields directly from source. There is no re-keying. Errors that remain are at the source-of-truth level (the clinical note itself), where they are easier to catch and fix.

Cause 5 — No feedback loop

A claim gets denied; the cause stays buried in the EOB. By the time the pattern is visible, the payer has issued a thousand denials. Your workflow does not learn from outcomes — it learns from the few cases that surface in QA reviews.

What agents change: the agent reads the EOB on every cycle and updates its prior. It surfaces patterns automatically — payer X consistently denies CPT Y when Z is present — and either changes its behavior or escalates the pattern to a human owner. Hospitals learn at the speed of the agent's deployment, not the speed of the next QA cycle.
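The "payer X consistently denies CPT Y when Z is present" pattern is, at its simplest, a grouped denial rate with a volume floor. The sketch below assumes claims have already been parsed from EOBs into dictionaries; the field names, the thresholds, and the sample data are all illustrative assumptions, and a production version would also need to account for time windows and denial reason codes.

```python
from collections import Counter


def denial_patterns(claims, min_claims=20, min_rate=0.5):
    """Return (key, claim_count, denial_rate) for combinations denied unusually often.

    Each claim is a dict with hypothetical keys: payer, cpt, flag, denied.
    """
    totals, denied = Counter(), Counter()
    for claim in claims:
        key = (claim["payer"], claim["cpt"], claim["flag"])
        totals[key] += 1
        if claim["denied"]:
            denied[key] += 1

    patterns = []
    for key, n in totals.items():
        rate = denied[key] / n
        # Require enough volume so a handful of denials does not raise an alarm.
        if n >= min_claims and rate >= min_rate:
            patterns.append((key, n, rate))
    return sorted(patterns, key=lambda p: p[2], reverse=True)


# Illustrative data: payer X denies CPT Y mostly when condition Z is present.
claims = (
    [{"payer": "X", "cpt": "Y", "flag": "Z", "denied": i < 24} for i in range(30)]
    + [{"payer": "X", "cpt": "Y", "flag": None, "denied": i < 3} for i in range(30)]
    + [{"payer": "W", "cpt": "Y", "flag": "Z", "denied": True} for _ in range(5)]
)

patterns = denial_patterns(claims)
for key, n, rate in patterns:
    print(key, n, f"{rate:.0%}")
```

With this sample data only the payer X, CPT Y, condition Z combination is flagged; the payer W group is fully denied but falls below the volume floor, which is the kind of case that would go to a human owner rather than trigger an automatic behavior change.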

Where the time actually goes

Run the math on your own organization. Of 100 inbound referrals, how many get scheduled? Of those, how many have eligibility confirmed before the visit? Of those, how many have prior auth where required? Of those, how many actually show up? Of those, how many are coded cleanly the first time? Of those, how many get paid in full on the first submission? The number at the bottom is your real conversion. For most U.S. hospitals it is between 25% and 40%.
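The funnel math above is a running product of stage pass rates. Here is a short sketch with made-up rates (none of them come from a real hospital) to show how quickly six reasonable-looking stages compound; substitute your own numbers.

```python
def funnel(start, stage_pass_rates):
    """Return (stage, cases_remaining, cases_lost) for each stage, in order."""
    rows, remaining = [], float(start)
    for stage, rate in stage_pass_rates.items():
        passed = remaining * rate
        rows.append((stage, passed, remaining - passed))
        remaining = passed
    return rows


# Illustrative pass rates only; each is the share of cases surviving that stage.
rates = {
    "scheduled": 0.80,
    "eligibility_confirmed": 0.90,
    "prior_auth_secured": 0.85,
    "patient_showed": 0.85,
    "coded_clean_first_pass": 0.90,
    "paid_in_full_first_submission": 0.80,
}

rows = funnel(100, rates)
end_to_end = rows[-1][1] / 100
biggest_leak = max(rows, key=lambda r: r[2])[0]

for stage, remaining, lost in rows:
    print(f"{stage:32s} remaining={remaining:6.2f} lost={lost:5.2f}")
print(f"end-to-end conversion: {end_to_end:.1%}")
print(f"largest absolute leak: {biggest_leak}")
```

With these assumed rates, no single stage is below 80%, yet only about 37 of 100 referrals end as a claim paid in full on first submission. The per-stage loss column is what tells you where to look first.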

The fix is not better dashboards. The fix is closing the gaps where they happen — at the moment of the failure, in the workflow itself. That is what an agent is structurally good at and what static automation is structurally bad at.

Why more automation has not helped

RPA, OCR, and form automation all optimize the same thing: data entry. Most healthcare workflows have already optimized data entry. What is left is judgment — reading the chart, mapping to policy, drafting the letter, deciding to appeal. That is why each new automation layer produces diminishing returns. We go deeper on this distinction in why RPA failed in healthcare and what agentic AI does differently.

What does the fix look like in practice?

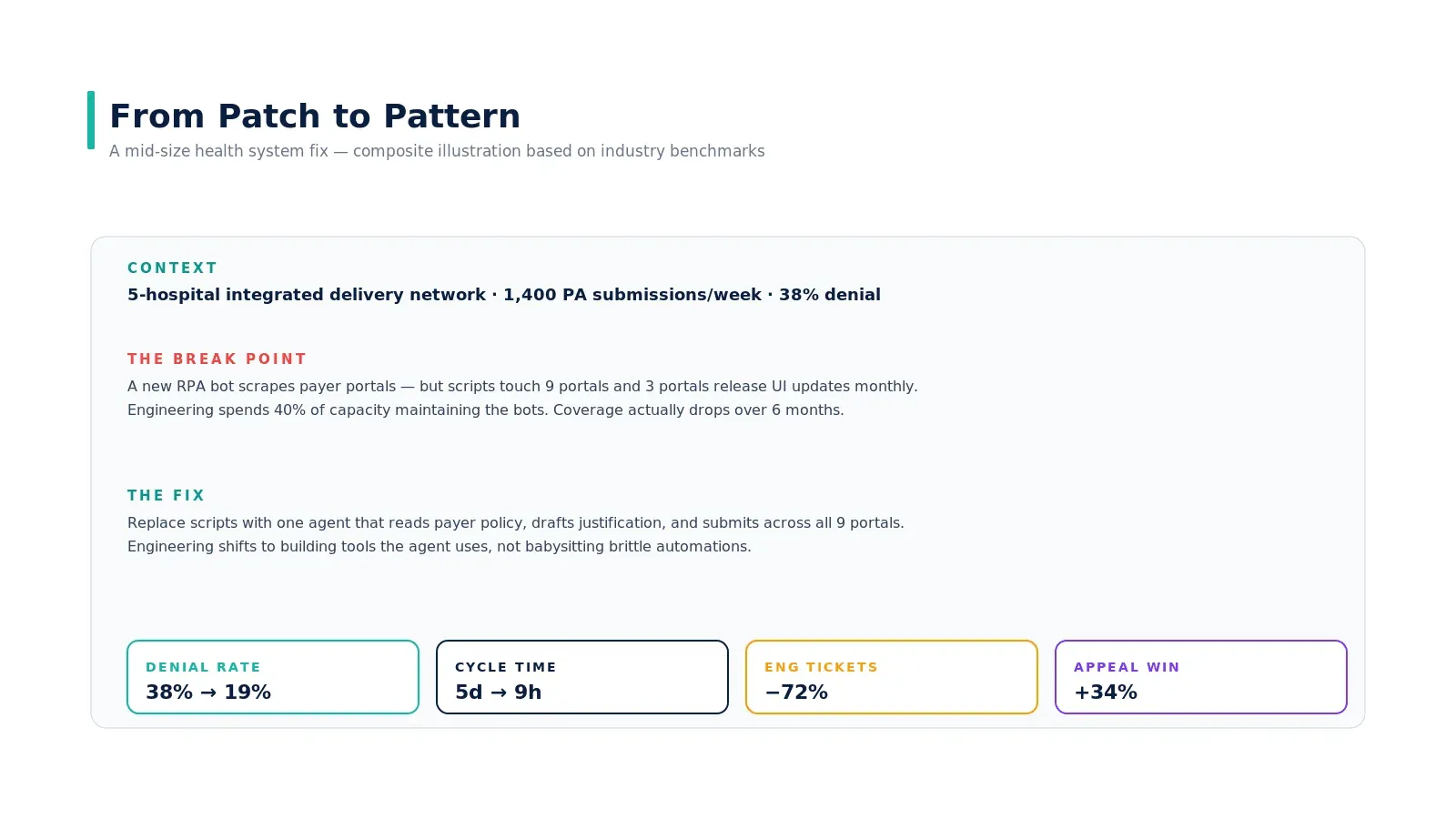

Consider a 5-hospital integrated delivery network running 1,400 prior auth submissions per week with a 38% denial rate. Its existing fix was a stack of RPA bots scraping payer portals, but with 9 portals, three of which released UI updates monthly, engineering was spending 40% of its capacity maintaining the bots. Coverage actually dropped over six months.

The structural fix was to replace the script stack with one agent that reads payer policy, drafts the medical-necessity letter, and submits across all 9 portals. Engineering shifted to building tools the agent uses instead of babysitting brittle automations. Outcomes over 12 months: denial rate from 38% to 19%, cycle time from 5 days to 9 hours, engineering tickets down 72%, appeal win rate up 34%.

This is a composite example synthesized from public Experian and AHA benchmarks; the pattern is what holds across deployments, not the specific numbers.

Where to start if you are operationally responsible

If you own ops, the highest-leverage move is not buying a vendor. It is mapping the leak in one specific workflow honestly — what fraction of cases pass each stage cleanly, where the rework lives, and whose hours are the bottleneck. Once you have that, the question of whether an agent is the right tool answers itself. We have a companion piece on how to find the right healthcare workflows for AI agents that walks through the assessment.

Two other pieces are prerequisite reading: prerequisites before building an AI agent for a healthcare hospital and the EHR integration patterns piece. Both directly inform whether the architecture is feasible inside your system.

Real-world example

The pattern is well documented in healthcare operations literature. AHA's "Costs of Caring" reporting shows hospitals collectively spent $43B in 2025 chasing payments that were already contractually owed, which is a structural leak, not a staffing leak. AMA's prior-authorization survey shows 88% of physicians describe the PA burden as high or extremely high, and that number has risen even as automation tools proliferated. The case study earlier in this article is a clearly labeled composite based on these benchmarks; the durable insight is that no automation layer fixes a workflow if the bottleneck is judgment rather than data entry.

Key takeaways

- The cause is structural, not staffing. More headcount and more dashboards do not fix the underlying breakage.

- RPA optimizes data entry. Most healthcare workflows are bottlenecked by judgment. That is why each automation layer produces diminishing returns.

- Internal queueing dwarfs payer review time in PA, claims, and intake. Agents collapse the internal portion; the external SLA stays the same; cycle time still drops 4–8x.

- Static rules cannot represent every edge case. Agents reason about cases instead of matching them — exception handling becomes the norm, not an exception.

- Map your workflow leak honestly before buying anything. The conversion from referral to paid claim tells you the real problem.

Call to Action

Want to deploy an AI Agent inside your hospital or healthcare product? Get in touch with our team — we will scope the workflow, governance, and 90-day rollout plan against your own baseline metrics.

Learn more about AI Agents in Healthcare → read the full pillar guide.
