Healthcare integration projects fail at a rate that would be unacceptable in any other area of IT. According to a 2024 Standish Group analysis, 52% of healthcare IT integration projects exceed their original budget by more than 30%, and 38% miss their go-live date by more than two months. These are not failures of technology. Mirth Connect works. HL7 messaging works. The failures are failures of planning: underestimating the scope, understaffing the team, underbudgeting the project, and underappreciating the coordination complexity of connecting healthcare systems.

This guide exists because nobody gives project managers and IT directors a realistic picture of what a Mirth Connect implementation actually requires. Vendor sales decks show best-case scenarios. Conference presentations showcase the success stories. Nobody talks about the six-month vendor delay for HL7 specifications, the integration engineer who left mid-project, or the "simple" interface that took three months because the source system sends non-standard HL7.

What follows is an honest, detailed breakdown of timeline, team composition, budget components, and risk factors for Mirth Connect implementations across three common project sizes. Every number is grounded in real project experience. Every warning comes from a real project failure that could have been avoided with better planning.

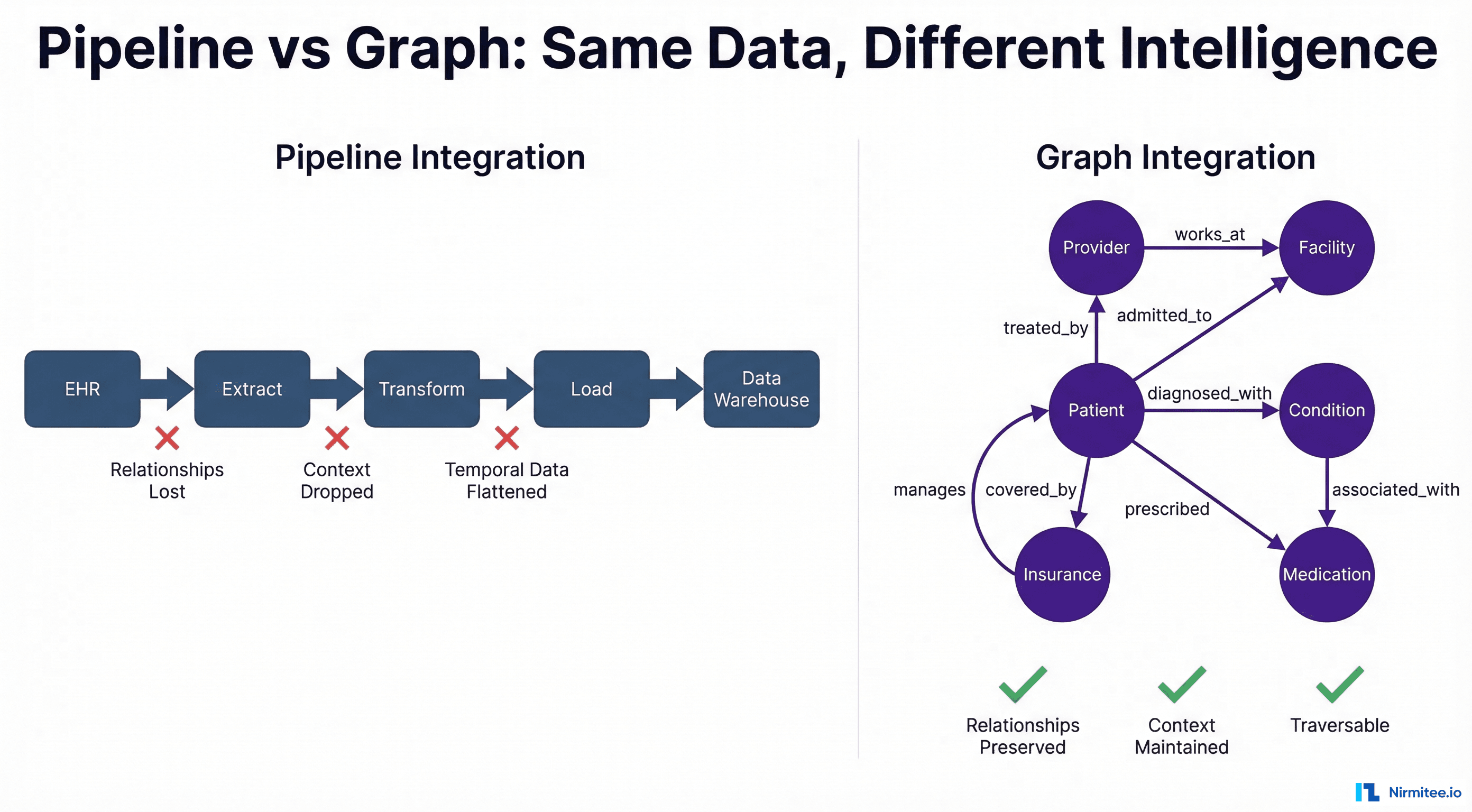

Why Integration Projects Fail to Plan

The root cause of integration project failures is almost always the same: the project was scoped as a technology deployment when it is actually a coordination exercise. Installing Mirth Connect takes an afternoon. Configuring, testing, and going live with 30 healthcare interfaces takes 6-12 months, and the bottleneck is almost never the integration engine.

The real bottlenecks are:

- Vendor responsiveness. Getting HL7 interface specifications from an EHR or lab system vendor takes 2-8 weeks. Getting access to a vendor test environment takes 4-12 weeks. Scheduling vendor resources for go-live support requires 6-8 weeks advance notice. None of this is in your control, and all of it is on your critical path.

- Specification ambiguity. Vendors send HL7 specifications that are incomplete, outdated, or different from what their system actually sends in production. According to HL7 International, the HL7 v2 standard has over 500 optional fields and segments. Every implementation makes different choices about which optional elements to include, creating a combinatorial explosion of edge cases.

- Testing dependencies. You cannot test an interface end-to-end without both systems available, configured, and staffed with people who can verify the results. Coordinating testing windows across 5-10 vendor organizations is a scheduling nightmare.

- Scope discovery. The project starts with "we need 20 interfaces" and by month three, it is 35 interfaces because nobody inventoried the departmental systems, the research data feeds, or the legacy reporting interfaces that "somebody set up years ago."

Planning that accounts for these realities produces projects that succeed. Planning that ignores them produces the industry's 52% budget overrun rate.

Scoping the Project

Interface inventory

Before you can plan a timeline or budget, you need a complete inventory of every interface in scope. This is harder than it sounds. Start with the known interfaces (the ones in the project charter). Then systematically discover the unknown ones:

- Interview every department head: "What systems do your staff use, and which ones need to share data with other systems?"

- Review existing network configurations for MLLP ports, VPN tunnels, and point-to-point connections.

- Check the current integration engine (if one exists) for active and inactive interfaces.

- Review vendor contracts for interface requirements that may not have been implemented yet.

For each interface, document: source system, destination system, message types (ADT, ORM, ORU, etc.), estimated daily volume, clinical criticality (critical/high/medium/low), and any known complexity factors (custom segments, non-standard formatting, real-time requirements).
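
One way to keep the inventory consistent is to give every interface the same record shape and flag incomplete rows early. The sketch below is illustrative only: the class name, field names, and sample systems are assumptions, not part of any Mirth Connect tooling.

```python
from dataclasses import dataclass, field
from enum import Enum


class Criticality(Enum):
    CRITICAL = "critical"
    HIGH = "high"
    MEDIUM = "medium"
    LOW = "low"


@dataclass
class InterfaceRecord:
    """One row of the interface inventory (hypothetical field names)."""
    source_system: str
    destination_system: str
    message_types: list[str]           # e.g. ["ADT", "ORU"]
    est_daily_volume: int
    criticality: Criticality
    complexity_factors: list[str] = field(default_factory=list)

    def missing_fields(self) -> list[str]:
        """Return the names of fields that are still blank."""
        gaps = []
        if not self.source_system:
            gaps.append("source_system")
        if not self.destination_system:
            gaps.append("destination_system")
        if not self.message_types:
            gaps.append("message_types")
        if self.est_daily_volume <= 0:
            gaps.append("est_daily_volume")
        return gaps


# Sample rows; the systems and volumes are made up for illustration.
inventory = [
    InterfaceRecord("EHR", "Lab", ["ADT"], 4000, Criticality.CRITICAL),
    InterfaceRecord("Lab", "EHR", ["ORU"], 2500, Criticality.CRITICAL,
                    ["custom segments"]),
    InterfaceRecord("Research DB", "", [], 0, Criticality.LOW),
]

# Rows that still need follow-up before they can be planned.
incomplete = [(r.source_system, r.missing_fields())
              for r in inventory if r.missing_fields()]
```

Rows that show up in `incomplete` are the ones to chase during discovery, before they turn into late scope additions.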

Prioritization

Not all interfaces are equal. Prioritize by clinical impact and implementation complexity:

- Wave 1 (critical, straightforward): ADT messages between core systems, lab results to the EHR, pharmacy orders. These are high-impact, well-understood interface patterns with standard HL7 message types.

- Wave 2 (important, moderate complexity): Radiology orders, scheduling interfaces, clinical document exchange. Standard but may involve more complex transformations.

- Wave 3 (supporting, higher complexity): Research data feeds, departmental system integrations, custom reporting interfaces, FHIR-based interfaces alongside legacy HL7 v2.
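
The wave assignment can be written down as an explicit rule so that it is applied the same way to every interface. This is a minimal sketch: the thresholds are an assumption, since the waves above are described only qualitatively, and the interface names are hypothetical.

```python
def assign_wave(criticality: str, complexity: str) -> int:
    """Map (criticality, complexity) to a rollout wave.

    Assumed rule: critical and simple goes first, important and
    moderately complex goes second, everything else goes third.
    """
    if criticality == "critical" and complexity == "low":
        return 1
    if criticality in ("critical", "high") and complexity in ("low", "moderate"):
        return 2
    return 3


# Hypothetical interfaces with (criticality, complexity) ratings.
interfaces = {
    "ADT EHR->Lab": ("critical", "low"),
    "Radiology orders": ("high", "moderate"),
    "Research data feed": ("medium", "high"),
}

waves = {name: assign_wave(*rating) for name, rating in interfaces.items()}
```

A rule like this is a starting point for discussion; clinical stakeholders may still move individual interfaces between waves.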

Dependency mapping

Some interfaces depend on others. The lab system cannot send results (ORU) until it has received patient registrations (ADT). The pharmacy cannot process orders (ORM) until the formulary interface is active. Map these dependencies to avoid paralysis in later phases when a blocked interface holds up downstream work.
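
Dependency mapping is a graph problem, so a topological sort gives a build order that never schedules an interface before its prerequisites. The sketch below uses Python's standard `graphlib` module; the interface names are illustrative and mirror the two examples above.

```python
from graphlib import CycleError, TopologicalSorter

# Each interface maps to the set of interfaces it depends on.
depends_on = {
    "ADT registration": set(),
    "Lab results (ORU)": {"ADT registration"},
    "Formulary": set(),
    "Pharmacy orders (ORM)": {"Formulary"},
}


def build_order(graph):
    """Return interfaces in an order that respects every dependency."""
    try:
        return list(TopologicalSorter(graph).static_order())
    except CycleError as exc:
        # exc.args[1] holds the nodes that form the cycle.
        raise ValueError(f"Circular dependency: {exc.args[1]}") from exc


order = build_order(depends_on)
```

A circular dependency raises an error instead of producing a plan, which is usually a sign that the inventory needs another look.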

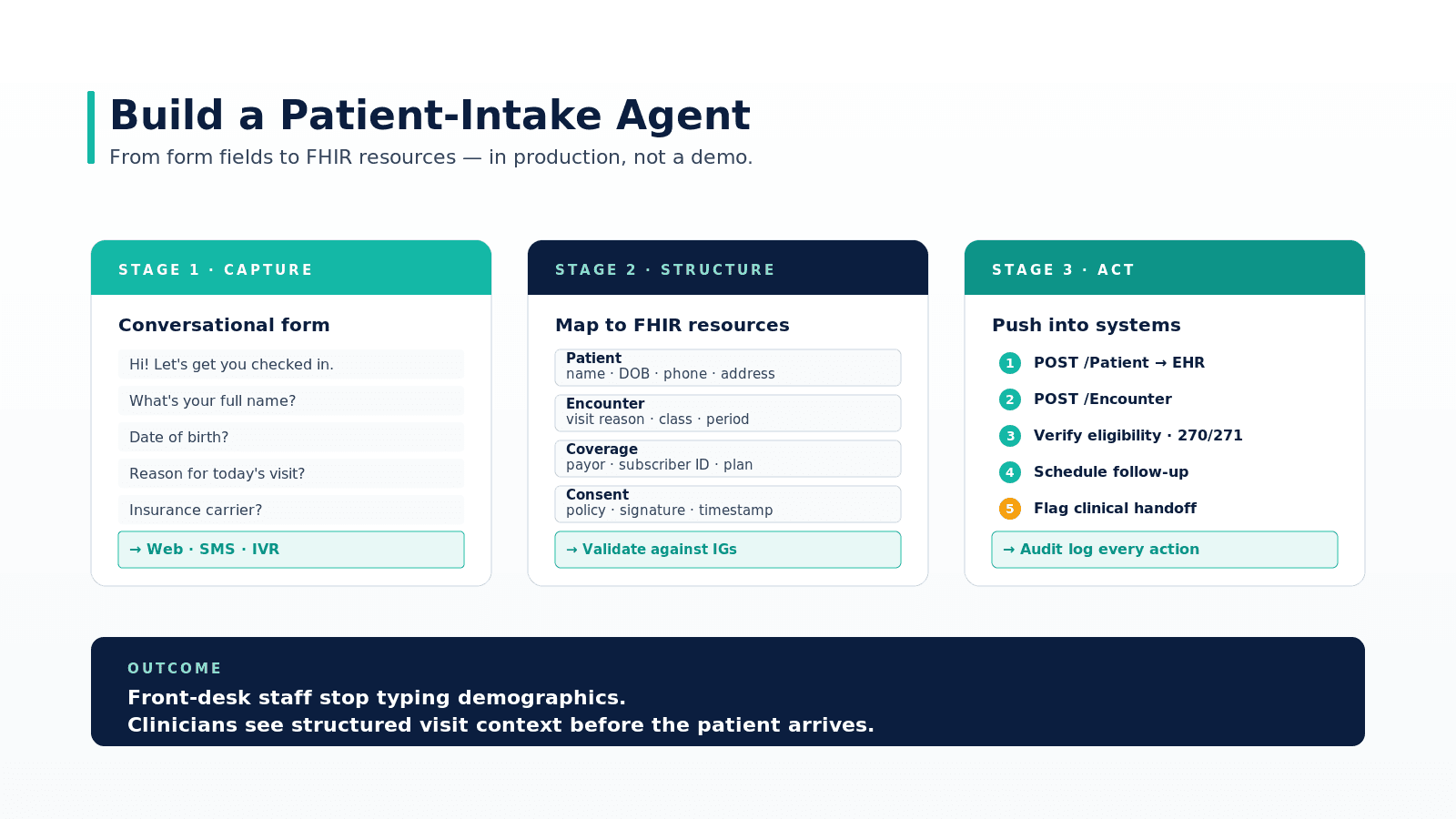

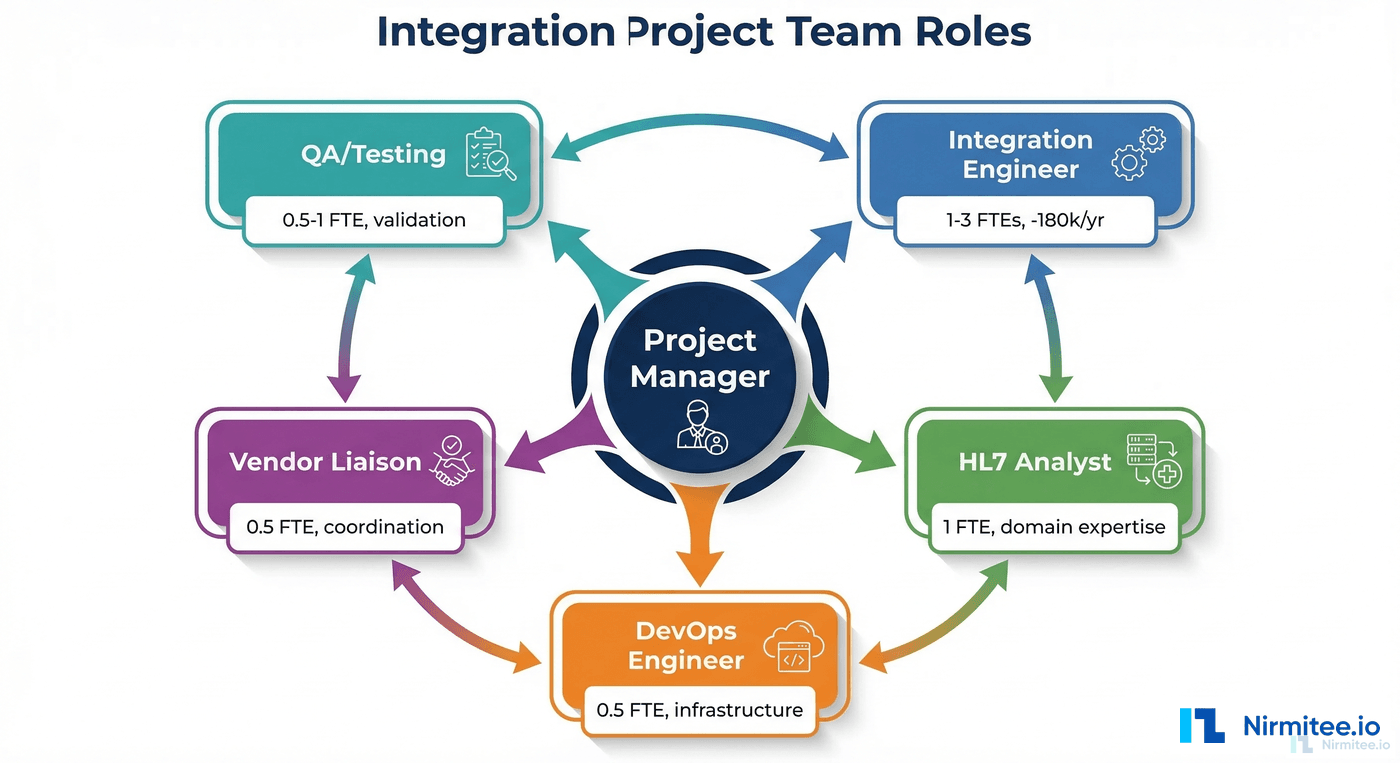

Team Roles Needed

Integration projects require a specific mix of skills. Understaffing any of these roles creates bottlenecks that delay the entire project.

Integration Engineer (1-3 FTEs depending on project size)

The core technical role. Builds Mirth Connect channels, writes transformer code, configures connectors, and troubleshoots message processing issues. Requires deep knowledge of Mirth Connect, HL7 v2 message structure, and JavaScript. According to Bureau of Labor Statistics data, healthcare integration engineers command $120,000-$180,000/year, and the talent market is extremely competitive. Budget 3-6 months for hiring if you do not have this role filled internally. For existing Mirth teams, review our guide on building a robust HL7 interface engine as a project reference.

HL7/FHIR Analyst (1 FTE)

The domain expert who interprets vendor HL7 specifications, validates message content accuracy with clinical stakeholders, and resolves ambiguities in interface definitions. This role requires deep understanding of healthcare workflows, clinical data models, and interoperability standards. Often filled by someone with clinical informatics or health information management background.

Project Manager (1 FTE or dedicated 50%+)

Coordinates vendor schedules, tracks interface progress, manages risks, and communicates status to stakeholders. Integration project management requires understanding of technical dependencies (not all interfaces can be built in parallel) and vendor coordination experience (getting vendors to meet deadlines requires persistent, diplomatic pressure).

Vendor Liaison (0.5 FTE)

Dedicated contact for vendor coordination: requesting specifications, scheduling test environments, coordinating go-live support. Can be combined with the PM role for smaller projects, but larger projects benefit from a dedicated person who builds relationships with vendor technical contacts.

DevOps/Infrastructure Engineer (0.5 FTE)

Sets up and maintains the Mirth Connect infrastructure: servers, databases, monitoring, high availability, backups, and CI/CD pipelines. Also handles network configuration (firewall rules, VPN tunnels, MLLP port management). Typically shared with other infrastructure responsibilities.

QA/Testing Resource (0.5-1 FTE)

Validates that interfaces process messages correctly end-to-end. Creates test message libraries, coordinates testing with vendor contacts, and verifies clinical data accuracy with stakeholders. Often underestimated: testing takes 25-30% of the total project timeline.

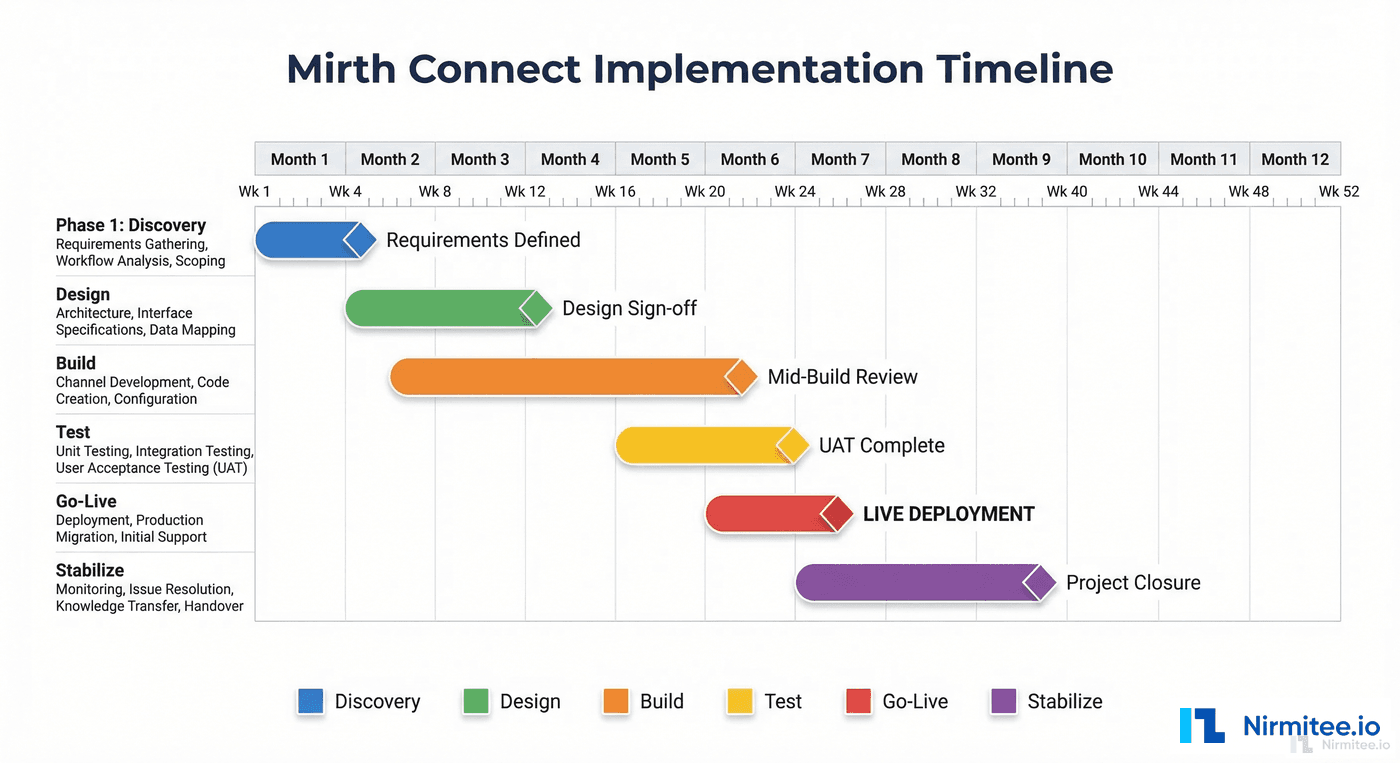

Timeline by Project Size

Small project: 5-10 interfaces (3-6 months)

| Phase | Duration | Key Activities |
|---|---|---|
| Discovery | 2-3 weeks | Interface inventory, vendor spec requests, infrastructure planning |
| Design | 2-3 weeks | Channel architecture, naming conventions, error handling strategy |
| Build | 6-10 weeks | Channel development, code template creation, unit testing |
| Test | 3-4 weeks | Integration testing with vendor test systems, clinical validation |
| Go-Live | 1-2 weeks | Production cutover (1-2 waves), monitoring setup |
| Stabilize | 4 weeks | Issue resolution, performance tuning, documentation finalization |

Total: 18-26 weeks (about 4-6 months)

Team: 1 integration engineer, 0.5 HL7 analyst, 0.5 PM. Total FTE: 2.0

Medium project: 20-50 interfaces (6-12 months)

| Phase | Duration | Key Activities |
|---|---|---|
| Discovery | 4-6 weeks | Complete interface inventory, vendor coordination kickoff, infrastructure provisioning |
| Design | 3-4 weeks | Architecture patterns, template channels, deployment workflow, monitoring strategy |
| Build | 12-20 weeks | Channel development in waves, code template libraries, automated testing |
| Test | 6-8 weeks | Integration testing per wave, end-to-end workflow testing, performance testing |
| Go-Live | 3-4 weeks | Phased production cutover (3-5 waves), parallel run periods |
| Stabilize | 6-8 weeks | Issue resolution, optimization, knowledge transfer, documentation |

Total: 34-50 weeks (about 8-11.5 months)

Team: 2-3 integration engineers, 1 HL7 analyst, 1 PM, 0.5 vendor liaison, 0.5 DevOps, 0.5 QA. Total FTE: 5.5-6.5

Large project: 100+ interfaces (12-24 months)

| Phase | Duration | Key Activities |
|---|---|---|
| Discovery | 6-8 weeks | Enterprise interface inventory, vendor assessment, multi-environment architecture |
| Design | 4-6 weeks | Enterprise architecture, governance framework, team structure, tooling selection |
| Build | 24-40 weeks | Parallel channel development (2-3 teams), shared libraries, continuous integration |
| Test | 10-16 weeks | Continuous testing, vendor coordination across 10-20 organizations, clinical validation |
| Go-Live | 8-12 weeks | Phased cutover (8-12 waves), parallel run, rollback readiness |
| Stabilize | 8-12 weeks | 30-60-90 day support plan, performance optimization, governance handoff |

Total: 60-94 weeks (about 14-22 months)

Team: 4-6 integration engineers, 2 HL7 analysts, 1 PM + 1 assistant PM, 1 vendor liaison, 1 DevOps, 1 QA. Total FTE: 11-13
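
The week totals for each project size can be reproduced by summing the phase ranges from the three tables. The sketch below assumes the phases run strictly back to back with no overlap, which is a simplification; the dictionary and function names are illustrative.

```python
# Phase durations in weeks as (min, max), copied from the tables above.
# Order: Discovery, Design, Build, Test, Go-Live, Stabilize.
PHASES = {
    "small":  [(2, 3), (2, 3), (6, 10), (3, 4), (1, 2), (4, 4)],
    "medium": [(4, 6), (3, 4), (12, 20), (6, 8), (3, 4), (6, 8)],
    "large":  [(6, 8), (4, 6), (24, 40), (10, 16), (8, 12), (8, 12)],
}


def total_weeks(phases):
    """Sum sequential phase ranges into an overall (min, max) in weeks."""
    return (sum(lo for lo, _ in phases), sum(hi for _, hi in phases))


totals = {size: total_weeks(phases) for size, phases in PHASES.items()}
# totals == {"small": (18, 26), "medium": (34, 50), "large": (60, 94)}
```

Keeping the ranges in one place makes it easy to see how a slip in a single phase, typically Build or Test, moves the end date.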

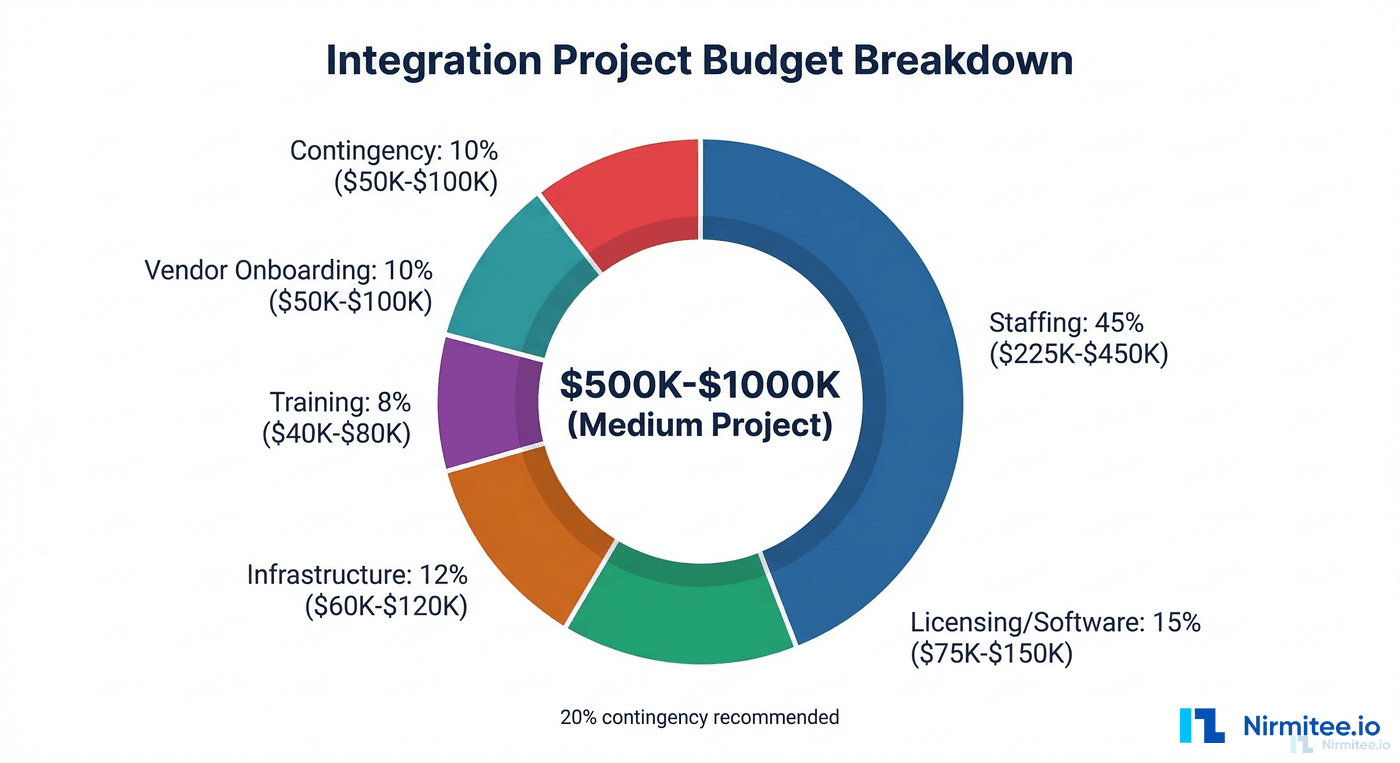

Budget Components Breakdown

Integration project budgets have six major components. Organizations that budget only for licensing discover the hard way that licensing is typically less than 15% of the total project cost.

1. Staffing (the largest share: 40-50% as a rule of thumb, 55-70% in the budget summary below)

The largest single cost. Calculate based on team composition and project duration:

| Role | Annual Cost | Small Project (6 mo) | Medium Project (12 mo) | Large Project (24 mo) |
|---|---|---|---|---|
| Integration Engineer | $150,000 | $75,000 (1 FTE) | $375,000 (2.5 FTE) | $1,500,000 (5 FTE) |
| HL7 Analyst | $110,000 | $27,500 (0.5 FTE) | $110,000 (1 FTE) | $440,000 (2 FTE) |
| Project Manager | $130,000 | $32,500 (0.5 FTE) | $130,000 (1 FTE) | $260,000 (1 FTE) |
| DevOps | $140,000 | $35,000 (0.5 FTE) | $70,000 (0.5 FTE) | $280,000 (1 FTE) |
| QA/Testing | $100,000 | $0 | $50,000 (0.5 FTE) | $200,000 (1 FTE) |
| Subtotal | | $170,000 | $735,000 | $2,680,000 |
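
Each cell in the staffing table is annual cost × FTE × (project months / 12). A short sketch of that arithmetic, using the table's own figures (the variable and function names are illustrative), makes it easy to re-run with your own salaries and allocations:

```python
ANNUAL_COST = {
    "Integration Engineer": 150_000,
    "HL7 Analyst": 110_000,
    "Project Manager": 130_000,
    "DevOps": 140_000,
    "QA/Testing": 100_000,
}

# FTE allocation per role, in the same order as ANNUAL_COST.
FTE = {
    "small":  [1.0, 0.5, 0.5, 0.5, 0.0],
    "medium": [2.5, 1.0, 1.0, 0.5, 0.5],
    "large":  [5.0, 2.0, 1.0, 1.0, 1.0],
}

MONTHS = {"small": 6, "medium": 12, "large": 24}


def staffing_cost(size: str) -> float:
    """Total staffing cost: sum of annual cost x FTE x project years."""
    years = MONTHS[size] / 12
    return sum(cost * fte * years
               for cost, fte in zip(ANNUAL_COST.values(), FTE[size]))


subtotals = {size: staffing_cost(size) for size in FTE}
```

The result matches the subtotal row: $170,000, $735,000, and $2,680,000. These are salary figures only; benefits and overhead would raise them.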

2. Licensing and Software (10-15% of total budget)

Mirth Connect licensing varies by edition and deployment model. With Mirth Connect's 2025 transition from open-source to commercial licensing, budget for commercial licensing from day one:

- Mirth Connect Standard: $15,000-$30,000/year per production server

- Mirth Connect Enterprise: $30,000-$60,000/year per production server

- Additional tools: MirthSync (free), monitoring tools ($5,000-$15,000/year), testing frameworks (typically free/open-source)

Small project: $15,000-$30,000. Medium project: $45,000-$120,000. Large project: $120,000-$300,000+.

3. Infrastructure (10-15% of total budget)

Servers, databases, network equipment, and cloud resources:

- Production servers: 2 servers minimum (primary + failover) with 8+ CPU cores, 32GB+ RAM, SSD storage

- Non-production environments: Development, staging, and testing environments (can be smaller specifications)

- Database: PostgreSQL (recommended) with appropriate storage for message logs and channel data

- Monitoring: Dedicated monitoring server or cloud-based monitoring service

- Network: VPN equipment, firewall rules, SSL certificates

Small project: $20,000-$40,000/year. Medium project: $40,000-$80,000/year. Large project: $80,000-$200,000/year.

4. Training (5-10% of total budget)

NextGen offers Mirth Connect certification courses. Budget for initial training plus ongoing skill development:

- Mirth Connect certification: $3,000-$5,000 per engineer, 2-4 week program

- HL7 training: $2,000-$4,000 per analyst

- FHIR training: $2,000-$3,000 per engineer (if FHIR interfaces are in scope)

- Ongoing learning: Conference attendance, vendor training updates ($5,000-$10,000/year for the team)

Small project: $10,000-$20,000. Medium project: $25,000-$50,000. Large project: $50,000-$100,000.

5. Vendor Onboarding and Coordination (5-10% of total budget)

Often overlooked. Many vendors charge for interface activation, test environment access, or technical support during implementation:

- Interface activation fees: $2,000-$10,000 per vendor per interface

- Test environment access: $500-$2,000/month per vendor

- Vendor technical support during testing: Often included but sometimes billed separately

- Travel for on-site vendor coordination: $5,000-$15,000 for medium/large projects

Small project: $10,000-$25,000. Medium project: $30,000-$75,000. Large project: $75,000-$200,000.

6. Contingency (15-20% of total budget)

Non-negotiable. Integration projects have too many external dependencies to run without contingency. When 52% of projects overrun their budget by more than 30%, a 20% contingency is a minimum, not a luxury.

Total budget summary

| Component | Small (5-10 interfaces) | Medium (20-50 interfaces) | Large (100+ interfaces) |
|---|---|---|---|
| Staffing | $170,000 | $735,000 | $2,680,000 |
| Licensing | $22,500 | $82,500 | $210,000 |
| Infrastructure | $30,000 | $60,000 | $140,000 |
| Training | $15,000 | $37,500 | $75,000 |
| Vendor Onboarding | $17,500 | $52,500 | $137,500 |
| Contingency (20%) | $51,000 | $193,500 | $648,500 |
| Total | $306,000 | $1,161,000 | $3,891,000 |

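These numbers are realistic for a North American healthcare organization in 2026. The non-staffing rows use the midpoint of each range quoted above, and contingency is 20% of the pre-contingency subtotal. The infrastructure row is an annual figure, so scale it for projects that run longer than a year. Adjust for your geography, existing staff (reduce staffing costs if roles are already filled), and infrastructure (reduce if using cloud or existing servers).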
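
The summary table is a sum of the five components plus contingency calculated on that subtotal. A minimal sketch of the calculation, using the figures from the table (the function and key names are illustrative), lets you swap in your own component estimates:

```python
COMPONENTS = {
    "small": {"staffing": 170_000, "licensing": 22_500,
              "infrastructure": 30_000, "training": 15_000,
              "vendor_onboarding": 17_500},
    "medium": {"staffing": 735_000, "licensing": 82_500,
               "infrastructure": 60_000, "training": 37_500,
               "vendor_onboarding": 52_500},
    "large": {"staffing": 2_680_000, "licensing": 210_000,
              "infrastructure": 140_000, "training": 75_000,
              "vendor_onboarding": 137_500},
}


def project_budget(components, contingency_rate=0.20):
    """Add contingency on top of the component subtotal."""
    subtotal = sum(components.values())
    contingency = round(subtotal * contingency_rate)
    return {"subtotal": subtotal,
            "contingency": contingency,
            "total": subtotal + contingency}


budgets = {size: project_budget(c) for size, c in COMPONENTS.items()}
```

Because contingency is applied to the subtotal, a 20% rate works out to roughly 17% of the final total.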

Phase-by-Phase Plan

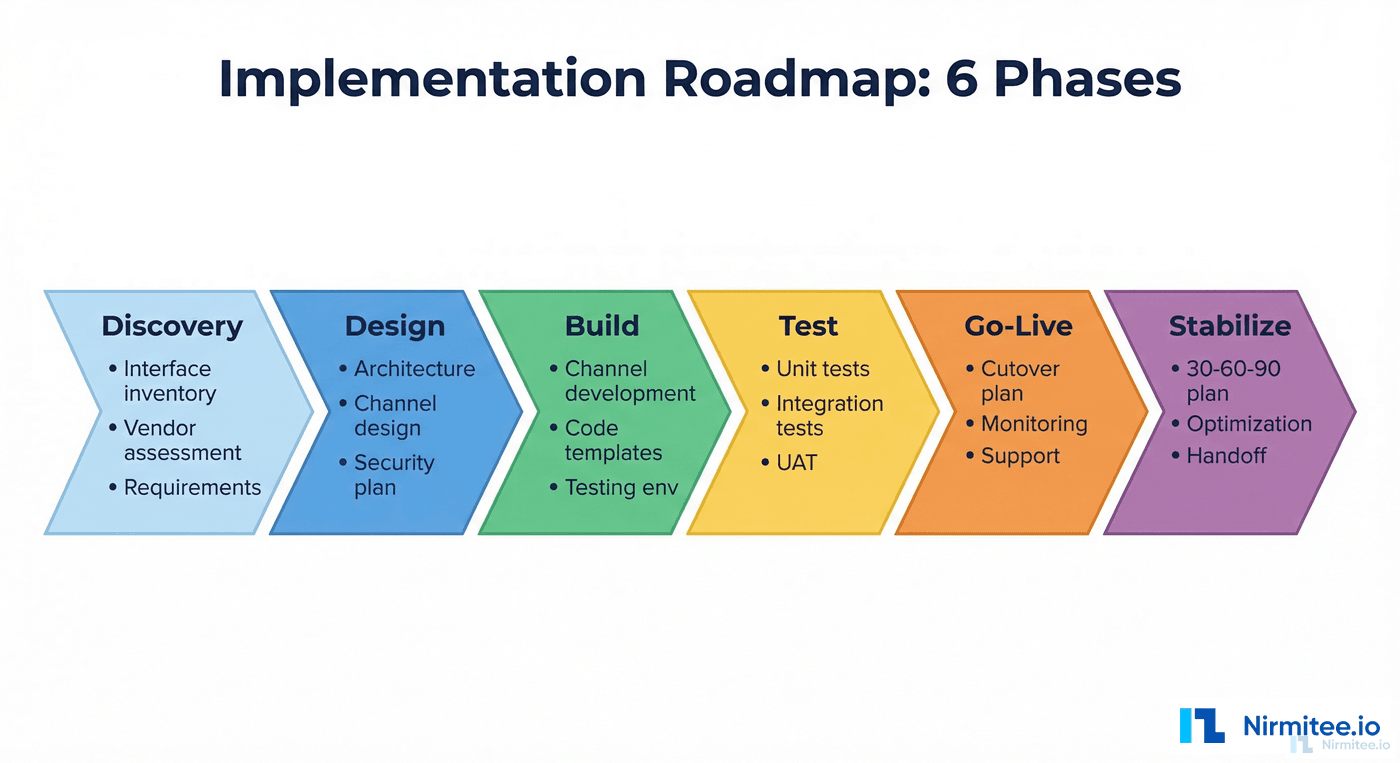

Phase 1: Discovery (2-8 weeks depending on project size)

The discovery phase determines everything that follows. Invest the time here and you save multiples later.

- Weeks 1-2: Stakeholder interviews, system inventory, initial interface list

- Weeks 2-3: Vendor outreach for HL7 specifications and test environment access

- Weeks 3-4: Infrastructure requirements analysis, network architecture review

- Weeks 4-6: Prioritization, wave planning, dependency mapping

- Weeks 6-8: Project plan finalization, budget approval, team onboarding

Deliverables: Interface inventory spreadsheet, wave plan, infrastructure requirements document, project plan with timeline, approved budget.

Phase 2: Design (2-6 weeks depending on project size)

- Mirth Connect architecture: server topology, database design, high availability configuration

- Channel design patterns: naming conventions, template channels, code template libraries

- Error handling strategy: error channels, alerting, escalation paths

- Deployment workflow: Git-based promotion, CI/CD pipeline design

- Monitoring strategy: dashboard design, alert thresholds, on-call structure

- Security design: TLS configuration, authentication, audit logging, HIPAA compliance controls

Deliverables: Architecture document, channel design guide, deployment runbook, security plan.

Phase 3: Build (6-40 weeks depending on project size)

- Infrastructure provisioning and Mirth Connect installation

- Template channel creation and code template library development

- Channel development in priority waves

- Unit testing for transformer functions

- CI/CD pipeline implementation

- Monitoring and alerting configuration

Deliverables: Deployed channels (in dev/staging), test message libraries, CI/CD pipeline, monitoring dashboards. See our CI/CD guide for implementation details.

Phase 4: Test (3-16 weeks depending on project size)

- Integration testing with vendor test systems

- End-to-end workflow testing across connected channels

- Performance testing at expected peak volumes

- Clinical validation with domain experts

- Security testing and HIPAA compliance verification

- Failover and disaster recovery testing

Deliverables: Test results documentation, clinical sign-off, performance benchmarks, security assessment.

Phase 5: Go-Live (1-12 weeks depending on project size)

- Pre-go-live checklist verification (see below)

- Phased production cutover by wave

- Parallel processing period (old and new running simultaneously)

- Message comparison and validation

- Stakeholder sign-off per wave

- Rollback execution if critical issues arise
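
Message comparison during the parallel run can be partly automated by diffing the output of the old and new engines field by field, while ignoring fields that are expected to differ. The sketch below is a simplified illustration in Python, not Mirth Connect code: it assumes the default `|` field separator and carriage-return segment terminators, and the choice to ignore MSH-7 (message timestamp) and MSH-10 (control ID) is an assumption to adapt per interface.

```python
# (segment, field number) pairs that are expected to differ between engines.
IGNORED = {("MSH", 7), ("MSH", 10)}


def parse(message):
    """Flatten a message into {(segment, occurrence, field_no): value}."""
    fields, counts = {}, {}
    for line in filter(None, message.split("\r")):
        parts = line.split("|")
        seg = parts[0]
        counts[seg] = counts.get(seg, 0) + 1
        # MSH-1 is the field separator itself, so MSH numbering shifts by one.
        offset = 1 if seg == "MSH" else 0
        for idx, value in enumerate(parts[1:], start=1):
            fields[(seg, counts[seg], idx + offset)] = value
    return fields


def diff_messages(old, new, ignored=IGNORED):
    """Return (key, old_value, new_value) for every field that differs."""
    a, b = parse(old), parse(new)
    diffs = []
    for key in sorted(set(a) | set(b)):
        seg, _, field_no = key
        if (seg, field_no) in ignored:
            continue
        if a.get(key, "") != b.get(key, ""):
            diffs.append((key, a.get(key, ""), b.get(key, "")))
    return diffs


# Made-up sample output from the legacy engine and the new engine.
old_msg = "\r".join([
    "MSH|^~\\&|LAB|HOSP|EHR|HOSP|20260101120000||ORU^R01|MSG001|P|2.5",
    "PID|1||12345^^^HOSP^MR||DOE^JANE",
    "OBX|1|NM|GLU||95|mg/dL",
])
new_msg = "\r".join([
    "MSH|^~\\&|LAB|HOSP|EHR|HOSP|20260101120003||ORU^R01|MSG9001|P|2.5",
    "PID|1||12345^^^HOSP^MR||DOE^JANE",
    "OBX|1|NM|GLU||95.0|mg/dL",
])

diffs = diff_messages(old_msg, new_msg)
```

Here the only reported difference is OBX-5 (`95` versus `95.0`), which is exactly the kind of formatting change a clinical reviewer should sign off on before cutover.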

Phase 6: Stabilize (4-12 weeks)

- 30 days: Active monitoring, rapid issue resolution, daily status meetings

- 60 days: Performance optimization, documentation finalization, reduced monitoring cadence

- 90 days: Knowledge transfer to operations team, governance handoff, project closure

Go-Live Checklist

Do not go live until every item on this list is true. Every item represents a lesson learned from a failed go-live:

- All channels tested end-to-end with production-like data

- Clinical stakeholders have validated message content accuracy

- Monitoring and alerting configured and verified (send a test alert)

- On-call rotation established with runbooks for common issues

- Rollback plan documented and tested (you can revert in under 30 minutes)

- Vendor support contacts confirmed and available during go-live window

- Network team confirmed firewall rules and VPN tunnels for production

- Database backups verified and tested for restoration

- Performance baseline established (normal message volume and processing time)

- Security controls verified (TLS, authentication, audit logging)

- Communication plan: who gets notified of issues and how

- Post-go-live support schedule: who is on-site, for how long, and what triggers escalation

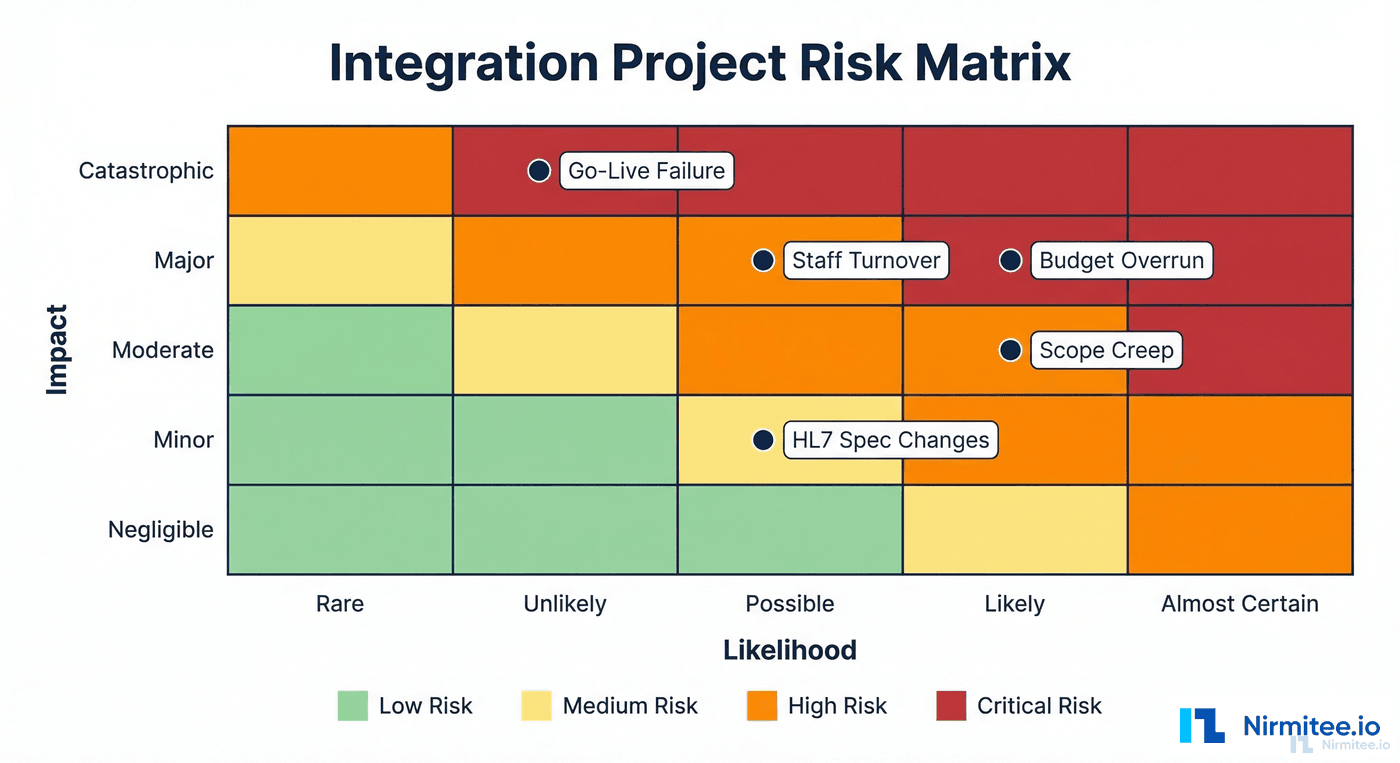

Risk Factors and Contingency

Plan for these risks explicitly. Each one has derailed real integration projects:

Vendor delays (likelihood: high, impact: moderate)

Vendors will be late with specifications, test environments, and go-live support. Mitigate by: starting vendor coordination in Week 1 of discovery, building 4-week buffers for vendor dependencies, and having parallel work (other interfaces) to fill gaps when a vendor is slow.

Staff turnover (likelihood: moderate, impact: high)

Integration engineers are in high demand. Losing a key engineer mid-project can delay the timeline by 3-6 months (time to hire + ramp up). Mitigate by: ensuring no single engineer is the only person who understands critical channels, documenting everything, cross-training the team, and offering competitive compensation and interesting work to retain talent.

Scope creep (likelihood: high, impact: moderate)

New interfaces will be discovered after the project starts. Stakeholders will request "just one more" interface. Mitigate by: defining a clear change control process, maintaining a backlog of out-of-scope interfaces for a future phase, and having the PM enforce scope boundaries with executive backing.

HL7 specification changes (likelihood: moderate, impact: moderate)

Vendors update their HL7 implementations between the time you receive the spec and the time you go live. Mitigate by: testing against the actual system (not just the spec document), building flexible transformers that handle optional fields gracefully, and including a specification re-validation step before go-live.
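
"Handling optional fields gracefully" mostly means never assuming a field or component is present. Mirth Connect transformers are written in JavaScript, so the Python sketch below only illustrates the idea; `get_field` is a hypothetical helper, and it assumes the default `|` and `^` delimiters.

```python
def get_field(segment, field_no, component=1, default=""):
    """Return one component of an HL7 v2 field, or default if it is
    missing or empty.

    Simplified: ignores repetitions (~), escape sequences, and MSH-2.
    """
    fields = segment.split("|")
    # MSH-1 is the field separator itself, so MSH fields sit one index lower.
    index = field_no - 1 if fields[0] == "MSH" else field_no
    if index < 1 or index >= len(fields):
        return default
    components = fields[index].split("^")
    if component < 1 or component > len(components):
        return default
    return components[component - 1] or default


pid = "PID|1||12345^^^HOSP^MR||DOE^JANE"

family_name = get_field(pid, 5, 1)                 # "DOE"
given_name = get_field(pid, 5, 2)                  # "JANE"
sex = get_field(pid, 8, default="U")               # field absent -> "U"
```

A transformer built this way keeps working when a vendor starts or stops sending an optional field, instead of failing on the first message that deviates from the spec.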

Budget overrun (likelihood: high, impact: major)

The 52% overrun statistic is real. Mitigate by: budgeting 20% contingency (non-negotiable), tracking actuals against budget weekly, escalating early when trends indicate overrun, and being willing to descope Wave 3 interfaces to stay within budget for Waves 1 and 2.

Go-live failure (likelihood: low, impact: catastrophic)

A production cutover that fails and cannot be rolled back. Mitigate by: always having a tested rollback plan, running parallel processing during transition, cutting over in small waves (not all-at-once), and having vendor support on standby during every go-live window.
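
The likelihood and impact ratings above can be turned into a simple ranked risk register. The numeric scales in this sketch are an assumption chosen for illustration; the article gives only the qualitative labels.

```python
# Assumed numeric scales for the qualitative ratings used above.
LIKELIHOOD = {"low": 1, "moderate": 2, "high": 3}
IMPACT = {"moderate": 2, "high": 3, "major": 4, "catastrophic": 5}

RISKS = [
    ("Vendor delays", "high", "moderate"),
    ("Staff turnover", "moderate", "high"),
    ("Scope creep", "high", "moderate"),
    ("HL7 specification changes", "moderate", "moderate"),
    ("Budget overrun", "high", "major"),
    ("Go-live failure", "low", "catastrophic"),
]


def rank_risks(risks):
    """Score each risk as likelihood x impact and sort highest first."""
    scored = [(name, LIKELIHOOD[likelihood] * IMPACT[impact])
              for name, likelihood, impact in risks]
    return sorted(scored, key=lambda item: item[1], reverse=True)


ranked = rank_risks(RISKS)
```

A multiplicative score tends to underweight low-likelihood, catastrophic risks such as go-live failure, so it is worth reviewing those separately rather than relying on rank alone.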

Post-Go-Live Support Plan

The project does not end at go-live. The 30-60-90 day stabilization period is where production issues are resolved, performance is optimized, and the project transitions from the implementation team to the operations team.

Days 1-30: Active stabilization

- Daily monitoring review by the implementation team

- Rapid response to production issues (4-hour SLA for critical, 24-hour for non-critical)

- Daily status meeting with stakeholders (15 minutes)

- Track and resolve all go-live issues in a shared tracker

- Adjust alerting thresholds based on actual production patterns

Days 31-60: Optimization and documentation

- Performance tuning based on production data. Refer to performance tuning guidelines for optimization techniques.

- Documentation finalization: all channels documented, runbooks complete, architecture diagrams updated

- Monitoring review: are the right things being monitored? Any false positives to tune out?

- Weekly status meetings (reduced cadence)

Days 61-90: Transition to operations

- Knowledge transfer sessions to the operations/support team

- On-call rotation fully transferred to operations

- Project closure documentation: lessons learned, final budget reconciliation, interface catalog

- Implementation team transitions to other projects

- Quarterly health check process established


Frequently Asked Questions

Can I use contractors or consultants instead of hiring full-time integration engineers?

Yes, and many organizations do, especially for the build phase. Consultants with Mirth Connect expertise typically bill $150-$250/hour ($300,000-$500,000/year equivalent). The advantages are: no long-term hiring commitment, immediate availability of experienced talent, and known skill level. The disadvantages are: higher hourly cost, knowledge leaves when the engagement ends, and you still need at least one internal engineer who understands the system for ongoing operations. The optimal approach for medium projects is 1 internal engineer (long-term owner) + 1-2 consultants (build phase acceleration).
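
The annual equivalents quoted above follow from multiplying the hourly rate by a full-time year. The sketch below assumes 2,080 billable hours per year (40 hours × 52 weeks), which is a common convention rather than a figure from this guide; the function names are illustrative.

```python
HOURS_PER_YEAR = 2080  # assumed: 40 hours x 52 weeks


def annualized(hourly_rate):
    """Full-time annual equivalent of an hourly consulting rate."""
    return hourly_rate * HOURS_PER_YEAR


def engagement_cost(hourly_rate, months, fte=1.0):
    """Cost of a consultant for a fixed engagement length."""
    return hourly_rate * HOURS_PER_YEAR * fte * months / 12


low, high = annualized(150), annualized(250)   # 312,000 and 520,000
build_phase = engagement_cost(200, months=6)   # 208,000.0
```

For comparison, an internal engineer at $150,000/year costs $75,000 in salary over the same six months, so the consultant premium buys speed and experience rather than savings.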

What if we cannot get vendor specifications on time?

This is the most common schedule risk. Three mitigation strategies: (1) Build channels based on HL7 standard specifications and adjust when vendor-specific specs arrive. Most ADT and ORU interfaces are 80% standard with 20% vendor customization. (2) Use production message samples if available from an existing integration engine. Reverse-engineer the specification from actual messages. (3) Request a "test feed" from the vendor — many systems can generate sample messages that reveal the actual message structure, even before formal specifications are delivered. Never wait passively for vendor specs. Always have parallel work available.
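
Reverse-engineering a specification from sample messages can start with a simple tally of which fields are actually populated. The sketch below is a rough illustration in Python: it assumes the default `|` separator and carriage-return segment terminators, and the sample messages are made up.

```python
from collections import Counter


def field_usage(messages):
    """Count populated fields and segment occurrences across samples."""
    populated, seen = Counter(), Counter()
    for msg in messages:
        for line in filter(None, msg.split("\r")):
            parts = line.split("|")
            seg = parts[0]
            seen[seg] += 1
            # MSH-1 is the field separator itself, so MSH numbering shifts.
            offset = 1 if seg == "MSH" else 0
            for idx, value in enumerate(parts[1:], start=1):
                if value:
                    populated[f"{seg}-{idx + offset}"] += 1
    return populated, seen


samples = [
    "MSH|^~\\&|ADT|HOSP|LAB|HOSP|20260101||ADT^A01|1|P|2.5\r"
    "PID|1||123||DOE^JANE||19800101|F",
    "MSH|^~\\&|ADT|HOSP|LAB|HOSP|20260102||ADT^A01|2|P|2.5\r"
    "PID|1||456||ROE^RICH",
]

populated, seen = field_usage(samples)

# PID fields that appear in some samples but not all of them.
sometimes = sorted(f for f, n in populated.items()
                   if f.startswith("PID-") and n < seen["PID"])
```

Fields that appear only sometimes, here PID-7 and PID-8, are the ones to treat as optional in the transformer and to confirm with the vendor once the formal specification arrives.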

How do I justify the budget to executive leadership?

Frame the investment in terms of operational outcomes, not technology features. Integration infrastructure enables: reduced manual data entry (quantify nurse hours saved per interface), faster lab result delivery (quantify clinical impact of 30-minute vs 4-hour turnaround), elimination of duplicate patient records (quantify registration error reduction), and regulatory compliance with interoperability mandates (quantify penalties for non-compliance). A single EHR integration that eliminates manual result entry for a lab processing 500 tests/day saves approximately $180,000/year in staff time. The integration infrastructure pays for itself within the first year for most organizations.

Should we build the infrastructure in-house or use a managed Mirth hosting service?

Managed hosting (including NextGen's Fully Managed offering) makes sense when: your IT team lacks server administration capacity, you want predictable monthly costs, and you do not need deep infrastructure customization. In-house makes sense when: you have existing server infrastructure and operations staff, you need maximum control over security and network configuration, or you have compliance requirements that prevent using third-party hosting. Managed hosting typically adds $20,000-$50,000/year but saves 0.5 FTE in DevOps effort, so it can be cost-neutral or even a net saving for organizations without existing infrastructure teams.
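
The trade-off can be checked with a one-line calculation. This sketch assumes the $140,000 DevOps salary from the staffing table and that the 0.5 FTE is genuinely freed up or never hired; the function name is illustrative.

```python
def managed_hosting_net(hosting_fee, devops_salary=140_000, fte_saved=0.5):
    """Net annual cost of managed hosting after DevOps savings.

    A negative result means managed hosting is cheaper overall.
    """
    return hosting_fee - devops_salary * fte_saved


low_end = managed_hosting_net(20_000)    # -50000.0
high_end = managed_hosting_net(50_000)   # -20000.0
```

Under these assumptions managed hosting comes out ahead across the quoted fee range. If the DevOps time is shared with other work and cannot actually be released, the saving shrinks accordingly.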

What is the single biggest factor that determines project success?

Vendor coordination. The integration engine is ready in weeks. The team is productive in months. But vendor responsiveness determines the pace of the entire project. Organizations that assign a dedicated vendor liaison who proactively manages vendor timelines, escalates delays early, and maintains relationships with vendor technical contacts consistently deliver projects closer to plan. The second biggest factor is realistic scoping — every interface you discover after the project starts costs 2-3x more than one that was in the original plan, because it disrupts schedules, competes for resources, and often has dependencies on interfaces that are already built.